Abstract

In a previous paper, Amman et al. (Macroecon Dyn, 2018) compare the two dominant approaches for solving models with optimal experimentation (also called active learning), i.e. the value function method and the approximation method. Using the same model and dataset as Beck and Wieland (J Econ Dyn Control 26:1359–1377, 2002), they find that the approximation method produces solutions close to those generated by the value function approach, and they identify some elements of the model specification which affect the difference between the two solutions. They conclude that differences are small when the effects of learning are limited. However, the dataset used in that experiment describes a situation where the controller is dealing with a nonstationary process and there is no penalty on the control. The goal of this paper is to see if their conclusions hold in the more commonly studied case of a controller facing a stationary process and a positive penalty on the control.

1 Introduction

In recent years there has been a resurgence of interest in economics in optimal or strategic experimentation, also referred to as active learning; see, e.g., Amman et al. (2018), Buera et al. (2011) and Savin and Blueschke (2016) (Footnote 1). There are two prevailing methods for solving this class of models. The first is based on the value function approach and the second on an approximation method. The former applies dynamic programming to the full problem, as in the studies by Prescott (1972), Taylor (1974), Easley and Kiefer (1988), Kiefer (1989), Kiefer and Nyarko (1989), Aghion et al. (1991) and, more recently, Beck and Wieland (2002), Coenen et al. (2005), Levin et al. (2003) and Wieland (2000a, b). A nice set of applications of optimal experimentation using the value function approach can be found in Willems (2012).

In principle, the value function approach should be the preferred method as it derives the optimal values for the policy variables through Bellman’s (1957) dynamic programming. Unfortunately, it suffers from the curse of dimensionality, as is shown in Bertsekas (1976). Hence, the value function approach is only applicable to small problems with one or two policy variables. The reason is that the solution space has to be discretized, and for larger problems the resulting grid becomes too large for the problem to be solved in feasible time. The approximation methods described in Cosimano (2008), Cosimano and Gapen (2005a, b), Kendrick (1981) and Hansen and Sargent (2007) use approaches that are applied in the neighborhood of the linear regulator problem (Footnote 2). Because of this local nature with respect to the statistics of the model, the method is numerically far more tractable and allows for models of larger dimension. However, the jury is still out on how well it approximates the optimal solution derived through the value function. Note that the approximation method described here should not be mistaken for a cautious or passive learning method. Here we concentrate only on optimal experimentation (active learning) approaches.

Both solution methods consider dynamic stochastic models in which the control variables can be used not only to guide the system in desired directions but also to improve the accuracy of the estimates of the model parameters. There is a trade-off: experimenting with the policy variables early on moves the system away from its current goals, but it leads to learning, i.e. improved parameter estimates, and hence to improved performance of the system later in time. Hence the dual nature of the control. For this reason, we concentrate in the sections below on the policy function in the initial period. Usually most of the experimentation (active learning) is done at the beginning of the time interval, and therefore the largest difference between the results obtained with the two methods may be expected in this period.

Until very recently there was an invisible line dividing researchers using one approach from those using the other. It is only in Amman et al. (2018) that the value function approach and the approximation method are used to solve the same problem and their solutions are compared. In that paper the focus is on comparing the policy function results reported in Beck and Wieland (2002), through the value function, to those obtained through approximation methods. Therefore those conclusions apply to a situation where the controller is dealing with a nonstationary process and there is no penalty on the control. The goal of this paper is to see if they hold for the more frequently studied case of a stationary process and a positive penalty on the control. To do so a new value function algorithm has been written, to handle several sets of parameters, and more general formulae for the cost-to-go function of the approximation method are used. The remainder of the paper is organized as follows. The problem is stated in Sect. 2. Then the value function approach and the approximation approach are described (Sects. 3 and 4, respectively). Section 5 contains the experiment results. Finally the main conclusions are summarized (Sect. 6).

2 Problem Statement

The discrete time problem we want to investigate dates back to MacRae (1975) and closely resembles that used in Beck and Wieland (2002). For this reason it is referred to as the MBW model throughout the paper. It is defined as follows:

Find the control that minimizes the cost functional, i.e.

subject to the one period loss function taking the form

and the linear system

with the time-varying parameter modeled as

where \(\epsilon _t \sim {\mathcal {N}}(0,\sigma ^2_{\epsilon })\) and \(\eta _t \sim {\mathcal {N}}(0,\sigma ^2_\eta )\). The parameter \(\beta _t\) is estimated using the Kalman filter

The parameters \(b_0\), \(\nu _0^{b}\), \(\sigma ^2_{\eta }\) and \(\sigma ^2_{\epsilon }\) are assumed to be known.
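As an illustration of the learning mechanism, the following minimal Python sketch implements one scalar Kalman filter step and a short simulation. It is a sketch under stated assumptions, not the authors' code: it assumes the linear system takes the form \(x_t=\gamma +\alpha x_{t-1}+\beta _t u_t+\epsilon _t\) with a random-walk parameter \(\beta _t=\beta _{t-1}+\eta _t\), and the names `kalman_update`, `simulate_learning`, `var_eps` and `var_eta` are hypothetical.

```python
import random


def kalman_update(b_prev, v_prev, x_prev, u, x_obs,
                  alpha=0.7, gamma=0.0, var_eps=1.0, var_eta=0.0):
    """One scalar Kalman step for the coefficient on the control.

    Assumed model (an assumption of this sketch):
        x_t = gamma + alpha * x_{t-1} + beta_t * u_t + eps_t
        beta_t = beta_{t-1} + eta_t
    """
    p = v_prev + var_eta                          # prior variance of beta_t
    x_pred = gamma + alpha * x_prev + b_prev * u  # one-step-ahead forecast
    f = u * u * p + var_eps                       # forecast error variance
    gain = p * u / f                              # Kalman gain
    b_new = b_prev + gain * (x_obs - x_pred)      # updated estimate
    v_new = p - gain * u * p                      # updated variance
    return b_new, v_new


def simulate_learning(beta_true=-0.4, b0=-0.05, v0=1.0, u=1.0,
                      periods=2000, seed=0,
                      alpha=0.7, gamma=0.0, var_eps=1.0):
    """Apply a constant control and track the estimate of a fixed beta."""
    rng = random.Random(seed)
    x, b, v = 0.0, b0, v0
    for _ in range(periods):
        shock = rng.gauss(0.0, var_eps ** 0.5)
        x_new = gamma + alpha * x + beta_true * u + shock
        b, v = kalman_update(b, v, x, u, x_new, alpha, gamma, var_eps, 0.0)
        x = x_new
    return b, v
```

Note that with \(u_t=0\) the gain is zero, so nothing is learned and the variance can only grow by \(\sigma ^2_\eta \). This is the sense in which a more active control buys information.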

3 Solving the Value Function

The above problem can be solved using dynamic programming. The corresponding Bellman equation is

with the restrictions in (2)–(9), dropping \(b_t\) and \(v^b_t\) for convenience, \(f(x_t)\) being the normal distribution and \(\rho \) the discount factor

with mean \(E_{t-1}(x_t)=\mu _{ x}\) and \(Var_{t-1}(x_t)=\sigma _{ x}^2\), hence

If we use the transform

hence

and

Furthermore

and insert them in (12) we get

The integral on the right hand side of (18) can be numerically approximated on the nodes \(\{y_1 \ldots y_n\}\) with weights \(\{\chi _1 \ldots \chi _n\}\) using a Gauss-Hermite quadrature

with \(x_k\) the value of \(x\) at the node \(y_k\)

and the necessary updating equations
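To make the quadrature step concrete, here is a minimal Python sketch of a Gauss-Hermite approximation to the expectation of a function of a normally distributed state. It assumes the usual change of variable \(x_k=\mu _x+\sqrt{2}\,\sigma _x y_k\) and uses a hard-coded 3-node rule to stay dependency-free; the function name `expect_normal` is hypothetical and the number of nodes is an illustrative choice, not the one used in the experiments.

```python
import math

# 3-point Gauss-Hermite rule for the weight function exp(-y^2).
# Nodes are the roots of the Hermite polynomial H_3; weights sum to sqrt(pi).
GH_NODES = (-math.sqrt(1.5), 0.0, math.sqrt(1.5))
GH_WEIGHTS = (math.sqrt(math.pi) / 6.0,
              2.0 * math.sqrt(math.pi) / 3.0,
              math.sqrt(math.pi) / 6.0)


def expect_normal(g, mu, sigma):
    """Approximate E[g(x)] for x ~ N(mu, sigma^2) by Gauss-Hermite quadrature."""
    total = 0.0
    for y_k, chi_k in zip(GH_NODES, GH_WEIGHTS):
        x_k = mu + math.sqrt(2.0) * sigma * y_k  # value of x at node y_k
        total += chi_k * g(x_k)
    return total / math.sqrt(math.pi)
```

A rule with 3 nodes integrates polynomials up to degree 5 exactly, so `expect_normal(lambda x: x * x, mu, sigma)` reproduces \(\mu ^2+\sigma ^2\). For a value function that is not polynomial more nodes are advisable; in NumPy they can be obtained from `numpy.polynomial.hermite.hermgauss`.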

We can expand \(L(x_t,u_t)\) in (2) as follows (Footnote 3)

The computational challenge is to solve (19) numerically. If we set up a grid

of size \(m_x\),

of size \(m_b\) and

of size \(m_v\),

we can compute an initial guess for \(V^0\) by computing \(u_t\) that minimizes \(L(x_t,u_t)\) in Eq. (23) on each of the \(m_x \times m_b \times m_v\) tuples \(\{{b_g,v_g,x_g}\}\)

where the optimal value of the policy variable \(u_t^{CE}\) is equal to

which is the certainty equivalence (CE) solution of the problem defined in (1)–(2). Now that we have an initial value \(V^0\), we can solve equation (19) iteratively (Footnote 4).
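The construction of the initial guess can be sketched as follows. This is a minimal illustration under stated assumptions: the desired paths are zero, the system is \(x_t=\gamma +\alpha x_{t-1}+b\,u_t\) in expectation, the grid over \(x\) is interpreted as the inherited state, and the CE rule treats the estimate \(b\) as if it were the true parameter. The names `ce_control` and `initial_guess` are hypothetical.

```python
def ce_control(x_prev, b, alpha=0.7, gamma=0.0, w=1.0, lam=1.0):
    """Myopic certainty-equivalence control (assumed form, zero desired paths).

    Minimizes w * x_t^2 + lam * u^2 with x_t = gamma + alpha * x_prev + b * u,
    treating the estimate b as known.
    """
    return -w * b * (gamma + alpha * x_prev) / (lam + w * b * b)


def initial_guess(x_grid, b_grid, v_grid,
                  alpha=0.7, gamma=0.0, w=1.0, lam=1.0):
    """Evaluate the one-period loss at the CE control on every grid tuple."""
    V0 = {}
    for i, x in enumerate(x_grid):
        for j, b in enumerate(b_grid):
            u = ce_control(x, b, alpha, gamma, w, lam)
            x_next = gamma + alpha * x + b * u
            loss = w * x_next ** 2 + lam * u ** 2
            for k, _v in enumerate(v_grid):
                # The CE loss ignores the variance, so it is constant in v.
                V0[(i, j, k)] = loss
    return V0
```

For example, with the grids `[-5 + 0.5 * i for i in range(21)]`, `[-2.0, -1.0, -0.4, -0.05]` and `[0.01, 0.25, 0.49, 1.0]` the dictionary holds \(m_x \times m_b \times m_v = 336\) entries.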

The value of \(u_t\) that minimizes the right hand side of (26) can be obtained through a simple line search. The value of \(V^j (x_k,u_t | b_k,v_k^b)\) in (26) can be found by looking up the corresponding point on the grid.
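A single Bellman backup of this kind can be sketched in Python as follows. The sketch is illustrative only and rests on several assumptions: the system is \(x_t=\gamma +\alpha x_{t-1}+\beta _t u_t+\epsilon _t\) with zero desired paths, the expectation uses a 3-node Gauss-Hermite rule, the line search is a plain scan over a control grid, and the current value function is passed as a callable `V(x, b, v)` instead of a grid lookup. All names are hypothetical.

```python
import math

# 3-point Gauss-Hermite nodes and weights for the weight function exp(-y^2).
GH_NODES = (-math.sqrt(1.5), 0.0, math.sqrt(1.5))
GH_WEIGHTS = (math.sqrt(math.pi) / 6.0,
              2.0 * math.sqrt(math.pi) / 3.0,
              math.sqrt(math.pi) / 6.0)


def bellman_backup(x_prev, b, v, V, u_grid,
                   alpha=0.7, gamma=0.0, w=1.0, lam=1.0, rho=0.95,
                   var_eps=1.0, var_eta=0.0):
    """Return (u, value) minimizing expected loss plus discounted V."""
    best_u, best_val = None, math.inf
    p = v + var_eta                        # prior variance of beta_t
    for u in u_grid:                       # simple line search over controls
        mu = gamma + alpha * x_prev + b * u
        var_x = u * u * p + var_eps        # predictive variance of x_t
        sigma = math.sqrt(var_x)
        gain = p * u / var_x               # Kalman gain for this control
        v_next = p - gain * u * p          # posterior variance (deterministic)
        total = 0.0
        for y_k, chi_k in zip(GH_NODES, GH_WEIGHTS):
            x_k = mu + math.sqrt(2.0) * sigma * y_k
            b_k = b + gain * (x_k - mu)    # posterior mean at this node
            total += chi_k * (w * x_k ** 2 + lam * u ** 2
                              + rho * V(x_k, b_k, v_next))
        val = total / math.sqrt(math.pi)
        if val < best_val:
            best_u, best_val = u, val
    return best_u, best_val
```

With `V = lambda x, b, v: 0.0` the backup reduces to the myopic problem: for \(v=0\) it recovers the CE control up to the grid resolution, and for \(v>0\) it returns a smaller, more cautious control. The incentive to probe only enters through a non-trivial `V` that rewards a lower future variance.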

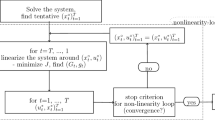

4 Approximating the Value Function

In this section we present a short summary of the derivations found in Amman and Tucci (2017). Following Tse and Bar-Shalom (1973) and Kendrick (1981, 2002), for each time period, the approximate cost-to-go at different values of the control is computed. The control yielding the minimum approximate cost is selected. This approximate cost-to-go is decomposed into a deterministic, cautionary and probing component. The deterministic component includes only terms which are not stochastic. The cautionary one includes uncertainty only in the next time period and the probing term contains uncertainty in all future time periods. Thus the probing term includes the motivation to perturb the controls in the present time period in order to reduce future uncertainty about parameter values.

The approximate cost-to-go in the infinite horizon MBW model looks like

Equation (29) is identical to equation (27) in Tucci et al. (2010), but now the parameters associated with the deterministic component, the \(\psi \)’s, are defined as

where \(b_{0}\) is the estimate of the unknown parameter at time 0 and

with \({ k}^{CE}\) the fixed point solution to the usual Riccati recursion (Footnote 5)
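For the scalar case such a fixed point is easily obtained by iterating the recursion. The Python sketch below does this for one common timing convention, which is an assumption of the sketch and not necessarily the convention behind \(k^{CE}\): the period loss is \(w x_t^2+\lambda u_t^2\) with \(x_t=\alpha x_{t-1}+b\,u_t\), and \(K\) denotes the total weight on \(x_t^2\), i.e. the loss weight plus the discounted cost-to-go. The function names are hypothetical.

```python
def riccati_fixed_point(b, alpha=0.7, w=1.0, lam=1.0, rho=0.95,
                        tol=1e-12, max_iter=10_000):
    """Iterate K = w + rho * alpha^2 * lam * K / (lam + b^2 * K) to convergence.

    Assumed convention: K is the weight on x_t^2 once the discounted
    continuation cost has been added to the period loss weight w.
    """
    k = w
    for _ in range(max_iter):
        k_new = w + rho * alpha ** 2 * lam * k / (lam + b * b * k)
        if abs(k_new - k) < tol:
            return k_new
        k = k_new
    raise RuntimeError("Riccati iteration did not converge")


def feedback_gain(b, k, alpha=0.7, lam=1.0):
    """CE feedback rule u_t = G * x_{t-1} implied by the weight k."""
    return -alpha * b * k / (lam + b * b * k)
```

Under this convention the map is a contraction whenever \(\rho \alpha ^2<1\), which holds for \(\alpha =0.7\) and \(\rho =0.95\), so the iteration converges from any nonnegative starting value. With \(K\) replaced by \(w_2\) the gain has the same form as \(G_1\) in the two-period model.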

The parameters associated with the cautionary component, the \(\delta _i\), take the form

with

where (Footnote 6)

Finally the parameters related to the probing component, the \({ \phi }\)’s, take the form

As shown in Amman and Tucci (2017), the new definitions are perfectly consistent with those associated with the two-period finite horizon model.

5 Experimentation

In this section the infinite horizon control for the MBW model is computed with the value function and the approximation method when the system is assumed stationary. Moreover, an equal penalty weight is applied to deviations of the state and the control from their desired paths, assumed zero here. In order to stay as close as possible to the case discussed in Beck and Wieland (2002, p. 1367) and Amman et al. (2018), the parameters are \(\alpha =0.7, \gamma =0, q=1, w=1, \lambda =1, \rho =0.95\).

Figures 1, 2, 3 and 4 contain the four typical solutions of the model for \(b_0=-0.05\), \(b_0=-0.4\), \(b_0=-1.0\) and \(b_0=-2.0\). For each value of \(b_0\), four different levels of uncertainty, as measured by the estimated parameter variance \(v_0\), are considered. By doing so it is possible to assess the relevance of relative uncertainty for the performance of the two approaches considered in this work. In this situation both the approximation approach (solid line) and the value function approach (dotted line) suggest a more conservative control than in the nonstationary case with no penalty on the control. The difference between the two approaches tends to be much smaller when the initial state is not too far from the desired path and the parameter uncertainty is relatively small, whereas it is approximately the same for \(x_0=-5\) or \(x_0=5\) (compare Fig. 1 in Amman et al. (2018) with the top right panel in Fig. 2) (Footnote 7). By comparing the different cases reported below, it is apparent that the difference between the solutions generated by the two methods depends heavily upon the level of relative uncertainty about the unknown parameter. For instance, the top left panel of Fig. 2 shows that the two approaches generate almost identical results for the whole spectrum of possible initial states when \(b_0\) is estimated with very little variance, i.e. 0.01. The situation changes in the remaining panels of Fig. 2, where the same parameter estimate is associated with higher variances. A small shock from the desired initial state causes the two algorithms to give meaningfully different solutions when the variance is 1. Therefore it turns out that in all cases, Figs. 1, 2 and 3, relative uncertainty is very important in explaining the different results. Moreover, the distinction between high relative uncertainty and extreme uncertainty becomes relevant. For instance, in the bottom panels of Fig. 1 the unknown parameter is extremely uncertain and the two approaches suggest opposite controls.

A readily available measure of the relative uncertainty of an unknown parameter is the usual t-statistic automatically generated by all econometrics software, i.e. the ratio of the parameter estimate to its estimated standard deviation. Using this econometric tool, it can be stated that when there is very little or no uncertainty about the unknown parameter, as in Fig. 4, where the t-statistic ranges from virtual certainty (top left panel) to 2 (bottom right panel), the two solutions are almost identical, as should be expected. As the level of uncertainty increases, as in Figs. 2 and 3, the difference becomes more pronounced and the approximation method is usually less active than the value function approach. Figure 2, with t ranging from certainty to 0.4, and Fig. 3, with t going from certainty to 1, reflect the most common situations. However, when there is high uncertainty, as in Fig. 1, where t goes from 5 (top left panel) to 0.05 (bottom right panel), the approximation method shows very aggressive solutions when the t-statistic is around 0.1–0.2 and the initial state is far from its desired path. In the extreme cases where t drops below 0.1, bottom panels of Fig. 1, this method finds it optimal to perturb the system in the ‘opposite’ direction in order to learn something about the unknown parameter. These are cases where the 99 percent confidence intervals for the unknown parameter are (−2.15, 2.05), when \(v_0=0.49\), and (−3.05, 2.95), when \(v_0=1\). Alternatively, if the initial state is close to the desired path this method is very conservative.
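The arithmetic behind these figures is easy to reproduce. The short Python sketch below computes the t-ratio \(|b_0|/\sqrt{v_0}\) and the interval \(b_0\pm 3\sqrt{v_0}\); the three-standard-deviation width is inferred from the bounds quoted in the text, which it reproduces exactly, and the function names are hypothetical.

```python
import math


def t_ratio(b0, v0):
    """Absolute t-ratio: parameter estimate over its standard deviation."""
    return abs(b0) / math.sqrt(v0)


def three_sd_interval(b0, v0):
    """Interval b0 +/- 3 standard deviations (width inferred from the text)."""
    sd = math.sqrt(v0)
    return (b0 - 3.0 * sd, b0 + 3.0 * sd)


if __name__ == "__main__":
    for b0 in (-0.05, -0.4, -1.0, -2.0):
        for v0 in (0.49, 1.0):
            lo, hi = three_sd_interval(b0, v0)
            print(f"b0={b0:5.2f} v0={v0:4.2f} t={t_ratio(b0, v0):5.3f} "
                  f"interval=({lo:5.2f}, {hi:5.2f})")
```

For \(b_0=-0.05\) the t-ratio is about 0.07 when \(v_0=0.49\) and 0.05 when \(v_0=1\), i.e. both bottom panels of Fig. 1 fall in the region below 0.1 discussed in the text.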

On the other hand, the value function approach seems somewhat ‘insulated’ from the extreme uncertainty surrounding the unknown parameter. As is apparent from Fig. 1, this optimal control stays more or less constant in the presence of an extremely uncertain parameter. The major consequence seems to be a bigger ‘jump’ in the control applied when the initial state is around the desired path. Summarizing, very high parameter uncertainty results in a more aggressive control when the initial state is in the neighborhood of its desired path and a relatively less aggressive control when it is far from it.

Figures 1, 2, 3 and 4 show the importance of the relative uncertainty of the parameter, as measured by its t-statistic, in explaining the difference between the two alternative approaches. But is there a continuous monotonic relationship? At what levels of this t-value do the differences become pronounced? Is there a threshold value under which ‘extreme uncertainty’ results appear?

To answer these questions, Figure 5 uses the same four values of \(b_0\) to compare the two methods at various variances when the initial state is \(x_0=1\), explicitly taking into account the t-ratio. It is apparent that there is an inverse relationship between this statistic and the discrepancy between the two approaches under consideration. Again the difference is more noticeable when the t-statistic drops below 1. In the presence of extreme uncertainty, i.e. when this statistic falls below 0.5, and an initial state far from the desired path, this difference not only gets larger and larger but may also be associated with the approaches giving opposite solutions, i.e. a positive control vs a negative control. This is what happens in the top left panel of Fig. 5, where for very low t-statistics the value function approach suggests a positive control whereas the approximation approach suggests a slightly negative control.

The same qualitative results characterize a situation with a much smaller system variance, namely \(q=0.01\), as shown in Figs. 6, 7, 8 and 9. In this scenario controls are less aggressive than in the previous one and, as previously seen, the approximation approach is generally less active than its competitor. It looks like the optimal control is insensitive to system noise when the parameter associated with it has very little uncertainty, as in the top left panel of Figs. 1 and 6. However, when the unknown parameter has a very low t-statistic the control is significantly affected by the system noise. Then the distinction between high and extreme uncertainty about the unknown parameter becomes even more relevant than before. At a preliminary examination it seems that a higher system noise has the effect of ‘reducing’ the perceived parameter uncertainty. For example, the bottom right panels in Figs. 2 and 7 show the optimal controls when the t associated with the unknown parameter is around 0.5. This parameter uncertainty is associated with a very low system noise in the latter case. Therefore it is perceived in its real dimension and the approximation approach suggests a control in the ‘opposite’ direction when the initial state is far from its desired path.

Figure 10 uses the same four values of \(b_0\) to compare the two methods at various variances, when the initial state is \(x_0=1\) and the system variance is \(q=0.1\). As in Fig. 5, the difference is more noticeable when the t-statistic drops below 1.

It is unclear at this stage whether the distinction between high uncertainty and extreme uncertainty is also relevant for the nonstationary case treated in Amman et al. (2018). A hint may be given by their Fig. 8. It reports the results for the case where the parameter estimate is 0.3 and its variance is 0.49, i.e. the t-statistic of the unknown parameter is around 0.4. In this case the approximation approach is more active than the value function approach when the initial state is far from the desired path, i.e. \(x_0\) greater than 3. This seems to suggest that the distinction between high and extreme uncertainty is also relevant when the system is nonstationary and no penalty is applied to the controls.

6 Conclusions

In a previous paper, Amman et al. (2018) compare the value function and the approximation method in a situation where the controller is dealing with a nonstationary process and there is no penalty on the control. They conclude that differences are small when the effects of learning are limited. In this paper we find that similar results hold for the more commonly studied case of a controller facing a stationary process and a positive penalty on the control. Moreover, we find that a good proxy for parameter uncertainty is the usual t-statistic used by econometricians and that it is very important to distinguish between high and extreme uncertainty about the unknown parameter. In the latter situation, i.e. t close to 0, when the initial state is very far from its desired path and the parameter associated with the control is very small, the approximation method becomes very active. Eventually it even perturbs the system in the opposite direction.

This is something that needs further investigation with other models and parameter sets. It may be due to the fact that the computational approximation to the integral needed in the value function approach does not fully incorporate these extreme cases. Or it may be the consequence of some hidden relationships between the parameters and the components of the cost-to-go in the approximation approach. However, the behavior of the ‘approximation control’ makes full sense. Its suggestion is: in the presence of extreme uncertainty, don’t be very active if you are close to the desired path, but ‘go wild’ if you are far from it. If this characteristic is confirmed, it may represent a useful additional tool in the hands of the control researcher to discriminate between cases where the control can be reliably applied and cases where it cannot.

Notes

For consistency and clarity in the main text, we use the term approximation method instead of adaptive or dual control. The adaptive or dual control approach in MacRae (1975), see Kendrick (1981), Amman (1996) and Tucci (2004), uses methods that draw on earlier work in the engineering literature by Bar-Shalom and Sivan (1969) and Tse (1973). There are differences between this approach and the approximation approaches in Cosimano (2008) and Savin and Blueschke (2016) which we will not discuss in detail here. Throughout the paper we will use the approach in Kendrick (1981).

Note that \(E_{t-1}(\beta ^2)=b_{t-1}^2+v_{t-1}^b+\sigma ^2_{\eta }\).

As far as we know there are no Riccati based recursion methods to solve Eq. (26).

In this case the Riccati equation is a scalar function and can easily be solved. The multi-dimensional case can be more complicated to solve. See Amman and Neudecker (1997).

This compares with \({\tilde{k}}_{1}^{\beta x} =2w_{2} \left( \alpha +bG_{1} \right) G_{1} \) and

$$\begin{aligned} {\tilde{k}}_{1}^{\beta \beta } =w_{2} G_{1}^{2} +w_{2}^{2} \left( \alpha +2bG_{1} \right) ^{2}\left[ -\left( \lambda _{1} +b^{2} w_{2} \right) \right] ^{-1} \end{aligned}$$

where the feedback matrix is defined as \(G_{1} =-\alpha bw_{2} \big / \left( \lambda _{1} +b^{2} w_{2} \right) \), in the two-period finite horizon model.

The reader should keep in mind that the opposite convention is used in Amman et al. (2018).

References

Aghion, P., Bolton, P., Harris, C., & Jullien, B. (1991). Optimal learning by experimentation. Review of Economic Studies, 58, 621–654.

Amman, H., & Tucci, M. (2017). The dual approach in an infinite horizon model. Quaderni del Dipartimento di Economia Politica 766. Università di Siena, Siena, Italy.

Amman, H. M. (1996). Numerical methods for linear-quadratic models. In H. M. Amman, D. A. Kendrick, & J. Rust (Eds.), Handbook of computational economics, Handbooks in Economics (Vol. 13, pp. 579–618). Amsterdam: North-Holland Publishers.

Amman, H. M., Kendrick, D. A., & Tucci, M. P. (2018). Approximating the value function for optimal experimentation. Macroeconomic Dynamics (forthcoming).

Amman, H. M., & Neudecker, H. (1997). Numerical solution methods of the algebraic matrix Riccati equation. Journal of Economic Dynamics and Control, 21, 363–370.

Bar-Shalom, Y., & Sivan, R. (1969). On the optimal control of discrete-time linear systems with random parameters. IEEE Transactions on Automatic Control, 14, 3–8.

Beck, G., & Wieland, V. (2002). Learning and control in a changing economic environment. Journal of Economic Dynamics and Control, 26, 1359–1377.

Bellman, R. E. (1957). Dynamic programming. Princeton, NJ: Princeton University Press.

Bertsekas, D. P. (1976). Dynamic programming and stochastic control. Mathematics in science and engineering (Vol. 125). New York: Academic Press.

Bolton, P., & Harris, C. (1999). Strategic experimentation. Econometrica, 67(2), 349–374.

Buera, F. J., Monge-Naranjo, A., & Primiceri, G. E. (2011). Learning the wealth of nations. Econometrica, 79(1), 1–45.

Coenen, G., Levin, A., & Wieland, V. (2005). Data uncertainty and the role of money as an information variable for monetary policy. European Economic Review, 49, 975–1006.

Cosimano, T. F., & Gapen, M. T. (2005a). Program notes for optimal experimentation and the perturbation method in the neighborhood of the augmented linear regulator problem. Working paper. Notre Dame, IN: Department of Finance, University of Notre Dame.

Cosimano, T. F., & Gapen, M. T. (2005b). Recursive methods of dynamic linear economics and optimal experimentation using the perturbation method. Working paper. Notre Dame, IN: Department of Finance, University of Notre Dame.

Cosimano, T. F. (2008). Optimal experimentation and the perturbation method in the neighborhood of the augmented linear regulator problem. Journal of Economic Dynamics and Control, 32, 1857–1894.

Easley, D., & Kiefer, N. M. (1988). Controlling a stochastic process with unknown parameters. Econometrica, 56, 1045–1064.

Hansen, L. P., & Sargent, T. J. (2007). Robustness. Princeton, NJ: Princeton University Press.

Kendrick, D. A. (2002). Stochastic control for economic models, online edn. http://www.utexas.edu/cola/depts/economics/faculty/dak2.

Kendrick, D. A. (1981). Stochastic control for economic models. New York, NY: McGraw-Hill Book Company.

Kiefer, N. (1989). A value function arising in the economics of information. Journal of Economic Dynamics and Control, 13, 201–223.

Kiefer, N., & Nyarko, Y. (1989). Optimal control of an unknown linear process with learning. International Economic Review, 30, 571–586.

Levin, A., Wieland, V., & Williams, J. C. (2003). The performance of forecast-based monetary policy rules under model uncertainty. American Economic Review, 93, 622–645.

MacRae, E. C. (1972). Linear decision with experimentation. Annals of Economic and Social Measurement, 1, 437–448.

MacRae, E. C. (1975). An adaptive learning rule for multiperiod decision problems. Econometrica, 43, 893–906.

Moscarini, G., & Smith, L. (2001). The optimal level of experimentation. Econometrica, 69(6), 1629–1644.

Prescott, E. C. (1972). The multi-period control problem under uncertainty. Econometrica, 40, 1043–1058.

Salmon, T. C. (2001). An evaluation of econometric models of adaptive learning. Econometrica, 69(6), 1597–1628.

Savin, I., & Blueschke, D. (2016). Lost in translation: Explicitly solving nonlinear stochastic optimal control problems using the median objective value. Computational Economics, 48, 317–338.

Taylor, J. B. (1974). Asymptotic properties of multi-period control rules in the linear regression model. International Economic Review, 15, 472–482.

Tse, E. (1973). Further comments on adaptive stochastic control for a class of linear systems. IEEE Transactions on Automatic Control, 18, 324–326.

Tse, E., & Bar-Shalom, Y. (1973). An actively adaptive control for linear systems with random parameters. IEEE Transactions on Automatic Control, 18, 109–117.

Tucci, M. P. (2004). The rational expectation hypothesis, time-varying parameters and adaptive control. Dordrecht: Springer.

Tucci, M. P., Kendrick, D. A., & Amman, H. M. (2010). The parameter set in an adaptive control Monte Carlo experiment: Some considerations. Journal of Economic Dynamics and Control, 34, 1531–1549.

Wieland, V. (2000a). Learning by doing and the value of optimal experimentation. Journal of Economic Dynamics and Control, 24, 501–534.

Wieland, V. (2000b). Monetary policy, parameter uncertainty and optimal learning. Journal of Monetary Economics, 46, 199–228.

Willems, T. (2012). Essays on Optimal Experimentation. PhD thesis, Tinbergen Institute, University of Amsterdam, Amsterdam.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Amman, H.M., Tucci, M.P. How Active is Active Learning: Value Function Method Versus an Approximation Method. Comput Econ 56, 675–693 (2020). https://doi.org/10.1007/s10614-020-09968-2

DOI: https://doi.org/10.1007/s10614-020-09968-2

Keywords

- Optimal experimentation

- Value function

- Approximation method

- Adaptive control

- Active learning

- Time-varying parameters

- Numerical experiments