Abstract

In recent years, online radicalization has received increasing attention from researchers and policymakers, for instance, by analyzing online communication of radical groups and linking it to individual and collective pathways of radicalization into violent extremism. But these efforts often focus on radical individuals or groups as senders of radicalizing messages, while empirical research on the recipient is scarce. To study the impact of radicalized online content on vulnerable individuals, this study compared cognitive and affective appraisal and visual processing (via eye tracking) of three political Internet memes (empowering a right-wing group, inciting violence against out-groups, and emphasizing unity among human beings) between a right-wing group and a control group. We examined associations between socio-political attitudes, appraisal ratings, and visual attention metrics (total dwell time, number of fixations). The results show that right-wing participants perceived in-group memes (empowerment, violence) more positively and messages of overarching similarities much more negatively than controls. In addition, right-wing participants and participants in the control group with a high support for violence directed their attention towards graphical cues of violence (e.g., weapons), differentness, and right-wing groups (e.g., runes), regardless of the overall message of the meme. These findings point to selective exposure effects and have implications for the design and distribution of de-radicalizing messages and counter narratives to optimize the efficacy of prevention of online radicalization.

Introduction

The role of digital media in radicalization has received increasing attention from researchers, policymakers, the media, and the public (e.g., Neumann 2019; Thompson, 2012; Alfano et al., 2018). For instance, the Christchurch right-wing terrorist attack on the 15th March 2019 that resulted in 50 deaths of men, women, and children at a mosque was livestreamed on Facebook, and subsequent investigations showed a strong radical online presence of the perpetrator (Battersby & Ball, 2019; Hutchinson, 2019). To prevent such acts, it is necessary to understand the role of digital media in radicalization. Radicalization describes the development of attitudes (i.e., cognitive radicalization) and actions (i.e., behavioral radicalization) that are characterized by rejecting relevant societal, legal, and political norms (Neumann, 2013). Attitudes and behaviors that oppose basic human rights and equality and promote a desire to abolish or replace them, for instance, by using violence, are radical and even extremist (Beelmann, 2020). Therefore, attitudes that idealize inequality (e.g., promulgating superiority of a group because of their skin color or socioeconomic status) are radical, with violent extremism being one possible endpoint of the radicalization process. Several systematic reviews and meta-analyses have identified risk factors for radicalization (Vergani et al., 2018; McGilloway et al., 2015; Wolfowicz et al., 2019). Among them are push factors, such as relative deprivation and lower education; pull factors, such as propaganda, social dynamics (strong group bonds, identification with the group), or support for violence; and personal factors, such as mental stress, often connected to feeling lost and searching for meaning. However, earlier studies focused on factors of offline radicalization, and their impact on online radicalization is less clear.

Social Media and Radicalization

The Internet, particularly social media, offers an accessible, easy way to find, share, and discuss radical content and to develop and communicate radical ideologies, often under the guise of free speech (Struck et al., 2020; Alava et al., 2017); it has therefore been described as a catalyst for online radicalization. In social networks, like-minded individuals can easily connect and interact, which can speed up social identification and group-building, for instance, among right-wing groups, by establishing common values, goals, and forms of communication (Koehler, 2014; Meleagrou-Hitchens et al., 2017; Pauwels & Schils, 2016). Circulating messages and promoting images of a supposedly superior in-group with distinct features (e.g., strength, willpower, and an ideal of masculinity) can foster social identification in persons who assume these features as either shared or desired traits and devalue out-groups that do not share these traits (Roccas et al., 2008; Tajfel & Turner, 1986; Schmitt et al., 2020). Thus, many researchers conclude that processes of social identification (e.g., identification, engagement, and deference towards a social group) are important factors of radicalization. In their work on political mobilization, for instance, Moskalenko and McCauley (2009) underline the importance of intergroup conflict for radicalization. They differentiate legal, non-violent (activism) from illegal, violent (radicalism) political action to reach politically motivated goals, and they point to associations of both activism and radicalism with support for intergroup conflicts. Such conflicts are especially pronounced in social media because of the reach, accessibility, and flexibility of social networks.

To further examine the role of social media, radicalization research often uses big data analytics to examine communication patterns surrounding experiences of deprivation or discrimination, to identify themes of online support for violent or extremist groups, and to study determining factors of liking and sharing radicalized content (Batzdorfer et al., 2020; Meleagrou-Hitchens et al., 2017). However, most studies focus on text mining (of Twitter messages) and report difficulties in differentiating serious, ironic, or sarcastic messages based on tone, intention, and structure. Furthermore, text mining neglects image-based and meme-based communication. This makes it difficult to compare and triangulate findings from text-based analysis (e.g., Twitter) and image-based or video-based analysis (e.g., Instagram, Facebook) to advance our understanding of complex online radicalization processes (Parekh et al., 2018).

Internet Memes

Internet memes combining images, videos, and text messages have emerged as a popular form of online communication (Knobel & Lankshear, 2007) in radical groups. Radical groups often pair pictures or videos mirroring or evoking emotionally charged events with text explaining causes or results of said events (Jukschat & Kudlacek, 2017; Huntington, 2018). They connect these explanations to their political attitudes, extremist beliefs, or values to create an ideology. The concept of memes derives from Richard Dawkins’s (1976) seminal work on evolutionary expression of culture, meaning a spread of ideas, concepts, catch-phrases or other culturally coded content from person to person, thus influencing human ideology, goal-setting, and behavior. Accordingly, successful memes are of high fidelity (i.e., a meme’s ability to be copied and passed on), fecundity (i.e., reproduction rate, robustness), and longevity (i.e., survival time) (Dawkins, 1976). While the original definition of memes referred to diverse ideas and concepts and their survival among different (social) groups, Internet memes or online memes refer to communicating these ideas online (Knobel & Lankshear, 2007). Likewise, Internet memes have a referential or ideational system (e.g., what information is being conveyed and how?), a contextual or interpersonal system (e.g., how does this meme relate to the people viewing it?), and an ideological or worldview system (e.g., what larger topics or themes are being conveyed?). Pictures, videos, text messages, and other media content shared online (e.g., via social media) can become a meme when they aim to convey a message (e.g., free Tibet), express political or other ideological assumptions (e.g., the meme “free Tibet” implies that Tibet is unfree and needs to be set free), and have implications for social groups (e.g., a shared belief of an unfree Tibet among proponents of the meme and a call to action). 
Similar to traditional memes, Internet memes differ in their fidelity (e.g., diverse combinations of images and text messages), fecundity (e.g., political campaign slogans attracting a large audience before an election), and longevity (e.g., pictures or GIFs of ambiguous facial expressions that encourage reuse and reinterpretation).

Research on meme-based communication of right-wing groups points to their efforts to establish, disseminate, and rationalize political worldviews such as xenophobia via arousing imagery (i.e., evoking fear or anger) and ideological narratives that provide arguments for these circumstances and scapegoat others (Struck et al., 2020; Doerr, 2017; Flam & Doerr, 2015; Schmitt et al., 2020). Right-wing groups try to gather attention and mobilize sympathizers by creating content that either empowers the in-group or incites (discriminatory) action against out-groups, for instance, religious groups or refugees. Previous work (Doerr, 2017; Flam & Doerr, 2015; Schmitt et al., 2020; Struck et al., 2020) describes the use of memes in right-wing groups to communicate within echo chambers and private networks of their in-group (e.g., on Discord servers, often directly inciting violence against out-groups) and to communicate publicly to mobilize interest (e.g., in Instagram posts, often with ambiguous or humorous messages). Despite this body of literature, empirical and experimental studies linking exposure to radicalizing Internet memes and subsequent radicalization are scarce (Pauwels & Schils, 2016). As a theoretical framework, the social influence model of violent extremism proposes mechanisms linking memes to radicalization.

Social Influence Model of Violent Extremism

The social influence model of violent extremism (SIM-VE) (Smith & Talbot, 2019) describes cognitive, emotional, and behavioral factors that have implications for processing online propaganda. The SIM-VE describes social influence towards violent extremism as transforming a person’s identity towards alignment with a violent extremist group, shaping their beliefs to align with extremist ideology, and reconstructing their moral position so that violent action becomes acceptable (Smith & Talbot, 2019). The model captures individual beliefs and identity (micro level, e.g., as a traditionalist), social identities and dynamics (meso level, e.g., devaluing out-groups, such as libertarian groups, and their worldviews), and societal and normative factors (macro level, e.g., believing in a judicial system that punishes out-groups). Because social influence is multidirectional, individuals can shape social processes by presenting their opinions and introducing different perspectives or values into social settings and groups, but groups can also attract individuals by aligning with their goals or motivations or by providing means to achieve attractive ends (e.g., financial success, attractiveness, social dominance). Specific cognitive (e.g., seeking information) and affective mechanisms (e.g., avoiding negative affect) further influence this process. For example, Huntington (2018) finds that political Internet memes perceived as political arguments receive less favorable assessments and more scrutiny regarding their persuasiveness than non-political memes. It is important to note, however, that congruency of one’s own political beliefs with the message attenuates this effect, pointing to an increased susceptibility or vulnerability in persons with congruent beliefs.

In a similar manner, a recent systematic review (Hassan et al., 2018) examined the connection between exposure to radicalized online content and radicalization towards (political) violence. Overall, radicalizing content, such as Internet memes, seems to foster radical attitudes, such as xenophobia, in vulnerable groups (e.g., among visitors of a neo-Nazi online discussion forum or deprived Kyrgyz youth), but seems less impactful in the general population. However, the review also revealed that only two studies linked exposure and attitudes in a pre-post design (Lee & Leets, 2002; Rieger et al., 2013), challenging the causal interpretation of the association between exposure and radicalization. Alava et al. (2017) made a similar observation in their review of the literature on youth and violent extremism on social media. They state that most studies were retrospective and of low methodological quality, and that most studies “do not inform about which process of Internet and social media use might have led to actual violent radicalization” (ibid., p. 44). Therefore, we take a closer look at the two studies that prospectively investigated the impact of radicalized online content.

Lee and Leets (2002) observed a short-term increase in perceived persuasiveness in teenagers exposed to messages and images of White supremacy. Perceived persuasiveness dropped over time, but it remained higher in individuals with pre-exposure predisposition to White supremacy beliefs. Rieger et al. (2013) examined psychological effects of extremist Internet videos on physiological arousal and attitudes of university and vocational students. While extremist content did not affect physiological arousal, extremist videos (i.e., showing an Islamic suicide bomber) received more negative evaluations, including shame and aversion. However, these videos also ranked higher among participants’ interests, which was connected to an increased willingness to search for further information about the topic. This discrepancy between cognitive interest and affective rejection points to a gap in current research. Hence, it seems promising to combine psychophysiological and self-reported indicators of cognitive and affective appraisal to further our understanding of individual radicalization processes. In this sense, research on radicalization can benefit from psychophysiological research on attention and information processing.

Psychophysiological Perspectives on Radicalization

Psychophysiological research has connected eye gaze patterns to visual information processing, such as selective attention towards emotional stimuli (Calvo & Lang, 2004) and towards facial and eye regions during emotion recognition (Lim et al., 2020). It has also examined eye movements as an indicator of social preference (Jiang et al., 2016), characterized, for instance, by more frequent fixations and longer gaze durations towards relevant stimuli.

Regarding political attitudes, previous studies have established connections between a visual preference for aversive as opposed to appetitive stimuli and right-wing orientation (Dodd et al., 2012). The authors argue that participants with right-leaning beliefs show stronger physiological reactions and thus pay more attention to potential threats to their identity. Similarly, in an eye tracking study on race-based messages, White participants focused on Black rather than White persons, that is, on members of a race-based out-group (Granot et al., 2016). The focus on the in-group is stronger, though, if visual stimuli are connected to text messages (e.g., in political advertisements): Participants with congruent right-wing beliefs exhibited a preference (i.e., longer dwell time) for right-wing party advertisements and avoided incongruent liberal advertisements (Marquart et al., 2016; Schmuck et al., 2020). These findings have implications for meme-based research and correspond to the selective exposure self- and affect management model (SESAM; Knobloch-Westerwick, 2014). The model assumes a reciprocal association between media and self-cognitions in that the current self-concept drives selective media exposure, but media-activated self-concepts also determine further exposure and attention. Thus, participants with right-wing attitudes may be selective in their attention towards political stimuli, and political stimuli may activate political self-concepts in control participants (i.e., without a pre-defined political group affiliation) and subsequently guide their attention. However, while previous studies underline the usefulness of eye tracking in analyzing visual attention towards picture-based political stimuli and (political) Internet memes, they fall short in describing how these stimuli were processed. 
In most studies, participants had to make visual choices between appetitive/congruent and aversive/incongruent messages, but it was not clear what part of these materials attracted and kept their attention. Moreover, a recent study revealed that political messages that were perceived as visually attractive and less negative received longer dwell times and led to a higher willingness to participate politically (Geise et al., 2020). Yet, this study examined German university students and did not account for party or political group affiliation.

Research Objectives

Taken together, previous findings suggest that exposure to extremist online content might induce cognitive and emotional reactions, but their associations with radicalization are less clear. Therefore, we aim to add to the literature on the impact of radical Internet memes by performing a quasi-experimental study that integrates eye tracking, self-reports of attitudes, and evaluative ratings of Internet memes in participants that do (right-wing group) or do not (control group) identify with a political right-wing group. The study explores visual attention patterns in individuals exposed to radicalized online material. Thus, it extends previous research by focusing on the prospective impact of online material (e.g., Hassan et al., 2018), and it provides information on differential information processing in social groups (based on their self-reported social identity). To assess the impact of radical online content, we connect our research to the SIM-VE (Smith & Talbot, 2019) in that we examine ideological (i.e., political group affiliation), social (i.e., modes of social identification with one’s political group), and behavioral factors (i.e., political mobilization; support for violence), and their association with visual processing patterns and explicit evaluations of political Internet memes. In sum, this study examines the following research questions:

1. How do participants from political right-wing groups and controls differ in their emotional and cognitive evaluations of Internet memes?
2. Do these groups differ in their visual attention towards Internet memes?
3. How are visual attention patterns connected to the evaluation of these memes?

Since this is a pilot study, our approach is exploratory. This approach does not allow for causal inference, but we think it can demonstrate the feasibility of our methodological approach and provide implications for further inquiry into the association between political attitudes and visual attention in radicalization into violence.

Methods

Between August 2017 and December 2018, we recruited participants who were at least 18 years of age and could read and understand German. Recruitment channels were online advertisements on social networking sites, advertisements in local newspapers in [blinded for peer review], personal communication with institutions working in radicalism prevention, and handouts. We collected data at a university laboratory. A main goal of the study was to compare the impact of radical Internet memes between different social groups. Therefore, recruiting efforts addressed right-wing parties (e.g., NPD, Identitäre Bewegung) and groups (e.g., Kameradschaftsbund) via online communication (e.g., in Facebook groups and via email) and personal contact. For the control group, we aimed to recruit participants without a primary political affiliation and thus with presumably less prevalent radical attitudes. Hence, we recruited participants via community centers, supermarkets, and public facilities. Right-wing participants received an incentive of €50 for their participation, and all other participants received €20. We increased the incentive for right-wing participants because we considered a lower amount insufficient to motivate participation after initial recruitment attempts had proved fruitless.

Overall, 91 people took part in the study; however, the current analysis sample was limited to 44 participants (M_age = 26.23 years, SD = 7.76; 66% male) who affiliated with either a political right-wing group (n = 20) or another group, for example, “humans,” “students,” or “teachers” (n = 24), which is treated as a control group in this analysis. The remaining participants named a religious group (e.g., Catholic) or a political group (e.g., Green party) as their main affiliation and were excluded from this analysis. Because we acknowledge the central role of social identity in radicalization processes (cf. Moskalenko & McCauley, 2009; Smith & Talbot, 2019), participants’ self-identified social group was chosen as an indicator of group affiliation for the analysis. We excluded participants with opposing political attitudes, a different party affiliation, or a mainly religious group affiliation to avoid confounding our analyses, since some images contained religious symbols or presented right-wing symbols that could evoke stronger reactions in these participants than in the general population (i.e., persons who did not choose any of these groups as their main social affiliation). In line with the SIM-VE (Smith & Talbot, 2019) and the SESAM (Knobloch-Westerwick, 2014), we were interested in comparing participants who identified with a right-wing group and participants who did not identify with a group that presented an opposing or equally clear ideology, because we wanted to inspect visual processing and cognitive and affective appraisal for different levels of radical attitudes. While we cannot infer political attitudes from a non-political affiliation (e.g., teachers), such an affiliation at least tells us that political group membership was not in the foreground of participants’ social identity.

Measures and Study Procedure

Data collection comprised five steps (three separate surveys, eye tracking, and an implicit association test); a flowchart of the study procedure is presented in Fig. 1. Due to the complexity of the implicit association test, this study is limited to the analysis of survey data and eye tracking data. Discussing the role of implicit attitudes in information processing and visual attention is beyond the scope of this study.

Following the informed consent procedure, we presented participants with a first questionnaire on sociodemographic data and group affiliation. Sociodemographic data comprised age, gender (1 (female), 2 (male)), country of origin (oneself and one’s parents) (1 (Germany), 2 (other)), current occupation (1 (student (school, university) or in vocational education), 2 (employed), 3 (freelancer/self-employed), 4 (retired), 5 (other)), and highest educational achievement (1 (ongoing), 2 (none), 3 (lower secondary education, i.e., “Hauptschule”), 4 (lower secondary education, i.e., “Realschule”), 5 (upper secondary education, i.e., “Gymnasium”)). Due to low cell counts, occupation (0 (student/vocational education), 1 (other)) and educational achievement (0 (ongoing/none/lower secondary education), 1 (upper secondary education)) were recoded for the analysis.
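The recoding described above can be sketched as follows. This is a minimal illustration rather than the syntax used in the study (the analyses were run in SPSS); the function names are hypothetical, and only the category codes are taken from the questionnaire description.

```python
# Hypothetical helpers; only the numeric category codes come from the text above.

VALID_CODES = {1, 2, 3, 4, 5}


def recode_occupation(code: int) -> int:
    """Collapse occupation: 1 (student/vocational education) -> 0, codes 2-5 -> 1 (other)."""
    if code not in VALID_CODES:
        raise ValueError(f"unexpected occupation code: {code}")
    return 0 if code == 1 else 1


def recode_education(code: int) -> int:
    """Collapse education: codes 1-4 (ongoing/none/lower secondary) -> 0, 5 (upper secondary) -> 1."""
    if code not in VALID_CODES:
        raise ValueError(f"unexpected education code: {code}")
    return 1 if code == 5 else 0
```

Rejecting codes outside the documented range is a design choice of this sketch; it makes data-entry errors visible instead of silently assigning them to one of the two collapsed categories.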

Group affiliation introduced the concept of social identification by asking respondents which of the following groups they felt they belonged to the most: a religious group (Catholic, Protestant, Muslim, Alevist, Jewish, Other), a political right-wing group, a political left-wing group, and others (open-ended). Participants were categorized into groups based on this response, leading to an analysis sample of 20 right-wing participants and 24 controls (i.e., other group affiliation).

Subsequently, we measured modes of social identification with the chosen group using a German translation of the measure by Roccas et al. (2008). Hence, importance (e.g., Belonging to this group is an important part of my identity) (α = 0.85), commitment (e.g., I feel strongly affiliated with this group) (α = 0.87), superiority (e.g., Other groups can learn a lot from us) (α = 0.87), and deference (e.g., It is disloyal to criticize this group) (α = 0.82) were assessed with four items each, on a 7-point Likert scale from 1 (strongly disagree) to 7 (strongly agree).
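For readers less familiar with the reliability coefficients reported here, the following sketch shows how Cronbach’s α can be computed for a four-item subscale. It assumes the standard formula based on item variances and the variance of the sum score; the function name and the ratings are invented for illustration and are not study data.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of participants, each a list of item ratings."""
    k = len(responses[0])                      # number of items in the subscale
    items = list(zip(*responses))              # transpose: one tuple of ratings per item
    item_variance_sum = sum(variance(col) for col in items)
    total_variance = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - item_variance_sum / total_variance)


# Invented 7-point ratings for four items (not study data).
example = [
    [6, 7, 6, 5],
    [2, 3, 2, 2],
    [4, 4, 5, 4],
    [7, 6, 7, 7],
]
alpha = cronbach_alpha(example)
```

Sample variances are used for both the items and the sum score; using population variances throughout would give the same coefficient, because the correction factor cancels in the ratio.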

Finally, political mobilization was assessed via the German version (the translation was published by Jahnke et al., 2020) of the Activism and Radicalism Scale (ARIS; Moskalenko & McCauley, 2009), where activism (e.g., I would donate money to an organization that fights for my group’s political and legal rights) (α = 0.91) and radicalism (e.g., I would attack police or security forces if I saw them beating members of my group) (α = 0.91) were rated on four items each via a 7-point Likert scale from 1 (strongly disagree) to 7 (strongly agree). We measured both scales before and after the exposure to radicalizing content and the eye tracking procedure.

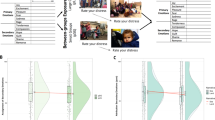

In the second step, participants’ eye movements were recorded while they explored the Internet memes presented to them. The software BeGaze version 3.3.56 and the Experiment Center version 3.3.34 were used to process eye tracking data from a remote infrared eye tracking sensor (iView X RED-m) (SensoMotoric Instruments, 2011). We presented materials on a 344 mm × 194 mm screen with a distance of 60 cm between participants and the screen, and with a screen resolution of 1600 × 900. Each participant completed a calibration phase of three test trials, with a maximally acceptable dispersion of 0.5° for each trial. Each participant viewed seventeen different Internet memes, three of which will be described and analyzed in more detail. These three memes share the purpose of social commentary, and they focus on group-related messages in relation or directly opposed to political right-wing ideology, whereas the remaining memes had different foci or purposes (e.g., a satirical depiction of a crusade). We presented each meme for 5 s.
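To give a sense of scale for the calibration criterion, the following sketch converts a visual angle into an on-screen distance for the reported setup. It is our own back-of-the-envelope calculation, not a value reported by the eye tracking software; it assumes a flat screen viewed centrally and measures along the horizontal axis. The function name is hypothetical.

```python
import math

# Setup values taken from the description above.
SCREEN_WIDTH_MM = 344
SCREEN_WIDTH_PX = 1600
VIEWING_DISTANCE_MM = 600


def degrees_to_pixels(angle_deg: float) -> float:
    """Approximate horizontal extent in pixels that subtends `angle_deg` at screen center."""
    size_mm = 2 * VIEWING_DISTANCE_MM * math.tan(math.radians(angle_deg) / 2)
    return size_mm * SCREEN_WIDTH_PX / SCREEN_WIDTH_MM


tolerance_px = degrees_to_pixels(0.5)  # roughly 24 pixels under these assumptions
```

Under these assumptions, a dispersion of 0.5° corresponds to about 5 mm on the screen, which is small relative to the AOIs such as faces or titles.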

For the analysis, we defined areas of interest (AOIs) for each meme, which characterize a specific object or region within a visual stimulus, for instance, faces, products, or warning or health messages, in line with the research question (King et al., 2019). We defined faces, messages, weapons, and symbols as relevant AOIs, since they corresponded to similar categories in previous research, for instance, regarding cigarette advertisements (Krugman et al., 1994). A recent publication (Schmitt et al., 2020) analyzed right-wing memes and identified similar themes, namely, humans or human faces, appellative written messages, weapons, and symbols (e.g., flags, runes), which supports our chosen approach. Five AOIs were analyzed (if available), namely, weapons (AOI 001); faces (AOI 002); symbols of radical groups, such as runes or flags (AOI 003); main titles or messages, usually written in the largest font (AOI 004); and additional text or messages written in smaller font (AOI 005) to explore their importance in guiding visual attention. For each AOI, we counted the frequency of fixations and the total dwell time during exposure to reflect visual attention, interest, and depth of cognitive processing (King et al., 2019). We defined a fixation as a gaze duration of at least 100 ms per AOI. Data were preprocessed by excluding invalid trials (e.g., due to insufficient calibration parameters). For each AOI, we counted the number of fixations and divided the dwell time by exposure time (i.e., 5000 ms) to obtain two indicators of the relative importance of each AOI to visual attention processes compared to other areas of the stimulus (Jacob & Karn, 2003). The study team piloted the procedure to ensure feasibility and validity of the measurement procedure in a college sample (n = 20). Cognitive debriefings of the sample affirmed that all measures and instructions were comprehensible and easy to complete. See Fig. 2 for all three memes, including their respective AOIs. 
Although the AOIs vary in size for each meme, we approximate the relative dwell time as an indicator of relative interest, and we are particularly interested in between-group comparisons, between participants with and without right-wing group affiliation.
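The two indicators can be illustrated with a short sketch. It assumes that gaze data are available as a list of (AOI label, duration in ms) pairs for one participant and one meme, and it treats dwell time as the summed duration of qualifying fixations. This is a simplification: the proprietary software may define dwell time differently (e.g., including saccades within an AOI). The input format and function name are hypothetical.

```python
from collections import defaultdict

EXPOSURE_MS = 5000      # each meme was shown for 5 s
MIN_FIXATION_MS = 100   # minimum gaze duration counted as a fixation


def aoi_metrics(gaze_events):
    """Return fixation count and relative dwell time per AOI.

    gaze_events: iterable of (aoi_label, duration_ms) pairs (assumed input format).
    """
    counts = defaultdict(int)
    dwell_ms = defaultdict(float)
    for aoi, duration_ms in gaze_events:
        if duration_ms >= MIN_FIXATION_MS:   # shorter gazes are not counted as fixations
            counts[aoi] += 1
            dwell_ms[aoi] += duration_ms
    return {
        aoi: {"fixations": counts[aoi], "relative_dwell": dwell_ms[aoi] / EXPOSURE_MS}
        for aoi in counts
    }


# Invented example: two valid fixations on the weapon, one on the main title.
events = [("AOI 001", 250), ("AOI 001", 80), ("AOI 004", 500), ("AOI 001", 250)]
metrics = aoi_metrics(events)
```

Because the relative dwell time is normalized by exposure time rather than by AOI size, larger AOIs can accumulate more dwell time by area alone, which is why the between-group comparison per AOI is the more informative contrast.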

We selected the memes based on social media posts on Twitter and Facebook between February and July 2017. The goal was to find memes with a clear message, a connection to right-wing themes and symbols, and the intention to either strengthen the in-group or weaken the out-group. As a contrast, we included a meme with a message that aims to emphasize similarities instead of differences between groups, which we labeled deradicalizing (as opposed to radicalizing online content). Memes were pre-selected based on the clarity and distinctness of their message and their presumed appeal. Previous research chose a similar approach (e.g., Flam & Doerr, 2015). In a college sample (n = 20), we tested clarity, design, and attractiveness of memes. The main goal of the preselection was to identify memes that represented social commentary from a right-wing and a counter-narrative perspective (Knobel & Lankshear, 2007). The three memes chosen for this study received the highest ratings regarding clarity, design, and attractiveness. All memes depict human subjects and group-related messages and have a similar text-to-image ratio.

The first meme shows a young White woman in seemingly traditional clothing standing in an arborous landscape and holding a bow and arrow. Her expression seems determined, facing towards her left while nocking an arrow as if she was preparing an attack. The main title states that “European women are not helpless victims,” with a message in smaller font adding “justnationalistgirls” in cursive. We categorized this meme as potentially empowering a right-wing in-group that perceives a threatening out-group. By denouncing victimhood, European women, and by proxy the viewers, are asked to fight against potential perpetrators.

The second meme shows a Viking warrior in a battle, with his sword raised above his head and his shield by his side. He stands above an army that is unrecognizable but seems to be in a battle, as well. The meme presents this scenario in front of a background that strongly resembles the Imperial War Flag with a color scheme of black, white, and red. In the upper left-hand corner, an Odal rune is visible, which is a popular symbol of national socialist and modern nationalist movements, indicating beliefs of a strong heritage and an imperial claim. Overall, the color scheme and themes lead us to categorize this meme as inciting a right-wing in-group towards violence, which is further supported by the main title stating “Stand up and fight.”

The third meme shows a face of a young person looking directly at the observer. A scarf or cloth covers parts of the face, leaving only the eyes and most of the nose clearly visible. The position of the scarf or cloth resembles stereotypical Bedouin clothing, thus representing an out-group for a right-wing in-group. The unfazed expression seems to confront the observer to read the statements and think about their own opinions. The main title states “Though one thing connects us all: being human” (translated from German to English). Although this is written in larger font and presented at the bottom of the picture, a smaller font message is actually the first part of this argument, translated as “We may have different religions, different languages, different skin colors.” Overall, the meme promulgates a message of unity by emphasizing the common factor of being human despite obvious differences regarding religion, culture, or skin color.

Following the eye tracking procedure, six semantic differentials were used to capture participants’ emotional and cognitive appraisal on a 7-point scale (fascinating-boring, attractive-repulsive, appealing-shocking, plausible-implausible, clear-unclear, and familiar-unfamiliar). In addition, we asked participants to rate their agreement with the meme’s message (1 (totally disagree) to 7 (totally agree)). We adapted these items from previous research on affective and cognitive appraisal of radical online content (e.g., Huntington, 2018; Rieger et al., 2013). To control for previous exposure effects, we asked participants whether they had seen any of the memes before, which none of the participants affirmed. To obtain an accurate evaluation of each meme, we presented the memes separately.

In the third step, participants stated their current level of political mobilization via the ARIS scale to examine changes resulting from exposure to radicalizing cues. In a fourth step, they reported their support for violence (Ulbrich-Herrmann, 2014) via six items (e.g., “I am ready to use physical violence against strangers”) on a 4-point scale (1 (totally disagree) to 4 (totally agree)). Finally, participants completed a picture-based Single Category Implicit Association Test (adapted from Karpinski & Steinman, 2006) using the Inquisit software (www.millisecond.com) by pairing seven target pictures of right-wing rallies and assemblies with positive (e.g., joy, happy) and negative (e.g., stress, disgust) words. As described above, the analysis of implicit attitudes is beyond the scope of this study.

Statistical Analysis

First, we calculated chi-square tests and t-tests to compare participants with right-wing group affiliation to controls. Second, we investigated bivariate associations between variables using correlation coefficients (e.g., Pearson’s correlation for continuous variables) for each group. Third, to further inspect the impact of group affiliation on visual attention parameters, we used linear regression models to test the impact of group affiliation on relative dwell time and number of fixations per AOI, with significant sociodemographic variables and attitudinal correlates being controlled for. Due to a very high correlation (r ≥ .80) for social identity subscales in right-wing participants, we used the mean across all items as a single predictor to avoid multicollinearity. Fourth, we examined changes in political mobilization because of the exposure to radicalizing memes via repeated measures ANOVAs. We performed all analyses with SPSS 27.
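
The handling of the highly correlated social identity subscales can be illustrated with a short sketch. It is not the SPSS procedure used in the study: the function names, the toy scores, and the simplification of averaging subscale scores (rather than all individual items) are assumptions made purely for illustration.

```python
from math import sqrt
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation between two equally long sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def composite_if_collinear(subscales, threshold=0.80):
    """Return one averaged predictor if every pair of subscales correlates
    at or above `threshold`; otherwise return None.

    `subscales` maps a subscale name to a list of per-participant scores.
    """
    names = list(subscales)
    pairs = [(a, b) for i, a in enumerate(names) for b in names[i + 1:]]
    if all(pearson_r(subscales[a], subscales[b]) >= threshold for a, b in pairs):
        n_participants = len(subscales[names[0]])
        return [mean(subscales[name][i] for name in names)
                for i in range(n_participants)]
    return None


# Hypothetical toy scores (NOT study data) for five participants.
toy_subscales = {
    "importance": [1.0, 2.0, 3.0, 4.0, 5.0],
    "superiority": [1.5, 2.5, 3.0, 4.5, 5.0],
    "deference": [1.0, 2.5, 3.5, 4.0, 5.5],
}
composite = composite_if_collinear(toy_subscales)
print(composite)
```

If the subscales are not uniformly highly correlated, the function returns `None`, signalling that the subscales could be entered as separate predictors instead.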

Results

Several between-group differences emerged for push, pull, and personal factors of radicalization. Right-wing participants were mostly male and less educated, and they reported higher political mobilization (i.e., on the radicalism subscale of the ARIS) and support for violence than the control group (see Table 1). Modes of social identification also tended to be higher in this group, although the difference did not reach statistical significance.

Both groups significantly differed regarding their emotional and cognitive appraisals (see Table 2) but not their visual attention patterns (see Table 3). The right-wing group rated the meme inciting violence as more appealing, attractive, and plausible than the control group, and a majority agreed with the message. In contrast, the control group rated the meme emphasizing unity more positively. The meme empowering the in-group received similar ratings across groups but was rated as more plausible by the right-wing group. Although AOI-related differences were not significant, right-wing participants looked more often at weapons and spent more time reading empowering and inciting messages and the text listing differences between groups (in the meme emphasizing unity) than controls.

Bivariate Correlations Between Attitudes, Evaluation of Internet Memes, and Visual Attention Metrics

To explore associations between indicators of visual attention, attitudes, and evaluations of memes, bivariate correlations were computed (see Table 4). Associations between sociodemographic variables, attitudes, and visual attention are reported separately for each meme (Online Resource 1 to 3).

For the first meme (empowering the in-group), social identification (importance, superiority, and deference) as well as self-rated activism correlated with a more positive emotional appraisal of and familiarity with the meme in the right-wing group. Higher levels of education positively skewed attention towards the additional text (justnationalistgirls).

For the second meme (inciting violence), similar positive associations between social identification (superiority, deference) and emotional as well as cognitive appraisals emerged, albeit in the control group. Here, higher education was linked to a more negative assessment of the meme. In both groups, support for violence correlated with a more positive evaluation. In the right-wing group, support for violence correlated with an increased dwell time on right-wing symbols. Activism and radicalism among right-wing participants were associated with a more positive assessment and longer dwell time on faces instead of the main text (stand up and fight). For control participants, radicalism also correlated with longer dwell time on right-wing symbols.

For the third meme (emphasizing unity), right-wing participants showed an increased frequency of fixations and a tendency towards longer dwell time on additional text messages (i.e., stating differences between human beings). Furthermore, the number of fixations was positively correlated with radicalism scores and support for violence. In the control group, males rated the meme less favorably, as did persons with a higher need for uniqueness and loss of significance. Occupational status and modes of social identification correlated with shorter dwell time for the main text (i.e., “Though one thing connects us all: being human”).

Linear regression models examined the association between group affiliation and visual attention parameters (Online Resources 4–6): Participants from a right-wing group focused less on faces (β = −0.55, p < .01) and more on the additional text (i.e., justnationalistgirls) (β = 0.54, p < .01) in the meme empowering a right-wing group. In addition, they looked more frequently (β = 0.50, p < .01) and for a longer amount of time (β = 0.55, p < .01) at symbols in the meme inciting violence.
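
To make the meaning of standardized coefficients in such models more concrete, the following sketch computes standardized betas for a model with two predictors (a group dummy and one covariate) from their pairwise correlations. It is an illustration only: the study's models were estimated in SPSS with their own set of control variables, and all variable names and values below are hypothetical toy data, not study data.

```python
from math import sqrt
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation between two equally long sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def standardized_betas(y, x1, x2):
    """Standardized coefficients for y ~ x1 + x2 (closed form for two predictors)."""
    r_y1, r_y2, r_12 = pearson_r(y, x1), pearson_r(y, x2), pearson_r(x1, x2)
    denom = 1.0 - r_12 ** 2
    beta_1 = (r_y1 - r_y2 * r_12) / denom
    beta_2 = (r_y2 - r_y1 * r_12) / denom
    return beta_1, beta_2


# Hypothetical toy data (NOT study data): 0 = control, 1 = right-wing group.
group = [0, 0, 0, 0, 1, 1, 1, 1]
education = [3, 2, 3, 2, 3, 1, 2, 2]  # hypothetical covariate
dwell_faces = [0.30, 0.25, 0.28, 0.33, 0.20, 0.31, 0.22, 0.27]  # relative dwell time

beta_group, beta_education = standardized_betas(dwell_faces, group, education)
print(f"beta(group) = {beta_group:.2f}, beta(education) = {beta_education:.2f}")
```

In this toy example, a negative `beta_group` means that, holding the covariate constant, membership in the coded group goes along with shorter relative dwell time on faces; when the two predictors are uncorrelated, each beta reduces to the simple correlation with the outcome.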

Impact of Exposure to Radical Cues

The repeated measures ANOVA yielded nonsignificant results (F(1, 42) = 0.03, n.s., ηp² = 0.001). While there was a slight increase in radicalism, it did not reach statistical significance (F(1, 40) = 0.07, n.s., ηp² = 0.002). The nonsignificant interaction between group and exposure showed that changes in political activism (F(1, 42) = 0.03, n.s., ηp² = 0.001) or radicalism (F(1, 40) = 0.64, n.s., ηp² = 0.016) did not differ between groups.
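
For readers who wish to check effect sizes of this kind, partial eta squared can be recovered from an F value and its degrees of freedom via the standard identity (F × df_effect) / (F × df_effect + df_error). The sketch below applies this identity to the F values reported above, under the assumption that the reported effect sizes are partial eta squared; the function name is hypothetical.

```python
def partial_eta_squared(f_value: float, df_effect: int, df_error: int) -> float:
    """Recover partial eta squared from an F statistic and its degrees of freedom."""
    effect_term = f_value * df_effect
    return effect_term / (effect_term + df_error)


# (F, df_effect, df_error) triples as reported in the text above.
reported_tests = [
    (0.03, 1, 42),
    (0.07, 1, 40),
    (0.64, 1, 40),
]
for f_value, df_effect, df_error in reported_tests:
    eta = partial_eta_squared(f_value, df_effect, df_error)
    print(f"F({df_effect}, {df_error}) = {f_value:.2f} -> {eta:.3f}")
```

Rounded to three decimals, the recomputed values (0.001, 0.002, and 0.016) agree with the effect sizes given in the text.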

Discussion and Conclusions

This study examined the visual exploration of radical Internet memes in groups with different levels of radical attitudes. First, the examined social groups (i.e., right-wing participants and controls) showed attitudinal patterns that mirrored previous findings, for instance, a higher support for violence and level of radicalism in the right-wing group. Second, we observed differences in emotional and cognitive appraisals and visual processing of Internet memes between groups, pointing to differential efficacy of radicalizing messages. These differences have implications for preventive policy and research regarding online radicalization.

Initial group-based comparisons revealed that right-wing participants reported higher support for violence, radicalism, and social identification with their in-group than controls. In our study, right-wing participants were mostly male and had lower levels of education. This is in line with findings regarding support for violence, male gender, and poor education as risk factors for radicalization (Vergani et al., 2018; McGilloway et al., 2015; Wolfowicz et al., 2019). Arguably, group affiliation with a political right-wing group can be a driver of radicalization towards violent extremism, as it provides a context for reciprocal social and ideological influence to support a radical worldview (Smith & Talbot, 2019). Accordingly, the evaluation and processing of Internet memes differed between groups: the second meme, which presents a virile warrior amidst battling armies in front of the Imperial War Flag with neo-Nazi symbols, was more attractive and appealing for right-wing participants. Thus, our findings corroborate the importance of congruency of online content and a recipient’s personal beliefs in online radicalization (Koehler, 2014; Meleagrou-Hitchens et al., 2017; Struck et al., 2020; Huntington, 2018; Schmitt et al., 2020; Rieger et al., 2013).

In this study, the right-wing group was mostly male and supported violence; therefore viewing a strong male avatar, a battle, and a clear directive (stand up and fight) might have appealed more strongly to this group. Further support for this assumption stems from the comparison with the other right-wing meme (empowering the in-group): While this meme also supported violent actions against an out-group that was non-European, and seemingly non-White, the main avatar was female. This meme received more positive ratings than the second meme in both groups, with the right-wing group reporting higher agreement and perceiving the message to be more plausible than the control group. Conceptually, this might indicate ideological differences between groups, where right-wing participants but not control participants agree with the idea of relative deprivation by framing European women as victims of unknown perpetrators and violent actions as necessary means to end victimhood (Pauwels & Schils, 2016; Rieger et al., 2013). This would align with the social commentary aspect of the meme itself (Knobel & Lankshear, 2007) and its potential to convey or support an ideology that legitimizes violence against out-groups.

Through the lens of the social influence model of violent extremism (SIM-VE) (Smith & Talbot, 2019), this meme represents ideological (i.e., a seemingly healthy and beautiful young White woman as a visual representation and ‘not helpless victims’ as a conceptual representation of desired identity), social (i.e., European versus non-European as a frame of reference), and behavioral factors (i.e., “not helpless victims” and bow and arrow as an implied call to arms) supporting a radical right-wing position that legitimizes violence to defend the in-group. Consequently, participants with political right-wing affiliation that already identify as a victim, European (as opposed to non-European) etc., might be further attracted towards this ideology to support their beliefs. This finding aligns with previous research on political advertisements (Marquart et al., 2016; Schmuck et al., 2020).

Nonetheless, the discrepancy in emotional reactivity towards the meme was less pronounced between groups, possibly because of lower message involvement, compared to the second meme inciting violence. Vis-à-vis this meme, the call to arms is only indirect in the first meme, and the depicted young woman shares fewer characteristics with the vulnerable group (e.g., being male). Hence, the lack of similarity and an imperative sentence could lead to a reduced involvement, which corresponds to previous findings on the attractiveness and persuasiveness of threat appeals (Cauberghe et al., 2009). However, this conclusion is merely hypothetical and warrants further exploration, as we did not explore the degree of message involvement for each meme.

The third meme (emphasizing unity) was rated highly positive in the control group (mean values were above 6.0 on a 7-point scale), but it received the lowest scores (except for clarity) in the right-wing group. Since the control group comprised persons with a mostly non-specific group affiliation (e.g., humans, students), this might explain their preference for this message. Right-wing ideologies, however, often maximize intergroup differences, resulting in incongruence with this message and its subsequent dismissal in the right-wing group (Koehler, 2014; Lee & Leets, 2002; Jensen et al., 2018; Dovidio et al., 1998).

While relative dwell time and number of fixations did not significantly differ between groups, several associations between attitudinal variables and indicators of visual attention did. In right-wing participants, stronger social identification and support for violence were connected to increased fixations on symbols of right-wing nationalist ideology (e.g., Odal rune) as well as messages of differentness, which can indicate increased interest and attention (Jacob & Karn, 2003). Focusing on symbols and ideals of one’s in-group might serve social identity purposes (Roccas et al., 2008; Tajfel & Turner, 1986; Schmitt et al., 2020; Lee & Leets, 2002) by actualizing and strengthening one’s in-group identity. In right-wing groups, virility, physical strength, and a tendency to maximize intergroup differences often define the in-group identity (Pauwels & Schils, 2016; Schmitt et al., 2020). The increased attention towards indicators of belief-congruent messages and in-group identity is in line with previous eye tracking studies on political party advertisements (Marquart et al., 2016; Schmuck et al., 2020). Conceptually, these findings also support the SESAM model (Knobloch-Westerwick, 2014), wherein symbols of right-wing groups might attract attention because of their congruency with one’s self-concept. The active self-concept as a member of a right-wing group might also guide attention towards depictions of one’s group, which leads to longer dwell times.

Interestingly, this hypothesized pattern emerged in linear regression models with group affiliation predicting visual attention parameters and other variables being controlled for. This is promising for further inquiry into visual attention patterns of right-wing participants regarding group-related symbols in different contexts (e.g., political advertisements, social media debates). Knobloch-Westerwick (2014) mentions selective exposure as a mechanism to elicit emotional reactions, for instance, listening to love songs before a dinner date to evoke positive affective reactions. Similarly, right-wing participants often listen to heavy metal or white power music that evokes anger and aggression and reinforces the ideology of white supremacy when preparing for rallies or group meetings (Gaudette et al., 2020).

Regarding visual cues, it is possible that right-wing participants focus on representations of their group and beliefs during media reports or online discussions to activate their self-concept as an in-group member, which could increase their emotional response and bias their interpretation of the information or discussion towards the in-group. The focus on differentness in the third meme (emphasizing unity) by right-wing participants also supports this assumption, which corresponds to findings from experimental social psychological research (Dovidio et al., 1998). Thus, the meme might have exacerbated group differences, which is counter-intuitive from the counter-narrative perspective (Knobel & Lankshear, 2007). Policies and practices that focus on counter narratives should therefore take this effect into account and design and test their counter narratives accordingly. To our knowledge, most interventions in this area have small effects and are methodologically flawed (Blaya, 2019; Carthy et al., 2020), pointing to the need for further research. From a neurobiological perspective, this focus on differentness corresponds with the increased focus of right-leaning participants on aversive stimuli or indicators of differentness and potential threats in visual stimuli (Dodd et al., 2012; Granot et al., 2016). Notably, we found a similar association in participants with increased social identification in the control group, regardless of group affiliation. This general impact of social identification on visual attention towards distinct instead of common features across groups may result from group bias to maximize in-group homogeneity and distinctiveness (Jensen et al., 2018; Dovidio et al., 1998).

To extend our knowledge about the impact of radicalized online material (e.g., Hassan et al., 2018) as well as similarly designed preventive counter measures, further prospective research is necessary to examine how participants with different political attitudes actually process online material, and whether their physiological and affective reactions lead to stronger in-group focus and radical attitudes. Our study demonstrates the feasibility of this approach, but studies in larger samples, using different materials and measures, such as heart rate or electrodermal activity (EDA), are welcome (Caruelle et al., 2019; Rieger et al., 2013). Finally, while exposure to potentially radicalized content did not lead to a significant increase in political mobilization (i.e., activism and radicalism), several relevant associations emerged. Baseline levels of mobilization were linked to positive evaluations and a visual focus on weapons and symbols, as well as higher agreement with the second meme across both groups. Current research in social neuroscience supports the notion of a neurobiological proneness towards relevant stimuli in persons supporting (political) violence that may guide their visual attention and subsequent conscious and effortful decision-making (Decety et al., 2018). This could explain the similarities in visual attention and agreement across groups, despite significant differences in post hoc ratings of attractiveness and explicit attitudes. However, further research on biological determinants of proneness to violence and its correspondence with attitudinal and physiological measures is necessary to test this hypothesis.

Limitations

This study has a rather small and highly selective sample; therefore it was not possible to conduct more elaborate statistical tests, for instance, multivariate analyses or multiple regressions, and the results need to be interpreted with caution. Nevertheless, our sample size is comparable to other eye tracking studies in this area (e.g., n = 57; Marquart et al., 2016), particularly with specific groups (e.g., right-wing participants), which are very challenging to recruit (King et al., 2019). While examining Internet memes promises high ecological validity, their multi-layered design introduces potentially confounding factors (e.g., color, framing, text styles) that might have affected ratings and eye movements. It was also impossible to define identical AOIs across memes, which affected eye tracking metrics. The varying size of AOIs might have biased dwell time and number of fixations, which is why we interpret our findings as trends. Future studies might compare slightly altered versions of memes to one another, which allows for an even closer inspection of visual attention patterns and a direct comparison of AOI-based metrics. In addition, we chose the number of fixations and the total dwell time on AOIs as two prominent and straightforward eye tracking metrics; however, other metrics that are more strongly connected to emotional arousal (pupil size), processing flow (blink rate), or visual saliency (time to first fixation) (King et al., 2019) might offer new and interesting insight into visual information processing of political memes. Since we used a quasi-experimental design in a laboratory setting, we limited our research to cognitive radicalization (Neumann, 2013). It is unclear how memes in real-world settings might affect behavioral radicalization, as well. However, we think our results demonstrate the feasibility of the eye tracking method in analyzing associations between visual processing and attitudinal evaluations regarding (political) Internet memes.

We propose several areas for future research that could connect psychophysiological research, behavioral observations, and self-reports (qualitative or quantitative) to understand online radicalization. Most constructs were measured with psychometrically sound scales, although our small sample size did not allow for more complex analyses, for example, of interaction effects of group affiliation and attitudinal variables. Finally, by relying on self-reported group affiliation, we accepted participants’ broad and lay definitions of social groups (e.g., political right-wing group) that did not allow for an in-depth analysis or a more detailed comparison, for instance, regarding attitudinal variables, such as xenophobia, within and between groups.

Conclusions and Implications

In this study, we examined evaluations and visual processing of three types of Internet memes (empowering a right-wing in-group, inciting violence, and emphasizing unity) in a vulnerable group of right-wing participants and a control group. We found that political memes that match one’s ideology are more appealing and persuasive within specific groups (e.g., right-wing group) as well as across groups (e.g., support for violence). Moreover, the analysis of eye movements suggests participants favor areas that support their core beliefs (e.g., differentness), even if the overall message presented a different frame (e.g., emphasizing unity). While these findings are tentative, they illustrate potential mechanisms of information processing that might connect risk factors for violent radicalization, such as support for violence, to consuming and creating radical online communication. To this end, selective information processing points to potential side effects of memes with a counter-narrative frame, which requires further investigation. Given the current focus on counter speech and counter narratives as preventive measures of (online) radicalization, it is very important to consider possible negative effects, presumably exacerbated by selective exposure of individuals and groups with pre-existing risk factors for radicalization (e.g., right-wing group affiliation, strong support for violence). A more nuanced perspective, including basic research and psychophysiological research methods, is necessary to develop and evaluate counter speech and counter narratives. Prevention efforts that use online communication to counter online radicalization should be aware of these effects and adapt their materials accordingly. Policymakers can support this process by fostering exchange and learning between basic and applied research and practitioners working in radicalization prevention.

Data Availability

Data and study materials are available upon reasonable request.

Code Availability

Not applicable.

References

Alava, S., Frau-Meigs, D., & Hassan, G. (2017). Youth and violent extremism on social media: mapping the research. UNESCO Publishing

Alfano, M., Carter, J. A., & Cheong, M. (2018). Technological seduction and self-radicalization. Journal of the American Philosophical Association, 4(3), 298–322. https://doi.org/10.1017/apa.2018.27

Battersby, J., & Ball, R. (2019). Christchurch in the context of New Zealand terrorism and right wing extremism. Journal of Policing, Intelligence and Counter Terrorism, 14(3), 191–207

Batzdorfer, V., Steinmetz, H., & Bosnjak, M. (2020). Big Data in der Radikalisierungsforschung [Big data in radicalization research]. Psychologische Rundschau, 71(2), 96–102. https://doi.org/10.1026/0033-3042/a000480

Beelmann, A. (2020). A social-developmental model of radicalization: a systematic integration of existing theories and empirical research. International Journal of Conflict and Violence, 14(1), 1–14. https://doi.org/10.4119/ijcv-3778

Blaya, C. (2019). Cyberhate: A review and content analysis of intervention strategies. Aggression and Violent Behavior, 45, 163–172. https://doi.org/10.1016/j.avb.2018.05.006

Calvo, M. G., & Lang, P. J. (2004). Gaze patterns when looking at emotional pictures: motivationally biased attention. Motivation and Emotion, 28(3), 221–243

Carthy, S. L., Doody, C., Cox, K., O’Hora, D., & Sarma, K. (2020). Counter-narratives for the prevention of violent radicalisation: A systematic review of targeted interventions. Campbell Systematic Reviews, 16, e1106. https://doi.org/10.1002/cl2.1106

Caruelle, D., Gustafsson, A., Shams, P., & Lervik-Olsen, L. (2019). The use of electrodermal activity (EDA) measurement to understand consumer emotions – a literature review and a call for action. Journal of Business Research, 104, 146–160. https://doi.org/10.1016/j.jbusres.2019.06.041

Cauberghe, V., De Pelsmacker, P., Janssens, W., & Dens, N. (2009). Fear, threat and efficacy in threat appeals: message involvement as a key mediator to message acceptance. Accident Analysis and Prevention, 41(2), 276–285. https://doi.org/10.1016/j.aap.2008.11.006

Dawkins, R. (1976). The selfish gene (1st ed.). Oxford University Press

Decety, J., Pape, R., & Workman, C. I. (2018). A multilevel social neuroscience perspective on radicalization and terrorism. Social Neuroscience, 13(5), 511–529. https://doi.org/10.1080/17470919.2017.1400462

Dodd, M. D., Balzer, A., Jacobs, C. M., Gruszczynski, M. W., Smith, K. B., & Hibbing, J. R. (2012). The political left rolls with the good and the political right confronts the bad: connecting physiology and cognition to preferences. Philosophical Transactions of the Royal Society B: Biological Sciences, 367(1589), 640–649. https://doi.org/10.1098/rstb.2011.0268

Doerr, N. (2017). Bridging language barriers, bonding against immigrants: A visual case study of transnational network publics created by far-right activists in Europe. Discourse & Society, 28(1), 3–23. https://doi.org/10.1177/0957926516676689

Dovidio, J. F., Gaertner, S. L., & Validzic, A. (1998). Intergroup bias: Status, differentiation, and a common in-group identity. Journal of Personality and Social Psychology, 75(1), 109–120. https://doi.org/10.1037/0022-3514.75.1.109

Flam, H., & Doerr, N. (2015). Visuals and emotions in social movements. In H. Flam & J. Kleres (Eds.), Methods of Exploring Emotions (pp. 249–259). Routledge

Gaudette, T., Scrivens, R., & Venkatesh, V. (2020). The role of the internet in facilitating violent extremism: insights from former right-wing extremists. Terrorism and Political Violence, 1–18. https://doi.org/10.1080/09546553.2020.1784147

Geise, S., Heck, A., & Panke, D. (2020). The effects of digital media images on political participation online: results of an eye-tracking experiment integrating individual perceptions of “Photo News Factors”. Policy & Internet, 13, 54–85. https://doi.org/10.1002/poi3.235

Granot, Y., Caliendo, S. M., McIlwain, C. D., & Balcetis, E. (2016). Effects of intergroup language on eye-tracking and moment-to-moment responses in race-based political messages. Paper presented at the National Conference of Black Political Scientists (NCOBPS) Annual Meeting, Baltimore, October 15, 2015

Hassan, G., Brouillette-Alarie, S., Alava, S., Frau-Meigs, D., Lavoie, L., Fetiu, A., et al. (2018). Exposure to extremist online content could lead to violent radicalization: A systematic review of empirical evidence. International Journal of Developmental Science, 12(1–2), 71–88. https://doi.org/10.3233/DEV-170233

Huntington, H. E. (2018). The affect and effect of internet memes: Assessing perceptions and influence of online user-generated political discourse as media. Dissertation, Colorado State University, Fort Collins

Hutchinson, J. (2019). Far-right terrorism. Counter Terrorist Trends and Analyses, 11(6), 19–28

Jacob, R. J. K., & Karn, K. S. (2003). Commentary on Section 4 - Eye tracking in human-computer interaction and usability research: ready to deliver the promises. In J. Hyönä, R. Radach, & H. Deubel (Eds.), The Mind’s Eye (pp. 573–605). North-Holland

Jahnke, S., Schröder, C. P., Goede, L. R., Lehmann, L., Hauff, L., & Beelmann, A. (2020). Observer sensitivity and early radicalization to violence among young people in Germany. Social Justice Research, 33(3), 308–330. https://doi.org/10.1007/s11211-020-00351-y

Jensen, M. A., Seate, A., & James, P. A. (2018). Radicalization to violence: a pathway approach to studying extremism. Terrorism and Political Violence, 32(5), 1067–1090. https://doi.org/10.1080/09546553.2018.1442330

Jiang, T., Potters, J., & Funaki, Y. (2016). Eye-tracking social preferences. Journal of Behavioral Decision Making, 29(2–3), 157–168. https://doi.org/10.1002/bdm.1899

Jukschat, N., & Kudlacek, D. (2017). Ein Bild sagt mehr als tausend Worte? Zum Potenzial rekonstruktiver Bildanalysen für die Erforschung von Radikalisierungsprozessen in Zeiten des Internets – eine exemplarische Analyse [A picture says more than a thousand words? The potential of reconstructive image analysis for studying radicalization processes in the digital age -- an exemplary analysis]. In S. Hohnstein & M. Herding (Eds.), Digitale Medien und politisch-weltanschaulicher Extremismus im Jugendalter. Erkenntnisse aus Wissenschaft und Praxis (pp. 59–82). Deutsches Jugendinstitut e. V

Karpinski, A., & Steinman, R. B. (2006). The single category implicit association test as a measure of implicit social cognition. Journal of Personality and Social Psychology, 91(1), 16–32. https://doi.org/10.1037/0022-3514.91.1.16

King, A. J., Bol, N., Cummins, R. G., & John, K. K. (2019). Improving visual behavior research in communication science: An overview, review, and reporting recommendations for using eye-tracking methods. Communication Methods and Measures, 13(3), 149–177. https://doi.org/10.1080/19312458.2018.1558194

Knobel, M., & Lankshear, C. (2007). Online memes, affinities, and cultural production. In M. Knobel & C. Lankshear (Eds.), A New Literacies Sampler (pp. 199–227). Peter Lang

Knobloch-Westerwick, S. (2014). The Selective Exposure Self- and Affect-Management (SESAM) model: applications in the realms of race, politics, and health. Communication Research, 42(7), 959–985. https://doi.org/10.1177/0093650214539173

Koehler, D. (2014). The radical online: individual radicalization processes and the role of the internet. Journal for Deradicalization, 1, 116–134

Krugman, D. M., Fox, R. J., Fletcher, J. E., Fischer, P. M., et al. (1994). Do adolescents attend to warnings in cigarette advertising? An eye-tracking approach. Journal of Advertising Research, 34(6), 39–52

Lee, E., & Leets, L. (2002). Persuasive storytelling by hate groups online: examining its effects on adolescents. American Behavioral Scientist, 45(6), 927–957. https://doi.org/10.1177/0002764202045006003

Lim, J. Z., Mountstephens, J., & Teo, J. (2020). Emotion recognition using eye-tracking: taxonomy, review and current challenges. Sensors, 20(8), 2384. https://doi.org/10.3390/s20082384

Marquart, F., Matthes, J., & Rapp, E. (2016). Selective exposure in the context of political advertising: a behavioral approach using eye-tracking methodology. International Journal of Communication, 10, 20

McGilloway, A., Ghosh, P., & Bhui, K. (2015). A systematic review of pathways to and processes associated with radicalization and extremism amongst Muslims in Western societies. International Review of Psychiatry, 27(1), 39–50

Meleagrou-Hitchens, A., Alexander, A., & Kaderbhai, N. (2017). The impact of digital communications technology on radicalization and recruitment. International Affairs, 93(5), 1233–1249. https://doi.org/10.1093/ia/iix103

Moskalenko, S., & McCauley, C. (2009). Measuring political mobilization: The distinction between activism and radicalism. Terrorism and Political Violence, 21(2), 239–260. https://doi.org/10.1080/09546550902765508

Neumann, P. R. (2013). The trouble with radicalization. International Affairs, 89(4), 873–893. https://doi.org/10.1111/1468-2346.12049

Neumann, K. (2019). Medien und Islamismus: Der Einfluss von Medienberichterstattung und Propaganda auf islamistische Radikalisierungsprozesse [Media and Islamistic extremism: the influence of media reports and propaganda on Islamistic radicalization processes]. Springer VS

Parekh, D., Amarasingam, A., Dawson, L., & Ruths, D. (2018). Studying Jihadists on social media: a critique of data collection methodologies. Perspectives on Terrorism, 12(3), 5–23

Pauwels, L., & Schils, N. (2016). Differential online exposure to extremist content and political violence: testing the relative strength of social learning and competing perspectives. Terrorism and Political Violence, 28(1), 1–29. https://doi.org/10.1080/09546553.2013.876414

Rieger, D., Frischlich, L., & Bente, G. (2013). Propaganda 2.0: psychological effects of right-wing and Islamic extremist internet videos (Vol. 44, Polizei + Forschung). Luchterhand

Roccas, S., Sagiv, L., Schwartz, S., Halevy, N., & Eidelson, R. (2008). Toward a unifying model of identification with groups: integrating theoretical perspectives. Personality and Social Psychology Review, 12(3), 280–306. https://doi.org/10.1177/1088868308319225

Schmitt, J. B., Harles, D., & Rieger, D. (2020). Themen, Motive und Mainstreaming in rechtsextremen Online-Memes [On the topics, motives, and mainstreaming in right-wing extremist online memes]. Medien & Kommunikationswissenschaft, 68(1/2), 73–93. https://doi.org/10.5771/1615-634X-2020-1-2-73

Schmuck, D., Tribastone, M., Matthes, J., Marquart, F., & Bergel, E. M. (2020). Avoiding the other side? An eye-tracking study of selective exposure and selective avoidance effects in response to political advertising. Journal of Media Psychology: Theories, Methods, and Applications, 32(3), 158–164. https://doi.org/10.1027/1864-1105/a000265

Smith, D., & Talbot, S. (2019). How to make enemies and influence people: a Social Influence Model of Violent Extremism (SIM-VE). Journal of Policing, Intelligence and Counter Terrorism, 14(2), 99–114. https://doi.org/10.1080/18335330.2019.1575973

Struck, J., Müller, P., Mischler, A., & Wagner, D. (2020). Volksverhetzung und Volksvernetzung: Eine analytische Einordnung rechtsextremistischer Onlinekommunikation [Incitement to hatred and ideological networking: classification of right-wing extremist online communication]. Kriminologie - Das Online-Journal, 2(2020), 283–309. https://doi.org/10.18716/ojs/krimoj/2020.2.11

Tajfel, H., & Turner, J. C. (1986). The social identity theory of intergroup behavior. In S. Worchel & W. G. Austin (Eds.), Psychology of intergroup relations (pp. 7–24). Nelson-Hall

Thompson, R. (2012). Radicalization and the use of social media. Journal of Strategic Security, 4(4), 167–190. https://doi.org/10.5038/1944-0472.4.4.8

Ulbrich-Herrmann, M. (2014). Gewaltförmiges Verhalten. Zusammenstellung sozialwissenschaftlicher Items und Skalen (ZIS). https://doi.org/10.6102/zis122

Vergani, M., Iqbal, M., Ilbahar, E., & Barton, G. (2018). The three Ps of radicalization: push, pull and personal. A systematic scoping review of the scientific evidence about radicalization into violent extremism. Studies in Conflict & Terrorism, 43(10), 854–885. https://doi.org/10.1080/1057610X.2018.1505686

Wolfowicz, M., Litmanovitz, Y., Weisburd, D., & Hasisi, B. (2019). A field-wide systematic review and meta-analysis of putative risk and protective factors for radicalization outcomes. Journal of Quantitative Criminology, 36, 407–447. https://doi.org/10.1007/s10940-019-09439-4

Funding

Open Access funding enabled and organized by Projekt DEAL. This study was funded by the Federal Ministry of Education and Research, Germany (grant number: 13N14282). The funding institution was not involved in the study design, data collection, analysis or interpretation of the data, the writing of the manuscript, or the decision to submit the manuscript for publication.

Author information

Contributions

All authors were responsible for study conception and design. DP and ST prepared the materials and carried out data collection and analysis. ST was responsible for data collection and interpretation and wrote the first draft of the manuscript. ST and SiS revised and approved the final version of the manuscript.

Ethics declarations

Ethics Approval

All procedures performed in studies involving human participants were in accordance with the ethical standards of the institutional and/or national research committee: the study was approved by the Ethics Committee of the University Medicine Greifswald (BB 186/17), and it adhered to the 1964 Helsinki Declaration and its later amendments or comparable ethical standards. Informed consent was obtained from all individual participants included in the study.

Conflict of Interest

The authors declare no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Tomczyk, S., Pielmann, D. & Schmidt, S. More Than a Glance: Investigating the Differential Efficacy of Radicalizing Graphical Cues with Right-Wing Messages. Eur J Crim Policy Res 28, 245–267 (2022). https://doi.org/10.1007/s10610-022-09508-8

DOI: https://doi.org/10.1007/s10610-022-09508-8