Abstract

The mind–body problem is analyzed from a physicalist perspective. By combining the concepts of emergence and algorithmic information theory in a thought experiment employing a basic nonlinear process, it is shown that epistemologically emergent properties may develop in a physical system. Turning to the significantly more complex neural network of the brain, it is subsequently argued that consciousness is epistemologically emergent. Thus reductionist understanding of consciousness does not appear possible; the mind–body problem does not have a reductionist solution. The ontologically emergent character of consciousness is then identified from a combinatorial analysis relating to universal limits set by quantum mechanics, implying that consciousness is fundamentally irreducible to low-level phenomena.

1 Introduction

Understanding consciousness is a central problem in philosophy. The literature produced through the centuries, relating to the ‘mind–body’ problem, is also vast. A subset of some 2500 articles on theories of consciousness can be found in PhilPapers (2019). An apparent difficulty lies in the fact that while we normally seek scientific understanding from a reductionist perspective, in which the whole is understood from its constituents, consciousness has for millions of years naturally evolved into an extremely complex system with advanced high-level properties.

The theoretical difficulties we have faced strongly suggest that fundamentally new ideas are needed for the mind–body problem to reach its resolution. In this work it is argued that emergence, combined with results from algorithmic information theory and quantum mechanics, is such an idea. The meaning of these concepts will shortly be discussed; we may here briefly state that emergence relates to complex systems with characteristics that are difficult or impossible to reduce to the parts of the systems and algorithmic information theory concerns relationships between information and computing capacity. We reach the conclusion that the mind is epistemologically emergent, which implies that the mind–body problem cannot be solved reductionistically. Reductionistic understanding of the subjective aspects of consciousness, like introspection and qualia, therefore does not appear possible. The concept of the ‘explanatory gap’ (Levine 1983) is thus justified.

McGinn, in his influential work “Can we solve the mind–body problem?” (McGinn 1989), also concludes that the mind cannot be understood, but on other grounds. He focuses on the ability to understand phenomenal consciousness (Block 1995), and finds that we humans, because of ‘cognitive closure’, are not able to solve this ‘hard problem of consciousness’ (Chalmers 1995). With the reservation “the type of mind that can solve it is going to be very different from ours”, McGinn does not fully exclude that consciousness can be given some kind of explanation, an optimism not supported in this work.

We will, in a thought experiment, illustrate a process that produces an emergent property in the epistemological sense. This result will be helpful when discussing the neurological functions related to consciousness. It will be argued that consciousness is epistemologically emergent. The ontologically emergent character of consciousness is subsequently discussed, and argued for, in light of its complexity considered as a global system. Chalmers (2006) finds, on intuitive rather than on formal grounds, that the mind is ‘strongly emergent’, a term used here with the same meaning as ‘ontologically emergent’.

Definitions are important in this work. There are at least two reasons for this. The first is that several concepts relating to consciousness, in particular emergence, are often used in different ways by different philosophers, neuroscientists and others. This is understandable given that consciousness, not least semantically, is an elusive concept. The problem is rooted in its unique character, which causes attempts at a definition to contain circular elements of some kind. The influential early characterisation of Locke (1690), “consciousness is the perception of what passes in a man’s own mind”, suffers from reference to the subjective term ‘perception’. Nagel’s (1974) characterisation “there is something that it is like to be that organism – something it is like for the organism” has gained popularity, although “is like” refers back to the subject itself, that is to consciousness. A more exhaustive and recent discussion of possible criteria for and meanings of conscious states can be found in Van Gulick (2014). However, either of the above formulations sufficiently captures the subjective components of consciousness that are referred to in this work; we thus consider phenomenal consciousness here. When we discuss other key concepts, attempts will be made to render the treatment more precise, in some cases using formalisations from physics and mathematics.

A second reason for the need for clear definitions is simply that binding arguments require precision (Carnap 1950). The consequence of such specifications may of course be that the definitions of some philosophers are excluded; the results should be seen in this perspective.

There are some instances of mathematics in this paper. Hopefully the reader will not find them too challenging. The reasons for the mathematical content are the following. Firstly, we need to clarify, in a formal sense, the meaning of an explanatory theory for consciousness. Since algorithmic information theory is a natural starting point, examples from dynamic systems theory are shown to be quite elucidating in Sect. 2. Here the logistic equation helps to clarify what is required of an explanatory theory. Secondly, mathematics needs to be employed for outlining the Jumping robot thought experiment in Sect. 4. Having already familiarised ourselves with the logistic equation, we can then see in a rather precise sense how an epistemologically emergent system may arise. Thirdly, we need mathematics to quantitatively assess the complexity of low-level neuronal properties when discussing ontological reduction of consciousness in Sect. 6.2.

In the sections to follow we begin by discussing what requirements must be placed on a theory that can solve the mind–body problem, assuming physicalism and causality. This is conveniently done by employing algorithmic information theory. We draw the conclusion that one-to-one representations of consciousness will not constitute explanatory theories; a properly formulated theory for consciousness must be less complex than consciousness itself. It is subsequently argued that if consciousness is found to be an emergent property of the brain, no solution to the mind–body problem can be produced. Predictions of how low-level neural processes relate to high-level, emergent conscious behaviour cannot be made. To this end, the concepts of emergence and reduction are defined and discussed. A thought experiment, illustrating epistemological emergence, is then introduced. An argument for the epistemological emergence of consciousness is subsequently presented. Turning to ontological emergence, we employ results from quantum mechanics showing that the amount of information that can be processed in the material universe is limited. We find that this limit would need to be exceeded if consciousness were to be reducible in principle to low-level neural processes. As a result, consciousness is found also to be ontologically emergent. Finally, the potential for neuroscience to shed light on consciousness is briefly addressed. The paper ends with a discussion and conclusions.

2 What is Required of a Solution to the Mind–Body Problem?

The goal of research on the mind–body problem is to find a theory that explains the relationship between mental and physical states and processes. The sub-problem which has attracted by far the most interest concerns the question of how consciousness can be understood. We may initially ask the question: what is required of an adequate theory?

In this work, a ‘theory’ for consciousness refers to an explanatory theory, that is a theory that shows why a phenomenon or property is the way it is. Merely descriptive theories, which describe what phenomena are like, are by themselves insufficient for solving the mind–body problem. For a discussion of scientific theories, see Woodward (2017). In the present case, a descriptive theory could be an account of the functions of a one-to-one computer copy of the cerebral neural network. An explanatory theory, on the other hand, should bridge the gap between subjective experiences and the physical matter of the brain.

Our method of approach will however not be to directly attempt to determine whether the subjective per se is reducible to the physical. Instead we will investigate to what extent methodological tools for such a reduction, namely the interpretation of the function of the cerebral neural network, can be devised.

Chaitin (1987) has clarified the necessary requirement that a theory must be inherently less complex than what it describes; in his terminology it must to some extent be ‘algorithmically compressible’ in relation to what it should explain. Let us illustrate this by an example. A relationship $y = f(x)$ has been established to explain a phenomenon, but the precise dependence is not known. A series of experiments that generate $N$ data points $(x_i, y_i)$, $i = 1, \dots, N$, has thus been performed. Clearly, a polynomial $Y(x)$ of degree $N - 1$ ($N$ coefficients) can always be fitted through all the data points in an $xy$-diagram. Is it a theory? The answer is no, for the simple reason that $Y(x)$ does not explain anything; it is always possible to draw a polynomial of degree $N - 1$ exactly through $N$ data points. Had we instead fitted a polynomial of lower degree through all the points, say a second-order polynomial through 10 data points, then we would have a theory worthy of the name; it predicts more than it must. That it is algorithmically compressible means that it can be formulated using fewer bits of binary information than those required for expressing $Y(x)$. This also holds generally; the formulation of an algorithmically compressible theory contains fewer binary bits of information than the data it represents. Simply put: a proper theory must be simpler than the phenomenon it describes, otherwise it does not explain anything.
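The polynomial example can be made concrete with a minimal sketch. The data set below is hypothetical (ten points generated from an assumed quadratic), and the helper name `lagrange_interpolate` is our own. The sketch shows that a degree $N - 1$ polynomial passes through any $N$ points by construction, whereas a quadratic fixed by only three of the ten points goes on to predict the remaining seven.

```python
from fractions import Fraction


def lagrange_interpolate(points, x):
    """Evaluate at x the unique polynomial of degree len(points) - 1
    that passes through all the given (x_i, y_i) points."""
    total = Fraction(0)
    for i, (xi, yi) in enumerate(points):
        term = Fraction(yi)
        for j, (xj, _) in enumerate(points):
            if j != i:
                term *= Fraction(x - xj, xi - xj)
        total += term
    return total


# Hypothetical data: ten measurements that follow y = 2x^2 - 3x + 1.
data = [(x, 2 * x * x - 3 * x + 1) for x in range(10)]

# A polynomial through all N points reproduces them by construction;
# this holds for arbitrary data, so it explains nothing.
arbitrary = [(0, 3), (1, -1), (2, 4), (3, 1), (4, 5)]
assert all(lagrange_interpolate(arbitrary, x) == y for x, y in arbitrary)

# A quadratic is fixed by three points only, yet it predicts the other seven.
fitted_from = data[:3]
predicted = [lagrange_interpolate(fitted_from, x) for x, _ in data[3:]]
assert predicted == [y for _, y in data[3:]]
print("3 fitted points account for", len(data), "observations")
```

The second check is the sense in which the lower-degree polynomial "predicts more than it must": it is specified by fewer numbers than the data it accounts for.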

In this work we will make use of the discrete logistic equation for later comparisons with the neurological processes that form the basis of consciousness. This equation can be formulated as the discrete recursive relation $x_{n+1} = \lambda x_n (1 - x_n)$, $n = 0, \dots, n_{\max}$, where the positive integer $n_{\max}$ can be chosen freely. The discrete logistic equation then iteratively generates new numbers $x_{n+1}$ for increasing values of $n$. The parameter $\lambda$ and the start value $x_0$ must first be selected. We can now ask: is there an explicit theory for the value $x_{n+1}$, that is, is there a function $u(k)$ which satisfies the relation $x_k = u(k)$ and is algorithmically compressible as compared to repeated use of the iterative relationship $x_{n+1} = \lambda x_n (1 - x_n)$? Of course we can form $x_1 = \lambda x_0 (1 - x_0)$, $x_2 = \lambda x_1 (1 - x_1) = \lambda^2 x_0 (1 - x_0)(1 - \lambda x_0 (1 - x_0))$ and so on. This latter route is however not feasible; for large $n$ the symbolic expression for $x_{n+1}$ becomes extremely complex, so this path to determining $u(k)$ will not result in a valid theory. Alternatively formulated: the number of binary bits needed to represent the characters of these symbolic terms is at least of the same order as the number of bits representing the numbers $x_1, x_2, x_3, \dots$ themselves. Unfortunately, it can be shown that the question we posed must be answered in the negative; no matter how we try, it is not possible (except for a very few values of $\lambda$) to derive a theory, that is a compact, explicit expression for $u(k)$.
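The growth of the symbolic expressions can be illustrated with a short sketch. It rests on assumptions of our own: $\lambda$ is fixed to an arbitrary rational value, each iterate is expanded as a polynomial in $x_0$, and the function names are illustrative. The sketch only counts the terms produced by this naive expansion; it is not a proof that no compact expression $u(k)$ exists.

```python
from fractions import Fraction


def poly_mul(p, q):
    """Multiply two polynomials given as coefficient lists (lowest degree first)."""
    result = [Fraction(0)] * (len(p) + len(q) - 1)
    for i, a in enumerate(p):
        for j, b in enumerate(q):
            result[i + j] += a * b
    return result


def logistic_step(p, lam):
    """Coefficients of lam * p * (1 - p), where p is a polynomial in x0."""
    squared = poly_mul(p, p)
    padded = p + [Fraction(0)] * (len(squared) - len(p))
    return [lam * (a - b) for a, b in zip(padded, squared)]


def iterate_symbolically(lam, steps):
    """Expand x_steps as a polynomial in x0; also return the degree at each step."""
    p = [Fraction(0), Fraction(1)]  # x_0 itself
    degrees = [len(p) - 1]
    for _ in range(steps):
        p = logistic_step(p, lam)
        degrees.append(len(p) - 1)
    return p, degrees


def evaluate(p, x):
    """Evaluate a coefficient list at x."""
    return sum(c * x**i for i, c in enumerate(p))


lam = Fraction(39, 10)  # assumed value, chosen only for illustration
poly, degrees = iterate_symbolically(lam, 5)
print(degrees)  # the degree doubles with every iteration

# Sanity check: the expanded polynomial agrees with direct iteration.
x = x0 = Fraction(1, 3)
for _ in range(5):
    x = lam * x * (1 - x)
assert evaluate(poly, x0) == x
```

Under these assumptions the expanded form of $x_n$ has degree $2^n$ in $x_0$, so writing it out quickly requires more symbols than simply listing the iterates.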

The cause of the problem is that the discrete logistic equation is a nonlinear recursive equation. Let us, for a moment, instead consider the simpler linear recursive equation $x_{n+1} = A + \lambda x_n$, $n = 0, \dots, n_{\max}$, where $A$ is a constant, for which the general term $x_k$ can be derived in explicit form simply as $x_k = x_0 \lambda^k + A(1 - \lambda^k)/(1 - \lambda)$ for $\lambda \neq 1$ and as $x_k = x_0 + Ak$ when $\lambda = 1$. The formal solution is expressed using only a few mathematical symbols; it is thus algorithmically compressible (may be represented by fewer digital bits of information) as compared to the solution $x_k$ obtained iteratively by forming $x_1 = A + \lambda x_0$, $x_2 = A + \lambda x_1 = A + \lambda(A + \lambda x_0)$, $x_3 = A + \lambda x_2 = A + \lambda(A + \lambda(A + \lambda x_0))$ and so on. This explicit solution was analytically available because of the low complexity involved in the solution of linear equations as compared to nonlinear ones. Furthermore, the solution for $x_k$ is derived mathematically by using well-known axioms and theorems; consequently we can theoretically explain the values $x_k$ for the linear recursive equation.
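As a brief check of the linear case, the following sketch compares the explicit expression for $x_k$ with step-by-step iteration, using exact rational arithmetic. The function names and the sample values of $x_0$, $A$ and $\lambda$ are our own illustrative choices.

```python
from fractions import Fraction


def iterate_linear(x0, a, lam, k):
    """Apply x_{n+1} = a + lam * x_n a total of k times."""
    x = x0
    for _ in range(k):
        x = a + lam * x
    return x


def closed_form(x0, a, lam, k):
    """Explicit solution of the linear recursion, including the lam = 1 case."""
    if lam == 1:
        return x0 + a * k
    return x0 * lam**k + a * (1 - lam**k) / (1 - lam)


# Illustrative parameter values (assumptions, not taken from the text).
x0, a = Fraction(1, 2), Fraction(3)
for lam in (Fraction(2, 3), Fraction(1), Fraction(-5, 4)):
    for k in range(12):
        assert closed_form(x0, a, lam, k) == iterate_linear(x0, a, lam, k)
print("closed form matches iteration")
```

The closed form costs a fixed handful of operations for any $k$, whereas the iterative route grows with $k$; this is the compressibility referred to in the text.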

We have here employed examples from mathematics, but the reasoning applies generally when we seek any kind of formal explanation or theory for a phenomenon. Thus, a theory cannot explain consciousness if it refers to systems of the same level of complexity (like other minds). Understanding is only reached from theories that are less complex than a full formal representation of consciousness itself, and that relate to already established knowledge; in other words they should be algorithmically compressible in relation to consciousness.

As an application of algorithmic compression, we may consider explanations for the collective motion of flocking birds. If advanced models must be applied to the motion of each individual bird, an explanatory theory is far away. But a theory that identifies laws that are followed by the flocking birds collectively, a theory which is algorithmically compressed in relation to a one-to-one description of the individual behaviour of all the birds, is a candidate for a proper theory. In a Gestalt psychological framework, such laws could simply be stipulated in order to be experimentally verified, but here we envisage the laws reductionistically, as derivable from the low-level behaviour of the birds. In order to arrive at such a theory, however, a careful consideration of the varieties of the birds’ individual motion must be carried out, in the same manner that laws $x_k = u(k)$ for the iterative equations just described can only be found through a detailed mathematical analysis of successive iterations.

3 Emergence Stands in the Way

The emergent character of consciousness is persistently debated in the philosophical literature (Kim 1999, 2006; Chalmers 2006). We will here contend that consciousness is both epistemologically and ontologically emergent. To this end, definitions of these concepts are provided below. Consciousness thus has features that are not reducible to the properties of its components. By ‘not reducible to’ we mean that the characteristics of the low-level components of the phenomenon, taken separately, are insufficient to establish high-level properties. A more precise characterization will soon be presented. By ‘low-level’ and ‘high-level’ we refer to the parts of and integrated wholes of a system or phenomenon, respectively. An extensive account of these concepts is given in O’Connor and Wong (2015).

This conclusion is central to the mind–body problem since it settles the issue of the ‘explanatory gap’ (Levine 1983); an unbridgeable gap exists between the theories we can formulate on the basis of the basic neural physiology of the brain and the subjective, cognitive function of consciousness. We now turn to investigate the emergent character of consciousness.

3.1 Epistemological and Ontological Emergence

Emergence as a concept appeared in the literature in the late nineteenth and early twentieth centuries, mainly through the philosopher John Stuart Mill, the psychologists George Henry Lewes and Conwy Lloyd Morgan and the philosopher Charlie Dunbar Broad (1925), although Aristotle had already touched upon the subject in his Metaphysics. See Corning (2012) for a concise review and references. The literature in the field has since expanded significantly, and criticism of careless use of emergence has been put forth (Goldstein 2013). Thus we find it essential to explicitly characterize emergence as it is used in the present work.

There are different varieties of emergence understood as wholes that possess properties that their parts lack (see Bunge 2014 for an overview). As an example, two switches, combined in series or in parallel, can represent the logical connectives ‘and’ and ‘or’, respectively. On their own, the switches would lack this property. We can, though, easily understand how the emergent properties of the combined switches arise. In the present context we are interested in more advanced levels of emergence, where emergent properties as features of the whole cannot be explained in terms of properties of its parts.

Epistemological emergence is defined in the following way: a high-level property is epistemologically emergent with respect to properties on low-level if the latter form the basis for the high-level property and if the theories that describe the low-level properties cannot predict properties at high-level.

Ontological emergence is defined as: a high-level property is ontologically emergent with respect to properties on low-level if the latter form the basis for the high-level property and if it is not reducible to properties at low-level.

These definitions are to a large extent aligned with characterizations of emergence in the literature. Comparing with Chalmers (2006), for example, his definitions of ‘weak’ and ‘strong’ emergence are similar, with the exception that he writes ‘truths’ rather than ‘theories’ and ‘deducible in principle’ instead of ‘reducible’. The reason for our choice of ‘theories’ is that the mind–body problem asks for a theoretical explanation.

The characterization of ‘reduction’ is widely debated among philosophers and there is little consensus when it comes to details (van Riel and van Gulick 2018; van Gulick 2001). For simplicity, rather general characterizations will be employed here, with the reservation that other characterizations may have an effect on our argument. We will refer to two types of reduction: epistemological and ontological. The first is a relation between representational items, like theories or models, and the second is a relation between real-world items, like objects or properties. By epistemological reduction we thus mean reduction of one explanation or theory to an explanation or theory with lower complexity, in the sense that the latter is algorithmically compressed with respect to the former. A further requirement on epistemological reducibility concerns explicitness. Thus we need ‘reducible’ also to mean ‘in principle explicitly solvable’. A differential equation could be a more algorithmically compressed theory for a physical system than its explicit solution, but it is implicit in the same sense that an explanation of human consciousness in terms of animal consciousness would be. We do not seek an implicit, descriptive theory for a system of interest but rather an explicit, explanatory theory.

It is reasonable to assume, as van Riel and van Gulick do, that ontological reduction should entail “identification of a specific sort of intrinsic similarity between non-representational objects, such as properties or events”. We will here follow Schröder (1998) and preferentially refer to emergent properties rather than to emergent things, events, behaviour, processes or laws. Emergent properties may be argued to be more basic than the latter categories. For a thing, event or behaviour to be emergent, it should be associated with at least one emergent property. Emergent processes or laws are concepts for representing emergent properties that are connected, rather than representing non-emergent properties of parts that connect with properties of a whole (Broad 1925).

The definition and use of the term ‘property’ is somewhat debated in philosophy. We here employ it synonymously with a characterisation or attribute of an object. An object at low-level is fully categorized by its properties, which motivates us to simply use the term ‘properties on low-level’ rather than, for example, ‘its constituent parts at low-level’ or ‘its lower-level base’ (Kim 1999) in the definitions above. Authors like Bunge (2014) also define emergence in terms of properties. Consciousness, being the focus of this work, is thus strictly not a property of the brain, whereas conscious thinking, or the ability to produce it, is indeed such a property and is what we have in mind when discussing consciousness in relation to emergence.

An ontologically irreducible property, if it exists, could thus not be determined by its low-level-properties or behaviour; it could not be characterised by a statistical or law-like behaviour in relation to its low-level components. Loosely formulated it can be said that its behaviour comes as a surprise to nature. This distinction is crucial and we will indeed find in this work that even if causality holds, there are systems where extreme complexity can, in an ontological sense, ‘shield’ the dynamics of a high-level phenomenon from that of its associated low-level phenomena. For these systems it may thus hold that a certain high-level property is not implied by the system. The system becomes a mere vehicle for the property. An important consequence is that these systems are uncontrollable in principle.

It may be argued that any property or behaviour in a physicalist world would be reducible to low-level properties since we assume that the physical is all there is. But this is not what is meant by reduction; supervenience does not imply reducibility. See also Francescotti (2007) for a clarification of the relation between supervenience and emergence.

The requirements for ontological emergence are indeed harder to satisfy than those for epistemological emergence; the former relates to intrinsic properties of the system rather than to knowledge about and theories for the system. As defined above, ontological emergence implies epistemological emergence, since if no connection is to be found in the world between an emergent property and its low-level basis, the emergent property cannot be explained in a predictive theory.

Note also that we define epistemological emergence in an a priori sense (high-level properties should be predictable from those of low-level) rather than in the weaker a posteriori sense (high-level properties should be explainable from those of low-level). This differentiation is, however, not important for the analysis presented here. What we are looking for is the possible existence of relations between particular high-level properties and low-level properties or states. In the epistemological case this amounts to the existence of theories that connect the two levels. In the ontological case we are concerned with establishing whether a direct relation between the two levels is possible in principle. Assuming, as we do here, supervenience and causality we know that complex properties of the mind, like consciousness, have evolved. But evolution is complex and does not, as we will see, guarantee that ontological reduction is possible.

The term weak epistemological emergence has been used for systems that can be simulated on a computer but otherwise would be characterized as epistemologically emergent. This definition will be adopted here as well. A possible example of weak epistemological emergence is Conway’s game of Life, where theoretically unpredictable complex patterns arise on a computer as a few simple rules for a cellular automaton are applied during a large number of time steps.
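To make the ‘few simple rules’ concrete, a minimal sketch of Conway’s game of Life follows. The set-based representation and the name `life_step` are our own choices, and the glider is a standard small pattern used here purely as an illustration; the sketch says nothing about whether larger patterns are predictable by theory.

```python
from collections import Counter


def life_step(live):
    """Advance one generation; `live` is a set of (x, y) cells that are alive."""
    # Count, for every cell, how many live neighbours it has.
    neighbour_counts = Counter(
        (x + dx, y + dy)
        for x, y in live
        for dx in (-1, 0, 1)
        for dy in (-1, 0, 1)
        if (dx, dy) != (0, 0)
    )
    # Birth on exactly 3 neighbours; survival on 2 or 3.
    return {
        cell
        for cell, n in neighbour_counts.items()
        if n == 3 or (n == 2 and cell in live)
    }


glider = {(1, 0), (2, 1), (0, 2), (1, 2), (2, 2)}

state = glider
for _ in range(4):
    state = life_step(state)

# After four generations the glider reappears, shifted one cell diagonally.
assert state == {(x + 1, y + 1) for x, y in glider}
```

The rules fit in a few lines, yet the only general way to learn what a large initial pattern will do is to run the steps, which is what qualifies the system as a candidate for weak epistemological emergence in the sense adopted here.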

3.2 Emergence and Understanding

Emergence precludes reductionistic understanding. In an era where physicists talk about ‘theories for everything’, emergence tends not to be a welcome concept. It may thus be of interest to consider whether limits for understanding the world manifest themselves in other ways. Before addressing the complex problem of understanding consciousness, we therefore briefly outline a general perspective on the epistemological limits to understanding the world. As we will see, there are indeed phenomena in, and properties of, the world that cannot be reduced further to known low-level phenomena. We must accept these higher-level phenomena and properties as they are, admitting that we cannot understand them in a deeper sense. The reason for this epistemic barrier lies in the process of reduction. A similar case holds for emergence.

We may consider at least four epistemological categories of phenomena and properties in nature in a physicalist perspective. The first two categories are at a basic level:

I. Brute facts. These are indeed also referred to as ‘facts without explanation’. To this category belong elementary concepts like matter, time, space, charge, particle spin and even the physical constants of nature. Of the latter, some 20 are presently believed to be independent of each other.

II. Laws of nature. Examples are Newton’s laws of motion, the law of gravitation, Coulomb’s law, relativity theory and the Schrödinger equation.

The low-level phenomena associated with these two categories cannot be understood in a traditional reductionist manner; they simply are. There are no simpler entities that could aid in an explanation of them. According to the debated Anthropic principle, the constants of nature should be tuned to some extent for there to be a universe at all where conscious minds can appear to discuss these matters. It has been shown, however, that there is an allowed window of variation for most constants of nature and thus their precise values, as they appear in nature, cannot be motivated or understood.

From a theoretical standpoint, categories I and II are perfectly consistent with the fact that any scientific reductionistic (non-circular) theory requires a basic set of unprovable axioms. Turning to the two higher-level categories, we have:

III. Phenomena that are reducible to brute facts and laws of nature. Most phenomena belong to this category, by virtue of causality.

IV. Phenomena that are not brute facts or laws of nature, nor reducible to these. These are the emergent phenomena.

In light of categories I and II, emergence is only one of several obstacles for understanding the world. Emergence, in particular ontological emergence, is sometimes criticized as an irrelevant construction (McLaughlin 1992; Kim 1999; McIntyre 2007). These views appear, at least partly, to be related to supervenience. By assuming supervenience, the notion that all processes of the world including consciousness must have physical counterparts, emergence may seem like a contradiction. An intention of the present work is to show how emergence can arise even when assuming supervenience, and to present evidence for instances of both epistemological and ontological emergence.

Arguments for emergence are predominantly related to complexity, but there are exceptions. See for example Silberstein and McGeever (1999) and Gambini et al. (2015) for a discussion of ontological emergence and non-reducibility related to basic phenomena in quantum mechanics. It is however questionable whether this approach is fruitful. If, for example, two entangled particles in a quantum mechanical interpretation should be assigned emergent properties, in consequence also Newton’s third law should be regarded as an emergent property of nature. The latter law states that for every action, there is an equal and opposite reaction in terms of forces. The law cannot be assigned to individual particles or bodies (low-level); it is manifested only when two or more of these are interconnected (high-level). But we do not refer to Newton’s third law as proof of an emergent law or property; we simply call it a law of nature. Emergence is mainly related to complexity.

Categories I–III are directly observable in nature. We accept the reality of brute facts and natural laws and we can usually identify combinations of these as category III phenomena. A falling snowflake, temporarily caught by the wind, exemplifies the latter. But category IV phenomena are not identified this way. We are so accustomed to trying to interpret and understand the phenomena we encounter that occurrences of category IV phenomena are typically regarded as potential category III phenomena. The existence of category IV phenomena is hard to comprehend because of our natural insistence on interpreting and understanding on the basis of brute facts and natural laws. Consequently, the fact that we have developed an advanced level of natural science understanding without introducing the concept of emergence has sometimes led to the erroneous conclusion that emergent phenomena and properties are of no relevance.

To summarize, whereas we speak of explanation and understanding of category III phenomena, these rely on acceptance of category I and II phenomena as mere facts. The latter do not have reductionistic explanations. In this perspective, emergent phenomena of category IV are not the only obstacles for our understanding of the world.

The main focus of this work is on emergence related to complexity. The brain features about 80 billion nerve cells (neurons), each connected to thousands of other nerve cells via synapses. A reductionistic model of the mind must be able to handle a corresponding complexity in order to ascertain law-like behaviour. As we have just discussed, algorithmic information theory implies that ‘models’ or ‘theories’ that cannot be algorithmically compressed to a complexity lower than that of the data they describe do not measure up. It is however not entirely clear how emergent properties arise. It would be of great help if we could actually point to a relevant example. Our approach will be to provide, using a thought experiment, an example of a system that features emergent properties and is related to the neural networks of the brain, but has lower complexity. We will subsequently proceed to address emergence in relation to consciousness.

4 The Jumping Robot

Our thought experiment is the following. Let us imagine a number of robots that are deployed on an isolated island. All robots are designed in the same way. They are programmed to be able to freely walk around the island and perform certain tasks. The robots can communicate with each other and are also instructed to carry out their duties as effectively as possible. If a robot becomes more efficient by performing a certain action, it should ‘memorize’ it and ‘teach’ the other robots the same skill. Let us concentrate on the behaviour of one of these robots and call the thought experiment ‘the Jumping robot’.

In order to allow the robots to move about freely, their movement patterns are partially governed by discrete logistic equations of the form we just described. Let us assume that, for controlling the states of each of the robot’s joints (say 20), the iterative equations $x_{n+1} = \lambda x_n (1 - x_n)$ generate new numbers $x_{n+1}$ in the interval $[0, 1]$ when $x_0$ (also in the range $[0, 1]$) and $\lambda$ are set independently for each joint. These numbers affect how the robot should coordinate its joints, muscles and body parts, but the robot is programmed only to use information leading to safe motion without falling. Let us put $\lambda = \lambda_0$, where $\lambda_0$ is a number slightly less than 4. As discussed in Sect. 2, there is no algorithmically compressible explicit expression $x_k = u(k)$ that would provide a theory and thus an understanding of the robot’s motion. Interestingly, it can be shown mathematically that for values of $\lambda$ in this range and for almost any value of $x_0$, consecutive values $x_k, x_{k+1}, x_{k+2}$ will seem random. One should note, however, that the sequence of numbers is indeed deterministic; each number in the sequence is unambiguously defined by its predecessor in a long chain.
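The combination of determinism and apparent randomness can be explored numerically with a small sketch. The value 3.99 stands in for $\lambda_0$ and the start values are arbitrary; both are assumptions of ours, as is the function name. Floating-point arithmetic is used, so the numbers are approximations of the exact iterates.

```python
def logistic_orbit(x0, lam, n):
    """Return [x_0, x_1, ..., x_n] for x_{k+1} = lam * x_k * (1 - x_k)."""
    orbit = [x0]
    for _ in range(n):
        orbit.append(lam * orbit[-1] * (1 - orbit[-1]))
    return orbit


LAMBDA_0 = 3.99  # assumed stand-in for "slightly less than 4"

first = logistic_orbit(0.2, LAMBDA_0, 60)
repeat = logistic_orbit(0.2, LAMBDA_0, 60)
nearby = logistic_orbit(0.2 + 1e-10, LAMBDA_0, 60)

# Deterministic: the same start value always yields the same sequence.
assert first == repeat

# Every value stays within the interval [0, 1].
assert all(0.0 <= x <= 1.0 for x in first)

# Largest separation between two orbits that started 1e-10 apart.
print(max(abs(p - q) for p, q in zip(first, nearby)))
```

Comparing the `first` and `nearby` orbits is one way to see why the sequences resist control: in this parameter range a tiny change in $x_0$ typically grows until the two orbits bear no visible relation to each other, even though each is fully determined by its start value.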

Now assume that it would be of great value if the robots could perform jumps without falling. An attempt is thus made to provide a robot with this property. From a large number of \(x_0\) values different sequences of numbers are generated, using the discrete logistic equations, in the hope that one of these sequences would correspond to movements which when combined would result in a controlled jump by the robot. We ignore here that the procedure is obviously cumbersome; the complexity is caused partly by the fact that the robot consists of a large number of joints, muscles and other body parts that should be coordinated, partly by our ignorance as to what movements the robot would need to perform for a successful jump, and partly by the fact that the discrete logistic equations do not allow control of the movements. After numerous unsuccessful attempts the task is thus given up; the robots cannot be taught to jump.

Instead we now initialize the robots with random \(x_0\) and leave them to themselves for some time on the island, after which we return. To our surprise, we now find that several of the robots make their way not only by walking, but also by jumping over obstacles. We cannot explain how one or more of the robots acquired the new property; no theory is to be found. This would entail finding relations \(x_k = u(k)\) for the logistic equations, which is excluded. Nor could we simulate the behaviour on a computer; had that been possible, this would have been an example of weak epistemological emergence. Thus the theories that describe the low-level robot phenomena cannot predict behaviour at high-level. The robot’s ability to jump is an epistemologically emergent property. A main point here is that the emergent ability to jump per se is both fully comprehensible to us and fully plausible, in the sense that we can imagine that a certain sequential use of joints, muscles and body parts indeed may accomplish this behaviour, while at the same time realizing that some kind of chance or evolution beyond our modelling capacity was required in the light of the complexity involved. There is no magic involved in the process; rather the behaviour is similar to that of random mutations in the genome of an individual organism, producing improved characteristics through evolution. The behaviour in this thought experiment, however, is not necessarily ontologically emergent since the robot’s capacity to jump would appear to be reducible to the motions of its finite number of parts. Similar conclusions about the emergent properties of nonlinear systems have been reached by other authors (Silberstein and McGeever 1999).

The idea of the Jumping robot may bring to mind research in evolutionary robotics on interaction between autonomous agents and their environment (see Beer 1993, for an early paper). For the present purposes, however, it suffices to assume that the Jumping robot is constructed to be able to move in, and to some extent adapt to, its environment while memorizing both the basic characteristics of the environment and its ways of adaptation to it.

No account of precisely how the robots acquire the skill to jump is given in this thought experiment. Actually, whether evolution or chance is involved is not relevant for the fact that a well known property, to jump, has emerged among these particular robots. We cannot compute or design this property, and still it emerges. We could, of course, design other types of robots, differently built and wired without a connection to the logistic equation, that indeed can jump. But emergence should always relate to specific systems, just as water molecules are much less likely to emerge in a mixture of hydrogen and nitrogen than in a mixture of hydrogen and oxygen.

5 The Epistemologically Emergent Character of Consciousness

What then is the relevance of this epistemologically emergent system for the mind–body problem? It could be argued that we can make detailed studies of a jumping robot, simply ignoring how it reached its emergent state, in order to understand its functions and presumably build copies that perform the same movement patterns. We would simply map and reconstruct all the detailed states of the robot involved in the dynamics. Maybe we could also build consciousness in a similar manner?

Building a full robot copy, including its complete physical design and built-in software, would not solve the problem, however. The copy would feature the same complexity as the original robot, including the irreducible logistic equation. As we have seen, a procedure of this kind does not satisfy the criteria for a theory and does not constitute a path to understanding. The same conclusion holds if we make a simulated copy of the robot on a computer; the iterative use of the logistic equation in the simulations would amount to a one-to-one copy of the full robot system. A second possibility would entail identifying some reduced pattern of robot movements (‘reverse engineering’) that still would lead to stable jumping performance for this particular type of robot. This procedure, however, is not likely to succeed for several reasons. The main obstacle resides in the logistic equation itself. Since in spite of extensive and sophisticated efforts we were unable to design a jumping robot, it is quite unlikely that there exists a sequence of robot movements, providing stable jumping, simpler than the one generated by the logistic equation for the successful case. A second difficulty is that it is quite conceivable that the patterns for jumping are non-intuitive, and difficult to reveal for this reason. When the computer program AlphaGo beat the world number one ranked player in the game Go in 2017, an analysis of the game showed that the computer often chose non-intuitive, unorthodox and seemingly questionable moves. AlphaGo reaches its excellence through engaging its neural networks in machine learning techniques, foremost by playing an extensive set of games against other instances of itself (Silver et al. 2016). A parallel can be drawn to evolution, which does not design but rather ‘tries’ different possibilities which are then measured in a survival context.

In conclusion, the Jumping robot provides an example of the epistemological thesis that what can be built cannot always be understood. A proper theory for the properties of the Jumping robot, being algorithmically compressed in relation to what it explains, stands little chance of being developed.

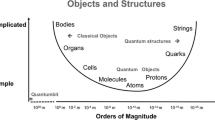

The roundworm Caenorhabditis elegans features only 302 neurons. The interconnections of all its neurons have been mapped. This mapping is an interesting first step towards understanding more complex neuronal networks. A bird’s brain has some 100 million neurons. Some argue that in a brain of this complexity, there are signs of basic characteristics of consciousness. The smallest primate brains feature about 500 million neurons and those of monkeys about 10 billion. The human brain features 80 billion neurons, with some 16 billion interconnected in the cerebral cortex, the primary area associated with consciousness. The question arises whether the conscious properties of the brain, such as thoughts and emotions, can be understood from a theoretical mapping of these neurons.

We thus turn to investigate the potentially emergent character of consciousness. The human brain works along vastly more complex paths than the discrete logistic equation controlling the Jumping robot. Its neurons communicate, in brief, as follows. Via so-called dendrites, each neuron can obtain electrochemical signals from tens to tens of thousands (on average 7000) neighbouring neurons. The contributions from these signals are weighted in the neuron’s cell body to an electrical potential, the so-called membrane potential. When this reaches a certain threshold, the neuron sends out a pulse, the action potential, along a nerve fibre termed axon, which in turn connects via synapses and dendrites to other neurons. The outgoing signal from a neuron has the form of a spike rather than a continuous, nonlinear function of the incoming signal. This motivates our choice of the discrete logistic equation, rather than its continuous counterpart, for the robot thought experiment. Neurons typically fire in the range of 1–100 signals per second (Maimon and Assad 2009) but also at higher frequencies (Gittis et al. 2010), with signal lengths of at most a few ms and with speeds of up to 100 meters per second. The behaviour varies between neurons. For networks of neurons, functions called sigmoids, with S-shaped dependence on the input signals, provide realistic activation function models of the relation between neuron firing and membrane potential.

Communication within the neural network of the brain thus occurs nonlinearly and discretely with a complexity vastly exceeding that of the simple logistic equation. Furthermore, evidence has been presented that even the activity of individual neurons plays a role for conscious experiences (Houweling and Brecht 2007). Consequently it may be assumed that a reductionistic theory for consciousness should take into account firing of individual, or small clusters of, neurons. In the example of the Jumping robot it was the functional value, generated by the logistic equation, that was of interest. For consciousness, it is mainly the interspike intervals and patterns of neuronal action potentials that are of significance rather than the amplitudes of the action potentials.

A determining factor for the neuronal firing behaviour is the membrane potential in relation to the threshold for firing. This threshold is individual for each neuron and sensitively determined by the weighted contribution from thousands of other neurons through its dendrites. We saw, in the Jumping robot thought experiment, that the behaviour of the simple logistic equation is algorithmically incompressible. A proper, algorithmically compressed, theory for phenomenal consciousness involving thousands of networking neurons, obeying the behaviour outlined above, thus certainly seems out of reach. The argument will be substantially strengthened in the next section, as ontological emergence is considered.

Summarizing, we have compared the problem of understanding consciousness, that is predicting it from its low-level neural components, with the problem of understanding the Jumping robot. We have argued that the robot’s ability to jump is an epistemologically emergent property; it is impossible to construct a proper theory that explains how it can jump. The major problem lies in that its behaviour is partly attributed to an iterative nonlinear process in the form of the discrete logistic function. There is no possibility to construct an algorithmically compressed theory except for some singular special cases. Consciousness as a property of the mind is, as we just discussed, grounded in the low-level behaviour of an extremely complex network of neurons with interspike intervals that can be modelled with an iterative theory similar to that for the logistic equation. The robot can jump, and we know this ability stems from the coordination of its low-level components. The brain can be conscious, and we know consciousness stems from the activity of its low-level neurons. But since there is no middle ground, no possibility to reduce collective neural activity, generating consciousness, into a compressed theory, we are facing an explanatory gap between individual neuronal activity and consciousness.

Thus there is strong evidence that consciousness, and similarly subconsciousness, is epistemologically emergent. In the same way that the Jumping robot’s behaviour cannot be described reductionistically, the properties of consciousness cannot be epistemologically related to the properties of low-level neurons; consciousness cannot be represented in a reductionistic theory. In consequence, the mind–body problem is reductionistically unsolvable.

It may also be noted that mental processes involve an additional, well known, complexity, not necessarily related to emergence: they cannot be scientifically related to measurable properties in the same manner as movements of the robot parts are linked to its externally measurable ability to jump. The phenomenological, or subjective, conscious properties of the mind are predominantly accessible internally or subjectively, not from externally distinguishable physical states. Our focus is here on emergence, so we will not dwell further on this difficulty.

6 The Ontologically Emergent Character of Consciousness

We may now ask whether consciousness is also ontologically emergent; are the properties of consciousness irreducible to the lower level states and processes that form the basis of consciousness, the ones that consciousness supervenes on? Although we have discussed in Sect. 3.1 what we mean by ‘reducibility’, we will now proceed to discuss in more detail the ontological limits of reduction. Let us return to the example of the Jumping robot. The property to be able to jump was at this stage not deemed ontologically emergent for the reason that in an objective meaning this property was an option that seemed reducible to the system, although its details were unknown to us. By ‘objective’ we mean that the various possible sequences of numbers generated by the discrete logistic equation, of which at least one potentially leads to jumping behaviour, correspond to an amount of information that is manageable in principle. This latter statement demands clarification, since we now have made contact with the consequences of quantum mechanics and information theory for ontological properties.

6.1 Ontological Limits

It has been shown (Lloyd 2002; Davies 2004) that the information storage capacity of the universe is limited by the available quantum states of matter inside the causal horizon. The latter is the distance, limited by the finite speed of light, outside which no events may be causally influential. It is found that the order of \(10^{120}\) bits of digital information may be contained within this horizon. The fact that this ‘ontological information limit’ is an estimate is not essential; what matters here is that information storage capacity is universally limited to a magnitude of this nature. A property that is associated with a complexity transcending some \(10^{120}\) bits of digital information can be characterized as ontologically emergent since then there is no possibility, even in principle, to connect it with the low-level phenomena on which it is based. This property is physically irreducible. The point made here is that quantum mechanics, which provides the basis for physicalism, limits the number of achievable states in nature and thus also implies ontological restrictions. This circumstance is usually absent in discussions of ontological emergence. It should be noted that ‘ontology’ is used in this work in the traditional, philosophical sense and not as a reference to properties of or interrelationships between entities used in computer science and information science.

An ontological information limit may be hard to digest and a natural reaction would be to claim that real processes and properties simply develop in the world, without any relation to its computational capacity. We should however realize that our quest, the topic of this paper, is an epistemological one although we are investigating the ontological behaviour of the world. This entails using theoretical concepts like computability. Applying these to the world, we find that certain phenomena indeed feature a complexity to the degree that their appearance comes as a ‘surprise’; their complex behaviour is not immediately given by the state of the world.

There is a close and interesting analogy to be found in mathematics. Here Zermelo–Fraenkel set theory constitutes an axiomatic system for generating the truths (theorems) of standard mathematics including algebra and analysis. The system of axioms is quite limited, but the number of theorems that can be deduced is vast, covering most of mathematics. However, in 1931 Gödel showed that there exist true propositions of this system that cannot be proven inside the system. These propositions can, however, be proven by adding axioms, that is by stepping outside the system. The epistemological point we are making here is, by analogy, that the physical states of processes and properties in the world may imply future states, the occurrence (truth) of which is not reducible to present states. We cannot step outside the world to decide whether these future states will appear or not; it is ontologically undecided. In mathematics, Gödel-undecidable propositions usually feature a complexity related to infinite sets that, although they may be formally dealt with, have a power extending outside of the system. Turning to the real world, its limitations for representing properties come not from dealing with infinity but from its discrete character, governed by quantum mechanical laws.

To shed some light on the implications of the information limit discussed above, we may ask what it would take for the Jumping robot’s ability to jump to be classified as an ontologically emergent property rather than merely an epistemologically emergent one.

Let us assume that a Jumping robot can be studied in detail while successfully performing a jump. To this end, sensors have been built into its joints and muscles. For a proper jump we require that both feet are above ground at some instant in time and that both feet thereafter touch the ground for a certain time whilst the robot’s centre of gravity remains above a certain height. As the robot satisfies these criteria, the corresponding positions of joints and muscular strengths used are measured. The task is now to reduce these to the low-level components of the robots, including the iterative equations that control its movements. Had these equations been the simple linear recursive equations discussed in Sect. 2, reduction would have been a relatively easy matter. Assuming \(\lambda\) is known, we could simply measure the values of \(x_k\) at time instants k and solve the explicit relations \(x_k = u(k)\) for the values of \(x_0\) that lead to the measured behaviour. But the Jumping robot is governed by the nonlinear recursive logistic equation for which no explicit relation \(x_k = u(k)\) exists. Thus it is very hard to determine the values of \(x_0\) that must be chosen for jumping behaviour. The more time steps that are involved in the process, the more complex the logistic equation becomes, as shown in Sect. 2. Actually, after some number of time steps, its algorithmic complexity violates the ontological information limit discussed above. We may calculate a simple estimate of this limit as follows.

A basic assumption for the robot in the thought experiment is that its motion is partly controlled by discrete logistic equations, having the recurrence form \(x_{n+1} = \lambda x_n (1 - x_n)\). Using extended ASCII 8 bit digital representation of each character of the formula, it is seen that there are two occurrences of ‘\(x_n\)’ on the right hand side, each requiring 8 digital bits of information (omitting index n). The first three recurrences are \(x_1 = \lambda x_0 (1 - x_0)\), \(x_2 = \lambda x_1 (1 - x_1) = \lambda\lambda x_0 (1 - x_0)(1 - \lambda x_0 (1 - x_0))\), \(x_3 = \lambda x_2 (1 - x_2) = \lambda\lambda\lambda x_0 (1 - x_0)(1 - \lambda x_0 (1 - x_0))(1 - \lambda\lambda x_0 (1 - x_0)(1 - \lambda x_0 (1 - x_0)))\). It is seen that there is a doubling of the number of occurrences of the initial state ‘\(x_0\)’ for each step to higher order recurrence. Focusing on \(x_0\) alone, disregarding other characters in order to arrive at a maximum requirement, we find that the number of occurrences of \(x_0\), as function of recurrence order n + 1, is \(2^n\). Taking into account the number of bits needed to represent \(x_0\), \(x_{n+1}\) represents \(8 \times 2^n\) bits of information with regards to \(x_0\). Solving the inequality \(8 \times 2^n > 10^{120}\), we obtain n > 395. This number is thus an approximate upper limit of the number of recurrences needed to obtain an expression with an information content exceeding the information storage capacity of the universe; the ontological information limit. An expression of this complexity and size hence cannot have an ontological representation. If n > 395 iterative time steps of the logistic equation are required for the robot to jump, this behaviour would come as a complete surprise to nature. There would be nothing in nature pointing towards this property; there is no reason for it to appear. The amount of information required to relate the property to its underlying low-level states is simply not available. There is no ontological contact between the robot’s ability to jump and its low-level components.
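The arithmetic of this estimate can be checked with a short Python sketch. It is only an illustration under the assumptions stated in the text (8 bits per symbol, a limit of \(10^{120}\) bits); the function names `count_x0` and `min_steps_exceeding` and the string encoding of the formula are hypothetical choices made here.

```python
def count_x0(order):
    """Build the fully expanded recurrence x_order as text and count 'x0'."""
    expr = "x0"
    for _ in range(order):
        # Substitute the previous expression into L * x * (1 - x).
        expr = f"L*({expr})*(1-({expr}))"
    return expr.count("x0")


def min_steps_exceeding(limit_bits, bits_per_symbol=8):
    """Smallest integer n with bits_per_symbol * 2**n > limit_bits."""
    n = 0
    while bits_per_symbol * 2**n <= limit_bits:
        n += 1
    return n


ONTOLOGICAL_LIMIT_BITS = 10**120  # estimate quoted in the text

occurrences = [count_x0(m) for m in (1, 2, 3)]
n_first = min_steps_exceeding(ONTOLOGICAL_LIMIT_BITS)

print("occurrences of x0 in x1, x2, x3:", occurrences)
print("smallest n with 8 * 2**n > 10**120:", n_first)
```

Exact integer arithmetic is used so that no rounding enters the comparison. The doubling per step means that changing the number of bits per symbol, or the estimate of the limit by a few orders of magnitude, moves the threshold by only a handful of steps.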

It may also be helpful to identify a specific example of an ontologically emergent system in nature. To this end, we note that emergence rarely is associated with the results of human activities, with design, but rather with evolution; the self-development of nature. Evolution has through natural selection access to a tremendous diversity of degrees of freedom and features a huge potential to generate emergent systems. In chemistry myoglobin is an important oxygen binding molecule found in muscle tissue (Luisi 2002). Here 153 amino acids are interconnected in a so-called polypeptide chain. Since there are 20 different amino acids, the number of possible combinations of chains amounts to the enormous number \(20^{153} \approx 10^{199}\), which corresponds to a number of digital bits much larger than \(10^{120}\). Myoglobin thus features an ontologically emergent property; the molecule is, in terms of its optimized high oxygen affinity, not reducible to its low-level constituents. It could only evolve, it could not be designed.
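The size of the combinatorial number quoted for myoglobin can be verified with exact integer arithmetic. The following Python lines are a minimal check; the variable names are chosen here for illustration only.

```python
import math

AMINO_ACID_TYPES = 20  # number of different amino acids, as in the text
CHAIN_LENGTH = 153     # amino acids in the myoglobin chain, as in the text

# Number of distinct chains of this length (exact integer).
n_chains = AMINO_ACID_TYPES**CHAIN_LENGTH

# Base-10 exponent of that number.
exponent = math.log10(n_chains)

print("log10 of the number of chains:", round(exponent, 2))
print("exceeds 10**120:", n_chains > 10**120)
```

The exponent comes out slightly above 199, in line with the rounded value in the text.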

It could possibly be argued that in order to free memory, by employing some efficient algorithm, only the most relevant data relating to each computation need be stored. This is, however, not a successful procedure for the following reason. In nature, changes do not come and forces do not act instantaneously. All effects of interactions in nature, that is changes of state, are in fact due to combinations of the four basic forms of interaction through exchange of particles called bosons. This interaction indeed takes a finite time; the lower limit is given by a key relationship in quantum mechanics called Heisenberg’s uncertainty principle (Lloyd 2002). The limiting time is proportional to Planck’s constant and inversely proportional to the system’s average energy above the ground state, which for a one-kilogram system means that no more than \(5 \times 10^{50}\) changes of state are possible per second; a theoretical limit for a quantum computer. In the entire visible universe, which dates back some 14 billion years and has a mass of about \(10^{53}\) kg, not more than about \(10^{121}\) changes of quantum states have occurred. Although this is a huge number it is not infinite. The universe’s ‘capacity to act and compute’ is thus limited (Wolpert 2008). Since several quantum states are involved in each computation of the oxygen affinity of a polypeptide chain, it is clear that quantum mechanics sets a universal limit, prohibiting reduction to the amino acid low-level components.
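The orders of magnitude in this paragraph can be reproduced with a rough Python sketch. It assumes that the bound takes the form \(2E/(\pi\hbar)\) changes of state per second, with \(E = mc^2\) the rest energy of the system; this specific form, the rounded number of seconds per year and the function name `max_ops_per_second` are assumptions made here, since the text only states the proportionalities.

```python
import math

HBAR = 1.054571817e-34    # reduced Planck constant in J s
C = 299_792_458.0         # speed of light in m/s
SECONDS_PER_YEAR = 3.156e7  # rounded value, assumed for this estimate


def max_ops_per_second(mass_kg):
    """Assumed bound 2E / (pi * hbar), taking E as the rest energy m c^2."""
    energy_joule = mass_kg * C**2
    return 2.0 * energy_joule / (math.pi * HBAR)


rate_1kg = max_ops_per_second(1.0)

# Figures from the text: about 10^53 kg and about 14 billion years.
universe_age_s = 14e9 * SECONDS_PER_YEAR
total_ops = max_ops_per_second(1e53) * universe_age_s

print(f"changes of state per second for 1 kg: {rate_1kg:.2e}")
print(f"changes of state in the visible universe: {total_ops:.2e}")
```

Under these assumptions the one-kilogram rate is of order \(5 \times 10^{50}\) per second and the cumulative count is of order \(10^{121}\), consistent with the figures quoted above.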

Returning for a moment to the Jumping robot, we can now see why it is not possible to explain its ability to jump even through computer simulation. In such a scenario, initial \(x_0\) values need to be guessed for all the 20 joints. Assuming 10 digit accuracy is required, the number of possible initial states of a robot to be considered would thus be \((10^{10})^{20} = 10^{200}\), a value in conflict with the computational limit just discussed.
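As a small numerical illustration, the count of initial states can be set against the number of state changes available to the universe. The sketch below assumes the figure of about \(10^{121}\) changes of state from the preceding discussion; the variable names are hypothetical.

```python
JOINTS = 20           # joints per robot, as in the thought experiment
DIGITS_PER_X0 = 10    # assumed accuracy of each initial value x0
OPS_LIMIT = 10**121   # approximate number of state changes quoted in the text

# Each joint has 10**DIGITS_PER_X0 distinguishable start values.
initial_states = (10**DIGITS_PER_X0) ** JOINTS

# How many times the candidate count exceeds the available operations.
shortfall_factor = initial_states // OPS_LIMIT

print("candidate initial states: 10 **", len(str(initial_states)) - 1)
print("excess over available operations: 10 **", len(str(shortfall_factor)) - 1)
```

Even if a single change of state sufficed to test one candidate, the search space would exceed the available operations by roughly 79 orders of magnitude.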

6.2 Ontological Emergence

It will now be argued that consciousness is ontologically emergent. The line of reasoning is the following. First we specify the conditions required for ontological emergence. Next we specify physical conditions for the neural network of the brain. It is subsequently argued that the information associated with conscious states, in relation to low-level neural states, exceeds the ontological information limit discussed above. In consequence, consciousness is found to be ontologically detached from its low-level neural states, whereupon ontological emergence follows.

Referring to the previously stated definition, consciousness is ontologically emergent if it cannot be reduced to the properties or behaviour of its low-level states. This, in turn, means that no explicit relation can be established, not even in principle, between consciousness and the activity of the neural network that generates it. Hence we want to find out whether such a relation, that reduces consciousness to its low-level states, can be expressed or not.

At this point we need to specify the details of the neural network that we assume as the basis for consciousness, some of which will be used for our argument. Causality and supervenience, in the sense that the properties of consciousness correspond to certain configurations of low-level neural states, are both assumed. Whereas the cerebral cortex contains some 16 billion neurons, a lower number of interacting neurons appears sufficient for consciousness, perhaps of the order of a billion neurons. We also assume that it is only particular configurations of these, in terms of their interrelations, and certain temporal neuronal activity that generate phenomenal consciousness. Each neuron is, on average, connected to about 7000 other neurons, affecting its behaviour. Individual neuronal activity is assumed important (Houweling and Brecht 2007) for consciousness. Furthermore we assume that there is a lower time limit for collective neural activity below which consciousness cannot be upheld. It has been experimentally shown that after stimulation of neural brain activity there is a delay before the individual becomes conscious of it. A “substantial duration of appropriate cerebral activity (up to about 0.5 s) is required for the production of a conscious sensory experience” (Libet 1993). Later experimental studies (Soon et al. 2008; Klemm 2010) support these results.

We will now consider the amount of information associated with a conscious state, in terms of its relation to its low-level components, the neurons. Our approach will be to make a lower estimate, employing a crude and simplified model, and determine its implications. Thus we start by assessing the information processes associated with an individual neuron k. We assume it is, through its axon (output) and dendrites (input), connected to K neurons. Since the primary action of the neuron in its network contribution to consciousness is to fire action potentials at certain rates and in certain patterns, it is natural to focus on information relating to whether the neuron, upon integration of its input, reaches the threshold potential for firing or not. The associated membrane potential is found from adding the membrane’s electric self-potential to the integrated contributions from other connected neurons. The threshold potential for firing is as mentioned earlier a nonlinear function of the cell potential. For simplicity we here model this function as a third order polynomial. Mathematically, the membrane potential \(Z_{n + 1}^{k}\) of neuron k at state n + 1 at time \(T_{n + 1}\) can be symbolically modelled as \(Z_{n + 1}^{k} = a^{k} + b^{k} s_{n} + c^{k} s_{n}^{2} + d^{k} s_{n}^{3}\), where \(a^{k} ,b^{k}\), \(c^{k}\) and \(d^{k}\) are constants, unique for each neuron, and \(s_{n} = \sum\nolimits_{i = 1}^{K} Z_{n}^{i}\), a sum over the action potential contributions from neighbouring neurons. Note that self-dependence on the previous state is included.

Of primary information theoretical interest is the formal representation needed for expressing \(Z_{n + 1}^{k}\) in terms of its dependence on signals from neighbouring neurons for the full time \(T_{c}\) of neuronal activity required for conscious mind processes to take place. Using a computer math program (in our case Maple), it can be shown that the number of characters required to express \(Z_{n + 1}^{k}\) scales with temporal states approximately as \(10 \times (3K)^{n + 1}\). Transformed to binary code (extended ASCII, for example), each character corresponds to 8 digital bits of information. Thus for K = 7000, the information content associated with state \(Z_{n + 1}^{k}\) entails some \(8 \times 10 \times (21000)^{n + 1}\) digital bits. Assuming an average interspike interval of 0.1 s, this is an amount of information that does not reach the ontological limit within the \(N\) intervals that need be accounted for. This is concluded from estimating \(n_{max} = N = 0.5/0.1 = 5\), where \(T_{c}\) = 0.5 s is assumed. However, and importantly, account must also be taken of the significant number of neurons that fire at interspike intervals towards the estimated minimal interval of about 0.001 s (Softky and Koch 1992; Paré and Gaudreau 1996). We now find that already for average interspike intervals of 0.018 s (thus for N = 0.5/0.018 ≈ 27) the number of bits representing \(Z_{n + 1}^{k}\) becomes \(8 \times 10 \times (21000)^{28} \approx 8 \times 10^{122}\), exceeding the ontological limit \(10^{120}\). The result, in terms of \(n_{max}\), is relatively insensitive to K and choice of model for \(Z_{n + 1}^{k}\). Choosing K = 2000 and 12,000, for example, yields \(n_{max}\) = 31 and 26, respectively, with corresponding interspike intervals 0.016 and 0.019 s. Again \(T_{c}\) = 0.5 s is assumed. Instead using a second order model for \(Z_{n + 1}^{k}\) results in the similar character scaling \(12 \times (2K)^{n + 1}\). Thus, since the spectrum of action potential firing cannot be ontologically represented within the ontological information limit, we find that conscious high-level processes cannot be reduced to, or related to, neuronal low-level processes.
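The threshold for K = 7000 can be reproduced from the quoted scaling law alone with a few lines of Python. This is a sketch under the assumption that the character count is exactly \(10 \times (3K)^{n+1}\); the function names are hypothetical, and since the scaling is stated as approximate, values obtained this way for other K may differ by a step from those reported from the full symbolic expressions.

```python
def bits_for_state(K, exponent, prefactor=10, branching=3, bits_per_char=8):
    """Bits for Z_{n+1}, with exponent = n + 1, using the quoted scaling."""
    return bits_per_char * prefactor * (branching * K) ** exponent


def first_exceeding_exponent(K, limit_bits=10**120):
    """Smallest exponent n + 1 for which the bit count exceeds the limit."""
    exponent = 1
    while bits_for_state(K, exponent) <= limit_bits:
        exponent += 1
    return exponent


T_C = 0.5  # seconds of neural activity assumed necessary, as in the text

exp_7000 = first_exceeding_exponent(7000)
n_intervals = exp_7000 - 1           # corresponds to N in the text
interval_s = T_C / n_intervals       # matching average interspike interval

print("exponent n + 1 at which the limit is exceeded:", exp_7000)
print("number of intervals N:", n_intervals)
print("average interspike interval in s:", round(interval_s, 4))
```

Because the bit count grows geometrically with a base of order \(10^{4}\), each further interval adds about four orders of magnitude, which is why the threshold depends only weakly on K and on the prefactor.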

It should be remarked that the above estimate significantly underrepresents the information content associated with the dynamics of a single neuron. For example, its dendrites are not identical, the biological and chemical modelling of which would substantially increase the number of bits needed to represent \(Z_{n + 1}^{k}\). Furthermore, we have only studied a single one of the 16 billion neurons of the cerebral cortex. Thus we may safely argue that the information content associated with neural processes for consciousness exceeds the ontological limit; consciousness is ontologically emergent.

To sum up, complex systems of the world, like the Jumping robot and myoglobin, develop or evolve. The properties of these systems, such as ability to jump and oxygen affinity, supervene on the systems. Some properties come as surprises in the sense that they cannot be reduced to anything less than the behaviour of the full system itself. These are the emergent properties. Epistemologically, we refer to the associated system (like the bits and parts of the Jumping robot), ontologically we refer to the physical universe. The notion of algorithmic incompressibility as a diagnostic for emergence is applicable in both the epistemological and the ontological cases. If properties of a complex system, being acquired through for example long term evolution, can only be represented by the system itself, that is if nature, because of the limited quantum mechanical information capacity of the world, cannot accommodate a compressed representation of its properties, then the system features ontologically emergent properties. The neural system relating to consciousness features an incompressible character due to its nonlinear complexity as described above; thus consciousness is ontologically emergent. We may ask: is this coherent with the definition that was introduced in Sect. 3.1? It is indeed, since also ontologically emergent properties must, in a physicalist perspective, directly depend on the physical arrangement of low-level states. We cannot ask that irreducibility should entail an independence of these states. Thus, assuming supervenience, we conclude that high-level ontological emergence cannot entail more than a principal impossibility to represent a connection between the emergent properties and their correlated low-level states in the physical world. Requiring more of ontological emergence would lead to the mystical. As a consequence of the definitions in Sect. 3.1 it follows from this analysis that consciousness is also epistemologically emergent.

7 Neuroscience

The neural networks of the brain communicate in discrete nonlinear processes to generate cognitive functions such as the abilities to feel pain, think, make choices, experience feelings and introspect. If these basic neural processes were linear in their physical character, their behaviour could possibly be reduced to a theory. This theory would have lower complexity than the behaviour it describes, since that behaviour would be algorithmically compressible. Nonlinear systems like the neural network of the brain, however, generally feature higher, second order complexity. Since the neural network associated with consciousness is thus nonlinear and discrete by nature, we argue that a theory cannot be produced for it; consciousness is emergent and cannot be understood in a reductionistic framework, regardless of whether we seek a computational theory of mind or some other formally reductionistic theory of mind.
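The contrast between a compressible linear process and a nonlinear one can be illustrated with a minimal Python sketch. It is not the neuron model used in this work; the two maps, the parameter values and the function names are assumptions chosen purely for illustration. A linear recurrence \(x_{n+1} = a x_n\) has the closed form \(x_n = a^n x_0\), so the state at step \(n\) can be written down without running the preceding steps. For the logistic map \(x_{n+1} = r x_n (1 - x_n)\) with a generic value of \(r\), no comparable shortcut is known, and the sketch simply iterates.

```python
def linear_iterate(x0, a, n):
    """Run the linear recurrence x_{k+1} = a * x_k for n steps."""
    x = x0
    for _ in range(n):
        x = a * x
    return x


def linear_closed_form(x0, a, n):
    """Compressed description of the same process: x_n = a**n * x0."""
    return (a ** n) * x0


def logistic_iterate(x0, r, n):
    """Run the nonlinear recurrence x_{k+1} = r * x_k * (1 - x_k) for n steps.

    Illustrative assumption: for a generic r we have no closed form to
    substitute here, so the state is obtained by stepping through the map.
    """
    x = x0
    for _ in range(n):
        x = r * x * (1.0 - x)
    return x


if __name__ == "__main__":
    # The linear process and its one-line "theory" agree up to rounding.
    print(linear_iterate(0.5, 0.9, 50), linear_closed_form(0.5, 0.9, 50))
    # The nonlinear state is obtained here only by running all 50 steps.
    print(logistic_iterate(0.2, 3.9, 50))
```

The closed form plays the role of a theory in the sense used above: it is shorter than the sequence of states it reproduces. The sketch does not prove incompressibility of the nonlinear map; it only shows what the absence of such a shortcut looks like in practice.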

It could be of interest here to briefly discuss a quite different obstacle to understanding consciousness. Abandoning efforts to find theories of consciousness, we may be inclined to turn instead to the possibility of artificially designing consciousness. In neuroscience there is a search for ‘neural correlates of consciousness’ (NCC), the neural processes in the brain that are directly linked to the individual’s current mental activities. Although consciousness is an emergent, unexplainable property of the brain, there is in principle nothing that precludes the design of artificial consciousness; the possibility of imitating evolution is always open. The problem is that we cannot be certain that the goal has been achieved.

Let us say that NCCs can indeed be identified to an extent that serious attempts to create conscious processes in artificial brains can be made. In each such experimental attempt the function must be ensured; that is, the system must be diagnosed. Otherwise there is the possibility that we have designed an advanced system that externally behaves like a consciousness but actually lacks mental processes. A problem with this approach is that essentially no limit exists for the non-cognitive ‘intelligence’ of advanced computer programs. Properly designed, these would be able to pass any kind of Turing test. In such a test the respondent is hidden, so that the person performing the test does not know whether they are communicating with a human or a machine, and any machine producing responses similar to those of a human is deemed intelligent at the level of a human.

The Turing test is valuable for testing intelligence, but is obviously unreliable for testing consciousness. What would then be an adequate diagnosis? Current definitions of phenomenal consciousness provide an answer: we must ensure that the system can have subjective experiences. But since all measurement of the functions of consciousness must be done externally, that is, by laboratory personnel using diagnostic equipment, the system’s internal cognitive functions cannot be measured directly. There is simply no information externally available from the system that would be distinguishable from that which can be produced by an advanced, but unconscious, computer program. We could be facing an intelligent robot without the ability for conscious behaviour. Artificial design is therefore, as mentioned elsewhere, not a viable route to a solution of the mind–body problem.
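The structure of this argument can be sketched in a few lines of Python. Everything in the sketch is hypothetical: the two classes, the `respond` interface and the private attribute are placeholders and do not model consciousness in any way. The point is only that a tester restricted to the external interface compares outputs, and two systems with identical outputs are indistinguishable to that tester whatever happens inside them.

```python
class SystemA:
    """Hypothetical system with internal processing hidden from the tester."""

    def __init__(self):
        # Placeholder for internal states; never exposed through respond().
        self._inner_states = []

    def respond(self, prompt: str) -> str:
        self._inner_states.append(prompt)
        return prompt.strip().lower()[::-1]


class SystemB:
    """Hypothetical system giving the same outputs with no internal record."""

    def respond(self, prompt: str) -> str:
        return prompt.strip().lower()[::-1]


def external_test(system, prompts):
    """All the tester can collect: the responses to the given prompts."""
    return [system.respond(p) for p in prompts]


def distinguishable(sys_a, sys_b, prompts):
    """True only if the externally observed transcripts differ."""
    return external_test(sys_a, prompts) != external_test(sys_b, prompts)


if __name__ == "__main__":
    prompts = ["Hello", " Are you conscious? "]
    print(distinguishable(SystemA(), SystemB(), prompts))
```

Whatever set of prompts is chosen, `distinguishable` depends only on the transcripts, which is the limitation of external diagnosis described above.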

In short: to understand a system implies the possibility of constructing it, with all of its functions. But construction does not imply understanding; since the intended functions, like the generation of conscious thoughts, cannot be experimentally verified, we cannot say with certainty that they are in place, nor that we understand them.

8 Discussion

It is argued in this work that consciousness cannot be explained or understood, since it is an emergent property of the brain. To understand how emergence can be realized in a system we invented the thought experiment of the Jumping robot. Although jumping per se is well known to us (there are, for example, certain types of robots that can jump), there was no way that we could coordinate this particular robot’s limbs, joints and muscles to produce stable jumping behaviour. Left to its own devices, it nevertheless found a way to jump, partially by learning from its earlier attempts. The property of being able to jump emerged. Similarly, we are well acquainted with consciousness as a phenomenon, as well as with its low-level basis, the neurons, but we find that we are epistemologically unable to compose an overall theory of consciousness from their behaviour.

What, then, is the basic obstacle to designing the robot to jump and to designing a neural system to be conscious? By analyzing the behaviour of the low-level components of these systems we have found that no theory can be constructed to determine the future of the combined, high-level systems. Their time dynamics for individual scenarios can indeed be found by starting them from different initial conditions, but each high-level end state will come as a complete surprise. The high-level property that we wish to design for, like jumping or being conscious, cannot be linked to the initial states of the low-level systems. Thus emergence results, rendering understanding of high-level properties impossible.
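The practical difficulty of linking end states to initial states can be illustrated with a minimal Python sketch. It is an assumption-laden toy and not the Jumping robot or the neuron model of this work: it uses the logistic map with the illustrative parameter \(r = 3.9\), and the function names, threshold and step limit are hypothetical choices. Two runs are started from initial conditions that differ by a very small amount, and the sketch reports the first step at which they differ by more than the chosen threshold.

```python
def logistic_step(x, r):
    """One step of the illustrative nonlinear map x -> r * x * (1 - x)."""
    return r * x * (1.0 - x)


def first_divergence_step(x0, delta, r=3.9, threshold=0.1, max_steps=500):
    """Return the first step at which two runs, started at x0 and x0 + delta,
    differ by more than threshold, or None if that never happens within
    max_steps. All default values are illustrative assumptions."""
    x, y = x0, x0 + delta
    for step in range(1, max_steps + 1):
        x = logistic_step(x, r)
        y = logistic_step(y, r)
        if abs(x - y) > threshold:
            return step
    return None


if __name__ == "__main__":
    # Two starting points that agree to ten decimal places.
    print(first_divergence_step(0.2, 1e-10))
    # Identical starting points never separate: the map is deterministic.
    print(first_divergence_step(0.2, 0.0))
```

Each individual run is fully determined by its starting value, yet in this toy a tiny difference in the initial condition is enough for the later states to become unrelated, so that an end state can be found only by running the scenario itself.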

The line of reasoning relies on the definitions of emergence and reduction employed. Both these concepts can be defined differently. While efforts have been made to align the definitions used with those frequently used in the literature, it will be of interest to see whether alternative definitions may change the conclusions of this work.

The theoretical approach has not been to explain why the subjective per se is irreducible to the physical. Instead we show that the methodological tools which are required for such a reduction, namely models of neurons for establishing the function of cerebral neural networks, are fundamentally insufficient because of the complexity of these networks.

One may ask: how does this work relate to the Gestalt psychological approach to understanding consciousness? In Gestalt psychology, attempts are made to explain consciousness primarily from a phenomenal, first-person perspective (Epstein and Hatfield 1994; Smith 2018). By use of the so-called Principle of psychophysical isomorphism it is assumed, as in the present work, that there is a correlation between conscious experience and cerebral, or neuronal, activity. Classical reductionist science and analytic philosophy, however, attempt to find a solution to the mind–body problem that would bridge the explanatory gap mainly through third-person studies of physiological and electrochemical processes in the brain. Although physical reductionists do not view psychological theorizing as problematic, they do find the psychological approach redundant (Epstein and Hatfield 1994). In physical reductionism, anything that can be predicted or explained psychologically can also be predicted or explained through physiology alone.

Gestalt psychology thus attempts to find a solution to the mind–body problem which differs from that of physical reductionists. Wertheimer’s basic principles of perceptual organization (Wertheimer 1938), which are central to Gestalt psychology, can indeed be expressed using less information than the phenomena they represent, but they are typically descriptive rather than explanatory, in the sense that they have the character of independent laws rather than being reductionistically derived from low-level phenomena or properties. Some Gestalt psychologists do, however, see a possibility of ultimately relating these internal principles reductionistically to external low-level physiological properties.

The present work is essentially neutral with respect to both the physicalistic and phenomenal approaches. It is found that regardless of whether a reductionist physicalist or a phenomenological perspective is assumed, fundamental difficulties will arise. These are found when searching for a correlation between phenomenal states of consciousness at the high level and the extremely complex neuronal activity at the low level. We have argued that any such reduction, be it epistemological or ontological, would require an information storage and handling capacity that is not available in the universe.

Since non-reductive physicalism is consistent with the present analysis, there are similarities with Davidson’s theory of Anomalous monism (Davidson 1970). The Anomalism Principle of this theory claims that there are no strict laws on the basis of which mental events can be predicted or explained by other events. The present work provides an explanation for the non-existence of such laws.

9 Conclusion

In this work we have argued for non-reductive physicalism; mental states supervene on physical states but cannot be reduced to them. In a physicalist analysis of the mind–body problem, resting on results from mathematics and physics, the concepts of algorithmic information theory and emergence are used to argue that the problem is unsolvable. The vast neural complexity of the brain is the basic obstacle; from a thought experiment it is shown that even a much simpler but related nonlinear system may exhibit epistemologically emergent properties. Reductionistic understanding of consciousness is thus not possible. Neuroscience will continue to make progress (we will almost certainly find, for example, the cognitive centres that are active during certain stimuli or thought processes, and we may even be able to construct conscious systems), but emergent cognitive phenomena like qualia, feelings or introspection cannot be expressed in a theory. The ‘explanatory gap’ cannot be bridged.

We furthermore argue that consciousness is ontologically emergent; there is no possibility, even in principle, of reducing its characteristic properties to the low-level phenomena on which it is based. The limited quantum mechanical information and computational capacities of the world present insurmountable obstacles. A basic example of ontological emergence, featuring less complexity than consciousness, is discussed, namely the oxygen affinity of the protein myoglobin. The main argument is that if the properties of a complex system, resulting from, for example, long-term evolution, can only be represented by the evolution of the system itself, that is, if nature cannot accommodate a reduced representation of the system, then the system features ontologically emergent properties. Without an expressible relation to its constituent low-level components, consciousness in a way comes as a surprise to nature.

Several arguments of the present work could be developed further. The ambition here has been to sketch some of the consequences for the mind–body problem when analyzed using the tools of algorithmic information theory, emergence and quantum mechanics.

References

Beer RD (1993) A dynamical systems perspective on agent-environment interaction. Artif Intell 72:173–215

Block N (1995) On a confusion about the function of consciousness. Behav Brain Sci 18:227–247

Broad CD (1925) Mind and its place in nature. Routledge & Kegan Paul, London

Bunge M (2014) Emergence and convergence—qualitative novelty and the unity of knowledge. University of Toronto Press, Toronto

Carnap R (1950) Logical foundations of probability. University of Chicago Press, Chicago

Chaitin GJ (1987) Algorithmic information theory. Cambridge University Press, Cambridge

Chalmers DJ (1995) Facing up to the problem of consciousness. J Conscious Stud 2:200–219

Chalmers DJ (2006) Strong and weak emergence. In: Davies P, Clayton P (eds) The re-emergence of emergence: the emergentist hypothesis from science to religion. Oxford University Press, Oxford

Corning PA (2012) The re-emergence of emergence, and the causal role of synergy in emergent evolution. Synthese 185:295–317

Davidson D (1970) Mental events, reprinted in Essays on actions and events. Clarendon Press, Oxford, pp 207–224

Davies PCW (2004) Emergent biological principles and the computational properties of the universe. Complexity 10:11–15

Epstein W, Hatfield G (1994) Gestalt psychology and the philosophy of mind. Philos Psychol 7(2):163–181

Francescotti RM (2007) Emergence. Erkenntnis 67:47–63

Gambini R, Lewowicz L, Pullin J (2015) Quantum mechanics, strong emergence and ontological non-reducibility. Found Chem 17:117–127

Gittis AH, Moghadam SH, du Lac S (2010) Mechanisms of sustained high firing rates in two classes of vestibular nucleus neurons: differential contributions of resurgent Na, Kv3, and BK currents. J Neurophysiol 104:1625–1634

Goldstein J (2013) Re-imagining emergence: part 1. Emerg Complex Organ 15(2):77–103

Houweling AR, Brecht M (2007) Behavioural report of single neuron stimulation in somatosensory cortex. Nature 451:65

Kim J (1999) Making sense of emergence. Philos Stud 95:3–36

Kim J (2006) Emergence: core ideas and issues. Synthese 151:547–559

Klemm WR (2010) Free will debates: simple experiments are not so simple. Adv Cogn Psychol 6:47–65

Levine J (1983) Materialism and qualia: the explanatory gap. Pac Philos Q 64:354–361

Libet B (1993) The neural time factor in conscious and unconscious events. In: Beck GR, Marsh J (eds) Novartis foundation symposia. Ciba Foundation, Basel, pp 123–146

Lloyd S (2002) Computational capacity of the universe. Phys Rev Lett 88:237901-1-4

Locke J (1690) An essay concerning human understanding. Clarendon Press, Oxford

Luisi PL (2002) Emergence in chemistry: chemistry as the embodiment of emergence. Found Chem 4:183–200

Maimon G, Assad JA (2009) Beyond Poisson: increased spike-time regularity across primate parietal cortex. Neuron 62:426–440

McGinn C (1989) Can we solve the mind-body problem? Mind 98:349–366

McIntyre L (2007) Emergence and reduction in chemistry: ontological or epistemological concepts? Synthese 155:337–343

McLaughlin B (1992) The rise and fall of British emergentism. In: Beckermann A et al (eds) Emergence or reduction?. Walter de Gruyter, Berlin

Nagel T (1974) What is it like to be a bat? Philos Rev 83:435–450

O’Connor T, Wong HY (2015) Emergent properties. In: Zalta EN (ed) The Stanford encyclopedia of philosophy (summer 2015 edition). https://plato.stanford.edu/archives/sum2015/entries/properties-emergent/. Accessed 24 Feb 2019

Paré D, Gaudreau H (1996) Projection cells and interneurons of the lateral and basolateral amygdala: distinct firing patterns and differential relation to theta and delta rhythms in conscious cats. J Neurosci 16:3334–3350

PhilPapers (2019) https://philpapers.org/browse/philosophy-of-mind

Schröder J (1998) Emergence: non-deducibility or downwards causation? Philos Q 48:433–452

Silberstein M, McGeever J (1999) The search for ontological emergence. Philos Q 49:182–200