Abstract

In this paper the concept of belief distorted Nash equilibrium (BDNE) is introduced. It is a new concept of equilibrium for games in which players have incomplete, ambiguous or distorted information about the game they play, especially in a dynamic context. The distortion of a player’s information concerns how the other players and/or an external system changing in response to players’ decisions are going to react to his/her current decision. The concept of BDNE combines a broader concept of pre-BDNE, which reflects the fact that players best respond to their beliefs, with self-verification of those beliefs. The relations between BDNE and Nash or subjective equilibria are examined, as well as the existence and properties of BDNE. Examples are presented, including models of a common ecosystem, a repeated Cournot oligopoly, a repeated Minority Game or local public good with congestion effect, and a repeated Prisoner’s Dilemma.


1 Introduction

It is claimed that Nash equilibrium is the most important concept in noncooperative game theory.

Indeed, it is the only solution concept that can be sustained whenever rational players have full information about the game in which they participate: the number of players involved, their strategy sets and payoff functions and, in the case of dynamic games, also the dynamics of the underlying system.

Nash equilibrium ceases to be a solution whenever at least one of the above is unknown, especially if the information about it is distorted.

On the other hand, incomplete, ambiguous or even distorted information is an important contemporary problem.

There are many attempts to extend the concept of Nash equilibria to make it work in the case of incomplete information: among others, Bayesian equilibria introduced by Harsanyi (1967), correlated equilibria introduced by Aumann (1974, 1987), \(\Delta \)-rationalizability introduced by Battigalli and Siniscalchi (2003), self-confirming equilibria introduced by Fudenberg and Levine (1993) and subjective equilibria introduced by Kalai and Lehrer (1993, 1995).

These and some other equilibrium concepts tackling the problem of incomplete information are described in a more detailed way in “Other concepts of equilibria taking incomplete, ambiguous or distorted information into account” section in Appendix 3.

All those concepts are based on two obvious basic assumptions:

- (i) players best respond to their beliefs;

- (ii) beliefs cannot be contradicted during the play (or repeated play) if players choose strategies maximizing their expected payoffs given those beliefs.

However, those concepts are not defined in a form applicable to dynamic games.

Moreover, none of those concepts besides subjective equilibrium takes into account that players’ information about the game can be not only incomplete, but severely distorted. For example, they do not cover situations in which even the number of players is not known correctly. Besides, none of those concepts copes with ambiguity.

To fill in this gap, the concept of belief distorted Nash equilibrium (BDNE) is introduced. It is defined in a form which can be applied both to repeated and dynamic games. It takes into account players’ information which can be incomplete, ambiguous or even distorted. Nevertheless, it is based on the same two underlying assumptions which are fulfilled by all the incomplete information equilibrium concepts. It seems to be especially appropriate for games with many players.

Another interesting property of BDNE is that this concept reflects the character of scientific modelling in problems of a special structure, which appears often, especially in socio-economic or cognitive contexts.

In that class of problems there are three aspects:

- optimization of players without full objective knowledge about parameters which influence the result of this optimization,

- the fact that decision makers try to build (or adopt from some sources) a model of this unknown reality in order to use it for their optimization, and

- the fact that the future behaviour of observable data which are used to verify the model is a consequence of players’ choices (which, in turn, are consequences of the initial choice of the model).

In such a case a model of reality is proposed (in our paper this scientific model is referred to as beliefs), players best respond to their beliefs, and data collected afterwards are influenced by previous choices of the players (e.g. prices in a model of stock exchange, state of the resource in ecological economics models). Obviously, if, in light of collected data, the model seems correct, there is no need to change it. Consequently, false knowledge about reality may persist and people may believe that they play a Nash equilibrium.

1.1 Motivation

The most illustrative example of the phenomenon we want to focus on is a real-life problem: the ozone hole problem caused by emission of chlorofluorocarbons (freons, CFCs).

After the discovery of the ozone hole, its cause and its possible future consequences for the global society, ecologists suggested decreasing the emission of CFCs, among other things by ceasing to use deodorants containing them.

Making such a decision seemed highly unreasonable for each individual since his/her influence on the global emission of CFCs and, consequently, the ozone layer is negligible.

Nevertheless, ecologists made some consumers believe that their influence is not negligible. Those consumers reduced, among other things, their usage of deodorants containing CFCs.

Afterwards, the global level of emission of CFCs decreased and the ozone hole stopped expanding and, as it is claimed now, it started to shrink.

Whatever the mechanism is, the belief “if I decide not to use deodorants containing freons, then the ozone hole will shrink” represented by some consumers can be regarded by them as verified by the empirical evidence.

Since the actual mathematics behind the ozone hole problem is quite complicated (among others, the equation determining the size of the ozone layer contains a delay), in this paper we use another ecological example, Example 1, in which the result of human decisions may be disastrous to the whole society, but which has much simpler dynamics. The example illustrates the concept before the detailed formal definitions in the general framework.

The paper is organized as follows: before the formal introduction of the model (in Sect. 3) and concepts (in Sect. 4), a non-technical introduction is given in Sect. 2. The notation in Sect. 2 is reduced to the minimal level at which all the reasoning from Sect. 4, devoted to definitions and properties of the concepts, and Sect. 5, with the analysis of examples, can be understood. Readers who are not mathematically oriented, and readers who want to become acquainted with the concepts first, can read it instead of, or before, the formal introduction.

The concepts of pre-BDNE and BDNE are compared to Nash equilibria and subjective equilibria in Sect. 4, just after definitions and theorems about properties and existence.

The concepts introduced in this paper are presented and compared to another concepts of equilibria—Nash and subjective equilibria—using the following examples.

-

1. A simple ecosystem constituting a common property of its users. We assume that the number of users is large and that every player may have problems with assessing his/her marginal influence on the aggregate extraction and, consequently, the future trajectory of the state of the resource.

This example first appears before formal introduction of the model and concepts as a clarifying example in Sect. 2.2, and all the concepts are explained and their interesting properties described using this example.

-

2. A repeated Minority Game being a modification of the El Farol problem. There are players who choose each time whether to stay at home or to go to the bar. If the bar is overcrowded, then it is better to stay at home, the less it is crowded the better it is to go.

The same model can describe also problems of so called local public goods like e.g. communal beach or public transportation, in which there is no possibility of exclusion while congestion decreases the utility of consumption.

-

3. A model of a market describing either Cournot oligopoly or competitive market. These two cases appear as one model also in Kalai and Lehrer (1995) because of the same reason as in this paper: players may have problems with assessing their actual share in the market and, therefore, their actual influence on prices and we check the possibility of obtaining e.g. competitive equilibrium in Cournot oligopoly as the result of distortion of information.

-

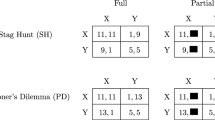

4. A repeated Prisoner’s Dilemma. At each stage each of two players assesses possible future reactions of the other player to his/her decision to cooperate or defect at this stage.

Other obvious examples for which the BDNE concept seems natural are e.g. taboos in primitive societies, formation of traffic jams, stock exchange and our motivating example of the ozone hole problem together with all the media campaigns concerning it.

For clarity of exposition, the more technical proofs, a detailed review of other concepts of equilibria with incomplete, distorted or ambiguous information, and an introduction to and discussion of the specific form of the payoff function and of games with many players are moved to the Appendices.

2 A non-technical introduction of the model and concepts

This section is intended for the readers who want to become acquainted with the general idea of the BDNE concept and the way it functions. It contains a brief extract from definitions and notation necessary to follow the way of reasoning and it explains everything using a clarifying example.

In this section technical details are skipped.

The full formal introduction of the mathematical model is in Sect. 3.

2.1 The model

Before detailed introduction of the model we briefly describe it.

We consider a discrete time dynamic game (which includes also the class of repeated games as a simpler case) with the set of players \(\mathbb {I}\), possibly infinite, the set of players’ decisions \(\mathbb {D}\) with sets of available decisions of each player changing during the game (which is described by a correspondence \(D_{i}\)) and the payoff functions of players \(\Pi _{i}\) being the sum of discounted instantaneous payoffs \(P_{i}\) and a terminal payoff \(G_{i}\).

Since the game is dynamic, it is played in a system \(\mathbb {X}\), whose rules of behaviour are described by a function \(\phi \) dependent on a finite-dimensional statistic of profiles of players’ strategies u, e.g. the aggregate or average of the profile.

The same statistic is, together with player’s own strategy and trajectory of the state variable, sufficient information to calculate payoff of this player.

Past states and statistics and current state are known to the players at each time instant. More specific information about other players’ strategies is not observable at any stage.

This ends the definition of the “usual” game.

Besides, at each time instant, given observations of states and statistics (called histories) of the game, players formulate their beliefs \(B_{i}\) of future behaviour of states and statistics (called future histories), being sets of trajectories of these parameters regarded as possible.

For conciseness of notation, actual and future histories are coded in one object, called history and denoted usually by H.

Beliefs define anticipated payoffs \(\Pi _{i}^e(t,\cdot )\) being the sum of the actual instantaneous payoff at time t and the guaranteed (with respect to the belief) value of future optimal payoff \(V_{i}\). The guaranteed payoff is the infimum over future histories in the belief, of the optimal future payoff for such a history, denoted by \(v_{i}\).

The word “anticipated” is used in the colloquial meaning of “expected”, while the word “expected” is not used in order to avoid associations with expected value with respect to some probability distribution, since we want to concentrate on ambiguity. A discussion of why such forms of beliefs and payoffs are considered is contained in “The form of beliefs and anticipated payoffs considered in this paper” section in Appendix 3.

This completes the definition of game with distorted information.

At a Nash equilibrium, in the usual game, every player maximizes his/her payoff given strategy choices of the remaining players—he/she best responds to his/her information about behaviour of the remaining players, and this information is perfect.

At a pre-BDNE, analogously, every player best responds to his/her information about the behaviour of the remaining players, while this information can be partial, distorted or ambiguous. This is represented by maximization of anticipated payoff at each stage of the game.

At a BDNE, beliefs cannot be falsified during the subsequent play—a profile is a BDNE if it is a pre-BDNE and at every stage of the game, the belief set formed at that stage contains the actual history of the game.
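The three notions above can be sketched for a finite belief set. This is only an illustration, not the formal definition of Sect. 4: the function names, the labels used for histories, the numbers, and the assumption that the future optimal payoff `v_i` is already discounted are all hypothetical choices made here.

```python
import math


def anticipated_payoff(current_payoff, belief, v_i):
    """Current payoff plus the guaranteed future optimal payoff.

    For a finite belief set the infimum over future histories is a minimum.
    """
    futures = [v_i(h) for h in belief]
    if not futures:
        raise ValueError("belief sets are assumed to be nonempty")
    return current_payoff + min(futures)


def best_responses(decisions, current_payoff, belief_of, v_i):
    """Decisions maximizing the anticipated payoff (the pre-BDNE condition
    for one player at one stage). The belief may depend on the decision."""
    values = {a: anticipated_payoff(current_payoff(a), belief_of(a), v_i)
              for a in decisions}
    best = max(values.values())
    return [a for a in decisions if values[a] == best]


def not_falsified(beliefs_along_play, actual_history):
    """Self-verification check: every belief set formed during the play
    contains the history that actually occurred."""
    return all(actual_history in belief for belief in beliefs_along_play)


# Hypothetical toy data: two decisions and two possible future histories.
future_value = {"sustained": 10.0, "depleted": -math.inf}
beliefs = {"low": {"sustained"}, "high": {"sustained", "depleted"}}
stage_payoff = {"low": 1.0, "high": 2.0}

chosen = best_responses(["low", "high"], stage_payoff.get,
                        beliefs.get, future_value.get)
```

In this toy data the decision `"high"` pays more now, but its belief set contains a history with future payoff \(-\infty \), so only `"low"` maximizes the anticipated payoff. The choice becomes part of a BDNE only if the history that follows lies in the belief set that was used.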

2.2 A clarifying example

Since the original ozone hole example has too complicated dynamics to illustrate the concepts used in this paper, we use another global ecological problem with simpler dynamics. We consider an example of exploitation of a common ecosystem from Wiszniewska-Matyszkiel (2005) in a new light of games with distorted information.

In this example the natural renewable resource is crucial for the society of its users, therefore exhausting it results in starvation.

Example 1

Common ecosystem. Let us consider a model of a common ecosystem exploited by a large group of users, which is modelled as a game with either n players with the normalized counting measure or the set of players represented by the unit interval with the Lebesgue measure. This measure is denoted by \(\lambda \), and the set of players by \(\mathbb {I}\).

As discussed, among others, in Wiszniewska-Matyszkiel (2005) and in Appendix 2, this form of measuring players makes games with various numbers of players comparable: more players in such games does not mean that additional consumers of the resource entered the game, but that, with the same large number of actual consumers, the decision making process became more decentralized.

As the time set, we consider the set of nonnegative integers \(\mathbb {N}\).

In the simplest open loop formulation, the aim of player i is to maximize, over the set of his/her strategies \(S_i{:}\,\mathbb {N} \rightarrow \mathbb {R}_+\) (Footnote 1)

under constraints

The discount rate fulfils \(r>0\).

The trajectory of the state variable X corresponding to the whole strategy profile S is described by

for \(\zeta >0\), called the rate of regeneration, and the initial state is \(X(0)=\bar{x}>0\).

The function \(u^S\) in the definition of X represents the aggregate extraction at various time instants, therefore it is defined by

We also consider more complicated closed loop strategies (dependent on the state variable) or history dependent strategies. In those cases the formulation changes in the obvious way.

Generally, the model makes sense for \(c\le (1+\zeta )\), but the most interesting results are obtained whenever \(c=1+\zeta \). Therefore, we consider this case.

In this case, the so called “tragedy of the commons” is present in a very drastic form: in the continuum of players case, the resource is totally destroyed in finite time, which never happens in any case with finitely many players.

The following results are proven in Wiszniewska-Matyszkiel (2005).

Proposition 1

Let us consider the set of players being the unit interval with the Lebesgue measure.

No dynamic profile such that a set of players of positive measure get finite payoffs is an equilibrium, and every dynamic profile yielding the destruction of the system at finite time (Footnote 2) is a Nash equilibrium. At every Nash equilibrium, the payoff of a.e. player is \(-\infty \).

Proposition 2

Let us consider the set of players being the n-element set with the normalized counting measure.

- (a) No dynamic profile yielding payoff equal to \(-\infty \) for any player is a Nash equilibrium.

- (b) The profile S defined by \(S_{i}(x)=\bar{z}_{n}\cdot x\) for \(\bar{z}_{n}=\max \left( \frac{nr(1+r)}{1+nr},\zeta \right) \) is the only symmetric closed loop Nash equilibrium.
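For a feel of the magnitudes in Proposition 2 (b), the extraction coefficient can be evaluated directly. The sketch below only transcribes the stated formula; the function names and the parameter values in the comments are illustrative assumptions, not values from the paper.

```python
def z_bar(n: int, r: float, zeta: float) -> float:
    """Coefficient of the symmetric closed loop equilibrium in Proposition 2 (b):
    max( n r (1 + r) / (1 + n r), zeta )."""
    if n < 1 or r <= 0 or zeta <= 0:
        raise ValueError("expected n >= 1, r > 0 and zeta > 0")
    return max(n * r * (1.0 + r) / (1.0 + n * r), zeta)


def equilibrium_extraction(x: float, n: int, r: float, zeta: float) -> float:
    """Decision S_i(x) = z_bar_n * x of each player at state x."""
    return z_bar(n, r, zeta) * x


# Illustrative parameters (assumed): n = 10 players, r = 0.05, zeta = 0.02.
coefficient = z_bar(10, 0.05, 0.02)
```

With these assumed parameters the first argument of the maximum dominates; for a small number of players and a large regeneration rate the coefficient equals \(\zeta \) instead.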

If players have full information about the game they play, then the only profiles that can be sustained in the game are Nash equilibria.

This fact is really disastrous in the continuum of players case, while we cannot expect destruction of the resource in any case with finitely many players.

There are natural questions, which also appear in the context of the ozone hole problem.

- What happens if players (the actual decision makers) do not know the actual game they play: the equation for the state trajectory, other players’ payoff functions or extracting capacities, the number of players, or even their own marginal influence on the state variable?

- What if they are susceptible to various campaigns?

- What if they can only formulate some beliefs, not necessarily compatible with the actual structure of the game?

- Can we always expect that false models of reality are falsified by real data if players use those beliefs as the input models of their optimization?

Obviously, in numerous games, especially games with many players, we cannot expect that every player can observe the strategies of the other players, or even that he/she knows exactly their strategy sets and payoff functions and has the capability to process those data.

In this example with many participants, as well as in the ozone hole problem with many millions of consumers, the expectation that the only observable variables besides a player’s own strategy are the aggregate extraction/emission and the state variable seems justified. Moreover, the equation describing the behaviour of the state trajectory may be unknown to the general public, and it is susceptible to various campaigns (Footnote 3). In such a case objective knowledge about the phenomenon considered, even if available, is not necessarily treated as the only possible truth.

The questions the concept of BDNE can answer in the case of ecological problems like this example or the ozone hole problem, are:

- How dangerous can such campaigns be? What is the result of formulating beliefs according to them?

- Is it possible that, because players optimize given such beliefs, those beliefs are verified by the play? In such a case those beliefs may be regarded by players as scientifically verified.

- Is it possible to construct a campaign or make some information confidential to save the resource even in the case when complete information results in its destruction? Can we obtain the n-player equilibrium, not resulting in destruction of the resource, in our continuum of players game?

- Can such beliefs be regarded as confirmed by the play?

To illustrate the process of calculation of a BDNE or checking self-verification of beliefs we choose the n-player game, time instant t and players’ beliefs that they are negligible but with different opinions about sustainability of the resource:

- (a) beliefs of each player are “my decision has no influence on the state of the resource and at the next stage the resource will be depleted”;

- (b) beliefs of each player are “my decision has no influence on the state of the resource and it is possible that at the next stage the resource will be depleted”;

- (c) beliefs of each player are “my decision has no influence on the state of the resource and the level of the resource will be always as it is now”.

This process consists of the following steps.

- At each stage t of the game, starting from 0, each player formulates beliefs and calculates the anticipated payoff function \(\Pi ^e(S,t)\) given the history and his/her decision \(S_i(t)\) (for simplicity of notation we write the open loop form of the profile).

It is the sum of the actual current payoff and the discounted optimal payoff that a player can obtain if the worst possible scenario happens.

In both (a) and (b), the anticipated payoff is independent of the player’s own current decision and it is equal to \(- \infty \).

In (c), the guaranteed future payoff is also independent of the player’s own decision and the anticipated payoff is equal to \(\ln (S_i(t))+(1+r)^{-t}\sum _{i=1}^{\infty } \ln ((1+\zeta )X(t))(1+r)^{-i+1}\), which is finite and whose second part is independent of the player’s own decision.

- A part of the pre-BDNE profile corresponding to players’ choices at stage t is chosen: a static profile such that, for every player, his/her current decision maximizes the anticipated payoff. It is a static Nash equilibrium problem.

In cases (a) and (b), every static profile can be chosen as maximizing anticipated payoff, therefore, every profile is a pre-BDNE for these beliefs.

In case (c), the only optimal choice of a player is \((1+\zeta )X(t)\), therefore the only pre-BDNE for this belief is defined by \(S_i(t)=(1+\zeta )X(t)\) for every t and i.

- After repeating this for all time instants, we have profiles that are pre-BDNE.

- Self-verification is checked.

In (a), only the profiles with \(S_i(0)=(1+\zeta )X(0)\) result in \(X(1)=0\), and, consequently, \(X(t)=0\) for all \(t>0\). In fact, there is only one open loop profile with this property. Any other profile (closed loop, history dependent) is equivalent to it in the sense that decisions at each stage of the game are identical: at stage 0 everything is consumed while afterwards nothing is left.

So, in case (a), there is a single BDNE up to equivalence of open loop forms.

These beliefs have the property which we shall call potential self-verification: it may happen that they will not be falsified during the play.

The same profile as in (a) is a BDNE in (b).

At this stage, we note that beliefs in case (b) are not precisely defined unless it is stated which other options are regarded as possible.

If we additionally assume that e.g. the history corresponding to the n-players equilibrium profile is always in the belief set, then it is another BDNE.

If we expand the belief set and assume that every future scenario is possible, in this specific case, we obtain that every profile is a BDNE (Footnote 4) and that beliefs are perfectly self-verifying.

In (c), at every pre-BDNE profile, \(X(1)=0\), which was not in the belief set at time 0. Therefore there is no BDNE and the beliefs are not even potentially self-verifying.
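The steps above can be traced numerically for beliefs (a) and (c) at stage 0 of the n-player game with a symmetric profile. This is a rough sketch under stated assumptions: the transition `next_state` is a hypothetical stand-in chosen only so that aggregate extraction \((1+\zeta )X\) exhausts the resource, as the text states, and it may differ from the state equation of the paper; the value of `ZETA`, the grid search and all function names are likewise illustrative.

```python
import math

ZETA = 0.1  # assumed regeneration rate, for illustration only


def next_state(x, u, zeta=ZETA):
    """ASSUMED transition (not taken from the paper): the only property used
    is that aggregate extraction (1 + zeta) * x leaves nothing behind."""
    return max(0.0, (1.0 + zeta) * x - u)


def log_payoff(s):
    """Instantaneous payoff ln(s), with ln(0) treated as minus infinity."""
    return math.log(s) if s > 0 else -math.inf


def pre_bdne_decision_c(x, zeta=ZETA, grid=101):
    """Belief (c): the future part of the anticipated payoff does not depend
    on the current decision, so the player maximizes ln(s) over the
    available decisions, here a grid on [0, (1 + zeta) * x]."""
    cap = (1.0 + zeta) * x
    candidates = [cap * (k / (grid - 1)) for k in range(grid)]
    return max(candidates, key=log_payoff)


def belief_c_verified(x0, zeta=ZETA):
    """Belief (c) predicts X(1) = X(0); compare with the realized X(1).
    With the normalized counting measure, the aggregate of a symmetric
    profile equals the common decision."""
    s = pre_bdne_decision_c(x0, zeta)
    return next_state(x0, s, zeta) == x0


def belief_a_verified(x0, common_decision, zeta=ZETA):
    """Belief (a) predicts X(1) = 0. Every decision is a best response
    (the anticipated payoff is minus infinity), so only this check matters."""
    return next_state(x0, common_decision, zeta) == 0.0
```

Under belief (c) the best response is full extraction, which drives the state to zero and contradicts the belief that the resource stays at its current level. Under belief (a) only full extraction makes the predicted depletion come true, so that is the only profile passing the check.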

For this example it can be also easily proven that the Nash equilibria from Proposition 1 are pre-BDNE for a special form of beliefs, called perfect foresight and, therefore, BDNE (see Theorem 4).

However, we are more interested in BDNE that are not Nash equilibria.

An interesting problem is to find a belief for which a BDNE does not lead to the destruction of the system even in the continuum of players case.

It turns out that a wide class of profiles at which the system is not destroyed at finite time, including the n-player Nash equilibrium, is a BDNE in the continuum of players game. Moreover, to make it a BDNE in the game with a continuum of players, it is enough to educate a small subset of the set of players, using apparently counter-intuitive beliefs (Propositions 10 and 12 b).

This result has an obvious interpretation. In the case of the real life ozone hole problem, ecological education made some people sacrifice their instantaneous utility in order to protect the system. This may happen even if people constitute a continuum. It is enough that they believe their decisions really have influence on the system.

What is even more promising, the beliefs used to obtain such a non-destructive profile as a BDNE in the continuum of players case are perfectly self-verifying (Proposition 12 a).

Since precise formulations and proofs of these results are laborious, they are moved to “Common ecosystem” section in Appendix 1.

The opposite situation—obtaining a destructive continuum of players Nash equilibrium in the n-player game—as we see from the above analysis, is also possible.

If people believe that their influence on the system is like in the case when they constitute a continuum, then they behave like a continuum. Consequently, they may destroy the system in finite time at a pre-BDNE (Proposition 11). Moreover, such a destructive profile is a BDNE (Proposition 12 c).

3 Formulation of the model

In this section we introduce the model formally.

Readers who are not interested in mathematical precision can get a general orientation in the model by reading Sect. 2.

The main environment in which we work is a game with distorted information, denoted by \({\mathfrak {G}}^{\text {dist}}\).

It is built on a structure of a dynamic game \({\mathfrak {G}}\) with set of players \(\mathbb {I}\), discrete time set \(\mathbb {T}\), set of possible states \(\mathbb {X}\) and payoffs \(\Pi _i\), being a discounted sum of instantaneous payoffs \(P_i\) and, in the case of finite time horizon, terminal payoff \(G_i\). An important parameter in the game is some profile statistic u, observable by the players and representing all the information about the profile that influences trajectory of the state variable and all information about the profile besides player’s own strategy which influences his/her payoff.

The difference between the \({\mathfrak {G}}^{\text {dist}}\) and \({\mathfrak {G}}\) concerns payoffs.

In \({\mathfrak {G}}^{\text {dist}}\) at each stage players formulate beliefs \(B_i\), based on observation of the trajectory of the state variable and past statistics, and those beliefs are used to calculate anticipated payoffs \(\Pi _i^e\). Consequently, we obtain a sequence of subgames with distorted information \({\mathfrak {G}}^{\text {dist}}_{t,H}\).

However, the elements to define both kinds of games are almost all the same.

For the objective, dynamic game \({\mathfrak {G}}\) we need a tuple of the following objects \(((\mathbb {I},\mathfrak {I},\lambda ),\mathbb {T}, \mathbb {X}, (\mathbb {D},\mathcal {D}),\{D_{i}\}_{i\in \mathbb {I}},U,\phi , \{P_{i}\}_{i\in \mathbb {I}},\{G_{i}\}_{i\in \mathbb {I}},\{r_{i}\}_{i\in \mathbb {I}})\), while to define a game with distorted information \({\mathfrak {G}}^{\text {dist}}\) associated with it we need \(((\mathbb {I},\mathfrak {I},\lambda ),\mathbb {T}, \mathbb {X}, (\mathbb {D},\mathcal {D}),\{D_{i}\}_{i\in \mathbb {I}},U,\phi , \{P_{i}\}_{i\in \mathbb {I}},\{G_{i}\}_{i\in \mathbb {I}},\{r_{i}\}_{i\in \mathbb {I}},\{B_{i}\}_{i\in \mathbb {I}})\).

With this general description, we can start a detailed definition of both kinds of games.

The set of players is denoted by \(\mathbb {I}\). In order that the definitions of the paper could encompass not only games with finitely many players, but also games with a continuum of players, we introduce a structure on \(\mathbb {I}\) consisting of a \(\sigma \)-field \(\mathfrak {I}\) of its subsets and a measure \(\lambda \) on it. More information about games with a measure space of players can be found in Appendix 2.

The game is dynamic, played over a discrete time set \(\mathbb {T}\), without loss of generality \(\mathbb {T}=\{t_{0},t_{0}+1,\ldots ,T\}\) or \(\mathbb {T} =\{t_{0},t_{0}+1,\ldots \}\), which, for uniformity of notation, is treated as \(T=+\infty \). For the same reason, we introduce also the symbol \(\overline{\mathbb {T}}\) denoting \(\{t_{0},t_{0}+1,\ldots ,T+1\}\) for finite T and equal to \(\mathbb {T}\) in the opposite case.

The game is played in an environment (or system) with the set of states \(\mathbb {X}\). The state of the system (state for short) changes over time in response to players’ decisions, constituting a trajectory X, whose equation is stated in the sequel. The set of all potential trajectories—functions \(X{:}\,\overline{\mathbb {T}}\rightarrow \mathbb {X}\)—is denoted by \(\mathfrak {X}\).

At each time t, given state x, player i chooses a decision from his/her set of available actions/decisions \(D_{i}(t,x) \subset \mathbb {D}\), where \(\mathbb {D}\) is the set of (potential) actions/decisions of players. These available decision sets of player i constitute a correspondence of available decision sets of player i, \(D_{i}{:}\,\mathbb {T}\times \mathbb {X}\multimap \mathbb {D}\), while all available decision sets constitute a correspondence of available decision sets, \(D{:}\,\mathbb {I}\times \mathbb {T}\times \mathbb {X}\multimap \mathbb {D}\) with nonempty values. We also need a \(\sigma \)-field of subsets of \(\mathbb {D}\), denoted by \(\mathcal {D}\).

For any time t and state x, we call any measurable function \(\delta {:}\,\mathbb {I}\rightarrow \mathbb {D}\) which is a selection from the correspondence \(D(\cdot ,t,x)\) a static profile available at t and x. The set of all static profiles available at t and x is denoted by \(\Sigma (t,x)\). We assume that all these sets of static profiles are nonempty (Footnote 5).

The union of all the sets of static profiles available at various t and x is denoted by \(\Sigma \).

The definitions of a strategy (dynamic strategy) and a profile (dynamic profile) appear in the sequel, since first we have to define the domains of these functions.

The influence of a whole static profile \(\delta \) on the state variable is via its statistic.

Without loss of generality, the same statistic is the only parameter besides player’s own action, influencing the value of his/her payoff.

Formally, a statistic is a function \(U{:}\,\Sigma \times \mathbb {X}\mathop {\rightarrow }\limits ^{\text {onto}}\mathbb {U}\subset \mathbb {R}^{m}\), defined by \(U(\delta ,x)=\left[ \int _{\mathbb {I}}g_{k}(i,\delta (i),x)d\lambda (i)\right] _{k=1}^{m}\), for a collection of functions \(g_{k}{:}\,\mathbb {I}\times \mathbb {D}\times \mathbb {X}\rightarrow \mathbb {R}\), which are \(\mathfrak {I}\otimes \mathcal {D}\)-measurable for every \(x\in \mathbb {X}\) and every k (Footnote 6).

The set \(\mathbb {U}\) is called the set of profile statistics.

If \(\Delta {:}\,\mathbb {T}\rightarrow \Sigma \) represents choices of profiles at various time instants and X is a trajectory of the system, then we denote by \(U(\Delta ,X)\) the function \(u{:}\,\mathbb {T}\rightarrow \mathbb {U}\) such that \(u(t)=U(\Delta (t),X(t))\). The set of all such functions is denoted by \(\mathfrak {U}\).

Given a function \(u{:}\,\mathbb {T}\rightarrow \mathbb {U}\), representing the statistics of profiles chosen at various time instants, the system evolves according to the equation \(X(t+1)=\phi (X(t),u(t))\), with the initial condition \(X(t_{0})=\bar{x}\). We call such a trajectory corresponding to u and denote it by \(X^{u}\). If \(u=U(\Delta ,X^{u})\), where \(\Delta {:}\,\mathbb {T}\rightarrow \Sigma \) represents a choice of static profiles at various time instants, then, by a slight abuse of notation, we denote the trajectory corresponding to u by \(X^{\Delta }\) and call it corresponding to \(\Delta \) and instead of \(U(\Delta ,X^{\Delta })\), we write \(U(\Delta )\)—the statistic of \(\Delta \).
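For finitely many players with the normalized counting measure, the statistic and the state equation above can be sketched as follows. The integral becomes an average over players; the function names, the list-based encoding of an open loop sequence of static profiles, and the example functions `g` and `phi` are assumptions made for illustration.

```python
def statistic(profile, x, g_list):
    """U(delta, x) for n players with the normalized counting measure:
    each integral of g_k(i, delta(i), x) becomes an average over players."""
    n = len(profile)
    return [sum(g(i, profile[i], x) for i in range(n)) / n for g in g_list]


def trajectory(x0, profiles, g_list, phi):
    """Iterate X(t+1) = phi(X(t), u(t)) with u(t) = U(Delta(t), X(t)).

    `profiles` lists the static profiles chosen at consecutive time instants.
    Returns the states X(t0), ..., and the statistics u(t0), ...
    """
    states = [x0]
    stats = []
    for delta in profiles:
        u = statistic(delta, states[-1], g_list)
        stats.append(u)
        states.append(phi(states[-1], u))
    return states, stats


# Hypothetical example: m = 1, the statistic is the average decision,
# and the state simply decreases by that average.
def average_decision(i, d, x):
    return d


def example_phi(x, u):
    return x - u[0]


states, stats = trajectory(10.0, [[1.0, 3.0], [2.0, 2.0]],
                           [average_decision], example_phi)
```

The two static profiles differ, but both have average decision 2, so a player who observes only the statistic and the state cannot tell them apart.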

At each time instant during the game, players get instantaneous payoffs. The instantaneous payoff of player i is a function \(P_{i}{:}\,\mathbb {D}\times \mathbb {U}\times \mathbb {X}\rightarrow \mathbb {R}\cup \{-\infty \}\).

Besides, in the case of finite time horizon, players get also terminal payoffs (after termination of the game), defined by functions \(G_{i}{:}\,\mathbb {X}\rightarrow \mathbb {R}\cup \{-\infty \}\). For uniformity of notation, we take \(G_{i}\equiv 0\) in the case of infinite time horizon.

Players observe some histories of the game, but not the whole profiles. At time t they observe the states X(s) for \(s\le t\) and the statistics u(s) of static profiles for time instants \(s<t\). Therefore the set \(\mathbb {H}_{t}\) of observed histories at time t equals \(\mathbb {X}^{t-t_{0}+1}\times \mathbb {U}^{t-t_{0}}\). To simplify further notation, we introduce the set of all, possibly infinite, histories of the game \(\mathbb {H}_{\infty }=\mathbb {X}^{T-t_{0}+2}\times \mathbb {U}^{T-t_{0}+1}\). For such a history \(H\in \mathbb {H}_{\infty }\) we denote by \(H|_{t}\) the actual history observed at time t.

Given an observed history \(H_{t}\in \mathbb {H}_{t}\), players formulate their suppositions about future values of u and X, depending on their decision a made at time t.

This is formalized as a multivalued belief correspondence of player i, \(B_{i}{:}\,\mathbb {T}\times \mathbb {D}\times \mathbb {H}_{\infty }\multimap \mathbb {H}_{\infty }\), with nonempty values.

Obviously, we assume that beliefs \(B_{i}(t,a,H)\) are identical for histories H with identical observed history \(H|_{t} \) and that for all \(H^{\prime }\in B_{i}(t,a,H)\) we have \(H^{\prime }|_{t}=H|_{t}\). Note also that in fact, the value of u(t) is redundant in the definition of beliefs at time t, since as input we need u(s) for \(s<t\) only, while as output we are interested only in u(s) for \(s>t\). Therefore, for simplicity of further definitions of self-verification, we take the largest set of possible values of u(t), namely \(\mathbb {U}\). Those assumptions are only consequences of using a simplifying notational convention, which allows us to encode both observed histories and beliefs in one element of \(\mathbb {H}_{\infty }\).

Besides the above assumptions, we do not impose a priori any other constraints, like an equivalent of Bayesian updating.

This means that we consider a wide class of belief correspondences as inputs to our model. We shall return to the question of how players update beliefs, and whether some beliefs cannot be regarded ex post as justified, in Sect. 4.3, when we introduce the self-verification of beliefs.

The next stage is the definition of players’ strategies in the games—both the actual dynamic game and the game with distorted information. We consider very compound closed loop strategies—dependent on time instant, state and the actual history of the game at this time instant. Formally, a (dynamic) strategy of player i is a function \(S_{i}{:}\,\mathbb {T}\times \mathbb {X}\times \mathbb {H}_{\infty }\rightarrow \mathbb {D}\) such that for each time t, state x and history H, we have \(S_{i}(t,x,H)\in D_{i}(t,x)\) and dependence of \(S_i(t,x,H)\) on H is restricted to the actual history observed at time t, i.e. \(H|_t\).

Since players’ beliefs in the game with distorted information depend on observed histories of the game, such a definition may encompass also dependence on beliefs.

Such choices of players’ strategies constitute a function \(S{:}\,\mathbb {I}\times \mathbb {T}\times \mathbb {X}\times \mathbb {H}_{\infty }\rightarrow \mathbb {D}\).Footnote 7 The set of all strategies of player i is denoted by \(\mathfrak {S}_{i}\).

For simplicity of further notation, for a choice of strategies \(S=\{S_{i}\}_{i\in \mathbb {I}}\), we consider the open loop form of it, \(S^{OL}{:}\,\mathbb {T}\rightarrow \Sigma \).

It is defined by \(S_{i}^{OL}(t)=S_{i}(t,X(t),H^S) \) for \(H^S\) denoting the history of the game resulting from choosing S.

Open loop form is well defined, whenever the history is well defined.Footnote 8

Therefore we restrict the notion of a (dynamic) profile (of players’ strategies) to choices of strategies such that for all t, the function \(S_{\cdot }^{OL}(t)\) is a static profile available at \(t\in \mathbb {T}\) and \(X^S(t)\) (\(=X^{S^{OL}}(t))\).

The set of all dynamic profiles is denoted by \(\mathbf {\Sigma }\).

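If the players choose a dynamic profile S, then the actual payoff of player i, \(\Pi _{i}{:}\,\mathbf {\Sigma }\rightarrow \overline{\mathbb {R}}\), can be written in a way dependent only on the open loop form of the profile:

\[\Pi _{i}(S)=\sum _{t=t_{0}}^{T}\frac{P_{i}\left( S_{i}^{OL}(t),U\left( S^{OL}(t)\right) ,X^{S}(t)\right) }{(1+r_{i})^{t-t_{0}}}+\frac{G_{i}\left( X^{S}(T+1)\right) }{(1+r_{i})^{T+1-t_{0}}},\]

where \(r_{i}>0\) is a discount rate of player i.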

Since we assumed that the instantaneous and terminal payoffs can have infinite values, we add an assumption that for all S the value \(\Pi _{i}(S) \) is well defined—which holds if e.g. \(P_{i}\) are bounded from above or for every profile S, the functions \(t \mapsto P_{i}\left( S_{i}^{OL}(t),U\left( S^{OL}(t)\right) ,X^{S}(t)\right) \) are bounded from above by a polynomial function whenever \(G_{i}\left( X^{S}(T+1)\right) \) can attain \(-\infty \).

However, players do not know the whole profile, therefore, instead of the actual payoff at each future time instant, they can use in their calculations the anticipated payoff functions, \(\Pi _{i}^{e}{:}\,\mathbb {T}\times \mathbf {\Sigma }\rightarrow \overline{\mathbb {R}}\), corresponding to their beliefs at the corresponding time instants.

This function for player i is defined by

where \(V_{i}{:}\,\overline{\mathbb {T}}\times \left( \mathfrak {P}(\mathbb {H}_{\infty })\backslash \{\emptyset \}\right) \rightarrow \overline{\mathbb {R}}\) (the function of guaranteed anticipated value or guaranteed anticipated payoff) represents the present value of the minimal future payoff given his/her belief correspondence and assuming player i chooses optimally in the future.

Formally, for time t and belief set \(\mathbb {B}\in \mathfrak {P}(\mathbb {H}_{\infty })\backslash \{\emptyset \}\), we define

where the function \(v_{i}{:}\,\overline{\mathbb {T}}\mathbb {\times H}_{\infty }\rightarrow \overline{\mathbb {R}}\) is the present value of the future payoff of player i along a history under the assumption that he/she chooses optimally in the future:
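As a hedged reconstruction of the three definitions described in words above, one may write the formulas below. They are an assumption consistent with the verbal descriptions and with the discounted sum in the note on time consistency attached to Theorem 4, not necessarily the author's exact formulas. In particular, evaluating \(V_{i}\) at \(t+1\) and discounting it by one period are assumptions.

\[\Pi _{i}^{e}(t,S)=P_{i}\left( S_{i}^{OL}(t),U\left( S^{OL}(t)\right) ,X^{S}(t)\right) +\frac{V_{i}\left( t+1,B_{i}\left( t,S_{i}^{OL}(t),H^{S}\right) \right) }{1+r_{i}},\]

\[V_{i}(t,\mathbb {B})=\inf _{(X,u)\in \mathbb {B}}v_{i}(t,(X,u)),\]

\[v_{i}(t,(X,u))=\sup _{d{:}\,\mathbb {T}\rightarrow \mathbb {D},\ d(s)\in D_{i}(s,X(s))\text { for }s\ge t}\ \sum _{s=t}^{T}\frac{P_{i}(d(s),u(s),X(s))}{(1+r_{i})^{s-t}}+\frac{G_{i}\left( X(T+1)\right) }{(1+r_{i})^{T+1-t}}.\]

Under this reading, the supremum in \(v_{i}\) expresses optimal future behaviour of player i along a fixed history (X, u), while the infimum in \(V_{i}\) selects the worst history regarded as possible, which gives the guaranteed value.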

Note that such a definition of anticipated payoff mimics the Bellman equation for calculating players’ best responses to the strategies of the others. For various versions of this equation see e.g. Bellman (1957), Blackwell (1965) or Stokey et al. (1989).

It is also worth emphasizing that the construction of the function \(V_i\) is analogous to the approach proposed by Gilboa and Schmeidler (1989).Footnote 9

This completes the definition of the game with distorted information \({\mathfrak {G}}^{\text {dist}}\).

We can also introduce the symbol \({\mathfrak {G}}^{\text {dist}}_{t,H}\) denoting the game with the set of players \(\mathbb {I}\), the sets of their strategies \(D_{i}(t,X(t))\) and the payoff functions \(\bar{\Pi }_{i}^{e}(t,H,\cdot ){:}\,\Sigma \rightarrow \overline{\mathbb {R}}\) defined by \(\bar{\Pi }_{i}^{e}(t,H,\delta )=\Pi _{i}^{e}(t,S)\) for a profile S such that \(S(t)=\delta \) and \(H^{S}|_{t}=H|_{t}\) (note that the dependence of \(\Pi _{i}^{e}(t,\cdot )\) on the profile is restricted to its static profile at time t only, and the history observed at time t, therefore the definition does not depend on the choice of specific S).

We call these \({\mathfrak {G}}^{\text {dist}}_{t,H}\) subgames with distorted information.

4 Nash equilibria, belief distorted Nash equilibria and subjective Nash equilibria

One of the basic concepts in game theory, Nash equilibrium, assumes that every player, or almost every in the case of large games with a measure space of players, chooses a strategy which maximizes his/her payoff given the strategies of the remaining players.

Notational convention In order to simplify notation, we need the following abbreviation: for a profile S and a strategy d of player i, the symbol \(S^{i,d}\) denotes the profile such that \(S_{i}^{i,d}=d\) and \(S_{j}^{i,d}=S_{j}\) for \(j\ne i\).

Definition 1

A profile S is a Nash equilibrium if for a.e. \(i\in \mathbb {I}\) and for every strategy d of player i, we have \(\Pi _{i}(S)\ge \Pi _{i}(S^{i,d})\).

4.1 Pre-BDNE: towards BDNE

The assumption that a player knows the strategies of the remaining players, or at least the future statistics of these strategies which influence his/her payoff, is not fulfilled in many real-life situations. Moreover, even details of the other players’ payoff functions or available strategy sets are sometimes not known precisely, while other players’ information at a specific situation is usually unknown. Therefore, given their beliefs, players can only maximize their anticipated payoffs.

Definition 2

A profile S is a pre-belief distorted Nash equilibrium (pre-BDNE for short), if for a.e. \(i\in \mathbb {I}\), every strategy d of player i and every \(t\in \mathbb {T}\), we have \(\Pi _{i}^{e}(t,S)\ge \Pi _{i}^{e}(t,S^{i,d})\).

With notation introduced in Sect. 3, a profile S is a pre-BDNE in \({\mathfrak {G}}^{\text {dist}}\) if at each time t, the static profile \(S^{OL}(t)\) is a Nash equilibrium in \({\mathfrak {G}}^{\text {dist}}_{t,H^{S}}\).
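The reformulation above suggests a simple computational check for games with finitely many players and finite decision sets. The sketch below is only an illustration under those assumptions. The names `is_static_nash`, `is_pre_bdne`, `STAGE` and `stage_payoff` are hypothetical, and the anticipated payoff is supplied as a callable that already incorporates the history observed along the profile, which a unilateral deviation at time t does not change.

```python
from typing import Callable, Dict, Hashable, List, Sequence

Player = Hashable
Action = Hashable
StaticProfile = Dict[Player, Action]


def is_static_nash(delta: StaticProfile,
                   action_sets: Dict[Player, Sequence[Action]],
                   payoff: Callable[[Player, StaticProfile], float]) -> bool:
    """True if no player gains by a unilateral change of his/her decision."""
    for i in delta:
        base = payoff(i, delta)
        for a in action_sets[i]:
            deviated = dict(delta)
            deviated[i] = a  # the static profile delta^{i,a}
            if payoff(i, deviated) > base:
                return False
    return True


def is_pre_bdne(open_loop: List[StaticProfile],
                action_sets_at: Callable[[int], Dict[Player, Sequence[Action]]],
                anticipated_payoff: Callable[[int, Player, StaticProfile], float]) -> bool:
    """Check that each static profile is a Nash equilibrium of the subgame at t."""
    for t, delta in enumerate(open_loop):
        stage = lambda i, d, t=t: anticipated_payoff(t, i, d)
        if not is_static_nash(delta, action_sets_at(t), stage):
            return False
    return True


# Toy data: a Prisoner's Dilemma stage payoff keyed by (own, other),
# with anticipated payoffs equal to current payoffs (no future term).
STAGE = {(1, 1): 3, (0, 0): 1, (1, 0): 0, (0, 1): 5}


def stage_payoff(t: int, i: int, d: StaticProfile) -> float:
    return STAGE[(d[i], d[1 - i])]


ACTIONS = lambda t: {0: [0, 1], 1: [0, 1]}
defect_always = is_pre_bdne([{0: 0, 1: 0}] * 3, ACTIONS, stage_payoff)
cooperate_always = is_pre_bdne([{0: 1, 1: 1}] * 3, ACTIONS, stage_payoff)
```

The point of the design is that the loop over time instants contains no fixed point in a function space: each stage is a separate static problem once the anticipated payoffs are given.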

This formulation reveals that, compared to looking for a Nash equilibrium in a dynamic game, finding a pre-BDNE, given beliefs, is simpler.Footnote 10

First, we have an obvious equivalence with Nash equilibria, since distortion of beliefs concerns only the future.

Remark 1

In a one shot game, i.e. for \(T=t_{0}\) and \(G\equiv 0\), a profile is a pre-BDNE if and only if it is a Nash equilibrium.

Now it is time to state an existence result for games with a nonatomic space of players.

Theorem 3

Let \((\mathbb {I},\mathfrak {I},\lambda )\) be a nonatomic measure space and let \(\mathbb {D}= \mathbb {R}^{n}\) with the \(\sigma \)-field of Borel subsets.

Assume that for every t, x, H and for almost every i, the following continuity assumptions hold: the sets \(D_{i}(t,x)\) are compact, the functions \(P_{i}(a,u,x)\) and \(V_{i}(t,B_{i}(t,a,H))\) are upper semicontinuous in (a, u) jointlyFootnote 11 while for every a, they are continuous in u, and for all k, the functions \(g_{k}(i,a,x)\) are continuous in a for \(a\in D_{i}(t,x)\).

Assume also that for every t, x, u and H, the following measurability assumptions hold: the graph of \(D_{\cdot }(t,x)\) is measurable and the following functions defined on \(\mathbb {I}\times \mathbb {D}\) are measurable \((i,a)\mapsto P_{i}(a,u,x)\), \(r_{i}\), \(V_{i}(t,B_{i}(t,a,H))\) and \(g_{k}(i,a,x)\) for every k.

Moreover, assume that for every k and x, there exists an integrable function \(\Gamma {:}\,\mathbb {I}\rightarrow \mathbb {R}\) such that for every \(a\in D_{i}(t,x)\), we have \(\left| g_{k}(i,a,x)\right| \le \Gamma (i)\).

Under these assumptions there exists a pre-BDNE for B.

Proof

It is a conclusion from one of the theorems on the existence of pure strategy Nash equilibria in games with a continuum of players: Wiszniewska-Matyszkiel (2000) Theorem 3.1 or Balder (1995) Theorem 3.4.1, applied to the sequence of games \({\mathfrak {G}}^{\text {dist}}_{t,H}\) for any history H such that \(H|_t\) is the actual history of the game observed at time t. \(\square \)

Theorem 3 is analogous to the existence results of pure strategy Nash equilibria in games with a continuum of players, which hold under quite weak assumptions.

Now we are going to show some properties of pre-BDNE for a special kind of belief correspondence.

Definition 3

A belief correspondence \(B_{i}\) of player i is the perfect foresight at a profile S if for all t, it fulfils \(B_{i}(t,S_{i}^{OL}(t),H^{S})=\{H^{S}\}\).

Theorem 4

Let \((\mathbb {I},\mathfrak {I},\lambda )\) be a nonatomic measure space and let players’ payoffs be bounded for a.e. player.

Consider a profile \(\bar{S}\) with statistic of its open loop form u and the corresponding trajectory X.

(a) Let \(\bar{S}\) be a Nash equilibrium profile.

If B is the perfect foresight at a profile \(\bar{S}\) and at the profiles \(\bar{S}^{i,d}\) for a.e. i and every strategy d of player i, then for all t and a.e. i, \(\bar{S} _{i}^{OL}(t)\in \mathop {\mathrm{{Argmax}}}_{a\in D_{i}(t,X(t))}\bar{\Pi }_{i}^{e}(t,H^{\bar{S}},(\bar{S}^{OL}(t))^{i,a})\) Footnote 12 and \(\bar{S}_{i}^{OL}|\{t+1,\ldots ,T\}\) is consistent with the results of player’s i optimizations used in the definition of \(v_{i}\) at consecutive time instants.Footnote 13

(b) If \(\bar{S}\) is a Nash equilibrium, then it is a pre-BDNE for a belief correspondence being the perfect foresight at \(\bar{S}\) and at all profiles \(\bar{S}^{i,d}\).

(c) Let \(\bar{S}\) be a pre-BDNE at a belief B. If B is the perfect foresight at a profile \(\bar{S}\) and at the profiles \(\bar{S}^{i,d}\) for a.e. i and every strategy d of player i, then for a.e. i, choices of player i are consistent with the results of his/her optimization used in the definition of \(v_{i}\) at consecutive time instants.

(d) If a profile \(\bar{S}\) is a pre-BDNE for a belief B being the perfect foresight at this \(\bar{S}\) and \(\bar{S}^{i,d}\) for a.e. player i and every strategy d of player i, then it is a Nash equilibrium.

For transparency of exposition, the proof is in Appendix 1.

Theorem 4 says that in games with a continuum of players, pre-BDNE for the perfect foresight beliefs are equivalent to Nash equilibria. Moreover, we have time consistency: when a player solves the optimization problem that determines his/her optimal guaranteed value of future payoff at any stage, he/she obtains the strategy which is actually played at the pre-BDNE.

Remark 2

A Nash equilibrium profile \(\bar{S}\) may be also represented as a pre-BDNE for a belief which is not the perfect foresight at \(\bar{S}\) or \(\bar{S}^{i,d}\).

Now we formulate an equivalence theorem for repeated games.

Theorem 5

Let \({\mathfrak {G}}^{\text {dist}}\) be a repeated game with a belief correspondence not dependent on players’ own strategies, in which payoffs and anticipated payoffs are bounded for a.e. player.

(a) If \((\mathbb {I},\mathfrak {I},\lambda )\) is a nonatomic measure space, then a profile S is a pre-BDNE if and only if it is a Nash equilibrium.

(b) Every profile S with strategies of a.e. player being independent of histories is a pre-BDNE if and only if it is a Nash equilibrium.

Proof of this theorem is also in Appendix 1.

4.2 The final concept of BDNE

It seems obvious that the definition of an equilibrium should combine the equilibrating and payoff maximization conditions defining pre-BDNE with some kind of self-verification of beliefs: the concept of pre-BDNE lacks a condition that our observation along the profile does not contradict our beliefs.

The most obvious way of measuring self-verification of beliefs is by accuracy of prediction of observable variables. Sometimes, in evolutionary game theory, players look only at payoffs and if they obtain payoffs as assumed, they do not have any incentive to change their choices and beliefs.

In this paper we consider the former measure: accuracy of prediction of the observable variables, i.e. the statistic and the state.

The main reason is that in reality, even if a player obtains payoffs as he/she assumed, the fact that he/she observes reality which was previously regarded as impossible, is an incentive to change beliefs.

With this idea of self-verification we can end up with the definition of BDNE.

Definition 4

(a) A profile \(\bar{S}\) is a belief-distorted Nash equilibrium (BDNE) for a collection of beliefs \(B=\{B_{i}\}_{i\in \mathbb {I}}\) if \(\bar{S}\) is a pre-BDNE for B and for a.e. i and every t, \(H^{\bar{S}} \in B_{i}(t,\bar{S}_{i}^{OL}(t),H^{\bar{S}})\).

(b) A profile \(\bar{S}\) is a belief-distorted Nash equilibrium (BDNE) if there exists a collection of beliefs \(B=\{B_{i}\}_{i\in \mathbb {I}}\) such that \(\bar{S}\) is a BDNE for B.
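The self-verification condition in part (a) can be illustrated for a single player in a finite horizon setting. The sketch below is a toy illustration only. The representation of a history as a pair of tuples (states, statistics), the names `beliefs_not_falsified`, `perfect_foresight` and `collapse_belief`, and all numbers are hypothetical.

```python
from typing import Callable, Collection, Sequence, Tuple

# A history is coded as (X(0..T+1), u(0..T)); time starts at t0 = 0.
History = Tuple[Tuple[float, ...], Tuple[float, ...]]
Belief = Callable[[int, int, History], Collection[History]]


def beliefs_not_falsified(realized: History,
                          own_actions: Sequence[int],
                          belief: Belief) -> bool:
    """Check that the realized history lies in the belief set at every time t."""
    return all(realized in belief(t, a, realized)
               for t, a in enumerate(own_actions))


def perfect_foresight(t: int, a: int, h: History) -> Collection[History]:
    """Belief set consisting of the realized history only."""
    return {h}


def collapse_belief(t: int, a: int, h: History) -> Collection[History]:
    """Hypothetical belief: the observed part of h is kept (including u(t),
    which is redundant at time t), and zeros are predicted afterwards."""
    xs, us = h
    predicted_x = xs[:t + 1] + (0.0,) * (len(xs) - t - 1)
    predicted_u = us[:t + 1] + (0.0,) * (len(us) - t - 1)
    return {(predicted_x, predicted_u)}


realized_history: History = ((1.0, 1.0, 1.0), (0.5, 0.5))
actions = [1, 1]
ok_foresight = beliefs_not_falsified(realized_history, actions, perfect_foresight)
ok_collapse = beliefs_not_falsified(realized_history, actions, collapse_belief)
```

In this toy case the perfect foresight belief is never contradicted, while the belief predicting a collapse is falsified by the first observed state after the decision.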

We can state the following equivalence results being immediate consequences of the corresponding equivalence results for pre-BDNE.

Theorem 6

Theorems 4 and 5 and Remark 1 remain true if we replace pre-BDNE by BDNE.

Proof

In Remark 1 there is nothing to prove.

Equivalence in Theorems 4 and 5 is also immediate—if we take the perfect foresight beliefs, then a profile S is a pre-BDNE for perfect foresight if and only if it is a BDNE for perfect foresight, since for perfect foresight the actual history is in the belief set. \(\square \)

Corollary 7

In games with a continuum of players with payoffs of a.e. player bounded, every Nash equilibrium is a BDNE.

This implies existence of a BDNE in games with a continuum of players in every case whenever there exists a Nash equilibrium. Existence results and properties of equilibria for such games are proven, among others, in Wiszniewska-Matyszkiel (2002) for open loop information structure. However, by Wiszniewska-Matyszkiel (2014a), in discrete time dynamic games with a continuum of players the existence of an open loop Nash equilibrium is equivalent to existence of a closed loop Nash equilibrium.

Obviously, we are interested in the existence of BDNE which are not Nash equilibria. An obvious question is whether it is always possible to construct beliefs, with some minimal properties, such that a game without pure strategy Nash equilibria has a BDNE. The answer is negative and a counterexample is stated in Proposition 9.

After defining the concept and examining its properties, we compare BDNE with subjective Nash equilibrium—to the best of the author’s knowledge the only solution concept which can deal with seriously distorted information, like our concept of BDNE.

Since in our approach we consider only pure strategy profiles, we have to restrict attention to pure strategy subjective equilibria.

First of all, subjective equilibria are used only in the environment of repeated games, or one stage games that potentially can be repeated. Therefore, the concept of BDNE can be used in a wider environment than subjective equilibria.

If we compare both kinds of equilibria in the same environment of repeated games, without state variables, there are other differences.

First, note that the concept of “consequences” of actions, used in the definition of subjective equilibria, corresponds to our statistic function and beliefs about its values.

The first difference is that they have the form of probability distribution, while here it is the set of histories regarded as possible, which are deterministic.Footnote 14

We still can compare these concepts in the case when both expectations are trivial: a probability distribution concentrated at a point in the subjective equilibrium approach and a singleton as the set of possible histories in our approach. In this case, we can see the second main difference.

In the subjective equilibrium approach, beliefs, encoded by the environmental response function, which describes how the environment is going to react to our decision at the considered time instant, concern only that time instant.

Moreover, decisions at each time instant are taken without foreseeing the future, and beliefs (as in our paper based on histories) apply to the present stage of the game only. So at each stage players just optimize given their beliefs about behaviour of the statistic of the profile at this stage and do not think about future consequences of their moves.

So, in the subjective equilibrium approach, subsequent stage games are separated in the sense that a player does not take into account the fact that results of his/her current decision can influence future behaviour of the other players, unlike in most repeated games. Consequently, there is no risk in experimenting in order to discover the structure of the game other than the loss of current payoff. The repeated Prisoner’s Dilemma (Example 4) shows how erroneous such an approach can be.

In Example 3, we present a situation when direct comparison between subjective equilibria and pre-BDNE is possible (the case in which beliefs are concentrated at one point) first considered in Kalai and Lehrer (1995). In this case there are profiles of strategies that constitute pure strategy subjective equilibria and they are not even pre-BDNE.

Example 4 illustrates the opposite situation: there are BDNE which cannot constitute subjective equilibria without assuming beliefs which are totally against the logic of the game, since we have simultaneous moves in stage games. A pair of cooperative strategies can be a subjective equilibrium in the repeated game only if each of the players assumes that the opponent is going to punish his/her choice of defective strategy immediately, at the same stage of the game.

Besides, in the subjective equilibrium theory, there is a condition that beliefs are not contradicted by observations, i.e. that frequencies of various results correspond to the assumed probability distributions.

Similarly, in the definition of BDNE we introduce a criterion of self-verification saying that beliefs are not contradicted by observations—here in the sense that the actually observed history is always in the set of beliefs.

4.3 Self-verification of beliefs

Besides the concept of BDNE, we can also consider separately self-verification as a property of beliefs.

Assume that players, given some beliefs, choose a profile being a pre-BDNE for them.

We can consider potential and perfect self-verification—stating, correspondingly, that “it is possible that beliefs will never be falsified”, or “for sure, beliefs will never be falsified”.

Definition 5

(a) A collection of beliefs \(B=\{B_{i}\}_{i\in \mathbb {I}}\) is perfectly self-verifying if there exists a BDNE for B and for every pre-BDNE \(\bar{S}\) for B, for a.e. \(i\in \mathbb {I}\) and a.e. t, we have \(H^{\bar{S}}\in B_{i}(t,\bar{S}_{i}^{OL}(t),H^{\bar{S}})\).

(b) A collection of beliefs \(B=\{B_{i}\}_{i\in \mathbb {I}}\) is potentially self-verifying if there exists a pre-BDNE \(\bar{S}\) for B such that for a.e. \(i\in \mathbb {I}\) and a.e. t, we have \(H^{\bar{S}}\in B_{i}(t,\bar{S}_{i}^{OL}(t),H^{\bar{S}})\).

(c) A collection of beliefs \(\{B_{i}\}_{i\in \mathbb {J}}\) of a set of players \(\mathbb {J}\) is perfectly self-verifying against beliefs of the other players \(\{B_{i}\}_{i\in \mathbb {I}\backslash \mathbb {J}}\) if there exists a BDNE for \(\{B_{i}\}_{i\in \mathbb {I}}\) and for every pre-BDNE \(\bar{S}\) for \(\{B_{i}\}_{i\in \mathbb {I}}\), for a.e. \(i\in \mathbb {J}\) and a.e. t, we have \(H^{\bar{S} }\in B_{i}(t,\bar{S}_{i}^{OL}(t),H^{\bar{S}})\).

(d) A collection of beliefs \(\{B_{i}\}_{i\in \mathbb {J}}\) of a set of players \(\mathbb {J}\) is potentially self-verifying against beliefs of the other players \(\{B_{i}\}_{i\in \mathbb {I}\backslash \mathbb {J}}\) if there exists a pre-BDNE \(\bar{S}\) for \(\{B_{i}\}_{i\in \mathbb {I}}\) such that for a.e. \(i\in \mathbb {J}\) and a.e. t, we have \(H^{\bar{S}}\in B_{i}(t,\bar{S}_{i}^{OL}(t),H^{\bar{S}})\).

Remark 3

(a) Every pre-BDNE for perfectly self-verifying beliefs is a BDNE.

(b) If there exists a BDNE for B, then B are potentially self-verifying.

After introducing these concepts, we can return to the discussion about properties of beliefs.

Beliefs which are not even potentially self-verifying, cannot be regarded as rational in any situation.

On the other hand, beliefs which are perfectly self-verifying will never be falsified if players optimize according to them.

5 Examples

Now we illustrate the notions of pre-BDNE, self-verification and BDNE by the examples mentioned in Sect. 1.1. We also compare them with Nash equilibria and, if possible, with subjective equilibria.

In those examples we start by calculating pre-BDNE for various forms of beliefs with special focus on those which are inconsistent with the “common sense” implied by the objective dynamic game structure. Afterwards we check self-verification of those beliefs and check whether the pre-BDNE found are BDNE.

Example 2

Repeated El Farol bar problem with many players or public goods with congestion

Here we present a modification of the model first stated by Brian Arthur (1994) as the El Farol bar problem, which allows us to analyse both the continuum and the finitely many players cases.

There are players who choose each time whether to stay at home, represented by 0, or to go to the bar, represented by 1. If the bar is overcrowded, then it is better to stay at home; the less crowded it is, the better it is to go.

The same model can also describe problems of so-called local public goods, where congestion decreases the utility of consuming the good.

We consider the space of players being either the unit interval with the Lebesgue measure or the set \( \{ 1,\ldots ,n \} \) with the normalized counting measure. The game is repeated, therefore dependence on state variables is trivial, so we skip them in the notation.

The statistic of a static profile \(\delta \) is \(U(\delta )=\int _{\mathbb {I}}\delta (i)d\lambda (i)\).

In our model, we reflect results of congestion by instantaneous payoff function \(P_{i}(0,u)=0\) and \(P_{i}(1,u)=\frac{1}{2}-u\).

To make the payoffs finite, we assume that either T is finite or \(r_i>0\). Besides, we take \(G \equiv 0\).

Proposition 8

Consider the continuum of players case and beliefs independent of player’s own choice a.

The set of BDNE coincides with the set of pure strategy Nash equilibria and the set of pure strategy subjective equilibria.

Moreover, at every pre-BDNE, for all t, \(u(t)=\frac{1}{2}\).

Proof

The last fact is obvious, the first one is a consequence of Theorem 5, so the only fact that remains to be proved is that at every subjective equilibrium \(u(t)=\frac{1}{2}\).

The environmental response function assigns a probability distribution describing a player’s beliefs about u(t). All players who believe that the event \(u(t)>\frac{1}{2}\) has greater probability than \(u(t)<\frac{1}{2}\) choose 0, while those who believe in the opposite inequality choose 1; the remaining players may choose either of the two strategies. If the number of players choosing 0 is greater than the number of those choosing 1, then the event \(u(t)<\frac{1}{2}\) happens more frequently than \(u(t)>\frac{1}{2}\), which contradicts the beliefs of the two groups of players who choose 0. \(\square \)
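The fact called obvious in the proof can be spelled out as a short worked argument. It is a sketch under the assumptions of Proposition 8: beliefs are independent of the player’s own choice a, so only the current payoff depends on a, and the space of players is nonatomic, so a single player does not change u(t).

\[u(t)<\tfrac{1}{2}\ \Rightarrow \ P_{i}(1,u(t))=\tfrac{1}{2}-u(t)>0=P_{i}(0,u(t))\ \Rightarrow \ \text {a.e. player chooses }1\ \Rightarrow \ u(t)=1,\]

\[u(t)>\tfrac{1}{2}\ \Rightarrow \ P_{i}(1,u(t))=\tfrac{1}{2}-u(t)<0=P_{i}(0,u(t))\ \Rightarrow \ \text {a.e. player chooses }0\ \Rightarrow \ u(t)=0.\]

Both cases lead to a contradiction, so \(u(t)=\frac{1}{2}\), and at this value every player is indifferent between 0 and 1.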

Proposition 9

Consider the n-player game and players’ beliefs independent of player’s own action.

(a) Let n be odd. The set of BDNE coincides with the set of pure strategy Nash equilibria and the set of pure strategy subjective equilibria and they are empty.

(b) Let n be even. The set of Nash equilibria coincides with the set of pure strategy subjective Nash equilibria. At every equilibrium, for all t, \(u(t)=\frac{1}{2}\).

Proof

Obtaining \(u(t)=\frac{1}{2}\) in (a) is impossible for players using pure strategies. The rest of the proof is analogous to the proof of Proposition 8. \(\square \)

Example 3

Repeated Cournot oligopoly or competitive market

We consider a model of a market with a strictly decreasing inverse demand function \(p:\mathbb {R}_{+}\rightarrow \mathbb {R}_{+}\) and strictly increasing and convex cost functions of players \(c_{i}{:}\,\mathbb {R}_{+}\rightarrow \mathbb {R}_{+}\).

The set of players—producers of the same product—is either finite with the normalized counting measure in the Cournot oligopoly case, or the interval [0, 1] with the Lebesgue measure in the competitive market case.

The statistic of a static profile \(\delta \) is \(U(\delta )= \int _{\mathbb {I} }\delta (i)d\lambda (i)\).

Therefore, the instantaneous payoff is \(P_{i}(a,u)=p(u)\cdot a-c_{i}(a)\).

The discount rates of all players are identical, with \(r_{i}\) equal to the market interest rate \(r>0\).

Kalai and Lehrer (1995) consider a similar example with n identical players and linear cost functions, and they prove that, besides the Nash equilibrium of the model, i.e. the Cournot equilibrium, there is another subjective equilibrium, in which the competitive equilibrium production level and price are obtained.

It can be easily proved that the opposite situation also takes place: in the continuum-of-players model, in which the competitive equilibrium is the only (up to measure equivalence) Nash equilibrium, the Cournot equilibrium behaviour for n-player oligopoly, constitutes a subjective equilibrium. Indeed, it is enough to make every player believe that the price reacts to his production level as in the n-player oligopoly case.
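The gap between the Cournot and the competitive outcome in the one shot game can be illustrated numerically. The sketch below assumes hypothetical functional forms that are not part of the model above: a linear inverse demand \(p(u)=A-bu\) on the range where it is positive, identical linear costs \(c\cdot a\), and the statistic u equal to the average production, as for the normalized counting measure. All function names and parameter values are illustrative.

```python
def payoff(a: float, others_total: float, n: int,
           A: float, b: float, c: float) -> float:
    """Instantaneous payoff p(u) * a - c * a with u the average production."""
    u = (a + others_total) / n
    return (A - b * u) * a - c * a


def best_response(others_total: float, n: int,
                  A: float, b: float, c: float) -> float:
    """Maximizer of the concave payoff, from the first order condition."""
    return max(0.0, (n * (A - c) - b * others_total) / (2 * b))


def cournot_output(n: int, A: float, b: float, c: float) -> float:
    """Symmetric fixed point of best_response: a = n (A - c) / (b (n + 1))."""
    return n * (A - c) / (b * (n + 1))


def competitive_output(A: float, b: float, c: float) -> float:
    """Production at which the price equals the marginal cost c."""
    return (A - c) / b


n, A, b, c = 4, 10.0, 1.0, 2.0
a_star = cournot_output(n, A, b, c)
reply = best_response((n - 1) * a_star, n, A, b, c)
a_comp = competitive_output(A, b, c)
```

Under these assumed forms each oligopolist produces less than in the competitive equilibrium, and the difference vanishes as n grows, which is the sense in which a belief that the price reacts as in the n-player case changes behaviour in the continuum model.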

This is usually not the case when we consider BDNE, since the distortion of information concerns only the future.

Remark 4

If every player believes that his/her current decision does not influence future prices, then the only BDNE in the n player model is the Cournot-Nash equilibrium while in the continuum of players game—the competitive equilibrium.

Proof

An immediate consequence of Theorem 5—those profiles are the only Nash equilibria in the corresponding one shot games. \(\square \)

Example 4

Repeated Prisoner’s dilemma

We consider infinite time horizon in which a standard Prisoner’s Dilemma game is repeated infinitely many times.

There are two players who have two strategies in each stage game—cooperate coded as 1 and defect coded as 0.

The decisions are made simultaneously, therefore a player does not know the decision of his/her opponent.

If both players cooperate, they get payoffs C, if they both defect, they get payoffs N. If only one of the players cooperates, then he/she gets payoff A, while the defecting one gets R. These payoffs are ranked as follows: \(A<N<C<R\).

Obviously, the strictly dominant pair of defecting strategies (0, 0) is the only Nash equilibrium in one stage games, while a sequence of such decisions constitutes a Nash equilibrium also in the repeated game, as well as a BDNE. We check whether a pair of cooperative strategies can also constitute a BDNE.

Let us consider beliefs of the form “if I defect now, the other player will defect in future for ever, while if I cooperate now, the other player will cooperate for ever”, i.e. “the other player plays grim trigger strategy”.

The pair of grim trigger strategies “cooperate until the first defection of the other player, then defect for ever”, denoted by GT, constitutes a Nash equilibrium if the discount rates are not too large.

However, this does not hold for a pair of simple strategies “cooperate for ever”, denoted by CE.
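The bound on the discount rate for the pair GT can be made explicit by a standard computation. The sketch below is an illustration, not part of the original argument. It assumes discounting by \(1/(1+r)^{t}\) from the first stage, it compares only cooperation for ever with a single defection at the first stage followed by mutual defection, and the function names and numerical payoffs are hypothetical.

```python
def cooperation_value(C: float, r: float) -> float:
    """Present value of C at every stage: sum over t >= 0 of C / (1 + r)**t."""
    return C * (1 + r) / r


def deviation_value(R: float, N: float, r: float) -> float:
    """Present value of R at the first stage and N at every later stage."""
    return R + N / r


def grim_trigger_sustains_cooperation(N: float, C: float, R: float,
                                      r: float) -> bool:
    """True if defecting against GT at the first stage does not pay."""
    return cooperation_value(C, r) >= deviation_value(R, N, r)


def critical_rate(N: float, C: float, R: float) -> float:
    """Largest r for which the comparison above holds: (C - N) / (R - C)."""
    return (C - N) / (R - C)


# Hypothetical payoffs satisfying A < N < C < R (A does not enter here).
N_, C_, R_ = 1.0, 3.0, 5.0
patient = grim_trigger_sustains_cooperation(N_, C_, R_, 0.5)
impatient = grim_trigger_sustains_cooperation(N_, C_, R_, 2.0)
bound = critical_rate(N_, C_, R_)
```

Rearranging \(C(1+r)/r\ge R+N/r\) gives \(r\le (C-N)/(R-C)\), which is the sense in which the discount rate must not be too large. Against CE the same deviation yields R at every stage and is never punished, which is why the pair CE fails to be a Nash equilibrium.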

We construct very simple beliefs which allow such a pair of strategies to be a BDNE. For clarity of exposition, we formulate them in “Repeated Prisoner’s Dilemma” section in Appendix 1 and prove that such a pair of strategies is a BDNE (Proposition 13).

Note that a pair of cooperative strategies can be obtained as a subjective equilibrium only when the environmental response functions of both players describe the decision of the other player at the same stage. This means that we have to assume at least that each player believes that his/her opponent knows his/her decision before making his/her own and immediately punishes defection. This may happen only for the first mover in a sequential, rather than simultaneous, Prisoner’s Dilemma game, which contradicts the basic assumption about the game.

Notes

Where \(\ln 0\) is understood as \(-\infty \).

Which means that \(\exists \bar{t}\quad \forall t>\bar{t}\quad X(t)=0\).

In the case of the ozone hole problem, there was a positive ecological campaign in the media, which made many consumers of deodorants containing freons believe that their potential impact is such that their decision is not negligible.

In the case of the greenhouse effect problem we can observe an opposite campaign—there are many voices saying that there is no global warming, some even saying that scientists writing about it consciously lie.

It is a very rare situation, possible to obtain only because of \(- \infty \) as the guaranteed future payoff, independently of player’s decision.

This holds always in the case of finitely many players, while for infinitely many players non-emptiness can be proved using measurability or analyticity of the graph of \(D(\cdot ,t,x)\) together with some properties of the strategy space and measure \(\lambda \)—see e.g. Wiszniewska-Matyszkiel (2000); it becomes trivial whenever all the sets \(D_{i}(t,x)\) have a nonempty intersection.

In the ozone hole example from the introduction, the statistic may represent the aggregate emission of fluorocarbonates, which can be expressed by one dimensional statistic with g returning player’s individual emission i.e. \(g(i,d,x)=d\). The same statistic function is used in our clarifying example.

Taking such a form of statistic function does not have to be restrictive in games with finitely many players—in that case statistic may be the whole profile, as it is presented in Example 4.

In fact, the second argument of strategy of a player (and, consequently, the third argument of S) is redundant—the state at a current time instant is a part of history. However, we include x implicitly in order to simplify the notation.

Note that the statistic is defined only for measurable selections from players’ strategy sets, therefore the statistic at time t is well defined whenever the function \(S_{\cdot }^{OL}(t-1)\) is measurable. Obviously, for games with finitely many players, this is always fulfilled.

See discussion in “The form of beliefs and anticipated payoffs considered in this paper” section in Appendix 3.

Finding a Nash equilibrium in a dynamic game requires solving a set of dynamic optimization problems coupled by finding a fixed point in a functional space.

Finding a pre-BDNE requires only finding a sequence of static Nash equilibria—each of them requires solving a set of simple static optimization problems coupled only by the value of u. Of course, some preliminary work has to be done to calculate anticipated payoffs, but in the case considered in this paper, it is again a sequence of static optimizations.

This formulation imposes conditions on derivative objects, like V, instead of conditions on primary objects, which may seem awkward. The reason is that, especially in infinite horizon problems, it is difficult to obtain any kind of regularity of \(V_i\), which results from both dynamic and static optimization, even given very strong regularity assumptions on primary objects. A similar approach appears, e.g., in dynamic optimization, in the formulation of the Bellman equation for continuous time, where regularity conditions are imposed on the value function.

Where the symbol \(\delta ^{i,a}\), analogously to \(S^{i,d}\), for a static profile \(\delta \) denotes the profile \(\delta \) with strategy of player i changed to a.

I.e., for every t, \(\bar{S}_{i}^{OL}\in \mathop {\mathrm{{Argmax}}}_{d:\mathbb {T}\rightarrow \mathbb {D}\ d(s)\in D_{i}(s,X(s))\text { for }s\ge t}\sum _{s=t}^{T}\frac{P_{i}(s,d(s),u(s),X(s))}{(1+r_i) ^{s-t}}+\frac{G_{i}\left( X(T+1)\right) }{(1+r_i)^{T+1-t}}\).

This assumption is relaxed in Wiszniewska-Matyszkiel (2015), where probabilistic beliefs are considered.

Atypically, the value function of player i’s optimization problem does not depend directly on the state variable argument x, since the trajectory X is fixed for the decision making problem of player i, so we skip this argument and consider \(\widetilde{V}_{i}\) dependent on time only.

References

Aumann, R. J. (1964). Markets with a continuum of traders. Econometrica, 32, 39–50.

Aumann, R. J. (1966). Existence of competitive equilibrium in markets with continuum of traders. Econometrica, 34, 1–17.

Aumann, R. J. (1974). Subjectivity and correlation in randomized strategies. Journal of Mathematical Economics, 1, 67–96.

Aumann, R. J. (1987). Correlated equilibrium as an expression of bounded rationality. Econometrica, 55, 1–19.

Azrieli, Y. (2009a). Categorizing others in large games. Games and Economic Behavior, 67, 351–362.

Azrieli, Y. (2009b). On pure conjectural equilibrium with non-manipulable information. International Journal of Game Theory, 38, 209–219.

Balder, E. (1995). A unifying approach to existence of Nash equilibria. International Journal of Game Theory, 24, 79–94.

Battigalli, P. (1987). Comportamento razionale ed equilibrio nei giochi e nelle situazioni sociali. Unpublished thesis, Universita Bocconi.

Battigalli, P., Cerreia-Vioglio, S., Maccheroni, F., & Marinacci, M. (2012). Selfconfirming equilibrium and model uncertainty, Working Paper 428, Innocenzo Gasparini Institute for Economic Research.

Battigalli, P., & Guaitoli, D. (1997). Conjectural equilibria and rationalizability in a game with incomplete information. In P. Battigalli, A. Montesano, & F. Panunzi (Eds.), Decisions, games and markets (pp. 97–124). Dordrecht: Kluwer.

Battigalli, P., & Siniscalchi, M. (2003). Rationalization and incomplete information. Advances in Theoretical Economics, 3(1).

Bellman, R. (1957). Dynamic programming. Princeton: Princeton University Press.

Blackwell, D. (1965). Discounted dynamic programming. Annals of Mathematical Statistics, 36, 226–235.

Arthur, W. B. (1994). Inductive reasoning and bounded rationality. American Economic Review, 84, 406–411.

Cartwright, E., & Wooders, M. (2012). Correlated equilibrium, conformity and stereotyping in social groups. The Becker Friedman Institute for Research in Economics, Working paper 2012-014.

Ellsberg, D. (1961). Risk, ambiguity and the Savage axioms. Quarterly Journal of Economics, 75, 643–669.

Eyster, E., & Rabin, M. (2005). Cursed equilibrium. Econometrica, 73, 1623–1672.

Fudenberg, D., & Levine, D. K. (1993). Self-confirming equilibrium. Econometrica, 61, 523–545.

Gilboa, I., Maccheroni, F., Marinacci, M., & Schmeidler, D. (2010). Objective and subjective rationality in a multiple prior model. Econometrica, 78, 755–770.

Gilboa, I., & Marinacci, M. (2011). Ambiguity and the Bayesian paradigm, Working Paper 379, Universita Bocconi.

Gilboa, I., & Schmeidler, D. (1989). Maxmin expected utility with non-unique prior. Journal of Mathematical Economics, 18, 141–153.

Harsanyi, J. C. (1967). Games with incomplete information played by Bayesian players, Part I. Management Science, 14, 159–182.

Hennlock, M. (2008). A robust feedback Nash equilibrium in a climate change policy game. In S. K. Neogy, R. B. Bapat, A. K. Das, & T. Parthasarathy (Eds.), Mathematical programming and game theory for decision making (pp. 305–326).

Kalai, E., & Lehrer, E. (1993). Subjective equilibrium in repeated games. Econometrica, 61, 1231–1240.

Kalai, E., & Lehrer, E. (1995). Subjective games and equilibria. Games and Economic Behavior, 8, 123–163.

Klibanoff, P., Marinacci, M., & Mukerji, S. (2005). A smooth model of decision making under ambiguity. Econometrica, 73, 1849–1892.

Lasry, J.-M., & Lions, P.-L. (2007). Mean field games. Japanese Journal of Mathematics, 2, 229–260.

Marinacci, M. (2000). Ambiguous games. Games and Economic Behavior, 31, 191–219.

Mas-Colell, A. (1984). On the theorem of Schmeidler. Journal of Mathematical Economics, 13, 201–206.

Rubinstein, A., & Wolinsky, A. (1994). Rationalizable conjectural equilibrium: Between Nash and rationalizability. Games and Economic Behavior, 6, 299–311.

Schmeidler, D. (1973). Equilibrium points of nonatomic games. Journal of Statistical Physics, 7, 295–300.

Stokey, N. L., Lucas, R. E., Jr., & Prescott, E. C. (1989). Recursive methods in economic dynamics. Cambridge: Harvard University Press.

Vind, K. (1964). Edgeworth-allocations in an exchange economy with many traders. International Economic Review, 5, 165–177.

Weintraub, G. Y., Benkard, C. L., & Van Roy, B. (2005). Oblivious equilibrium: A mean field approximation for large-scale dynamic games. Advances in neural information processing systems. Cambridge: MIT Press.

Wieczorek, A. (2004). Large games with only small players and finite strategy sets. Applicationes Mathematicae, 31, 79–96.

Wieczorek, A. (2005). Large games with only small players and strategy sets in Euclidean spaces. Applicationes Mathematicae, 32, 183–193.

Wieczorek, A., & Wiszniewska, A. (1999). A game-theoretic model of social adaptation in an infinite population. Applicationes Mathematicae, 25, 417–430.

Wiszniewska-Matyszkiel, A. (2000). Existence of pure equilibria in games with nonatomic space of players. Topological Methods in Nonlinear Analysis, 16, 339–349.

Wiszniewska-Matyszkiel, A. (2002). Static and dynamic equilibria in games with continuum of players. Positivity, 6, 433–453.

Wiszniewska-Matyszkiel, A. (2003). Static and dynamic equilibria in stochastic games with continuum of players. Control and Cybernetics, 32, 103–126.

Wiszniewska-Matyszkiel, A. (2005). A dynamic game with continuum of players and its counterpart with finitely many players. In A. S. Nowak & K. Szajowski (Eds.), Annals of the international society of dynamic games (Vol. 7, pp. 455–469). Basel: Birkhäuser.

Wiszniewska-Matyszkiel, A. (2008a). Common resources, optimality and taxes in dynamic games with increasing number of players. Journal of Mathematical Analysis and Applications, 337, 840–841.

Wiszniewska-Matyszkiel, A. (2008b). Stock market as a dynamic game with continuum of players. Control and Cybernetics, 37(3), 617–647.

Wiszniewska-Matyszkiel, A. (2010). Games with distorted information and self-verification of beliefs with application to financial markets. Quantitative Methods in Economics, 11(1), 254–275.

Wiszniewska-Matyszkiel, A. (2014a). Open and closed loop Nash equilibria in games with a continuum of players. Journal of Optimization Theory and Applications, 160, 280–301. doi:10.1007/s10957-013-0317-5.

Wiszniewska-Matyszkiel, A. (2014b). When beliefs about future create future—Exploitation of a common ecosystem from a new perspective. Strategic Behaviour and Environment, 4, 237–261.

Wiszniewska-Matyszkiel, A. (2015). Redefinition of belief distorted Nash equilibria for the environment of dynamic games with probabilistic beliefs, preliminary version available as preprint Belief distorted Nash equilibria for dynamic games with probabilistic beliefs, preprint 163/2007 Institute of Applied Mathematics and Mechanics, Warsaw University. http://www.mimuw.edu.pl/english/research/reports/imsm/

Additional information

The project was financed by funds of National Science Centre granted by Decision Number DEC-2013/11/B/HS4/00857.

Appendices

Appendix 1: Proofs of results

1.1 Proof of the equivalence Theorems 4 and 5

Proof (of Theorem 4)

In all the subsequent reasoning, we consider player i outside the set of measure 0 of players for whom the condition of maximizing the assumed payoff (actual or anticipated, depending on the assumption) does not hold or whose anticipated payoffs can be infinite.

In the continuum of players case, the statistics of profiles and, consequently, the trajectories corresponding to them are identical for \(\bar{S}\) and all \(\bar{S}^{i,d}\) (the profile \(\bar{S}\) with the strategy of player i replaced by d). We denote this statistic function by u and the corresponding trajectory by X.

(a) We show that along the perfect foresight path the equation for the anticipated payoff of player i becomes the Bellman equation for optimization of the actual payoff by player i while \(V_{i}\) coincides with the value function.

Formally, given the profile of the strategies of the remaining players coinciding with \(\bar{S}\), let us define the value function of player i’s decision making problem \(\widetilde{V}_{i}{:}\,\mathbb {T} \rightarrow \overline{\mathbb {R}}\) (see footnote 15).

In the finite horizon case, \(\widetilde{V}_{i}\) fulfils the Bellman equation

with the terminal condition \(\widetilde{V}_{i}(T+1)=G_{i}(X(T+1))\).
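For reference, the Bellman equation in the form quoted in part (d) of this proof reads as follows. This is a sketch that assumes the maximum over \(D_{i}(t,X(t))\) is attained; otherwise the maximum should be read as a supremum.

```latex
\widetilde{V}_{i}(t)
  = \max_{a\in D_{i}(t,X(t))} P_{i}(a,u(t),X(t))
  + \left(\frac{1}{1+r_{i}}\right)\cdot \widetilde{V}_{i}(t+1)
```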

In the infinite horizon case, \(\widetilde{V}_{i}\) also fulfils the Bellman equation, but the terminal condition sufficient for the solution of the Bellman equation to be the value function is different. Its simplest form is \(\lim _{t\rightarrow \infty }\widetilde{V}_{i}(t)\cdot \left( \frac{1}{1+r_{i}}\right) ^{t-t_{0}}=0\) (see e.g. Stokey et al. 1989). Here it holds by the assumption that the payoffs are bounded.
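The last claim can be checked directly. The following is a short sketch under two assumptions introduced here only for illustration: that \(|P_{i}|\le M\) for some constant M, and that \(r_{i}>0\). The bound on \(\widetilde{V}_{i}\) is a geometric series, and discounting it drives the product to zero.

```latex
\left|\widetilde{V}_{i}(t)\right|
  \le \sum_{s=t}^{\infty}\frac{M}{(1+r_{i})^{s-t}}
  = M\,\frac{1+r_{i}}{r_{i}},
\qquad\text{hence}\qquad
\left|\widetilde{V}_{i}(t)\right|\cdot\left(\frac{1}{1+r_{i}}\right)^{t-t_{0}}
  \le M\,\frac{1+r_{i}}{r_{i}}\left(\frac{1}{1+r_{i}}\right)^{t-t_{0}}
  \xrightarrow[t\to\infty]{} 0 .
```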

If we substitute the formula for \(\widetilde{V}_{i}\) at the r.h.s. of the Bellman equation by its definition, then we get

Note that the last supremum is equal to \(\widetilde{V}_{i}(t+1)\), but also to \(v_{i}(t+1,(X,u))\). Since (X, u) is the only history in the belief correspondence along all profiles \(\bar{S}^{i,d}\), it is also equal to \(V_{i}(t+1,\{(X,u)\})\).

Therefore,

where by \(d_{t,a}\) we denote a strategy of player i such that \(d(t,X(t),H^{\bar{S}})=a\) and at any other point of the domain it coincides with \(\bar{S}_{i}\).

Let us note that for all t, the set

is both the set of open loop forms of strategies of player i being his/her best responses to the strategies of the remaining players along the profiles \(\bar{S}\) and \(\bar{S}^{i,d}\) and the set at which the supremum in the definition of the function \(v_{i}(t,(X,u))\) is attained. We only have to show that \(\bar{S}_{i}(t)\in \mathop {\mathrm{{Argmax}}}_{a\in D_{i}(t,X(t))}\bar{\Pi } _{i}^{e}(t,H^{\bar{S}},\left( \bar{S}^{OL}(t)\right) ^{i,a})\). By the definition, this set is equal to

which, by the Bellman equation, defines the value of the best response at time t, which contains \(\bar{S}_{i}(t)\), since \(\bar{S}\) is an equilibrium profile.

(b) An immediate conclusion from (a).

(d) Let \(\bar{S}\) be a pre-BDNE for B being the perfect foresight at \(\bar{S}\) and all \(\bar{S}^{i,d}\). We consider \(\widetilde{V}_{i}\) defined as in the proof of (a).

By the definition of pre-BDNE,

If we add the fact that

\(\widetilde{V}_{i}(t)=\max _{a\in D_{i}(t,X(t))}P_{i}(a,u(t),X(t))+\left( \frac{1}{1+r_{i}}\right) \cdot \widetilde{V}_{i}(t+1)\), then, by the Bellman equation, the set \(\mathop {\mathrm{Argmax}}_{a\in D_{i}(t,X(t))} P_{i}(a,u(t),X(t))+\left( \frac{1}{1+r_{i}}\right) \cdot \widetilde{V}_{i}(t+1)\) represents the value of the optimal strategy of player i at time t, given u(t) and X(t). Since we have this property for a.e. i, the profile defined in this way is a Nash equilibrium.

(c) By (d) and (a). \(\square \)
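The mechanism used in parts (a) and (d) can be illustrated numerically. The following is a minimal sketch, assuming a finite horizon starting at time 0, a finite action set and a toy payoff function. The names `value_and_argmax`, `is_pointwise_best_response`, `toy_actions`, `toy_payoff` and `toy_terminal` are hypothetical and do not come from the paper. Because a single player in the continuum case does not influence u or X, the continuation value \(\widetilde{V}_{i}(t+1)\) is the same for every current action, so the Argmax set at each time is determined by the current payoff alone.

```python
from typing import Callable, List, Sequence, Set, Tuple


def value_and_argmax(
    T: int,
    actions: Callable[[int, float], Sequence[int]],   # stands for D_i(t, X(t))
    payoff: Callable[[int, float, float], float],     # stands for P_i(a, u(t), X(t))
    terminal: Callable[[float], float],               # stands for G_i
    u: List[float],
    X: List[float],
    r: float,
    tol: float = 1e-12,
) -> Tuple[List[float], List[Set[int]]]:
    """Backward induction along a FIXED path (X, u):
    V(t) = max_a payoff(a, u[t], X[t]) + V(t+1) / (1 + r),  V(T+1) = terminal(X[T+1]).
    Returns the values V(0..T+1) and the Argmax set for each t in 0..T."""
    V = [0.0] * (T + 2)
    best: List[Set[int]] = [set() for _ in range(T + 1)]
    V[T + 1] = terminal(X[T + 1])
    for t in range(T, -1, -1):
        cont = V[t + 1] / (1.0 + r)  # same for every action: the path is fixed
        scores = {a: payoff(a, u[t], X[t]) + cont for a in actions(t, X[t])}
        V[t] = max(scores.values())
        best[t] = {a for a, s in scores.items() if s >= V[t] - tol}
    return V, best


def is_pointwise_best_response(strategy: Sequence[int], best: List[Set[int]]) -> bool:
    """True if the open loop strategy picks an Argmax action at every time."""
    return all(a in best_t for a, best_t in zip(strategy, best))


# Toy data (assumptions made only for this illustration).
def toy_actions(t: int, x: float) -> Sequence[int]:
    return (0, 1, 2)


def toy_payoff(a: int, u_t: float, x_t: float) -> float:
    return a * (x_t - u_t) - a * a


def toy_terminal(x: float) -> float:
    return 0.0


T = 2
u = [1.0, 1.0, 0.0]        # statistic along the path, t = 0..T
X = [3.0, 5.0, 0.0, 0.0]   # trajectory along the path, t = 0..T+1
V, best = value_and_argmax(T, toy_actions, toy_payoff, toy_terminal, u, X, r=0.25)
print(best)                                          # Argmax sets for t = 0, 1, 2
print(is_pointwise_best_response([1, 2, 0], best))   # candidate equilibrium strategy
```

In this toy instance, a strategy is a best response along the fixed path exactly when it picks an element of the Argmax set at each time, which mirrors the pointwise condition appearing in the definition of pre-BDNE under perfect foresight.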

Proof (of Theorem 5)