Abstract

We consider the problem of estimating expectations with respect to a target distribution with an unknown normalising constant, and where even the un-normalised target needs to be approximated at finite resolution. This setting is ubiquitous across science and engineering applications, for example in the context of Bayesian inference where a physics-based model governed by an intractable partial differential equation (PDE) appears in the likelihood. A multi-index sequential Monte Carlo (MISMC) method is used to construct ratio estimators which provably enjoy the complexity improvements of multi-index Monte Carlo (MIMC) as well as the efficiency of sequential Monte Carlo (SMC) for inference. In particular, the proposed method provably achieves the canonical complexity of \(\hbox {MSE}^{-1}\), while single-level methods require \(\hbox {MSE}^{-\xi }\) for \(\xi >1\). This is illustrated on examples of Bayesian inverse problems with an elliptic PDE forward model in 1 and 2 spatial dimensions, where \(\xi =5/4\) and \(\xi =3/2\), respectively. It is also illustrated on more challenging log-Gaussian process models, where single-level complexity is approximately \(\xi =9/4\) and multilevel Monte Carlo (or MIMC with an inappropriate index set) gives \(\xi = 5/4 + \omega \), for any \(\omega > 0\), whereas our method is again canonical. We also provide novel theoretical verification of the product-form convergence results which MIMC requires for Gaussian processes built in spaces of mixed regularity defined in the spectral domain, which facilitates acceleration with fast Fourier transform methods via a cumulant embedding strategy, and may be of independent interest in the context of spatial statistics and machine learning.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

There has been an explosion of work over the past decade involving the enormously successful multilevel Monte Carlo (MLMC) method [27] for estimating expectations with respect to distributions which need to be approximated. The canonical example is the problem for forward uncertainty quantification (UQ), where a single realisation of the random variable of interest requires the solution to a stochastic, ordinary, or partial differential equation (SDE, ODE or PDE) [7, 53, 58]. The MLMC framework formulates this problem in terms of a sum of increments corresponding to approximations at successive resolutions, or levels. Under a suitable coupling of the increments, which is typically fairly trivial in the forward context, the variance of the increments decays as the resolution and cost increase, and so progressively fewer samples are required to control the variance at higher levels.

In the context of Bayesian inference, one typically requires expectations with respect to target distributions for which the normalising constant is unknown. As an example, let \(\pi \) denote a probability density on \({\textsf{X}} \times {\textsf{Y}}\). Assume we know how to evaluate \(\pi (x,y)=\pi (y|x)\pi _0(x)\) and \(\pi _0(x)\) but not \(\pi (y) = \int _{\textsf{X}} \pi (y|x) \pi _0(x) dx\). Now consider the case where one observes \(y \in {\textsf{Y}}\) and would like to infer the posterior distribution \(\pi (x|y)\), given by

This is referred to as the Bayesian framework, and \(\pi (y|x)\) and \(\pi _0(x)\) are referred to as the likelihood and the prior, respectively [55]. Note that once the goal of (1) is established, then a method should be capable of efficiently approximating integrals of the form:

where \(f(x) \propto \pi (y|x) \pi _0(x)\) and \(Z=\int _{\textsf{X}} f(x) dx\) (i.e. f(x) itself only needs to be proportional to the joint density). Methods which have been designed for exactly this purpose include Markov chain Monte Carlo (MCMC) [26], importance sampling [55], and combinations thereof such as sequential Monte Carlo (SMC) samplers [15, 21]. The latter methods are particularly powerful, handling elegantly some of the most challenging issues that arise in this context, such as small variance, strong dependence between variables, and multimodality.

Over the past decade the excitement about MLMC has intersected with the Bayesian computation community, in particular relating to the context of Bayesian inverse problems [59], where an intractable PDE often appears inside the likelihood of the posterior distribution of interest. For instance, we will later consider the case where the likelihood takes the from:

where y is an observed set of real-valued outputs and \({\mathcal {G}}(x)\) is a solution to the outputs from the intractable PDE for a given set of input parameters x. This context appears to be much more subtle, due to the complications of combining these technologies. Early work is related to MCMC [22, 34] and SMC samplers [5, 6, 48]. More recently, the methodology has also been applied to the context of partially observed diffusions [35, 39], for parameter inference [37], online state inference [2, 13, 28, 30, 39, 42], or both [19]. A notable recent body of work relates to continuous-time observations in this context [4, 45, 56]. Another notable trend is the application of randomised MLMC methods [11, 33, 40, 41, 44] in this context. Typically, these methods require unbiased estimators of increments, which is particularly challenging in the inference context. The first work to use randomised MLMC in the context of inference was [11], and unbiased increment estimators were available in the context of that work. Other more recent instalments have utilised double randomisation strategies in order to remove the bias of increment estimators [33, 40, 41].

The benefits of MLMC are somewhat hampered by the dimension of the underlying problem. This is an important issue, particularly in the context of a PDE or a SPDE. For example, the error associated with a finite element method (FEM) approximation of a PDE typically depends upon the mesh diameter, h, while the number of degrees of freedom typically scales like \(h^{-d}\), where d is the dimension of the associated PDE. The multi-index Monte Carlo (MIMC) method was introduced to gracefully handle the dimension dependence of this problem [29] following, in spirit, from the seminal work on sparse grids [10]. Instead of an estimator based on a sum of increments, the MIMC method constructs an estimator based on a sum over an index set of \(d-\)fold composition of increments. Under suitable regularity conditions, this approach is able to leverage convergence in each dimension independently and thereby mitigate the curse of dimensionality.

The MIMC method has very recently been applied to the inference context [19, 38, 43]; however, the estimates required for increments of increments have proven challenging from a theoretical perspective, and this has severely limited progress thus far. In particular, an MIMC method for inference with provable convergence guarantees does not currently exist, except a ratio estimator using simple importance sampling, as considered for MLMC and QMC in this work [57]. Such estimators are not expected to be practical for complex target distributions due to a large constant associated with importance sampling [1, 12].

The current work breaks down this theoretical barrier and unveils the MISMC sampler ratio estimator for posterior inference. By employing a ratio estimator, we introduce a theoretically tractable method which provably achieves the benefits of both SMC samplers for inference and MIMC for multi-dimensional discretisation. In particular, rather than dealing with self-normalised increments of increments, as previous methods have done, the innovation is to construct instead a ratio of MIMC estimators of an un-normalised integral and its normalising constant, both of which can be unbiasedly estimated with SMC sampler. This seemingly minor difference of formulation substantially simplifies the analysis and enables us to establish a theory for the convergence of an MIMC method for inference problems—a theory which until this point had been elusive.

This article is structured as follows. In Sect. 2, we provide a class of motivating problems for the methodology that is developed. In Sect. 3, we provide a review of the relevant computational methodology that is used in our approach. In Sect. 4, we present our method and theoretical results. In Sect. 6, we present numerical results. Finally, in the appendix several technical results are given, necessary for the theory that is presented in Sect. 4.

2 Motivating Problems

We consider the setting of Bayesian inference for an elliptic partial differential equation and for the log-Gaussian Cox model, where we must also perform numerical estimation.

2.1 Elliptic Partial Differential Equation

We consider the following elliptic PDE. Consider a convex domain \(\Omega \subset {\mathbb {R}}^D\) with boundary \(\partial \Omega \in C^0\), a function (force vector field) \({\textsf{f}}: \Omega \rightarrow {\mathbb {R}}\) and a function (permeability) \(a(x):\Omega \rightarrow {\mathbb {R}}_+\) which is parameterized by \(x\in {{\textsf{X}}}\). For each \(x\in {{\textsf{X}}}\), we define the (pressure field) \(u(x):\Omega \rightarrow {\mathbb {R}}\) as the solution to the following PDE on \(\Omega \)

In the above PDE, we assume the force vector field is known, e.g. \({\textsf{f}}=1\). However, we assume the permeability a(x) depends upon a parameter x which is a random variable, specifically \(x \sim \pi _0\). The dependence of a on x induces a dependence of the solution u on x. Hence, the solution itself, u(x)(z), is a random variable for each \(z \in \Omega \).

For concreteness, assume that \(D=2\) and \(\Omega =[0,1]^2\). Assume a uniform prior,

For \(x \sim \pi _0\), and \(z \in \Omega \), the permeability will take the form

where \(\psi _i\) are smooth functions with \({\Vert \psi _i\Vert _{L^\infty ({\Omega })}} \le 1\) for \(i=1,\dots ,d\), and \(a_0 > \sum _{i=1}^d x_i\). In particular, for simplicity and concreteness, let \(d=2\) and

2.1.1 Finite Element Approximation and Error Estimates

Consider the 1D piecewise linear nodal basis functions \(\phi _j^K\) defined as follows, for mesh \(\{z^K_i = i / (K+1) \}_{i=0}^{K+1}\), and for \(j=1,\dots ,K\),

Now, for \(\alpha = (\alpha _1, \alpha _2) \in {\mathbb {N}}^2\), consider the tensor product grid over \(\Omega =[0,1]^2\) formed by

where \(K_{1,\alpha }= 2^{\alpha _1}\) and \(K_{2,\alpha }= 2^{\alpha _2}\) (and the mesh-width in each direction is bounded by \(2^{-\alpha _k}\), \(k=1,2\)). Let \(i=i_1 + K_{1,\alpha } i_2\) for \(i_1 =1,\dots , K_{1,\alpha }\) and \(i_2=1,\dots , K_{2,\alpha }\) and \(K_\alpha =K_{1,\alpha }K_{2,\alpha }\), and let \(\phi ^\alpha _i(z) = \phi ^\alpha _{i_1,i_2}(z_1,z_2) = \phi ^{K_{1,\alpha }}_{i_1}(z_1) \phi ^{K_{2,\alpha }}_{i_2}(z_2)\) be piecewise bilinear functions. The weak solution of the PDE (2) and (3) will be approximated by \(u_\alpha (x) = \sum _{i=1}^{K_\alpha } u_\alpha ^i(x) \phi ^\alpha _i \in V\). Given x, the values of \(u^i_{\alpha }(x)\) are defined by substituting the expansion into (2) and taking inner product with \(\phi ^\alpha _j\) for \(j=1,\dots , K_\alpha \). In particular, observe that we have

Using integration by parts and observing that \(\phi _i^\alpha |_{\partial \Omega } \equiv 0\), then

We can represent the solution as a vector \(\textbf{u}_\alpha (x) = [u_\alpha ^i(x):i=1,\dots , K_\alpha ]\), and define \(\textbf{f}_{\alpha ,j} = \langle {\textsf{f}}, \phi ^\alpha _j \rangle \) and

where we introduce the notation \(j:=j_1 + j_2 K_{1,\alpha }\) (for \(j_1 =1,\dots , K_{1,\alpha }\) and \(j_2=1,\dots , K_{2,\alpha }\)).

Observe that if \(i=i_1 + i_2 K_{1,\alpha }\), then the integral is zero for all i such that \(i_k < j_k-1\) or \(i_k > j_k + 1\), for \(k \in \{1,2\}\). So the above matrix \(\textbf{A}_\alpha (x)\) is sparse, and it is straightforward to verify that it is symmetric positive definite.

The approximate weak solution to Eqs. (2), (3) is given by the system

Due to the sparsity of \(\textbf{A}_\alpha (x)\), for \(D \le 2\) the solution can be obtained for a cost of roughly \({\mathcal {O}}(K_\alpha )\) using an iterative solver based on Krylov subspaces, such as conjugate gradients [52]. For \(D \ge 3\) it may no longer be possible to achieve a linear cost—see, e.g. [29]. See the references [9, 16] for further description and much more.

The weak solution u of (2) and (3) is said to be \(W^{2,2}\) regular if there exists a \(C>0\), such that

for every \({\textsf{f}} \in L^2(\Omega ),\) where \(\Vert \cdot \Vert \) denotes the \(L^2(\Omega )\) norm. For the purposes of the present work, it suffices to observe the following proposition [8, 24].

Proposition 2.1

For a(x) given by (5) and uniformly over \(x \in [-1,1]^d\), \({\textsf{f}}\in L^2\) and \(\Omega \) convex, the weak solution of (2) and (3) is \(W^{2,2}\) regular, and there exists a \(C>0\) such that

Furthermore,

2.1.2 A Bayesian Inverse Problem

In the PDE (2) and (3), the parameter x is unknown. Here, we infer estimates about the true value x from noisy observations of the solution to the PDE, u(x). A further confounding factor is that the closed form solution to u(x) is, in general, not known in closed form and instead we must numerically approximate u(x) with \(u_{\alpha }(x)\) as described above.

Now observations y will be introduced and we will consider the inverse problem, given by

where \(L(x) \propto \pi (y|x)\) and the dependence upon y is suppressed in the notation. We will use the notations \(d\pi (x)=\pi (dx)=\pi (x)dx\) to mean the same thing, i.e. probability under \(\pi \) of an infinitesimal volume element dx (Lebesgue measure by default) centred at x, and the argument may be omitted from \(d\pi \) where the meaning is understood.

Define the following vector-valued function

where \(v_i \in L^2\) and \(v_i(u(x)) = \int v_i(z) u(x)(z) dz\) for \(i = 1,\dots ,{n}\), for some \({n}\ge 1\). It is assumed that the data take the form

Footnote 1 and we define

Here y is suppressed from the notation. Also we apply the convention that \(|w|_\Xi := (w^\top \Xi ^{-1} w)^{1/2} \).

In particular, u(x) is the (weak) solution map of (2) and (3), for given input x. Denote its weak approximation at resolution multi-index \(\alpha \) by \(u_\alpha (x)\). The approximated likelihood is given by

and the associated target is

The following proposition summarises the key result.

Proposition 2.2

In the present context, there is a \(C>0\) such that \(u,u_\alpha \le C\), hence a \(c>0\) such that \(L,L_\alpha \ge c > 0\), and so (6) and (9) are well defined. Furthermore, following Proposition 2.1 and the continuity of L as a function of u, the following rate estimate holds uniformly in x

For the concrete example of \(D=2\), let the observations be given by \(v_i(u):= u(z_i)\), for \(i=1,\dots ,4\), where \(z_i \in \{(0.25,0.25), (0.25,0.75), (0.75,0.75), (0.75,0.25)\}\), and let \(\Xi = \xi ^2 I\). This example has been considered in the context of an MLSMC sampler method in [6]. It is noted that this example extends the theory described, since \(v_i \notin L^2\).

2.2 Log-Gaussian Process Models

Another model problem which will be considered is the log-Gaussian process (LGP), and the related log-Gaussian Cox (LGC) process, which are commonly used in spatial statistics. In this example, the dimension of the state space grows with level.

Specifically, we aim to model a data set comprised of the location of \(n=126\) Scots pine saplings in a natural forest in Finland [47], denoted \(z_1,\dots ,z_{n} \in [0,1]^2\). The LGC version of our model is based on the one presented in [31]. The process of interest is defined as \(\Lambda = \exp (x)\) where x is a Gaussian process, a priori distributed in terms of a KL-expansion as follows, for \(z \in [0,2]^2\),

where \(\mathcal{C}\mathcal{N}(0,1)\) denotes a standard complex Normal distribution, \(\xi ^*_k\) is the complex conjugate of \(\xi _k\), and \(\phi _k(z) \propto \exp [\pi i z \cdot k]\) are Fourier series basis functions (with \(i=\sqrt{-1}\)), and

The coefficient \(\beta \) controls the smoothness of the Gaussian process. The parameters \(\theta \) will be assumed known in the present work, but these can also be fit within a hierarchical modelling framework. The associated prior measure is denoted by \(\mu _0\). Following the formulation from [31], the likelihoods are defined by

See, e.g. [61] for a description of the LGP version, which is given second above. Note that only \(z \in [0,1]^2\) is required. The periodic prior measure is defined on \([0,2]^2\) so that no boundary conditions are imposed on the sub-domain \([0,1]^2\) and the fast Fourier transform (FFT) can be used for approximation, as described below.

The finite approximation is constructed as follows. First the KL expansion (10) is truncated

where \({\mathcal {A}}_\alpha := \{-2^{\alpha _1/2},\dots ,2^{\alpha _1/2}\} \times \{1,\dots ,2^{\alpha _2/2}\} \cup \{1,\dots ,2^{\alpha _2/2}\}\times 0\). Note that \(x_\alpha (z)\) can be approximated on a grid \(\{0,2^{-\alpha _1}, \dots 1-2^{-\alpha _1}\}\times \{0,2^{-\alpha _2}, \dots 1-2^{-\alpha _2}\}\) using the FFT with a cost \({\mathcal {O}}((\alpha _1+\alpha _2) 2^{\alpha _1+\alpha _2})\). Now \({\hat{x}}_\alpha (z)\) is defined as an interpolant (for example linear) over the grid output from FFT. The finite approximation of the likelihood is then defined by

where Q denotes a quadrature rule, which may for example be given by \(Q(\exp (x_\alpha ))=2^{-(\alpha _1+\alpha _2)} \sum _{h \in \prod _{i=1}^2\{0,2^{-\alpha _i}, \dots , 1-2^{-\alpha _i}\}} \exp (x_{\alpha }(h))\) or \(Q(\exp (x_\alpha ))=\int {\hat{x}}_\alpha (z) dz \).

If one uses the prior with isotropic spectrum \({\rho _k^2(\theta ) = \theta _2/(\theta _3 + k_1^2+k_2^2)^{\frac{3}{2}}}\), then our target measure coincides with the standard prior of [31] in the limit as \(\min _i {\alpha _i} \rightarrow \infty \). One can understand the connection in this context via the circulant embedding method based on FFT [46]. However, previous work has employed the (dense) kernel representation of the covariance function instead of diagonalising it with FFT. For our product-form spectrum, the regularity would be matched for \(\beta =1\), corresponding to a product of Ornstein–Uhlenbeck processes. Instead, we will choose \(\beta =1.6\) for convenience, which means that our prior is slightly smoother.

2.2.1 LGP and LGC Theoretical Results

First, we state a simple convergence result for Gaussian process of the form (10) with spectral decay corresponding to (11).

It will be useful to define the following operator A on the space of functions in \(L^2(\Omega )\):

the mixed Sobolev-like norms

where \(A^{q/2} = \sum _{k \in {\mathbb {Z}}^2} a_k^{q/2} \phi _k \otimes \phi _k\) and we recall \(\Vert \cdot \Vert \) is the \(L^2(\Omega )\) norm, and the spaces

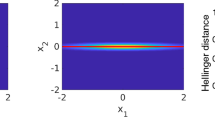

We note that these spaces ensure mixed regularity (hence superscript \(\textsf {m} \)), rather than the typical regularity associated with standard Sobolev spaces. It is precisely this property which multi-index methods are designed to exploit. Figure 1 shows the contours of functions in \(H_{1/2}^\textsf {m} \) (red) and \(W^{1/2,2}\) (blue), along with the regions associated with an increment (yellow) and increment of an increment at approximation level \(\alpha =(a,a)\). From inspection, it is clear that increments of increments are of higher order in comparison with increments for functions in the mixed space, but not for functions in the standard space.

The following proposition is proven in “Appendix B”.

Proposition 2.3

Let \(x \sim \pi _0\), where \(\pi _0\) is a Gaussian process of the form (10) with spectral decay corresponding to (11), and let \(x_\alpha \) correspond to truncation on the index set \({\mathcal {A}}_\alpha \) as in (14). Then \(x \in H_q^\textsf {m} \) for all \(q<\beta /2\), and for \(r \in [0,q)\) there is a \(C>0\) such that

A cartoon of variance contours associated with a function in \(H_{1/2}^\textsf {m} \) (red) and a function in \(W^{1/2,2}\) (blue). Letting \(\delta _a = [2^{a/2},2^{a}]\), the spectral region associated with an increment of approximations on the index sets defined in (14), \(\{ |k|_1 \in \delta _a \} = \{ k \in {\mathcal {A}}_\alpha \} \cap \{k\notin {\mathcal {A}}_{\alpha /2}\}\) (with \(\alpha =(a,a)\)), is depicted in yellow. Its intersection with the region associated with an increment of increments, \(\cap _{i=1}^2\{ {|k_i|} \in \delta _a\}\), is depicted in green (Color figure online)

For \(\beta =1\), (11) looks like a product of OU processes, with the regularity of Wiener measure [54]. Hence, following from Proposition 2.3, x is almost surely continuous for \(\beta \ge 1\), and (12) and (13) are well defined. It is worth noting that (12) and (13) are not well defined for the standard prior, e.g. from [31], since the Sobolev embedding theorem (see, e.g. [59]) does not guarantee that the solution is almost surely continuous. However, non-infinitesimal representations of (12), i.e. for finite partitions of the domain, can still be computed as long as \(\Lambda \) is integrable.

The following proposition ensures our LGC and LGP posterior measures are well defined on function space and has a density with respect to the prior. It is proven in “Appendix B”.

Proposition 2.4

Given \(x: \Omega \rightarrow {\mathbb {R}}\) is a Gaussian process, with probability measure denoted \(\pi _0\), defined on compact finite dimensional space \(\Omega \), that is almost surely continuous and has a finite mean and covariance. If we define \(\pi \) by

for \(n \in {\mathbb {N}}\) then \(\pi (dx)\) is a well-defined probability measure, and can be represented in terms of its density with respect to \(\pi _0\):

The analogue to Proposition 2.2 takes the following form. The proposition is again reproduced, and proven, in “Appendix B”.

Proposition 2.5

For both LGP and LGC, there is a \(C>0\) such that for \(x \sim \pi _0\) and \(x_\alpha = P_{{\mathcal {A}}_\alpha }x\), where \(P_{{\mathcal {A}}_\alpha }\) denotes the orthogonal projection onto the index set \({\mathcal {A}}_\alpha \) defined in (14), the following rate estimate holds for all \(q<(\beta -1)/2\)

3 Computational Methodology

3.1 Approximate Monte Carlo

For concreteness, in this subsection we will consider the case of the PDE example from Sect. 2.1. The case of Sect. 2.2 follows similarly. Let \({{\textsf{X}}}:= [-1,1]^d\) be the domain of x.

3.1.1 Monte Carlo

The forward uncertainty quantification (UQ) problem is the following. Given a quantity of interest \(\varphi _\alpha = \varphi \circ u_\alpha : {{\textsf{X}}}\rightarrow {\mathbb {R}}\), compute its expectation

The typical strategy is to independently sample \(x^{i} \sim \pi _0\), for \(i = 1, \dots , N\), and then approximate

For example, we can let

where the latter can be written as \(\textbf{u}_\alpha ^T \textbf{K}_\alpha \textbf{u}_\alpha \), where \(\textbf{K}_{\alpha ,ij}:= \langle \phi _i^\alpha , \phi _j^\alpha \rangle \).

3.1.2 Multi-index Monte Carlo

With MIMC [29], we apply the approximation

where the difference of differences operator is defined as \(\Delta \varphi _\alpha := \Delta _D \circ \cdots \circ \Delta _1 \varphi _\alpha \) with \(\Delta _i \varphi _\alpha := \varphi _\alpha - \varphi _{\alpha - e_i}\), \(e_i\) is the \(i^{\textrm{th}}\) standard basis vector in \({\mathbb {R}}^D\), and \(\varphi _{\alpha } \equiv 0\) if \(\alpha _i<0\) for any i. The task is then to approximate the expectation of the increment of increments for each \(\alpha \in \mathcal{I}\subset {\mathbb {Z}}^D\). For example, for \(D=2\), one must approximate

To do this we sample \(x^i_\alpha \sim \pi _0\), i.i.d. for \(i = 1, \dots , N_\alpha \), and then approximate

Observe that \({\mathbb {E}}[ {\mathbb {E}}_\alpha ^{N_\alpha } [ \Delta \varphi _\alpha ( x ) ] ] = {\mathbb {E}}[ \Delta \varphi _\alpha ( x ) ]\). Furthermore, based on approximation properties of \(u_\alpha \), one expects a \(C>0\) such that

For the example in Sect. 2.1.1, we have \(\beta _1=\beta _2=4\) [29].

In particular, as we will now describe, we know how to choose the index set \(\mathcal{I}\) and schedule of \(\{N_\alpha \}_{\alpha \in \mathcal{I}}\) such that the following estimator delivers the same MSE for significantly smaller cost than the standard method of Sect. 3.1

where \({\mathbb {E}}_\alpha ^{N_\alpha }\) indicates that \(N_\alpha \) independent samples are used at each level \(\alpha \). A concise, but not comprehensive, summary of the approach is given in the review [27]. For a detailed treatment see [29]. MLMC corresponds to the case in which there is one index. The MLMC methodology is more generally applicable to problems in which the target distribution—in this case the pushforward of \(\pi _0\) through u, \((u)_{\#}\pi _0\), i.e. the distribution of u(x) for \(x\sim \pi _0\)—needs to be approximated first to finite resolution, \(\alpha \), before Monte Carlo can be applied.

Assumption 3.1

There exist positive constants \(s_i\), \(\beta _i\), \(\gamma _i\) and C for \(i = 1,2,\ldots ,D\), such that the following holds

-

(a)

\(|{\mathbb {E}}[\Delta \varphi _\alpha ( x )]| \le C 2^{-\langle \alpha , s \rangle }\);

-

(b)

\({\mathbb {E}}\left[ ({\mathbb {E}}_\alpha ^{N_\alpha } [ \Delta \varphi _\alpha ( X ) ] - {\mathbb {E}}[ \Delta \varphi _\alpha ( X ) ] )^2 \right] \le C N_{\alpha }^{-1} 2^{-\langle \alpha , \beta \rangle }\);

-

(c)

\(\textrm{COST}(\Delta \varphi _\alpha ( x )) \le C 2^{\langle \alpha , \gamma \rangle }\).

For a random variable X, the cost function \(\textrm{COST}(X)\) denotes the computational complexity of a single sample of X.The following two propositions are standard results for MIMC and are proven in [29].

Proposition 3.1

Assume Assumption 3.1, with \(\beta _i > \gamma _i\), for all \(i = 1,\ldots ,D\). Then for the total degree index set \({\mathcal {I}}_{L}:=\{\alpha \in {\mathbb {N}}^{D}:\sum _{i=1}^{D}\delta _{i}\alpha _{i} \le L, \sum _{i=1}^{D}\delta _{i} = 1\}\), there are values of \(L \in {\mathbb {N}}\), \(\delta _{i} \in (0,1]\) and \(\{N_\alpha \}_{\alpha \in {\mathcal {I}}_{L}}\) such that

with a computational complexity of \({\mathcal {O}}(\varepsilon ^{-2})\) for any small \(\varepsilon > 0\).

Remark 3.1

Under the same assumptions as in Proposition 3.1, if the index set is replaced with the tensor product index set \({\mathcal {I}}_{L_1:L_d}:=\{\alpha \in {\mathbb {N}}^{D}:\alpha _1 \in \{0,\ldots ,L_1\},\ldots ,\alpha _D \in \{0,\ldots ,L_D\}\}\), then the same complexity result can be obtained only with an additional constraint that \(\sum _{j=1}^{D}\gamma _j/ s_j \le 2\).

3.2 Monte Carlo for Inference

For simplicity, in this subsection, we define the algorithm for the target \(\pi \), although we note that in practice this cannot be implemented for finite cost for our targets, and it must be replaced with \(\pi _\alpha \). This sets the stage for our method, which combines the inference approach with the approximation approach described in Sect. 3.1.

3.2.1 Markov Chain Monte Carlo and Importance Sampling

In the context of Bayesian inference, the objective is ultimately to compute expectations with respect to a probability distribution \(\pi \) proportional to \(f>0\), where one can evaluate f but not its integral, denoted by \(Z = \int f(dx)\), so \(\pi (dx) = f(dx)/Z\). In particular, we define \(f(dx):= L(x) \pi _0(dx)\) as the target, in the limit \(\alpha \rightarrow \infty \) of (9). That is, for arbitrary \(\varphi :{{\textsf{X}}}\rightarrow {\mathbb {R}}\), we want to compute integrals

If we could simulate from \(\pi \), we would approximate this by

However, in the present context this is not possible because the normalising constant Z is typically unknown and must be calculated numerically. Markov chain Monte Carlo (MCMC) and (self-normalised) importance sampling are the standard methods to solve such problems [55]. Both methods provide estimators \({\widehat{\varphi }}^{N}\) with a dimension-independent convergence rate analogous to \({\mathbb {E}}^N(\varphi )\), for some \(C_\varphi > 0\):

For MCMC, \(C_\varphi \) typically depends at worst polynomially on d, and can sometimes be made independent [17, 50]. However, due to its intrinsic locality, MCMC is doomed to fail for distributions which are concentrated around several modes with low probability in between. In the case of importance sampling, the latter case is handled gracefully; however, one must be careful since often \(C_\varphi = {\mathcal {O}}(e^d)\) [1, 3, 12]. To be precise, estimating \(\int \varphi d\pi \) using samples from \({\overline{\pi }}\) results in \(C_\varphi = {\mathcal {O}}( \exp (D_{KL}(\pi _\varphi \Vert {\overline{\pi }} )))\), where

and \(D_{KL}( \nu \Vert \mu )\) is the Kullback–Leibler divergence from \(\mu \) to \(\nu \) [12].

If one can simulate from some \({\overline{\pi }}\) such that \({\overline{\pi }}(dx)=\frac{1}{{\overline{Z}}}{\overline{f}}(dx)\) with \({\overline{Z}} = \int {\overline{f}}(dx)\), \({\overline{f}}(dx) = {\overline{L}}(x) \pi _0(dx)\), and \(L/{\overline{L}} \le C\), then importance sampling consists of replacing the above unbiased approximation by the following biased but consistent one

MCMC methods instead proceed by constructing a Markov chain \({\mathcal {M}}: {{\textsf{X}}}\times \sigma ({{\textsf{X}}}) \rightarrow [0,1]\), where \(\sigma ({{\textsf{X}}})\) is the sigma algebra of measurable sets, such that for all \(A \in \sigma ({{\textsf{X}}})\)

i.e. the Markov chain is \(\pi -\)invariant. Provided the Markov chain is also ergodic then one may simulate a trajectory and approximate (23) by

Here, as above, \(N_s\) is the number of samples used, while \(N_b\) is the number of initial samples that are unused because we must first allow our Markov chain to approach stationarity.

The most popular MCMC method is Metropolis–Hastings (MH), which proceeds by designing a proposal Markov kernel \({\mathcal {Q}}\) such that the following composition Markov kernel is ergodic. First, sample \(x' \sim {\mathcal {Q}}(x^{(i)}, dx') = q(x^{(i)}, x') \pi _0(dx')\), then let \(x^{(i+1)} = x'\) with probability

Otherwise, let \(x^{(i+1)} = x^{(i)}\). Notice that again, as in (25), only the un-normalised target density L is required. Note that in order to customise the presentation to the context at hand, we presented a particular category of MH methods, designed for probability measures on general state spaces, which have densities with respect to \(\pi _0\). Such methods are justified by the framework of [60], and a particularly convenient instantiation arises for Gaussian process priors \(\pi _0\), where it is easy to define \({\mathcal {Q}}\) such that \(q(x,x')=q(x',x)\) for all \(x,x' \in {{\textsf{X}}}\). See [17, 50], and the more recent slice sampler variant [49].

Sequential Monte Carlo samplers combine these 2 fundamental algorithms – importance sampling, and propagation by MCMC kernels – along a sequence of intermediate targets, and are able to achieve some very impressive results. The next subsection introduces this technology.

3.2.2 Sequential Monte Carlo Samplers

Sequential Monte Carlo (SMC) samplers are able to merge the best of both worlds, by repeatedly leveraging importance sampling on a sequence of target distributions which are close. In particular, define \(h_1, \dots , h_{J-1}\) by \(h_j = L_{j+1}/L_j\), where \(L_1 = {\overline{L}}\), \(L_J = L\), \({\overline{L}}\) appears in (25) (and may likely be \(\pi _0\)), \(f(dx)=L(x)\pi _0(dx)\) is the un-normalised target, and for \(j=2,\dots , J-1\), \(L_i\) interpolates in between.

Let \(\pi _j = f_j/Z_j\), where \(Z_j = \int f_j(dx)\) and \(f_j(dx) = L_j(x) \pi _0(dx)\). Note that \({\overline{f}}(dx) \prod _{i=1}^{J-1} h_j(x) = f(dx)\). The idea of SMC is to simulate from \({\overline{\pi }}=\pi _1\) and use these samples to construct a self-normalised importance sampling estimator of \(f_2\) with weights \(h_1\) as in (25), and iterate for \(j=1,\dots , J-1\), resulting in a self-normalised importance sampling estimator of \(\pi \). There is, however, an obvious issue with this idea. In particular, the locations of the sampled points remain unchanged over each stage of the algorithm for this sequential importance sampling estimator. This leads to degeneracy that is no better than the original (one step) importance sampling estimator (25).

The key idea introduced in [14, 21, 36, 51] is to use Markov transition kernels between successive target distributions \(\pi _j\) and \(\pi _{j+1}\) in order to decorrelate (or “jitter”) the particles, while preserving the intermediate target. The standard approach is to let \({\mathcal {M}}_j\) for \(j=2,\dots ,J\) be such that \((\pi _j {\mathcal {M}}_j)(dx) = \int \pi _j(dx') {\mathcal {M}}_j(x',dx) = \pi _j(dx)\), e.g. an MCMC kernel of the type introduced in the previous subsection, (27).

The resulting SMC algorithm is given in Algorithm 1. Define

In the resampling step of Algorithm 1, the samples are resampled according to their weights, so that some “unfit” (low weight) particles will “die” while other “fit” (high weight) ones will “multiply”. As such, it can be viewed as a sort of genetic selection mechanism [20]. One can understand this operation as preserving the distribution of particles as well as the degeneracy of the sample, while exchanging variance of weights for redundancy of particles. Therefore, at a given instance, there is no net gain; however, future generations will have replenished diversity. As an example, one can use multinomial resampling. See [15] for details.

3.2.3 Estimating the Normalising Constant with SMC

Recall \(Z_j = \int f_j(dx)\), and observe that

It follows that the ratio of normalising constants of \(\pi _J=\pi \) to \(\pi _1={\overline{\pi }}\), \(Z/{\overline{Z}}\), is given by

If \(Z_1=1\) is known, then this is simply equal to Z, the normalising constant of \(\pi \).

Observe that using Algorithm 1 we can construct an estimator of each factor by

Recall that for any \(\varphi :{{\textsf{X}}}\rightarrow {\mathbb {R}}\) we have \(f_J(\varphi ):= \int \varphi (x) f_J(dx) = f_J(1) \pi _J(\varphi )\), by definition. Now define the following estimator

where \(\pi _j^N(\varphi )\) and \(Z_J^N\) are as defined in Algorithm 1.

Note that by definition

4 Multi-index Sequential Monte Carlo

With the necessary notation and concepts defined in the previous section, we now establish our theoretical results for Multi-Index Sequential Monte Carlo. Through this we can provide theoretical guarantees for the Bayesian inverse problems, such as those defined in Sect. 2.1.2 and we develop methods which apply the MIMC methodology of Sect. 3.1.2 to that problem.

The main result is an estimator which retains the well-known efficiency of SMC samplers while provably achieving the complexity benefits of MIMC. This problem has been considered before in [19, 38, 43], but the present work is the first to establish convergence guarantees under reasonable verifiable assumptions. To this end, our objective is to apply SMC samplers to estimate (23) while utilising a multi-index decomposition of the form (20).

After formulating our problem and introducing some additional notation, we present and prove our main convergence result Theorem 4.1.

4.1 Formulation

For convenience we denote the vector of multi-indices

where \({\varvec{\alpha }}_1(\alpha )=\alpha \), \({\varvec{\alpha }}_{2^D}(\alpha )=\alpha - \sum _{i=1}^D e_i\), and \({\varvec{\alpha }}_i(\alpha )\) for \(1<i<2^D\) correspond to the intermediate multi-indices involved in computing \(\Delta \varphi _\alpha \), as described above (23). We note that when \(\alpha \) is on the boundary of \({\mathbb {Z}}^D_+\) then several of the terms involved in \(\Delta \varphi _\alpha \) are 0, but we find this notation more expedient than letting \({\varvec{\alpha }}(\alpha ) \in {\mathbb {Z}}_+^{D\times k_\alpha }\) where \(k_\alpha =2^{\#\{i; \alpha _i\ne 0\}} \in \{0,2,\dots , 2^D\}\) adjusts the dimension \(k_\alpha \) when \(\alpha \) is on the boundary of the index set.

Define \(f_\alpha (dx):= L_\alpha (x) \pi _0(dx)\), \(Z_\alpha := \int _{{\textsf{X}}}f_\alpha (dx)\) and \(\pi _{\alpha }(dx) = f_\alpha (dx) / Z_{\alpha }\), following from (9). There are two fundamental strategies one may adopt for estimating \(\pi (\varphi ) = f(\varphi )/f(1)\) using a multi-telescoping identity as in (20). The first considers the following representation

Note we allow \(\varphi _\alpha \) to depend on \(\alpha \)*—for example it could involve the solution to the PDE.

Directly estimating \(\Delta (\pi _\alpha (\varphi _\alpha ))\) would be quite natural if we were able to sample from a coupling of \((\pi _{{\varvec{\alpha }}_1(\alpha )},\dots , \pi _{{\varvec{\alpha }}_{2^D}(\alpha )})\), i.e. a distribution \(\Pi _\alpha : \sigma ({{\textsf{X}}}^{2^D}) \rightarrow [0,1]\) such that

Footnote 2 In practice, however, this is non-trivial to achieve. One successful strategy for MLMC methods is to construct instead an approximate coupling \(\Pi _\alpha \) such that \(\pi _{{\varvec{\alpha }}_i(\alpha )}/\Pi _\alpha \) is bounded for all \(i=1,\dots , 2^D\), then simulate from this and construct self-normalised importance sampling estimators of the type (25) for each of the individual summands of \(\Delta \left( \frac{1}{Z_\alpha } f_\alpha (\varphi _\alpha ) \right) \) appearing in (30). This strategy was introduced for MLMCMC in [37] and has subsequently been applied to MIMC in the contexts of MCMC [38] and SMC [19, 43]. These MIMC works lack rigorous convergence results, due to the challenge of achieving rigorous rates for the individual summands, as well as the effect of cumbersome off-diagonal terms in the MSE estimates arising from bias of the summands (which are higher-order for MLMC). Both of these issues are handled elegantly with the present method.

In the present work, we adopt the second fundamental strategy, which is to use the ratio decomposition

In their limiting forms in (30) and (31), the expressions are equivalent; however, from an approximation perspective they are fundamentally different. In the context of SMC, there are advantages to the latter. In particular, this alleviates both of the issues with arising from bias of the summands in the aforementioned approach, which have prevented rigorous convergence results until now. A similar strategy was used to construct randomised MLMC estimators for Bayesian parameter estimation with particle MCMC in [11]. This method comprises the main result of this work, and its development is the topic of the following subsection.

4.2 Main Result

In order to make use of (31), we need to construct estimators of \(\Delta ( f_\alpha (\zeta _\alpha ) )\), both for our quantity of interest \(\zeta _\alpha = \varphi _\alpha \) and for \(\zeta _\alpha =1\). To that end, we shall construct a coupling which approximates \(\Pi _\alpha \), and has well-behaved importance weights with respect to \(\Pi _\alpha \). Let

where \(\delta _{{\varvec{x}}_1}\) denotes the Dirac delta function at \({\varvec{x}}_1\). Note that this is an exact coupling of the prior in the sense that for any \(j \in \{1,\dots , 2^D\}\)

Indeed, it is the same coupling used in Sect. 3.1.2. It is hoped that this coupling of the prior will carry over to provide error estimates analogous to (21) for the estimator (31), when computed using SMC. We note that one can estimate (31) directly by importance sampling with respect to the prior, as described in Sect. 3.1.2; however, this is not expected to be as efficient as using SMC. We hence adapt Algorithm 1 to an extended target which is an approximate coupling of the actual target as in [11, 19, 37, 38, 43], and utilise a ratio of estimates analogous to (29), similar to what was done in [11]. To this end, we define a likelihood on the coupled space as

Note that \(k_\alpha =2^{\#\{i; \alpha _i\ne 0\}} \in \{0,2,\dots , 2^D\} \le 2^D\) adjusts the effective dimension of the target when \(\alpha \) is on the boundary of the index set. The approximate coupling is defined by

Example 4.1

(Approximate Coupling) As an example of the approximate coupling constructed in equations (32), (34), and (35), let \(D=2\), \(d=1\), and \(\alpha =(1,1)\). Then, we have

Note that for our choice of prior coupling (32), we effectively have a single distribution

but any coupling of the prior which preserves the marginals as in (33) is admissible, so we prefer to consider this as a target on the “diagonal hyperplane” \(x_1=x_2=x_3=x_4\), as above.

Let \(H_{\alpha ,j} = \textbf{L}_{\alpha , j+1}/\textbf{L}_{\alpha ,j}\) for some intermediate distributions \(F_{\alpha ,1}, \dots , F_{\alpha ,J}=F_{\alpha }\). In our case, we use the natural intermediate targets \(F_{\alpha ,j}(d{\varvec{x}}) = \textbf{L}_\alpha ({\varvec{x}})^{\tau _j} \Pi _0(d{\varvec{x}})\), where \(\tau _1=0\), \(\tau _j<\tau _{j+1}\), and \(\tau _J=1\). For \(j=1,\dots ,J\), we define

and we let \({\varvec{{\mathcal {M}}}}_{\alpha ,j}\) be a Markov transition kernel such that \((\Pi _{\alpha ,j} {\varvec{{\mathcal {M}}}}_{\alpha ,j})(d{\varvec{x}}) = \Pi _{\alpha ,j}(d{\varvec{x}})\), analogous to \({\mathcal {M}}\) in Sect. 3.2.2. Any MCMC kernel as described in Sect. 3.2.1 with target distribution \(\Pi _{\alpha ,j}\) is suitable for this purpose. An example is the Metropolis–Hastings kernel described above and in (27).

Remark 4.1

(Tempering) Tempering accurately is crucial, because if the effective sample size drops too low, then the population will lack sufficient diversity to survive. The purpose of the sequential resampling and mutation is precisely to preserve diversity in the sample. Sometimes a fixed tempering schedule is suitable for this purpose, for example \(\tau _j = (j-1)/(J-1)\). An alternative is to use an adaptive tempering strategy.

Given a (possibly un-normalised) weighted sample \(\{w^{(k)},{\varvec{x}}^{(k)}\}_{k=1}^N\), the effective sample size (\(\textrm{ESS}\)) is defined as follows

This quantity serves as a proxy for the variance of the weighted sample. To understand the name, note that if \(w^{(k)}\propto 1\) for all k, then \(\textrm{ESS}=N\), while if \(w^{(k^*)}\propto 1\) for some \(k^*\) and \(w^{(k)}=0\) for \(k \ne k^*\) then \(\textrm{ESS}=1\). If \(\tau _j = \tau _{j-1} + h\), for \(h > 0\), then the intermediate weights will be \(w^{(k)} = {{\textbf {L}}}_\alpha ({\varvec{x}}^{(k)})^{h}\), and the corresponding \(\textrm{ESS}(h)\) is a scalar function of h which quantifies the sample attrition that results from the importance sampling step; precisely what we are aiming to control. The adaptive tempering parameter \(\tau _j\) is computed by firstly solving \(\textrm{ESS}(h)=\mathrm{ESS_{min}}\) with a pre-specified value of \(\mathrm{ESS_{min}}\), and then letting \(\tau _j \leftarrow \tau _{j-1} + h\). In this way, the effective sample size is \(\mathrm{ESS_{min}}\) each time importance sampling is carried out. The tempering procedure is carried until \(\tau _j = 1\).

Remark 4.2

(Role of Dimension D) Note that in high dimensions one would select an index set in which there are few (or no) terms on the interior. In the present work, we do not explicitly consider the dependence on D (which is reasonable for small \(D\le 5\) say); however, the methodology is applicable for high-dimensional targets and that is the subject of future work. The cost of simulating the approximate coupling at level \(\alpha \) will feature a constant \(2^D\) multiplying Assumption 4.2(C), because that is how many likelihood evaluations are required to compute (34), and hence corresponding multi-increment. The constant can be large, but this will not alter the complexity estimates.

For \(j=1,\dots , J\), and for random variables \({\varvec{x}}_j^{(i)}\), \(i=1,\ldots ,N\) (which will be sampled from the Markov chain \({{\varvec{{\mathcal {M}}}}_{\alpha ,j}}\)) we define

and then define

We require the following assumption

Assumption 4.1

Let \(J\in {\mathbb {N}}\) be given, and let \({{\textsf{X}}}\) be a Banach space. For each \(j\in \{1,\dots ,J\}\) there exists some \(C>0\) such that for all \((\alpha ,{\varvec{x}})\in {{\mathbb {Z}}_+^D\times {{\textsf{X}}}^{2^D}}\)

The following proposition can easily be deduced from [Theorem 7.4.2, [20]].

Proposition 4.1

Under Assumption 4.1, we have \( {\mathbb {E}}[F_{\alpha }^N(\psi )] = F_\alpha (\psi )\).

Define

where \(\iota _k \in \{-1,1\}\) is the sign of the \(k^{\textrm{th}}\) term in \(\Delta f_\alpha \) and \(\zeta _\alpha : {{\textsf{X}}}\rightarrow {\mathbb {R}}\). Following from Proposition 4.1, we have that

Now given \(\mathcal{I}\subseteq {\mathbb {Z}}_+^D\), \(\{N_\alpha \}_{\alpha \in \mathcal{I}}\), and \(\varphi :{{\textsf{X}}}\rightarrow {\mathbb {R}}\), for each \(\alpha \in \mathcal{I}\), run an independent SMC sampler as in Algorithm 2 with \(N_\alpha \) samples, define \(Z_\alpha ^N = Z_J^N\), and define the MIMC estimator as

where \(Z_{\textrm{min}}\) is a lower bound on Z as given in Assumption 4.1, and \(F^{N_\alpha }_\alpha \) is defined in (37).

4.2.1 Theoretical Results

Throughout this subsection, \(C>0\) is a constant whose value may change from line to line. The following theorem is the main theoretical result which underpins the results to follow.

Theorem 4.1

Assume Assumption 4.1. Then, for any \(J\in {\mathbb {N}}\) there exists a \(C>0\) such that for any \(N\in {\mathbb {N}}\), \(\psi : {{\textsf{X}}}^{2^D} \rightarrow {\mathbb {R}}\) bounded and measurable and \(\alpha \in {\mathbb {Z}}_+^D\)

Furthermore,

where \(\psi _{\zeta _\alpha }({\varvec{x}})\) is as (38).

Proof

The first result follows from Lemmas 5.1, 5.2 and 5.3, given in Sect. 5. The second result is derived as follows:

The first 2 lines are direct substitution and the inequality follows by defining \(C^{-1} = \inf _{{\varvec{x}}\in {{\textsf{X}}}^{2^D}} \textbf{L}_\alpha ({\varvec{x}})\) and using the definition of \(\Pi _0\) in (32). \(\square \)

Following from above, the assumptions below will be made.

Assumption 4.2

For any \(\zeta : {{\textsf{X}}}\rightarrow {\mathbb {R}}\) bounded and Lipschitz, there exist \(C, \beta _i, s_i, \gamma _i >0\) for \(i=1,\dots , D\) such that for resolution vector \((2^{-\alpha _1},\dots , 2^{-\alpha _D})\), i.e. resolution \(2^{-\alpha _i}\) in the \(i^{\textrm{th}}\) direction, the following holds:

-

(B) \(|\Delta f_\alpha (\zeta )|\) \(=: B_\alpha \) \(\le C 2^{-\langle \alpha , s \rangle }\);

-

(V) \(\int _{{\textsf{X}}}(\Delta ( L_\alpha (x)\zeta _\alpha (x) ))^2 \pi _0(dx) =: V_\alpha \le C 2^{-\langle \alpha , \beta \rangle }\);

-

(C) \(\textrm{COST}(F_\alpha (\psi _\varphi )) =: C_\alpha \propto 2^{\langle \alpha , \gamma \rangle }\).

The proofs of the main theorems will rely on one more result, Lemma 4.1, given immediately afterwards.

The next theorem comprises the main result of the paper.

Theorem 4.2

Assume Assumptions 4.1 and 4.2, with \(\beta _i>\gamma _i\) for \(i=1,\dots ,D\). Then, for any \(\varepsilon >0\) and suitable \(\varphi : {{\textsf{X}}}\rightarrow {\mathbb {R}}\), it is possible to choose a total degree index set \({\mathcal {I}}_{L}:=\{\alpha \in {\mathbb {N}}^{D}:\sum _{i=1}^{D}\delta _{i}\alpha _{i} \le L, \sum _{i=1}^{D}\delta _{i} = 1\}\), \(\delta _{i} \in (0,1]\) and \(\{N_\alpha \}_{\alpha \in {\mathcal {I}}_{L}}\), such that for some \(C>0\)

and \(\textrm{COST}({{\widehat{\varphi }}}^{\textrm{MI}}_\mathcal{I}) \le C \varepsilon ^{-2}\), the canonical rate. The estimator \({{\widehat{\varphi }}}^{\textrm{MI}}_\mathcal{I}\) is defined in equation (40).

Proof

Starting from Lemma 4.1 and given Theorem 4.1, and the Assumptions 4.2, the result follows in a similar fashion to standard MIMC theory [19, 27, 29, 38, 43]. The proof is given in “Appendix A” for completeness. \(\square \)

Remark 4.3

Under the same assumptions as in Theorem 4.2, and similar to Proposition 3.1, if the index set is replaced with the tensor product index set \({\mathcal {I}}_{L_1:L_d}:=\{\alpha \in {\mathbb {N}}^{D}:\alpha _1 \in \{0,\ldots ,L_1\},\ldots ,\alpha _D \in \{0,\ldots ,L_D\}\}\), then the same complexity result can be obtained only with an additional constraint that \(\sum _{j=1}^{D}\gamma _j/ s_j \le 2\).

Lemma 4.1

For the estimator (40) \({{\widehat{\varphi }}}^{\textrm{MI}}_\mathcal{I}= \frac{\sum _{\alpha \in \mathcal{I}} F^{N_\alpha }_\alpha (\psi _{\varphi _\alpha })}{\max \{\sum _{\alpha \in \mathcal{I}} F^{N_\alpha }_\alpha (\psi _{1}), Z_{\min }\}}\), the following inequality holds

Proof

Recall that from (40) we have \({{\widehat{\varphi }}}^{\textrm{MI}}_\mathcal{I}= \frac{\sum _{\alpha \in \mathcal{I}} F^{N_\alpha }_\alpha (\psi _{\varphi _\alpha })}{\max \{\sum _{\alpha \in \mathcal{I}} F^{N_\alpha }_\alpha (\psi _{1}), Z_{\min }\}}\). So

Since \(f(1) \ge Z_{\min }\) and \(| \max \{A,Z\} - \max \{B, Z\} | \le | A-B|\), we have

Then, we have

\(\square \)

Remark 4.4

It is remarked that one always has \(Z >0\); therefore, given a target error level \(\varepsilon \), one can always replace \(Z_{\textrm{min}} \leftarrow \varepsilon \), and achieve the same result. To see this, denoting

observe that line (41) can be replaced with

Theorem 4.2 formulates the total degree index set with general \(\delta \) satisfying some loose conditions. In the paper [29], optimal \(\delta \) is constructed according to a profit indicator. The focus of the present work is on the canonical case, where the complexity is dominated by low levels, so we simply choose \(\delta \propto s\). The proof of Theorem 4.2 is based on the general \(\delta \), and it is easy to see that this choice suffices.

To achieve the canonical rate of complexity, Theorem 4.3 with the tensor product index set relies on the essential assumption that \(\sum _{j=1}^{D}\frac{\gamma _j}{s_j}\le 2\), which ensures that the samples at the finest index do not dominate the cost. If the assumption is violated, then only the subcanonical complexity \(\sum _{j=1}^{D}\frac{\gamma _j}{s_j}\) can be achieved. This rate may often be \(D-\)dependent, resulting in a so-called curse of dimensionality. In comparison, Theorem 4.2 with the total degree index set releases this constraint, and improves the computational complexity for many problems from subcanonical to canonical, as illustrated in the numerical examples.

4.3 Verification of Assumptions

Here we briefly discuss the models considered before in connection with the required Assumptions 4.2. Note that both posteriors have the form \(\exp (\Phi (x))\) for some \(\Phi : X \rightarrow {\mathbb {R}}\), and are approximated by \(\Phi _\alpha : X \rightarrow {\mathbb {R}}\).

Proposition 4.2

Let \({{\textsf{X}}}\) be a Banach space with \(D=2\) s.t. \(\pi _0({{\textsf{X}}})=1\), with norm \(\Vert \cdot \Vert _{{\textsf{X}}}\). For all \(\epsilon >0\), there exists a \(C(\epsilon )>0\) such that the following holds for \(\Phi , \Phi _\alpha \) given by (13), (16), or \(\log (L),\log (L_\alpha )\) from (9), respectively:

Proof

Let us introduce the shorthand notation \(A_{11}=\Phi _\alpha (x_\alpha )\), \(A_{10}=\Phi _{\alpha -e_2}(x_{\alpha -e_2})\), \(A_{01}=\Phi _{\alpha -e_1}(x_{\alpha -e_1})\), \(A_{00}=\Phi _{\alpha -e_1-e_2}(x_{\alpha -e_1-e_2})\). We have

where we have added and subtracted \(\exp (A_{10}) \left( \exp (A_{01}-A_{00})- 1 \right) \) in going from the second to the third line. The final line follows from the mean value theorem and equations (59) and (72) with \({{\textsf{X}}}=H^\textsf {m} _r\), \(r>1/2\). These trivially hold for (9).

The issue which prevented us from achieving above for LGC is that terms like \(\exp (-A_{10}) \propto \exp (-\Phi _\alpha (x_\alpha ))\) appear in the constant, which involve a factor like \(\exp (\int \exp (x(z))dz)\). Fernique theorem does not guarantee that such double exponentials are finite. However, for LGP, we instead have

\(\square \)

PDE. The following proposition updates Proposition 2.1 and is proven in the literature on mixed regularity of the solution of elliptic PDE, as mentioned already in Sect. 3.1.2. See, e.g. [29] and references therein.

Proposition 4.3

Let \(u_\alpha \) be the solution of (2) and (3) at resolution \(\alpha \), as described in Sect. 2.1.1, for a(x) given by (5) and uniformly over \(x \in [-1,1]^d\), and \({{ f}}\) suitably smooth. Then, there exists a \(C>0\) such that

Furthermore,

Note that since \(L_\alpha (x) \le C < \infty \) by Assumption 4.1, the constant in Proposition 4.2 can be made uniform over x, and hence the required rate in Assumption 4.2 is established immediately.

LGP. Will restrict consideration to LGP here, since LGC features double exponentials which are difficult to handle theoretically in this context. The following proposition updates Proposition 2.3 as required for differences of differences.

Proposition 4.4

Let \(x \sim \pi _0\), where \(\pi _0\) is a Gaussian process of the form (10) with spectral decay corresponding to (11), and let \(x_\alpha \) correspond to truncation on the index set \({\mathcal {A}}_\alpha = \cap _{i=1}^2 \{|k_i|\le 2^{\alpha _i}\}\) as in (14). Then there is a \(C>0\) such that for all \(q<(\beta -1)/2\)

Proof

The proof follows along the same lines as that of Proposition 2.3 (B.1), except instead of projection onto \(\cup _{i=1}^2 \{|k_i| > 2^{\alpha _i}\}\), the projection here is onto the set of indices \(\cap _{i=1}^2 \{2^{\alpha _i-1} \le |k_i| \le 2^{\alpha _i}\}\), i.e.

\(\square \)

The key phenomenon that takes place is that the difference of difference \(\Delta x_\alpha \) leaves a remainder which is an intersection \(\cap _{i=1}^2 \{2^{\alpha _i-1} \le |k_i| \le 2^{\alpha _i}\}\), rather than the union \(\cup _{i=1}^2 \{2^{\alpha _i-1} \le |k_i| \le 2^{\alpha _i}\}\), associated with the truncation error in Proposition 2.3, which one would achieve with a single difference from \(x_\alpha - x_{\alpha -1}\). This eliminates all indices in which \(k_i^{-1} ={\mathcal {O}}(1)\) for some i, and provides the required product-form rates.

Proposition 4.5

The rate from Proposition 4.4 is inherited by the likelihood, resulting in verification of Assumption 4.2(V) with \(\beta _i=\beta \).

Proof

The proof follows along the lines of Proposition 2.5. In this case, following from Proposition 4.2, for all \(\epsilon >0\) and \(q<\beta /2\), we have

where the second line is computed with estimates similar to (72), and Fernique Theorem to conclude, as in the proof of Proposition 2.5. \(\square \)

5 Proofs Relating Theorem 4.1

In this section, we prove Lemmas 5.1, 5.2 and 5.3 from which Theorem 4.1 is an immediate consequence. We fix \(\alpha \in \mathcal{I}\) throughout this section and thus, to avoid notational overload, we henceforth suppress it from our notation.

For \(j=2,\dots , J\), we define

and observe that the iterates of the algorithm of Sect. 3.2.2 can be rewritten in the concise form

where we recall the definition (28) for the empirical measure \(\Pi ^N_{j-1}\). Let \(\Phi _1(\Pi ):= \Pi \). A finer error analysis beyond Proposition 4.1 requires keeping track of the effect of errors \((\Pi _j^N - \Phi _j(\Pi _{j-1}^N))\) and accounting for the cumulative error at time J. To this end, and for any \(\psi : {{\textsf{X}}}^{2^D} \rightarrow {\mathbb {R}}\) bounded, we define the following partial propagation operator for \(p = 1,\dots , J\)

where \(Q_{J,J} = I_{2^D}\), i.e. \(Q_{J,J}(\psi )({\varvec{x}}_J) = \psi ({\varvec{x}}_J)\). We will assume for simplicity that \(F_1:= \Pi _0\), the prior, so that \(F_1(1)=1\). Note that then \(\Pi _0(Q_{1}(\psi )({\varvec{x}}_1)) = F_J(\psi ) = F(\psi )\).

We present now the following well-known representation of the error as a martingale w.r.t. the natural filtration of the particle system (see [11, 20])

where we denote the summands as \(S_{n,J}^N(\psi )\). This clearly shows the unbiasedness property presented in Proposition 4.1. In particular, \({\mathbb {E}}F_{J}^N(\psi ) = F(\psi )\), as can be seen by backwards induction conditioning first on \(\{{\varvec{x}}_{J-1}^i\}_{i=1}^N\) and recalling the form of \(F_n^N(1)\) given in (37). This brings us to our first supporting lemma. Throughout these calculations, C is a finite constant whose value may change on each appearance. The dependencies of this constant on the various algorithmic parameters are made clear from the statement.

Lemma 5.1

Assume Assumption 4.1. Then, for any \(J\in {\mathbb {N}}\) there exists \(C>0\) such that for any \(N\in {\mathbb {N}}\) and any \(\psi :{{\textsf{X}}}^{2^D} \rightarrow {\mathbb {R}}\) bounded and measurable

Proof

Following from (45), we have

The first inequality results from application of the Burkholder–Gundy–Davis inequality. The second inequality follows via an application of the conditional Marcinkiewicz–Zygmund inequality and the fact that \(F_n^N(1)\) is upper-bounded by a constant via Assumption 4.1. \(\square \)

Lemma 5.2

Assume Assumption 4.1. Then, for any \(J\in {\mathbb {N}}\) and \(n\in \{1,\dots ,J\}\) there exists a \(C>0\) such that for any \(N\in {\mathbb {N}}\) and \(\psi :{{\textsf{X}}}^{2^D} \rightarrow {\mathbb {R}}\) bounded and measurable

Proof

Observe that for any \({\varvec{x}}_n \in {{\textsf{X}}}^{2^D}\), \(Q_{n,J}(\psi )({\varvec{x}}_n)/Q_{n,J}(1)({\varvec{x}}_n)\) is a probability distribution. Therefore, Jensen’s inequality provides

The result follows with \(C = \sup _{{\varvec{x}}_n\in {{\textsf{X}}}^{2^D}} Q_{n,J}(1)({\varvec{x}}_n)\). \(\square \)

Lemma 5.3

Assume Assumption 4.1. Then for any \(J\in {\mathbb {N}}\) and \(n\in \{1,\dots ,J\}\) there exists a \(C>0\) such that for any \(N\in {\mathbb {N}}\) and any \(\psi :{{\textsf{X}}}^{2^D} \rightarrow {\mathbb {R}}\) bounded and measurable

Proof

We proceed by induction. The result for \(n=1\) follows immediately from Lemma 5.2 and the fact that we defined \(\Phi _1(\Pi _1) = \Pi _1 = F_1 = \Pi _0\):

Now, assume the result holds for \(n-1\):

and we will show that this implies it holds for n.

We have that

We consider bounding the expectations of \(T_1\) and \(T_2\) in turn.

\({\varvec{T_1}}\). We have

By Assumption 4.1\(\inf _{{\varvec{x}}} H_{n-1}({\varvec{x}})\ge C^{-1}\) and

Therefore, by the inductive hypothesis

\({\varvec{T_2}}\). For the second term, we have

These two terms are now considered.

\({\varvec{T_{2,1}}}\). The expected value of \(T_{2,1}\) can be bounded as follows:

Where the triangle inequality is used in the first line, positivity is used in the second, the Martingale property is used in the third, and the induction hypothesis is used to conclude. Thus, after appropriately redefining the constant C, we have that

\({\varvec{T_{2,2}}}\). Finally, for the second term, note that by Assumption 4.1 there is a \(C<\infty \) such that

Thus, by again applying the induction hypothesis (46) one has that

and this suffices to complete the proof. \(\square \)

6 Numerical Results

First, we considered the toy example of a 1D DE simplification of the PDE introduced in Sect. 2.1. Since the method reduces to a multilevel method in this case, the results are provided in “Appendix C.1”.

6.1 2D Elliptic PDE with Random Diffusion Coefficient

In this subsection, we look at the 2D elliptic PDE with random diffusion coefficient from Sect. 2.1. The problem is defined in (2)–(3). The domain of interest is \(\Omega = [0,1]^2\), the forcing term is \({\textsf{f}} = 100\), \(a(x)(z) = 3+x_1\cos (3z_1)\sin (3z_2)+x_2\cos (z_1)\sin (z_2)\), and the prior is \(x\sim U[-1,1]^2\). The observation operator and observation take the form of (7) and (8), respectively.

Let the observations be given at a set of four points - {(0.25,0.25), (0.25,0.75), (0.75,0.25), (0.75,0.75)}. Corresponding observations are generated by \(y = u_{\alpha }(x^*) + \nu \), where \(u_{\alpha }(x^*)\) is the approximate solution of the PDE at \(\alpha = [10,10]\) with \(x^*=[-0.4836, -0.5806]\) drawn from \(U[-1,1]^2\), and \(\nu \sim N(0,0.5^2)\). Due to the zero Dirichlet boundary condition, the solution is zero when \(\alpha _i = 0\) and \(\alpha _i=1\) for \(i = 1,2\). So we set \(\alpha _i \leftarrow \alpha _i + 2\) for \(i = 1,2\) as the starting indices. The 2D PDE solver applied here is modified based on a MATLAB toolbox called IFISS [23] such that the solver can accept a random coefficient and solve the problem of interest. The algorithm is applied with Metropolis–Hastings method and a fixed tempering schedule for all \(\alpha \), where \(J = 3\) and \(\tau _j=(j-1)/2\).

2D Elliptic PDE with random diffusion coefficient rate of convergence for MLSMC and MISMC with the self-normalised increments estimator and the ratio estimator, where MISMC is applied with the tensor product index set and the total degree index set. Each MSE is computed with 200 realisations. Rates of regression: (1) MISMCSN_TP: \(-1.007\) (2) MISMCSN_TD: \(-0.996\) (3) MISMCRE_TP: \(-0.964\) (4) MISMCRE_TD: \(-0.925\) (5) MLSMCSN: \(-0.880\) (6) MLSMCRE: \(-0.918\)

For this example, we have \(s_1 = s_2 = 2\) and \(\beta _1 = \beta _2 = 4\) for the mixed rates corresponding to Assumptions 4.2, which implies that along the diagonal \(\alpha _1 = \alpha _2\) the rates for \(\Delta F_\alpha \) are \(s_1 + s_2 = 4\) and \(\beta _1 + \beta _2 = 8\). This is shown in Figs. 7 and 8. The contour plot 9 performs a more general illustration. For the multilevel formulation, \(s = 2\) and \(\beta = 4\), which can be observed from Fig. 10.

Considering the quantity of interest \(x_1^2 + x_2^2\), MSE in Fig. 2 is calculated with 200 realisations. Total computational costs are computed with the same idea as the previous questions. The reference solution is computed by MLSMC instead of MISMC to avoid errors in algorithm. MISMC algorithm is carried on with the two different index sets mentioned above—tensor product and total degree index set. According to the rates of regression in the caption of Fig. 2, both MLSMC and MISMC with the self-normalised increment estimator and the ratio estimator have the rate of convergence close to -1 falling into the canonical case, which is as expected.

The advantage of MISMC can be shown in the following examples, where we can only achieve subcanonical rates with MLSMC and MISMC with the tensor product index set but the canonical rate with MISMC with the total degree index set. It is worth to note that this advantage is because the total degree index set which abandons the most expensive estimation.

LGC model with MLSMC and MISMC with the self-normalised increments estimator and the ratio estimator, where MISMC corresponds to the total degree index set. Each MSE is computed with 100 realisations. Rate of regression: (1) MISMCRE_TD: \(-\)1.022 (2) MISMCSN_TD: \(-\)0.973 (3) MLSMCRE: \(-\)0.686 (4) MLSMCSN: \(-\)0.677

6.2 Log-Gaussian Process Models

After considering the PDE examples in previous subsections, we show the numerical results of the LGC model introduced in Sect. 2.2. The parameters are chosen as \(\theta =(\theta _1,\theta _2,\theta _3) = (0, 1, 110.339)\). For this particular example, the increment rates associated with MLSMC are \(s = 0.8\) and \(\beta = 1.6\), while the mixed rates associated with MISMC are \(s_{i} = 0.8\) and \(\beta _{i} = 1.6\) for \(i = 1,2\). The rates for s and \(\beta \) can be observed from Fig. 14 and mixed rates for \(s_i\) and \(\beta _i\) for \(i = 1,2\) can be observed from Figs. 11, 12 and 13. This forward simulation method has a cost rate of \(\gamma = 2 + \omega \), for any \(\omega > 0\), while the traditional full factorisation method used in [32] (and references therein) has \(\gamma =6\). However, one has \(\gamma _i=1+\omega< \beta _i < \gamma \). This means circulant embedding will deliver a single level complexity of approximately \(\hbox {MSE}^{-9/4}\), while the traditional grid-based approach has complexity \(\hbox {MSE}^{-19/4}\). An implementation of MLMC delivers \(\hbox {MSE}^{-5/4 - \omega }\). Finally, MIMC with TD index set (\(\delta _{i} = 0.5\) for \(i = 1,2\)) delivers canonical complexity of \(\hbox {MSE}^{-1}\). Note that, because \(\sum _{j=1}^{2}\frac{\gamma _{j}}{s_{j}} = 5/2 > 2\), the important assumption \(\sum _{j=1}^{2}\frac{\gamma _{j}}{s_{j}} \le 2\) for MISMC with TP index set is violated, the cost of the finest level samples dominates the total cost, and MISMC TP therefore has the same subcanonical complexity as MLMC.

LGP model with MLSMC and MISMC with the self-normalised increments estimator and the ratio estimator, where MISMC corresponds to the total degree index set. Each MSE is computed with 100 realisations. Rate of regression: (1) MISMCRE_TD: \(-\)0.994 (2) MISMCSN_TD: \(-\)0.950 (3) MLSMCRE: \(-\)0.643 (4) MLSMCSN: -712

SMC sampler is applied with the pre-conditioned Crank-Nicolson (pCN) MCMC [17, 50] as the mutation kernel and adaptive tempering described in Remark 4.1. The quantity of interest is taken as \(\varphi (x)=\int _{[0,1]^2}\exp (x(z))dz\) and \(\alpha _{i} \leftarrow \alpha _i + 5\) for \(i = 1,2\) are the starting indices. Figure 3, and the rate of regression in the caption, show the above claims that MISMC TD is canonical with rate of convergence close to -1 and MLSMC is subcanonical. MISMC TP is not included here since the computational complexity of this method is the same as that of MLSMC for this example. The only difference between the two methods is the constant. Compared with MLSMC, MISMC TP has extra indices and two extra terms at all internal indices. This means that MISMC TP has a larger constant than MLSMC. MISMC TD turns the computational complexity from subcanonical, which all that is achievable with MISMC TP, MLSMC, and SMC, to canonical, indicating the benefits of MISMC TD.

6.3 Log-Gaussian Process Model

In this subsection, we consider the LGP model introduced in Sect. 2.2. By changing the likelihood and parameters accordingly as \(\theta =(\theta _1,\theta _2,\theta _3) = (0, 1, 27.585)\), the LGP model follows the same analysis as the LGC model in the previous subsection and gives the same numerical results for regularity and complexity. More precisely, the increment rates associated with MLSMC are \(s = 0.8\) and \(\beta = 1.6\), and the mixed rates associated with MISMC are \(s_{i} = 0.8\) and \(\beta _{i} = 1.6\) for \(i = 1,2\), which are the same as LGC. Figure 18 shows the increment rates for s and \(\beta \), and Figs. 15, 16 and 17 show the mixed rates for \(s_i\) and \(\beta _i\) for \(i = 1,2\). The rates corresponding to the computational costs of MLSMC and MISMC are \(\gamma = 2+\omega \) and \(\gamma _i = 1+\omega <\gamma \), for any \(\omega >0\), respectively. Being the same as that of LGC, the complexity of LGP is \(\hbox {MSE}^{-5/4 - \omega }\) with MLSMC and \(\hbox {MSE}^{-1}\) with MISMC.

As above, SMC sampler is applied with the pre-conditioned Crank-Nicolson (pCN) MCMC [17, 50] as the mutation kernel and adaptive tempering described in Remark 4.1. Considering the quantity of interest \(\varphi (x)=\int _{[0,1]^2}\exp (x(z))dz\) and letting the starting indices \(\alpha _{i} \leftarrow \alpha _i + 5\) for \(i = 1,2\), Fig. 4, and the rate of regression in the caption, show the same claims that MISMC TD is canonical with rate of convergence close to -1 and MLSMC is subcanonical.

Notes

Here we use \(\perp \) to denote pairwise independence of random variables.

Here \({\varvec{x}}_{-j}\) omits the \(j^{\textrm{th}}\) coordinate from \({\varvec{x}}= ({\varvec{x}}_1,\dots , {\varvec{x}}_{2^D}) \in {{\textsf{X}}}^{2^D}\)

References

S Agapiou, Omiros Papaspiliopoulos, D Sanz-Alonso, AM Stuart, et al. Importance sampling: Intrinsic dimension and computational cost. Statistical Science, 32(3):405–431, 2017.

Marco Ballesio, Ajay Jasra, Erik von Schwerin, and Raul Tempone. A Wasserstein coupled particle filter for multilevel estimation. arXiv:2004.03981, 2020.

Thomas Bengtsson, Peter Bickel, Bo Li, et al. Curse-of-dimensionality revisited: Collapse of the particle filter in very large scale systems. In Probability and statistics: Essays in honor of David A. Freedman, pages 316–334. Institute of Mathematical Statistics, 2008.

Alexandros Beskos, Dan Crisan, Ajay Jasra, Nikolas Kantas, and Hamza Ruzayqat. Score-based parameter estimation for a class of continuous-time state space models. SIAM Journal on Scientific Computing, 43(4):A2555–A2580, 2021.

Alexandros Beskos, Ajay Jasra, Kody Law, Youssef Marzouk, and Yan Zhou. Multilevel sequential Monte Carlo with dimension-independent likelihood-informed proposals. SIAM/ASA Journal on Uncertainty Quantification, 6(2):762–786, 2018.

Alexandros Beskos, Ajay Jasra, Kody J.H. Law, Raul Tempone, and Yan Zhou. Multilevel sequential Monte Carlo samplers. Stochastic Processes and their Applications, 127(5):1417–1440, 2017.

William E Boyce, Richard C DiPrima, and Douglas B Meade. Elementary differential equations. John Wiley & Sons, 2017.

Dietrich Braess. Finite elements: Theory, fast solvers, and applications in solid mechanics. Cambridge University Press, 2007.

Susanne Brenner and Ridgway Scott. The mathematical theory of finite element methods, volume 15. Springer Science & Business Media, 2007.

Hans-Joachim Bungartz and Michael Griebel. Sparse grids. Acta numerica, 13:147–269, 2004.

Neil K Chada, Jordan Franks, Ajay Jasra, Kody J Law, and Matti Vihola. Unbiased inference for discretely observed hidden markov model diffusions. SIAM/ASA Journal on Uncertainty Quantification, 9(2):763–787, 2021.

Sourav Chatterjee, Persi Diaconis, et al. The sample size required in importance sampling. The Annals of Applied Probability, 28(2):1099–1135, 2018.

Alexey Chernov, Håkon Hoel, Kody JH Law, Fabio Nobile, and Raul Tempone. Multilevel ensemble Kalman filtering for spatio-temporal processes. Numerische Mathematik, 147(1):71–125, 2021.

Nicolas Chopin. A sequential particle filter method for static models. Biometrika, 89(3):539–552, 2002.

Nicolas Chopin, Omiros Papaspiliopoulos, et al. An introduction to sequential Monte Carlo, volume 4. Springer, 2020.

Philippe G Ciarlet. The finite element method for elliptic problems. SIAM, 2002.

Simon L Cotter, Gareth O Roberts, Andrew M Stuart, and David White. MCMC methods for functions: modifying old algorithms to make them faster. Statistical Science, pages 424–446, 2013.

David Cruz-Uribe and CJ Neugebauer. Sharp error bounds for the trapezoidal rule and simpson’s rule. J. Inequal. Pure Appl. Math, 3(4):1–22, 2002.

T. Cui, Ajay Jasra, and Kody J. H. Law. Multi-index sequential Monte Carlo methods. Preprint.

Pierre Del Moral. Feynman-Kac formulae. In Feynman-Kac Formulae, pages 47–93. Springer, 2004.

Pierre Del Moral, Arnaud Doucet, and Ajay Jasra. Sequential Monte Carlo samplers. Journal of the Royal Statistical Society: Series B (Statistical Methodology), 68(3):411–436, 2006.

Tim J Dodwell, Christian Ketelsen, Robert Scheichl, and Aretha L Teckentrup. A hierarchical multilevel Markov chain Monte Carlo algorithm with applications to uncertainty quantification in subsurface flow. SIAM/ASA Journal on Uncertainty Quantification, 3(1):1075–1108, 2015.

Howard Elman, Alison Ramage, and David Silvester. Algorithm 866: IFISS, a Matlab toolbox for modelling incompressible flow. ACM Trans. Math. Softw., 33:2–14, 2007.

Howard C Elman, David J Silvester, and Andrew J Wathen. Finite elements and fast iterative solvers: with applications in incompressible fluid dynamics. Numerical Mathematics and Scie, 2014.

Alexandre Ern and Jean-Luc Guermond. Theory and practice of finite elements, volume 159. Springer, 2004.

Charles J Geyer. Practical Markov chain Monte Carlo. Statistical science, pages 473–483, 1992.

Michael B Giles. Multilevel Monte Carlo methods. Acta Numerica, 24:259, 2015.

Alastair Gregory, Colin J Cotter, and Sebastian Reich. Multilevel ensemble transform particle filtering. SIAM Journal on Scientific Computing, 38(3):A1317–A1338, 2016.

Abdul-Lateef Haji-Ali, Fabio Nobile, and Raúl Tempone. Multi-index Monte Carlo: when sparsity meets sampling. Numerische Mathematik, 132(4):767–806, 2016.

Raúl Tempone Håkon Hoel, Gaukhar Shaimerdenova. Multilevel ensemble Kalman Filtering based on a sample average of independent EnKF estimators. Foundations of Data Science, 2(4):351–390, 2020.

Jeremy Heng, Adrian N Bishop, George Deligiannidis, and Arnaud Doucet. Controlled sequential Monte Carlo. The Annals of Statistics, 48(5):2904–2929, 2020.

Jeremy Heng and Pierre E Jacob. Unbiased Hamiltonian Monte Carlo with couplings. Biometrika, 106(2):287–302, 2019.

Jeremy Heng, Ajay Jasra, Kody JH Law, and Alexander Tarakanov. On unbiased estimation for discretized models. arXiv preprint arXiv:2102.12230, 2021.

Viet Ha Hoang, Christoph Schwab, and Andrew M Stuart. Complexity analysis of accelerated MCMC methods for Bayesian inversion. Inverse Problems, 29(8):085010, 2013.

Håkon Hoel, Kody JH Law, and Raul Tempone. Multilevel ensemble Kalman filtering. SIAM Journal on Numerical Analysis, 54(3):1813–1839, 2016.

Christopher Jarzynski. Nonequilibrium equality for free energy differences. Physical Review Letters, 78(14):2690, 1997.

Ajay Jasra, Kengo Kamatani, Kody J. H. Law, and Yan Zhou. Bayesian static parameter estimation for partially observed diffusions via multilevel Monte Carlo. SIAM Journal on Scientific Computing, 40(2):A887–A902, 2018.

Ajay Jasra, Kengo Kamatani, Kody J. H. Law, and Yan Zhou. A multi-index Markov chain Monte Carlo method. International Journal for Uncertainty Quantification, 8(1), 2018.

Ajay Jasra, Kengo Kamatani, Kody JH Law, and Yan Zhou. Multilevel particle filters. SIAM Journal on Numerical Analysis, 55(6):3068–3096, 2017.

Ajay Jasra, Kody Law, and Fangyuan Yu. Unbiased filtering of a class of partially observed diffusions. To appear in Advances in Applied Probability, arXiv preprint arXiv:2002.03747, 2020.

Ajay Jasra, Kody JH Law, and Deng Lu. Unbiased estimation of the gradient of the log-likelihood in inverse problems. Statistics and Computing, 31(3):1–18, 2021.

Ajay Jasra, Kody JH Law, and Prince Peprah Osei. Multilevel particle filters for lévy-driven stochastic differential equations. Statistics and Computing, 29(4):775–789, 2019.

Ajay Jasra, Kody JH Law, and Yaxian Xu. Multi-index sequential Monte Carlo methods for partially observed stochastic partial differential equations. International Journal for Uncertainty Quantification, 11(3), 2021.

Ajay Jasra, Kody JH Law, and Fangyuan Yu. Randomized multilevel Monte Carlo for embarrassingly parallel inference. To appear in SMC 2022 Proceedings, arXiv preprint arXiv:2107.01913, 2021.

Ajay Jasra, Fangyuan Yu, and Jeremy Heng. Multilevel particle filters for the non-linear filtering problem in continuous time. Statistics and Computing, 30(5):1381–1402, 2020.

Gabriel J Lord, Catherine E Powell, and Tony Shardlow. An introduction to computational stochastic PDEs, volume 50. Cambridge University Press, 2014.

Jesper Møller, Anne Randi Syversveen, and Rasmus Plenge Waagepetersen. Log Gaussian Cox processes. Scandinavian journal of statistics, 25(3):451–482, 1998.

Pierre Del Moral, Ajay Jasra, Kody JH Law, and Yan Zhou. Multilevel sequential Monte Carlo samplers for normalizing constants. ACM Transactions on Modeling and Computer Simulation (TOMACS), 27(3):1–22, 2017.

Iain Murray, Ryan Adams, and David MacKay. Elliptical slice sampling. In Proceedings of the thirteenth international conference on artificial intelligence and statistics, pages 541–548. JMLR Workshop and Conference Proceedings, 2010.

Radford Neal. Regression and classification using Gaussian process priors. Bayesian statistics, 6:475, 1998.

Radford M Neal. Annealed importance sampling. Statistics and computing, 11(2):125–139, 2001.

Jorge Nocedal and Stephen Wright. Numerical optimization. Springer Science & Business Media, 2006.

Bernt Øksendal. Stochastic differential equations. In Stochastic differential equations. Springer, 2003.

Grigorios A Pavliotis. Stochastic processes and applications: diffusion processes, the Fokker-Planck and Langevin equations, volume 60. Springer, 2014.

Christian Robert and George Casella. Monte Carlo statistical methods. Springer Science & Business Media, 2013.

Hamza Ruzayqat, Neil K Chada, and Ajay Jasra. Multilevel estimation of normalization constants using the ensemble Kalman-Bucy filter. arXiv preprint arXiv:2108.03935, 2021.

Robert Scheichl, Andrew M Stuart, and Aretha L Teckentrup. Quasi-Monte Carlo and multilevel Monte Carlo methods for computing posterior expectations in elliptic inverse problems. SIAM/ASA Journal on Uncertainty Quantification, 5(1):493–518, 2017.

Walter A Strauss. Partial differential equations: An introduction. John Wiley & Sons, 2007.

Andrew M Stuart. Inverse problems: a Bayesian perspective. Acta numerica, 19:451–559, 2010.

Luke Tierney. A note on metropolis-hastings kernels for general state spaces. Annals of applied probability, pages 1–9, 1998.

Surya T Tokdar and Jayanta K Ghosh. Posterior consistency of logistic Gaussian process priors in density estimation. Journal of statistical planning and inference, 137(1):34–42, 2007.

David Williams. Probability with martingales. Cambridge university press, 1991.

Acknowledgements

AJ was supported by KAUST baseline funding.

Author information

Authors and Affiliations

Corresponding author

Additional information

Communicated by Martin Burger.

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendices

Proofs relating to Theorem 4.2 and Remark 4.3

Let \(B_{\alpha } = F_\alpha (\psi _{\zeta _\alpha })\), and recall from Assumption 4.2 (V) that

and from Assumption 4.2 (C) that the total computational cost is \(\sum _{\alpha \in \mathcal{I}}N_{\alpha }C_{\alpha }\). Following from Theorem 4.1, we have