Abstract

Randomized trace estimation is a popular and well-studied technique that approximates the trace of a large-scale matrix B by computing the average of \(x^T Bx\) for many samples of a random vector X. Often, B is symmetric positive definite (SPD) but a number of applications give rise to indefinite B. Most notably, this is the case for log-determinant estimation, a task that features prominently in statistical learning, for instance in maximum likelihood estimation for Gaussian process regression. The analysis of randomized trace estimates, including tail bounds, has mostly focused on the SPD case. In this work, we derive new tail bounds for randomized trace estimates applied to indefinite B with Rademacher or Gaussian random vectors. These bounds significantly improve existing results for indefinite B, reducing the number of required samples by a factor n or even more, where n is the size of B. Even for an SPD matrix, our work improves an existing result by Roosta-Khorasani and Ascher (Found Comput Math, 15(5):1187–1212, 2015) for Rademacher vectors. This work also analyzes the combination of randomized trace estimates with the Lanczos method for approximating the trace of f(B). Particular attention is paid to the matrix logarithm, which is needed for log-determinant estimation. We improve and extend an existing result, to not only cover Rademacher but also Gaussian random vectors.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

This paper is concerned with approximating the trace of a symmetric matrix \(B \in {\mathbb {R}}^{n \times n}\) that is accessible only implicitly via matrix-vector products or, more precisely, (approximate) quadratic forms. If X is a random vector of length n such that \({\mathbb {E}}[X] = 0\) and \({\mathbb {E}}[XX^T] = I\), then \({\mathbb {E}}[X^T B X] = \,\mathrm {tr}(B)\). Based on this result, a stochastic trace estimator [27] is obtained from sampling an average of N quadratic forms:

where \(X^{(i)}\), \(i = 1,\ldots , N\), are independent copies of X. The most common choices for X are standard Gaussian and Rademacher random vectors. The latter are defined by having i.i.d. entries that take values \(\pm 1\) with equal probability. We will consider both choices in this paper and denote the resulting trace estimates by \(\,\mathrm {tr}^{\mathsf {G}}_N(B)\) and \(\,\mathrm {tr}^{\mathsf {R}}_N(B)\), respectively.

Hutchinson [27] used \(\,\mathrm {tr}^{\mathsf {R}}_N(B)\) to approximate the trace of the influence matrix of Laplacian smoothing splines. In this setting, \(B = A^{-1}\) for a symmetric positive definite (SPD) matrix A and, in turn, A is SPD as well. Other applications, such as spectral density estimation [31], triangle counting in graphs [3, 17], and determinant computation [5], may feature a symmetric but indefinite matrix B. For approximating the determinant, one exploits the relation

where \(\log (A)\) denotes the matrix logarithm of A. The need for estimating determinants arises, for instance, in statistical learning [2, 18, 20], lattice quantum chromodynamics [39], and Markov random fields models [43]. Certain quantities associated with graphs can be formulated as determinants, such as the number of spanning trees, and various negative approximation results exist in this context; see, e.g., [16, 35]. Relying on the Cholesky factorization, the exact computation of the determinant is often infeasible for a large matrix A. In contrast, the Hutchinson estimator combined with (2) bypasses the need for factorizing A and instead requires to (approximately) evaluate the quadratic form \(x^T \log (A) x\) for several vectors \(x \in {\mathbb {R}}^n\). Compared to the task of estimating the trace of \(A^{-1}\), the determinant computation via (2) is complicated by two issues: (a) Even when A is SPD, the matrix \(B = \log (A)\) may be indefinite; and (b) the quadratic forms \(x^T \log (A) x\) themselves are expensive to compute exactly, so they need to be approximated. We mention in passing that there are other methods to approximate traces and determinants, including randomized subspace iteration [37] and block Krylov methods [30], but they only work well in specific cases, e.g., when \(A = \sigma I + C\) for a matrix C of low numerical rank. The Hutch++ trace estimator, recently proposed and analyzed for the SPD case in [32], overcomes this limitation via a combination with stochastic trace estimation. Although it is not difficult to imagine that the results presented in this work are useful in extending the analysis from [32] to the indefinite case, a thorough discussion of this extension is beyond the scope of this work. Another direction of work on large-scale determinant estimation has explored the use of spectral sparsifiers for symmetric diagonally dominant matrices [16, 26].

Trace Estimation of Indefinite Matrices. By the central limit theorem, estimate (1) can be expected to become more reliable as N increases; see, e.g., [13, Corollaries 3.3 and 4.3] for such an asymptotic result as \(N \rightarrow \infty \). Most existing non-asymptotic results for trace estimation are specific to an SPD matrix B; see [4, 22, 36] for examples. They provide a bound on the estimated number N of probe vectors to ensure a small relative error with high probability:

see Remark 2 for a specific example. As already mentioned, the assumption that B is SPD is usually not met when computing the determinant of an SPD matrix A via \(\,\mathrm {tr}(\log (A))\) because this would require all eigenvalues of A to be larger than one. For general indefinite B, it is unrealistic to aim at a bound of form (3) for the relative error, because \(\,\mathrm {tr}(B) = 0\) does not imply zero error. Ubaru, Chen, and Saad [40] derive a bound for the absolute error via rescaling, that is, the results from [36] are applied to the matrix \(C := -\log (\lambda A)\) for a value of \(\lambda > 0\) that ensures C to be SPD. Specifically, for Rademacher vectors it is shown in [40, Corollary 4.5] that

is satisfied with fixed failure probability \(\delta \) if the number of samples N grows proportionally to \(\varepsilon ^{-2} n^2 \log (1 + \kappa (A))^2 \log \frac{2}{\delta }\) where \(\kappa (A)\) denotes the condition number of A. Unfortunately, this estimated number of samples compares unfavorably with a much simpler approach; computing the trace from the diagonal elements of \(\log (A)\) only requires the evaluation of n quadratic forms, using all n unit vectors of length n. A more general result for indefinite matrices is shown in [3] and it also features a worst-case dependence on \(n^2\); we refer to Remarks 1 and 5 for a comparison with our new results.

Approximation of Quadratic Forms. To approximate the quadratic forms \({x^T B x} = x^T \log (A) x\), a polynomial approximation of the logarithm can be used, see [24, 34] for approximation by Chebyshev expansion/interpolation and [6, 10, 45] for approximation by Taylor series expansion. Often, a better approximation can be obtained by the Lanczos method, which is equivalent to applying Gaussian quadrature to the integral \(\int \log ({\lambda }) \mathrm{d}\mu ({\lambda })\) on the spectral interval of A, for a suitably defined measure \(\mu \); see [21]. In this case, upper and lower bounds for the quantity \(x^T \log (A) x\) can be determined without much additional effort [5]. Moreover, the convergence of Gaussian quadrature for the quadratic form can be related to the best polynomial approximation of the logarithm on the spectral interval of A; see [40, Theorem 4.2]. By combining the polynomial approximation error with (4), one obtains a total error bound that takes into account both sources of errors. Such a result is presented in [40, Corollary 4.5] for Rademacher vectors; the fact that all such vectors have bounded norm is essential in the analysis.

Contributions. In this paper, we improve the results from [3, 40] by first showing that the number of samples required to achieve (4) is much lower. In particular, we show for a general symmetric matrix B that

is satisfied with fixed failure probability \(\delta \) if the number of samples N grows proportionally with the stable rank \(\rho (B) := \Vert B \Vert _F^2 / \Vert B \Vert _2^2\); as \(\rho (B) \in [1, n]\), the growth is at most linear in n (instead of quadratic). We derive such a result for both, Gaussian and Rademacher vectors, and demonstrate that the dependence on n is asymptotically tight with an explicit example. For SPD matrices B, our bound also improves the state-of-the-art result [36, Theorem 1] for Rademacher vectors by establishing that the number of probe vectors is inversely proportional to the stable rank of \(B^{1/2}\).

Specialized to determinant computation, we combine our results with an improved analysis of the Lanczos method, to get a sharper total error bound for Rademacher vectors. Finally, we extend this combined error bound to Gaussian vectors, which requires some additional consideration because of the unboundedness of such vectors. We remark that some of our results are potentially of wider interest, beyond stochastic trace and determinant estimation, such as a tail bound for Rademacher chaos (Theorem 2) and an error bound (Corollary 3 combined with Corollary 5) on the polynomial approximation of the logarithm.

We note in passing that some results of this paper also apply to a non-symmetric matrix B, because of the relations \(\,\mathrm {tr}(B) = \,\mathrm {tr}(B_s)\) and \(x^T B x = x^T B_{s} x\) with the symmetric part \(B_s = (B+B^T)/2\).

2 Tail Bounds for Trace Estimates

In this section we derive tail bounds of the form (5) for the stochastic trace estimator applied to a symmetric, possibly indefinite matrix \(B \in {\mathbb {R}}^{n\times n}\). We will analyze Gaussian and Rademacher vectors separately. In the following, we will frequently use a spectral decomposition \(B = Q\Lambda Q^T\), where \(\Lambda = \,\mathrm {diag}(\lambda _1, \ldots , \lambda _n)\) contains the eigenvalues of B and Q is an orthogonal matrix.

2.1 Standard Gaussian Random Vectors

The case of Gaussian vectors will be addressed by using a tail bound for sub-Gamma random variables, which follows from Chernoff bounds; see, e. g., [9].

Definition 1

A random variable X is called sub-Gamma with variance parameter \(\nu > 0\) and scale parameter \(c > 0\) if

Lemma 1

[9, Section 2.4] Let X be a sub-Gamma random variable with parameters \((\nu , c)\). Then, for all \(\varepsilon \ge 0\), we have

Lemma 2

[42, Proposition 2.10] Let X be a random variable such that \({\mathbb {E}}[X] = 0\), and such that both X and \(-X\) are sub-Gamma with parameters \((\nu , c)\). Then, for all \(\varepsilon \ge 0\), we have

Lemma 2 implies the following result for the tail of a single-sample trace estimate. This result is similar, but not identical, to [9, Example 2.12] and [29, Lemma 1], which apply to symmetric matrices with zero diagonal and SPD matrices, respectively.

Lemma 3

For a Gaussian vector X of length n we have

for all \(\varepsilon >0\).

Proof

We let

where \(Z_i \sim \mathcal {N}(0,1)\) is the ith component of the Gaussian vector \(Q^T X\). To show that Y is sub-Gamma, we define for \(\lambda \in {\mathbb {R}}\) the function

By direct computation, it follows that \(\psi (\lambda ) = -\lambda - \frac{1}{2} \log (1-2\lambda )\) for \(\lambda <\frac{1}{2}\). In particular, this implies \(\psi (\lambda ) \le \frac{\lambda ^2}{1-2\lambda }\) for \(0 \le \lambda < \frac{1}{2}\), and \(\psi (\lambda ) \le \lambda ^2 \le \frac{\lambda ^2}{1 + c\lambda }\) for \(-\frac{1}{c}< \lambda < 0\) for all \(c > 0\). Using the independence of \(Z_i\) for different i we obtain

for \(0< \lambda < \frac{1}{2\Vert B \Vert _2}\). This shows that Y is sub-Gamma with parameters \((\nu ,c) = (2\Vert B \Vert _F^2, 2\Vert B \Vert _2)\). Moreover, \(-Y = X^T (-B) X - \,\mathrm {tr}(-B)\) is also sub-Gamma with the same parameters. Because \({\mathbb {E}}[Y]=0\), Lemma 2 implies the desired result.\(\square \)

A diagonal embedding trick turns Lemma 3 into a tail bound for the stochastic trace estimator (1).

Theorem 1

Let \(B\in {\mathbb {R}}^{n\times n}\) be symmetric. Then

for all \(\varepsilon > 0\). In particular, for \(N \ge \frac{4}{\varepsilon ^2}(\Vert B \Vert _F^2 + \varepsilon \Vert B \Vert _2) \log \frac{2}{\delta }\) it holds that \({\mathbb {P}}(| \,\mathrm {tr}^{\mathsf {G}}_N(B) - \,\mathrm {tr}(B) | \ge \varepsilon ) \le \delta \).

Proof

We apply Lemma 3 to the matrix

that is, the block diagonal matrix with the N diagonal blocks containing rescaled copies of B. In turn, the trace estimate (1) equals \(X^T \mathcal {B} X\) for a Gaussian vector X of length Nn. Noting that \(\Vert \mathcal {B} \Vert _F = N^{-1/2} \Vert B \Vert _F\) and \(\Vert \mathcal {B} \Vert _2 = N^{-1} \Vert B \Vert _2\), the first part of the corollary follows from Lemma 3. Setting

we obtain \( N = \frac{4}{\varepsilon ^2} \left( \Vert B \Vert _F^2 + \varepsilon \Vert B \Vert _2 \right) \log \frac{2}{\delta }. \) \(\square \)

Remark 1

The result of Theorem 1 compares favorably with Lemma 4 in [3], which shows that \({\mathbb {P}}(| \,\mathrm {tr}^{\mathsf {G}}_N(B) - \,\mathrm {tr}(B) | \ge \varepsilon ) \le \delta \) for \(N \ge \frac{20}{\varepsilon ^2} \Vert B \Vert _*^2 \log \frac{4}{\delta }\). Because of \(\Vert B \Vert _F \le \Vert B \Vert _* \le \sqrt{n} \Vert B \Vert _F\), the bound of Theorem 1 is always better for reasonable values of \(\varepsilon \), and it can improve the estimated number of samples N in [3] by a factor proportional to n.

We recall that the stable rank of B is defined as \(\rho = \Vert B \Vert _F^2 / \Vert B \Vert _2^2\) and satisfies \(\rho \in [1,n]\). In particular, \(\rho (B) = 1\) when B has rank one and \(\rho (B) = n\) when all singular values are equal. Intuitively, \(\rho (B)\) tends to be large when B has many singular values not significantly smaller than the largest one. The minimum number of probe vectors required by Theorem 1 depends on the stable rank of B in the following way:

The upper bound indicates that N may need to be chosen proportionally with n to reach a fixed (absolute) accuracy \(\varepsilon \) with constant success probability, provided that \(\Vert B \Vert _2\) remains constant as well. The following lemma shows for a simple matrix B that such a linear growth of N can actually not be avoided.

Lemma 4

Let n be even and consider the traceless matrix \(B = \left[ \begin{array}{cc} I_{\frac{n}{2}} &{} 0 \\ 0 &{} -I_{\frac{n}{2}} \end{array}\right] \). Then, for every \(\varepsilon > 0\), it holds that

Proof

By the definition of B, the trace estimate takes the form

for independent \(X_i, Y_j \sim N(0,1)\). In other words,

where X, Y are independent Chi-squared random variables with \(\frac{nN}{2}\) degrees of freedom. The probability density function f of \(Z = X-Y\) can be expressed as

where \(K_{\frac{nN}{4}-\frac{1}{2}}\) is a modified Bessel function of the second kind [15]. In particular,

where we used the duplication formula for Gamma functions and the inequality \(\frac{1}{2^{2k}}\left( {\begin{array}{c}2k\\ k\end{array}}\right) \le \frac{1}{\sqrt{\pi k}}\); see [41].

As f is an autocorrelation function (of the density function of a Chi-squared variable with nN/2 degrees of freedom), its maximum is at 0. We can therefore estimate the probability of \(X-Y\) being in the interval \([-N\varepsilon , N\varepsilon ]\) in the following way:

\(\square \)

We can reformulate Theorem 1 in such a way that, given a number N of probe vectors and a failure probability \(\delta \in (0,1)\), we have \(\varepsilon = \varepsilon (B, N, \delta )\) such that with probability at least \(1-\delta \) one has \(\,\mathrm {tr}^{\mathsf {G}}_N(B) \in [\,\mathrm {tr}(B) - \varepsilon , \,\mathrm {tr}(B) + \varepsilon ]\). The random variable \(X^T \mathcal {B} X - \,\mathrm {tr}({\mathcal {B}})\), where \(\mathcal {B}\) is defined as in (6) and X is a Gaussian vector of length nN, is sub-Gamma with parameters \(\left( 2\frac{\Vert B\Vert _F^2}{N}, 2\frac{\Vert B\Vert _2}{N} \right) \), and the same holds for \(-X^T \mathcal {B} X\). By Lemma 1 we have

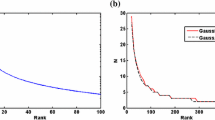

As the example in Lemma 4 shows, the potential growth of \(\varepsilon \) with \(\sqrt{n}\) cannot be avoided in general. Figure 1 illustrates this growth. In the case of relative error estimates for symmetric positive semidefinite (SPSD) matrices, it is shown in [44] that the dependence on \(\log \frac{2}{\delta }\) and \(\frac{1}{\varepsilon ^2}\) cannot be improved.

Remark 2

For a nonzero SPSD matrix B, the result of Theorem 1 can be turned into a relative error estimate. Let \(\mu := \Vert B \Vert _2 / \,\mathrm {tr}(B) = \rho (B^{1/2})^{-1}\). Replacing \(\varepsilon \) by \(\varepsilon \cdot \,\mathrm {tr}(B)\) in Theorem 1 and noting that \(\Vert B \Vert _F^2 / \,\mathrm {tr}(B)^2 \le \mu \), one obtains

State-of-the-art results of a similar form are Theorem 3 in [36], which requires \(N \ge \frac{8}{\varepsilon ^2} \mu \log \frac{2}{\delta }\), and Corollary 3.3 in [22], which requires \(N \ge \frac{2}{\varepsilon ^2} {\mu } \log \frac{2}{\delta }\) and \(\varepsilon \in \left( 0, \frac{1}{2}\right) \). Compared to [22], our result imposes no restriction on \(\varepsilon \) at the expense of a somewhat larger constant. On the other hand, as \(\varepsilon \le 1\), our result is always more favorable than the result from [36] for SPSD matrices.

2.2 Rademacher Random Vectors

The quadratic form \(X^T B X\) for a Rademacher vector X is called Rademacher chaos of order 2. We will first consider the homogeneous case, corresponding to a matrix B with zero diagonal, which has been studied extensively in the literature, see, e.g., [9, 19, 25, 28, 38]. The non-homogeneous case is easily obtained from the homogeneous case; see Corollary 1. We make use of the the entropy method [9] to establish the following tail bound for a single-sample trace estimate.

Theorem 2

Let X be a Rademacher vector of length n and let B be a nonzero symmetric matrix such that \(B_{ii} = 0\) for \(i=1, \ldots , n\). Then, for all \(\varepsilon > 0\),

Proof

The proof follows closely [1, Theorem 6] and [9, Theorem 17]; see Remark 3 for a comparison with these results. The main idea of the proof is as follows. Using the logarithmic Sobolev inequalities discussed in the appendix, a bound on the entropy of the random variable \(X^T B X\) is obtained. Using a (modified) Herbst argument, we derive a bound on the moment generating function (MGF) of \(X^T B X\), establishing that it is sub-Gamma with certain constants, which then allows us to apply Lemma 2.

Without loss of generality, we may assume \(\Vert B \Vert _2 = 1\); the general case follows from applying the result to \({\tilde{B}} := B / \Vert B\Vert _2\). Let us consider the function \(f:\{-1,1\}^n \rightarrow {\mathbb {R}}\) defined as

We want to apply the logarithmic Sobolev inequality (20) from Theorem 6 to f(X). For this purpose, we let

where \(e_i\) denotes the ith unit vector. Using that B has zero diagonal entries, we obtain

Therefore, denoting

We denote by \({\mathbb {H}}(Z)\) the entropyFootnote 1 of a random variable Z. Theorem 6 establishes, for all \(\lambda > 0\),

The decoupling inequality in [19, Lemma 8.50], which follows from Jensen’s inequality, gives

Combined with (9), this implies

To find an upper bound on the MGF of Y, we use again a logarithmic Sobolev inequality, then transform the obtained bound on the entropy into a bound on the MGF by Herbst argument. We do so by applying inequality (19) from Theorem 6 to the function \(h: {\mathbb {R}}^n \rightarrow {\mathbb {R}}\) defined by \(h(x) := \Vert B x \Vert _2^2\). For this purpose, note that

and, hence,

Therefore, Theorem 6 gives

Letting \(g(\lambda ) := 4{\mathbb {E}}[Y \exp (\lambda Y)] /{\mathbb {E}}[ \exp (\lambda Y)]\), we have obtained a bound of form (18), as required by Lemma 7. Note that \(g(\lambda ) = 4 \psi '(\lambda )\), where \(\psi (\lambda ) := \log {\mathbb {E}}[\exp (\lambda Y)]\). The result of Lemma 7 gives

Inserting this inequality into (10) gives

The random variable f(X) satisfies (18) for the function \(g(\lambda ) := \frac{2\Vert B\Vert _F^2}{(1-4\lambda )(1-2\lambda )}\) in the interval [0, 1/4). Recalling that \({\mathbb {E}}[f(X)]=0\), the result of Lemma 7 gives

where we used \(\log (1+x) \le x\) in the last inequality.

Replacing f by \(-f\) and B by \(-B\), we also obtain

Therefore, the random variables f(X) and \(-f(X)\) are sub-Gamma with parameters \(( 4\Vert B\Vert _F^2, 4)\). Applying Lemma 2 concludes the proof.\(\square \)

Remark 3

The proof of Theorem 2 follows the proof of [1, Theorem 6], which in turn refines a result from [8, Theorem 17] (see also [9]) by substituting the more general logarithmic Sobolev inequality from [8, Proposition 10] with the ones from Theorem 6 specific for Rademacher random variables. However, let us stress that the results in [1, 8] feature larger constants partly because they deal with the more general Rademacher chaos

where \(\mathcal {B}\) is a set of symmetric matrices with zero diagonal. Restricted to the case \(\mathcal {B} = \{B\}\), the results stated in [1, Theorem 6] and [9, Exercise 6.9] give \({\mathbb {P}} \left( | X^T B X | \ge \varepsilon \right) \le 2\exp \left( -\frac{\varepsilon ^2}{16\Vert B\Vert _F^2 + 16 \Vert B\Vert _2 \varepsilon } \right) \) and \({\mathbb {P}} \left( | X^T B X | \ge \varepsilon \right) \le 2\exp \left( -\frac{\varepsilon ^2}{32\Vert B\Vert _F^2 + 128 \Vert B\Vert _2 \varepsilon } \right) \), respectively. Proposition 8.13 in [19] states \({\mathbb {P}} \left( | X^T B X | \ge \varepsilon \right) \le 2\exp \left( -\min \left\{ \frac{3\varepsilon ^2}{128 \Vert {B} \Vert _F^2}, \frac{\varepsilon }{32 \Vert {B} \Vert _2} \right\} \right) \).

As for Gaussian vectors, the result of Theorem 2 can be turned into a tail bound for \(\,\mathrm {tr}^{\mathsf {R}}_N(B)\) by block diagonal embedding. In the following, let \(\,\mathrm {D}_B\) denote the diagonal matrix containing the diagonal entries of B.

Corollary 1

Let B be a nonzero symmetric matrix. Then

for every \(\varepsilon > 0\). In particular, for

it holds that \({\mathbb {P}}\big (| \,\mathrm {tr}^{\mathsf {R}}_N(B) - \,\mathrm {tr}(B) | \ge \varepsilon \big ) \le \delta \).

Proof

Let \(C := B - \,\mathrm {D}_B\) and \(\mathcal {C} := \,\mathrm {diag}\big ( N^{-1} C,\ldots , N^{-1} C\big ) \in {\mathbb {R}}^{Nn\times Nn}\). Then, \(\,\mathrm {tr}^{\mathsf {R}}_N(B) - \,\mathrm {tr}(B) = X^T \mathcal {C} X\) for a Rademacher vector X of length Nn.

The matrix \(\mathcal {C}\) has zero diagonal, \(\Vert \mathcal {C} \Vert _F = N^{-1/2} {\Vert C \Vert _F}\), and \(\Vert \mathcal {C} \Vert _2 = N^{-1} \Vert C\Vert _2\). Now, the first part of the corollary directly follows from Theorem 2. Imposing a failure probability of \(\delta \) in (8) gives

and hence \( N = \frac{8}{\varepsilon ^2} \left( \Vert C \Vert _F^2 + \varepsilon \Vert C \Vert _2 \right) \log \frac{2}{\delta } \). \(\square \)

Remark 4

It is instructive to compare the result of Corollary 1 to the straightforward application of Bernstein’s inequality, which gives

Clearly, a disadvantage of this bound is the explicit dependence of the denominator on n, which does not appear in Corollary 1.

An alternative expression for the lower bound on N is obtained by noting that \(\Vert B - \,\mathrm {D}_B\Vert _F \le \Vert B\Vert _F\) and \(\Vert B - \,\mathrm {D}_B\Vert _2 \le 2 \Vert B \Vert _2\) (the factor 2 in the latter inequality is asymptotically tight, see, e.g., [7]). The result of Corollary 1 thus states that N needs to be at least in the following way:

where \(\rho \) is the stable rank of B.

Remark 5

In analogy to the Gaussian case (see Remark 1), the result of Corollary 1 compares favorably with Lemma 5 in [3], which shows that \({\mathbb {P}}(| \,\mathrm {tr}^{\mathsf {R}}_N(B) - \,\mathrm {tr}(B) | \ge \varepsilon ) \le \delta \) for \(N \ge \frac{6}{\varepsilon ^2} \Vert B \Vert _*^2 \log \frac{2\cdot \mathrm {rank}(B)}{\delta }\).

In analogy to the Gaussian case, the following lemma shows that a potential linear dependence of N on n cannot be avoided in general.

Lemma 5

Let n be even and consider the traceless matrix  . Then

. Then

for every \(\varepsilon > 0\).

Proof

We first note that \(\,\mathrm {tr}^{\mathsf {R}}_N(B) = \frac{2}{N} \sum _{i=1}^{nN/2} Z_i\) with independent Rademacher random variables \(Z_i\). In turn, \( {\mathbb {P}} \left( |\,\mathrm {tr}^{\mathsf {R}}_N(B)| \le \varepsilon \right) = {\mathbb {P}} \left( \left|\sum _{i=1}^{nN/2} Z_i \right|\le \frac{N\varepsilon }{2} \right) \) equals the probability that the number of variables satisfying \(Z_i = 1\) is at least \(\frac{n-\varepsilon }{4}N\) and at most \(\frac{n+\varepsilon }{4}N\). Therefore,

where we used the inequality \(\frac{1}{2^{2k}}\left( {\begin{array}{c}2k\\ k\end{array}}\right) \le \frac{1}{\sqrt{\pi k}}\). \(\square \)

We do not report a figure analogous to Fig. 1 because the observed errors are very similar to the Gaussian case.

For SPSD matrices, a relative error estimate follows from Corollary 1 similarly to what has been discussed in Remark 2 for Gaussian vectors. We recall that \(\rho = \Vert B \Vert _F^2 / \Vert B \Vert _2^2\) denotes the stable rank of B.

Corollary 2

For a nonzero SPSD matrix B, we have

Proof

First of all, it is immediate that \(\Vert B - \,\mathrm {D}_B \Vert _F \le \Vert B \Vert _F\). As shown, e.g., in [7, Theorem 4.1], the same holds for the spectral norm when B is SPSD. For convenience, we provide a short proof: For every \(y\in {\mathbb {R}}^n\) it holds that

where the first inequality uses that both \(y^T B y\) and \(y^T \,\mathrm {D}_B y\) are nonnegative. By taking the maximum with respect to all vectors of norm 1 one obtains \(\Vert B - \,\mathrm {D}_B \Vert _2\) on the left-hand side, which shows that it is bounded by \(\Vert B \Vert _2\). Now, the claimed result follows from Corollary 1 using the arguments of Remark 2.\(\square \)

Corollary 2 improves the result from [36, Theorem 1], which requires \(N \ge \frac{6}{\varepsilon ^2} \log \frac{2}{\delta }\); a lower bound that does not improve as \(\mu \) decreases.

3 Lanczos Method to Approximate Quadratic Forms

Let us now consider the problem of estimating the log determinant through \(\log (\det (A)) = \,\mathrm {tr}(\log (A))\), or more generally the problem of computing the trace of f(A) for an analytic function f.

Applying a trace estimator to \(\,\mathrm {tr}(f(A))\) requires the (approximate) computation of the quadratic forms \(x^T f(A) x\) for fixed vectors \(x \in {\mathbb {R}}^n\). Following [40], we use the Lanczos method, Algorithm 1, for this purpose.

For theoretical considerations, it is helpful to view the quadratic form as an integral. For this purpose, we consider the spectral decomposition \(A = Q\Lambda Q^T\), \(\Lambda = \,\mathrm {diag}(\lambda _1, \ldots , \lambda _n)\), with \(\lambda _{\min } = \lambda _1 \le \lambda _2 \le \cdots \le \lambda _n = \lambda _{\max }\). Then

with the piecewise constant measure

where \(\chi \) denotes the indicator function. It is well-known [21, Theorem 6.2] that the approximation \(I_m\) returned by the m-points Gaussian quadrature rule applied to I is identical to the approximation returned by m steps of the Lanczos method:

To bound the error \(|I - I_m|\), the analysis in [40] proceeds by using existing results on the polynomial approximation error of analytic functions. Although our analysis is along the same lines, it differs in a key technical aspect; we derive and use an improved error bound for the approximation of the logarithm; see Corollary 4. We have also noted two minor erratas in [40]; see the proof of Theorem 3 and the remark after Corollary 3 for details.

Theorem 3

Let \(f:[-1,1] \rightarrow {\mathbb {R}}\) admit an analytic continuation to a Bernstein ellipse \(E_{\rho _0}\) with foci \(\pm 1\) and elliptical radius \(\rho _0\). For \(1< \rho < \rho _0\), let \(M_{\rho }\) be the maximum of |f(z)| on \(E_{\rho }\). Then

Proof

As in [40], this result follows directly from bounds on the polynomial approximation error of analytic functions via Chebyshev expansion, combined with the fact that m-points Gaussian quadrature is exact for polynomials up to degree \(2m-1\). However, the proof of [40, Theorem 4.2] uses an extra ingredient, which seems to be wrong. It claims that the integration error for odd-degree Chebyshev polynomials is zero thanks to symmetry. While this fact is indeed true for the standard Lebesgue measure, it does not hold for the measure (12). In turn, one obtains the slightly worse factor \(1-\rho ^{-1}\) in the denominator, compared to the factor \(1-\rho ^{-2}\) that would have been obtained from [40, Theorem 4.2] translated into our setting. \(\square \)

The affine linear transformation

is used to map an interval \([\lambda _{\min }, \lambda _{\max }]\) containing the eigenvalues of A to the interval \([-1,1]\) of Theorem 3. Defining \(g:= f \circ \varphi ^{-1}\), one has

By its shift and scaling invariance, the Lanczos method with g, \(\varphi (A)\), and x returns the approximation \(e_1^T g(\varphi (T_m))e_1\). This allows us to apply Theorem 3. Combined with relations (13), the following result is obtained.

Corollary 3

With the notation introduced above, it holds that

Note that \(M_\rho \) is the maximum of g on \(E_\rho \), which is equal to the maximum of f on the transformed ellipse with foci \(\lambda _{\min }, \lambda _{\max }\), and elliptical radius \((\lambda _{\max }-\lambda _{\min }) \rho /2\). The result of Corollary 3 differs from the corresponding result in [40, page 1087], which features an additional, erroneous factor \((\lambda _{\max }(A) - \lambda _{\min }(A))/2\).

For the special case of the logarithm, the following result is obtained.

Corollary 4

Let \(A \in {\mathbb {R}}^{n \times n}\) be SPD with condition number \(\kappa (A)\), \(f\equiv \log \) and \(x \in {\mathbb {R}}^n \backslash \{0\}\). Then the error of the Lanczos method after m steps satisfies

where \(c_A := 2 (\sqrt{\kappa (A)+1}+1) \log (2\kappa (A))\).

Proof

The proof consists of applying Corollary 3 to a rescaled matrix. More specifically, we choose \(B := \lambda A\) with \(\lambda := 1/ ( 2\lambda _{\min } ) > 0\). The tridiagonal matrix returned by the Lanczos method with A replaced by B satisfies \(T^B_m = \lambda T_m\). Together with the identity \(\log (\lambda A) = \log \lambda I + \log (A)\), this implies

Note that the smallest/largest eigenvalues of B are given by 1/2 and \(\kappa (A)/2\), respectively. Applying Corollary 3 to B withFootnote 2\(\rho := \frac{\sqrt{\kappa (A)+1}+1}{\sqrt{\kappa (A) +1}-1}\) thus gives

The constant \(M_{\rho }\) is the maximum absolute value of the logarithm on the ellipse with foci 1/2 and \(\kappa (A)/2\) that intersects the real axis at \(\alpha := \frac{1}{2\kappa (A)}\) and \(\beta := \frac{\kappa (A)^2 + \kappa (A) - 1}{2\kappa (A)}\). By Corollary 5, \(M_\rho = |\log (\alpha )| = \log (2 \kappa (A))\), where we used \(\alpha \le 1/\beta \le 1\). Noting that

concludes the proof. \(\square \)

4 Combined Bounds for Determinant Estimation

Combining randomized trace estimation with the Lanczos method, we obtain the following (stochastic) estimate for \(\log (\det (A))\):

where \(X^{(1)}, \ldots , X^{(N)}\) are independent Gaussian or Rademacher random vectors and \(T_m^{(i)}\) is the tridiagonal matrix obtained from the Lanczos method with starting vector \(X^{(i)} / \Vert X^{(i)} \Vert _2\). By combining the results obtained so far, we now derive new bounds on the number of samples and number of Lanczos steps needed to ensure an approximation error of at most \(\varepsilon \) (with high probability).

4.1 Standard Gaussian Random Vectors

Theorem 4

Suppose that the following holds for N (number of Gaussian probe vectors) and m (number of Lanczos steps per probe vector):

-

(i)

\(N \ge 16 \varepsilon ^{-2} (\rho _{\log } \Vert \log (A) \Vert _2^2 + \varepsilon \Vert \log (A) \Vert _2) \log \frac{4}{\delta }\), where \(\rho _{\log }\) denotes the stable rank of \(\log (A)\);

-

(ii)

\(m \ge \frac{\sqrt{\kappa (A)+1}}{4} \log \big ( {4\varepsilon ^{-1} n^2(\sqrt{\kappa (A)+1}+1) \log (2\kappa (A))} \big )\).

If, additionally, \(n\ge 2\) and \(N \le \frac{\delta }{2} \exp \big ( \frac{n^2}{16} \big )\) then \( {\mathbb {P}}(|\,\mathrm {est}^{\mathsf {G}}_{N,m} - \log \det (A)| \ge \varepsilon ) \le \delta . \)

Proof

For a Gaussian vector X, the squared norm \(\Vert X\Vert _2^2\) is a Chi-squared random variable with n degrees of freedom. Therefore, by [29, Lemma 1] we have

for every \(t > 0\). For \(t = \log \frac{2N}{\delta }\), the additional assumptions of the theorem imply

and therefore \({\mathbb {P}}(\Vert X\Vert _2^2 \ge n^2) \le \frac{\delta }{2N}\). By the union bound, it holds that

Corollary 4, together with condition (ii) and (14) imply that \( | \,\mathrm {est}^{\mathsf {G}}_{N,m} - \,\mathrm {tr}^{\mathsf {G}}_N(\log (A)) | \le \frac{\varepsilon }{2} \) holds with probability at least \(1 - \delta / 2\), where we also used that \( \log \left( \frac{ \sqrt{\kappa (A)+1}+ 1}{\sqrt{\kappa (A)+1} -1}\right) \ge \frac{2}{\sqrt{\kappa (A)+1}}\).

Applying Theorem 1 to the matrix \(\log (A)\), for which \(\Vert \log (A) \Vert _F^2 = \rho _{\log }\Vert \log (A)\Vert _2^2\), we find that \( |\,\mathrm {tr}^{\mathsf {G}}_N(\log (A)) - \log \det (A)| \le \frac{\varepsilon }{2} \) holds with probability at least \(1 - \delta / 2\). The proof is concluded by applying the triangle inequality. \(\square \)

4.2 Rademacher Random Vectors

Theorem 5

Suppose that the following holds for N (number of Rademacher probe vectors) and m (number of Lanczos steps per probe vector):

-

(i)

\(N \ge 32 \varepsilon ^{-2} \left( \rho _{\mathrm {logd}} \Vert \log (A) - \,\mathrm {D}_{\log (A)} \Vert _2^2 + \frac{\varepsilon }{2} \Vert \log (A) - \,\mathrm {D}_{\log (A)} \Vert _2 \right) \log \frac{2}{\delta }\), where \(\rho _{\mathrm {logd}}\) denotes the stable rank of \(\log (A) - \,\mathrm {D}_{\log (A)}\) and \(\,\mathrm {D}_{\log (A)}\) is the diagonal matrix containing the diagonal entries of \(\log (A)\);

-

(ii)

\(m \ge \frac{\sqrt{\kappa (A)+1}}{4} \log \big ( {4 \varepsilon ^{-1} n(\sqrt{\kappa (A) + 1} + 1)\log (2\kappa (A)) }\big )\).

Then \({\mathbb {P}}(|\,\mathrm {est}^{\mathsf {R}}_{N,m} - \log \det (A)| \ge \varepsilon ) \le \delta \).

Proof

Using Corollary 3 and the fact that Rademacher random vectors have norm \(\sqrt{n}\), the bound \( \big | \,\mathrm {est}^{\mathsf {R}}_{N,m} - \,\mathrm {tr}^{\mathsf {R}}_N(\log (A)) \big | \le \frac{\varepsilon }{2} \) holds if

Because of \(\log \Big ( \frac{ \sqrt{\kappa (A)+1}+ 1}{\sqrt{\kappa (A)+1} -1}\Big ) \ge \frac{2}{\sqrt{\kappa (A)+1}}\), condition (ii) ensures that this inequality is satisfied.

Applying Corollary 1 to \(\log (A)\) and with \(\varepsilon \) replaced by \(\varepsilon /2\), immediately shows

with probability at least \(1 - \delta \) if condition (i) is satisfied. The proof is concluded by applying the triangle inequality. \(\square \)

Comparison with existing result. To compare Theorem 5 with an existing result from [40], it is helpful to first derive a simpler (but usually stronger) condition on N.

Lemma 6

The statement of Theorem 5 holds with condition (i) replaced by \(N \ge 8 \varepsilon ^{-2} \big ( n \log ^2 \kappa (A) + 2\varepsilon \log \kappa (A) \big ) \log \frac{2}{\delta }.\)

Proof

We set \(B := \lambda A\) with \(\lambda := 1/\sqrt{\lambda _{\min }(A) \lambda _{\max }(A)}\) and note that

Using \(\lambda _{\max }(B) = \sqrt{\kappa (A)}\), \(\lambda _{\min }(B) = 1/\sqrt{\kappa (A)}\), and \(\kappa (B) = \kappa (A)\), we obtain

An application of Corollary 1 to \(\log (B)\) therefore yields (15) with probability at least \(1-\delta \) for \(N \ge 8 \varepsilon ^{-2} \big ( n \log ^2 \kappa (A) + 2 \varepsilon \log \kappa (A) \big ) \log \frac{2}{\delta }\).\(\square \)

Correcting for the two minor erratas explained above, the result from [40, Corollary 4.5] states that \({\mathbb {P}}(|\,\mathrm {est}^{\mathsf {R}}_{N,m} - \,\mathrm {tr}(\log (A))| \ge \varepsilon ) \le \delta \) holds if

and

Compared to (16), Lemma 6 reduces the explicit dependence on the matrix size from \(n^2\) to n, while the dependence of the bounds on \(\kappa (A)\) is comparable. Let us stress that even a dependence on n does not compare favorably to simply computing the diagonal elements, but the bound from condition (i) of Theorem 5 can often be expected to be significantly better than the simplified bound of Lemma 6. Below we describe a situation in which the former only depends logarithmically on n. Condition (ii) of Theorem 5 improves (17) clearly but less drastically, roughly by a factor \(\sqrt{3}\).

Implications of Low Stable Rank. Let us consider a family of matrices \(\{ A_n \}\) of increasing dimension, a fixed failure probability \(\delta \), and a fixed accuracy \(\varepsilon \); the number of probe vectors required to get \({\mathbb {P}} (|\,\mathrm {tr}^{\mathsf {G,R}}_N(\log (A_n)) - \,\mathrm {tr}(\log (A_n))| \ge \varepsilon ) \le \delta \) is proportional to \(O(\rho _{n} \Vert \log (A_n)\Vert _2^2)\), where \(\rho _n\) is the stable rank of \(\log (A_n)\). In certain applications, including regularized kernel matrices (see, e.g., [12, 20]), the stable rank grows slowly when the matrix size increases. For such situations, our bounds lead to favorable implications. To illustrate this, let us consider matrices \(A_n := I + B_n\), where the eigenvalues satisfy \(\lambda _i(B_n) \le nC\alpha ^i\) for some constants \(C>0\) and \(0< \alpha < 1\), for all \(i\le n\), such as in the discretization of a radial basis function kernel on a fixed domain [20]. In this case, \(\rho _n = O(\log n)\). As a second example, if \(B_n\) comes from a discretization of a Matérn kernel on a regular grid in a fixed domain, its eigenvalues satisfy \(\lambda _i(B_n) \le nCi^{-\beta }\) for some constants \(C>0\) and \(\beta >1\), for all \(i \le n\) [12]; the stable rank of \(\log (A_n) = \log (I + B_n)\) is bounded by \(\rho _{n} = O(n^{1/\beta })\).

To apply Theorems 4 and 5 one also needs to take into account that, for both our examples, \(\Vert \log (A_n)\Vert _2\) and \(\kappa (A_n)\) grow proportionally to \(\log (n)\) and n, respectively. Finally, note that in practice one would consider \(A_n = \sigma I + B_n\) with the regularization parameter \(\sigma \) chosen adaptively; see, e.g., [11].

5 Numerical Experiments

In this section, we report on a number of numerical experiments illustrating the bounds obtained in this work. All numerical experiments have been performed in Matlab, version 9.9 (R2020b).

Example 1

To compare the estimates from Theorem 1 and Corollary 1 with the convergence of randomized trace estimation using Gaussian and Rademacher vectors, we use an example from [3, 32]. The number of triangles in an undirected graph is equal to \(\frac{1}{6} \,\mathrm {tr}(A^3)\) where A is the (usually indefinite) adjacency matrix. Note that the quadratic forms \(X^T A^3 X\) can be evaluated exactly using two matrix-vector multiplications. The results for an arXiv collaboration network with \(n = 5\,242\) nodes and \(48\,260\) triangles.Footnote 3

We estimate \(\,\mathrm {tr}(A^3)\) using \(N = 2, 2^2, 2^3, \ldots , 2^{11}\) samples. For each value of N we performed 1000 experiments and discarded the 5% worst approximations in order to estimate an error bound that holds with probability \(95\%\). The obtained results are represented by the shaded regions in Fig. 2 and match the obtained bounds fairly well, especially for Gaussian vectors.

Figure 3 shows the empirical failure probability \({\mathbb {P}}(|\,\mathrm {tr}^{\mathsf {G,R}}_N(A^3) - \,\mathrm {tr}(A^3)| \ge \varepsilon )\) with \(\varepsilon = \frac{1}{10} \,\mathrm {tr}(A^3)\) using 1000 experiments for \(N = 2, 2^2, 2^3, \ldots , 2^{11}\) (blue and red lines). The vertical purple and yellow lines are the estimated number of samples needed to achieve failure probability \(\delta = 0.05\) from Theorem 1 and Corollary 1, respectively.

Example 2

To compare the results of Theorems 4 and 5 with the number of sample vectors N and Lanczos steps m (per sample) required to reach a fixed accuracy, we consider the matrices listed in Table 1. The matrix thermomec_TC is contained in the University of Florida sparse matrix collection [14] and has been considered, for instance, in [10, 18, 40]. The matrix lowrank is defined in [30, 37] as

where each \(x_j\) is a sparse vector of length 20,000 with approximately \(2.5\%\) uniformly distributed nonzero entries, generated with the Matlab command sprand. The matrix precip is a two-dimensional Gaussian kernel matrix with length parameter \(\gamma = 64\) and regularization parameter \(\lambda = 0.008\) taken from [32], involving precipitation data from Slovakia [33]. As the matrices thermomec_TC and lowrank are too large for \(\log (A)\) to be computed explicitly, the quantities \(\Vert \log (A)\Vert _F\) and \(\Vert \log (A) - \,\mathrm {D}_{\log (A)}\Vert _F\) are approximated by randomized trace estimation combined with the Lanczos method to estimate the diagonal elements of \(\log (A)\).

For quadratic forms involving the logarithm, there is a relatively inexpensive way to obtain an upper bound on the error of the Lanczos method. As discussed in [5], Gauss quadrature always yields an upper bound for \(x^T \log (A)x\), while Gauss-Lobatto quadrature always yields a lower bound. We fix \(\delta = 0.1\) and for several values of \(\varepsilon \) we investigate how many samples and Lanczos iterations are needed in practice. When approximating quadratic forms while aiming at accuracy \(\varepsilon \), we stop the Lanczos method when the difference between upper and lower bound is less than \(\varepsilon /2\). Starting from \(N = 1\), we compute the empirical failure probability \({\mathbb {P}}(|\mathrm {est}_{N,m} - \log \det (A)| \ge \varepsilon )\); if this probability is larger than \(\delta \), we double the number of samples N and repeat.

Results for matrix thermomec_TC from [14]

Results for matrix lowrank from [30]

Results for matrix precip from [32]

The results for the three matrices from Table 1 are reported in Figs. 4, 5, and 6. The left plots show, for the considered values of \(\varepsilon \) (which have been normalized by dividing them by the true \(|\log \det (A)|\)), the number of samples required to attain \(90\%\) success probability over 30 runs of the algorithm, versus the number of samples given by Theorems 4 and 5. The plots on the right show, for the same (normalized) values of \(\varepsilon \), the average number of Lanczos steps required to reach accuracy \(\varepsilon /2\) versus the number of Lanczos steps predicted by Theorems 4 and 5.

For thermomec_TC, the diagonal of \(\log (A)\) is large relative to the rest of the matrix: \(\Vert \log (A)- \,\mathrm {D}_{\log (A)}\Vert _F/\Vert \log (A)\Vert _F \approx 0.07\). Therefore, our bounds predict that Rademacher vectors perform much better than Gaussian vectors; this is indeed confirmed by Fig. 4. The matrix A is well-conditioned and, hence, the bounds correctly predict that the Lanczos method only needs relatively few iterations to attain good accuracy.

For lowrank, Fig. 5 shows that Rademacher and Gaussian vectors perform similarly. Although the condition number of A is \(\kappa (A) \approx 1560\), the eigenvalues have a strong decay, and hence its adaptivity lets the Lanczos method perform much better than predicted by our bounds, see, e.g., [23] for a discussion.

For precip, the ratio \(\Vert \log (A)- \,\mathrm {D}_{\log (A)}\Vert _F/\Vert \log (A)\Vert _F \approx 0.44\) is reflected in Fig. 6, showing that Rademacher vectors attain somewhat better accuracy. The condition number of A is high and there is no strong decay or gaps in the singular values; a relatively large number of Lanczos steps is necessary to obtain the desired accuracy when approximating the quadratic forms.

Notes

The entropy of Z is defined as \(\,{\mathbb {H}}(Z) := {\mathbb {E}}[Z \log Z] - {\mathbb {E}}[Z] \log {\mathbb {E}}[Z]\) provided that all expected values exist.

In fact, it is possible to choose \(\rho = \frac{\sqrt{\kappa (A)+\varepsilon }+1}{\sqrt{\kappa (A)+\varepsilon }-1}\) for arbitrary \(\varepsilon > 0\).

References

R. Adamczak. The entropy method and concentration of measure in product spaces. Master’s thesis, University of Warsaw and Vrije Universiteit van Amsterdam, 2003. Available at http://duch.mimuw.edu.pl/radamcz/Old/Papers/master.pdf.

R. H. Affandi, E. Fox, R. Adams, and B. Taskar. Learning the parameters of determinantal point process kernels. In International Conference on Machine Learning, pages 1224–1232, 2014.

H. Avron. Counting triangles in large graphs using randomized matrix trace estimation. In Workshop on Large-scale Data Mining: Theory and Applications, volume 10, pages 10–9, 2010.

H. Avron and S. Toledo. Randomized algorithms for estimating the trace of an implicit symmetric positive semi-definite matrix. J. ACM, 58(2):Art. 8, 17, 2011.

Z. Bai, M. Fahey, and G. Golub. Some large-scale matrix computation problems. J. Comput. Appl. Math., 74(1-2):71–89, 1996.

R. P. Barry and R. K. Pace. Monte Carlo estimates of the log determinant of large sparse matrices. Linear Algebra Appl., 289(1-3):41–54, 1999.

R. Bhatia, M. D. Choi, and C. Davis. Comparing a matrix to its off-diagonal part. In The Gohberg anniversary collection, Vol. I (Calgary, AB, 1988), volume 40 of Oper. Theory Adv. Appl., pages 151–164. Birkhäuser, Basel, 1989.

S. Boucheron, G. Lugosi, and P. Massart. Concentration inequalities using the entropy method. Ann. Probab., 31(3):1583–1614, 2003.

S. Boucheron, G. Lugosi, and P. Massart. Concentration inequalities. Oxford University Press, Oxford, 2013. A nonasymptotic theory of independence, With a foreword by Michel Ledoux.

C. Boutsidis, P. Drineas, P. Kambadur, E.-M. Kontopoulou, and A. Zouzias. A randomized algorithm for approximating the log determinant of a symmetric positive definite matrix. Linear Algebra Appl., 533:95–117, 2017.

A. Caponnetto and E. De Vito. Optimal rates for the regularized least-squares algorithm. Found. Comput. Math., 7(3):331–368, 2007.

J. Chen. On the use of discrete Laplace operator for preconditioning kernel matrices. SIAM J. Sci. Comput., 35(2):A577–A602, 2013.

J. Chen. How accurately should I compute implicit matrix-vector products when applying the Hutchinson trace estimator? SIAM J. Sci. Comput., 38(6):A3515–A3539, 2016.

T. A. Davis and Y. Hu. The University of Florida sparse matrix collection. ACM Trans. Math. Software, 38(1):Art. 1, 25, 2011.

Distribution of difference of chi-squared variables. https://math.stackexchange.com/questions/85249/distribution-of-difference-of-chi-squared-variables. Accessed: 06/03/2020.

D. Durfee, J. Peebles, R. Peng, and A. B. Rao. Determinant-preserving sparsification of SDDM matrices. SIAM J. Comput., 49(4):350–408, 2020.

T. Eden, A. Levi, D. Ron, and C. Seshadhri. Approximately counting triangles in sublinear time. SIAM J. Comput., 46(5):1603–1646, 2017.

J. Fitzsimons, D. Granziol, K. Cutajar, M. Osborne, M. Filippone, and S. Roberts. Entropic trace estimates for log determinants. In Joint European Conference on Machine Learning and Knowledge Discovery in Databases, pages 323–338. Springer, 2017.

S. Foucart and H. Rauhut. A mathematical introduction to compressive sensing. Applied and Numerical Harmonic Analysis. Birkhäuser/Springer, New York, 2013.

J. R. Gardner, G. Pleiss, D. Bindel, K. Q. Weinberger, and A. G. Wilson. GPyTorch: Blackbox matrix-matrix Gaussian process inference with GPU acceleration. In Advances in Neural Information Processing Systems, volume 2018-December, pages 7576–7586, 2018.

G. H. Golub and G. Meurant. Matrices, moments and quadrature with applications. Princeton Series in Applied Mathematics. Princeton University Press, Princeton, NJ, 2010.

S. Gratton and D. Titley-Peloquin. Improved bounds for small-sample estimation. SIAM J. Matrix Anal. Appl., 39(2):922–931, 2018.

S. Güttel, D. Kressner, and K. Lund. Limited-memory polynomial methods for large-scale matrix functions. GAMM-Mitt., 43(3):e202000019, 19, 2020.

I. Han, D. Malioutov, H. Avron, and J. Shin. Approximating spectral sums of large-scale matrices using stochastic Chebyshev approximations. SIAM J. Sci. Comput., 39(4):A1558–A1585, 2017.

D. L. Hanson and F. T. Wright. A bound on tail probabilities for quadratic forms in independent random variables. Ann. Math. Statist., 42:1079–1083, 1971.

T. Hunter, A. E. Alaoui, and A. M. Bayen. Computing the log-determinant of symmetric, diagonally dominant matrices in near-linear time. CoRR, abs/1408.1693, 2014.

M. F. Hutchinson. A stochastic estimator of the trace of the influence matrix for Laplacian smoothing splines. Comm. Statist. Simulation Comput., 18(3):1059–1076, 1989.

F. Krahmer and R. Ward. New and improved Johnson-Lindenstrauss embeddings via the restricted isometry property. SIAM J. Math. Anal., 43(3):1269–1281, 2011.

B. Laurent and P. Massart. Adaptive estimation of a quadratic functional by model selection. Ann. Statist., 28(5):1302–1338, 2000.

H. Li and Y. Zhu. Randomized block Krylov space methods for trace and log-determinant estimators. arXiv preprintarXiv:2003.00212, 2020.

L. Lin, Y. Saad, and C. Yang. Approximating spectral densities of large matrices. SIAM Rev., 58(1):34–65, 2016.

R. A. Meyer, C. Musco, C. Musco, and D. P. Woodruff. Hutch++: Optimal stochastic trace estimation. In Symposium on Simplicity in Algorithms (SOSA), pages 142–155. SIAM, 2021.

M. Neteler and H. Mitasova. Open source GIS: a GRASS GIS approach, volume 689. Springer Science & Business Media, 2013.

R. K. Pace and J. P. LeSage. Chebyshev approximation of log-determinants of spatial weight matrices. Comput. Statist. Data Anal., 45(2):179–196, 2004.

W. Peng and H. Wang. A general scheme for log-determinant computation of matrices via stochastic polynomial approximation. Comput. Math. Appl., 75(4):1259–1271, 2018.

F. Roosta-Khorasani and U. Ascher. Improved bounds on sample size for implicit matrix trace estimators. Found. Comput. Math., 15(5):1187–1212, 2015.

A. K. Saibaba, A. Alexanderian, and I. C. F. Ipsen. Randomized matrix-free trace and log-determinant estimators. Numer. Math., 137(2):353–395, 2017.

M. Talagrand. New concentration inequalities in product spaces. Invent. Math., 126(3):505–563, 1996.

C. Thron, S. J. Dong, K. F. Liu, and H. P. Ying. Padé-\(Z_2\) estimator of determinants. Physical Review D - Particles, Fields, Gravitation and Cosmology, 57(3):1642–1653, 1998.

S. Ubaru, J. Chen, and Y. Saad. Fast estimation of \(\text{ tr }(f(A))\) via stochastic Lanczos quadrature. SIAM J. Matrix Anal. Appl., 38(4):1075–1099, 2017.

Upper limit on the central binomial coefficient. https://mathoverflow.net/questions/133732/upper-limit-on-the-central-binomial-coefficient. Accessed: 23/03/2020.

M. J. Wainwright. High-dimensional statistics, volume 48 of Cambridge Series in Statistical and Probabilistic Mathematics. Cambridge University Press, Cambridge, 2019. A non-asymptotic viewpoint.

M. J. Wainwright and M. I. Jordan. Log-determinant relaxation for approximate inference in discrete Markov random fields. IEEE Trans. Signal Process., 54(6):2099–2109, 2006.

K. Wimmer, Y. Wu, and P. Zhang. Optimal query complexity for estimating the trace of a matrix. In International Colloquium on Automata, Languages, and Programming, pages 1051–1062. Springer, 2014.

Y. Zhang and W. E. Leithead. Approximate implementation of the logarithm of the matrix determinant in Gaussian process regression. J. Stat. Comput. Simul., 77(3-4):329–348, 2007.

Acknowledgements

We thank Radosław Adamczak, Rasmus Kyng, and Shashanka Ubaru for helpful discussions on topics related to this work. We also thank the referees for providing valuable feedback.

Funding

Open Access funding provided by EPFL Lausanne.

Author information

Authors and Affiliations

Corresponding author

Additional information

Communicated by Nicholas J. Higham.

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

The work of Alice Cortinovis has been supported by the SNSF Research Project Fast algorithms from low-rank updates, Grant Number: 200020_178806.

A Auxiliary Results

A Auxiliary Results

1.1 A.1 Herbst Argument and Logarithmic Sobolev Inequalities

This section contains auxiliary results used in the proof of Theorem 2. We recall that the entropy of a random variable Z is defined as

provided that all the involved expected values exist.

The Herbst argument (see, e.g., [9, page 11], [19, pages 239–240], and [42, Section 3.1.2]) turns a bound on the entropy of a random variable into a bound on the moment generating function. By Chernoff’s bound, the latter implies a bound on the tail of the random variable. Specifically, we use the following (modified) Herbst argument.

Lemma 7

Let Z be a random variable and \(g:[0, a) \rightarrow {\mathbb {R}}\) such that

Then for all \(\lambda \in [0, a)\) it holds

Proof

For \(\psi (\lambda ) := \log {\mathbb {E}}[\exp (\lambda Z)]\), it holds that \(\psi '(\lambda ) = {\mathbb {E}}[Z\exp (\lambda Z)] / {\mathbb {E}}[\exp (\lambda Z)]\). Recalling the definition of entropy, this allows us to rewrite (18) as

which is equivalent to

Integration on the interval \([0, \lambda ]\) gives

We conclude by noting that \(\lim _{\lambda \rightarrow 0^+} \frac{\psi }{\lambda } = {\mathbb {E}}[Z]\).\(\square \)

For deriving bounds on the entropy, we need the following two variations of Gross’ logarithmic Sobolev inequality.

Theorem 6

Let \(f:\{-1,1\}^n \rightarrow {\mathbb {R}}\) and let X be a Rademacher vector with components \(X_1, \ldots , X_n\). Define \(f(\bar{X}^{(i)}) := f(X_1, \ldots , X_{i-1}, -X_i, X_{i+1}, \ldots , X_n)\) for \(i=1, \ldots , n\). Then for all \(\lambda > 0\) we have

and

Proof

Inequality (19) is a standard result and can be found, e.g., in [9, page 122]. Inequality (20) is a variation of the same argument; see also [9, Exercise 5.5] for a related (but not identical) result. Inequality (20) can, in fact, be found in a Master’s thesis [1, Theorem 5]. For convenience of the reader, we provide a proof of (20) based on the textbook [9].

In [9, page 122] it is proven that

For \(a\ge b\) we have

where the first inequality follows from the concavity of the exponential and the Hermite–Hadamard inequality. Therefore, for all \(a, b\in {\mathbb {R}}\) we have

Applying (22) to each summand in Eq. 21) one obtains

where the last equality follows from the fact that f(X) and \(f(\bar{X}^{(i)})\) have the same distribution and changing the sign of the ith entry of \(\bar{X}^{(i)}\) gives X again.\(\square \)

1.2 A. 2 Bounds on the Complex Logarithm

The following two elementary results on the complex logarithm are needed in the convergence proofs of the Lanczos method in Sect. 3.

Lemma 8

Consider a circle in the complex plane with center \(a \in {\mathbb {R}}^+\), \(a>1\) and radius b such that \(b^2 = a^2 - 1\). Then the maximum absolute value of the logarithm on this circle is attained on the real axis.

Proof

By symmetry, we can restrict ourselves to the upper half of the circle. For fixed \(\theta \in [0, \arctan (b)]\) the line \(r e^{ \mathrm {i} \theta }\) for \(r> 0\) intersects the circumference when \((r \cos \theta - a)^2 + r^2 \sin ^2\theta = b^2\). Clearly, this equality holds for \(r_{\pm } = a \cos \theta \pm \sqrt{a^2\cos ^2\theta - 1}\). Note that these points parametrize the entire upper semi-circle and we have \(r_- = \frac{1}{r_+}\), so

Therefore, to prove the lemma it is sufficient to show that the function \(g: [0, \arctan (b)] \rightarrow {\mathbb {R}}\) given by \(g(\theta ) := |f(\theta )|^2\), where \(f(\theta ) = \log (r_+ e^{ \mathrm {i} \theta })\), attains its maximum for \(\theta = 0\). We will establish this fact by showing that g decreases monotonically. We have

and therefore

For \(\theta \in (0, \arctan (b))\) we have that

Using the facts that \(x\mapsto \frac{x}{\sin (x)}\) is increasing for \(x \in [0, \pi ]\), \(\arctan (x) < \,\mathrm {arcsinh}(x)\) for \(x > 0\), and \(x\mapsto \frac{\,\mathrm {arcsinh}(x)}{x}\) is decreasing for \(x>0\), one obtains

for every \(\theta \in (0, \arctan (b))\). In particular, this shows \(g'(\theta ) < 0\) for \(\theta \in (0, \arctan (b))\) and hence g is decreasing.\(\square \)

Corollary 5

Consider an ellipse \(\mathcal {E}\) in the open right-half complex plane, with foci on the real axis. Then the maximum absolute value of the logarithm on this ellipse is attained on the real axis.

Proof

Let \(0< \alpha < \beta \) be the two intersections of the ellipse with the real axis. If \(|\log \alpha | \ge |\log \beta |\) then \(\mathcal {E}\) is contained in the circle \(\mathcal {C}_1\) of center \(a := \frac{1}{2} \left( \frac{1}{\alpha } + \alpha \right) \) and radius \(b := \frac{1}{2} \left( \frac{1}{\alpha } - \alpha \right) = \sqrt{a^2 - 1}\), and \(\mathcal {E}\) is tangent to \(\mathcal {C}_1\) in \(\alpha \); otherwise \(\mathcal {E}\) is contained in the circle \(\mathcal {C}_2\) of center \(a := \frac{1}{2} \left( \beta + \frac{1}{\beta } \right) \) and radius \(b := \frac{1}{2} \left( \beta - \frac{1}{\beta } \right) = \sqrt{a^2-1}\), and \(\mathcal {E}\) is tangent to \(\mathcal {C}_2\) in \(\beta \). In both cases, the result follows from Lemma 8.\(\square \)

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Cortinovis, A., Kressner, D. On Randomized Trace Estimates for Indefinite Matrices with an Application to Determinants. Found Comput Math 22, 875–903 (2022). https://doi.org/10.1007/s10208-021-09525-9

Received:

Revised:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10208-021-09525-9