Abstract

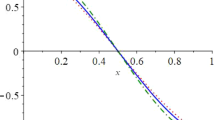

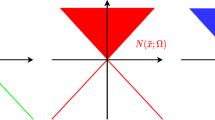

In this paper, we consider maximizing the probability \({\mathbb {P}}\left\{ \, \zeta \, \mid \, \zeta \, \in \, {\mathbf {K}}({\mathbf {x}}) \, \right\} \) over a closed and convex set \({\mathcal {X}}\), a special case of the chance-constrained optimization problem. Suppose \({\mathbf {K}}({\mathbf {x}}) \, \triangleq \, \left\{ \, \zeta \, \in \, {\mathcal {K}}\, \mid \, c({\mathbf {x}},\zeta ) \, \ge \, 0 \right\} \), and \(\zeta \) is uniformly distributed on a convex and compact set \({\mathcal {K}}\) and \(c({\mathbf {x}},\zeta )\) is defined as either \(c({\mathbf {x}},\zeta )\, \triangleq \, 1-\left| \zeta ^T{\mathbf {x}}\right| ^m\) where \(m\ge 0\) (Setting A) or \(c({\mathbf {x}},\zeta ) \, \triangleq \, T{\mathbf {x}}\, - \, \zeta \) (Setting B). We show that in either setting, by leveraging recent findings in the context of non-Gaussian integrals of positively homogeneous functions, \({\mathbb {P}}\left\{ \,\zeta \, \mid \, \zeta \, \in \, {\mathbf {K}}({\mathbf {x}}) \, \right\} \) can be expressed as the expectation of a suitably defined continuous function \(F(\bullet ,\xi )\) with respect to an appropriately defined Gaussian density (or its variant), i.e., \({\mathbb {E}}_{{{\tilde{p}}}} \left[ \, F({\mathbf {x}},\xi )\, \right] \). Aided by a recent observation in convex analysis, we then develop a convex representation of the original problem requiring the minimization of \(g\left( {\mathbb {E}}\left[ \, F(\bullet ,\xi )\, \right] \right) \) over \({\mathcal {X}}\), where g is an appropriately defined smooth convex function. Traditional stochastic approximation schemes cannot contend with the minimization of \(g\left( {\mathbb {E}}\left[ F(\bullet ,\xi )\right] \right) \) over \(\mathcal X\), since conditionally unbiased sampled gradients are unavailable.
We then develop a regularized variance-reduced stochastic approximation (r-VRSA) scheme that obviates the need for such unbiasedness by combining iterative regularization with variance-reduction. Notably, (r-VRSA) is characterized by almost-sure convergence guarantees, a convergence rate of \(\mathcal {O}(1/k^{1/2-a})\) in expected sub-optimality where \(a > 0\), and a sample complexity of \(\mathcal {O}(1/\epsilon ^{6+\delta })\) where \(\delta > 0\). To the best of our knowledge, this may be the first such scheme for probability maximization problems with convergence and rate guarantees. Preliminary numerics on a portfolio selection problem (Setting A) and a set-covering problem (Setting B) suggest that the scheme competes well with naive mini-batch SA schemes as well as integer programming approximation methods.

References

van Ackooij, W.: Eventual convexity of chance constrained feasible sets. Optimization 64(5), 1263–1284 (2015)

van Ackooij, W.: A discussion of probability functions and constraints from a variational perspective. Set-Valued Var. Anal. 28(4), 585–609 (2020). https://doi.org/10.1007/s11228-020-00552-2

van Ackooij, W., Aleksovska, I., Munoz-Zuniga, M.: (Sub-)differentiability of probability functions with elliptical distributions. Set-Valued Var. Anal. 26(4), 887–910 (2018). https://doi.org/10.1007/s11228-017-0454-3

van Ackooij, W., Berge, V., de Oliveira, W., Sagastizábal, C.: Probabilistic optimization via approximate \(p\)-efficient points and bundle methods. Comput. Oper. Res. 77, 177–193 (2017). https://doi.org/10.1016/j.cor.2016.08.002

van Ackooij, W., Demassey, S., Javal, P., Morais, H., de Oliveira, W., Swaminathan, B.: A bundle method for nonsmooth DC programming with application to chance-constrained problems. Comput. Optim. Appl. 78(2), 451–490 (2021). https://doi.org/10.1007/s10589-020-00241-8

van Ackooij, W., Henrion, R.: Gradient formulae for nonlinear probabilistic constraints with Gaussian and Gaussian-like distributions. SIAM J. Optim. 24(4), 1864–1889 (2014). https://doi.org/10.1137/130922689

van Ackooij, W., Henrion, R.: (Sub-)gradient formulae for probability functions of random inequality systems under Gaussian distribution. SIAM/ASA J. Uncertain. Quantif. 5(1), 63–87 (2017). https://doi.org/10.1137/16M1061308

van Ackooij, W., Henrion, R., Möller, A., Zorgati, R.: Joint chance constrained programming for hydro reservoir management. Optim. Eng. 15(2), 509–531 (2014). https://doi.org/10.1007/s11081-013-9236-4

van Ackooij, W., Pérez-Aros, P.: Gradient formulae for nonlinear probabilistic constraints with non-convex quadratic forms. J. Optim. Theory Appl. 185(1), 239–269 (2020). https://doi.org/10.1007/s10957-020-01634-9

van Ackooij, W., Sagastizábal, C.: Constrained bundle methods for upper inexact oracles with application to joint chance constrained energy problems. SIAM J. Optim. 24(2), 733–765 (2014). https://doi.org/10.1137/120903099

Ahmed, S., Luedtke, J., Song, Y., Xie, W.: Nonanticipative duality, relaxations, and formulations for chance-constrained stochastic programs. Math. Program. 162(1–2, Ser. A), 51–81 (2017)

Balasubramanian, K., Ghadimi, S., Nguyen, A.: Stochastic multi-level composition optimization algorithms with level-independent convergence rates. arXiv preprint arXiv:2008.10526 (2020)

Bardakci, I., Lagoa, C.M.: Distributionally robust portfolio optimization. In: 2019 IEEE 58th Conference on Decision and Control (CDC), pp. 1526–1531. IEEE (2019)

Bardakci, I.E., Lagoa, C., Shanbhag, U.V.: Probability maximization with random linear inequalities: Alternative formulations and stochastic approximation schemes. In: 2018 Annual American Control Conference, ACC 2018, Milwaukee, WI, USA, June 27-29, 2018, pp. 1396–1401. IEEE (2018)

Bienstock, D., Chertkov, M., Harnett, S.: Chance-constrained optimal power flow: Risk-aware network control under uncertainty. SIAM Rev. 56(3), 461–495 (2014)

Bobkov, S.G.: Convex bodies and norms associated to convex measures. Probab. Theory Relat. Fields 147(1–2), 303–332 (2010)

Brascamp, H.J., Lieb, E.H.: On extensions of the Brunn-Minkowski and Prékopa-Leindler theorems, including inequalities for log concave functions, and with an application to the diffusion equation. J. Functional Analysis 22(4), 366–389 (1976). https://doi.org/10.1016/0022-1236(76)90004-5

Burke, J.V., Chen, X., Sun, H.: The subdifferential of measurable composite max integrands and smoothing approximation. Math. Program. 181(2, Ser. B), 229–264 (2020). https://doi.org/10.1007/s10107-019-01441-9

Byrd, R.H., Chin, G.M., Nocedal, J., Wu, Y.: Sample size selection in optimization methods for machine learning. Math. Program. 134(1), 127–155 (2012)

Campi, M.C., Garatti, S.: A sampling-and-discarding approach to chance-constrained optimization: feasibility and optimality. J. Optim. Theory Appl. 148(2), 257–280 (2011)

Charnes, A., Cooper, W.W.: Chance-constrained programming. Management Sci. 6, 73–79 (1959/1960)

Charnes, A., Cooper, W.W., Symonds, G.H.: Cost horizons and certainty equivalents: An approach to stochastic programming of heating oil. Management Science 4(3), 235–263 (1958). https://EconPapers.repec.org/RePEc:inm:ormnsc:v:4:y:1958:i:3:p:235-263

Chen, L.: An approximation-based approach for chance-constrained vehicle routing and air traffic control problems. In: Large scale optimization in supply chains and smart manufacturing, Springer Optim. Appl., vol. 149, pp. 183–239. Springer, Cham (2019)

Chen, T., Sun, Y., Yin, W.: Solving stochastic compositional optimization is nearly as easy as solving stochastic optimization. IEEE Trans. Signal Process. 69, 4937–4948 (2021)

Chen, W., Sim, M., Sun, J., Teo, C.P.: From cvar to uncertainty set: Implications in joint chance-constrained optimization. Oper. Res. 58(2), 470–485 (2010)

Cheng, J., Chen, R.L.Y., Najm, H.N., Pinar, A., Safta, C., Watson, J.P.: Chance-constrained economic dispatch with renewable energy and storage. Comput. Optim. Appl. 70(2), 479–502 (2018). https://doi.org/10.1007/s10589-018-0006-2

Clarke, F.H.: Optimization and nonsmooth analysis, Classics in Applied Mathematics, vol. 5, second edn. Society for Industrial and Applied Mathematics (SIAM), Philadelphia, PA (1990). https://doi.org/10.1137/1.9781611971309

Cui, Y., Liu, J., Pang, J.S.: Nonconvex and nonsmooth approaches for affine chance constrained stochastic programs. Set-Valued Variat. Anal. 30, 1149–1211 (2022)

Curtis, F.E., Wächter, A., Zavala, V.M.: A sequential algorithm for solving nonlinear optimization problems with chance constraints. SIAM J. Optim. 28(1), 930–958 (2018)

Ermoliev, Y.: Methods of Stochastic Programming. Monographs in Optimization and OR, Nauka, Moscow (1976)

Fiacco, A.V., McCormick, G.P.: The sequential maximization technique \(({{\rm SUMT}})\) without parameters. Operations Res. 15, 820–827 (1967). https://doi.org/10.1287/opre.15.5.820

Fiacco, A.V., McCormick, G.P.: Nonlinear programming: Sequential unconstrained minimization techniques. John Wiley and Sons Inc, New York-London-Sydney (1968)

Ghadimi, S., Lan, G.: Accelerated gradient methods for nonconvex nonlinear and stochastic programming. Math. Program. 156(1–2), 59–99 (2016)

Ghadimi, S., Ruszczynski, A., Wang, M.: A single timescale stochastic approximation method for nested stochastic optimization. SIAM J. Optim. 30(1), 960–979 (2020)

Gicquel, C., Cheng, J.: A joint chance-constrained programming approach for the single-item capacitated lot-sizing problem with stochastic demand. Ann. Oper. Res. 264(1–2), 123–155 (2018). https://doi.org/10.1007/s10479-017-2662-5

Göttlich, S., Kolb, O., Lux, K.: Chance-constrained optimal inflow control in hyperbolic supply systems with uncertain demand. Optimal Control Appl. Methods 42(2), 566–589 (2021). https://doi.org/10.1002/oca.2689

Guo, G., Zephyr, L., Morillo, J., Wang, Z., Anderson, C.L.: Chance constrained unit commitment approximation under stochastic wind energy. Comput. Oper. Res. 134, Paper No. 105398, 13 (2021). https://doi.org/10.1016/j.cor.2021.105398

Guo, S., Xu, H., Zhang, L.: Convergence analysis for mathematical programs with distributionally robust chance constraint. SIAM J. Optim. 27(2), 784–816 (2017). https://doi.org/10.1137/15M1036592

Gurobi Optimization, LLC.: Gurobi Optimizer Reference Manual (2022). https://www.gurobi.com

Henrion, R.: Optimierungsprobleme mit wahrscheinlichkeitsrestriktionen: Modelle, struktur, numerik. Lecture notes p. 43 (2010)

Hong, L.J., Yang, Y., Zhang, L.: Sequential convex approximations to joint chance constrained programs: A monte carlo approach. Oper. Res. 59(3), 617–630 (2011)

Jalilzadeh, A., Shanbhag, U.V., Blanchet, J.H., Glynn, P.W.: Optimal smoothed variable sample-size accelerated proximal methods for structured nonsmooth stochastic convex programs. arXiv preprint arXiv:1803.00718 (2018)

Lagoa, C.M., Li, X., Sznaier, M.: Probabilistically constrained linear programs and risk-adjusted controller design. SIAM J. Optim. 15(3), 938–951 (2005)

Lasserre, J.B.: Level sets and nongaussian integrals of positively homogeneous functions. IGTR 17(1), 1540001 (2015)

Lei, J., Shanbhag, U.V.: Asynchronous variance-reduced block schemes for composite non-convex stochastic optimization: block-specific steplengths and adapted batch-sizes. Optimization Methods and Software 0(0), 1–31 (2020)

Lian, X., Wang, M., Liu, J.: Finite-sum composition optimization via variance reduced gradient descent. In: Artificial Intelligence and Statistics, pp. 1159–1167. PMLR (2017)

Lieb, E., Loss, M.: Analysis. Crm Proceedings & Lecture Notes. American Mathematical Society (2001). https://books.google.com/books?id=Eb_7oRorXJgC

Luedtke, J., Ahmed, S.: A sample approximation approach for optimization with probabilistic constraints. SIAM J. Optim. 19(2), 674–699 (2008)

Markowitz, H.: Portfolio selection. J. Financ. 7(1), 77–91 (1952)

Miller, B.L., Wagner, H.M.: Chance constrained programming with joint constraints. Oper. Res. 13(6), 930–945 (1965)

Morozov, A., Shakirov, S.: Introduction to integral discriminants. J. High Energy Phys. 2009(12), 002 (2009)

Nemirovski, A., Juditsky, A., Lan, G., Shapiro, A.: Robust stochastic approximation approach to stochastic programming. SIAM J. Optim. 19(4), 1574–1609 (2009)

Nemirovski, A., Shapiro, A.: Convex approximations of chance constrained programs. SIAM J. Optim. 17(4), 969–996 (2006)

Norkin, V.I.: The analysis and optimization of probability functions (1993)

Pagnoncelli, B.K., Ahmed, S., Shapiro, A.: Sample average approximation method for chance constrained programming: theory and applications. J. Optim. Theory Appl. 142(2), 399–416 (2009). https://doi.org/10.1007/s10957-009-9523-6

Pagnoncelli, B.K., Ahmed, S., Shapiro, A.: Sample average approximation method for chance constrained programming: theory and applications. J. Optim. Theory Appl. 142(2), 399–416 (2009)

Peña-Ordieres, A., Luedtke, J.R., Wächter, A.: Solving chance-constrained problems via a smooth sample-based nonlinear approximation. arXiv:1905.07377 (2019)

Pflug, G.C., Weisshaupt, H.: Probability gradient estimation by set-valued calculus and applications in network design. SIAM J. Optim. 15(3), 898–914 (2005). https://doi.org/10.1137/S1052623403431639

Polyak, B.T.: New stochastic approximation type procedures. Automat. i Telemekh 7(98–107), 2 (1990)

Polyak, B.T., Juditsky, A.B.: Acceleration of stochastic approximation by averaging. SIAM J. Control. Optim. 30(4), 838–855 (1992)

Prékopa, A.: A class of stochastic programming decision problems. Math. Operationsforsch. Statist. 3(5), 349–354 (1972). https://doi.org/10.1080/02331937208842107

Prékopa, A.: On logarithmic concave measures and functions. Acta Scientiarum Mathematicarum 34, 335–343 (1973)

Prékopa, A.: Probabilistic programming. In: Stochastic programming, Handbooks Oper. Res. Management Sci., vol. 10, pp. 267–351. Elsevier Sci. B. V., Amsterdam, Netherlands (2003). https://doi.org/10.1016/S0927-0507(03)10005-9

Prékopa, A.: Stochastic programming, vol. 324. Springer Science & Business Media (2013)

Prékopa, A., Szántai, T.: Flood control reservoir system design using stochastic programming. In: Mathematical programming in use, pp. 138–151. Springer (1978)

Robbins, H., Monro, S.: A stochastic approximation method. The annals of mathematical statistics pp. 400–407 (1951)

Royset, J.O., Polak, E.: Extensions of stochastic optimization results to problems with system failure probability functions. J. Optim. Theory Appl. 133(1), 1–18 (2007). https://doi.org/10.1007/s10957-007-9178-0

Scholtes, S.: Introduction to piecewise differentiable equations. Springer Science & Business Media, New York (2012)

Shanbhag, U.V., Blanchet, J.H.: Budget-constrained stochastic approximation. In: Proceedings of the 2015 Winter Simulation Conference, Huntington Beach, CA, USA, December 6-9, 2015, pp. 368–379 (2015)

Shapiro, A., Dentcheva, D., Ruszczyński, A.: Lectures on stochastic programming: modeling and theory. SIAM (2009)

Sun, Y., Aw, G., Loxton, R., Teo, K.L.: Chance-constrained optimization for pension fund portfolios in the presence of default risk. European J. Oper. Res. 256(1), 205–214 (2017). https://doi.org/10.1016/j.ejor.2016.06.019

Uryasev, S.: Derivatives of probability functions and integrals over sets given by inequalities. pp. 197–223 (1994). https://doi.org/10.1016/0377-0427(94)90388-3. Stochastic programming: stability, numerical methods and applications (Gosen, 1992)

Uryasev, S.: Derivatives of probability functions and some applications. pp. 287–311 (1995). https://doi.org/10.1007/BF02031712. Stochastic programming (Udine, 1992)

Wang, M., Fang, E.X., Liu, H.: Stochastic compositional gradient descent: Algorithms for minimizing compositions of expected-value functions. Math. Program. 161(1–2), 419–449 (2017)

Wang, M., Liu, J., Fang, E.X.: Accelerating stochastic composition optimization. The Journal of Machine Learning Research 18(1), 3721–3743 (2017)

Xie, Y., Shanbhag, U.V.: SI-ADMM: A stochastic inexact ADMM framework for stochastic convex programs. IEEE Trans. Autom. Control 65(6), 2355–2370 (2020)

Yadollahi, E., Aghezzaf, E.H., Raa, B.: Managing inventory and service levels in a safety stock-based inventory routing system with stochastic retailer demands. Appl. Stoch. Models Bus. Ind. 33(4), 369–381 (2017). https://doi.org/10.1002/asmb.2241

Yang, S., Wang, M., Fang, E.X.: Multilevel stochastic gradient methods for nested composition optimization. SIAM J. Optim. 29(1), 616–659 (2019)

Acknowledgements

The authors would like to acknowledge support from NSF CMMI-1538605, EPCN-1808266, DOE ARPA-E award DE-AR0001076, NIH R01-HL142732, and the Gary and Sheila Bello chair funds. Preliminary efforts at studying Setting A were carried out in [14].

Appendix

Proof of Theorem 2:

(a) When considering uniform distributions over a compact and convex set \(\mathcal {K}\), the density is constant in this set and zero outside the set. It can then be concluded that \(\zeta \) has a log-concave density. Furthermore, \(\zeta \) has a symmetric density about the origin since \(\mathcal {K}\) is a symmetric set about the origin. Hence by Lemma 6.2 in [16], h is convex where \(h({\mathbf {x}})\triangleq 1/f({\mathbf {x}})\). \(\square \)

(b) Since (11) is a convex program, any solution \({\mathbf {x}}^*\) satisfies \({ h({\mathbf {x}}^*) \le h({\mathbf {x}}), \ \forall \mathbf {x} \in \mathcal {X}.}\) From the positivity of f over \({\mathcal {X}}\), \( \frac{1}{f({\mathbf {x}}^*)} \le \frac{1}{f({\mathbf {x}})}\) for every \({\mathbf {x}}\in \mathcal {X}\) implying that \(f({\mathbf {x}}^*) \ge f({\mathbf {x}})\) for every \({\mathbf {x}}\in \mathcal {X}.\) Consequently, \({\mathbf {x}}^*\) is a global maximizer of (11). \(\square \)
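The convexity of the reciprocal can be illustrated on a one-dimensional instance of Setting A. The sketch below is an illustration under our own assumptions, not part of the proof: we take \(\zeta \) uniform on \([-1,1]\) and \(m > 0\), for which \(f(x) = {\mathbb {P}}\{|\zeta x| \le 1\} = \min \{1, 1/|x|\}\) and hence \(h(x) = 1/f(x) = \max \{1,|x|\}\). The function names `f_exact`, `h_exact`, and `f_monte_carlo` are hypothetical. Note that f itself is not concave in this instance, while h is convex.

```python
import numpy as np

def f_exact(x):
    """P{|zeta * x| <= 1} for zeta ~ Uniform[-1, 1] (toy 1-D case, m > 0 assumed)."""
    return 1.0 if x == 0 else min(1.0, 1.0 / abs(x))

def h_exact(x):
    """Reciprocal of the probability; equals max(1, |x|) in this toy case."""
    return 1.0 / f_exact(x)

def f_monte_carlo(x, n_samples=200_000, seed=0):
    """Sample-based estimate of the same probability, used as a sanity check."""
    rng = np.random.default_rng(seed)
    zeta = rng.uniform(-1.0, 1.0, size=n_samples)
    return float(np.mean(np.abs(zeta * x) <= 1.0))

# Midpoint convexity of h on a grid: h((a+b)/2) <= (h(a) + h(b)) / 2.
grid = np.linspace(-4.0, 4.0, 81)
max_violation = max(
    h_exact(0.5 * (a + b)) - 0.5 * (h_exact(a) + h_exact(b))
    for a in grid
    for b in grid
)

# Agreement between the closed form and the sampled probability at x = 2.
mc_error = abs(f_monte_carlo(2.0) - f_exact(2.0))

print(f"largest midpoint-convexity violation of h: {max_violation:.2e}")
print(f"|f_MC(2) - f(2)|: {mc_error:.4f}")
```

In this instance any \(x\) with \(|x| \le 1\) minimizes h and simultaneously maximizes f, in line with part (b).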

Proof of Lemma 3:

We prove this result by showing that \(f(u) = u^c e^{-u}\) is unimodal on \({\mathbb {R}}_+\). Differentiating, \(f'(u) = cu^{c-1}e^{-u} - u^ce^{-u} = u^{c-1}e^{-u}(c-u)\), which vanishes at \(u = c.\) Furthermore, \(f'(u) > 0\) when \(0< u < c\) and \(f'(u) < 0\) when \(u > c\). Finally, \(f(0) = 0\). It follows that \(u^* = c\) is a maximizer of \(u^ce^{-u}\) on \([0,\infty )\) where \(f(c) = \frac{c^c}{e^{c}}\). \(\square \)
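The claim of Lemma 3 is easy to check numerically. The following is a minimal sketch, assuming \(c > 0\) and a finite search interval \([0, 50]\) chosen for illustration; the helper name `check_maximizer` is hypothetical. It locates the maximizer of \(u^c e^{-u}\) on a fine grid and compares it with \(u^* = c\) and the value \(c^c/e^c\).

```python
import numpy as np

def f(u, c):
    """The function u^c * exp(-u) from Lemma 3."""
    return u**c * np.exp(-u)

def check_maximizer(c, u_max=50.0, n_grid=500_001):
    """Return (grid argmax, grid max, closed-form max c^c / e^c)."""
    u = np.linspace(0.0, u_max, n_grid)  # grid spacing 1e-4
    vals = f(u, c)
    i = int(np.argmax(vals))
    return float(u[i]), float(vals[i]), float(c**c / np.exp(c))

results = {c: check_maximizer(c) for c in (0.5, 1.0, 2.0, 3.5)}
for c, (u_star, f_star, closed_form) in results.items():
    print(f"c={c}: argmax ~ {u_star:.4f}, max ~ {f_star:.6f}, c^c/e^c = {closed_form:.6f}")
```

For \(c = 1\) this recovers the bound \(ue^{-u} \le e^{-1}\) that is invoked in the proofs of Propositions 2 and 3.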

Proof of Proposition 2:

Recall the definition of \(F({\mathbf {x}},\xi )\) from the statement of Lemma 4. We prove (a) by considering two cases. Case (i): \(\xi \in \varXi _1({\mathbf {x}}) \cup \varXi _0({\mathbf {x}}).\) It follows that

Case (ii): \(\xi \in \varXi _2({\mathbf {x}}).\) Proceeding similarly, we obtain that

Consequently, \(\left| F({\mathbf {x}},\xi )\right| ^2 \le \mathcal {C}^2_{{\mathcal {K}}} (2\pi )^n\) for every \(\xi \in {\mathbb {R}}^n\).

(b) We observe that \(\partial F({\mathbf {x}},\xi )\) is defined as follows.

Consequently, it follows that \({\mathbb {E}}_{{\tilde{p}}} \left[ \, \Vert G({\mathbf {x}},\xi )\Vert ^2 \, \right] \) is bounded as follows.

where the last equality follows from observing that \(G({\mathbf {x}},\xi ) = 0\) for \(\xi \in \varXi _2({\mathbf {x}})\) and the integral in (33) is zero because \(\varXi _0({\mathbf {x}})\) is a measure zero set. It follows that \({\mathbb {E}}\left[ \, \Vert G({\mathbf {x}},\xi )\Vert ^2\, \right] \) can be bounded as follows:

where the inequality follows from \(\xi \in \varXi _1({\mathbf {x}},u)\). Next, we consider the expression \((\xi ^T{\mathbf {x}})^2 e^{-(\xi ^T{\mathbf {x}})^2}\) or \(ue^{-u}\). We note that by Lemma 3, \(ue^{-u}\) is a unimodal function and \(u^* = 1\) is a maximizer with value \(e^{-1}\). Consequently, we have that

implying that

\(\square \)

Proof of Proposition 3:

(a) Since \(\Vert \xi \Vert _{{\mathcal {K}}}^2 = \Vert \xi \Vert _p^2\), it follows from Theorem 1 that

(b) Omitted (similar to proof of Proposition 2(a)).

(c) Next, we derive a bound on the second moment of \(\Vert G({\mathbf {x}},\xi )\Vert \) akin to Prop. 2(b). We observe that \( \partial F({\mathbf {x}},\xi ) \) is defined as

Consequently, \({\mathbb {E}}\left[ \Vert G({\mathbf {x}},\xi )\Vert ^2\right] \) can be bounded as follows.

where the last equality follows from observing that \(G({\mathbf {x}},\xi ) = 0\) for \(\xi \in \varXi _2({\mathbf {x}})\) and the integral in (36) is zero because \(\varXi _0({\mathbf {x}})\) is a measure zero set. It follows that

where (38) follows from \(\xi \in \varXi _1({\mathbf {x}})\) and (37) follows from

We may then conclude that

where (39) follows from Lemma 3. \(\square \)

Proof of Lemma 6:

Suppose \(({\mathbf {x}},{\mathbf {y}})\) is feasible with respect to (PM\(_{A,\mathrm{ext}}^{2}\)). Then \({\mathbf {x}}\in \mathcal {X}\) and is therefore feasible with (PM\(_{A}^{{\mathcal {E}}}\)). In addition,

\(\square \)

Proof of Proposition 6:

(a) The result follows by a transformation argument. We define a new variable \({ {\tilde{\zeta }} \in {\tilde{{\mathcal {K}}}}}\) such that \({ {\tilde{\zeta }}\triangleq \zeta -\mu }\) where \({ {\tilde{{\mathcal {K}}}} \triangleq \{ {\tilde{\zeta }}: \Vert {\tilde{\zeta }} \Vert _p \le \alpha \}}\). The set \({\tilde{{\mathbf {K}}}}({\mathbf {x}})\) can be defined as follows.

We first show that \(\zeta \in {\mathbf {K}}({\mathbf {x}})\) if and only if \({\tilde{\zeta }} \in {\tilde{{\mathbf {K}}}}({\mathbf {x}})\). Suppose \(\zeta \in {\mathbf {K}}({\mathbf {x}})\). Then \(\zeta \in \mathcal {K}\) and \(c({\mathbf {x}},\zeta ) = T{\mathbf {x}}-\zeta \ge 0\). If \(\zeta \in \mathcal {K}\), then \(\Vert \zeta -\mu \Vert _p \le \alpha \) or \(\Vert {\tilde{\zeta }}\Vert _p \le \alpha \) where \({\tilde{\zeta }} = \zeta - \mu \). Furthermore, \(T{\mathbf {x}}\ge \zeta \) can be rewritten as \(T{\mathbf {x}}- \mu \ge \zeta - \mu \) or \(T{\mathbf {x}}- \mu \ge {\tilde{\zeta }}\). It follows that

The reverse direction follows similarly. Consequently, \({\mathbb {P}}\left\{ \zeta \, \left| \, \zeta \in {\mathbf {K}}({\mathbf {x}}) \right. \right\} = {\mathbb {P}}\left\{ {\tilde{\zeta }} \, \left| \, {\tilde{\zeta }} \in {\tilde{{\mathbf {K}}}}({\mathbf {x}}) \right. \right\} .\) We now analyze the latter probability. It may be observed that the Minkowski functional associated with \( {\tilde{{\mathcal {K}}}}\) is given by \( \Vert {\tilde{\zeta }}\Vert _{{\tilde{{\mathcal {K}}}}} = \tfrac{1}{\alpha }\Vert {\tilde{\zeta }}\Vert _p\). Since \( {T_{i,\bullet } {\mathbf {x}}-\mu _i \ge \delta >0 }\) for \( i=1,\ldots ,d \), it follows that

Since \( g_i({\mathbf {x}},{\tilde{\zeta }}) \ \triangleq \ \left( \frac{\max \{{\tilde{\zeta }}_i,0\}}{T_{i,\bullet } {\mathbf {x}}-\mu _i}\right) ^2 \) for \( i=1,\ldots , d \) and \( g_{d+1}({\mathbf {x}}, {\tilde{\zeta }}) \triangleq \tfrac{1}{\alpha ^2}\Vert {\tilde{\zeta }}\Vert ^{2}_{p} \) are PHFs with degree 2, then \( g({{\mathbf {x}}}, {\tilde{\zeta }}) \triangleq \max \{ g_1({\mathbf {x}}, {\tilde{\zeta }}),\ldots ,g_{d+1}({\mathbf {x}},{\tilde{\zeta }}) \} \) is positively homogeneous with degree 2. By selecting \(h(\zeta ) = 1\) and \({\varLambda } = {\tilde{{\mathbf {K}}}}({\mathbf {x}})\), we may invoke Lemma 2, leading to the following equality.

Equation (40) can be rewritten as

(b) Omitted (similar to proof of Lemma 8 (a)).

(c) When \( {\mathcal {K}}\) satisfies Assumption 2, the proof of Lemma 8(b) requires slight modification. Suppose \( F({\mathbf {x}},\xi ) \) and \( p(\xi ) \) are defined as in (a). Then we may define \(\partial F({\mathbf {x}},\xi )\) as

where \(H({\mathbf {x}},\xi )\) denotes the Clarke generalized gradient of \(g({\mathbf {x}},\xi )\), defined as in (17). Consequently, it follows that \({\mathbb {E}} \left[ \Vert G({\mathbf {x}},\xi )\Vert ^2\right] \) is bounded as follows.

where the last equality follows from observing that \(G({\mathbf {x}},\xi ) = 0\) for \(\xi \in \varXi _{d+1}({\mathbf {x}})\) and the integral in (41) is zero because \(\varXi _0({\mathbf {x}})\) is a measure zero set. It follows that

where the first inequality follows from \( {T_{i,\bullet } {\mathbf {x}}-\mu _i \ge \delta >0 }\) for all \(i\), and the second inequality follows from \( \xi \in \varXi _i({\mathbf {x}})\). It follows from Lemma 3 that, given any \( \alpha \), choosing the variance \( \sigma ^2\) of the random variable \( \xi \) such that \(\sigma ^2 = \alpha ^2 \) leads to the bound \( {{\mathbb {E}} \left[ \Vert G({\mathbf {x}},\xi )\Vert ^2 \right] \le 16\mathcal {C}^2(2\pi \sigma ^2)^d {\sum _{i=1}^d} \frac{\Vert T_{i,\bullet }\Vert ^2}{\delta ^2 e^2}}. \) \(\square \)
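Two facts used in part (a) of the proof above can be checked numerically: the function \(g = \max \{g_1,\ldots ,g_{d+1}\}\) is positively homogeneous of degree 2 in \({\tilde{\zeta }}\), and, when \(T_{i,\bullet }{\mathbf {x}}-\mu _i > 0\) for all i, the conditions \(\Vert {\tilde{\zeta }}\Vert _p \le \alpha \) and \({\tilde{\zeta }} \le T{\mathbf {x}}-\mu \) hold exactly when \(g({\mathbf {x}},{\tilde{\zeta }}) \le 1\). The sketch below is illustrative only; the dimensions, the random data `T`, `x`, `mu`, and the choices \(\alpha = 1.5\), \(p = 2\) are assumptions made for the example.

```python
import numpy as np

def g(x, zeta_t, T, mu, alpha, p):
    """max{g_1, ..., g_{d+1}} as defined in the proof of Proposition 6(a)."""
    slack = T @ x - mu  # assumed componentwise positive (>= delta > 0)
    g_rows = (np.maximum(zeta_t, 0.0) / slack) ** 2
    g_norm = (np.linalg.norm(zeta_t, ord=p) / alpha) ** 2
    return max(float(g_rows.max()), float(g_norm))

rng = np.random.default_rng(1)
d, n, alpha, p = 3, 2, 1.5, 2  # illustrative sizes and parameters
T = rng.uniform(0.5, 1.5, size=(d, n))
x = rng.uniform(0.5, 1.5, size=n)
mu = T @ x - rng.uniform(0.5, 1.5, size=d)  # guarantees T x - mu >= 0.5

homogeneity_gap = 0.0
level_set_mismatches = 0
for _ in range(2000):
    z = rng.normal(scale=1.5, size=d)
    t = rng.uniform(0.1, 5.0)
    # Degree-2 positive homogeneity: g(x, t z) = t^2 g(x, z) for t > 0.
    lhs = g(x, t * z, T, mu, alpha, p)
    rhs = t**2 * g(x, z, T, mu, alpha, p)
    homogeneity_gap = max(homogeneity_gap, abs(lhs - rhs) / max(1.0, rhs))
    # Membership in the shifted set versus the unit sublevel set of g.
    in_set = bool(np.linalg.norm(z, ord=p) <= alpha and np.all(z <= T @ x - mu))
    level_set_mismatches += int(in_set != (g(x, z, T, mu, alpha, p) <= 1.0))

print(f"max relative homogeneity gap: {homogeneity_gap:.2e}")
print(f"level-set mismatches: {level_set_mismatches}")
```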

Proof of Lemma 10:

If \({{\tilde{G}}}({\mathbf {x}}_k,\xi ) \triangleq G({\mathbf {x}}_k,\xi ) - {\mathbb {E}}[G({\mathbf {x}}_k,\xi )]\), by the conditional independence of \({\tilde{G}}({\mathbf {x}}_k,\xi _j)\) and \({\tilde{G}}({\mathbf {x}}_k,\xi _{\ell })\) for \(j \ne \ell \), we have

By (42) and Prop. 2, \({\mathbb {E}}\left[ \Vert {\bar{w}}_{G,k}\Vert ^2 \, \left| \, \mathcal {F}_k \right. \right] \ \le \ \frac{\mathcal {C}^2_{{\mathcal {K}}}(2\pi )^n}{eN_k} {\mathbb {E}}_{{{\tilde{p}}}}\left[ \Vert \xi \Vert ^2\right] \) for Setting A. Similarly, for Setting B, by Lemma 8,

In addition, for Setting A, \( {\mathbb {E}}\left[ \Vert {\bar{w}}_{f,k}\Vert ^2 \, \left| \, \mathcal {F}_k \right. \right] \le \frac{2(\mathcal {C}_{{\mathcal {K}}}^2(2\pi )^n+1)}{N_k}\) while for Setting B, we obtain that \({\mathbb {E}}\left[ \, \Vert {\bar{w}}_{f,k}\Vert ^2 \, \left| \, \mathcal {F}_k \right. \right] \le \frac{\mathcal {C}^2(2\pi \sigma ^2)^d}{N_k}.\) \(\square \)
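The \(1/N_k\) scaling in Lemma 10 is the usual variance reduction obtained by averaging independent centered samples. The following sketch illustrates it empirically. It does not use the paper's \(G({\mathbf {x}},\xi )\); instead it assumes a hypothetical bounded stand-in `G(xi) = tanh(xi)` with \(\xi \) standard normal in \({\mathbb {R}}^3\), and compares the second moment of the batch-mean error with the per-sample variance divided by the batch size.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 3  # dimension of xi (illustrative)

def G(xi):
    """Hypothetical bounded stand-in for a sampled subgradient G(x, xi)."""
    return np.tanh(xi)

# Mean and per-sample variance E||G - E[G]||^2, estimated from one large sample.
big = G(rng.standard_normal((500_000, n)))
mean_G = big.mean(axis=0)
per_sample_var = float(np.mean(np.sum((big - mean_G) ** 2, axis=1)))

def batch_noise_second_moment(N, reps=2000):
    """Empirical E||w_bar||^2, where w_bar is the batch mean of G minus its mean."""
    xi = rng.standard_normal((reps, N, n))
    w_bar = G(xi).mean(axis=1) - mean_G
    return float(np.mean(np.sum(w_bar**2, axis=1)))

ratios = {}
for N in (10, 100, 400):
    empirical = batch_noise_second_moment(N)
    ratios[N] = empirical / (per_sample_var / N)
    print(f"N={N}: E||w_bar||^2 ~ {empirical:.5f}, ratio to var/N = {ratios[N]:.3f}")
```

Ratios close to one indicate that the batch-mean error behaves as the per-sample variance divided by N, which is the structure of the bounds above.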

Proof of Lemma 11:

(Setting A) Consider \({\bar{w}}_k\), where \({\bar{w}}_k\) is defined as \( {\bar{w}}_k \triangleq \frac{-(G_k+{\bar{w}}_{G,k})}{(f({\mathbf {x}}_k) + {\bar{w}}_{f,k})^{2}+\epsilon _k}- \frac{-G_k}{(f({\mathbf {x}}_k))^{2}}.\) We have that

where \(f({\mathbf {x}}_k) \ge \epsilon _f\) for every \({\mathbf {x}}_k \in \mathcal {X}.\) Taking conditional expectations and recalling the independence of \({\bar{w}}_{f,k}\) and \({\bar{w}}_{G,k}\) conditional on \(\mathcal {F}_k\), the following bound emerges.

where \(\Vert G_k\Vert ^2 = \Vert {\mathbb {E}}\left[ G({\mathbf {x}}_k,\xi ) \, \left| \, \mathcal {F}_k \right. \right] \Vert ^2 \le {\mathbb {E}}\left[ \Vert G({\mathbf {x}}_k,\xi )\Vert ^2 \, \left| \, \right. \mathcal {F}_k\right] \le M_G^2\) by Jensen’s inequality. From Prop. 2(b,c), \(| F({\mathbf {x}},\xi )| \le {M_F}\) for any \({\mathbf {x}}, \xi \), implying that

Consequently, by recalling that \(\epsilon _k = 1/N_k^{1/4}\), the following holds a.s.

(Setting B) Since \( {\bar{w}}_k \triangleq {\frac{{-}(G_{k}+{\bar{w}}_{G,k})}{(f({\mathbf {x}}_k) + {\bar{w}}_{f,k})+\epsilon _k}+ \frac{G_{k}}{f({\mathbf {x}}_k)}}\) and

where \(f({\mathbf {x}}_k) \ge \epsilon _f\) for every \({\mathbf {x}}_k \in \mathcal {X}.\) Taking expectations conditioned on \(\mathcal {F}_k\) and recalling the independence of \({\bar{w}}_{f,k}\) and \({\bar{w}}_{G,k}\) conditional on \(\mathcal {F}_k\), we have the following bound.

By selecting \(\epsilon _k = 1/N_k^{1/4}\), we have that

\(\square \)
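The role of the regularization \(\epsilon _k = 1/N_k^{1/4}\) in the Setting A estimator can be illustrated with a toy simulation. This is a sketch under stated assumptions rather than the scheme analyzed above: the values `F_TRUE`, `G_TRUE`, `SIGMA_F`, and `SIGMA_G` are hypothetical, and the batch noises \({\bar{w}}_{f,k}\) and \({\bar{w}}_{G,k}\) are drawn directly as independent Gaussians with variance proportional to \(1/N\) instead of being formed from samples. The regularized denominator stays positive for every noise realization, and the mean-squared error with respect to \(-G/f^2\) decays as N grows.

```python
import numpy as np

# Hypothetical "true" quantities at a fixed iterate (assumptions for illustration).
F_TRUE = 0.6
G_TRUE = np.array([1.0, -2.0])
SIGMA_F, SIGMA_G = 0.5, 1.0  # per-sample noise levels (assumed)

def regularized_grad_estimate(f_bar, G_bar, eps):
    """Plug-in estimate -(G_bar) / (f_bar^2 + eps) of grad(1/f) = -grad f / f^2."""
    return -G_bar / (f_bar**2 + eps)

def mean_sq_error(N, reps=20_000, seed=0):
    """Empirical E||w_bar||^2 for batch size N with eps = N^(-1/4)."""
    rng = np.random.default_rng(seed)
    eps = N ** (-0.25)
    # Simplification: batch-mean noises sampled directly with variance ~ 1/N.
    w_f = rng.normal(scale=SIGMA_F / np.sqrt(N), size=(reps, 1))
    w_G = rng.normal(scale=SIGMA_G / np.sqrt(N), size=(reps, G_TRUE.size))
    estimate = regularized_grad_estimate(F_TRUE + w_f, G_TRUE + w_G, eps)
    exact = -G_TRUE / F_TRUE**2
    return float(np.mean(np.sum((estimate - exact) ** 2, axis=1)))

errors = {N: mean_sq_error(N) for N in (10**2, 10**4, 10**6)}
for N, err in errors.items():
    print(f"N={N:>7}: E||w_bar||^2 ~ {err:.4f}")
```

In this toy setting the error is dominated by the bias introduced by \(\epsilon _k\), which is why it decays slowly in N.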

Proof of Proposition 7:

(i) Using the update rule of \( {\mathbf {x}}_{k+1}\) and the fact that \({\mathbf {x}}^*=\varPi _{{\mathcal {X}}} [{\mathbf {x}}^*]\), for any \(d_k + {\bar{w}}_{k}\) where \(d_k \in \partial h({\mathbf {x}}_k)\) and \(k \ge 1\),

where in the second inequality, we employ the non-expansivity of the projection operator. Now by using the convexity of h, we obtain:

where we use \(a^Tb\le {1\over 2}\Vert a\Vert ^2+{1\over 2}\Vert b\Vert ^2\). Now by summing from \(k = {\widehat{K}}\) to \(K-1\), where \({\widehat{K}}\) is an integer satisfying \(0 \le {\widehat{K}} < K-1\), we obtain the next inequality.

Dividing both sides by \(2\sum _{k={\widehat{K}}}^{K-1} \gamma _k\), taking expectations on both sides, and invoking Lemma 11, which leads to \({\mathbb {E}}[\Vert {{\bar{w}}}_{k}\Vert ^2\mid {\mathcal {F}}_k]\le {\nu ^2\over \sqrt{N_k}}\), together with the bound on the subgradient, i.e., \({\mathbb {E}}[\Vert d_k + {\bar{w}}_k\Vert ^2]\le M_G^2\), we obtain the following bound.

By utilizing Jensen’s inequality, we obtain that

where \({\bar{x}}_{{\widehat{K}},K} \triangleq \tfrac{\sum _{k={\widehat{K}}}^{K-1} \gamma _k x_k}{\sum _{k={\widehat{K}}}^{K-1} \gamma _k},\) which when combined with (44) leads to (30). \(\square \)
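The window-averaged iterate \({\bar{x}}_{{\widehat{K}},K}\) appearing in the proof can be illustrated with a generic projected subgradient loop. The sketch below is not the (r-VRSA) scheme itself. It assumes a toy convex objective \(h(x) = \Vert x - a\Vert _1\) over the box \([-1,1]^3\), steplengths \(\gamma _k = 1/\sqrt{k}\), batch sizes \(N_k = k\), and additive Gaussian noise whose second moment scales as \(1/\sqrt{N_k}\), mimicking the bound from Lemma 11. All names and parameter values are hypothetical.

```python
import numpy as np

def project_box(x, lo=-1.0, hi=1.0):
    """Euclidean projection onto the box [lo, hi]^n."""
    return np.clip(x, lo, hi)

def averaged_projected_subgradient(a, K=4000, seed=0):
    """Return the gamma-weighted average of x_k over k = K_hat, ..., K-1."""
    rng = np.random.default_rng(seed)
    x = np.zeros_like(a)
    K_hat = K // 2
    weighted_sum = np.zeros_like(a)
    weight_total = 0.0
    for k in range(1, K):
        gamma = 1.0 / np.sqrt(k)
        if k >= K_hat:
            # Accumulate x_k (before the update) with weight gamma_k.
            weighted_sum += gamma * x
            weight_total += gamma
        d = np.sign(x - a)  # a subgradient of ||x - a||_1 at x
        # Noise with E||w||^2 proportional to 1/sqrt(N_k), with N_k = k (assumed).
        w = rng.normal(scale=float(k) ** -0.25, size=a.shape)
        x = project_box(x - gamma * (d + w))
    return weighted_sum / weight_total

a = np.array([0.3, -0.5, 2.0])
x_bar = averaged_projected_subgradient(a)
x_opt = project_box(a)  # minimizer of the separable objective over the box
suboptimality = float(np.sum(np.abs(x_bar - a)) - np.sum(np.abs(x_opt - a)))
print(f"x_bar = {x_bar}, h(x_bar) - h* = {suboptimality:.4f}")
```

Averaging only over the tail window \(k \ge {\widehat{K}}\) discards the early iterates, which are far from the solution, while the \(\gamma _k\) weights match the definition of \({\bar{x}}_{{\widehat{K}},K}\) above.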

Cite this article

Bardakci, I.E., Jalilzadeh, A., Lagoa, C. et al. Probability maximization via Minkowski functionals: convex representations and tractable resolution. Math. Program. 199, 595–637 (2023). https://doi.org/10.1007/s10107-022-01859-8

DOI: https://doi.org/10.1007/s10107-022-01859-8