Abstract

Pervasive sensing has opened up new opportunities for measuring our feelings and understanding our behavior by monitoring our affective states while mobile. This review paper surveys pervasive affect sensing by examining three major elements of affective pervasive systems, namely “sensing,” “analysis,” and “application.” Sensing investigates the different sensing modalities that are used in existing real-time affective applications, analysis explores different approaches to emotion recognition and visualization based on different types of collected data, and application investigates the leading areas of affective applications. For each of the three aspects, the paper includes an extensive survey of the literature and finally outlines some of the challenges and future research opportunities of affective sensing in the context of pervasive computing.

1 Introduction

This review article creates a platform for understanding the growing field of pervasive affective sensing and offers designers, computer scientists, and researchers from other related disciplines an opportunity to further engage with this field.

The proliferation of smartphones and sensor-based technologies has opened up new territory with respect to the development of systems that can recognize and process human affective states. One of the key challenges in such systems is recognizing people’s feelings and related behaviors. Advances in pervasive sensing have enabled us to measure people’s affective states in real-time situations by harnessing the properties that such mobile and sensing technologies now afford.

Affect plays an important role in our daily life and is generally reported in the literature as a spontaneous mental feeling or state [1, 2]. Emotions in general can overwhelm the human body, which responds through various signals that are manifested in physical and physiological forms. Physical responses include facial expressions, voice intonation, gestures, and movements, whereas physiological response indicators relate to respiration, pulse rate, skin conductance, body temperature, and blood pressure. In terms of human psychology, affect sensing methods can be categorized as follows: self-reports, physiological recordings, and behavioral observations [3]. Self-reporting is an explicit way to gather information related to a person’s feelings or emotional state by using questionnaires or interviews in which people report on their own state. Physiological recording is an implicit way to identify emotional reactions by recognizing the user’s physiological changes with the use of biosensors. The behavioral observation method is used to identify the user’s emotional state by observing their externalized reactions, such as facial expressions and speech. Affect has numerous models of definition, which typically follow one of two approaches: a categorical approach, which models affect as a set of distinct categories, such as joy, anger, surprise, fear, or sadness, and a dimensional approach, which characterizes affect in terms of several continuous dimensions, such as arousal or valence [4].

The main goal of affective computing is the development of systems and devices that are able to recognize, interpret, and simulate human emotions [5]. Equipped with cutting-edge sensing technology and high-end processors, smartphones are able to unobtrusively identify human emotions and are an ideal platform for delivering feedback and behavioral therapy in an “all the time everywhere” pervasive computing model. Moreover, smartphones have embedded sensing capabilities, such as the accelerometer, microphone, positioning systems, compass, ambient light detector, and proximity detector, which can be used mainly to detect, recognize, and identify a user’s context and activity. In addition, mobile social network data, phone network data such as call detail records (CDR), and application use can also help to discover the user’s context and behavior. Wireless wearable biosensors can also be used for measuring physiological signals, such as electrodermal activity, heart rate, temperature, and respiratory rate. Information gathered from these sensors can be used to make inferences about people’s affective states.

In this paper, we survey existing affective mobile sensing systems, related emotion recognition techniques, applications, and the possible future developments emergent in this research area. The motivation behind this article is to bring the latest developments in this field to researchers interested in this area, whether specialist or novice. The remainder of the paper is organized as follows: Sect. 2 describes pervasive affect sensing and defines the elements that make up such systems. Section 3 provides an overview of different sensing modalities used in natural settings. Section 4 reviews existing approaches for affective state recognition and visualization. Section 5 discusses the leading application areas of pervasive affective sensing. Sections 6 and 7 outline some of the challenges and future directions of pervasive affect sensing, respectively, and the final section concludes this paper.

2 Pervasive affect sensing

The increasing popularity of pervasive sensing has led to new research and application areas, one of which is affective computing.

Smartphones are ubiquitous, unobtrusive, and sensor-rich computing devices. They are carried by billions of users every day; more importantly, their presence is likely to be “forgotten” by their owners.

In recent years, the pervasive nature of mobile and sensing technologies has driven the development of novel affective applications. Pervasive sensing offers exciting new possibilities for monitoring and analyzing the emotional experiences of people in regard to the passive and effortless collection of data streams that can capture user behavior and emotions, anytime and anywhere. It provides sensing modalities that are able to continuously produce a rich stream of data that reflect a person’s emotional state. In a pervasive setting, we can identify various aspects of emotional states through monitoring a user’s voice, facial expressions, behavior, activities, and physiological reactions by utilizing the ubiquitous sensing capabilities of these new technologies.

It is now possible to define pervasive affect sensing by considering its three major characteristics: “sensing,” “analysis,” and “applications.” Firstly, sensing means gathering affective data relating to people using pervasive tools such as mobile phones, wearable sensors, and digital cameras. These pervasive tools can be developed and deployed in order to gather self-reported data, physiological signals, contextual data, facial expressions, and speech. Secondly, analysis can include affect recognition and visualization, both of which depend on the gathered data and the application requirements. Thirdly, pervasive affective sensing has many applications that promote the health and well-being of individuals and communities. The existing applications can be mainly categorized as affect “sharing” and “awareness,” “mental health tracking,” “behavior change support,” and “urban affect sensing.” In order to organize our review of research, we present a format here that emphasizes the main building blocks of mobile affective systems through a consensual, componential model (see Fig. 1).

3 Emotion monitoring techniques

Mobile affective sensing is the process of collecting a variety of affective data using different devices such as mobile phones, wearable sensors, and cameras. The collected data are combined to infer emotional states on either a categorical or a dimensional scale.

3.1 Self-reporting

Self-reporting is the most traditional method for gathering people’s affect states. It is a subjective measure in which subjects are asked to enter their emotional state manually.

A common self-report measurement is the verbal scale in which words are used to describe the participant’s emotional states. Another alternative to this is a self-report measurement that is based on pictograms or animated cartoons instead of declarative words to represent certain emotional states. Examples of such systems are the Self-Assessment Manikin [6] and Emocards [7]. Other types of measurement sets use a numerical scale to characterize emotions in terms of different dimensions.

Generally, most of the research-based and commercially available affective systems use the self-reporting method to gather emotions directly. For example, Mappiness [8] is a smartphone application that relies on self-reporting to elicit the users’ feelings; it uses three emotional dimensions—happy, relaxed, and awake. These are then used to investigate how their local environment—air pollution, noise, and green spaces—affects people’s happiness. Self-reporting is also employed by WiMo [9] to allow users to express and share the emotional feelings about a place according to two scales: “comfortable” to “uncomfortable” and “like it” to “don’t like it.”

Self-reporting is a relatively feasible and lightweight method, but users may not be able to express or may not wish to report their true emotions. Self-reports will only be available at the times that users volunteer them.

3.2 Physiological signals

Recently, there has been an increase in the number of devices that measure physiological signals. The wearability and wireless nature of many of these devices have enabled researchers to look into detecting people’s emotional states. Typically, patterns in physiological activity can be identified that correspond to the expression of different emotions. Recent developments in wireless wearable biosensors allow emotion to be detected from physiological signals in real-world situations. Many devices measure one or a mixture of variables that correspond to the physiological state of an individual. The most common measures of physiological signals used in mobile settings include:

- Electrodermal activity (EDA), which represents the changing electrical conductivity of the skin surface and includes measures such as skin conductance (SC) and galvanic skin response (GSR). Dermal activity can indicate the level of arousal from low to high.

- Electrocardiogram (ECG), which represents heart activity, together with related cardiovascular measures such as heart rate variability (HRV), pulse, and blood pressure (BP). Cardiovascular measures reflect the activity of the heart, which could indicate stress as well as valence, which ranges from negative to positive emotions.

- Electroencephalography (EEG), which measures brain activity, i.e., the central nervous system (CNS).

There are many wireless wearable sensors that are available today to provide continuous physiological signal measurements by connecting them to mobile platforms such as Affectiva Q Sensor [10], BioHarness [11], BMS smartband [12], and NeuroSky [13]. Affectiva Q Sensor measures emotional arousal via skin conductance, skin temperature, and activity. BioHarness captures physiological data including heart rate, respiration rate, thoracic skin temperature, 3D activity, orientation, and position. BMS measures skin conductance, skin temperature, ambient temperature, pulse wave, and motion. NeuroSky measures brainwave EEG and heartbeat ECG signals.

Physiological signals are often collected along with other contextual parameters in order to create understandable mobile affective experience data. FEEL [14] and Affective Diary [15] are two systems that use electrodermal activity (EDA) to measure the arousal levels of individuals while carrying out their daily activities, while systems such as MOLMOD [16] use skin temperature and heart rate to detect pleasure and arousal levels in different locations. Perttula et al. [17] employ heart rate as a measure of user experience in order to monitor the feelings of ice hockey game audiences using mobile phones with heart rate belts.

Most of these devices are small, can be carried in a pocket, and do not restrict movement; physically, they are unobtrusive and can be used for extended periods of time. Along with mobile phones, they are able to capture large amounts of data automatically. They offer long-term continuous data, based on the user’s emotional state; however, the data are often one-dimensional and lack an explanatory meaning for an observed preference or attitude, i.e., it may be possible to tell that someone is emotional or stressed but not directly possible to tell what provoked these emotional responses.

Table 1 shows a variety of available physiological sensors and the variables they measure. It can be seen that patterns exist in what each given device measures.

3.3 Facial expression

Emotional states can be interpreted by observing a person’s facial expression. A camera is required to capture either an image or a video of the person’s face in order to detect expressed emotions. The captured images are usually segmented in order to observe individual movements of facial muscles, including the muscles of the cheeks, eyes, forehead, and chin, and the movements of the other parts of the face including eyebrows and mouth.

In previous years, there have been many projects dedicated to the recognition of facial expressions [18–20]. Commercially, two facial expression analysis systems have been developed. One of them, FaceReader [21], is among the first tools capable of automatically analyzing facial expressions, providing users with an objective assessment of a person’s emotions.

Another commercially available system is Affdex [22], an affective system that uses a webcam to interpret a person’s emotional state from their facial expressions in real time. It measures a range of emotion states including attention, confusion, surprise, and dislike.

In terms of mobile platforms, new developments have started to emerge recently. Mood Meter [23] is a recent research project that uses facial expressions to measure emotional states collectively in the wild. In particular, Mood Meter has created an interactive installation that automatically encouraged, recognized, and counted the smiles of participants strolling by and deployed four of the systems at major locations on a college campus for 10 weeks. The online “portrait gallery” continuously showed the collected information using a variety of visualizations and interactive graphs to represent smile intensity at defined locations.

Although facial expressions can be used as the main cue for emotion elicitation, these expressions can be manipulated. People can display various expressions which do not reflect their actual feelings.

3.4 Speech

Recognizing emotion from speech has also been an ongoing area of research. Speech can convey a person’s emotional state either explicitly (“what is said”) or implicitly (“how it is said”) [24].

The most common method of understanding a person’s emotional state through speech is by analyzing the acoustic features of speech and associating them with the emotional state of the speaker [25]. Bänziger et al. [26] have argued that statistics related to pitch convey considerable information about emotional status. Speech-based emotion detection is considered one of the most suitable applications for interpreting emotion in the real world. For example, Lee et al. [27] used k-NN classifiers, support vector machines (SVM), and linear discrimination in call center environments in order to distinguish between two emotions: negative and positive. In mobile settings, the phone’s microphone or sound sensor can be used for recording both human and environmental audio. For instance, StressSense [28] is a mobile system that is able to detect stress in real-life situations based on a user’s voice and using a smartphone’s microphone. EmotionSense [29] is also a mobile platform that gathers segments of conversations in addition to other context-based information including location, movement, and proximity in order to infer the user’s emotional state. The main drawback in developing applications for emotion recognition using speech analysis is that these applications are not universal. They can only be used in situations that use the language for which they were developed.

3.5 Phone usage

Tracking the mobile usage and activity of a user can indicate the context of their phone use. Many recent research studies have attempted to investigate the possible link between phone usage and the phone user’s feelings. For example, MoodSense [30] analyzes various patterns of mobile usage including application access, phone calls, SMSs, e-mails, web browsing history, and location, to infer the phone user’s mood according to two-dimensional states: pleasure and activeness. MoodMiner [31] assesses an individual’s daily mood in terms of three dimensions: pleasure, tiredness, and relaxation. This is based on mobile phone data including acceleration, GPS, call logs, and SMS logs. EmotionSense [29] also employs GPS, acceleration, and Bluetooth on mobile phones in addition to using a sound sensor to get an understanding of the user’s feelings. Nonetheless, most of the results reported in the aforementioned projects remain preliminary until larger field experiments can be conducted with a more diverse set of participants. Running short experiments does not give enough evidence of a correlation between behavior and mood, since these small sets of trials do not take into account the changes in the user’s lifestyle, gender, age, or current activities, and impacts arising from the local environment such as the weather and traffic.

3.6 Social networks

The rise of social networks has opened up new opportunities for reliable affective assessment. This has meant that researchers have been able to apply computational methods to social media data to predict either an individual’s or a community’s emotional state. Conceivably, the mood of a community can be assessed based on interpreting the mood of messages on, for example, Twitter. A research project called “Pulse of the Nation: U.S. Mood Throughout the Day” [32] by the Northeastern University College and Harvard Medical School used this approach to determine how happy or sad Americans are. They analyzed more than 300 million tweets collected between September 2006 and August 2009 from Twitter’s service to determine the mood of America over a 24-h, 7-day period, and by geo-location. As a result, their analysis indicated that people are much happier on weekends, and the peak of happiness is on Sunday mornings. Although their analysis is not on real-time data, such projects open new frontiers for affective systems that can use social networks in conjunction with other sources to identify affect states in real time.

3.7 Mobile network data

Recently, mobile network data have become an important resource for affect analysis in pervasive sensing systems. Noulas et al. [33] have attempted to infer urban social activity and location by analyzing mobile network data from a telecommunication provider using geo-tagged areas from the social network application Foursquare. The aim was to predict the specific urban activity of an area based on a supervised learning framework. In terms of affective sensing, Kanjo et al. [34] have proposed a framework to infer the mood of the city from mobile network datasets with the long-term objective of combining mobile data with sensor data. Their preliminary results have shown that collective human communications in selected areas of a city can reflect the social mood, which could provide additional insights into how collective social interactions shape urban sensing.

4 Data analysis

As previously stated, mobile phones have a higher memory capacity and more processing power than ever before. These developments have paved the way for powerful machine learning algorithms that draw statistical inferences from sensor data and run directly on mobile phones or in the cloud. In general, the basic analysis of emotional data involves emotion recognition and visualization. The following sections discuss existing approaches to affect recognition and visualization.

4.1 Affective recognition

Recent advances in the area of mobile and sensor technologies have enabled users’ emotional states to be recognized in real-life settings. Although it is possible to assess in an objective manner whether someone is experiencing a particular emotion, from a scientific research perspective, one of the most challenging problems in affective computing is measuring a person’s subjective emotional state.

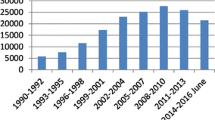

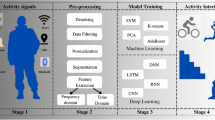

Consequently, recent research has examined whether emotional states are associated with specific and invariant patterns of experience, physiology, and behavior. Typically, recognizing emotional states has followed a statistical, probabilistic, and machine learning approach, where a large amount of labeled data is collected for training and testing a classifier [35]. This involves designing experiments to collect data and then labeling the data according to an emotional model or a chosen set of emotional states. Then, feature extraction and classification techniques are applied to classify different emotional states. Feature selection and classification techniques can differ according to whether the emotion is detected from mobile phones and sensors, physiological signals, speech, facial expressions, or text. Basically, emotion recognition processing involves capturing raw data, transforming it into features, and then applying classification algorithms to identify classes of emotions, as shown in Fig. 2. In the following subsections, we describe different emotion recognition algorithms according to the type of collected data.
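The capture, feature extraction, and classification steps described above can be illustrated with a minimal sketch. It is not taken from any of the surveyed systems: the three summary features, the nearest-centroid classifier, the labels, and the sample values are all assumptions chosen only to keep the example short and self-contained.

```python
from math import dist
from statistics import mean, pstdev


def extract_features(window):
    """Turn one window of raw sensor samples into a small feature vector."""
    return (mean(window), pstdev(window), max(window) - min(window))


def train_centroids(labeled_windows):
    """Average the feature vectors of each label (a nearest-centroid model)."""
    by_label = {}
    for window, label in labeled_windows:
        by_label.setdefault(label, []).append(extract_features(window))
    return {
        label: tuple(mean(column) for column in zip(*vectors))
        for label, vectors in by_label.items()
    }


def classify(window, centroids):
    """Assign the label whose centroid is closest in feature space."""
    features = extract_features(window)
    return min(centroids, key=lambda label: dist(features, centroids[label]))


# Hypothetical labeled windows of a normalized sensor signal.
training = [
    ([0.1, 0.2, 0.1, 0.2], "calm"),
    ([0.2, 0.1, 0.2, 0.3], "calm"),
    ([0.9, 1.4, 0.7, 1.6], "aroused"),
    ([1.1, 0.6, 1.5, 0.8], "aroused"),
]
centroids = train_centroids(training)
prediction = classify([1.0, 1.3, 0.8, 1.5], centroids)
```

In practice, the feature set and the classifier would be chosen per modality, as the following subsections describe.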

4.1.1 Mobile phone context-based analysis

At an individual level, emotion can be recognized in real-life settings using machine learning techniques to identify patterns in individual behavioral activities that correspond to different emotions. Based on this concept, recent research has investigated the detection of emotions by analyzing mobile phone data and sensors; the resulting systems infer the emotions of phone owners based on different sets of features and according to different emotional models. MoodMiner [31] classifies daily mood using three dimensions: displeasure, tiredness, and levels of tenseness. It extracts a set of an individual’s behavioral features from mobile phone communication logs and sensors including location, micro-motion, communication frequency, and activity. MoodSense [30], in contrast, measures mood using two dimensions: pleasure and activeness. These dimensions are based on recognizing social interactions and daily activities from mobile data. Social interaction is obtained collectively from three types of data: phone calls, SMS, and e-mails. Daily activities are represented by browser history, phone application usage, and location history. Using a simple clustering classifier, MoodSense infers participants’ mood out of four categories with an average accuracy rate of 61%. The authors reported that accuracy increased to 91% when using personalized models, in which the model is trained using the user’s own data.

Lee et al. [36] propose an approach to recognize the emotions of a mobile user by collecting, analyzing, and classifying mobile usage patterns, using sensory data. Accordingly, the data are collected to recognize the behavior and context of the users when they use a certain application. These data include the coordinates of touch positions, the degree of device movement, current location, and weather. From these data, ten features are extracted in order to classify emotions into seven classes, including happiness, surprise, anger, disgust, sadness, fear, and neutral, using a Bayesian network classifier. To this end, a system was built called the Affective Twitter client (i.e., AT client) that collects the aforementioned data while the user writes a tweet on Twitter. They found that this system could classify the emotions of the user with a high level of accuracy.

Oh et al. [37] proposed a system that infers a user’s activity and emotion based on collected mobile logs, using Bayesian networks (BNs). Two BNs are constructed to recognize the user’s high-level context from low-level information, which includes call logs, SMS logs, GPS, device status, Bluetooth, and weather. The first BN calculates the probability of each activity based on five factors: a mobile device status factor, a spatial factor, a temporal factor, an environmental factor, and a social factor. The second BN uses the result of the activity inference to infer the emotion; thus, the activity has a direct influence on the user’s emotion. The result is the probability of arousal and valence, which is then used to indicate the user’s feeling according to eight states: excited, happy, contented, relaxed, bored, sad, upset, and nervous. ContextViewer is an interface that uses this system to represent the user’s activity and emotion. This is then presented as an icon in a phonebook and a map browser for smartphones.
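The two-stage idea, in which an activity estimate feeds an emotion estimate, can be sketched as follows. This is only an illustration under strong assumptions, not the network structure of Oh et al.: the first stage is simplified to a naive Bayes update over two hypothetical factors, and every probability value, activity name, and factor name is invented for the example.

```python
def normalize(scores):
    """Scale non-negative scores so that they sum to one."""
    total = sum(scores.values())
    return {key: value / total for key, value in scores.items()}


def infer_activity(prior, likelihoods, evidence):
    """Stage 1: posterior over activities given the observed factors.

    Assumes the factors are conditionally independent given the activity.
    """
    scores = {}
    for activity, probability in prior.items():
        for factor, value in evidence.items():
            probability *= likelihoods[activity][factor][value]
        scores[activity] = probability
    return normalize(scores)


def infer_emotion(activity_posterior, emotion_given_activity):
    """Stage 2: marginalize the emotion over the uncertain activity."""
    emotions = {}
    for activity, p_activity in activity_posterior.items():
        for emotion, p_emotion in emotion_given_activity[activity].items():
            emotions[emotion] = emotions.get(emotion, 0.0) + p_activity * p_emotion
    return emotions


# Hypothetical probability tables (illustrative values only).
prior = {"working": 0.5, "exercising": 0.5}
likelihoods = {
    "working": {
        "location": {"office": 0.8, "park": 0.2},
        "time": {"day": 0.7, "evening": 0.3},
    },
    "exercising": {
        "location": {"office": 0.1, "park": 0.9},
        "time": {"day": 0.4, "evening": 0.6},
    },
}
emotion_given_activity = {
    "working": {"high_arousal": 0.3, "low_arousal": 0.7},
    "exercising": {"high_arousal": 0.8, "low_arousal": 0.2},
}

activity = infer_activity(prior, likelihoods, {"location": "park", "time": "evening"})
emotion = infer_emotion(activity, emotion_given_activity)
```

Because the second stage consumes the full activity distribution rather than a single best guess, uncertainty about the activity carries through to the emotion estimate.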

EmoSens [38] is a service recommendation framework that works by sensing the emotional state of a user in order to provide personalized recommendations that match a user’s current emotional state. It is based on an affective entity-scoring algorithm. The algorithm creates an affective scoring vector for each entity on a mobile device (such as installed applications, multimedia content, and people’s contacts). The vector is calculated as the difference between the prior and post-emotional states of the user. Based on the affective entity scoring, EmoSens provides emotion-based recommendations and tracks the user’s temporal pattern of emotional change.
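A minimal sketch of difference-based entity scoring is given below. It assumes, purely for illustration, that an emotional state is a (valence, arousal) pair, that repeated interactions with the same entity are averaged, and that a recommendation favors the largest average valence gain; none of these details are confirmed by the description above, and the entity names are hypothetical.

```python
from statistics import mean


def affective_score(before, after):
    """Score one interaction as the change in emotional state (after - before)."""
    return tuple(a - b for b, a in zip(before, after))


def entity_scores(interactions):
    """Average the per-interaction score vectors of each entity."""
    collected = {}
    for entity, before, after in interactions:
        collected.setdefault(entity, []).append(affective_score(before, after))
    return {
        entity: tuple(mean(column) for column in zip(*vectors))
        for entity, vectors in collected.items()
    }


# Hypothetical log: (entity, state before use, state after use),
# where each state is an assumed (valence, arousal) pair.
interactions = [
    ("music_app", (0.2, 0.5), (0.6, 0.4)),
    ("music_app", (0.0, 0.3), (0.4, 0.5)),
    ("news_app", (0.5, 0.4), (0.1, 0.7)),
]
scores = entity_scores(interactions)

# Recommend the entity with the largest average valence gain (an assumed policy).
recommended = max(scores, key=lambda entity: scores[entity][0])
```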

4.1.2 Physiological signal-based analysis

Human reaction to emotional events is manifested through many changes in the patterns of physiological signals such as heart rate and respiratory rate. This means that physiological signals can be measured using biosensors and then analyzed in order to identify the emotional state that an individual feels. In practice, physiological signals are combined with other contextual information in order to correlate the emotional reaction with its stimulus. For example, FEEL [14] is a mobile phone-based system that measures the stress level of social interactions on mobile phones, such as calls, an incoming SMS, a new e-mail, or a meeting in the phone calendar. It also analyzes the corresponding EDA recording. Subsequently, EDA signals are preprocessed, normalized, and analyzed to classify each interaction as a stressful or non-stressful event. The system begins with a learning phase in which the user is asked to rate their stress level on a seven-point Likert scale after each interaction. The system stops asking the user when it has collected a sufficient number of samples to predict the stress level confidently. Other systems such as MOLMOD [16] use skin temperature and heart rate to infer the user’s feelings toward a location. They propose a feeling model that maps the average of the two sensor readings into a value that represents one of eight feelings relating to the Russell Model (i.e., surprise, excitement, happiness, calmness, sleepiness, depression, sadness, and stressfulness). Then, the mood of the location is calculated as a proportion of the eight feelings experienced by all the people visiting that place. Moreover, NeuroPlace [39] exploited people’s EEG signals to make sense of outdoor places. In this work, brain activity signals are analyzed in order to discriminate between stressful and non-stressful places and to recommend places for therapeutic relief or for restoring one’s well-being.
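The aggregation step for a location, in which the mood of a place is expressed as the proportion of each feeling among its visitors, can be sketched as follows. The function name and the visit data are hypothetical, and the sketch assumes that each visitor has already been assigned one of the eight feelings.

```python
from collections import Counter


def location_mood(feelings):
    """Return the share of each feeling among all visits to one place."""
    counts = Counter(feelings)
    total = sum(counts.values())
    return {feeling: count / total for feeling, count in counts.items()}


# Hypothetical feelings inferred for four visits to the same place.
visits = ["happiness", "calmness", "happiness", "stressfulness"]
mood = location_mood(visits)
dominant = max(mood, key=mood.get)
```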

4.1.3 Speech-based analysis

Vocal characteristics can be used to infer people’s emotional states [3]. Moreover, speech is considered a low-cost, non-intrusive signal that encodes affective information. In general, acoustic features, such as pitch and speaking rate, are extracted so that they can be associated with the emotional state of the speaker. Pitch is considered an indication of the stress and arousal levels of the speaker [21].

There has been growing interest in inferring stress and emotions from speech recorded using mobile phone-based sensors. The Affective and Mental health MONitor (AMMON) [40, 41] is a speech analysis library that is designed to work on mobile phones to recognize emotions and analyze the mental stress of the user based on voice. AMMON is evaluated against Speech Under Simulated and Actual Stress (SUSAS) [42], the most common dataset used for stress detection tasks.

EmotionSense [29] recognizes emotions by analyzing audio recording samples along with other context data and detects emotion as five broad types: happy, sad, fear, anger, and neutral. In EmotionSense, the emotion recognition process is based on Gaussian mixture model (GMM) classifiers, and the emotion classifier is trained on an emotional speech dataset, the Emotional Prosody Speech and Transcripts library. In addition, StressSense [28] detects stress in real-life situations from a human voice that is recorded using a smartphone’s microphone. The StressSense classifier is based on a non-iterative maximum a posteriori (MAP) adaptation scheme for GMMs that is trained on real speech data. The StressSense classifier can robustly identify stressful and neutral situations across multiple individuals in diverse acoustic environments.
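To make the GMM-based classification step concrete, the sketch below scores a short sequence of feature values against one mixture per class and picks the class with the highest log-likelihood. It is a simplified illustration rather than the EmotionSense or StressSense implementation: it uses a single one-dimensional feature (a pitch-like value), the mixture parameters are invented rather than trained, and no MAP adaptation is performed.

```python
from math import exp, log, pi, sqrt


def gmm_log_likelihood(samples, components):
    """Sum the log of the mixture density over all samples.

    Each component is a (weight, mean, variance) triple of a 1-D Gaussian.
    """
    total = 0.0
    for x in samples:
        density = sum(
            weight * exp(-((x - mu) ** 2) / (2 * var)) / sqrt(2 * pi * var)
            for weight, mu, var in components
        )
        total += log(density)
    return total


def classify(samples, models):
    """Pick the class whose mixture explains the samples best."""
    return max(models, key=lambda label: gmm_log_likelihood(samples, models[label]))


# Hypothetical, hand-set mixtures over a pitch-like feature (not trained values).
models = {
    "neutral": [(0.6, 120.0, 100.0), (0.4, 140.0, 150.0)],
    "stressed": [(0.5, 180.0, 200.0), (0.5, 210.0, 250.0)],
}

high_pitch_label = classify([185.0, 200.0, 190.0], models)
low_pitch_label = classify([118.0, 125.0, 130.0], models)
```

A real system would use multi-dimensional acoustic features and mixtures fitted to labeled speech.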

4.1.4 Facial expression-based analysis

Facial expression analysis is required to discriminate between different emotional states. Emotion detection techniques for the face can be applied to either a static image or a dynamic video. The leading method of classifying emotion from facial expression is Facial Action Coding System (FACS) [43, 44]. It was developed to measure facial activity to identify emotions. FACS measures facial activity and classifies its motions into six basic emotions: anger, disgust, fear, joy, sadness, and surprise [45]. In addition, SHORE [46] is another framework that can detect faces and recognize emotion from facial expressions. SHORE identifies five types of emotion: “happy,” “angry,” “surprised,” “sad,” and “neutral.” It provides a percentage of the probability for the first four emotions. Thus, the face is identified as expressing the given emotion if the probability of an emotion is higher than the set threshold. Otherwise, the emotional state is identified as “neutral.”
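The threshold rule described above can be written in a few lines. The sketch below does not use the SHORE library or its API; the function name, the default threshold of 0.5, and the probability values are assumptions made for illustration.

```python
def label_expression(probabilities, threshold=0.5):
    """Return the most probable emotion if it exceeds the threshold, else neutral."""
    emotion, probability = max(probabilities.items(), key=lambda item: item[1])
    return emotion if probability > threshold else "neutral"


# Hypothetical per-face probabilities for the four scored emotions.
clear_smile = {"happy": 0.72, "angry": 0.05, "surprised": 0.15, "sad": 0.08}
ambiguous = {"happy": 0.30, "angry": 0.20, "surprised": 0.25, "sad": 0.25}

clear_label = label_expression(clear_smile)
ambiguous_label = label_expression(ambiguous)
```

Raising the threshold makes the detector more conservative, so that more faces fall back to “neutral.”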

The idea here is to use a camera to identify faces and analyze emotional expressions. Based on this technique, Mood Meter [23] has measured the happiness of people in the MIT community by detecting smiling and non-smiling faces in real-time video streams from the campus. The detection of smiles supports the analysis of smile intensity on campus over time at four different places. The analysis was composed of two machine learning tasks, face detection and facial feature analysis, both of which were implemented using the SHORE framework. In a similar way, the Feel-o-meter project [47] uses a camera that captures the faces of passersby and classifies them as happy, sad, or indifferent. It then represents the results of collective emotions as a giant smiley face placed on a public building.

4.1.5 Text-based analysis

A huge number of messages are posted every day in the form of SMSs, e-mails, and posts on social network sites. Emotions are frequently important in these texts for expressing friendship, revealing one’s mood, or showing moral support to other people. Many algorithms have been devised to identify emotions and sentiment strength in this modern form of communication. ANEW (“Affective Norms for English Words”) [48] is one of several projects that develop sets of normative emotional ratings for collections of English words. For instance, the “Pulse of the Nation” project [32] revealed the mood of the USA by analyzing the affective content of Twitter feeds during a defined period of time. Each tweet was assigned a mood score by counting the positive and negative words it contained, based on the ANEW list. The analysis output is the average mood score of all of the users, hour by hour, which can be presented as a series of time-varying mood maps.
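A word-list approach of this kind can be sketched as follows. The two tiny word sets are placeholders and are not drawn from the ANEW list; the scoring rule (positive count minus negative count) and the whitespace tokenization are likewise simplifying assumptions.

```python
from collections import defaultdict
from statistics import mean

# Placeholder lexicon for illustration only (not actual ANEW entries or ratings).
POSITIVE = {"happy", "love", "great"}
NEGATIVE = {"sad", "hate", "awful"}


def mood_score(text):
    """Score a message as (number of positive words) - (number of negative words)."""
    words = text.lower().split()
    positive = sum(word in POSITIVE for word in words)
    negative = sum(word in NEGATIVE for word in words)
    return positive - negative


def hourly_mood(tweets):
    """Average the mood scores of all messages posted in each hour of the day."""
    by_hour = defaultdict(list)
    for hour, text in tweets:
        by_hour[hour].append(mood_score(text))
    return {hour: mean(scores) for hour, scores in sorted(by_hour.items())}


# Hypothetical (hour of day, message text) pairs.
tweets = [
    (9, "great morning love it"),
    (9, "awful traffic"),
    (22, "so sad and awful"),
]
hourly = hourly_mood(tweets)
```

Grouping by location as well as by hour would yield the kind of time-varying mood map described above.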

Besides the analysis of formal English words in texts, the analysis of informal English text was also investigated [49], using new methods to exploit the de facto grammars and spelling styles of texts in modern communication. SentiStrength uses a custom-built dictionary of popular nonstandard English words that are often used in SMS messaging in order to measure the sentiment strength implied by the text.

Similar to speech recognition, applications for emotion recognition based on text analysis are not universal: they can only be applied to words or sentences of a particular language.

4.2 Affective data inference and visualization

Visualization makes it easier and faster to find similarities, patterns, and correlations between affective states in different contexts and at different scales. Therefore, visualizations are important when working with sentimental data to make insightful decisions; however, visualizing the affective data is very task and domain specific.

In the literature, affective information is often pictured in map-based visualizations to represent collective emotion. Among the first to do this, Matei et al. [50] created a mental map that reported people’s feelings of fear and comfort corresponding to different places. It was illustrated as digital, subjective, and collective layers that were added to maps of Los Angeles. Similarly, BioMapping [51] is an art project by Christian Nold, who created an emotion map of San Francisco that visualizes traces of high and low arousal. In BioMapping, each participant is asked to explore a neighborhood area carrying a GPS device to indicate the participant’s location and an EDA sensor to reveal the person’s emotional arousal.

To harness the potential of mobile phones as anywhere–anytime devices, real-time visualization becomes a natural technique to represent an affective state in the wild. For instance, Mood Meter [23] visualized the mood of the campus on a heat map that showed the intensity of smiles in each location, with a higher smile count indicated as “hotter” regions, along with four gauge meters that displayed the average intensity of smiles in each location. The Feel-o-meter [47], on the other hand, used a large smiley face sculpture that was placed on top of a building to reflect the mood of the city. Moreover, Mappiness [8] uses a colored geographical map to represent happiness across the country, in addition to charts that report the happiness of each participant in different contexts. On a global scale, Glow [10], a smartphone application, aims to discover how people are feeling around the world; it aggregates users’ ratings of their feelings and visualizes them on a heat map whose colors range from red (not so awesome) to blue (awesome).
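
The aggregation step behind such heat maps can be sketched in Python as binning geotagged ratings into grid cells and averaging them. The cell size, the function names, and the (latitude, longitude, rating) input format are assumptions made for illustration and are not taken from any of the cited systems.

```python
import math
from collections import defaultdict


def grid_cell(lat, lon, cell_deg=0.01):
    """Map a coordinate to an integer grid cell of `cell_deg` degrees."""
    return (math.floor(lat / cell_deg), math.floor(lon / cell_deg))


def mean_rating_per_cell(reports, cell_deg=0.01):
    """Average the ratings that fall into each grid cell.

    `reports` is an iterable of (lat, lon, rating) tuples.
    Returns a dict mapping cell -> mean rating, ready to be colored.
    """
    totals = defaultdict(lambda: [0.0, 0])  # cell -> [sum, count]
    for lat, lon, rating in reports:
        cell = grid_cell(lat, lon, cell_deg)
        totals[cell][0] += rating
        totals[cell][1] += 1
    return {cell: total / count for cell, (total, count) in totals.items()}
```

A rendering layer would then map each cell's mean rating onto a color scale, for example from red to blue.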

5 Applications

Pervasive sensing has opened up opportunities for developing new applications based on collecting affective information in pervasive situations.

In what follows, we discuss a number of the emerging leading application domains which can be categorized according to their use cases into four categories: “affect sharing,” “mental health tracking,” “behavior change support,” and, finally, “urban affect sensing.”

5.1 Data sharing and awareness

Emotional awareness and emotion sharing can play a role in improving people’s health and mental well-being when attempting to encourage social behavior change [52]. Emotion sharing and awareness can be implemented efficiently via mobile devices [53]. For instance, Aurora [54] is a mobile application whose primary function is emotion recording and sharing. The research team at Cornell University developed Aurora to provoke and energize social emotion sharing. It uses photographs as a means of expressing and exchanging emotions. The pilot study found that Aurora motivated people to be more aware of their emotions and to share them with others, which led to a boost in socially supportive behaviors. Also, WiMo [9] and Glow [10] prompt people to share their feelings in relation to public spaces. They are two mobile applications that enable users to share and communicate their emotions relating to places through geo-emotional tagging.

5.2 Mental health tracking

Mobile applications have the potential to monitor people’s mental health and well-being, as well as to support related interventions [55]. The mobile phone is considered an excellent tool for offering real-time monitoring, support, and guidance for the benefit of patients, caregivers, and healthcare professionals. For instance, Empath [56] is a real-time monitoring system that detects and tracks depression symptoms. It collects behavioral data such as speech, sleep, weight, and movement using wireless sensors, a touch screen station, and a mobile device. The reported results provide information about the patient’s state and help in assessing and tracking the patient’s symptoms. Optimism App [57] is another mobile monitoring application that enables users to log their mental health. It records self-reported mood, along with medication intake, exercise, and sleep quality, to produce mood charts. It is recommended by psychiatrists and therapists to monitor mental health. Mobilyze [58] is a mobile phone application for depression that predicts the patients’ mood, emotions, activities, environmental context, and social context based on the information available on the phone (i.e., recent calls and active phone applications) and from the phone sensors (i.e., GPS, Wi-Fi, Bluetooth, accelerometer, and ambient light). Psychlog [59] is also a mobile phone application that is designed for mental health research. It collects the users’ psychological data through self-reported questionnaires, and physiological and activity data via a wireless electrocardiogram equipped with a three-axial accelerometer. The aim is to monitor the psychological and physiological states of the users and their activities over time, and to investigate the relationship between them.

5.3 Behavior change support

Using mobile phones for affect sensing is potentially valuable for promoting behavior change interventions. Knowing the affective state, a mobile application can potentially provide an instant and personal intervention along with therapies to improve the mental and physical health of people individually or as part of a community. For this purpose, Morris et al. [60] present a mobile phone application that provides emotional self-awareness and therapies for cardiovascular disease. The application provides mood reporting and mobile therapies. It captures the user’s mood using single-dimensional mood scales relating to anger, anxiety, happiness, and sadness, in addition to a touch screen scale on a mood map that represents the circumplex model of emotion. The mobile therapies include a breathing meter; a body scan, which is a physical relaxation animation; and a mind scan, which is a series of cognitive reappraisal exercises. Such applications could facilitate people’s access to psychotherapies over mobile phones.

5.4 Urban affective sensing

Additionally, there is the potential for pervasive affective sensing to make sense of urban spaces. In the literature, urban affective sensing is currently exploited to understand the affective reactions of people toward specific places or context across time and space dimensions.

In regard to urban planning, pervasive sensing has the potential to model people’s affective reactions toward space. “Sensing the city” [61] presents studies of the potential defining qualities of a place according to how people sense their surroundings. Using sensors, the study measured physiological signals (skin conductance and skin temperature) in addition to noise level in order to explore the correlation between stress levels and noise load. This study shows the possibilities of using psychophysiological monitoring in urban planning.
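
To make the idea of correlating stress levels with noise load concrete, the following Python sketch computes a Pearson correlation coefficient between two paired series. The source does not state which statistic the study used, so the choice of Pearson's r, the function name, and the input format are assumptions.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / math.sqrt(var_x * var_y)
```

Here `xs` could hold skin conductance samples and `ys` the noise levels recorded at the same moments; a value near 1 would indicate that the two rise together, although correlation alone does not establish that noise causes stress.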

Recently, the EmoMap project [62] developed a crowdsourcing approach to collect the affective experiences of people toward space (anytime and anywhere). The project involved collecting, modeling, and visualizing people’s experiences of space. The project designed a mobile application that gathers affective data using a self-reported method. It asks users to rate their affective experience on a seven-point Likert scale for each of the following parameters: pleasant, comfort, safety, diversity, attractiveness, and relaxation. The aim of the project is to build emotion-aware navigation services for pedestrians. Additionally, an open online database called “OpenEmotionMap.org” [63] was established, which contained all the data collected from the project EmoMap.

The “Sense of Space” project [64] is a work in progress that investigates how people’s feelings are affected by the environmental conditions of their surroundings. It integrates wearable sensors with smartphones to gather the required emotional and environmental data and then visualizes the results as a mental map.

For research purposes, Mappiness [8] studied people’s happiness in relation to their local environment. Through the mobile application, the project asked users to rate how happy, relaxed, and awake they were, in addition to supplying some contextual information, such as their activity and company. Users’ locations were then determined automatically using the GPS of the mobile phone, and these coordinates were linked to systems that provided information on environmental and weather conditions. This made it possible to relate users’ feelings to features of the environment. As an outcome, the project found that participants are significantly happier outdoors in any natural or green habitat type than in the urban environment. Likewise, a research project called “Track Your Happiness” [65] studied factors that affect people’s happiness in their daily lives by examining the causes of happiness in relation to a set of subjective user responses. This project gathered the required information about a person’s feelings and their current activities, locations, company, and time of day via a smartphone application. Accordingly, people can track their happiness and find out what factors are associated with greater happiness in a personal or collective manner. Mappiness collected the location parameter as geographical positions, whereas “Track Your Happiness” used the user’s contexts (e.g., at home, at work, in a car). Figure 3 exemplifies the various common terminologies used in urban affective sensing research. The figure also summarizes the relationships between the main characteristics and issues related to this application domain.

6 Research challenges

There are many research challenges that relate to the design of pervasive affect sensing systems, and each of these challenges is multifaceted. In this section, we discuss some of these challenges in order that other researchers might understand them in the context of the rest of the paper. Firstly, efficient inference algorithms need to be developed that are able to extract high-level information from the available raw emotion data. Secondly, it is important that any system that is developed should be easily programmable and highly configurable. This is important because we need to take into account the user and also the use context. Obviously, this is highly important for specialized contexts. The adaptable and configurable nature of such systems is a key to their use in experimental settings where different types of experiments will have specific requirements. Thirdly, it could be argued that in order to get a large number of user groups, there needs to be a degree of incentive given to users in order to get them to participate in an experiment. Lastly, spatial and temporal visualizations need to be developed in order to allow for the analysis of the system and the dissemination of information and related findings in both real-time and historical contexts.

In the remainder of this section, we highlight some of the issues that arise from the literature in regard to pervasive affective systems, and we further discuss the challenges relating to both the use and development of such systems.

6.1 Privacy

There has been much research conducted in recent years in relation to the privacy implications of mobile sensing [66]. In most of the aforementioned research, deployments in an urban setting collate user data that, when analyzed together, represent an understanding of place. Personal location information is not singled out.

However, researchers need to be aware of the potential risks of invading privacy, since voice, photos, SMS, emotions, physical behavior, and even intention can be recorded. An effective relationship should be built between system development on the one hand and privacy rights, ethical computing, and the formulation of social policy on the other, to promote and guard the privacy rights of participants. Also, it is vital to take extra protection measures when it comes to processing the data in the cloud, which requires the transfer of raw data. For example, processing a conversation on a mobile phone will not reveal the speakers’ identities; however, transferring the whole conversation to a remote server for cloud processing could cause concern. In most of the related emotion frameworks, users’ participation should be encouraged by guaranteeing their privacy.

6.2 Data integrity

Many sensor-based technologies, including physiological and brain activity sensors, are still in their infancy. This is problematic, as immature sensors may produce corrupted or erroneous data. To prevent such data from degrading the accuracy of any results, the devices or the data in question need to be identified and discarded from the pool of tasked devices and sensed data. Research on reputation systems that cater for both data integrity and the requirements and specifics of the sensing scenarios is therefore required. However, data integrity and analysis matter not only at the systemic level of the technology. To understand the responses and data produced by pervasive affective systems, one must engage in multidisciplinary discourse and debate so that the data can be properly analyzed and interpreted. It is then that multidisciplinary research approaches can truly add to our understanding of the data produced.
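
As a rough illustration of identifying and discarding erroneous devices and data, the Python sketch below applies a simple plausibility range and drops any device with too many out-of-range readings. It is far simpler than a reputation system, and the function name, the range bounds, and the tolerated share of bad readings are hypothetical choices.

```python
def filter_devices(readings, low, high, max_bad_fraction=0.2):
    """Keep plausible readings from devices that are mostly reliable.

    `readings` maps a device id to a list of numeric samples. A sample is
    plausible if low <= value <= high. A device is discarded entirely when
    more than `max_bad_fraction` of its samples are implausible.
    """
    trusted = {}
    for device, values in readings.items():
        if not values:
            continue  # nothing to judge this device by
        good = [v for v in values if low <= v <= high]
        bad_count = len(values) - len(good)
        if bad_count <= max_bad_fraction * len(values):
            trusted[device] = good
    return trusted
```

With skin temperature samples and a plausible range of 30 to 42 degrees Celsius, for instance, a device that reports mostly values outside that range would be removed from the pool, while an occasional outlier from an otherwise reliable device would only be dropped as a single sample.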

6.3 Limited datasets and lack of generality

When it comes to emotion analysis at the level of a city or a place such as a shop or school, it is important to run a large number of experiments that include a wide range of users of different abilities, gender, age, race, etc. It is important not only to run such experiments with large and diverse user groups, but also to run these experiments in a variety of different contexts. In order to accomplish this, it may be advantageous to use mobile technologies that enable the researcher to examine the participants’ affective responses as they move from one context to another. Determining a participant’s response to an ever-changing set of environmental factors while they are mobile may be challenging, but it is important to remember that if we are to study the real world, in the “wild” [67] (responses that people have in their day-to-day lives), these are challenges that have to be understood and met.

6.4 Diversity

Generally speaking, affective mobile sensing requires a large number of participants to make sense of people’s behavior toward a place or in a specific situation. These users differ from one another in a variety of ways, for example, physical differences such as sex, weight, height, or health. Beyond these physical differences, there are also differences that are based on culture, education, and lifestyle (e.g., diet and work). These can affect the performance of a specific model or classification. For example, rating shops in Shopmobia [68] will give different results when the emotion data are collected from female users than from males. Their age can make a significant difference too. Any future data classification method should attempt to address the issues related to diversity when the number of participants scales to such a high level. Recently, a number of methods have attempted to capture similarity in human behavior, with methods such as community similarity networks [69] being proposed. The underlying idea is to generalize findings by identifying citizens who can be treated as uniform for the sake of emotion inference.

6.5 Advocacy and civic engagement

A number of challenges remain when it comes to user participation and engagement. Currently, most mobile sensing applications rely on a small number of volunteers to gather data, and this means that the amount of collected data can be limited. This by its very nature could hinder the large-scale deployment of mobile affective applications.

For instance, the lack of incentives for users to participate in mobile affective sensing can lead to a lack of participation and, therefore, data. To participate, users have to trigger their sensors to measure physiological data (e.g., to obtain EDA measurements), which may use up the battery of their smartphone. Also, the user may have to move to a specific location to sense the required data.

A large number of users are needed to participate in various campaigns in order to understand people’s behavior in the city, and in natural resources planning and services, all using data that are systematic and can be validated. Recent research has started to address the problem of providing privacy-aware incentives for mobile sensing [70]. It adopts a credit-based approach that allows each user to earn credits by participating in experiments and contributing data without leaking which data they have contributed. Mass user participation can bring to light hazards, personal safety concerns, cultural assets, or other data relevant to urban planning. Several questions remain open: What is the effect of various triggers on the user’s compliance? How can we give incentives to users in order to get them to participate? When is the right moment for providing an intervention?

6.6 Battery life

The availability of sensors on mobile devices, such as mobile phones, offers new capabilities for continuously monitoring emotional state and behavior change. Many affective sensing applications run in the “background” in an attempt to continuously sense the user’s context, which quickly depletes the mobile device’s battery. The use of supplementary low-power processors has been proposed for mobile devices used for continuous personal activity monitoring [71]. The research suggests that a low-power processor could be beneficial for running simple, frequent operations such as sampling, buffering, and arithmetic. More complicated tasks require a careful dynamic job scheduling system, based on accurate resource monitoring at runtime. Understanding the efficient use of the low-power processor could provide a more thorough understanding of this problem space. For example, this raises some fundamental questions, such as which segments of the application are most efficient when hosted on the low-power processor, and when is it appropriate for the system to use it? In EmotionSense [24], the energy efficiency issue was tackled by dynamically adapting the sensor duty cycling in order to increase the sampling rate when the user’s context changed.
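
The general idea of adapting a sensor's duty cycle to context changes can be sketched in Python as below. This is not the EmotionSense algorithm: the back-off rule, the function name, and the interval bounds are assumptions chosen only to show how the sampling rate can rise when the context changes and fall when it stays stable.

```python
def next_sleep_interval(current_s, context_changed,
                        min_s=5.0, max_s=300.0, backoff=2.0):
    """Choose how long a sensor should sleep before its next sample.

    When the user's context has changed, sample again soon (shortest
    interval, so the highest sampling rate). While the context stays
    stable, back off multiplicatively up to `max_s` to save energy.
    All default values are hypothetical.
    """
    if context_changed:
        return min_s
    return min(current_s * backoff, max_s)
```

A sensing loop would call this after each sample, so that a stationary user is sampled less and less often while a change of location or activity immediately restores frequent sampling.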

There are a plethora of research questions in relation to power consumption on mobile devices. How do we design sensor sampling adaptation, how do we monitor power consumption, and how do we then design systems that can effectively control it? Would it be possible to use a general model, or would it be better to develop a context-aware management system, and would this be automated or user configurable? Are there generic domain models that might allow for the development of, for example, pollution monitoring or the sensing of certain sorts of emotional responses, and if so, how will we develop different sensor control techniques?

7 Future directions, understandings, and issues

In this final section, we have taken a somewhat unorthodox, yet carefully reasoned approach in order to appropriately understand some of the issues and the future direction and development of mobile affective sensing systems. In order to further expand upon our debate, we elicited the opinions of experts in regard to the future direction and core issues relating to the research field. Within this section, we offer up a series of varying arguments from experts related to this field of research. The researchers were from different organizations ranging from MIT to Cambridge University, and their contribution is noted within the “Acknowledgements” section of this journal paper.

7.1 Social implications

We take it for granted that one is able to develop systems that are able to both recognize and respond to human emotional states, but as one of our respondents wrote, “One of the key challenges confronting the field of affective computing is to recognise and respond to the incarnate social foundations of “affective sensing” (i.e., our mundane ability as human beings to see, recognise and respond appropriately to one another’s emotional states), as given in the real world to real people in the course of their ordinary interactions and its observable location in embodied conduct and which provides for the ascription of putative “inner” conditions and states.” Where, how, and when is it appropriate to intervene, recognize, and respond? What are the appropriate actions, and how do we develop systems that are able to perform them? At a cursory glance, affective computing systems might seem like simple systems that react to a factor. The issue that faces both the developer and the designer of such systems is that they are in many respects attempting to replicate a human experience: skills that are learned and honed over a lifetime and are an important part of our existence.

7.2 Applied technology in the wild

Another key researcher in the field noted that “Most of the research from computer science has been very functional—we care about the prediction accuracy, sometimes even without considering the “why” and the “how”.” However, this does not take into account the uses of such technologies in the “real world.” Studies have highlighted this [72–74] and have discussed its importance and the use of, for example, ethnomethodologically inspired ethnographic studies [75] that can inform both the design and understanding of pervasive systems. As we further develop, design, and produce pervasive affective systems, a key aspect of understanding these will be the use of mixed methods, inspired by the social sciences, that take note of the “lived” experience that users will have of this technology. Longitudinal studies could also be employed in order to fully understand the complex issues that impact upon users over sustained periods of use. As we move from the laboratory to the living room, our understanding of technology and applications will fundamentally both be challenged and change.

As we have just discussed, a move from the laboratory to the real world is imminent, but what will the application areas be, and will such technologies be robust, reliable, and private?

7.3 Privacy, ethics, and the medicalization of technology

A key concern of the experts who reported back was privacy. Privacy is a complex issue and, one might argue, something that people managed and dealt with prior to the existence of digital technologies. However, affective technologies, when combined with ubiquitous systems, mean that there is a very strong possibility that our emotional responses, both residual and in response to stimuli, may be available to be perceived, interpreted, and used by the system. As yet we do not know how this might be accomplished, but there is a danger that such information may become public or that data might be used for purposes for which they were not intended, e.g., medical assessments of people without their prior consent. So the problem is not only one of privacy; it is one of ethics too, and one that researchers in this field need to be aware of.

As one researcher wrote in regard to the ethics of self-medication and diagnosis, “On the whole promoting awareness of emotional states tends to be seen as a good thing, leading to a greater sense of self-control. However, the proliferation of apps that allow this—outside of medical supervision—is effectively like buying prescription drugs online for self-medication.” Although we need to be aware of the issues relating to the medicalization of pervasive affective systems, there are opportunities that may arise from the appropriate development of such systems. Indeed, we need to ask ourselves, “Can sensing applications be designed to modify physical behavior and encourage more positive behavior and hence more positive mental state?”

We must always be aware that as one expert pointed out, “Studying questions related to affect, health, or well-being using technology is inherently a multidisciplinary endeavor, as it requires expertise in both the technology being used and the behaviour under investigation.”

8 Conclusion

The purpose of this paper has been to examine the use of pervasive affect sensing in terms of the literature and as an applied technology. To this end, we have reviewed and represented such works from a number of different angles in order to give a real-world perspective on the ways that mobile affective sensing has been used and is developing, as a research field in its own right.

Various pervasive affective sensing systems are being developed to improve and maintain the well-being of human beings in non-controlled settings. As we described, pervasive sensing technologies that quantitatively measure the emotional experiences of individuals in real-world scenarios will provide us with an extraordinary opportunity to monitor useful behavioral and contextual information related to users. Understanding the link between people’s emotions and behavior in different contexts will guide the next generation of real-world applications to accommodate people’s cognitive and emotional requirements. Finally, aggregation of emotional and behavioral data across places, over time, and in relation to individuals is vital to the development of emotional models that best describe people’s feelings in real-world situations. This in turn will inform, support, and energize those who seek to change behavior.

In order to realize the full potential of mobile affective sensing, some issues need further investigation. In general, pervasive affect systems measure either personal or collective emotions or both. Personal sensing is concerned with monitoring the emotions of one individual. Collective sensing, on the other hand, relates to measuring the emotions of many individuals, which can be aggregated spatially or temporally to provide an impression of the emotional responses of a group of people. The participatory sensing of emotion would therefore need to be powerful enough to recognize the collective emotion patterns of a group of people in a certain area or context. Crowdsourcing through participatory sensing opens new domains for affect recognition and behavior change support. New large-scale data collection, analysis, and visualization methods are therefore required for new kinds of applications that can scale from an individual to a target community or even the general population.

Furthermore, pervasive affective systems may endanger people’s privacy. These systems have to collect information about people’s feelings and activities along with their spatiotemporal context. The granularity of data collection can be tuned in different ways, depending on the application’s needs, to preserve privacy [61]. Nevertheless, developing policies and mechanisms to preserve privacy in real-world affect sensing is mandatory. Finally, affective applications require the collection of data from pervasive devices on a continuous basis, which consumes a considerable amount of their battery life. To address this, the sampling rate has to be adjusted to make a trade-off between energy consumption and accuracy. An optimization methodology is therefore essential for yielding energy-aware pervasive affective systems.

References

Jerritta S, Murugappan M, Nagarajan R, Wan K (2011) Physiological signals based human emotion recognition: a review. In: 2011 IEEE 7th international colloquium on signal processing and its applications (CSPA), pp 410–415

Lang PJ (1995) The emotion probe: studies of motivation and attention. Am Psychol 50:372–385

Mauss IB, Robinson MD (2009) Measures of emotion: a review. Cogn Emot 23:209–237

Peter C, Herbon A (2006) Emotion representation and physiology assignments in digital systems. Interact Comput 18:139–170

Picard RW, Papert S, Bender W, Blumberg B, Breazeal C, Cavallo D, Machover T, Resnick M, Roy D, Strohecker C (2004) Affective learning—a manifesto. BT Technol J 22:253–269

Bradley MM, Lang PJ (1994) Measuring emotion: the self-assessment manikin and the semantic differential. J Behav Ther Exp Psychiatry 25:49–59

Desmet P, Overbeeke K, Tax S (2001) Designing products with added emotional value: development and application of an approach for research through design. Des J 4:32–47

MacKerron G (2011) mappiness.org.uk

Mody RN, Willis KS, Kerstein V (2009) WiMo: location-based emotion tagging. In: Proceedings of the 8th international conference on mobile and ubiquitous multimedia, ACM, p 14

Affectiva Q. http://www.affectiva.com/q-sensor/

BioHarness. http://www.zephyranywhere.com/products/bioharness-3/

Bodymonitor Systeme (BMS). http://www.bodymonitor.de/

NeuroSky. http://www.neurosky.com/

Ayzenberg Y, Rivera JH, Picard R (2012) FEEL: frequent EDA and event logging—a mobile social interaction stress monitoring system. In: CHI ‘12 extended abstracts on human factors in computing systems, ACM, Austin, pp 2357–2362

Ståhl A, Höök K, Svensson M, Taylor AS, Combetto M (2009) Experiencing the affective diary. Personal Ubiquitous Comput 13:365–378

Yamamoto J, Kawazoe M, Nakazawa J, Takashio K, Tokuda H (2009) MOLMOD: analysis of feelings based on vital information for mood acquisition. Skin 4:6

Perttula A, Tuomi P, Suominen M, Koivisto A, Multisilta J (2010) Users as sensors: creating shared experiences in co-creational spaces by collective heart rate. In: Proceedings of the 14th international academic MindTrek conference: envisioning future media environments, ACM, pp 41–48

Matsumoto D, Willingham B (2009) Spontaneous facial expressions of emotion of congenitally and non-congenitally blind individuals. J Pers Soc Psychol 96(1):1–10

Valstar M, Pantic M (2006) Fully automatic facial action unit detection and temporal analysis. In: Proceedings of the 2006 conference on computer vision and pattern recognition workshop, June 2006

Yang S, Bhanu B (2011) Facial expression recognition using emotion avatar image. In: Recognition and workshops, Santa Barbara, pp 866–871

Affdex. http://www.affectiva.com/affdex/

Hernandez J Facereader Noduls

Hoque ME, Drevo W, Picard RW (2012) Mood meter: counting smiles in the wild. In: Proceedings of the 2012 ACM conference on ubiquitous computing, ACM, pp 301–310

Calvo RA, D’Mello S (2010) Affect detection: an interdisciplinary review of models, methods, and their applications. Affect Comput IEEE Trans 1:18–37

Juslin PN, Scherer KR (2005) Vocal expression of affect. In: Harrigan JA, Rosenthal R, Scherer KR (eds) The new handbook of methods in nonverbal behavior research. Oxford University Press, New York, pp 65–135

Bänziger T, Scherer KR (2005) The role of intonation in emotional expression. Speech Commun 46:252–267

Lee C, Narayanan S, Pieraccini R (2002) Classifying emotions in human-machine spoken dialogs. In: Proceedings of the international conference on multimedia and expo, Lausanne, Switzerland, August 2002

Lu H, Rabbi M, Chittaranjan GT, Frauendorfer D, Mast MS, Campbell AT, Gatica-Perez D, Choudhury T (2012) StressSense: detecting stress in unconstrained acoustic environments using smartphones. In: Proceedings of the 14th international conference on ubiquitous computing

Rachuri KK, Musolesi M, Mascolo C, Rentfrow PJ, Longworth C, Aucinas A (2010) EmotionSense: a mobile phones based adaptive platform for experimental social psychology research. In: Proceedings of the 12th ACM international conference on ubiquitous computing, ACM, 2010, pp 281–290

LiKamWa R, Liu Y, Lane ND, Zhong L (2011) Can your smartphone infer your mood? In: PhoneSense workshop, 2011

Yuanchao M, Bin X, Yin B, Guodong S, Run Z (2012) Daily mood assessment based on mobile phone sensing. In: Wearable and implantable body sensor networks (BSN), 2012 ninth international conference on, 2012, pp 142–147

Mislove A, Lehmann S, Ahn Y, Onnela J, Rosenquist J (2010) Pulse of the nation: US mood throughout the day inferred from Twitter

Noulas A, Mascolo C, Frias-Martinez E (2013) Exploiting foursquare and cellular data to infer user activity in urban environments

Kanjo E, El-Mawass N, Craveiro J, Ramos F (2013) Social, disconnected or in between: mobile data reveals urban mood. In: The 3rd international conference on the analysis of mobile phone datasets (NetMob’13), MIT, MA, p 9

André E (2011) Experimental methodology in emotion-oriented computing. IEEE Pervasive Comput 10:54–57

Lee H, Choi YS, Lee S, Park I (2012) Towards unobtrusive emotion recognition for affective social communication. In: Consumer communications and networking conference (CCNC), 2012 IEEE, IEEE, pp 260–264

Oh K, Park H-S, Cho S-B (2010) A mobile context sharing system using activity and emotion recognition with Bayesian networks. In: 2010 7th international conference on ubiquitous intelligence & computing and 7th international conference on autonomic & trusted computing (UIC/ATC), IEEE, 2010, pp 244–249

Kim H-J, Choi YS (2011) EmoSens: affective entity scoring, a novel service recommendation framework for mobile platform. In: Workshop on personalization in mobile application of the 5th international conference on recommender system

Al-Barrak L, Kanjo E (2013) NeuroPlace: making sense of a place. In: Proceedings of the 4th augmented human international conference, ACM, pp 186–189

Chang K-h, Fisher D, Canny J (2011) Ammon: a speech analysis library for analyzing affect, stress, and mental health on mobile phones. In: Proceedings of PhoneSense, 2011

Chang K-h, Fisher D, Canny J, Hartmann B (2011) How’s my mood and stress?: an efficient speech analysis library for unobtrusive monitoring on mobile phones. In: Proceedings of the 6th international conference on body area networks, ICST (Institute for Computer Sciences, Social-Informatics and Telecommunications Engineering), Beijing, 2011, pp 71–77

Patil SA, Hansen JH (2007) Speech under stress: analysis, modeling and recognition

Ekman P, Friesen WV (1978) Facial action coding system: a technique for the measurement of facial movement. Consulting Psychologists Press, Palo Alto

Ellsworth PC, Smith CA (1988) From appraisal to emotion: differences among unpleasant feelings. Motiv Emot 12:271–302

Ekman P (1992) An argument for basic emotions. Cogn Emot 6:169–200

Küblbeck C, Ernst A (2006) Face detection and tracking in video sequences using the modified census transformation. Image Vis Comput 24:564–572

Wilhelmer R, Bismarck J, Maus B (2008) Feel-o-meter: Stimmungsgasometer. http://www.fühlometer.de

Bradley MM, Lang PJ (1999) Affective norms for English words (ANEW): instruction manual and affective ratings. Technical report C-1, The Center for Research in Psychophysiology, University of Florida

Thelwall M, Buckley K, Paltoglou G, Cai D, Kappas A (2010) Sentiment strength detection in short informal text. JASIST 61(12):2544–2558

Matei S, Ball-Rokeach SJ, Qiu JL (2001) Fear and misperception of Los Angeles urban space: a spatial-statistical study of communication-shaped mental maps. Commun Res 28:429–463

Nold C (2006) Bio mapping. http://biomapping.net/

Pressman SD, Cohen S (2005) Does positive affect influence health? Psychol Bull 131:925

Fogg B (2007) Mobile persuasion: 20 perspectives on the future of behavior change. Mobile Persuasion 2007

Gay G, Pollak J, Adams P, Leonard J (2011) Pilot study of aurora, a social, mobile-phone-based emotion sharing and recording system. J Diabetes Sci Technol 5:325–332

Chang T-R, Kaasinen E, Kaipainen K (2013) Persuasive design in mobile applications for mental well-being: multidisciplinary expert review. In: Godara B, Nikita K (eds) Wireless mobile communication and healthcare. Springer, Berlin Heidelberg, pp 154–162

Dickerson RF, Gorlin EI, Stankovic JA (2011) Empath: a continuous remote emotional health monitoring system for depressive illness. In: Proceedings of the 2nd conference on wireless health, ACM, 2011, p 5

O.A.P. Ltd., Optimism apps (2011)

Burns MN, Begale M, Duffecy J, Gergle D, Karr CJ, Giangrande E, Mohr DC (2011) Harnessing context sensing to develop a mobile intervention for depression. J Med Internet Res 13(3):e55

Gaggioli A, Pioggia G, Tartarisco G, Baldus G, Corda D, Cipresso P, Riva G (2013) A mobile data collection platform for mental health research. Personal Ubiquitous Comput 17:241–251

Morris ME, Kathawala Q, Leen TK, Gorenstein EE, Guilak F, Labhard M, Deleeuw W (2010) Mobile therapy: case study evaluations of a cell phone application for emotional self-awareness. J Med Internet Res 12(2):e10

Bergner BS, Exner J-P, Zeile P, Rumberg M (2012) Sensing the city–how to identify recreational benefits of urban green areas with the help of sensor technology. In: Proceedings REAL CORP, 2012

Klettner S, Huang H, Schmidt M, Gartner G (2013) Crowdsourcing affective responses to space. KN Kartographische Nachrichten. J Cartogr Geogr Inf 2

Gartner G (2012) OpenEmotionMap.org: emotional response to space as an additional concept in cartography. Int Arch Photogramm Remote Sens Spat Inf Sci (ISPRS) 39-B4:473–476

Doerflinger J, Gross T, Lyra O, Karapanos E, Kostakos V, Cuadrado-Cordero I, Soria-Morillo LM, Gonzalez-Abril L, Ortega-Ramirez JA, Pau de la Cruz I (2012) ICTD work, plus mFeel, pervasive computing, IEEE, vol 11, pp 43–45

Killingsworth MA, Gilbert DT (2010) A wandering mind is an unhappy mind. Science 330:932

Christin D, Reinhardt A, Kanhere SS, Hollick M (2011) A survey on privacy in mobile participatory sensing applications. J Syst Softw 84:1928–1946

Crabtree A, Chamberlain A, Grinter R, Jones M, Rodden T, Rogers Y (eds) (2013) Introduction to the special issue on “The Turn to the Wild”. ACM Trans Comput-Hum Interact (ToCHI) 20(3):13:1–13:4

Kanjo E, Alajmi N, El-Mawass N (2013) ShopMobia: emotion based shop rating system. In: Affective computing and intelligent interaction conference, Geneva, Switzerland (in submission)

Lane ND, Xu Y, Lu H, Hu S, Choudhury T, Campbell AT, Zhao F (2011) Enabling large-scale human activity inference on smartphones using community similarity networks (csn). In: Proceedings of the 13th international conference on Ubiquitous computing, ACM, 2011, pp 355–364

Li Q, Cao G (2013) Providing privacy-aware incentives for mobile sensing. In: IEEE international conference on pervasive computing and communications (PerCom), 2013

Ra M-R, Priyantha B, Kansal A, Liu J (2012) Improving energy efficiency of personal sensing applications with heterogeneous multi-processors. In: Proceedings of the 2012 ACM conference on ubiquitous computing (UbiComp ‘12). ACM, New York, pp 1–10. doi:10.1145/2370216.2370218

Chamberlain A, Crabtree A, Rodden T, Jones M, Rogers Y (2012) Research in the wild: understanding ‘in the wild’ approaches to design and development. In: Conference on designing interactive systems 2012 (ACM DIS), ACM Press

Crabtree A, Chamberlain A, Grinter RR, Jones M, Rodden T, Rogers Y (eds) (2013) Special issue on “The Turn to the Wild”, with authored introduction. ACM Trans Comput-Hum Interact (ToCHI) 20(3):13:1–13:4

Crabtree A, Chamberlain A, Davies M, Glover K, Reeves S, Rodden T, Tolmie P, Jones M (2013) Doing innovation in the wild. In: CHItaly 2013, Trento, Italy (ACM Library)

Tolmie P, Chamberlain A, Benford S (2014) Designing for reportability: sustainable gamification, public engagement and promoting environmental debate. Personal Ubiquitous Comput, Springer, New York

Acknowledgments

We would like to thank the following people for their insightful comments on this piece: Dr Andy Crabtree, University of Nottingham; Prof Alan Dix, University of Birmingham; Professor Sumi Hela, Florida University; Dr Neal Lathia, Cambridge University; Dr Vivek K. Singh, MIT; and Dr Hussein Al Osman, Ottawa University. We would also like to acknowledge the following EPSRC grants: EP/M001636/1, Privacy-by-Design: Building Accountability into the Internet of Things (Dr Andy Crabtree), and EP/L019981/1, Fusing Semantic and Audio Technologies for Intelligent Music Production and Consumption (Dr Alan Chamberlain).

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made.

The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder.

To view a copy of this licence, visit https://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Kanjo, E., Al-Husain, L. & Chamberlain, A. Emotions in context: examining pervasive affective sensing systems, applications, and analyses. Pers Ubiquit Comput 19, 1197–1212 (2015). https://doi.org/10.1007/s00779-015-0842-3

DOI: https://doi.org/10.1007/s00779-015-0842-3