Abstract

In topological data analysis (TDA), persistence diagrams (PDs) have been a successful tool. To compare them, Wasserstein and bottleneck distances are commonly used. We address the shortcomings of these metrics and show a way to investigate them in a systematic way by introducing bottleneck profiles. This leads to a notion of discrete Prokhorov metrics for PDs as a generalization of the bottleneck distance. These metrics satisfy a stability result and can be used to bound Wasserstein metrics from above and from below. We provide algorithms to compute the newly introduced quantities and end with an discussion about experiments.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

The field of topological data analysis (TDA) is becoming a popular tool to study the structure of complex data. One of the major tools of TDA is persistent homology (PH) [11]. Its pipeline takes a (often highly complex) point cloud in Euclidean space as input and produces a point cloud in the plane, the persistence diagram (PD), as output. Intuitively, PDs serve as a summary of the shape of the input data. As a consequence, one can compare different shapes indirectly, by comparing their PDs. The need for a robust and computationally efficient notion of distance for PDs arises. Classically, one uses the bottleneck and Wasserstein distances to this end [18]. However, the bottleneck distance only picks up the single biggest difference and the Wasserstein distance is prone to noise, as it picks up every difference no matter how small.

This fact motivates our work to search for new metrics. Starting from the investigation of bottlenecks, we introduce the notion of the bottleneck profile of two PDs, which is a map \(\mathbb {R}_{\ge 0}\rightarrow \mathbb {N}\cup \{\infty \}\) (Definition 9). This tool summarizes metric information at varying scales and generalizes the bottleneck distance. Also the Wasserstein distance can be, in special cases, computed from the bottleneck profile; in general, it can be bounded given a bottleneck profile.

The bottleneck profiles arises naturally in a discrete version of the Prokhorov distance, which is a classical tool in probability theory. It turns out that the bottleneck and the Prokhorov distance are just two instances of a whole family of Prokhorov-style metrics discussed in this paper (Definition 10). This family is parameterised by subclass of functions \(f:[0,\infty )\rightarrow [0,\infty )\). Not every function f gives in fact rise to a genuine metric; we examine the conditions on f in which cases it does (Definition 11, such f are called admissible).

Theorem 4.3

Fix an admissible function \(f:\mathbb {R}_{\ge 0}\rightarrow \mathbb {R}_{\ge 0}\). The discrete f-Prokhorov metric is an extended pseudometric.

In addition to theoretical development, we discuss algorithms to compute the bottleneck profile and various Prokhorov-type distances.

Proposition 4.16

Let \(f:[0,\infty )\rightarrow [0,\infty )\) be monotonically increasing. Assume that the values and preimages of f can be computed in O(1). Then \(\pi _f(X,Y)\) can be computed in \(O(n^2 \log n)\).

We provide a run-time analysis and experiments on a number of data sets. The algorithms are provided as an open source implementation.

2 Background

2.1 Measure Theory

Let (X, d) be a metric space. It is complete if every Cauchy sequence has a limit in X. It is separable if it has a countable dense subset. A complete separable metrizable topological space is called a Polish space. For example, all Euclidean spaces \(\mathbb {R}^n\) are Polish. Polish spaces are a convenient setting for measure or probability theory. In general, we endow X with the Borel \(\Sigma \)-algebra \(\mathfrak {B}(M)\) and denote the set of probability measures by \(\mathcal {P}(X)\).

Let us recall an important inequality [15, p. 6]:

Lemma 2.1

(Chebyshev’s inequality) Let \((X,\Sigma , \mu )\) be a measure space and let \(f:X\rightarrow \mathbb {R}\) be a measurable function. Then for any \(p>0\) and \(t>0,\)

2.1.1 Metrics for Probability Measures

There are various ways to compare different probability measures.

Definition 1

For \(p\ge 1\), the p-Wasserstein metric is

where the infimum is taken over all couplings \(\gamma \) with marginals \(\mu \) and \(\nu \).

The 1-Wasserstein metric is also known as Kantorovich metric or earth mover’s distance. The latter name is motivated by the idea of thinking about \(\gamma \) as a transport plan for moving a pile of earth \(\mu \) into the pile \(\nu \). The cost of transportation equals the distance by which the earth is moved.

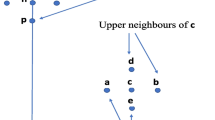

Intuitively, there are two different ways to “slightly change” a measure. The first one is to move all the mass by a tiny distance. The second one is to move a tiny part of the mass arbitrarily, possibly very far away. While the Wasserstein metric is stable under perturbations of the first kind, small changes of the second kind can result in large differences in the metric. The Prokhorov metric [23] seeks to resolve this problem. It is constructed in such a way that an \(\varepsilon \)-neighborhood of a measure is characterized as follows: One may move \(\varepsilon \) of the mass arbitrarily and the rest by at most \(\varepsilon \), see Fig. 1 for an illustration.

We now formalize this idea. For a Borel set \(A\subset X\), the (open) \(\varepsilon \)-ball around A is

The Prokhorov metric \(\pi \) for two probability measures \(\mu , \nu \) is defined as follows:

By Strassen’s Theorem (cf. [27, Appendix 1.4, Remark 1.29]), an alternative characterization of the Prokhorov metric is given in terms of couplings \(\gamma \) which marginalize to \(\mu \) and \(\nu \) (compare Fig. 2),

This allows for a discretization suitable for PDs, see Sect. 4.

Example 1

Let \(x_1, x_2 \in X\) with \(d(x_1,x_2)<1\) and consider the Dirac measures \(\delta _{x_1}, \delta _{x_2}\). We claim that \(\pi (\delta _{x_1}, \delta _{x_2}) = d(x_1,x_2)\). The only coupling with correct marginals is \(\delta _{(x_1,x_2)}\). Then we have

we write \(\mathbb {1}_{\varepsilon <d(x_1,x_2)}\) as a shorthand notation for the right hand side. Consequently, we have

In their survey [13], Gibbs and Su show that the 1-Wasserstein can be related to the Prokhorov metric via

where \({{\,\textrm{diam}\,}}(X)\) is the diameter of the underlying space. We provide discrete analogues of this estimate in Propositions 4.7 and 4.12.

For more on metrics of probability measures, see the book [24]; references for optimal transport include [22] which takes on a computational perspective.

2.2 Persistent Homology

Definition 2

The category \(\textbf{PersMod}\) is the functor category

from the reals as a poset category to finite dimensional vector spaces. Its objects are called pointwise finite dimensional (p.f.d.) persistence modules. A p.f.d. persistence module \(A = (A_t)_{t\in \mathbb {R}}\) comes with transition maps

for \(s\le t\).

Definition 3

An interval module for an interval \(J\subset \mathbb {R}\) is a p.f.d. persistence module with

and

for \(s\le t\). The start and endpoint of J are referred to as birth time b(J) and death time d(J), respectively. Their difference \(d(J)-b(J)\) is called the persistence or lifetime of an interval.

Note that we do not specify whether the endpoints are contained in the interval; they may be \(\pm \infty \). Interval modules are of special interest because p.f.d. persistence modules admit an interval decomposition.

Theorem 2.2

[9, Theorem 1.1] Let \(\mathbb {A}\in \textbf{PersMod}\). Then there exists a collection of intervals \(\mathcal {J}\) such that

Such an interval decomposition (sometimes called barcode) can be visualized via a PD.

Definition 4

A persistence diagram (PD) is multiset of points in \(\mathbb {R}^2\), consisting of

-

points above the diagonal \((b,d), b<d\), each with finite multiplicity, and

-

each point on the diagonal \(\Delta = \{(s,s)\in \mathbb {R}^2\}\) with countable multiplicity.

The convention to include diagonal points with infinite multiplicity will be useful for the construction of distances between persistence diagrams.

To obtain a PD from the above interval decomposition, collect the birth and death times of the intervals

(where the angled brackets indicate that the endpoints may or may not be included); add all the points on the diagonal with countable multiplicities. Off-diagonal points have finite multiplicities since the persistence module is p.f.d. We will freely identify off-diagonal points in the diagram with the corresponding interval. Points close to the diagonal have a short lifetime and are often regarded as noise. To compare persistence diagrams, we consider one-to-one correspondences between them. To take care of different cardinalities of off-diagonal points and to get rid of noisy, short-lifetime points, we allow them to be mapped to the diagonal. This explains the inclusion of the diagonal with infinite multiplicity in the above definition.

Definition 5

A matching \(\eta \) between PDs X and Y is a bijection which fixes all but finitely many diagonal points. The cardinality or size of a matching \(\eta \), denoted by \(|\eta |\) is the number of points which are not fixed.

Definition 6

The bottleneck distance between two PDs X, Y is

where \(\eta \) ranges over all matchings.

Definition 7

Let \(1\le p<\infty \). The p-Wasserstein distance between two PDs X, Y is

where \(\eta \) ranges over all matchings.

The notation of Definition 7 has the advantage of being compact, but note that we have uncountably many summands. Although usually, only finitely many, namely \(|\eta |\) for the optimal matching \(\eta \), will be non-zero. Similarly, also only finitely many elements of the uncountable set of which we take the supremum in Definition 6 are non-zero.

Definition 8

Let \(p\ge 1\). We say a PD X has finite pth moment, if the p-Wasserstein distance to the empty diagram is finite: \(W_p(X,\emptyset ) <\infty \).

Except from Sect. 4.2, the persistence diagrams in this paper are assumed to have finitely many off-diagonal points. Therefore, the infima in Definitions 6 and 7 are actually minima.

Notice the analogy between Definitions 1 and 7. We replace probability measures by counting measures and hence turn the integral into a sum. The infimum is taken over all matchings instead of all couplings. This observation will serve as a blueprint for the construction of the discrete Prokhorov metric for PDs in Sect. 4.

The motivation to compare PDs comes from TDA, where they serve as a summary statistic of topological information.

Example 2

Given a finite subset X of some metric space, we can consider the Vietoris–Rips complex, cf. [11, III.2]. This is a filtered simplicial complex; for filtration value \(r>0\) it is given by

Applying the homology functor gives rise to a persistence module: for \(r\le s\), the inclusion

induces a module homomorphism

This is called the (Vietoris–Rips) persistent homology of X, denoted \(PH _*(X)_t\). Summands in its interval decomposition are interpreted as topological features which are “born” at a certain point in the filtration and “persist” for some time. They are regarded to be more significant the longer the intervals are. The following theorem ascertains that this is a useful tool.

Theorem 2.3

[7, Theorem 3.1] Let X, Y be finite metric spaces, fix some \(k\ge 0\). Then we have

where \(d_{GH }\) is the Gromov–Hausdorff distance [16].Footnote 1

In other words, if we change the input point cloud by 2\(\varepsilon \) in the Gromov–Hausdorff metric, the resulting PDs differ by at most \(\varepsilon \) in the bottleneck distance.

3 Bottleneck Profiles

The bottleneck distance \(W_\infty \) has a major drawback: It only captures the single most extreme difference between two PDs. This implies that the same bottleneck distance can be realized by different pairs of PDs, cf. Fig. 3. We introduce the notion of the bottleneck profile to address the topic of secondary, tertiary, ... bottlenecks and their multiplicities.

Definition 9

Given two PDs X, Y, define their bottleneck profile to be

where \(|\,{\cdot }\,|\) denotes the cardinality of the set.

For \(d:\mathbb {R}^2 \times \mathbb {R}^2 \rightarrow \mathbb {R}_{\ge 0}\) we take an \(\ell ^p\)-metric \(d(x,y) = \Vert x-y\Vert _p\), where the choice of p might depend on the setting. For example, when comparing with the p-Wasserstein distance, one might like to choose this same p.

Since the infimum is taken over a subset of the natural numbers, it is actually a minimum. To be consistent with the notation in Definitions 6 and 7, we choose to adhere to the use of infimum. The following observation is immediate:

Lemma 3.1

The bottleneck profile \(D_{X,Y}\) is monotonically decreasing.

Proof

Let \(\eta :X\rightarrow Y\) be any matching realizing \(D_{X,Y}(s)\) for some s. Let now \(t>s\), then every distance longer than t is in particular longer than s and consequently

Taking the infimum over all matchings decreases the left hand side and yields \(D_{X,Y}(t)\).

\(\square \)

Knowing this, it is interesting when the bottleneck profile becomes zero.

Lemma 3.2

\(D_{X,Y}(t)=0\ \Longleftrightarrow \ t\ge W_\infty (X,Y)\).

Proof

By definition, the bottleneck distance is the smallest \(t>0\) such that there is a matching mapping all points within distance t. In formulas,

\(\square \)

Thus we recover the bottleneck distance from the bottleneck profile. The bottleneck cost of a matching is the longest distance over which two points are matched. Minimizing the bottleneck cost over all matchings yields the bottleneck distance, which we can think of as the primary bottleneck. Similarly, the secondary bottleneck cost of a matching is the second longest distance over which two points are matched. Taking the minimum over all matchings here gives a notion of a secondary bottleneck, which equals \(\inf {\{t>0:D_{X,Y}(t)\le 1\}}\) by an argument analogous to the previous proof. This motivates the name bottleneck profile.

Example 3

Let \(X=\{x\}\) and \(Y=\{y\}\) both consist of one point each and assume that \(d(x,y)<d(x,x')+d(y,y')\), where the prime denotes the projection to the diagonal. That means that \(x\mapsto y\) is an optimal matching. Consequently, the bottleneck profile looks as follows:

Example 4

If we take one of the PDs to be the empty one, there is only one choice of matching: everything is paired with the diagonal. As a consequence,

This is also known as the stable rank function corresponding to the contour \(C(a,\varepsilon )=a+2 \varepsilon \), introduced in [6], which counts the bars of X of length \(>2t\).

Example 5

Consider some particular simple PDs. The first three parts of Fig. 4 each show a base diagram (“Diagram X”, in blue) with four points and perturbations of it: The orange diagram (“Diagram Y”) in the first image is obtained by shifting the blue one by three. The green diagram (“Diagram Z”) shifts the top point of X by three, the next point by two, the third by one and leaves the lowest point unchanged. For the yellow diagram (“Diagram W”) in the third image, we only shift two points from X by three and leave the other two untouched. Clearly, the bottleneck distance between the base diagram and each of the shifted versions is three. But the amount of shifted points is reflected in the bottleneck profile: While \(D_{X,Y}(t)\) is four, \(D_{X,Z}(t)\) is two (i.e., the multiplicity of the bottleneck) for \(0<t<3\). And \(D_{X,W}\) displays more steps, reflecting the fact that there are secondary and tertiary bottlenecks.

Note that the function D enjoys some properties reminiscent of a metric (hence the notation D): It is obviously symmetric. The triangle inequality does not hold pointwise but in a scaled version, that is:

Lemma 3.3

For all PDs X, Y, Z and all real numbers \(s,t\ge 0,\) \(D_{X,Z}(s+t)\le D_{X,Y}(s)+D_{Y,Z}(t)\).

The situation in the proof of Lemma 3.3

Proof

This follows from the triangle inequality on \(\mathbb {R}^2\). Fix \(s,t\ge 0\), let \(\eta _{X,Y}:X\rightarrow Y\) and \(\eta _{Y,Z}:Y\rightarrow Z\) denote optimal matchings realizing \(D_{X,Y}(s)\) and \(D_{Y,Z}(t)\), respectively. Let \(\eta = \eta _{Y,Z}\circ \eta _{X,Y}:X\rightarrow Z\) be the matching obtained by composition. It suffices to show that

because the left hand side only decreases if we take the infimum over all matchings. Hence we have to investigate what happens when a point x is matched to \(\eta (x)\) which is farther apart than \(s+t\). Note that \(\eta (x) = \eta _{Y,Z}( \eta _{X,Y}(x))\), so we compare the distances of the matched points using the triangle inequality,

Therefore, it cannot be that both \(d(x,\eta _{X,Y}(x))\le s\) and \(d(\eta _{X,Y}(x), \eta (x))\le t\) (compare Fig. 5). That means, we have \(d(x,\eta _{X,Y}(x))> s\) or \(d(\eta _{X,Y}(x), \eta (x))> t\) or both. Using the principle of inclusion–exclusion, conclude

Note that \(D_{X,Y}(t)=0\) for all \(t>0\) implies \(X=Y\) only under some finiteness assumptions. For example, consider a converging sequence \((a_n)_{n\in \mathbb {N}}\subset \mathbb {R}^2\) above the diagonal with limit \(a\notin (a_n)_{n\in \mathbb {N}}\), which is also above the diagonal. Set X to consist of all elements of the sequence \((a_n)_{n\in \mathbb {N}}\). Set Y to be \(X\cup \{a\}\). Then for all \(\varepsilon >0\) there exists \(\eta :X\rightarrow Y\) such that \(d(x,\eta (x))<\varepsilon \) for all \(x\in X\). Therefore, \(D_{X,Y}(t)=0\) for every \(t>0\), but \(X\ne Y\).

Following [3], we denote by \(\bar{\mathcal {B}}\) the set of PDs such that for each \(\varepsilon >0\) there are finitely many points of persistence \(>\varepsilon \). The next lemma is an immediate consequence of [3, Lemma 3.4].

Lemma 3.4

The bottleneck profile satisfies \(D_{X, X}(t) = 0\) for all PDs X and \(t>0\). Moreover, \(D_{X, Y}(t) = 0\) for all \(t>0\) implies \(X=Y\) for \(X, Y\in \bar{\mathcal {B}}\).

Proof

If \(D_{X, Y}(t) = 0\) for all \(t>0\), then \(W_\infty (X,Y)=0\) by Lemma 3.2. Now for \(X,Y \in \bar{\mathcal {B}}\), this only happens if \(X=Y\) by [3, Lemma 3.4]. \(\square \)

3.1 Relation to Wasserstein Distances

We have already seen how the bottleneck profile is related to the bottleneck distance. This is actually part of a more general result comparing it to p-Wasserstein metrics.

Lemma 3.5

Let X, Y be two PDs, and let \(p>0\). Then

Proof

This follows from the Chebyshev inequality (Lemma 2.1) for counting measures. To spell out the details, estimate that for every bijection \(\eta \),

Now choosing \(\eta \) to minimize the right hand side, we have by definition of the Wasserstein distance an estimate for \(D_{X,Y}\):

This is illustrated by Fig. 6. Note that we recover Lemma 3.2 in the limit for \(p \rightarrow \infty \):

For 1-Wasserstein, we have a further estimate:

Illustrating the proof of Lemma 3.6: decomposing the area under the graph into rectangles

Lemma 3.6

\( \int _0^\infty D_{X,Y}(t)\,dt \le W_1(X,Y)\).

Proof

Let \(\eta :X\rightarrow Y\) be the matching realizing \(W_1(X,Y)\). We compute the area under the graph of the function \(t\mapsto |\{x:d(x,\eta (x))>t\}|\), which is piece-wise constant. Decomposing it into rectangles of height one yields a width of \(\inf {\{t>0:|\{x:d(x,\eta (x))>t\}|<i\}}\) for \(i\ge 1\), cf. Fig. 7. The width of the ith rectangle is the length of the ith longest edge in the matching. Summing over all i is therefore the same as summing the distances over which points are matched. In formulas:

Proposition 3.7

If the bottleneck profile \(D_{X,Y}(t)\) can be realized by the same matching \(\eta \) for all \(t>0,\) then \(\eta \) realizes \(W_1(X,Y)\).

Proof

If \(\eta \) realizes \(D_{X,Y}(t)\) for all \(t>0\), then the inequality in the proof of the previous lemma becomes an equality

Combining this with Lemma 3.6, we obtain

Consequently, the inequality \((*)\) is actually an equality, which is what we wanted to prove. \(\square \)

3.2 Algorithms

Recall the definition

and let \(\eta \) be the matching realizing the infimum. Then \(\eta \) also realizes the following supremum:

and consequently

Here, \(|\eta |\) denotes the number of matched pairs which involve at least one off-diagonal point. The computation of \(\sup \nolimits _\eta \,|\{x:d(x,\eta (x))\le t\}|\) is a version of the unweighted maximum cardinality bipartite matching problem. First, set up the following notation (following [11, Chap. VIII.4]). Denote by \(X_0\) the off-diagonal points of X and by \(X_0'\) their projections to the diagonal (and analogously for Y). Set \(U=X_0\cup Y_0'\) and \(V=Y_0\cup X_0'\) and consider the bipartite graph \(G=(U \cup V, E)\) with \(e=\{u,v\}\in E\) if either of the following holds:

-

\(u\in X_0\), \(v\in Y_0\), and \(d(u,v)\le t\),

-

\(u\in X_0\), \(v\in X_0'\) is its projection to the diagonal, and \(d(u,v)\le t\),

-

\(v\in Y_0\), \(u\in Y_0'\) is its projection to the diagonal, and \(d(u,v)\le t\),

-

\(u\in Y_0'\) and \(v\in X_0'\).

Let \(M\subset E\) be a matching of maximal cardinality. Observe that such a matching corresponds to a bijection \(\eta :X\rightarrow Y\) maximizing \(|\{x:d(x,\eta (x))\le t\}|\).

To estimate the run-time of this algorithm, let \(n = |X|+|Y|\). We solve the unweighted maximum cardinality bipartite matching problem using the Hopcroft–Karp algorithm [17]. Let us briefly recall this classical algorithm. The algorithm extends a partial matching M until it reaches a maximum one. It achieves this by augmenting paths: A path p that starts at an unmatched vertex in U and ending at an unmatched vertex in V such that edges from U to V are not in M but edges from V to U are. Removing edges from \(p\cap M\) from the matching and instead inserting edges from \(p\cap (E\setminus M)\) increases the size of M by one. The Hopcroft–Karp algorithm finds vertex-disjoint augmenting paths in \(O(n^2)\) via the so-called layer subgraph, which is constructed via a depth-first search in \(O(n^2)\). After extending the matching using all these augmenting paths, the algorithm starts over. The algorithm terminates after \(O(\sqrt{n})\) of these iterations.

While this consequently takes \(O(n^{2.5})\) in the worst case, we perform a variant which exploits the geometric nature of the setting, as suggested in [12]. Instead of building the layer graph explicitly, one can use a geometric data structure that allows for querying neighbors within a given distance, as well as removing points. Following [18], k–d trees achieve this requiring \(O(\sqrt{n})\) for either of the two operations. Consequently, as noted by [18] and [12], our variant of the Hopcroft–Karp algorithm runs in \(O(n^2)\). Summarizing, we find the following:

Proposition 3.8

Let X, Y be finite PDs and denote \(n=|X|+|Y|\). The value of the bottleneck profile at t, \(D_{X,Y}(t),\) can be computed in \(O(n^2)\).

Remark 1

Using k–d trees is useful in practice, but does not yield optimal theoretical run-times. Indeed, the more sophisticated data structure from [12, Sect. 5.1] can be constructed in \(O(n\log n)\). The two relevant operations on it require \(O(\log n)\), so that the bottleneck profile could be evaluated in \(O(n^{1.5}\log n)\) using this method.

Remark 2

Instead of using Hopcroft–Karp, one can regard the matching problem as a linear program. For each \(x \in X\) and \(y\in Y\), we have a binary variable \(f_{xy}\) indicating whether the edge from x to y is in the matching. The coefficients (the cost of the edge) are given by

The objective is

4 Discrete Prokhorov Metrics for Persistence Diagrams

A straight-forward discretization of the coupling characterization of the probabilistic Prokhorov metric (1) gives the main notion of this section.

Definition 10

Given two PDs X, Y, consider matchings \(\eta :X \rightarrow Y\) to define their Prokhorov distance as

Informally, we look at the intersection of the bottleneck profile with the diagonal. Similarly, we have already seen that the bottleneck distance arises as the intersection of \(D_{X,Y}\) with the horizontal axis. This motivates the question, what functions we can intersect the bottleneck profile with to obtain a sensible notion of distance.

Definition 11

Consider a function \(f:[0,\infty ]\rightarrow [0,\infty ]\). We say f is superadditive if for any \(s,t\ge 0\) we have \(f(s+t)\ge f(s)+f(t)\). A superadditive function f is called admissible if \(\lim _{t\searrow 0}f(t)=0\). Furthermore, the function \(f\equiv 1\) is also said to be admissible.

Notice that such superadditive functions are monotonically non-decreasing. For example, any linear function with non-negative slope is admissible. Moreover, increasing convex functions f with \(f(0)=0\) are admissible. For instance, polynomials with non-negative coefficients and absolute term zero fulfill this criterion.

Definition 12

Given a fixed admissible function \(f:\mathbb {R}_{\ge 0}\rightarrow \mathbb {R}_{\ge 0},\) define for any two PDs X, Y their f-Prokhorov distance to be

Plugging in \(f =id \) gives the Prokhorov distance, plugging in \(f\equiv 1\) recovers the bottleneck distance (this is why this function is admissible even though it is not superadditive).

Intuitively, for \(n\in \mathbb {N}\), plugging in \(f\equiv n\) (although this is not an admissible function) gives the nth bottleneck.

For two Prokhorov-close PDs, we require the number (= counting measure) of unmatched points to be small. Points with small persistence get matched to the diagonal and thus do not blow up the Prokhorov distance. Hence it is robust with respect to noise.

Example 6

Assume f is invertible. Recall the situation of Example 3: \(X=\{x\}\), \(Y=\{y\}\) both consist of one point each and we assume that \(d(x,y)<d(x,x')+d(y,y')\), where the prime denotes the projection to the diagonal. We saw that the bottleneck profile looks as follows:

It follows that

Lemma 4.1

For f admissible, \(D_{X,Y}(\pi _f(X,Y)) \le f(\pi _f(X,Y))\).

Proof

Note that \(D_{X,Y}\) is right-continuous by construction. \(\square \)

The triangle inequality follows from Lemma 3.3.

Lemma 4.2

Fix an admissible function \(f:\mathbb {R}_{\ge 0}\rightarrow \mathbb {R}_{\ge 0}\). For any three PDs X, Y, Z, we have

Proof

We make the following estimates:

Here we used Lemma 3.3 for the first inequality, Lemma 4.1 for the second, and superadditivity of f for the final one. Therefore,

the left hand side is the definition of \(\pi _f(X,Z)\), as desired. \(\square \)

As the symmetry is clear, we have shown:

Theorem 4.3

Fix an admissible function \(f:\mathbb {R}_{\ge 0} \rightarrow \mathbb {R}_{\ge 0}\). The discrete f-Prokhorov metric is an extended pseudometric.

Just like for the bottleneck distance, we need some finiteness property for the \(\pi _f\) to be a genuine metric. Let \(\bar{\mathcal {B}}\) denote the PDs which for every \(\varepsilon >0\) have only finitely many points of persistence \(>\varepsilon \). Then Lemma 3.4 implies:

Lemma 4.4

Let \(f:\mathbb {R}_{\ge 0} \rightarrow \mathbb {R}_{\ge 0}\) be admissible. For \(X,Y\in \bar{\mathcal {B}},\) we have \(\pi _f(X,Y) = 0\) only if \(X=Y\).

Proof

If \(\pi _f(X,Y) = 0\), then \(D_{X,Y}(t)<f(t)\) for all \(t>0\). As the bottleneck profile is monotonically decreasing and \(\lim _{t\searrow 0} f(t) = 0\), this implies \(D_{X,Y}(t)=0\) for all \(t>0\). By Lemma 3.4, this happens only if \(X=Y\). \(\square \)

Our next task is to investigate how \(\pi _f\) depends on the function f. While from a metric point of view, we need to fix f, the context of data science suggests a different perspective: For given training data (a fixed set of PDs) adjust f to obtain a metric that performs well on it (e.g. in a classification problem, cf. Sect. 5).

Lemma 4.5

Let \(f,g:\mathbb {R}_{\ge 0}\rightarrow \mathbb {R}_{\ge 0}\) such that \(f(t)\le g(t)\) for all \(t\ge 0\). Then for any two PDs X, Y, we have \(\pi _g(X,Y)\le \pi _f(X,Y)\).

Proof

If \(t>0\) satisfies \(D_{X,Y}(t)<f(t)\), then also \(D_{X,Y}(t)<g(t)\). Therefore,

and by definition \(\pi _g(X,Y) \le \pi _f(X,Y)\). \(\square \)

For fixed PDs, the Prokhorov metric is continuous with respect to the functions in supremum metric.

Proposition 4.6

Fix two PDs X, Y. Let \(f:\mathbb {R}_{\ge 0} \rightarrow \mathbb {R}_{\ge 0}\) be admissible. Then for all \(\varepsilon >0\) there is \(\delta >0\) such that for each admissible \(g:\mathbb {R}_{\ge 0}\rightarrow \mathbb {R}_{\ge 0},\) we have

Proof

Without loss of generality, assume that \(f(\pi _f(X,Y)) \le g(\pi _g(X,Y))\) (otherwise exchange f and g below). This implies \(\pi _f(X,Y)\ge \pi _g(X,Y)\) by monotonicity of \(D_{X,Y}\). We choose \(\delta < f(\varepsilon )\) and estimate

By monotonicity of f we find that

\(\square \)

From a data science perspective, the preceding lemma allows us to tune the parameter function f on a fixed training set of PDs.

4.1 Comparison with Wasserstein

Fix a PD X and consider Wasserstein metrics and Prokhorov distances to some other diagram Y. We can perturb Y by adding more “noise”. More precisely, we add k points whose distance to the diagonal is less than \(\pi _f(X,Y)\) and denote this diagram by \(Y_k\). This does not affect the Prokhorov metric at all, while for all \(p\in [1,\infty )\), the value of \(W_p(X,Y_k)\) goes to infinity when k does. This is what we mean when we say that the Prokhorov metric is more robust with respect to noise compared to the Wasserstein metric. In other (more mathematical) words, the identity map \(id :({{\,\textrm{Dgm}\,}},\pi _f)\rightarrow ({{\,\textrm{Dgm}\,}},W_p)\), where \({{\,\textrm{Dgm}\,}}\) is the set of all PDs, is nowhere continuous for \(p\in [1,\infty )\).Footnote 2 In this section, we further explore the relation between Prokhorov and Wasserstein distances.

Similarly to the proofs in [13] for the measure-theoretic variants, we can bound our metric in terms of the Wasserstein distance. As we will explain, the metrics \(\pi _{t\mapsto t^q}\) are of special interest.

Proposition 4.7

Let \(p\ge 1,\) \(q\ge 0,\) \(c>0,\) and \(f(t) = c \cdot t^q\). For two PDs X, Y we have

Proof

Recall from Lemma 3.5 that

We now want to find a suitable value of t such that \(D_{X,Y}(t)<c \cdot t^q\) to infer that \(\pi _f(X,Y)\le t\). Plugging in \(t =W_p(X,Y)^{p/(p+q)}\cdot c^{-1/(p+q)}\), one obtains

Now if \(q=0\), the right hand side simplifies to \(c = f\bigl (W_p(X,Y)\cdot c^{-1/p}\bigr )\). If \(q>0\), we compute

Therefore,

and we conclude with (2) as desired. \(\square \)

Corollary 4.8

Let \(p\ge 1,\) \(q\ge 0,\) and \(c>0\). The map \(\text {id}:({{\,\textrm{Dgm}\,}}, W_p) \rightarrow ({{\,\textrm{Dgm}\,}}, \pi _{c\cdot t^q})\) is continuous.

When comparing with the bottleneck distance, i.e., \(p=\infty \) in the above setting, we can say even more:

Proposition 4.9

For all admissible f and all PDs we have \(\pi _f(X,Y) \le W_\infty (X,Y)\).

Proof

We recall by Lemma 3.2 that

and therefore \(\pi _f(X,Y)\le W_\infty (X,Y)\). \(\square \)

Specializing to \(c=1\) and \(p\in \{1,\infty \}\) or \(q\in \{0,1\}\), we obtain

Corollary 4.10

The following inequalities hold:

In particular, the Bottleneck Stability Theorem 2.3 implies stability for the new metrics by Proposition 4.9:

Theorem 4.11

Let X, Y be finite metric spaces, fix some admissible function f and \(k \in \mathbb {N}\). Then we have

where \(d_GH \) is the Gromov–Hausdorff distance.

We can provide not only lower but also upper bounds for Wasserstein distances in terms of the Prokhorov distance.

Proposition 4.12

where \(\eta :X \rightarrow Y\) is any matching realizing \(\pi _{t^q}(X,Y)\).

Proof

For an arbitrary bijection \(\eta :X \rightarrow Y\), consider \(t>0\,\,\text {with}~|\{d(x,\eta (x))\) \(\,>t\}|\le t^q\). We estimate:

Taking the infimum over all matchings and all such t we obtain the desired inequality

\(\square \)

Combining the two inequalities from Propositions 4.7 and 4.12, we obtain a comparison for different Wasserstein metrics.

Corollary 4.13

\(W_q(X,Y)^q\le W_p(X,Y)^{pq/(p+q)}(\max (d(x,\eta (x)))^q+|\eta |).\)

Remark 3

Another inequality relating Wasserstein distances for different p and q originates from the Hölder inequality, given in [2, Lemma 3.5]: for finite PDs X, Y and real numbers \(1\le q<p<\infty \), we have

where \(\eta \) is the matching realizing \(W_p(X,Y)\). Our inequality above yields a lower exponent for \(W_p(X,Y)\) at the cost of multiplying with the largest distance in the matching. In particular, for \(q=1\), \(p=2\), our formula reads

with \(\eta \) realizing \(\pi _{t^q}(X,Y)\), whereas the one of [2] reads [with \(\eta \) realizing \(W_2(X,Y)\)]

Depending on the size of \(W_p(X,Y)\) relative to the size of X and Y, our inequality can provide sharper bounds than the one of [2]. To investigate the size of \(\max (d(x,\eta (x)))\) remains an interesting question for future work. One possible application of such inequalities is that they allow to infer stability results for vectorizations with respect to \(W_p\) for \(p>1\) from the stability with respect to \(W_1\). Another use of Propositions 4.7 and 4.12 is that the bounds they provide for Wasserstein distances are easily computed, as we will see in Sect. 4.3.

4.2 Metric and Topological Properties

Using the comparison with Wasserstein (Sect. 4.1) and the results from [20], we address questions of convergence and separability. We run into similar issues as [4, Theorems 4.20, 4.24, 4.25] and [3, Sect. 3]. In this section, we explicitly allow diagrams with a countably infinite number of off-diagonal points under certain finiteness assumptions specified below.

Theorem 4.14

Let \(p\ge 1\). The space of PDs with finite pth moment endowed with the \(ct^q\)-Prokhorov metric is separable.

Proof

Let \(\varepsilon >0\), X a PD and \(p\ge 1\). Let S be a countable dense subset for the p-Wasserstein metric; this exists by [20, Theorem 12]. In fact they show that we can take S to be the set of finite diagrams whose points have rational coordinates. Let \(X_S\in S\) be a PD such that \(W_p(X,X_S)<\varepsilon ^{(p+q)/p}\cdot c^{1/p}\). Then by Proposition 4.7, we have

\(\square \)

Note that the assumptions in the previous theorem are weaker than the ones usually considered for the bottleneck distance, compare [4, Theorem 4.18].

Recall that \(\bar{\mathcal {B}}\) denotes the PDs which for all \(\varepsilon >0\) have finitely many points of persistence \(>\varepsilon \). The next theorem is a consequence of [3, Theorem 3.5], which asserts that the bottleneck distance makes \(\bar{\mathcal {B}}\) into a Polish space.

Theorem 4.15

The space \(\bar{\mathcal {B}}\) endowed with the Prokhorov metric \(\pi _f\) is Polish for all admissible f.

Proof

Let \((X_n) \subset \bar{\mathcal {B}}\) be a Cauchy sequence with respect to the Prokhorov metric \(\pi _f\). Let \(\varepsilon >0\) such that \(f(\varepsilon )\le 1\). Then the inequality \(\pi _f(X_m,X_n)<\varepsilon \) implies by definition of \(\pi _f\) that

As the bottleneck profile takes values in the integers, we conclude that \(D_{X_m,X_n}(\varepsilon )=0\) and hence, by Lemma 3.2, we have \(\varepsilon \ge W_\infty (X_m,X_n)\). In particular, \(X_n\) is a Cauchy sequence with respect to the bottleneck distance. By completeness of \(\bar{\mathcal B}\) with the bottleneck distance, there is a limit diagram \(X\in \bar{\mathcal B}\) to which the sequence converges. Finally, by Lemma 4.9, convergence in bottleneck implies convergence in Prokhorov.

Now for separability, consider a subset \(A\subset \bar{\mathcal B}\) which is dense with respect to the bottleneck distance. Let \(X\in \bar{\mathcal B}\) and \(\varepsilon >0\). Then by assumption, there is \(Y\in A\) with \(W_\infty (X,Y)<\varepsilon \). Then, since by Proposition 4.9, \(\pi _f(X,Y)\le W_\infty (X,Y)\), we also have \(\pi _f(X,Y)<\varepsilon \). Therefore, A is dense in \(\bar{\mathcal {B}}\) with respect to \(\pi _f\) as well. \(\square \)

4.3 Algorithms

In this section, all PDs are finite. Now we will provide an algorithm to compute \(\pi _f(X,Y)\) for continuous monotonically increasing functions f. In this case, there is always a single value \(t_0\in [0,\infty )\) such that \(D_{X,Y}(t)<f(t)\) for \(t>t_0\) and \(D_{X,Y}(t)>f(t)\) for \(t<t_0\). We can find its location by bisection. Recall that we set \(n=|X|+|Y |\).

Proposition 4.16

Let \(f:[0,\infty )\rightarrow [0,\infty )\) be monotonically increasing. Assume that the values and preimages of f can be computed in O(1). Then \(\pi _f(X,Y)\) can be computed in \(O(n^2 \log n)\).

Proof

First, observe that the Prokhorov distance takes its value among the pairwise distances of points in the PDs (if f crosses the bottleneck profile at one of its vertical gaps) or among preimages of integers under f (if f crosses the bottleneck profiles at one of its constant pieces), in formulas

To perform a binary search, we sort the elements in \(T_1\) as a preprocess, which has runtime complexity \(O(n^2\log n)\). In each iteration of the binary search we pick the median \(t\in T_i\). Next we compute the value of the bottleneck profile \(D_{X,Y}(t)\) using Proposition 3.8, taking \(O(n^2)\). Then we compute f(t), which by assumption takes O(1). Now if \(D_{X,Y}(t)>f(t)\) set \(T_{i+1}\) to be the right half, if \(D_{X,Y}(t)\le f(t)\) set \(T_{i+1}\) to be the left half of \(T_i\). Hence we obtain a runtime of \(O(n^2 \log {n})\) for the binary search as well. \(\square \)

In particular, if one uses a more efficient geometric data structure to improve the runtime of the matching algorithm, the sorting preprocessing dominates the runtime. Compare [12, Theorem 3.2] and the preceding discussion therein for more details and possible improvements of the runtime complexity. Please refer to Sect. 6 for details about our implementation and its availability.

There is an easy modification to the above algorithm to approximate \(\pi _f\) up to an additive error of \(\varepsilon \). Instead of performing the binary search on the indicated discrete set (which needs to be sorted or otherwise pre-processed in a costly way, as noted), one can run it on an interval [0, M]. Here, M is some upper bound, for example the sum of the longest lifespans of points in X and Y respectively [which is computed in O(n)]. We bisect the interval until we arrive at one of length less than \(2\varepsilon \). Its midpoint is guaranteed to be less than \(\varepsilon \) away from the true value of \(\pi _f(X,Y)\).

5 Experiments

A simple application of the bottleneck profile, based on simple synthetic PDs, was already presented in Example 5.

5.1 Highlighting Geometric Intuition

This experiment is a toy example, showing how the Prokhorov distance can capture our geometric intuition more accurately than bottleneck or Wasserstein. Consider three different shapes in \(\mathbb {R}^2\): (a) a big circle (\(r=6\)), (b) a big (\(r=6\)) and a medium circle (\(r=4\)), (c) a big (\(r=6\)), a medium (\(r=4\)), and small circle (\(r=2\)). We take five samples with noise from each shape according to Table 1. For each point cloud we compute the first PH modules of it alpha complex filtration and represent them as PDs (see Fig. 8). We can look at the averaged D-function for each pair of shapes (Fig. 9). After careful inspection of this figure and some trial and error, we come up with the choice of \(f(t)=t^3\cdot 20^t\) to separate three bottleneck profiles in a most efficient way: Between around 0.55 and 0.65, the averaged bottleneck profiles involving shape (c) with the small circle decrease, while the one comparing (a) and (b) stays constant. Intersecting with a function in this interval will provide a good choice for the Prokhorov distance: it puts the two and three circles closest to each other and one and three circles the farthest apart. In data science tasks, we will of course need an automated way to find a good parameter function f, we will discuss this in more detail below.

MDS plots of the dataset in Sect. 5.1

Now we want to compare the bottleneck, Prokhorov, and Wasserstein distances. The bottleneck distance between shapes (a) and both (b) and (c) is roughly the same. This distance does not take the presence of the additional small circle in shape (c). By blowing up the sample size and the noise in shape (b), the Wasserstein distance from (a) and (c) to it are artificially blown up (Figs. 10 and 11). The Prokhorov distance is built to avoid these pitfalls and nicely captures the geometry of the setting. The MDS plot for Prokhorov agrees with our intuition and places (b) between (a) and (c) (Fig. 10).

5.2 Classification Experiments

We now turn to more sophisticated data sets to illustrate the usage and advantages of the Prokhorov distance. In particular, we consider PDs that actually arise in applications of TDA. We use the library [21] for standard machine learning algorithms (in particular K-neighbors). For the bottleneck and Wasserstein metrics we use the GUDHI library [14] and [10]. To score the different metrics, we use K-neighbors classification accuracy as well as classification accuracy based on K-medoids clustering with the “build” initialization [25, 26]. In the latter case, points are assigned to the class of the medoid of their cluster. We split the data sets into training and testing with 50% of the points each. All computations were carried out on a laptop with an Intel i5-8265U CPU with 1.60 GHz and 8 GB memory. The code to reproduce the experiments is available online.Footnote 3

Distance matrices of the dataset in Sect. 5.1

5.2.1 Parameter Tuning: Choosing f

One needs to specify an admissible function f as a parameter for the Prokhorov distance \(\pi _f\). The set off all such functions is vast, therefore it is sensible to restrict to a smaller subset. In the experiments below, we choose f from linear functions with integer slope \(\in [10,100]\). We do this by performing a grid search over the parameters and evaluating them by fivefold cross-validation. By selecting this subset of parameters, we reduce the risk of overfitting and are able to run the parameter selection in reasonable time. We leave it as a problem for further investigation to find better means to run the parameter selection, but note that the fact that the bottleneck profile is piecewise constant obstructs the use of gradient descent.

5.2.2 Prokhorov Distance for Cubical Complexes with Outlier Pixels

We generateFootnote 4\(100\times 100\) pixel greyscale images according to the following procedure, cf. Fig. 12. Initializing every pixel with 0, we choose n points at random, at which we add a Gaußian with \(\sigma =3\). We normalize the values to [0, 2] and then shift them up by 64. The goal is to distinguish images with \(n=15\) from images with \(n=20\). The obstacle is that we superimpose a particular kind noise, similar to salt-and-pepper noise. We choose k pixels randomly at which we set the value to a random integer from [1, 128]; the eight surrounding pixels are set to zero.

For each of the four combinations \(n\in \{15,20\}\) and \(k\in \{3,5\}\) we sample 50 greyscale images. We then create a cubical complex from each using the pixels as top-dimensional cells (lower-star filtration) and compute PH in dimensions 0 and 1. We proceed as indicated at the beginning of this section to assess the accuracy of the different metrics. The results are summarized in Table 2. Both in dimension 0 and 1, the K-neighbors classifier is inconclusive in the setting of bottleneck and Wasserstein. With a suitable Prokhorov metric, we are able to achieve an accuracy of more than 80%. In the K-medoids approach, the story is similar but less pronounced: bottleneck and Wasserstein are inconclusive, but Prokhorov achieves around 60% accuracy.

5.2.3 3D Segmemtation

We adapt an example from [5] and [10], which is based on the dataset [8]. The task is to classify 3D-meshes based on the PDs of certain functions defined on them. The shapes are for example airplanes, hands, chairs ... The results of classification are presented in the Table 3. All the considered metrics yield a similar accuracy. Prokhorov is the fastest, however at the cost of first having to find the suitable parameter, which took more than 10 h in this case.

5.2.4 Synthetic Dataset

Finally, we consider the dataset introduced by [1, Sect. 6.1]. It contains six shape classes: A sphere, a torus, clusters, clusters within clusters, a circle and the unit cube. From each class take 25 samples of 500 points. Then add two levels of Gaussian noise (\(\eta =0.05,0.1\)) and the zeroth and first PH of the Vietoris–Rips filtration are computed. We compute the distance matrices and evaluate them based on the K-neighbors and K-medoids classifiers. The results are displayed in Table 5. We find that Prokhorov performs better bottleneck and only slightly worse than Wasserstein. Prokhorov takes at most similarly long as 1-Wasserstein; Bottleneck is faster and 2-Wasserstein is slower.

5.3 Discussion

First and foremost, we found that Prokhorov is able to produce good results in situations where the classical tools of bottleneck and Wasserstein fail. In order to explain the differences in the computation time, we note the size of the PDs in the various settings:

By inspecting Table 4 we see that the 3D segmentation dataset contains way smaller diagrams, on which the Prokhorov metric seems to perform well, both in terms of runtime and score. On the bigger diagrams from the synthetic dataset, the Wasserstein metrics yield the highest scores. Prokhorov outperforms bottleneck in the scores at the cost of higher runtimes. The difference in the computation time is caused by the evaluation of f(t), which is the only difference between the bottleneck and Prokhorov implementations.

Bottleneck—and to some extend also Prokhorov—work less well on zero-dimensional PDs. There, every class is born at time zero, hence the PD is intrinsically one-dimensional and points are matched in linear order. The bottleneck distance is less meaningful in this setting. Moreover, the Prokhorov (and even more the bottleneck) distance do not take points matched over a small distance into account. This is a consequence of being designed to be robust against noise. However, this data can actually contain meaningful information, which is picked up by the Wasserstein distances. This is a possible explanation for the fact that Wasserstein yields better scores in the synthetic dataset.

Hence, the Prokhorov metric works best on rather small diagrams and runs fastest with simple (e.g. linear) parameter functions f. Even then, one needs to take the additional time for tuning the parameter f into account.

6 Discussion and Outlook

Summarizing the results from the previous section, we find that the Prokhorov metric is well-suited for small PDs. Large scale computations can be improved by the technique of entropic regularization from the theory of optimal transport [19]. As the classical Prokhorov metric admits an optimal transport characterization, our discrete variant might be tractable using similar techniques.

A major aspect of the importance of the bottleneck distance is its algebraic formulation in terms of interleavings. This theory generalizes to incorporate the family of Prokhorov metrics. An algebraic formulation would also provide a perspective on generalizations to multiparameter persistence.

Our results in Sect. 4.2 establish that our construction yields a Polish space. This makes it suitable for statistical inference. In a similar vein, one can also investigate bottleneck profiles PDs arising from random geometric complexes. What kind of limit objects appear in this context? Can they be used to perform statistical testing?

Morally, stability theorems should involve related metrics on the input point cloud and on the PD side. This motivates to investigate Prokhorov-type distances for point clouds in \(\mathbb {R}^n\). Such distances might be useful throughout data science.

Code Availability

We provide an implementation as a part of a custom GUDHI fork at https://github.com/nihell/persistence-prokhorov. It is a modification of the GUDHI implementation of the bottleneck distance [14]. Let us first illustrate how to use it before we come to runtime considerations. The algorithm is implemented in C++ and comes with Python bindings.

prokhorov_distance(diagram_1: numpy.ndarray[numpy.float64],

diagram_2: numpy.ndarray[numpy.float64],

coef: numpy.ndarray[numpy.float64]) -> float

It asks for three inputs: diagram_1, diagram_2, and coef. The two diagrams need to be presented as 2D numpy arrays. The third parameter is a 1D numpy array representing the coefficients of a polynomial to be used as f. Note that the zeroth entry needs to be zero in order to obtain a metric, compare Lemma 4.4. However, setting the polynomial to be a constant integer one recovers the values of \(D_{X,Y}\), which is a feature. In the technical details, our approach follows [14], which follows [18]. In addition, we also add the Prokhorov metric to [10], allowing for parallel computations of distance matrices and integration with sklearn.

Notes

To avoid such problems, one usually restricts to a subset of \({{\,\textrm{Dgm}\,}}\) of diagrams with “finite pth moment” [20] when using p-Wasserstein distances.

References

Adams, H., Emerson, T., Kirby, M., Neville, R., Peterson, Ch., Shipman, P., Chepushtanova, S., Hanson, E., Motta, F., Ziegelmeier, L.: Persistence images: a stable vector representation of persistent homology. J. Mach. Learn. Res. 18, # 8 (2017)

Atienza, N., Gonzalez-Díaz, R., Soriano-Trigueros, M.: On the stability of persistent entropy and new summary functions for topological data analysis. Pattern Recognit. 107, # 107509 (2020)

Blumberg, A.J., Gal, I., Mandell, M.A., Pancia, M.: Robust statistics, hypothesis testing, and confidence intervals for persistent homology on metric measure spaces. Found. Comput. Math. 14(4), 745–789 (2014)

Bubenik, P., Vergili, T.: Topological spaces of persistence modules and their properties. J. Appl. Comput. Topol. 2(3–4), 233–269 (2018)

Carriere, M.: 3D shape segmentation using TDA. https://github.com/MathieuCarriere/sklearn-tda/tree/master/example/3DSeg

Chachólski, W., Riihimäki, H.: Metrics and stabilization in one parameter persistence. SIAM J. Appl. Algebra Geom. 4(1), 69–98 (2020)

Chazal, F., Cohen-Steiner, D., Guibas, L.J., Mémoli, F., Oudot, S.Y.: Gromov–Hausdorff stable signatures for shapes using persistence. Comput. Graph. Forum 28, 1393–1403 (2009)

Chen, X., Golovinskiy, A., Funkhouser, T.: A benchmark for 3D mesh segmentation. ACM Trans. Graph. 28(3), # 73 (2009)

Crawley-Boevey, W.: Decomposition of pointwise finite-dimensional persistence modules. J. Algebra Appl. 14(5), # 1550066 (2015)

Dlotko, P., Carriere, M., Royer, M.: Persistence representations. In: GUDHI User and Reference Manual, 3.4.1 edn (2021). https://gudhi.inria.fr/doc/latest/group___persistence__representations.html

Edelsbrunner, H., Harer, J.L.: Computational Topology: An Introduction. American Mathematical Society, Providence (2010)

Efrat, A., Itai, A., Katz, M.J.: Geometry helps in bottleneck matching and related problems. Algorithmica 31(1), 1–28 (2001)

Gibbs, A.L., Su, F.E.: On choosing and bounding probability metrics. Int. Stat. Rev. 70(3), 419–435 (2002)

Godi, F.: Bottleneck distance. In: GUDHI User and Reference Manual, 3.4.1 edn (2021). https://gudhi.inria.fr/doc/latest/group__bottleneck__distance.html

Grafakos, L.: Classical Fourier Analysis. Graduate Texts in Mathematics, vol. 249. Springer, New York (2014)

Gromov, M.: Metric Structures for Riemannian and Non-Riemannian Spaces. Modern Birkhäuser Classics. Birkhäuser, Boston (2007)

Hopcroft, J.E., Karp, R.M.: An \(n^{5/2}\) algorithm for maximum matchings in bipartite graphs. SIAM J. Comput. 2, 225–231 (1973)

Kerber, M., Morozov, D., Nigmetov, A.: Geometry helps to compare persistence diagrams. ACM J. Exp. Algorithmics 22, # 1.4 (2017)

Lacombe, T., Cuturi, M., Oudot, S.: Large scale computation of means and clusters for persistence diagrams using optimal transport (2018). arXiv:1805.08331

Mileyko, Yu., Mukherjee, S., Harer, J.: Probability measures on the space of persistence diagrams. Inverse Probl. 27(12), # 124007 (2011)

Pedregosa, F., Varoquaux, G., Gramfort, A., Michel, V., Thirion, B., Grisel, O., Blondel, M., Prettenhofer, P., Weiss, R., Dubourg, V., Vanderplas, J., Passos, A., Cournapeau, D., Brucher, M., Perrot, M., Duchesnay, É.: Scikit-learn: machine learning in Python. J. Mach. Learn. Res. 12, 2825–2830 (2011)

Peyré, G., Cuturi, M.: Computational optimal transport: with applications to data science. Found. Trends Mach. Learn. 11(5–6), 355–607 (2019)

Prokhorov, Yu.V.: Convergence of random processes and limit theorems in probability theory. Teor. Veroyatnost. i Primenen. 1, 177–238 (1956). (in Russian)

Rachev, S.T.: Probability Metrics and the Stability of Stochastic Models. Wiley Series in Probability and Mathematical Statistics: Applied Probability and Statistics. Wiley, Chichester (1991)

Schubert, E., Lenssen, L., Muhr, D.: k-medoids clustering with the FasterPAM algorithm. https://github.com/kno10/python-kmedoids

Schubert, E., Rousseeuw, P.J.: Fast and eager \(k\)-medoids clustering: \(O(k)\) runtime improvement of the PAM, CLARA, and CLARANS algorithms. Inf. Syst. 101, # 101804 (2021)

Villani, C.: Topics in Optimal Transportation. Graduate Studies in Mathematics, vol. 58. American Mathematical Society, Providence (2003)

Acknowledgements

This work was in part supported by he Centre for Topological Data Analysis, EPSRC Grant EP/R018472/1, and by the Dioscuri Program initiated by the Max Planck Society, jointly managed with the National Science Centre (Poland), and mutually funded by the Polish Ministry of Science and Higher Education and the German Federal Ministry of Education and Research. We thank Gesine Reinert and Sayan Mukherjee for valuable discussions and Davide Gurnari for providing help with the experiments. Finally, we thank the anonymous referees for their helpful suggestions.

Author information

Authors and Affiliations

Corresponding author

Additional information

Editor in Charge: Kenneth Clarkson

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Dłotko, P., Hellmer, N. Bottleneck Profiles and Discrete Prokhorov Metrics for Persistence Diagrams. Discrete Comput Geom 71, 1131–1164 (2024). https://doi.org/10.1007/s00454-023-00498-w

Received:

Revised:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s00454-023-00498-w