Abstract

In the Edge Bipartization problem one is given an undirected graph G and an integer k, and the question is whether k edges can be deleted from G so that it becomes bipartite. Guo et al. (J Comput Syst Sci 72(8):1386–1396, 2006) proposed an algorithm solving this problem in time \(\mathcal {O}(2^k\cdot {m}^2)\); today, this algorithm is a textbook example of an application of the iterative compression technique. Despite extensive progress in the understanding of the parameterized complexity of graph separation problems in recent years, no significant improvement upon this result has yet been reported. We present an algorithm for Edge Bipartization that works in time \(\mathcal {O}(1.977^k\cdot {nm})\), which is the first algorithm whose running time dependence on the parameter is better than \(2^k\). To this end, we combine the general iterative compression strategy of Guo et al. (2006), the technique proposed by Wahlström (in: Proceedings of SODA’14, SIAM, 2014) of using a polynomial-time solvable relaxation in the form of a Valued Constraint Satisfaction Problem to guide a bounded-depth branching algorithm, and an involved Measure&Conquer analysis of the recursion tree.


1 Introduction

The Edge Bipartization problem asks, for a given graph G and integer k, whether one can turn G into a bipartite graph using at most k edge deletions. Together with its close relative Odd Cycle Transversal (OCT), where one deletes vertices instead of edges, Edge Bipartization was one of the first problems shown to admit a fixed-parameter (FPT) algorithm using the technique of iterative compression. In a breakthrough paper [28] that introduces this methodology, Reed et al. showed how to solve OCT in time \(\mathcal {O}(3^k\cdot {kmn})\). In fact, this was the first FPT algorithm for OCT. Following this, Guo et al. [14] applied iterative compression to show fixed-parameter tractability of several closely related problems. Among other results, they designed an algorithm for Edge Bipartization with running time \(\mathcal {O}(2^k\cdot {m}^2)\). Today, both the algorithms of Reed et al. and of Guo et al. are textbook examples of the iterative compression technique [5, 11].

Iterative compression is in fact a simple idea that boils down to an algorithmic usage of induction. In case of Edge Bipartization, we introduce edges of G one by one, and during this process we would like to maintain a solution F to the problem, i.e., \(F\subseteq E(G)\) is such that \(|F|\le k\) and \(G-F\) is bipartite. When the next edge e is introduced to the graph, we observe that \(F\cup \{e\}\) is a solution of size at most \(k+1\), that is, at most one too large. Then the task reduces to solving Edge Bipartization Compression: given a graph G and a solution that exceeds the budget by at most one, we are asked to find a solution that fits into the budget.

Surprisingly, this simple idea leads to great algorithmic gains, as it reduces the matter to a cut problem. Guo et al. [14] showed that a simple manipulation of the instance reduces Edge Bipartization Compression to the following problem that we call Terminal Separation: We are given an undirected graph G with a set \(\mathcal {T}\) of \(k+1\) disjoint pairs of terminals, where each terminal is of degree 1 in G. The question is whether one can color one terminal of every pair white and the second black in such a way that the minimum edge cut between white and black terminals is at most k. Thus, the algorithm of Guo et al. [14] boils down to trying all the \(2^{k+1}\) colorings of terminals and solving a minimum edge cut problem. For \(\textsc {OCT} \), we similarly have a too large solution \(X\subseteq V(G)\) of size \(k+1\), and we are looking for a partition of X into (L, R, Z), where the size of the minimum vertex cut between L and R in \(G-Z\) is at most \(k-|Z|\). Thus it suffices to solve \(3^{k+1}\) instances of a flow problem.
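The brute-force strategy for Terminal Separation described above (try every coloring of the terminal pairs and run a minimum edge cut computation for each) can be sketched as follows. This is a minimal illustration and not the implementation of Guo et al.: the function names, the edge-list representation with vertices numbered 0..n-1, and the plain augmenting-path flow routine are all assumptions made for the example.

```python
from collections import deque
from itertools import product


def min_edge_cut(n, edges, sources, sinks, limit):
    """Size of a minimum edge cut between two vertex sets, capped at limit + 1.

    `edges` is a list of undirected unit-capacity edges (u, v); parallel
    edges are allowed. Vertices n and n + 1 are auxiliary (hypothetical)
    super-source and super-sink.
    """
    s, t = n, n + 1
    cap = {}
    adj = [[] for _ in range(n + 2)]

    def add(u, v, c):
        # An undirected edge gets capacity c in both directions.
        for a, b in ((u, v), (v, u)):
            if (a, b) not in cap:
                cap[(a, b)] = 0
                adj[a].append(b)
            cap[(a, b)] += c

    for u, v in edges:
        add(u, v, 1)
    big = len(edges) + 1  # effectively infinite
    for x in sources:
        add(s, x, big)
    for x in sinks:
        add(x, t, big)

    flow = 0
    while flow <= limit:
        parent = {s: None}
        queue = deque([s])
        while queue and t not in parent:
            a = queue.popleft()
            for b in adj[a]:
                if b not in parent and cap[(a, b)] > 0:
                    parent[b] = a
                    queue.append(b)
        if t not in parent:
            return flow  # no augmenting path: flow equals the minimum cut
        b = t
        while parent[b] is not None:  # push one unit along the path found
            a = parent[b]
            cap[(a, b)] -= 1
            cap[(b, a)] += 1
            b = a
        flow += 1
    return flow  # the cut is larger than `limit`


def terminal_separation_bruteforce(n, edges, pairs, budget):
    """Try all colorings of the terminal pairs; return (white, black) or None."""
    for flips in product((False, True), repeat=len(pairs)):
        white = [t if f else s for (s, t), f in zip(pairs, flips)]
        black = [s if f else t for (s, t), f in zip(pairs, flips)]
        if min_edge_cut(n, edges, white, black, budget) <= budget:
            return white, black
    return None
```

Stopping the flow computation after `limit + 1` augmentations is what keeps each coloring at roughly \(\mathcal {O}(km)\) work in this sketch, since every augmentation is a single graph search.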

The search for FPT algorithms for cut problems has been one of the leading directions in parameterized complexity in recent years. Among these, Odd Cycle Transversal and Edge Bipartization play a central role; see for instance [14, 16, 18, 19, 20, 22, 25, 28] and references therein. Of particular importance is the work of Kratsch and Wahlström [22], who gave the first (randomized) polynomial kernelization algorithms for Odd Cycle Transversal and Edge Bipartization. The main idea is to encode the cut problems that arise when applying iterative compression into a matroid with a representation that takes small space. The result of Kratsch and Wahlström sparked a line of further work on applying matroid methods in parameterized complexity.

Another thriving area in parameterized complexity is the optimality program, probably best defined by Marx in [27]. The goal of this program is to systematically investigate the optimum complexity of algorithms for parameterized problems by proving possibly tight lower and upper bounds. For the lower bounds methodology, the standard complexity assumptions used are the Exponential Time Hypothesis (ETH) and the Strong Exponential Time Hypothesis (SETH). In recent years, the optimality program has achieved a number of successes. For instance, under the assumption of SETH, we now know the precise bases of exponents for many classical problems parameterized by treewidth [7, 8, 23]. To explain the complexity of fundamental parameterized problems for which natural algorithms are based on dynamic programming on subsets, Cygan et al. [3] introduced a new hypothesis resembling SETH, called the Set Cover Conjecture (SeCoCo). See [24, 27] for more examples.

For our techniques, the most important is the line of work of Guillemot [13], Cygan et al. [9], Lokshtanov et al. [25], and Wahlström [30] that developed a technique for designing parameterized algorithms for cut problems called LP-guided branching. The idea is to use the optimum solution to the linear programming relaxation of the considered problem in order to measure progress. Namely, during the construction of a candidate solution by means of a backtracking process, the algorithm achieves progress not only when the budget for the size of the solution decreases (as is usual in branching algorithms), but also when the lower bound on the optimum solution increases. Using this concept, Cygan et al. [9] showed a \(2^k n^{\mathcal {O}(1)}\)-time algorithm for Node Multiway Cut. Lokshtanov et al. [25] further refined this technique and applied it to improve the running times of algorithms for several important cut problems. In particular, they obtained a \(2.3146^k n^{\mathcal {O}(1)}\)-time algorithm for Odd Cycle Transversal, which was the first improvement upon the classic \(\mathcal {O}(3^k\cdot {kmn})\)-time algorithm of Reed et al. [28]. From the point of view of the optimality program, this showed that the base 3 of the exponent was not the final answer for Odd Cycle Transversal.

In works [9, 25] it was essential that the considered linear programming relaxation is half-integral, which restricts the applicability of the technique. Recently, Wahlström [30] proposed to use stronger relaxations in the form of certain polynomial-time solvable Valued Constraint Satisfaction Problems (VCSPs). Using this idea, he was able to show efficient FPT algorithms for the node and edge deletion variants of Unique Label Cover, for which natural LP relaxations are not half-integral.

Despite substantial progress on the node deletion variant, for Edge Bipartization there has been no improvement since the classic algorithm of Guo et al. [14] that runs in time \(\mathcal {O}(2^k\cdot {m}^2)\). The main technical contribution of Lokshtanov et al. [25] is a \(2.3146^k n^{\mathcal {O}(1)}\)-time algorithm for Vertex Cover parameterized by the excess above the value of the LP relaxation (VC-above-LP); the algorithm for OCT is a corollary of this result due to folklore reductions from OCT to VC-above-LP via the Almost 2-SAT problem. Thus the algorithm for OCT in fact relies on the LP relaxation for the Vertex Cover problem, which has very strong combinatorial properties; in particular, it is half-integral. No such simple and at the same time strong relaxation is available for Edge Bipartization. The natural question stemming from the optimality program, whether the \(2^k\) term for Edge Bipartization could be improved, was asked repeatedly in the parameterized complexity community, for example by Daniel Lokshtanov at WorKer’13 [6], repeated later at [4].

1.1 Our Results and Techniques

In this paper we answer this question in the affirmative by proving the following theorem.

Theorem 1.1

Edge Bipartization can be solved in time \(\mathcal {O}(1.977^k\cdot {nm})\).

Thus, the 2 in the base of the exponent is not the ultimate answer for Edge Bipartization.

To prove Theorem 1.1, we pursue the approach proposed by Guo et al. [14] and use iterative compression to reduce solving Edge Bipartization to solving Terminal Separation (see Sect. 2 for a formal definition of the latter). This problem has two natural parameters: \(|\mathcal {T}|\), the number of terminal pairs, and p, the bound on the size of the cut between white and black terminals. The approach of Guo et al. is to use a simple \(\mathcal {O}(2^{|\mathcal {T}|}\cdot pm)\) algorithm that tries all colorings of terminal pairs and computes the size of a minimum cut between the colors.

The observation that is crucial to our approach is that one can express Terminal Separation as a very restricted instance of the Edge Unique Label Cover problem. More precisely, in this setting the task is to assign each vertex of G a label from \(\{\mathbf {A},\mathbf {B}\}\). Pairs of \(\mathcal {T}\) present hard (of infinite cost) inequality constraints between the labels of terminals involved, while edges of G present soft (of unit cost) equality constraints between the endpoints. The goal is to minimize the cost of the labeling, i.e., the number of soft constraints broken. An application of the results of Wahlström [30] (with the further improvements of Iwata et al. [17] regarding linear dependency on the input size) immediately gives an \(\mathcal {O}(4^p\cdot {m})\) algorithm for Terminal Separation.

Thus, we have in hand two substantially different algorithms for \({\textsc {Terminal}} {\textsc {Separation}}\). If we plug in \(|\mathcal {T}|=k+1\) and \(p=k\), as is the case in the instance that we obtain from Edge Bipartization Compression, then we obtain running times \(\mathcal {O}(2^k\cdot {km})\) and \(\mathcal {O}(4^k\cdot {m})\), respectively. The idea now is that these two algorithms present two complementary approaches to the problem, and we would like to combine them to solve the problem more efficiently. To this end, we need to explain more about the approach of Wahlström [30].

The algorithm of Wahlström [30] is based on measuring the progress by means of the optimum solution to the relaxation of the problem in the form of a Valued CSP instance. In our case, this relaxation has the following form: We assign each vertex a label from \(\{\bot ,\mathbf {A},\mathbf {B}\}\), where \(\bot \) is an additional marker that should be thought of as not yet decided. The hard constraints have zero cost only for labelings \((\mathbf {A},\mathbf {B})\), \((\mathbf {B},\mathbf {A})\) and \((\bot ,\bot )\), and infinite cost otherwise. The soft constraints have cost 0 for equal labels on the endpoints, 1 for unequal from \(\{\mathbf {A},\mathbf {B}\}\), and \(\frac{1}{2}\) when exactly one endpoint is assigned \(\bot \). Based on previous results of Kolmogorov et al. [21], Wahlström observed that this relaxation is polynomial-time solvable, and moreover it is persistent: whenever the relaxation assigns \(\mathbf {A}\) or \(\mathbf {B}\) to some vertex, then it is safe to perform the same assignment in the integral problem (i.e., only with the “integral” labels \(\mathbf {A},\mathbf {B}\)). The algorithm constructs an integral labeling by means of a backtracking process that fixes the labels of consecutive vertices of the graph. During this process, it maintains an optimum solution to the relaxation that is moreover maximal, in the sense that one cannot extend the current labeling by fixing integral labels on some undecided vertices without increasing the cost. This can be done by dint of persistence and polynomial-time solvability: we can check in polynomial time whether a non-trivial extension exists, and then it is safe to fix the labels of vertices that get decided. Thus, when the algorithm considers the next vertex u and branches into two cases, fixing label \(\mathbf {A}\) or \(\mathbf {B}\) on it, the optimum cost of the relaxation increases by at least \(\frac{1}{2}\) in each branch. 
Hence the recursion tree can be pruned at depth 2p, and we obtain a \(4^p n^{\mathcal {O}(1)}\)-time algorithm.

Our algorithm for Terminal Separation applies a similar branching strategy, where at each point we maintain some labeling of the vertices with \(\mathbf {A}\), \(\mathbf {B}\), and \(\bot \) (undecided). Every terminal pair is either already resolved [assigned \((\mathbf {A},\mathbf {B})\) or \((\mathbf {B},\mathbf {A})\)], or unresolved [assigned \((\bot ,\bot )\)]. Using the insight of Wahlström we can assume that this labeling is maximal. Intuitively, we look at the unresolved pairs from \(\mathcal {T}\) and try to identify a pair (s, t) for which branching into labelings \((\mathbf {A},\mathbf {B})\) and \((\mathbf {B},\mathbf {A})\) leads to substantial progress. Here, we measure the progress in terms of a potential \(\mu \) that is a linear combination of three components:

- t, the number of unresolved terminal pairs;
- k, the current budget for the cost of the sought integral solution;
- \(\nu \), the difference between k and the cost of the current solution to the relaxation.

These ingredients are taken with weights \(\alpha _t = 0.59950\), \(\alpha _\nu = 0.29774\), and \(\alpha _k = 1-\alpha _t-\alpha _\nu = 0.10276\). Thus, the largest weight is put on the progress measured in terms of the number of resolved terminal pairs. Indeed, we want to argue that if we can identify a possibility of recursing into two instances, where in each of them at least one new terminal pair gets resolved, but in one of them we resolve two terminal pairs, then we can pursue this branching step.

Therefore, we are left with the following situation: when branching on any terminal pair, only this terminal pair gets resolved in both branches. Then the idea is to find a branching step where the decrease of the auxiliary components of the potential, namely \(\nu \) and k, is significant enough to ensure the promised running time of the algorithm. Here we apply an extensive combinatorial analysis of the instance to show that finding such a branching step is always possible. In particular, our analysis can end up with a branching not on a terminal pair, but on the label of some other vertex; however, we make sure that in both branches some terminal pair gets eventually resolved. Also, in some cases we localize a part of the input that can be simplified (a reduction step), and then the analysis is restarted.

To sum up, we would like to highlight two aspects of our contribution. First, we answer a natural question stemming from the optimality program, showing that \(2^k\) is not the final dependency on the parameter for Edge Bipartization. Second, our algorithm can be seen as a “proof of concept” that the LP-guided branching technique, even in the more abstract variant of Wahlström [30], can be combined with involved Measure&Conquer analysis of the branching tree. Note that in the past Measure&Conquer and related techniques led to rapid progress in the area of moderately-exponential algorithms [12].

We remark that the goal of the current paper is to improve the \(2^k\) factor, not to optimize the dependence of the running time on the input size. However, we do estimate it. Using the tools prepared by Iwata, Wahlström, and Yoshida [17], we are able to implement the algorithm so that it runs in time \(\mathcal {O}(1.977^k\cdot {nm})\). Naively, this seems like an improvement over the algorithm of Guo et al. [14], which had a quadratic dependence on m; however, this is not the case. Namely, we use the recent approximation algorithm for Edge Bipartization of Kolay et al. [19] that in time \(\mathcal {O}(k^{\mathcal {O}(1)}\cdot {m})\) either returns a solution \(F^{\text {apx}}\) of size \(\mathcal {O}(k^2)\), or correctly concludes that there is no solution of size k. Then we start iterative compression from \(G-F^{\text {apx}}\) and introduce edges of \(F^{\text {apx}}\) one by one, so we need to solve the Terminal Separation problem only \(\mathcal {O}(k^2)\) times. In our case each iteration takes time \(\mathcal {O}(1.977^k\cdot {nm})\), whereas for the approach of Guo et al. it would take time \(\mathcal {O}(2^k\cdot {km})\). Thus, by using the same idea based on [19], the algorithm of Guo et al. can be adjusted to run in time \(\mathcal {O}(2^k\cdot {k}^3m)\). It is just that the newer algorithm of Kolay et al. [19] was not known at the time of writing [14].

1.2 Organization of the Paper

In Sect. 2 we give background on iterative compression and the VCSP-based tools borrowed from [17, 30]. In particular, we formally introduce the Terminal Separation problem and reduce solving Edge Bipartization to it. In Sect. 3 we set up the Measure&Conquer machinery that will be used by our branching algorithm, and we introduce preliminary reductions. In Sect. 4 we prove some auxiliary results on low excess sets, which are the key technical notion used in our combinatorial analysis. Finally, we present the whole algorithm in Sect. 5. Section 6 is devoted to some concluding remarks and open problems.

2 Preliminaries

2.1 Graph Notation

For all standard graph notation, we refer to the textbook of Diestel [10]. For the input instance (G, k) of Edge Bipartization, we denote \(n = |V(G)|\) and \(m = |E(G)|\). As isolated vertices are irrelevant for the Edge Bipartization problem, we assume that G does not contain any such vertices, and hence \(n = \mathcal {O}(m)\).

2.2 Cuts and Submodularity

As edge cuts in a graph are the main topic of this work, let us introduce some convenient notation. In all graphs in this paper we allow multiple edges, but not loops, as they are irrelevant for the problem. For a graph G and two disjoint vertex sets \(A,B \subseteq V(G)\), by \(E_G(A,B)\) we denote the set of edges with one endpoint in A and the second endpoint in B. If any of the sets A or B is a singleton, say \(A = \{a\}\), we write \(E_G(a,B)\) instead of \(E_G(\{a\},B)\). We drop the subscript if the graph G is clear from the context.

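For a set \(A \subseteq V(G)\), we denote \(d(A) = |E(A,V(G) {\setminus } A)|\). It is well known that the \(d(\cdot )\) function is submodular, that is, for every \(A,B \subseteq V(G)\) it holds that

\[ d(A) + d(B) \ge d(A \cup B) + d(A \cap B). \tag{1} \]

In fact, a study of the proof of (1) allows us to state the difference in the inequality:

\[ d(A) + d(B) = d(A \cup B) + d(A \cap B) + 2|E(A {\setminus } B, B {\setminus } A)|. \tag{2} \]

Since \(d(\cdot )\) is symmetric (i.e., \(d(A) = d(V(G) {\setminus } A)\)), by applying (1) to A and the complement of B, we obtain a property sometimes called posimodularity: for every \(A,B \subseteq V(G)\) it holds that

\[ d(A) + d(B) \ge d(A {\setminus } B) + d(B {\setminus } A). \tag{3} \]

Or, with the error term:

\[ d(A) + d(B) = d(A {\setminus } B) + d(B {\setminus } A) + 2|E(A \cap B, V(G) {\setminus } (A \cup B))|. \tag{4} \]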
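The exact forms of submodularity and posimodularity are easy to test numerically. The sketch below assumes the standard identities \(d(A)+d(B) = d(A\cup B)+d(A\cap B)+2|E(A{\setminus }B,B{\setminus }A)|\) and \(d(A)+d(B) = d(A{\setminus }B)+d(B{\setminus }A)+2|E(A\cap B,V(G){\setminus }(A\cup B))|\), and checks them on random loopless multigraphs. The function names and the edge-list representation are illustrative choices, not notation from the paper.

```python
import random


def d(edges, a):
    """Number of edges with exactly one endpoint in the vertex set a."""
    return sum((u in a) != (v in a) for u, v in edges)


def between(edges, x, y):
    """Number of edges with one endpoint in x and the other in y (x, y disjoint)."""
    return sum((u in x and v in y) or (u in y and v in x) for u, v in edges)


def check_identities(n, edges, a, b):
    """Return True if both exact identities hold for the vertex sets a and b."""
    everything = set(range(n))
    lhs = d(edges, a) + d(edges, b)
    sub = d(edges, a | b) + d(edges, a & b) + 2 * between(edges, a - b, b - a)
    posi = (d(edges, a - b) + d(edges, b - a)
            + 2 * between(edges, a & b, everything - (a | b)))
    return lhs == sub == posi


def random_check(trials=200, n=6, m=10, seed=0):
    """Test the identities on random multigraphs (parallel edges, no loops)."""
    rng = random.Random(seed)
    for _ in range(trials):
        edges = [tuple(rng.sample(range(n), 2)) for _ in range(m)]
        a = {v for v in range(n) if rng.random() < 0.5}
        b = {v for v in range(n) if rng.random() < 0.5}
        if not check_identities(n, edges, a, b):
            return False
    return True
```

Both identities follow by classifying each edge according to which of the four regions \(A{\setminus }B\), \(B{\setminus }A\), \(A\cap B\) and \(V(G){\setminus }(A\cup B)\) contain its endpoints, which is also why they remain valid in the presence of parallel edges.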

2.3 Iterative Compression and the Compression Variant

Let (G, k) be an input Edge Bipartization instance. The opening step of our algorithm is the standard usage of iterative compression. We start by applying the approximation algorithm of [19] that, given (G, k), in time \(\mathcal {O}(k^{\mathcal {O}(1)} m)\) either correctly concludes that it is a no-instance, or produces a set \(Z \subseteq E(G)\) of size \(\mathcal {O}(k^2)\) such that \(G-Z\) is bipartite.

Let Z be the obtained set, \(|Z| = r = \mathcal {O}(k^2)\) and \(Z = \{e_1,e_2,\ldots ,e_r\}\). Let \(G_i = G-\{e_{i+1},e_{i+2},\ldots ,e_r\}\) for \(0 \le i \le r\); note that \(G_r = G\) while \(G_0 = G-Z\), which is bipartite. Our algorithm, iteratively for \(i=0,1,\ldots ,r\), computes a solution \(X_i\) to the instance \((G_i,k)\), or concludes that no such solution exists. Clearly, since \(G_i\) is a subgraph of G, if we obtain the latter conclusion for some i, we can report that (G, k) is a no-instance.

For \(i=0\), \(G_0=G-Z\) is bipartite, thus \(X_0 = \emptyset \) is a solution. Consider now an instance \((G_i,k)\) for \(1 \le i \le r\), and assume that a solution \(X_{i-1}\) has already been computed. Let \(X_i' = X_{i-1} \cup \{e_i\}\). If \(|X_i'| \le k\), we can take \(X_i = X_i'\) and continue. Otherwise, we can make use of the structural insight given by the set \(X_i'\) and solve the following problem.

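Edge Bipartization Compression

Input: A graph G, an integer k, and a set \(X' \subseteq E(G)\) of size \(k+1\) such that \(G-X'\) is bipartite.

Goal: Find a set \(X \subseteq E(G)\) of size at most k such that \(G-X\) is bipartite, or conclude that no such set exists.

If we can efficiently solve an Edge Bipartization Compression instance \((G_i,k,X_i')\), we can take the output solution as \(X_i\) and proceed to the next iteration. Consequently, it suffices to prove the following theorem.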
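The iteration over \(G_0, G_1, \ldots , G_r\) can be sketched as a short driver loop. In the sketch below, edges are referred to by their index in an edge list so that parallel edges stay distinguishable, and `brute_force_compress` is only a hypothetical stand-in for the compression routine: it tries all small edge subsets and ignores the oversized solution it is given, so it illustrates the control flow rather than the running time.

```python
from itertools import combinations


def is_bipartite(n, edges):
    """Two-color the graph on vertices 0..n-1 by depth-first search."""
    adj = [[] for _ in range(n)]
    for u, v in edges:
        adj[u].append(v)
        adj[v].append(u)
    color = [None] * n
    for root in range(n):
        if color[root] is not None:
            continue
        color[root] = 0
        stack = [root]
        while stack:
            u = stack.pop()
            for v in adj[u]:
                if color[v] is None:
                    color[v] = 1 - color[u]
                    stack.append(v)
                elif color[v] == color[u]:
                    return False
    return True


def brute_force_compress(n, edges, present, k, too_big):
    """Stand-in compression step: try every subset of at most k present edges.

    `too_big` (the solution of size k + 1) is unused here; a real
    compression routine would exploit it.
    """
    for size in range(k + 1):
        for cand in combinations(sorted(present), size):
            rest = [edges[i] for i in present if i not in cand]
            if is_bipartite(n, rest):
                return set(cand)
    return None


def iterative_compression(n, edges, k, z, compress=brute_force_compress):
    """Reintroduce the edges of Z one by one, keeping a solution of size <= k.

    `z` is a set of edge indices such that deleting them leaves a bipartite
    graph. Returns a set of edge indices, or None for a no-instance.
    """
    present = set(range(len(edges))) - set(z)  # edge set of G_0 = G - Z
    solution = set()                           # X_0 is empty
    for i in sorted(z):
        present.add(i)                         # G_i = G_{i-1} plus e_i
        candidate = solution | {i}             # X_i' = X_{i-1} plus e_i
        if len(candidate) <= k:
            solution = candidate
            continue
        solution = compress(n, edges, present, k, candidate)
        if solution is None:
            return None  # G_i is a subgraph of G, so G has no solution either
    return solution
```

For example, on the complete graph on four vertices with Z chosen as the three edges avoiding vertex 0, the loop returns two edge indices for k = 2 and reports a no-instance for k = 1.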

Theorem 2.1

Edge Bipartization Compression can be solved in time \(\mathcal {O}(c^k nm)\) for some constant \(c < 1.977\).

2.4 The Terminal Separation Problem

Following the algorithm of [14], we phrase Edge Bipartization Compression as a separation problem.

Consider a graph G with a family \(\mathcal {T}\) of pairs of terminals in G. A pair (A, B) with \(A,B \subseteq V(G)\) is a terminal separation if \(A \cap B = \emptyset \) and, for every terminal pair P, either one of the terminals in P belongs to A and the second to B, or \(P \subseteq V(G) {\setminus } (A \cup B)\). A terminal separation (A, B) is integral if \(A \cup B = V(G)\). A terminal separation \((A',B')\) extends (A, B) if \(A \subseteq A'\) and \(B \subseteq B'\). The cost of a terminal separation (A, B) is defined as \(c(A,B) = (d(A) + d(B))/2\). Note that if (A, B) is integral, then we have \(c(A,B) = d(A) = d(B)\).

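We will solve the following separation problem.

Terminal Separation

Input: A graph G, a family \(\mathcal {T}\) of pairwise disjoint terminal pairs in G, a terminal separation \((A^\circ ,B^\circ )\), and an integer k.

Goal: Find an integral terminal separation (A, B) that extends \((A^\circ ,B^\circ )\) and has cost at most k, or conclude that no such separation exists.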

Lemma 2.2

Given an Edge Bipartization Compression instance \((G,k,X')\), one can in polynomial time compute an equivalent instance \((G',\mathcal {T},(A^\circ ,B^\circ ),k')\) of Terminal Separation, such that \(|E(G')| = |E(G)| + \mathcal {O}(|X'|)\), \(|V(G')| = |V(G)| + \mathcal {O}(|X'|)\), \(|\mathcal {T}| = |X'|\), \(A^\circ =B^\circ = \emptyset \), and \(k' = k\).

Proof

Let \(G'\) be the graph obtained from G by replacing every edge uv in \(X'\) with two new vertices s, t and two pendant edges us, vt. Let \(\mathcal {T}\) be the set of vertex pairs \(\{s,t\}\) created this way, \(A^\circ =B^\circ =\emptyset , k'=k\). We show this constructed instance is equivalent to the original instance.

If the constructed instance is a yes-instance, let A, B be an integral terminal separation of cost at most k. Take \(X=E(A,B)\), and then, for every edge in X incident to a terminal s, replace this edge with uv, where uv is the edge of \(X'\) for which the terminal pair containing s was created. We claim X is a solution to the original instance (clearly \(|X| \le |E(A,B)| \le k\)). Indeed, let \(L',R'\) be a bipartition of \(G-X'\). We show that \((L'\cap A) \cup (R' \cap B), (R'\cap A) \cup (L' \cap B)\) gives a bipartition of \(G-X\). Suppose that, to the contrary, there is an edge uv in \(G-X\) with both endpoints in \((L'\cap A) \cup (R' \cap B)\) (the case of \((R'\cap A) \cup (L' \cap B)\) being symmetrical). Since all edges with one endpoint in \(A\cap V(G)\) and the other in \(B \cap V(G)\) were deleted by X, we may assume uv is an edge with both endpoints in \(L' \cap A\) (the case of \(R' \cap B\) being symmetrical).

Since \(L'\) is one side of a bipartition of \(G-X'\), uv must be an edge in \(X'\). Let us, vt be the corresponding edges to terminals created by the construction. Since both u and v are in A and exactly one of s, t is in A (as (A, B) is a terminal separation), one of us, vt must be an edge in E(A, B). Hence uv belongs to X, which contradicts the choice of uv as an edge of \(G-X\).

For the other direction, if the original instance is a yes-instance, let X be a set of at most k edges such that \(G-X\) has a bipartition L, R. By taking X to be minimal, we can assert that every edge in X has both endpoints on one side of this bipartition. Let A contain all vertices in \((L \cap L') \cup (R \cap R')\), and let B contain all vertices of \((L \cap R')\cup (R \cap L')\). For every edge uv in \(X'\), add the corresponding terminal vertices s, t to A and B so that s is in the same set as u and t is in the other one. Clearly (A, B) is an integral terminal separation extending \((\emptyset ,\emptyset )\); it suffices to show that \(|E_{G'}(A,B)| \le |X|\).

Let \(e \in E_{G'}(A,B)\). If neither endpoint of e is a terminal, then let \(e=uv\) for \(u\in (L \cap L') \cup (R \cap R')\) and \(v\in (L \cap R')\cup (R \cap L')\). Since all edges with both endpoints in \(L'\) were in \(X'\) and hence replaced by edges to terminals in \(G'\), it cannot be that both u and v are in \(L'\). The same holds for \(R'\), so let us assume that \(u\in L'\) and \(v\in R'\) (the other case is symmetrical). Then \(u\in (L\cap L')\) and \(v\in (L \cap R')\), which means both u and v are on the same side of the (L, R) bipartition of \(G-X\) but on different sides of the \((L',R')\) bipartition of \(G-X'\). Hence \(e\in X {\setminus } X'\).

Otherwise, assume that some endpoint of e is a terminal. Recall that we have defined A and B in such a way that the edge us for a terminal pair \(\{s,t\}\) is never cut. Hence, let \(e=vt\), and without loss of generality assume that \(v \in (L \cap L') \cup (R \cap R')\). (Here we keep the notation that s, t are the two terminals whose edges us and vt replace the edge uv of \(X'\) in the construction.) In particular \(v\in A\), so \(t\in B\). By construction of the separation (A, B), it must be that s is on the same side as u and t is on the other side, so \(s,u\in A\). Since u is not a terminal, \(u,v\in (L \cap L') \cup (R \cap R')\). Hence \(uv \in X' = E_G(L',L')\cup E_G(R',R')\) implies that u and v are on the same side of the \((L',R')\) partition, thus also on the same side of the (L, R) partition and hence \(uv \in X \cap X'\). Note also that of the two edges that replace uv in the construction, only e is in \(E_{G'}(A,B)\), because \(s,u \in A\).

Hence every edge in \(E_{G'}(A,B)\) is either an edge in \(X{\setminus } X'\) or an edge to a terminal uniquely corresponding to an edge in \(X \cap X'\), which implies \(|E_{G'}(A,B)| \le |X|\), concluding the other side of the proof. \(\square \)
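The construction used in the proof of Lemma 2.2 is short enough to write out. The following is a minimal sketch under assumed conventions (vertices numbered 0..n-1, the set \(X'\) given as indices into an edge list, fresh terminals numbered from n upwards); the function name is hypothetical.

```python
def to_terminal_separation(n, edges, x_prime, k):
    """Replace every edge uv of X' by two pendant edges us and vt.

    Returns (number of vertices, edge list, terminal pairs, initial
    separation, budget) of the resulting Terminal Separation instance.
    """
    # Edges outside X' are kept unchanged.
    new_edges = [e for i, e in enumerate(edges) if i not in x_prime]
    pairs = []
    next_id = n
    for i in sorted(x_prime):
        u, v = edges[i]
        s, t = next_id, next_id + 1  # two fresh terminals of degree one
        next_id += 2
        new_edges += [(u, s), (v, t)]
        pairs.append((s, t))
    # The initial separation is empty and the budget is unchanged.
    return next_id, new_edges, pairs, (set(), set()), k
```

Each edge of \(X'\) contributes two new vertices and a net increase of one edge, in line with the size bounds stated in the lemma.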

We say that a terminal pair P is resolved in a Terminal Separation instance \((G,\mathcal {T},(A^\circ ,B^\circ ),k)\) if \(P \subseteq A^\circ \cup B^\circ \), and unresolved otherwise (i.e., \(P \subseteq V(G) {\setminus } (A^\circ \cup B^\circ )\)). Thus, our goal is to design an efficient branching algorithm for Terminal Separation, with parameters being k, the excess in the cutset \(k - c(A^\circ ,B^\circ )\), and the number t of unresolved terminal pairs. A precise statement of the result can be found in Sect. 3, where an appropriate progress measure is defined.

2.5 LP Branching

The starting point in designing an algorithm for Terminal Separation using the aforementioned parameters is the generic LP branching framework of Wahlström [30].

Observe that one can phrase an instance of Terminal Separation as a Valued CSP instance, with vertices being variables over the domain \(\{\mathbf {A},\mathbf {B}\}\), edges being soft (unit cost) equality constraints, terminal pairs being hard (infinite or prohibitive cost) inequality constraints, while membership in \(A^\circ \) or \(B^\circ \) translates to hard unary constraints on vertices of \(A^\circ \cup B^\circ \). Observe that this Valued CSP instance is in fact a Unique Label Cover instance over binary alphabet, with additional hard unary constraints.

In a relaxed instance, we add to the domain the “do not know” value \(\bot \), and extend the cost function for an edge (equality) constraint to be 0 for both endpoints valued \(\bot \), and \(\frac{1}{2}\) for exactly one endpoint valued \(\bot \); the hard inequality constraints on terminal pairs additionally allow both terminals to be valued \(\bot \). Observe that now feasible solutions \(f: V(G) \rightarrow \{\bot ,\mathbf {A},\mathbf {B}\}\) to the so-defined instance are in one-to-one correspondence with terminal separations \((A,B) = (f^{-1}(\mathbf {A}),f^{-1}(\mathbf {B}))\), and the cost of f equals exactly \(c(f^{-1}(\mathbf {A}), f^{-1}(\mathbf {B}))\). Furthermore, as shown by Wahlström [30], the cost functions in this relaxation are bisubmodular, which implies the following two corollaries.

Theorem 2.3

(persistence [30]) Let \((G,\mathcal {T},(A^\circ ,B^\circ ),k)\) be a Terminal Separation instance, and let (A, B) be a terminal separation in G of minimum cost among separations that extend \((A^\circ ,B^\circ )\). Then there exists an integral separation \((A^*,B^*)\) that has minimum cost among all separations extending \((A^\circ ,B^\circ )\), with the additional property that \((A^*,B^*)\) extends (A, B).

We say that a terminal separation (A, B) is maximal if every other separation extending it has strictly larger cost.

Theorem 2.4

(polynomial-time solvability [17, 30]) Given a Terminal Separation instance \((G,\mathcal {T},(A^\circ ,B^\circ ),k)\) with \(c(A^\circ ,B^\circ ) \le k\), one can in \(\mathcal {O}(k^{\mathcal {O}(1)} m)\) time find a maximal terminal separation (A, B) in G that has minimum cost among all separations extending \((A^\circ ,B^\circ )\).

From Theorems 2.3 and 2.4 it follows that, while working on a Terminal Separation instance \((G,\mathcal {T},(A^\circ ,B^\circ ),k)\), we can always assume that \((A^\circ ,B^\circ )\) is a maximal separation: If that is not the case, we can obtain an extending separation (A, B) via Theorem 2.4, and set \((A^\circ ,B^\circ ) := (A,B)\); the safeness of the last step is guaranteed by Theorem 2.3.
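To make the relaxation of this section concrete, the sketch below evaluates the relaxed cost of a labeling and, for comparison, the separation cost \((d(A)+d(B))/2\) of the corresponding pair of sets. It only evaluates a given labeling and does not solve the relaxation; the label encoding and function names are assumptions made for the example.

```python
from fractions import Fraction

BOT, A, B = "bot", "A", "B"  # hypothetical encoding of the three labels


def edge_cost(x, y):
    """Soft equality constraint: 0 if equal, 1/2 if exactly one is BOT, else 1."""
    if x == y:
        return Fraction(0)
    if BOT in (x, y):
        return Fraction(1, 2)
    return Fraction(1)


def pair_feasible(x, y):
    """Hard constraint on a terminal pair in the relaxed instance."""
    return (x, y) in {(A, B), (B, A), (BOT, BOT)}


def relaxed_cost(edges, pairs, label):
    """Cost of a labeling, or None if some hard constraint is violated."""
    if not all(pair_feasible(label[s], label[t]) for s, t in pairs):
        return None
    return sum((edge_cost(label[u], label[v]) for u, v in edges), Fraction(0))


def separation_cost(edges, label):
    """c(A, B) = (d(A) + d(B)) / 2 for the sets of vertices labeled A and B."""
    def boundary(value):
        return sum((label[u] == value) != (label[v] == value) for u, v in edges)
    return Fraction(boundary(A) + boundary(B), 2)
```

An edge between an \(\mathbf {A}\)-vertex and a \(\mathbf {B}\)-vertex is counted in both \(d(A)\) and \(d(B)\), while an edge with exactly one endpoint labeled \(\bot \) is counted in only one of them; this is why the two cost functions coincide on every feasible labeling.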

We remark here that, in the course of the algorithm, we will often merge sets of vertices in the processed graph. For a nonempty set \(X \subseteq V(G)\), the operation of merging X into a vertex replaces X with a new vertex x, and replaces every edge \(uv \in E(X,V(G) {\setminus } X)\), \(u \in X\), \(v \notin X\), with an edge xv. That is, in this process we do not suppress multiple edges while identifying some vertices. However, we do suppress loops, as they are irrelevant for the problem. Consequently, we allow the graph G to have multiple edges, but not loops; we remark that both theorems cited in this section work perfectly fine in this setting as well.
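The merging operation can be sketched on an edge-list representation as follows; the function name and the convention that the caller supplies the identifier of the new vertex are assumptions made for the example.

```python
def merge(edges, group, new_vertex):
    """Merge the vertex set `group` into the single vertex `new_vertex`.

    Parallel edges created by the merge are kept; edges with both
    endpoints inside `group` would become loops and are dropped.
    """
    merged = []
    for u, v in edges:
        u2 = new_vertex if u in group else u
        v2 = new_vertex if v in group else v
        if u2 != v2:
            merged.append((u2, v2))
    return merged
```

Keeping parallel edges matters because the cost of a separation counts edges with multiplicity: for any vertex set that does not split the merged group, the value of \(d(\cdot )\) is the same before and after the merge.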

3 The Structure of the Branching Algorithm

In this section we describe the structure of the branching algorithm for Terminal Separation. Before we state the main result, we introduce the potential that will measure the progress made in each branching step.

Let \(\mathcal {I}= (G,\mathcal {T},(A^\circ ,B^\circ ),k)\) be a Terminal Separation instance, where \((A^\circ ,B^\circ )\) is a maximal terminal separation; we henceforth call such an instance maximal. We are interested in keeping track of the following partial measures:

- \(t_\mathcal {I}\) is the number of unresolved terminal pairs;
- \(\nu _\mathcal {I}= k - c(A^\circ ,B^\circ )\);
- \(k_\mathcal {I}= k\).

The \(\mathcal {O}(2^k km)\)-time algorithm used in [14] can be interpreted in our framework as an \(\mathcal {O}(2^{t_\mathcal {I}} k_\mathcal {I}m)\)-time algorithm for Terminal Separation, while the generic LP-branching algorithm for Edge Unique Label Cover of [30] can be interpreted as an \(\mathcal {O}(4^{\nu _\mathcal {I}} m)\)-time algorithm. As announced in the introduction, our main goal is to blend these two algorithms by analysing the cases where both of them perform badly.

An important insight is that all these inefficient cases happen when \(A^\circ \) and \(B^\circ \) increase their common boundary. If this is the case, a simple reduction rule is applicable that also reduces the allowed budget k; in some sense, with this reduction rule the budget k represents the yet undetermined part of the boundary between \(A^*\) and \(B^*\) in the final integral solution \((A^*,B^*)\). For this reason, we also include the budget k in the potential.

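Formally, we fix three constants \(\alpha _t = 0.59950\), \(\alpha _\nu = 0.29774\), and \(\alpha _k = 1-\alpha _t-\alpha _\nu = 0.10276\) and define a potential of an instance \(\mathcal {I}\) as

$$\begin{aligned} \mu _\mathcal {I}:= \alpha _t \cdot t_\mathcal {I}+ \alpha _\nu \cdot \nu _\mathcal {I}+ \alpha _k \cdot k_\mathcal {I}. \end{aligned}$$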

Our main technical result, proved in the remainder of this paper, is the following.

Theorem 3.1

A Terminal Separation instance \(\mathcal {I}\) can be solved in time \(\mathcal {O}(c^{\mu _\mathcal {I}} nm)\) for some \(c < 1.977\).

Observe that if \(\mathcal {I}\) is an instance output by the reduction of Lemma 2.2, then \(t_\mathcal {I}= |X'| = k+1\), \(\nu _\mathcal {I}= k\) since \(A^\circ =B^\circ =\emptyset \), and \(k_\mathcal {I}= k\). Consequently, \(\mu _\mathcal {I}< k+1\), and Theorem 1.1 follows from Theorem 3.1.
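The bound \(\mu _\mathcal {I}< k+1\) can be checked with a few lines of Python. This is a sketch under the assumption that the potential is the weighted sum \(\alpha _t t_\mathcal {I}+ \alpha _\nu \nu _\mathcal {I}+ \alpha _k k_\mathcal {I}\), which is the form suggested by the three constants summing to 1 and by Lemma 3.2; the function names are ours.

```python
ALPHA_T = 0.59950
ALPHA_NU = 0.29774
ALPHA_K = 1 - ALPHA_T - ALPHA_NU  # 0.10276


def potential(t, nu, k):
    """Assumed form of the potential: a weighted sum of the three partial measures."""
    return ALPHA_T * t + ALPHA_NU * nu + ALPHA_K * k


def initial_potential(k):
    """Potential of an instance with t = k + 1 unresolved pairs and nu = k_I = k."""
    return potential(k + 1, k, k)


if __name__ == "__main__":
    for k in (0, 1, 10, 100):
        # Since the weights sum to 1, the value equals k + ALPHA_T, below k + 1.
        print(k, initial_potential(k))
```

Because the weights sum to 1, the initial potential equals \(k + \alpha _t\), which is strictly smaller than \(k+1\).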

The algorithm of Theorem 3.1 follows a typical outline of a recursive branching algorithm. At every step, the current instance is analyzed, and either it is reduced, or some two-way branching step is performed. The potential \(\mu _\mathcal {I}\) is used to measure the progress of the algorithm and to limit the size of the branching tree.

3.1 Reductions

We use a number of reductions in our algorithm. Every reduction decreases \(|V(G)|+|\mathcal {T}|+k\), and after any application of any reduction we re-run Theorem 2.4 to ensure that the considered instance is maximal.

The first one is the trivial termination condition.

Reduction 1

(Terminator Reduction) If \(k_\mathcal {I}< 0\) or \(\nu _\mathcal {I}< 0\), then we terminate the current branch with the conclusion that there is no solution. If \((A^\circ ,B^\circ )\) is integral, return it as a solution.

Observe that if all terminals are resolved, then both \((A^\circ , V(G){\setminus } A^\circ )\) and \((V(G) {\setminus } B^\circ ,B^\circ )\) are integral separations, and one of them is of cost at most \(c(A^\circ ,B^\circ )\). Consequently, since \(\mathcal {I}\) is maximal, in fact \((A^\circ ,B^\circ )\) is integral. We infer that if the Terminator Reduction does not trigger, then there exists at least one unresolved terminal pair, i.e., \(t_\mathcal {I}> 0\).

We now provide the promised reduction of the boundary between \(A^\circ \) and \(B^\circ \).

Reduction 2

(Boundary Reduction) If there exists an edge ab with \(a \in A^\circ \), \(b \in B^\circ \), delete the edge ab and decrease k by one. If there exist two edges va, vb with \(a \in A^\circ \), \(b \in B^\circ \), and \(v \notin A^\circ \cup B^\circ \), delete both edges va and vb, and decrease k by one.

Lemma 3.2

Let \(\mathcal {I}= (G,\mathcal {T},(A^\circ ,B^\circ ),k)\) be a maximal Terminal Separation instance, and assume that the Boundary Reduction has been applied once, giving a graph \(G'\). Then \(\mathcal {I}' = (G',\mathcal {T},(A^\circ ,B^\circ ),k-1)\) is a maximal Terminal Separation instance, equivalent to \(\mathcal {I}\). Furthermore, \(\mu _{\mathcal {I}'} = \mu _\mathcal {I}- \alpha _k\).

Proof

Observe that whether (A, B) is a terminal separation extending \((A^\circ ,B^\circ )\) does not depend on the instance we are looking at: \(\mathcal {I}\) and \(\mathcal {I}'\) differ only in the edge set of the graph and the budget. For such a separation, by c(A, B) we denote its cost in \(\mathcal {I}\), and by \(c'(A,B)\) its cost in \(\mathcal {I}'\).

We claim that for any terminal separation (A, B) extending \((A^\circ ,B^\circ )\) it holds that \(c(A,B) = c'(A,B) + 1\). The claim is straightforward if an edge ab is deleted. For the second case, consider subcases depending on where the vertex v lies. If \(v \in A\), then \(d_G(A) = d_{G'}(A) + 1\) due to the missing edge vb, while if \(v \notin A\), then also \(d_G(A) = d_{G'}(A) + 1\) due to the missing edge va. Symmetrically, \(d_G(B) = d_{G'}(B)+1\), which proves the claim. Consequently, the instances \(\mathcal {I}\) and \(\mathcal {I}'\) are equivalent, and \((A^\circ ,B^\circ )\) remains a maximal separation. Furthermore, since \(c(A^\circ ,B^\circ ) = c'(A^\circ ,B^\circ )+1\), we have \(t_{\mathcal {I}'} = t_\mathcal {I}\) and \(\nu _{\mathcal {I}'} = \nu _\mathcal {I}\), hence \(\mu _{\mathcal {I}'} = \mu _\mathcal {I}- \alpha _k\). \(\square \)

It is easy to observe that the Boundary Reduction can be applied exhaustively in linear time.
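The following Python sketch illustrates one way to apply the Boundary Reduction exhaustively in a single pass over the edges, for a fixed pair \((A^\circ ,B^\circ )\). The edge-list representation and the name `boundary_reduction` are assumptions made for illustration; the sketch only mirrors the two cases of the rule.

```python
from collections import defaultdict


def boundary_reduction(edges, A, B, k):
    """Apply both cases of the Boundary Reduction until neither applies.

    `edges` is a list of (u, v) pairs of a multigraph (hypothetical
    representation). Returns the remaining edges and the decreased budget.
    """
    A, B = set(A), set(B)
    kept = []
    to_A = defaultdict(list)  # outside vertex -> its edges into A
    to_B = defaultdict(list)  # outside vertex -> its edges into B
    for u, v in edges:
        u_fixed = u in A or u in B
        v_fixed = v in A or v in B
        if (u in A and v in B) or (u in B and v in A):
            k -= 1  # first case: delete an edge between A and B
        elif u_fixed == v_fixed:
            kept.append((u, v))  # both endpoints inside one side, or both outside
        else:
            free, fixed = (v, u) if u_fixed else (u, v)
            (to_A if fixed in A else to_B)[free].append((free, fixed))
    for w in set(to_A) | set(to_B):
        a, b = to_A[w], to_B[w]
        pairs = min(len(a), len(b))  # second case applies `pairs` times at w
        k -= pairs
        kept.extend(a[pairs:])
        kept.extend(b[pairs:])
    return kept, k
```

A single pass suffices in this sketch because deleting edges does not change \(A^\circ \) or \(B^\circ \): a vertex v outside \(A^\circ \cup B^\circ \) with a edges into \(A^\circ \) and b edges into \(B^\circ \) admits exactly \(\min (a,b)\) applications of the second case, independently of the other vertices.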

In a number of reductions in this section, in a few places in the analysis of different cases in the branching algorithm, as well as in the reduction rules defined in the next section, we find a set \(X \subseteq V(G)\) of at least two vertices without any terminals, with at least one vertex of \(V(G) {\setminus } (A^\circ \cup B^\circ )\), for which we can argue that there exists an integral solution \((A^*,B^*)\) to \(\mathcal {I}\) of minimum cost such that \(X \subseteq A^*\) or \(X \subseteq B^*\). In this case, we identify X into a single vertex (that belongs to \(A^\circ \) if \(X \cap A^\circ \ne \emptyset \) and to \(B^\circ \) if \(X \cap B^\circ \ne \emptyset \)), and start from the beginning.

Note that after such a reduction \((A^\circ ,B^\circ )\) may not be a maximal separation if the contracted set X contains at least one vertex of \(A^\circ \cup B^\circ \), and we need to apply Theorem 2.4 to extend it to a maximal one. However, note that the operation of merging vertices only shrinks the space of all terminal separations, and thus the cost of \((A^\circ ,B^\circ )\) cannot decrease with such a reduction (and, consequently, \(\nu _\mathcal {I}\) cannot increase).

We now introduce four simple rules. The first one reduces clearly superfluous pieces of the graph.

Reduction 3

(Pendant Reduction) If there exists a vertex set \(X \subseteq V(G) {\setminus } (A^\circ \cup B^\circ )\) that does not contain any terminal and \(|N(X)| \le 1\), then delete X from G.

If there exists a vertex set \(X \subseteq V(G) {\setminus } (A^\circ \cup B^\circ )\) that does not contain any terminal and \(|N(X)| = 2\), then let \(\lambda \) be the size of the minimum (edge) cut between the two vertices of N(X) in G[N[X]]. If \(\lambda \le k\), then replace X with \(\lambda \) edges between the two vertices of N(X), and otherwise identify N[X] into a single vertex.

The safeness of the Pendant Reduction is straightforward: in the first case, for any integral separation (A, B) of the reduced graph, one can add X to the set A or B that contains N(X), without increasing the cost of the separation, while in the second case we can do exactly the same if N(X) belongs to the same side A or B, and otherwise we can greedily cut G[N[X]] along the minimum cut between the vertices of N(X).

Observe that an application of a Pendant Reduction does not merge two terminals and does not spoil the invariant that every terminal in G is of degree at most one. If \(|N(X)| \le 1\), then clearly the deletion of X cannot spoil this property. Otherwise, if \(|N(X)|=2\), then \(\lambda \) is at most the degree of any vertex of N(X) in G[N[X]]; in particular, if N(X) contains a terminal, then \(\lambda \le 1\) and the vertices of N(X) are not identified.

This reduction also does not decrease \(c(A^\circ ,B^\circ )\) (and thus does not increase \(\nu _\mathcal {I}\)). This is clear for \(N(X) = \emptyset \). For \(|N(X)| = 1\) or \(\lambda > k\), it can be modelled as identifying N[X] into a single vertex. Otherwise, for \(|N(X)| = 2\) and \(\lambda \le k\), it can be modelled as identifying the sides of a minimum cut in G[N[X]] between vertices of N(X) onto the corresponding elements of N(X).

Let us now argue that the Pendant Reduction can be applied efficiently.

Lemma 3.3

One can in \(\mathcal {O}(km)\) time find a set X on which the Pendant Reduction is applicable, or correctly conclude that no such set exists.

Proof

First, compute an auxiliary graph \(G'\) from G by adding a clique K on four vertices, and making K fully adjacent to \(L := A^\circ \cup B^\circ \cup \mathcal {T}\). In this manner, the size of \(G'\) is bounded linearly in the size of G, while \(G'[K \cup L]\) is three-connected. Compute the decomposition into three-connected components [2, 29], which can be done in linear time [15]. It is easy to see that the Pendant Reduction is not applicable if and only if the decomposition consists of a single bag, and otherwise any leaf bag of the decomposition different than the bag containing \(K \cup L\) equals N[X] for some X to which the Pendant Reduction is applicable. Furthermore, for such a set X with \(|N(X)|=2\), one can compute \(\min (\lambda , k+1)\) in time \(\mathcal {O}(km)\) using \(\mathcal {O}(k)\) rounds of the Ford–Fulkerson algorithm. \(\square \)

The next three reduction rules consider some special cases of how terminals can lie in the graph.

Reduction 4

(Lonely Terminal Reduction) If there exists an unresolved terminal pair \(P = \{s,t\}\) such that s is an isolated vertex, delete P from \(\mathcal {T}\) and V(G).

The safeness of the Lonely Terminal Reduction follows from the observation that in every terminal separation (A, B) of the reduced graph, we can always put t on the same side as its neighbor (if it exists) and s on the opposite side.

Reduction 5

(Adjacent Terminals Reduction) If there exist two neighboring unresolved terminals \(t_1\) and \(t_2\), then proceed as follows. If they belong to the same terminal pair, delete both of them from G and from \(\mathcal {T}\), and reduce k by one. If they belong to different terminal pairs, say \(\{s_1,t_1\}\) and \(\{s_2,t_2\}\), then delete both these terminal pairs from \(\mathcal {T}\), delete the vertices \(t_1\) and \(t_2\) from G, and add an edge \(s_1s_2\).

For safeness of the Adjacent Terminals Reduction, first recall that terminals are of degree one in G, thus \(\{t_1,t_2\}\) is a connected component of G. If they belong to the same terminal pair, the edge \(t_1t_2\) always belongs to the solution cut and can be deleted. If they belong to different terminal pairs \(\{s_1,t_1\}\) and \(\{s_2,t_2\}\), then the edge \(t_1t_2\) is cut by a solution \((A^*,B^*)\) if and only if \(s_1\) and \(s_2\) are on different sides of the solution, thus we can just as well account for it by replacing it with an edge \(s_1s_2\).

Reduction 6

(Common Neighbor Reduction) If there exists an unresolved terminal pair \(\{s,t\} \in \mathcal {T}\), such that s and t share a neighbor a, then delete both terminals from \(\mathcal {T}\) and G, and decrease k by one.

For safeness of the Common Neighbor Reduction, note that in any solution, exactly one edge as or at is cut.

It is straightforward to check in linear time if any of the last three reductions is applicable, and apply one if this is the case. It follows from maximality of \((A^\circ ,B^\circ )\) and the above safeness arguments that none of these reductions increases \(\nu _\mathcal {I}\): any potential extension (A, B) of \((A^\circ ,B^\circ )\) in the reduced graph can be translated to an extension in the original graph, with a cost larger than c(A, B) by exactly the number of times the budget k has been decreased by the reduction. Consequently, every application of any of the last three reductions decreases the potential \(\mu _\mathcal {I}\) by at least \(\alpha _t\), as each removes at least one terminal pair.

The last reduction is the following.

Reduction 7

(Majority Neighbour Reduction) If there exist two non-terminal vertices \(u,v \in V(G) {\setminus } (A^\circ \cup B^\circ )\) such that at least half of the edges incident to u have the second endpoint in v, identify u and v.

The safeness of the Majority Neighbour Reduction is straightforward: in any integral separation that puts u and v on opposite sides, changing the side of u does not increase the cost of the separation. Also, it is straightforward to find vertices u, v for which the Majority Neighbour Reduction applies and execute it in linear time. Note that, since we require \(u,v \notin A^\circ \cup B^\circ \), the considered instance remains maximal.
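A possible linear-time search for a pair to which the Majority Neighbour Reduction applies is sketched below in Python. The edge-list representation, the parameter `excluded` (standing for the terminals together with \(A^\circ \cup B^\circ \)), and the function name are assumptions of this sketch.

```python
from collections import Counter, defaultdict


def find_majority_neighbour(edges, excluded):
    """Return a pair (u, v) such that at least half of the edges incident
    to u lead to v, with both vertices outside `excluded`; None if no
    such pair exists. `edges` is a list of (u, v) pairs of a multigraph.
    """
    excluded = set(excluded)
    degree = Counter()
    multiplicity = defaultdict(Counter)  # multiplicity[u][v] = number of uv edges
    for u, v in edges:
        degree[u] += 1
        degree[v] += 1
        multiplicity[u][v] += 1
        multiplicity[v][u] += 1
    for u in degree:
        if u in excluded:
            continue
        for v, m in multiplicity[u].items():
            if v not in excluded and 2 * m >= degree[u]:
                return u, v
    return None
```

The two loops together inspect every adjacency at most twice, so the running time of the search is linear in the number of edges.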

Two more reduction rules will be introduced in Sect. 4, where we study sets \(A \supseteq A^\circ \) with small \(d(A) - d(A^\circ )\).

3.2 Branching Step

In every branching step, we identify two terminal separations \((A_1,B_1)\) and \((A_2,B_2)\) extending \((A^\circ ,B^\circ )\), and branch into two subcases; in subcase i we replace \((A^\circ ,B^\circ )\) with \((A_i,B_i)\). We always argue the correctness of a branch by showing that there exists a solution \((A^*,B^*)\) extending \((A^\circ ,B^\circ )\) of minimum cost, with the additional property that \((A^*,B^*)\) extends \((A_i,B_i)\) for some \(i=1,2\). In subcase i, we apply the algorithm of Theorem 2.4 to \((G,\mathcal {T},(A_i,B_i),k)\) to obtain a maximal separation \((A^\circ _i,B^\circ _i)\), and pass the instance \(\mathcal {I}_i = (G,\mathcal {T},(A^\circ _i,B^\circ _i),k)\) to a recursive call.

To show the running time bound for a branching step, we analyze how the measure \(\mu _\mathcal {I}\) decreases in the subcases, taking into account the reductions performed in the subsequent recursive calls. More formally, we say that a branching case fulfills a branching vector

$$\begin{aligned} [t_1,\nu _1,k_1;t_2,\nu _2,k_2] \end{aligned}$$

if, in subcase \(i=1,2\), at least \(t_i\) terminal pairs become resolved or reduced with one of the reductions, the cost of the separation \((A^\circ _i,B^\circ _i)\) grows by at least \(\nu _i/2\), and the Boundary Reduction is applied at least \(k_i\) times in the instance \((G,\mathcal {T},(A^\circ _i,B^\circ _i),k)\).

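A branching vector \([t_1,\nu _1,k_1;t_2,\nu _2,k_2]\) is good if

$$\begin{aligned} 1.977^{-\alpha _t t_1 - \alpha _\nu \nu _1/2 - \alpha _k k_1} + 1.977^{-\alpha _t t_2 - \alpha _\nu \nu _2/2 - \alpha _k k_2} < 1. \end{aligned}$$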

In other words, if in subcase \(i=1,2\), the potential \(\mu _\mathcal {I}\) of the instance \(\mathcal {I}\) decreases by \(\delta _i\), then we require that \(1.977^{-\delta _1}+1.977^{-\delta _2}<1\). A standard inductive argument for branching algorithms shows that, if in every case we perform a branching step that fulfills some good branching vector, the branching tree originating from an instance \(\mathcal {I}\) has \(\mathcal {O}(c^{\mu _\mathcal {I}})\) leaves for some \(c < 1.977\) (so that \(c^{\mu _\mathcal {I}-\delta _1}+c^{\mu _\mathcal {I}-\delta _2} \le c^{\mu _\mathcal {I}}\)). To simplify further exposition, we gather in the next lemma good branching vectors used in the analysis; the fact that they are good can be checked by direct calculations.

Lemma 3.4

The following branching vectors are good:

Let us stop here to comment that the vectors in Lemma 3.4 explain our choice of constants \(\alpha _t\), \(\alpha _\nu \), \(\alpha _k\). The constant \(\alpha _t\) is sufficiently large to make the vector [1, 1, 0; 2, 1, 0] good; intuitively speaking, we are always done when in one branch we manage to resolve or reduce at least two terminal pairs. The choice of \(\alpha _\nu \) and \(\alpha _k\) represents a very delicate tradeoff that makes both [1, 1, 1; 1, 2, 3] and [1, 2, 0; 1, 3, 1] good; note that setting \(\alpha _\nu = 1-\alpha _t\) and \(\alpha _k = 0\) makes the first vector not good, while setting \(\alpha _\nu = 0\) and \(\alpha _k = 1-\alpha _t\) makes the second vector not good (see Footnote 3). In fact, arguably the possibility of a tradeoff that makes both the second and the third vector of Lemma 3.4 good at the same time is one of the critical insights in our work.
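The direct calculations for the three vectors named above can be reproduced with the short Python sketch below. It assumes that the potential decrease in subcase i is \(\delta _i = \alpha _t t_i + \alpha _\nu \nu _i/2 + \alpha _k k_i\), as the definition of fulfilling a branching vector suggests (the cost grows by \(\nu _i/2\)); the function names are ours.

```python
def decrease(t, nu, k, a_t, a_nu, a_k):
    """Assumed potential decrease for one subcase of a branching vector."""
    return a_t * t + a_nu * nu / 2 + a_k * k


def is_good(vector, a_t=0.59950, a_nu=0.29774, a_k=0.10276, base=1.977):
    """Check base**(-delta_1) + base**(-delta_2) < 1 for a two-way vector."""
    first, second = vector
    d1 = decrease(*first, a_t, a_nu, a_k)
    d2 = decrease(*second, a_t, a_nu, a_k)
    return base ** (-d1) + base ** (-d2) < 1


if __name__ == "__main__":
    vectors = [((1, 1, 0), (2, 1, 0)),
               ((1, 1, 1), (1, 2, 3)),
               ((1, 2, 0), (1, 3, 1))]
    for vec in vectors:
        print(vec, is_good(vec))
    # The two extreme choices of (alpha_nu, alpha_k) discussed in the text:
    print(is_good(vectors[1], a_nu=1 - 0.59950, a_k=0.0))
    print(is_good(vectors[2], a_nu=0.0, a_k=1 - 0.59950))
```

Under this assumption, each of the three sums falls just below 1, which matches the remark that the choice of constants is a delicate tradeoff, and each of the two extreme settings breaks the corresponding vector.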

3.3 Running Time Bound

In the subsequent sections, we will only argue that

1. every single application of a reduction or a branching step is executed in \(\mathcal {O}(k^{\mathcal {O}(1)} m)\) time;

2. every reduction either terminates or reduces \(k + |\mathcal {T}| + |V(G)|\) by at least one; note that this is true for the reductions defined so far;

3. every branching step is correct and fulfills one of the good vectors mentioned in Lemma 3.4.

Observe that these properties guarantee correctness and the claimed running time of the algorithm.

In a number of places in the branching algorithm, the algorithm attempts some branching \((A_1,B_1),(A_2,B_2)\), and withdraws this decision if the measure decrease is too small. A naive implementation of such behaviour would lead to an additional n factor in the running time bound, as exhaustive application of our reduction rules may take \(\mathcal {O}(k^{\mathcal {O}(1)} nm)\) time, only to be later withdrawn. To maintain the \(\mathcal {O}(nm)\) polynomial factor in our running time bound, we restrict such attempts to only the following procedure: for \(i=1,2\), we apply Theorem 2.4 to obtain a minimum cost extension \((A^\circ _i,B^\circ _i)\) of \((A_i,B_i)\), and report:

1. the number of terminal pairs contained in \((A^\circ _i \cup B^\circ _i) {\setminus } (A^\circ \cup B^\circ )\), i.e., the immediate decrease in \(t_\mathcal {I}\);

2. the difference \(c(A^\circ _i,B^\circ _i) - c(A^\circ ,B^\circ )\), i.e., the immediate decrease in \(\nu _\mathcal {I}\);

3. the number of immediately applicable Boundary Reductions, defined as follows:

$$\begin{aligned} \rho _i := |E(A^\circ _i,B^\circ _i)| + \sum _{v \in V(G) {\setminus } (A^\circ _i \cup B^\circ _i)} \min (|E(v,A^\circ _i)|,|E(v,B^\circ _i)|). \end{aligned}$$

Clearly, the aforementioned numbers are computable in \(\mathcal {O}(k^{\mathcal {O}(1)}m)\) time.

4 Low Excess Sets

Let \(\mathcal {I}= (G,\mathcal {T},(A^\circ ,B^\circ ),k)\) be a maximal Terminal Separation instance. A set \(A \subseteq V(G)\) is an \(A^\circ \)-extension if \(A^\circ \subseteq A \subseteq V(G) {\setminus } B^\circ \). It is terminal-free if \(A {\setminus } A^\circ \) does not contain any terminal. We denote by \(\Delta (A) := d(A)-d(A^\circ )\) the excess of an \(A^\circ \)-extension A. An \(A^\circ \)-extension A is compact if \(A {\setminus } A^\circ \) is connected and \(E(A {\setminus } A^\circ , A^\circ ) \ne \emptyset \).
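To make the definitions concrete, the following Python sketch computes the boundary size \(d(\cdot )\) and the excess \(\Delta (A)\) on a multigraph given as an edge list. The representation and the helper names are assumptions of this sketch; the compactness and terminal-freeness checks are omitted.

```python
def d(edges, S):
    """Number of edges with exactly one endpoint in S."""
    S = set(S)
    return sum(1 for u, v in edges if (u in S) != (v in S))


def is_extension(A, A0, B0):
    """Check that A0 is a subset of A and that A avoids B0."""
    A, A0, B0 = set(A), set(A0), set(B0)
    return A0 <= A and not (A & B0)


def excess(edges, A0, A):
    """Excess of an extension A of A0: d(A) - d(A0)."""
    return d(edges, A) - d(edges, A0)


if __name__ == "__main__":
    g = [("a", "x"), ("x", "y"), ("x", "z"), ("y", "b"), ("z", "b")]
    A0, B0 = {"a"}, {"b"}
    for A in ({"a", "x"}, {"a", "x", "y"}, {"a", "x", "y", "z"}):
        print(sorted(A), is_extension(A, A0, B0), excess(g, A0, A))
```

In this toy graph every listed set is an extension of \(\{a\}\) of excess 1, since each of them has two boundary edges while \(\{a\}\) has one.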

In this section we consider extensions of small excess, and show that their structure can be reduced to a relatively simple form. While in this section we focus on supersets of the set \(A^\circ \), by symmetry the same conclusion holds if we swap the roles of \(A^\circ \) and \(B^\circ \). In our algorithm, we exhaustively apply the reduction rules defined in this section both for the A-side and B-side of the separation \((A^\circ ,B^\circ )\).

Before we start, let us first observe that we can efficiently enumerate all maximal sets of particular constant excess.

Lemma 4.1

For every fixed constant r, one can in \(\mathcal {O}(k^{\mathcal {O}(1)}(n+m))\) time enumerate all inclusion-wise maximal compact \(A^\circ \)-extensions of excess at most r.

Proof

Our algorithm will in fact enumerate all compact \(A^\circ \)-extensions A of excess at most r with the property that every compact \(A^\circ \)-extension \(A'\) with \(A \subsetneq A'\) satisfies \(\Delta (A') > \Delta (A)\). The approach closely follows the algorithm for enumerating important separators (see, e.g., [5, Chapter 8]).

By the maximality of \((A^\circ ,B^\circ )\), \(A^\circ \) is the only such extension of excess 0. We initiate a queue Q with \(Q = \{A^\circ \}\). Iteratively, while Q is nonempty, we extract an extension A from Q, and proceed as follows. For every \(v \in N(A)\), we compute a set \(A_v\) such that \(E(A_v,V(G) {\setminus } A_v)\) is a minimum cut between \(A \cup \{v\}\) and \(B^\circ \cup (\mathcal {T}{\setminus } A^\circ )\), or take \(A_v = \bot \) if for such a set \(d(A_v)\) would be larger than \(d(A^\circ )+r\). Such a set \(A_v\) can be computed using \(\mathcal {O}(k+r)\) rounds of the Ford–Fulkerson algorithm, and furthermore this computation allows us to choose \(A_v\) to be the unique inclusion-wise maximal set with the required properties.

If \(A_v \ne \bot \), we insert \(A_v\) into the queue Q. Otherwise, if \(A_v = \bot \) for every \(v \in N(A)\), then we output A as one of the desired sets. For correctness, observe that every set A in the queue has excess at most r, and the described procedure uses the definition of compactness to check if there exists any other extension of excess at most r being a strict superset of A. For the time bound, observe that whenever a set \(A_v\) is inserted into the queue, it holds that \(d(A_v) > d(A)\), while \(d(A^\circ ) \le 2k\) (because of the Terminator Reduction). Hence, \(\mathcal {O}((2k+r)^r)\) sets are inserted into the queue. Moreover, the computation for a single set A extracted from the queue takes \(\mathcal {O}(k^{\mathcal {O}(1)} (n+m))\) time. \(\square \)

We now proceed to the promised description of reductions. A straightforward corollary of the assumption that \(\mathcal {I}\) is maximal is the following.

Lemma 4.2

If A is a terminal-free \(A^\circ \)-extension of excess zero or less, then \(A = A^\circ \).

We now study extensions of excess 1.

Lemma 4.3

If A is a terminal-free \(A^\circ \)-extension of excess 1, then there exists a minimum cost integral terminal separation \((A^*,B^*)\) extending \((A^\circ ,B^\circ )\), such that \((A{\setminus } A^\circ )\) is either completely contained in \(A^*\) or completely contained in \(B^*\).

Proof

Let \((A^*, B^*)\) be a minimum cost integral terminal separation extending \((A^\circ ,B^\circ )\).

If \((A{\setminus } A^\circ )\) is completely contained in \(B^*\), then \((A^*, B^*)\) proves the claim, so let us assume the contrary: \((A {\setminus } A^\circ ) \cap A^*\ne \emptyset \). Then \(A\cap A^*\ne A^\circ \). We show that \((A^*\cup A, B^*{\setminus } A)\) is a minimum cost integral separation, proving the claim.

Indeed, since A is terminal-free, \((A^*\cup A, B^*{\setminus } A)\) is an integral terminal separation. It suffices to show that it is minimum, that is, \(d(A^*\cup A) \le d(A^*)\). By submodularity, \(d(A^*\cup A) + d(A^*\cap A) \le d(A^*) + d(A)\). Since \(A \cap A^*\) is a terminal-free \(A^\circ \)-extension and \(A \cap A^*\ne A^\circ \), by Lemma 4.2 we have \(\Delta (A \cap A^*) > 0\), which means \(d(A \cap A^*)\ge 1 + d(A^\circ )\). By assumption \(d(A) = 1+d(A^\circ )\). Taking this together, \(d(A^*\cup A) \le d(A^*) + d(A) - d(A^*\cap A) \le d(A^*)\), which concludes the proof. \(\square \)

Lemma 4.3 proves safeness of the following reduction rule.

Reduction 8

(Excess-1 Reduction) If there exists a terminal-free \(A^\circ \)-extension of excess 1 with \(|A {\setminus } A^\circ | > 1\), merge all vertices of \(A {\setminus } A^\circ \) into a single vertex.

The next lemma shows that one can apply the Excess-1 Reduction efficiently.

Lemma 4.4

Given a maximal instance \(\mathcal {I}\) for which none of the previously defined reduction rules is applicable, one can in \(\mathcal {O}(k^{\mathcal {O}(1)} (n+m))\) time find a set A for which the Excess-1 Reduction rule is applicable, or correctly conclude that no such set exists.

Proof

Let A be a terminal-free \(A^\circ \)-extension of excess 1. If \(A {\setminus } A^\circ \) is disconnected, then for any connected component C of \(A {\setminus } A^\circ \) we have that \(d(A^\circ \cup C) + d(A {\setminus } C) = d(A^\circ ) + d(A)\), hence either \(d(A^\circ \cup C) \le d(A^\circ )\) or \(d(A {\setminus } C) \le d(A^\circ )\), contradicting the maximality of \((A^\circ ,B^\circ )\). Thus, \(A {\setminus } A^\circ \) is connected. If \(E(A{\setminus } A^\circ , A^\circ )\) were empty, then \(A{\setminus } A^\circ \) would be a terminal-free set with \(d(A{\setminus } A^\circ )=1\), and would hence be deleted by the Pendant Reduction.

Consequently, every terminal-free \(A^\circ \)-extension of excess 1 is compact. We can enumerate all such inclusion-wise maximal extensions by Lemma 4.1, and apply the reduction for any such set A with \(|A {\setminus } A^\circ | > 1\). \(\square \)

We can henceforth assume that for every terminal-free \(A^\circ \)-extension A of excess 1, the set \(A {\setminus } A^\circ \) is a singleton.

We now move to an analysis of sets of excess 2.

Lemma 4.5

Assume that the Pendant Reduction and Excess-1 Reduction have been exhaustively applied. If A is a terminal-free \(A^\circ \)-extension of excess 2, then there exists a partition \(A {\setminus } A^\circ = D \uplus C_1 \uplus C_2 \uplus \cdots \uplus C_r\) for some \(r \ge 0\), such that:

1. there exists a minimum cost integral terminal separation \((A^*,B^*)\) extending \((A^\circ ,B^\circ )\), such that one of the following holds:

   - \((A {\setminus } A^\circ ) \cap A^*= \emptyset \);

   - \((A {\setminus } A^\circ ) \cap A^*= C_i\) for some \(1 \le i \le r\); or

   - \(A \subseteq A^*\).

2. for every \(1 \le i \le r\), the sets \(C_i\) and \(E(C_i,A^\circ )\) are nonempty, and \(A^\circ \cup C_i\) is a terminal-free \(A^\circ \)-extension of excess 1;

3. if \(D \ne \emptyset \), then for every \(1 \le i \le r\) the set \(E(C_i,D)\) is nonempty and \(A{\setminus }A^\circ \) is connected;

4. if \(D = \emptyset \), then \(r=2\);

5. for every \(1 \le i < j \le r\), there are no edges between \(C_i\) and \(C_j\).

Proof

Let \(C'_1,\dots ,C'_r\) be all the inclusion-wise maximal subsets of A that are \(A^\circ \)-extensions of excess 1. Let \(C_i = C'_i{\setminus } A^\circ \) and let \(D=A {\setminus } (A^\circ \cup C_1 \cup \dots \cup C_r)\). We show the claim is true for these sets. Let \(1\le i\ne j \le r\).

The Excess-1 Reduction allows us to assume that \(C_i\) is a singleton and hence \(C_i\) is disjoint from \(C_j\). Since \(\Delta (C'_i)=\Delta (C'_j)=1\) and \(\Delta (C'_i \cup C'_j) \ge 2\) (by maximality of \(C'_i\)), there are no edges between \(C_i\) and \(C_j\), proving point 5.

If \(E(C_i,A^\circ )\) were empty, then \(d(C_i)=1\) and \(C_i\) would be deleted by the Pendant Reduction; this proves point 2. If \(D\ne \emptyset \) but \(A{\setminus }A^\circ \) was disconnected, then consider a component C of \(A{\setminus }A^\circ \). Then \(\Delta (A) = \Delta (A^\circ \cup C) + \Delta (A {\setminus } C)\), hence either \(\Delta (A^\circ \cup C) = \Delta (A {\setminus } C) = 1\), which would contradict that \(D\ne \emptyset \), or one of \(A^\circ \cup C, A{\setminus } C\) has excess 0, which would contradict Lemma 4.2. Hence \(A{\setminus } A^\circ \) is connected and as there are no edges between \(C_i\) and \(C_j\), there must be edges between \(C_i\) and D, proving point 3. If \(D=\emptyset \), then \(\Delta (A) = \sum _{i=1}^r \Delta (A^\circ \cup C_i) = r\). Hence \(r=2\), proving point 4.

To prove point 1, consider a minimum cost integral terminal separation \((A^*,B^*)\). Since \(C_i\) is a singleton, it is either completely contained in \(A^*\) or disjoint from it. If \(A^*\cap (A{\setminus } A^\circ )\) is empty or equal to one of \(C_i\), the claim follows. Otherwise, \(A^*\cap (A{\setminus } A^\circ )\) contains a vertex of D or two of the \(C_i\) sets; by their maximality, the excess of \(A^*\cap A\) is then at least 2, so \(d(A^*\cap A) \ge d(A)\). By submodularity, \(d(A^*\cup A) + d(A^*\cap A) \le d(A^*) + d(A)\) and thus \(d(A^*\cup A) \le d(A^*)\). Therefore, since A is terminal-free, \((A^*\cup A, B^*{\setminus } A)\) is an integral terminal separation, concluding the proof. \(\square \)

Lemma 4.5 ensures safeness of the following reduction rule.

Reduction 9

(Excess-2 Reduction) If there exists a terminal-free \(A^\circ \)-extension A of excess 2 such that in the partition \(D \uplus C_1 \uplus \cdots \uplus C_r\) defined by Lemma 4.5, \(|D|>1\), then merge D into a single vertex.

We are left with an efficient implementation of this rule.

Lemma 4.6

Given a maximal instance \(\mathcal {I}\) for which none of the previously defined reduction rules is applicable, one can in \(\mathcal {O}(k^{\mathcal {O}(1)} (n+m))\) time find a set A for which the Excess-2 Reduction is applicable and compute the decomposition of \(A {\setminus } A^\circ \) of Lemma 4.5, or correctly conclude that no such set A exists.

Proof

Let A be a terminal-free \(A^\circ \)-extension of excess 2, and let \(D, C_1,C_2,\ldots ,C_r\) be the sets promised by Lemma 4.5 and let \(|D|>1\). The inapplicability of the Excess-1 Reduction ensures that every set \(C_i\) is a singleton, \(C_i = \{c_i\}\).

Let us first deal with the corner case in which \(r=0\) and \(E(D,A^\circ ) = \emptyset \). Then, since A is of excess 2, we have \(d(D) = 2\). However, as D does not contain any terminal, the Pendant Reduction is applicable to it.

In the remaining cases, Lemma 4.5 guarantees that A is compact. We enumerate all inclusion-wise maximal compact excess-2 extensions using Lemma 4.1. For every output extension A, we first identify the set \(C \subseteq A {\setminus } A^\circ \) of all vertices v such that \(A^\circ \cup \{v\}\) is of excess one. By Lemma 4.5, we have \(D = A {\setminus } (A^\circ \cup C)\). If \(|D| > 1\), then we can apply the reduction.

To complete the proof, note that if the Excess-2 Reduction is applicable to some compact \(A^\circ \)-extension A, then it is also applicable to any compact \(A^\circ \)-extension \(A'\) of excess 2 being a superset of A: the corresponding set D for A is a subset of the corresponding set \(D'\) for \(A'\). \(\square \)

The set D of Lemma 4.5 is often a very convenient branching pivot: putting it into \(A^\circ \) makes the boundary of \(A^\circ \) extend by two, while putting it into \(B^\circ \) triggers a number of Boundary Reductions. In the next few lemmata we summarize the properties of an excess-2 set after reductions, and the outcomes of branching on the set D.

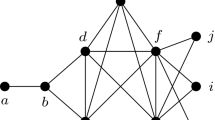

We start from a slightly more useful presentation of the properties promised by Lemma 4.5 (Fig. 1).

Lemma 4.7

Assume that no reduction is applicable, and let A be a terminal-free \(A^\circ \)-extension of excess 2. Then one can in \(\mathcal {O}(k^{\mathcal {O}(1)} m)\) time compute a decomposition \(A {\setminus } A^\circ = \{d,c_1,c_2,\ldots ,c_r\}\) for some \(r \ge 0\) or \(A {\setminus } A^\circ = \{c_1,c_2\}\) with the following properties:

1. if the vertex d exists, then A is compact and for every \(1 \le i \le r\), there are \(p_i\) edges \(dc_i\) for some \(p_i \ge 1\); we put \(p_1 = p_2 = 0\) if the vertex d does not exist;

2. for every \(1 \le i \le r\), the set \(A^\circ \cup \{c_i\}\) is an \(A^\circ \)-extension of excess 1, the vertex \(c_i\) has \(x_i+1 \ge 1\) edges towards \(V(G) {\setminus } (A \cup B^\circ )\) and \(p_i+x_i \ge 1\) edges towards \(A^\circ \), for some \(x_i \ge 0\);

3. the vertices \(c_i\) are pairwise nonadjacent;

4. the set \(A^\circ \cup \{d\}\) is an \(A^\circ \)-extension of excess larger than 1.

Proof

Most of the enumerated properties are just repetitions of the points of Lemma 4.5, after each set of the partition has been identified into a single vertex. Recall that noncompact \(A^\circ \)-extensions of excess 2 are completely reduced by the Pendant Reduction.

For the count on the number of edges incident to a vertex \(c_i\), define \(p_i\) as claimed and \(x_i := |E(c_i,V(G) {\setminus } A)|-1\); clearly \(x_i \ge -1\). Since \(A^\circ \cup \{c_i\}\) is of excess 1, and no two vertices \(c_i\) are adjacent, we have \(|E(c_i,A^\circ )| = p_i+x_i\). Furthermore, note that no edge may connect \(c_i\) and \(B^\circ \), as it would trigger a Boundary Reduction. It remains to refute the case \(x_i = -1\), i.e., \(E(c_i,V(G) {\setminus } A) = \emptyset \). In this case \(p_i + x_i = |E(c_i,A^\circ )| \ge 0\) implies \(p_i \ge 1\), so the vertex d exists. However, the Majority Neighbour Reduction then applies to \(c_i\) and d, a contradiction.

If \(A^\circ \cup \{d\}\) is an \(A^\circ \)-extension of excess at most 1, then \(r \ge 1\) as A has excess 2, but then an edge count shows that \(A^\circ \cup \{d,c_1\}\) would be an \(A^\circ \)-extension of nonpositive excess, a contradiction to the maximality of \(A^\circ \).

Finally, the decomposition of \(A{\setminus } A^\circ \) can be identified by inspecting the edges incident to every vertex \(v \in A {\setminus } A^\circ \) to check whether \(A^\circ \cup \{v\}\) is of excess 1 or larger. \(\square \)

We now investigate what happens in a branch when we put the vertex d onto the A-side.

Lemma 4.8

Assume that no reduction is applicable, and let \(A,A'\) be two terminal-free \(A^\circ \)-extensions of excess 2 with \(A \subsetneq A'\). Then \(A' {\setminus } A^\circ \) decomposes as \(\{d,c_1,c_2,\ldots ,c_r\}\) for some \(r \ge 2\), and \(A {\setminus } A^\circ \) consists of two vertices \(c_i\) of this decomposition.

Proof

If \(A' {\setminus } A^\circ = \{c_1,c_2\}\), then there is no choice for the set A, as \(A^\circ \cup \{c_i\}\) is of excess 1 for \(i=1,2\). Hence, \(A' {\setminus } A^\circ = \{d,c_1,c_2,\ldots ,c_r\}\) for some \(r \ge 1\); note that \(|A' {\setminus } A^\circ | \ge 2\) as \(A^\circ \subsetneq A \subsetneq A'\). A direct edge count using Lemma 4.7 shows that for every \(C \subseteq \{c_1,c_2,\ldots ,c_r\}\) we have \(\Delta (A^\circ \cup C) = |C|\) and \(\Delta (A^\circ \cup C \cup \{d\}) \ge 2 + (r-|C|)\). Hence, the only option to get excess 2 is to have \(A = A^\circ \cup C\) for some \(|C|=2\). \(\square \)

Lemma 4.9

Assume that no reduction is applicable, and let A be a terminal-free \(A^\circ \)-extension of excess 2 with \(A {\setminus } A^\circ = \{d,c_1,c_2,\ldots ,c_r\}\) for some \(r \ge 0\). If we furthermore consider a branch \((A_1,B_1)\) such that \(d \in A_1\), but \(A_1 {\setminus } A^\circ \) does not contain any terminal, then

1. if \(B_1\) contains at least one vertex \(c_i\), then there does not exist any minimum cost integral terminal separation \((A^*,B^*)\) extending \((A^\circ ,B^\circ )\) that also extends \((A_1,B_1)\);

2. \(d(A_1) \ge d(A^\circ ) + 2\);

3. if \(d(A_1) = d(A^\circ ) + 2\), then \(A_1 = A\).

Proof

Define \(A' := A_1 \cup A\) and \(B' := B_1 {\setminus } A\); note that \(A' {\setminus } A^\circ \) is terminal-free and \((A',B')\) is a terminal separation as well.

Observe that if \((A_1,B_1)\) is a terminal separation extending \((A^\circ ,B^\circ )\) with \(d \in A_1\) but \(c_i \notin A_1\) for some \(1 \le i \le r\), then a direct edge count from Lemma 4.7 shows that \(d(A_1 \cup \{c_i\}) < d(A_1)\), \(d(B_1 {\setminus } \{c_i\}) \le d(B_1)\), hence \(c(A_1 \cup \{c_i\}, B_1 {\setminus } \{c_i\}) < c(A_1,B_1)\). This proves the first point, and shows that \(d(A') \le d(A_1)\), \(d(B') \le d(B_1)\), thus \(c(A',B') \le c(A_1,B_1)\), and the equality holds only if \((A',B') = (A_1,B_1)\).

Since \(A \subseteq A'\), the Excess-1 Reduction is inapplicable, and \(\Delta (A) = 2\), we have \(\Delta (A') \ge 2\). Consequently, \(d(A_1) \ge d(A') \ge d(A^\circ )+2\), and \(d(A_1) = d(A^\circ )+2\) only if \(d(A_1) = d(A') = d(A^\circ )+2\). As discussed in the previous paragraph, this can only happen if \(A' = A_1\) and \(\Delta (A') = 2\). By Lemma 4.8, this implies \(A' = A\), finishing the proof of the lemma. \(\square \)

In the last lemma we study what happens in a branch when we put the vertex d onto the B-side (Fig. 2).

Lemma 4.10

Assume that no reduction is applicable, and let A be a terminal-free \(A^\circ \)-extension of excess 2 with \(A {\setminus } A^\circ = \{d,c_1,c_2,\ldots ,c_r\}\) for some \(r \ge 0\). If we consider a branch \((A_1,B_1)\) such that \(d \in B_1\), then at least one Boundary Reduction is immediately triggered. If only one is triggered, then one of the following holds:

1. \(r = 0\), \(A {\setminus } A^\circ = \{d\}\), and the vertex d is of degree four, with one incident edge having second endpoint in \(A^\circ \) and the remaining three edges having second endpoint in \(V(G) {\setminus } (A \cup B^\circ )\); or

2. \(r=1\), \(A {\setminus } A^\circ = \{d,c_1\}\), the vertex d is of degree three, with one incident edge being \(c_1d\) and the remaining two edges having second endpoint in \(V(G) {\setminus } A\), and the vertex \(c_1\) is of degree \(2x+1\) for some \(x \ge 1\), with one incident edge being \(c_1d\), x incident edges having second endpoint in \(A^\circ \), and x incident edges having second endpoint in \(V(G) {\setminus } (A \cup B^\circ )\).

Proof

In the branch \((A_1,B_1)\), a Boundary Reduction is immediately triggered for every edge in \(E(d,A^\circ )\), and every vertex \(c_i\) triggers \(\min (p_i,p_i+x_i) = p_i\) Boundary Reductions. Note that \(r\ge 1\) or \(E(d,A^\circ )\ne \emptyset \), as A is compact by Lemma 4.7. Hence at least one reduction is triggered. If only one reduction is triggered, then \(|E(d,A^\circ )| + \sum _{i=1}^r p_i = 1\). In particular r is either 0 or 1.

If \(r = 0\), then \(|E(d,A^\circ )| = 1\) and the assumption that A is of excess 2 implies that \(|E(d, V(G) {\setminus } A^\circ )| = 3\). No edge incident to d may have a second endpoint in \(B^\circ \), as it would trigger the Boundary Reduction together with the edge in \(E(d,A^\circ )\). Thus the first case of the claim holds.

If \(r=1\), then \(|E(d,A^\circ )| = 0\) and \(p_1=1\). Since \(c_1\) has \(p_1+x_1\) edges to \(A^\circ \) and \(x_1+1\) edges to \(V(G){\setminus } A\), the assumption that A is of excess 2 implies that d has exactly two edges to \(V(G){\setminus } A\). No edge incident to \(c_1\) can have the second endpoint in \(B^\circ \), as otherwise it would trigger the Boundary Reduction with any edge in \(E(c_1,A^\circ )\). Thus the second case of the claim holds. \(\square \)
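The two edge counts used in this proof can be written out explicitly. The following is a sketch under the assumption, inferred from how excess is used in the proof of Lemma 4.9, that \(\Delta (X) = d(X) - d(A^\circ )\) for an \(A^\circ \)-extension X; the grouping of terms is ours. Edges from \(A {\setminus } A^\circ \) to \(A^\circ \) stop being cut, edges from \(A {\setminus } A^\circ \) to \(V(G) {\setminus } A\) become cut, and the edge \(c_1d\) lies inside A and does not contribute.

$$\begin{aligned} r=0:&\quad \Delta (A) = |E(d,V(G) {\setminus } A)| - |E(d,A^\circ )| = 3 - 1 = 2,\\ r=1:&\quad \Delta (A) = \underbrace{(x_1+1) - (p_1+x_1)}_{\text {edges at } c_1} + \underbrace{|E(d,V(G) {\setminus } A)| - |E(d,A^\circ )|}_{\text {edges at } d} = (x_1+1) - (1+x_1) + 2 - 0 = 2. \end{aligned}$$

In the case \(r=1\) the contribution of \(c_1\) cancels exactly because \(p_1 = 1\), which is why the excess of A forces d to have exactly two edges to \(V(G) {\setminus } A\).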

5 The Detailed Cases of the Branching Algorithm

In this section we assume we have a maximal instance \(\mathcal {I}= (G,\mathcal {T},(A^\circ ,B^\circ ),k)\) for which none of the previously defined reduction rules is applicable. Our goal is to find a branching step that fulfils a good vector, or a set of vertices to merge (a reduction step). Recall that when we consider a branching into terminal separations \((A_1,B_1)\) and \((A_2,B_2)\) that extend \((A^\circ ,B^\circ )\), then \(t_i,\nu _i,k_i\) for \(i=1,2\) measure respectively the number of terminals resolved in branch i, two times the growth of the cost of the separation in branch i (i.e., \(2(c(A_i,B_i)-c(A^\circ ,B^\circ ))\)), and the decrease in the budget k after applying all the reduction rules when recursing into branch i.

Assume that we have identified a branching step into separations \((A_1,B_1)\) and \((A_2,B_2)\) that both extend, but are different from, \((A^\circ ,B^\circ )\). Then, from the maximality of \((A^\circ ,B^\circ )\) we infer that \(\nu _1,\nu _2 \ge 1\). Since [1, 1, 0; 2, 1, 0] is a good vector, any branching step in which in both cases we resolve or reduce at least one terminal pair, while in at least one case we resolve or reduce at least two terminal pairs, is fine for our purposes.

5.1 Basic Branching and Reductions

Let \(\mathcal {T}' \subseteq \mathcal {T}\) be the set of unresolved terminal pairs (not in \(A^\circ \cup B^\circ \)). For every terminal pair \(\{s,t\}\in \mathcal {T}'\), we apply the algorithm of Theorem 2.4 twice: once for terminal separation \((A^\circ \cup \{s\},B^\circ \cup \{t\})\), and the second time for terminal separation \((A^\circ \cup \{t\},B^\circ \cup \{s\})\). In this manner we obtain two maximal terminal separations \((A_s,B_t)\) and \((A_t,B_s)\) that extend \((A^\circ \cup \{s\},B^\circ \cup \{t\})\) and \((A^\circ \cup \{t\},B^\circ \cup \{s\})\) respectively. Of course, the number of unresolved pairs decreases by at least one in both \((A_s,B_t)\) and \((A_t,B_s)\), due to resolving \(\{s,t\}\). If the number of unresolved pairs either in \((A_s,B_t)\) or in \((A_t,B_s)\) decreases by more than one, then, as we argued, performing a branching step \((A_1,B_1)=(A_s,B_t)\) and \((A_2,B_2)=(A_t,B_s)\) leads to the branching vector [1, 1, 0; 2, 1, 0] or a better one, which is good. We can test in \(\mathcal {O}(k^{\mathcal {O}(1)} m)\) time whether this holds for any pair \(\{s,t\}\in \mathcal {T}'\), and if so then we pursue the branching step.

Branching step 1

If in either \((A_s,B_t)\) or in \((A_t,B_s)\), more than one terminal pair gets resolved, then perform branching into \((A_1,B_1)=(A_s,B_t)\) and \((A_2,B_2)=(A_t,B_s)\).

Hence, if this branching step cannot be performed, then we assume the following:

Assumption 1

For every pair \(\{s,t\}\in \mathcal {T}'\) in both \((A_s,B_t)\) and \((A_t,B_s)\) only the pair \(\{s,t\}\) gets resolved.
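The test behind Branching step 1 can be sketched as a loop over the unresolved pairs. The following Python sketch is illustrative only: `maximal_separation` is a hypothetical stand-in for the algorithm of Theorem 2.4 (its real interface is not specified here), and a pair is counted as resolved once both of its terminals lie in \(A \cup B\), which is our reading of the definition rather than a quotation of it.

```python
from typing import Callable, FrozenSet, Iterable, Optional, Tuple

Side = FrozenSet[str]
Separation = Tuple[Side, Side]
Pair = Tuple[str, str]


def resolved_pairs(sep: Separation, pairs: Iterable[Pair]) -> int:
    """Count pairs whose two terminals both lie in A ∪ B (assumed meaning of 'resolved')."""
    covered = sep[0] | sep[1]
    return sum(1 for s, t in pairs if s in covered and t in covered)


def find_branching_step_1(
    base: Separation,
    unresolved: Iterable[Pair],
    maximal_separation: Callable[[Separation], Separation],  # hypothetical oracle
) -> Optional[Tuple[Separation, Separation]]:
    """Return (A_s, B_t), (A_t, B_s) for the first pair where some branch
    resolves more than one pair, or None if Assumption 1 holds."""
    a0, b0 = base
    pairs = list(unresolved)
    for s, t in pairs:
        # Branch 1 puts s on the A-side and t on the B-side; branch 2 swaps them.
        sep_st = maximal_separation((a0 | {s}, b0 | {t}))
        sep_ts = maximal_separation((a0 | {t}, b0 | {s}))
        if max(resolved_pairs(sep_st, pairs), resolved_pairs(sep_ts, pairs)) > 1:
            return sep_st, sep_ts
    return None
```

If the function returns `None`, every branch resolves exactly the pair it was started from, which is the situation described by Assumption 1.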

We now proceed with some structural observations about the instance at hand.

Lemma 5.1

\(G[A_s{\setminus } A^\circ ]\), \(G[A_t{\setminus } A^\circ ]\), \(G[B_s{\setminus } B^\circ ]\), \(G[B_t{\setminus } B^\circ ]\) are connected.

Proof

We prove the statement for \(G[A_s{\setminus } A^\circ ]\), since the other statements are symmetric. Suppose \(G[A_s{\setminus } A^\circ ]\) is disconnected, and let C be any of its connected components that does not contain s. Then C is terminal-free, so by the maximality of \((A^\circ ,B^\circ )\) we infer that \(d(C\cup A^\circ )>d(A^\circ )\). But then \(d(A_s{\setminus } C)<d(A_s)\), which contradicts the optimality of \((A_s,B_t)\). \(\square \)

Lemma 5.2

Let \(\{s,t\}\in \mathcal {T}'\), and let \((A_s,B_t)\) and \((A_t,B_s)\) be any optimum-cost terminal separations extending \((A^\circ \cup \{s\},B^\circ \cup \{t\})\) and \((A^\circ \cup \{t\},B^\circ \cup \{s\})\), respectively. Suppose that \((A_s,B_t)\) and \((A_t,B_s)\) do not resolve any terminal pair apart from \(\{s,t\}\). Then for any set A with \(A^\circ \cup \{s\}\subseteq A\subseteq V(G){\setminus } B^\circ \) that has only s among the terminals of \(\mathcal {T}'\), it holds that \(\Delta (A)\ge \Delta (A_s)\). Symmetrically, for any set B with \(B^\circ \cup \{s\}\subseteq B\subseteq V(G){\setminus } A^\circ \) that has only s among the terminals of \(\mathcal {T}'\), it holds that \(\Delta (B)\ge \Delta (B_s)\).

Proof

We prove only the first claim, as the second one is symmetric. Let A be such a set, and for the sake of contradiction suppose \(\Delta (A)<\Delta (A_s)\). Then \(d(A)+d(B_t)<2c(A_s,B_t)\). However, from posimodularity of cuts it follows that either \(d(B_t{\setminus } A)+d(A)\le d(B_t)+d(A)\) or \(d(B_t)+d(A{\setminus } B_t)\le d(B_t)+d(A)\). Both \((A,B_t{\setminus } A)\) and \((A{\setminus } B_t,B_t)\) are terminal separations that extend \((A^\circ \cup \{s\},B^\circ \cup \{t\})\), and one of them has strictly smaller cost than \((A_s,B_t)\). This is a contradiction with the optimality of \((A_s,B_t)\). \(\square \)
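The argument above rests on posimodularity of the cut function, \(d(X{\setminus } Y)+d(Y{\setminus } X)\le d(X)+d(Y)\), and later proofs also use submodularity, \(d(X\cap Y)+d(X\cup Y)\le d(X)+d(Y)\). As a sanity check, the following Python sketch verifies both inequalities by brute force on a small multigraph. It assumes that \(d(X)\) counts the edges with exactly one endpoint in X, with parallel edges counted separately; the function names and the toy graph are ours.

```python
from itertools import combinations


def cut_size(edges, X):
    """d(X): number of edges with exactly one endpoint in X."""
    return sum((u in X) != (v in X) for u, v in edges)


def all_subsets(vertices):
    """Yield every subset of the vertex set as a frozenset."""
    vs = list(vertices)
    for size in range(len(vs) + 1):
        for combo in combinations(vs, size):
            yield frozenset(combo)


def check_cut_inequalities(vertices, edges):
    """Check posimodularity and submodularity of d for all pairs of subsets."""
    subsets = list(all_subsets(vertices))
    for X in subsets:
        for Y in subsets:
            bound = cut_size(edges, X) + cut_size(edges, Y)
            # Posimodularity: d(X \ Y) + d(Y \ X) <= d(X) + d(Y)
            if cut_size(edges, X - Y) + cut_size(edges, Y - X) > bound:
                return False
            # Submodularity: d(X ∩ Y) + d(X ∪ Y) <= d(X) + d(Y)
            if cut_size(edges, X & Y) + cut_size(edges, X | Y) > bound:
                return False
    return True


# A 5-cycle with a doubled chord, given as a list so parallel edges are kept.
toy_edges = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 0), (0, 2), (0, 2)]
print(check_cut_inequalities(range(5), toy_edges))
```

The check enumerates all \(2^n \cdot 2^n\) pairs of subsets, so it is only meant for toy instances.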

5.1.1 Pushing \(A_s\) and \(B_s\)

The problem that we will soon face is that separations \((A_s,B_t)\) and \((A_t,B_s)\) are not uniquely defined. For instance, there can be some set of vertices \(Z\subseteq A_s{\setminus } A^\circ \) that could be moved from \(A_s\) to \(B_t\) without changing the cost of the separation. We now make an adjustment of these separations so that we can assume that \(A_s\), resp. \(B_s\), is maximal. For this, we need the following technical results.

Lemma 5.3

Suppose that \((A_s,B_t)\) and \((A_s',B_t')\) are maximal terminal separations of minimum cost among separations that extend \((A^\circ \cup \{s\},B^\circ \cup \{t\})\). Suppose further that they do not resolve any other terminal pair from \(\mathcal {T}'\). Then

(a) \(d(A_s)=d(A_s')\) and \(d(B_t)=d(B_t')\);

(b) \((A_s\cap A_s',B_t\cup B_t')\) and \((A_s\cup A_s',B_t\cap B_t')\) are also terminal separations of minimum cost among separations that extend \((A^\circ \cup \{s\},B^\circ \cup \{t\})\);

(c) \(A_s\cup B_t=A_s'\cup B_t'\).

Proof

(a) Let \(C=c(A_s,B_t)=c(A_s',B_t')\) be the minimum cost of a terminal separation extending \((A^\circ \cup \{s\},B^\circ \cup \{t\})\). Suppose w.l.o.g. that \(d(A_s)<d(A_s')\); then we have that \(d(B_t)>d(B_t')\). By posimodularity, we have that

$$d(A_s{\setminus } B_t') + d(B_t'{\setminus } A_s) \le d(A_s) + d(B_t'). \tag{5}$$

Observe that \((A_s{\setminus } B_t',B_t')\) is a terminal separation that extends \((A^\circ \cup \{s\},B^\circ \cup \{t\})\), and hence

$$d(A_s{\setminus } B_t') + d(B_t') \ge 2C. \tag{6}$$

Symmetrically, by considering terminal separation \((A_s,B_t'{\setminus } A_s)\) we obtain that

$$d(A_s) + d(B_t'{\setminus } A_s) \ge 2C. \tag{7}$$

Thus, from (5), (6), and (7) we obtain that

$$4C \le 2\big (d(A_s) + d(B_t')\big ) < 2\big (d(A_s') + d(B_t')\big ) = 4C,$$

which is a contradiction.

(b) Observe that \(d(A_s\cap A_s')\ge d(A_s)\), because otherwise \(A_s\) could have been replaced with \(A_s\cap A_s'\) in separation \((A_s,B_t)\). By submodularity of cuts we have that \(d(A_s\cap A_s')+d(A_s\cup A_s')\le d(A_s)+d(A_s')\), and hence \(d(A_s\cup A_s')\le d(A_s')=d(A_s)\). By posimodularity, we have that

$$d((A_s\cup A_s'){\setminus } B_t) + d(B_t{\setminus } (A_s\cup A_s')) \le d(A_s\cup A_s') + d(B_t) \le d(A_s) + d(B_t) = 2C.$$

On the other hand, for terminal separation \(((A_s\cup A_s') {\setminus } B_t,B_t)\) we have that

$$d((A_s\cup A_s'){\setminus } B_t) + d(B_t) \ge 2C,$$

and for terminal separation \((A_s\cup A_s',B_t{\setminus } (A_s\cup A_s'))\) we have that

$$d(A_s\cup A_s') + d(B_t{\setminus } (A_s\cup A_s')) \ge 2C,$$

which means that all the inequalities above are in fact equalities. In particular:

- \(d(A_s\cap A_s')=d(A_s)=d(A_s\cup A_s')\), and

- \(c((A_s\cup A_s') {\setminus } B_t,B_t)=C\).

Symmetric arguments can be used to show that:

- \(d(B_t\cap B_t') = d(B_t)=d(B_t\cup B_t')\),

- \(c((A_s\cup A_s') {\setminus } B_t',B_t')=C\),

- \(c(A_s,(B_t\cup B_t'){\setminus } A_s)=C\), and

- \(c(A_s',(B_t\cup B_t'){\setminus } A_s')=C\).

Therefore, both \((A_s\cap A_s',B_t\cup B_t')\) and \((A_s\cup A_s',B_t\cap B_t')\) have cost C.

(c) For the sake of contradiction, assume that \(A_s\cup B_t\ne A_s'\cup B_t'\). Suppose first that there is an element \(u\in A_s\) such that \(u\notin A_s'\cup B_t'\). In the proof of (b) we have shown that \(c((A_s\cup A_s') {\setminus } B_t',B_t')=C\). Note that \(((A_s\cup A_s') {\setminus } B_t',B_t')\) is a terminal separation that extends \((A_s',B_t')\), and moreover its left side has at least one additional element u. Since its cost is the same as the cost of \((A_s',B_t')\), we obtain a contradiction with the maximality of \((A_s',B_t')\). \(\square \)

Lemma 5.4