Abstract

This article presents an overview of a theory for performing temporal smoothing on temporal signals in such a way that: (i) temporally smoothed signals at coarser temporal scales are guaranteed to constitute simplifications of corresponding temporally smoothed signals at any finer temporal scale (including the original signal) and (ii) the temporal smoothing process is both time-causal and time-recursive, in the sense that it does not require access to future information and can be performed with no other temporal memory buffer of the past than the resulting smoothed temporal scale-space representations themselves. For specific subsets of parameter settings for the classes of linear and shift-invariant temporal smoothing operators that obey this property, it is shown how temporal scale covariance can be additionally obtained, guaranteeing that if the temporal input signal is rescaled by a uniform temporal scaling factor, then also the resulting temporal scale-space representations of the rescaled temporal signal will constitute mere rescalings of the temporal scale-space representations of the original input signal, complemented by a shift along the temporal scale dimension. The resulting time-causal limit kernel that obeys this property constitutes a canonical temporal kernel for processing temporal signals in real-time scenarios when the regular Gaussian kernel cannot be used, because of its non-causal access to information from the future, and we cannot additionally require the temporal smoothing process to comprise a complementary memory of the past beyond the information contained in the temporal smoothing process itself, which in this way also serves as a multi-scale temporal memory of the past. We describe how the time-causal limit kernel relates to previously used temporal models, such as Koenderink’s scale-time kernels and the ex-Gaussian kernel. 
We also give an overview of how the time-causal limit kernel can be used for modelling the temporal processing in models for spatio-temporal and spectro-temporal receptive fields, and how it more generally has a high potential for modelling neural temporal response functions in a purely time-causal and time-recursive way, which can also handle phenomena at multiple temporal scales in a theoretically well-founded manner. We detail how this theory can be efficiently implemented for discrete data, in terms of a set of recursive filters coupled in cascade. Hence, the theory is generally applicable for both: (i) modelling continuous temporal phenomena over multiple temporal scales and (ii) digital processing of measured temporal signals in real time. We conclude by stating implications of the theory for modelling temporal phenomena in biological, perceptual, neural and memory processes by mathematical models, as well as implications regarding the philosophy of time and perceptual agents. Specifically, we propose that for A-type theories of time, as well as for perceptual agents, the notion of a non-infinitesimal inner temporal scale of the temporal receptive fields has to be included in representations of the present. The inherent nonzero temporal delay of such time-causal receptive fields then implies a need for incorporating predictions from the actual time-delayed present in the layers of a perceptual hierarchy, so that a representation of the perceptual present can constitute a representation of the environment with timing properties closer to the actual present.


1 Introduction

When processing time-dependent measurement signals, there is often a need to perform temporal smoothing prior to more refined data analysis. A commonly stated general motivation for this need is to suppress measurement noise, often based on the assumption that there is a well-defined underlying noise-free signal that has been corrupted with some amount of measurement noise.

A more fundamental way of addressing the need for temporal smoothing of temporal signals is to follow a multi-scale approach, based on the observation that measurements performed on real-world data may reflect different types of temporal structures at different temporal scales. In other words, even for the underlying noise-free signal in the above signal+noise model, the data may reflect different types of underlying physical or biological processes at different temporal scales. The measurement process itself, by which a non-infinitesimal amount of energy needs to be integrated over some non-infinitesimal temporal duration on the physical sensor, in this respect defines an inner temporal scale of the measurements: temporal phenomena that occur faster than this inner temporal scale cannot be resolved. Any real-world physical measurement thus involves an inherent notion of temporal scale.Footnote 1

Specifically, in the areas of image processing, computer vision, machine listeningFootnote 2 and computational modelling of visual and auditory perception, this need is well understood. It has led to multi-scale approaches for spatial, spatio-temporal and spectro-temporal receptive fields, expressed in terms of multi-scale representations over the spatial, spectral and temporal domains. Specifically, the theoretical framework known as scale-space theory rests on axiomatic derivations of how the multi-scale processing operations should be performed (Iijima 1962; Witkin 1983; Koenderink 1984; Koenderink and van Doorn 1987, 1992; Lindeberg 1993b, 1994, 2011, 2013b; Florack 1997; Sporring et al. 1997; Weickert et al. 1999; ter Haar Romeny 2003). It has also been found that biological perception, memory and cognition have developed biological processes at multiple temporal scales (DeAngelis et al. 1995; DeAngelis and Anzai 2004; Gütig and Sompolinsky 2006; Gentner 2008; Holcombe 2009; Goldman 2009; Gauthier et al. 2012; Atencio and Schreiner 2012; Chait et al. 2015; Teng et al. 2016; Buzsáki and Llinás 2017; Tsao et al. 2018; Osman et al. 2018; Latimer et al. 2019; Bright et al. 2020; Cavanagh et al. 2020; Monsa et al. 2020; Spitmaan et al. 2020; Howard and Hasselmo 2020; Howard 2021; Guo et al. 2021; Miri et al. 2022); see Sect. 7.3 for a more detailed retrospective review.

The subject of this article is to describe a theoretical framework for representing temporal signals at multiple temporal scales. It is intended for a more general audience without background in these areas, and it focuses on the temporal domain only. The complementary spatial and spectral domains, with which this theory has previously been combined to express spatio-temporal and spectro-temporal receptive fields (Lindeberg and Fagerström 1996; Lindeberg 1997a, 2016, 2017, 2018a, b, 2021b; Lindeberg and Friberg 2015b, a), are thus left out. This theoretical framework, referred to as temporal scale-space theory, guarantees non-creation of new temporal structures with increasing temporal scales. That is, it ensures that a temporal representation at any coarser temporal scale constitutes a simplification of a temporal representation at any finer temporal scale, in the sense that the number of local temporal extrema (alternatively, the number of temporal zero-crossings) is guaranteed not to increase from finer to coarser temporal scales.

Additionally, these temporal scale-space representations are time-causal, in the sense that they do not require access to future data. They are also time-recursive, in the sense that the temporal representation at the next temporal moment can be computed with no other memory of the past than the temporal scale-space representation itself. For a specific choice of temporal scale-space kernel, referred to as the time-causal limit kernel, the temporal scale-space representations are also scale covariant, meaning that the set of temporal scale-space representations is closed under temporal rescalings of the input. A rescaling of the input signal by a uniform scaling factor then merely corresponds to a rescaling of the temporal scale-space representations, complemented by a shift of the temporal scale levels in the temporal scale-space representation. In this way, the temporal scale-space representation provides an internally consistent way of processing temporal signals that may be subject to temporal scaling transformations, caused by phenomena or events that occur faster or slower in the world.

A main purpose of this article is to describe this theory in a self-contained manner, without need for the reader to digest the original references, where the information is distributed over several papers, and may require a substantial effort for a reader not previously familiar with this framework, to get an updated view of the latest version of this theory.Footnote 3 Furthermore, we will describe explicit relations to other previously used temporal models, such as Koenderink’s scale-time kernels (Koenderink 1988) and the ex-Gaussian model (Grushka 1972; Bright et al. 2020), making it possible to transfer modelling results from those temporal models to the time-causal limit kernel described in this article.

We will also relate the presented temporal scale-space theory to other approaches for processing signals at multiple temporal scales, such as wavelet analysis and time-frequency analysis. Specifically, we will outline how the temporal derivatives of the proposed time-causal limit kernel allow for fully time-causal and time-recursive wavelet analysis methods. These methods need no additional temporal buffering and thus enable minimal temporal response times in a time-critical context. We will also outline how a complex-valued extension of the proposed time-causal limit kernel can be seen as a time-causal analogue of Gabor functions. Such an extension captures essentially similar transformations of temporal signals as the family of Gabor functions, and thereby provides a way to define a scale-covariant time-frequency representation over a time-causal temporal domain. With a slight modification, this time-frequency representation can also be implemented in terms of strictly time-recursive operations.

Additionally, we will describe implications of using this theory for modelling perceptual, neural and memory processes in biological systems by mathematical models, as well as implications of the theory with regard to the philosophy of time and perceptual agents. Specifically, we will argue that when modelling a perceptual representation of the present, it is essential to include the inner temporal scales of the perceptual processes that lead to any percept, where the inherent temporal delays of such time-causal operations imply that a representation of the present will de facto constitute a representation of some temporal intervals in the past, unless complemented by prediction processes to enable better timing properties of a perceptual agent that interacts with a dynamic world.

1.1 Structure of this article

This paper is organized as follows: Sect. 2 introduces the problem of constructing a temporal scale-space representation, as constituting a multi-scale representation of temporal signals, with the property that a measure of the amount of structure in the signal, quantified as the number of local extrema over time, must not increase from any finer to any coarser temporal scale. A complete classification of the time-causal convolution kernels that enable this property is given, and it is shown that the only possible time-causal scale-space kernels over a continuous temporal domain consist of truncated exponential kernels coupled in cascade.

Section 3 then adds a complementary condition on this structure, in terms of temporal scale covariance. This condition means that if the temporal input signal is rescaled by a uniform temporal scaling factor, then the result of temporal scale-space filtering with this kernel should also be a mere rescaling of the result of performing temporal scale-space filtering on the original input signal. The rescaling may be complemented by a shift along the temporal scale axis and possibly also by a complementary change in the magnitude of the signal. It is shown that a specific kernel, the time-causal limit kernel, defined from an infinite convolution of truncated exponential kernels in cascade with specially chosen time constants, obeys temporal scale covariance. We also show how this time-causal limit kernel relates to previously used temporal models, such as Koenderink’s scale-time kernels and the ex-Gaussian kernel.

In Sect. 4, we complement the above treatment for continuous signals with a corresponding discrete theory, ensuring that the number of local extrema in a discrete signal is also guaranteed not to increase from any finer to any coarser temporal scale. The discrete analogues of the truncated exponential kernels are first-order recursive filters coupled in cascade. Section 5 furthermore generalizes the above theory from temporal smoothing of a raw temporal signal to the computation of temporal scale-space derivatives, which measure the amount of change in the signal at any level of temporal scale. Section 6 outlines how the proposed temporal scale-space representation is related to other approaches for handling temporal signals at multiple temporal scales, specifically wavelet analysis and time-frequency analysis. It also presents conceptual extensions of these notions to strictly time-causal and time-recursive operations for real-time applications.

Section 7 describes how this general theory can be used for modelling time-dependent processes and mechanisms in perceptual and neural systems, with emphasis on spatio-temporal and spectro-temporal receptive fields as well as temporal memory processes. Section 8 outlines more general implications of the theory with regard to the philosophy of time and how time is handled by a perceptual agent. Specifically, we argue that the inner temporal scale associated with any biophysical measurement of time-dependent phenomena implies that a non-infinitesimal inner temporal scale needs to be included in a representation of the perceptual present. We also argue that the nonzero temporal delay of such time-causal kernels implies that a biophysical representation of the present will de facto constitute a representation of what has occurred over some temporal intervals in the past. This in turn implies a need for prediction mechanisms that extrapolate the de facto time-delayed representation of the present into a better predicted representation of the actual present.

Section 9 gives a retrospective historic overview of the different parts of temporal scale-space theory that this paper is based on, follows and extends, as well as a conceptual overview of some of the main contributions to temporal scale-space theory made in this article. Finally, Sect. 10 summarizes some of the main results.

Given any input signal \(f(t)\), the main idea of a scale-space representation is to create a set of derived signals \(L(t;\; \tau )\) that represent the information in the original signal at a set of coarser levels of scale \(\tau \), with \(L(t;\; 0) = f(t)\). These derived signals should preferably constitute true simplifications of each other, in such a way that the signal at a coarser level of scale does not contain more structures or information than the signal at any finer level of scale. Over spatial image domains, the notion of scale-space representation has been extensively studied. Several axiomatic derivations have shown that the Gaussian kernel and its corresponding Gaussian derivatives constitute a canonical class of convolution kernels for generating a spatial scale-space representation, and these kernels have also been demonstrated to constitute a suitable basis of image primitives for computing different types of features from spatial image data. In this paper, we develop the associated notion of temporal scale-space theory, based on two additional constraints: (i) the temporal scale-space kernels are not allowed to access information from the future relative to any time moment, and (ii) the computations should be possible to perform in a purely time-recursive manner, implying no other need for a temporal memory of the past than the temporal scale-space representation itself. Furthermore, we add a complementary requirement of (iii) temporal scale covariance. This requirement means that under temporal scaling variations of the input, the temporal scale-space representations should constitute mere temporal rescalings of the temporal scale-space representation computed from the original temporal signal before the temporal rescaling operation, complemented by a shift along the temporal scale axis.

2 Time-causal and time-recursive scale-space model for temporal signals

The problem that we consider is that we are given a temporal signal f(t) and want to define a set of successively smoothed temporal scale-space representations \(L(t;\; \tau )\) for different values of a temporal scale parameter \(\tau \ge 0\), as schematically illustrated in Fig. 1. We will throughout this treatment assume linearity and translational shift covariance. These assumptions imply that the transformation from the original signal \(f :{\mathbb {R}}\rightarrow {\mathbb {R}}\) to the temporal scale-space representation \(L :{\mathbb {R}}\times {\mathbb {R}}_+ \rightarrow {\mathbb {R}}\) is given by convolution with some one-parameter family of scale-dependent convolution kernels \(h :{\mathbb {R}}\times {\mathbb {R}}_+ \rightarrow {\mathbb {R}}\):

$$L(t;\; \tau ) = (h(\cdot ;\; \tau ) * f)(t) = \int _{u=-\infty }^{\infty } h(u;\; \tau )\, f(t - u)\, du. \tag{1}$$

A crucial condition on this family of temporal scale-space representations is that the temporal scale-space representation \(L(t;\; \tau _2)\) at any coarser temporal scale \(\tau _2\) should correspond to a simplification of the temporal scale-space representation \(L(t;\; \tau _1)\) at any finer temporal scale \(\tau _1\).

Following (Lindeberg 1990), we shall measure this simplification property in terms of the number of local extrema in the signal at any temporal scale, and define a scale-space kernel as a kernel that obeys the property that the number of local extrema in the signal after convolution is guaranteed to not exceed the number of local extrema prior to the convolution operation, with the important qualifier that this property should hold for any input signal. Equivalently, this property can also be expressed by measuring the number of zero-crossings before and after the convolution operation. A scale-space kernel \(h(t;\; \tau )\) is referred to as a temporal scale-space kernel (Lindeberg and Fagerström 1996) if it additionally satisfies \(h(t;\; \tau ) = 0\) for \(t < 0\), meaning that it does not require access to the future relative to any time moment.

To make the scale simplification property from finer to coarser temporal scales hold, we will assume that the family of temporal smoothing kernels \(h(u;\ \tau )\) obeys the following cascade smoothing propertyFootnote 4

$$h(\cdot ;\; \tau _2) = (\varDelta h)(\cdot ;\; \tau _1 \mapsto \tau _2) * h(\cdot ;\; \tau _1) \tag{2}$$

for any pair of temporal scales \((\tau _1, \tau _2)\) with \(\tau _2 > \tau _1\) and for some family of transformation kernels \((\varDelta h)(t;\; \tau _1 \mapsto \tau _2)\). We then obtain a temporal scale-space representation if and only if the transformation kernel \((\varDelta h)(t;\; \tau _1 \mapsto \tau _2)\) between adjacent temporal scale levels \(\tau _1\) and \(\tau _2\) is always a temporal scale-space kernel.
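The extrema-counting criterion can be made concrete with a small experiment. The sketch below smooths a noise signal through a cascade of discrete first-order recursive filters, which the article names as the discrete analogue of the truncated exponential kernel (Sect. 4). It then checks that the number of local extrema never increases from one level to the next. The update form `out[n] = out[n-1] + (x[n] - out[n-1]) / (1 + mu)`, the helper names `count_local_extrema` and `recursive_filter`, and the chosen time constants are assumptions made for this illustration.

```python
import random

def count_local_extrema(x):
    """Count local extrema of a sampled signal as sign changes of its first
    difference; zero differences (plateaus) are skipped."""
    diffs = [b - a for a, b in zip(x, x[1:]) if b != a]
    return sum(1 for d0, d1 in zip(diffs, diffs[1:]) if (d0 > 0) != (d1 > 0))

def recursive_filter(x, mu):
    """Assumed discrete first-order recursive filter with time constant mu
    (in samples), initialised with the first input sample."""
    out, state = [], x[0]
    for value in x:
        state += (value - state) / (1.0 + mu)
        out.append(state)
    return out

random.seed(0)
signal = [random.gauss(0.0, 1.0) for _ in range(500)]
levels = [signal]
for mu in [0.5, 1.0, 2.0, 4.0]:
    # Cascade: each level is obtained by smoothing the previous, finer level.
    levels.append(recursive_filter(levels[-1], mu))

counts = [count_local_extrema(level) for level in levels]
print(counts)  # non-increasing from finer to coarser levels
```

The check has to hold for any input, not just for noise. This is why the count is taken between every pair of adjacent levels rather than only between the first and last.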

2.1 Classification of scale-space kernels for continuous signals

A fundamental question with regard to smoothing of temporal signals concerns what convolution kernels satisfy the conditions of being scale-space kernels.

2.1.1 Complete classification of continuous scale-space kernels

Interestingly, the class of one-dimensional scale-space kernels can be completely classified based on classical results by Schoenberg (1930, 1946, 1947, 1948, 1950, 1953, 1988); see also the excellent monograph by Karlin (1968). Summarizing the treatment in (Lindeberg 1993b, Sect. 3.5; 2016, Sect. 3.2), a continuous smoothing kernel is a scale-space kernel if and only if it has a bilateral Laplace-Stieltjes transform of the form (Schoenberg 1950)

$$\int _{\xi =-\infty }^{\infty } e^{-s \xi }\, h(\xi )\, d\xi = C\, e^{\gamma s^2 + \delta s} \prod _{i=1}^{\infty } \frac{e^{a_i s}}{1 + a_i s} \tag{3}$$

for \(-c< \text{ Re }(s) < c\) and some \(c > 0\), where \(C \ne 0\), \(\gamma \ge 0\), \(\delta \) and \(a_i\) are real and \(\sum _{i=1}^{\infty } a_i^2\) is convergent.

2.1.2 Basic classes of primitive scale-space kernels over a continuous signal domain

Interpreted over the temporal domain,Footnote 5 this result means that, beyond trivial rescaling and translation, there are two main classes of one-dimensional scale-space kernels:

- convolution with Gaussian kernels

  $$h(\xi ) = e^{-\gamma \xi ^2}, \tag{4}$$

- convolution with truncated exponential functions

  $$h(\xi ) = \left\{ \begin{array}{lcl} e^{- |\lambda | \xi } &{} &{} \xi \ge 0, \\ 0 &{} &{} \xi < 0, \end{array} \right. \quad \quad h(\xi ) = \left\{ \begin{array}{lcl} e^{|\lambda | \xi } &{} &{} \xi \le 0, \\ 0 &{} &{} \xi > 0, \end{array} \right. \tag{5}$$

  for some strictly positive \(|\lambda |\).

Moreover, the result means that a continuous smoothing kernel is a scale-space kernel if and only if it can be decomposed into a cascaded convolution of these primitives.

2.2 Time-causal temporal scale-space kernels over continuous temporal domain

Among the above primitive smoothing kernels, we recognize the Gaussian kernel, which is a good and natural temporal smoothing kernel to use when analysing pre-recorded signals in offline scenarios. When analysing temporal signals in a real-time situation, or when modelling biological processes that operate in real time, we cannot, however, use a temporal smoothing kernel that requires access to information in the future relative to any time moment.

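For building a time-causal temporal scale-space representation, the truncated exponential kernels are therefore the only possible primitive time-causal temporal smoothing kernels (Lindeberg and Fagerström 1996)

$$h_{\text{exp}}(t;\; \mu _k) = \left\{ \begin{array}{ll} \frac{1}{\mu _k}\, e^{-t/\mu _k} & t \ge 0, \\ 0 & t < 0, \end{array} \right. \tag{6}$$

where we will throughout this treatment adopt the convention of normalizing these kernels to unit \(L_1\)-norm. The Laplace transform of such a kernel is given by

$$H_{\text{exp}}(q;\; \mu _k) = \int _{t=-\infty }^{\infty } h_{\text{exp}}(t;\; \mu _k)\, e^{-qt}\, dt = \frac{1}{1 + \mu _k q}. \tag{7}$$

Coupling K such kernels in cascade leads to a composed kernel

$$h_{\text{composed}}(\cdot ;\; \mu ) = *_{k=1}^{K}\, h_{\text{exp}}(\cdot ;\; \mu _k), \tag{8}$$

having a Laplace transform of the form

$$H_{\text{composed}}(q;\; \mu ) = \prod _{k=1}^{K} \frac{1}{1 + \mu _k q}. \tag{9}$$

The temporal mean and variance of the composed kernel are

$$m_K = \sum _{k=1}^{K} \mu _k, \qquad \tau _K = \sum _{k=1}^{K} \mu _k^2. \tag{10}$$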

The temporal mean \(m_K\) is a coarse measure of the temporal delay of the time-causal temporal scale-space kernel, and the temporal variance \(\tau _K\) is a measure of the temporal duration, also referred to as the temporal scale.
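The mean and variance of the composed kernel can be cross-checked from its Laplace transform, since they are the first two cumulants of a unit-mass kernel: \(m_K = -\frac{d}{dq} \log H(0)\) and \(\tau _K = \frac{d^2}{dq^2} \log H(0)\). The sketch below does this numerically with central finite differences. The helper names and the example time constants are illustrative assumptions, and the finite-difference step is a choice made only for this check.

```python
import math

def laplace_composed(q, mus):
    """Laplace transform prod_k 1 / (1 + mu_k q) of a cascade of
    unit-L1-norm truncated exponential kernels."""
    value = 1.0
    for mu in mus:
        value /= 1.0 + mu * q
    return value

def moments_closed_form(mus):
    """Temporal mean m_K = sum(mu_k) and variance tau_K = sum(mu_k**2)."""
    return sum(mus), sum(mu * mu for mu in mus)

def moments_from_laplace(mus, h=1e-4):
    """Mean and variance as cumulants: -d/dq log H and d^2/dq^2 log H at q = 0,
    approximated here by central finite differences."""
    def log_h(q):
        return math.log(laplace_composed(q, mus))
    mean = -(log_h(h) - log_h(-h)) / (2.0 * h)
    variance = (log_h(h) - 2.0 * log_h(0.0) + log_h(-h)) / (h * h)
    return mean, variance

mus = [0.5, 1.0, 2.0]
print(moments_closed_form(mus))   # (3.5, 5.25)
print(moments_from_laplace(mus))  # approximately (3.5, 5.25)
```

Because both moments are sums over the stages, adding one more integrator with time constant \(\mu \) always increases the delay by \(\mu \) and the temporal scale by \(\mu ^2\).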

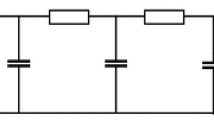

In terms of physical models, repeated convolution with this class of temporal scale-space kernels corresponds to coupling a series of first-order integrators with time constants \(\mu _k\) in cascade

$$\partial _t L(t;\; \tau _k) = \frac{1}{\mu _k} \left( L(t;\; \tau _{k-1}) - L(t;\; \tau _k) \right) \tag{11}$$

with \(\tau _0 = 0\) and \(L(t;\; 0) = f(t)\), where the temporal scale-space representations for larger values of the scale parameter \(\tau _k\) constitute successively temporally smoothed representations of each other. An important property of this type of temporal scale-space representation is that it is also time-recursive. The temporal scale-space representations \(L(t;\; \tau _k)\) constitute a sufficient temporal memory of the past to compute the temporal scale-space representation at the next temporal moment, given a new value of the input signal f(t).

An important consequence of the above necessity result is that this type of scale-space representation constitutes the only way to compute a time-causal temporal scale-space representation, given the requirement that the number of local extrema (or, equivalently, the number of zero-crossings) in the signal must not increase from finer to coarser temporal scales. In this respect, the temporal scale-space representations can be seen as gradual simplifications of each other from finer to coarser temporal scales.
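The time-recursive property means that a real-time implementation only has to carry the K current values \(L(t;\; \tau _k)\) from one time step to the next. The sketch below simulates the integrator cascade on sampled input. It assumes a fixed time step `dt` and uses the exponential relaxation `a * L + (1 - a) * driver` with `a = exp(-dt / mu)`. The class name `IntegratorCascade` is hypothetical. For the first stage, with an input that is held constant over each step, this update is exact. For later stages it is only a first-order accurate approximation of the continuous equations.

```python
import math

class IntegratorCascade:
    """Hypothetical helper: K first-order integrators coupled in cascade.

    The only memory carried between time steps is the list of current
    values L(t; tau_k), k = 1..K.
    """

    def __init__(self, mus, dt):
        self.decay = [math.exp(-dt / mu) for mu in mus]
        self.state = [0.0] * len(mus)

    def step(self, f_value):
        """Advance by dt given a new input sample f(t)."""
        driver = f_value  # plays the role of L(t; tau_0) = f(t)
        for k, a in enumerate(self.decay):
            # Relax level k towards level k-1 (mu_k dL_k/dt = L_{k-1} - L_k).
            self.state[k] = a * self.state[k] + (1.0 - a) * driver
            driver = self.state[k]  # feeds the next, coarser level
        return list(self.state)

cascade = IntegratorCascade(mus=[0.5], dt=0.01)
for _ in range(100):  # unit step input up to t = 1
    levels = cascade.step(1.0)
print(levels[0], 1.0 - math.exp(-2.0))  # both approximately 0.8647
```

The memory cost is K numbers, however long the history of the input has been. This is the practical content of the statement that the representation itself is a sufficient memory of the past.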

Fig. 2 Electric wiring diagram consisting of a set of resistors and capacitors that emulate a series of first-order integrators coupled in cascade, if we regard the time-varying voltage \(f_{\text{ in }}\) as representing the time-varying input signal and the resulting output voltage \(f_{\text{ out }}\) as representing the time-varying output signal at a coarser temporal scale. Such first-order temporal integration can be used as a straightforward computational model for temporal processing in biological neurons; see also Koch (1999, Chapters 11–12) regarding physical modelling of the information transfer in the dendrites of neurons

Figure 2 shows an illustration of this model in terms of an electric wiring diagram for transforming an input signal \(f_{\text{ in }}\) to an output signal \(f_{\text{ out }}\) using a set of first-order integrators coupled in cascade.

2.3 Logarithmic distribution of the temporal scale levels

When implementing this temporal scale-space concept in practice, a set of intermediate temporal scale levels \(\tau _k\) has to be distributed between some minimum and maximum temporal scale levels \(\tau _{\text{ min }} = \tau _1\) and \(\tau _{\text{ max }} = \tau _K\). Then, it is natural to choose these temporal scale levels according to a geometric series, corresponding to a uniform distribution in units of effective temporal scale \(\tau _{{\text{ eff }}} = \log \tau \) (Lindeberg 1993a).

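If we have a free choice of what minimum temporal scale level \(\tau _{\text{ min }}\) to use, a natural way of parameterizing these temporal scale levels is by using a distribution parameter \(c > 1\) such that

$$\tau _k = c^{2(k-K)}\, \tau _{\text{ max }} \quad (1 \le k \le K), \tag{12}$$

which by Eq. (10) implies that the time constants of the individual first-order integrators should be given by (Lindeberg 2016, Eqs. (19)–(20))

$$\mu _1 = c^{1-K} \sqrt{\tau _{\text{ max }}}, \tag{13}$$

$$\mu _k = \sqrt{\tau _k - \tau _{k-1}} = c^{k-K-1} \sqrt{c^2 - 1} \sqrt{\tau _{\text{ max }}} \quad (2 \le k \le K). \tag{14}$$

If, on the other hand, the temporal signal is given at some minimum temporal scale \(\tau _{\text{ min }}\), corresponding to an a priori given inner temporal scale of the measurement device, we can instead determine

$$c = \left( \frac{\tau _{\text{ max }}}{\tau _{\text{ min }}} \right)^{\frac{1}{2(K-1)}} \tag{15}$$

in (12) such that \(\tau _1 = \tau _{\text{ min }}\), and add \(K - 1\) temporal scales with \(\mu _k\) according to (14).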
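Both parameterizations are easy to tabulate. The sketch below computes the geometric scale levels \(\tau _k = c^{2(k-K)} \tau _{\text{ max }}\) and the time constants \(\mu _1 = \sqrt{\tau _1}\), \(\mu _k = \sqrt{\tau _k - \tau _{k-1}}\). It also computes the distribution parameter c that makes \(\tau _1\) equal a given minimum scale. The function names and the example values (c = 2, K = 4, \(\tau _{\text{ max }} = 1\)) are illustrative assumptions.

```python
import math

def log_distributed_levels(tau_max, c, K):
    """Hypothetical helper: levels tau_k = c**(2(k-K)) * tau_max, k = 1..K,
    and time constants mu_1 = sqrt(tau_1), mu_k = sqrt(tau_k - tau_{k-1})."""
    taus = [c ** (2 * (k - K)) * tau_max for k in range(1, K + 1)]
    mus = [math.sqrt(taus[0])]
    mus += [math.sqrt(taus[k] - taus[k - 1]) for k in range(1, K)]
    return taus, mus

def distribution_parameter(tau_min, tau_max, K):
    """Choose c so that tau_1 = tau_min when tau_K = tau_max."""
    return (tau_max / tau_min) ** (1.0 / (2 * (K - 1)))

taus, mus = log_distributed_levels(tau_max=1.0, c=2.0, K=4)
print(taus)                        # [0.015625, 0.0625, 0.25, 1.0]
print(sum(mu * mu for mu in mus))  # equals tau_max up to rounding
```

The variances of the individual stages telescope, so their sum reproduces \(\tau _{\text{ max }}\) exactly. Each new level adds a fixed factor \(c^2\) in temporal variance, that is, a fixed step in \(\log \tau \).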

Temporal smoothing kernels of this form, combined with temporal differentiation for different orders of differentiation, to obtain ripples of opposite contrast in the resulting temporal receptive fields, have been used for modelling the temporal part of the processing in models for spatio-temporal receptive fields (Lindeberg and Fagerström 1996; Lindeberg 2015, 2016, 2021b) and spectro-temporal receptive fields (Lindeberg and Friberg 2015a, b).

2.4 Logarithmic memory of the past

When using a logarithmic distribution of the temporal scale levels according to either of these methods, the different levels in the temporal scale-space representation at increasing temporal scales will serve as a logarithmic memory of the past, with qualitative similarity to the mapping of the past onto a logarithmic time axis in the scale-time model by Koenderink (1988). Such a logarithmic memory of the past can also be extended to later stages in a visual, auditory or other form of neural hierarchy.

An alternative type of temporal memory structure can be obtained if the different truncated exponential kernels are applied, not in a cascade as above, but instead in parallel, with a single temporal time constant for each temporal memory channel,

$$L(t;\; \tau _k) = \int _{u=0}^{\infty } \frac{1}{\mu _k}\, e^{-u/\mu _k}\, f(t - u)\, du,$$

for \(\mu _k = \sqrt{\tau _k}\), again with a logarithmic distribution of the temporal scale levels \(\tau _k\). Such a model for temporal memory has been studied by Howard and his co-workers (Howard 2021; Bright et al. 2020). Then, each temporal memory channel is also a simplification of the input signal f(t), and a record of the past with a given temporal delay and temporal duration. Inversion from the temporal memory channels to the input signal is also more straightforward, owing to the conceptual similarity to a real-valued Laplace transform (Howard et al. 2018; Howard and Hasselmo 2020). The different temporal memory channels are, however, not guaranteed to constitute formal simplifications of each other, as they are for the cascade model.
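The structural difference from the cascade is that every channel is driven directly by f(t). The sketch below illustrates this difference. It assumes sampled input with a fixed time step and uses the same exponential relaxation update as in a discretized integrator. The helper name `parallel_memory_bank` and the pulse example are hypothetical. After a brief event, the fast channels forget it quickly, while the slow channels retain a decaying trace.

```python
import math

def parallel_memory_bank(samples, taus, dt):
    """Hypothetical helper: one truncated-exponential channel per scale level,
    mu_k = sqrt(tau_k), each driven directly by the input samples f(n * dt)."""
    decay = [math.exp(-dt / math.sqrt(tau)) for tau in taus]
    state = [0.0] * len(taus)
    history = []
    for f_value in samples:
        # Unlike the cascade, every channel relaxes towards f itself.
        state = [a * s + (1.0 - a) * f_value for a, s in zip(decay, state)]
        history.append(state)
    return history

taus = [0.01, 0.1, 1.0]
pulse = [1.0] * 10 + [0.0] * 90  # brief event at the start, then silence
trace = parallel_memory_bank(pulse, taus, dt=0.01)
print([round(v, 4) for v in trace[-1]])  # slower channels still remember the event
```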

The theoretical framework for time-causal and time-recursive temporal scale-space representations presented earlier in (Lindeberg and Fagerström 1996; Lindeberg 2016) and here can be seen as providing a theoretical foundation for such time-recursive temporal memory models.

2.5 Uniform distribution of the temporal scale levels

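An alternative approach to distributing the temporal scale levels is to use a uniform distribution of the intermediate temporal scales

$$\tau _k = \frac{k}{K}\, \tau _{\text{ max }} \quad (1 \le k \le K),$$

implying that the time constants in the individual smoothing steps are given by

$$\mu _k = \mu = \sqrt{\frac{\tau _{\text{ max }}}{K}}.$$

Then, a compact expression can easily be obtained for the composed convolution kernel corresponding to a cascade of K such kernels

$$h_{\text{composed}}(t;\; \mu ) = \frac{t^{K-1}\, e^{-t/\mu }}{\mu ^K\, \Gamma (K)} \quad (t \ge 0), \qquad h_{\text{composed}}(t;\; \mu ) = 0 \quad (t < 0).$$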

Such kernels have also been used in memory models (Goldman 2009). The temporal Poisson model studied in more detail in (Lindeberg 1997a) can be seen as the limit case of such a uniform distribution of the temporal scale levels in the time-discrete case, when the difference between adjacent temporal scales tends to zero. This limit case, however, only exists for discrete temporal signals (Lindeberg and Fagerström 1996), and it also serves as a multi-scale temporal memory of the past. The time-scale diagrams in Figs. 3–5 in (Lindeberg 1997a) show how such a temporal scale-space representation evolves over time and temporal scales, and thereby demonstrate its temporal memory properties. Specifically, an event that occurs at a certain temporal moment first appears in the temporal scale-space representation at the finest temporal scale, and then moves to gradually coarser temporal scales as time passes. After some short time, the event is thus gradually forgotten at the finer temporal scales, where it is taken over by temporal structures that appear after the initial temporal event.

For constructing temporal memory processes that are to operate over wide ranges of temporal scales, such models based on a uniform sampling of the temporal scale levels do, however, require a larger number of primitive temporal integrators, and thus more hardware or wetware, compared to a temporal memory model based on a logarithmic distribution of the temporal scale levels.

Combined with temporal differentiation of the smoothing kernel, such temporal kernels have been used for modelling the temporal response properties of neurons in the visual system (den Brinker and Roufs 1992) and for computing spatio-temporal image features in computer vision (Rivero-Moreno and Bres 2004; van der Berg et al. 2014).

For a given value of the temporal scale (the temporal variance) of such time-causal kernels, the temporal delay for a temporal kernel based on a uniform distribution of the temporal scale levels will, however, also be longer than for a temporal kernel constructed from a logarithmic distribution of the intermediate temporal scale levels. Thus, for formulating computational algorithms for expressing time-critical decision processes in computer vision or machine listening, as well as for modelling time-critical decision processes in biological perception or cognition, we argue that a logarithmic distribution of the temporal scale levels should be a much better choice.
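The delay argument can be checked directly from \(m_K = \sum _k \mu _k\) and \(\tau _K = \sum _k \mu _k^2\). For the same temporal variance \(\tau _{\text{ max }}\) and the same number of stages K, the Cauchy–Schwarz inequality gives \(\sum _k \mu _k \le \sqrt{K \tau _{\text{ max }}}\), with equality only when all \(\mu _k\) are equal. So the uniform distribution attains the largest possible mean delay. The logarithmic distribution, in contrast, keeps its delay below \(\sqrt{(c+1)/(c-1)}\, \sqrt{\tau _{\text{ max }}}\) however many levels are added. The sketch below, with hypothetical helper names and the assumed values c = 2 and \(\tau _{\text{ max }} = 1\), tabulates both delays.

```python
import math

def delay_uniform(tau_max, K):
    """Mean delay sum(mu_k) when all K time constants equal sqrt(tau_max / K)."""
    return K * math.sqrt(tau_max / K)  # equals sqrt(K * tau_max)

def delay_logarithmic(tau_max, c, K):
    """Mean delay sum(mu_k) for levels tau_k = c**(2(k-K)) * tau_max, k = 1..K."""
    taus = [c ** (2 * (k - K)) * tau_max for k in range(1, K + 1)]
    mus = [math.sqrt(taus[0])]
    mus += [math.sqrt(taus[k] - taus[k - 1]) for k in range(1, K)]
    return sum(mus)

for K in (2, 4, 8, 16):
    # Same final temporal scale tau_max = 1 in both cases.
    print(K, round(delay_uniform(1.0, K), 3), round(delay_logarithmic(1.0, 2.0, K), 3))
```

The uniform delay grows like \(\sqrt{K}\), while the logarithmic delay saturates (below \(\sqrt{3} \approx 1.732\) for c = 2). This is the quantitative reason why the logarithmic distribution is preferable for time-critical processing.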

For these reasons, we will henceforth in this treatment focus solely on models based on a logarithmic distribution of the temporal scale levels.

3 Time-causal temporal scale-space representations that also obey temporal scale covariance

Beyond the task of representing temporal signals at multiple temporal scales, a main requirement on a temporal scale-space representation should also be the notion of temporal scale covariance,Footnote 6 so as to be able to consistently handle temporal phenomena and events that occur faster or slower in the world. Temporal scale covariance means that if a signal f(t) is subject to a temporal scaling transformation

$$f'(t') = f(t) \quad \text{for} \quad t' = S\, t,$$

and then processed, here with a temporal convolution kernel \(T(t';\; \tau ')\) that depends on a temporal scale parameter \(\tau '\),

$$L'(t';\; \tau ') = (T(\cdot ;\; \tau ') * f')(t'),$$

the result should be essentially similar to the result of applying the same type of processing to the original signal

$$L(t;\; \tau ) = (T(\cdot ;\; \tau ) * f)(t)$$

and then rescaling the processed original signal

$$L'(t';\; \tau ') = L(t;\; \tau ) \quad \text{for} \quad t' = S\, t \quad \text{and} \quad \tau ' = S^2 \tau $$

(for other types of processes possibly also complemented with some minor modification, such as a correction of the magnitude of the response). For the task of temporal filtering in a temporal scale-space representation, this implies that the temporal scale-space kernel should commute with temporal scaling transformations, as illustrated in the commutative diagram in Fig. 3.
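The requirement described above can be written in formulas. This is our rendering, which assumes that \(\tau \) denotes a temporal variance and therefore transforms with the square of the scaling factor. The convolution integrals run over the past only, since the kernel is time-causal. For other types of processes, the last identity may additionally need a magnitude correction.

$$
t' = S\,t, \qquad f'(t') = f(t),
$$
$$
L'(t';\,\tau') = \int_{0}^{\infty} T(u;\,\tau')\, f'(t' - u)\, du, \qquad L(t;\,\tau) = \int_{0}^{\infty} T(u;\,\tau)\, f(t - u)\, du,
$$
$$
L'(t';\,\tau') = L(t;\,\tau) \qquad \text{for } \tau' = S^2\,\tau .
$$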

Commutative diagram for temporal receptive field responses under temporal scaling transformations of the temporal domain. Such transformations describe the effect of events occurring slower or faster in the world. (The commutative diagram should be read from the lower left corner to the upper right corner. It means that we should get the same result irrespective of the order of operations: the temporal signal can either first be convolved with a temporal smoothing kernel and then be subject to a temporal scaling transformation, or first be subject to a temporal scaling transformation and then be convolved with a temporal smoothing kernel. This holds provided that the temporal scale parameters \(\tau \) and \(\tau '\) are properly matched to the relative temporal scaling factor S between the two temporal patterns)

This algebraic closedness property under temporal scaling transformations implies that similar temporal phenomena that occur faster or slower in the world will be treated in a conceptually similar manner. Consider variations caused by scaling transformations of the input. The outputs of scale-covariant processing applied to such temporally rescaled data will then be mere temporal rescalings of each other, without bias towards any particular temporal scale. A computational model that is not well-behaved under temporal scaling transformations would, in contrast, be subject to such a bias, which would be a severe shortcoming.

In this section, we will describe a theory for how to obtain time-causal temporal scale-space representations that also obey such temporal scale covariance, which in turn makes it possible to construct provably scale-invariant temporal representations at higher levels in a temporal processing hierarchy. The way that we will reach this goal is by constructing a limit kernel that is the convolution of an infinite number of truncated exponential kernels in cascade, with specially chosen time constants that correspond to a geometric distribution of the intermediate temporal scale levels.

Unfortunately, there is no known simple compact explicit expression for this limit kernel in the temporal domain. Some of the closed-form calculations involving the limit kernel may therefore appear somewhat technical at first encounter. Once its algebraic transformation properties have been established, however, the limit kernel can be handled and used in a similar way as other standard functions in mathematics.

For practical implementations that compute the representation at a single temporal scale, the limit kernel can often be very well approximated by a moderate finite number of truncated exponential kernels coupled in cascade (usually between 4 and 8 in our implementations of this concept). This works because the construction converges rapidly for suitable values of its internal distribution parameter c. To compute the temporal scale-space representation at the next coarser temporal scale, it is then sufficient to apply a single additional truncated exponential kernel to the representation at the nearest finer temporal scale.
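The following Python sketch illustrates both statements. It assumes that the time constants of the K-stage approximation follow from the logarithmic scale levels \(\tau _k = c^{2(k-K)} \tau \) with \(\tau _0 = 0\); this is our reading of the construction, and the function names are hypothetical. The check at the end compares two cascades. The first is the (K+1)-stage approximation at variance \(c^2 \tau \). The second is the K-stage approximation at variance \(\tau \), followed by one extra truncated exponential with time constant \(\sqrt{c^2-1}\,\sqrt{\tau }\). The two coincide.

```python
import math


def limit_kernel_time_constants(tau, c, K):
    """Time constants of a K-stage truncated-exponential cascade approximating the limit kernel
    at temporal variance tau, assuming scale levels tau_k = c**(2*(k - K)) * tau and tau_0 = 0."""
    mus = [c ** (1 - K) * math.sqrt(tau)]
    mus += [c ** (k - 1 - K) * math.sqrt(c * c - 1) * math.sqrt(tau) for k in range(2, K + 1)]
    return mus


def next_coarser_time_constant(tau, c):
    """Time constant of the one extra truncated exponential that takes variance tau to c**2 * tau."""
    return math.sqrt(c * c * tau - tau)


mus = limit_kernel_time_constants(1.0, 2.0, 4)
print(sum(mu * mu for mu in mus))  # total variance: 1.0 up to rounding

# The (K + 1)-stage approximation at variance c**2 * tau equals the K-stage one at tau
# followed by a single additional truncated exponential.
coarser = limit_kernel_time_constants(4.0, 2.0, 5)
extended = mus + [next_coarser_time_constant(1.0, 2.0)]
print(all(abs(a - b) < 1e-12 for a, b in zip(coarser, extended)))  # True
```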

In this section, we will first define the limit kernel and derive its transformation properties. Then, we will turn to relating and comparing the limit kernel to two other models used for expressing temporal variations over time.

Approximations of the time-causal limit kernel for \(\tau = 1\) using \(K = 7\) truncated exponential kernels in cascade and their first- and second-order derivatives. (top row) Logarithmic distribution of the scale levels for \(c = \sqrt{2}\). (bottom row) Logarithmic distribution for \(c = 2\). (Horizontal axes: time. Vertical axes: function values)

3.1 The time-causal limit kernel

Consider the Fourier transform of the composed convolution kernel that we obtain by coupling K truncated exponential kernels in cascade with a logarithmic distribution of the temporal scale levels and thus time constants according to (13) and (14) for some \(c > 1\):

By formally letting the number of primitive smoothing steps K tend to infinity and renumbering the indices by a shift in terms of one unit, we obtain a limit object of the form (Lindeberg 2016, Eq. 38)

By treating this limit kernel as an object in its own right, interesting relations can be expressed between the temporal scale-space representations. The limit kernel is well-defined because the variances of the primitive kernels sum according to a rapidly convergent geometric series. The representations in question are those

obtained by convolution with this limit kernel.
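The explicit formulas behind this construction are not reproduced above, so the following is a sketch of it under three assumptions. First, the Fourier convention is \(\hat{h}(\omega ) = \int h(t)\, e^{-i\omega t}\, dt\). Second, the truncated exponential kernel is \(h_{\exp }(t;\, \mu ) = e^{-t/\mu }/\mu \) for \(t > 0\), with transform \(1/(1 + i\mu \omega )\). Third, the time constants are derived from the logarithmic scale levels \(\tau _k = c^{2(k-K)} \tau \) with \(\tau _0 = 0\). As K grows, the factor for \(\mu _1\) tends to one. Renumbering the remaining factors from the coarsest one downwards then gives the limit (our rendering):

$$
\hat{h}_{\text{composed}}(\omega;\, \mu) = \prod_{k=1}^{K} \frac{1}{1 + i\, \mu_k\, \omega}, \qquad \mu_1 = c^{1-K} \sqrt{\tau}, \quad \mu_k = c^{k-1-K} \sqrt{c^2 - 1}\, \sqrt{\tau} \;\; (2 \le k \le K),
$$
$$
\hat{\Psi}(\omega;\, \tau, c) = \prod_{k=1}^{\infty} \frac{1}{1 + i\, c^{-k} \sqrt{c^2 - 1}\, \sqrt{\tau}\, \omega}.
$$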

3.1.1 Self-similar recurrence relation for the time-causal limit kernel over temporal scales

Using the limit kernel, an infinite number of discrete temporal scale levels is implicitly defined given the specific choice of one temporal scale \(\tau = \tau _0\):

Directly from the definition of the limit kernel, we obtain the following recurrence relation between adjacent temporal scales:

and in terms of the Fourier transform:
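Assume that the limit kernel has the product-form Fourier transform \(\hat{\Psi }(\omega ;\, \tau , c) = \prod _{k=1}^{\infty } 1/(1 + i\, c^{-k} \sqrt{c^2-1}\, \sqrt{\tau }\, \omega )\). The scale levels and the recurrence relation can then be written as follows (our rendering). The recurrence is obtained by separating the factor for \(k = 1\), which leaves exactly the transform at scale \(\tau /c^2\). The time constant of the separated truncated exponential equals \(\sqrt{\tau - \tau /c^2}\).

$$
\tau_k = c^{2k}\, \tau_0, \qquad k \in \mathbb{Z},
$$
$$
\Psi(t;\, \tau, c) = h_{\exp}\!\left(t;\, \tfrac{\sqrt{c^2-1}}{c}\sqrt{\tau}\right) * \Psi\!\left(t;\, \tfrac{\tau}{c^2}, c\right), \qquad \hat{\Psi}(\omega;\, \tau, c) = \frac{1}{1 + i\, \frac{\sqrt{c^2-1}}{c}\sqrt{\tau}\, \omega}\; \hat{\Psi}\!\left(\omega;\, \tfrac{\tau}{c^2}, c\right).
$$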

3.1.2 Behaviour under temporal rescaling transformations

From the Fourier transform of the limit kernel (25), we can observe that for any temporal scaling factor S it holds that

Thus, the limit kernel transforms as follows under a scaling transformation of the temporal domain:

If we, for a given choice of distribution parameter c, rescale the input signal f by a temporal scaling factor \(S = 1/c\) such that \(t' = t/c\), it then follows that the scale-space representation of \(f'\) at temporal scale \(\tau ' = \tau /c^2\)

will be equal to the temporal scale-space representation of the original signal f at scale \(\tau \) (Lindeberg 2016, Eq. 46)

Hence, consider a temporal rescaling of the original signal by the factor \(S = 1/c\), i.e. the same temporal pattern occurring c times faster. A rescaled copy of the temporal scale-space representation of the original signal at scale \(\tau \) can then be found at the next finer discrete temporal scale \(\tau /c^2\).
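Assume the product form \(\hat{\Psi }(\omega ;\, \tau , c) = \prod _{k=1}^{\infty } 1/(1 + i\, c^{-k} \sqrt{c^2-1}\, \sqrt{\tau }\, \omega )\). The transform then depends on \(\omega \) and \(\tau \) only through the product \(\sqrt{\tau }\, \omega \). This gives the following sketch of the scaling relations (our rendering). Here \(f'(t') = f(t)\), and L and \(L'\) denote the convolutions of f and \(f'\) with the limit kernel.

$$
\hat{\Psi}\!\left(\tfrac{\omega}{S};\, S^2\tau, c\right) = \hat{\Psi}(\omega;\, \tau, c) \quad\Longrightarrow\quad \Psi(t';\, S^2\tau, c) = \frac{1}{S}\, \Psi(t;\, \tau, c), \qquad t' = S\, t,
$$
$$
S = \tfrac{1}{c},\;\; t' = \tfrac{t}{c}: \qquad L'\!\left(t';\, \tfrac{\tau}{c^2}, c\right) = L(t;\, \tau, c).
$$

The last identity follows by substituting \(u' = S u\) in the convolution integral. The factor \(1/S\) in the rescaled kernel then cancels the Jacobian S.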

3.1.3 Provable temporal scale covariance

Applied recursively, the above result implies that the temporal scale-space representation obtained by convolution with the limit kernel obeys a closedness property over all temporal scaling transformations \(t' = c^j t\) with temporal rescaling factors \(S = c^{j}\) (\(j \in {\mathbb {Z}}\)) that are integer powers of the distribution parameter c (Lindeberg 2016, Eq. 47),

thus allowing for perfect scale covariance over the restricted subset of scaling factors \(S = c^j\) that precisely matches the specific set of discrete temporal scale levels that is defined by a specific choice of the distribution parameter c. Based on this desirable and highly useful property, it is natural to refer to the limit kernel as the scale-covariant time-causal limit kernel (Lindeberg 2016, Sect. 5).
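With \(f'(t') = f(t)\) and L, \(L'\) denoting convolutions with the limit kernel, the closedness property can be written out as follows (our rendering):

$$
t' = c^{j}\, t \quad\Longrightarrow\quad L'(t';\, c^{2j}\tau, c) = L(t;\, \tau, c), \qquad j \in \mathbb{Z},
$$

so that a temporal rescaling by \(c^j\) moves the representation j steps along the discrete scale levels \(\tau _k = c^{2k}\tau _0\).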

Illustration of temporal smoothing of two signals: (left) a Brownian noise signal generated from a simulated Wiener process and (right) a synthetic sine wave signal \(f(t) = \sin (\exp ((b-t)/a))\) for \(a = 200\) and \(b = 1000\), with temporally varying frequency so that the wavelength increases with time t. The smoothing is computed using a discrete approximation of the time-causal limit kernel for \(c = 2\) in terms of a set of recursive filters coupled in cascade. Observe how fine-scale structures corresponding to higher frequencies are successively suppressed when going from finer to coarser temporal scales. Observe also that the temporal scale-space representations at coarser temporal scales are associated with longer temporal delays, in this figure seen as different offsets in the positions of the peaks in the temporal signal at different temporal scales. (Horizontal axes: time. Vertical axes: signal values)

Illustration of the temporal scale covariance property of the temporal scale-space representation defined from convolutions with the time-causal limit kernel. In the bottom row, the signal in the right column is a rescaling of the signal in the left column by a temporal scaling factor \(S = 2\) (with the temporal rescaling performed relative to the center of the temporal interval). In the top row, the temporal scale-space representations at the matching temporal scale levels \(\sqrt{\tau } = 128\) and \(\sqrt{\tau '} = 256\) have been computed for distribution parameter \(c = 2\) from the corresponding input signals in the bottom row. Due to the temporal scale covariance property, these temporal scale-space representations are, in the ideal continuous case, related by a temporal scaling transformation with the same temporal scaling factor \(S = 2\) as between the input signals. If, for experimental purposes, one compares a corresponding temporal rescaling of the output from the discrete implementation in terms of recursive filters (described in more detail in Sect. 4), one can see that the corresponding graphs are practically indistinguishable (see Fig. 7). In this way, this experiment verifies and visualizes the theoretical properties reflected in the commutative diagram in Fig. 3. (Horizontal axes: time. Vertical axes: signal values)

Comparison between the two different ways of computing the representation in the upper right corner in Fig. 6 from the corresponding representation in the lower left corner, using either the clockwise direction (marked in green) or the counterclockwise direction (marked in red). When generating this illustration, we first essentially performed a rescaling of the scale-space representation of the signal in the left column and marked the result as a solid green curve. We then overlaid the scale-space representation of the signal in the right column as a dashed red curve. (Technically, in the discrete implementation, we have instead visualized the equivalent result of such a computation at a lower resolution. This avoids the formally ill-defined operation of interpolating the discrete signal in the left column to a higher resolution; the signal in the right column is subsampled instead, which explains the change in the labelling of the temporal axis.) (left) The result for the entire temporal interval used in the right column in Fig. 6. (right) Enlargement of a central region of the temporal interval. As can be seen from the visualization, the results computed in the clockwise and counterclockwise directions are basically indistinguishable, demonstrating the scale covariance property of the temporal scale-space representation defined by convolution with the time-causal limit kernel. (The result is best viewed by zooming in to a digital copy of the article.) (Horizontal axes: time. Vertical axes: signal values)

3.1.4 Qualitative properties

Figure 4 shows graphs of this time-causal limit kernel as well as its first- and second-order temporal derivatives for a few values of the distribution parameter c. As can be seen from the graphs, the raw smoothing kernels have a skewed shape, and the temporal delay increases with decreasing values of the distribution parameter c. The explicit measures of the skewness \(\gamma _1\) and kurtosis \(\gamma _2\) of these kernels increase as functions of the distribution parameter c according to (Lindeberg 2016, Eqs. (130) and (131))
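The formulas are only cited above, so the following Python sketch computes these quantities under explicit assumptions. The limit kernel is treated as the distribution of a sum of independent exponential variables with time constants \(\mu _k = c^{-k}\sqrt{c^2-1}\,\sqrt{\tau }\). The n-th cumulant of such a sum is \((n-1)!\sum _k \mu _k^n\). We also assume that \(\gamma _2\) denotes the excess kurtosis, i.e. the fourth cumulant divided by the squared variance. The closed forms are our own evaluation of the resulting geometric series, and the function names are hypothetical.

```python
import math


def limit_kernel_moments(c, tau=1.0):
    """Closed-form mean, skewness and excess kurtosis of the limit kernel, assuming time
    constants mu_k = c**(-k) * sqrt(c**2 - 1) * sqrt(tau), k = 1, 2, ..."""
    mean = math.sqrt((c + 1) / (c - 1)) * math.sqrt(tau)
    skewness = 2 * (c + 1) * math.sqrt(c * c - 1) / (c * c + c + 1)
    excess_kurtosis = 6 * (c * c - 1) / (c * c + 1)
    return mean, skewness, excess_kurtosis


def truncated_cascade_moments(c, tau=1.0, K=200):
    """The same quantities from the first K factors, using that the n-th cumulant of a sum of
    independent exponential variables is (n - 1)! * sum(mu_k**n)."""
    mus = [c ** (-k) * math.sqrt(c * c - 1) * math.sqrt(tau) for k in range(1, K + 1)]
    kappa1 = sum(mus)
    kappa2 = sum(mu ** 2 for mu in mus)
    kappa3 = 2 * sum(mu ** 3 for mu in mus)
    kappa4 = 6 * sum(mu ** 4 for mu in mus)
    return kappa1, kappa3 / kappa2 ** 1.5, kappa4 / kappa2 ** 2


for c in (math.sqrt(2), 2.0, 3.0):
    print(c, limit_kernel_moments(c), truncated_cascade_moments(c))
```

Under these assumptions, \(c = \sqrt{2}\) gives a mean of about \(2.41\sqrt{\tau }\), a skewness of about 1.09 and an excess kurtosis of 2. For \(c = 2\), the corresponding values are about \(1.73\sqrt{\tau }\), 1.48 and 3.6, in line with the qualitative trends described above.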

3.1.5 Experimental results

Figure 5 shows the result of smoothing two synthetic temporal signals with the time-causal limit kernel for different values of the temporal scale parameter \(\tau \). As can be seen from the graphs, the signals are gradually smoothed from finer to coarser temporal scales. This is clearly seen in the way that finer-scale structures are suppressed before coarser-scale structures in the left column, and that higher frequencies are suppressed before lower frequencies in the right column. In addition, the temporal delay increases from finer to coarser temporal scales, here seen as different temporal offsets of the time moments at which the peaks occur.

When using a comparably large value of the distribution parameter c, as in this figure, the temporal delay will be comparably short, which is a preferable property when one needs to respond fast in a time-critical context. When using lower values of the distribution parameter, the temporal delay at a given temporal scale will be longer. This may be a preferable property if one wants to use the temporal scale-space representations as temporal memory buffers, with the coarser-scale representations then constituting memories of what happened further in the past.

Figure 6 gives an experimental illustration of the temporal scale covariance property of the time-causal limit kernel. Here, a synthetic signal generated from a simulated Wiener process has been rescaled by a temporal rescaling factor \(S = 2\). From these two input signals, temporal scale-space representations have then been computed at the matching temporal scale levels \(\sqrt{\tau } = 128\) and \(\sqrt{\tau '} = 256\). Due to the temporal scale covariance property, these temporal scale-space representations are then also related by the same temporal scaling factor \(S = 2\).

Figure 7 gives an illustration of the equality between the two different ways of computing the representation in the upper right corner from the signal in the lower left corner in Fig. 6, using either a clockwise orientation or a counterclockwise orientation in the corresponding commutative diagram in Fig. 3. As can be seen from the visualization, the results are essentially indistinguishable, showing that a good numerical approximation to temporal scale covariance can also be achieved in a discrete implementation (to be described further in Sect. 4).

3.1.6 Applications of the time-causal limit kernel

The time-causal limit kernel and its temporal derivatives have been used for several purposes: modelling the temporal component in spatio-temporal receptive fields in the retina, the LGN and the primary visual cortex (V1) (Lindeberg 2021b); modelling the temporal component in methods for spatio-temporal feature detection in video data (Lindeberg 2016); expressing methods for temporal scale selection in temporal signals (Lindeberg 2017, 2018b); modelling the temporal component of spatio-temporal smoothing in methods for spatio-temporal scale selection (Lindeberg 2018a, b); and modelling the temporal component of smoothing in computer vision methods for video analysis (Jansson and Lindeberg 2018).

In Sect. 7.3, we do additionally propose to use the time-causal limit kernel for modelling temporal phenomena at multiple temporal scales in neural signals, and in Sect. 7.2 specifically to use this kernel for modelling the temporal variability in auditory receptive fields.

In Sect. 6.1 we outline how the time-causal limit kernel can be used for defining time-causal and time-recursive wavelet representations, and in Sect. 6.2 how the time-causal limit kernel makes it possible to define time-causal and time-recursive time-frequency representations (spectrograms) that additionally obey temporal scale covariance.

Comparison between (blue curves) the time-causal limit kernel according to (25), approximated using the first \(K = 7\) components of the infinite convolution of truncated exponential kernels in cascade, together with its first- and second-order temporal derivatives, and (brown curves) the temporal kernels in Koenderink’s scale-time model (37) and their first- and second-order temporal derivatives. All kernels correspond to temporal scale (variance) \(\tau = 1\). The additional parameters are determined such that the temporal mean values (the first-order temporal moments) become equal in the limit case when the number of temporal scale levels K tends to infinity (Eq. 38). (top row) Logarithmic distribution of the temporal scale levels for \(c = \sqrt{2}\). (bottom row) Corresponding results for \(c = 2\). (Horizontal axes: time. Vertical axes: function values)

Comparison between (brown curves) the ex-Gaussian model according to (40) and (blue curves) the time-causal limit kernel according to (25) and approximated using the first \(K = 7\) components of the infinite convolution of truncated exponential kernels in cascade. (left) for \(\mu = 1\), \(\sigma = 1/2\), \(m = 1\), \(a_0 = 0\) and \(a_1 = 1\) corresponding to \(\tau \approx 1.24\), \(c \approx 1.89\), \(b_0 = 0\) and \(b_1 \approx 1.25\), (middle) for \(\mu = 4\), \(\sigma = 1/2\), \(m = 2\), \(a_0 = 0\) and \(a_1 = 1\) corresponding to \(\tau \approx 16.25\), \(c \approx 2.65\), \(b_0 = 0\) and \(b_1 \approx 5.01\), (right) for \(\mu = 4\), \(\sigma = 2\), \(m = 2\), \(a_0 = 0\) and \(a_1 = 1\) corresponding to \(\tau \approx 18.94\), \(c \approx 2.89\), \(b_0 = 0\) and \(b_1 \approx 19.37\). (Horizontal axes: time. Vertical axes: function values)

3.2 Alternative scale-covariant temporal models

An alternative type of temporal model that one could also consider, based on the general classification of temporal scale-space kernels, is a set of parallel temporal channels. Each channel is formed by convolving the input signal with a single truncated exponential kernel, and the time constants of the channels follow a geometric distribution of the form (16). As explained in Sect. 2.4, such temporal models have previously been used as models of temporal memory in neuroscience (Howard 2021; Bright et al. 2020).

Because of the geometric distribution of the time constants in these temporal channels, they will obey temporal scale covariance. Temporal scale covariance will also apply to different types of generalizations of such a model, e.g. by having the same small number of truncated exponential kernels in cascade in each temporal channel, with the time constants between the different temporal channels coupled according to a geometric distribution.

A fundamental difference between such temporal models and the temporal scale-space model based on the time-causal limit kernel is, however, the following. In the first class of models, the temporal channels for larger values of the scale parameter are not guaranteed to constitute simplifications of the temporal channels for smaller values of the scale parameter. Since the temporal smoothing kernels are scale-space kernels, each temporal channel is guaranteed to be a simplification of the input signal. When relating different temporal channels to each other, however, the number of local extrema in a channel with a larger value of the temporal scale parameter may exceed the number of local extrema in a channel with a smaller value of the temporal scale parameter.

Because of the scale-recursive property (28) of the time-causal limit kernel, it is on the other hand formally guaranteed that the temporal scale-space representation at the next coarser temporal scale corresponds to the result of applying temporal smoothing with a truncated exponential kernel to the temporal scale-space representation at the nearest finer temporal scale. Applied recursively, the temporal scale-space representation at any coarser temporal scale corresponds to the result of applying a set of truncated exponential kernels in cascade to the representation at any finer temporal scale. In this way, for the temporal scale-space representation generated by convolution with the time-causal limit kernel for different values of the temporal scale parameter, every temporal scale-space representation at a given temporal scale is guaranteed to constitute a formal simplification of any other temporal scale-space representation at any finer temporal scale.
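The non-creation of local extrema under such a cascade can be checked numerically. The Python sketch below uses first-order recursive filters as a stand-in for the continuous kernels; these filters are the discrete analogues of truncated exponential kernels described in Sect. 4. The time constants and the white-noise input are hypothetical choices for illustration. Each coarser level is computed from the nearest finer level. The number of local extrema, counted as sign changes of the first difference, should then be non-increasing from level to level.

```python
import random


def recursive_filter(signal, mu):
    """Normalised first-order recursive filter f_out(t) = f_out(t-1) + (f_in(t) - f_out(t-1)) / (1 + mu),
    started from the first input sample (as if the signal had been constant before it)."""
    out, prev = [], signal[0]
    for x in signal:
        prev = prev + (x - prev) / (1.0 + mu)
        out.append(prev)
    return out


def count_local_extrema(signal):
    """Number of sign changes of the first difference, ignoring zero differences."""
    diffs = [b - a for a, b in zip(signal, signal[1:])]
    signs = [d > 0 for d in diffs if d != 0]
    return sum(1 for s0, s1 in zip(signs, signs[1:]) if s0 != s1)


random.seed(0)
levels = [[random.gauss(0.0, 1.0) for _ in range(5000)]]  # finest level: white noise
for mu in (0.5, 1.0, 2.0, 4.0, 8.0):  # hypothetical time constants, one per coarser level
    levels.append(recursive_filter(levels[-1], mu))  # each level is computed from the previous one
counts = [count_local_extrema(level) for level in levels]
print(counts)  # non-increasing from finer to coarser levels
```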

The time-causal limit kernel is special in that it both obeys temporal scale covariance and guarantees non-creation of new local extrema with increasing temporal scales with regard to convolutions over a time-causal temporal domain.

3.3 Relation to Koenderink’s scale-time model

In his scale-time model, Koenderink (1988) proposed to perform a logarithmic mapping of the past via a temporal delay \(\delta \) and then to apply Gaussian smoothing with standard deviation \(\sigma \) in the transformed domain. If we additionally normalize these kernels to unit \(L_1\)-norm, we obtain a time-causal kernel of the form (Lindeberg 2016, Eq. 151)

In (Lindeberg 2016, Appendix 2) a formal mapping between this scale-time kernel and the time-causal limit kernel is derived, by requiring the first- and second-order moments of these two classes of kernels to be equal:

which hold as long as \(c > 1\) and \(\sigma < \sqrt{\log 2} \approx 0.832\).
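The kernel (37) and the mapping (38) are not reproduced above, so the Python sketch below reconstructs them under stated assumptions. The scale-time kernel is taken as \(T(t;\, \sigma , \delta ) = e^{-\sigma ^2/2}\, e^{-\log ^2(t/\delta )/(2\sigma ^2)}/(\sqrt{2\pi }\,\sigma \,\delta )\), which has unit \(L_1\)-norm. The limit kernel is taken to have mean \(\sqrt{(c+1)/(c-1)}\,\sqrt{\tau }\) and variance \(\tau \). Matching mean and variance under these assumptions gives \(e^{\sigma ^2} = 2c/(c+1)\). This requires \(\sigma < \sqrt{\log 2}\), consistent with the condition stated above. The function names are hypothetical.

```python
import math


def scale_time_kernel(t, sigma, delta):
    """Assumed L1-normalised scale-time kernel: Gaussian in log(t / delta), for t > 0."""
    log_ratio = math.log(t / delta)
    return (math.exp(-sigma ** 2 / 2 - log_ratio ** 2 / (2 * sigma ** 2))
            / (math.sqrt(2 * math.pi) * sigma * delta))


def scale_time_mean_variance(sigma, delta):
    # The n-th moment of t is delta**n * exp(((n + 1)**2 - 1) * sigma**2 / 2).
    mean = delta * math.exp(1.5 * sigma ** 2)
    variance = delta ** 2 * math.exp(3 * sigma ** 2) * (math.exp(sigma ** 2) - 1)
    return mean, variance


def limit_to_scale_time(tau, c):
    """(sigma, delta) matching the mean sqrt((c + 1) / (c - 1) * tau) and the variance tau."""
    sigma2 = math.log(2 * c / (c + 1))
    delta = math.sqrt((c + 1) / (c - 1) * tau) * math.exp(-1.5 * sigma2)
    return math.sqrt(sigma2), delta


def scale_time_to_limit(sigma, delta):
    """Inverse mapping (tau, c); requires sigma < sqrt(log 2) so that c > 1."""
    e = math.exp(sigma ** 2)
    return delta ** 2 * e ** 3 * (e - 1), e / (2 - e)


def numerical_moment(sigma, delta, n, du=1e-3):
    """Midpoint-rule check of the n-th moment, integrating over u = log(t / delta) in [-12, 12]."""
    total = 0.0
    for i in range(int(24.0 / du)):
        t = delta * math.exp(-12.0 + (i + 0.5) * du)
        total += t ** n * scale_time_kernel(t, sigma, delta) * t * du  # dt = t du
    return total


sigma, delta = limit_to_scale_time(1.0, 2.0)
print(scale_time_mean_variance(sigma, delta))  # about (1.732, 1.0): mean sqrt(3), variance tau
print(scale_time_to_limit(sigma, delta))       # recovers about (1.0, 2.0)
print(numerical_moment(sigma, delta, 0))       # about 1.0 (unit L1-norm)
```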

Figure 8 shows a comparison between the time-causal limit kernel and Koenderink’s scale-time kernels regarding the zero-order convolution kernels as well as their first- and second-order derivatives. As can be seen from the graphs, these two classes of kernels have qualitatively rather similar shapes. The time-causal limit kernel does, however, have the conceptual advantage that it can be computed in a time-recursive manner, whereas the scale-time kernel does not have any known time-recursive implementation, implying that it formally requires an infinite memory of the past (or some substantially extended temporal buffer, if the infinite temporal convolution integral is truncated at the tail).

While we do not have any compact explicit expression for the time-causal limit kernel over the temporal domain, if we approximate the time-causal limit kernel by a scale-time kernel according to the mapping (38), we obtain the following estimate for the location of the maximum point of the time-causal limit kernel:

This estimate can be expected to be an overestimate, and is a better estimate of the temporal delay of the time-causal limit kernel than the temporal mean according to (10).
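Assume the scale-time kernel has the form \(T(t;\, \sigma , \delta ) \propto e^{-\log ^2(t/\delta )/(2\sigma ^2)}\). Setting the derivative of the exponent to zero gives its maximum at \(t = \delta \). Inserting the moment-matching relations \(e^{\sigma ^2} = 2c/(c+1)\) and \(\delta = \sqrt{(c+1)/(c-1)}\; e^{-3\sigma ^2/2}\, \sqrt{\tau }\) then yields the following sketch of this estimate (our derivation, not a quotation of the original formula):

$$
t_{\max} \approx \delta = \sqrt{\frac{c+1}{c-1}} \left(\frac{c+1}{2c}\right)^{3/2} \sqrt{\tau} = \frac{(c+1)^2}{2\sqrt{2}\, c^{3/2} \sqrt{c-1}}\, \sqrt{\tau} .
$$

For \(c = 2\), this gives \(t_{\max } \approx 1.125\sqrt{\tau }\), compared with the temporal mean \(\sqrt{3}\,\sqrt{\tau } \approx 1.732\sqrt{\tau }\).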

3.4 Relation to the ex-Gaussian model used by Bright et al.

In (Bright et al. 2020), a so-called ex-Gaussian model (Grushka 1972), that is the convolution of an unnormalized Gaussian function with an unnormalized truncated exponential kernel

is used for fitting temporal response functions of neurons to an analytical temporal model. In Appendix A.1, a relation between this ex-Gaussian model and a corresponding model based on the time-causal limit kernel

is derived by requiring the zero-, first- and second-order temporal moments of these kernels to be equal, if the DC-offsets \(a_0\) and \(b_0\) are disregarded and assumed to be equal.

This leads to the following mapping between the parameters of the two models

where \(\delta \) and V denote the temporal mean and the temporal variance of the ex-Gaussian model for \(a_0 = 0\)

and \(M_0\), \(M_1\) and \(M_2\) denote the explicit expressions for the zero-, first- and second-order moments of the ex-Gaussian model for \(a_0 = 0\), according to (88), (89) and (90).
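The following sketches how such a mapping arises. It is our derivation under stated assumptions, not a reproduction of the formulas referred to above. Take the limit-kernel model to be \(b_0 + b_1\, \Psi (t;\, \tau , c)\) with \(b_0 = a_0 = 0\). Assume that \(\Psi \) has unit \(L_1\)-norm, mean \(\sqrt{(c+1)/(c-1)}\,\sqrt{\tau }\) and variance \(\tau \). Equating the zero-order moment, the mean and the variance then gives:

$$
\delta = \frac{M_1}{M_0}, \qquad V = \frac{M_2}{M_0} - \delta^2, \qquad b_1 = M_0, \qquad \tau = V, \qquad \sqrt{\frac{c+1}{c-1}}\, \sqrt{\tau} = \delta \;\Longrightarrow\; c = \frac{\delta^2 + V}{\delta^2 - V}.
$$

A solution with \(c > 1\) requires \(\delta ^2 > V\), i.e. a coefficient of variation below one.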

Figure 9 shows a few examples of ex-Gaussian temporal models approximated by models based on the time-causal limit kernel in this way. As can be seen from the graphs, the two classes of kernels can capture qualitatively similar temporal shapes in time-causal temporal data. There are, however, three conceptual differences. (i) The model based on the time-causal limit kernel always tends to zero at the temporal origin \(t = 0\) when the DC-offset is zero, whereas the ex-Gaussian model may take nonzero values for \(t = 0\). (ii) The time-causal limit kernel does not contain any internal non-causal temporal component, such as the time-shifted Gaussian kernel in (40). (iii) The time-causal limit kernel has a completely time-recursive implementation, which is essential when modelling temporal phenomena in real time as they, for example, occur in biological neurons. Specifically, the model based on the time-causal limit kernel can be implemented as a cascade of first-order integrators, which is a natural model for the information transfer in the dendrites of neurons (Koch 1999, Chapters 11–12).

3.4.1 Extension to third-order moment-based model fitting involving also a flexible temporal offset

In Appendix A.2, an extension of the above second-order moment-based model to a third-order moment-based model is performed, which makes it possible to also determine a temporal offset \(t_0\)

and which may be relevant in situations when the temporal origin of the signal cannot be accurately determined in an experimental situation. Since the closed-form expressions for the solutions become more complex in this case (they are determined from the solutions of a fourth-order algebraic equation), we restrict ourselves to a conceptual and algorithmic description in this treatment, see Appendix A.2 for further theoretical details and experimental results.

3.4.2 Extension to model fitting for other signals or functions

The above general procedures determine the parameters of the model based on the time-causal limit kernel from the lower-order temporal moments of the data. They can also be used more generally for fitting models based on the time-causal limit kernel to other signals and functions that: (i) are defined for non-negative values of time, (ii) assume non-negative values only, (iii) have a roughly unimodal shape, first increasing and then decreasing, and (iv) decay to zero as time tends to infinity. The fitting approach basically amounts to replacing the temporal moments \(M_0\), \(M_1\), \(M_2\) and optionally \(M_3\) of the ex-Gaussian model by the temporal moments of the signal or function to be fitted, see Appendix A.3 for additional details.
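A minimal Python sketch of this general procedure might look as follows. It uses the same assumptions as the second-order moment matching: zero DC-offset, and a limit kernel with unit \(L_1\)-norm, mean \(\sqrt{(c+1)/(c-1)}\,\sqrt{\tau }\) and variance \(\tau \). The moments are approximated by the trapezoidal rule on the sampled data. The function name fit_limit_kernel is hypothetical, and this is not the procedure of Appendix A.3 verbatim. The usage example fits the test function \(t\, e^{-t}\), which has \(M_0 = 1\), mean 2 and variance 2, and hence \(b_1 = 1\), \(\tau = 2\) and \(c = 3\).

```python
import math


def fit_limit_kernel(times, values):
    """Hypothetical moment-based fit of b1 * Psi(t; tau, c) to samples of a non-negative,
    roughly unimodal function on t >= 0 with zero DC offset; returns (b1, tau, c)."""

    def moment(n):
        # Trapezoidal rule for the n-th temporal moment on a possibly non-uniform grid.
        return sum(0.5 * (t1 - t0) * (t0 ** n * v0 + t1 ** n * v1)
                   for t0, t1, v0, v1 in zip(times, times[1:], values, values[1:]))

    m0, m1, m2 = moment(0), moment(1), moment(2)
    mean = m1 / m0
    variance = m2 / m0 - mean ** 2
    if mean ** 2 <= variance:
        raise ValueError("the squared mean must exceed the variance to obtain c > 1")
    c = (mean ** 2 + variance) / (mean ** 2 - variance)
    return m0, variance, c


# Test function t * exp(-t): M0 = 1, mean 2, variance 2, so b1 = 1, tau = 2 and c = 3.
times = [i * 1e-3 for i in range(50001)]
values = [t * math.exp(-t) for t in times]
b1, tau, c = fit_limit_kernel(times, values)
print(b1, tau, c)  # close to 1, 2 and 3
```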

4 Computational implementation of convolutions with the time-causal limit kernel on discrete temporal data

In the theory presented so far, we have throughout assumed that the signal is continuous over time. When implementing this model on sampled temporal data, the theory must be transferred to a discrete temporal domain.

In this section, we will describe how the temporal receptive fields can be implemented in terms of corresponding discrete temporal scale-space kernels that possess scale-space properties over a discrete temporal domain, and in addition are both time-causal and fully time-recursive.

Following Lindeberg (1990) and in a corresponding way as the treatment in Sect. 2, let us define a discrete kernel as a discrete scale-space kernel if for any input signal it is guaranteed that the number of local extrema, alternatively the number of zero-crossings, cannot increase under convolution with the discrete scale-space kernel.

4.1 Classification of scale-space kernels for discrete signals

To characterize the class of discrete scale-space kernels, we can, in a corresponding way as for the continuous case, also build upon classical results by Schoenberg (1930, 1946, 1947, 1948, 1950, 1953, 1988), and as further developed in the monograph by Karlin (1968).

Summarizing the treatment in Lindeberg (1990, Sect. IV) and Lindeberg (2016, Sect. 6.1), a discrete smoothing kernel is a discrete scale-space kernel if and only if the generating function of its sequence of filter coefficients, \(\varphi (z) = \sum _{n=-\infty }^{\infty } c_n z^n\), is of the form (Schoenberg 1948)

where \(c > 0\), \(k \in {\mathbb {Z}}\), \(q_{-1}, q_1, \alpha _i, \beta _i, \gamma _i, \delta _i \ge 0\) and \(\sum _{i=1}^{\infty }(\alpha _i + \beta _i + \gamma _i + \delta _i) < \infty \).
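For reference, a generating function of the following form is consistent with the parameter constraints just listed and with the primitive transformations (49) and (50) below. This is our rendering of the characterization in Lindeberg (1990). Here c denotes a positive constant, not the distribution parameter of the time-causal limit kernel.

$$
\varphi(z) = c\, z^{k}\, e^{(q_{-1} z^{-1} + q_1 z)} \prod_{i=1}^{\infty} \frac{(1 + \alpha_i z)(1 + \delta_i z^{-1})}{(1 - \beta_i z)(1 - \gamma_i z^{-1})}
$$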

4.1.1 Basic classes of primitive scale-space kernels over a discrete signal domain

With regard to the original temporal domain, this characterization means that, besides trivial rescalings and translations, there are three basic classes of discrete smoothing transformations:

- two-point weighted average or generalized binomial smoothing

  $$\begin{aligned} f_{\text{out}}(x) &= f_{\text{in}}(x) + \alpha_i \, f_{\text{in}}(x - 1) \quad (\alpha_i \ge 0),\\ f_{\text{out}}(x) &= f_{\text{in}}(x) + \delta_i \, f_{\text{in}}(x + 1) \quad (\delta_i \ge 0), \end{aligned} \tag{49}$$

- moving average or first-order recursive filtering

  $$\begin{aligned} f_{\text{out}}(x) &= f_{\text{in}}(x) + \beta_i \, f_{\text{out}}(x - 1) \quad (0 \le \beta_i < 1),\\ f_{\text{out}}(x) &= f_{\text{in}}(x) + \gamma_i \, f_{\text{out}}(x + 1) \quad (0 \le \gamma_i < 1), \end{aligned} \tag{50}$$

- infinitesimal smoothing or diffusion as arising from the continuous semi-groups made possible by the factor \(e^{(q_{-1}z^{-1} + q_1z)}\).
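Of these primitive transformations, only the variants with coefficients \(\alpha _i\) and \(\beta _i\) access past samples only and are therefore time-causal. The variants with \(\delta _i\) and \(\gamma _i\) require the future sample at \(x + 1\). The Python sketch below implements the two causal variants and shows their impulse responses: a two-tap kernel \((1, \alpha _i)\) and a geometric sequence \(\beta _i^n\). The function names are hypothetical, and a zero-valued signal is assumed before the first sample. Multiplying the recursive filter output by \(1 - \beta _i\) would give the unit \(l_1\)-normalization referred to in the next paragraph.

```python
def two_point_average(signal, alpha):
    """Causal variant of (49): f_out(x) = f_in(x) + alpha * f_in(x - 1), alpha >= 0,
    assuming the signal is zero before its first sample."""
    return [x + alpha * (signal[n - 1] if n > 0 else 0.0) for n, x in enumerate(signal)]


def first_order_recursive(signal, beta):
    """Causal variant of (50): f_out(x) = f_in(x) + beta * f_out(x - 1), 0 <= beta < 1,
    assuming a zero-valued output before the first sample."""
    out, prev = [], 0.0
    for x in signal:
        prev = x + beta * prev
        out.append(prev)
    return out


impulse = [1.0, 0.0, 0.0, 0.0, 0.0, 0.0]
print(two_point_average(impulse, 0.5))      # [1.0, 0.5, 0.0, 0.0, 0.0, 0.0]
print(first_order_recursive(impulse, 0.5))  # [1.0, 0.5, 0.25, 0.125, 0.0625, 0.03125]
```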

To transfer the continuous first-order integrators derived in Sect. 2.2 to a discrete implementation, we shall in this treatment focus on the first-order recursive filters (50), which by additional \(l_1\)-normalization constitute both the discrete correspondence and a numerical approximation of time-causal and time-recursive first-order temporal integration (11).

4.2 Discrete temporal scale-space kernels based on recursive filters

Given a signal that has been sampled at some temporal frame rate r, the temporal scale \(\sigma _t\) in the continuous model, in units of seconds, is first transformed to a temporal variance \(\tau \) relative to a unit time sampling

Then, a discrete set of intermediate temporal scale levels \(\tau _k\) is defined by (12) or (17), with the difference between successive scale levels according to

with \(\tau _0 = 0\).
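The following sketches these steps. It assumes that \(\sigma _t\) is a standard deviation in seconds and r a frame rate in frames per second. For the intermediate levels, it uses the logarithmic distribution as an example.

$$
\tau = \sigma_t^2\, r^2, \qquad \varDelta\tau_k = \tau_k - \tau_{k-1} \;\; (k = 1, \dots, K), \qquad \text{e.g. } \tau_k = c^{2(k-K)}\, \tau \text{ for a logarithmic distribution.}
$$

For example, \(\sigma _t = 0.1\) s at \(r = 100\) frames per second gives \(\tau = 100\), i.e. a standard deviation of 10 samples.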

For implementing the temporal smoothing operation between two such adjacent scale levels (with the lower level in each pair of adjacent scales referred to as \(f_{\text{ in }}\) and the upper level as \(f_{\text{ out }}\)), we make use of a first-order recursive filter normalized to the form

and having a generating function of the form

which is a time-causal kernel and satisfies the discrete scale-space property of guaranteeing that the number of local extrema or zero-crossings in the signal will not increase with increasing scale (Lindeberg 1990; Lindeberg and Fagerström 1996). These recursive filters are the discrete analogue of the continuous first-order integrators (11).
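One way to write a normalized first-order recursive filter with these properties is the causal variant of (50) with \(\beta = \mu _k/(1+\mu _k)\) and an input gain of \(1/(1+\mu _k)\), which makes the filter coefficients sum to one. This is our rendering, consistent with the mean \(\mu _k\) and variance \(\mu _k^2 + \mu _k\) stated in the next paragraph:

$$
f_{\text{out}}(t) = f_{\text{out}}(t-1) + \frac{1}{1 + \mu_k}\,\bigl(f_{\text{in}}(t) - f_{\text{out}}(t-1)\bigr), \qquad H_{\text{geom}}(z) = \frac{1}{1 - \mu_k\,(z - 1)} .
$$

Differentiating the generating function at \(z = 1\) gives the mean \(H'(1) = \mu _k\) and the variance \(H''(1) + H'(1) - H'(1)^2 = \mu _k^2 + \mu _k\).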

Each primitive recursive filter (53) has temporal mean value \(m_k = \mu _k\) and temporal variance \(\varDelta \tau _k = \mu _k^2 + \mu _k\), and we compute \(\mu _k\) from \(\varDelta \tau _k\) in (52) according to

By the additive property of variances under convolution, the discrete variances of the discrete temporal scale-space kernels will perfectly match those of the continuous model, whereas the temporal mean values and the temporal delays may differ somewhat. If the temporal scale \(\tau _k\) is large relative to the temporal sampling distance, the discrete model should be a good approximation in this respect.
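The formula for \(\mu _k\) referred to above follows by taking the positive root of \(\mu _k^2 + \mu _k = \varDelta \tau _k\) (our rendering):

$$
\mu_k = \frac{\sqrt{1 + 4\,\varDelta\tau_k} - 1}{2}.
$$

Since \(\mu _k^2 < \mu _k^2 + \mu _k = \varDelta \tau _k\), the discrete time constant, and hence the temporal mean, is smaller than the continuous time constant \(\sqrt{\varDelta \tau _k}\) for the same variance. For large \(\varDelta \tau _k\), the difference approaches 1/2 sample per stage.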

Because this temporal scale-space concept is formulated time-recursively, the computations can be performed based on a compact temporal buffer that contains only the temporal scale-space representations at the temporal scales \(\tau _k\). No additional temporal buffer of what has occurred in the past needs to be stored to perform the corresponding temporal smoothing operations.

For practical implementations, we often approximate the time-causal limit kernel using 4–8 layers of recursive filters coupled in cascade using either \(c = \sqrt{2}\) or \(c = 2\).

A summarizing algorithmic description of how to implement these temporal filtering operations in practice is given in Appendix B.
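The algorithmic description in Appendix B is not reproduced here; as a rough illustration, a minimal Python sketch of the cascade might look as follows. It makes four assumptions. The conversion is \(\tau = (\sigma _t r)^2\). The scale levels are the logarithmic levels \(\tau _k = c^{2(k-K)}\tau \) with \(\tau _0 = 0\). Each stage is the normalized recursive filter \(f_{\text{out}}(t) = f_{\text{out}}(t-1) + (f_{\text{in}}(t) - f_{\text{out}}(t-1))/(1 + \mu _k)\) with \(\mu _k = (\sqrt{1 + 4\varDelta \tau _k} - 1)/2\). The signal is zero-valued before the first sample. The class and method names are hypothetical. The state kept between samples is just the K smoothed values, illustrating the compact temporal buffer discussed above.

```python
import math


class TimeCausalLimitKernelSmoother:
    """Hypothetical sketch: K normalised first-order recursive filters in cascade, approximating the
    time-causal limit kernel for a temporal scale sigma_t (seconds) at frame rate r (frames/second)."""

    def __init__(self, sigma_t, r, c=2.0, K=4):
        tau = (sigma_t * r) ** 2  # temporal variance in units of samples squared
        levels = [0.0] + [c ** (2 * (k - K)) * tau for k in range(1, K + 1)]
        dtaus = [levels[k] - levels[k - 1] for k in range(1, K + 1)]
        # Choose mu_k so that mu_k**2 + mu_k = dtau_k; the discrete variances then add up to tau.
        self.mus = [(math.sqrt(1.0 + 4.0 * d) - 1.0) / 2.0 for d in dtaus]
        # The only memory: the current smoothed value at each of the K scale levels
        # (a zero-valued past before the first sample is assumed).
        self.state = [0.0] * K

    def update(self, sample):
        """Feed one new sample and return the K smoothed values, finest scale first."""
        x = sample
        for k, mu in enumerate(self.mus):
            self.state[k] += (x - self.state[k]) / (1.0 + mu)
            x = self.state[k]  # the output at level k is the input to level k + 1
        return list(self.state)


# Usage: impulse response at sigma_t = 0.04 s and r = 100 frames/s, i.e. tau = 16 samples squared.
smoother = TimeCausalLimitKernelSmoother(sigma_t=0.04, r=100.0, c=2.0, K=4)
response = [smoother.update(1.0 if n == 0 else 0.0)[-1] for n in range(400)]
area = sum(response)
mean = sum(n * h for n, h in enumerate(response)) / area
variance = sum((n - mean) ** 2 * h for n, h in enumerate(response)) / area
print(area, mean, variance)  # about 1.0, 5.01 and 16.0
```

The usage example feeds a unit impulse and checks the discrete moments of the response. The coefficients sum to one, the mean equals \(\sum _k \mu _k\) (about 5.01 samples here), and the variance equals \(\tau = 16\), as expected from the additivity of variances.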

5 Computation of temporal scale-space derivatives

So far, we have been concerned with the problem of how to smooth a temporal signal in such a way that the smoothing transformation is guaranteed to not increase the number of local extrema in the signal, or equivalently the number of zero-crossings. In many applications, one is, however, more interested in studying the change in the signal over time, as can be modelled by temporal derivatives.

For a purely time-dependent signal, the first-order temporal derivative will give strong responses when the temporal slope is high, corresponding to, e.g. onsets or offsets of a sound in auditory processing or, in video processing, motion in the world or changes in the illumination. Regarding visual processing over a purely spatial domain, first-order spatial derivatives will respond to edges in the image domain, which in turn may correspond to discontinuities in either depth, surface orientation, reflectance or illumination in the world.

For a purely time-dependent signal, the second-order derivatives may on the other hand often lead to strong responses near local maxima or minima over time, if the sign of the first-order temporal derivative changes rapidly at those points. Concerning audio processing, a second-order temporal derivative applied to a spectrogram representation may give a strong response to, e.g. a beep or some other brief temporal sound, provided that the temporal scale is sufficiently near the temporal duration of the sound. Applying second-order derivatives with respect to logarithmic frequencies to a spectrogram will in turn enhance spectral bands and formants, provided that the logspectral scales are appropriately selected. Regarding visual processing, a second-order temporal derivative applied to a video stream may give a strong response to a flashing light, again assuming that the temporal scale is sufficiently near the temporal duration of the flash. Assuming that the visual observer does not fixate a moving object, second-order temporal derivatives may also give strong responses to image patterns that move relative to the viewing direction. For visual processing on a purely spatial domain, second-order spatial derivative operators can be specially designed to give strong responses to blob-like or corner-like image structures, which can be detected by interest point detectors.

Beyond such pointwise or regionwise responses over time, as described above, temporal derivatives can also be interpreted and used densely, for every time moment, and, for example, be combined according to a local Taylor expansion around any temporal moment \(t_0\):

$$\begin{aligned} L(t;\; \tau ) = \sum _{n=0}^{N} \frac{1}{n!} \, L_{t^n}(t_0;\; \tau ) \, (t - t_0)^n + O(|t - t_0|^{N+1}) \end{aligned}$$(56)

to characterize the local temporal structures in the temporal signal at any scale \(\tau \). Such a representation involving temporal derivatives up to order N is referred to as a temporal N-jet representation.
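As a small illustration of how a temporal N-jet is used, the sketch below evaluates the local Taylor polynomial from given derivative values. The function name `taylor_from_jet` is hypothetical, and the derivative values L_{t^n}(t_0; τ) are assumed to have been computed beforehand; here the N-jet of sin t is used as a stand-in.

```python
import math

def taylor_from_jet(jet, t0, t):
    """Evaluate the local Taylor polynomial of order N = len(jet) - 1 around t0.

    jet[n] is assumed to hold the n:th order temporal derivative L_{t^n}(t0; tau)
    of the temporal scale-space representation at a fixed scale tau.
    """
    return sum(d * (t - t0) ** n / math.factorial(n) for n, d in enumerate(jet))

# Usage: the 3-jet of sin at t0 = 0.5 gives a local cubic approximation.
t0 = 0.5
jet = [math.sin(t0), math.cos(t0), -math.sin(t0), -math.cos(t0)]
for t in (0.5, 0.6, 0.8):
    print(t, taylor_from_jet(jet, t0, t), math.sin(t))
```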

A practical complication that, however, arises, when computing temporal derivatives at multiple scales concerns how to compare the responses between different levels of scale. Due to the temporal smoothing operation, the amplitude of the temporal derivatives can be expected to decrease monotonically with increasing amount of temporal smoothing, provided that the temporal smoothing operation is sufficiently well-designed. This does, for example, hold for temporal smoothing with the truncated exponential kernels, which arise as the only possible temporal smoothing primitives in the time-causal scale-space kernels, including the time-causal limit kernel.
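The decrease in derivative amplitude can be illustrated numerically. The sketch below assumes that the primitive recursive filter (53) has the form out(t) = out(t−1) + (in(t) − out(t−1))/(1 + μ), uses the hypothetical scale levels 1, 4, 16, 64 and 256, smooths a pseudo-random signal and prints the maximum absolute first-order backward difference at each level. For this maximum norm, the decrease is guaranteed: each recursive filter has a non-negative impulse response with unit sum and commutes with the difference operator, so it cannot increase the largest derivative magnitude.

```python
import math
import random

def mu_from_delta_tau(d):
    # Positive root of mu**2 + mu = d.
    return (math.sqrt(1.0 + 4.0 * d) - 1.0) / 2.0

def recursive_filter(signal, mu):
    # Assumed form of the primitive filter (53), with zero initial state.
    out, prev = [], 0.0
    for x in signal:
        prev += (x - prev) / (1.0 + mu)
        out.append(prev)
    return out

def backward_difference(values):
    # L(t) - L(t-1), taking L(-1) = 0.
    return [v - p for v, p in zip(values, [0.0] + values[:-1])]

rng = random.Random(0)
signal = [rng.gauss(0.0, 1.0) for _ in range(2000)]

taus = [4.0 ** k for k in range(5)]      # hypothetical levels 1, 4, 16, 64, 256
level, prev_tau, peaks = signal, 0.0, []
for tau in taus:
    level = recursive_filter(level, mu_from_delta_tau(tau - prev_tau))
    prev_tau = tau
    peaks.append(max(abs(v) for v in backward_difference(level)))

for tau, peak in zip(taus, peaks):
    print(f"tau = {tau:6.1f}: max |delta_t L| = {peak:.4f}")
```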

In this section, we will describe a way to reduce the problem of decreasing amplitude of temporal derivatives with increasing values of the temporal scale parameter, by instead using scale-normalized temporal derivatives. The intention is that by using appropriately designed scale-normalized derivative operators, it should be possible to judge if a temporal derivative response of a certain order at a certain temporal scale should be regarded as stronger or weaker than a corresponding temporal derivative response at some other temporal scale. We will also describe how temporal scale covariance can be obtained for temporal derivative operators that are combined with the time-causal limit kernel.

Figure 10: Illustration of the result of computing discrete approximations of second-order scale-normalized temporal derivatives \(L_{\zeta \zeta }\) from the time-causal temporal scale-space representation L at different scales (using distribution parameter \(c = 2\) and scale normalization power \(\gamma = 1\)), here for a synthetic input signal f consisting of two temporal peaks generated as discrete approximations to time-causal limit kernels for temporal scales \(\tau = 16\) and \(\tau = 256\) with a certain amount of relative temporal delay to separate the responses as well as a small amount of added white Gaussian noise. (Horizontal axes: time. Vertical axes: signal values.)

5.1 The scale-normalized derivative concept

For the non-causal Gaussian scale-space concept defined over a purely spatial domain, and corresponding to Gaussian smoothing at all scales, it can be shown that the canonical way of defining scale-normalized derivatives at different spatial scales s is according to (Lindeberg 1998a, b, 2021a)

$$\begin{aligned} \partial _{\xi ^m} = s^{m \gamma /2} \, \partial _{x^m}, \end{aligned}$$(57)

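where \(\gamma \) is a free parameter. Specifically, it can be shown (Lindeberg 1998a, Sect. 9.1) that this notion of \(\gamma \)-normalized derivatives corresponds to normalizing the m:th order Gaussian derivatives \(g_{\xi ^m}\) over N-dimensional image space to constant \(L_p\)-norms over scale

$$\begin{aligned} \Vert g_{\xi ^m}(\cdot ;\; s) \Vert _p = \left( \int _{x \in \mathbb {R}^N} |g_{\xi ^m}(x;\; s)|^p \, dx \right) ^{1/p} = G_{m,\gamma }, \end{aligned}$$(58)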

with the power p in the \(L_p\)-norm depending on the scale normalization power \(\gamma \), the order of differentiation m and the spatial dimensionality N of the signal according to

$$\begin{aligned} p = \frac{1}{1 + \frac{m}{N} (1 - \gamma )}, \end{aligned}$$(59)

where the perfectly scale-invariant case \(\gamma = 1\) corresponds to \(L_1\)-normalization for all orders m.
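The relation between γ and p can be checked numerically in one dimension. The sketch below (function names hypothetical) computes the \(L_p\)-norm of \(s^{m\gamma/2} g_{x^m}(\cdot;\; s)\) by the trapezoidal rule for m = 1 and m = 2, with p chosen according to (59), and shows that the norm does not change with the scale s. For γ = 1 the constants should approach √(2/π) ≈ 0.798 for m = 1 and 4e^{−1/2}/√(2π) ≈ 0.968 for m = 2.

```python
import math

def lp_power(gamma, m, N=1):
    """Power p in (59) for gamma-normalized m:th order derivatives in N dimensions."""
    return 1.0 / (1.0 + m * (1.0 - gamma) / N)

def gaussian_derivative(x, s, m):
    """1-D Gaussian with variance s, or its first or second derivative in x."""
    g = math.exp(-x * x / (2.0 * s)) / math.sqrt(2.0 * math.pi * s)
    if m == 0:
        return g
    if m == 1:
        return -x / s * g
    if m == 2:
        return (x * x / s ** 2 - 1.0 / s) * g
    raise ValueError("only m = 0, 1, 2 are implemented in this sketch")

def normalized_lp_norm(s, m, gamma, num=20001, width=12.0):
    """L_p-norm of s**(m*gamma/2) * g_{x^m}(.; s) by the trapezoidal rule on
    [-width*sqrt(s), width*sqrt(s)], with p taken from lp_power(gamma, m)."""
    p = lp_power(gamma, m)
    sigma = math.sqrt(s)
    factor = s ** (m * gamma / 2.0)
    h = 2.0 * width * sigma / (num - 1)
    total = 0.0
    for i in range(num):
        x = -width * sigma + i * h
        weight = 0.5 if i in (0, num - 1) else 1.0
        total += weight * abs(factor * gaussian_derivative(x, s, m)) ** p
    return (total * h) ** (1.0 / p)

for m in (1, 2):
    for gamma in (0.5, 1.0):
        norms = [normalized_lp_norm(s, m, gamma) for s in (1.0, 16.0, 256.0)]
        print(f"m = {m}, gamma = {gamma}: p = {lp_power(gamma, m):.4f}, norms =",
              [round(v, 6) for v in norms])
```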

5.2 Scale normalization for time-causal temporal derivatives

For temporal derivatives defined from the time-causal scale-space concept corresponding to convolution with truncated exponential kernels coupled in cascade, it can be shown to be meaningful to define time-causal scale-space derivatives in a corresponding manner (Lindeberg 2016, 2017):

-

By variance-based scale normalization, we define scale-normalized temporal derivatives according to

$$\begin{aligned} \partial _{\zeta ^n} = \tau ^{n \gamma /2} \, \partial _{t^n}, \end{aligned}$$(60) where \(\tau \) denotes the variance of the temporal smoothing kernel.

-

By \(L_p\)-norm-based scale normalization, we determine a temporal scale normalization factor \(\alpha _{n,\gamma }(\tau )\)

$$\begin{aligned} \partial _{\zeta ^n} = \alpha _{n,\gamma }(\tau ) \, \partial _{t^n} \end{aligned}$$(61) such that the \(L_p\)-norm (with p determined as a function of \(\gamma \) according to (59)) of the corresponding composed scale-normalized temporal derivative computation kernel \(\alpha _{n,\gamma }(\tau ) \, h_{t^n}\) equals the \(L_p\)-norm of some other reference kernel, where we may initially take the \(L_p\)-norm of the corresponding Gaussian derivative kernels (Lindeberg 2016, Sect. 7.3)

$$\begin{aligned} \Vert \alpha _{n,\gamma }(\tau ) \, h_{t^n}(\cdot ;\; \tau ) \Vert _p&= \alpha _{n,\gamma }(\tau ) \, \Vert h_{t^n}(\cdot ;\; \tau ) \Vert _p \nonumber \\&= \Vert g_{\xi ^n}(\cdot ;\; \tau ) \Vert _p = G_{n,\gamma }. \end{aligned}$$(62)

5.3 Scale covariance property of scale-normalized temporal derivatives

In the special case when the temporal scale-space representation is defined by convolution with the scale-covariant time-causal limit kernel according to (26) and (25), it is shown in (Lindeberg 2016, Appendix 3) that the corresponding scale-normalized derivatives become truly scale covariant under temporal scaling transformations \(t' = c^j t\) with scaling factors \(S = c^j\) that are integer powers of the distribution parameter c

$$\begin{aligned} L'_{\zeta ^n}(t';\; \tau ', c) = c^{j (1 - 1/p)} \, L_{\zeta ^n}(t;\; \tau , c) \end{aligned}$$(63)

between matching temporal scale levels \(\tau ' = c^{2j} \tau \), where \(L'\) denotes the temporal scale-space representation of the rescaled signal \(f'(t') = f(t)\). Specifically, for \(\gamma = 1\) corresponding to \(p = 1\) the magnitude values of the scale-normalized temporal derivatives at matching scales become fully scale invariant

$$\begin{aligned} L'_{\zeta ^n}(t';\; \tau ', c) = L_{\zeta ^n}(t;\; \tau , c), \end{aligned}$$(64)

allowing for well-defined comparisons between the magnitude values of different types of temporal structures in a signal at different temporal scales.

5.4 A canonical class of time-causal, time-recursive and scale-covariant temporal basis functions

The above scale covariance property implies that the scale-normalized temporal derivatives of the time-causal limit kernel constitute a canonical class of temporal basis functions over a time-causal temporal domain.

These kernels have been used as temporal basis functions for spatio-temporal receptive fields (Lindeberg 2016, 2021b; Jansson and Lindeberg 2018) and for expressing methods for temporal scale selection (Lindeberg 2017, 2018b) and spatio-temporal scale selection (Lindeberg 2018a, b) that detect and compare temporal structures at different temporal scales in a completely scale-invariant manner.

In this treatment, we additionally propose to use this family of temporal basis functions to model the temporal variability of neurons over multiple scales (Sect. 7.3) and specifically the temporal variability in computational models of auditory receptive fields (Sect. 7.2).

5.5 Discrete approximations of scale-normalized temporal scale-space derivatives

For the discrete temporal scale-space concept over discrete time described in Sect. 4.2, discrete approximations of temporal derivatives are obtained by applying temporal difference operators

$$\begin{aligned} \delta _t = (-1, +1), \quad \quad \delta _{tt} = (1, -2, 1) \end{aligned}$$

to the discrete temporal scale-space representation at any temporal scale, which in turn is constructed from a cascade of first-order recursive filters of the form (53), with the time constants \(\mu _k\) given by (55) from the differences in temporal scale levels \(\varDelta \tau _k = \tau _k - \tau _{k-1}\) with \(\tau _k\) according to (12).
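A minimal sketch of these difference operators, assuming that they are applied as backward differences so that only the present and past samples are used, and that the signal is zero before t = 0 (function names hypothetical):

```python
def backward_difference(values):
    """delta_t L(t) = L(t) - L(t-1), taking L(t) = 0 for t < 0."""
    return [v - prev for v, prev in zip(values, [0.0] + list(values[:-1]))]

def second_backward_difference(values):
    """delta_tt L(t) = L(t) - 2 L(t-1) + L(t-2), i.e. delta_t applied twice."""
    return backward_difference(backward_difference(values))

# On the samples t**2 the second difference is 2, after a start-up transient
# caused by the zero values assumed before t = 0.
print(second_backward_difference([0.0, 1.0, 4.0, 9.0, 16.0]))  # [0.0, 1.0, 2.0, 2.0, 2.0]
```

Since both the differences and the recursive filters are linear and shift-invariant, the order in which they are applied does not matter.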

Scale normalization factors are then defined in an analogous way as for continuous signals, according to (60) or (61), with the only difference that, for \(l_p\)-normalization, the continuous \(L_p\)-norm is replaced by a discrete \(l_p\)-norm.
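The following sketch puts these pieces together for second-order derivatives with \(l_1\)-normalization (γ = 1, p = 1). It assumes that the primitive recursive filter (53) has the form out(t) = out(t−1) + (in(t) − out(t−1))/(1 + μ_k), that δ_tt is applied as a backward difference and that the reference value is the Gaussian one, \(G_{2,1} = 4e^{-1/2}/\sqrt{2\pi}\), which is the \(L_1\)-norm of \(\tau \, g_{tt}(\cdot;\; \tau)\) for every τ. The scale levels and the test signal (a short and a long rectangular pulse) are hypothetical.

```python
import math

def mu_from_delta_tau(d):
    # Positive root of mu**2 + mu = d, cf. (55).
    return (math.sqrt(1.0 + 4.0 * d) - 1.0) / 2.0

def recursive_filter(signal, mu):
    # Assumed form of the primitive filter (53), with zero initial state.
    out, prev = [], 0.0
    for x in signal:
        prev += (x - prev) / (1.0 + mu)
        out.append(prev)
    return out

def second_difference(values):
    # Backward second difference L(t) - 2 L(t-1) + L(t-2), zero before t = 0.
    padded = [0.0, 0.0] + list(values)
    return [padded[i + 2] - 2.0 * padded[i + 1] + padded[i] for i in range(len(values))]

def scale_space(signal, taus):
    # Smoothed signals at the increasing temporal scale levels taus.
    levels, current, prev_tau = [], list(signal), 0.0
    for tau in taus:
        current = recursive_filter(current, mu_from_delta_tau(tau - prev_tau))
        levels.append(current)
        prev_tau = tau
    return levels

# Reference value G_{2,1}: L1-norm of tau * g_tt(.; tau) for a Gaussian, for all tau.
G21 = 4.0 * math.exp(-0.5) / math.sqrt(2.0 * math.pi)

def normalized_second_derivatives(signal, taus):
    """l1-normalized second-order temporal derivatives at each level in taus."""
    impulse = [1.0] + [0.0] * (len(signal) - 1)
    kernels = scale_space(impulse, taus)
    levels = scale_space(signal, taus)
    result = []
    for kernel, level in zip(kernels, levels):
        # alpha_k makes the l1-norm of the composed discrete kernel equal G21.
        alpha = G21 / sum(abs(v) for v in second_difference(kernel))
        result.append([alpha * v for v in second_difference(level)])
    return result

# Usage with a hypothetical test signal: a short and a long rectangular pulse.
signal = [0.0] * 1200
for t in range(100, 104):
    signal[t] = 1.0
for t in range(500, 580):
    signal[t] = 1.0
taus = [4.0 ** k for k in range(1, 6)]   # 4, 16, 64, 256, 1024
for tau, response in zip(taus, normalized_second_derivatives(signal, taus)):
    early = max(abs(v) for v in response[:400])
    late = max(abs(v) for v in response[400:])
    print(f"tau = {tau:7.1f}: short pulse {early:.3f}, long pulse {late:.3f}")
```

Because every composed kernel has the same \(l_1\)-norm, the printed magnitudes can be compared directly across scale levels.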

5.5.1 Experimental results

Figure 10 shows an illustration of computing discrete approximations of second-order scale-normalized temporal derivatives in this way, for a synthetic input signal consisting of two temporal peaks generated from discrete approximations of the time-causal limit kernel for \(\tau = 16\) and \(\tau = 256\), respectively, and with some amount of relative temporal delay to separate the responses as well as a small amount of added white Gaussian noise.

Observe how the dominant responses to the finer-scale structures in the input signal are obtained at finer levels of scale in the temporal scale-space representation, whereas the dominant responses to the coarser-scale structures in the input signal are obtained at coarser levels of scale in the temporal scale-space.

Do also observe how the responses at coarser temporal scales are associated with longer temporal delays, manifesting themselves as temporal peaks corresponding to the underlying signal structures appearing at later time moments at coarser levels of scale.

Do furthermore note that the range of values on the vertical axis in these graphs is the same for all the scale values, demonstrating the ability to make relative comparisons between the magnitudes of the derivative responses at different scales, due to the notion of scale normalization of the temporal derivatives, here with regard to the \(l_1\)-norm.

6 Relations to wavelet analysis and time-frequency analysis

For analyzing temporal signals at multiple temporal scales, wavelet analysis (Grossmann and Morlet 1984; Mallat 1989, 1999; Heil and Walnut 1989; Meyer 1992; Daubechies 1992; Chui 1992; Rioul and Duhamel 1992; Graps 1995; Debnath and Shah 2002) and time-frequency analysis (Gabor 1946; Cohen 1995; Feichtinger and Strohmer 1998; Qian and Chen 1999; Gröchenig 2001; Flandrin 2018) constitute two other main classes of conceptual tools. In this treatment, we do not, however, follow those notions as prototype models, but instead adhere to the scale-space paradigm because of its special properties. Nevertheless, the presented temporal scale-space theory can be related to wavelet analysis and time-frequency analysis in the following ways:

6.1 Relations to wavelet analysis

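By construction, the temporal derivatives of the time-causal limit kernel \(\varPsi (t;\; \tau , c)\) defined from (25) have integral equal to zero

$$\begin{aligned} \int _{t=0}^{\infty } \varPsi _{t^n}(t;\; \tau , c) \, dt = 0 \quad \text{for } n \ge 1. \end{aligned}$$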

In this respect, the temporal derivatives of the time-causal kernel, complemented by normalization with respect to a suitably chosen norm, can serve as a mother wavelet over a continuous time-causal temporal domain,

in a similar way as Gaussian derivative kernels of a certain order

such as the Mexican hat wavelet (Marr 1976, 1982), also known as a Ricker wavelet (Ricker 1944; Hosken 1988), and corresponding to the negated second-order derivative of the Gaussian, can serve as a mother wavelet over a continuous non-causal temporal domain.

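In wavelet analysis, one usually normalizes both the mother wavelet \(\psi \) and the child wavelets to unit \(L_2\)-norm, leading to translated and rescaled child wavelets, with dilation \(a > 0\) and translation b, of the form

$$\begin{aligned} \psi _{a,b}(t) = \frac{1}{\sqrt{a}} \, \psi \left( \frac{t - b}{a} \right) . \end{aligned}$$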

In scale-space theory, the most common way of normalizing the Gaussian derivative kernels as well as temporal derivatives of the time-causal limit kernel is to constant \(L_1\)-norm over scales (and corresponding to scale-normalized derivatives for \(\gamma = 1\) according to Sect. 5.1), although other scale normalizations, including \(L_2\)-normalization, are also possible, as further described in Sect. 5.1. Such \(L_1\)-normalization then leads to translated and rescaled child wavelets of the form

$$\begin{aligned} \psi _{a,b}(t) = \frac{1}{a} \, \psi \left( \frac{t - b}{a} \right) . \end{aligned}$$

In the following, we will describe how the corresponding wavelet representations obtained by mapping a signal f onto the child wavelets can be computed if the mother wavelet is chosen as a temporal derivative of the time-causal limit kernel.
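The difference between the two child-wavelet normalizations can be checked numerically. For simplicity, the sketch below uses the Mexican hat (Ricker) wavelet, proportional to (1 − t²)e^{−t²/2}, as a stand-in mother wavelet rather than a derivative of the time-causal limit kernel, which has no simple closed form; the function names and the translation b = 3 are hypothetical. Multiplying by 1/√a keeps the \(L_2\)-norm constant over dilations a, whereas multiplying by 1/a keeps the \(L_1\)-norm constant.

```python
import math

def ricker(t):
    """Mexican hat / Ricker mother wavelet, up to a constant factor:
    proportional to minus the second derivative of a unit-variance Gaussian."""
    return (1.0 - t * t) * math.exp(-t * t / 2.0)

def child(t, a, b, norm):
    """Translated (b) and rescaled (a > 0) child wavelet, L2- or L1-normalized."""
    factor = 1.0 / math.sqrt(a) if norm == "L2" else 1.0 / a
    return factor * ricker((t - b) / a)

def lp_norm(f, p, lo=-50.0, hi=50.0, num=20001):
    """L_p-norm of f over [lo, hi] by the trapezoidal rule on a fixed grid."""
    h = (hi - lo) / (num - 1)
    total = 0.0
    for i in range(num):
        weight = 0.5 if i in (0, num - 1) else 1.0
        total += weight * abs(f(lo + i * h)) ** p
    return (total * h) ** (1.0 / p)

for a in (0.5, 1.0, 4.0):
    l2 = lp_norm(lambda t: child(t, a, 3.0, "L2"), 2)
    l1 = lp_norm(lambda t: child(t, a, 3.0, "L1"), 1)
    print(f"a = {a}: L2-norm of L2-child = {l2:.6f}, L1-norm of L1-child = {l1:.6f}")
```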

6.1.1 Handling the transformation properties of the child wavelets within the algebra of the time-causal temporal scale-space representation

By using the transformation property (63) of the scale-normalized derivatives of the temporal scale-space representation obtained with the time-causal limit kernel, it follows that under a scaling transformation of time \(t' = c^j t\) for some integer j, with c being the distribution parameter of the time-causal limit kernel, and with a corresponding transformation of the temporal scale parameter \(\tau ' = c^{2j} \tau \), similar transformation properties hold for the scale-normalized temporal derivatives of the time-causal limit kernel itself (let the input signal be the continuous delta function \(f(t) = \delta (t)\) in (63), for which \(f'(t') = f(t) = c^j \, \delta (t')\))

$$\begin{aligned} \varPsi _{\zeta ^n}(t';\; \tau ', c) = c^{-j/p} \, \varPsi _{\zeta ^n}(t;\; \tau , c), \end{aligned}$$

where \(\gamma \) is the power in the temporal scale-normalized derivative concept and p is the power in the corresponding \(L_p\)-norm that is kept constant over scale by the scale-normalized derivatives.