Abstract

We study the Double Coverage (DC) algorithm for the k-server problem in tree metrics in the (h, k)-setting, i.e., when DC with k servers is compared against an offline optimum algorithm with h ≤ k servers. It is well-known that in such metric spaces DC is k-competitive (and thus optimal) for h = k. We prove that even if k > h the competitive ratio of DC does not improve; in fact, it increases slightly as k grows, tending to h + 1. Specifically, we give matching upper and lower bounds of \(\frac {k(h+1)}{k+1}\) on the competitive ratio of DC on any tree metric.



1 Introduction

We consider the k-server problem defined as follows. There are k servers in a given metric space. In each step, a request arrives at some point of the metric space and must be served by moving some server to that point. The goal is to minimize the total distance traveled by the servers.

The k-server problem was introduced by Manasse et al. [8] as a far-reaching generalization of various online problems. The most well-studied of those is the paging (caching) problem, which corresponds to the k-server problem on a uniform metric space. Sleator and Tarjan [9] gave several k-competitive algorithms for paging and showed that this is the best possible ratio for any deterministic algorithm.

Interestingly, the k-server problem does not seem to get harder on more general metrics. The celebrated k-server conjecture states that a k-competitive deterministic algorithm exists for every metric space. In a breakthrough result, Koutsoupias and Papadimitriou [7] showed that the work function algorithm (WFA) is (2k − 1)-competitive for every metric space, almost resolving the conjecture. The conjecture has been settled for several special metrics (an excellent reference is [2]). In particular, for the line metric, Chrobak et al. [3] gave an elegant k-competitive algorithm called Double Coverage (DC). This algorithm was later extended and shown to be k-competitive for all tree metrics [4]. Additionally, in [1] it was shown that WFA is k-competitive for some special metrics, including the line.

The (h, k)-Server Problem

In this paper, we consider the (h, k)-setting, where the online algorithm has k servers, but its performance is compared to an offline optimal algorithm with h ≤ k servers. This is also known as the weak adversaries model [6], or the resource augmentation version of k-server. It is a salient point whether the algorithm knows the value of h. We assume that it does not, as the DC algorithm that we analyze does not utilize this value (and the same is true of WFA). Moreover, this assumption is more in the spirit of resource augmentation. Note that in general this distinction matters, as knowing h, an algorithm might decide to limit the number of servers it will use to serve the requests. The (h, k)-server setting turns out to be much more intriguing and is much less understood.

For the uniform metric (the (h, k)-paging problem), k/(k − h + 1)-competitive algorithms are known [9] and no deterministic algorithm can achieve a better ratio. Note that this guarantee equals k for h = k, and tends to 1 as the ratio k/h of the number of online to offline servers becomes arbitrarily large. This shows that the weak adversaries model could give a more accurate interpretation of the performance of online algorithms: the competitive ratio of k obtained in the classical setting grows with the number of servers, which could suggest that more servers worsen the performance of an algorithm. On the other hand, the ratio obtained in the (h, k)-setting shows that the performance improves substantially when the number of servers grows. The same competitive ratio can also be achieved for the weighted caching problem [10] (and even the more general file caching problem [11], which is not a special case of the (h, k)-server problem).

However, unlike classical k-server, the underlying metric space seems to play an important role in the (h, k)-setting. Bar-Noy and Schieber (cf. [2]) showed that, for the (2,k)-server problem on a line metric, no deterministic algorithm can be better than 2-competitive for any k. In particular, the ratio does not tend to 1 as k increases.

In fact, there is a huge gap in our understanding of the problem, even for very special metrics. For example, for the line no guarantee better than h is known even when k/h → ∞. On the other hand, the only lower bounds known are the result of Bar-Noy and Schieber mentioned above and a general lower bound of k/(k − h + 1) for any metric space with at least k + 1 points (cf. [2] for both results). In particular, no lower bound better than 2 is known for any metric space and any h > 2, if we let k/h → ∞. The only general upper bound is due to Koutsoupias [6], who showed that WFA is 2h-competitive (see Note 1) for the (h, k)-server problem on any metric. It is worth stressing that this is an upper bound for WFA that is oblivious of h and uses all of its k servers, and that a ratio of 2h − 1 can be attained by running WFA with h servers when this value is known to the algorithm.

The DC Algorithm

This motivates us to consider the (h, k)-server problem on the line and, more generally, on trees. In particular, we consider the DC algorithm [3] originally defined for a line, and its generalization to trees [4]. We refer to both as DC, since the latter specializes to the former when the underlying tree is in fact a line. As understanding both the algorithm and its analysis for the line may be simpler and more insightful, we provide definitions of both variants. In both, we call an algorithm's server s adjacent to the request r if there are no servers of the algorithm on the unique path between the locations of r and s, excluding the point where s is located. Note that several servers at one location may satisfy this requirement. In such a case, one of them is chosen arbitrarily as the adjacent server for this location, and the others are considered non-adjacent.

DC-Line

If the current request r lies outside the convex hull of current servers, serve it with the nearest server. Otherwise, we move the two servers adjacent to r towards it with equal speed until some server reaches r.
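To make the DC-Line rule concrete, here is a minimal Python sketch (our own illustration, not code from the paper). It assumes that a configuration is represented as a sorted list of server coordinates and that requests are numbers on the line; the name `dc_line_serve` is hypothetical. It returns the distance DC pays for one request.

```python
import bisect


def dc_line_serve(pos, r):
    """Serve request r with DC-Line.

    `pos` is a sorted list of server coordinates and is updated in place.
    Returns the total distance travelled by DC's servers for this request.
    """
    if r in pos:                       # a server already covers the request
        return 0
    if r < pos[0]:                     # left of the convex hull: nearest server moves
        cost = pos[0] - r
        pos[0] = r
        return cost
    if r > pos[-1]:                    # right of the convex hull: nearest server moves
        cost = r - pos[-1]
        pos[-1] = r
        return cost
    # Inside the hull: the two servers adjacent to r move towards it at equal
    # speed until the closer one reaches r. If several servers share a location,
    # the one nearest to r is moved, which keeps the list sorted.
    right = bisect.bisect_left(pos, r)     # first server strictly right of r
    left = right - 1                       # last server strictly left of r
    d = min(r - pos[left], pos[right] - r)
    pos[left] += d
    pos[right] -= d
    return 2 * d
```

For example, with servers at 0 and 10, a request at 4 moves both servers by 4 (cost 8), leaving them at 4 and 6; a later request at 12 lies outside the convex hull and is served by the server at 6 alone (cost 6). Keeping a sorted list suffices because DC never lets two servers cross on the line.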

DC-Tree

Repeat the following until a server reaches the request r, constantly updating the set of adjacent servers: move all the servers adjacent to r towards r at equal speed. Note that the set of servers adjacent to r can change only when one of them reaches either a vertex of the tree or the request itself, which ends the move. We call the parts of the move between updates of the set of adjacent servers elementary moves.
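DC-Tree can be sketched in the same spirit under a simplifying assumption of ours: every edge has length 1 (integer lengths can be handled by subdividing edges) and all servers start on vertices. Then every elementary move advances each adjacent server by exactly one edge, so the continuous motion reduces to discrete rounds. The adjacency-dict representation of the tree and the function names are hypothetical.

```python
from collections import deque


def adjacent_servers(tree, servers, r):
    """Map each server adjacent to r to the next vertex on its path towards r.

    `tree` maps a vertex to its neighbours (every edge is assumed to have
    length 1); `servers` lists the vertices occupied by DC's servers. A
    breadth-first search from r stops at the first occupied vertex on every
    branch; if several servers share that vertex, the lowest index is taken
    as the adjacent one.
    """
    first_at = {}
    for idx, v in enumerate(servers):
        first_at.setdefault(v, idx)
    parent = {r: None}
    queue = deque([r])
    adjacent = {}
    while queue:
        v = queue.popleft()
        if v in first_at:                  # blocked: do not search behind a server
            adjacent[first_at[v]] = parent[v]
            continue
        for w in tree[v]:
            if w not in parent:
                parent[w] = v
                queue.append(w)
    return adjacent


def dc_tree_serve(tree, servers, r):
    """Serve request r with DC-Tree; `servers` is updated in place, cost returned."""
    if not servers:
        raise ValueError("DC needs at least one server")
    cost = 0
    while r not in servers:
        # One elementary move: with unit edges and servers on vertices, every
        # adjacent server advances by exactly one edge towards r.
        for idx, nxt in adjacent_servers(tree, servers, r).items():
            servers[idx] = nxt
            cost += 1
    return cost
```

On the star with centre 0 and leaves 1, 2, 3, with servers on leaves 1 and 2, a request at leaf 3 first pulls both servers to the centre in one elementary move (cost 2); after that only one of the two co-located servers counts as adjacent, and it moves to the leaf (cost 1).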

There are several natural reasons to consider DC for lines and trees. For paging (and weighted paging), all known k-competitive algorithms also attain the optimal ratio for the (h, k) version. This suggests that a k-competitive algorithm for the k-server problem might attain the “right” ratio in the (h, k)-setting. The only algorithms that satisfy this condition for a non-trivial metric are DC for trees and WFA for the simpler case of a line. Of the two, DC has the advantage that it attains the optimal k/(k − h + 1) competitive ratio for the (h, k)-paging problem, when it is modelled as a star graph where requests appear in leaves, since it is equivalent to the Flush-When-Full algorithm, as pointed out by Chrobak and Larmore [4]; see Appendix A for an explicit proof. As for WFA, all known upper bounds, including [6], bound the extended cost instead of the actual cost of the algorithm. Using this approach, we can easily show that WFA is (h + 1)-competitive for the line (cf. Appendix B).

Our Results

We show that the exact competitive ratio of DC on lines and trees in the (h, k)-setting is \(\frac {k(h+1)}{(k+1)}\).

Theorem 1

The competitive ratio of DC is at least \(\frac {k(h+1)}{(k+1)}\) , even for a line.

Note that for a fixed h, the competitive ratio worsens slightly as the number of online servers k increases. In particular, it equals h for k = h and it approaches h + 1 as k → ∞.
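The dependence of the ratio on k for fixed h is easy to tabulate with exact arithmetic. This small sketch (the name `dc_ratio` is ours) evaluates \(\frac{k(h+1)}{k+1}\) for a few values of k.

```python
from fractions import Fraction


def dc_ratio(h, k):
    """Tight competitive ratio k(h+1)/(k+1) of DC with k servers vs. h offline servers."""
    if not 1 <= h <= k:
        raise ValueError("need 1 <= h <= k")
    return Fraction(k * (h + 1), k + 1)


# For fixed h the ratio starts at h (k = h) and creeps up towards h + 1;
# e.g. for h = 2 the values for k = 2, 3, 8, 200 are 2, 9/4, 8/3, 200/67.
for h in (1, 2, 3):
    print(h, [str(dc_ratio(h, k)) for k in (h, h + 1, 4 * h, 100 * h)])
```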

Consider the seemingly trivial case of h = 1. If k = 1, clearly DC is 1-competitive. However, for k = 2 its competitive ratio is no better than 4/3, as we now sketch. Consider the instance where all servers are at x = 0 initially. A request arrives at x = 2, upon which both DC and offline move a server there and pay 2. Then a request arrives at x = 1. DC moves both servers there and pays 2, while offline pays 1. All servers are now at x = 1, and the instance repeats, so in every round DC pays 4 while offline pays 3.
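The h = 1, k = 2 instance can be replayed mechanically. The sketch below (our own illustration) includes a hypothetical DC-Line helper `dc_line_serve`, lets the single offline server simply follow the requests, and uses the request order from the text: in round t, first t + 2 and then t + 1.

```python
import bisect
from fractions import Fraction


def dc_line_serve(pos, r):
    """DC-Line on a sorted list of server coordinates (updated in place); returns the cost."""
    if r in pos:
        return 0
    if r < pos[0] or r > pos[-1]:            # outside the hull: nearest server moves
        end = 0 if r < pos[0] else -1
        cost = abs(pos[end] - r)
        pos[end] = r
        return cost
    right = bisect.bisect_left(pos, r)       # inside: the two adjacent servers move
    left = right - 1
    d = min(r - pos[left], pos[right] - r)
    pos[left] += d
    pos[right] -= d
    return 2 * d


def four_thirds_instance(rounds):
    """Replay the h = 1, k = 2 instance; returns (DC cost, offline cost)."""
    dc = [0, 0]          # both DC servers start at x = 0
    offline = 0          # the single offline server also starts at x = 0
    dc_cost = offline_cost = 0
    for t in range(rounds):
        for r in (t + 2, t + 1):
            dc_cost += dc_line_serve(dc, r)
            offline_cost += abs(r - offline)   # offline just follows the requests
            offline = r
    return dc_cost, offline_cost


dc_cost, offline_cost = four_thirds_instance(25)
ratio = Fraction(dc_cost, offline_cost)
```

Every round costs DC 4 and the offline server 3, so the ratio is exactly 4/3 for any number of rounds.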

Generalizing this example to (1,k) already becomes quite involved. Our lower bound in Theorem 1 for general h and k is based on an adversarial strategy obtained by a careful recursive construction.

We also give a matching upper bound.

Theorem 2

For any tree, the competitive ratio of DC is at most \(\frac {k(h+1)}{(k+1)}\).

This generalizes the previous results for h = k [3, 4]. Our proof also follows similar ideas, but our potential function is more involved (it has three terms instead of two), and the analysis is more subtle. To keep the main ideas clear, we first prove Theorem 2 for the simpler case of a line in Section 3. The proof for trees is analogous but more involved, and is described in Section 4.

2 Lower Bound for the Line Metric

We now prove Theorem 1. We will describe an adversarial strategy \(S_k\) for the setting where DC has k servers and the offline optimum (adversary) has h servers, whose analysis establishes that the competitive ratio of DC is at least k(h + 1)/(k + 1).

Roughly speaking (and ignoring some details), the strategy \(S_k\) works as follows. Let \(I = [0, b_k]\) be the working interval associated with \(S_k\). Let \(L = [0, \varepsilon b_k]\) and \(R = [(1-\varepsilon) b_k, b_k]\) denote the (tiny) left front and right front of I. Initially, all offline and online servers are located in L. The adversary moves all its h servers to R and starts requesting points in R, until DC eventually moves all its servers to R. The strategy inside R is defined recursively depending on the number of DC servers currently in R: if DC has i servers in R, the adversary executes the strategy \(S_i\) repeatedly inside R, until another DC server arrives there, at which point it switches to the strategy \(S_{i+1}\). When all DC servers reach R, the adversary moves all its h servers back to L and repeats the symmetric version of the above instance until all servers move from R to L. This defines a phase. To show the desired lower bound, we recursively bound the online and offline costs during a phase of \(S_k\) in terms of costs incurred by strategies \(S_1, S_2, \dots, S_{k-1}\).

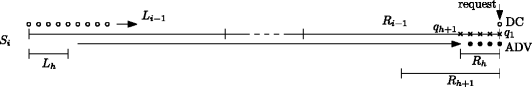

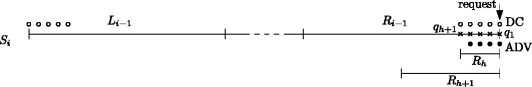

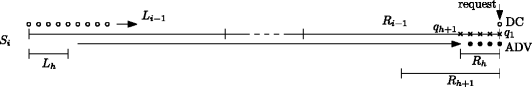

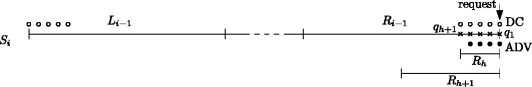

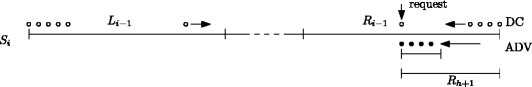

A crucial parameter of a strategy will be the pull. Recall that DC moves some server \(q_L\) closer to R if and only if \(q_L\) is the rightmost DC server outside R and a request is placed to the left of \(q_R\), the leftmost DC server in R, as shown in Fig. 1. In this situation \(q_R\) moves by δ to the left and \(q_L\) moves to the right by the same distance, and we say that the strategy in R exerts a pull of δ on \(q_L\). We will be interested in the amount of pull exerted by a strategy during one phase.

Formal Description

We now give a formal definition of the instance. We begin by introducing the quantities associated with each strategy \(S_i\) during a single phase (we bound them later):

- \(d_i\): lower bound for the cost of DC inside the working interval.
- \(A_i\): upper bound for the cost of the adversary.
- \(p_i\), \(P_i\): lower and upper bound, respectively, for the “pull” exerted on any external DC servers located to the left of the working interval of \(S_i\). Note that, as will be clear later, by symmetry the same pull is exerted to the right.

For i ≥ h, the ratio \(r_{i} = \frac {d_{i}}{A_{i}}\) is a lower bound for the competitive ratio of DC with i servers against an adversary with h servers.

We now define the right and left front precisely. Let ε > 0 be a sufficiently small constant. For i ≥ h, we define the size of the working intervals for strategy \(S_i\) as \(s_h := h\) and \(s_{i+1} := s_i/\varepsilon\). Note that \(s_k = h/\varepsilon^{k-h}\). The working interval for strategy \(S_k\) is \([0, s_k]\), and inside it we have two working intervals for strategies \(S_{k-1}\): \([0, s_{k-1}]\) and \([s_k - s_{k-1}, s_k]\). We continue this construction recursively, and the nesting of these intervals creates a tree-like structure as shown in Fig. 2. For i ≥ h, the working intervals for strategy \(S_i\) are called type-i intervals. Strategies \(S_i\), for i ≤ h, are special and are executed in type-h intervals.
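The recursive sizes of the working intervals, and the fact that the part of a type-i interval outside its two type-(i − 1) fronts has length \((1-2\varepsilon)s_i\), can be checked with exact rationals. The helper names below are hypothetical, and ε = 1/10 is an arbitrary illustrative choice (the construction itself needs ε to be small).

```python
from fractions import Fraction


def working_interval_sizes(h, k, eps):
    """Sizes s_h, ..., s_k of the type-i intervals: s_h = h and s_{i+1} = s_i / eps."""
    sizes = {h: Fraction(h)}
    for i in range(h, k):
        sizes[i + 1] = sizes[i] / eps
    return sizes


def sub_intervals(lo, i, sizes):
    """Leftmost and rightmost type-(i-1) intervals inside the type-i interval [lo, lo + s_i]."""
    s_i, s_prev = sizes[i], sizes[i - 1]
    return (lo, lo + s_prev), (lo + s_i - s_prev, lo + s_i)


eps = Fraction(1, 10)
sizes = working_interval_sizes(h=2, k=5, eps=eps)
left, right = sub_intervals(0, 5, sizes)
gap = right[0] - left[1]          # length of I between its two fronts
```

Here \(s_5 = 2000\), the two fronts are [0, 200] and [1800, 2000], and the gap of 1600 equals \((1 - 2\varepsilon) s_5\).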

Strategies \(S_i\) for i ≤ h

For i ≤ h, strategies \(S_i\) are performed in a type-h interval (recall that it has length h). Let Q be a set of h + 1 points \(q_1, \dots, q_{h+1}\) in such an interval, with distance 1 between consecutive points.

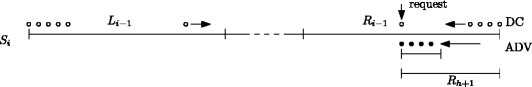

There are two variants of \(S_i\) that we call \(\overset {\rightarrow }{S_{i}}\) and \(\overset {\leftarrow }{S_{i}}\). We describe \(\overset {\rightarrow }{S_{i}}\) in detail; the construction of \(\overset {\leftarrow }{S_{i}}\) is exactly symmetric. For \(\overset {\rightarrow }{S_{i}}\), the points of Q are numbered from right to left, so that \(q_1\) is the rightmost and \(q_{h+1}\) the leftmost point. At the beginning of \(\overset {\rightarrow }{S_{i}}\), we ensure that DC servers occupy the rightmost i points of Q and adversary servers occupy the rightmost h points of Q, as shown in Fig. 3. The adversary requests the sequence \(q_{i+1}, q_i, \dots, q_1\). It is easily verified that DC incurs cost \(d_i = 2i\) inside the interval, and its servers return to the initial position \(q_i, \dots, q_1\), so we can iterate \(\overset {\rightarrow }{S_{i}}\) again. Moreover, a pull of \(p_i = 1 = P_i\) is exerted in both directions.

Fig. 3 The initial position for strategy \(\overset {\rightarrow }{S_{3}}\) (for h ≥ 3), in which the adversary requests \(q_4, q_3, q_2, q_1\). DC's servers move for a total of 6, exerting a pull of 1 in the process, only to return to the same position. The adversary's cost is 0 if h > 3 and 2 if h = 3: in such a case, the adversary serves both \(q_4\) and \(q_3\) with the server initially located in \(q_3\).

As for the cost incurred by the adversary, we have \(A_i = 0\) for i < h, as all requested points \(q_{i+1}, \dots, q_1\) are already occupied by offline servers, which therefore do not have to move at all. For i = h, the offline algorithm can serve the sequence with cost 2, by using the server in \(q_h\) to serve the request in \(q_{h+1}\) and then moving it back to \(q_h\); therefore \(A_h = 2\).
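The claims \(d_i = 2i\) and \(p_i = P_i = 1\) for \(\overset{\rightarrow}{S_i}\) can be checked by simulating DC-Line on the points of Q. In this sketch (our own, with hypothetical names), \(q_j\) is placed at coordinate h + 1 − j so that \(q_1\) is rightmost, and one external DC server is placed `far` units beyond each end of the interval so that the pull in both directions can be measured; `far = 50` is an arbitrary value large enough that the external servers never enter the interval.

```python
import bisect


def dc_line_serve(pos, r):
    """DC-Line on a sorted list of server coordinates (updated in place); returns the cost."""
    if r in pos:
        return 0
    if r < pos[0] or r > pos[-1]:            # outside the hull: nearest server moves
        end = 0 if r < pos[0] else -1
        cost = abs(pos[end] - r)
        pos[end] = r
        return cost
    right = bisect.bisect_left(pos, r)       # inside: the two adjacent servers move
    left = right - 1
    d = min(r - pos[left], pos[right] - r)
    pos[left] += d
    pos[right] -= d
    return 2 * d


def run_forward_strategy(h, i, far=50):
    """One iteration of the forward strategy S_i (1 <= i <= h), served by DC-Line.

    Returns (DC cost inside the interval, pull on the left external server,
    pull on the right external server, whether the inner servers returned).
    """
    q = {j: h + 1 - j for j in range(1, h + 2)}        # q_1 = h is rightmost, q_{h+1} = 0
    pos = [-far] + sorted(q[j] for j in range(1, i + 1)) + [h + far]
    start = list(pos)
    total = 0
    for j in range(i + 1, 0, -1):                      # requests q_{i+1}, q_i, ..., q_1
        total += dc_line_serve(pos, q[j])
    left_pull = pos[0] - start[0]
    right_pull = start[-1] - pos[-1]
    inside = total - left_pull - right_pull
    return inside, left_pull, right_pull, pos[1:-1] == start[1:-1]
```

For instance, `run_forward_strategy(3, 3)` reproduces the situation of the \(\overset{\rightarrow}{S_3}\) example: cost 6 inside the interval and a pull of 1 on each side.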

For strategy \(\overset {\leftarrow }{S_{i}}\), we just number the points of Q in the opposite direction (\(q_1\) will be leftmost and \(q_{h+1}\) rightmost). The request sequence, analysis, and assumptions about the initial position are the same.

Strategies \(S_i\) for i > h

We define the strategy \(S_i\) for i > h, assuming that \(S_1, \dots, S_{i-1}\) are already defined. Let I denote the working interval for \(S_i\). We assume that, initially, all offline and DC servers lie in the leftmost (or, analogously, rightmost) type-(i − 1) interval of I. Indeed, for \(S_k\) this is achieved by the initial configuration, and for i < k we will ensure this condition before applying strategy \(S_i\). In this case, our phase consists of a left-to-right step followed by a right-to-left step (analogously, if all servers start in the rightmost interval, we first apply the right-to-left step and then the left-to-right step to complete the phase).

For each h ≤ j < i, let \(L_j\) and \(R_j\) denote the leftmost and the rightmost type-j interval contained in I, respectively.

Left-to-right step

1. The adversary moves all its servers from \(L_{i-1}\) to \(R_h\), specifically to the points \(q_1, \dots, q_h\), to prepare for the strategy \(\overrightarrow {S_{1}}\). Next, point \(q_1\) is requested, which forces DC to move one server to \(q_1\), thus satisfying the initial conditions of \(\overrightarrow {S_{1}}\). The figure below illustrates the servers' positions after these moves are performed.

2. For j = 1 to h: keep applying \(\overset {\rightarrow }{S}_{j}\) to interval \(R_h\) until the (j + 1)-th server arrives at the point \(q_{j+1}\) of \(R_h\). (Recall that Fig. 3 illustrates strategy \(\overrightarrow {S_{j}}\) for j ≤ h.) Once it arrives there, complete the request sequence \(\overset {\rightarrow }{S}_{j}\), so that DC servers reside in points \(q_{j+1}, \dots, q_1\), ready for strategy \(\overset {\rightarrow }{S_{j+1}}\). The figure below illustrates the servers' positions after all those moves (i.e., the whole outer loop, for j = 1, …, h) are performed.

3. For j = h + 1 to i − 1: keep applying \(S_j\) to interval \(R_j\) until the (j + 1)-th server arrives in \(R_j\). To clarify, \(S_j\) stands for either \(\overrightarrow {S_{j}}\) or \(\overleftarrow {S_{j}}\), depending on the locations of servers within \(R_j\). In particular, the first \(S_j\) for any j is \(\overleftarrow {S_{j}}\). Note that there is exactly one DC server in the working interval of \(S_i\) moving toward \(R_j\) from the left: the other servers in that working interval are either still in \(L_{i-1}\), or not moving since they are not adjacent to the request, or already in \(R_j\). Since \(R_j\) is the rightmost interval of \(R_{j+1}\) and \(L_{i-1} \cap R_{j+1} = \emptyset\), the resulting configuration is ready for strategy \(\overleftarrow {S_{j+1}}\). The figure below illustrates the very beginning of this sequence of moves, for j = h + 1, right after the execution of the first step (of this three-step description) of \(\overleftarrow {S_{j+1}}\).

Right-to-left step

Same as the left-to-right step, with \(\overset {\rightarrow }{S_{j}}\) replaced by \(\overset {\leftarrow }{S_{j}}\), \(R_j\) intervals by \(L_j\), and \(L_j\) by \(R_j\).

Bounding Costs

We begin with a simple but useful observation that follows directly from the definition of DC. For any subset X of i ≤ k consecutive DC servers, we call the average position of the servers in X the center of mass of X. We call a request external with respect to X when it is outside the convex hull of X, and internal otherwise.

Lemma 1

For any sequence of internal requests with respect to X, the center of mass of X remains the same.

Proof

Follows trivially since for any internal request, DC moves precisely two servers towards it, by an equal amount in opposite directions. □
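Lemma 1 is easy to observe experimentally. The sketch below (ours) takes X to be all of DC's servers on the line, issues random requests inside their current convex hull, and checks with exact fractions that the average position never changes. It includes a hypothetical DC-Line helper `dc_line_serve` so that the block runs on its own; the seed and sizes are arbitrary.

```python
import bisect
import random
from fractions import Fraction


def dc_line_serve(pos, r):
    """DC-Line on a sorted list of server coordinates (updated in place); returns the cost."""
    if r in pos:
        return 0
    if r < pos[0] or r > pos[-1]:            # outside the hull: nearest server moves
        end = 0 if r < pos[0] else -1
        cost = abs(pos[end] - r)
        pos[end] = r
        return cost
    right = bisect.bisect_left(pos, r)       # inside: the two adjacent servers move
    left = right - 1
    d = min(r - pos[left], pos[right] - r)
    pos[left] += d
    pos[right] -= d
    return 2 * d


def center_of_mass_stays_put(k=6, steps=500, seed=0):
    """Serve random internal requests and report whether the mean position is invariant."""
    rng = random.Random(seed)
    pos = sorted([0, 100] + [rng.randint(0, 100) for _ in range(k - 2)])
    center = Fraction(sum(pos), k)
    for _ in range(steps):
        r = rng.randint(pos[0], pos[-1])     # internal request w.r.t. all servers
        dc_line_serve(pos, r)
        if Fraction(sum(pos), k) != center:
            return False
    return True
```

By contrast, an external request (outside the hull) moves a single server and does shift the center of mass, which is why the lemma is restricted to internal requests.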

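Let us derive bounds on \(d_i\), \(A_i\), \(p_i\), and \(P_i\) in terms of the corresponding quantities for j < i. First, we claim that the cost \(A_i\) incurred by the adversary for strategy \(S_i\) during a phase can be upper bounded as follows:

$$ A_i \le 2 \left( s_i h + \sum_{j=1}^{i-1} A_j \frac{s_i}{p_j} \right) = 2 s_i \left( h + \sum_{j=h}^{i-1} \frac{A_j}{p_j} \right). \tag{1} $$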

In the inequality above, we take the cost of the left-to-right step multiplied by 2, since the left-to-right and right-to-left steps are symmetric. The term \(s_i h\) is the cost incurred by the adversary at the beginning of the step, when moving all its servers from the left side of I to the right. The costs \(A_{j} \frac {s_{i}}{p_{j}}\) are incurred during the phases of \(S_j\) for j = 1, …, i − 1, because \(A_j\) is an upper bound on the cost of the adversary during a phase of strategy \(S_j\) and \(\frac {s_{i}}{p_{j}}\) is an upper bound on the number of iterations of \(S_j\) during \(S_i\). This follows because \(S_j\) (during the left-to-right step) executes as long as the (j + 1)-th server moves from the left of I to the right of I. This server travels a distance of at most \(s_i\) and receives a pull of at least \(p_j\) during each iteration of \(S_j\) in R. Finally, the equality in (1) follows, as \(A_j = 0\) for j < h.

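Similarly, we bound the cost of DC from below. Let us denote δ := 1 − 2ε. The length of \(I \setminus (L_{i-1} \cup R_{i-1})\) is \(\delta s_i\), and each of the i DC servers has to travel at least this distance in each step of the phase. Furthermore, as \(\frac {\delta s_{i}}{P_{j}}\) is a lower bound for the number of iterations of strategy \(S_j\) (the (j + 1)-th server travels at least \(\delta s_i\) and receives a pull of at most \(P_j\) per iteration), we obtain:

$$ d_i \ge 2 \left( i \delta s_i + \sum_{j=1}^{i-1} d_j \frac{\delta s_i}{P_j} \right). \tag{2} $$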

It remains to show the upper and lower bounds on the pull \(P_i\) and \(p_i\) exerted on external servers due to the (right-to-left step of) strategy \(S_i\). Suppose \(S_i\) is being executed in interval I. Let x denote the closest DC server strictly to the left of I. Let X denote the set containing x and all DC servers located in I. During the right-to-left step of \(S_i\), all requests are internal with respect to X. So, by Lemma 1, the center of mass of X remains unchanged. As i servers moved from right to left during the right-to-left step of \(S_i\), each by a distance between \(\delta s_i\) and \(s_i\), this implies that x must have been pulled towards I (i.e., to the right) by the same total amount, which is at least \(i \delta s_i\) and at most \(i s_i\). Hence, for i > h,

$$ i \delta s_i \le p_i \le P_i \le i s_i . \tag{3} $$

Due to a symmetric argument, during the left-to-right step, the same amount of pull is exerted to the right.

Now we are ready to prove Theorem 1.

Proof of Theorem 1

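The proof is by induction. In particular, we will show that the following holds for each i ∈ [h, k]:

$$ \frac{d_i}{P_i} \ge 2i\,\delta^{i-h} \qquad \text{and} \qquad \frac{A_i}{p_i} \le \frac{2(i+1)}{h+1}\,\delta^{-(i-h)} . \tag{4} $$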

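Note that this claim already implies the theorem for i = k, since the competitive ratio \(r_k\) of \(DC_k\) satisfies the following inequality (using \(P_k \ge p_k\)):

$$ r_k = \frac{d_k}{A_k} = \frac{d_k}{P_k} \cdot \frac{P_k}{p_k} \cdot \frac{p_k}{A_k} \ge \frac{d_k}{P_k} \cdot \frac{p_k}{A_k} \ge 2k\,\delta^{k-h} \cdot \frac{(h+1)\,\delta^{k-h}}{2(k+1)} = \delta^{2(k-h)} \, \frac{k(h+1)}{k+1} . $$

Therefore, as δ = 1 − 2ε, it is easy to see that this lower bound on \(r_k\) tends to \(\frac {k(h+1)}{k+1}\) when ε → 0:

$$ \lim_{\varepsilon \to 0} \delta^{2(k-h)} \, \frac{k(h+1)}{k+1} = \frac{k(h+1)}{k+1} . $$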

Induction Base (i = h). For the base case we have \(A_h = 2\), \(d_h = 2h\), and \(p_h = P_h = 1\), so \(\frac {d_{h}}{P_{h}} = 2h\) and \(\frac {A_{h}}{p_{h}} = 2\), i.e., (4) holds.

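Induction Step (i > h). Using (2), (3), and the induction hypothesis, we obtain

$$ \frac{d_i}{P_i} \ge \frac{2\left(i\delta s_i + \sum_{j=1}^{i-1} d_j \frac{\delta s_i}{P_j}\right)}{i s_i} = 2\delta + \frac{2\delta}{i} \sum_{j=1}^{i-1} \frac{d_j}{P_j} \ge 2\delta^{i-h} + \frac{2\delta^{i-h}}{i} \sum_{j=1}^{i-1} 2j = 2i\,\delta^{i-h} , $$

where for j < h we used \(d_j/P_j = 2j\) directly, and the last equality follows from the fact that \({\sum }_{j=1}^{i-1} 2j = i(i-1)\). Similarly, we prove the second part of (4). The first inequality follows from (1) and (3), the second from the induction hypothesis:

$$ \frac{A_i}{p_i} \le \frac{2 s_i \left(h + \sum_{j=h}^{i-1} \frac{A_j}{p_j}\right)}{i \delta s_i} \le \frac{2}{i\delta}\left(h + \sum_{j=h}^{i-1} \frac{2(j+1)}{h+1}\,\delta^{-(j-h)}\right) \le \frac{2\,\delta^{-(i-h)}}{i}\left(h + \frac{i(i+1) - h(h+1)}{h+1}\right) = \frac{2(i+1)}{h+1}\,\delta^{-(i-h)} . $$

The last inequality follows from \(2{\sum }_{j=h}^{i-1} (j+1) = i(i+1) - h(h+1) \). □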
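The induction can also be followed numerically. This sketch (ours; the name `lower_bound_ratio` is hypothetical) iterates the bounds on adversary cost, DC cost and pull described above as if they held with equality. Dividing the bound on \(A_i\) by \(p_i \ge i\delta s_i\) and the bound on \(d_i\) by \(P_i \le i s_i\) gives, in our reading, \(A_i/p_i = \frac{2}{i\delta}\big(h + \sum_{j=h}^{i-1} A_j/p_j\big)\) and \(d_i/P_i = 2\delta + \frac{2\delta}{i}\sum_{j=1}^{i-1} d_j/P_j\), started from \(d_j = 2j\), \(P_j = p_j = 1\) for j ≤ h and \(A_h = 2\). The function returns \(\frac{d_k}{P_k}\cdot\frac{p_k}{A_k}\), which lower-bounds \(r_k\).

```python
from fractions import Fraction


def lower_bound_ratio(h, k, eps):
    """Iterate the normalised recurrences with equality; return (d_k/P_k) * (p_k/A_k)."""
    delta = 1 - 2 * Fraction(eps)
    d_over_P = {j: Fraction(2 * j) for j in range(1, h + 1)}   # d_j = 2j, P_j = 1 for j <= h
    A_over_p = {h: Fraction(2)}                                   # A_h = 2, p_h = 1
    for i in range(h + 1, k + 1):
        d_over_P[i] = 2 * delta + 2 * delta / i * sum(d_over_P[j] for j in range(1, i))
        A_over_p[i] = 2 / (i * delta) * (h + sum(A_over_p[j] for j in range(h, i)))
    return d_over_P[k] / A_over_p[k]
```

With ε = 0 the iteration reproduces \(\frac{k(h+1)}{k+1}\) exactly; for small ε > 0 the value lies between \(\delta^{2(k-h)}\frac{k(h+1)}{k+1}\) and \(\frac{k(h+1)}{k+1}\), matching the limit argument.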

3 Upper Bound

In this section, we give an upper bound on the competitive ratio of DC that matches the lower bound from the previous section.

We begin by introducing some notation. We denote the optimal offline algorithm by OPT. For the current request r at time t, we let X and Y denote the configurations (i.e., the multisets of points at which their servers are located) of DC and OPT, respectively, before serving request r. Similarly, X′ and Y′ denote their corresponding configurations after serving r.

In order to prove our upper bound, we will define a potential function Φ(X, Y) such that

$$ DC(t) + \Phi(X',Y') - \Phi(X,Y) \le c \cdot OPT(t) , \tag{5} $$

where \(c = \frac {k(h+1)}{k+1}\) is the desired competitive ratio, and DC(t) and OPT(t) denote the costs incurred by DC and OPT at time t. Coming up with a potential that satisfies (5) is sufficient, as c-competitiveness follows from summing this inequality over time.

For a set of points A, let \(D_A\) denote the sum of all \(\binom {|A|}{2}\) pairwise distances between points in A. Let M ⊆ X be some fixed set of h servers of DC and let \(\mathcal {M}(M,Y)\) denote the minimum-weight perfect matching between M and Y, where the weights are determined by distances in the metric space (i.e., the tree). Abusing notation slightly, we will denote by \(\mathcal {M}(M,Y)\) both the matching and its cost. With that in mind, we let

$$ \Psi_M(X,Y) = \frac{k(h+1)}{k+1} \, \mathcal{M}(M,Y) + \frac{k}{k+1} \, D_M . $$

Then the potential function is defined as follows:

$$ \Phi(X,Y) = \min_{M \subseteq X,\ |M| = h} \Psi_M(X,Y) + \frac{1}{k+1} \, D_X . $$

Note that this generalizes the potential considered in [3, 4] for the case h = k. In that setting, all online servers are matched, hence \(D_M = D_X\) is independent of M, and the potential above becomes k times the minimum-cost matching between X and Y plus \(D_X\). In our setting, on the other hand, we need to select the right set M of DC servers to be matched to the offline servers, based on minimizing \(\Psi_M(X,Y)\).

Let us first give a useful property concerning minimizers of Ψ, which will be crucial later in our analysis. Note that Ψ M (X, Y) is not simply the best matching between X and Y, but also includes the term D M which makes the argument slightly subtle.

Lemma 2

Let X and Y be the configurations of DC and OPT and consider some fixed offline server at location y ∈ Y. There exists a minimizer M of Ψ that contains some DC server x which is adjacent to y. Moreover, there is a minimum cost matching \(\mathcal {M}\) between M and Y that matches x to y.

We remark that adjacency in the lemma statement and the proof is defined as for the DC algorithm (cf. Section 1), i.e., as if there was a request at y's position. Moreover, we note that the statement does not necessarily hold simultaneously for every offline server, but only for a single fixed offline server y.

Proof of Lemma 2

Let M ′ be some minimizer of Ψ M (X, Y) and \(\mathcal {M}^{\prime }\) be some associated minimum cost matching between M ′ and Y. Let x ′ denote the online server currently matched to y in \(\mathcal {M}^{\prime }\) and suppose that x ′ is not adjacent to y. Let x denote the server in X adjacent to y on the path from y to x ′.

We will show that we can always modify the matching (and M′) without increasing the value of Ψ, so that y is matched to x. We consider two cases depending on whether x is matched or unmatched.

1. If x ∈ M′: Let y′ denote the offline server which is matched to x in \(\mathcal {M}^{\prime }\). To create a new matching \(\mathcal {M}\), we swap the edges and match x to y and x′ to y′, see Fig. 4. The cost of the matching edge of y reduces by exactly d(x′, y) − d(x, y) = d(x′, x), as x lies on the path from y to x′. On the other hand, the cost of the matching edge of y′ increases by d(x′, y′) − d(x, y′) ≤ d(x, x′), due to the triangle inequality. Thus, the new matching has no larger cost. Moreover, the set of matched servers does not change, i.e., M = M′, and hence \(D_{M} = D_{M^{\prime }}\), which implies that \(\Psi _{M}(X,Y) \leq \Psi _{M^{\prime }}(X,Y)\).

2. If x ∉ M′: In this case, we set \(M = (M^{\prime} \setminus \{x^{\prime}\}) \cup \{x\}\) and form \(\mathcal {M}\), where y is matched to x and all other offline servers are matched to the same servers as in \(\mathcal {M}^{\prime }\). Now, the cost of the matching reduces by d(x′, y) − d(x, y) = d(x, x′). Moreover, \(D_{M} \leq D_{M^{\prime }} + (h-1) \cdot d(x,x^{\prime })\), as the distance of each server in M′∖{x′} to x can be greater than its distance to x′ by at most d(x, x′). This gives

$$\begin{array}{@{}rcl@{}} \Psi_{M}(X,Y) - \Psi_{M^{\prime}}(X,Y) &\leq& - \frac{(h+1)k}{k+1} \cdot d(x,x^{\prime}) + \frac{k(h-1)}{k+1} \cdot d(x,x^{\prime}) \\ &=& - \frac{2k}{k+1} \cdot d(x,x^{\prime}) <0 \quad, \end{array} $$

and hence \(\Psi_M(X,Y)\) is strictly smaller than \(\Psi _{M^{\prime }}(X,Y)\).

□

We are now ready to prove Theorem 2 for the line.

Proof

Recall that we are at time t and request r is arriving. We divide the analysis into two steps: (i) OPT serves r, and then (ii) DC serves r. As a consequence, whenever a server of DC serves r, we can assume that a server of OPT is already there.

In all the steps considered, M is the minimizer of \(\Psi_M(X,Y)\) at the beginning of the step. It might happen that, after X and Y change during the step, a better minimizer can be found. However, an upper bound on \(\Delta\Psi_M(X,Y)\) is sufficient to bound the change in the first term of the potential function.

OPT Moves

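If OPT moves one of its servers by distance d to serve r, the value of \(\Psi_M(X,Y)\) increases by at most \(\frac {k (h+1)}{k+1} d\), since the matching cost increases by at most d. As OPT(t) = d and X does not change, it follows that

$$ \Delta\Phi(X,Y) \le \frac{k(h+1)}{k+1} \cdot d = c \cdot OPT(t) , $$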

and hence (5) holds. We now consider the second step when DC moves.

DC Moves

We consider two cases depending on whether DC moves a single server or two servers.

1. Suppose DC moves its rightmost server (the leftmost server case is identical) by distance d. Let y denote the offline server at r. By Lemma 2, we can assume that y is matched to the rightmost server of DC. Thus, the cost of the minimum-cost matching between M and Y decreases by d. Moreover, \(D_M\) increases by exactly (h − 1)d, as the distance from the rightmost server to each of the other h − 1 servers of M increases by d. Thus, \(\Psi_M(X,Y)\) changes by

$$-\frac{k(h+1)}{k+1} \cdot d + \frac{k(h-1)}{k+1} \cdot d = -\frac{2k}{k+1} \cdot d \quad. $$

Similarly, \(D_X\) increases by exactly (k − 1)d. This gives us that

$$\Delta \Phi(X,Y) \leq -\frac{2k}{k+1} \cdot d + \frac{k-1}{k+1} \cdot d = -d \quad. $$

As DC(t) = d, this implies that (5) holds.

2. We now consider the case when DC moves two servers x and x′, each by distance d. Let y denote the offline server at the request r. By Lemma 2 applied to y, we can assume that M contains at least one of x or x′, and that y is matched to one of them (say x) in some minimum-cost matching \(\mathcal {M}\) of M to Y.

We note that \(D_X\) decreases by precisely 2d. In particular, the distance between x and x′ decreases by 2d, and for any other server of X∖{x, x′}, its total distance to the other servers does not change. Moreover, DC(t) = 2d. Hence, to prove (5), it suffices to show

$$ \Delta \Psi_{M}(X,Y) \leq -\frac{k}{k+1} \cdot 2d \quad. \tag{6} $$

To this end, we consider two sub-cases.

(a) Both x and x′ are matched: In this case, the cost of the matching \(\mathcal {M}\) does not increase, as the cost of the matching edge (x, y) decreases by d and the move of x′ can increase the cost of the matching by at most d. Moreover, \(D_M\) decreases by precisely 2d (due to x and x′ moving closer). Thus, \(\Delta \Psi _{M}(X,Y) \leq -\frac {k}{k+1} \cdot 2d\), and hence (6) holds.

(b) Only x is matched (to y) and x′ is unmatched: In this case, the cost of the matching \(\mathcal {M}\) decreases by d. Moreover, \(D_M\) can increase by at most (h − 1)d, as x can move away from each server in M∖{x} by distance at most d. So

$$\Delta \Psi_{M}(X,Y) \leq -\frac{(h+1)k}{k+1} \cdot d + \frac{k(h-1)}{k+1} \cdot d = - \frac{2k}{k+1} \cdot d \quad, $$

i.e., (6) holds. □

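Inequality (5) can be spot-checked by brute force on small line instances. The sketch below (our own) computes the potential with the coefficients used in the proof, \(\Psi_M(X,Y) = \frac{k(h+1)}{k+1}\mathcal{M}(M,Y) + \frac{k}{k+1}D_M\) and \(\Phi = \min_M \Psi_M + \frac{1}{k+1}D_X\), using the fact that on a line a minimum-cost perfect matching pairs both point sets in sorted order. Since the argument never uses optimality of the offline moves, the check lets the offline algorithm simply move its closest server to the request. All function names are hypothetical, and random small instances are of course no substitute for the proof.

```python
import bisect
import itertools
import random
from fractions import Fraction


def dc_line_serve(pos, r):
    """DC-Line on a sorted list of server coordinates (updated in place); returns the cost."""
    if r in pos:
        return 0
    if r < pos[0] or r > pos[-1]:            # outside the hull: nearest server moves
        end = 0 if r < pos[0] else -1
        cost = abs(pos[end] - r)
        pos[end] = r
        return cost
    right = bisect.bisect_left(pos, r)       # inside: the two adjacent servers move
    left = right - 1
    d = min(r - pos[left], pos[right] - r)
    pos[left] += d
    pos[right] -= d
    return 2 * d


def pairwise_sum(points):
    """D_A: sum of all pairwise distances between the points of A."""
    return sum(abs(a - b) for a, b in itertools.combinations(points, 2))


def line_matching(a, b):
    """Minimum-cost perfect matching on the line: pair both multisets in sorted order."""
    return sum(abs(u - v) for u, v in zip(sorted(a), sorted(b)))


def potential(x, y, k, h):
    """Phi(X, Y) = min over h-subsets M of X of Psi_M(X, Y), plus D_X / (k + 1)."""
    c = Fraction(k * (h + 1), k + 1)
    best = min(c * line_matching(m, y) + Fraction(k, k + 1) * pairwise_sum(m)
               for m in itertools.combinations(x, h))
    return best + Fraction(pairwise_sum(x), k + 1)


def amortized_step_ok(x, y, r, k, h):
    """Check DC(t) + Phi(X', Y') - Phi(X, Y) <= c * OFF(t) for a single request r."""
    c = Fraction(k * (h + 1), k + 1)
    before = potential(x, y, k, h)
    j = min(range(h), key=lambda t: abs(y[t] - r))   # offline moves its closest server
    off_cost = abs(y[j] - r)
    y = y[:j] + [r] + y[j + 1:]
    x = sorted(x)
    dc_cost = dc_line_serve(x, r)
    return dc_cost + potential(x, y, k, h) - before <= c * off_cost


def random_check(trials=400, seed=0):
    """Test the amortized inequality on random small configurations."""
    rng = random.Random(seed)
    for _ in range(trials):
        k = rng.randint(1, 4)
        h = rng.randint(1, k)
        x = [rng.randint(0, 12) for _ in range(k)]
        y = [rng.randint(0, 12) for _ in range(h)]
        if not amortized_step_ok(x, y, rng.randint(0, 12), k, h):
            return False
    return True
```

On the two steps of the h = 1, k = 2 example from the introduction (requests at 2 and then 1), the inequality holds with equality, which is consistent with the ratio 4/3 being tight.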

4 Extension to Trees

The proof for trees is similar to the one in the previous section. The main difference is that the set of servers adjacent to the request can now be arbitrary (i.e., it may contain more than two servers) and that it can change as the move is executed, see Fig. 5. To cope with this, we analyze elementary moves, as did Chrobak and Larmore [4]. Recall that an elementary move is a part of the move between successive updates of the set of servers adjacent to the request; consequently, this set remains fixed during such a move.

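Proof of Theorem 2

We use the same potential as before, i.e., we let

$$ \Psi_M(X,Y) = \frac{k(h+1)}{k+1} \, \mathcal{M}(M,Y) + \frac{k}{k+1} \, D_M $$

and define

$$ \Phi(X,Y) = \min_{M \subseteq X,\ |M| = h} \Psi_M(X,Y) + \frac{1}{k+1} \, D_X . $$

To prove the theorem, we show that for any time t the following holds:

$$ DC(t) + \Phi(X',Y') - \Phi(X,Y) \le c \cdot OPT(t) , \tag{7} $$

where \(c = \frac {k(h+1)}{k+1}\).

As in the analysis for the line, we split the analysis into two parts: (i) OPT serves r, and then (ii) DC serves r. As a consequence, whenever a server of DC serves r, we can assume that a server of OPT is already there.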

OPT Moves

If OPT moves a server by distance d, only the matching term of the potential function is affected, and it can increase by at most d⋅k(h + 1)/(k + 1). Therefore

$$ \Delta\Phi(X,Y) \le \frac{k(h+1)}{k+1} \cdot d = c \cdot OPT(t) , $$

and hence (7) holds.

DC Moves

Instead of focusing on the whole move done by DC to serve request r, we prove that (7) holds for each elementary move.

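Consider an elementary move where q servers are moving by distance d. Let A denote the set of servers adjacent to r, so that q = |A|. Imagine that the tree is rooted at r, and, for all a ∈ A, let \(Q_a\) denote the subset of X (i.e., DC servers) located in the subtree rooted at a's location, including that location itself, see Fig. 5. We set \(q_a := |Q_a|\) and \(h_a := |Q_a \cap M|\). Finally, let \(A_M := A \cap M\). By Lemma 2, we can assume that one of the servers in A is matched to OPT's server at r. This server moves towards r by d, while each of the other \(|A_M| - 1\) moving servers of M changes the cost of its matching edge by at most d, which implies

$$ \Delta \mathcal{M}(M,Y) \le -d + (|A_M| - 1)\, d = (|A_M| - 2)\, d . $$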

In order to calculate the change in D X and D M , it is convenient to consider the moves of active servers sequentially rather than simultaneously.

We start with \(D_X\). Clearly, each a ∈ A moves further away from \(q_a - 1\) servers in X by distance d and gets closer to the remaining \(k - q_a\) ones by the same distance. Thus, the change of \(D_X\) associated with a is \((q_a - 1 - (k - q_a))d = (2q_a - k - 1)d\). Therefore we have

$$ \Delta D_X = \sum_{a \in A} (2q_a - k - 1)\, d = \big(2k - q(k+1)\big)\, d , $$

as \({\sum }_{a \in A} q_{a} = k\).
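The bookkeeping \(\Delta D_X = \sum_{a\in A}(2q_a - k - 1)d = (2k - q(k+1))d\) for one elementary move can be checked on random trees. Under a simplifying assumption of ours (unit-length edges, servers on vertices, request vertex unoccupied), an elementary move advances each adjacent server by exactly one edge, i.e., d = 1. The function names and the random-tree generator are hypothetical.

```python
import random
from collections import deque
from itertools import combinations


def bfs(tree, src):
    """Distances from src and parent pointers towards src (unit-length edges)."""
    dist, parent = {src: 0}, {src: None}
    queue = deque([src])
    while queue:
        v = queue.popleft()
        for w in tree[v]:
            if w not in dist:
                dist[w] = dist[v] + 1
                parent[w] = v
                queue.append(w)
    return dist, parent


def pairwise_sum(tree, servers):
    """D_X: sum of pairwise tree distances between the servers."""
    dist = {v: bfs(tree, v)[0] for v in set(servers)}
    return sum(dist[a][b] for a, b in combinations(servers, 2))


def random_tree(n, rng):
    """Random tree on vertices 0..n-1: each new vertex attaches to an earlier one."""
    tree = {0: []}
    for v in range(1, n):
        u = rng.randrange(v)
        tree[v] = [u]
        tree[u].append(v)
    return tree


def elementary_move_identity_holds(tree, servers, r):
    """Move every server adjacent to r one edge towards r; compare Delta D_X with 2k - q(k+1)."""
    if r in servers:
        raise ValueError("the request vertex must be unoccupied")
    _, parent = bfs(tree, r)
    occupied, seen, movers = set(servers), set(), []
    for idx, v in enumerate(servers):
        if v in seen:                  # only one adjacent server per location
            continue
        seen.add(v)
        u = parent[v]
        while u is not None and u not in occupied:
            u = parent[u]
        if u is None:                  # no other server between v and r
            movers.append(idx)
    moved = list(servers)
    for idx in movers:
        moved[idx] = parent[moved[idx]]
    k, q = len(servers), len(movers)
    return pairwise_sum(tree, moved) - pairwise_sum(tree, servers) == 2 * k - q * (k + 1)
```

On a star with centre 0, servers on leaves 1, 2, 2, 3 and a request at leaf 4, three servers are adjacent (k = 4, q = 3) and \(D_X\) drops from 10 to 3, matching 2k − q(k + 1) = −7.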

Similarly, for \(D_M\), we first note that it can change only due to moves of servers in \(A_M\). Specifically, each \(a \in A_M\) moves further away from \(h_a - 1\) servers in M and gets closer to the remaining \(h - h_a\) of them. Thus, the change of \(D_M\) associated with a is \((2h_a - h - 1)d\). Therefore we have

$$ \Delta D_M = \sum_{a \in A_M} (2h_a - h - 1)\, d \le \big(2h - |A_M|(h+1)\big)\, d , $$

since \({\sum }_{a \in A_{M}} h_{a} \leq {\sum }_{a \in A} h_{a} = h\).

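Using the above inequalities, we see that the change of potential is at most

$$ \Delta\Phi(X,Y) \le \frac{k(h+1)}{k+1}\,(|A_M| - 2)\,d + \frac{k}{k+1}\,\big(2h - |A_M|(h+1)\big)\,d + \frac{1}{k+1}\,\big(2k - q(k+1)\big)\,d = -q\,d , $$

since

$$ \frac{k(h+1)(|A_M| - 2) + k\big(2h - |A_M|(h+1)\big) + 2k}{k+1} = \frac{-2k(h+1) + 2kh + 2k}{k+1} = 0 . $$

Thus, (7) holds, as DC(t) = q⋅d.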
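The cancellation in the last step is pure arithmetic and can be confirmed exhaustively for small parameters. The sketch (ours; `elementary_move_bound` is a hypothetical name) adds the three contributions per unit of d: the matching term \(\frac{k(h+1)}{k+1}(|A_M|-2)\), the \(D_M\) term \(\frac{k}{k+1}(2h - |A_M|(h+1))\), and the \(D_X\) term \(\frac{2k - q(k+1)}{k+1}\), and checks that the total is exactly −q.

```python
from fractions import Fraction


def elementary_move_bound(h, k, a_m, q):
    """Upper bound on Delta Phi / d for an elementary move of q servers, a_m of them in M."""
    c = Fraction(k * (h + 1), k + 1)
    matching = c * (a_m - 2)                                  # matching term
    d_m = Fraction(k, k + 1) * (2 * h - a_m * (h + 1))       # D_M term
    d_x = Fraction(2 * k - q * (k + 1), k + 1)               # D_X term
    return matching + d_m + d_x


# The |A_M|-dependent parts cancel, leaving exactly -q in every case.
assert all(elementary_move_bound(h, k, a_m, q) == -q
           for k in range(1, 12) for h in range(1, k + 1)
           for q in range(1, k + 1) for a_m in range(1, min(h, q) + 1))
```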

Notes

1 Actually, [6] gives a stronger bound: \(WFA_k \le 2h \cdot OPT_h - OPT_k + O(1)\), where the algorithms' subscripts specify how many servers they use.

References

[1] Bartal, Y., Koutsoupias, E.: On the competitive ratio of the work function algorithm for the k-server problem. Theor. Comput. Sci. 324(2–3), 337–345 (2004)

[2] Borodin, A., El-Yaniv, R.: Online computation and competitive analysis. Cambridge University Press (1998)

[3] Chrobak, M., Karloff, H.J., Payne, T.H., Vishwanathan, S.: New results on server problems. SIAM J. Discrete Math. 4(2), 172–181 (1991)

[4] Chrobak, M., Larmore, L.L.: An optimal on-line algorithm for k-servers on trees. SIAM J. Comput. 20(1), 144–148 (1991). doi:10.1137/0220008

[5] Chrobak, M., Larmore, L.L.: The server problem and on-line games. In: On-line Algorithms, volume 7 of DIMACS Series in Discrete Mathematics and Theoretical Computer Science, pp. 11–64. AMS/ACM (1992)

[6] Koutsoupias, E.: Weak adversaries for the k-server problem. In: Proc. of the 40th Symp. on Foundations of Computer Science (FOCS), pp. 444–449 (1999)

[7] Koutsoupias, E., Papadimitriou, C.H.: On the k-server conjecture. J. ACM 42(5), 971–983 (1995)

[8] Manasse, M.S., McGeoch, L.A., Sleator, D.D.: Competitive algorithms for server problems. J. Algorithms 11(2), 208–230 (1990)

[9] Sleator, D.D., Tarjan, R.E.: Amortized efficiency of list update and paging rules. Commun. ACM 28(2), 202–208 (1985). doi:10.1145/2786.2793

[10] Young, N.E.: The k-server dual and loose competitiveness for paging. Algorithmica 11(6), 525–541 (1994). doi:10.1007/BF01189992

[11] Young, N.E.: On-line file caching. Algorithmica 33(3), 371–383 (2002). Journal version of [1998] doi:10.1007/s00453-001-0124-5


Additional information

A preliminary version of this article appeared in the Proceedings of the 13th Workshop on Approximation and Online Algorithms (WAOA 2015). This work was supported by NWO grant 639.022.211, ERC consolidator grant 617951, NCN grant DEC-2013/09/B/ST6/01538, NSF grants CCF-1115575, CNS-1253218, CCF-1421508, and an IBM Faculty Award.

Appendices

Appendix A: Analysis of DC for Paging

The paging problem is the special case of k-server on a uniform metric. It is also equivalent (up to a constant additive term) to the k-server problem on a star graph where all edges have weight \(\frac {1}{2}\) and requests appear at the leaves. This connection was pointed out by Chrobak and Larmore [4], who also noticed that DC-Tree on this star can be interpreted as Flush-When-Full (FWF). It thus follows that DC is \(\frac {k}{k-h+1}\)-competitive in the (h, k)-setting. As we are not aware of an explicit proof of this fact, we give one that uses a potential function.
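To make the FWF interpretation concrete, the following Python sketch simulates DC on the star (edge weight 1/2) next to Flush-When-Full on the same request sequence. It assumes that all DC servers start at the root and that the FWF cache starts empty; the function names `dc_star` and `fwf` are hypothetical. Under these assumptions the set of leaves occupied by DC always equals FWF's cache, and DC's cost equals the number of FWF faults minus r/2, where r is the number of pages loaded since the last flush, so the two costs differ by at most k/2.

```python
from fractions import Fraction

def dc_star(k, requests):
    """DC on a star whose edges have weight 1/2 (hypothetical helper).
    Assumes all k servers start at the root; returns (cost, occupied leaves)."""
    leaves, at_root, cost = set(), k, Fraction(0)
    for p in requests:
        if p in leaves:                  # request already covered: no movement
            continue
        if at_root == 0:                 # all k leaf servers are adjacent to p and
            cost += Fraction(k, 2)       # reach the root together after distance 1/2
            leaves, at_root = set(), k
        cost += Fraction(1, 2)           # a single root server continues to leaf p
        leaves.add(p)
        at_root -= 1
    return cost, leaves

def fwf(k, requests):
    """Flush-When-Full paging: on a fault with a full cache, evict all pages."""
    cache, faults = set(), 0
    for p in requests:
        if p in cache:
            continue
        faults += 1
        if len(cache) == k:
            cache = set()
        cache.add(p)
    return faults, cache

requests = [1, 2, 3, 1, 1, 4]
cost, leaves = dc_star(2, requests)
faults, cache = fwf(2, requests)
print(cost, faults, leaves == cache)     # 9/2 5 True
```

The sketch charges DC k/2 when all leaf servers retreat to the root and 1/2 for each root-to-leaf move; this is exactly the cost pattern used in the case analysis below.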

Let X and Y denote the configurations of DC and OPT respectively. Note that any server of DC can only be at the root or at a leaf, and servers of OPT can only be at leaves. Let ℓ denote the number of DC servers at the root.

We define the potential function as follows:

$$\Phi = \frac{k}{k-h+1}\,|Y\setminus X| - \frac{k+h-1}{2(k-h+1)}\,\ell \quad. $$
Analysis

We consider the moves of OPT and DC separately. We assume that, whenever a point is requested, OPT first moves a server there and then DC moves its servers.

Offline Moves

When OPT moves any single server from one leaf to another, it pays 1. Clearly, ℓ does not change, and |Y∖X| can increase by at most one. Thus, \(\Delta \Phi \leq \frac {k}{k-h+1} = \frac {k}{k-h+1} \cdot OPT \).

DC Moves

Let us now consider moves of DC. We distinguish between two cases depending on the value of ℓ:

-

ℓ > 0: In this case, DC moves one server from the root to the requested leaf, paying 1/2. Clearly, both ℓ and |Y∖X| decrease by 1. Thus,

$$\Delta \Phi = \frac{k+h-1}{2(k-h+1)} - \frac{k}{k-h+1} = \frac{-k+h-1}{2(k-h+1)} = - \frac{1}{2} \quad, $$
and hence \(DC + \Delta\Phi = \frac{1}{2} - \frac{1}{2} = 0\).

-

ℓ = 0: In this case, DC moves all the servers from the leaves toward the root (and then proceeds as in the case above). This move costs DC k/2. Let a denote the number of DC servers that coincide with servers of OPT before the move of DC. Then ℓ increases by k, while |Y∖X| increases by a. We get that

$$\Delta \Phi = \frac{-k-h+1}{2(k-h+1)} k + \frac{k}{k-h+1} a \quad. $$
Observe that a ≤ h − 1, as OPT already has a server covering the current request (and DC does not). Thus we can upper bound ΔΦ as follows:

$$\Delta \Phi \leq \frac{-k-h+1+2(h-1)}{2(k-h+1)} k = \frac{-k+h-1}{2(k-h+1)} k = - \frac{k -h + 1}{2(k-h+1)} k = - \frac{k}{2} \quad. $$
Overall, we get that \(DC + \Delta \Phi \leq \frac {k}{2} - \frac {k}{2} = 0\).
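The case analysis can be replayed mechanically. The following Python sketch, offered as an illustration rather than as part of the proof, simulates DC on the star with exact rational arithmetic, evaluates \(\Phi = \frac{k}{k-h+1}|Y\setminus X| - \frac{k+h-1}{2(k-h+1)}\ell\) (the form consistent with the changes of Φ computed above), and asserts \(DC + \Delta\Phi \le \frac{k}{k-h+1}\cdot OPT\) for every request. Because the inequality is established step by step for any adversary, a random lazy adversary with h servers stands in for OPT here; the starting configurations and the helper names `potential`, `dc_step` and `check_potential` are assumptions made for the example.

```python
import random
from fractions import Fraction

def potential(k, h, dc_leaves, at_root, opt_leaves):
    # Phi = k/(k-h+1) * |Y \ X| - (k+h-1)/(2(k-h+1)) * ell
    uncovered = len(opt_leaves - dc_leaves)
    return (Fraction(k, k - h + 1) * uncovered
            - Fraction(k + h - 1, 2 * (k - h + 1)) * at_root)

def dc_step(k, dc_leaves, at_root, p):
    """DC on the star with edge weight 1/2; returns (cost, new leaves, new ell)."""
    if p in dc_leaves:
        return Fraction(0), dc_leaves, at_root
    cost = Fraction(0)
    if at_root == 0:                      # case ell = 0: all servers move to the root
        cost += Fraction(k, 2)
        dc_leaves, at_root = set(), k
    cost += Fraction(1, 2)                # case ell > 0: one root server serves p
    return cost, dc_leaves | {p}, at_root - 1

def check_potential(k, h, n_pages, n_requests, seed=0):
    """Assert DC + dPhi <= k/(k-h+1) * OPT for every request of a random sequence."""
    rng = random.Random(seed)
    ratio = Fraction(k, k - h + 1)
    dc_leaves, at_root = set(), k         # assumption: DC starts with all servers at the root
    opt = set(range(h))                   # assumption: adversary starts on leaves 0..h-1
    dc_total = opt_total = Fraction(0)
    for _ in range(n_requests):
        p = rng.randrange(n_pages)
        before = potential(k, h, dc_leaves, at_root, opt)
        opt_cost = Fraction(0)
        if p not in opt:                  # adversary moves first, evicting an arbitrary leaf
            opt = (opt - {rng.choice(sorted(opt))}) | {p}
            opt_cost = Fraction(1)
        dc_cost, dc_leaves, at_root = dc_step(k, dc_leaves, at_root, p)
        after = potential(k, h, dc_leaves, at_root, opt)
        assert dc_cost + after - before <= ratio * opt_cost
        dc_total += dc_cost
        opt_total += opt_cost
    return dc_total, opt_total
```

Exact fractions are used so that the equality \(DC + \Delta\Phi = 0\) in the case ℓ > 0 is checked without rounding error.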

Appendix B: Proof of (h + 1)-Competitiveness of WFA

We consider the WFA on the line in the (h, k)-setting. Specifically, we show that WFA with k servers is (h + 1)-competitive against an adversary with h servers, as an immediate consequence of results shown in [1, 6].

Most known upper bounds for the WFA do not bound the algorithm's actual cost directly. Instead, they bound its extended cost, defined as the maximum increase of the work function value over all configurations. To define it formally, we use the following notation: \(w_t(X)\) is the value of the work function of configuration X at time t, and \(\mathrm{WFA}_i\) and \(\mathrm{OPT}_i\) denote the overall cost incurred by WFA and OPT with i servers, respectively. Then, if M denotes the metric space, the extended cost at time t is defined as follows:

$$\mathrm{ExtCost}(t) = \max_{X \in M^i} \bigl\{ w_t(X) - w_{t-1}(X) \bigr\} \quad, $$

and the total extended cost of a sequence of requests is

$$\mathrm{ExtCost} = \sum_{t} \mathrm{ExtCost}(t) \quad. $$

As with WFA and OPT, we let \(\mathrm{ExtCost}_i\) denote the total extended cost over configurations of i servers. The extended cost satisfies the following inequality, as shown by Chrobak and Larmore [5]:

$$\mathrm{WFA}_i + \mathrm{OPT}_i \le \mathrm{ExtCost}_i + O(1) \quad. \tag{8}$$

In [1] it was shown that WFA with h servers is h-competitive on the line by proving the following inequality:

$$\mathrm{ExtCost}_h \le (h+1)\,\mathrm{OPT}_h + O(1) \quad. \tag{9}$$

Moreover, it is known that the extended cost is a non-increasing function of the number of servers [6], which implies

$$\mathrm{ExtCost}_k \le \mathrm{ExtCost}_h + O(1) \tag{10}$$

for all request sequences.
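To make the notion of extended cost concrete, here is a small Python sketch that computes work functions of i servers on a discretized line by brute force and records \(\mathrm{ExtCost}(t) = \max_X \{w_t(X) - w_{t-1}(X)\}\) for each request. It assumes the standard work-function recurrence \(w_t(X) = \min_{x \in X}\{w_{t-1}(X - x + r_t) + d(r_t, x)\}\), an initial work function given by the min-cost matching to a fixed initial configuration, and requests that lie on the chosen grid points; the function name `extended_costs` is hypothetical.

```python
from itertools import combinations_with_replacement

def extended_costs(points, initial, requests):
    """Per-step extended costs and final work function for len(initial) servers on a line."""
    i = len(initial)
    configs = list(combinations_with_replacement(sorted(points), i))  # sorted tuples
    start = tuple(sorted(initial))
    # w_0(X): min-cost matching to the initial configuration; on a line, pair sorted positions.
    w = {X: sum(abs(a - b) for a, b in zip(start, X)) for X in configs}
    ext = []
    for r in requests:
        new_w = {}
        for X in configs:
            best = None
            for j, x in enumerate(X):
                Y = tuple(sorted(X[:j] + (r,) + X[j + 1:]))   # replace x by the request r
                cand = w[Y] + abs(x - r)
                if best is None or cand < best:
                    best = cand
            new_w[X] = best
        ext.append(max(new_w[X] - w[X] for X in configs))   # ExtCost(t)
        w = new_w
    return ext, w

ext, w = extended_costs(range(5), (0,), [4, 0])
print(ext, min(w.values()))   # [8, 8] 8
```

The minimum of the final work function is the optimal offline cost for the sequence, so small instances like this one can be used to experiment with quantities such as \(\mathrm{ExtCost}_i\) and \(\mathrm{OPT}_i\) for different numbers of servers.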

Putting (8), (9) and (10) together, we get

which implies that WFA k is (h + 1)-competitive. In fact, a slightly stronger inequality holds:

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Bansal, N., Eliáš, M., Jeż, Ł. et al. Tight Bounds for Double Coverage Against Weak Adversaries. Theory Comput Syst 62, 349–365 (2018). https://doi.org/10.1007/s00224-016-9703-3
