Abstract

Given a large sample from a location-scale population we estimate the unknown parameters by means of confidence regions constructed on the basis of two order statistics. The problem of the best choice of those statistics to obtain good estimates, as \(n\rightarrow \infty ,\) is considered.

1 Introduction

The problem of optimally choosing order statistics in large samples to estimate location and scale is not new. For example, Subsection 10.4 of David and Nagaraja (2003) is devoted to this problem in the case of point estimation (see also the references cited therein). However, as far as we know, the same problem for confidence region estimation has not attracted attention so far. This paper is an attempt to fill the gap.

Let \(x=(x_1, x_2, \ldots , x_n)\) be a sample from a distribution \(P_{\theta },\ \theta =(\theta _1, \theta _2),\) that is \(\{x_i\}\) are independent real-valued random variables having the distribution \(P_{\theta }.\) We deal with the case where \(\theta _1\in R\) is a location parameter and \(\theta _2>0\) is a scale parameter. As the estimators of \(\theta =(\theta _1, \theta _2),\) let us consider two-dimensional confidence regions.

Let \(\alpha \in (0,1)\) be a given confidence level. A strong confidence region of level \(\alpha \) is a mapping \(B: R^n\mapsto {\mathcal {B}}^2\) such that

where \({\mathcal {B}}^2\) is the \(\sigma \)-algebra of Borel subsets of \(R^2.\) The quality of a confidence region can be characterized by the risk function defined as

$$\begin{aligned} R(\theta ,B)=E_{\theta }\lambda _2(B(x)), \end{aligned}$$

where \(\lambda _2\) is the Lebesgue measure on \({\mathcal B}^2.\) Among strong confidence regions we distinguish those having the minimal risk and call them optimal.

The method for constructing an optimal confidence region is well known (see, for example, Alama-Bućko et al. 2006 or Czarnowska and Nagaev 2001) and is based on using a pivot. Let \(t_1(x)\) and \(t_2(x)\) be a pair of statistics satisfying the following conditions: for any \(a\in R,\ b>0,\)

$$\begin{aligned} t_1(bx+a\mathbf 1 _n)=bt_1(x)+a,\quad t_2(bx+a\mathbf 1 _n)=bt_2(x), \end{aligned}\tag{1}$$

where \(\mathbf 1 _n=(1, 1,\ldots ,1)\in R^n.\) Let from now on \(y=(y_1, y_2, \ldots , y_n)\) be a sample from the standard distribution \(P_{(0,1)}.\) Taking a set \(A\in {\mathcal {B}}^2\) such that

one can obtain due to (1)

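That is,

$$\begin{aligned} \Bigl (\frac{\theta _1-t_1(x)}{t_2(x)},\ \frac{\theta _2}{t_2(x)}-1\Bigr ) \end{aligned}\tag{4}$$

is a pivot.

Thus, the set

$$\begin{aligned} B_A(x)=\bigl \{(t_1(x)+t_2(x)z_1,\ t_2(x)(1+z_2)):\ (z_1,z_2)\in A\bigr \} \end{aligned}\tag{5}$$

is a strong confidence region for \((\theta _1, \theta _2).\) In this case,

$$\begin{aligned} R(\theta ,B_A)=\lambda _2(A)E_{\theta }t_2^2(x)=\theta _2^2\lambda _2(A)E_{(0,1)}t_2^2(y), \end{aligned}\tag{6}$$

that is, the risk function is proportional to the area of the set \(A,\) and the problem is to choose the set \(A\) with the smallest area.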
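As a small numerical illustration of this proportionality, the sketch below multiplies tabulated values of \(\lambda _2(A)\) and \(\mathrm{E}_{(0,1)}t_2^2(y)\) and compares the product with the tabulated \(R(\theta ,B_A)/\theta _2^2.\) The rows are copied from the second table of Example 1 in Sect. 4; the function name `scaled_risk` is hypothetical and introduced only for this illustration.

```python
def scaled_risk(area_A, second_moment_t2):
    """Return lambda_2(A) * E_{(0,1)} t_2^2(y), i.e. R(theta, B_A) / theta_2^2."""
    return area_A * second_moment_t2


# (n, lambda_2(A), E t_2^2(y), tabulated R / theta_2^2), copied from Example 1
# for the choice (p*, q*) = (0, 1).
rows = [
    (30, 0.015230, 0.877016, 0.013357),
    (100, 0.001192, 0.960978, 0.001145),
    (500, 0.000050, 0.992039, 0.000049),
]

for n, area, moment, tabulated in rows:
    # Small discrepancies are expected because the table entries are rounded.
    print(n, scaled_risk(area, moment), tabulated)
```

The products agree with the tabulated risks up to the rounding of the table entries.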

Assume that the density function \(g\) of the random vector \((-t_1(y)/t_2(y),\) \(1/t_2(y)-1)\) exists, is continuous, and is such that

The confidence region is optimal among all the confidence regions of the form (5), if

where \(z_{\alpha }\) is defined by the equation

This is a corollary of Proposition 2.1 of Einmahl and Mason (1992).

Of course, the optimal confidence region depends on the choice of \(t_1\) and \(t_2.\)

For the natural interpretation of confidence region (5) it is reasonable to take as \(t_1(x)\) and \(t_2(x)\) the estimators of the location and scale parameters, respectively. Then \((t_1(x), t_2(x))\) is the center of the region, while the set \(A\) defines the shape of the region and \(t_2(x)\) is responsible for its rescaling.

In this paper we consider the case, where \(t_1\) and \(t_2\) are linear functions of two order statistics. Some other cases were considered in Alama-Bućko et al. (2006) and Czarnowska and Nagaev (2001).

Let \(x_{k:n}\) and \(x_{m:n}\) be the \(k\)-th and the \(m\)-th order statistic of the sample \(x,\) respectively, \(k<m.\) The main goal of the paper is to make the best possible choice of \(k=k_n\) and \(m=m_n\) to minimize risk function (6), as \(n\rightarrow \infty ,\) under the assumption that \(k/n\rightarrow p,\ m/n\rightarrow q,\ p<q.\)

Asymptotics of the optimal confidence region in case \(0<p<q<1\) is obtained in Sect. 2. Our main results are established in Sect. 3, while Sect. 4 contains examples. In “Appendix” we prove three useful auxiliary lemmas.

2 Asymptotics of the optimal confidence region

Let \(F=F_{(0,1)}\) be the continuous distribution function corresponding to \(P_{(0,1)}\) and

$$\begin{aligned} F^{-1}(s)=\inf \{u:\ F(u)\ge s\},\quad 0<s<1, \end{aligned}$$

be the so-called quantile function. We assume that the distribution \(F\) is absolutely continuous and denote by \(f\) its density function. Let \(\varphi _V\) be the density corresponding to the normal distribution with zero mean vector and covariance matrix \(V.\)

We start with the classical result on limit distribution for central order statistics (see, for example, Theorem 10.3 of David and Nagaraja 2003 or Theorem 4.1.3 of Reiss 1989).

Proposition 1

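Let \(0<p<q<1\) be fixed and \(k/n-p=o(n^{-1/2}),\ m/n-q=o(n^{-1/2}),\) as \(n\rightarrow \infty .\) Assume also that \(f(F^{-1}(p))>0,\ f(F^{-1}(q))>0.\) Then the limit distribution of the vector \(n^{1/2}(y_{k:n}-F^{-1}(p), y_{m:n}-F^{-1}(q)),\) as \(n\rightarrow \infty ,\) is normal with zero mean vector and covariance matrix

$$\begin{aligned} V=\left( \begin{array}{cc} \dfrac{p(1-p)}{f^2(F^{-1}(p))} &amp; \dfrac{p(1-q)}{f(F^{-1}(p))f(F^{-1}(q))}\\ \dfrac{p(1-q)}{f(F^{-1}(p))f(F^{-1}(q))} &amp; \dfrac{q(1-q)}{f^2(F^{-1}(q))} \end{array}\right) . \end{aligned}$$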
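The covariance matrix in Proposition 1 is the classical one for two central order statistics: its entries are \(p(1-p)/f^2(F^{-1}(p)),\) \(p(1-q)/(f(F^{-1}(p))f(F^{-1}(q)))\) and \(q(1-q)/f^2(F^{-1}(q)).\) A minimal sketch of its computation follows; the function name `central_cov` and its argument names are hypothetical, and the density values at the two quantiles are supplied by the caller.

```python
def central_cov(p, q, f_u, f_v):
    """Asymptotic covariance of n^{1/2} (y_{k:n} - F^{-1}(p), y_{m:n} - F^{-1}(q)).

    f_u and f_v are the density values f(F^{-1}(p)) and f(F^{-1}(q)).
    """
    if not 0.0 < p < q < 1.0:
        raise ValueError("need 0 < p < q < 1")
    if f_u <= 0.0 or f_v <= 0.0:
        raise ValueError("density must be positive at both quantiles")
    v11 = p * (1.0 - p) / f_u ** 2
    v12 = p * (1.0 - q) / (f_u * f_v)
    v22 = q * (1.0 - q) / f_v ** 2
    return [[v11, v12], [v12, v22]]


# Standard uniform law on (-1/2, 1/2): f = 1 everywhere on the support.
V = central_cov(0.25, 0.75, 1.0, 1.0)
print(V)
```

For the uniform law with \((p,q)=(0.25,0.75)\) the diagonal entries are \(0.1875\) and the off-diagonal entries are \(0.0625.\)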

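Assume that \(F^{-1}(q)\ne F^{-1}(p)\) and take

$$\begin{aligned} t_1(x)=\frac{F^{-1}(q)x_{k:n}-F^{-1}(p)x_{m:n}}{F^{-1}(q)-F^{-1}(p)},\quad t_2(x)=\frac{x_{m:n}-x_{k:n}}{F^{-1}(q)-F^{-1}(p)}. \end{aligned}\tag{7}$$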

Note that \(t_1(x)\) and \(t_2(x)\) from (7) satisfy (1) and are asymptotically unbiased estimators of the location and scale, respectively.
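The following sketch computes estimators of this type and checks the equivariance property (1) on a toy sample. It assumes the form \(t_2(x)=(x_{m:n}-x_{k:n})/(F^{-1}(q)-F^{-1}(p)),\) \(t_1(x)=x_{k:n}-F^{-1}(p)t_2(x),\) which is what the substitution \(b_n=F^{-1}(p),\ d_n=F^{-1}(q)\) in Lemma 1 (i) suggests; the function name, the sample and the quantile values are hypothetical.

```python
def location_scale_estimates(sample, k, m, quantile_p, quantile_q):
    """Return (t1, t2) built from the k-th and m-th order statistics (1-based).

    quantile_p and quantile_q stand for F^{-1}(p) and F^{-1}(q) of the
    standard law; they must differ.
    """
    if quantile_p == quantile_q:
        raise ValueError("the two standard quantiles must differ")
    ordered = sorted(sample)
    x_k, x_m = ordered[k - 1], ordered[m - 1]
    t2 = (x_m - x_k) / (quantile_q - quantile_p)
    t1 = x_k - quantile_p * t2
    return t1, t2


# Toy data and illustrative quantile values (assumptions, not from the paper).
y = [-1.2, 0.3, -0.4, 2.0, 0.9, -0.1, 1.1]
a, b = 5.0, 3.0
x = [a + b * value for value in y]

t1_y, t2_y = location_scale_estimates(y, 2, 6, -0.67, 0.67)
t1_x, t2_x = location_scale_estimates(x, 2, 6, -0.67, 0.67)
print(t1_x, b * t1_y + a)  # equal up to rounding: location equivariance
print(t2_x, b * t2_y)      # equal up to rounding: scale equivariance
```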

Making use of statement (i) of Lemma 1 from “Appendix” with \(a_n=c_n=n^{1/2},\ b_n=F^{-1}(p),\ d_n=F^{-1}(q),\ \xi _n=y_{k:n},\ \eta _n=y_{m:n},\ f(u_1,u_2)=\varphi _V(u_1,u_2),\) we immediately obtain the following result.

Corollary 1

Under the conditions of Proposition 1, the limit distribution of the vector \(n^{1/2}(-t_1(y)/t_2(y), 1/t_2(y)-1),\) where \(t_1\) and \(t_2\) are defined by (7), as \(n\rightarrow \infty ,\) is normal with zero mean vector and the covariance matrix \(W=(H^{-1})^TVH^{-1},\) where

Applying the method of construction of optimal confidence regions described in Sect. 1 (see formulae (2)–(5)), one can obtain the following optimal confidence region based on the vector \(n^{1/2}(-t_1(y)/t_2(y),\) \(1/t_2(y)-1)\):

where the set \(A_n\) is defined by

and \(g_n\) is the density corresponding to \(n^{1/2}(-t_1(y)/t_2(y), 1/t_2(y)-1).\) The corresponding risk function has the form

Let us investigate the behaviour of \(R(\theta ,B_{A_n})\) as \(n\rightarrow \infty .\) From Proposition 1 it follows that

Moreover, based on Proposition 1, as shown in Theorem 2 of Alama-Bućko and Zaigraev (2006), one can obtain the asymptotic expansion of the set \(A_n\) as \(n\rightarrow \infty .\) Namely, the set \(A_n,\) as \(n\rightarrow \infty ,\) approximates the ellipse \(A_0\) of the form

where \(z^{\prime }_{\alpha }\) is defined by the equation

In other words,

or

Therefore,

and

Summing up, \(R(\theta ,B_{A_n})\) is of order \(1/n\) as \(n\rightarrow \infty ,\) if \(0<p<q<1,\ F^{-1}(q)\ne F^{-1}(p),\ f(F^{-1}(q))>0,\ f(F^{-1}(p))>0.\)
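To get a feel for the constant behind the \(1/n\) rate, the sketch below evaluates the area of a limiting ellipse. It rests on two assumptions that are ours rather than formulas quoted from the text: the ellipse is taken to be the level-\(\alpha \) highest-density set \(\{z:\ zW^{-1}z^T\le c\}\) of the bivariate normal law, so that \(c=-2\ln (1-\alpha ),\) and \(\det W=\det V/(F^{-1}(q)-F^{-1}(p))^2,\) as the Jacobian \(|d-b|\) in Lemma 1 (i) suggests. The function name and the value \(\alpha =0.95\) are hypothetical.

```python
import math


def limiting_scaled_risk(alpha, p, q, u, v, f_u, f_v):
    """Area of the assumed limiting ellipse, i.e. lim n * R / theta_2^2.

    u = F^{-1}(p), v = F^{-1}(q), f_u = f(u), f_v = f(v).
    """
    # det V for the covariance matrix of two central order statistics
    det_v = p * (1.0 - q) * (q - p) / (f_u * f_v) ** 2
    # assumed relation between W and V (see the lead-in)
    det_w = det_v / (v - u) ** 2
    # chi-square quantile with two degrees of freedom
    c = -2.0 * math.log(1.0 - alpha)
    return math.pi * c * math.sqrt(det_w)


# Standard uniform law on (-1/2, 1/2) with (p, q) = (0.25, 0.75).
value = limiting_scaled_risk(0.95, 0.25, 0.75, -0.25, 0.25, 1.0, 1.0)
print(value)
```

Under these assumptions the quantity to be minimized over \((p,q)\) is proportional to \(\sqrt{p(1-q)(q-p)}/\bigl (f(u)f(v)(v-u)\bigr )\) with \(u=F^{-1}(p),\ v=F^{-1}(q).\)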

The problem of interest is to find \(p^*\) and \(q^*\) that minimize (11). In other words, this is the problem of choosing the order statistics \(x_{k:n}, x_{m:n}\) so as to obtain the optimal confidence region for \(\theta \) with the smallest risk function.

3 Optimal choice of order statistics

After changing the notation \(u=F^{-1}(p), v=F^{-1}(q),\) the right-hand side of (11) is rewritten as

Let

where \(-\infty \le u_F^-<u_F^+\le \infty \) are the lower and the upper end of the support of the distribution \(F,\) respectively.

Note that for any fixed \(u\in (u_F^-, u_F^+),\)

Therefore, \(G(u,v)\uparrow \infty \) as \(v\downarrow u\) for any fixed \(u\in (u_F^-, u_F^+).\) Similarly, it can be shown that \(G(u,v)\uparrow \infty \) as \(u\uparrow v\) for any fixed \(v\in (u_F^-, u_F^+).\)

In what follows, we assume that the function \(f\) is differentiable at any point \(u\in (u_F^-, u_F^+).\) Then

By simple calculations,

and, therefore, \(H(u,v)<0\) in a neighborhood of any fixed \(u\in (u_F^-, u_F^+),\) that is the function \(G(u,v)\) is decreasing in \(v\) in a neighborhood of \(u.\) Similarly, the function \(G(u,v)\) is increasing in \(u\) in a neighborhood of any fixed \(v\in (u_F^-, u_F^+)\) since

and

In the sequel we need some well-known facts from the extreme value theory (see, for example, Subsection 10.5 of David and Nagaraja 2003).

If there exist \(c_n>0\) and \(d_n\in R\) such that the limit distribution of the sequence \(c_n(y_{n:n}-d_n)\) exists, as \(n\rightarrow \infty ,\) then the limit distribution function is one of just three types (\(\beta >0\)):

- (Fréchet) \(\ H_1(u; \beta )=\left\{ \begin{array}{ll} 0,&u\le 0\\ \exp (-u^{-\beta }),&u>0, \end{array}\right.\)
- (Weibull) \(\ H_2(u; \beta )=\left\{ \begin{array}{ll} \exp (-(-u)^{\beta }),&u\le 0\\ 1,&u>0,\end{array}\right.\)
- (Gumbel) \(\ H_3(u)=\exp (-\exp (-u)),\ u\in R.\)

In this case it is said that \(F\) belongs to the domain of attraction of the distribution \(H_i, i=1,2,3\) (written \(F\in D(H_i)\)).

Let \(h\) be the hazard rate function, that is

$$\begin{aligned} h(u)=\frac{f(u)}{1-F(u)},\quad u<u_F^+. \end{aligned}$$

It turns out that the possible limit laws for the properly centered and normed maximal order statistic \(x_{n:n}\) are determined by the behaviour of the function \(h\) in a neighborhood of the right endpoint of \(F.\) The following result (see, for example, Theorems 8.3.3 and 8.3.4 of Arnold et al. 1992) contains the well-known sufficient von Mises conditions for attraction to \(D(H_i),\ i=1, 2, 3,\) and a description of the sequences \(\{c_n,d_n\}.\) In what follows, \(L(v)\) denotes a slowly varying function as \(v\rightarrow \infty .\)

Proposition 2

The following statements hold:

- \(F\in D(H_1),\) if \(u_F^+=\infty \) and for some \(\beta >0,\)

  $$\begin{aligned} \lim _{u\rightarrow \infty }\ uh(u)=\beta ; \end{aligned}\tag{14}$$

  here \(d_n=0,\ c_n=(F^{-1}(1-1/n))^{-1}=n^{-1/\beta }L(n);\)

- \(F\in D(H_2),\) if \(u_F^+<\infty \) and for some \(\beta >0,\)

  $$\begin{aligned} \lim _{u\rightarrow u_F^+}\ (u_F^+-u)h(u)=\beta ; \end{aligned}\tag{15}$$

  here \(d_n=u^+_F,\ c_n=(u^+_F-F^{-1}(1-1/n))^{-1}=n^{1/\beta }L(n);\)

- \(F\in D(H_3),\) if \(f(u)\) is differentiable for all \(u>u_0\) and

  $$\begin{aligned} \lim _{u\rightarrow u_F^+}\ (1/h(u))^{\prime }=0; \end{aligned}\tag{16}$$

  here \(d_n=F^{-1}(1-1/n),\ c_n=h(d_n)=nf(d_n).\)
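The limits in conditions (14) and (15) are easy to probe numerically. The sketch below does so with the hazard rate written as \(h(u)=f(u)/(1-F(u))\) and the survival function \(1-F\) passed in directly to avoid cancellation near the endpoint. The uniform law is the one from Example 1; the Pareto law with \(1-F(u)=u^{-3}\) is our own illustrative choice, and all function names are hypothetical.

```python
def hazard(density, survival, u):
    """Hazard rate h(u) = f(u) / (1 - F(u))."""
    return density(u) / survival(u)


def weibull_type_index(density, survival, right_end, eps=1e-6):
    """Evaluate (u_F^+ - u) h(u) just below a finite right endpoint, cf. (15)."""
    u = right_end - eps
    return (right_end - u) * hazard(density, survival, u)


def frechet_type_index(density, survival, u=1e3):
    """Evaluate u h(u) at a large argument, cf. (14)."""
    return u * hazard(density, survival, u)


# Uniform law on (-1/2, 1/2): f = 1, 1 - F(u) = 1/2 - u.
beta_uniform = weibull_type_index(lambda u: 1.0, lambda u: 0.5 - u, 0.5)

# Pareto law on (1, infinity) with 1 - F(u) = u^{-3}, f(u) = 3 u^{-4}.
beta_pareto = frechet_type_index(lambda u: 3.0 * u ** -4, lambda u: u ** -3)

print(beta_uniform, beta_pareto)
```

The first value is close to \(1,\) in agreement with \(\beta =1\) used for the uniform law in Example 1; the second is close to \(3.\)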

Remark 1.

Comparing the norming sequences \(\{c_n\}\) from all the above cases to \(n^{1/2},\) one can conclude that: \(c_n\gg n^{1/2}\) if \(F\in D(H_2)\) with \(\beta <2,\) while \(c_n\ll n^{1/2}\) if \(F\in D(H_1),\) or \(F\in D(H_3),\) or \(F\in D(H_2)\) with \(\beta >2.\) In what follows, we exclude the case \(F\in D(H_2)\) with \(\beta =2\) from the consideration since uncertainty remains here.

Now we are able to establish the crucial result for optimal choice of order statistics.

Theorem 1.

The following statements hold for any fixed \(u\in (u^-_F, u^+_F)\):

- (i) if condition (14) holds, then \(G(u,v)\uparrow \infty \) as \(v\uparrow u^+_F=\infty ;\)
- (ii) if condition (16) holds, then \(G(u,v)\) is a non-decreasing function for all \(v>v_0;\)
- (iii) if condition (15) holds, then

  $$\begin{aligned}&\beta >2\ \Longrightarrow \ G(u,v)\uparrow \infty ,\ v\uparrow u^+_F,\\&\beta <2\ \Longrightarrow \ G(u,v)\downarrow 0,\ v\uparrow u^+_F. \end{aligned}$$

Proof

Statement (i) is a direct consequence of (14).

To prove the statement (ii), it is enough to show that

From (12) it follows that

In view of condition (16), it is enough to prove that

For this purpose one can use the arguments from the proof of Remark 2 of Subsection 3.3.3 of Embrechts et al. (1997). If \(u^+_F=\infty ,\) then since \((1/h(v))^{\prime }\downarrow 0,\) as \(v\uparrow \infty ,\) the Cesàro mean of this function also converges, that is

If \(u^+_F<\infty ,\) then

Since \((1/h(u^+_F-s))^{\prime }\downarrow 0,\) as \(s\downarrow 0,\) the last limit tends to \(0\) and (18) holds.

Statement (iii) follows from Theorem 3.3.12 of Embrechts et al. (1997) and properties of slowly varying functions.

Theorem 1 immediately implies the following result.

Corollary 2

If condition (15) holds with \(\beta <2,\) then \(v^*=u^+_F<\infty \ (q^*=1)\) and \(\inf G(u,v)=0.\) In other cases (condition (14), or condition (16), or condition (15) with \(\beta >2\) holds), \(v^*<u^+_F\ (q^*<1)\) and \(\ \inf G(u,v)>0.\)

As is known, similar results also hold for the minimal order statistic \(y_{1:n}.\) More precisely, if there exist \(a_n>0\) and \(b_n\in R\) such that the limit distribution of the sequence \(a_n(y_{1:n}-b_n)\) exists, as \(n\rightarrow \infty ,\) then the limit distribution function is one of just three types: \(H^*_i(u;\gamma )=1-H_i(-u;\gamma ),\ i=1, 2, 3.\) So, with small evident modifications, one can establish for \(y_{1:n}\) results similar to those for \(y_{n:n}.\) We have gathered them in the following theorem.

Denote

$$\begin{aligned} h^*(u)=\frac{f(u)}{F(u)},\quad u>u_F^-. \end{aligned}$$

Theorem 2

The following statements hold for any fixed \(v\in (u^-_F, u^+_F).\)

1. If \(u_F^-=-\infty \) and for some \(\gamma >0,\)

   $$\begin{aligned} \lim _{u\rightarrow -\infty }\ uh^*(u)=-\gamma , \end{aligned}\tag{19}$$

   then \(F\in D(H^*_1)\) and \(G(u,v)\uparrow \infty \) as \(u\downarrow u^-_F=-\infty .\)

2. If \(f(u)\) is differentiable for all \(u<u^*_1\) and

   $$\begin{aligned} \lim _{u\rightarrow u_F^-}\ (1/h^*(u))^{\prime }=0, \end{aligned}\tag{20}$$

   then \(F\in D(H^*_3)\) and \(G(u,v)\) is a non-increasing function for \(u<u^*_0.\)

3. If \(u_F^->-\infty \) and for some \(\gamma >0,\)

   $$\begin{aligned} \lim _{u\rightarrow u_F^-}\ (u-u_F^-)h^*(u)=\gamma , \end{aligned}\tag{21}$$

   then \(F\in D(H^*_2)\) and

   $$\begin{aligned}&\gamma >2\ \Longrightarrow \ G(u,v)\uparrow \infty ,\ u\downarrow u^-_F,\\&\gamma <2\ \Longrightarrow \ G(u,v)\downarrow 0,\ u\downarrow u^-_F. \end{aligned}$$

If condition (21) with \(\gamma <2\) holds, then \(u^*=u^-_F>-\infty \ (p^*=0)\) and \(\inf G(u,v)=0.\) In other cases (condition (19), or condition (20), or condition (21) with \(\gamma >2\) holds), \(u^*>u^-_F\ (p^*>0)\) and \(\ \inf G(u,v)>0.\)

Summing up, assuming that the underlying distribution in a neighborhood of \(u_F^+\) satisfies one of von Mises conditions (14)–(16) and in a neighborhood of \(u_F^-\) satisfies one of von Mises conditions (19)–(21), we can formulate the results on optimal choice of order statistics distinguishing between four cases.

Case I

If in a neighborhood of \(u^+_F\) (14), or (16), or (15) with \(\beta >2\) holds and in a neighborhood of \(u^-_F\) (19), or (20), or (21) with \(\gamma >2\) holds, then \(0<p^*<q^*<1.\) In this case we take (see (7))

where, for example, \(k^*=[np^*]+1,\ m^*=[nq^*]+1.\) The optimal confidence region is based on the vector \(T_n(y)=n^{1/2}(-t_1(y)/t_2(y),1/t_2(y)-1)\) and is given by (8) with risk function (9), where \(\lim _{n\rightarrow \infty }E_{\theta } t_2^2(x)=\theta ^2_2\) (see (10)). The limit law for \(T_n(y),\) established in Corollary 1, allows us to state that

The risk function is of order \(1/n,\) as \(n\rightarrow \infty .\)
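The index rule \(k^*=[np^*]+1,\ m^*=[nq^*]+1\) mentioned for Case I can be sketched as follows, with \([\cdot ]\) read as the integer part. The function name is hypothetical; the values \((p^*,q^*)=(0.1334,0.8666)\) are those quoted for the normal law in Example 3.

```python
import math


def order_indices(n, p_star, q_star):
    """Return (k*, m*) = ([n p*] + 1, [n q*] + 1) for 0 < p* < q* < 1."""
    if not 0.0 < p_star < q_star < 1.0:
        raise ValueError("the rule is meant for 0 < p* < q* < 1")
    k = math.floor(n * p_star) + 1
    m = math.floor(n * q_star) + 1
    return k, m


for n in (30, 100, 500):
    print(n, order_indices(n, 0.1334, 0.8666))
```

The resulting pairs coincide with the \(k\) and \(m\) columns of the second table of Example 3 for the same sample sizes.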

An important note: the order of the risk function for the optimal confidence region equals the reciprocal of the product of the norming sequences of the components of \(T_n(y),\) that is \(1/n=1/(n^{1/2}\cdot n^{1/2}).\)

It remains to consider the cases when in a neighborhood of \(u^+_F\ (u^+_F<\infty )\) (15) with \(\beta <2\) holds and/or in a neighborhood of \(u^-_F\ (u^-_F>-\infty )\) (21) with \(\gamma <2\) holds. Here, the order of the corresponding risk function is evidently \(o(1/n)\) and \(q^*=1\) and/or \(p^*=0.\) In this case we need to change the vector \(T_n(y)\) and norming sequences according to statements (ii) or (iii) of Lemma 1 from “Appendix”. Again the reciprocal of the product of norming sequences of the components of \(T_n(y)\) determines the order of the risk function.

Let \(q^*=1\) (the case \(p^*=0\) can be considered similarly). In general, one can distinguish between three types of sequences \(\{m_n\}\) satisfying \(m_n/n\rightarrow q^*=1, n\rightarrow \infty \):

- (a) \(m_n=n;\) in this case \(y_{m_n:n}=y_{n:n},\) i.e. we deal with the extreme order statistics;
- (b) \(m_n=n-j+1,\ j>1\) is fixed; in this case \(y_{m_n:n}=y_{n-j+1:n},\) i.e. we deal with other extreme order statistics;
- (c) \(m_n=n-j+1,\ j=j_n\rightarrow \infty ,\ j_n/n\rightarrow 0,\ n\rightarrow \infty ;\) in this case \(y_{m_n:n}=y_{n-j_n+1:n},\) i.e. we deal with intermediate order statistics.

The question arises: which type of sequence should one choose to obtain the better confidence region?

First of all, note that according to the end of Subsection 10.8 of David and Nagaraja (2003), lower extremes are asymptotically independent of upper extremes and both are asymptotically independent of central order statistics as well as of intermediate order statistics.

In situation (a) the possible limit laws and corresponding norming sequences are given in Proposition 2. In situation (b), as it follows from Theorem 8.4.1 of Arnold et al. (1992), \(F\in D(H_i),\ i=1, 2, 3,\) iff the limit distribution function of an extreme order statistic \(y_{n-j+1:n},\) as \(n\rightarrow \infty ,\) where \(j\) is fixed, is of the form \(\sum ^{j-1}_{r=0}H_i(u)[-\ln (H_i(u))]^r/r!,\) \(i=1, 2, 3;\) the sequences \(\{c_n, d_n\}\) are the same as in Proposition 2. Therefore, comparing the choice of \(y_{n:n}\) with that of \(y_{n-j+1:n},\) where \(j>1\) is fixed, we conclude that the norming sequences are the same, but the first choice is better since it gives the shorter interval for the appropriate coordinate (see Lemma 2 from “Appendix”).
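The limit distribution function \(\sum ^{j-1}_{r=0}H_i(u)[-\ln (H_i(u))]^r/r!\) quoted for situation (b) can be evaluated directly. The sketch below does this for the Gumbel case; the function names are hypothetical.

```python
import math


def gumbel_cdf(u):
    """H_3(u) = exp(-exp(-u))."""
    return math.exp(-math.exp(-u))


def jth_extreme_limit_cdf(h_value, j):
    """Return sum_{r=0}^{j-1} H [-ln H]^r / r! for a value H in (0, 1)."""
    if not 0.0 < h_value < 1.0:
        raise ValueError("H must lie strictly between 0 and 1")
    neg_log = -math.log(h_value)
    return h_value * sum(neg_log ** r / math.factorial(r) for r in range(j))


h0 = gumbel_cdf(0.0)  # equals exp(-1)
for j in (1, 2, 3):
    print(j, jth_extreme_limit_cdf(h0, j))
```

For \(j=1\) the formula reduces to \(H_i(u)\) itself, and the values increase with \(j,\) since the \(j\)-th largest observation is stochastically smaller than the maximum.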

Finally, in situation (c), as it follows from Theorem 8.5.3 of Arnold et al. (1992), if von Mises conditions (14)–(16) hold, then the limit law for \(y_{n-j+1:n},\ n\rightarrow \infty ,\ j\rightarrow \infty ,\) \(j/n\rightarrow 0,\) is standard normal and \(d_n=F^{-1}(1-j/n),\ c_n=nf(d_n)/j^{1/2}.\) Note that in the case of interest (when in a neighborhood of \(u^+_F\) (15) with \(\beta <2\) holds) this norming sequence \(\{c_n\}\) is less than that for \(y_{n:n}\) given in Proposition 2 (see Lemma 3 from “Appendix”); in all other cases it is less than \(n^{1/2}.\)

Case II

If in a neighborhood of \(u^+_F\) (one can take \(u^+_F=0\) without loss of generality) (15) with \(\beta <2\) holds, while in a neighborhood of \(u^-_F\) (19), or (20), or (21) with \(\gamma >2\) holds, then \(0<p^*<q^*=1.\) In this case, drawing on (22), we take

The pivotal quantity is

where \(c_n=n^{1/\beta }L(n)\gg n^{1/2},\ n\rightarrow \infty .\) The optimal confidence region is based on the vector \((-c_nt_1(y)/t_2(y), n^{1/2}(1/t_2(y)-1))\) and has the risk of order \(1/(c_nn^{1/2})\ll 1/n,\) as \(n\rightarrow \infty .\) It has the form

where \(A^*_n=\{(z_1, z_2) :\ (c_nz_1,n^{1/2}z_2)\in A_n\}.\)

Case III

If in a neighborhood of \(u^+_F\) (14), or (16), or (15) with \(\beta >2\) holds, while in a neighborhood of \(u^-_F\) (one can take \(u^-_F=0\) without loss of generality) (21) with \(\gamma <2\) holds, then \(0=p^*<q^*<1.\) In this case, drawing on (22), we take

The pivotal quantity is

where \(a_n=n^{1/\gamma }L(n)\gg n^{1/2},\ n\rightarrow \infty .\) The optimal confidence region is based on the vector \((-a_nt_1(y)/t_2(y), n^{1/2}(1/t_2(y)-1))\) and has the risk of order \(1/(a_nn^{1/2})\ll 1/n,\) as \(n\rightarrow \infty .\) It has the form

where \(A^*_n=\{(z_1, z_2) :\ (a_nz_1,n^{1/2}z_2)\in A_n\}.\)

Case IV

Finally, if in a neighborhood of \(u^+_F\) (15) with \(\beta <2\) holds and in a neighborhood of \(u^-_F\) (21) with \(\gamma <2\) holds, then \(p^*=0,\ q^*=1.\) In this case the construction repeats one of the previous cases depending on the relation between \(\beta \) and \(\gamma \) (see Examples).

It is also worth noting that if the distribution \(F\) is symmetric, that is its density \(f\) satisfies the condition \(f(-u)=f(u),\) and, moreover, if \(f\) is an infinitely differentiable function such that \(f^{\prime }(-u)=-f^{\prime }(u),\) then \(p^*=1-q^*\) (see Theorem 10.4 of David and Nagaraja 2003 and also Ogawa 1998 for the proof and discussion).

4 Examples

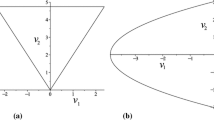

Here we consider three examples of distributions. In each case we calculate the values of the risk function according to (6): first for \((p,q)=(0.25,0.75),\) and then for \((p^*,q^*).\) Even for realistic sample sizes, the risk is smaller in the second case.

Example 1.

Uniform distribution \(U(\theta _1-\theta _2/2,\theta _1+\theta _2/2).\)

This is Case IV, since in a neighborhood of \(u^+_F=1/2\) (15) with \(\beta =1\) holds, while in a neighborhood of \(u^-_F=-1/2\) (21) with \(\gamma =1\) holds. Therefore, \((p^*,q^*)=(0,1).\) Here \(\beta =\gamma ,\) and the optimal confidence region for \((\theta _1,\theta _2)\) is based on

while the pivot has the form \(n(\frac{\theta _1-t_1(x)}{t_2(x)}, \frac{\theta _2}{t_2(x)}-1).\)

The optimal confidence region has the risk of order \(1/n^2.\)

Calculations of the risk function for \((p,q)=(0.25,0.75)\) (the first table) and for \((p^*,q^*)=(0,1)\) (the second table):

| \(n\) | \(k\) | \(m\) | \(\lambda _2(A)\) | \(\mathrm{E}_{(0,1)}t_2^2(y)\) | \(R(\theta ,B_A)/\theta _2^2\) |
|---|---|---|---|---|---|
| 30 | 8 | 23 | 1.076282 | 0.241935 | 0.260390 |
| 40 | 10 | 31 | 0.640971 | 0.243902 | 0.156334 |
| 50 | 13 | 38 | 0.599687 | 0.245098 | 0.146982 |
| 60 | 15 | 46 | 0.429006 | 0.245901 | 0.105493 |
| 70 | 18 | 53 | 0.414028 | 0.246478 | 0.102049 |
| 80 | 20 | 61 | 0.326711 | 0.246913 | 0.080669 |
| 90 | 23 | 68 | 0.312016 | 0.247252 | 0.077147 |
| 100 | 25 | 76 | 0.262212 | 0.247524 | 0.064904 |
| 500 | 125 | 376 | 0.052771 | 0.249500 | 0.013166 |

| \(n\) | \(k\) | \(m\) | \(\lambda _2(A)\) | \(\mathrm{E}_{(0,1)}t_2^2(y)\) | \(R(\theta ,B_A)/\theta _2^2\) |
|---|---|---|---|---|---|
| 30 | 1 | 30 | 0.015230 | 0.877016 | 0.013357 |
| 40 | 1 | 40 | 0.008145 | 0.905923 | 0.007379 |
| 50 | 1 | 50 | 0.005059 | 0.923831 | 0.004674 |
| 60 | 1 | 60 | 0.003444 | 0.936012 | 0.003224 |
| 70 | 1 | 70 | 0.002495 | 0.944835 | 0.002357 |
| 80 | 1 | 80 | 0.001890 | 0.951520 | 0.001798 |
| 90 | 1 | 90 | 0.001481 | 0.956760 | 0.001417 |
| 100 | 1 | 100 | 0.001192 | 0.960978 | 0.001145 |
| 500 | 1 | 500 | 0.000050 | 0.992039 | 0.000049 |

Example 2.

Exponential distribution \(E(\theta _1,\theta _2).\)

This is Case III, since in a neighborhood of \(u^-_F=0\) (21) with \(\gamma =1\) holds, while in a neighborhood of \(u^+_F=\infty \) (16) holds.

By simple calculations we obtain

and \(\mathrm{arg}\ \mathop {\inf }_{0<u<v<\infty }G(u,v)=(0,v_*),\) where \(v_*\) is the solution of the equation \((1-v/2)e^v=1\) (\(v_*=1.5936\)). Therefore, \((p^*,q^*)=(0,v_*/2)=(0,0.7968).\)
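The value \(v_*\) can be reproduced by solving \((1-v/2)e^v=1\) numerically. A minimal bisection sketch follows; the bracket \([1,2]\) is our choice (it excludes the trivial root \(v=0\)), and the function names are hypothetical. For the standard exponential law, \(q^*=F(v_*)=1-e^{-v_*},\) which by the defining equation equals \(v_*/2.\)

```python
import math


def g(v):
    """Left-hand side minus right-hand side of (1 - v/2) e^v = 1."""
    return (1.0 - v / 2.0) * math.exp(v) - 1.0


def bisect(func, lo, hi, tol=1e-12):
    """Plain bisection for a sign change of func on [lo, hi]."""
    f_lo = func(lo)
    if f_lo * func(hi) > 0.0:
        raise ValueError("no sign change on the bracket")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        f_mid = func(mid)
        if f_lo * f_mid <= 0.0:
            hi = mid
        else:
            lo, f_lo = mid, f_mid
    return 0.5 * (lo + hi)


v_star = bisect(g, 1.0, 2.0)
q_star = 1.0 - math.exp(-v_star)  # F(v_*) for the standard exponential law
print(v_star, q_star, v_star / 2.0)
```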

The optimal confidence region has the risk of order \(1/n^{3/2}.\)

Calculations of the risk function for \((p,q)=(0.25,0.75)\) (the first table) and for \((p^*,q^*)=(0,0.7968)\) (the second table):

| \(n\) | \(k\) | \(m\) | \(\lambda _2(A)\) | \(\mathrm{E}_{(0,1)}t_2^2(y)\) | \(R(\theta ,B_A)/\theta _2^2\) |
|---|---|---|---|---|---|
| 30 | 8 | 23 | 0.636060 | 1.294206 | 0.823192 |
| 40 | 10 | 31 | 0.361046 | 1.431981 | 0.517011 |
| 50 | 13 | 38 | 0.332177 | 1.259720 | 0.418450 |
| 60 | 15 | 46 | 0.237054 | 1.354291 | 0.321040 |
| 70 | 18 | 53 | 0.224124 | 1.244763 | 0.278981 |
| 80 | 20 | 61 | 0.177264 | 1.316461 | 0.233361 |
| 90 | 23 | 68 | 0.171444 | 1.236410 | 0.211975 |
| 100 | 25 | 76 | 0.141810 | 1.294084 | 0.183514 |
| 500 | 125 | 376 | 0.027746 | 1.224075 | 0.033963 |

| \(n\) | \(k\) | \(m\) | \(\lambda _2(A)\) | \(\mathrm{E}_{(0,1)}t_2^2(y)\) | \(R(\theta ,B_A)/\theta _2^2\) |
|---|---|---|---|---|---|
| 30 | 1 | 24 | 0.059575 | 2.783466 | 0.165825 |
| 40 | 1 | 32 | 0.036114 | 2.973219 | 0.107374 |
| 50 | 1 | 40 | 0.025097 | 3.149164 | 0.079034 |
| 60 | 1 | 48 | 0.018263 | 3.320204 | 0.060636 |
| 70 | 1 | 56 | 0.014387 | 3.490169 | 0.050213 |
| 80 | 1 | 64 | 0.011406 | 3.660958 | 0.041756 |
| 90 | 1 | 72 | 0.009464 | 3.833603 | 0.036281 |
| 100 | 1 | 80 | 0.008152 | 4.008691 | 0.032678 |
| 500 | 1 | 399 | 0.000517 | 12.822575 | 0.006629 |

Example 3.

Normal distribution \(N(\theta _1,\theta _2).\)

This is Case I, since in a neighborhood of \(u^-_F=-\infty \) (20) holds, while in a neighborhood of \(u^+_F=\infty \) (16) holds.

Here \(G(u,v)=G(-v,-u),\) and by simple calculations one can obtain that

where \(v^*=1.1106\) is the solution of the equation

Therefore, \(q^*=F(v^*)=0.8666,\ p^*=1-q^*=0.1334.\)
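The quoted values can be checked numerically under an explicit assumption. The sketch below takes the criterion to be proportional to \(\sqrt{F(u)(1-F(v))(F(v)-F(u))}/\bigl (f(u)f(v)(v-u)\bigr ),\) which is our reconstruction from the ellipse-area argument of Sect. 2 and not a formula quoted from the text, restricts it to the symmetric line \(u=-v,\) and minimizes it by golden-section search. The search interval \([0.2,3]\) and all function names are hypothetical.

```python
import math


def phi(v):
    """Standard normal density."""
    return math.exp(-0.5 * v * v) / math.sqrt(2.0 * math.pi)


def Phi(v):
    """Standard normal distribution function."""
    return 0.5 * (1.0 + math.erf(v / math.sqrt(2.0)))


def objective(v):
    """Assumed criterion on the line u = -v, up to a positive constant."""
    return (1.0 - Phi(v)) * math.sqrt(2.0 * Phi(v) - 1.0) / (v * phi(v) ** 2)


def golden_section_min(func, lo, hi, iterations=100):
    """Golden-section search for the minimizer of a unimodal function."""
    ratio = (math.sqrt(5.0) - 1.0) / 2.0
    a, b = lo, hi
    for _ in range(iterations):
        c = b - ratio * (b - a)
        d = a + ratio * (b - a)
        if func(c) < func(d):
            b = d
        else:
            a = c
    return 0.5 * (a + b)


v_star = golden_section_min(objective, 0.2, 3.0)
q_star = Phi(v_star)
p_star = 1.0 - q_star
print(v_star, p_star, q_star)
```

Under this assumption the minimizer lands close to \(v^*=1.1106,\) and hence to \((p^*,q^*)=(0.1334,0.8666),\) as quoted above.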

The optimal confidence region has the risk of order \(1/n.\)

Calculations of the risk function for \((p,q)=(0.25,0.75)\) (the first table) and for \((p^*,q^*)=(0.1334,0.8666)\) (the second table):

| \(n\) | \(k\) | \(m\) | \(\lambda _2(A)\) | \(\mathrm{E}_{(0,1)}t_2^2(y)\) | \(R(\theta ,B_A)/\theta _2^2\) |
|---|---|---|---|---|---|
| 30 | 8 | 23 | 0.520142 | 1.869376 | 0.972341 |
| 40 | 10 | 31 | 0.338648 | 2.079427 | 0.704193 |
| 50 | 13 | 38 | 0.294872 | 1.850483 | 0.545655 |
| 60 | 15 | 46 | 0.219608 | 1.991273 | 0.437299 |
| 70 | 18 | 53 | 0.204000 | 1.841996 | 0.375767 |
| 80 | 20 | 61 | 0.166246 | 1.947792 | 0.323812 |
| 90 | 23 | 68 | 0.156422 | 1.837179 | 0.287375 |
| 100 | 25 | 76 | 0.133028 | 1.921895 | 0.255665 |
| 500 | 125 | 376 | 0.026954 | 1.840690 | 0.049613 |

| \(n\) | \(k\) | \(m\) | \(\lambda _2(A)\) | \(\mathrm{E}_{(0,1)}t_2^2(y)\) | \(R(\theta ,B_A)/\theta _2^2\) |
|---|---|---|---|---|---|
| 30 | 5 | 26 | 0.182234 | 4.335487 | 0.790073 |
| 40 | 6 | 35 | 0.114722 | 4.794549 | 0.550040 |
| 50 | 7 | 44 | 0.086356 | 5.096922 | 0.440149 |
| 60 | 9 | 52 | 0.079592 | 4.625095 | 0.368120 |
| 70 | 10 | 61 | 0.063934 | 4.855632 | 0.310440 |
| 80 | 11 | 70 | 0.053892 | 5.037201 | 0.271464 |
| 90 | 13 | 78 | 0.050402 | 4.726140 | 0.238206 |
| 100 | 14 | 87 | 0.043930 | 4.879758 | 0.214367 |
| 500 | 67 | 434 | 0.008606 | 4.952811 | 0.042623 |

References

Alama-Bućko M, Nagaev AV, Zaigraev A (2006) Asymptotic analysis of minimum volume confidence regions for location-scale families. Applicationes Mathematicae (Warszawa) 33:1–20

Alama-Bućko M, Zaigraev A (2006) Asymptotics of optimal confidence regions for location-scale parameters basing on two ordered statistics. In: Statistical methods of estimation and testing hypotheses. Perm University, Perm, pp 49–65 (in Russian)

Arnold BC, Balakrishnan N, Nagaraja HN (1992) A first course in order statistics. Wiley series in probability and mathematical statistics. Wiley, New York

Czarnowska A, Nagaev AV (2001) Confidence regions of minimal area for the scale-location parameter and their applications. Applicationes Mathematicae 28:125–142

David HA, Nagaraja HN (2003) Order statistics. Wiley, New York

Einmahl JHJ, Mason DM (1992) Generalized quantile processes. Ann Stat 20:1062–1078

Embrechts P, Klüppelberg C, Mikosch T (1997) Modelling extremal events for insurance and finance. Applications of mathematics (New York), vol 33. Springer, Berlin

Ogawa J (1998) Optimal spacing of the selected sample quantiles for the joint estimation of the location and scale parameters of a symmetric distribution. J Stat Plan Inf 70:345–360

Reiss R-D (1989) Approximate distributions of order statistics: with applications to nonparametric statistics. Springer series in statistics. Springer, New York

Shaked M, Shanthikumar JG (2007) Stochastic orders and their applications. Academic Press, San Diego

Acknowledgments

The authors are grateful to the referee for useful suggestions improving the paper.

Open Access

This article is distributed under the terms of the Creative Commons Attribution License which permits any use, distribution, and reproduction in any medium, provided the original author(s) and the source are credited.

Appendix

Here we establish three useful auxiliary lemmas.

Lemma 1

Let \(\{\xi _n\}\) and \(\{\eta _n\}\) be two sequences of random variables and assume that there exist sequences of positive numbers \(\{a_n\}\) and \(\{c_n\}\) and sequences of numbers \(\{b_n\}\) and \(\{d_n\}\) such that the sequence of two-dimensional random vectors \((a_n(\xi _n-b_n),\) \(c_n(\eta _n-d_n)),\) as \(n\rightarrow \infty ,\) converges in distribution to a random vector with continuous density function \(f(u_1,u_2).\) If \(a_n\rightarrow \infty ,\) \(c_n\rightarrow \infty , b_n\rightarrow b\in R, d_n\rightarrow d\in R, d\ne b,\) then

- (i) under the condition \(a_n=c_n\) the random vector

  $$\begin{aligned} a_n\Bigl (\frac{b_n\eta _n-d_n\xi _n}{\eta _n-\xi _n}, \frac{d_n-b_n}{\eta _n-\xi _n}-1\Bigr ),\quad \text{ as} \ n\rightarrow \infty , \end{aligned}$$

  converges in distribution to the random vector with the density \(|d-b|f(-v_1-bv_2,-v_1-dv_2);\)

- (ii) under the condition \(a_n\gg c_n\) the random vector

  $$\begin{aligned} \Bigl (a_n\frac{(b_n-\xi _n)(d_n-b_n)}{\eta _n-\xi _n}, c_n\Bigl ( \frac{d_n-b_n}{\eta _n-\xi _n}-1\Bigr )\Bigr ),\quad \text{ as} \ n\rightarrow \infty , \end{aligned}$$

  converges in distribution to the random vector with the density \(|d-b|f(-v_1,-(d-b)v_2);\)

- (iii) under the condition \(a_n\ll c_n\) the random vector

  $$\begin{aligned} \Bigl (c_n\frac{(d_n-\eta _n)(d_n-b_n)}{\eta _n-\xi _n}, a_n\Bigl (\frac{d_n-b_n}{\eta _n-\xi _n}-1\Bigr )\Bigr ),\quad \text{ as} \ n\rightarrow \infty , \end{aligned}$$

  converges in distribution to the random vector with the density \(|d-b|f((d-b)v_2,-v_1).\)

Proof

We establish only statement (i); the other cases are treated similarly. The transformation \((u_1,u_2)\mapsto (v_1,v_2)\) of

is given by

This transformation has the Jacobian

Therefore, the density function of the random vector \(T_n\) has the form

Since \(a_n\rightarrow \infty , b_n\rightarrow b, d_n\rightarrow d,\) as \(n\rightarrow \infty ,\) statement (i) follows.

Lemma 2

For \(\beta >0\) consider two densities

where \(j>1,\) and let \((a,b)\) and \((a^{\prime },b^{\prime })\) be such intervals that for given \(\alpha \in (0,1)\)

If \(\beta \le 1,\) then for any \(j>1\)

Proof

Let \(F_{\beta }\) and \(G_{\beta ,j}\) be the distribution functions corresponding to the densities \(f_{\beta }\) and \(g_{\beta ,j},\) respectively, i. e.

and let \(X_{\beta }\) and \(Y_{\beta ,j}\) be the corresponding random variables.

Recall two notions of stochastic ordering (see e.g. Sect. 1A and Sect. 3B of Shaked and Shanthikumar 2007):

- \(X_{\beta }\le _{st}Y_{\beta ,j},\) if \(F_{\beta }(u)\ge G_{\beta ,j}(u),\quad \forall u>0;\)
- \(X_{\beta }\le _{disp}Y_{\beta ,j},\) if \(F^{-1}_{\beta }(q)-F^{-1}_{\beta }(p)\le G^{-1}_{\beta ,j}(q)-G^{-1}_{\beta ,j}(p),\quad \forall \ 0<p\le q<1.\)

Thus, if we show that \(X_{\beta }\le _{disp}Y_{\beta ,j},\) the lemma follows.

It is not difficult to check that \(-X_1\le _{st}-Y_{1,j}\) and that \(-X_1\le _{disp}-Y_{1,j}\) for any \(j>1\) (e.g. by Theorem 3.B.18 of Shaked and Shanthikumar 2007). Moreover, \(-X_{\beta }\) has the same distribution as \((-X_1)^{1/\beta },\) and \(-Y_{\beta ,j}\) has the same distribution as \((-Y_{1,j})^{1/\beta }.\) Since the function \(\phi (u)=u^{1/\beta },\ u\ge 0,\) is increasing and convex, from Theorem 3.B.10 of Shaked and Shanthikumar (2007) it follows that \((-X_1)^{1/\beta }=\phi (-X_1)\le _{disp} \phi (-Y_{1,j})=(-Y_{1,j})^{1/\beta }.\) Therefore, \(-X_{\beta }\le _{disp}-Y_{\beta ,j},\) but the latter means, from Theorem 3.B.6 of Shaked and Shanthikumar (2007), that \(X_{\beta }\le _{disp}Y_{\beta ,j}.\)

Numerical calculations show that Lemma 2 is true not only for \(\beta \in (0,1],\) but also for \(\beta \in (1,2).\)

Lemma 3

If in a neighborhood of \(u^+_F\) (15) with \(\beta <2\) holds, then for \(j\rightarrow \infty ,\ j/n\rightarrow 0,\) \(n\rightarrow \infty ,\) the relation \(nf(F^{-1}(1-j/n))/j^{1/2}\ll (u^+_F-F^{-1}(1-1/n))^{-1}\) holds.

Proof

Let \(z_n=F^{-1}(1-1/n)\) and \(d_n=F^{-1}(1-j/n).\) Our goal is to prove that \(nf(d_n)/j^{1/2} \ll (u^+_F-z_n)^{-1} , n\rightarrow \infty .\) By (15) and equality \(1-F(d_n)=j/n\) it is equivalent to

To show (23), recall that for distributions satisfying (15) we have

(see, for example, Theorem 3.3.12 of Embrechts et al. 1997). Since

and \(j\rightarrow \infty \) as \(n\rightarrow \infty ,\) we obtain \((u_F^+-z_n)/(u_F^+-d_n) \rightarrow 0,\ n\rightarrow \infty ,\) for \(\beta >0.\) Then it is obvious that for \(\beta <2\)

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 2.0 International License (https://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Zaigraev, A., Alama-Bućko, M. On optimal choice of order statistics in large samples for the construction of confidence regions for the location and scale. Metrika 76, 577–593 (2013). https://doi.org/10.1007/s00184-012-0405-9
