Abstract

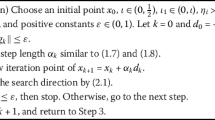

This paper presents a modified Wei-Yao-Liu (WYL) conjugate gradient method whose search direction automatically satisfies both the sufficient descent property and the trust region property, independently of any line search. For unconstrained optimization problems, global convergence is established under the weak Wolfe-Powell (WWP) line search. The method is also extended to systems of nonlinear equations, for which global convergence is established under mild conditions by combining a line search with a projection technique. Preliminary numerical tests are reported, and the results indicate that the method is effective.
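To make the ingredients named in the abstract concrete, the following is a minimal sketch of a conjugate gradient iteration with a weak Wolfe-Powell line search. It is not the authors' algorithm: the abstract does not state the modified formula, so the sketch assumes the original Wei-Yao-Liu parameter, beta_k = g_k^T (g_k - (||g_k|| / ||g_{k-1}||) g_{k-1}) / ||g_{k-1}||^2. The function names, the bracketing line search, the constants `delta` and `sigma`, the steepest-descent restart, and the quadratic test problem are all illustrative assumptions.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def norm(u):
    return math.sqrt(dot(u, u))

def weak_wolfe(f, grad, x, d, delta=1e-4, sigma=0.1, max_iter=60):
    """Bracketing search for a step alpha with
       f(x + alpha d) <= f(x) + delta * alpha * g^T d   (sufficient decrease)
       grad(x + alpha d)^T d >= sigma * g^T d           (curvature)
    assuming d is a descent direction and 0 < delta < sigma < 1."""
    fx, slope = f(x), dot(grad(x), d)
    lo, hi, alpha = 0.0, math.inf, 1.0
    for _ in range(max_iter):
        x_new = [xi + alpha * di for xi, di in zip(x, d)]
        if f(x_new) > fx + delta * alpha * slope:
            hi = alpha            # step too long: shrink the bracket
        elif dot(grad(x_new), d) < sigma * slope:
            lo = alpha            # step too short: raise the lower bound
        else:
            return alpha          # both WWP conditions hold
        alpha = 0.5 * (lo + hi) if hi < math.inf else 2.0 * lo
    return alpha                  # fallback: last trial step

def wyl_cg(f, grad, x0, tol=1e-6, max_iter=500):
    """Conjugate gradient iteration with the original WYL beta (assumed here)."""
    x = list(x0)
    g = grad(x)
    d = [-gi for gi in g]
    iters = 0
    while norm(g) > tol and iters < max_iter:
        # Safeguard added for this sketch: restart with steepest descent
        # whenever d is not a sufficiently good descent direction.
        if dot(g, d) > -1e-4 * norm(g) * norm(d):
            d = [-gi for gi in g]
        alpha = weak_wolfe(f, grad, x, d)
        x = [xi + alpha * di for xi, di in zip(x, d)]
        g_new = grad(x)
        ratio = norm(g_new) / norm(g)
        diff = [a - ratio * b for a, b in zip(g_new, g)]
        beta = dot(g_new, diff) / dot(g, g)   # WYL parameter, always >= 0
        d = [-a + beta * b for a, b in zip(g_new, d)]
        g = g_new
        iters += 1
    return x, iters

# Hypothetical test problem: a convex quadratic with minimizer (1, -2).
def f(x):
    return (x[0] - 1.0) ** 2 + 10.0 * (x[1] + 2.0) ** 2

def grad(x):
    return [2.0 * (x[0] - 1.0), 20.0 * (x[1] + 2.0)]

x_star, n_iter = wyl_cg(f, grad, [0.0, 0.0])
print(x_star, n_iter)
```

The restart test is needed in this sketch because the original WYL direction does not guarantee descent under a weak Wolfe-Powell search; according to the abstract, the modified method obtains sufficient descent without relying on the line search.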

This work is supported by the National Natural Science Foundation of China (Grant No. 11661009), the Guangxi Science Fund for Distinguished Young Scholars (No. 2015GXNSFGA139001), and the Guangxi Natural Science Key Fund (No. 2017GXNSFDA198046).

© 2018 Springer Nature Switzerland AG

About this paper

Cite this paper

Wang, X., Hu, W., Yuan, G. (2018). A Modified Wei-Yao-Liu Conjugate Gradient Algorithm for Two Type Minimization Optimization Models. In: Sun, X., Pan, Z., Bertino, E. (eds) Cloud Computing and Security. ICCCS 2018. Lecture Notes in Computer Science, vol 11063. Springer, Cham. https://doi.org/10.1007/978-3-030-00006-6_13

DOI: https://doi.org/10.1007/978-3-030-00006-6_13

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-00005-9

Online ISBN: 978-3-030-00006-6

eBook Packages: Computer Science, Computer Science (R0)