Abstract

It is argued that some elusive “entropic” characteristics of chemical bonds, e.g., bond multiplicities (orders), which connect the bonded atoms in molecules, can be probed using quantities and techniques of Information Theory (IT). This complementary perspective deepens our insight into, and understanding of, the molecular electronic structure. The specific IT tools for detecting effects of chemical bonds and predicting their entropic multiplicities in molecules are summarized. Alternative information densities, including measures of the local entropy deficiency or its displacement relative to the system atomic promolecule, and the nonadditive Fisher information in the atomic orbital resolution (called contragradience), are used to diagnose the bonding patterns in illustrative diatomic and polyatomic molecules. The elements of the orbital communication theory of the chemical bond are briefly summarized and illustrated for the simplest case of the two-orbital model. The information-cascade perspective also suggests a novel, indirect mechanism of the orbital interactions in molecular systems, through “bridges” (orbital intermediates), in addition to the familiar direct chemical bonds realized through “space”, as a result of the orbital constructive interference in the subspace of the occupied molecular orbitals. Some implications of these two sources of chemical bonds in propellanes, π-electron systems and polymers are examined. The current-density concept associated with the wave-function phase is introduced and the relevant phase-continuity equation is discussed. For the first time, the quantum generalizations of the classical measures of the information content, functionals of the probability distribution alone, are introduced to distinguish systems with the same electron density, but differing in their current (phase) composition. The corresponding information/entropy sources are identified in the associated continuity equations.


Introduction

For the chemical understanding of molecular electronic structure it is vital to interpret the system equilibrium electron density \( \rho (\varvec{r}) = Np(\varvec{r}) \) in terms of pieces representing the relevant subsystems, e.g., Atoms-in-Molecules (AIM) (Bader 1990), functional groups or other pieces of interest, e.g., the σ and π electrons in benzene, and through such intuitive concepts as multiplicities (orders) of the internal (intra-subsystem) and external (inter-fragment) chemical bonds, describing the bonding pattern between these molecular fragments (Nalewajski 2006a, 2010a, 2012a). Here, N stands for the system overall number of electrons and the electron probability distribution p(r) determines the so called shape factor of the molecular density. In general, the semantics of these traditional chemical descriptors is not sharply defined in modern quantum mechanics, although quite useful definitions are available from several alternative perspectives (Nalewajski 2012a), which reflect the accepted chemical intuition quite well. However, since these chemical concepts do not represent specific observables, i.e., specific quantum mechanical operators, they ultimately have to be classified as Kantian noumenons of chemistry (Parr et al. 2005). It has been demonstrated recently that the Information Theory (IT) (Fisher 1925; Frieden 1998; Shannon 1948; Shannon and Weaver 1949; Kullback and Leibler 1951; Kullback 1959) can be used to elucidate their more precise meaning in terms of the entropy/information quantities (Nalewajski 2002, 2003a, b, 2006a, 2010a, 2012a; Nalewajski and Parr 2000, 2001; Nalewajski et al. 2002; Nalewajski and Świtka 2002; Nalewajski and Broniatowska 2003a, 2007; Nalewajski and Loska 2001). This article summarizes the diverse IT perspectives on the molecular electronic structure, in which the molecular states, their electron distributions and probability currents carry the complete information about the system bonding patterns. 
Some of these chemical characteristics are distinctly “entropic” in character, being primarily designed to reflect the “pairing” patterns between electrons, rather than the molecular energetics.

Information Theory (see Appendix 1) is one of the youngest branches of applied probability theory, in which probability ideas have been introduced into the fields of communication, control, and data processing. Its foundations were laid in the 1920s by Fisher (1925), in his classical measurement theory, and in the 1940s by Shannon (Shannon 1948; Shannon and Weaver 1949), in his mathematical theory of communication. The electronic quantum state of a molecule is determined by the system wave function, the amplitude of the particle probability distribution which carries the information. It is intriguing to explore the information content of electronic densities in molecules and to extract from them the pattern of chemical bonds, reactivity trends and “entropic” molecular descriptors, e.g., bond multiplicities (“orders”) and their covalent/ionic composition. In this brief survey we summarize some of the recent developments in such IT probes of the molecular electronic structure. In particular, we shall explore the information displacements due to subtle electron redistributions accompanying the bond formation and diagnose locations of the direct chemical bonds using the nonadditive information contributions in the Atomic Orbital (AO) resolution. We shall also examine patterns of entropic connectivities between AIM, which result from “communications” between these basis functions. Their combinations represent the Molecular Orbitals (MO) in typical Self-Consistent Field (SCF) calculations. In these SCF LCAO MO theories the electronic structure is expressed in terms of either the Hartree–Fock (HF) MO of the Wave-Function Theories (WFT) or their Kohn–Sham (KS) analogs in the modern Density Functional Theory (DFT), the two main avenues of contemporary computational quantum mechanics.

The other fundamental problem is the question: what is the adequate measure of the “information content” of the given quantum state of a molecule? The classical IT and the information measures it introduces deal solely with the electron density (probability) distribution, reflecting the modulus part of the complex wave function. However, in the degenerate (complex) electronic states the quantum measure of information should reflect not only the spatial distribution of electrons but also their probability currents related to the gradient of the phase part of the complex wave function, in order to distinguish between the information content of two systems exhibiting the same electron density but differing in their current composition. Such quantum extension of the classical gradient (local) measure, the Fisher information (1925), has indeed been proposed by the Author (Nalewajski 2008a). It introduces a nonclassical information term proportional to the square of the particle current. However, no quantum generalization of the complementary (global) measure represented by the familiar Shannon entropy (Shannon 1948; Shannon and Weaver 1949) is currently available. We shall address this question in the final sections of this work.

It has been amply demonstrated (Nalewajski 2006a, 2010a, 2012a) that many classical problems of theoretical chemistry can be approached afresh using this novel IT perspective. For example, the displacements in the information distribution in molecules, relative to the promolecular reference consisting of the nonbonded constituent atoms in their molecular positions, have been investigated, as has the least-biased partition of the molecular electron distributions into subsystem contributions, e.g., densities of AIM. The IT approach has been shown to lead to the “stockholder” molecular fragments of Hirshfeld (1977). These optimum density pieces have been derived from alternative global and local variational principles of IT. Information theory facilitates a deeper insight into the nature of bonded atoms (Nalewajski 2002, 2003a, b, 2006a, 2010a, 2012a; Nalewajski and Parr 2000, 2001; Nalewajski et al. 2002; Nalewajski and Świtka 2002; Nalewajski and Broniatowska 2003a, 2007; Nalewajski and Loska 2001), the electron fluctuations between them (Nalewajski 2003c), and a thermodynamic-like description of molecules (Nalewajski 2003c, 2006b, 2004a). It also increases our understanding of the elementary reaction mechanisms (Nalewajski and Broniatowska 2003b; López-Rosa et al. 2010). By using the complementary Shannon (global) and Fisher (local) measures of the information content of the electronic distributions in the position and momentum spaces, respectively, it has been demonstrated that these classical IT probes allow one to precisely locate the substrate bond-breaking and the product bond-forming stages along the reaction coordinate, which are not seen on the reaction energy profile alone (López-Rosa et al. 2010).

These applications have advocated the use of several IT concepts and techniques as efficient tools for exploring and understanding the electronic structure of molecules, e.g., in facilitating the spatial localization of the system electrons and chemical bonds, extraction of the entropic bond-orders and their covalent/ionic composition, in monitoring the promotion (polarization/hybridization) and charge-transfer processes determining the valence state of bonded atoms, etc. The spatial localization and multiplicity of specific bonds, not to mention some qualitative questions about their very existence, e.g., between the bridgehead carbon atoms in small propellanes, presents another challenging problem that has been successfully tackled by this novel treatment of molecular systems. The nonadditive Fisher information in the AO resolution has been recently used as the Contra-Gradience (CG) criterion for localizing the direct bonding regions in molecules (Nalewajski 2006a, 2008a, 2010a, b, 2012a, b; Nalewajski et al. 2010, 2012a), while the related information density in the Molecular Orbital (MO) resolution has been shown (Nalewajski 2006a, 2010a, 2012a; Nalewajski et al. 2005) to determine the vital ingredient of the successful Electron-Localization Function (ELF) (Becke and Edgecombe 1990; Silvi and Savin 1994; Savin et al. 1997).

The Communication Theory of the Chemical Bond (CTCB) has been developed using the basic entropy/information descriptors of the molecular information (communication) channels at various levels of resolving the molecular probability distributions (Nalewajski 2000, 2004b, c, d, 2005a, b, c, 2006a, c, d, e, f, g, 2007, 2008b, c, d, 2009a, b, c, d, 2010a, 2012a; Nalewajski and Jug 2002). The entropic probes of the molecular bond structure have provided new, attractive tools for describing the chemical bond phenomenon in information terms. It is the main purpose of this survey to illustrate the efficiency of alternative local entropy/information probes of the molecular electronic structure, explore the information origins of the chemical bonds, and to present recent developments in the Orbital Communication Theory (OCT) (Nalewajski 2009e, f, g, 2010a, c, 2011a, b, 2012a; Nalewajski et al. 2011, 2012b).

In OCT the chemical bonding is synonymous with some degree of communications (probability scatterings) between AO. It can be realized either directly, through the constructive interference of interacting orbitals, i.e., as the “dialogue” between the given pair of orbitals, or indirectly, through the information cascade involving other orbitals, which can be compared to the “rumor” spread through these orbital intermediates. The importance of the non-additive effects in the chemical-bond phenomena will be emphasized throughout and some implications of the information-cascade (bridge) propagation of electronic probabilities in molecular information systems, which generate the indirect bond contributions due to orbital intermediaries (Nalewajski 2010d, e, 2011c, 2012c; Nalewajski and Gurdek 2011, 2012), will be examined.

This work surveys representative applications of alternative entropy densities as probes of the bonding patterns in molecules. The nonadditive information contributions defined in alternative orbital resolutions will be examined, the IT tools for locating electrons and chemical bonds will be introduced, and the bond direct/indirect origins will be explored in prototype molecules. The conditional probabilities in AO resolution, which define the molecular information (communication) system of OCT, can be generated using the bond-projected superposition principle of quantum mechanics. The entropy/information measures of the bond covalency/ionicity reflect the average noise and the flow of information in such a molecular network, respectively (Nalewajski 2006a, 2009e, f, g, 2010a, c, 2011a, b, 2012a, b; Nalewajski et al. 2011). Finally, the quantum extension of the classical Shannon entropy will be proposed, following a similar generalization of the Fisher measure related to the system average kinetic energy (Nalewajski 2008a), and the phase-current concept will be introduced in the information-continuity context (Nalewajski 2010a, 2012a).

Direct and indirect orbital interactions

In typical molecular scenarios one probes the information contained in the ground-state distribution of electrons and examines its displacement relative to the system promolecule, the initial state in the bond-formation process. The latter is dominated by reconstructions of the valence shells of constituent AIM. In the simplest, single-determinant description of the familiar orbital approximation the equilibrium electron distribution is determined by the optimum shapes of the occupied MO resulting from the relevant SCF approach, i.e., from the familiar HF or KS equations of computational quantum mechanics. In this MO subspace the superposition principle of quantum mechanics then generates the direct (through-space) communication network between AO participating in the bond-formation process. Its consecutive (cascade) combinations subsequently give rise to the relevant indirect probability scatterings, which are responsible for the through-bridge bonds in the molecule.

In OCT the molecule is treated as the information system involving elementary events of localizing electrons on AO, both in its input and output (see Fig. 1). In this description each AO constitutes both the “emitter” and “receiver” of the signal of assigning electrons in a molecule to these elementary basis functions of the separated atoms. Besides the direct communications between AO, i.e., a “dialogue” between basis functions, the given pair of orbitals also exhibits the indirect (cascade) communications involving remaining orbitals acting as intermediaries in this “gossip” exchange of information (Nalewajski 2010a, 2010d, e, 2011b, c, 2012a, 2012; Nalewajski and Gurdek 2011, 2012). The direct communication network of the system chemical bonds is determined by the conditional probabilities \( {\mathbf{P}}({\varvec{\chi}}^{\prime } |{\varvec{\chi}} ) = {\mathbf{P}}(\varvec{a} \to \varvec{b}) = \{ P_{i \to j} \} \) of observing alternative AO outputs \( \varvec{b} = {\varvec{\chi}}^{\prime } = \{ \chi_{j} \} \) for the given AO inputs \( \varvec{a} =\varvec{\chi}= \{ \chi_{i} \} \). In SCF LCAO MO theory they result from the superposition principle of quantum mechanics (Dirac 1958) supplemented by the projection onto the subspace of the occupied MO. They are determined by the squares of the associated scattering amplitudes \( \{ A_{i \to j} \} \),

which are proportional to the corresponding elements of the system density matrix \( {\varvec{\gamma}}^{\text{AO}} \) (Appendix 2). For the given input probability \( \varvec{P}(\varvec{a}) = \varvec{p} \) the scattered signals from all inputs generate the resultant output probabilities \( \varvec{P}(\varvec{b}) = \varvec{q} \), with the molecular input giving rise to the same distribution in the channel output of such a “stationary” probability propagation:

This molecular channel can be probed using different input signals, some specifically prepared to extract desired properties of the chemical bond (Nalewajski et al. 2011). These descriptors can be global in character, when they describe the molecule as a whole, or they can refer to localized bonds in and between molecular fragments (Nalewajski 2006a, 2010a, 2012a; Nalewajski et al. 2011). Both the internal and external bonds of molecular pieces can be determined in this way. The molecular communication system can be applied in the full AO resolution, or it can be used in alternative “reductions” (Nalewajski 2005b, 2006a, 2010a, 2012a), when some input and/or output orbital events are combined into groups defining less resolved level of molecular communications. The direct probability scattering networks can be also combined into information cascades (Fig. 2), involving a single or several AO intermediates, in order to generate relevant networks for the indirect communications between the basis functions of the SCF LCAO MO calculations. These indirect communications in molecules generate the so called bridge-contributions to the resultant bond orders (Nalewajski 2010d, e, 2011c, 2012a, c; Nalewajski and Gurdek 2011, 2012).

Information cascade for generating the indirect probability propagations between the representative pair of “terminal” AO (identified by an asterisk), the input orbital \( \chi_{i} \in {\varvec{\chi}} \) and the output orbital \( \chi_{j} \in {\varvec{\chi}}^{\prime } \), via the single AO intermediate (“bridge”) \( [\chi_{k} \ne (\chi_{i} ,\chi_{j} )] \in {\varvec{\chi}}_{1} \). Therefore, the broken communication connections in the diagram are excluded from the set of the admissible bridge communications

The conditional probabilities between AO basis functions in the occupied subspace of MO, \( {\mathbf{P}}({\varvec{\chi}}^{\prime } |{\varvec{\chi}} ) = \{ P(\chi_{j} |\chi_{i} ) = P(\chi_{i} \wedge \chi_{j} )/p_{i} \equiv P(j|i)\} \) (Nalewajski 2009e, f, g, 2010a, c, 2011a, 2012a; Nalewajski et al. 2011), which result from the quantum-mechanical superposition principle (Dirac 1958), determine the proper communication network (see Appendix 1) for discussing the entropic origins of the chemical bond. Here, \( P(\chi_{i} \wedge \chi_{j} ) \) stands for the joint molecular probability of simultaneously observing orbitals \( \chi_{i} \) and \( \chi_{j} \) in the “input” and “output” of the underlying orbital communication system, respectively, while \( p_{i} \) is the probability of the electron occupying the ith AO in the molecule (Nalewajski 2006a, 2010a, 2012a) (see also Appendix 1). The complementary information-scattering (covalent) and information-flow (ionic) descriptors of the molecular information system, represented by the channel conditional entropy \( {\fancyscript{S}} \) and mutual information \( {\fancyscript{I}} \), respectively, then generate the IT multiplicities of these two bond components, which together give rise to the overall IT bond order \( {\fancyscript{N}} = {\fancyscript{I}} + {\fancyscript{S}} \) (Nalewajski 2000, 2004b, c, d, 2005a, b, c, 2006a, c, d, e, f, g, 2007, 2008b, c, d, 2009a, b, c, d, e, f, g, 2010a, c, 2011a, 2012a, b; Nalewajski and Jug 2002; Nalewajski et al. 2011). The amplitudes of the conditional probabilities exhibit the interference property typical of electrons (Nalewajski 2011c). An example of such an information channel, determined by the doubly occupied bonding MO originating from an interaction of two (singly occupied) AO, is shown in Fig. 1.
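The construction of the AO channel from the density matrix can be illustrated numerically. The following is a minimal sketch, not the author's implementation: it assumes a closed-shell system with orthonormal AO and takes the normalization \( P(j|i) = \gamma_{i,j} \gamma_{j,i} /(2\gamma_{i,i} ) \), which is consistent with the stated proportionality to the squared density-matrix elements but whose prefactor is an assumption here (the text defers the details to Appendix 2). The function names and the test value P = 0.7 are hypothetical.

```python
import math


def cbo_two_ao(P):
    """CBO matrix of one doubly occupied bonding MO, phi = sqrt(P)*A + sqrt(Q)*B.

    Assumes orthonormal AO and 0 < P < 1 (hypothetical model input).
    """
    Q = 1.0 - P
    c = (math.sqrt(P), math.sqrt(Q))
    return [[2.0 * c[i] * c[j] for j in range(2)] for i in range(2)]


def conditional_probabilities(gamma):
    """P(j|i) = gamma_ij * gamma_ji / (2 * gamma_ii).

    The 1/(2*gamma_ii) prefactor is an assumed closed-shell normalization;
    it makes every row sum to one when gamma is idempotent (gamma^2 = 2*gamma).
    """
    n = len(gamma)
    return [
        [gamma[i][j] * gamma[j][i] / (2.0 * gamma[i][i]) for j in range(n)]
        for i in range(n)
    ]


def propagate(p, channel):
    """Output probabilities q_j = sum_i p_i * P(j|i)."""
    n = len(p)
    return [sum(p[i] * channel[i][j] for i in range(n)) for j in range(n)]


P = 0.7
gamma = cbo_two_ao(P)
channel = conditional_probabilities(gamma)
p = [gamma[i][i] / 2.0 for i in range(2)]  # molecular AO probabilities (P, Q)
q = propagate(p, channel)

print("channel rows:", channel)
print("input p:", p, "output q:", q)
```

In this model both rows of the channel equal (P, Q), so the molecular input is reproduced in the output, which is the “stationary” propagation referred to above.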

The global IT descriptors of the whole AO network for the direct communications in a molecule (see Appendix 1) involve the conditional entropy \( S\left( {\varvec{P}\left( \varvec{b} \right)|\varvec{P}\left( \varvec{a} \right)} \right) \equiv S(\varvec{q}|\varvec{p}) \equiv {\fancyscript{S}} \), which measures the channel average communication noise, and the mutual information relative to the promolecular input signal \( {\varvec{p}}^{0} \), \( I( {\varvec{P}}^{0} ( {\varvec{a}}):{\varvec{P}} ( {\varvec{b}} )) \equiv I\left( {{\varvec{p}}^{0} :{\varvec{q}}} \right) \equiv {\fancyscript{I}} \), which reflects the network information flow. In OCT they signify the IT bond covalency and ionicity, respectively, and generate the associated overall IT index of the bond multiplicity in the molecular system under consideration: \( {\fancyscript{N}} = {\fancyscript{S}} + {\fancyscript{I}} \). An appropriate probing of this communication network allows one to extract the associated indices of the localized bonds, between pairs of AIM, and the internal and external bond characteristics of molecular fragments (Nalewajski 2010a, 2012a; Nalewajski et al. 2011). Alternative channel reductions can be used to eliminate the subsystem internal bond multiplicities, in order to extract the complementary external descriptors of the fragment bonds with its molecular environment (Nalewajski 2005b, 2006a). This communication approach also allows one to index the molecular couplings between internal bonds in different subsystems (Nalewajski 2010c, 2011a; Nalewajski et al. 2011).

Thus, in the global description the molecular AO channel is probed by the molecular input signal \( {\varvec{p}} = \left\{ {p_{i} } \right\} \), when one extracts the purely molecular (covalency) descriptor, and by the promolecular signal \( \varvec{p}^{0} = \left\{ {p_{i}^{0} } \right\} \), when one is interested in the IT “displacement” quantity, relative to this reference of the molecularly placed, initially nonbonded atoms (see Fig. 1). Direct information scattering \( P(j|i) \) between the given pair of AO originating from different atoms, \( \chi_{i} \in {\text{A}} \) and \( \chi_{j} \in {\text{B}} \), is then proportional to the square of the coupling element \( \gamma_{j,i} = \gamma_{i,j} \) of the Charge and Bond-Order (CBO) density matrix \( {\varvec{\gamma}}^{\text{AO}} \) (Appendix 2). Therefore, this probability is also proportional to the associated contribution \( {\fancyscript{M}}_{i,j} = \gamma_{i,j}^{2} \) of these two AO to the Wiberg index (Wiberg 1968) measuring the “multiplicity” of the direct chemical bond A–B:

As an illustration consider the simplest 2-AO model of the chemical bond, resulting from the interaction between the given pair of the (orthonormal) AO, A(r) ∈ A and B(r) ∈ B, with each atom contributing a single electron to form the chemical bond in the ground molecular state determined by the doubly occupied bonding MO.

Its communication system, shown in Fig. 1, conserves the overall (single) IT bond order for all admissible bond polarizations measured by the probability parameter P:

However, as explicitly shown in Fig. 3, the IT covalent/ionic composition of such a model chemical bond changes with the MO polarization P, so that these two bond components compete with one another. In accordance with an accepted chemical intuition the symmetrical bond, for \( P = Q = {\raise.5ex\hbox{$\scriptstyle 1$}\kern-.1em/ \kern-.15em\lower.25ex\hbox{$\scriptstyle 2$} } \), gives rise to the maximum bond covalency, \( {\fancyscript{S}}\left( {P = \raise.5ex\hbox{$\scriptstyle 1$}\kern-.1em/ \kern-.15em\lower.25ex\hbox{$\scriptstyle 2$} } \right) = 1 \) bit, e.g., in the σ bond of H2 or the π bond of ethylene, while the ion-pair configurations, for P = (0, 1), signify the purely ionic bond: \( {\fancyscript{I}}(P) = 1 \) bit.

Variations of the IT-covalent \( {\fancyscript{S}}\left( P \right) \) and IT-ionic \( {\fancyscript{I}}\left( P \right) \) components of the chemical bond in the 2-AO model with changing MO polarization P, and the conservation of the overall bond-order \( {\fancyscript{N}}\left( P \right) \) = 1 bit

In this nonsymmetrical binary channel the molecular input signal \( \varvec{p} = (P,Q = 1 - P) \) probes the model bond covalency, while the promolecular input \( \varvec{p}^{0} = (1/2, 1/2) \) determines the model IT ionicity descriptor. It should be stressed, however, that this system of indexing, intended for the fixed molecular (equilibrium) geometry, is insensitive to changes in the actual AO coupling strength. For an appropriate modification, which correctly predicts the monotonically decreasing bond order with increasing bond length, see (Nalewajski in press b).
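The competition between the two bond components of the 2-AO model, and the conservation of their sum, can be checked numerically. The sketch below is illustrative only and rests on stated assumptions: both channel rows equal (P, Q); the covalency is the conditional entropy for the molecular input; and the ionicity is evaluated as \( \sum_{i,j} P(i,j)\log_{2} [P(i,j)/(p_{i}^{0} q_{j} )] \) with the joint probabilities \( P(i,j) = p_{i} P(j|i) \). This form of the mutual information is an assumption chosen because it reproduces the limits quoted above (1 bit of covalency at P = 1/2, 1 bit of ionicity at P = 0 or 1). The function name `bond_components` is hypothetical.

```python
import math


def bond_components(P):
    """Return (S, I, N) in bits for the 2-AO model with MO polarization P.

    Assumed channel: both rows equal (P, Q), i.e. P(j|i) does not depend on i.
    Terms with zero probability are skipped (0 * log 0 is taken as 0).
    """
    Q = 1.0 - P
    channel = [[P, Q], [P, Q]]
    p = [P, Q]        # molecular input signal
    p0 = [0.5, 0.5]   # promolecular input signal
    q = [sum(p[i] * channel[i][j] for i in range(2)) for j in range(2)]

    covalency = 0.0   # conditional entropy S(q|p): average channel noise
    ionicity = 0.0    # mutual information relative to p0: information flow
    for i in range(2):
        for j in range(2):
            joint = p[i] * channel[i][j]
            if joint > 0.0:
                covalency -= joint * math.log2(channel[i][j])
                ionicity += joint * math.log2(joint / (p0[i] * q[j]))
    return covalency, ionicity, covalency + ionicity


for P in (0.0, 0.1, 0.25, 0.5, 0.9, 1.0):
    S, I, N = bond_components(P)
    print(f"P = {P:4.2f}  S = {S:.4f}  I = {I:.4f}  N = {N:.4f}")
```

Under these assumptions the covalency reduces to the binary entropy of P and the ionicity to its complement to 1 bit, so the overall index stays at 1 bit for every polarization.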

The average entropy/information descriptors of the localized bond A–B in polyatomic systems were similarly shown (Nalewajski et al. 2011) to adequately approximate the associated Wiberg bond orders,

at the same time providing a resolution of this resultant index into its covalent (\( {\fancyscript{S}}_{\text{A,B}} \)) and ionic (\( {\fancyscript{I}}_{\text{A,B}} \)) components.

In MO theory the direct chemical coupling between “terminal” atomic orbitals on different centers is strongly influenced by their mutual interaction strength and overlap, which together condition the associated energy of their bonding combination (in the constructive AO interference), relative to the initial AO energies. This direct textbook mechanism, e.g., of the π bond between the nearest neighbors (ortho carbons) in the benzene ring, is realized “through space”, without any interference of the remaining AO. It generally implies an accumulation of electrons between the atomic nuclei, the so-called bond charge, which may exhibit different polarizations up to the full electron transfer, in accordance with the electronegativity/hardness differences between the two atoms. In MO theory the chemical multiplicity of such a direct interaction is generally adequately represented by the Wiberg index \( {\fancyscript{M}}_{\text{A,B}} \).

For more distant atoms, e.g., in the cross-ring (meta and para) π interactions in benzene, these conditions for an effective mixing of AO into the bonding MO combination are not fulfilled. The natural question then arises: are there any additional possibilities for bonding such more distant neighbors in an atomic chain or ring? An example of such a controversy has arisen in explaining the central-bond problem in the small [1.1.1] propellane, for which neither the density nor the entropy-deficiency/displacement diagrams confirm the presence of direct chemical bonding, in contrast to the larger [2.2.2] system, where the full central bond has been diagnosed (Nalewajski 2006a, 2010a, 2012a). We also recall that, to justify the existence of “some” direct bonding between atoms without the bond-charge feature, the alternative (correlational) “charge-shift” mechanism has been proposed by Shaik et al. within the generalized Valence-Bond (VB) description. It is realized via charge fluctuations resulting from a strong resonance between the bond covalent and ionic VB structures.

However, since the HF and KS theories generally describe the bond patterns in molecules quite well, one should search for additional long-range bonding capabilities present even at this lowest, one-determinantal description of the molecular electronic structure, in terms of the occupied subspace of MO in the ground-state electron configuration. One would expect this level of theory, which has been shown to be perfectly capable of tackling larger propellanes, to be adequate for smaller systems as well. It has indeed been demonstrated (Nalewajski 2010d, e, 2011b, c, 2012c; Nalewajski and Gurdek 2011, 2012) that in OCT there are additional, indirect sources of entropic multiplicities of chemical interactions, which explain the presence of non-vanishing bond orders between more distant AIM.

More specifically, within the OCT description all communications between AO originating from different centers ultimately generate the associated bond-order contributions. The direct components result from the orbital mutual probability propagation (the orbital “dialogue”), which does not involve any orbital intermediaries (Fig. 1). However, the transfer of information can also be realized indirectly, as a “gossip” spread via the remaining orbitals, e.g., in the single-bridge cascade of Fig. 2, obtained from the consecutive combination of the two direct channels. Examples of such “combined” communications in the π system of benzene are shown in Fig. 4. The bond multiplicity \( {\fancyscript{M}}_{{{\text{A,B}}|bridges}} \) of such multicentre “bonds” in MO theory can be measured by the sum of the relevant products of the Wiberg indices of all intermediate interactions in the occupied subspace of MO (Nalewajski 2010d, e, 2011c, 2012c; Nalewajski and Gurdek 2011, 2012). Therefore, the larger the bridge, the less information is transferred in this indirect manner, and the weaker the through-bridge interaction. The latter is most effective when realized through the real chemical bridges, i.e., the mutually (directly) bonded pairs of AIM.

Together the direct \( ({\fancyscript{M}}_{\text{A,B}} ) \) and indirect \( ({\fancyscript{M}}_{{{\text{A,B}}|bridges}} ) \) components determine the resultant bond order in this generalized perspective on communicational multiplicities of chemical bonds:

As an illustration we report below the relevant bond-order data (from Hückel theory) for the π interactions in benzene, in the consecutive atom/orbital numbering of Fig. 4,

It follows from these results that the artificial distinction of the meta π interaction as completely nonbonding does not hold in this generalized OCT perspective. The strongest “half” bond, of predominantly direct origin, is indeed detected for the nearest (ortho) neighbors in the ring, while both cross-ring interactions are predicted to give rise to similar, weaker resultant interactions: the meta bond order is exclusively of bridge origin, while the para bond exhibits comparable direct and indirect contributions.
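The direct part of these benzene data can be reproduced from the textbook Hückel MO of the ring. The sketch below is an illustration under stated assumptions, not a reconstruction of the reported table: it uses one orthonormal 2p AO per carbon, the analytic Hückel MO \( \psi_{k} (n) = 6^{ - 1/2} \exp (2\pi ikn/6) \) with k = 0, ±1 doubly occupied, and it sums only bridges consisting of a single intermediate AO, taking each bridge term as the product of the two Wiberg indices along it. Longer bridges, which the full treatment includes, are deliberately omitted, so the bridge sums printed here are partial. The name `single_bridge` is hypothetical.

```python
import cmath
import math

N_SITES = 6
OCCUPIED_K = (0, 1, -1)  # three lowest Hueckel MO of the ring, doubly occupied


def cbo_element(m, n):
    """gamma_mn = 2 * sum_k psi_k(m) * conj(psi_k(n)) over the occupied MO."""
    total = sum(
        cmath.exp(2j * math.pi * k * (m - n) / N_SITES) for k in OCCUPIED_K
    )
    return (2.0 / N_SITES) * total.real


gamma = [[cbo_element(m, n) for n in range(N_SITES)] for m in range(N_SITES)]

# One pi AO per carbon, so the direct Wiberg index is simply gamma_mn squared.
wiberg = [[gamma[m][n] ** 2 for n in range(N_SITES)] for m in range(N_SITES)]


def single_bridge(i, j):
    """Sum of Wiberg-index products over all single-AO bridges i -> k -> j.

    Assumption: only one intermediate orbital is considered; multi-AO
    bridges are not included in this partial sum.
    """
    return sum(
        wiberg[i][k] * wiberg[k][j] for k in range(N_SITES) if k not in (i, j)
    )


for label, j in (("ortho", 1), ("meta", 2), ("para", 3)):
    print(
        f"{label:5s}  gamma = {gamma[0][j]: .4f}"
        f"  direct = {wiberg[0][j]:.4f}"
        f"  single-bridge = {single_bridge(0, j):.4f}"
    )
```

The Hückel matrix elements are 2/3, 0 and −1/3 for the ortho, meta and para pairs, giving direct indices of 4/9, 0 and 1/9. At the single-bridge level only the meta pair acquires an indirect contribution, in line with the observation that its bond order is exclusively of bridge origin; the indirect para contribution requires longer bridges than this sketch includes.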

Theoretical analysis of illustrative polymer chains (Nalewajski 2012c; Nalewajski and Gurdek 2012) indicates that this indirect mechanism effectively extends the range of chemical interactions to about three-atom bridges. It has also been demonstrated that the source of these additional interactions lies in the mutual dependencies between (nonorthogonal) bond projections of AO (Nalewajski and Gurdek 2011). An important conclusion from this analysis is that the given pair of (terminal) AO can still be chemically bonded even when their direct-coupling CBO matrix element vanishes, provided that these two basis functions directly couple to a chain of the mutually bonded orbital intermediates. This effectively extends the range of the bonding influence between AO, which is of paramount importance for polymers and for supramolecular, catalytic and biological systems.

Electron densities as information carriers

The electrons carry the information in molecular systems (Nalewajski 2006a, 2010a, 2012a). Several IT quantities (see Appendix 1) have provided sensitive probes into changes in the equilibrium particle distributions, relative to the promolecular reference, and generated information detectors of chemical bonds or devices monitoring the valence state of AIM. In orbital approximation the molecular electron density is given by the sum of MO contributions \( \varvec{\rho}^{\text{MO}} = \{ \rho_{\alpha } \} ,\rho = \sum\nolimits_{\alpha } \rho_{\alpha } \). This partition defines the associated MO-additive (add) component, \( A^{add} [\varvec{\rho}^{\text{MO}} ] = \sum\nolimits_{\alpha } A[\rho_{\alpha } ] \), of the density functional \( A[\rho ] \equiv A^{total} [\varvec{\rho}^{\text{MO}} ] \) attributed to the given physical property A, and hence also its MO-nonadditive (nadd) part at this resolution level (see Appendix 2):

Such MO partitioning of the Fisher information density \( f\left[ {{\rho};\varvec{r}} \right] \equiv f^{total} \left[ {\varvec{\rho}^{\text{MO}} ;\varvec{r}} \right] \) (Appendix 1),

has been shown (Nalewajski et al. 2005) to generate the key conditional probability used to define ELF (Becke and Edgecombe 1990).

In a search for the entropic origins of chemical bonds the AO resolution is required. It generates the AO-additive partitioning of the promolecular density. This partitioning of the intrinsic accuracy density \( f\left[ {\rho ;\varvec{r}} \right] \equiv f^{total} \left[ {\varvec{\Upomega}^{\text{AO}} ;\varvec{r}} \right] \) (Appendix 2) leads to a related CG criterion, which represents an effective tool for the bond localization (Nalewajski 2010a, b, 2012a, b; Nalewajski et al. 2010, 2012a). Thus, the AO-nonadditive component of the Fisher information density used to define the CG criterion, related to the atomic (promolecular) reference, reads:

It should be recalled at this point that the set of AO densities on constituent atoms {X} gives rise to densities of isolated atoms \( \{ \rho_{\text{X}}^{0} (\varvec{r}) = \sum\nolimits_{{i \in {\text{X}}}} \rho_{i} (\varvec{r})\} \), which by the Hohenberg–Kohn (HK) theorem of DFT (Hohenberg and Kohn 1964) uniquely identify the atomic external potentials (due to the atom's own nucleus), \( \{ v_{\text{X}} (\varvec{r}) = v_{\text{X}} [\rho_{\text{X}}^{0} ;\varvec{r}]\} \), and hence also the atomic Hamiltonians \( \{ {\text{H}}_{\text{X}} (N_{\text{X}}^{0} ,v_{\text{X}} )\} \), where \( N_{\text{X}}^{0} \) stands for the overall number of electrons in the isolated atom X. Since atomic positions \( \varvec{R} = \{ \varvec{R}_{\text{X}} \} \) are parametrically fixed in the Born–Oppenheimer (BO) approximation, this information suffices to uniquely identify the molecular external potential as well, \( v(\varvec{r}) = \sum\nolimits_{{\text{X}}} {v_{{\text{X}}} (\varvec{r} - \varvec{R}_{{\text{X}}} )} \), and hence also the molecular Hamiltonian \( {\text{H}}(N,v) \), where \( N = \sum\nolimits_{{\text{X}}} {N_{{\text{X}}} ^{0} \equiv N^{0} } \), which generates the equilibrium electron distribution \( \rho (\varvec{r}) \) of the whole system. Therefore, in the adiabatic approximation there is also a one-to-one mapping from the densities \( \varvec{\rho}^{\text{AO}} \) of the isolated atoms to the molecular density, \( \rho (\varvec{r}) = \rho [\varvec{\rho}^{\text{AO}} ;\varvec{r}] \). Hence,

so that the definition of the AO-nonadditive component of Eq. (10) can be also interpreted in the spirit of the multi-functional of Eq. (9):

The negative values of \( f^{nadd} [\varvec{\rho}^{\text{AO}} ;\varvec{r}] \) reflect an extra delocalization of electrons via the system chemical bonds (Nalewajski 2008a, 2010a, b, 2012a, b; Nalewajski et al. 2010, 2012a). Therefore, the valence basins of its negative values can serve as sensitive detectors of the spatial localization of the direct chemical bonds in a molecule. For two interacting AO this is the case when the gradient of one orbital exhibits a negative projection on the direction of the gradient of the other orbital, which explains the name of the CG probe (Nalewajski 2008a). This criterion localizes chemical bonds, the regions of an increased electron delocalization, in a manner analogous to the way the inverse of the negative \( f^{nadd} [\varvec{\rho}^{\text{MO}} ;\varvec{r}] \) indexes the localization of electrons in the ELF approach (Nalewajski et al. 2005; Becke and Edgecombe 1990).
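The sign criterion for two interacting AO can be checked on a simple model. The sketch below assumes two normalized 1s Slater orbitals placed on the bond axis (exponent and positions are illustrative values, not taken from the article) and evaluates only the product of their gradients on that axis. The full CG density also carries weighting factors that are not reproduced here, so the code illustrates the sign pattern only.

```python
import math

def slater_1s(z, center, zeta=1.0):
    """Value on the bond (z) axis of a normalized 1s Slater orbital."""
    return math.sqrt(zeta ** 3 / math.pi) * math.exp(-zeta * abs(z - center))

def slater_1s_gradient(z, center, zeta=1.0):
    """z-component of the orbital gradient on the bond axis.

    The orbital is spherically symmetric, so on the axis through its
    center the gradient has no other component.
    """
    if z == center:
        return 0.0  # nuclear cusp: derivative undefined, 0 used as placeholder
    direction = 1.0 if z > center else -1.0
    return -zeta * direction * slater_1s(z, center, zeta)

def gradient_product(z, z_a=-0.7, z_b=0.7, zeta=1.0):
    """Scalar product of the two AO gradients at point z on the bond axis."""
    return slater_1s_gradient(z, z_a, zeta) * slater_1s_gradient(z, z_b, zeta)

if __name__ == "__main__":
    for z in (-2.0, -1.0, 0.0, 1.0, 2.0):
        region = "between nuclei" if -0.7 < z < 0.7 else "outside"
        print(f"z = {z:+.1f} ({region}): grad a . grad b = {gradient_product(z):+.5f}")
```

Between the two centers the gradients point in opposite directions and the product is negative; beyond either nucleus they point the same way and the product is positive.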

A transformation of the initial (promolecular) distribution of electrons \( \rho^{0} \) into the final molecular equilibrium density ρ, as reflected by the familiar density-difference function (see Appendix 2), \( \Delta \rho (\varvec{r}) = \rho (\varvec{r}) - \rho^{0} (\varvec{r}) \), also called the deformation density, can be alternatively probed using several local IT probes (Nalewajski 2006a, 2010a, 2012a) of Appendix 1. For example, the missing information functional,

and its density Δs(r) = ρ(r)I(r) can be used to monitor changes in the information content due to chemical bonds. It measures the so-called cross (relative) entropy referenced to the promolecular state of nonbonded atoms. Alternatively, the corresponding change in the Shannon entropy (see Appendix 1),

and its density Δh(r) can be used to extract the displacement descriptors of the system chemical bonds (Nalewajski 2006a, 2010a, 2012a).
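The three local probes can be compared on a toy model. The sketch below is an illustration under stated assumptions, not the calculation behind the figures: it uses a one-dimensional "molecule" built from two Gaussian atomic densities, takes the surprisal as I = ln(ρ/ρ⁰) so that Δs = ρ ln(ρ/ρ⁰), and takes Δh = −ρ ln ρ + ρ⁰ ln ρ⁰. All function names and parameter values are hypothetical.

```python
import math

def gaussian(x, center, sigma):
    """Normalized one-dimensional Gaussian density (one electron)."""
    return math.exp(-(x - center) ** 2 / (2.0 * sigma ** 2)) / (sigma * math.sqrt(2.0 * math.pi))

def model_densities(half_distance=1.5, sigma=1.0, half_width=10.0, n=2001):
    """Promolecular and 'molecular' densities of a two-electron model.

    rho0: sum of the two free-atom densities.
    rho : two electrons in the normalized sum of the atomic amplitudes.
    """
    h = 2.0 * half_width / (n - 1)
    xs = [-half_width + i * h for i in range(n)]
    c = half_distance
    rho0 = [gaussian(x, -c, sigma) + gaussian(x, c, sigma) for x in xs]
    amp = [math.sqrt(gaussian(x, -c, sigma)) + math.sqrt(gaussian(x, c, sigma)) for x in xs]
    norm = sum(a * a for a in amp) * h
    rho = [2.0 * a * a / norm for a in amp]
    return xs, h, rho0, rho

def displacement_maps(rho0, rho):
    """Local density difference, entropy deficiency and entropy displacement."""
    d_rho = [r - r0 for r, r0 in zip(rho, rho0)]
    d_s = [r * math.log(r / r0) for r, r0 in zip(rho, rho0)]
    d_h = [-r * math.log(r) + r0 * math.log(r0) for r, r0 in zip(rho, rho0)]
    return d_rho, d_s, d_h

if __name__ == "__main__":
    xs, h, rho0, rho = model_densities()
    d_rho, d_s, d_h = displacement_maps(rho0, rho)
    print("integrated entropy deficiency:", sum(d_s) * h)
    print("integrated entropy displacement:", sum(d_h) * h)
```

The integrated entropy deficiency is non-negative whenever both densities carry the same number of electrons. The local sign of Δh depends on the density level, since −ρ ln ρ increases with ρ only below ρ = 1/e.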

The Δρ(r) and Δs(r) probes were shown to be practically equivalent in monitoring the effects of chemical bonds and of the associated AIM promotion. Indeed, these two displacement maps so strongly resemble one another that they are hardly distinguishable. This confirms a close relation between the local density and entropy-deficiency relaxation patterns, thus attributing to the former the complementary IT interpretation of the latter. This strong resemblance between these molecular diagrams also indicates that the local inflow of electrons increases the cross-entropy, while the outflow of electrons gives rise to a diminished level of this relative uncertainty content of the electron density in molecules. The density displacement and the missing information distributions can be thus viewed as equivalent probes of the system direct chemical bonds.
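A short expansion suggests why the two maps nearly coincide. It assumes that the surprisal is \( I = \ln (\rho /\rho^{0} ) \) and that the density displacement is small on the scale of the promolecular density, \( |\Delta \rho | \ll \rho^{0} \); both assumptions are made here for the purpose of the sketch.

```latex
\Delta s = \rho \ln\frac{\rho}{\rho^{0}}
         = (\rho^{0} + \Delta\rho)\,\ln\!\left(1 + \frac{\Delta\rho}{\rho^{0}}\right)
         = \Delta\rho + \frac{(\Delta\rho)^{2}}{2\rho^{0}} + O\!\left((\Delta\rho)^{3}\right).
```

To first order the entropy-deficiency density thus equals the density difference itself, so that an inflow of electrons (Δρ > 0) raises Δs and an outflow lowers it.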

In Fig. 5 we compare the illustrative Δρ(r) and Δh(r) plots for a representative set of linear molecules. The main feature of Δh diagrams, an increase in the electron uncertainty in the bonding region between the two nuclei, is due to the inflow of electrons to this region. This manifests the bond-covalency phenomenon, attributed to the electron-sharing effect and a delocalization of the bonding electrons, now moving effectively in the field of both nuclei. In all these maps one detects a similar nodal structure. The nonbonding regions are seen to exhibit a decreased uncertainty, either due to a transfer of the electron density from this area to the vicinity of the two nuclei and the region between them, or as a result of the orbital hybridization.

Fig. 5 A comparison between (non-equidistant) contour diagrams of the density-difference Δρ(r) (first column) and entropy-difference Δh(r) (second column) functions for representative linear molecules. The corresponding profiles of Δh(r), for the cuts along the bond axis, are shown in the third column of the figure

Therefore, the molecular entropy difference function also displays all typical features in the reconstruction of electron distribution in a molecule, relative to free atoms. Its diagrams thus provide an alternative information tool for diagnosing the presence of chemical bonds through displacements in the entropy (uncertainty) content of the molecular electron densities.

As an additional illustration we present the combined density-difference, entropy-displacement, and information-distance analysis of the central C′–C′ bond in small propellanes. The main objective of this study was to examine the effect of a successive increase in the size of the carbon bridges in the series of the [1.1.1], [2.1.1], [2.2.1], and [2.2.2] systems shown in Fig. 6. Figure 7 reports the contour maps of the density difference function Δρ(r), the missing information density Δs(r), and of the local entropy-displacement Δh(r), for the planes of sections displayed in Fig. 6. The relevant ground-state densities have been generated using the DFT-LDA calculations in the extended (DZVP) basis set.

Fig. 7 A comparison between the equidistant-contour maps of the density-difference function Δρ(r) (first column), the information-distance density Δs(r) (second column), and the entropy-displacement density Δh(r) (third column), for the four propellanes of Fig. 6

The density difference plots show that in the small [1.1.1] and [2.1.1] propellanes there is on average a depletion of the electron density between the bridgehead carbon atoms, relative to the atomic promolecule, while the larger [2.2.1] and [2.2.2] systems exhibit a net density buildup in this region. A similar conclusion follows from the entropy-displacement and entropy-deficiency plots of the figure. The two entropic maps are again seen to be qualitatively similar to the corresponding density-difference plots. This resemblance is seen to be particularly strong between the Δρ(r) and Δs(r) diagrams shown in the first two columns of the figure.

Therefore, all these bond detectors predict an absence of the direct chemical bonding in the two smaller propellanes and its full presence in the larger systems. This qualitatively agrees with predictions from the simple models of the localized chemical bonds in the smallest and largest of these propellanes, which are summarized in Fig. 8. Indeed, in the minimum basis set framework the bond structure in these two systems can be qualitatively understood in terms of the localized MO resulting from interactions between the directed orbitals on neighboring atoms and the non-bonding electrons occupying the non-overlapping atomic hybrids.

In the smallest [1.1.1] system the nearly tetrahedral (\( sp^{3} \)) hybridization on both bridgehead and bridging carbons is required to form the chemical bonds of the three carbon bridges and to accommodate two hydrogens on each of the bridge carbons. Thus three \( sp^{3} \) hybrids on each of the bridgehead atoms are used to form the chemical bonds with the bridge carbons and the fourth hybrid, directed away from the central-bond region, remains nonbonding and singly occupied.

In the largest [2.2.2] propellane the two central carbons acquire a nearly trigonal (\( sp^{2} \)) hybridization to form bonds with their bridge neighbors. Each is thus left with a single \( 2p_{\sigma } \) orbital directed along the central-bond axis, which is not used in this hybridization scheme and is therefore available to form a regular σ bond, the through-space component of the overall multiplicity of the central C′–C′ bond. This explains the missing direct interaction in the smaller (diradical) [1.1.1] propellane and its full presence in the larger [2.2.2] system.

Use of ELF/CG densities as localization/delocalization probes

The nonadditive Fisher information densities in MO [Eq. (9)] and AO [Eqs. (10, 12)] resolutions have also been shown to provide sensitive tools for localizing electrons and chemical bonds in molecular systems, through the ELF (or IT-ELF) (Nalewajski 2010a, 2012a; Nalewajski et al. 2005; Becke and Edgecombe 1990; Silvi and Savin 1994; Savin et al. 1997) and CG (Nalewajski 2008a, 2010b, 2012b; Nalewajski et al. 2010, 2012a) concepts, respectively. The electron localization property of the former, proportional to the inverse of the square (ELF) or just the simple inverse (IT-ELF) of the negative \( f^{nadd} [\varvec{\rho}^{\text{MO}} ;\varvec{r}] \) (Nalewajski et al. 2005; Becke and Edgecombe 1990), is demonstrated in Fig. 9. It reports an application of these tools in a study of the central bond in the [1.1.1] and [2.2.2] propellanes of the preceding section. This analysis again indicates an absence of the direct bond in the smaller system and its full presence in the larger propellane.

Fig. 9 Plots of ELF (first column) and IT-ELF (second column) for the [1.1.1] (top row) and [2.2.2] (bottom row) propellanes of Fig. 6, on the indicated planes of section

The density \( f^{nadd} [\varvec{\rho}^{\text{AO}} ;\varvec{r}] \) of the nonadditive Fisher information in AO resolution is shown in Figs. 10, 11, 12, 13. It is seen to represent an efficient CG tool for the bond localization, with the valence basins of its negative values, signifying an increased electron delocalization, now identifying the bonding regions in molecules (Nalewajski 2010a, b, 2012a, b; Nalewajski et al. 2010, 2012a). Accordingly, the areas of its positive values identify the nonbonding regions of the molecule. They represent a relative contraction/localization of electrons as a result of the AIM polarization/hybridization in their valence states, due to the presence of the bond partners in the molecule.

The same as in Fig. 10 for N2

In Fig. 13 this CG analysis has been employed to study the central bond problem in propellanes. Each row in the figure corresponds to the specified molecular system, with the left diagram displaying the contour map in the section perpendicular to the central bond, at its midpoint, and the right diagram corresponding to the bond section containing the bridge carbon. This independent analysis generally confirms our previous conclusions of the practical absence of the direct C′–C′ bond in the smallest propellane, and its full presence in the largest compound. However, this transition is now seen to be less abrupt, since even in the [1.1.1] system one detects a small central bonding region, which gradually increases with successive bridge enlargements. The figure also demonstrates the efficiency of the CG criterion in localizing the remaining C–C and C–H bonds.

It follows from the virial theorem analysis of the diatomic BO potential that changes in the bond energy with the internuclear distance can be uniquely partitioned into the associated displacements of its kinetic and potential components. In this overall perspective the electronic kinetic energy drives the atomic approach only at an early stage of the bond-formation process, while at the equilibrium separation between the nuclei it constitutes a destabilizing factor. Indeed, it is the potential energy of electrons which is globally responsible for the chemical bonding at this stage. In other words, the overall contraction (promotion) of atomic densities in a molecule, which increases the kinetic energy relative to the separated-atom limit, dominates the truly bonding kinetic energy contribution reflected by the nonadditive Fisher information. This is why most of the physical interpretations of the origins of the direct chemical bond emphasize the potential component, e.g., a stabilization of the diatomic system due to an attraction of the screened nuclei to the shared “bond charge” and the contraction of atomic distributions in the molecular external potential. In this interpretation the kinetic energy is regarded only as a prior “catalyst” of the bond formation, at an earlier stage of the atomic approach.
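The virial partitioning described above can be made concrete with the diatomic virial theorem, ΔT(R) = −ΔE(R) − R dΔE/dR and ΔV(R) = 2ΔE(R) + R dΔE/dR. The sketch below is only an illustration: it assumes a Morse curve as a stand-in for the BO potential, and the parameters (atomic units, roughly H2-like) are illustrative values, not data from the article.

```python
import math

# Illustrative Morse parameters in atomic units (assumed, roughly H2-like).
D_E, A_MORSE, R_E = 0.1745, 1.03, 1.40

def morse_energy(R, D=D_E, a=A_MORSE, R_e=R_E):
    """Bond energy relative to the separated atoms."""
    x = math.exp(-a * (R - R_e))
    return D * ((1.0 - x) ** 2 - 1.0)

def morse_slope(R, D=D_E, a=A_MORSE, R_e=R_E):
    """Analytic derivative dE/dR of the Morse curve."""
    x = math.exp(-a * (R - R_e))
    return 2.0 * D * a * x * (1.0 - x)

def virial_partition(R):
    """Kinetic and potential displacements from the diatomic virial theorem."""
    E, dE = morse_energy(R), morse_slope(R)
    delta_T = -E - R * dE
    delta_V = 2.0 * E + R * dE
    return delta_T, delta_V

if __name__ == "__main__":
    for R in (1.0, 1.4, 2.0, 3.0, 4.2, 6.0):
        dT, dV = virial_partition(R)
        print(f"R = {R:4.1f}  dE = {morse_energy(R):+.4f}  dT = {dT:+.4f}  dV = {dV:+.4f}")
```

For this model curve the kinetic-energy displacement is negative at large separations, where it lowers the energy, and positive at the equilibrium distance, where the whole stabilization comes from the potential term.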

In the CG approach a separation of the nonadditive component of the kinetic energy eliminates the atom-promotion effects, which effectively hide the bonding influence of this energy contribution, effective also at the equilibrium separation between the nuclei. As we have seen in this section, this partition is also vital for gaining an insight into the information origins of the chemical bond.

Nonclassical Shannon entropy

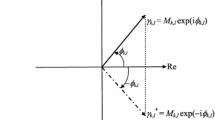

As already stressed in the “Introduction” section, there is a need for designing the quantum-generalized measures of the information content, applicable to the complex probability amplitudes (wave functions) encountered in quantum mechanics. The classical measures (summarized in Appendix 1) probe only the probability distributions, related to the modulus of the system state function, neglecting the information content of its phase (current) component. Elsewhere (Nalewajski 2008a) the Author has already examined a natural quantum extension of the classical Fisher information [Eq. (33)], which introduces the nonclassical term due to probability current [Eq. (63)] (Nalewajski 2008a, 2010a, 2012a).

One could expect that a similar generalization of the classical Shannon entropy S[p] [Eq. (34)] is required in quantum mechanics, to include the relevant phase/current contribution. In the remaining part of this overview we therefore discuss, for the first time, the entropy content of the phase feature of the molecular quantum states. We shall address this problem using the already known quantum contribution to the Fisher information, by adopting the natural requirement that the relations between the known classical information densities of the Fisher and Shannon measures should also hold for their quantum complements. The related issues of the phase current and information continuity are addressed in Appendix 3.

It follows from Eq. (63) that both the electron distribution p(r) and its current j(r) determine the resultant quantum Fisher information content I[p, j]. Its first, classical part I[p] explores the information contained in the probability distribution, while the nonclassical contribution I[j] measures the gradient information in the probability current, i.e., in the phase gradient of Eq. (61). Thus, the quantum Fisher functional I[ψ] symmetrically probes the gradient content of both aspects of the complex wave-function:

Hence, the classical Fisher information measures the “length” of the “reduced” gradient \( \overline{\nabla }p \) of the probability density, while the other contribution represents the corresponding “length” of the reduced vector of the probability current density \( \bar{\varvec{j}} \). In the one-electron system of Appendix 3 this generalized measure becomes identical with the classical functional I[p] only for the stationary quantum states characterized by the time-independent probability amplitude R(r) = φ(r) and the position-independent phase Φ(t) = −ωt:

These two information contributions in Eq. (63) can be alternatively expressed in terms of the real and imaginary parts of the gradient of the wave-function logarithm, \( \nabla \ln \psi = (\nabla \psi )/\psi \),

Therefore, these complementary components of the quantum Fisher information have a common interpretation in quantum mechanics, as the p-weighted averages of the gradient content of the real and imaginary parts of the logarithmic gradient of the system wave-function, thus indeed representing a natural (complex) generalization of the classical (real) gradient information measure [Eq. (31)].
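This division can be checked numerically on a one-dimensional Gaussian wave packet ψ(x) = R(x) exp(ikx). The sketch below rests on assumptions that are not restated in this section: units with ħ = m = 1, and the overall measure normalized as I[ψ] = 4∫|∇ψ|² dx, so that the classical and nonclassical terms are 4∫p (Re ∇ln ψ)² dx and 4∫p (Im ∇ln ψ)² dx with ∇ln ψ = ∇ln R + i∇Φ. The function names, grid and parameter values are hypothetical.

```python
import cmath
import math

def gaussian_packet(x, sigma, k):
    """psi(x) = R(x) exp(i k x) with a normalized Gaussian modulus R."""
    modulus = (2.0 * math.pi * sigma ** 2) ** -0.25 * math.exp(-x ** 2 / (4.0 * sigma ** 2))
    return modulus * cmath.exp(1j * k * x)

def fisher_terms(sigma=1.0, k=1.5, half_width=8.0, n=4001):
    """Return (total, classical, nonclassical) gradient information."""
    h = 2.0 * half_width / (n - 1)
    psi = [gaussian_packet(-half_width + i * h, sigma, k) for i in range(n)]
    total = classical = nonclassical = 0.0
    for i in range(1, n - 1):
        dpsi = (psi[i + 1] - psi[i - 1]) / (2.0 * h)   # central difference
        p = abs(psi[i]) ** 2                            # probability density
        log_grad = dpsi / psi[i]                        # grad ln(psi)
        total += 4.0 * abs(dpsi) ** 2 * h
        classical += 4.0 * p * log_grad.real ** 2 * h     # modulus (density) part
        nonclassical += 4.0 * p * log_grad.imag ** 2 * h  # phase (current) part
    return total, classical, nonclassical

if __name__ == "__main__":
    total, classical, nonclassical = fisher_terms()
    print("classical    :", classical)
    print("nonclassical :", nonclassical)
    print("total        :", total)
```

For this packet the classical term depends only on the width of the distribution (analytically 1/σ²) and the nonclassical term only on the phase gradient (analytically 4k² under the assumed normalization). Setting k = 0 removes the nonclassical contribution, as expected for a state with a position-independent phase.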

Let us examine some properties of the resultant density of this generalized Fisher information,

or the associated information density per electron:

The latter is generated by the squares of the local values of the related quantities per electron: the probability gradient \( (\widetilde{\nabla }p)^{2} \) and the current density \( (\tilde{\varvec{j}})^{2} \), which ultimately shape these classical and nonclassical information terms in quantum mechanics. This expression emphasizes the basic equivalence of the roles played by the probability density and its current in shaping the resultant value of the generalized Fisher information density.

We now search for a possible relation between the classical gradient density,

and the associated density per particle, \( \tilde{s}^{class.} = s^{class.} /p = - \ln p \), of the classical Shannon entropy of Eq. (34):

The logarithm of the probability density is seen to also shape the classical part of the Shannon entropy density per electron, \( \tilde{s}^{class.} \), giving rise to the gradient \( \nabla \tilde{s}^{class.} = - (\nabla p)/p \).

Hence, the two classical information densities per electron are indeed related:

with the classical Fisher measure \( \tilde{f}^{class.} = (\nabla \ln p)^{2} \) representing the squared gradient of the associated classical Shannon density: \( \tilde{s}^{class.} = - \ln p \).

This relation can now be used to introduce the unknown nonclassical part \( \tilde{s}^{nclass.} \) of the density per-electron of the generalized Shannon entropy in quantum mechanics,

which includes the nonclassical term \( \tilde{s}^{nclass.} [p,\Upphi ] = \tilde{s}^{nclass.} [p,\varvec{j}] \). This can be accomplished by postulating that the relation of Eq. (22) also holds for the nonclassical information contributions:

Therefore, the gradient of the nonclassical part of the generalized Shannon entropy is proportional to the probability current per electron, i.e., the velocity of the probability fluid, and hence, to an additive constant [see Eq. (60)],

Alternatively, the negative of the phase modulus or its multiplicity can be used as the nonclassical (quantum) complement of the classical entropy density, to conform to the maximum entropy level at the system ground state.

We thus conclude that, to a physically irrelevant constant, the phase function of Eq. (60) can be itself regarded as the nonclassical part of the Shannon information density per particle. It gives a transparent division of the quantum-generalized entropy density:

The density per particle of the generalized Shannon entropy \( \tilde{s}[\psi ] \) is seen to be divided into the familiar classical component \( \tilde{s}^{class.} [R] \), determined solely by the wave function modulus factor R(r), and the nonclassical supplement \( \tilde{s}^{nclass.} [\Upphi ] \), reflecting the phase function of the system wave function.
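The chain of reasoning leading to this division can be sketched as follows. The numerical factor assumes the normalization \( I[\psi ] = 4\int |\nabla \psi |^{2} d\varvec{r} \) with \( \psi = R\exp ({\text{i}}\Upphi ) \), which gives a nonclassical Fisher density per electron of \( 4(\nabla \Upphi )^{2} \); the relation alone fixes neither the sign nor the additive constant.

```latex
\tilde{f}^{class.} = (\nabla \tilde{s}^{class.})^{2} = (\nabla \ln p)^{2},
\qquad
\tilde{f}^{nclass.} = (\nabla \tilde{s}^{nclass.})^{2} = 4(\nabla \Phi)^{2}
\;\Longrightarrow\;
\nabla \tilde{s}^{nclass.} = \pm 2\nabla \Phi ,
\qquad
\tilde{s}^{nclass.} = \pm 2\Phi + \text{const.}
```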

Let us now examine the associated source (see Appendix 3) of the quantum Shannon entropy functional:

Again, due to the sourceless character of the probability distribution [Eq. (65)] the classical term generates the vanishing source contribution of the Shannon entropy and its density,

Hence, for the phase current J Φ of Eq. (78) one finds

where the phase source \( \sigma_{\Upphi } (\varvec{r}) \) is defined in Eq. (79). Alternatively, the current \( \varvec{J}_{\Upphi }^{'} \) [Eq. (80)] and the conjugated source \( \sigma_{\Upphi }^{'} \) [Eq. (81)] can be applied to define this rate of change of the quantum entropy function and its source

Conclusion

In this short overview of the information origins of chemical bonds and the quantum generalization of the classical information measures we have surveyed a wide range of IT concepts and techniques which can be used to probe various aspects of chemical interactions between atomic orbitals in molecules. Alternative measures of the classical information densities were used to explore the “promotional” and “interference” displacements of the bonded atoms in the molecular environment, relative to the system promolecular reference. Nonadditive parts of the gradient (Fisher) information density in the MO and AO resolutions, which define ELF and CG localization criteria, respectively, were shown to provide efficient and sensitive tools for locating electrons and bonds in molecular systems.

In OCT, which regards a molecule as the communication network in AO resolution, with AO constituting both the elementary “emitters” and “receivers” of the electron AO-assignment signals, the typical descriptors of the channel average “noise” (conditional entropy) and the information flow (mutual information) constitute adequate IT descriptors of the molecular overall entropy covalency and information ionicity, due to all chemical bonds in the system under consideration. By shaping the input signal in these networks and “reducing” (condensing) (Nalewajski 2006a) the resolution level of these AO probabilities, it is also possible to extract the internal and external IT descriptors of specific bonds in molecular fragments, e.g., the localized bond multiplicities of diatomic subsystems, as well as measures of the information coupling between bonds located in different parts of the molecule.
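These two descriptors can be illustrated for the two-orbital model. The sketch below is a minimal illustration under assumptions not restated in this section: a doubly occupied bonding MO of two orthonormal AO with probabilities P and Q = 1 − P, conditional probabilities P(b|a) equal to the output probabilities, the molecular input for the conditional entropy, and the promolecular input (1/2, 1/2) as the reference in the mutual-information term. The function name is hypothetical.

```python
import math

def two_orbital_descriptors(P):
    """Entropy covalency S and information ionicity I0 (in bits).

    Assumed channel: inputs a in {A, B}, outputs b in {A, B},
    with P(b|a) = p_b for p = (P, Q).
    """
    Q = 1.0 - P
    p_mol = (P, Q)            # molecular AO probabilities
    p_pro = (0.5, 0.5)        # promolecular reference probabilities
    cond = ((P, Q), (P, Q))   # rows: input AO, columns: output AO
    S = 0.0
    I0 = 0.0
    for a in range(2):
        for b in range(2):
            joint = p_mol[a] * cond[a][b]
            if joint > 0.0:   # skip vanishing terms (0 * log 0 = 0)
                S -= joint * math.log2(cond[a][b])
                I0 += joint * math.log2(joint / (p_pro[a] * p_mol[b]))
    return S, I0

if __name__ == "__main__":
    for P in (0.5, 0.7, 0.9, 1.0):
        S, I0 = two_orbital_descriptors(P)
        print(f"P = {P:.1f}: S = {S:.4f} bits, I0 = {I0:.4f} bits, S + I0 = {S + I0:.4f}")
```

Under these assumptions the symmetric bond (P = 1/2) is purely covalent and the lone-pair limit (P = 1) purely ionic, with the two components always adding up to one bit.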

The cascade generalization of OCT and the indirect extension of the classical Wiberg bond-order concept both suggest a novel, indirect (bridge) mechanism of chemical interactions, via orbital intermediates, which complements the familiar direct bonding mechanism via the constructive interference of the valence AO. The model study of linear polymers indicates that this new component effectively extends the bonding range up to three intermediate bonds in the polymer chain. In this generalized perspective on chemical interactions the overall bond multiplicity reflects all nonadditivities between AO on different atoms. On one hand, this dependency is realized directly, via the bonding combination of AO, which generally generates the bond-charge accumulation between nuclei of the interacting atoms. On the other hand, it also has an indirect source, due to the chemical coupling to the remaining, directly interacting orbitals in the molecule, in the whole bond system determined by the subspace of the occupied MO. The intermediate communications (bonds) between AO were shown to result from the implicit interdependencies between (nonorthogonal) bond projections of AO, i.e., from their simultaneous involvement in all chemical bonds of the molecule.

In OCT the intermediate bonds can be linked to the information transfer via the AO cascade, in which the AO inputs and outputs are linked by the single or multiple AO bridges. Both MO theory and IT allow one to generate the through-bridge multiplicities of such indirect couplings between more distant AO, which complement the familiar direct bond orders in the corresponding resultant indices combining the through-space and through-bridges components. This generalized outlook on the bond pattern in molecules was shown to give a more balanced picture of the weaker (cross-ring) π bonds in benzene, with the meta interactions (directly nonbonding) exhibiting a strong indirect bond multiplicity.

In the closing section of this article we have emphasized a need for the quantum extensions of the classical information measures, in order to accommodate the complex wave functions (probability amplitudes) of the quantum mechanical description. The appropriate generalization of the gradient (Fisher) information introduces the information contribution due to the probability current, giving rise to a nonvanishing information source. We have similarly introduced the phase current of the complex wave function, and generalized the Shannon entropy by including the (additive) phase contribution. This extension has been accomplished by requiring that the relation between the classical Shannon and Fisher information densities should also hold between their nonclassical (quantum) contributions.

The variational rules involving these quantum measures of information, for the fixed ground-state electron density (probability distribution), provide “thermodynamic” Extreme Physical Information (EPI) principles (Frieden 1998) for determining the equilibrium states of the quantum system in question (Nalewajski in press a, c; Nalewajski submitted).

References

Abramson, N.: Information Theory and Coding. McGraw-Hill, New York (1963)

Bader, R.F.: Atoms in Molecules. Oxford University Press, New York (1990)

Becke, A.D., Edgecombe, K.E.: A simple measure of electron localization in atomic and molecular systems. J. Chem. Phys. 92, 5397–5403 (1990)

Callen, H.B.: Thermodynamics: An Introduction to the Physical Theories of Equilibrium Thermostatics and Irreversible Thermodynamics. Wiley, New York (1960)

Dirac, P.A.M.: The Principles of Quantum Mechanics, 4th edn. Clarendon, Oxford (1958)

Fisher, R.A.: Theory of statistical estimation. Proc. Cambridge Phil. Soc. 22, 700–725 (1925)

Frieden, B.R.: Physics from the Fisher Information—A Unification, 2nd edn. Cambridge University Press, Cambridge (1998)

Gordon, R.G., Kim, Y.S.: Theory for the forces between closed-shell atoms and molecules. J. Chem. Phys. 56, 3122–3133 (1972)

Hirshfeld, F.L.: Bonded-atom fragments for describing molecular charge densities. Theoret. Chim. Acta (Berl.) 44, 129–138 (1977)

Hohenberg, P., Kohn, W.: Inhomogeneous electron gas. Phys. Rev. 136B, 864–871 (1964)

Kullback, S.: Information Theory and Statistics. Wiley, New York (1959)

Kullback, S., Leibler, R.A.: On information and sufficiency. Ann. Math. Stat. 22, 79–86 (1951)

López-Rosa, S.: Information-theoretic measures of atomic and molecular systems, PhD Thesis, University of Granada (2010)

Nalewajski, R.F.: Entropic measures of bond multiplicity from the information theory. J. Phys. Chem. A 104, 11940–11951 (2000)

Nalewajski, R.F.: Hirshfeld analysis of molecular densities: subsystem probabilities and charge sensitivities. Phys. Chem. Chem. Phys. 4, 1710–1721 (2002)

Nalewajski, R.F.: Information principles in the theory of electronic structure. Chem. Phys. Lett. 372, 28–34 (2003a)

Nalewajski, R.F.: Electronic structure and chemical reactivity: density functional and information theoretic perspectives. Adv. Quant. Chem. 43, 119–184 (2003b)

Nalewajski, R.F.: Information theoretic approach to fluctuations and electron flows between molecular fragments. J. Phys. Chem. A 107, 3792–3802 (2003c)

Nalewajski, R.F.: Local information-distance thermodynamics of molecular fragments. Ann. Phys. Leipzig 13, 201–222 (2004a)

Nalewajski, R.F.: Communication theory approach to the chemical bond. Struct. Chem. 15, 391–403 (2004b)

Nalewajski, R.F.: Entropy descriptors of the chemical bond in information theory. I. Basic concepts and relations. Mol. Phys. 102, 531–546 (2004c)

Nalewajski, R.F.: Entropy descriptors of the chemical bond in information theory. II. Applications to simple models. Mol. Phys. 102, 547–566 (2004d)

Nalewajski, R.F.: Partial communication channels of molecular fragments and their entropy/information indices. Mol. Phys. 103, 451–470 (2005a)

Nalewajski, R.F.: Reduced communication channels of molecular fragments and their entropy/information bond indices. Theoret. Chem. Acc. 114, 4–18 (2005b)

Nalewajski, R.F.: Entropy/information bond indices of molecular fragments. J. Math. Chem. 38, 43–66 (2005c)

Nalewajski, R.F.: Information Theory of Molecular Systems. Elsevier, Amsterdam (2006a)

Nalewajski, R.F.: Electronic chemical potential as information temperature. Mol. Phys. 104, 255–261 (2006b)

Nalewajski, R.F.: Comparison between valence-bond and communication theories of the chemical bond in H2. Mol. Phys. 104, 365–375 (2006c)

Nalewajski, R.F.: Atomic resolution of bond descriptors in two-orbital model. Mol. Phys. 104, 493–501 (2006d)

Nalewajski, R.F.: Molecular communication channels of model excited electron configurations. Mol. Phys. 104, 1977–1988 (2006e)

Nalewajski, R.F.: Orbital resolution of molecular information channels and their stockholder atoms. Mol. Phys. 104, 2533–2543 (2006f)

Nalewajski, R.F.: Entropic bond indices from molecular information channels in orbital resolution: excited configurations. Mol. Phys. 104, 3339–3370 (2006g)

Nalewajski, R.F.: Information-scattering perspective on orbital hybridization. J. Phys. Chem. A 111, 4855–4861 (2007)

Nalewajski, R.F.: Use of Fisher information in quantum chemistry. Int. J. Quantum Chem. 108, 2230–2252 (2008a)

Nalewajski, R.F.: Entropic bond indices from molecular information channels in orbital resolution: ground-state systems. J. Math. Chem. 43, 265–284 (2008b)

Nalewajski, R.F.: Chemical bonds through probability scattering: information channels for intermediate-orbital stages. J. Math. Chem. 43, 780–830 (2008c)

Nalewajski, R.F.: Entropic bond descriptors of molecular information systems in local resolution. J. Math. Chem. 44, 414–445 (2008d)

Nalewajski, R.F.: On molecular similarity in communication theory of the chemical bond. J. Math. Chem. 45, 607–626 (2009a)

Nalewajski, R.F.: Communication-theory perspective on valence-bond theory. J. Math. Chem. 45, 709–724 (2009b)

Nalewajski, R.F.: Manifestations of the Pauli exclusion principle in communication theory of the chemical bond. J. Math. Chem. 45, 776–789 (2009c)

Nalewajski, R.F.: Entropic descriptors of the chemical bond in H2: local resolution of stockholder atoms. J. Math. Chem. 45, 1041–1054 (2009d)

Nalewajski, R.F.: Chemical bond descriptors from molecular information channels in orbital resolution. Int. J. Quantum Chem. 109, 425–440 (2009e)

Nalewajski, R.F.: Information origins of the chemical bond: bond descriptors from molecular communication channels in orbital resolution. Int. J. Quantum Chem. 109, 2495–2506 (2009f)

Nalewajski, R.F.: Multiple, localized and delocalized bonds in the orbital-communication theory of molecular systems. Adv. Quant. Chem. 56, 217–250 (2009g)

Nalewajski, R.F.: Information Origins of the Chemical Bond. Nova, New York (2010a)

Nalewajski, R.F.: Use of non-additive information measures in exploring molecular electronic structure: stockholder bonded atoms and role of kinetic energy in the chemical bond. J. Math. Chem. 47, 667–691 (2010b)

Nalewajski, R.F.: Additive and non-additive information channels in orbital communication theory of the chemical bond. J. Math. Chem. 47, 709–738 (2010c)

Nalewajski, R.F.: Through-space and through-bridge components of chemical bonds. J. Math. Chem. 49, 371–392 (2010d)

Nalewajski, R.F.: Chemical bonds from through-bridge orbital communications in prototype molecular systems. J. Math. Chem. 49, 546–561 (2010e)

Nalewajski, R.F.: Use of the bond-projected superposition principle in determining the conditional probabilities of orbital events in molecular fragments. J. Math. Chem. 49, 592–608 (2011a)

Nalewajski, R.F.: Entropy/information descriptors of the chemical bond revisited. J. Math. Chem. 49, 2308–2329 (2011b)

Nalewajski, R.F.: On interference of orbital communications in molecular systems. J. Math. Chem. 49, 806–815 (2011c)

Nalewajski, R.F.: Perspectives in Electronic Structure Theory. Springer, Berlin (2012a)

Nalewajski, R.F.: Information tools for probing chemical bonds. In: Putz, M.V. (ed.) Chemical Information and Computation Challenges in 21st century. Nova Science Publishers, New York (2012b) (in press)

Nalewajski, R.F.: Direct (through-space) and indirect (through-bridge) components of molecular bond multiplicities. Int. J. Quantum Chem. 112, 2355–2370 (2012c)

Nalewajski, R.F., Broniatowska, E.: Entropy displacement analysis of electron distributions in molecules and their Hirshfeld atoms. J. Phys. Chem. A 107, 6270–6280 (2003a)

Nalewajski, R.F., Broniatowska, E.: Information distance approach to Hammond postulate. Chem. Phys. Lett. 376, 33–39 (2003b)

Nalewajski, R.F., Broniatowska, E.: Atoms-in-molecules from the stockholder partition of the molecular two-electron distribution. Theoret. Chem. Acc. 117, 7–27 (2007)

Nalewajski, R.F., Gurdek, P.: On the implicit bond-dependency origins of bridge interactions. J. Math. Chem. 49, 1226–1237 (2011)

Nalewajski, R.F., Jug, K.: Information distance analysis of bond multiplicities in model systems. In: Sen, K.D. (ed.) Reviews of Modern Quantum Chemistry: A Celebration of the Contributions of Robert G. Parr, vol. 1, pp. 148–203. World Scientific, Singapore (2002)

Nalewajski, R.F., Loska, R.: Bonded atoms in sodium chloride – the information theoretic approach. Theoret. Chem. Acc. 105, 374–382 (2001)

Nalewajski, R.F., Parr, R.G.: Information theory, atoms-in-molecules and molecular similarity. Proc. Natl. Acad. Sci. USA 97, 8879–8882 (2000)

Nalewajski, R.F., Parr, R.G.: Information theoretic thermodynamics of molecules and their fragments. J. Phys. Chem. A 105, 7391–7400 (2001)

Nalewajski, R.F., Gurdek, P.: Bond-order and entropic probes of the chemical bonds. Struct. Chem. (M. Witko issue) 23, 1383–1398 (2012)

Nalewajski, R.F.: Entropic representation in the theory of molecular electronic structure. J. Math. Chem. (in press, a)

Nalewajski, R.F.: Information theoretic multiplicities of chemical bond in Shull’s model of H2. J. Math. Chem. (in press, b)

Nalewajski, R.F.: Use of Harriman’s construction in determining molecular equilibrium states. J. Math. Chem. (in press, c)

Nalewajski, R.F.: Exploring molecular equilibria using quantum information measures. Ann. Phys. Leipzig (submitted)

Nalewajski, R.F., Świtka, E.: Information theoretic approach to molecular and reactive systems. Phys. Chem. Chem. Phys. 4, 4952–4958 (2002)

Nalewajski, R.F., Świtka, E., Michalak, A.: Information distance analysis of molecular electron densities. Int. J. Quantum Chem. 87, 198–213 (2002)

Nalewajski, R.F., Köster, A.M., Escalante, S.: Electron localization function as information measure. J. Phys. Chem. A 109, 10038–10043 (2005)

Nalewajski, R.F., de Silva, P., Mrozek, J.: Use of non-additive Fisher information in probing the chemical bonds. J. Mol. Struct. Theochem 954, 57–74 (2010)

Nalewajski, R.F., Szczepanik, D., Mrozek, J.: Bond differentiation and orbital decoupling in the orbital communication theory of the chemical bond. Adv. Quant. Chem. 61, 1–48 (2011)

Nalewajski, R.F., de Silva, P., Mrozek, J.: Kinetic-energy/Fisher-information indicators of chemical bonds. In: Roy, A.K. (ed.) Theoretical and Computational Developments in Modern Density Functional Theory. Nova Science Publishers, New York (2012a) (in press)

Nalewajski, R.F., Szczepanik, D., Mrozek, J.: Basis set dependence of molecular information channels and their entropic bond descriptors. J. Math. Chem. 50, 1437–1457 (2012b)

Parr, R.G., Ayers, P.W., Nalewajski, R.F.: What is an atom in a molecule? J. Phys. Chem. A 109, 3957–3959 (2005)

Pfeifer, P.E.: Concepts of Probability Theory, 2nd edn. Dover, New York (1978)

Savin, A., Nesper, R., Wengert, S., Fässler, T.F.: ELF: the electron localization function. Angew. Chem. Int. Ed. Engl. 36, 1808–1832 (1997)

Shannon, C.E.: A mathematical theory of communication. Bell Syst. Tech. J. 27, 379–423, 623–656 (1948)

Shannon, C.E., Weaver, W.: The Mathematical Theory of Communication. University of Illinois, Urbana (1949)

Silvi, B., Savin, A.: Classification of chemical bonds based on topological analysis of electron localization functions. Nature 371, 683–686 (1994)

von Weizsäcker, C.F.: Zur Theorie der Kernmassen. Z. Phys. 96, 431–458 (1935)

Wesołowski, T.: Hydrogen-bonding-induced shifts of the excitation energies in nucleic acid bases: an interplay between electrostatic and electron density overlap effects. J. Am. Chem. Soc. 126, 11444–11445 (2004a)

Wesołowski, T.: Quantum chemistry “without orbitals”—an old idea and recent developments. Chimia 58, 311–315 (2004b)

Wiberg, K.B.: Application of the Pople-Santry-Segal CNDO method to the cyclopropylcarbinyl and cyclobutyl cation and to bicyclobutane. Tetrahedron 24, 1083–1096 (1968)

Open Access

This article is distributed under the terms of the Creative Commons Attribution License which permits any use, distribution, and reproduction in any medium, provided the original author(s) and the source are credited.

Additional information

Here \( A \), \( \varvec{A} \), and \( {\mathbf{A}} \) denote a scalar quantity, a row vector, and a square or rectangular matrix, respectively.

Appendices

Appendix 1: Classical information measures

Here we briefly summarize the classical IT quantities, which depend on the particle probability distributions alone and are used in this survey to describe the bonding patterns in molecules. We begin with the historically first (local) measure of Fisher (Fisher 1925; Frieden 1998), formulated at about the same time as quantum theory took its final shape. For the local events of finding an electron at \( \varvec{r} \) with probability \( p(\varvec{r}) \), the shape factor of the density \( \rho (\varvec{r}) = Np(\varvec{r}) \) in an N-electron system, this approach defines the following gradient measure of the average information content,

$$ I[p] = \int \frac{|\nabla p(\varvec{r})|^{2}}{p(\varvec{r})}\,d\varvec{r}. $$

This classical Fisher information in \( p(\varvec{r}) \), also called the intrinsic accuracy, is reminiscent of the familiar von Weizsäcker (1935) inhomogeneity correction to the kinetic energy functional in the Thomas–Fermi–Dirac theory. It characterizes the effective “localization” (compactness) of the random (position) variable around its average value. For example, for the normal (Gaussian) distribution the Fisher information equals the inverse of the variance, called the invariance. The functional \( I[p] \) simplifies when expressed in terms of the classical probability amplitude \( A(\varvec{r}) = \sqrt {p(\varvec{r})} \):

$$ I[p] = 4\int |\nabla A(\varvec{r})|^{2}\,d\varvec{r} \equiv I[A]. $$
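The Gaussian example can be checked numerically. The following minimal sketch, which assumes a one-dimensional grid, an illustrative width `sigma`, and the standard forms of the functional given in the docstrings, evaluates the Fisher information in both the density and the amplitude representations and compares them with the inverse variance. All function and variable names are hypothetical.

```python
import math

def gaussian(x, sigma):
    """Zero-mean normal density with standard deviation sigma."""
    return math.exp(-0.5 * (x / sigma) ** 2) / (sigma * math.sqrt(2.0 * math.pi))

def fisher_from_density(p_vals, h):
    """I[p] = integral of (dp/dx)^2 / p dx (central differences, Riemann sum)."""
    total = 0.0
    for k in range(1, len(p_vals) - 1):
        dp = (p_vals[k + 1] - p_vals[k - 1]) / (2.0 * h)
        total += dp * dp / p_vals[k] * h
    return total

def fisher_from_amplitude(p_vals, h):
    """I[p] = 4 * integral of (dA/dx)^2 dx, with A = sqrt(p)."""
    a_vals = [math.sqrt(p) for p in p_vals]
    total = 0.0
    for k in range(1, len(a_vals) - 1):
        da = (a_vals[k + 1] - a_vals[k - 1]) / (2.0 * h)
        total += 4.0 * da * da * h
    return total

sigma = 0.5                                  # illustrative width (assumption)
h = 0.001                                    # grid spacing
xs = [-5.0 + k * h for k in range(10001)]    # grid spanning +/- 10 sigma
p_vals = [gaussian(x, sigma) for x in xs]

I_density = fisher_from_density(p_vals, h)
I_amplitude = fisher_from_amplitude(p_vals, h)
print(I_density, I_amplitude, 1.0 / sigma ** 2)
```

Both numerical values should agree with \( 1/\sigma^{2} \) to within the discretization error, which illustrates that a narrower (more localized) distribution carries more Fisher information.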

To cover the complex amplitudes (wave functions) of quantum mechanics, this classical measure has to be appropriately generalized as the square of the modulus of the wave-function gradient (Nalewajski 2008a, 2010a, 2012b). For example, in a single-electron state \( \psi (\varvec{r}) \), when \( p(\varvec{r}) = \psi^{*} (\varvec{r})\psi (\varvec{r}) = |\psi (\varvec{r})|^{2} \),

$$ I[\psi ] = 4\int |\nabla \psi (\varvec{r})|^{2}\,d\varvec{r} = \frac{8m}{\hbar^{2}}\,T[\psi ], $$

where \( m \) is the electron mass and \( T[\psi ] \) stands for the expectation value of the particle kinetic energy. Therefore, this quantum-generalized (local) measure of Fisher, proportional to the particle average kinetic energy, probes the length of the amplitude gradient \( \nabla \psi (\varvec{r}) \).
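A short numerical sketch may help to show why the generalized measure is sensitive to the phase. It assumes a one-dimensional model in atomic units (\( \hbar = m = 1 \), so that \( I = 8T \)), a Gaussian amplitude, and a plane-wave phase factor \( \exp (ikx) \) chosen purely for illustration. The two states share the same probability density but differ in their current content.

```python
import cmath
import math

def amplitude(x, sigma):
    """A(x) = sqrt(p(x)) for a zero-mean normal density p."""
    p = math.exp(-0.5 * (x / sigma) ** 2) / (sigma * math.sqrt(2.0 * math.pi))
    return math.sqrt(p)

def quantum_fisher(psi_vals, h):
    """I[psi] = 4 * integral |dpsi/dx|^2 dx (central differences, Riemann sum)."""
    total = 0.0
    for j in range(1, len(psi_vals) - 1):
        dpsi = (psi_vals[j + 1] - psi_vals[j - 1]) / (2.0 * h)
        total += 4.0 * abs(dpsi) ** 2 * h
    return total

sigma, k, h = 0.5, 1.5, 0.001                # illustrative parameters (assumptions)
xs = [-5.0 + n * h for n in range(10001)]

# Same modulus, hence the same density |psi|^2, but different phase.
psi_static = [complex(amplitude(x, sigma), 0.0) for x in xs]
psi_moving = [amplitude(x, sigma) * cmath.exp(1j * k * x) for x in xs]

I_static = quantum_fisher(psi_static, h)     # classical part only, about 1/sigma^2
I_moving = quantum_fisher(psi_moving, h)     # adds the phase part, about 4*k^2
T_static = I_static / 8.0                    # kinetic energy in atomic units
T_moving = I_moving / 8.0
print(I_static, I_moving, T_static, T_moving)
```

For this model the phase adds \( 4k^{2} \) to the Fisher measure, a contribution that no functional of the density alone can detect.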

The other popular information measure was introduced by Shannon (Shannon 1948; Shannon and Weaver 1949). This complementary (global) descriptor of the average information content in the normalized probability distribution \( p(\varvec{r}) \), or in its discrete representation \( \varvec{p} = \{ p_{i} \} \) of the orbital events in the molecule, \( \sum\nolimits_{i} p_{i} =\int p(\varvec{r})d\varvec{r} = 1 \),

$$ S[p] = - \int p(\varvec{r})\log p(\varvec{r})\,d\varvec{r} \quad {\text{or}} \quad S(\varvec{p}) = - \sum\limits_{i} p_{i} \log p_{i} , $$

called the Shannon entropy, reflects the indeterminacy (“spread”) of the random variable(s) involved around the corresponding average value(s). It measures the average amount of information received when this uncertainty is removed by an appropriate “localization” experiment.
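As a small illustration of the discrete measure, the sketch below evaluates the Shannon entropy for three four-event distributions. The base-2 logarithm (entropy in bits) and the example probabilities are assumptions made here for concreteness.

```python
import math

def shannon_entropy(p):
    """S(p) = -sum_i p_i log2 p_i, in bits; events with p_i = 0 contribute nothing."""
    if abs(sum(p) - 1.0) > 1e-12:
        raise ValueError("probabilities must sum to 1")
    return -sum(p_i * math.log2(p_i) for p_i in p if p_i > 0.0)

uniform = [0.25, 0.25, 0.25, 0.25]   # maximum spread over four events
peaked = [0.97, 0.01, 0.01, 0.01]    # nearly localized on one event
certain = [1.0, 0.0, 0.0, 0.0]       # no uncertainty at all

S_uniform = shannon_entropy(uniform)
S_peaked = shannon_entropy(peaked)
S_certain = shannon_entropy(certain)
print(S_uniform, S_peaked, S_certain)
```

The entropy is largest for the uniform distribution, decreases as the distribution becomes more localized, and vanishes when the outcome is certain.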

An important generalization of this information measure, called the directed divergence, cross (relative) entropy, or entropy deficiency, was proposed by Kullback and Leibler (Kullback and Leibler 1951; Kullback 1959). It reflects the information “distance” between two normalized distributions defined for the same set of elementary events. For example, the missing information \( \Updelta S[p|p^{0} ] \) in the continuous probability distribution \( p(\varvec{r}) \) relative to the reference probability density \( p^{0} (\varvec{r}) \) is given by the average value of the surprisal, \( I(\varvec{r}) = \log [p(\varvec{r})/p^{0} (\varvec{r})] \equiv \log w(\varvec{r}) \), the logarithm of the local enhancement factor \( w(\varvec{r}) \),

$$ \Updelta S[p|p^{0} ] = \int p(\varvec{r})\log \frac{p(\varvec{r})}{p^{0} (\varvec{r})}\,d\varvec{r} = \int p(\varvec{r})\,I(\varvec{r})\,d\varvec{r}. $$

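The information distance \( \Updelta S({\varvec{p}}|{\varvec{p}}^{0} ) \) between the two discrete distributions \( \varvec{p} = \{ p_{i} \} \) and \( \varvec{p}^{0} = \{ p_{i}^{0} \} \) similarly reads:

$$ \Updelta S({\varvec{p}}|{\varvec{p}}^{0} ) = \sum\limits_{i} p_{i} \log \frac{p_{i} }{p_{i}^{0} }. $$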

The (non-negative) entropy deficiency reflects the information similarity between the two compared probability distributions: the more they differ from one another, the larger the information distance, which vanishes identically only when they are identical: \( \Updelta S[p|p] = \Updelta S({\varvec{p}}|{\varvec{p}}) = 0. \)
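These properties of the discrete entropy deficiency can be verified on a two-event example. The sketch below assumes base-2 logarithms and illustrative probability vectors. It also shows that the measure is directed, i.e., not symmetric in its two arguments.

```python
import math

def entropy_deficiency(p, p0):
    """Delta S(p|p0) = sum_i p_i log2(p_i / p0_i), in bits."""
    for p_i, r_i in zip(p, p0):
        if p_i > 0.0 and r_i <= 0.0:
            raise ValueError("reference p0 must be positive wherever p is")
    return sum(p_i * math.log2(p_i / r_i) for p_i, r_i in zip(p, p0) if p_i > 0.0)

p = [0.5, 0.5]     # current distribution (illustrative)
p0 = [0.9, 0.1]    # reference distribution (illustrative)

d_forward = entropy_deficiency(p, p0)    # missing information in p relative to p0
d_backward = entropy_deficiency(p0, p)   # roles of the two distributions exchanged
d_self = entropy_deficiency(p, p)        # identical distributions
print(d_forward, d_backward, d_self)
```

Both directed distances are positive, they differ from one another, and the distance of a distribution from itself is zero.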

For two mutually dependent probability vectors \( \varvec{P}(\varvec{a}) = \{ P(a_{i} ) = p_{i} \} \equiv \varvec{p} \) and \( \varvec{P}(\varvec{b}) = \{ P(b_{j} ) = q_{j} \} \equiv {\varvec{q}} \), for events \( \varvec{a} = \{ a_{i} \} \) and \( \varvec{b} = \{ b_{j} \} \), respectively, one decomposes the joint probabilities of the simultaneous events \( \varvec{a} \wedge \varvec{b} = \{ a_{i} \wedge b_{j} \} \), \( {\mathbf{P}}(\varvec{a} \wedge \varvec{b}) = \{ P(a_{i} \wedge b_{j} ) = \pi_{i,j} \} \equiv {\varvec{\pi}} \), as products of the “marginal” probabilities of events in one set, say \( \varvec{a} \), and the corresponding conditional probabilities \( {\mathbf{P}}(\varvec{b}|\varvec{a}) = \{ P(j|i)\} \) of outcomes in the other set \( \varvec{b} \), given that the events \( \varvec{a} \) have already occurred: \( \{ \pi_{i,j} = p_{i}\,P(j|i)\} . \) The relevant normalization conditions for the joint and conditional probabilities then read:

$$ \sum\limits_{j} \pi_{i,j} = p_{i} ,\quad \sum\limits_{i} \pi_{i,j} = q_{j} ,\quad \sum\limits_{i} \sum\limits_{j} \pi_{i,j} = 1,\quad \sum\limits_{j} P(j|i) = 1. $$

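The Shannon entropy of this “product” distribution of simultaneous events,

$$ S({\varvec{\pi}}) = - \sum\limits_{i} \sum\limits_{j} \pi_{i,j} \log \pi_{i,j} = - \sum\limits_{i} \sum\limits_{j} p_{i} P(j|i)[\log p_{i} + \log P(j|i)] = - \sum\limits_{i} p_{i} \log p_{i} - \sum\limits_{i} p_{i} \sum\limits_{j} P(j|i)\log P(j|i) \equiv S(\varvec{p}) + S(\varvec{q}|\varvec{p}), $$

has been expressed above as the sum of the average entropy in the marginal probability distribution, \( S(\varvec{p}) \), and the average conditional entropy in \( \varvec{q} \) given \( \varvec{p} \):

$$ S(\varvec{q}|\varvec{p}) = - \sum\limits_{i} \sum\limits_{j} \pi_{i,j} \log P(j|i). $$

The latter represents the extra amount of uncertainty about the occurrence of events \( \varvec{b} \), given that the events \( \varvec{a} \) are known to have occurred. In other words, the amount of information obtained as a result of simultaneously observing the events \( \varvec{a} \) and \( \varvec{b} \) equals the amount of information in one set, say \( \varvec{a} \), supplemented by the extra information provided by the occurrence of events in the other set \( \varvec{b} \), when the events \( \varvec{a} \) are known to have occurred already. This additivity property is qualitatively illustrated in Fig. 14.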
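The additivity of the joint entropy can be confirmed for a small model. The sketch below assumes a two-event marginal distribution and a hypothetical conditional-probability matrix with rows \( P(j|i) \). It builds the joint probabilities \( \pi_{i,j} = p_{i} P(j|i) \) and compares the joint entropy with the sum of the marginal and conditional entropies (base-2 logarithms assumed).

```python
import math

def entropy(probs):
    """Shannon entropy in bits of a list of probabilities."""
    return -sum(x * math.log2(x) for x in probs if x > 0.0)

p = [0.6, 0.4]               # marginal probabilities P(a_i) (illustrative)
cond = [[0.9, 0.1],          # row i holds P(j|i); each row sums to 1
        [0.2, 0.8]]

# Joint probabilities pi_{i,j} = p_i * P(j|i).
joint = [[p[i] * cond[i][j] for j in range(2)] for i in range(2)]

S_joint = entropy([x for row in joint for x in row])
S_p = entropy(p)
# Conditional entropy S(q|p) as the p-weighted mean of the row entropies.
S_q_given_p = sum(p[i] * entropy(cond[i]) for i in range(2))
print(S_joint, S_p + S_q_given_p)
```

The two printed numbers should coincide up to rounding, in line with \( S({\varvec{\pi}}) = S(\varvec{p}) + S(\varvec{q}|\varvec{p}) \).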

Fig. 14 Schematic diagram of the conditional-entropy and mutual-information quantities for two dependent probability distributions \( \varvec{p} = \varvec{P}(\varvec{a}) \) and \( \varvec{q} = \varvec{P}(\varvec{b}) \). Two circles enclose areas representing the entropies \( S(\varvec{p}) \) and \( S(\varvec{q}) \) of two separate probability vectors, while their common (overlap) area corresponds to the mutual information \( I(\varvec{p}:\varvec{q}) \) in these two distributions. The remaining part of each circle represents the corresponding conditional entropy, \( S(\varvec{p}|\varvec{q}) \) or \( S(\varvec{q}|\varvec{p}) \), measuring the residual uncertainty about events in one set, when one has the full knowledge of the occurrence of events in the other set of outcomes. The area enclosed by the circle envelope then represents the entropy of the “product” (joint) distribution: \( S({\varvec{\pi}}) = S({\mathbf{P}}(\varvec{a} \wedge \varvec{b})) = S(\varvec{p}) + S(\varvec{q}) - I(\varvec{p}:\varvec{q}) = S(\varvec{p}) + S(\varvec{q}|\varvec{p}) = S(\varvec{q}) + S(\varvec{p}|\varvec{q}) \)

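The common amount of information in two events \( a_{i} \) and \( b_{j} \), \( I(i:j) \), measuring the information about \( a_{i} \) provided by the occurrence of \( b_{j} \), or the information about \( b_{j} \) provided by the occurrence of \( a_{i} \), is called the mutual information in two events:

$$ I(i:j) = \log \frac{\pi_{i,j} }{p_{i} q_{j} } = \log \frac{P(i|j)}{p_{i} } = \log \frac{P(j|i)}{q_{j} } = I(j:i). $$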

It may take on any real value, positive, negative, or zero. It vanishes when both events are independent, i.e., when the occurrence of one event does not influence (or condition) the probability of the occurrence of the other event, and it is negative when the occurrence of one event makes a nonoccurrence of the other event more likely.

It also follows from the preceding equation that

$$ I(i \wedge j) = I(i) + I(j) - I(i:j), $$

where \( I(i \wedge j) = - \log \pi_{i,j} \) is the self-information of the joint event. Thus, the information in the joint occurrence of two events \( a_{i} \) and \( b_{j} \) is the information in the occurrence of \( a_{i} \) plus that in the occurrence of \( b_{j} \) minus the mutual information. Therefore, for independent events, when \( \pi_{i,j} = p_{i} q_{j} \), \( I(i:j) = 0 \) and \( I(i \wedge j) = I(i) + I(j) \).

The mutual information of an event with itself defines its self-information: \( I\left( {i:i} \right) \equiv I\left( i \right) = \log [P(i|i)/p_{i} ] = - \log p_{i} \), since \( P(i|i) = 1 \). It vanishes when \( p_{i} = 1 \), i.e., when there is no uncertainty about the occurrence of \( a_{i} \), so that the occurrence of this event removes no uncertainty and hence conveys no information. This quantity provides a measure of the uncertainty about the occurrence of the event, i.e., of the information received when the event actually occurs. The Shannon entropy of Eq. (34) can thus be interpreted as the mean value of the self-information over all individual events, e.g., \( S\left( \varvec{p} \right) = \sum_{i} p_{i} I\left( i \right). \)
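The sign behavior of the event-resolved mutual information and its relation to the self-information can be illustrated with a hypothetical two-by-two joint distribution in which the “diagonal” outcomes are favored. The sketch uses the standard definition \( I(i:j) = \log [\pi_{i,j} /(p_{i} q_{j} )] \) with base-2 logarithms. All numbers are assumptions chosen for illustration.

```python
import math

joint = [[0.4, 0.1],         # pi_{i,j}: joint probabilities of a_i and b_j
         [0.1, 0.4]]
p = [sum(row) for row in joint]                               # marginals P(a_i)
q = [sum(joint[i][j] for i in range(2)) for j in range(2)]    # marginals P(b_j)

def self_information(prob):
    """I = -log2(prob): information received when the event occurs."""
    return -math.log2(prob)

def mutual_information_events(i, j):
    """I(i:j) = log2[pi_{i,j} / (p_i q_j)]; may be positive, negative, or zero."""
    return math.log2(joint[i][j] / (p[i] * q[j]))

I_00 = mutual_information_events(0, 0)   # b_0 makes a_0 more likely
I_01 = mutual_information_events(0, 1)   # b_1 makes a_0 less likely

# Information in the joint event versus I(i) + I(j) - I(i:j).
joint_info_00 = self_information(joint[0][0])
rhs_00 = self_information(p[0]) + self_information(q[0]) - I_00
print(I_00, I_01, joint_info_00, rhs_00)
```

The favored pair has positive mutual information, the disfavored pair has negative mutual information, and the last two printed values agree.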

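One similarly defines the average mutual information in two probability distributions as the \( {\varvec{\pi}} \)-weighted mean value of the mutual information quantities for individual joint events:

$$ I(\varvec{p}:\varvec{q}) = \sum\limits_{i} \sum\limits_{j} \pi_{i,j} I(i:j) = \sum\limits_{i} \sum\limits_{j} \pi_{i,j} \log \frac{\pi_{i,j} }{\pi_{i,j}^{0} } = \Updelta S({\varvec{\pi}}|{\varvec{\pi}}^{0} ) = S(\varvec{p}) + S(\varvec{q}) - S({\varvec{\pi}}) = S(\varvec{p}) - S(\varvec{p}|\varvec{q}) = S(\varvec{q}) - S(\varvec{q}|\varvec{p}) \ge 0, $$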

where the equality holds for the independent distributions, when \( \pi_{i,j} = \pi_{i,j}^{0} = p_{i} q_{j} \). Indeed, the amount of uncertainty in \( \varvec{q} \) can only decrease when \( \varvec{p} \) is known beforehand, \( S(\varvec{q}) \ge S(\varvec{q}|\varvec{p}) = S(\varvec{q}) - I(\varvec{p}:\varvec{q}) \), with equality observed when the two sets of events are independent, thus giving rise to non-overlapping entropy circles. These average entropy/information relations are also illustrated in Fig. 14.
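The average relations can be checked numerically as well. The sketch below computes the average mutual information in two ways: as the joint-probability-weighted mean of the event quantities \( \log [\pi_{i,j} /(p_{i} q_{j} )] \), and from the entropy balance \( S(\varvec{p}) + S(\varvec{q}) - S({\varvec{\pi}}) \). It does so for one dependent and one independent joint distribution. The probabilities are illustrative assumptions and base-2 logarithms are used.

```python
import math

def entropy(probs):
    """Shannon entropy in bits of a list of probabilities."""
    return -sum(x * math.log2(x) for x in probs if x > 0.0)

def marginals(joint):
    """Row sums p_i and column sums q_j of a joint-probability matrix."""
    p = [sum(row) for row in joint]
    q = [sum(row[j] for row in joint) for j in range(len(joint[0]))]
    return p, q

def mutual_information_average(joint):
    """I(p:q) = sum_ij pi_ij log2[pi_ij / (p_i q_j)]."""
    p, q = marginals(joint)
    return sum(x * math.log2(x / (p[i] * q[j]))
               for i, row in enumerate(joint)
               for j, x in enumerate(row) if x > 0.0)

def mutual_information_entropies(joint):
    """I(p:q) = S(p) + S(q) - S(pi)."""
    p, q = marginals(joint)
    return entropy(p) + entropy(q) - entropy([x for row in joint for x in row])

dependent = [[0.4, 0.1], [0.1, 0.4]]        # correlated events
independent = [[0.25, 0.25], [0.25, 0.25]]  # pi_ij = p_i q_j

I_dep = mutual_information_average(dependent)
I_dep_alt = mutual_information_entropies(dependent)
I_ind = mutual_information_average(independent)
print(I_dep, I_dep_alt, I_ind)
```

The two routes give the same positive value for the dependent case, while the independent case yields zero, corresponding to the non-overlapping circles of the diagram.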