Abstract

The collision of two plane gravitational waves in Einstein’s theory of relativity can be described mathematically by a Goursat problem for the hyperbolic Ernst equation in a triangular domain. We use the integrable structure of the Ernst equation to present the solution of this problem via the solution of a Riemann–Hilbert problem. The formulation of the Riemann–Hilbert problem involves only the prescribed boundary data, thus the solution is as effective as the solution of a pure initial value problem via the inverse scattering transform. Our results are valid also for boundary data whose derivatives are unbounded at the triangle’s corners—this level of generality is crucial for the application to colliding gravitational waves. Remarkably, for data with a singular behavior of the form relevant for gravitational waves, it turns out that the singular integral operator underlying the Riemann–Hilbert formalism can be explicitly inverted at the boundary. In this way, we are able to show exactly how the behavior of the given data at the origin transfers into a singular behavior of the solution near the boundary.

1 Introduction

Half a century after Einstein presented his theory of relativity, Ernst made the remarkable discovery that, in the presence of one space-like and one time-like Killing vector, the entire solution of the vacuum Einstein field equations reduces to solving a single equation for a complex-valued function \(\mathcal {E}\) of two variables [10]. This single equation, now known as the (elliptic) Ernst equation, has proved instrumental in the study and construction of stationary axisymmetric spacetimes, cf. [21].

It later became clear that a similar reduction of Einstein’s equations is possible also in the presence of two space-like Killing vectors, a situation relevant for the description of two colliding plane gravitational waves [4]. In this case the associated equation is known as the hyperbolic Ernst equation and can be written in the form

where the Ernst potential \(\mathcal {E}(x,y)\) is a complex-valued function of the two real variables (x, y) and subscripts denote partial derivatives.

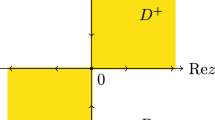

The problem of finding the nonlinear interaction of two plane gravitational waves following their collision has a distinguished history going back to the work of Khan and Penrose [19], Szekeres [36], Nutku and Halil [32], and Chandrasekhar and coauthors [4, 5]; see the monograph [17] for further references and historical remarks. In terms of the Ernst potential, this collision problem reduces to a Goursat problem for equation (1.1) in the triangular region D defined by (see Fig. 1)

More precisely, the problem can be formulated as follows (see [17] and the “Appendix”):

In this paper, we use the integrable structure of equation (1.1) and Riemann–Hilbert (RH) techniques to analyze the Goursat problem (1.3). We present four main results, denoted by Theorems 1–4:

Theorem 1 is a solution representation result: Assuming that the given data satisfy the following conditions for some \(n \ge 2\):

$$\begin{aligned} {\left\{ \begin{array}{ll} \mathcal {E}_0, \mathcal {E}_1 \in C([0,1)) \cap C^n((0,1)),\\ x^{\alpha } \mathcal {E}_{0x}, y^{\alpha } \mathcal {E}_{1y} \in C([0,1))\text { for some }\alpha \in [0,1),\\ \mathcal {E}_0(0) = \mathcal {E}_1(0) = 1,\\ \text {Re} \,\mathcal {E}_0(x) > 0\text { for }x \in [0,1),\\ \text {Re} \,\mathcal {E}_1(y) > 0\text { for }y \in [0,1), \end{array}\right. } \end{aligned}$$ (1.4)

and the Goursat problem (1.3) has a solution (in the precise sense specified in Definition 3.1), we give a representation formula for this solution. This formula is given in terms of the solution of a corresponding RH problem whose formulation only involves the given boundary data.

Theorem 2 is a uniqueness result: Assuming that the given data satisfy the conditions (1.4) for some \(n \ge 2\), we show that the solution of the Goursat problem (1.3) is unique, if it exists.

Theorem 3 is an existence and regularity result: Assuming that the given data satisfy the conditions (1.4) for some \(n \ge 2\), we show that there exists a unique solution \(\mathcal {E}\) of the problem (1.3) whenever the associated RH problem has a solution, and this \(\mathcal {E}\) has the same regularity as the given data. In the case of collinearly polarized waves, this yields existence for general data; for noncollinearly polarized waves, a small-norm assumption is also needed.

Theorem 4 provides exact formulas for the singular behavior of the solution \(\mathcal {E}\) near the boundary for data satisfying (1.4).

We emphasize that the assumptions (1.4) allow for functions \(\mathcal {E}_0(x)\) and \(\mathcal {E}_1(y)\) whose derivatives blow up as x and y approach the origin. This level of generality is necessary for the application to gravitational waves. Indeed, in order for the problem (1.3) to be relevant in the context of gravitational waves, it turns out that the solution should obey the conditions (see [17] and the “Appendix”)

where \(m_1\) and \(m_2\) are real constants such that \(m_1, m_2 \in [1, \sqrt{2})\) and \(\alpha = 1/2\). Remarkably, for data with a singular behavior at the origin of the form given in (1.4), the singular integral operator underlying the RH formalism can be explicitly inverted in the limit of small x or y. This leads to the characterization of the boundary behavior given in Theorem 4. In particular, it implies the following important conclusion for the collision of gravitational waves: A solution \(\mathcal {E}(x,y)\) of the Goursat problem for (1.1) fulfills (1.5) iff the boundary data are such that \(\lim _{x \downarrow 0} x^\alpha |\mathcal {E}_{0x}(x)|\) and \(\lim _{y \downarrow 0} y^\alpha |\mathcal {E}_{1y}(y)|\) lie in the interval \([1, \sqrt{2})\).

The assumptions \(\text {Re} \,\mathcal {E}_0(x) > 0\) and \(\text {Re} \,\mathcal {E}_1(y) > 0\) in (1.4) are natural because in the context of gravitational waves the real part of the Ernst potential is automatically strictly positive. The assumption \(\mathcal {E}_0(0) = \mathcal {E}_1(0)\) in (1.4) expresses the compatibility of the boundary values at the origin. If \(\mathcal {E}\) is a solution of (1.1), then so is \(a\mathcal {E} + ib\) for any choice of the real constants a and b. Thus, since \(\mathcal {E}(0,0) \ne 0\) as a consequence of the assumption \(\text {Re} \,\mathcal {E}_0(x) > 0\), there is no loss of generality in assuming that \(\mathcal {E}(0,0) = 1\).
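The invariance and the normalization can be checked in a few lines. The sketch below assumes that (1.1) has the form \(\text {Re} \,\mathcal {E}\big (\mathcal {E}_{xy} - \frac{\mathcal {E}_x + \mathcal {E}_y}{2(1-x-y)}\big ) = \mathcal {E}_x \mathcal {E}_y\) (the equation is not displayed in this section, so this form is an assumption) and that \(a \ne 0\); the symbols \(\tilde{\mathcal {E}}\) and c are introduced here only for illustration.

$$\begin{aligned}
\tilde{\mathcal {E}}&:= a\mathcal {E} + ib, \qquad a, b \in {\mathbb {R}}, \quad a \ne 0, \\
\text {Re} \,\tilde{\mathcal {E}}\bigg (\tilde{\mathcal {E}}_{xy} - \frac{\tilde{\mathcal {E}}_x + \tilde{\mathcal {E}}_y}{2(1-x-y)}\bigg )&= a^2\, \text {Re} \,\mathcal {E}\bigg (\mathcal {E}_{xy} - \frac{\mathcal {E}_x + \mathcal {E}_y}{2(1-x-y)}\bigg ) = a^2\, \mathcal {E}_x \mathcal {E}_y = \tilde{\mathcal {E}}_x \tilde{\mathcal {E}}_y, \\
c := \mathcal {E}(0,0), \quad a = \frac{1}{\text {Re} \,c}, \quad b = -\frac{\text {Im} \,c}{\text {Re} \,c} \quad&\Longrightarrow \quad \tilde{\mathcal {E}}(0,0) = \frac{c - i\, \text {Im} \,c}{\text {Re} \,c} = 1.
\end{aligned}$$

Since \(\text {Re} \,c > 0\), this choice has \(a > 0\), so the positivity of the real part is preserved by the normalization.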

The analysis of a boundary or initial-boundary value problem for an integrable equation is usually complicated by the fact that not all boundary values are known for a well-posed problem, cf. [13]. This issue does not arise for (1.3), which is a Goursat problem. This means that the presented solution is as effective as the solution of the initial value problem via the inverse scattering transform for an equation such as the KdV or nonlinear Schrödinger equation.

Despite its great importance in the context of gravitational waves, there are few results in the literature on the Goursat problem (1.3). In fact, rather than solving a given initial or boundary value problem, most of the literature on the Ernst equation has dealt with the generation of new exact solutions via solution-generating techniques, cf. [17, 21, 22]. Solving an initial or boundary value problem is much more difficult than generating particular solutions. Indeed, even if a large class of particular solutions is known, the problem of determining which of these solutions satisfies the given initial and boundary conditions remains a highly nonlinear problem, often as difficult as the original problem. As noted by Griffiths [17, p. 210], “What would be much more significant would be to find a practical way to determine the solution in the interaction region for an arbitrary set of initial conditions.”

Regarding the problem of determining the interaction of two colliding plane waves from arbitrary initial conditions, important first progress was made in a series of papers by Hauser and Ernst, see [18]. Their approach is based on the so-called Kinnersley H-potential [20] rather than on equation (1.1). In terms of the \(2\times 2\)-matrix valued Kinnersley potential H(r, s), the problem of determining the spacetime metric in the interaction region can be formulated as a Goursat problem in the triangular region

for the equation (see Eq. (2.10) in [18])

Hauser and Ernst were able to relate the solution of this problem to the solution of a homogeneous Hilbert problem. The analysis of [18] relies, at least implicitly, on the fact that equation (1.7) admits the Lax pair (see Eq. (3.1) in [18])

where \(P(r,s,\tau )\) is a \(2 \times 2\)-matrix valued eigenfunction and \(\tau \in {\mathbb {C}}\) is the spectral parameter.

The authors of [15] have addressed the Goursat problem in the triangle \(\Delta \) for the equation

where g(r, s) is a \(2 \times 2\)-matrix valued function. Equation (1.8) is related to the hyperbolic Ernst equation (1.1) as follows: Letting

Eq. (1.8) reduces to the scalar equation

which is related to Eq. (1.1) by the change of variables \(y = (r+1)/2\) and \(x = (1-s)/2\). Through a clever series of steps, the authors of [15] express the solution of (1.8) in terms of the solution of a RH problem.

Our approach here is inspired by the works [23, 25, 31] on the elliptic Ernst equation. We have also drawn some inspiration from [15] and [18], although in contrast to these references, we analyze equation (1.1). Two further differences between the present work and [15] are:

- (i)

It is assumed in [15] that the solution is \(C^2\) on all of \(\Delta \) up to and including the non-diagonal part of the boundary. However, as explained above (see equation (1.5)), the Ernst potentials relevant for gravitational waves have boundary values \(\mathcal {E}(x,0)\) and \(\mathcal {E}(0,y)\) whose derivatives are not continuous (actually unbounded) at the origin. Here we allow for such singularities in \(\mathcal {E}_x(x,0)\) and \(\mathcal {E}_y(0,y)\). These singularities transfer, in general, into singularities of the associated eigenfunction solutions of the Lax pair, and the rigorous treatment of all these singularities was one of the main challenges of the present work.

- (ii)

The normalization condition for the RH problem derived in [15] involves the solution itself; hence the solution representation is not effective. We circumvent this problem by defining the eigenfunctions on a Riemann surface \(\mathcal {S}_{(x,y)}\) with branch points at x and \(1-y\). The Riemann surface \(\mathcal {S}_{(x,y)}\) is dynamic in the sense that it depends on the spatial point (x, y). This dependence on (x, y) creates some technical difficulties which we handle by introducing a map \(F_{(x,y)}\) from \(\mathcal {S}_{(x,y)}\) to the standard Riemann sphere which takes the two moving branch points to the two fixed points \(-1\) and 1. After transferring the RH problem to the Riemann sphere in this way, we can analyze it using techniques from the theory of singular integral equations.

In the traditional implementation of the inverse scattering transform, the two equations in the Lax pair are treated separately—usually the spatial part of the Lax pair is first used to define the scattering data and the temporal part is then used to determine the time evolution. The Goursat problem (1.3) does not fit this pattern, so a different approach is required; this is one reason why the solution of the problem (1.3) has proved elusive. Actually, the approach in [15] was one of the first implementations of a general framework for the analysis of boundary value problems for integrable PDEs now known as the unified transform or Fokas method [11] (see also [3, 7, 12, 13, 34]). In this method the two equations in the Lax pair are analyzed simultaneously rather than separately [14]. The ideas of this method play an important role also in this paper.

It is an interesting open problem to investigate whether existence and uniqueness results for (1.3) can be obtained also via functional analytic techniques. As was explained already in Chapter IV of Goursat’s original treatise [16], existence and uniqueness results for Goursat problems for linear hyperbolic PDEs can be established by means of successive approximations and Riemann’s method (see also [6]). It is possible to extend these ideas to prove existence theorems also for certain nonlinear Goursat problems [35, 38]. However, even in the linear case, these theorems tend to assume that \(\{\mathcal {E}, \mathcal {E}_x, \mathcal {E}_y, \mathcal {E}_{xy}\}\) are all continuous [6, 16, 35], or at least that the boundary values are Lipschitz [38]. These conditions fail for the assumptions (1.4) relevant for gravitational waves.

We have become aware of some relatively recent works in the physics literature which also address the initial value problem for two colliding plane gravitational waves [1, 2, 33]. Compared with these papers, our work is more mathematical in character and there appears to be little overlap.

Let us finally point out that many exact solutions describing colliding plane gravitational waves are known (see e.g. [5, 9, 32, 37]) and that there is a growing literature on colliding gravitational waves which are not necessarily plane (see e.g. [26, 27]).

1.1 Organization of the paper

We begin by establishing some notation in Sect. 2. Our main results (Theorems 1–4) are stated in Sect. 3.

In Sect. 4, as preparation for the general case, we analyze the special case in which the colliding waves have collinear polarization. In this case, the problem reduces to a problem for the so-called Euler–Darboux equation. We prove a theorem for this equation (Theorem 5) which is analogous to Theorems 1–4.

In Sect. 5, we discuss the Lax pair of equation (1.1) and analyze the spectral data as well as the uniqueness of the solution of the corresponding RH problem.

In Sect. 6, we present the proofs of Theorems 1–4.

Section 7 contains two short examples and the “Appendix” contains some background on the origin of the Goursat problem (1.3) in the context of colliding gravitational waves.

2 Notation

We introduce notation that will be used throughout the paper.

We let D denote the triangular region defined in (1.2) and displayed in Fig. 1. Given \(\delta > 0\), we let \(D_\delta \) denote the slightly smaller triangular region obtained by removing a narrow strip along the diagonal of D as follows (see Fig. 2):

The interiors of D and \(D_\delta \) will be denoted by \({{\,\mathrm{int}\,}}D\) and \({{\,\mathrm{int}\,}}D_\delta \), respectively. The Riemann sphere will be denoted by \(\hat{{\mathbb {C}}} = {\mathbb {C}}\cup \{\infty \}\).

Fig. 2 The triangle \(D_\delta \) defined in (2.1)

2.1 The Riemann surface \(\mathcal {S}_{(x,y)}\)

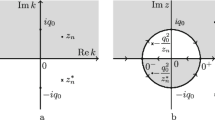

For each \((x,y) \in D\), we let \(\mathcal {S}_{(x,y)}\) denote the Riemann surface consisting of all points \(P := (\lambda , k) \in {\mathbb {C}}^2\) such that

together with two points \(\infty ^+=(1,\infty )\) and \(\infty ^-=(-1,\infty )\) at infinity and a branch point \(x \equiv (\infty ,x)\) which make the surface compact. The surface \(\mathcal {S}_{(x,y)}\) is two-sheeted in the sense that to each \(k \in \hat{{\mathbb {C}}} {\setminus } \{x, 1-y\}\), there correspond exactly two values of \(\lambda \). We introduce a branch cut in the complex k-plane from x to \(1-y\) and, for \(k \in \hat{{\mathbb {C}}}{\setminus } [x,1-y]\), we let \(k^+\) and \(k^-\) denote the corresponding points on the upper and lower sheet of \(\mathcal {S}_{(x,y)}\), respectively. By definition, the upper (lower) sheet is characterized by \(\lambda \rightarrow 1\) (\(\lambda \rightarrow -1\)) as \(k \rightarrow \infty \). Writing \(\lambda (x,y,P)\) for the value of \(\lambda \) corresponding to the point \(P \in \mathcal {S}_{(x,y)}\), we have

where the sign of the square root in (2.3) is chosen so that \(\lambda (x,y,k^+)\) has positive real part.

Fig. 3 The map \(F_{(x,y)}:k \mapsto z = \frac{1+ \lambda }{1 - \lambda }\) is a biholomorphism from the two-sheeted Riemann surface \(\mathcal {S}_{(x,y)}\) to the Riemann sphere \(\hat{{\mathbb {C}}} = {\mathbb {C}}\cup \{\infty \}\). It maps the branch points x and \(1-y\) to \(z = -1\) and \(z = 1\), respectively, and the upper (lower) sheet to the outside (inside) of the unit circle.

2.2 The map \(F_{(x,y)}\)

For each point \((x,y) \in D\), \(\mathcal {S}_{(x,y)}\) is a compact genus zero Riemann surface with branch points at \(k = x\) and \(k = 1-y\). In order to fix the locations of these branch points, we introduce a new variable z by

and let \(F_{(x,y)}:\mathcal {S}_{(x,y)} \rightarrow \hat{{\mathbb {C}}}\) be the map that sends P to z, i.e.,

For each \((x,y) \in D\), \(F_{(x,y)}\) is a biholomorphism (i.e. a bijective holomorphic function whose inverse is also holomorphic) from \(\mathcal {S}_{(x,y)}\) to \(\hat{{\mathbb {C}}}\) which maps the two branch points x and \(1-y\) to \(z = -1\) and \(z = 1\), respectively, see Fig. 3.
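The stated properties of \(F_{(x,y)}\) can be verified directly. The following sketch assumes that the defining relation (2.2) of \(\mathcal {S}_{(x,y)}\) is \(\lambda ^2 = \frac{k-(1-y)}{k-x}\), which is the form suggested by the description in Sect. 2.1 (\(\lambda = \infty \) at \(k = x\) and \(\lambda \rightarrow \pm 1\) as \(k \rightarrow \infty \)), and that \(z = \frac{1+\lambda }{1-\lambda }\).

$$\begin{aligned}
z = \frac{1+\lambda }{1-\lambda } \quad&\Longleftrightarrow \quad \lambda = \frac{z-1}{z+1}, \\
|1+\lambda |^2 - |1-\lambda |^2&= 4\, \text {Re} \,\lambda , \quad \text {so } |z| > 1 \text { exactly when } \text {Re} \,\lambda > 0, \\
k = x: \ \lambda = \infty , \ z = -1;&\qquad k = 1-y: \ \lambda = 0, \ z = 1, \\
\infty ^+: \ \lambda = 1, \ z = \infty ;&\qquad \infty ^-: \ \lambda = -1, \ z = 0, \\
k&= \frac{\lambda ^2 x - (1-y)}{\lambda ^2 - 1} = \frac{x + 1 - y}{2} + \frac{1-x-y}{4}\Big (z + \frac{1}{z}\Big ).
\end{aligned}$$

Under this assumption, the last line shows that the inverse of \(F_{(x,y)}\) followed by the projection onto k is a Joukowski-type map: the unit circle \(|z| = 1\) is sent onto the branch cut \([x, 1-y]\), with \(z = -1\) and \(z = 1\) going to the endpoints x and \(1-y\).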

2.3 The contours \(\Sigma \) and \(\Gamma \)

For each \((x,y) \in D\), we let \(\Sigma _0 \equiv \Sigma _0(x,y)\) denote the shortest path from \(0^+\) to \(0^-\) in \(\mathcal {S}_{(x,y)}\), and we let \(\Sigma _1 \equiv \Sigma _1(x,y)\) denote the shortest path from \(1^-\) to \(1^+\) in \(\mathcal {S}_{(x,y)}\). More precisely,

where, for a subset S of the complex plane, we use the notation \(S^\pm = \{k^\pm \in \mathcal {S}_{(x,y)}\,| \, k \in S\}\) to denote the sets in the upper and lower sheets of \(\mathcal {S}_{(x,y)}\) which project onto S, see Fig. 4. We write \(\Sigma := \Sigma _0 \cup \Sigma _1\) for the union of \(\Sigma _0\) and \(\Sigma _1\).

Given \((x,y) \in D\), we let \(\Gamma _0 \equiv \Gamma _0(x,y)\) and \(\Gamma _1 \equiv \Gamma _1(x,y)\) denote two clockwise nonintersecting smooth contours in the complex z-plane which encircle the real intervals

and

respectively, but which do not encircle zero, see Fig. 5. We let \(\Gamma \equiv \Gamma (x,y)\) denote the union \(\Gamma := \Gamma _0 \cup \Gamma _1\) of \(\Gamma _0\) and \(\Gamma _1\).
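For orientation, the location of the images of \(\Sigma _0\) and \(\Sigma _1\) in the z-plane can be worked out explicitly. This is only a sketch: it assumes that (2.2) reads \(\lambda ^2 = \frac{k-(1-y)}{k-x}\), that \(x, y > 0\) with \(x + y < 1\), and that the two real intervals encircled by \(\Gamma _0\) and \(\Gamma _1\) are the images \(F_{(x,y)}(\Sigma _0)\) and \(F_{(x,y)}(\Sigma _1)\); the symbols \(\lambda _0\) and \(\lambda _1\) are introduced here for illustration.

$$\begin{aligned}
\lambda _0&:= \lambda (x,y,0^+) = \sqrt{\frac{1-y}{x}} > 1, \qquad \lambda _1 := \lambda (x,y,1^+) = \sqrt{\frac{y}{1-x}} \in (0,1), \\
F_{(x,y)}(0^\pm )&= \frac{1 \pm \lambda _0}{1 \mp \lambda _0}, \qquad F_{(x,y)}(0^+)< -1< F_{(x,y)}(0^-)< 0, \\
F_{(x,y)}(1^\pm )&= \frac{1 \pm \lambda _1}{1 \mp \lambda _1}, \qquad 0< F_{(x,y)}(1^-)< 1 < F_{(x,y)}(1^+).
\end{aligned}$$

Under these assumptions the first interval lies on the negative real axis and contains \(z = -1\), the second lies on the positive real axis and contains \(z = 1\), and neither contains \(z = 0\). This is consistent with the requirement that \(\Gamma _0\) and \(\Gamma _1\) do not encircle zero.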

2.4 Boundary values and function spaces

Let \(\Gamma \subset {\mathbb {C}}\) be a piecewise smooth oriented contour. For an analytic function \(m: {\mathbb {C}}{\setminus } \Gamma \rightarrow {\mathbb {C}}\) which extends continuously to \(\Gamma \) from either side, we denote the boundary values of m from the left and right sides of \(\Gamma \) by \(m_+\) and \(m_-\), respectively. Given a subset \(S \subset {\mathbb {R}}^n\), \(n \ge 1\), we let C(S) denote the space of complex-valued continuous functions on S. If S is open, we define \(C^n(S)\) as the space of complex-valued functions on S which are n times continuously differentiable, i.e., all partial derivatives of order \(\le n\) exist and are continuous. By \(\mathcal {B}(X,Y)\), we denote the space of bounded linear maps from a Banach space X to another Banach space Y equipped with the standard operator norm; if \(X = Y\), we write \(\mathcal {B}(X) \equiv \mathcal {B}(X,X)\).

3 Main results

We adopt the following notion of a \(C^n\)-solution of the Goursat problem (1.3).

Definition 3.1

Let \(\mathcal {E}_0(x)\), \(x \in [0, 1)\), and \(\mathcal {E}_1(y)\), \(y \in [0,1)\), be complex-valued functions. A function \(\mathcal {E}:D \rightarrow {\mathbb {C}}\) is called a \(C^n\)-solution of the Goursat problem for (1.1) in D with data \(\{\mathcal {E}_0, \mathcal {E}_1\}\) if

We next state the four main results of the paper (Theorems 1–4), which all address different aspects of the Goursat problem (1.3).

In the formulation of Theorems 1–4, it is assumed that \(n \ge 2\) is an integer and that \(\mathcal {E}_0(x)\), \(x \in [0, 1)\), and \(\mathcal {E}_1(y)\), \(y \in [0,1)\), are two complex-valued functions satisfying the assumptions in (1.4) for a fixed \(\alpha \in [0,1)\). The first theorem provides a representation formula for the solution in terms of the given boundary data via a RH problem.

Theorem 1

(Representation formula) If \(\mathcal {E}(x,y)\) is a \(C^n\)-solution of the Goursat problem for (1.1) in D with data \(\{\mathcal {E}_0, \mathcal {E}_1\}\), then this solution can be expressed in terms of the boundary values \(\mathcal {E}_0(x)\) and \(\mathcal {E}_1(y)\) by

where m(x, y, z) is the unique solution of the \(2 \times 2\)-matrix RH problem

and the jump matrix v(x, y, z) is defined as follows: Let \(\Phi _0\) and \(\Phi _1\) be the unique solutions of the linear Volterra integral equations

where \(\textsf {U} _0\) and \(\textsf {V} _1\) are defined by

Then

Theorem 2 establishes uniqueness of the \(C^n\)-solution.

Theorem 2

(Uniqueness) The \(C^n\)-solution \(\mathcal {E}(x,y)\) of the Goursat problem for (1.1) in D with data \(\{\mathcal {E}_0, \mathcal {E}_1\}\) is unique, if it exists. In fact, the value of \(\mathcal {E}\) at a point \((x,y) \in D\) is uniquely determined by the boundary values \(\mathcal {E}_0(x')\) and \(\mathcal {E}_1(y')\) for \(0 \le x' \le x\) and \(0 \le y' \le y\).

Theorem 3 establishes existence of a \(C^n\)-solution—in the collinear case, for general data; otherwise under a small-norm assumption.

Theorem 3

(Existence and regularity) For each \(\delta > 0\), the following three existence and regularity results hold:

- (a)

Suppose the \(2 \times 2\)-matrix RH problem (3.2) has a solution for all \((x,y)\in D_\delta \). Then there exists a \(C^n\)-solution of the Goursat problem for (1.1) in \(D_\delta \) with data \(\{\mathcal {E}_0|_{[0,1-\delta )},\mathcal {E}_1|_{[0,1-\delta )} \}\).

- (b)

Whenever the \(L^1\)-norms of \(\mathcal {E}_{0x}/(\text {Re} \,\mathcal {E}_0)\) and \(\mathcal {E}_{1y}/(\text {Re} \,\mathcal {E}_1)\) on \([0,1-\delta )\) are sufficiently small, there exists a \(C^n\)-solution of the Goursat problem for (1.1) in \(D_\delta \) with data \(\{\mathcal {E}_0|_{[0,1-\delta )},\mathcal {E}_1|_{[0,1-\delta )}\}\).

- (c)

If \(\mathcal {E}_0, \mathcal {E}_1 >0\) on \([0,1-\delta )\), i.e., if the incoming waves are collinearly polarized, then there exists a \(C^n\)-solution of the Goursat problem for (1.1) in \(D_\delta \) with data \(\{\mathcal {E}_0|_{[0,1-\delta )},\mathcal {E}_1|_{[0,1-\delta )} \}\).

Remark 3.2

Part (a) of Theorem 3 shows that the solution \(\mathcal {E}(x,y)\) exists and has the same regularity as the given data as long as the associated RH problem has a solution. By taking \(\delta > 0\) arbitrarily small, we see that the same statement holds also in all of D.

Theorem 4 establishes explicit formulas for the singular behavior of the solution near the boundary in terms of the given data.

Theorem 4

(Boundary behavior) Let \(\alpha \in (0,1)\) and let \(n \ge 2\) be an integer. Let \(\mathcal {E}(x,y)\) be a \(C^n\)-solution of the Goursat problem for (1.1) in D with data \(\{\mathcal {E}_0, \mathcal {E}_1\}\). Let \(m_1, m_2 \in {\mathbb {C}}\) denote the values of \(x^\alpha \mathcal {E}_{0x}(x)\) and \(y^\alpha \mathcal {E}_{1y}(y)\) at the origin, i.e.,

Then the solution \(\mathcal {E}(x,y)\) has the following behavior near the boundary:

In particular,

Remark 3.3

Theorem 4 yields the following important result for the collision of plane gravitational waves: A solution \(\mathcal {E}(x,y)\) of the Goursat problem for (1.1) fulfills the gravitational wave boundary conditions (1.5) if and only if the boundary data \(\mathcal {E}(x,0) = \mathcal {E}_0(x)\) and \(\mathcal {E}(0,y) = \mathcal {E}_1(y)\) are such that \(\lim _{x \downarrow 0} x^{\alpha } |\mathcal {E}_{0x}(x)|\) and \(\lim _{y \downarrow 0} y^{\alpha } |\mathcal {E}_{1y}(y)|\) belong to the real interval \([1, \sqrt{2})\). In particular, the behavior of \(\mathcal {E}_{x}(x,0)\) and \(\mathcal {E}_{y}(0,y)\) at the origin fully determines whether the functions \(\mathcal {E}_x(x,y)\) and \(\mathcal {E}_y(x,y)\) have the appropriate singular behavior near the edges \(\partial D \cap \{x =0\}\) and \(\partial D \cap \{y =0\}\).

4 Collinearly polarized waves

Before turning to the general case, it is useful to first consider the special case in which the Ernst potential \(\mathcal {E}\) is strictly positive. In the context of gravitational waves, this corresponds to the important situation when the two colliding waves have collinear polarization, see [17].

4.1 The Euler–Darboux equation

If the Ernst potential \(\mathcal {E}\) is strictly positive, we can write \(\mathcal {E}(x,y) = e^{-V(x,y)}\), where V(x, y) is a real-valued function. A simple computation then shows that \(\mathcal {E}\) satisfies the Ernst equation (1.1) if and only if V satisfies the linear hyperbolic equation

which is a version of the Euler–Darboux equation [29]. Since (4.1) is a linear equation, we can, without loss of generality, assume that V is real-valued and that \(V(0,0) =0\).
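The "simple computation" can be spelled out as follows. This is a sketch under the assumption that (1.1) has the form \(\text {Re} \,\mathcal {E}\big (\mathcal {E}_{xy} - \frac{\mathcal {E}_x + \mathcal {E}_y}{2(1-x-y)}\big ) = \mathcal {E}_x \mathcal {E}_y\); neither (1.1) nor (4.1) is displayed in this section, so both forms used here are assumptions.

$$\begin{aligned}
\mathcal {E} = e^{-V}: \quad \mathcal {E}_x&= -V_x\, \mathcal {E}, \quad \mathcal {E}_y = -V_y\, \mathcal {E}, \quad \mathcal {E}_{xy} = (V_x V_y - V_{xy})\, \mathcal {E}, \quad \text {Re} \,\mathcal {E} = \mathcal {E}, \\
\text {Re} \,\mathcal {E}\bigg (\mathcal {E}_{xy} - \frac{\mathcal {E}_x + \mathcal {E}_y}{2(1-x-y)}\bigg ) - \mathcal {E}_x \mathcal {E}_y&= -\mathcal {E}^2 \bigg (V_{xy} - \frac{V_x + V_y}{2(1-x-y)}\bigg ).
\end{aligned}$$

Since \(\mathcal {E}^2 > 0\), the left-hand side vanishes if and only if \(V_{xy} - \frac{V_x + V_y}{2(1-x-y)} = 0\), which would be the form of (4.1) under this assumption. The normalization \(\mathcal {E}(0,0) = 1\) corresponds to \(V(0,0) = 0\).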

Remark 4.1

(Linear limit) In addition to being a reformulation of (1.1) in the special case of collinearly polarized waves, equation (4.1) can also be viewed as the linearized version of (1.1). Indeed, substituting \(\mathcal {E}(x,y) = 1 + \epsilon V(x,y) + O(\epsilon ^2)\) into (1.1) and considering the terms of \(O(\epsilon )\), we see that (4.1) is the linear limit of (1.1).
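The expansion behind this remark is short enough to write out. As a sketch, again assuming that (1.1) has the form \(\text {Re} \,\mathcal {E}\big (\mathcal {E}_{xy} - \frac{\mathcal {E}_x + \mathcal {E}_y}{2(1-x-y)}\big ) = \mathcal {E}_x \mathcal {E}_y\) (an assumption, since the equation is not displayed in this section):

$$\begin{aligned}
\mathcal {E}&= 1 + \epsilon V + O(\epsilon ^2), \qquad \text {Re} \,\mathcal {E} = 1 + O(\epsilon ), \qquad \mathcal {E}_x \mathcal {E}_y = O(\epsilon ^2), \\
\text {Re} \,\mathcal {E}\bigg (\mathcal {E}_{xy} - \frac{\mathcal {E}_x + \mathcal {E}_y}{2(1-x-y)}\bigg )&= \epsilon \bigg (V_{xy} - \frac{V_x + V_y}{2(1-x-y)}\bigg ) + O(\epsilon ^2).
\end{aligned}$$

The nonlinear right-hand side only enters at order \(\epsilon ^2\), so the terms of order \(\epsilon \) give a linear equation for V.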

The analysis of the Euler–Darboux equation (4.1) presented in this section serves two purposes. First, it is used to prove the part of Theorem 3 regarding existence in the collinearly polarized case. Second, it turns out that the more difficult case of noncollinearly polarized solutions can be analyzed following steps which are conceptually very similar to—but technically more difficult than—those involved in the analysis of the collinear case. In fact, the analysis of (1.1) presented in later sections strongly relies on the insight gained in this section.

We are interested in the following Goursat problem for (4.1) in the triangle D: Given \(V_0(x)\), \(x \in [0, 1)\), and \(V_1(y)\), \(y \in [0,1)\), find a solution V(x, y) of (4.1) in D such that \(V(x,0) = V_0(x)\) for \(x \in [0,1)\) and \(V(0,y) = V_1(y)\) for \(y \in [0,1)\). We introduce a notion of \(C^n\)-solution of this problem as follows.

Definition 4.2

Let \(V_0(x)\), \(x \in [0, 1)\), and \(V_1(y)\), \(y \in [0,1)\), be real-valued functions and \(\alpha \in [0,1)\). We define a function \(V:D \rightarrow {\mathbb {R}}\) to be a \(C^n\)-solution of the Goursat problem for (4.1) in D with data \(\{V_0, V_1\}\) if

The following theorem establishes the existence and uniqueness of a solution of the Goursat problem for (4.1) in D. It also provides a representation for the solution in terms of the boundary data and characterizes the singular behavior near the boundary.

Theorem 5

(Solution of the Euler–Darboux equation in a triangle) Let \(n \ge 2\) be an integer. Let \(V_0(x)\), \(x \in [0, 1)\), and \(V_1(y)\), \(y \in [0,1)\), be two real-valued functions such that

Then there exists a unique \(C^n\)-solution V(x, y) of the Goursat problem for (4.1) in D with data \(\{V_0, V_1\}\). Moreover, this solution is given in terms of the boundary values \(V_0(x)\) and \(V_1(y)\) by

where m(x, y, z) is the unique solution of the scalar RH problem

and the jump v(x, y, z) is defined by

with

Furthermore, if \(\alpha \in (0,1)\) is such that the functions \(x^\alpha V_{0x}\) and \(y^\alpha V_{1y}\) are continuous on [0, 1) and

then the solution V(x, y) has the following behavior near the boundary:

Remark 4.3

The scalar RH problem (4.4) has the unique solution

Hence the solution V(x, y) can be expressed in terms of v by

Collapsing the contour \(\Gamma \) in (4.9) onto the intervals in (2.5) and changing variables from z to k leads to the following representation for the solution in terms of Abel type integrals:

for \((x,y) \in D\). Formulas analogous to (4.10) for equation (4.1) have been derived in [18] and [15].

Remark 4.4

The representation (4.10) can be found more directly by formulating a RH problem for \(\Phi \) on \(\mathcal {S}_{(x,y)}\) with jump across \(\Sigma \). This is essentially the approach adopted in [15]. The representation (4.10) has the advantage that it is explicit in its dependence on \(V_0\) and \(V_1\), but it has the disadvantage that the integrands are singular at some of the endpoints of the integration intervals. These singularities complicate the verification that V satisfies the appropriate regularity and boundary conditions, especially in the situation relevant for gravitational waves where \(V_{0x}\) and \(V_{1y}\) are singular at the origin. For the nonlinear equation (1.1), this becomes a serious complication. For this reason, we have formulated the RH problems in Theorem 1 and Theorem 5 in terms of the contour \(\Gamma \) (which avoids the problematic endpoints of the intervals in (2.5)) rather than in terms of a contour running along the real axis. However, the representation (4.10) allows for applying more classical techniques. This approach is used in [28] to compute an asymptotic expansion of the solution near the diagonal of D.

Remark 4.5

An alternative integral formula for the solution of the Goursat problem for the Euler–Darboux equation was derived in [36] by applying Riemann’s classical method [6, 16]. Whereas the representation (4.10) relies on Abel integrals, the expression of [36] is given in terms of the Legendre function \(P_{-1/2}\) of order \(-1/2\).

Remark 4.6

In order to emphasize the analogy between (1.1) and its linearized version (4.1), we will use the same symbols in this section for the various linearized quantities as we use elsewhere for the corresponding quantities of the nonlinear problem. Many quantities which are matrices in the noncollinear case reduce to scalar quantities in the collinear case. For example, in other sections \(\Phi \) will denote a \(2 \times 2\)-matrix valued eigenfunction, but in this section \(\Phi \) is a scalar-valued eigenfunction.

4.2 Proof of Theorem 5

The proof of Theorem 5 is divided into three parts. In the first part, we prove uniqueness and establish the solution representation formula (4.3). In the second part, we prove existence. In the third part, we consider the boundary behavior.

4.2.1 Proof of uniqueness and of (4.3)

Let \(V_0(x)\), \(x \in [0, 1)\), and \(V_1(y)\), \(y \in [0,1)\) be real-valued functions satisfying (4.2) for some \(n \ge 2\) and \(\alpha \in [0, 1)\). Suppose that V(x, y) is a \(C^n\)-solution of the Goursat problem for (4.1) in D with data \(\{V_0, V_1\}\). We will show that V(x, y) can be expressed in terms of \(V_0\) and \(V_1\) by (4.3).

Equation (4.1) admits the Lax pair

where \(\Phi (x,y,k)\) is an eigenfunction, \(\lambda = \lambda (x,y,k)\) is defined by (2.2), and k is a complex spectral parameter. Indeed, using the relations

it is straightforward to check that the compatibility condition \(\Phi _{xy} = \Phi _{yx}\) of (4.11) is equivalent to (4.1).
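The compatibility check can be carried out explicitly. The sketch below assumes that (2.2) reads \(\lambda ^2 = \frac{k-(1-y)}{k-x}\) and that the Lax pair (4.11) is \(\Phi _x = \lambda V_x\), \(\Phi _y = \lambda ^{-1} V_y\); these displayed equations are missing from this section, so both forms are assumptions.

$$\begin{aligned}
\lambda ^2 = \frac{k-(1-y)}{k-x} \quad&\Longrightarrow \quad \frac{1}{k-x} = \frac{1-\lambda ^2}{1-x-y}, \\
\lambda _x = \frac{\lambda }{2(k-x)} = \frac{\lambda (1-\lambda ^2)}{2(1-x-y)},&\qquad \lambda _y = \frac{1}{2\lambda (k-x)} = \frac{1-\lambda ^2}{2\lambda (1-x-y)}, \\
(\lambda V_x)_y - (\lambda ^{-1} V_y)_x&= \lambda _y V_x + \frac{\lambda _x}{\lambda ^2} V_y + \Big (\lambda - \frac{1}{\lambda }\Big ) V_{xy} \\
&= -\frac{1-\lambda ^2}{\lambda } \bigg (V_{xy} - \frac{V_x + V_y}{2(1-x-y)}\bigg ).
\end{aligned}$$

For \(x + y < 1\) and finite k one has \(\lambda ^2 \ne 1\), so under these assumptions the compatibility condition holds for all k exactly when \(V_{xy} - \frac{V_x + V_y}{2(1-x-y)} = 0\).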

The occurrence of \(\lambda \) in (4.11) implies that the spectral parameter is naturally considered as an element of the Riemann surface \(\mathcal {S}_{(x,y)}\). Thus, we will henceforth view \(\Phi (x,y,\cdot )\) as a function defined on \(\mathcal {S}_{(x,y)}\) and write \(\Phi (x,y,P)\) for the value of \(\Phi \) at \(P = (\lambda , k) \in \mathcal {S}_{(x,y)}\). We emphasize, however, that the partial derivatives \(\Phi _x(x,y,P)\) and \(\lambda _x(x,y,P)\) (resp. \(\Phi _y(x,y,P)\) and \(\lambda _y(x,y,P)\)) are still computed with (y, k) (resp. (x, k)) held fixed (and \(\lambda \) allowed to change).

The basic idea in what follows is to write (4.11) in the differential form \(d\Phi = W\), where W denotes the one-form \(W = \lambda V_x dx + \frac{1}{\lambda } V_y dy\), and then define a solution \(\Phi \) of (4.11) by

Since the one-form W is closed, the integral on the right-hand side is independent of path. However, since W in general is singular on the boundary of D, we need to be more careful when defining \(\Phi \). We therefore choose to define \(\Phi \) using the specific contour which consists of the horizontal segment from (0, 0) to (x, 0) followed by the vertical segment from (x, 0) to (x, y) (see the left half of Fig. 6), that is, we define

Since \(x^\alpha V_x, y^\alpha V_y \in C(D)\), the integrals on the right-hand side of (4.12) are well-defined. The next lemma establishes several properties of \(\Phi \).

Lemma 4.7

(Solution of Lax pair equations) The function \(\Phi (x,y,P)\) defined in (4.12) has the following properties:

- (a)

\(\Phi \) can be alternatively expressed using the contour consisting of the vertical segment from (0, 0) to (0, y) followed by the horizontal segment from (0, y) to (x, y) (see the right half of Fig. 6):

$$\begin{aligned} \Phi (x,y, k^\pm )&= \int _0^y \lambda (0,y',k^\pm )^{-1} V_y(0,y') dy' + \int _0^x \lambda (x',y,k^\pm ) V_x(x',y) dx',\nonumber \\&\quad (x,y) \in D, \ k \in \hat{{\mathbb {C}}} {\setminus } [0,1]. \end{aligned}$$(4.13)

- (b)

For each \(k \in \hat{{\mathbb {C}}} {\setminus } [0,1]\), the function \((x,y) \mapsto \Phi (x,y,k^+)\) is continuous on D and is \(C^n\) on \({{\,\mathrm{int}\,}}D\).

- (c)

For each \(k \in \hat{{\mathbb {C}}} {\setminus } [0,1]\), the functions

$$\begin{aligned}&(x,y) \mapsto x^\alpha \Phi _x(x,y,k^+), \quad (x,y) \mapsto y^\alpha \Phi _y(x,y,k^+), \\&(x,y) \mapsto x^\alpha y^\alpha \Phi _{xy}(x,y,k^+), \end{aligned}$$

are continuous on D.

- (d)

\(\Phi \) obeys the symmetries

$$\begin{aligned} {\left\{ \begin{array}{ll} \Phi (x,y,k^+) = -\Phi (x, y, k^-),\\ \Phi (x,y,k^\pm ) = \overline{\Phi (x, y,\bar{k}^\pm )}, \end{array}\right. } \qquad (x,y) \in D, \ k \in \hat{{\mathbb {C}}} {\setminus } [0,1]. \end{aligned}$$

- (e)

For each \((x,y) \in D\), \(\Phi (x,y,P)\) extends continuously to an analytic function of \(P \in \mathcal {S}_{(x,y)} {\setminus } \Sigma \), where \(\Sigma = \Sigma _0 \cup \Sigma _1\) is the contour defined in (2.4).

- (f)

\(\Phi (x,y,\infty ^+) = V(x,y)\) for \((x,y) \in D\).

Proof

Let \((x,y) \in D\). In order to prove (a), we need to show that the expression

vanishes. Since \(x^\alpha V_x, y^\alpha V_y, x^\alpha y^\alpha V_{xy} \in C(D)\), the function \(\lambda (\cdot ,y',k^\pm )^{-1} V_y(\cdot ,y')\) is absolutely continuous on the compact interval [0, x] for each \(y' \in (0, y]\). Similarly, the function \(\lambda (x',\cdot ,k^\pm ) V_x(x',\cdot )\) is absolutely continuous on [0, y] for each \(x' \in (0, x]\). Hence, we can write (4.14) as

Since V is a solution of (4.1), the Lax pair compatibility condition \((\lambda V_x)_y = (\lambda ^{-1} V_y)_x\) is satisfied for \((x,y) \in {{\,\mathrm{int}\,}}D\). The assumption \(x^\alpha V_x, y^\alpha V_y, x^\alpha y^\alpha V_{xy} \in C(D)\) implies that \(V_x, V_y, V_{xy} \in L^1(D_\delta )\) for each \(\delta > 0\). Hence Fubini’s theorem implies that the expression in (4.15) vanishes. This proves (a). Moreover, if \(k \in \hat{{\mathbb {C}}} {\setminus } [0,1]\), then it follows from (4.12) and (4.13) that \(\Phi \) is a continuous function of \((x,y) \in D\) and a \(C^n\)-function of \((x,y) \in {{\,\mathrm{int}\,}}D\), which proves (b).

Let \(k \in \hat{{\mathbb {C}}} {\setminus } [0,1]\). Then

The assumption \(x^\alpha V_x \in C(D)\) implies that the right-hand side of (4.16) is a continuous function of \((x,y) \in D\). Similarly, we see that \(y^\alpha \Phi _y(x,y,k^+)\) and

are continuous functions of \((x,y) \in D\). This proves (c).

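The symmetries in (d) are a consequence of the symmetries

$$\begin{aligned} \lambda (x,y,k^+) = -\lambda (x,y,k^-), \qquad \lambda (x,y,k^\pm ) = \overline{\lambda (x,y,\bar{k}^\pm )}, \end{aligned}$$(4.17)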

and the definition (4.12) of \(\Phi \).

To prove (e), we note that \(\lambda (x',0,k^+)\) is an analytic function of \(k \in \hat{{\mathbb {C}}} {\setminus } [x',1]\) and \(\lambda (x,y',k^+)^{-1}\) is an analytic function of \(k \in \hat{{\mathbb {C}}} {\setminus } [x, 1-y']\). It follows that \(\Phi (x,y, k^+)\) and \(\Phi (x,y, k^-) = -\Phi (x,y, k^+)\) are analytic functions of \(k \in \hat{{\mathbb {C}}} {\setminus } [0,1]\). Moreover, since

we have

This shows that the values of \(\Phi \) on the upper and lower sheets of \(\mathcal {S}_{(x,y)}\) fit together across the branch cut; hence \(\Phi \) extends to an analytic function of \(P \in \mathcal {S}_{(x,y)} {\setminus } \Sigma \). This proves (e).

To prove (f), we note that \(\lambda (x,y,\infty ^+) = 1\) for all \((x,y) \in D\), which gives

Let \(\delta > 0\). Since \(V_{0x} \in L^1((0,1-\delta ))\), \(V_0\) belongs to the Sobolev space \(W^{1,1}((0,1-\delta ))\). Hence \(V_0\) is absolutely continuous on \((0, 1-\delta )\). Using that \(V_0\in C([0, 1))\), we see that \(V_0\) is absolutely continuous on the compact interval \([0, 1-\delta ]\). Hence,

Moreover, since \(V_y \in L^1(D_\delta )\), we have \(V_y(x, \cdot ) \in L^1((0,1-x-\delta ))\) for a.e. \(x \in [0,1-\delta )\). Hence \(V(x, \cdot ) \in W^{1,1}((0,1-x-\delta ))\) for a.e. \(x \in [0,1-\delta )\). Since V is also continuous on D, we conclude that \(V(x, \cdot )\) is absolutely continuous on the compact interval \([0,1-x-\delta ]\) for a.e. \(x \in [0,1-\delta )\). Hence,

Substituting (4.19) and (4.20) into (4.18) yields

Since \(V(0,0) = 0\), part (f) follows. \(\square \)
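Parts (a) and (f) of Lemma 4.7 can be illustrated numerically. The following sketch is not part of the proof and rests on stated assumptions: (2.2) is taken to be \(\lambda ^2 = (k-(1-y))/(k-x)\), and the test function \(V(x,y) = x - y\) is a hypothetical smooth choice (it satisfies \(V_{xy} = 0 = V_x + V_y\) and \(V(0,0)=0\)). The quadrature is a plain composite Simpson rule, and all function names are illustrative.

```python
import cmath


def lam(x, y, k):
    """lambda(x, y, k^+), ASSUMING (2.2) reads lambda^2 = (k - (1 - y)) / (k - x)."""
    return cmath.sqrt((k - (1 - y)) / (k - x))


def simpson(f, a, b, n=2000):
    """Composite Simpson rule with n (even) subintervals; f may be complex-valued."""
    h = (b - a) / n
    s = f(a) + f(b)
    for i in range(1, n):
        s += (4 if i % 2 else 2) * f(a + i * h)
    return s * h / 3


# Hypothetical test function V = x - y, so that V_x = 1 and V_y = -1.
def phi_via_412(x, y, k):
    """Horizontal segment (0,0)->(x,0), then vertical segment (x,0)->(x,y)."""
    return simpson(lambda t: lam(t, 0.0, k), 0.0, x) - simpson(lambda t: 1 / lam(x, t, k), 0.0, y)


def phi_via_413(x, y, k):
    """Vertical segment (0,0)->(0,y), then horizontal segment (0,y)->(x,y)."""
    return -simpson(lambda t: 1 / lam(0.0, t, k), 0.0, y) + simpson(lambda t: lam(t, y, k), 0.0, x)


k = 2 + 1j
print(abs(phi_via_412(0.3, 0.4, k) - phi_via_413(0.3, 0.4, k)))  # path independence, part (a)
print(phi_via_412(0.3, 0.4, 1e9))  # close to V(0.3, 0.4) = -0.1 for large k, part (f)
```

The two contours give the same value up to quadrature error, and for very large \(k\) on the upper sheet the value approaches \(V(x,y)\), in line with \(\lambda (x,y,\infty ^+) = 1\).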

Lemma 4.8

For each \((x,y) \in D\),

extend continuously to analytic functions \(\mathcal {S}_{(x,y)} {\setminus } \Sigma _1 \rightarrow {\mathbb {C}}\) and \(\mathcal {S}_{(x,y)} {\setminus } \Sigma _0 \rightarrow {\mathbb {C}}\), respectively.

Remark 4.9

The point P in (4.21) belongs to \(\mathcal {S}_{(x,y)}\) whereas the maps \(\Phi (x,0,\cdot )\) and \(\Phi (0,y,\cdot )\) are defined on \(\mathcal {S}_{(x,0)}\) and \(\mathcal {S}_{(0,y)}\), respectively. The interpretation of equation (4.21) therefore deserves a comment of clarification: If (x, y) and \((\tilde{x}, \tilde{y})\) are two points in D and F is a map from \(\mathcal {S}_{(x,y)}\) to some space X, then F naturally induces a map \(\tilde{F}\) from \(\mathcal {S}_{(\tilde{x}, \tilde{y})} {\setminus } \big ([0,1]^+ \cup [0,1]^-\big )\) to X according to \(\tilde{F}(k^\pm ) = F(k^\pm )\) for \(k \in \hat{{\mathbb {C}}} {\setminus } [0,1].\) We sometimes, as in (4.21) (and also in (3.5)), identify these two maps and simply write F for \(\tilde{F}\).

Proof of Lemma 4.8

Fix \((x,y) \in D\). Let U be an open set in \(\mathcal {S}_{(x,y)} {\setminus } \Sigma _0\). Then

where the values of \(\Phi (0,y,P)\) and \(\lambda (x',y,P)\) in (4.22) are to be interpreted as in Remark 4.9. Since

defines an analytic map \(U \rightarrow {\mathbb {C}}\) for each \(x' \in [0,x]\), the map (4.22) is also analytic for \(P \in U\). Since U was arbitrary, this establishes the desired statement for the second map in (4.21); the proof for the first map is similar. \(\square \)

Let \(\Omega _0\), \(\Omega _1\), and \(\Omega _\infty \) denote the three open components of \(\hat{{\mathbb {C}}} {\setminus } \Gamma \) chosen so that (see Fig. 7)

Lemma 4.10

The complex-valued function m(x, y, z) defined by

satisfies the RH problem (4.4) and the relation (4.3) for each \((x,y) \in D\).

Proof

Since \(F_{(x,y)}\) is a biholomorphism \(\mathcal {S}_{(x,y)} \rightarrow \hat{{\mathbb {C}}}\), we infer from Lemmas 4.7 and 4.8 that \(m(x,y, \cdot )\) is analytic in \(\hat{{\mathbb {C}}} {\setminus } \Gamma \) and \(m(x,y,z) =O(z^{-1})\) as \(z\rightarrow \infty \) for each \((x,y) \in D\). The jump condition in (4.4) holds as a consequence of the definition (4.5) of v(x, y, z) and the fact that

Finally, since \(0 \in \Omega _\infty \) and \(F_{(x,y)}^{-1}(0) = \infty ^-\), (4.24) and Lemma 4.7 yield

This proves (4.3). \(\square \)

We have shown that if V(x, y) is a \(C^n\)-solution of the Goursat problem for (4.1) in D with data \(\{V_0, V_1\}\), then V(x, y) can be expressed in terms of \(V_0\) and \(V_1\) by (4.3). This also proves that the solution V is unique if it exists, and completes the first part of the proof.

4.2.2 Proof of existence

The second part of the proof is devoted to proving existence. Let us therefore suppose that \(V_0(x)\), \(x \in [0, 1)\), and \(V_1(y)\), \(y \in [0,1)\) are real-valued functions satisfying (4.2) for some \(n \ge 2\). We will construct a solution V(x, y) of the associated Goursat problem as follows: Using the given data \(V_0\) and \(V_1\), we define \(\Phi _0(x,P)\) and \(\Phi _1(y,P)\) by (4.6). Then we define the jump function v by (4.5) and let m(x, y, z) denote the unique solution of the RH problem (4.4). Finally, we show that the function V(x, y) defined in terms of m(x, y, 0) via (4.3) constitutes a \(C^n\)-solution of the Goursat problem in D with data \(\{V_0, V_1\}\). The proof proceeds through a series of lemmas.

Lemma 4.11

(Solution of the x-part) The eigenfunction \(\Phi _0(x,P)\) defined in (4.6a) has the following properties:

- (a)

For each \(k \in \hat{{\mathbb {C}}} {\setminus } [0,1]\), the function \(x \mapsto \Phi _0(x,k^+)\) is continuous on [0, 1) and is \(C^n\) on (0, 1).

- (b)

\(\Phi _0\) obeys the symmetries

$$\begin{aligned} {\left\{ \begin{array}{ll} \Phi _0(x,k^+) = -\Phi _0(x, k^-),\\ \Phi _0(x,k^\pm ) = \overline{\Phi _0(x, \bar{k}^\pm )}, \end{array}\right. } \qquad x \in [0,1), \ k \in \hat{{\mathbb {C}}} {\setminus } [0, 1]. \end{aligned}$$(4.25)

- (c)

For each \(x \in [0, 1)\), \(\Phi _0(x,P)\) extends continuously to an analytic function of \(P \in \mathcal {S}_{(x,0)} {\setminus } \Sigma _0\).

- (d)

\(\Phi _0(x,\infty ^+) = V_0(x)\) for \(x \in [0, 1)\).

- (e)

For each \(x \in (0,1)\), \(\Phi _{0x}(x,P)\) is an analytic function of \(P \in \mathcal {S}_{(x,0)}\) except for a simple pole (at most) at the branch point \(k = x\).

- (f)

For each \(x_0 \in (0,1)\) and each compact subset \(K \subset \hat{{\mathbb {C}}} {\setminus } [0, x_0]\),

$$\begin{aligned} x \mapsto \big (k \mapsto \Phi _0(x,k^+)\big ) \end{aligned}$$(4.26)

is a continuous map \([0, x_0) \rightarrow L^\infty (K)\) and a \(C^n\)-map \((0, x_0) \rightarrow L^\infty (K)\). Moreover, \(x \mapsto (k \mapsto x^\alpha \Phi _{0x}(x,k^+))\) and \(x \mapsto (k\mapsto \Phi _{0k}(x,k^+))\) are continuous maps \([0, x_0) \rightarrow L^\infty (K)\).

Proof

If we note that \(\Phi _0(x,P)\) is analytic at the points \(1^\pm \in \mathcal {S}_{(x,0)}\) for each \(x \in [0, 1)\), the properties (a)-(d) follow immediately by setting \(y = 0\) in Lemma 4.7. Moreover, since \(\Phi _{0x}(x,k^\pm ) = \lambda (x,0,k^\pm ) V_{0x}(x)\) for \(x \in (0,1)\), property (e) follows from the definition of \(\lambda \).

It remains to prove (f). Fix \(x_0 \in (0,1)\) and let K be a compact subset of \(\hat{{\mathbb {C}}} {\setminus } [0, x_0]\). The function \(\lambda (x,0,\cdot )\) is bounded on \(\mathcal {S}_{(x,0)}\) except for a simple pole at \(k = x\). Hence, for \(x_1, x_2 \in [0, x_0)\),

where the right-hand side tends to zero as \(x_2 \rightarrow x_1\) because \(V_{0x} \in L^1((0,x_0))\). This shows that the map (4.26) is continuous \([0, x_0) \rightarrow L^\infty (K)\).

If \(x \in (0, x_0)\), then

where \(\xi \) lies between x and \(x+h\). As \(h \rightarrow 0\), the right-hand side goes to zero. Hence (4.26) is differentiable as a map \((0,x_0) \rightarrow L^\infty (K)\) and the derivative satisfies \(\Phi _{0x}(x,k^+) = \lambda (x,0,k^+)V_{0x}(x)\). The same argument with \(\lambda _k\) instead of \(\lambda \) implies continuity of \(x \mapsto (k \mapsto \Phi _{0k}(x,k^+))\).

The map

is \(C^\infty \) from \((0, x_0)\) to \(L^\infty (K)\) and \(V_{0x}\) is \(C^{n-1}\) on (0, 1). Hence the map

is \(C^{n-1}\) from \((0, x_0)\) to \(L^\infty (K)\). It follows that (4.26) is a \(C^n\)-map \((0, x_0) \rightarrow L^\infty (K)\). Moreover, equation (4.16) evaluated at \(y = 0\) implies \(x \mapsto x^\alpha \Phi _{0x}(x,k^+)\) is continuous \([0, x_0) \rightarrow L^\infty (K)\). This proves (f) and completes the proof of the lemma. \(\square \)

In the same way that we constructed the eigenfunction \(\Phi _0(x,k)\) of the x-part, we can construct an eigenfunction \(\Phi _1(y,k)\) of the y-part.

Lemma 4.12

(Solution of the y-part) The eigenfunction \(\Phi _1(y,P)\) defined in (4.6b) has the following properties:

- (a)

For each \(k \in \hat{{\mathbb {C}}} {\setminus } [0,1]\), the function \(y \mapsto \Phi _1(y,k^+)\) is continuous on [0, 1) and is \(C^n\) on (0, 1).

- (b)

\(\Phi _1\) obeys the symmetries

$$\begin{aligned} {\left\{ \begin{array}{ll} \Phi _1(y,k^+) = -\Phi _1(y, k^-),\\ \Phi _1(y,k^\pm ) = \overline{\Phi _1(y, \bar{k}^\pm )}, \end{array}\right. } \qquad y \in [0,1), \ k \in \hat{{\mathbb {C}}} {\setminus } [0,1]. \end{aligned}$$(4.27)

- (c)

For each \(y \in [0, 1)\), \(\Phi _1(y,P)\) extends continuously to an analytic function of \(P \in \mathcal {S}_{(0,y)} {\setminus } \Sigma _1\).

- (d)

\(\Phi _1(y,\infty ^+) = V_1(y)\) for \(y \in [0, 1)\).

- (e)

For each \(y \in (0,1)\), \(\Phi _{1y}(y,P)\) is an analytic function of \(P \in \mathcal {S}_{(0,y)}\) except for a simple pole at the branch point \(k = 1- y\).

- (f)

For each \(y_0 \in (0,1)\) and each compact subset \(K \subset \hat{{\mathbb {C}}} {\setminus } [1-y_0, 1]\),

$$\begin{aligned} y \mapsto \big (k \mapsto \Phi _1(y,k^+)\big ) \end{aligned}$$(4.28)

is a continuous map \([0, y_0) \rightarrow L^\infty (K)\) and a \(C^n\)-map \((0, y_0) \rightarrow L^\infty (K)\). Moreover, \(y\mapsto (k \mapsto y^\alpha \Phi _{1y}(y,k^+))\) and \(y\mapsto (k \mapsto \Phi _{1k}(y,k^+))\) are continuous maps \([0, y_0) \rightarrow L^\infty (K)\).

Proof

The proof is analogous to that of Lemma 4.11. \(\square \)

Recall from the definition in Sect. 2 that the contour \(\Gamma \equiv \Gamma (x,y)\) consists of two nonintersecting clockwise loops \(\Gamma _0\) and \(\Gamma _1\) which encircle the intervals \(F_{(x,y)}(\Sigma _0)\) and \(F_{(x,y)}(\Sigma _1)\) respectively, but which do not encircle the origin. We are free to choose \(\Gamma _0\) and \(\Gamma _1\) as long as these requirements are met. It turns out to be convenient to choose \(\Gamma _0\) and \(\Gamma _1\) independent of (x, y). However, we see from (2.5) that the intervals \(F_{(x,y)}(\Sigma _0)\) and \(F_{(x,y)}(\Sigma _1)\) get arbitrarily close to the origin as (x, y) approaches the diagonal edge \(x+y=1\) of D. Hence we cannot take \(\Gamma \) independent of (x, y) for all \((x,y) \in D\). However, if we restrict ourselves to points (x, y) which lie in the slightly smaller triangle \(D_\delta \), \(\delta > 0\), defined in (2.1), then we can choose \(\Gamma \) independent of (x, y).
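To see concretely why \(\Gamma \) cannot be chosen uniformly on all of D, one can compute the image intervals explicitly. The sketch below is hedged: it assumes that \(\Sigma _0 = [0,x]\) and \(\Sigma _1 = [1-y,1]\), that (2.2) reads \(\lambda ^2 = (k-(1-y))/(k-x)\), and that \(F_{(x,y)}\) acts as \(z = (1+\lambda )/(1-\lambda )\). None of these is restated in this section, although the last is consistent with \(F_{(x,y)}^{-1}(0) = \infty ^-\) and with the poles at \(z = \pm 1\) appearing in Lemma 4.15. The function names are illustrative.

```python
import math


def image_of_sigma0(x, y):
    """Endpoints of F_{(x,y)}(Sigma_0) on the negative real axis (ASSUMED form of F).

    At k = 0 one has lambda = +-sqrt((1 - y) / x), and z = (1 + lambda) / (1 - lambda).
    """
    r = math.sqrt(x / (1 - y))  # r < 1 inside the triangle x + y < 1
    return -(1 + r) / (1 - r), -(1 - r) / (1 + r)


def image_of_sigma1(x, y):
    """Endpoints of F_{(x,y)}(Sigma_1) on the positive real axis (ASSUMED form of F).

    At k = 1 one has lambda = +-sqrt(y / (1 - x)).
    """
    r = math.sqrt(y / (1 - x))
    return (1 - r) / (1 + r), (1 + r) / (1 - r)


# Well inside the triangle the intervals stay away from 0 and infinity ...
print(image_of_sigma0(0.2, 0.3), image_of_sigma1(0.2, 0.3))
# ... but near the diagonal edge x + y = 1 they reach toward 0 and infinity.
print(image_of_sigma0(0.4999, 0.5), image_of_sigma1(0.4999, 0.5))
```

Under these assumptions the inner endpoints tend to 0 as \(x + y \rightarrow 1\), so a fixed pair of loops avoiding the origin exists only after restricting to \(D_\delta \).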

Thus, fix \(\delta \in (0,1)\) and choose \(\epsilon > 0\) so small that \(F_{(x,y)}(\Sigma _0)\) and \(F_{(x,y)}(\Sigma _1)\) are contained in the intervals \([-\epsilon ^{-1}, -\epsilon ]\) and \([\epsilon , \epsilon ^{-1}]\), respectively, for all \((x,y) \in D_\delta \). Fix two smooth nonintersecting clockwise contours \(\Gamma _0\) and \(\Gamma _1\) in the complex z-plane which encircle once the intervals \([-\epsilon ^{-1}, -\epsilon ]\) and \([\epsilon , \epsilon ^{-1}]\), respectively, but which do not encircle zero, see Fig. 8. Suppose also that \(\Gamma _0\) and \(\Gamma _1\) are invariant under the involutions \(z \mapsto z^{-1}\) and \(z \mapsto \bar{z}\). Let \(\Gamma = \Gamma _0 \cup \Gamma _1\) and, using this particular choice of \(\Gamma \), define V(x, y) for \((x,y) \in D_\delta \) by (4.9), i.e.,

where v(x, y, z) is given by (4.5). We will show that

Since \(\delta > 0\) can be chosen arbitrarily small, this will complete the proof of the theorem.

Consider the family of scalar RH problems given in (4.4) parametrized by the two parameters \((x,y) \in D_\delta \). For each \((x,y) \in D_\delta \), the unique solution of (4.4) is given by

Lemma 4.13

The map \((x,y) \mapsto v(x,y, \cdot )\) is continuous from \(D_\delta \) to \(L^\infty (\Gamma )\) and \(C^n\) from \({{\,\mathrm{int}\,}}D_\delta \) to \(L^\infty (\Gamma )\). Moreover, the three maps

are continuous from \(D_\delta \) to \(L^\infty (\Gamma )\).

Proof

The map \((x,y) \mapsto v(x,y, \cdot )\) is continuous from \(D_\delta \) to \(L^\infty (\Gamma )\) and \(C^n\) from \({{\,\mathrm{int}\,}}D_\delta \) to \(L^\infty (\Gamma )\) as a consequence of part (f) of Lemmas 4.11 and 4.12. Furthermore,

Part (f) of Lemma 4.11 implies that the terms \(x^\alpha \Phi _{0x}\big (x, F_{(x,y)}^{-1}(\cdot )\big )\) and \(\Phi _{0k}\big (x, F_{(x,y)}^{-1}(\cdot )\big )\) are continuous \(D_\delta \rightarrow L^\infty (\Gamma _0)\). Similarly, part (f) of Lemma 4.12 implies that the term \(\Phi _{1k}\big (y, F_{(x,y)}^{-1}(\cdot )\big )\) is continuous \(D_\delta \rightarrow L^\infty (\Gamma _1)\). We conclude that \((x,y) \mapsto x^\alpha v_x(x,y, \cdot )\) is continuous \(D_\delta \rightarrow L^\infty (\Gamma )\). The other two maps in (4.32) are treated in a similar way. \(\square \)

Lemma 4.14

The solution m(x, y, z) defined in (4.31) has the following properties:

- (a)

For each point \((x,y) \in D_\delta \), \(m(x,y,\cdot )\) obeys the symmetries

$$\begin{aligned} m(x,y,z) = m(x,y,0) - m(x,y,z^{-1}) = \overline{m(x,y,\bar{z})}, \qquad z \in \hat{{\mathbb {C}}} {\setminus } \Gamma . \end{aligned}$$(4.33)

- (b)

For each \(z \in \hat{{\mathbb {C}}}{\setminus } \Gamma \), the map \((x,y) \mapsto m(x,y,z)\) is continuous from \(D_\delta \) to \({\mathbb {C}}\) and is \(C^n\) from \({{\,\mathrm{int}\,}}D_\delta \) to \({\mathbb {C}}\).

- (c)

For each \(z \in \hat{{\mathbb {C}}}{\setminus } \Gamma \), the three maps

$$\begin{aligned}&(x,y) \mapsto x^\alpha m_x(x,y, z), \qquad (x,y) \mapsto y^\alpha m_y(x,y, z), \\&(x,y) \mapsto x^\alpha y^\alpha m_{xy}(x,y, z), \end{aligned}$$

are continuous from \(D_\delta \) to \({\mathbb {C}}\).

Proof

The symmetries in (4.25) and (4.27) show that v satisfies

These symmetries imply that \(m(x,y,0) - m(x,y,z^{-1})\) and \(\overline{m(x,y,\bar{z})}\) satisfy the same RH problem as m(x, y, z). Hence, by uniqueness, (4.33) holds. This proves (a).

For each \(z \in \hat{{\mathbb {C}}}{\setminus } \Gamma \), the map

is a bounded linear map \(L^\infty (\Gamma ) \rightarrow {\mathbb {C}}\). Hence properties (b) and (c) follow immediately from (4.31) and Lemma 4.13. \(\square \)

Given a contour \(\gamma \subset {\mathbb {C}}\), we use the notation \(N(\gamma )\) to denote an open tubular neighborhood of \(\gamma \). We extend the definition (4.5) of v to a tubular neighborhood \(N(\Gamma ) = N(\Gamma _0) \cup N(\Gamma _1)\) of \(\Gamma \) as follows, see Fig. 9:

We choose \(N(\Gamma )\) so narrow that it does not intersect the intervals \([-\epsilon ^{-1}, -\epsilon ]\) and \([\epsilon , \epsilon ^{-1}]\). Then, for each \((x,y) \in D_\delta \), \(v(x,y,\cdot )\) is an analytic function of \(z \in N(\Gamma )\). Using the notation \(z(x,y,P) := F_{(x,y)}(P)\), we can write (4.35) as

We define functions \(f_0(x,y,z)\) and \(f_1(x,y,z)\) for \((x,y) \in D_\delta \) by

Moreover, we let \(n_0(x,y,z)\) and \(n_1(x,y,z)\) denote the functions given by

and

Lemma 4.15

For each \((x,y) \in {{\,\mathrm{int}\,}}D_\delta \), it holds that

- (a)

\(n_0(x,y,z)\) is an analytic function of \(z \in \hat{{\mathbb {C}}} {\setminus } \{-1\}\) and has at most a simple pole at \(z = -1\).

- (b)

\(n_1(x,y,z)\) is an analytic function of \(z \in \hat{{\mathbb {C}}} {\setminus } \{1\}\) and has at most a simple pole at \(z = 1\).

- (c)

\(n_0(x,y,\infty ) = 0\) and \(n_0(x,y,0) = -2V_x(x,y)\).

- (d)

\(n_1(x,y,\infty ) = 0\) and \(n_1(x,y,0) = -2V_y(x,y)\).

Proof

Let \((x,y) \in {{\,\mathrm{int}\,}}D_\delta \). The function

is analytic for \(z \in \hat{{\mathbb {C}}}{\setminus } \{-1, \infty \}\) with simple poles at \(z = -1\) and \(z = \infty \). Equation (4.31) implies that \(m_z(x,y, z) = O(z^{-2})\) and \(m_x(x,y, z) = O(z^{-1})\) as \(z \rightarrow \infty \). Hence \(f_0(x,y,z)\) is analytic at \(z = \infty \). It follows that \(f_0(x,y,z)\) is analytic for all \(z \in \hat{{\mathbb {C}}}{\setminus } (\Gamma \cup \{-1\})\) with a simple pole at \(z = -1\) at most. Now \(f_0\) has continuous boundary values on \(\Gamma \) and satisfies the following jump condition across \(\Gamma \):

Differentiating (4.36) with respect to x and y and evaluating the resulting equations at \(k = F_{(x,y)}^{-1}(z)\), we find, for \((x,y) \in {{\,\mathrm{int}\,}}D_\delta \),

and

Using the first equations in (4.40) and (4.41) in (4.39), we conclude that \(f_0\) is analytic across \(\Gamma _1\) and has the following jump across \(\Gamma _0\):

Consequently, \(n_0\) is analytic across \(\Gamma \). Furthermore, by Lemma 4.11, \(\Phi _{0x}(x,F_{(x,y)}^{-1}(z))\) is analytic for \(z \in \hat{{\mathbb {C}}}{\setminus } \{-1\}\) with at most a simple pole at \(z = -1\). It follows that \(n_0\) satisfies (a). The proof of (b) is similar and relies on the second equations in (4.40) and (4.41).

Using (4.38) in the definition (4.37a) of \(n_0\), we can write

Since \(m_z(x,y, z) = O(z^{-2})\) and \(m_x(x,y, z) = O(z^{-1})\) as \(z \rightarrow \infty \), this gives \(n_0(x,y,\infty ) = 0\). On the other hand, evaluating (4.43) at \(z = 0\), we find \(n_0(x,y,0) = m_x(x,y,0) = -2V_x(x,y)\). This proves (c); the proof of (d) is analogous. \(\square \)

Equation (4.24) suggests that we define a function \(\Phi (x,y,P)\) for \((x,y) \in D_\delta \) and \(P \in F_{(x,y)}^{-1}(\Omega _\infty ) \subset \mathcal {S}_{(x,y)}\) by

Lemma 4.16

The function \(\Phi \) defined in (4.44) satisfies the Lax pair equations

for \((x,y) \in {{\,\mathrm{int}\,}}D_\delta \) and \(P \in F_{(x,y)}^{-1}(\Omega _\infty )\).

Proof

The analyticity structure of \(n_0\) established in Lemma 4.15 implies that there exists a function C(x, y) independent of z such that

We determine C(x, y) by evaluating (4.46) at \(z = 0\). By Lemma 4.15 (c), this gives \(C(x,y) = -2V_x(x,y)\). It follows that

Note that this argument also covers the case in which \(n_0\) has no singularity at \(z = -1\); in that case \(C=-2V_x=0\) by Lemma 4.15.

Differentiating (4.44) with respect to x and using (4.43) and (4.47), we find, for \(P \in F_{(x,y)}^{-1}(\Omega _\infty )\),

Since

this yields the first equation in (4.45). A similar argument gives the second equation in (4.45). This proves the lemma. \(\square \)

Lemma 4.17

The real-valued function \(V:D \rightarrow {\mathbb {R}}\) defined by (4.29) has the properties listed in (4.30).

Proof

The function \(V(x,y) = -\frac{1}{2}m(x,y,0)\) is real-valued by (4.33). Moreover, by part (b) of Lemma 4.14, the map \((x,y) \mapsto m(x,y,0)\) is continuous from \(D_\delta \) to \({\mathbb {C}}\) and is \(C^n\) from \({{\,\mathrm{int}\,}}D_\delta \) to \({\mathbb {C}}\). Hence \(V \in C(D_\delta ) \cap C^n({{\,\mathrm{int}\,}}D_\delta )\). Similarly, part (c) of Lemma 4.14 implies that \(x^\alpha V_x, y^\alpha V_y, x^\alpha y^\alpha V_{xy} \in C(D_\delta )\).

Let \(P = (\lambda , k)\) be a point in \(F_{(x,y)}^{-1}(\Omega _\infty ) \subset \mathcal {S}_{(x,y)}\). For each fixed \(k \in \hat{{\mathbb {C}}}\) with \(k^+ \in F_{(x,y)}^{-1}(\Omega _\infty )\), the map \((x,y) \mapsto \Phi (x,y,k^+)\) is \(C^n\) from \({{\,\mathrm{int}\,}}D_\delta \) to \({\mathbb {C}}\). By Lemma 4.16, it satisfies the Lax pair equations (4.45). Since \(n \ge 2\), it follows that

It follows that V(x, y) satisfies the Euler–Darboux equation (4.1) for \((x,y) \in {{\,\mathrm{int}\,}}D_\delta \).

Finally, we show that \(V(x,0) = V_0(x)\) for \(x \in [0, 1-\delta )\); the proof that \(V(0,y) = V_1(y)\) for \(y \in [0,1-\delta )\) is similar. By the definitions (4.29) and (4.5) of V and v, we have

But \(\Phi _0(x,F_{(x,0)}^{-1}(z))\) is analytic for \(z \in \hat{{\mathbb {C}}} {\setminus } [-\epsilon ^{-1}, -\epsilon ]\) by Lemma 4.11, so using Cauchy’s formula to compute the contributions from \(z = 0\) and \(z = \infty \), we find

This completes the proof of the lemma. Since \(\delta > 0\) was arbitrary, it also completes the proof of existence. \(\square \)

4.2.3 Proof of boundary behavior

Let \(V_0(x)\), \(x \in [0, 1)\), and \(V_1(y)\), \(y \in [0,1)\) be real-valued functions satisfying (4.2) for some \(n \ge 2\) and some \(\alpha \in (0, 1)\). Suppose V(x, y) is a \(C^n\)-solution of the Goursat problem for (4.1) in D with data \(\{V_0, V_1\}\) and define \(m_1, m_2 \in {\mathbb {R}}\) by (4.7). By (4.9), we have

Hence

Now

so

It follows from Lemmas 4.11 and 4.12 that the last two integrals on the right-hand side of (4.48) remain bounded as \(x \downarrow 0\). Moreover,

Using that \(F_{(0,y)}^{-1}(z)= -\frac{(y-1) (z+1)^2}{4 z}\), we find

where the square roots have positive (negative) real part for \(|z| > 1\) (\(|z| < 1\)). Thus

where the square root has a branch cut along the interval \([\frac{1-\sqrt{y}}{1+\sqrt{y}}, \frac{1+\sqrt{y}}{1-\sqrt{y}}]\) and the branch is fixed so that the root has positive real part for \(z < 0\). Hence

This proves (4.8a); the proof of (4.8b) is similar. Thus the proof of Theorem 5 is complete.
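The explicit formula for \(F_{(0,y)}^{-1}\) and the stated branch cut can be cross-checked with a few lines of arithmetic. The sketch below uses only the expression \(F_{(0,y)}^{-1}(z)= -\frac{(y-1) (z+1)^2}{4 z}\) and the interval endpoints quoted above. The final identity additionally assumes that (2.2) reads \(\lambda ^2 = (k-(1-y))/(k-x)\), which is not restated in this section. Function names are illustrative.

```python
import math


def k_of_z(y, z):
    """F_{(0,y)}^{-1}(z) as quoted in the text: -(y - 1) (z + 1)^2 / (4 z)."""
    return -(y - 1) * (z + 1) ** 2 / (4 * z)


def cut_endpoints(y):
    """Endpoints of the branch cut quoted in the text."""
    r = math.sqrt(y)
    return (1 - r) / (1 + r), (1 + r) / (1 - r)


y = 0.25
z_left, z_right = cut_endpoints(y)
print(k_of_z(y, z_left), k_of_z(y, z_right))  # both endpoints are mapped to k = 1
print(k_of_z(y, 1.0))                         # z = 1 is mapped to the branch point k = 1 - y

# The involution z -> 1/z leaves k unchanged (it only swaps the two sheets).
z = 2 + 1j
print(abs(k_of_z(y, z) - k_of_z(y, 1 / z)))

# ASSUMPTION: with lambda^2 = (k - (1 - y)) / k at x = 0, one gets lambda^2 = ((z - 1)/(z + 1))^2.
k = k_of_z(y, z)
print(abs((k - (1 - y)) / k - ((z - 1) / (z + 1)) ** 2))
```

In particular, the cut \([\frac{1-\sqrt{y}}{1+\sqrt{y}}, \frac{1+\sqrt{y}}{1-\sqrt{y}}]\) is exactly the set of real \(z\) whose image under \(F_{(0,y)}^{-1}\) lies between \(k = 1-y\) and \(k = 1\).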

5 Lax pair and eigenfunctions

In this section we introduce a Lax pair for (1.1) and define appropriate eigenfunctions in preparation for the proofs of Theorems 1–4.

5.1 Lax pair

The hyperbolic Ernst equation (1.1) admits the Lax pair

where k is the spectral parameter, the function \(\Phi (x,y, k)\) is a \(2 \times 2\)-matrix valued eigenfunction, and the \(2\times 2\)-matrix valued functions \(\textsf {U} (x,y,k)\) and \(\textsf {V} (x,y,k)\) are defined as follows:

with \(\lambda \) given by (2.2). This Lax pair is easily obtained from the Lax pair for the elliptic Ernst equation [30, 31] by making small modifications (the same Lax pair is used in [33]).

We write (5.1) in terms of differential forms as

where W is the closed one-form

As in Sect. 4, we will view the map \(\Phi (x,y,\cdot )\) as being defined on the Riemann surface \(\mathcal {S}_{(x,y)}\) and write \(\Phi (x,y,P)\) for the value of \(\Phi \) at \(P = (\lambda , k) \in \mathcal {S}_{(x,y)}\).

5.2 Spectral analysis

Suppose that \(\mathcal {E}_0(x)\), \(x \in [0, 1)\), and \(\mathcal {E}_1(y)\), \(y \in [0,1)\) are complex-valued functions satisfying (1.4) for some \(n \ge 2\). Let \(\textsf {U} _0\) and \(\textsf {V} _1\) be given by (3.4), i.e., \(\textsf {U} _0\) and \(\textsf {V} _1\) denote the functions \(\textsf {U} \) and \(\textsf {V} \) evaluated at \(y = 0\) and \(x = 0\), respectively. Let \(\Phi _0(x,P)\) and \(\Phi _1(y,P)\) be the eigenfunctions defined in terms of \(\mathcal {E}_0\) and \(\mathcal {E}_1\) via the Volterra integral equations (3.3).

Lemma 5.1

(Solution of the x-part) The eigenfunction \(\Phi _0(x,P)\) defined via the Volterra integral equation (3.3) has the following properties:

- (a)

For each \(k \in \hat{{\mathbb {C}}} {\setminus } [0,1]\), the function \(x \mapsto \Phi _0(x,k^+)\) is continuous on [0, 1) and is \(C^n\) on (0, 1). Furthermore, for each \(x \in [0,1)\), the function \(k \mapsto \Phi _0(x,k^+)\) is analytic on \(\hat{{\mathbb {C}}} {\setminus } [0,1]\).

- (b)

\(\Phi _0\) obeys the symmetries

$$\begin{aligned} {\left\{ \begin{array}{ll} \Phi _0(x,k^+) = \sigma _3\Phi _0(x, k^-)\sigma _3,\\ \Phi _0(x,k^\pm ) = \sigma _1\overline{\Phi _0(x, \bar{k}^\pm )}\sigma _1, \end{array}\right. } \qquad x \in [0,1), \ k \in \hat{{\mathbb {C}}} {\setminus } [0, 1]. \end{aligned}$$(5.4)

- (c)

For each \(x \in [0, 1)\), \(\Phi _0(x,P)\) extends continuously to an analytic function of \(P \in \mathcal {S}_{(x,0)} {\setminus } \Sigma _0\).

- (d)

The value of \(\Phi _0\) at \(P = \infty ^+\) is given by

$$\begin{aligned} \Phi _0(x,\infty ^+) = \frac{1}{2} \begin{pmatrix} \overline{\mathcal {E}_0(x)} &{} 1 \\ \mathcal {E}_0(x) &{} -1 \end{pmatrix}\begin{pmatrix}1 &{} 1 \\ 1 &{} -1 \end{pmatrix}, \qquad x \in [0, 1). \end{aligned}$$(5.5)

- (e)

The determinant of \(\Phi _0\) is given by

$$\begin{aligned} \det \Phi _0(x,P) = \text {Re} \,\mathcal {E}_0(x), \qquad x \in [0,1), \ P \in \mathcal {S}_{(x,0)} {\setminus } \Sigma _0. \end{aligned}$$(5.6)

- (f)

For each \(x_0 \in (0,1)\) and each compact subset \(K \subset \hat{{\mathbb {C}}} {\setminus } [0, x_0]\),

$$\begin{aligned} x \mapsto \big (k \mapsto \Phi _0(x,k^+)\big ) \end{aligned}$$(5.7)

is a continuous map \([0, x_0) \rightarrow L^\infty (K)\) and a \(C^n\)-map \((0, x_0) \rightarrow L^\infty (K)\). Moreover, the map \(x \mapsto \big ( k \mapsto x^\alpha \Phi _{0x}(x,k^+) \big )\) is continuous \([0, x_0) \rightarrow L^\infty (K)\).

Remark 5.2

Some of the properties listed in Lemma 5.1 can be found in [33].

Proof

We first use successive approximations to show that the integral equation

has a unique solution for each \(k \in \hat{{\mathbb {C}}} {\setminus } [0,1]\). Let K be a compact subset of \(\hat{{\mathbb {C}}} {\setminus } [0,1]\). Let \(\Phi _0^{(0)} = I\) and define \(\Phi _0^{(j)}(x,k^+)\) for \(j \ge 1\) inductively by

Then

The function \(\lambda (x,0,k^+)\) is analytic for \(k \in \hat{{\mathbb {C}}} {\setminus } [x,1]\); in particular, it is a bounded function of \(k \in K\) for each fixed \(x \in [0,1)\). In view of the assumptions (1.4), this implies

where the function C(x) is bounded on each compact subset of [0, 1). Thus

Hence the series

converges absolutely and uniformly for \(k \in K\) and x in compact subsets of [0, 1) to a continuous solution \(\Phi _0(x,k^+)\) of (5.8). The fact that \(x \mapsto \Phi _0(x,k^+) \in C^n((0,1)) \) follows from differentiating \(x\mapsto \Phi _0^{(j)}(x,k^+)\) and applying estimates similar to (5.10) to the derivative. Differentiating (with respect to k) under the integral sign in (5.9), we see that \(k \mapsto \Phi _0^{(j)}(x, k^+)\) is analytic on \({{\,\mathrm{int}\,}}K\) for each j; the uniform convergence then proves that \(k \mapsto \Phi _0(x, k^+)\) is analytic on \({{\,\mathrm{int}\,}}K\). A similar argument applies to the integral equation defining \(\Phi _0(x,k^-)\). We conclude that the functions \(\Phi _0(x,k^+)\) and \(\Phi _0(x,k^-)\) are well-defined for \(x \in [0,1)\) and \(k \in \hat{{\mathbb {C}}} {\setminus } [0,1]\) and are analytic functions of \(k \in \hat{{\mathbb {C}}} {\setminus } [0,1]\) for each fixed x.
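The successive-approximation scheme used here can be illustrated on a toy problem. The sketch below is not the paper's computation: the coefficient matrix `U_toy` is a hypothetical constant matrix standing in for \(\textsf {U} _0\), chosen because the exact solution of \(\Phi (x) = I + \int _0^x U \Phi \, dx'\) is then a rotation matrix. Each term of the series is obtained from the previous one by a cumulative trapezoidal rule, and the recorded sup norms display the factorial decay that underlies an estimate of the type (5.10).

```python
import math


def matmul(A, B):
    """Product of two 2x2 matrices stored as nested lists."""
    return [[sum(A[i][m] * B[m][j] for m in range(2)) for j in range(2)] for i in range(2)]


def picard_series(U, x_max, n_grid=1000, n_terms=20):
    """Sum Phi = sum_j Phi^(j) with Phi^(0) = I, Phi^(j)(x) = int_0^x U(t) Phi^(j-1)(t) dt."""
    h = x_max / n_grid
    xs = [i * h for i in range(n_grid + 1)]
    term = [[[1.0, 0.0], [0.0, 1.0]] for _ in xs]      # Phi^(0) = I at every grid point
    total = [[row[:] for row in M] for M in term]      # running partial sum
    sup_norms = [1.0]
    for _ in range(n_terms):
        integrand = [matmul(U(x), M) for x, M in zip(xs, term)]
        new = [[[0.0, 0.0], [0.0, 0.0]]]               # the integral vanishes at x = 0
        for i in range(1, len(xs)):
            prev = new[-1]
            new.append([[prev[a][b] + 0.5 * h * (integrand[i - 1][a][b] + integrand[i][a][b])
                         for b in range(2)] for a in range(2)])
        term = new
        for T, N in zip(total, term):
            for a in range(2):
                for b in range(2):
                    T[a][b] += N[a][b]
        sup_norms.append(max(abs(N[a][b]) for N in term for a in range(2) for b in range(2)))
    return xs, total, sup_norms


def U_toy(x):
    # Hypothetical constant coefficient; the exact solution is [[cos x, sin x], [-sin x, cos x]].
    return [[0.0, 1.0], [-1.0, 0.0]]


xs, Phi, sup_norms = picard_series(U_toy, 1.0)
print(Phi[-1], [math.cos(1.0), math.sin(1.0)])
print(sup_norms[:6])
```

For this toy coefficient the \(j\)th term is bounded by \(x^j/j!\), so the partial sums converge uniformly on compact sets, mirroring the argument above.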

We next show uniqueness. Assume that \(\tilde{\Phi }_0\) is another solution of the Volterra equation (5.8) such that \(x \mapsto \tilde{\Phi }_0(x,k^\pm )\) is continuous on [0, 1), and let \(\Psi =\Phi _0 - \tilde{\Phi }_0\). Then \(\Psi \) is a solution of the homogeneous equation

Iterating this yields

Hence, as in the proof of existence, we get the estimate

which yields \(\Psi = 0\). This proves (a).

The symmetries (4.17) of \(\lambda \) show that

Hence \(\sigma _3\Phi _0(x, k^-)\sigma _3\) and \(\sigma _1\overline{\Phi _0(x, \bar{k}^+)}\sigma _1\) satisfy the same Volterra equation as \(\Phi _0(x,k^+)\). By uniqueness, all three functions must be equal. This proves (b).

We next show that \(\Phi _0(x, k^\pm )\) can be continuously extended across the branch cut to an analytic function on \(\mathcal {S}_{(x,0)} {\setminus } \Sigma _0\). Since \(\textsf {U} _0(x,k^\pm )\) has continuous boundary values on the interval (x, 1), the above argument (applied with a K that reaches up to the boundary) shows that \(\Phi _0(x,k^\pm )\) also has continuous boundary values on (x, 1). Moreover, since

the boundary functions \(\Phi (x,0, (k + i0)^+)\) and \(\Phi (x,0, (k - i0)^-)\) satisfy the same integral equation, so by uniqueness they are equal:

Hence the values of \(\Phi _0\) on the upper and lower sheets of \(\mathcal {S}_{(x,0)}\) fit together across the branch cut (x, 1), showing that \(\Phi _0\) extends to an analytic function of \(P \in \mathcal {S}_{(x,0)} {\setminus } \big (\Sigma _0 \cup \{1\}\big )\). But \(\lambda (x,0,P)\) is bounded in a neighborhood of the branch point 1, hence the possible singularity of \(\Phi _0(x,P)\) at this point must be removable. This shows that \(\Phi _0\) satisfies (c).

Since \(\lambda (x,y,\infty ^+) = 1\), \(\Phi _0(x,\infty ^+)\) satisfies the equation

This equation has the two linearly independent solutions

Hence there exists a constant matrix A such that

We determine A by evaluating this equation at \(x = 0\) and using that \(\mathcal {E}_0(0) = 1\) and \(\Phi _0(0, \infty ^+) = I\). This yields (5.5) and proves (d).

The proof of (e) relies on the general identity

where \(B = B(x)\) is a differentiable matrix-valued function taking values in \(GL(n, {\mathbb {C}})\). We find

This relation is valid at least for small x because \(\Phi _0(0, k^\pm ) = I\) is invertible. In fact, since \(\text {Re} \,\mathcal {E}_0(x) > 0\) for \(x \in [0,1)\) by assumption (1.4), it extends to all of [0, 1) and we infer that, for \(P \in \mathcal {S}_{(x,0)} {\setminus } \Sigma _0\),

where \(C(P) \in {\mathbb {C}}\) is independent of x. Evaluation at \(x = 0\) gives \(C(P) = 1\). This proves (e).
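
For the reader's convenience, the integration step can be written out as follows. This is a sketch that assumes only the relation \((\ln \det \Phi _0)_x = (\ln \text {Re} \,\mathcal {E}_0)_x\) on (0, 1), the continuity of \(\Phi _0\) and \(\mathcal {E}_0\) up to \(x = 0\), and the normalizations \(\Phi _0(0,P) = I\) and \(\mathcal {E}_0(0) = 1\) used above. For \(x \in (0,1)\),

$$\begin{aligned} \frac{d}{dx}\bigg (\frac{\det \Phi _0(x,P)}{\text {Re} \,\mathcal {E}_0(x)}\bigg ) = \frac{\det \Phi _0(x,P)}{\text {Re} \,\mathcal {E}_0(x)} \Big ( (\ln \det \Phi _0)_x - (\ln \text {Re} \,\mathcal {E}_0)_x \Big ) = 0, \end{aligned}$$

so the quotient is independent of x. Letting \(x \rightarrow 0\) then gives

$$\begin{aligned} C(P) = \frac{\det \Phi _0(0,P)}{\text {Re} \,\mathcal {E}_0(0)} = \frac{\det I}{1} = 1. \end{aligned}$$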

It remains to prove (f). Fix \(x_0 \in (0,1)\) and let K be a compact subset of \(\hat{{\mathbb {C}}} {\setminus } [0, x_0]\). The function \(\lambda (x,0,\cdot )\) is bounded on \(\mathcal {S}_{(x,0)}\) except for a simple pole at \(k = x\). Hence,

where the right-hand side tends to zero as \(x_2 \rightarrow x_1\), because

where \(C(x_0)\) is chosen as in the proof of (a). This shows that the map (5.7) is continuous \([0, x_0) \rightarrow L^\infty (K)\). If \(x \in (0, x_0)\), then

where \(\xi \) lies between x and \(x+h\). As \(h \rightarrow 0\), the right-hand side goes to zero. Hence (5.7) is differentiable as a map \((0,x_0) \rightarrow L^\infty (K)\) and the derivative satisfies \(\Phi _{0x}(x,k^+) = \textsf {U} _0(x,k^+)\Phi _{0}(x,k^+)\). Furthermore, the map

is \(C^\infty \) from \((0, x_0)\) to \(L^\infty (K)\) and \(\mathcal {E}_{0}\) is \(C^{n}\) on (0, 1). Hence the map

is \(C^{n-1}\) from \((0, x_0)\) to \(L^\infty (K)\). It follows that (5.7) is a \(C^n\)-map \((0, x_0) \rightarrow L^\infty (K)\).

Finally, since

we see that \(x \mapsto x^\alpha \Phi _{0x}(x,k^+)\) is continuous \([0, x_0) \rightarrow L^\infty (K)\). This proves (f) and completes the proof of the lemma. \(\square \)

Lemma 5.3

(Solution of the y-part) The eigenfunction \(\Phi _1(y,P)\) is well-defined for \(y \in [0,1)\) and \(P \in \mathcal {S}_{(0,y)} {\setminus } \Sigma _1\) and has the following properties:

- (a)

For each \(k \in \hat{{\mathbb {C}}} {\setminus } [0,1]\), the function \(y \mapsto \Phi _1(y,k^+)\) is continuous on [0, 1) and is \(C^n\) on (0, 1). Furthermore, for each \(y \in [0,1)\), the function \(k \mapsto \Phi _1(y,k^+)\) is analytic on \(\hat{{\mathbb {C}}} {\setminus } [0,1]\).

- (b)

\(\Phi _1\) obeys the symmetries

$$\begin{aligned} {\left\{ \begin{array}{ll} \Phi _1(y,k^+) = \sigma _3\Phi _1(y, k^-)\sigma _3,\\ \Phi _1(y,k^\pm ) = \sigma _1\overline{\Phi _1(y, \bar{k}^\pm )}\sigma _1, \end{array}\right. } \qquad y \in [0,1), \ k \in \hat{{\mathbb {C}}} {\setminus } [0, 1]. \end{aligned}$$(5.12)

- (c)

For each \(y \in [0, 1)\), \(\Phi _1(y,P)\) is an analytic function of \(P \in \mathcal {S}_{(0,y)} {\setminus } \Sigma _1\).

- (d)

The value of \(\Phi _1\) at \(P = \infty ^+\) is given by

$$\begin{aligned} \Phi _1(y,\infty ^+) = \frac{1}{2} \begin{pmatrix} \overline{\mathcal {E}_1(y)} &{} 1 \\ \mathcal {E}_1(y) &{} -1 \end{pmatrix}\begin{pmatrix}1 &{} 1 \\ 1 &{} -1 \end{pmatrix}, \qquad y \in [0, 1). \end{aligned}$$(5.13)

- (e)

The determinant of \(\Phi _1\) is given by

$$\begin{aligned} \det \Phi _1(y,P) = \text {Re} \,\mathcal {E}_1(y), \qquad y \in [0,1), \ P \in \mathcal {S}_{(0,y)} {\setminus } \Sigma _1. \end{aligned}$$(5.14)

- (f)

For each \(y_0 \in (0,1)\) and each compact subset \(K \subset \hat{{\mathbb {C}}} {\setminus } [1-y_0,1]\),

$$\begin{aligned} y \mapsto \big (k \mapsto \Phi _1(y,k^+)\big ) \end{aligned}$$(5.15)

is a continuous map \([0, y_0) \rightarrow L^\infty (K)\) and a \(C^n\)-map \((0, y_0) \rightarrow L^\infty (K)\). Moreover, the map \(y \mapsto \big ( k \mapsto y^\alpha \Phi _{1y}(y,k^+)\big )\) is continuous \([0, y_0) \rightarrow L^\infty (K)\).

Proof

The proof is similar to that of Lemma 5.1. \(\square \)

5.3 Uniqueness

The following lemma ensures uniqueness of the solution of the RH problem (3.2). The proof relies on the fact that the determinant of the jump matrix v defined in (3.5) is constant on each of the subcontours \(\Gamma _0\) and \(\Gamma _1\).

Lemma 5.4

Suppose that \(\mathcal {E}_0(x)\), \(x \in [0, 1)\), and \(\mathcal {E}_1(y)\), \(y \in [0,1)\) are real-valued functions satisfying (1.4) for some \(n \ge 2\). Then, for each \((x,y)\in D\), the solution \(m(x,y,\cdot )\) of the RH problem (3.2) is unique, if it exists. Moreover,

$$\begin{aligned} \det m(x,y,z) = 1, \qquad z \in \Omega _\infty . \end{aligned}$$

Proof

Fix \((x,y) \in D\). By (5.6), (5.14), and the definition (3.5) of v, we have

Hence

where the two functions \(c_0(x) > 0\) and \(c_1(y) > 0\) are independent of z. The function \(m(x,y,\cdot )\) is a solution of the RH problem (3.2) if and only if the function \(\tilde{m}(x,y,\cdot )\) defined by

satisfies the RH problem

where

But \(\det \tilde{v}(x,y,z) = 1\) for all \(z \in \Gamma \); hence the solution \(\tilde{m}(x,y,\cdot )\) is unique and \(\det \tilde{m} =1\). It follows that the solution m is unique and that \(\det m(x,y,z) = \det \tilde{m}(x,y,z) = 1\) for \(z \in \Omega _\infty \). \(\square \)
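
The last step is the standard Liouville-type argument, which can be sketched as follows. The sketch assumes that the jump relation has the form \(\tilde{m}_+ = \tilde{m}_- \tilde{v}\) on \(\Gamma \) (the ordering convention in (3.2) may differ), that \(\tilde{m} \rightarrow I\) as \(z \rightarrow \infty \), and that the boundary values are regular enough for the removable-singularity step; these are our assumptions, not statements quoted from the formulation of the RH problem. Taking determinants in the jump relation gives

$$\begin{aligned} \det \tilde{m}_+ = \det \tilde{m}_- \det \tilde{v} = \det \tilde{m}_- \quad \text {on } \Gamma , \end{aligned}$$

so \(\det \tilde{m}\) has no jump across \(\Gamma \), is therefore entire, and tends to 1 at infinity; by Liouville's theorem \(\det \tilde{m} \equiv 1\). In particular \(\tilde{m}\) is invertible, and if \(\tilde{n}\) is a second solution, then

$$\begin{aligned} \big (\tilde{n} \tilde{m}^{-1}\big )_+ = \tilde{n}_- \tilde{v} \tilde{v}^{-1} \tilde{m}_-^{-1} = \big (\tilde{n} \tilde{m}^{-1}\big )_- \quad \text {on } \Gamma . \end{aligned}$$

Hence \(\tilde{n} \tilde{m}^{-1}\) is entire and tends to I at infinity, which forces \(\tilde{n} = \tilde{m}\).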

6 Proofs of main results

In this section, we use the lemmas from the previous section to prove Theorems 1–4.

6.1 Proofs of Theorems 1 and 2

Let \(\mathcal {E}_0(x)\), \(x \in [0, 1)\), and \(\mathcal {E}_1(y)\), \(y \in [0,1)\) be complex-valued functions satisfying (1.4) for some \(n \ge 2\). Suppose \(\mathcal {E}(x,y)\) is a \(C^n\)-solution of the Goursat problem for (1.1) in D with data \(\{\mathcal {E}_0, \mathcal {E}_1\}\). We will show that \(\mathcal {E}(x,y)\) can be uniquely expressed in terms of \(\mathcal {E}_0\) and \(\mathcal {E}_1\) by (3.1).

The idea in what follows is to introduce a solution \(\Phi \) of (5.1) as the solution of the integral equation

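However, since W in general is singular on the boundary of D, we need to be more careful with the definition. We therefore instead define \(\Phi \) as the solution of

$$\begin{aligned} \Phi (x, y, k^+) = \Phi _0(x, k^+) + \int _0^y (\textsf {V} \Phi )(x,y',k^+) dy', \qquad (x,y) \in D, \ k \in \hat{{\mathbb {C}}} {\setminus } [0,1]. \end{aligned}$$(6.1)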

Lemma 6.1

(Solution of Lax pair equations) The function \(\Phi (x,y,P)\) defined in (6.1) has the following properties:

- (a)

\(\Phi (x,y,k^\pm )\) is a well-defined \(2\times 2\)-matrix valued function of \((x,y) \in D\) and \(k \in \hat{{\mathbb {C}}} {\setminus } [0,1]\) which also satisfies the alternative Volterra integral equation:

$$\begin{aligned} \Phi (x, y, k^+) = \Phi _1(y, k^+) + \int _0^x (\textsf {U} \Phi )(x',y,k^+) dx', \qquad (x,y) \in D, \ k \in \hat{{\mathbb {C}}} {\setminus } [0,1]. \end{aligned}$$(6.2)

- (b)

For each \(k \in \hat{{\mathbb {C}}} {\setminus } [0,1]\), the function \((x,y) \mapsto \Phi (x,y,k^+)\) is continuous on D and is \(C^n\) on \({{\,\mathrm{int}\,}}D\).

- (c)

For each \(k \in \hat{{\mathbb {C}}} {\setminus } [0,1]\), the functions

$$\begin{aligned}&(x,y) \mapsto x^\alpha \Phi _x(x,y,k^+), \quad (x,y) \mapsto y^\alpha \Phi _y(x,y,k^+), \\&(x,y) \mapsto x^\alpha y^\alpha \Phi _{xy}(x,y,k^+), \end{aligned}$$

are continuous on D.

- (d)

\(\Phi \) obeys the symmetries

$$\begin{aligned} {\left\{ \begin{array}{ll} \Phi (x,y,k^+) = \sigma _3\Phi (x, y, k^-)\sigma _3,\\ \Phi (x,y,k^\pm ) = \sigma _1\overline{\Phi (x, y,\bar{k}^\pm )}\sigma _1, \end{array}\right. } \qquad (x,y) \in D, \ k \in \hat{{\mathbb {C}}} {\setminus } [0,1]. \end{aligned}$$(6.3)

- (e)

For each point \((x,y) \in D\), \(\Phi (x,y,P)\) extends continuously to an analytic function of \(P \in \mathcal {S}_{(x,y)} {\setminus } \Sigma \), where \(\Sigma = \Sigma _0 \cup \Sigma _1\) is the contour defined in (2.4).

- (f)

The value of \(\Phi \) at \(P = \infty ^+\) is given by

$$\begin{aligned} \Phi (x,y,\infty ^+) = \frac{1}{2} \begin{pmatrix} \overline{\mathcal {E}(x,y)} &{} 1 \\ \mathcal {E}(x,y) &{} -1 \end{pmatrix}\begin{pmatrix}1 &{} 1 \\ 1 &{} -1 \end{pmatrix}, \qquad (x,y) \in D. \end{aligned}$$(6.4)

- (g)

The determinant of \(\Phi \) is given by

$$\begin{aligned} \det \Phi (x,y,P) = \text {Re} \,\mathcal {E}(x,y) > 0, \qquad (x,y) \in D, \ P \in \mathcal {S}_{(x,y)} {\setminus } \Sigma . \end{aligned}$$(6.5)

Proof

By Lemma 5.1 the lemma holds for \(y = 0\), i.e., the function \(\Phi (x,0,P)\) is well-defined and the properties (a)-(d) are satisfied when \(x = 0\) or \(y=0\). In order to see that \(\Phi \) is well-defined also for (x, y) in the interior of D, we note that (6.1) implies

The same type of successive approximation argument already used in the proof of Lemma 5.1 shows that the Volterra equation (6.6) has a unique solution for each fixed \(x \in (0,1)\) and each \(k \in \hat{{\mathbb {C}}} {\setminus } [0,1]\), and that this solution \(\Phi (x,y,P)\) extends continuously to an analytic function of \(P \in \mathcal {S}_{(x,y)} {\setminus } \Sigma \). This proves (b).
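
The successive approximation argument referred to here can be sketched as follows. This is a generic outline and not the authors' precise estimate: it assumes that, for fixed x and k, the function \(y' \mapsto |\textsf {V} (x,y',k^\pm )|\) is integrable on [0, y] (for instance because it is bounded by \(C y'^{-\alpha }\) with some \(\alpha < 1\)), and \(|\cdot |\) denotes any submultiplicative matrix norm. One writes \(\Phi = \sum _{j \ge 0} \Phi ^{(j)}\) with

$$\begin{aligned} \Phi ^{(0)}(x,y,k^\pm ) = \Phi (x,0,k^\pm ), \qquad \Phi ^{(j+1)}(x,y,k^\pm ) = \int _0^y \textsf {V} (x,y',k^\pm ) \Phi ^{(j)}(x,y',k^\pm ) dy', \end{aligned}$$

and shows by induction on j that

$$\begin{aligned} \big |\Phi ^{(j)}(x,y,k^\pm )\big | \le \frac{1}{j!} \bigg (\int _0^y \big |\textsf {V} (x,y',k^\pm )\big | dy'\bigg )^j \big |\Phi (x,0,k^\pm )\big |. \end{aligned}$$

The series therefore converges absolutely and its sum solves the Volterra equation. Applying the same bound to the difference of two solutions, which satisfies the homogeneous equation, shows that the difference vanishes; this gives uniqueness.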

In order to prove (a), it remains to deduce the alternative representation (6.2). Note that \(\Phi _y = V\Phi \) by definition and

Since \(\mathcal {E}\) is a solution of the Goursat problem, we have

and, moreover, \(\Phi _x(x,0,k^+) ={\textsf {U}} \Phi (x,0,k^+)\). Now a straightforward calculation shows

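Thus the function \(\tilde{\Phi }=\Phi _x -{\textsf {U}} \Phi \) is the unique solution of the Volterra integral equation

$$\begin{aligned} \tilde{\Phi }(x,y,k^+) = \int _0^y (\textsf {V} \tilde{\Phi })(x,y',k^+) dy', \end{aligned}$$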

giving \(\tilde{\Phi }=0\). This implies \(\Phi _x = {\textsf {U}} \Phi \). Consequently, \(\Phi \), defined by (6.1), is an eigenfunction for the Lax pair equations (5.1). The difference between (6.1) and (6.2) is given by

and \(({\textsf {V} }\Phi )_x = ({\textsf {U}} \Phi )_y\) is the compatibility condition for the Lax pair. Hence the two representations (6.1) and (6.2) are equal. This proves (a).
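
The calculation behind the identity \(\Phi _x = \textsf {U} \Phi \) can be sketched as follows. The sketch assumes that the compatibility condition is used in the zero-curvature form \(\textsf {U} _y - \textsf {V} _x + \textsf {U} \textsf {V} - \textsf {V} \textsf {U} = 0\), which is what \((\textsf {V} \Phi )_x = (\textsf {U} \Phi )_y\) amounts to for an eigenfunction; the sign conventions are our assumption. With \(\tilde{\Phi } = \Phi _x - \textsf {U} \Phi \) and \(\Phi _y = \textsf {V} \Phi \),

$$\begin{aligned} \tilde{\Phi }_y&= (\textsf {V} \Phi )_x - \textsf {U} _y \Phi - \textsf {U} \textsf {V} \Phi \\&= \textsf {V} (\Phi _x - \textsf {U} \Phi ) + (\textsf {V} _x - \textsf {U} _y + \textsf {V} \textsf {U} - \textsf {U} \textsf {V} )\Phi \\&= \textsf {V} \tilde{\Phi }. \end{aligned}$$

Together with the initial condition \(\tilde{\Phi }(x,0,k^+) = 0\), this shows that \(\tilde{\Phi }\) solves a homogeneous Volterra equation in y, whose only solution is \(\tilde{\Phi } = 0\).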

The symmetries (4.17) of \(\lambda \) show that

Since \(\lambda (x,y,\infty ^+) = 1\), \(\Phi (x,y,\infty ^+)\) satisfies the equation

Using the above equations and arguing as in the proof of Lemma 5.1, the statements (c), (d), (e), (f), and (g) follow from equation (6.6) and the corresponding statements in Lemma 5.1. \(\square \)

Part (g) of Lemma 6.1 implies that the inverse matrix \(\Phi (x,y,P)^{-1}\) is well-defined for \((x,y) \in D\) and \(P \in \mathcal {S}_{(x,y)} {\setminus } \Sigma \).

Lemma 6.2

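For each \((x,y) \in D\),

$$\begin{aligned} P \mapsto \Phi (x,y,P)\Phi (x,0,P)^{-1} \quad \text {and} \quad P \mapsto \Phi (x,y,P)\Phi (0,y,P)^{-1} \end{aligned}$$(6.7)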

are analytic functions of \(P \in \mathcal {S}_{(x,y)} {\setminus } \Sigma _1\) and \(P \in \mathcal {S}_{(x,y)} {\setminus } \Sigma _0\), respectively.

Proof

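Let U be an open set in \(\mathcal {S}_{(x,y)} {\setminus } \Sigma _1\). Multiplying (6.6) by \(\Phi (x,0,P)^{-1}\) from the right, we find

$$\begin{aligned} \Phi (x,y,P)\Phi (x,0,P)^{-1} = I + \int _0^y \textsf {V} (x,y',P) \Phi (x,y',P)\Phi (x,0,P)^{-1} dy', \end{aligned}$$(6.8)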

where the values of \(\Phi (x,0,P)\) and \(\lambda (x,y',P)\) in (6.8) are to be interpreted as in Remark 4.9. Since

$$\begin{aligned} P \mapsto \lambda (x,y',P) \end{aligned}$$

is an analytic map \(U \rightarrow {\mathbb {C}}\) for each \(y'\), so is \(\textsf {V} (x,y',\cdot )\). It follows that the solution \(\Phi (x, y, P)\Phi (x,0,P)^{-1}\) of (6.8) also is analytic for \(P \in U\). This establishes the desired statement for the first map in (6.7); the proof for the second map is similar. \(\square \)

Let \(\Omega _0\), \(\Omega _1\), and \(\Omega _\infty \) denote the three components of \(\hat{{\mathbb {C}}} {\setminus } \Gamma \) defined in (4.23) and displayed in Fig. 7.

Lemma 6.3

The \(2\times 2\)-matrix valued function m(x, y, z) defined for \((x,y)\in D\) by

satisfies the RH problem (3.2) and the relation (3.1) for each \((x,y) \in D\).

Proof

Since \(F_{(x,y)}\) is a biholomorphism \(\mathcal {S}_{(x,y)} \rightarrow \hat{{\mathbb {C}}}\), we infer from Lemma 6.1 together with Lemma 6.2 that \(m(x,y, \cdot ) \) is analytic in \({\mathbb {C}}{\setminus } \Gamma \) and that \(m(x,y,z) \rightarrow I\) as \(z \rightarrow \infty \) for each \((x,y) \in D\). The jump condition in (3.2) holds as a consequence of the definition (3.5) of v(x, y, z) and the fact that

Finally, since \(0 \in \Omega _\infty \) and \(F_{(x,y)}^{-1}(0) = \infty ^-\), the first symmetry in (6.3) yields

Substituting in the expression (6.4) for \(\Phi \big (x,y, \infty ^+\big )\), the (11) and (21) entries of (6.10) give

Solving these two equations for \(\mathcal {E}\) and \(\bar{\mathcal {E}}\), we find (3.1). \(\square \)

We have shown that if \(\mathcal {E}(x,y)\) is a \(C^n\)-solution of the Goursat problem for (1.1) in D with data \(\{\mathcal {E}_0, \mathcal {E}_1\}\), then \(\mathcal {E}(x,y)\) can be expressed in terms of the function m defined in (6.9) via equation (3.1). By Lemma 5.4, this function m(x, y, z) is the unique solution of the RH problem (3.2), whose formulation involves only the values \(\mathcal {E}_0(x')\) and \(\mathcal {E}_1(y')\) for \(0\le x' \le x\) and \(0\le y'\le y\). As a consequence, the value of the solution \(\mathcal {E}\) at (x, y), if the solution exists, is uniquely determined by the values \(\mathcal {E}_0(x')\) and \(\mathcal {E}_1(y')\) for \(0\le x' \le x\) and \(0\le y'\le y\). This completes the proofs of Theorems 1 and 2.

6.2 Proof of Theorem 3