Abstract

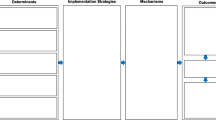

The advancement of implementation science is dependent on identifying assessment strategies that can address implementation and clinical outcome variables in ways that are valid, relevant to stakeholders, and scalable. This paper presents a measurement agenda for implementation science that integrates the previously disparate assessment traditions of idiographic and nomothetic approaches. Although idiographic and nomothetic approaches are both used in implementation science, a review of the literature on this topic suggests that their selection can be indiscriminate, driven by convenience, and not explicitly tied to research study design. As a result, they are not typically combined deliberately or effectively. Thoughtful integration may simultaneously enhance both the rigor and relevance of assessments across multiple levels within health service systems. Background on nomothetic and idiographic assessment is provided, along with a discussion of their potential to support research in implementation science. Drawing from an existing framework, seven structures (of various sequencing and weighting options) and five functions (Convergence, Complementarity, Expansion, Development, Sampling) for integrating conceptually distinct research methods are articulated as they apply to the deliberate, design-driven integration of nomothetic and idiographic assessment approaches. Specific examples and practical guidance are provided to inform research consistent with this framework. Selection and integration of idiographic and nomothetic assessments for implementation science research designs can be improved. The current paper argues for the deliberate application of a clear framework to improve the rigor and relevance of contemporary assessment strategies.

Ethics declarations

Information/findings not previously published

Although no original data are presented in the current manuscript, the information contained in the manuscript has not been published previously and is not under review at any other publication.

Statement on any previous reporting of data

None of the information contained in the current manuscript has been previously published.

Statement indicating that the authors have full control of all primary data and that they agree to allow the journal to review their data if requested

Not applicable. No data are presented in the manuscript.

Statement indicating all study funding sources

This publication was supported in part by grant K08 MH095939 (Lyon), awarded by the National Institute of Mental Health. The content is solely the responsibility of the authors and does not necessarily represent the official views of the National Institutes of Health.

Statements indicating any actual or potential conflicts of interest

No conflicts of interest.

Statement on human rights

Not applicable. No data were collected from individuals for the submitted manuscript.

Statement on the welfare of animals

Not applicable. No data were collected from animals for the submitted manuscript.

Informed consent statement

Not applicable.

Helsinki or comparable standard statement

Not applicable.

IRB approval

Not applicable.

Additional information

Implications

Research: Implementation research studies should be designed to integrate idiographic and nomothetic approaches intentionally, for specifically stated functional purposes (i.e., Convergence, Complementarity, Expansion, Development, Sampling).

Practice: Implementation intermediaries (e.g., consultants or support personnel) and health care professionals (e.g., administrators or service providers) are encouraged to track (1) employee and organizational factors (e.g., implementation climate), (2) implementation processes and outcomes (e.g., adoption), and (3) individual and aggregate service outcomes using both idiographic (comparing within organizations or individuals) and nomothetic (comparing to standardized benchmarks) approaches when monitoring intervention implementation.

Policy: The intentional collection and integration of idiographic and nomothetic assessment data in implementation science is likely to result in data-driven policy decisions that are more comprehensive, pragmatic, and relevant to stakeholder concerns than those based on either approach alone.

About this article

Cite this article

Lyon, A.R., Connors, E., Jensen-Doss, A. et al. Intentional research design in implementation science: implications for the use of nomothetic and idiographic assessment. Behav. Med. Pract. Policy Res. 7, 567–580 (2017). https://doi.org/10.1007/s13142-017-0464-6

DOI: https://doi.org/10.1007/s13142-017-0464-6