Abstract

Nonlinear conjugate gradient methods are well known to be very effective for large-scale smooth optimization problems. However, their efficiency has not been widely investigated for large-scale nonsmooth problems, which often arise in practice. This paper proposes a modified Hestenes–Stiefel conjugate gradient algorithm for nonsmooth convex optimization problems. The search direction of the proposed method not only possesses the sufficient descent property but also lies in a trust region. Under suitable conditions, the global convergence of the algorithm is established. Numerical results show that the method can successfully solve large-scale nonsmooth problems, both convex and nonconvex, with dimensions of up to 60,000. Furthermore, we study the modified Hestenes–Stiefel method as a solver for large-scale nonlinear equations and establish its global convergence. Finally, numerical results for nonlinear equations are reported, with dimensions of up to 100,000.
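To make the sufficient descent property concrete, the following minimal sketch computes a generic three-term Hestenes–Stiefel-type direction and checks that it satisfies g_k^T d_k = -||g_k||^2. It is an illustration only: the abstract does not state the paper's modified formula, so the three-term form, the function name `three_term_hs_direction`, the tolerance `eps`, and the sample vectors are all assumptions. The sketch also does not enforce the trust-region bound on the direction that the paper establishes for its own method.

```python
def dot(u, v):
    """Euclidean inner product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))


def three_term_hs_direction(g, g_prev, d_prev, eps=1e-12):
    """Generic three-term HS-type direction (assumed form, not the paper's).

    d = -g + beta * d_prev - theta * y, with y = g - g_prev,
    beta = g.y / d_prev.y and theta = g.d_prev / d_prev.y.
    The beta and theta terms cancel in g.d, leaving g.d = -||g||^2.
    """
    y = [a - b for a, b in zip(g, g_prev)]
    denom = dot(d_prev, y)
    if abs(denom) < eps:
        # Safeguard (an assumption): fall back to steepest descent.
        return [-a for a in g]
    beta = dot(g, y) / denom
    theta = dot(g, d_prev) / denom
    return [-gi + beta * di - theta * yi
            for gi, di, yi in zip(g, d_prev, y)]


# Hypothetical data: previous gradient, previous direction, new gradient.
g_prev = [1.0, -2.0, 0.5]
d_prev = [-1.0, 2.0, -0.5]
g = [0.3, 0.4, -1.2]

d = three_term_hs_direction(g, g_prev, d_prev)
descent_gap = dot(g, d) + dot(g, g)  # zero up to rounding error
```

Here `descent_gap` vanishes up to floating-point rounding regardless of the step taken between the two iterates, which is what makes directions of this kind attractive: descent does not depend on the accuracy of the line search.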

References

Kärkkäinen, T., Majava, K., Mäkelä, M.M.: Comparison of formulations and solution methods for image restoration problems. Inverse Probl. 17, 1977–1995 (2001)

Li, J., Li, X., Yang, B., Sun, X.: Segmentation-based image copy-move forgery detection scheme. IEEE Trans. Inf. Forensics Secur. 10, 507–518 (2015)

Zhang, H., Wu, Q., Nguyen, T., Sun, X.: Synthetic aperture radar image segmentation by modified student’s t-mixture model. IEEE Trans. Geosci. Remote Sens. 52, 4391–4403 (2014)

Mäkelä, M.M., Neittaanmäki, P.: Nonsmooth Optimization: Analysis and Algorithms with Applications to Optimal Control. World Scientific Publishing Co., Singapore (1992)

Chen, S.S., Donoho, D.L., Saunders, M.A.: Atomic decomposition by basis pursuit. SIAM J. Sci. Comput. 20, 33–61 (1998)

Birge, J.R., Qi, L., Wei, Z.: A general approach to convergence properties of some methods for nonsmooth convex optimization. Appl. Math. Optim. 38, 141–158 (1998)

Rockafellar, R.T.: Monotone operators and proximal point algorithm. SIAM J. Control Optim. 14, 877–898 (1976)

Birge, J.R., Qi, L., Wei, Z.: Convergence analysis of some methods for minimizing a nonsmooth convex function. J. Optim. Theory Appl. 97, 357–383 (1998)

Bonnans, J.F., Gilbert, J.C., Lemaréchal, C., Sagastizábal, C.A.: A family of variable metric proximal methods. Math. Program. 68, 15–47 (1995)

Wei, Z., Qi, L.: Convergence analysis of a proximal Newton method. Numer. Funct. Anal. Optim. 17, 463–472 (1996)

Wei, Z., Qi, L., Birge, J.R.: A new methods for nonsmooth convex optimization. J. Inequal. Appl. 2, 157–179 (1998)

Yuan, G., Wei, Z.: The Barzilai and Borwein gradient method with nonmonotone line search for nonsmooth convex optimization problems. Math. Model. Anal. 17, 203–216 (2012)

Sagara, N., Fukushima, M.: A trust region method for nonsmooth convex optimization. J. Ind. Manag. Optim. 1, 171–180 (2005)

Yuan, G., Wei, Z., Wang, Z.: Gradient trust region algorithm with limited memory BFGS update for nonsmooth convex minimization. Comput. Optim. Appl. 54, 45–64 (2013)

Lemaréchal, C.: Extensions diverses des méthodes de gradient et applications. Thèse d’Etat, Paris (1980)

Wolfe, P.: A method of conjugate subgradients for minimizing nondifferentiable convex functions. Math. Program. Stud. 3, 145–173 (1975)

Kiwiel, K.C.: Proximity control in bundle methods for convex nondifferentiable optimization. Math. Program. 46, 105–122 (1990)

Schramm, H., Zowe, J.: A version of the bundle idea for minimizing a nonsmooth function: conceptual idea, convergence analysis, numerical results. SIAM J. Optim. 2, 121–152 (1992)

Kiwiel, K.C.: Methods of Descent for Nondifferentiable Optimization. Lecture Notes in Mathematics, vol. 1133. Springer, Berlin (1985)

Kiwiel, K.C.: Proximal level bundle methods for convex nondifferentiable optimization, saddle-point problems and variational inequalities. Math. Program. 69, 89–109 (1995)

Schramm, H.: Eine kombination yon bundle-und trust-region-verfahren zur Lösung nichtdifferenzierbare optimierungsprobleme. Bayreuther Mathematische Schriften, Heft 30. Universitat Bayreuth, Germany (1989)

Haarala, M., Miettinen, K., Mäkelä, M.M.: New limited memory bundle method for large-scale nonsmooth optimization. Optim. Methods Softw. 19, 673–692 (2004)

Hestenes, M.R., Stiefel, E.: Method of conjugate gradient for solving linear equations. J. Res. Natl. Bur. Stand. 49, 409–436 (1952)

Fletcher, R., Reeves, C.: Function minimization by conjugate gradients. Comput. J. 7, 149–154 (1964)

Dai, Y., Yuan, Y.: A nonlinear conjugate gradient with a strong global convergence properties. SIAM J. Optim. 10, 177–182 (2000)

Fletcher, R.: Practical Method of Optimization, Vol I: Unconstrained Optimization, 2nd edn. Wiley, New York (1997)

Polak, E., Ribière, G.: Note sur la convergence de directions conjugees. Rev. Fr. Inform. Rech. Opér. 3, 35–43 (1969)

Gilbert, J.C., Nocedal, J.: Global convergence properties of conjugate gradient methods for optimization. SIAM J. Optim. 2, 21–42 (1992)

Hu, Y.F., Storey, C.: Global convergence result for conjugate method. J. Optim. Theory Appl. 71, 399–405 (1991)

Wei, Z., Li, G., Qi, L.: Global convergence of the Polak–Ribière–Polyak conjugate gradient methods with inexact line search for nonconvex unconstrained optimization problems. Math. Comput. 77, 2173–2193 (2008)

Ahmed, T., Storey, D.: Efficient hybrid conjugate gradient techniques. J. Optim. Theory Appl. 64, 379–394 (1990)

Al-Baali, A.: Descent property and global convergence of the Flecher–Reeves method with inexact line search. IMA J. Numer. Anal. 5, 121–124 (1985)

Hager, W.W., Zhang, H.: A new conjugate gradient method with guaranteed descent and an efficient line search. SIAM J. Optim. 16, 170–192 (2005)

Wei, Z., Yao, S., Liu, L.: The convergence properties of some new conjugate gradient methods. Appl. Math. Comput. 183, 1341–1350 (2006)

Yuan, G.: Modified nonlinear conjugate gradient methods with sufficient descent property for large-scale optimization problems. Optim. Lett. 3, 11–21 (2009)

Yuan, G., Lu, X.: A modified PRP conjugate gradient method. Ann. Oper. Res. 166, 73–90 (2009)

Yuan, G., Lu, X., Wei, Z.: A conjugate gradient method with descent direction for unconstrained optimization. J. Comput. Appl. Math. 233, 519–530 (2009)

Zhang, L., Zhou, W., Li, D.: A descent modified Polak–Ribière–Polyak conjugate method and its global convergence. IMA J. Numer. Anal. 26, 629–649 (2006)

Buhmiler, S., Krejić, N., Lužanin, Z.: Practical quasi-Newton algorithms for singular nonlinear systems. Numer. Algorithms 55, 481–502 (2010)

Solodov, M.V., Svaiter, B.F.: A globally convergent inexact Newton method for systems of monotone equations. In: Fukushima, M., Qi, L. (eds.) Reformulation: Nonsmooth, Piecewise Smooth, Semismooth and Smoothing Methods, pp. 355–369. Kluwer Academic Publishers, Dordrecht (1998)

Toint, P.L.: Numerical solution of large sets of algebraic nonlinear equations. Math. Comput. 173, 175–189 (1986)

Yuan, G., Lu, X.: A new backtracking inexact BFGS method for symmetric nonlinear equations. Comput. Math. Appl. 55, 116–129 (2008)

Yuan, G., Yao, S.: A BFGS algorithm for solving symmetric nonlinear equations. Optimization 62, 82–95 (2013)

La Cruz, W., Martínez, J.M., Raydan, M.: Spectral residual method without gradient information for solving large-scale nonlinear systems of equations. Math. Comput. 75, 1429–1448 (2006)

La Cruz, W., Raydan, M.: Nonmonotone spectral methods for large-scale nonlinear systems. Optim. Methods Softw. 18, 583–599 (2003)

Tong, X., Qi, L.: On the convergence of a trust-region method for solving constrained nonlinear equations with degenerate solutions. J. Optim. Theory Appl. 123, 187–211 (2004)

Yuan, G., Lu, X., Wei, Z.: BFGS trust-region method for symmetric nonlinear equations. J. Comput. Appl. Math. 230, 44–58 (2009)

Yuan, G., Wei, Z., Lu, X.: A BFGS trust-region method for nonlinear equations. Computing 92, 317–333 (2011)

Zhang, J., Wang, Y.: A new trust region method for nonlinear equations. Math. Methods Oper. Res. 58, 283–298 (2003)

Grippo, L., Sciandrone, M.: Nonmonotone derivative-free methods for nonlinear equations. Comput. Optim. Appl. 37, 297–328 (2007)

Yuan, Y.: Subspace methods for large scale nonlinear equations and nonlinear least squares. Optim. Eng. 10, 207–218 (2009)

Fasano, G., Lampariello, F., Sciandrone, M.: A truncated nonmonotone Gauss–Newton method for large-scale nonlinear least-squares problems. Comput. Optim. Appl. 34, 343–358 (2006)

Li, D., Fukushima, M.: A global and superlinear convergent Gauss–Newton-based BFGS method for symmetric nonlinear equations. SIAM J. Numer. Anal. 37, 152–172 (1999)

Li, D., Qi, L., Zhou, S.: Descent directions of quasi-Newton methods for symmetric nonlinear equations. SIAM J. Numer. Anal. 40, 1763–1774 (2002)

Kanzow, C., Yamashita, N., Fukushima, M.: Levenberg–Marquardt methods for constrained nonlinear equations with strong local convergence properties. J. Comput. Appl. Math. 172, 375–397 (2004)

Marquardt, D.W.: An algorithm for least-squares estimation of nonlinear parameters. SIAM J. Appl. Math. 11, 431–441 (1963)

Bouaricha, A., Schnabel, R.B.: Tensor methods for large sparse systems of nonlinear equations. Math. Program. 82, 377–400 (1998)

Cheng, W.: A PRP type method for systems of monotone equations. Math. Comput. Model. 50, 15–20 (2009)

Yu, G., Guan, L., Chen, W.: Spectral conjugate gradient methods with sufficient descent property for large-scale unconstraned optimization. Optim. Methods Softw. 23, 275–293 (2008)

Yuan, G., Wei, Z., Lu, S.: Limited memory BFGS method with backtracking for symmetric nonlinear equations. Math. Comput. Model. 54, 367–377 (2011)

Wang, C.W., Wang, Y.J., Xu, C.L.: A projection method for a system of nonlinear monotone equations with convex constraints. Math. Methods Oper. Res. 66, 33–46 (2007)

Yu, Z.S., Lian, J., Sun, J., Xiao, Y.H., Liu, L., Li, Z.H.: Spectral gradient projection method for monotone nonlinear equations with convex constraints. Appl. Numer. Math. 59, 2416–2423 (2009)

Li, D., Wang, L.: A modified Fletcher–Reeves-type derivative-free method for symmetric nonlinear equations. Numer. Algebra Control Optim. 1, 71–82 (2011)

Yuan, G., Wei, Z., Zhao, Q.: A modified Polak–Ribière–Polyak conjugate gradient algorithm for large-scale optimization problems. IIE Trans. 46, 397–413 (2014)

Yuan, G., Zhang, M.: A modified Hestenes–Stiefel conjugate gradient algorithm for large-scale optimization. Numer. Funct. Anal. Optim. 34, 914–937 (2013)

Zhang, L., Zhou, W.J.: Spectral gradient projection method for solving nonlinear monotone equations. J. Comput. Appl. Math. 196, 478–484 (2006)

Andrei, N.: Another hybrid conjugate gradient algorithm for unconstrained optimization. Numer. Algorithms 47, 143–156 (2008)

Li, Q., Li, D.: A class of derivative-free methods for large-scale nonlinear monotone equations. IMA J. Numer. Anal. 31, 1625–1635 (2011)

Fukushima, M., Qi, L.: A global and superlinearly convergent algorithm for nonsmooth convex minimization. SIAM J. Optim. 6, 1106–1120 (1996)

Qi, L., Sun, J.: A nonsmooth version of Newton’s method. Math. Program. 58, 353–367 (1993)

Correa, R., Lemaréchal, C.: Convergence of some algorithms for convex minimization. Math. Program. 62, 261–273 (1993)

Hiriart-Urruty, J.B., Lemaréchal, C.: Convex Analysis and Minimization Algorithms II. Springer, Berlin (1993)

Calamai, P.H., Moré, J.J.: Projected gradient methods for linear constrained problems. Math. Program. 39, 93–116 (1987)

Qi, L.: Convergence analysis of some algorithms for solving nonsmooth equations. Math. Oper. Res. 18, 227–245 (1993)

Conn, A.R., Gould, N.I.M., Toint, P.L.: Trust-Region Methods. SIAM, Philadelphia (2000)

Yuan, G., Wei, Z.: A modified PRP conjugate gradient algorithm with nonmonotone line search for nonsmooth convex optimization problems. J. Appl. Math. Comput. (2011, in press)

Yuan, G., Wei, Z., Li, G.: A modified Polak–Ribière–Polyak conjugate gradient algorithm with nonmonotone line search for nonsmooth convex minimization. J. Comput. Appl. Math. 255, 86–96 (2014)

Lukšan, L., Vlšek, J.: Test problems for nonsmooth unconstrained and linearly constrained optimization. Technical Report No. 798. Institute of Computer Science, Academy of Sciences of the Czech Republic, Prague (2000)

Lukšan, L., Vlšek, J.: A bundle-Newton method for nonsmooth unconstrained minimization. Math. Program. 83, 373–391 (1998)

Polak, E.: The conjugate gradient method in extreme problems. Comput. Math. Math. Phys. 9, 94–112 (1969)

Fukushima, M.: A descent algorithm for nonsmooth convex optimization. Math. Program. 30, 163–175 (1984)

Karmitsa, N., Bagirov, A., Mäkelä, M.M.: Comparing different nonsmooth minimization methods and software. Optim. Methods Softw. 27, 131–153 (2012)

Kappel, F., Kuntsevich, A.: An implementation of Shor’s r-algorithm. Comput. Optim. Appl. 15, 193–205 (2000)

Kuntsevich, A., Kappel, F.: SolvOpt-the Solver for Local Nonlinear Optimization Problems. Karl-Franzens University of Graz, Graz (1997)

Shor, N.Z.: Minimization Methods for Non-Differentiable Functions. Springer, Berlin (1985)

Mäkelä, M.M.: Multiobjective proximal bundle method for nonconvex nonsmooth optimization: Fortran subroutine MPBNGC 2.0. Reports of the Department of Mathematical Information Technology, Series B, Scientific Computing, No. B 13/2003, University of Jyväkylä, Jyväkylä (2003)

Haarala, M., Miettinen, K., Mäkelä, M.M.: Globally convergent limited memory bundle method for large-scale nonsmooth optimization. Math. Program. 109, 181–205 (2007)

Bagirov, A.M., Karasozen, B., Sezer, M.: Discrete gradient method: a derivative free method for nonsmooth optimization. J. Optim. Theory Appl. 137, 317–334 (2008)

Bagirov, A.M., Ganjehlou, A.N.: A quasisecant method for minimizing nonsmooth functions. Optim. Methods Softw. 25, 3–18 (2010)

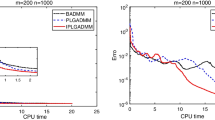

Dolan, E.D., Moré, J.J.: Benchmarking optimization software with performance profiles. Math. Program. 91, 201–213 (2002)

Moré, J., Garbow, B., Hillström, K.: Testing unconstrained optimization software. ACM Trans. Math. Softw. 7, 17–41 (1981)

Solodov, M.V., Svaiter, B.F.: A hybrid projection-proximal point algorithm. J. Convex Anal. 6, 59–70 (1999)

Acknowledgments

The authors thank the referees and the editor for their valuable comments, which greatly improved the paper. The authors would also like to thank Postdoctoral Researcher Yajun Xiao of the University of Technology, Sydney, for his assistance in editing this manuscript. This work was supported by the QFRC visitor funds of the University of Technology, Sydney; the study abroad funds for Guangxi talents in China; the Program for Excellent Talents in Guangxi Higher Education Institutions (Grant No. 201261); the Guangxi NSF (Grant No. 2012GXNSFAA053002); and the China NSF (Grant Nos. 11261006 and 11161003).

Additional information

Communicated by Ryan P. Russell.

About this article

Cite this article

Yuan, G., Meng, Z. & Li, Y. A Modified Hestenes and Stiefel Conjugate Gradient Algorithm for Large-Scale Nonsmooth Minimizations and Nonlinear Equations. J Optim Theory Appl 168, 129–152 (2016). https://doi.org/10.1007/s10957-015-0781-1

DOI: https://doi.org/10.1007/s10957-015-0781-1