Abstract

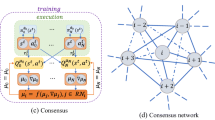

As an effective tool, reinforcement learning (RL) has attracted great attention in the field of wireless communication. In this paper, we investigate the offloading decision and resource allocation problem in mobile edge computing (MEC) systems using RL methods. Unlike the existing literature, our research focuses on improving mobile operators’ revenue by maximizing the amount of offloaded tasks while decreasing energy expenditure and time delays. Considering the dynamic characteristics of the wireless environment, the problem is modeled as a Markov decision process (MDP). Since the action space of the MDP is multidimensional and mixes continuous variables with discrete ones, traditional RL algorithms cannot handle it directly. Therefore, an actor-critic (AC) algorithm with eligibility traces is proposed to solve the problem. The actor part uses a parameterized normal distribution to generate continuous stochastic actions, and the critic part employs a linear approximator to estimate the value of states, based on which the actor part updates the policy parameters in the direction of performance improvement. Furthermore, an advantage function is designed to reduce the variance of the learning process. Simulation results indicate that the proposed algorithm finds a strategy that maximizes the amount of tasks executed by the MEC server while decreasing energy consumption and time delays.
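The learning loop summarized above can be sketched on a toy problem. This is a minimal Python sketch, not the authors' implementation: it assumes a hypothetical two-state environment with a single continuous action, one-hot state features, a Gaussian actor whose mean is linear in the features (the standard deviation `sigma` is held fixed for simplicity), a linear critic, and the one-step TD error used as the advantage estimate. The name `train_actor_critic`, the toy reward `-(a - target)**2`, the targets and all step sizes are illustrative assumptions; the MEC state, the mixed discrete/continuous actions and the paper's reward are not modeled.

```python
import random


def train_actor_critic(steps=30000, gamma=0.9, lam=0.5,
                       alpha_actor=0.005, alpha_critic=0.05,
                       sigma=0.5, seed=0):
    """Actor-critic with eligibility traces on a hypothetical 2-state toy MDP.

    Actor:  Gaussian policy N(mu(s), sigma^2) with mu(s) = theta . phi(s).
    Critic: linear state value V(s) = w . phi(s).
    The TD error delta serves as the advantage estimate.
    """
    rng = random.Random(seed)
    targets = [1.0, 3.0]  # hypothetical best action in each toy state

    def phi(s):
        # One-hot features for the two toy states.
        return [1.0 if i == s else 0.0 for i in range(2)]

    theta = [0.0, 0.0]    # actor parameters (policy mean)
    w = [0.0, 0.0]        # critic parameters
    z_theta = [0.0, 0.0]  # actor eligibility trace
    z_w = [0.0, 0.0]      # critic eligibility trace

    s = rng.randrange(2)
    for _ in range(steps):
        x = phi(s)
        mu = sum(t * xi for t, xi in zip(theta, x))
        a = rng.gauss(mu, sigma)           # sample a continuous stochastic action
        r = -(a - targets[s]) ** 2         # toy reward, peaked at the target
        s_next = rng.randrange(2)          # toy transition: uniform next state

        v = sum(wi * xi for wi, xi in zip(w, x))
        v_next = sum(wi * xi for wi, xi in zip(w, phi(s_next)))
        delta = r + gamma * v_next - v     # TD error, used as the advantage

        # Gradient of log N(a; mu, sigma^2) with respect to theta.
        score = [(a - mu) / sigma ** 2 * xi for xi in x]

        # Decay and accumulate the traces, then step along delta * trace.
        z_w = [gamma * lam * zi + xi for zi, xi in zip(z_w, x)]
        z_theta = [gamma * lam * zi + gi for zi, gi in zip(z_theta, score)]
        w = [wi + alpha_critic * delta * zi for wi, zi in zip(w, z_w)]
        theta = [ti + alpha_actor * delta * zi for ti, zi in zip(theta, z_theta)]

        s = s_next
    return theta, w
```

For example, `theta, w = train_actor_critic()` should return policy means close to the hypothetical targets `[1.0, 3.0]`. The accumulating traces let each TD error also adjust the parameters of recently visited states and actions, with `lam` controlling how far that credit reaches back.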

References

Al-Shuwaili A, Simeone O (2017) Energy-efficient resource allocation for mobile edge computing-based augmented reality applications. IEEE Wirel Commun Lett 6(3):398–401

Barto AG, Sutton RS, Anderson CW (1983) Neuronlike adaptive elements that can solve difficult learning control problems. IEEE Trans Syst Man Cybern SMC-13(5):834–846

Burd TD, Brodersen RW (1996) Processor design for portable systems. J VLSI Signal Process Syst 13(3):203–221

Dinh TQ, Tang J, La QD, Quek TQS (2017) Offloading in mobile edge computing: task allocation and computational frequency scaling. IEEE Trans Commun 65(8):3571–3584

Ge C, Wang N, Foster G, Wilson M (2017) Toward QoE-assured 4K video-on-demand delivery through mobile edge virtualization with adaptive prefetching. IEEE Trans Multimed 19(10):2222–2237

Gong J, Zhou S, Zhou Z, Niu Z (2017) Policy optimization for content push via energy harvesting small cells in heterogeneous networks. IEEE Trans Wirel Commun 16(2):717–729

He Y, Zhao N, Yin H (2018) Integrated networking, caching, and computing for connected vehicles: a deep reinforcement learning approach. IEEE Trans Veh Technol 67(1):44–55

Kim Y, Kwak J, Chong S (2015) Dual-side dynamic controls for cost minimization in mobile cloud computing systems. In: 2015 13th International symposium on modeling and optimization in mobile, ad hoc, and wireless networks (WiOpt), pp 443–450

Kim Y, Kwak J, Chong S (2018) Dual-side optimization for cost-delay tradeoff in mobile edge computing. IEEE Trans Veh Technol 67(2):1765–1781

Konda VR, Tsitsiklis JN (2003) On actor-critic algorithms. SIAM J Control Optim 42(4):1143–1166

Kwak J, Kim Y, Lee J, Chong S (2015) DREAM: dynamic resource and task allocation for energy minimization in mobile cloud systems. IEEE J Sel Areas Commun 33(12):2510–2523

Lakshminarayana S, Quek TQS, Poor HV (2014) Cooperation and storage tradeoffs in power grids with renewable energy resources. IEEE J Sel Areas Commun 32(7):1386–1397

Lee G, Saad W, Bennis M, Mehbodniya A, Adachi F (2017) Online ski rental for on/off scheduling of energy harvesting base stations. IEEE Trans Wirel Commun 16(5):2976–2990

Liang C, He Y, Yu FR, Zhao N (2017) Enhancing QoE-aware wireless edge caching with software-defined wireless networks. IEEE Trans Wirel Commun 16(10):6912–6925

Mach P, Becvar Z (2017) Mobile edge computing: a survey on architecture and computation offloading. IEEE Commun Surv Tutor 19(3):1628–1656

Mao Y, Zhang J, Letaief KB (2016) Dynamic computation offloading for mobile-edge computing with energy harvesting devices. IEEE J Sel Areas Commun 34(12):3590–3605

Mao Y, Zhang J, Song SH, Letaief KB (2017) Stochastic joint radio and computational resource management for multi-user mobile-edge computing systems. IEEE Trans Wirel Commun 16(9):5994–6009

Miao G, Himayat N, Li GY (2010) Energy-efficient link adaptation in frequency-selective channels. IEEE Trans Commun 58(2):545–554

Rummery GA, Niranjan M (1994) On-line Q-learning using connectionist systems. University of Cambridge, Cambridge

Sanguanpuak T, Guruacharya S, Rajatheva N, Bennis M, Latva-Aho M (2017) Multi-operator spectrum sharing for small cell networks: a matching game perspective. IEEE Trans Wirel Commun 16(6):3761–3774

Sardellitti S, Scutari G, Barbarossa S (2015) Joint optimization of radio and computational resources for multicell mobile-edge computing. IEEE Trans Signal Inf Process Over Netw 1(2):89–103

Suto K, Nishiyama H, Kato N (2017) Postdisaster user location maneuvering method for improving the qoe guaranteed service time in energy harvesting small cell networks. IEEE Trans Veh Technol 66(10):9410–9420

Sutton RS, Barto AG (1998) Reinforcement learning: an introduction. MIT Press, Cambridge

Sutton RS, Barto AG (2017) Reinforcement learning: an introduction. MIT Press, Cambridge

Sutton RS, McAllester D, Singh S, Mansour Y (2000) Policy gradient methods for reinforcement learning with function approximation. In: Advances in neural information processing systems 12, pp 1057–1063

Vogeleer KD, Memmi G, Jouvelot P, Coelho F (2013) The energy/frequency convexity rule: modeling and experimental validation on mobile devices. In: Proceedings of international conference on parallel processing and applied mathematics (PPAM), Warsaw, Poland, pp 793–803

Wang C, Liang C, Yu FR, Chen Q, Tang L (2017) Computation offloading and resource allocation in wireless cellular networks with mobile edge computing. IEEE Trans Wirel Commun 16(8):4924–4938

Wang C, Yu FR, Liang C, Chen Q, Tang L (2017) Joint computation offloading and interference management in wireless cellular networks with mobile edge computing. IEEE Trans Veh Technol 66(8):7432–7445

Watkins CJCH, Dayan P (1992) Q-learning. Mach Learn 8:279–292

Wei Y, Yu FR, Song M, Han Z (2019) Joint optimization of caching, computing, and radio resources for fog-enabled IoT using natural actor-critic deep reinforcement learning. IEEE Internet Things J 6(2):2061–2073

Wolfstetter E (1999) Topics in microeconomics: industrial organization, auctions, and incentives. Cambridge University Press, Cambridge

Yang L, Cao J, Yuan Y, Li T, Han A, Chan A (2013) A framework for partitioning and execution of data stream applications in mobile cloud computing. ACM SIGMETRICS Perform Eval Rev 40(4):23–32

You C, Huang K, Chae H, Kim BH (2017) Energy-efficient resource allocation for mobile-edge computation offloading. IEEE Trans Wirel Commun 16(3):1397–1411. https://doi.org/10.1109/TWC.2016.2633522

Zhang Z, Wang R, Yu FR, Fu F, Yan Q (2019) QoS-aware transcoding for live streaming in edge-clouds aided HetNets: an enhanced actor-critic approach. IEEE Trans Veh Technol 68(11):11295–11308

Zhang Z, Yu FR, Fu F, Yan Q, Wang Z (2018) Joint offloading and resource allocation in mobile edge computing systems: an actor-critic approach. In: 2018 IEEE global communications conference (GLOBECOM), pp 1–6

Zhao P, Tian H, Qin C, Nie G (2017) Energy-saving offloading by jointly allocating radio and computational resources for mobile edge computing. IEEE Access 5:11255–11268

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix 1

In the following, we show that the solution obtained by the actor-critic algorithm is globally optimal. First, we establish the quasi-concavity of the utility function in two cases.

Case 1: \({T_n}(t) + {c_n}(t){\varLambda _n}(t) > D_n^{\max }(\varDelta t) = \varDelta t{f_n}(t)L_e^{ - 1}\). In this case, \({D_n}(t) = D_n^{\max }(\varDelta t)\) and \({H_n}(t) = {T_n}(t) + {c_n}(t){\varLambda _n}(t) - D_n^{\max }(\varDelta t)\), and the utility function can be rewritten as

\[\varOmega _n (t) = \varTheta _n (t) + {\varUpsilon _n}(t) + {\varXi _n}(t),\]

where \(\varTheta _n {(t)}= ({\rho _n} - {\upsilon _n}\xi f_n^2(t){L_e} - {\omega _n}){D_n}(t)\), \({\varUpsilon _n}(t) = -{{\upsilon _n}D_n^{dl}(t)(1 - {b_n}(t))}[{p_n}(t) + p_n^{cir}]/{R_n}(t)\), and \({\varXi _n}(t) = -{\omega _n}\{ {T_n}(t) + {c_n}(t){\varLambda _n}(t)\}\).

Definition 1

A function \(\mathcal F\), which maps a convex set \(\mathrm I\) of real n-dimensional vectors to a real number, is called strictly quasi-concave if, for any \(x_1, x_2 \in \mathrm{I}\) with \({x_1} \ne {x_2}\), \(\mathcal F(\lambda {x_1} + (1 - \lambda ){x_2}) \ge \min \{ \mathcal F({x_1}),\mathcal F({x_2})\}\) for all \(\lambda\) with \(0 \le \lambda \le 1\) [31].
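Definition 1 can be probed numerically when the argument is a scalar, as it is for \(p_n(t)\) below. The helper `find_quasiconcavity_violation` is hypothetical: it samples pairs of points and mixing weights and reports the first triple that breaks the inequality. Finding no violation on a finite grid is evidence, not a proof.

```python
import itertools


def find_quasiconcavity_violation(f, points, lambdas, tol=1e-12):
    """Search sampled pairs (x1, x2) and weights lam for a violation of
    f(lam*x1 + (1 - lam)*x2) >= min(f(x1), f(x2)), i.e. Definition 1 in 1-D.

    Returns the first violating (x1, x2, lam), or None if none is found.
    `tol` absorbs floating-point rounding in the convex combination.
    """
    for x1, x2 in itertools.combinations(points, 2):
        lower = min(f(x1), f(x2))
        for lam in lambdas:
            if f(lam * x1 + (1.0 - lam) * x2) < lower - tol:
                return (x1, x2, lam)
    return None


grid = [i / 10 for i in range(-10, 11)]
weights = [j / 10 for j in range(11)]
# x**3 is monotone, hence quasi-concave, although it is not concave.
print(find_quasiconcavity_violation(lambda x: x ** 3, grid, weights))  # None
# x**2 dips between points of opposite sign, so it is not quasi-concave.
print(find_quasiconcavity_violation(lambda x: x ** 2, grid, weights) is not None)  # True
```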

Based on Proposition C.9 in [31], \({\varUpsilon _n}(t)\) is strictly quasi-concave if and only if the upper contour sets

\[UC({\varUpsilon _n}(t),y) = \{ {p_n}(t) > 0 \mid {\varUpsilon _n}(t) > y\} \qquad (46)\]

are convex for all \(y \in \mathbb{R}\). If \(y \ge 0\), the upper contour sets are empty, since \({\varUpsilon _n}(t) \le 0\); if \(y<0\), (46) is equivalent to \(UC({\varUpsilon _n}(t),y) = \{ {p_n}(t) > 0|y{R_n}(t) + {\upsilon _n}D_n^{dl}(t)(1 - {b_n}(t))[{p_n}(t) + p_n^{cir}] < 0\}\). Since \(R_n(t)\) is concave in \({p_n}(t)\) and \(y < 0\), the function \({\mathcal F^*} = y{R_n}(t) + {\upsilon _n}D_n^{dl}(t)(1 - {b_n}(t))[{p_n}(t) + p_n^{cir}]\) is convex in \({p_n}(t)\), being the sum of a convex function and an affine one. The upper contour set \(UC({\varUpsilon _n}(t),y)\) is therefore a strict sublevel set \(\{\mathcal F^* < 0\}\) of a convex function, and hence convex. Based on the above analysis, \({\varUpsilon _n}(t)\) is strictly quasi-concave. Clearly, \(\varTheta _n {(t)}\) and \({\varXi _n}(t)\) are strictly concave. Therefore, \(\varOmega _n (t)\) is strictly quasi-concave.
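The convexity argument can be checked on a concrete instance. The sketch below assumes a Shannon-type rate \(R(p) = B\log_2(1 + g p)\), which is concave in \(p\), and lumps \(\upsilon_n D_n^{dl}(t)(1 - b_n(t))\) into one non-negative constant `K`; the function names and all parameter values are hypothetical. On a grid of transmit powers it tests whether \(\{p > 0 \mid \varUpsilon_n > y\}\) is a single contiguous interval, which is what convexity means for a subset of the real line.

```python
import math


def upsilon(p, K=1.0, p_cir=0.1, bandwidth=1.0, gain=10.0):
    """Hypothetical instance of Upsilon_n(t) as a function of transmit power p > 0.

    K stands in for upsilon_n * D_n^dl(t) * (1 - b_n(t)) >= 0, and the rate is an
    assumed Shannon-type model R(p) = bandwidth * log2(1 + gain * p), concave in p.
    """
    rate = bandwidth * math.log2(1.0 + gain * p)
    return -K * (p + p_cir) / rate


def upper_contour_is_interval(y, grid):
    """True if {p in grid : upsilon(p) > y} is empty or one contiguous run."""
    inside = [upsilon(p) > y for p in grid]
    # Count the starts of runs of consecutive grid points inside the set.
    runs = sum(1 for i, flag in enumerate(inside)
               if flag and (i == 0 or not inside[i - 1]))
    return runs <= 1


powers = [0.001 * k for k in range(1, 5001)]  # p from 0.001 to 5.0
for y in (-5.0, -1.0, -0.3, 0.0):
    print(y, upper_contour_is_interval(y, powers))
```

For \(y = 0\) the set is empty, matching the \(y \ge 0\) case above; for negative \(y\) it is a single interval around the power that maximizes \(\varUpsilon_n\).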

Case 2: \({T_n}(t) + {c_n}(t){\varLambda _n}(t) \le D_n^{\max }(\varDelta t)\). In this case, \({D_n}(t) = {T_n}(t) + {c_n}(t){\varLambda _n}(t)\) and \({H_n}(t) = 0\), which means that no tasks are left in the buffer at the \(t\)th time slot, and the utility function can be rewritten as

\[\varOmega _n (t) = {{\widehat{\varTheta }}_n}(t) + {{\widehat{\varUpsilon }}_n}(t),\]

where \({{{\widehat{\varTheta }}} _n}(t) = {({\rho _n} - {\upsilon _n}\xi f_n^2(t){L_e})}D_n(t)\) and \({{{\widehat{\varUpsilon }}} _n}(t) = {\varUpsilon _n}(t)\). Similarly, it can be shown that \(\varOmega _n (t)\) is strictly quasi-concave.
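The split into Case 1 and Case 2 amounts to clipping the slot demand at the per-slot server capacity: \(D_n(t) = \min\{T_n(t) + c_n(t)\varLambda_n(t), D_n^{\max}(\varDelta t)\}\) and \(H_n(t) = \max\{T_n(t) + c_n(t)\varLambda_n(t) - D_n^{\max}(\varDelta t), 0\}\). A minimal sketch, assuming \(L_e\) is the number of CPU cycles needed per unit of task data, so that \(D_n^{\max}(\varDelta t) = \varDelta t f_n(t)/L_e\) is measured in data units; the helper and argument names are hypothetical.

```python
def served_and_backlog(T, c, Lam, f, L_e, dt):
    """Hypothetical helper: split the slot demand T_n(t) + c_n(t) * Lambda_n(t)
    into the amount executed by the MEC server, D_n(t), and the backlog H_n(t).

    D_n^max(dt) = dt * f_n(t) / L_e is the most the server can execute in one slot.
    """
    demand = T + c * Lam
    d_max = dt * f / L_e
    if demand > d_max:                 # Case 1: the server capacity is the bottleneck
        return d_max, demand - d_max
    return demand, 0.0                 # Case 2: the buffer is emptied in this slot


print(served_and_backlog(T=3.0, c=1.0, Lam=2.0, f=4.0, L_e=1.0, dt=1.0))  # (4.0, 1.0)
print(served_and_backlog(T=1.0, c=0.0, Lam=5.0, f=4.0, L_e=1.0, dt=1.0))  # (1.0, 0.0)
```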

According to Theorem 2, the policy gradient-based actor-critic RL algorithm of this study converges. The actor part utilizes a parameterized Gaussian distribution to generate stochastic actions, and a locally optimal policy can be obtained by updating the parameters with the stochastic gradient method [24]. Since the utility function \(\varOmega _n (t)\) is strictly quasi-concave, as analyzed above, and, according to [18, 31], any local maximum of a strictly quasi-concave function is also its global maximum, the locally optimal solution is globally optimal.
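The local-equals-global property can be illustrated with a simple local search standing in for the actor's stochastic gradient updates. The sketch is hypothetical and deterministic: `hill_climb` moves to a better neighbour or halves its step. On the strictly quasi-concave `unimodal` every starting point reaches the same maximizer, whereas on the non-quasi-concave `bimodal` the result depends on where the search starts.

```python
import math


def hill_climb(f, x, step=1.0, tol=1e-8):
    """Greedy local search: move to a better neighbour, otherwise halve the step."""
    while step > tol:
        if f(x + step) > f(x):
            x += step
        elif f(x - step) > f(x):
            x -= step
        else:
            step /= 2.0
    return x


def unimodal(x):
    # Strictly quasi-concave (single peak at x = 2) but not concave.
    return math.exp(-(x - 2.0) ** 2)


def bimodal(x):
    # Not quasi-concave: local peak near x = -2, global peak near x = 3.
    return math.exp(-(x + 2.0) ** 2) + 2.0 * math.exp(-(x - 3.0) ** 2)


print([round(hill_climb(unimodal, x0), 4) for x0 in (-3.0, 0.3, 9.0)])  # [2.0, 2.0, 2.0]
print(round(hill_climb(bimodal, -3.0), 4), round(hill_climb(bimodal, 4.0), 4))  # -2.0 3.0
```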

Therefore, the solution obtained by the RL algorithm is globally optimal.

About this article

Cite this article

Fu, F., Zhang, Z., Yu, F.R. et al. An actor-critic reinforcement learning-based resource management in mobile edge computing systems. Int. J. Mach. Learn. & Cyber. 11, 1875–1889 (2020). https://doi.org/10.1007/s13042-020-01077-8

DOI: https://doi.org/10.1007/s13042-020-01077-8