Abstract

In this paper, we study the problem of estimating latent variable models with arbitrarily corrupted samples in high dimensional space (i.e., \(d\gg n\)) where the underlying parameter is assumed to be sparse. Specifically, we propose a method called Trimmed (Gradient) Expectation Maximization which adds a trimming gradients step and a hard thresholding step to the Expectation step (E-step) and the Maximization step (M-step), respectively. We show that under some mild assumptions and with an appropriate initialization, the algorithm is corruption-proofing and converges to the (near) optimal statistical rate geometrically when the fraction of the corrupted samples \(\epsilon\) is bounded by \({\tilde{O}}\bigg (\frac{1}{\sqrt{n}}\bigg )\). Moreover, we apply our general framework to three canonical models: mixture of Gaussians, mixture of regressions and linear regression with missing covariates. Our theory is supported by thorough numerical results.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

As one of the most popular techniques for estimating the maximum likelihood of mixture models or incomplete data problems, Expectation Maximization (EM) algorithm has been widely applied to many areas such as genomics (Laird 2010), finance (Faria and Gonçalves 2013), and crowdsourcing (Dawid and Skene 1979). Although EM algorithm is well-known to converge to an empirically good local estimator (Wu et al. 1983), finite sample statistical guarantees for its performance have not been established until recent studies (Balakrishnan et al. 2017b; Zhu et al. 2017; Wang et al. 2015; Yi and Caramanis 2015). Specifically, the first local convergence theory and finite sample statistical rate of convergence for the classical EM and its gradient ascent variant (gradient EM) were established in Balakrishnan et al. (2017b). Later, Wang et al. (2015) extended the classical EM and gradient EM algorithms to the high dimensional sparse setting, and the key idea in their methods is an additional truncation step after the M-step, which can exploit the intrinsic sparse structure of the high dimensional latent variable models. Later on, Yi and Caramanis (2015) also studied the high dimensional sparse EM algorithm and proposed a method which uses a regularized M-estimator in the M-step. Recently, Zhu et al. (2017) considered the computational issue of the previous methods of the problem in high dimensional sparse case. They proposed a method called VRSGEM (Variance Reduced Stochastic Gradient EM) which combines the idea of SVRG (Stochastic Variance Reduced Gradient) (Johnson and Zhang 2013) and the high dimensional gradient EM algorithm. Their method has less gradient complexity while also can achieve almost the same statistical estimation errors as the previous ones.

Although the above methods could achieve (near) optimal minimax rate for some statistical models such as Gaussian mixture model, mixture of regressions and linear regression with missing covariates (see Sect. 3 for details), all of these results need to assume that the data samples have no corruptions and also should satisfy some statistical assumptions, such as sub-Gaussian. This means that some arbitrary corruptions among the data samples may cause the dataset violate these statistical assumptions which are required for convergence of the above methods, or they will even make the above methods achieve unacceptable statistical estimation errors (see Fig. 1 for experimental studies). Thus, the classical EM algorithm and its variants are sensitive to these corruptions. Although statistical estimation with arbitrary corruptions has long been a focus in robust statistics (Huber 2011), it is still unknown that whether there exist some variant of (gradient) EM algorithm which is robust to arbitrary corruptions while also has finite sample statistical guarantees as in the non-corrupted case.

To address the aforementioned issue, in this paper, we study the problem of statistical estimation of latent variable models with arbitrarily corrupted samples in high dimensional spaceFootnote 1 (i.e., \(d\gg n\)) where the underlying parameter is assumed to be sparse. Specifically, we propose a new algorithm called Trimmed (Gradient) Expectation Maximization, which attaches a trimming gradient and hard thresholding step to the E-step and M-step in each iteration, respectively. We show that under certain conditions, our algorithm is robust against corruption and converges with a statistical estimation error which is (near) statistically optimal. Below is a summary of our main contributions.

-

1.

We show that, given an appropriate initialization \(\beta ^{\text {init}}\), i.e., \(\Vert \beta ^{\text {init}}-\beta ^*\Vert \le \kappa \Vert \beta ^*\Vert _2\) for some constant \(\kappa \in (0,1)\), if the model satisfies some additional assumptions, the iterative solution sequence \(\beta ^{t}\) of our algorithm satisfies \(\Vert \beta ^t-\beta ^*\Vert _2\le {\tilde{O}}\left( c_1 \rho ^{t}+\sqrt{s^*}c_2(\epsilon \log (nd)+\sqrt{\frac{\log d}{n}})\right)\) with high probability, where \(\rho \in (0,1)\), \(c_1, c_2\) are some constants dependent on the model, \(\epsilon\) is the fraction of the perturbed samples, and \(s^*\) is the sparsity parameter of the underlying parameter \(\beta ^*\). Particularly, when \(c_2\) is a constant and \(\epsilon \le O\left( \frac{1}{\sqrt{n}\log (nd)}\right)\), the above estimation error geometrically converges to \(O\left( \sqrt{\frac{s^*\log d}{n}}\right)\), which is statistically optimal. This means that our algorithm is corruption-proofing for a certain level of corruption that is only dependent on the sample size, which is quite useful in the high dimensional setting.

-

2.

We implement our algorithm on three canonical models: mixture of Gaussians, mixture of regressions and linear regression with missing covariates. Experimental results on these models support our theoretical analysis.

Some background, lemmas and all the proofs are included in the “Appendix”.

2 Related work

There are mainly two perspectives on the study of EM algorithm. The first one focuses on its statistical guarantees (Balakrishnan et al. 2017b; Zhu et al. 2017; Wang et al. 2015; Yi and Caramanis 2015). However, there are many differences compared with our results. Firstly, as we mentioned above, although in this paper we study the same statistical setting as these previous work, our method is corruption-proofing while the performance of their algorithms is heavily affected by outliers. Secondly, in our paper we use a robust version of the gradient instead of the original gradient, this make the proof of our theoretical result different with the above previous papers. Another direction focus on the practical performance, and there are many robust variants of the EM algorithm such as Aitkin and Wilson (1980); Yang et al. (2012). However, we note that these methods are incomparable with ours. Firstly, in this paper we mainly focus on statistical setting and the statistical guarantees while there is no any theoretical guarantees of these methods. Secondly, previous methods can only be used in the low dimension case while we focus on the high dimensional sparse case. Thus, to our best knowledge, there is no previous work on the variants of the EM algorithm that is both robust to some corruptions and also has statistical guarantees. Thus, in the following we will only compare with some other methods that are close to ours.

Diakonikolas et al. (2016, 2017, 2018), Chen et al. (2013) studied the problem of robustly estimating the mixture of distributions. However, some of them are not computationally practical as they rely on the rather time-consuming ellipsoid method. Moreover, these methods in general cannot be extended to the distributed or Byzantine setting (Chen et al. 2017), while ours can be easily extended to such scenarios.

Du et al. (2017), Balakrishnan et al. (2017a), Li (2017), Suggala et al. (2019), Dalalyan and Thompson (2019), Thompson and Dalalyan (2018) studied the robust high dimensional sparse estimation problem for some specified tasks, such as GLM, linear regression, mean and covariance matrix estimation. However, none of them considered estimating the latent variable models and thus is quite different from ours.

Recently, several robust methods have been proposed based on (stochastic) gradient descent, such as Alistarh et al. (2018), Chen et al. (2017), Yin et al. (2018), Prasad et al. (2018), Holland (2018). However, none of them studies the latent variable models and all of them consider only the low dimensional case.

We have to note that the most closed work to ours is given by Liu et al. (2019). Specifically, Liu et al. (2019) recently investigated the robust high dimensional sparse M-estimation problem (such as linear regression and logistic regression) by combining hard thresholding with trimming steps. However, their results are incomparable with ours. Particularly, their method can only be used in the M-estimation, and they only consider the case where the loss function is convex while ours focuses on the latent variable model and the EM algorithm, and the loss function (Q-function) is non-convex. Thus, we cannot use their proofs directly to get our theoretical results.

3 Preliminaries

Let Y and Z be two random variables taking values in the sample spaces \({\mathcal {Y}}\) and \({\mathcal {Z}}\), respectively. Suppose that the pair (Y, Z) has a joint density function \(f_{\beta ^*}\) that belongs to some parameterized family \(\{f_{\beta ^*}|\beta ^* \in \varOmega \}\). Rather than considering the whole pair of (Y, Z), we observe only component Y. Thus, component Z can be viewed as the missing or latent structure. We assume that the term \(h_{\beta }(y)\) is the marginal distribution over the latent variable Z, i.e., \(h_\beta (y)=\int _{{\mathcal {Z}}} f_\beta (y, z) dz.\) Let \(k_{\beta }(z|y)\) be the density of Z conditional on the observed variable \(Y=y\), that is, \(k_\beta (z|y)=\frac{f_\beta (y, z)}{h_\beta (y)}.\)

Given n observations \(y_1, y_2, \ldots , y_n\) of Y, the EM algorithm is to maximize the log-likelihood \(\max _{\beta \in \varOmega }\ell _n(\beta ) = \sum _{i=1}^n\log h_\beta (y_i).\) Due to the unobserved latent variable Z, it is often difficult to directly evaluate \(\ell _n(\beta )\). Thus, we consider the lower bound of \(\ell _n(\beta )\) . By Jensen’s inequality, we have

Let \(Q_n(\beta ; \beta ')=\frac{1}{n}\sum _{i=1}^n q_i(\beta ;\beta ')\), where

Also, it is convenient to let \(Q(\beta ; \beta ')\) denote the expectation of \(Q_n(\beta ; \beta ')\) w.r.t \(\{y_i\}_{i=1}^n\), that is,

We can see that the second term on the right hand side of (1) is not dependent on \(\beta\). Thus, given some fixed \(\beta '\), we can maximize the lower bound function \(Q_n(\beta ; \beta ')\) over \(\beta\) to obtain sufficiently large \(\ell _n(\beta )-\ell _n (\beta ')\). Thus, in the t-th iteration of the standard EM algorithm, we can evaluate \(Q_n(\cdot ; \beta ^t)\) at the E-step and then perform the operation of \(\max _{\beta \in \varOmega }Q_n(\beta ; \beta ^t)\) at the M-step. See McLachlan and Krishnan (2007) for more details.

In addition to the exact maximization implementation of the M-step, we add a gradient ascent implementation of the M-step, which performs an approximate maximization via a gradient descent step.

Gradient EM Procedure (Balakrishnan et al. 2017b) When \(Q_n(\cdot ; \beta ^t)\) is differentiable, the update of \(\beta ^t\) to \(\beta ^{t+1}\) consists of the following two steps.

-

E-step: Evaluate the functions in (2) to compute \(Q_n(\cdot ; \beta ^t)\).

-

M-step: Update \(\beta ^{t+1}=\beta ^t+\eta \nabla Q_n(\beta ^t; \beta ^t)\), where \(\nabla\) is the derivative of \(Q_n\) w.r.t the first component and \(\eta\) is the step size.

Next, we give some examples that use the gradient EM algorithm. Note that they are the typical examples for studying the statistical property of EM algorithm (Wang et al. 2015; Balakrishnan et al. 2017b; Yi and Caramanis 2015; Zhu et al. 2017).

Gaussian Mixture Model Let \(y_1, \ldots , y_n\) be n i.i.d. samples from \(Y\in {\mathbb {R}}^d\) with

where Z is a Rademacher random variable (i.e., \({\mathbb {P}}(Z=+1)= {\mathbb {P}}(Z=-1)=\frac{1}{2}\)), and \(V\sim {\mathcal {N}}(0, \sigma ^2 I_d)\) is independent of Z for some known standard deviation \(\sigma\). In our high dimensional setting, we assume that \(\Vert \beta ^*\Vert _0=s^*\) is sparse.Footnote 2

For Gaussian Mixture Model, we have

where \(w_\beta (y)=\frac{1}{1+\exp (-\langle \beta , y\rangle /\sigma ^2)}\).

Mixture of (Linear) Regressions Model Let n samples \((x_1, y_1)\), \((x_2, y_2)\), \(\ldots , (x_n, y_n)\) i.i.d.. sampled from \(Y\in {\mathbb {R}}\) and \(X\in {\mathbb {R}}^d\) with

where \(X\sim {\mathcal {N}}(0, I_d)\), \(V\sim {\mathcal {N}}(0, \sigma ^2)\),Footnote 3Z is a Rademacher random variable, and X, V, Z are independent. In the high dimensional case, we assume that \(\Vert \beta ^*\Vert _0=s^*\) is sparse.

In this case, we have

where \(w_\beta (x_i, y_i)=\frac{1}{1+\exp (-y\langle \beta ,x \rangle /\sigma ^2)}\).

Linear Regression with Missing Covariates We assume that \(Y\in {\mathbb {R}}\) and \(X\in {\mathbb {R}}^d\) satisfy

where \(X\sim {\mathcal {N}}(0, I_d)\) and \(V\sim {\mathcal {N}}(0, \sigma ^2)\) are independent. In our high dimensional setting, we assume that \(\Vert \beta ^*\Vert _0=s^*\) is sparse. Let \(x_1, x_2, \ldots , x_n\) be n observations of X with each coordinate of \(x_i\) missing (unobserved) independently with probability \(p_m\in [0,1)\).

In this case, we have

where the functions \(m_\beta (x_i^{\text {obs}},y_i)\in {\mathbb {R}}^d\) and \(K_\beta (x_i^{\text {obs}}, y_i)\in {\mathbb {R}}^{d\times d}\) are defined as:

and

where vector \(z_i \in {\mathbb {R}}^d\) is defined as \(z_{i,j}=1\) if \(x_{i,j}\) is observed and \(z_{i,j}=0\) is \(x_{i,j}\) is missing, and \(\odot\) denotes the Hadamard product of matrices.

Next, we provide several definitions on the required properties of functions \(Q_n(\cdot ; \cdot )\) and \(Q(\cdot ; \cdot )\). Note that some of them have been used in the previous studies on EM (Balakrishnan et al. 2017b; Wang et al. 2015; Zhu et al. 2017).

Definition 1

Function \(Q(\cdot ; \beta ^*)\) is self-consistent if \(\beta ^*=\arg \max _{\beta \in \varOmega }Q(\beta ; \beta ^*).\) That is, \(\beta ^*\) maximizes the lower bound of the log likelihood function.

Definition 2

\(Q(\cdot ; \cdot )\) is called Lipschitz–Gradient-2(\(\gamma , {\mathcal {B}}\)), if for the underlying parameter \(\beta ^*\) and any \(\beta \in {\mathcal {B}}\) for some set \({\mathcal {B}}\), the following holds

We note that there are some differences between the definition of Lipschitz–Gradient-2 and the Lipschitz continuity condition in the convex optimization literature (Nesterov 2013). Firstly, in (12), the gradient is w.r.t the second component, while the Lipschitz continuity is w.r.t the first component. Secondly, the property holds only for fixed \(\beta ^*\) and any \(\beta\), while the Lipschitz continuity is for all \(\beta , \beta '\in {\mathcal {B}}\).

Definition 3

(\(\mu\)-smooth) \(Q(\cdot ; \beta ^*)\) is \(\mu\)-smooth, that is if for any \(\beta , \beta '\in {\mathcal {B}}\), \(Q(\beta ;\beta ^*)\ge Q(\beta '; \beta ^*)+(\beta -\beta ')^T\nabla Q(\beta ';\beta ^*)-\frac{\mu }{2}\Vert \beta '-\beta \Vert _2^2.\)

Definition 4

(\(\upsilon\)-strongly concave) \(Q(\cdot ; \beta ^*)\) is \(\upsilon\)-strongly concave, that is if for any \(\beta , \beta '\in {\mathcal {B}}\), \(Q(\beta ;\beta ^*)\le Q(\beta '; \beta ^*)+(\beta -\beta ')^T\nabla Q(\beta ';\beta ^*)-\frac{\upsilon }{2}\Vert \beta '-\beta \Vert _2^2.\)

Next, we assume that each coordinate of \(\nabla q(\beta ; \beta )\) in (2) is sub-exponential for every \(\beta \in {\mathcal {B}}\), where \(\nabla\) is the derivative of q w.r.t the first component.

Definition 5

A random variable X with mean \({\mathbb {E}}(X)\) is \(\xi\)-sub-exponential for \(\xi >0\) if for all \(|t|<\frac{1}{\xi }\), \({\mathbb {E}}\{\exp \bigg (t[X-{\mathbb {E}}(X)] \bigg )\}\le \exp (\frac{\xi ^2t^2}{2}).\)

Assumption 1

We assume that \(Q(\cdot ; \cdot )\) in (3) is self-consistent, Lipschitz–Gradient-2(\(\gamma , {\mathcal {B}}\)), \(\mu\)-smooth and \(\upsilon\)-strongly convex for some \({\mathcal {B}}\). Moreover, we assume that for any fixed \(\beta \in {\mathcal {B}}\) with \(\Vert \beta \Vert _0\le s\) (where the value of s will be specified later) and \(\forall j\in [d]\), the j-th coordinate of \(\nabla q(\beta ; \beta )\) (i.e., \([\nabla q(\beta ; \beta )]_j\)) is \(\xi\)-sub-exponential and for each \(i\in [n]\), \([\nabla q_i(\beta , \beta )]_j\) is independent with others.

We note that the sub-exponential assumption on each coordinate is stronger than the assumption of Statistical-Error in Wang et al. (2015); Balakrishnan et al. (2017b). However, since the model considered in this paper could have arbitrarily corrupted samples, we will see later that this assumption is necessary.

Finally, we give the definition of the corruption model studied in the paper.

Definition 6

(\(\epsilon\)-corrupted samples) Let \(\{y_1, y_2, \ldots , y_n\}\) be n i.i.d. observations with distribution P. We say that a collection of samples \(\{z_1, z_2, \ldots , z_n\}\) is \(\epsilon\)-corrupted if an adversary chooses an arbitrary \(\epsilon\)-fraction of the samples in \(\{y_i\}_{i=1}^n\) and modifies them with arbitrary values.

We note that this is a quite common model in robust estimation or robust statistics. Equivalently, it means that there are \(\epsilon\)-fraction of samples in the dataset are outliers (or they are corrupted arbitrarily).

4 Trimmed expectation maximization algorithm

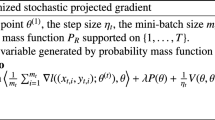

To obtain a robust estimator for the high dimensional model with \(\epsilon\)-corrupted samples, we propose a trimmed EM algorithm, which is based on the gradient EM algorithm. See Algorithm 1 for details.

Note that compared with the previous gradient EM algorithm, Trimmed EM algorithm has two additional steps in each iteration, i.e., the trimming gradient and hard thresholding step. For the trimming gradient step 4 in Algorithm 1, we use the dimensional \(\alpha\)-trimmed estimator (i.e., \(\text {D-Trim}_{\alpha }\)) on the gradients \(\{\nabla q_i(\beta ^t; \beta ^t)\}_{i=1}^n\). We note that while this operator has also been studied in Liu et al. (2019); Yin et al. (2018) for the M-estimators, we use it for the EM algorithm. Here is the definition of the function \(\text {D-Trim}_{\alpha }(\cdot )\).

Definition 7

(Dimensional \(\alpha\)-trimmed estimator) Given a set of \(\epsilon\)-corrupted samples in the form of d-dimensional vectors \(\{z_i\}_{i=1}^n\), the D-Trim operator \(\text {D-Trim}_{\alpha }(\{z_i\}_{i=1}^n)\in {\mathbb {R}}^d\) performs as follows. For each dimension \(j\in [d]\), it first removes the largest and the smallest \(\alpha\) fraction of elements in the j-th coordinate of \(\{z_i\}_{i=1}^n\), i.e., \(\{z_{i,j}\}_{i=1}^n\), and then calculates the mean of the remaining terms, where \(\alpha =c_0\epsilon\) and \(\alpha \le \frac{1}{2}-c_1\) for some constant \(c_0\ge 1\) and a small constant \(c_1\).

The rationale behind the use of the dimensional trimmed estimator is that due to the existence of \(\epsilon\) fraction of corrupted samples, directly calculating the the mean of the gradient could introduce a large error to the population gradient \(\nabla Q(\beta ^t; \beta ^t)\) in (3). Also, it can be shown that if each coordinate of \(\nabla q_i(\beta ^t; \beta ^t)\) is sub-exponential, it will be robust against the \(\epsilon\)-corruption for some small \(\epsilon\). This motivates us to use the dimensional trimmed operation.

To ensure the sparsity of our estimator, after getting \(\beta ^{t+0.5}\), we need to use the hard thresholding operation (Blumensath and Davies 2009). More specifically, we first find the set \(\hat{{\mathcal {S}}}^{t+0.5}\subseteq [d]\) of indices j corresponding to the top s largest \(|\beta ^{t+0.5}_j|\) (we denote \(\hat{{\mathcal {S}}}^{t+0.5}=\text {supp}(\beta ^{t+0.5}, s)\)Footnote 4), and make the value of the remaining entries \(\beta ^{t+0.5}_j\) for \(j\in [d]\backslash \hat{{\mathcal {S}}}^{t+0.5}\) be 0 (we denote \(\beta ^{t+1}=\text {trunc}(\beta ^{t+0.5}, \hat{{\mathcal {S}}}^{t+0.5})\)Footnote 5). The sparsity level s controls the sparsity of the estimator and the estimation error.

The following main theorem shows that under Assumption 1 and with some proper initial vector \(\beta ^{\text {init}}\), the estimator \(\beta ^T\) converges to the underlying \(\beta ^*\) at a geometric rate with high probability.

Theorem 1

Let \({\mathcal {B}}=\{\beta : \Vert \beta -\beta ^*\Vert _2\le R\}\) be a set with \(R=k\Vert \beta ^*\Vert _2\) for some \(k\in (0,1)\). Assume that Assumption 1holds for parameters \({\mathcal {B}}, \gamma , \mu , \upsilon , \xi\) satisfying the condition of \(1-2\frac{\upsilon -\gamma }{\upsilon +\mu }\in (0,1)\) and the sparsity parameter s is chosen to be

where C is some absolute constant. Also, assume that \(\Vert \beta ^{\text {init}}-\beta ^*\Vert _2\le \frac{R}{2}\) and there exist some absolute constants \(C_1\) and \(C_2\) satisfying the condition of

Then, if taking \(\eta =\frac{2}{\upsilon +\mu }\) in Algorithm 1, the following holds for \(t=1, \dots , T\) with probability at least \(1-Td^{-3}\)

In the above theorem, assumption (13) indicates that the sparsity level s in Algorithm 1 should be sufficiently large but still in the same order as the underlying sparsity \(s^*\). Although s seems quite complex, in the experiments, we can see that it is suffcient to set \(s=s^*\). Assumption (14) suggests that in order to ensure an upper bound in the hard thresholding step, we need \(\sqrt{s^*}\xi (\epsilon \log (nd)+\sqrt{\frac{\log d}{n}})\le O(\Vert \beta ^*\Vert _2)\), which means that n should be sufficiently large and the fraction of corruption \(\epsilon\) cannot be too large. In the error bound of (15), there are three types of errors. The first one is caused by optimization, which decreases to zero at a geometric rate of convergence. The second one is the term related to \(\epsilon\) [i.e., \(O(\xi \sqrt{s^*}\epsilon \log (nd))\)], which is caused by estimating the population gradient via the trimming step due the \(\epsilon\)-corrupted samples. In the special case of no corrupted samples (i.e., \(\epsilon =0\)), the bound will be zero. The third one is the term \(O\bigg (\xi \sqrt{\frac{s^*\log d}{n}}\bigg )\), which corresponds to the statistical error. It is independent of both \(\epsilon\) and t and only dependent on the model itself. Even though Theorem 1 requires that the initial estimator be close enough to the optimal one, our experiments show that the algorithm actually performs quite well for any random initialization.

From Theorem 1, we can also see that when the fraction of corruption \(\epsilon\) is sufficiently small such that \(\epsilon \le O\bigg (\frac{1}{\sqrt{n\log (nd)}}\bigg )\) and the iteration number is sufficiently large, the error bound in (15) becomes \(O\bigg (\xi \sqrt{\frac{s^*\log d }{n}}\bigg )\), which is the same as the optimal rate of estimating a high dimensional sparse vector when \(\xi\) is some constant. This means that our method has the same rate as the non-corrupted ones in Wang et al. (2015). This rate of corruption also has been appeared in the corrupted sparse linear regression (Dalalyan and Thompson 2019; Liu et al. 2019). Also, we can see that when \(\alpha =0\), our algorithm will be reduced to the high dimensional gradient EM algorithm in Wang et al. (2015).

5 Implications for some specific models

In this section, we apply our framework (i.e., Algorithm 1) to the models mentioned in Sect. 3. To obtain results for these models, we only need to find the corresponding \({\mathcal {B}}, \gamma , k, R, \upsilon , \mu , \xi\) to ensure that Assumption 1 and assumptions in Theorem 1 hold.

5.1 Corrupted Gaussian mixture model

The following lemma, which was given in Balakrishnan et al. (2017b), ensures the properties of Lipschitz–Gradient-2(\(\gamma , {\mathcal {B}}\)), smoothness and strongly concave for model (4). It is easy to show that the model is self-consistent (Yi and Caramanis 2015).

Lemma 1

(Balakrishnan et al. 2017b; Yi and Caramanis 2015) If \(\frac{\Vert \beta ^*\Vert _2}{\sigma }\ge r\), where r is a sufficiently large constant denoting the minimum signal-to-noise ratio (SNR), then there exists an absolute constant \(C>0\) such that the properties of self-consistent, Lipschitz–Gradient-2(\(\gamma , {\mathcal {B}})\), \(\mu\)-smoothness and \(\upsilon\)-strongly concave hold for function \(Q(\cdot ; \cdot )\) with \(\gamma =\exp (-Cr^2), \mu =\upsilon =1, R=k\Vert \beta ^*\Vert _2, k=\frac{1}{4}, \text { and } {\mathcal {B}}=\{\beta :\Vert \beta -\beta ^*\Vert _2\le R\}.\)

Lemma 2

With the same notations as in Lemma 1, for each \(\beta \in {\mathcal {B}}\) with \(\Vert \beta \Vert _0\le s\), the j-th coordinate of \(\nabla q_i(\beta ; \beta )\) is \(\xi\)-sub-exponential with

where \(C_1\) is some absolute constant. Also, each \([\nabla q_i(\beta ;\beta )]_j\), where \(i\in [n]\), is independent of others for any fixed \(j\in [d]\).

Theorem 2

In an \(\epsilon\)-corrupted high dimensional Gaussian Mixture Model with \(\epsilon\) satisfying the condition of

if \(\frac{\Vert \beta ^*\Vert _2}{\sigma }\ge r\) for some sufficiently large constant r denoting the minimum SNR and the initial estimator \(\beta ^{\text {init}}\) satisfies the inequality of \(\Vert \beta ^{\text {init}}-\beta ^*\Vert _2\le \frac{1}{8}\Vert \beta ^*\Vert _2,\) then the output \(\beta ^T\) of Algorithm 1 after choosing \(s=O(s^*)\) and \(\eta =O(1)\) satisfies the following with probability at least \(1-Td^{-3}\)

where C is some absolute constant.

From Theorem 2, we can see that when \(\epsilon \le {\tilde{O}}\left( \frac{1}{\sqrt{n}}\right)\) and \(T=O\left( \log \frac{n}{s^*\log d}\right)\), the output achieves an estimation error of \(O\left( \sqrt{\frac{s^*\log d}{n}}\right)\), which matches the best-known error bound of the no-outlier case (Yi and Caramanis 2015; Wang et al. 2015). Also, we assume that the SNR is large, which is reasonable since it has been shown that for Gaussian Mixture Model with low SNR, the variance of noise makes it harder for the algorithm to converge (Ma et al. 2000).

5.2 Corrupted mixture of regressions model

The following lemma, which was given in Balakrishnan et al. (2017b); Yi and Caramanis (2015), shows the properties of Lipschitz–Gradient-2(\(\gamma , {\mathcal {B}}\)), smoothness and strongly concave for model (6).

Lemma 3

(Balakrishnan et al. 2017b; Yi and Caramanis 2015) If \(\frac{\Vert \beta ^*\Vert _2}{\sigma }\ge r\), where r is a sufficiently large constant denoting the required minimal signal-to-noise ratio (SNR), then function \(Q(\cdot ; \cdot )\) of the Mixture of Regressions Model has the properties of self-consistent, Lipschitz–Gradient-2(\(\gamma , {\mathcal {B}})\), \(\mu\)-smoothness, and \(\upsilon\)-strongly with \(\gamma \in (0,\frac{1}{4}), \mu =\upsilon =1, {\mathcal {B}}=\{\beta : \Vert \beta -\beta ^*\Vert _2\le R\}, R=k\Vert \beta ^*\Vert _2\), and \(k=\frac{1}{32}.\)

Lemma 4

With the same notations as in Lemma 3, for each \(\beta \in {\mathcal {B}}\) and \(\Vert \beta \Vert _0=s\), the j-th coordinate of \(\nabla q_i(\beta ; \beta )\) is \(\xi\)-sub-exponential with

where \(C>0\) is some absolute constant. Also, each \([\nabla q_i(\beta ;\beta )]_j\), where \(i\in [n]\), is independent of others for any fixed \(j\in [d]\).

Theorem 3

In an \(\epsilon\)-corrupted high dimensional Mixture of Regressions Model with \(\epsilon\) satisfying the condition of

if \(\frac{\Vert \beta ^*\Vert _2}{\sigma }\ge r\) for some sufficiently large constant r denoting the minimum SNR and the initial estimator \(\beta ^{\text {init}}\) satisfies the inequality of \(\Vert \beta ^{\text {init}}-\beta ^*\Vert _2\le \frac{1}{64}\Vert \beta ^*\Vert _2,\) then the output \(\beta ^T\) of Algorithm 1 after choosing \(s=O(s^*)\) and \(\eta =O(1)\) satisfies the following with probability at least \(1-Td^{-3}\)

where \(\gamma \in (0, \frac{1}{4})\) is a constant.

Note that in the above theorem, when \(\epsilon \le {\tilde{O}}\left( \frac{1}{\sqrt{n}}\right)\) and \(T=O\left( \log \frac{\sqrt{n}}{\sqrt{\log d} s^*}\right)\), the estimation error becomes \(O\left( s^*\sqrt{\frac{\log d}{n}}\right)\), which differs from the \(O\left( \sqrt{\frac{s^*\log d}{n}}\right)\) minimax lower bound by only a factor of \(\sqrt{s^*}\). We leave it as an open problem for further improvement. Recently, Chen et al. (2018) shows that in the no-outlier and low dimensional setting, an assumption of \(SNR\ge \rho\) for some constant \(\rho\) is necessary for achieving the optimal rate \(\varTheta \left( \sqrt{\frac{d}{n}}\right)\).

5.3 Corrupted linear regression with missing covariates

Lemma 5

(Balakrishnan et al. 2017b; Yi and Caramanis 2015) If \(\frac{\Vert \beta ^*\Vert _2}{\sigma }\le r\) and \(p_m<\frac{1}{1+2b+2b^2}\), where r is a constant denoting the required maximum signal-to-noise ratio (SNR) and \(b=r^2(1+k)^2\) for some constant \(k\in (0,1)\), then function \(Q(\cdot ; \cdot )\) of the linear regression with missing covariates has the properties of self-consistent, Lipschitz–Gradient-2(\(\gamma , {\mathcal {B}})\), \(\mu\)-smoothness and \(\upsilon\)-strongly with

Lemma 6

With the same assumptions as in Lemma 5, for each \(\beta \in {\mathcal {B}}\) with \(\Vert \beta \Vert _0=s\), \([\nabla q_i(\beta ; \beta )]_j\) is \(\xi\)-sub-exponential with

for some constant \(C>0\). Also, each \([\nabla q_i(\beta ;\beta )]_j\), where \(i\in [n]\), is independent of others for any fixed \(j\in [d]\).

Theorem 4

In an \(\epsilon\)-corrupted high dimensional linear regression with missing covariates model with \(\epsilon\) satisfying the condition of

for some \(k\in (0,1)\), if \(\Vert \beta ^{\text {init}}-\beta ^*\Vert _2\le \frac{k\Vert \beta ^*\Vert _2^2}{2}\) and the assumptions in Lemma 5hold, then, the output \(\beta ^T\) of Algorithm 1 after taking \(s=O(s^*)\) and \(\eta =O(1)\) satisfies the following with probability at least \(1-Td^{-3}\)

where the Big-O term hides the terms of k and r.

Note that similar to the mixture of regressions model, when \(\epsilon \le {\tilde{O}}\left( \frac{1}{\sqrt{n}}\right)\), the estimation error is \(O\left( s^*\sqrt{\frac{\log d}{n}}\right)\), which is only a factor of \(\sqrt{s^*}\) away from the optimal. However, unlike the previous two models, we assume here that SNR is upper bounded by some constant which is unavoidable as pointed out in Loh and Wainwright (2011).

6 Experiments

In this section, we empirically study the performance of Algorithm 1 on the three models mentioned in the previous section. Since in the paper we mainly focus on the statistical setting and its theoretical behaviors, thus, we will only perform our algorithm on the synthetic data. It is notable that previous papers on the statistical guarantees of EM algorithm all perform their algorithms on synthetic data only such as Balakrishnan et al. (2017b), Wang et al. (2015), Yi and Caramanis (2015). Thus, performing experiments on synthetic data only is enough for the paper.

For each of these models, we generate synthesized datasets according to the underlying distribution. We will use \(\Vert \beta -\beta ^*\Vert _2\) to measure the estimation error, and test how it is affected by different parameter settings from two aspects. Firstly, we examine how the underlying sparsity parameter \(s^*\) of the model affects the estimation error and whether it is consistent with our theoretical results. Secondly, we test how the corruption fraction \(\epsilon\) of the data and the dimensionality d affect the convergence rate, as well as the estimation error. For each experiment, the data is corrupted as follows: We first randomly choose \(\epsilon\) fraction of the input data, then we add a Gaussian noise for each of these data samples. The noise is sampled from a multivariate Gaussian distribution \({\mathcal {N}}(0, 50 \Vert X\Vert _\infty I_d)\). All experiments are repeated for 20 runs and the average results are reported.

Parameter setting Throughout the experiments we will follow the setting of the previous related works on high dimensional EM algorithms which have statistical guarantees but are not corruption-proofing (Zhu et al. 2017; Wang et al. 2015; Yi and Caramanis 2015). We fix the dataset size n to be 2000, because using a larger n does not exhibit significant difference. For each model, the experiment is divided into three parts as mentioned previously: The first one (Fig. 2) measures \(\Vert \beta -\beta ^*\Vert _2\) versus \(\sqrt{n/(s^*\log d)}\) by varying \(s^*\) from 3 to 15, with d fixed to be 100, which follows the previous works (Wang et al. 2015; Zhu et al. 2017); The second one (Fig. 3) examines the convergence behavior under different corruption rate \(\epsilon\) which varies from 0 to 0.2; The last one (Fig. 4) shows the convergence behavior under different data dimensionality d which ranges from 80 to 240, with fixed \(\epsilon =0.2\).

For each experiment, instead of choosing the initial vectors which are close to the optimal ones, we use random initialization. We will set \(s=s^*\) in our algorithm, which is also used in the previous methods. Besides the parameter s, there are also two other parameters of the algorithm that need to be specified: the D-Trim parameter \(\alpha\) and the step size \(\eta\). We are also required to set the “noise level” for each of the three models, which is quantified by \(\sigma\) in their definitions. It is notable that the choices of these parameters are quite flexible.

-

GMM: Corrupted Gaussian Mixture Model (4). We fix \(\sigma\) to 0.5, \(\alpha\) to 0.2 and \(\eta\) to 0.1.

-

MRM Corrupted Mixture of Regressions Model (6). We fix \(\sigma\) to 0.2, \(\alpha\) to 0.2 and \(\eta\) to 0.1.

-

RMC Corrupted Linear Regression with Missing Covariates Model (8). We set \(\sigma =0.1\), \(\alpha =0.3\), and the missing probability \(p_m=0.1\), but use three different step sizes \(\eta =0.05, 0.1, 0.08\) for the three parts of the experiment, respectively.

Results Firstly, we will mainly show that the classical high dimensional gradient EM algorithm in Wang et al. (2015) is not robust against to the corruptions. Here we conduct the algorithm on the three models. For each experiment, we tune the parameters to be optimal as showed in Wang et al. (2015). We test the algorithm w.r.t to \(\sqrt{n/(s^*\log d)}\), iteration and different dimensions d.

As we can see from Fig. 1. In all the three models, the algorithm performs quite well if there is no corruptions (\(\epsilon =0\)) which also has been showed in the previous papers (Wang et al. 2015; Zhu et al. 2017). However, when there are \(\epsilon =0.05\) fraction of the samples are corrupted, the classical high dimensional EM algorithm will achieve a large estimation error. These results motivate us to design some robust high dimensional EM algorithms while also have provable statistical guarantees.

Estimation error of classical high dimensional gradient EM algorithm in Wang et al. (2015) w.r.t sample size, iteration and dimension

Next, we show the performance of our Algorithm 1. For the first part (Fig. 2), we can see that when \(\epsilon\) is small, the final estimation error in each of the three models decreases when the term \(\sqrt{n/(s^*\log d)}\) increases, as predicted by Theorem 2. But when \(\epsilon\) is relatively large, the trend becomes less obvious for the Gaussian Mixture Model and the Mixture of Regressions model, because now the factor \(\epsilon \log (nd)\) comes into play.

Figure 3 shows that our algorithm achieves linear convergence on all three models and all values of \(\epsilon\), but the final converged error is heavily affected by \(\epsilon\), and especially for the Gaussian Mixture and Linear Regression with Missing Covariates Models. Moreover, when \(\epsilon\) is small, the estimation errors are comparable to or even the same as the non-corrupted ones, this is actually reasonable since it is corruption-proofing when \(\epsilon\) is small theoretically. In the third part of the experiments (Fig. 4), varying d seems not affect the convergence behavior much, which is reasonable as the error bound depends on d only logarithmically and changes fairly slow. Thus, these results support Theorem 1.

All the results show that our algorithm is robust against to some level of corruption while also could achieve an estimation error that is comparable to the non-corrupted ones.

7 Conclusion

In this paper we study the problem of estimating latent variable models with arbitrarily corrupted samples in the high dimensional sparse case and propose a method called Trimmed Gradient Expectation Maximization. Specifically, we show that our algorithm is corruption-proofing and could achieve the (near) optimal statistical rate for some statistical models under some levels of corruption. Experimental results support our theoretical analysis and also show that our algorithm is indeed robust against to some corrupted samples.

There are still many open problems. Firstly, in this paper, all of our theoretical guarantees need the initial parameter be close enough to the underlying parameter, which is quite strong. So how do we relax this assumption? Second, the three specific models we considered in the paper are quite simple, can we generalize to more models such as multi-component Gaussian Mixture Model or Mixture of Linear Regressions Model? Thirdly, in this paper we assume that the sparsity of the underlying parameter is known, how to deal with the case where it is unknown?

Notes

Since high dimensional sparse case is much more harder than the low dimension case, our algorithm can be easily extended to the low dimension case by using the results in Balakrishnan et al. (2017b). Due to the space limit, we omit it in the paper.

For a vector \(v\in {\mathbb {R}}^d\), \(\Vert v\Vert _0\) represents the number of entries in v that are non-zero.

\(\langle \cdot , \cdot \rangle\) represents the inner product of two vectors.

In general, given a vector \(v\in {\mathbb {R}}^d\) and an integer s, function \(\text {supp}(v,s)\) returns a set of s number ofis indices corresponding to the top s largest value among \(\{|v_j|, j\in [d]\}\).

In general, given a vector \(v\in {\mathbb {R}}^d\) and a set of indices \({\mathcal {S}}\subseteq [d]\), function \(\text {trunc}(v, {\mathcal {S}})\in {\mathbb {R}}^d\), where \([\text {trunc}(v, {\mathcal {S}})]_j=v_j\) if \(j\in {\mathcal {S}}\) and \([\text {trunc}(v, {\mathcal {S}})]_j=0\) otherwise.

References

Aitkin, M., & Wilson, G. T. (1980). Mixture models, outliers, and the EM algorithm. Technometrics, 22(3), 325–331.

Alistarh, D., Allen-Zhu, Z., & Li, J. (2018). Byzantine stochastic gradient descent. In Advances in neural information processing systems, pp 4613–4623.

Balakrishnan, S., Du, S.S., Li, J., & Singh, A. (2017a). Computationally efficient robust sparse estimation in high dimensions. In Conference on learning theory, pp 169–212.

Balakrishnan, S., Wainwright, M. J., Yu, B., et al. (2017b). Statistical guarantees for the EM algorithm: From population to sample-based analysis. The Annals of Statistics, 45(1), 77–120.

Blumensath, T., & Davies, M. E. (2009). Iterative hard thresholding for compressed sensing. Applied and computational harmonic analysis, 27(3), 265–274.

Boucheron, S., Lugosi, G., & Massart, P. (2013). Concentration inequalities: A nonasymptotic theory of independence. Oxford: Oxford University Press.

Chen, Y., Caramanis, C., & Mannor, S. (2013). Robust sparse regression under adversarial corruption. In International conference on machine learning, pp 774–782.

Chen, Y., Su, L., & Xu, J. (2017). Distributed statistical machine learning in adversarial settings: Byzantine gradient descent. Proceedings of the ACM on measurement and analysis of computing systems, 1(2), 44.

Chen, Y., Yi, X., & Caramanis, C. (2018). Convex and nonconvex formulations for mixed regression with two components: Minimax optimal rates. IEEE Transactions on Information Theory, 64(3), 1738–1766.

Dalalyan, A.S., & Thompson, P. (2019). Outlier-robust estimation of a sparse linear model using \(\ell _1\)-penalized huber’s \(m\)-estimator. arXiv preprint arXiv:1904.06288.

Dawid, A. P., & Skene, A. M. (1979). Maximum likelihood estimation of observer error-rates using the EM algorithm. Journal of the Royal Statistical Society: Series C (Applied Statistics), 28(1), 20–28.

Diakonikolas, I., Kamath, G., Kane, D.M., Li, J., Moitra, A., & Stewart, A. (2016). Robust estimators in high dimensions without the computational intractability. In 2016 IEEE 57th annual symposium on foundations of computer science (FOCS), IEEE, pp 655–664.

Diakonikolas, I., Kane, D.M., & Stewart, A. (2017). Statistical query lower bounds for robust estimation of high-dimensional Gaussians and Gaussian mixtures. In 2017 IEEE 58th annual symposium on foundations of computer science (FOCS), IEEE, pp 73–84.

Diakonikolas, I., Kane, D.M., & Stewart, A. (2018). List-decodable robust mean estimation and learning mixtures of spherical gaussians. In Proceedings of the 50th annual ACM SIGACT symposium on theory of computing, ACM, pp 1047–1060.

Du, S.S., Balakrishnan, S., & Singh, A. (2017). Computationally efficient robust estimation of sparse functionals. arXiv preprint arXiv:1702.07709.

Faria, S., & Gonçalves, F. (2013). Financial data modeling by Poisson mixture regression. Journal of Applied Statistics, 40(10), 2150–2162.

Holland, M.J. (2018). Robust descent using smoothed multiplicative noise. arXiv preprint arXiv:1810.06207.

Huber, P. J. (2011). Robust statistics. Berlin: Springer.

Johnson, R., & Zhang, T. (2013). Accelerating stochastic gradient descent using predictive variance reduction. In Advances in neural information processing systems, pp 315–323.

Laird, N.M. (2010). The em algorithm in genetics, genomics and public health. Statistical Science, 25(4), 450–457.

Li, J. (2017). Robust sparse estimation tasks in high dimensions. arXiv preprint arXiv:1702.05860.

Liu, L., Li, T., & Caramanis, C. (2019). High dimensional robust estimation of sparse models via trimmed hard thresholding. arXiv preprint arXiv:1901.08237.

Loh, P.L., & Wainwright, M.J. (2011). High-dimensional regression with noisy and missing data: Provable guarantees with non-convexity. In Advances in neural information processing systems, pp 2726–2734.

Ma, J., Xu, L., & Jordan, M. I. (2000). Asymptotic convergence rate of the EM algorithm for Gaussian mixtures. Neural Computation, 12(12), 2881–2907.

McLachlan, G., & Krishnan, T. (2007). The EM algorithm and extensions (Vol. 382). Hoboken: Wiley.

Nesterov, Y. (2013). Introductory lectures on convex optimization: A basic course (Vol. 87). Berlin: Springer Science & Business Media.

Prasad, A., Suggala, A.S., Balakrishnan, S., & Ravikumar, P. (2018). Robust estimation via robust gradient estimation. arXiv preprint arXiv:1802.06485.

Suggala, A.S., Bhatia, K., Ravikumar, P., & Jain, P. (2019). Adaptive hard thresholding for near-optimal consistent robust regression. arXiv preprint arXiv:1903.08192.

Thompson, P., & Dalalyan, A.S. (2018). Restricted eigenvalue property for corrupted gaussian designs. arXiv preprint arXiv:1805.08020.

Vershynin, R. (2010). Introduction to the non-asymptotic analysis of random matrices. arXiv preprint arXiv:1011.3027.

Wang, Z., Gu, Q., Ning, Y., Liu, H. (2015). High dimensional EM algorithm: Statistical optimization and asymptotic normality. In Advances in neural information processing systems, pp 2521–2529.

Wu, C. J., et al. (1983). On the convergence properties of the EM algorithm. The Annals of statistics, 11(1), 95–103.

Yang, M. S., Lai, C. Y., & Lin, C. Y. (2012). A robust EM clustering algorithm for Gaussian mixture models. Pattern Recognition, 45(11), 3950–3961.

Yi, X., & Caramanis, C. (2015). Regularized em algorithms: A unified framework and statistical guarantees. In Advances in neural information processing systems, pp 1567–1575.

Yin, D., Chen, Y., Ramchandran, K., & Bartlett, P. (2018). Byzantine-robust distributed learning: Towards optimal statistical rates. arXiv preprint arXiv:1803.01498.

Zhu, R., Wang, L., Zhai, C., & Gu, Q. (2017). High-dimensional variance-reduced stochastic gradient expectation-maximization algorithm. In Proceedings of the 34th international conference on machine learning Volume 70, JMLR. org, pp 4180–4188.

Funding

The funding was provided by National Science Foundation (Grant Nos. IIS-1910492, CCF-1716400).

Author information

Authors and Affiliations

Author notes

Editors: Kee-Eung Kim, Vineeth N. Balasubramanian.

Corresponding author

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendices

Auxiliary lemmas

In this section, we introduce prerequisite knowledge and technical lemmas in order to prove the main results.

In order to analyze the Dimensional \(\alpha\)-trimmed estimator, we first give some results for 1-dimensional samples and denote it as \(\text {trmean}_\alpha (\cdot )\).

Definition 8

Given a set of \(\epsilon\)-corrupted samples \(\{z_i\}_{i=1}^n\subseteq {\mathbb {R}}\), the trimmed mean estimator \(\text {trmean}_\alpha (\{z_i\}_{i=1}^n)\in {\mathbb {R}}\) removes the largest and smallest \(\alpha\) fraction of elements in \(\{z_i\}_{i=1}^n\) and calculate the mean of the remaining terms. We choose \(\alpha =c_0\epsilon\), for some constant \(c_0\ge 1\). We also require that \(\alpha \le \frac{1}{2}-c_1\) for some small constant \(c_1>0\).

For the 1-dimensional trimmed mean estimator, we have the following upper bound on the error w.r.t the population mean.

Lemma 7

[Lemma A.2 in Liu et al. (2019)] Let \(\{z_i\}_{i=1}^n\subset {\mathbb {R}}^d\) be \(n=\varOmega (\log d)\) \(\epsilon\)-corrupted samples. If the j-th coordinate, for each \(j\in [d]\), of the samples \(\{z_{i, j}\}_{i=1}^n\) are i.i.d. \(\xi\)-exponential with mean \(\mu ^j\), then after using the dimensional \(\alpha\)-trimmed mean estimator, the following upper bound of error holds with probability at least \(1-d^{-3}\), for every \(j\in [d]\)

where \(C_2\) is some constant dependent on \(c_1\).

Next, we provide some symmetrization results of random variables, which will be used in our proofs. See Boucheron et al. (2013) for details.

Lemma 8

Let \(y_1, y_2, \ldots , y_n\) be the n independent realizations of the random vector \(Y\in {\mathcal {Y}}\), and \({\mathcal {F}}\) be a function class defined on \({\mathcal {Y}}\). For any increasing convex function \(\phi (\cdot )\), the following holds

where \(\epsilon _1, \ldots , \epsilon _n\) are i.i.d. Rademacher random variables that are independent of \(y_1, \ldots , y_n\).

Lemma 9

Let \(y_1, \ldots , y_n\) be n independent realization of the random vector \(Z\in {\mathcal {Z}}\) and \({\mathcal {F}}\) be a function class defined on \({\mathcal {Z}}\). If Lipschitz functions \(\{\phi _i(\cdot )\}_{i=1}^n\) satisfy the following for all \(v, v'\in {\mathbb {R}}\)

and \(\phi _i(0)=0\), then for any increasing convex function \(\phi (\cdot )\), the following holds

where \(\epsilon _1, \ldots , \epsilon _n\) are i.i.d. Rademacher random variables that are independent of \(y_1, \ldots , y_n\).

Finally we recall some definitions and lemmas on the sub-exponential and sub-Gaussian random variables. See Vershynin (2010) for details.

Definition 9

For a sub-exponential random vector X, its sub-exponential norm \(\Vert X\Vert _{\psi _1}\) is defined as

Lemma 10

Let X be a zero-mean sub-exponential random variable, then there are absolute constants \(C, c>0\), such that when \(|t|\le \frac{c}{\Vert X\Vert _{\psi _1}}\) ,

Lemma 11

(Bernstein’s inequality) Let \(X_1,\ldots , X_n\) be n i.i.d. realizations of \(\upsilon\)-sub-exponential random variable X with mean \(\mu\). Then,

Definition 10

A random variable X is sub-Gaussian with variance \(\sigma ^2\) if for all \(t>0\), the following holds

Definition 11

For a sub-Gaussian random variable X, its sub-Gaussian norm \(\Vert X\Vert _{\psi _2}\) is defined as

Lemma 12

If X is sub-Gaussian or sub-exponential, then \(\Vert X-{\mathbb {E}} X\Vert _{\psi _2}\le 2\Vert X\Vert _{\psi _2}\) or \(\Vert X-{\mathbb {E}} X\Vert _{\psi _1}\le 2\Vert X\Vert _{\psi _1}\) holds, respectively.

Lemma 13

For two sub-Gaussian random variables \(X_1, X_2\), \(X_1\cdot X_2\) is a sub-exponential random variable with

Lemma 14

Let \(X_1, X_2, \ldots , X_k\) be k independent zero-mean sub-Gaussian random variables, and \(X= \sum _{j=1}^kX_j\). Then, X is sub-Gaussian with \(\Vert X\Vert _{\psi _2}^2\le C\sum _{j=1}^k\Vert X_j\Vert _{\psi _2}^2\) for some absolute constant \(C>0\).

Omitted proofs

1.1 Proof of Theorem 1

By Lemma 7 and our assumption on the \(\xi\)-sub-exponential property of each coordinate, we have the following in the t-th iteration with probability at least \(1-d^{-3}\) for some constant \(C_2>0\)

For convenience, we let \(\alpha =C_2 \xi (\epsilon \log (nd)+\sqrt{\frac{\log d}{n}})\), and assume that for all iterations \(t\in [T-1]\), event (26) holds (then all events hold with probability at least \(1-Tp^{-3}\)).

In the t-th iteration, we define

and

That is, \({\bar{\beta }}^{t+0.5}\) is the gradient update of \(\beta ^t\) w.r.t the non-corrupted population gradient of \(Q_n(\beta ^t; \beta ^t)\), and \({\bar{\beta }}^{t+1}\) is the estimation after truncating \({\bar{\beta }}^{t+0.5}\) w.r.t set \(\hat{{\mathcal {S}}}^{t+0.5}\), which is the set of the s-largest coordinates of \(\beta ^{t+0.5}\).

By the definition, we have the following inequalities

For the term A, we have

Thus, if \(\beta ^t\in {\mathcal {B}}\), i.e., \(\Vert \beta ^t-\beta ^*\Vert \le k\Vert \beta ^*\Vert _2\)) and \(\Vert \beta ^t\Vert _0=s\), then by the assumption and (26), we have

Next, we will bound the term B. To do this, we need the following lemma, which follows (Wang et al. 2015).

Lemma 15

If

for some \(k\in (0,1)\) and

then, the following holds

Proof of Lemma 15

By assumption (32), we have

We then denote

and the sets \({\mathcal {I}}_1, {\mathcal {I}}_2\) and \({\mathcal {I}}_3\) as the follows

where \(S^*=\text {supp}(\beta ^*)\). Let \(s_i=|{\mathcal {I}}_i|\) for \(i=1, 2, 3\), respectively. Also, we define \(\varDelta =\langle {\bar{\theta }}, \theta ^*\rangle\). Note that

By Cauchy–Schwartz inequality, we have

Since \({\mathcal {I}}_3\subseteq \hat{{\mathcal {S}}}^{t+0.5}\) and \({\mathcal {I}}_1\bigcap \hat{{\mathcal {S}}}^{t+0.5}=\emptyset\), we have

We let \({\tilde{\epsilon }}=2\Vert {\bar{\theta }}-\theta \Vert _\infty =2\frac{\Vert {\bar{\beta }}^{t+0.5}-\beta ^{t+0.5}\Vert _\infty }{\Vert {\bar{\beta }}^{t+0.5}\Vert _2}\). Note that we have

which implies that

where inequality (a) is due to (40). Plugging (42) into (39), we have

Solving \(\Vert {\bar{\theta }}_{{\mathcal {I}}_1}\Vert _2\) in (43), we get

The final inequality is due to the inequality \(\frac{s_1}{s_3}\le \frac{s_1+s_2}{s_3+s_2}=\frac{s^*}{s}\), which follows from \(\frac{s^*}{s}\le \frac{(1-k)^2}{4(1+k)^2}\le 1\) and \(s_3\ge s-s^*\ge s^*\ge s_1\).

In the following, we will prove that the right hand side of (44) is upper bounded by \(\varDelta\). To achieve this, it is sufficient to show that

To prove (45), we first note that \(\sqrt{s^*}{\tilde{\epsilon }}\le \varDelta\), which is due to

where the second inequality is due to assumption (33) and the final inequality is due to

where inequality (a) is due to Assumption (32).

Now, we show that (45) holds. By (46), we have

which implies that \({\tilde{\epsilon }}\le \frac{\sqrt{s^*+s}}{s}\).

For the right hand side of (45), we have

Thus, in total, by (44) we can get

From (39), we can see that

that is,

Solving the above inequality, we get

where the final inequality is due to (44). Combining this with (44) and (52), we have

Now, by the definition of \({\bar{\theta }}\), we have

Therefore, we get

Let \(\chi =\Vert {\bar{\beta }}^{t+0.5}\Vert _2\Vert \beta ^*\Vert _2\). Then, by (55) and (53) we have

For the term \(\sqrt{\chi (1-\varDelta ^2)}\), we have

For the term \(\sqrt{\chi }{\tilde{\epsilon }}\), we have

Plugging (57) and (58) into (56), we get

Also, since \(\Vert {\bar{\beta }}^{t+1}\Vert _2^2+\Vert \beta ^*\Vert _2^2\le \Vert {\bar{\beta }}^{t+0.5}+\Vert \beta ^*\Vert _2^2\), subtracting (59), we obtain

Thus, we have

This completes the proof of Lemma 15. \(\square\)

Next, we bound the term \(\Vert {\bar{\beta }}^{t+0.5}-\beta ^*\Vert _2\) in (34).

Lemma 16

Under the assumptions in Theorem 1, the following inequality holds

Proof of Lemma 16

We first note that the self-consistent property in McLachlan and Krishnan (2007) implies that

which means that \(\beta ^*\) is a maximizer of \(Q(\beta ; \beta ^*)\). Thus, the proof follows from the convergence rate of the strongly convex and smooth functions \(Q(\beta ; \beta ^*)\) in Nesterov (2013). For the step size \(\eta =\frac{2}{\mu +\upsilon }\), we have

Thus, we get

Taking \(\eta =\frac{2}{\mu +\upsilon }\), we complete the proof. \(\square\)

Combining Lemmas 15, 16, and Eq. (31), we have the following lemma.

Lemma 17

If

for some \(k\in (0,1)\) and further assuming that

then it holds with probability at least \(1-d^{-3}\) that

where \(\alpha =C_2\xi (\epsilon \log (nd)+\sqrt{\frac{\log d}{n}})\).

We now prove Theorem 1.

Proof of Theorem 1

By Lemma 17, we know that it is sufficient to prove (68), which can be shown by mathematical induction.

We first prove \(\beta ^0\in {\mathcal {B}}\). By assumption, we have \(\Vert \beta ^{\text {init}}-\beta ^*\Vert _2\le \frac{R}{2}\). By the same proof of Lemma 15, we can get \(\Vert \beta ^0-\beta ^*\Vert _2\le (1+4\sqrt{\frac{s^*}{s}})^\frac{1}{2}\Vert \beta ^{\text {init}}-\beta ^*\Vert _2\le (1+4\sqrt{\frac{1}{4}})^\frac{1}{2}\frac{R}{2}\le R=k\Vert \beta ^*\Vert _2\). Thus, by Lemma 16, we can see that (68) holds for \(t=0\).

Now suppose that (68) holds for all \(t\le k\). Then, we have

by assumption we can see that \(\left(1+4\sqrt{\frac{s^*}{s}}\right)^{\frac{1}{2}}\left(1-2\frac{\upsilon -\gamma }{\upsilon +\mu }\right)\le \sqrt{1-2\frac{\upsilon -\gamma }{\upsilon +\mu }}\). Thus, we have

By the assumption of \(\frac{1}{\upsilon +\mu }\frac{(2\sqrt{s}+4\sqrt{2}\sqrt{s^*}/\sqrt{1-k})\alpha }{1-\sqrt{1-2\frac{\upsilon -\gamma }{\upsilon +\mu }}}\le \left({1-\sqrt{1-2\frac{\upsilon -\gamma }{\upsilon +\mu }}}\right)R\), we have

Hence, by Lemma 16, we obtain (68) for the case of \(t=k+1\). This completes the proof. \(\square\)

1.2 Proof of Lemma 2

From (5) it is oblivious that \([\nabla q_i(\beta ,\beta ))]_j\) is independent of other \(i\in [n]\) for fixed \(j\in [d]\). Next, we prove the property of sub-exponential for each coordinate.

Note that

and

For convenience, we let \(\nabla q_{i,j}\) denote \([\nabla q_i(\beta ,\beta ))]_j\) and \(\nabla q_{j}\) denote \({\mathbb {E}}[\nabla q_i(\beta ,\beta ))]_j\).

By the symmetrization lemma in Lemma 8, we have the following for any \(t>0\)

where \(\epsilon\) is a Rademacher random variable.

Next, we use Lemma 9 with \(f(y_{i,j})=y_{i,j}\), \({\mathcal {F}}=\{f\}\), \(\phi _i(v)=[2w_\beta (y_i)-1]v\) and \(\phi (v)=\exp (u\cdot v)\). It is easy to see that \(\phi _i\) is 1-Lipschitz. Thus, by Lemma 9 we have

By the formulation of the model, we have \(y_{i, j}=z_i \beta ^*_{j}+v_{i,j}\), where \(z_i\) is a Rademacher random variable and \(v_{i,j}\sim {\mathcal {N}}(0, \sigma ^2)\). It is easy to see that \(y_{i,j}\) is sub-Gaussian and

for some absolute constants \(C, C'\), where the last inequality is due to the facts that \(\Vert z_j\beta _j^*\Vert _{\psi _2}\le |\beta _j^*|\) and \(\Vert v_{i,j}\Vert _{\psi _2}\le C''\sigma ^2\) for some \(C''>0\).

Since \(|\epsilon y_{i,j}|=|y_{i,j}|\), \(\Vert \epsilon y_{i,j}\Vert _{\psi _2}=\Vert y_{i,j}\Vert _{\psi _2}\) and \({\mathbb {E}}(\epsilon y_{i,j})=0\), by Lemma 5.5 in Vershynin (2010) we have that for any \(u'\) there exists a constant \(C^{(4)}>0\) such that

Thus, for any \(t>0\) we get

for some constant \(C^{(5)}\). Therefore, in total we have the following for some constant \(C^{(6)}>0\)

Combining this with Lemma 10 and the definition, we know that \(\nabla q_{i,j}\) is \(O(\sqrt{\Vert \beta ^*\Vert _\infty ^2+\sigma ^2})\)-sub-exponential.

1.3 Proof of Lemma 4

From (7) it is oblivious that \([\nabla q_i(\beta ,\beta ))]_j\) is independent of other \(i\in [n]\) for any fixed \(j\in [d]\). Next, we prove the property of sub-exponential.

Note that \({\mathbb {E}}\nabla q_{i,j} = {\mathbb {E}} 2w_\beta (x, y)y\cdot x_j-\beta _j\). Thus, we have

For term A and any \(t>0\), we have

Using Lemma 9 on \(f(y_ix_{i,j})=y_ix_{i,j}\), \({\mathcal {F}}=f\), \(\phi _i(v)= 2w_\beta (x,y)v\) and \(\phi (v)=\exp (uv)\), we have

Note that since \(y_i=z_i\langle \beta ^*,x_i\rangle +v_i\) and \(\Vert z_i\langle \beta ^*,x_i\rangle \Vert _{\psi _2}=\Vert \langle \beta ^*,x_i\rangle \Vert _{\psi _2}\le C\Vert \beta ^*\Vert _2\) and \(\Vert v_i\Vert _{\psi _2}\le C'\sigma\) for some constants \(C, C'>0\), by Lemma 14 we know that there exists a constant \(C''>0\) such that

Thus, by Lemma 13 we have

For term B, we have

where \(x_j, x_k\sim {\mathcal {N}}(0, 1)\). Now, by Lemma 13 we have \(\Vert x_jx_k\beta _k\Vert _{\psi _1}\le |\beta _k|C^{(5)}\) for some constant \(C^{(5)}>0\). Thus, we get \(\Vert \sum _{k=1}^d x_jx_k\beta _k\Vert _{\psi _1}\le C^{(5)}\Vert \beta \Vert _1\).

Also, we know that \(\Vert \beta \Vert _1\le \sqrt{s}\Vert \beta \Vert _2\), since by assumption \(\Vert \beta \Vert _0=s\). Furthermore, we have \(\Vert \beta \Vert _2\le \Vert \beta ^*\Vert _2+\Vert \beta ^*-\beta \Vert _2\le (1+\frac{1}{32})\Vert \beta ^*\Vert _2\), since \(\beta \in {\mathcal {B}}\) (by assumption). From Lemma 12, we get \(\Vert B\Vert _{\psi _1}\le C^{(6)}\sqrt{s}\Vert \beta ^*\Vert _2\) with some constant \(C^{(6)}>0\).

Thus, we know that there exist some constants \(C^{(7)}>0\) and \(C^{(8)}>0\) such that

This means that \(\nabla q_{i,j}\) is \(O(\max \{\Vert \beta ^*\Vert _2^2+\sigma ^2, 1, \sqrt{s}\Vert \beta ^*\Vert _2\})\) sub-exponential.

1.4 Proof of Lemma 6

For simplicity, we use notations \({\bar{m}}^i=m_\beta (x_i^{\text {obs}}, y_i)\), \({\bar{m}}=\beta (x^{\text {obs}}, y)\), \({\bar{K}}^i=K_\beta (x_i^{\text {obs}}, y_i)\), and \({\bar{K}}=K_\beta (x^{\text {obs}}, y)\). Then, we have

For the j-th coordinate of A, we have

We note that \({\bar{m}}_j\) is a zero-mean sub-Gaussian random variable with \(\Vert {\bar{m}}_j\Vert _{\psi _2}\le C(1+kr)\) (see Lemma B.3 in Wang et al. (2015))

Lemma 18

Under the assumption of Lemma 6, for each \(j\in [d]\), \({\bar{m}}_j\) is sub-Gaussian with mean zero and \(\Vert {\bar{m}}_j\Vert _{\psi _2}\le C(1+kr)\).

Thus, by Lemma 13 we have

where the last inequality is due to the fact that \(y=\langle \beta ^*, x\rangle +v\). Thus, \(\Vert y\Vert _{\psi _2}^2\le C_3(\Vert \langle \beta ^*, x\rangle \Vert _{\psi _2}^2+\Vert v\Vert _{\psi _2}^2)\) for some \(C_3\).

For term B, we have

For term C, we have the following [by Example 5.8 in Vershynin (2010)]

For term D, by Lemma 18 and 13 we have

Since \(\beta \in {\mathcal {B}}\), we get \(\Vert \beta \Vert _1\le \sqrt{s}\Vert \beta \Vert _2\le (1+k)\sqrt{s}\Vert \beta ^*\Vert _2\). Thus, we have

For term E, since \(1-z_i\in [0,1]\), we have \(\Vert (1-z_{i,j}){\bar{m}}^i_j\Vert _{\psi _2}\le \Vert {\bar{m}}^i_j\Vert _{\psi _2}\le C(1+kr)\). Hence, by Lemma 13 we get

This gives us

By Lemma 12, we get

Rights and permissions

About this article

Cite this article

Wang, D., Guo, X., Li, S. et al. Robust high dimensional expectation maximization algorithm via trimmed hard thresholding. Mach Learn 109, 2283–2311 (2020). https://doi.org/10.1007/s10994-020-05926-z

Received:

Revised:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10994-020-05926-z