Abstract

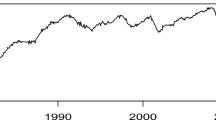

In this paper, additive models with p-order autoregressive conditional symmetric errors based on penalized regression splines are proposed for modeling trend and seasonality in time series. The aim of this approach is to model the autocorrelation and seasonality properly so that the existence of a significant trend can be assessed. A backfitting iterative process combined with a quasi-Newton algorithm is developed for estimating the additive components, the dispersion parameter and the autocorrelation coefficients. The effective degrees of freedom of the fit are derived from an appropriate smoother. Inferential results and model selection procedures are proposed, as well as some diagnostic methods, such as residual analysis based on the conditional quantile residual and sensitivity studies based on the local influence approach. Simulation studies are performed to assess the large sample behavior of the maximum penalized likelihood estimators. Finally, the methodology is applied to modeling the daily average temperature of San Francisco from January 1995 to April 2020.

References

Akaike H (1973) Information theory and an extension of the maximum likelihood principle. In: Petrov BN, Csaki F (eds) International symposium on information theory. Akademiai Kiado Budapest, Hungary, pp 267–281

Barros M, Paula GA (2019) Discussion of Birnbaum-Saunders distributions: a review of models, analysis and applications. Appl Stoch Models Bus Ind 35:96–99

Byrd RH, Lu P, Nocedal J, Zhu C (1995) A limited memory algorithm for bound constrained optimization. SIAM J Sci Comput 16:1190–1208

Cao CZ, Lin JG, Zhu LX (2010) Heteroscedasticity and/or autocorrelation diagnostics in nonlinear models with AR(1) and symmetrical errors. Stat Pap 51:813–836

Cook RD (1986) Assessment of local influence. J R Stat Soc B 48:133–169

Cook RD, Weisberg S (1982) Residuals and influence in regression. Chapman and Hall, London

Cleveland WS, McRae JE, Terpenning I (1990) STL: a seasonal-trend decomposition. J Off Stat 6:3–73

Cysneiros FJA, Paula GA (2005) Restricted methods in symmetrical linear regression models. Comput Stat Data Anal 49:689–708

Davidon WC (1991) Variable metric method for minimization. SIAM J Optim 1:1–17

Dunn PK, Smyth GK (1996) Randomized quantile residuals. J Comput Graph Stat 5:236–244

Efron B, Hinkley DV (1978) Assessing the accuracy of the maximum likelihood estimator: observed versus expected Fisher information. Biometrika 65:457–487

Eilers PH, Marx BD (1996) Flexible smoothing with B-splines and penalties. Stat Sci 11:89–102

Fang KT, Kotz S, Ng KW (1990) Symmetric multivariate and related distributions. Chapman and Hall, London

Fox J (2015) Applied regression analysis and generalized linear models, 3rd edn. Sage Publications, London

Green PJ, Silverman BW (1994) Nonparametric regression and generalized linear models: a roughness penalty approach. Chapman and Hall/CRC, London

Hastie TJ, Tibshirani RJ (1990) Generalized additive models. Chapman and Hall/CRC, London

Huang L, Jiang H, Wang H (2019) A novel partial-linear single-index model for time series data. Comput Stat Data Anal 134:110–122

Huang L, Xia Y, Qin X (2016) Estimation of semivarying coefficient time series models with ARMA errors. Ann Stat 44:1618–1660

Ibacache-Pulgar G, Paula GA, Cysneiros FJA (2013) Semiparametric additive models under symmetric distributions. TEST 22:103–121

Judge GG, Griffiths WE, Hill RC, Lutkepohl H, Lee TC (1985) The theory and practice of econometrics, 2nd edn. Wiley, New York

Kissock JK (1999) UD EPA Average Daily Temperature Archive, http://academic.udayton.edu/kissock/http/Weather/default.htm. Accessed 20 Feb 2021

Lancaster P, Salkauskas K (1986) An introduction curve and surface fitting. Academic Press, London

Lee SY, Xu L (2004) Influence analyses of nonlinear mixed-effects models. Comput Stat Data Anal 45:321–341

Liu JM, Chen R, Yao Q (2010) Nonparametric transfer function models. J Econom 157:151–164

Liu S (2004) On diagnostics in conditionally heteroscedastic time series models under elliptical distributions. J Appl Probab 41A:393–405

Lucas A (1997) Robustness of the student-t based M-estimator. Commun Stat Theory Methods 26:1165–1182

Mittelhammer RC, Judge GG, Miller DJ (2000) Econometric foundations. Cambridge University Press, New York

Paula GA, Medeiros MJ, Vilca-Labra FE (2009) Influence diagnostics for linear models with first-order autoregressive elliptical errors. Stat Probab Lett 79:339–346

Poon WY, Poon YS (1999) Conformal normal curvature and assessment of local influence. J R Stat Soc B 61:51–61

R Core Team (2020) R: A Language and Environment for Statistical Computing. R Foundation for Statistical Computing, Vienna, Austria. https://www.Rproject.org. Accessed 10 Jan 2021

Relvas CEM, Paula GA (2016) Partially linear models with first-order autoregressive symmetric errors. Stat Pap 57:795–825

Schwarz GE (1978) Estimating the dimension of a model. Ann Stat 6:461–464

Vanegas LH, Paula GA (2016) An extension of log-symmetric regression models: R codes and applications. J Stat Comput Simul 86:1709–1735

Wise J (1955) The autocorrelation function and the spectral density function. Biometrika 42:151–159

Wood SN (2017) Generalized additive models: an introduction with R, 2nd edn. Chapman and Hall/CRC, London

Acknowledgements

The authors are grateful to the Associate Editor and reviewers for their helpful comments. This study was partially supported by the Coordenação de Aperfeiçoamento de Pessoal de Nível Superior (CAPES) - Finance Code 001 and CNPq, Brazil.

Appendices

Appendix A: Penalized score function

Let \(\text {L}_p({\varvec{\theta }},{\varvec{\lambda }})\) denote the penalized log-likelihood function for the parameter vector \({\varvec{\theta }}=({\varvec{\gamma }}_T^\top ,{\varvec{\gamma }}_S^\top ,\phi ,\rho _1, \ldots , \rho _p)^\top \). One has that

where \(\delta _i=\frac{(\epsilon _i-\rho _1\epsilon _{i-1}-\ldots -\rho _p\epsilon _{i-p})^2}{\phi }\), \(\epsilon _i=y_i-\mathbf{n}_T(t_i)^\top {\varvec{\gamma }}_T-\mathbf{n}_S(s_i)^\top {\varvec{\gamma }}_S\), for \(i=1,\ldots ,n\).
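As an illustration of these definitions, the following minimal Python sketch computes \(\epsilon _i\) and \(\delta _i\) from given basis evaluations. The function names, the list-based representation of the rows \(\mathbf{n}_T(t_i)^\top \) and \(\mathbf{n}_S(s_i)^\top \), and the convention that errors before the first observation are set to zero are assumptions made for the example; they are not taken from the authors' implementation.

```python
def residuals(y, basis_T, basis_S, gamma_T, gamma_S):
    """eps_i = y_i - n_T(t_i)' gamma_T - n_S(s_i)' gamma_S."""
    eps = []
    for y_i, row_T, row_S in zip(y, basis_T, basis_S):
        trend = sum(b * g for b, g in zip(row_T, gamma_T))
        season = sum(b * g for b, g in zip(row_S, gamma_S))
        eps.append(y_i - trend - season)
    return eps


def delta(eps, rho, phi):
    """delta_i = (eps_i - rho_1 eps_{i-1} - ... - rho_p eps_{i-p})^2 / phi.

    Assumption: errors before the first observation are taken as zero.
    """
    out = []
    for i, e in enumerate(eps):
        a = e
        for j, r in enumerate(rho, start=1):
            if i - j >= 0:
                a -= r * eps[i - j]
        out.append(a * a / phi)
    return out


# Toy data: two trend basis functions, one seasonal basis function, AR(1) errors.
y = [1.0, 2.0, 3.0]
basis_T = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]
basis_S = [[1.0], [0.0], [-1.0]]
eps = residuals(y, basis_T, basis_S, gamma_T=[1.0, 2.0], gamma_S=[0.5])
d = delta(eps, rho=[0.5], phi=2.0)
```

Passing a longer `rho` list gives the AR(p) case without further changes.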

The penalized score functions for \(\phi \) and \({\varvec{\rho }}\) are, respectively, given by

and

In addition, the derivatives of \(\text {L}_p({\varvec{\theta }},{\varvec{\lambda }})\) with respect to \(\gamma _{T_j}\) and \(\gamma _{S_l}\) yield

and

where \([\mathbf{M}_T{\varvec{\gamma }}_T]_j\) and \([\mathbf{M}_S{\varvec{\gamma }}_S]_l\) denote the jth and lth positions of the vectors \(\mathbf{M}_T{\varvec{\gamma }}_T\) and \(\mathbf{M}_S{\varvec{\gamma }}_S\), respectively.

In matrix notation we obtain

where the quantities \(\mathbf{N}_T,\mathbf{N}_S,{\varvec{\epsilon }},\mathbf{D}_v,\mathbf{D}_m,\mathbf{A}\) and \(\mathbf{C}_j\) were defined in Sect. 4.
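A score function of this kind can be checked numerically against a finite-difference derivative. The sketch below does so for \(\phi \) only, under stated assumptions: the conditional log-likelihood is taken in the usual symmetric-class form \(-\frac{n}{2}\log \phi +\sum _i \log g(\delta _i)\), the generator \(g\) is that of the Student-t distribution with \(\nu \) degrees of freedom, and the roughness penalty is assumed not to involve \(\phi \). All function names are hypothetical.

```python
import math


def log_g_student_t(u, nu):
    """log g(u) up to a constant, with g(u) proportional to (1 + u/nu)^(-(nu+1)/2)."""
    return -0.5 * (nu + 1.0) * math.log1p(u / nu)


def w_student_t(u, nu):
    """W_g(u) = g'(u) / g(u) for the Student-t generator."""
    return -(nu + 1.0) / (2.0 * (nu + u))


def loglik(innov, phi, nu):
    """Assumed form (constants dropped): -(n/2) log(phi) + sum_i log g(delta_i)."""
    n = len(innov)
    return -0.5 * n * math.log(phi) + sum(
        log_g_student_t(a * a / phi, nu) for a in innov
    )


def score_phi(innov, phi, nu):
    """Derivative of loglik in phi: -n/(2 phi) - (1/phi) sum_i W_g(delta_i) delta_i.

    With v_i = -2 W_g(delta_i) and m_i = v_i delta_i this equals
    -n/(2 phi) + (1/(2 phi)) sum_i m_i.
    """
    n = len(innov)
    s = sum(w_student_t(a * a / phi, nu) * (a * a / phi) for a in innov)
    return -n / (2.0 * phi) - s / phi


# Toy values of eps_i - rho_1 eps_{i-1} - ... - rho_p eps_{i-p}.
innov = [-0.5, 0.75, 1.25, -2.0]
phi, nu, h = 2.0, 4.0, 1e-6
numeric = (loglik(innov, phi + h, nu) - loglik(innov, phi - h, nu)) / (2.0 * h)
analytic = score_phi(innov, phi, nu)
```

The same central-difference check extends to the other components of \({\varvec{\theta }}\) once their analytic derivatives are coded.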

Appendix B: Penalized Hessian matrix

For simplicity of notation we will consider \(n_{T_{ij}}=n_{T_j}(t_i)\) and \(n_{S_{il}}=n_{S_l}(s_i)\), \((i=1,\ldots ,n)\), \((j=1,\ldots ,r_T)\) and \((l=1,\ldots ,r_S-1)\).

Consider the parameters \(({\gamma _{T_j}},{\gamma _{T_h}})\) for which we obtain the derivatives

In matrix notation, we obtain

Similarly, for the parameter vector \({\varvec{\gamma }}_S\) one has

The second derivatives of \(\text {L}_p({\varvec{\theta }},{\varvec{\lambda }})\) with respect to \(\phi \) and \(\rho _j\) yield

and

where \(\mathbf{D}_c=\text {diag}\left\{ c_1,\ldots ,c_n\right\} \) with \(c_i=W'_g(\delta _i)\) and \(\mathbf{D}_d=\text {diag}\left\{ d_1,\ldots ,d_n\right\} \) with \(d_i=W'_g(\delta _i)\delta _i\).

The derivatives of \(\text {L}_p({\varvec{\theta }},{\varvec{\lambda }})\) with respect to \((\gamma _{T_j},\gamma _{S_l})\) yield

In matrix notation, we obtain

For the derivatives of \(\text {L}_p({\varvec{\theta }},{\varvec{\lambda }})\) with respect to \((\gamma _{T_j},\phi )\) and \((\gamma _{T_j},\rho _{j'})\), we obtain

which in matrix form may be expressed as

and

Similarly, the derivatives of \(\text {L}_p({\varvec{\theta }},{\varvec{\lambda }})\) with respect to \(({\varvec{\gamma }}_S,\phi )\) and \(({\varvec{\gamma }}_S,\rho _{j'})\) yield

and

Finally, for the derivatives of \(\text {L}_p({\varvec{\theta }},{\varvec{\lambda }})\) with respect to \((\phi , \rho _j)\) and \((\rho _j, \rho _{j'})\) we obtain

Appendix C: Penalized Fisher information matrix

As in Relvas and Paula (2016), the penalized Fisher information matrix is derived from the regularity conditions applied to the regular log-likelihood function L\(({\varvec{\theta }})\), namely E\(\{\partial \text {L}({\varvec{\theta }})/\partial {\varvec{\theta }}\}\!=\!\mathbf{0}\) and E\(\{\partial ^2 \text {L}({\varvec{\theta }})/\partial {\varvec{\theta }}\partial {\varvec{\theta }}^\top \}\!=\!\) −E\(\{\left[ \partial \text {L}({\varvec{\theta }})\!/\!\partial {\varvec{\theta }}\right] \left[ \partial \text {L}({\varvec{\theta }})\!/\!\partial {\varvec{\theta }}^\top \right] \}\), and the results \(f_g=\text {E}\left\{ W_g^2(z^2)z^4\right\} \) and \(d_g=\text {E}\left\{ W_g^2(z^2)z^2\right\} \) with \(z\sim S(0,1)\).

For the parameter \({\varvec{\gamma }}_T\) it follows that

We may show that E\(\left( -\mathbf{D}_v+4\mathbf{D}_d\right) =-4d_g\) and consequently the penalized Fisher information matrix for \({\varvec{\gamma }}_T\) is

Similarly, we obtain

From the regularity condition E\(\left( \text {U}_{\theta }^\phi \right) =0\) we obtain E\(\{W_g(\delta _i)\delta _i\}=-\frac{1}{2}, \ (i=1,\ldots ,n)\). Then,

where \(\text {L}_{p_i}({\varvec{\theta }},{\varvec{\lambda }})\) denotes the ith element of the penalized log-likelihood function. Therefore, we obtain \(\text {K}_p^{\phi \phi }=\frac{n}{4\phi ^2}(4f_g-1)\).
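The quantities \(f_g\), \(d_g\) and \(\text {E}\{W_g(\delta _i)\delta _i\}\) can be evaluated numerically for a particular symmetric distribution. The following sketch does this for the Student-t case, assuming that \(z\sim S(0,1)\) then corresponds to a standard Student-t variable with \(\nu \) degrees of freedom and that \(W_g(u)=-(\nu +1)/\{2(\nu +u)\}\). The helper names and the midpoint-rule quadrature are illustrative choices, not the authors' procedure. For this distribution the expectations also have the closed forms \(f_g=3(\nu +1)/\{4(\nu +3)\}\) and \(d_g=(\nu +1)/\{4(\nu +3)\}\), which serve as a check.

```python
import math


def w_student_t(u, nu):
    """W_g(u) = g'(u) / g(u) for the Student-t generator."""
    return -(nu + 1.0) / (2.0 * (nu + u))


def t_density(z, nu):
    """Density of the standard Student-t distribution with nu degrees of freedom."""
    log_c = (math.lgamma((nu + 1.0) / 2.0) - math.lgamma(nu / 2.0)
             - 0.5 * math.log(nu * math.pi))
    return math.exp(log_c - 0.5 * (nu + 1.0) * math.log1p(z * z / nu))


def expect(h, nu, m=20000):
    """E{h(z)} for z ~ t_nu: midpoint rule after substituting z = sqrt(nu) tan(theta)."""
    step = math.pi / m
    total = 0.0
    for k in range(m):
        theta = -0.5 * math.pi + (k + 0.5) * step
        z = math.sqrt(nu) * math.tan(theta)
        jac = math.sqrt(nu) / math.cos(theta) ** 2  # dz / dtheta
        total += h(z) * t_density(z, nu) * jac * step
    return total


def k_phi_phi(n, phi, f_g):
    """Fisher information for phi: n (4 f_g - 1) / (4 phi^2)."""
    return n * (4.0 * f_g - 1.0) / (4.0 * phi ** 2)


nu = 4.0
f_g = expect(lambda z: w_student_t(z * z, nu) ** 2 * z ** 4, nu)
d_g = expect(lambda z: w_student_t(z * z, nu) ** 2 * z ** 2, nu)
mean_w_delta = expect(lambda z: w_student_t(z * z, nu) * z * z, nu)
```

In the normal case \(W_g(u)=-1/2\), so \(f_g=3/4\) and the expression for \(\text {K}_p^{\phi \phi }\) reduces to the familiar \(n/(2\phi ^2)\).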

For the parameter \(\rho _j\) one has that

which implies

For AR(1) errors, we may express (see, for instance, Judge et al. 1985) the error \(\epsilon _i\) as

where \(j\rightarrow \infty \) means that the series extends indefinitely into the past. Since \(|\rho _1|<1\) the process is stationary. One obtains

and

Then, the Fisher information of \(\rho _1\) reduces to

It is also straightforward to find the autocorrelation at lag s. For example, at lag 1, one has

Similarly, for lag 2, one obtains

and the covariance between two errors l periods apart is given by

Thus, matrix \({\varvec{\varUpsilon }}_1\) may be constructed.
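The construction can be sketched in a few lines of Python using the standard results for a stationary AR(1) process: the variance is \(\sigma _e^2/(1-\rho _1^2)\) and the covariance at lag \(l\) is \(\rho _1^{l}\sigma _e^2/(1-\rho _1^2)\), where \(\sigma _e^2\) denotes the innovation variance. The function names are hypothetical. The sketch returns the plain autocovariance matrix, so any additional scaling used in the definition of \({\varvec{\varUpsilon }}_1\) would have to be applied separately.

```python
def ar1_autocovariance(rho1, innovation_var, lag):
    """Cov(eps_i, eps_{i-lag}) for a stationary AR(1) process."""
    if abs(rho1) >= 1.0:
        raise ValueError("AR(1) process is stationary only for |rho1| < 1")
    return innovation_var * rho1 ** abs(lag) / (1.0 - rho1 ** 2)


def ar1_covariance_matrix(rho1, innovation_var, n):
    """n x n Toeplitz matrix with (i, k) entry Cov(eps_i, eps_k)."""
    return [
        [ar1_autocovariance(rho1, innovation_var, i - k) for k in range(n)]
        for i in range(n)
    ]


# Toy example: rho_1 = 0.5 and innovation variance 3 give variance 4.
upsilon = ar1_covariance_matrix(rho1=0.5, innovation_var=3.0, n=3)
```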

For AR(2) errors \(\epsilon _i\) may be expressed as

where E\((e_i)=0\), E\((e_ie_{i'})=0\), for \(i\ne i'\), and E\((e_i^2) = \phi \xi \), so that E\((\epsilon _i)=0\). This process is stationary if \(\rho _1 + \rho _2 <1 \), \(\rho _2-\rho _1<1 \) and \(-1<\rho _2<1\). According to Judge et al. (1985) and Fox (2015), the elements of the covariance matrix \({\varvec{\varUpsilon }}_2\) may be found from the variance

Multiplying (9) by \(\epsilon _{i-1} \) and taking expectation, one obtains

and since E\((\epsilon _{i-1}^2)=\phi _\epsilon \) and E\((\epsilon _{i-1}\epsilon _{i-2})= \text {Cov}(\epsilon _{i-1},\epsilon _{i-2})=\text {Cov}(\epsilon _i,\epsilon _{i-1})\), solving for the autocovariance one obtains

Similarly, for \(l>1\),

so we may find the autocovariances recursively. For example, for \(l=2\), one has

where \(\phi _0=\phi _{\epsilon }\), and for \(l=3\),

In general for AR(p) errors one may write

and the Fisher information for \((\rho _j,\rho _{j'})\), \(j \ne j'=1,\ldots ,p\), is given by

Considering \(j'<j\), we may write the Fisher information for \((\rho _j,\rho _{j'})\), \(j \ne j'\), as follows

The expression for \(\varUpsilon _p\) becomes progressively more complicated. A general expression is given in Wise (1955).
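For the AR(2) case, the recursion described above can be sketched in Python. The sketch uses the standard Yule-Walker results for a stationary AR(2) process with innovation variance \(\sigma _e^2\): the variance is \(\sigma _e^2(1-\rho _2)/[(1+\rho _2)\{(1-\rho _2)^2-\rho _1^2\}]\), the lag-1 autocovariance is \(\rho _1\) times the variance divided by \(1-\rho _2\), and higher lags follow from the two-term recursion. The function names are hypothetical, and the stationarity check is the one stated in the text.

```python
def is_stationary_ar2(rho1, rho2):
    """Stationarity region: rho1 + rho2 < 1, rho2 - rho1 < 1 and -1 < rho2 < 1."""
    return (rho1 + rho2 < 1.0) and (rho2 - rho1 < 1.0) and (-1.0 < rho2 < 1.0)


def ar2_autocovariances(rho1, rho2, innovation_var, max_lag):
    """Autocovariances at lags 0, 1, ..., max_lag of a stationary AR(2) process."""
    if not is_stationary_ar2(rho1, rho2):
        raise ValueError("(rho1, rho2) lies outside the AR(2) stationarity region")
    # Variance (lag 0) from the Yule-Walker equations.
    gamma0 = innovation_var * (1.0 - rho2) / (
        (1.0 + rho2) * ((1.0 - rho2) ** 2 - rho1 ** 2)
    )
    # Lag 1: multiply the AR(2) equation by eps_{i-1} and take expectations.
    gammas = [gamma0, rho1 * gamma0 / (1.0 - rho2)]
    # Lags l > 1: gamma_l = rho1 * gamma_{l-1} + rho2 * gamma_{l-2}.
    for _ in range(2, max_lag + 1):
        gammas.append(rho1 * gammas[-1] + rho2 * gammas[-2])
    return gammas[: max_lag + 1]


gammas = ar2_autocovariances(rho1=0.5, rho2=0.3, innovation_var=1.0, max_lag=3)
```

The returned list fills the first row of a Toeplitz covariance matrix. For general \(p\) the same idea requires solving a \(p\)-dimensional linear system for the first \(p\) autocovariances before the recursion can start.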

The penalized Fisher information matrix for \(({\varvec{\gamma }}_T^\top ,{\varvec{\gamma }}_S^\top )^\top \) is given by

and we can see that \({\varvec{\gamma }}_T\) and \({\varvec{\gamma }}_S\) are not orthogonal.

From the properties of the symmetric distributions we have that

Then, we may obtain the following penalized Fisher information matrices:

From E\((\mathbf{U}_p^{\gamma _T})=\mathbf{0}\) it follows that

then \(\text {E}(\mathbf{D}_v\mathbf{A}{\varvec{\epsilon }})=\mathbf{0} \), so we may obtain

and since E\(\left\{ (\mathbf{C}_j{\varvec{\epsilon }})^\top \right\} =\mathbf{0} \) we have that

Similarly, we may show that \(\mathbf{K}_p^{\rho _j\gamma _S}=\mathbf{0} \).

Appendix D: Case-weight perturbation scheme

Consider weights attributed to the observations in the penalized log-likelihood function as

where \(\text {L}_p({\varvec{\theta }}\mid {\varvec{\omega }})= \sum _{i=1}^{n}\omega _i\text {L}_i({\varvec{\theta }})\), \({\varvec{\omega }}=(\omega _1,\ldots ,\omega _n)^\top \) is the vector of weights, with \(0~\le ~\omega _i~\le ~1\). In this case the vector of no perturbation is given by \({\varvec{\omega }}_0=\mathbf{1} _{n}\).

For this perturbation scheme we obtain

where \(\mathbf{D}_v=\text {diag}\left\{ v_1,\ldots ,v_n\right\} \) with \(v_i=-2W_g(\delta _i)\), \(\mathbf{D}_m=\text {diag}\left\{ m_1,\ldots ,m_n\right\} \) with \(m_i~=~v_i~\delta _i\), for \(i=1,\ldots ,n\), \(\epsilon _0=0\) and \(\mathbf{D}_{(\text {A}_{\epsilon })}\) is a diagonal matrix with elements given by \(\mathbf{A}{\varvec{\epsilon }}\) evaluated at \(\widehat{{\varvec{\theta }}}\). In addition,

About this article

Cite this article

Oliveira, R.A., Paula, G.A. Additive models with autoregressive symmetric errors based on penalized regression splines. Comput Stat 36, 2435–2466 (2021). https://doi.org/10.1007/s00180-021-01106-2

DOI: https://doi.org/10.1007/s00180-021-01106-2

Keywords

- Cubic splines

- Cyclic splines

- Daily temperature

- Model checking

- Penalized likelihood

- Student-t models

- Robust estimation