Abstract

This paper investigates, in a non-parametric framework, whether academic programmes maximize their student graduation rates and programme quality ratings given their first-year student dropout rates. In addition, it explores which institutional and programme characteristics explain this interaction. The results show a large variation in how well academic programmes deal with the selective nature of first-year dropout. Nevertheless, programme and institutional characteristics explain the variation among programmes accurately. It seems that universities can improve the relation between first-year dropout, graduation rates and quality ratings in several ways: (1) by improving student programme satisfaction, (2) by better preparing certain groups of students for higher education, (3) by supporting male students, (4) by supporting ethnic minority students, (5) by attracting older staff, and (6) by strengthening the selective nature of the first year (ie, increasing the academic dismissal policy threshold).

Notes

Note that graduation rates and quality ratings are often not measured in a consistent way. The definitions and measurements used in earlier literature are listed in Tables A1 and A2 in Appendix A.

However, few studies have investigated the direct relationship between satisfaction and graduation rates.

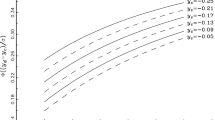

Note that academic programmes, given their dropout rate, can choose not to obtain the highest possible graduation rate and quality rating (ie, the Free Disposability Assumption). We discuss this in more depth later in the section.

Note that in the DEA literature, this ‘trade-off line’ is known as the ‘efficient frontier’.
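To make the notions of free disposability and the efficient frontier concrete, the following minimal Python sketch flags which programmes lie on a Free Disposal Hull (FDH) frontier. It assumes that first-year dropout acts as the single input and that graduation rate and quality rating are the outputs; the function name `fdh_frontier` and the toy figures are hypothetical and are not taken from the paper's data.

```python
import numpy as np

def fdh_frontier(x, y):
    """Return a boolean mask: True if a unit is on the FDH frontier.

    x : 1-D array of inputs (assumed here: first-year dropout rate)
    y : 2-D array of outputs (assumed here: graduation rate, quality rating)
    A unit is off the frontier if some other unit uses no more input,
    produces at least as much of every output, and is strictly better
    in at least one dimension.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = x.size
    on_frontier = np.ones(n, dtype=bool)
    for i in range(n):
        for j in range(n):
            if j == i:
                continue
            weakly_better = x[j] <= x[i] and np.all(y[j] >= y[i])
            strictly_better = x[j] < x[i] or np.any(y[j] > y[i])
            if weakly_better and strictly_better:
                on_frontier[i] = False
                break
    return on_frontier

# Hypothetical toy data: five programmes.
dropout = np.array([0.20, 0.30, 0.25, 0.40, 0.35])
outputs = np.array([
    [0.60, 7.0],
    [0.80, 7.5],
    [0.70, 6.5],
    [0.90, 8.0],
    [0.70, 7.0],  # dominated by the second programme
])
frontier_mask = fdh_frontier(dropout, outputs)
print(frontier_mask)
```

Under free disposability, the last programme is feasible but inefficient: another programme achieves higher outputs with a lower dropout rate.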

The only exceptions are the numerus fixus programmes in medicine, dentistry and other areas that have a limited number of student places. Numerus fixus means that programmes can choose which students they will accept. Note that only 0.30% of the available places can be filled through this practice (Jongbloed, 2003).

Thus, the student graduation rate is measured conditional on first-year dropout.

Note that these sample sizes differ because we only included programmes which have information on all the control variables. Programmes with missing values are thus removed. There are more academic programmes with missing values in the academic year 2010–2011 compared with 2011–2012. This is not surprising since the data gathering of Studiekeuze123 is improving every year.

The number of bootstrap replications only matters for the statistical inference. The conditional order-m model has been estimated in R by using the integral formulation, as this procedure is more time efficient and precise.
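The difference between resampling and an exact (integral-type) computation can be illustrated with a minimal Python sketch of the unconditional, output-oriented order-m estimator. This is an illustration under stated assumptions, not the authors' R code: it omits the conditioning on control variables, treats dropout as the single input, and uses hypothetical function names and toy data. The exact version uses the fact that the maximum of m draws with replacement from n sorted values falls at or below the k-th smallest with probability (k/n)^m.

```python
import numpy as np

def _peer_ratios(x, y, x0, y0):
    """Output ratios min_j(Y_ij / y0_j) for peers with input <= x0."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    peers = x <= x0
    return np.min(y[peers] / np.asarray(y0, dtype=float), axis=1)

def order_m_exact(x, y, x0, y0, m):
    """Exact expected order-m output efficiency, without resampling."""
    ratios = np.sort(_peer_ratios(x, y, x0, y0))
    n = ratios.size
    k = np.arange(1, n + 1)
    # P(max of m draws equals the k-th smallest ratio)
    weights = (k / n) ** m - ((k - 1) / n) ** m
    return float(np.sum(ratios * weights))

def order_m_monte_carlo(x, y, x0, y0, m, reps, seed=0):
    """Resampling approximation: average the best of m random peers."""
    rng = np.random.default_rng(seed)
    ratios = _peer_ratios(x, y, x0, y0)
    draws = rng.choice(ratios, size=(reps, m), replace=True)
    return float(draws.max(axis=1).mean())

# Hypothetical toy data: input = dropout rate, outputs = graduation
# rate and quality rating. The third programme is evaluated.
dropout = np.array([0.20, 0.30, 0.25, 0.40])
outputs = np.array([[0.60, 7.0], [0.80, 7.5], [0.70, 6.5], [0.90, 8.0]])
x0, y0 = dropout[2], outputs[2]

exact = order_m_exact(dropout, outputs, x0, y0, m=2)
approx = order_m_monte_carlo(dropout, outputs, x0, y0, m=2, reps=20000)
print(exact, approx)
```

The resampled estimate carries simulation noise that shrinks only as the number of replications grows, whereas the exact computation has none, which is consistent with the note's remark that the integral formulation is faster and more precise.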

Note that when we combine the two years into one data set and rerun the above analyses, we find similar results. Moreover, we find a significant negative correlation between the graduation rate and the dropout rate, and an insignificant negative relation between the graduation rate and the quality ratings.

In line with Jongbloed et al (1994, 2003), we divided the academic programmes into an arts, a sciences and a medical cluster and reran the analyses. The results for the arts cluster are consistent with the current findings. The results for the sciences cluster differ in the influence of the control variables. Owing to insufficient statistical power, we did not obtain results for the medical cluster.

References

Abbott M and Doucouliagos C (2003). The efficiency of Australian universities: A data envelopment analysis. Economics of Education Review 22(1): 89–97.

Agasisti T (2011). Performances and spending efficiency in higher education: A European comparison through non‐parametric approaches. Education Economics 19(2): 199–224.

Archibald RB and Feldman DH (2008). Graduation rates and accountability: Regressions versus production frontiers. Research in Higher Education 49(1): 80–100.

Arnold I and Van den Brink W (2010). Naar een effectiever bindend studieadvies [Towards a more effective binding study advice]. Tijdschrift voor Hoger Onderwijs en Management 2010(5): 10–13.

Astin AW and Solmon LC (1981). Are reputational ratings needed to measure quality? Change: The Magazine of Higher Learning 13(7): 14–19.

Athanassopoulos AD and Shale E (1997). Assessing the comparative efficiency of higher education institutions in the UK by the means of data envelopment analysis. Education Economics 5(2): 117–134.

Avkiran NK (2001). Investigating technical and scale efficiencies of Australian universities through data envelopment analysis. Socio-Economic Planning Sciences 35(1): 57–80.

Avkiran NK and Rowlands T (2008). How to better identify the true managerial performance: State of the art using DEA. Omega 36(2): 317–324.

Bailey T, Calcagno JC, Jenkins D, Leinbach T and Kienzl G (2006). Is student-right-to-know all you should know? An analysis of community college graduation rates. Research in Higher Education 47(5): 491–519.

Benneworth P et al (2011). Quality-Related Funding, Performance Agreements and Profiling in Higher Education. Center for Higher Education Policy Studies: Enschede.

Bonaccorsi A and Daraio C (2008). The differentiation of the strategic profile of higher education institutions. New positioning indicators based on microdata. Scientometrics 74(1): 15–37.

Bonaccorsi A, Daraio C and Simar L (2007). Efficiency and productivity in European universities: Exploring trade-offs in the strategic profile. In: Bonaccorsi A and Daraio C (eds). Universities and Strategic Knowledge Creation: Specialization and Performance in Europe. Edward Elgar PRIME collection: Cheltenham.

Bound J, Lovenheim MF and Turner S (2010). Why have college completion rates declined? An analysis of changing student preparation and collegiate resources. American Economic Journal: Applied Economics 2(3): 129.

Bradley S, Johnes J and Little A (2010). The measurement and determinants of efficiency and productivity in the further education sector in England. Bulletin of Economic Research 62(1): 1–30.

Calcagno JC, Bailey T, Jenkins D, Kienzl G and Leinbach T (2008). Community college student success: What institutional characteristics make a difference? Economics of Education Review 27(6): 632–645.

Cave M, Hanney S, Kogan M and Trevett G (1991). The Use of Performance Indicators in Higher Education: A Critical Analysis of Developing Practice. Jessica Kingsley: London.

Cazals C, Florens J-P and Simar L (2002). Nonparametric frontier estimation: A robust approach. Journal of Econometrics 106(1): 1–25.

Chickering AW and Reisser L (1993). Education and Identity. Jossey-Bass: San Francisco.

Daraio C and Simar L (2005). Introducing control variables in nonparametric frontier models: A probabilistic approach. Journal of Productivity Analysis 24(1): 93–121.

Daraio C and Simar L (2007). Advanced Robust and Nonparametric Methods in Efficiency Analysis: Methodology and Applications. Springer: New York.

De Corte E (2014). Evaluation of Universities in Western Europe: From Quality Assessment to Accreditation. KU Leuven: Leuven, Belgium, from http://vo.hse.ru/data/2015/01/14/1106361466/2014-4_DeCorte_En.pdf, accessed 13 April 2015.

De Koning BB, Loyens SMM, Rikers RMJP, Smeets G and van der Molen HT (2014). Impact of binding study advice on study behavior and pre-university education qualification factors in a problem-based psychology bachelor program. Studies in Higher Education 39(5): 835–847.

De Paola M (2009). Does teacher quality affect student performance? Evidence from an Italian university. Bulletin of Economic Research 61(4): 353–377.

Deprins D, Simar L and Tulkens H (1984). Measuring labor-efficiency in post offices. In: Marchand M, Pestieau P and Tulkens H (eds). The Performance of Public Enterprises. Elsevier Science Publishers: Amsterdam, pp 243–267.

De Witte K and Hudrlikova L (2013). What about excellence in teaching? A benevolent ranking of universities. Scientometrics 96(1): 337–364.

De Witte K and Kortelainen M (2013). What explains performance of students in a heterogeneous environment? Conditional efficiency estimation with continuous and discrete control variables. Applied Economics 45(17): 2401–2412.

De Witte K, Rogge N, Cherchye L and Van Puyenbroeck T (2013a). Accounting for economies of scope in performance evaluations of university professors. Journal of the Operational Research Society 64(11): 1595–1606.

De Witte K, Rogge N, Cherchye L and Van Puyenbroeck T (2013b). Economies of scope in research and teaching: A non-parametric investigation. Omega—International Journal of Operational Research 41(2): 305–314.

Drennan LT and Beck M (2001). Teaching quality performance indicators—key influences on the UK universities’ scores. Quality Assurance in Education 9(2): 92–102.

Gijbels D, Van der Rijt J and Van de Watering G (2004). Het bindend studieadvies in het hoger wetenschappelijk onderwijs: worden de juiste studenten geselecteerd? [The binding study advice in higher scientific education: Are the right students selected?]. Tijdschrift voor Hoger Onderwijs 22(2): 62–72.

Hall M (1999). Why students take more than four years to graduate. Paper presented at the Association for Institutional Research Forum, Seattle, WA.

Hosch BJ (2008). Institutional and student characteristics that predict graduation and retention rates. Paper presented at the North East Association for Institutional Research Annual Meeting.

Huisman J (2008). Shifting boundaries in higher education: Dutch hogescholen on the move. In: Taylor J, Ferreira JB, De Lourdes Machado M and Santiago R (eds). Non-University Higher Education in Europe. Springer: Milton Keynes, pp 147–167.

Huisman J and Currie J (2004). Accountability in higher education: Bridge over troubled water? Higher Education 48(4): 529–551.

Inspectie van het onderwijs (2009). Uitval en rendement in het hoger onderwijs: achtergrondrapport bij werken aan een beter rendement, from http://www.onderwijsinspectie.nl/binaries/content/assets/Actueel_publicaties/2009/Werken+aan+een+beter+rendement+-+achtergrondrapport.pdf, accessed 24 October 2013.

Johnes J (1996). Performance assessment in higher education in Britain. European Journal of Operational Research 89(1): 18–33.

Johnes J (2006a). Measuring teaching efficiency in higher education: An application of data envelopment analysis to economics graduates from UK universities 1993. European Journal of Operational Research 174(1): 443–456.

Johnes J (2006b). Data envelopment analysis and its application to the measurement of efficiency in higher education. Economics of Education Review 25(3): 273–288.

Johnes J and Taylor J (1990). Performance Indicators in Higher Education: UK Universities. Open University Press and the Society for Research into Higher Education: Milton Keynes.

Jongbloed B (2001). Performance-based funding in higher education: An international survey. Centre for the Economics of Education and Training, Monash University, Australia, Working Paper No. 35.

Jongbloed B (2003). Marketisation in higher education, Clark’s triangle and the essential ingredients of markets. Higher Education Quarterly 57(2): 110–135.

Jongbloed BWA, Koelman JBJ, Goudriaan R, de Groot H, Haring HMM and van Ingen DC (1994). Kosten en doelmatigheid van het hoger onderwijs in Nederland, Duitsland en Groot-Brittannië. Beleidsgerichte studies Hoger Onderwijs en Wetenschappelijk onderzoek 57, Ministerie van Onderwijs, Cultuur en Wetenschappen. SDU, Den Haag.

Jongbloed B, Salerno C and Kaiser F (2003). Kosten per Student, Methodologie Schattingen en een Internationale Vergelijking. CHEPS, Enschede.

Jongbloed B and Vossensteyn H (2001). Keeping up performances: An international survey of performance-based funding in higher education. Journal of Higher Education Policy and Management 23(2): 127–145.

Kokkelenberg EC, Sinha E, Porter JD and Blose GL (2008). The efficiency of private universities as measured by graduation rates. Cornell Higher Education Research Institute Working Paper 113.

Lau LK (2003). Institutional factors affecting student retention. Education 124(1): 126–136.

Lee C and Buckthorpe S (2008). Robust performance indicators for non‐completion in higher education. Quality in Higher Education 14(1): 67–77.

McMillan ML and Datta D (1998). The relative efficiency of Canadian universities: A DEA perspective. Canadian Public Policy 24(4): 485–511.

McNabb R, Pal S and Sloane P (2002). Gender differences in educational attainment: The case of university students in England and Wales. Economica 69(275): 481–503.

Mellanby J, Martin M and O’Doherty J (2000). The ‘gender gap’ in final examination results at Oxford University. British Journal of Psychology 91(3): 377–390.

Neumann R (2001). Disciplinary differences and university teaching. Studies in Higher Education 26(2): 135–146.

OCW (2011). Hoofdlijnenakkoord OCW-VSNU, from http://www.vsnu.nl/files/documenten/Domeinen/Accountability/HLA/Hoofdlijnenakkoord_universiteiten_DEF_20111208.pdf, accessed 24 October 2013.

Porter SR (2000). The robustness of the ‘graduation rate performance’ indicator used in the U.S. news and world report college ranking. International Journal of Educational Advancement 1(2): 10–30.

Reason RD (2003). Using an ACT-based merit-index to predict between-year retention. Journal of College Student Retention: Research, Theory and Practice 5(1): 71–87.

Reason RD (2009). Student variables that predict retention: Recent research and new developments. NASPA Journal 40(4): 172–191.

Robst J (2001). Cost efficiency in public higher education institutions. Journal of Higher Education 72(6): 730–750.

Salerno C (2003). What We Know About the Efficiency of Higher Education Institutions: The Best Evidence. CHEPS, Universiteit Twente: the Netherlands.

Schwarz S and Westerheijden DF (2004). Accreditation and Evaluation in the European Higher Education Area. Springer Science & Business Media: Dordrecht, the Netherlands.

Suhre CJ, Jansen EP and Harskamp EG (2007). Impact of degree program satisfaction on the persistence of college students. Higher Education 54(2): 207–226.

Scott M, Bailey T and Kienzl G (2006). Relative success? Determinants of college graduation rates in public and private colleges in the US. Research in Higher Education 47(3): 249–279.

Sneyers E and De Witte K (2015). The effect of an academic dismissal policy on dropout, graduation rates and student satisfaction: Evidence from the Netherlands. Studies in Higher Education, in press.

Stegers-Jager KM, Cohen-Schotanus J, Splinter TAW and Themmen APN (2011). Academic dismissal policy for medical students: Effect on study progress and help-seeking behaviour. Medical Education 45(10): 987–994.

Stensaker B and Harvey L (2006). Old wine in new bottles? A comparison of public and private accreditation schemes in higher education. Higher Education Policy 19(1): 65–85.

Stevens PA (2001). The Determinants of Economic Efficiency in English and Welsh universities. National Institute of Economic and Social Research: London.

Sweitzer K and Volkwein JF (2009). Prestige among graduate and professional schools: Comparing the U.S. News’ graduate school reputation ratings between disciplines. Research in Higher Education 50(8): 129–148.

Task force studiesucces (2009). Eindadvies studiesucces. Universiteit Leiden: Leiden.

Teichler U (2007). Accreditation: The role of a new assessment approach in Europe and the overall map of evaluation in European higher education. In: Schwarz S and Westerheijden DF (eds). Evaluation and Accreditation in the European Higher Education Area. Kluwer Academic Publishers: Dordrecht.

Tinto V (1993). Leaving College: Rethinking the Causes and Cures of Student Attrition. 2nd edn, The University of Chicago Press: Chicago.

Tinto V (2002). Promoting student retention: Lessons learned from the United States. Paper presented at the 11th Annual Conference of the European Access Network, Prato, Italy.

Van Damme D (2000). Internationalization and quality assurance: Towards worldwide accreditation? European Journal for Education Law and Policy 4(1): 1–20.

Van Vught FA and Westerheijden DF (1994). Towards a general model of quality assessment in higher education. Higher Education 28(3): 355–371.

VSNU (2012). Prestaties in perspectief: Trendrapportage universiteiten 2000–2020, from http://www.vsnu.nl/files/documenten/Publicaties/Trendrapportage_DEF.pdf, accessed 13 November 2013.

Acknowledgements

We would like to thank participants of the Sixth North American Productivity Workshop, the Dutch Ministry of Education and ORD 2014, Wim Groot, Henriëtte Maassen van den Brink, Kees Boele, Subal Kumbhakar, Tommaso Agasisti, the associate editor and two referees for valuable insights and comments. We gratefully acknowledge the support with the data received by Studiekeuze123, especially by Bram Enning and Constance Dutmer.

Appendices

Appendix A

1.1 Definitions used in earlier literature

Appendix B

1.1 Robustness test: the AD policy as input variable

Appendix C

1.1 Which programme characteristics have the largest influence on the efficiency model?

Sneyers, E., De Witte, K. The interaction between dropout, graduation rates and quality ratings in universities. J Oper Res Soc 68, 416–430 (2017). https://doi.org/10.1057/jors.2016.15

DOI: https://doi.org/10.1057/jors.2016.15