Abstract

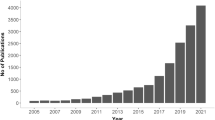

Various recent events, such as the COVID-19 pandemic or the European elections in 2019, were marked by discussion about the potential consequences of the massive spread of misinformation, disinformation, and so-called “fake news.” Scholars and experts argue that fears of manipulated elections can undermine trust in democracy, increase polarization, and influence citizens’ attitudes and behaviors (Benkler et al. 2018; Tucker et al. 2018). This has led to an increase in scholarly work on disinformation, from fewer than 400 scientific articles per year before 2016 to about 1,500 articles in 2019. Within the social sciences, surveys and experiments have dominated in the last few years. Content analysis is used less frequently, and studies conducting content analyses mostly use automated approaches or mixed-methods designs.

1 Introduction

Various recent events, such as the COVID-19 pandemic or the European elections in 2019, were marked by discussion about the potential consequences of the massive spread of misinformation, disinformation, and so-called “fake news.” Scholars and experts argue that fears of manipulated elections can undermine trust in democracy, increase polarization, and influence citizens’ attitudes and behaviors (Benkler et al. 2018; Tucker et al. 2018). This has led to an increase in scholarly work on disinformation, from fewer than 400 scientific articles per year before 2016 to about 1,500 articles in 2019 (see Note 1).

One initial challenge for this field of research is the definition and conceptualization of the phenomenon. Researchers have discussed different terms, including misinformation (non-intentional deception), disinformation (intentional deception), and mal-information (harmful content) (Wardle and Derakhshan 2017). Research has often examined the phenomenon of disinformation, as it is relevant from a democratic theory perspective and can have serious societal consequences. The term refers to fabricated news reports and decontextualized information, but is sometimes also used in connection with similar concepts, such as conspiracy theories, propaganda, or rumors (Freelon and Wells 2020; Tandoc et al. 2018). However, empirical research often fails to determine clearly whether false information was disseminated deliberately or inadvertently (Freelon and Wells 2020). Furthermore, it is argued that the term “fake news” is politicized and used for different purposes in both scholarly articles and the news (Quandt et al. 2019). Thus, Egelhofer and Lecheler (2019) suggest differentiating between the “fake news label,” used by politicians, for example, to discredit news media, and the “fake news genre” (e.g. fabricated news reports).

Recent communication research in the field of disinformation has mainly dealt with online and social media environments. Researchers argue that although the phenomenon is not new, it seems to be precisely in these environments that disinformation spreads massively, because it can be easily disseminated by users. Thus, a large audience can be reached and possibly manipulated (Miller and Vaccari 2020; Vosoughi et al. 2018).

2 Common Research Designs and Combination of Methods

The concept of disinformation is studied across various disciplines, e.g. social sciences, computer science, medicine, or law. Within the social sciences, surveys and experiments have dominated in the last few years, presumably because of the societal need to answer urgent questions regarding exposure (Allcott and Gentzkow 2017; Grinberg et al. 2019; Guess et al. 2020), concerns (European Commission 2018; Jang and Kim 2018), or digital literacy and the ability to recognize disinformation (Pennycook et al. 2018; Roozenbeek and van der Linden 2019; Vraga and Tully 2019). Moreover, scholars have frequently investigated connected concepts such as knowledge (Amazeen and Bucy 2019), traits and beliefs (Anthony and Moulding 2019; Petersen et al. 2018), or credibility of and trust in the news media and public actors (Newman et al. 2018; Zimmermann and Kohring 2020). Content analysis is used less frequently, and studies conducting content analyses mostly use automated approaches or mixed-methods designs (Amazeen et al. 2019; Chadwick et al. 2018; Grinberg et al. 2019; Guess et al. 2019). Those studies link survey data to digital trace data in order to examine who is exposed to disinformation, which users interact with it, and for what reason. For example, Guess et al. (2019) link a representative survey to behavioral data on Facebook and identify age and political ideology as relevant factors explaining the willingness to share mis- and disinformation. Such approaches go beyond self-reported activities on social media but pose challenges in terms of storing personal data. Beyond the methodological approaches, research frequently focuses on samples from the U.S. and analyzes social media platforms, such as Twitter and Facebook (Allcott et al. 2019; Bovet and Makse 2019; Grinberg et al. 2019; Guess et al. 2019; Ross and Rivers 2018). It should be noted that the samples are often a result of the limited data access granted by platforms (Bruns 2019; Freelon 2018; Puschmann 2019) and that user data from other relevant channels such as messenger services (e.g. WhatsApp) are difficult to access for research (Rossini et al. 2020). Moreover, research on disinformation mostly focuses on specific issues and real-world events, such as election campaigns or times of crisis. This poses challenges for the comparability and replication of studies.

3 Main Constructs

Despite the increasing interest in the subject, this is a rather young field of research. Therefore, the following research areas and analytical constructs should be understood as a snapshot of recent years, and not yet as an overview of saturated research fields. Nevertheless, the various content-analytic approaches can be summarized in five areas, which are neither exhaustive nor mutually exclusive. In these studies, the identification and operationalization of disinformation is a crucial step that often poses challenges. The identification of disinformation is of great interest because it is relevant for research in two ways: as detection of disinformation for sampling purposes, and as automated detection that is a research object in its own right. Since these two objectives may overlap in the future, a distinction is made between manual (see 1) and automated identification (see 2) of disinformation.

1. Manual detection of disinformation. To manually detect and operationalize disinformation, research mainly follows two approaches, which Li (2020, p. 126) labels as story or source. Focusing on sources, studies are not primarily concerned with the falseness of information, but with the producers and publishers of false messages (Grinberg et al. 2019). In this context, several authors have argued that alternative right-wing media are potential disseminators of disinformation (Figenschou and Ihlebæk 2019; Post 2019). So far, research has predominantly focused on the US (Guess et al. 2018; Lazer et al. 2018; Nelson and Taneja 2018). To foster comparative research from a territorial (national information environments), as well as from a temporal (ephemerality of certain alternative media sites) perspective, key dimensions and typologies of alternative media need to be established (Frischlich et al. 2020; Holt et al. 2019).

The story-based approach to the identification of disinformation uses single false stories or lists of false claims (Allcott and Gentzkow 2017; Humprecht 2019) published by fact-checking websites (e.g. snopes.com, politifact.com, factcheck.org) or news media (e.g. The Guardian, The Washington Post, Buzzfeed). Studies in this area (Al-Rawi et al. 2019; Ferrara 2020; Graham et al. 2020; Graham and Keller 2020; Hindman and Barash 2018; Metaxas and Finn 2019) analyze content on Twitter using hashtags referring to specific false claims (#pizzagate), events (#covid19), or a combination of both (#australienbushfires, #ArsonEmergency).
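The difference between the source-based and the story-based approach can be illustrated with a minimal sketch. It is not taken from any of the cited studies: the domain list, the hashtag list, and the function names `source_flag` and `story_flag` are hypothetical, and real studies rely on curated source lists and fact-checker verdicts rather than on a two-item lookup.

```python
import re
from urllib.parse import urlparse

# Hypothetical lists for illustration only (assumptions, not from the chapter).
FLAGGED_DOMAINS = {"example-fake-news.test"}
CLAIM_HASHTAGS = {"#pizzagate", "#arsonemergency"}

HASHTAG_RE = re.compile(r"#\w+")


def source_flag(url: str) -> bool:
    """Source-based: is the linked domain on the list of flagged publishers?"""
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return host in FLAGGED_DOMAINS


def story_flag(text: str) -> bool:
    """Story-based: does the post carry a hashtag tied to a fact-checked claim?"""
    tags = {tag.lower() for tag in HASHTAG_RE.findall(text)}
    return bool(tags & CLAIM_HASHTAGS)
```

Note that the source-based check says nothing about whether a specific article is false, and the story-based check also captures posts that debunk or mock the claim, so both would normally be followed by manual coding.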

2. Automated detection of disinformation: Besides the manual detection of disinformation, automated approaches are frequently applied. Automated detection approaches are superior to manual approaches in terms of capacity, veracity, and ephemerality (Zhang and Ghorbani 2020). They also allow the identification of a multitude of possible actors as well as human or non-human distributors (e.g. social bots; Shao et al. 2018).

According to the extensive overview by Zhang and Ghorbani (2020, p. 11), state-of-the-art research-based detection approaches are component-based (creator, content, and context analysis), data mining-based (supervised learning: deep learning, machine learning; unsupervised learning), implementation-based (online, offline), and platform-based (social media, other online news platforms) methods. The authors point to current challenges and the need for future studies in the field of unsupervised learning, such as (i) cluster analysis to identify homogeneous content and authors, (ii) outlier analysis of abnormal behavior of objects, (iii) embedding technologies of natural language processing as an important component of the detection process (Word2vec, FastText, Sent2vec, Doc2vec), and (iv) semantic similarity analysis to detect near-duplicate content (Zhang and Ghorbani 2020, pp. 19–21). The latter is especially relevant for the identification of decontextualized information.
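To make the idea of near-duplicate detection concrete, the following sketch compares two texts by the overlap of their word sequences. It is a deliberately simple lexical stand-in (Jaccard similarity over three-word shingles), not the embedding-based semantic similarity that Zhang and Ghorbani discuss; the function names and the shingle length are assumptions chosen for illustration.

```python
import re


def shingles(text: str, k: int = 3) -> set:
    """Return the set of k-word sequences in a lowercased text."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < k:
        # Very short texts are represented by a single shingle.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: str, b: str, k: int = 3) -> float:
    """Jaccard similarity of two texts' shingle sets (0.0 to 1.0)."""
    sa, sb = shingles(a, k), shingles(b, k)
    if not sa and not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


original = "The mayor said the bridge will close on Monday"
altered = "The mayor said the bridge will close on Friday"
print(jaccard(original, altered))  # 0.75: six of eight distinct shingles are shared
```

A high score with a small change, as in the example, is the pattern one might look for when a genuine report is recirculated with an altered detail. A purely lexical measure misses paraphrases, which is where embedding-based approaches come in.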

3. Dissemination of disinformation: Exploring the dissemination and spread of false messages represents another strand of research, especially in the social sciences. In research on the dissemination of disinformation, three main foci can be distinguished: (1) diffusion, (2) amplification, and (3) strategy. (1) Research focusing on diffusion examines the spread of false messages and focuses on the number of user interactions with “fake sources” or “fake stories” over time (Allcott et al. 2019). In addition, research explores what types of content users share across different countries (Bradshaw et al. 2020; Marchal et al. 2020; Neudert et al. 2019). To further investigate the origin and variations of content, studies have compared the original sender (incl. embedded links, e.g. from alternative media sites) and modifications of the content of false and real stories with evolutionary tree analysis (Jang et al. 2018), or have analyzed whether initial publications reappear and whether they change over time using time series analysis and text similarity (Shin et al. 2018). Moreover, studies using content or network analysis frequently analyze the extent and reach of disinformation. Those studies identify an increased potential exposure to disinformation among ideologically homogeneous and polarized communities (Bessi et al. 2016; Del Vicario et al. 2016; Hjorth and Adler-Nissen 2019; Schmidt et al. 2018; Shin et al. 2017; Walter et al. 2020). (2) Regarding amplification, research investigates how dissemination processes can be driven or amplified by the news media. Researchers seem to agree on the agenda-setting power of false messages and alternative media sites (Rojecki and Meraz 2016; Tsfati et al. 2020), but some studies also relativize its influence (Vargo et al. 2018). (3) Research on strategic coordination investigates, for example, how rumors are actively turned into a disinformation campaign, applying document-driven multi-site trace ethnography (Krafft and Donovan 2020). Another study by Keller et al. (2020) focuses on actors and the identification and validation of astroturfing agents. Lukito (2020) uses a different approach and investigates the temporal coordination of a disinformation campaign, namely the activities of the Internet Research Agency (IRA), with time series analysis (2015–2017) on several social media platforms (Facebook, Twitter, Reddit).
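As a rough illustration of the diffusion perspective, interactions with posts from flagged sources can be aggregated into a simple time series. The sketch below is an assumption-laden toy: the input format (pairs of date and interaction count), the function name `monthly_interactions`, and the example figures are invented for illustration and do not reproduce the procedure of any cited study.

```python
from collections import Counter
from datetime import date


def monthly_interactions(posts):
    """Sum interactions per calendar month.

    `posts` is an iterable of (date, interactions) pairs, where interactions
    could be, for example, shares plus comments plus likes of one post.
    """
    totals = Counter()
    for day, interactions in posts:
        totals[(day.year, day.month)] += interactions
    # Sort chronologically so the result reads as a time series.
    return dict(sorted(totals.items()))


# Invented example data.
posts = [
    (date(2016, 11, 8), 500),
    (date(2016, 10, 3), 120),
    (date(2016, 10, 20), 80),
]
print(monthly_interactions(posts))  # {(2016, 10): 200, (2016, 11): 500}
```

Series of this kind, computed separately per platform, are the raw material for the time series comparisons described above.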

4. Content of disinformation: Another strand of research focuses on the content of disinformation. Studies in this area have been conducted in various fields, including political communication and health and science communication (Brennen et al. 2020; Wang et al. 2019). The aim of these studies, so far few in number, is to identify different types of disinformation. Thus, this research area is of crucial importance for the conceptualization of disinformation. The two studies presented here both find and highlight that online disinformation is not only a technology-driven phenomenon, but is also shaped by partisanship, identity politics, and national information environments (Humprecht 2019; Mourão and Robertson 2019). Humprecht (2019) qualitatively identifies different types of online disinformation and finds cross-national differences by quantitatively analyzing topics, speakers, and target objects of fact-checked disinformation articles. Mourão and Robertson (2019) investigate sensationalism, bias, clickbait, and misinformation on “fake news” sites and analyze which articles and components triggered engagement on social media.

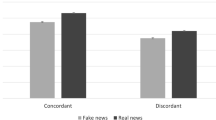

5. User participation: Digital interactions of users on online or social media can be investigated not only in terms of what content attracts attention and spreads (for example via the number of shares or retweets), but also in terms of how people respond to certain messages by liking or commenting. Barfar (2019) analyzed emotions, incivility, and cognitive thinking in the comments on Facebook posts by automated text analysis (using the Linguistic Inquiry and Word Count dictionary, see Pennebaker et al. 2007) and compared posts with true and false claims. Additionally, a study by Introne et al. (2018) examines online discussions within specific issue-related forums on a website called “abovetopsecret”. The authors applied discourse, narrative, and content analysis in order to investigate how false narratives are constructed.
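Dictionary-based text analysis of the kind mentioned above essentially counts how many words of a comment belong to predefined categories. The following sketch shows the principle only: the two categories and their word lists are hypothetical placeholders, since the actual LIWC dictionary is proprietary and far more extensive, and the function name `category_shares` is an assumption.

```python
import re
from collections import Counter

# Hypothetical mini-dictionary for illustration; NOT the LIWC word lists.
CATEGORIES = {
    "anger": {"hate", "angry", "outrage"},
    "certainty": {"always", "never", "definitely"},
}


def category_shares(comment: str) -> dict:
    """Share of a comment's tokens that fall into each dictionary category."""
    tokens = re.findall(r"[a-z']+", comment.lower())
    counts = Counter()
    for token in tokens:
        for category, words in CATEGORIES.items():
            if token in words:
                counts[category] += 1
    total = len(tokens) or 1  # avoid division by zero for empty comments
    return {category: counts[category] / total for category in CATEGORIES}


shares = category_shares("I hate this, they ALWAYS lie")
print(shares)  # one of six tokens in each category
```

Averaging such shares over the comments under posts with true and with false claims would give a comparison in the spirit of the design described above, although exact matching of word forms, as used here, is cruder than established dictionaries.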

4 Research Desiderata

Research on disinformation has considerably increased in recent years, but there are still some significant gaps, e.g. in terms of comparative research, dimensions and typologies, and methods.

Starting with the 2016 presidential election in the U.S., many studies have focused on the role of disinformation in the context of this election campaign. As a result, findings were generalized without considering the different political and media opportunity structures in individual countries. However, some notable exceptions show that significant differences exist between countries regarding various aspects of disinformation (Humprecht et al. 2020; Neudert et al. 2019). In order to enable comparative research, established criteria for sampling disinformation sources are needed, e.g. for alternative media that potentially disseminate disinformation.

An important contribution to the state of research would also be the investigation of key features of disinformation by means of content analyses, e.g. types and forms of presentation of disinformation. This would both enable reproducibility and strengthen the theoretical discourse (Freelon and Wells 2020).

Moreover, research has largely neglected the role of news media in the dissemination of disinformation (Tsfati et al. 2020). It has been argued that news media act as multipliers for disinformation, e.g. by republishing social media posts of political actors. Another promising, but not yet sufficiently researched aspect is the use of the term “fake news” by political actors to discredit the media. This is an important aspect against the background of increasing polarization and mistrust in news media in many countries (Egelhofer and Lecheler 2019).

Methodologically, the detection of disinformation is probably the greatest challenge for current research. To be able to compare the extent of the spread of disinformation between different platforms and countries, established dictionaries and identifiers are needed. More importantly, researchers need better access to the data of platform operators. For future research, it would be desirable that platform operators cooperate with researchers and make the data available in a transparent manner so that the production and dissemination of disinformation can be scientifically traced.

To sum up, the field of research on disinformation offers great potential for content analysis research. Moreover, combinations of automated and manual content analysis could be very fruitful with regard to the research gaps mentioned above.

Relevant Variables in DOCA—Database of Variables for Content Analysis

- Publishers/sources: https://doi.org/10.34778/4c
- Topics: https://doi.org/10.34778/4d
- Types: https://doi.org/10.34778/4e

Notes

1. Search terms: misinformation OR disinformation OR “fake news” (July 2nd, 2020; Web of Science).

References

Allcott, H., & Gentzkow, M. (2017). Social media and fake news in the 2016 election. Journal of Economic Perspectives, 31(2), 211–236.

Allcott, H., Gentzkow, M., & Yu, C. (2019). Trends in the diffusion of misinformation on social media. Research & Politics, 6(2).

Al-Rawi, A., Groshek, J., & Zhang, L. (2019). What the fake? Assessing the extent of networked political spamming and bots in the propagation of #fakenews on Twitter. Online Information Review, 43(1), 53–71.

Amazeen, M. A., & Bucy, E. P. (2019). Conferring resistance to digital disinformation: The inoculating influence of procedural news knowledge. Journal of Broadcasting & Electronic Media, 63(3), 415–432.

Amazeen, M. A., Vargo, C. J., & Hopp, T. (2019). Reinforcing attitudes in a gatewatching news era: Individual-level antecedents to sharing fact-checks on social media. Communication Monographs, 86(1), 112–132.

Anthony, A., & Moulding, R. (2019). Breaking the news: Belief in fake news and conspiracist beliefs. Australian Journal of Psychology, 71(2), 154–162.

Barfar, A. (2019). Cognitive and affective responses to political disinformation in Facebook. Computers in Human Behavior, 101, 173–179.

Benkler, Y., Faris, R., & Roberts, H. (2018). Network propaganda: Manipulation, disinformation, and radicalization in American politics. New York: Oxford University Press.

Bessi, A., Petroni, F., Vicario, M. D., Zollo, F., Anagnostopoulos, A., Scala, A., . . . Quattrociocchi, W. (2016). Homophily and polarization in the age of misinformation. The European Physical Journal Special Topics, 225(10), 2047–2059.

Bovet, A., & Makse, H. A. (2019). Influence of fake news in Twitter during the 2016 US presidential election. Nature Communications, 10(1), 1–14.

Bradshaw, S., Howard, P. N., Kollanyi, B., & Neudert, L.‑M. (2020). Sourcing and automation of political news and information over social media in the United States, 2016-2018. Political Communication, 37(2), 173–193.

Brennen, A. J. S., Simon, F. M., Howard, P. N., & Nielsen, R. K. (2020). Types, sources, and claims of COVID-19 misinformation. Oxford University Press, April, 1–13.

Bruns, A. (2019). After the ‘APIcalypse’: social media platforms and their fight against critical scholarly research. Information, Communication & Society, 22(11), 1544–1566.

Chadwick, A., Vaccari, C., & O’Loughlin, B. (2018). Do tabloids poison the well of social media? Explaining democratically dysfunctional news sharing. New Media & Society, 20(11), 4255–4274.

Del Vicario, M., Bessi, A., Zollo, F., Petroni, F., Scala, A., Caldarelli, G., . . . Quattrociocchi, W. (2016). The spreading of misinformation online. Proceedings of the National Academy of Sciences of the United States of America, 113(3), 554–559.

Egelhofer, J. L., & Lecheler, S. (2019). Fake news as a two-dimensional phenomenon: A framework and research agenda. Annals of the International Communication Association, 43(2), 97–116.

European Commission (2018). Report on the implementation of the Communication ‘Tackling online disinformation: a European approach’. Retrieved from https://www.europeansources.info/record/report-on-the-implementation-of-the-communication-tackling-online-disinformation-a-european-approach/

Ferrara, E. (2020). What types of COVID-19 conspiracies are populated by Twitter bots? First Monday. Advance online publication.

Figenschou, T. U., & Ihlebæk, K. A. (2019). Media criticism from the far-right: Attacking from many angles. Journalism Practice, 13(8), 901–905.

Freelon, D. (2018). Computational research in the post-API age. Political Communication, 35(4), 665–668.

Freelon, D., & Wells, C. (2020). Disinformation as political communication. Political Communication, 37(2), 145–156.

Frischlich, L., Klapproth, J., & Brinkschulte, F. (2020). Between mainstream and alternative – Co-orientation in right-wing populist alternative news media. In C. Grimme, M. Preuss, F. W. Takes, & A. Waldherr (Eds.), Lecture Notes in Computer Science. Disinformation in open online media (Vol. 12021, pp. 150–167). Cham: Springer International Publishing.

Graham, T., Bruns, A., Zhu, G., & Campbell, R. (2020, May). Like a virus: The coordinated spread of coronavirus disinformation. Retrieved from https://d3n8a8pro7vhmx.cloudfront.net/theausinstitute/pages/3316/attachments/original/1590956846/P904_Like_a_virus_-_COVID19_disinformation__Web_.pdf?1590956846

Graham, T., & Keller, T. R. (2020, January 10). Bushfires, bots and arson claims: Australia flung in the global disinformation spotlight. The Conversation. Retrieved from https://theconversation.com/bushfires-bots-and-arson-claims-australia-flung-in-the-global-disinformation-spotlight-129556

Grinberg, N., Joseph, K., Friedland, L., Swire-Thompson, B., & Lazer, D. (2019). Fake news on Twitter during the 2016 U.S. Presidential election. Science (New York, N.Y.), 363(6425), 374–378.

Guess, A., Nagler, J., & Tucker, J. (2019). Less than you think: Prevalence and predictors of fake news dissemination on Facebook. Science Advances, 5(1).

Guess, A., Nyhan, B., & Reifler, J. (2018). Selective exposure to misinformation: Evidence from the consumption of fake news during the 2016 US presidential campaign. European Research Council, 9(3), 1–14.

Guess, A. M., Nyhan, B., & Reifler, J. (2020). Exposure to untrustworthy websites in the 2016 US election. Nature Human Behaviour, 4(5), 472–480.

Hindman, M., & Barash, V. (2018). Disinformation, 'fake news' and influence campaigns on Twitter. Retrieved from Knight Foundation website: https://www.issuelab.org/resources/33163/33163.pdf

Hjorth, F., & Adler-Nissen, R. (2019). Ideological asymmetry in the reach of pro-Russian digital disinformation to United States audiences. Journal of Communication, 69(2), 168–192.

Holt, K., Ustad Figenschou, T., & Frischlich, L. (2019). Key dimensions of alternative news media. Digital Journalism, 7(7), 860–869.

Humprecht, E. (2019). Where ‘fake news’ flourishes: a comparison across four Western democracies. Information, Communication & Society, 22(13), 1973–1988.

Humprecht, E., Esser, F., & van Aelst, P. (2020). Resilience to online disinformation: A framework for cross-national comparative research. The International Journal of Press/Politics. Advance online publication. doi: https://doi.org/10.1177/1940161219900126

Introne, J., Gokce Yildirim, I., Iandoli, L., DeCook, J., & Elzeini, S. (2018). How people weave online information into pseudoknowledge. Social Media + Society, 4(3).

Jang, S. M., Geng, T., Queenie Li, J.‑Y., Xia, R., Huang, C.‑T., Kim, H., & Tang, J. (2018). A computational approach for examining the roots and spreading patterns of fake news: Evolution tree analysis. Computers in Human Behavior, 84, 103–113.

Jang, S. M., & Kim, J. K. (2018). Third person effects of fake news: Fake news regulation and media literacy interventions. Computers in Human Behavior, 80, 295–302.

Keller, F. B., Schoch, D., Stier, S., & Yang, J. (2020). Political astroturfing on Twitter: How to coordinate a disinformation campaign. Political Communication, 37(2), 256–280.

Krafft, P. M., & Donovan, J. (2020). Disinformation by design: The use of evidence collages and platform filtering in a media manipulation campaign. Political Communication, 37(2), 194–214.

Lazer, D. M. J., Baum, M. A., Benkler, Y., Berinsky, A. J., Greenhill, K. M., Menczer, F., . . . Zittrain, J. L. (2018). The science of fake news. Science (New York, N.Y.), 359(6380), 1094–1096.

Li, J. (2020). Toward a research agenda on political misinformation and corrective information. Political Communication, 37(1), 125–135.

Lukito, J. (2020). Coordinating a multi-platform disinformation campaign: Internet research agency activity on three U.S. social media platforms, 2015 to 2017. Political Communication, 37(2), 238–255.

Marchal, N., Kollanyi, B., Neudert, L.‑M., Au, H., & Howard, P. N. (2020). Junk news & information sharing during the 2019 UK general election. Retrieved from http://arxiv.org/pdf/2002.12069v1

Metaxas, P., & Finn, S. (2019). Investigating the unfamous #Pizzagate conspiracy theory. Technology Science. Retrieved from https://techscience.org/a/2019121802

Miller, M. L., & Vaccari, C. (2020). Digital threats to democracy: Comparative lessons and possible remedies. The International Journal of Press/Politics. Advance online publication. doi: https://doi.org/10.1177/1940161220922323

Mourão, R. R., & Robertson, C. T. (2019). Fake news as discursive integration: An analysis of sites that publish false, misleading, hyperpartisan and sensational information. Journalism Studies, 20(14), 2077–2095.

Nelson, J. L., & Taneja, H. (2018). The small, disloyal fake news audience: The role of audience availability in fake news consumption. New Media & Society, 20(10), 3720–3737.

Neudert, L.‑M., Howard, P., & Kollanyi, B. (2019). Sourcing and automation of political news and information during three European elections. Social Media + Society, 5(3).

Newman, N., Fletcher, R., Kalogeropoulos, A., Levy, D., & Nielsen, R. K. (2018). Reuters Institute Digital News Report 2018. Retrieved from https://ssrn.com/abstract=3245355

Pennebaker, J., Francis, M., & Booth, R. (2007). Linguistic inquiry and word count: A computer-based text analysis program [Computer software]. Austin, TX: LIWC.net.

Pennycook, G., Cannon, T. D., & Rand, D. G. (2018). Prior exposure increases perceived accuracy of fake news. Journal of Experimental Psychology. General, 147(12), 1865–1880.

Petersen, M., Osmundsen, M., & Arceneaux, K. (2018). A ‘need for chaos’ and the sharing of hostile political rumors in advanced democracies. PsyArXiv Preprint.

Post, S. (2019). Polarizing communication as media effects on antagonists. Understanding communication in conflicts in digital media societies. Communication Theory, 29(2), 213–235.

Puschmann, C. (2019). An end to the wild west of social media research: a response to Axel Bruns. Information, Communication & Society, 22(11), 1582–1589.

Quandt, T., Frischlich, L., Boberg, S., & Schatto‐Eckrodt, T. (2019). Fake news. The International Encyclopedia of Journalism Studies, 2019, 1–6.

Rojecki, A., & Meraz, S. (2016). Rumors and factitious informational blends: The role of the web in speculative politics. New Media & Society, 18(1), 25–43.

Roozenbeek, J., & van der Linden, S. (2019). The fake news game: actively inoculating against the risk of misinformation. Journal of Risk Research, 22(5), 570–580.

Ross, A. S., & Rivers, D. J. (2018). Discursive deflection: Accusation of “fake news” and the spread of mis- and disinformation in the Tweets of president Trump. Social Media + Society, 4(2).

Rossini, P., Stromer-Galley, J., Baptista, E. A., & Veiga de Oliveira, V. (2020). Dysfunctional information sharing on WhatsApp and Facebook: The role of political talk, cross-cutting exposure and social corrections. New Media & Society. Advance online publication. doi: https://doi.org/10.1177/1461444820928059

Schmidt, A. L., Zollo, F., Scala, A., Betsch, C., & Quattrociocchi, W. (2018). Polarization of the vaccination debate on Facebook. Vaccine, 36(25), 3606–3612.

Shao, C., et al. (2018). The spread of low-credibility content by social bots. Nature Communications, 9(1), 4787.

Shin, J., Jian, L., Driscoll, K., & Bar, F. (2017). Political rumoring on Twitter during the 2012 US presidential election: Rumor diffusion and correction. New Media & Society, 19(8), 1214–1235.

Shin, J., Jian, L., Driscoll, K., & Bar, F. (2018). The diffusion of misinformation on social media: Temporal pattern, message, and source. Computers in Human Behavior, 83, 278–287.

Tandoc, E. C., Lim, Z. W., & Ling, R. (2018). Defining “fake news”. Digital Journalism, 6(2), 137–153.

Tsfati, Y., Boomgaarden, H. G., Strömbäck, J., Vliegenthart, R., Damstra, A., & Lindgren, E. (2020). Causes and consequences of mainstream media dissemination of fake news: Literature review and synthesis. Annals of the International Communication Association, 44(2), 157–173.

Tucker, J., Guess, A., Barbera, P., Vaccari, C., Siegel, A., Sanovich, S., . . . Nyhan, B. (2018). Social media, political polarization, and political disinformation: A review of the scientific literature. SSRN Electronic Journal. Advance online publication. doi: https://doi.org/10.2139/ssrn.3144139

Vargo, C. J., Guo, L., & Amazeen, M. A. (2018). The agenda-setting power of fake news: A big data analysis of the online media landscape from 2014 to 2016. New Media & Society, 20(5), 2028–2049.

Vosoughi, S., Roy, D., & Aral, S. (2018). The spread of true and false news online. Science (New York, N.Y.), 359(6380), 1146–1151.

Vraga, E. K., & Tully, M. (2019). News literacy, social media behaviors, and skepticism toward information on social media. Information, Communication & Society, 1–17.

Walter, D., Ophir, Y., & Jamieson, K. H. (2020). Russian Twitter accounts and the partisan polarization of vaccine discourse, 2015-2017. American Journal of Public Health, 110(5), 718–724.

Wang, Y., McKee, M., Torbica, A., & Stuckler, D. (2019). Systematic literature review on the spread of health-related misinformation on social media. Social Science & Medicine, 240(September), 112552.

Wardle, C., & Derakhshan, H. (2017). Information disorder: Toward an interdisciplinary framework for research and policy making. Council of Europe Report, 27.

Zhang, X., & Ghorbani, A. A. (2020). An overview of online fake news: Characterization, detection, and discussion. Information Processing & Management, 57(2).

Zimmermann, F., & Kohring, M. (2020). Mistrust, disinforming news, and vote choice: A panel survey on the origins and consequences of believing disinformation in the 2017 German parliamentary election. Political Communication, 37(2), 215–237.

Rights and permissions

Open Access This chapter is published under the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/deed.de), which permits use, sharing, adaptation, distribution, and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate whether changes were made.

The images and other third-party material in this chapter are also covered by the Creative Commons license mentioned above, unless the figure caption indicates otherwise. If the material in question is not covered by that Creative Commons license and the intended use is not permitted by statutory regulation, permission for the uses listed above must be obtained from the respective rights holder.

Copyright information

© 2023 The Author(s)

About this chapter

Cite this chapter

Staender, A., Humprecht, E. (2023). Content Analysis in the Research Field of Disinformation. In: Oehmer-Pedrazzi, F., Kessler, S.H., Humprecht, E., Sommer, K., Castro, L. (eds) Standardisierte Inhaltsanalyse in der Kommunikationswissenschaft – Standardized Content Analysis in Communication Research. Springer VS, Wiesbaden. https://doi.org/10.1007/978-3-658-36179-2_29

DOI: https://doi.org/10.1007/978-3-658-36179-2_29

Publisher Name: Springer VS, Wiesbaden

Print ISBN: 978-3-658-36178-5

Online ISBN: 978-3-658-36179-2

eBook Packages: Social Science and Law (German Language)