Abstract

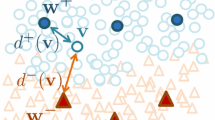

Prototype-based classification schemes offer intuitive and flexible classifiers whose results are easy to interpret and whose model complexity can be scaled. Recent prototype-based models such as robust soft learning vector quantization (RSLVQ) additionally rest on a solid mathematical foundation: the learning rule and decision boundaries are derived from a probabilistic model and the corresponding likelihood optimization. In their original form, these models can be used for standard Euclidean vectors only. In this contribution, we extend RSLVQ to a kernelized version that can be used with any positive semidefinite data matrix. We evaluate the resulting technique, kernel RSLVQ, on a variety of benchmarks, where it obtains results competitive with or even superior to state-of-the-art support vector machines.
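The abstract does not spell out the kernelization, so the following is only a minimal sketch of one common way to make a prototype method operate on a Gram matrix, not necessarily the authors' exact formulation. It assumes each prototype is a linear combination of the training points in feature space, with hypothetical coefficients `gamma`, and it assumes equal prototype priors and a single shared bandwidth `sigma2` for the RSLVQ-style soft assignments. The function names `kernel_distances` and `class_posteriors` are illustrative.

```python
import numpy as np

def kernel_distances(K, gamma):
    """Squared feature-space distances between data points and prototypes.

    K     : (n, n) positive semidefinite Gram matrix, K[i, m] = k(x_i, x_m).
    gamma : (p, n) coefficients (assumption); prototype j is
            w_j = sum_m gamma[j, m] * phi(x_m).
    Returns an (n, p) array D with D[i, j] = ||phi(x_i) - w_j||^2.
    """
    self_sim = np.diag(K)[:, None]                 # k(x_i, x_i)
    cross = K @ gamma.T                            # sum_m gamma[j, m] k(x_i, x_m)
    proto_norm = np.einsum("jl,lm,jm->j", gamma, K, gamma)[None, :]
    return self_sim - 2.0 * cross + proto_norm

def class_posteriors(D, proto_labels, n_classes, sigma2=1.0):
    """Class posteriors from a Gaussian mixture over prototypes.

    Assumes equal priors and one shared bandwidth sigma2 for all prototypes.
    """
    logits = -D / (2.0 * sigma2)
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    resp = np.exp(logits)
    resp /= resp.sum(axis=1, keepdims=True)        # prototype responsibilities
    onehot = np.eye(n_classes)[proto_labels]       # (p, n_classes)
    return resp @ onehot                           # sum responsibilities per class

# Usage with a linear kernel, where the result can be checked against
# ordinary Euclidean distances to explicitly computed prototypes.
X = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 2.0], [3.0, 3.0]])
K = X @ X.T
gamma = np.array([[0.5, 0.5, 0.0, 0.0],
                  [0.0, 0.0, 0.5, 0.5]])
D = kernel_distances(K, gamma)
P = class_posteriors(D, np.array([0, 1]), n_classes=2)
```

Because only entries of `K` are used, the same code applies to any positive semidefinite similarity matrix, including ones for which no explicit vector representation is available.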


Keywords

- Support Vector Machine

- Learning Rule

- Vector Quantization

- Learn Vector Quantization

- Learn Vector Quantization Network

These keywords were added by machine and not by the authors. This process is experimental and the keywords may be updated as the learning algorithm improves.

Copyright information

© 2012 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Hofmann, D., Hammer, B. (2012). Kernel Robust Soft Learning Vector Quantization. In: Mana, N., Schwenker, F., Trentin, E. (eds) Artificial Neural Networks in Pattern Recognition. ANNPR 2012. Lecture Notes in Computer Science, vol 7477. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-33212-8_2

DOI: https://doi.org/10.1007/978-3-642-33212-8_2

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-33211-1

Online ISBN: 978-3-642-33212-8

eBook Packages: Computer Science (R0)