Abstract

There are two camps in the theory of meaning: the referentialist one including Davidson, and the inferentialist one including Dummett and Brandom. Proof-theoretic semantics is a semantic enterprise to articulate an inferentialist account of the meaning of logical constants and inferences within the proof-theoretic tradition of Gentzen, Prawitz, and Martin-Löf, replacing Davidson’s path “from truth to meaning” by another path “from proof to meaning”. The present paper aims at contributing to developments of categorical proof-theoretic semantics, proposing the principle of categorical harmony, and thereby shedding structural light on Prior’s “tonk” and related paradoxical logical constants. Categorical harmony builds upon Lawvere’s conception of logical constants as adjoint functors, which amount to double-line rules of certain form in inferential terms. Conceptually, categorical harmony supports the iterative conception of logic. According to categorical harmony, there are intensional degrees of paradoxicality of logical constants; in the light of the intensional distinction, Russell-type paradoxical constants are maximally paradoxical, and tonk is less paradoxical. The categorical diagnosis of the tonk problem is that tonk mixes up the binary truth and falsity constants, equating truth with falsity; hence Prior’s tonk paradox is caused by equivocation, whereas Russell’s paradox is not. This tells us Prior’s tonk-type paradoxes can be resolved via disambiguation while Russell-type paradoxes cannot. Categorical harmony thus allows us to demarcate a border between tonk-type pseudo-paradoxes and Russell-type genuine paradoxes. I finally argue that categorical semantics based on the methods of categorical logic might even pave the way for reconciling and uniting the two camps.

You have full access to this open access chapter, Download chapter PDF

Similar content being viewed by others

Keywords

- Categorical proof-theoretic semantics

- Categorical harmony

- Iterative conception of logic

- Prior’s tonk

- Degrees of paradoxicality of logical constants

1 Introduction

Broadly speaking, there are two conceptions of meaning: the referentialist one based on truth conditions as advocated by Davidson [7], and the inferentialist one based on verification or use conditions as advocated by Dummett [10] or more recent Brandom [5]. Along the latter strand of the theory of meaning, proof-theoretic semantics undertakes the enterprise of accounting for the meaning of logical constants and inferences in terms of proof rather than truth, thus replacing Davidson’s path “from truth to meaning” by another Dummettian path “from proof to meaning”; the term “proof-theoretic semantics” was coined by Schroeder-Heister (for a gentle introduction, see Schroeder-Heister [21]; he also coined the term “substructural logic” with Došen). It builds upon the proof-theoretic tradition of Gentzen, Prawitz, and Martin-Löf, tightly intertwined with developments of Brouwer’s intuitionism and varieties of constructive mathematics, especially the Brouwer-Heyting-Kolmogorov interpretation, and its younger relative, the Curry-Howard correspondence between logic and type theory, or rather the Curry-Howard-Lambek correspondence between logic, type theory, and category theory (see, e.g., Lambek and Scott [12]). Note however that Brouwer himself objected to the very idea of formal logic, claiming the priority of mathematics to logic (cf. Hilbert’s Kantian argument in [11] concluding: “Mathematics, therefore, can never be grounded solely on logic”). “Harmony” in Dummett’s terms and the justification of logical laws have been central issues in proof-theoretic semantics (see, e.g., Dummett [10] and Martin-Löf [14]).

Combining proof-theoretic semantics with category theory (see, e.g., Awodey [2]), the present paper aims at laying down a foundation for categorical proof-theoretic semantics, proposing the principle of categorical harmony, and thereby shedding structural light on Prior’s “tonk” and related paradoxical logical constants. Prior’s invention of a weird logical connective “tonk” in his seminal paper [18] compelled philosophical logicians to articulate the concept of logical constants, followed by developments of the notion of harmony. In a way harmony prescribes the condition of possibility for logical connectives or their defining rules to be meaning-conferring, and as such, it works as a conceptual criterion to demarcate pseudo-logical constants from genuine logical constants. Let us recall the definition of Prior’s tonk. Tonk can be defined, for example, by the following rules of inference as in the system of natural deduction:

In any standard logical system (other than peculiar systems as in Cook [6]), adding tonk makes (the deductive relation of) the system trivial, and thus we presumably ought not to accept tonk as a genuine logical constant. In order to address the tonk problem, different principles of harmony have been proposed and discussed by Belnap [3], Prawitz [17], Dummett [10], and many others. From such a point of view, harmony is endorsed to ban tonk-like pathological connectives on the ground that their defining rules violate the principle of harmony, and are not meaning-conferring as a consequence of the violation of harmony. They cannot be justified after all.

In the present paper, we revisit the tonk problem, a sort of demarcation problem in philosophy of logic, from a novel perspective based on category theory. In his seminal paper [13], Lawvere presented a category-theoretical account of logical constants in terms of adjoint functors, eventually giving rise to the entirely new discipline of categorical logic (see, e.g., Lambek and Scott [12]) including categorical proof theory. Note that “categorical logic” is sometimes called “categorial logic” in philosophy to avoid confusion with the philosopher’s sense of “categorical” (like “categorical judgements”); especially, Došen, a leading researcher in the field, uses “categorial logic”, even though “categorical logic” is widely used in the category theory community. Categorical logic is relevant to proof-theoretic semantics in various respects, as explicated in this paper as well. Among other things, a fundamental feature of the link between categorical logic and proof theory is that categorical adjunctions amount to bi-directional inferential rules (aka double-line rules) of certain specific form in terms of proof theory; by this link, categorical terms can be translated into proof-theoretic ones. Building upon Lawvere’s understanding of logical constants, in this paper, I formulate the adjointness-based principle of categorical harmony, and compare it with other notions of harmony and related principles, including Belnap’s harmony [3], Došen’s idea of logical constants as punctuation marks [8, 9], and the reflection principle and definitional equations by Sambin et al. [19]. And the final aim of the present paper is to shed new light on the tonk problem from the perspective of categorical harmony.

In comparison with other concepts of harmony, there is a sharp contrast between categorical harmony and Belnap’s harmony in terms of conservativity; at the same time, however, uniqueness is a compelling consequence of categorical harmony, and so both endorse uniqueness, yet for different reasons. The principle of categorical harmony looks quite similar to Došen’s theory of logical constants as punctuation marks, and also to the theory of the reflection principle and definitional equations by Sambin et al. It nevertheless turns out that there are striking differences: some logical constants are definable in Sambin’s or Došen’s framework, but not definable according to categorical harmony, as we shall see below. It is not obvious at all whether this is an advantage of categorical harmony or not. It depends upon whether those logical constants ought to count as genuine logical constants, and thus upon our very conception of logical constants; especially, what is at stake is the logical status of substructural connectives (aka multiplicative connectives; in categorical terms, monoidal connectives).

Finally, several remarks would better be made in order to alleviate common misunderstandings on categorical semantics. The Curry-Howard correspondence is often featured with the functional programmer’s dictum “propositions-as-types, proofs-as-programs”. Likewise, the Curry-Howard-Lambek correspondence may be characterised by the categorical logician’s dictum “propositions-as-objects, proofs-as-morphisms.” One has to be careful of categorical semantics, though. For the Curry-Howard-Lambek correspondence does not hold in some well-known categorical semantics. The correspondence surely holds in the cartesian (bi)closed category semantics for propositional intuitionistic logic, yet at the same time, it is not true at all in the topos semantics for higher-order intuitionistic logic (or intuitionistic ZF set theory), and it does not even hold in the logos (aka Heyting category) semantics for first-order intuitionistic logic. For the very facts, the latter two semantics are called proof-irrelevant: they only allow for completeness with respect to the identity of propositions, and does not yield what is called full completeness, i.e., completeness with regard to the identity of proofs. Some category theorists who do not care about the proof-relevance of semantics tend to say that the topos semantics is a generalisation of the cartesian (bi)closed category semantics; however, the claim is only justified in view of the identity of propositions, and it is indeed wrong in light of the identity of proofs, which is not accounted for in the topos semantics at all. Note that locally cartesian closed categories yield proof-relevant semantics for Martin-Löf’s dependent type theory, and toposes are locally cartesian closed. If we see toposes as semantics for dependent type theory, then the topos semantics is proof-relevant; yet, this is an unusual way to interpret the term “topos semantics”. The Curry-Howard-Lambek correspondence is now available for a broad variety of logical systems including substructural logics as well as intuitionistic logic, yet with the possible exception of classical logic. Although quite some efforts have been made towards semantics of proofs for classical logic, there is so far no received view on it, and there are impossibility theorems on semantics of classical proofs, including the categorical Joyal lemma. For classical linear logic, nonetheless, we have fully complete semantics in terms of so-called \(*\)-autotonomous categories.

The rest of the paper is organised as follows. In Sect. 2, we review Lawvere’s categorical account of logical constants, and then formulate the principle of categorical harmony. There are several subtleties on how to formulate it, and naïve formulations cannot properly ban tonk-type or Russell-type logical constants. Comparison with Došen’s theory is given as well. In Sect. 3, we further compare the principle of categorical harmony with Belnap’s harmony conditions and Sambin’s reflection principle and definitional equations. The logical status of multiplicative (monoidal) connectives is discussed as well, and three possible accounts (i.e., epistemic, informational, and physical accounts) are given. In Sect. 4, we look at tonk and other paradoxical logical constants on the basis of categorical harmony, thus exposing different degrees of paradoxicality among them. Especially, what is wrong with tonk turns out to be equivocation. The paper is then concluded with prospects on the broader significance of categorical logic in view of the theory of meaning. Little substantial knowledge on category theory is assumed throughout the paper.

2 The Principle of Categorical Harmony

In this section, we first see how logical constants can be regarded as adjoint functors, and finally lead to the principle of categorical harmony. Although I do not explain the concept of categories from the scratch, from a logical point of view, you may conceive of a category as a sort of proof-theoretic consequence relation: suppose we have the following concepts given.

-

The concept of formulae \(\varphi \).

-

The concept of hypothetical proofs (or deductions) from formulae \(\varphi \) to \(\psi \). For any formula \(\varphi \) there must be an identity proof \(id_{\varphi }\) from \(\varphi \) to \(\varphi \).

-

The concept of proof-decorated relation \(\vdash _{p}\):

$$\begin{aligned} \varphi \vdash _{p} \psi \end{aligned}$$(2)where p is a proof from \(\varphi \) to \(\psi \).

-

The concept of sequential composition \(\circ \) of proofs: composing \(\varphi \vdash _p \psi \) and \(\psi \vdash _q \xi \), we obtain

$$\begin{aligned} \varphi \vdash _{q\circ p} \xi . \end{aligned}$$(3)If we think of a monodical category for substructural logic, we additionally have parallel composition \(\otimes \):

$$\begin{aligned} \varphi \otimes \varphi ' \vdash _{p\otimes p'} \psi \otimes \psi ' \end{aligned}$$(4)where \(\varphi \vdash _p \psi \) and \(\varphi ' \vdash _{p'} \psi '\).

-

The concept of reduction of proofs such that the identity proofs may be canceled out (i.e., \(id_{\varphi }\circ p\) equals p modulo reducibility; \(q\circ id_{\varphi }\) equals q modulo reducibility), and proofs may locally be reduced in any order (i.e., \((p\circ q) \circ r\) equals \(p\circ (q \circ r)\) modulo reducibility). Moreover, reduction must respect composition (i.e., if p equals q and r equals s modulo reducibility, \(p\circ q\) must equal \(r\circ s\) modulo reducibility).

The concept of a proof-theoretic consequence relation (resp. with parallel composition) thus defined is basically the same as the concept of a category (resp. monoidal category): a formula corresponds to an object in a category, and a proof to a morphism in it. To be precise, a proof-theoretic consequence relation is a way of presenting a category rather than category per se. In most parts of the article, however, full-fledged category theory shall not be used, for simplicity and readability, and it suffices for the reader to know some basic logic and order theory, apart from occasional exceptions.

From the perspective of Schroeder-Heister [23], categorical logic (or categorial logic to avoid confusion) places primary emphasis on hypothetical judgements, which are concerned with the question “what follows from what?”, rather than categorical judgements in the philosopher’s sense, which are concerned with the question “what holds on their own?”. In the traditional accounts of both model-theoretic and proof-theoretic semantics, the categorical is prior to the hypothetical, and the hypothetical is reduced to the categorical via the so-called transmission view of logical consequence. These are called dogmas of standard semantics, whether model-theoretic or proof-theoretic, in Schroeder-Heister [23]. His theory takes the hypothetical to precede the categorical, and it is in good harmony with the idea of categorical logic, in which the hypothetical, i.e., the concept of morphisms, is conceptually prior to the categorical, i.e., the concept of morphisms with their domains being a terminal object (or monoidal unit in the case of substructural logic).

Some authors have discussed how logical constants can be derived from logical consequence relations (see, e.g., Westerståhl [25]). To that end, category theory allows us to derive logical constants from abstract proof-theoretic consequence relations (i.e., categories) through the concept of adjunctions, which even give us inferential rules for the derived logical constants, and hence a proof system as a whole (in the case of intuitionistic logic, for example, the proof system thus obtained is indeed equivalent to standard ones, such as NJ and LJ). Given a category (abstract proof-theoretic consequence relation), we can always mine logical constants (if any) in the category via the generic criteria of adjunctions. In such a way, category-theoretical logic elucidates a generic link between logical constants and logical consequence, without focusing on a particular system of logic.

In the following, let us review the concept of adjoint functors in the simple case of preorders, which is basically enough for us, apart from occasional exceptions. A preorder

consists of a set P with a reflexive and transitive relation \(\vdash _L\) on L. Especially, the deductive relations of most logical systems form preorders; note that reflexivity and transitivity amount to identity and cut in logical terms. It is well known that a preorder can be seen as a category in which the number of morphisms between fixed two objects in it are at most one. Then, a functor \(F:L\rightarrow L'\) between preorders L and \(L'\) is just a monotone map: i.e., \(\varphi \vdash _{L} \psi \) implies \(F(\varphi ) \vdash _{L'} F(\psi )\). Now, a functor

is called left adjoint to

(or G is right adjoint to F) if and only if

for any \(\varphi \in L\) and \(\psi \in L'\). This situation of adjunction is denoted by \(F\dashv G\). Note that a left or right adjoint of a given functor does not necessarily exist.

In this formulation, it would already be evident that an adjunction \(F\dashv G\) is equivalent to a sort of bi-directional inferential rule:

where the double line means that we can infer the above “sequent” from the one below, and vice versa.

Let us look at several examples to illustrate how logical constants are characterised by adjunctions, and to articulate the inferential nature of them. Suppose that L is intuitionistic logic. We define the diagonal functor \(\varDelta :L\rightarrow L\times L\) by

where \(\times \) denotes binary product on categories (in this case just preorders) or in the category of (small) categories. Then, the right adjoint of \(\varDelta \) is conjunction \(\wedge :L\times L\rightarrow L\):

The left adjoint of \(\varDelta \) is disjunction \(\vee :L\times L\rightarrow L\):

The associated bi-directional rule for \(\wedge \) turns out to be the following:

which, by the definition of product on categories, boils down to the following familiar rule:

This is the inferential rule for conjunction \(\wedge \) that is packed in the adjunction \(\varDelta \dashv \wedge \). We omit the case of \(\vee \). Now, implication \(\varphi \rightarrow (\text{- }) :L\rightarrow L\) for each \(\varphi \) is the right adjoint of \(\varphi \wedge (\text{- })\), where the expressions “\(\varphi \rightarrow (\text{- }) \)” and “\(\varphi \wedge (\text{- })\)” mean that \(\varphi \) is fixed, and \((\text{- })\) is an argument:

The derived inferential rule is the following:

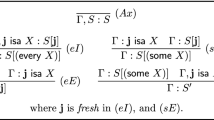

Note that we may replace \(\wedge \) by comma in the format of sequent calculus. Quantifiers can be treated in a similar (but more heavily categorical) way using indexed or fibrational structures \((L_C)_{C\in \mathbf{C}}\) (intuitively, each object C in a category \(\mathbf{C}\) is a collection of variables or a so-called context) rather than single L as in the above discussion (see, e.g., Pitts [16] for the case of intuitionistic logic; for a general variety of substructural logics over full Lambek calculus, categorical treatment of quantifiers is presented in Maruyama [15]). The corresponding double-line rules are the following:

with the obvious eigenvariable conditions (which naturally emerge from a categorical setting). Categorical logicians say that \(\forall \) is a right adjoint, and \(\exists \) is a left adjoint of substitution (or pullback in categorical terms). Finally, truth constants \(\bot \) and \(\top \) are left and right adjoints of the unique operation from L to the one-element set \(\{*\}\), with the following double-line rules:

which come down to:

All the double-line rules above yield a sound and complete axiomatisation of intuitionistic logic; equivalence with other standard systems can easily be verified.

Building upon these observations, we can articulate the categorical inferentialistic process of introducing a logical constant in a meaning-conferring manner:

-

At the beginning, there are universally definable operations, i.e., those operations that are definable in the general language of category theory.

-

We may replace “the general language of category theory” by “the general language of monoidal category theory” if we want to account for substructural logics as well as logics with unrestricted structural rules.

-

For example, diagonal \(\varDelta \) above is a universally definable operation. As observed in the above case of the double-line rule for \(\wedge \), the existence of \(\varDelta \) amounts to our meta-theoretical capacity to handle multiple sequents at once (in particular, ability to put two sequents in parallel in the case of \(\wedge \)).

-

-

Logical constants are introduced step by step, by requiring the existence of right or left adjoints of existing operations, i.e., universally definable operations or already introduced logical constants.

-

In other words, we define logical constants by bi-directional inferential rules corresponding to adjunctions concerned. Thus, this may be conceived of as a special sort of inferentialistic process to confer meaning on connectives. The condition of adjointness bans non-meaning-conferring rules like tonk’s (discussed later).

-

For example, conjunction and disjunction above can be introduced as adjoints of a universally definable operation (i.e., diagonal); after that, implication can be introduced as an adjoint of an existing logical constant (i.e., conjunction).

-

-

Genuine logical constants are those introduced according to the above principle, namely the principle of categorical harmony. Others are pseudo-logical constants.

According to this view, logical constants in a logical system must be constructed step by step, from old simple to new complex ones, based upon different adjunctions. This may be called the iterative conception of logic. The rôle of the powerset operation (or making a set of already existing sets) in the iterative conception of sets is analogous to the rôle of the operation of taking adjoint functors.

A remark on monoidal categories for substructural logics is that the language of monoidal categories is more general than the language of (plain) categories in the sense that monoidal products \(\otimes \) encompass cartesian products \(\times \). Formally, we have the fact that a monoidal product \(\otimes \) is a cartesian product if and only if there are both diagonals \(\delta :C\rightarrow C\otimes C\) and projections \(p_1:C\otimes D\rightarrow C\) and \(p_2:C\otimes D\rightarrow D\) where \(\otimes \) is assumed to be symmetric. The logical counterpart of this fact is that multiplicative conjunction \(\otimes \) is additive conjunction if and only if both contraction and weakening hold where exchange is assumed. Since contraction may be formulated as \(\varphi \vdash \varphi \otimes \varphi \), and weakening as \(\varphi \otimes \psi \vdash \varphi \) and \(\varphi \otimes \psi \vdash \psi \), it is evident that diagonals correspond to contraction, and projections to weakening (this correspondence can be given a precise meaning in terms of categorical semantics).

There are different conceptions of harmony in proof-theoretic semantics, discussed by different authors. In the present article, adjointness is conceived of as a sort of proof-theoretic harmony, and it is somehow akin to Prawitz’s inversion principle in that both put emphasis on (different sorts of) “invertibility” of rules; recall that an adjunction amounts to the validity of a “bi-directional” rule of certain form. Categorically speaking, adjointness exhibits harmony between two functors; logically speaking, adjointness tells us harmony between the upward and the downward rules of the induced bi-directional rule. The precise procedure of introducing logical constants according to categorical harmony has already been given above. Let us summarise the main point of categorical harmony as follows.

-

A logical constant must be introduced by (the double-line rule of) an adjunction with respect to an existing operation.

As we observed above, standard logical constants can be characterised by adjunctions or adjunction-induced double-line rules. The idea of capturing logicality by double-line rules was pursued by Došen [8, 9]. It seems, however, that his focus was not on harmony, but rather on logicality only (as pointed out by Schroeder-Heister [21]), and moreover he did not really use adjointness as a criterion to ban pathological, non-meaning-conferring rules. Indeed, Bonnay and Simmenauer [4] show that Došen’s theory of logicality cannot ban a weird connective “blonk”; nonetheless, the adjointness harmony of the present paper is immune to blonk, since it is not definable by an adjunction, even though it is defined by a double-line rule. The approach of this paper takes adjointness as the primary constituent of harmony, analysing issues in proof-theoretic semantics from that particular perspective. Although the double-line and adjointness approaches are quite similar at first sight, however, they are considerably different as a matter of fact, as seen in the case of Bonnay and Simmenauer’s blonk. There are actually several subtleties lurking behind the formulation above:

-

It turns out that definability via one adjunction is crucial, since tonk can be defined via two adjunctions.

-

A logical constant must be defined as an adjoint of an existing operation, since Russell-type paradoxical constants can be defined as adjoints of themselves.

These points shall be addressed later in detail. Before getting into those issues, in the next section, we briefly compare and contrast categorical harmony with other principles.

3 Categorical Harmony in Comparison with Other Principles

Here we have a look at relationships with Belnap’s harmony and the so-called reflection principle and definitional equations by Sambin and his collaborators.

The categorical approach to harmony poses several questions to Belnap’s notion of harmony. As we saw above, implication \(\rightarrow \) in intuitionistic logic is right adjoint to conjunction \(\wedge \). Suppose that we have a logical system L with logical constants \(\wedge \) and \(\vee \) only, which are specified as the right and left adjoints of diagonal \(\varDelta \) as in the above. And suppose we want to add implication \(\rightarrow \) to L. Of course, this can naturally be done by requiring the right adjoint of \(\wedge \). Now, Freyd’s adjoint functor theorem tells us that any right adjoint functor preserves limits (e.g., products), and any left adjoint functor preserves colimits (e.g., coproducts). This is a striking characteristic of adjoint functors. In the present case, the theorem tells us that \(\wedge \) preserves \(\vee \); in other words, \(\wedge \) distributes over \(\vee \). Thus, defining implication according to categorical harmony is not conservative over the original system L, since the bi-directional rules for \(\wedge \) and \(\vee \) only never imply the distributive law. Note that sequent calculus for \(\wedge \) and \(\vee \) allows us to derive the distributive law without any use of implication; yet the bi-directional rules alone do not imply it.

Although proponents of Belnap’s harmony would regard this as a strange (and perhaps unacceptable) feature, nevertheless, this sort of non-conservativity is necessary and natural from a category-theoretical point of view. Furthermore, conservativity may be contested in some way or other. One way would be to advocate categorical harmony against Belnap’s on the ground of the Quinean holistic theory of meaning, which implies that the meaning of a single logical constant in a system, in principle, can only be determined by reference to the global relationships with all the other logical constants in the whole system. If the meaning of a logical constant depends on the whole system, then adding a new logical constant may well change the meaning of old ones. Non-conservativity on logical constants is arguably a consequence of a form of holism on meaning, even though it violates Belnap’s harmony condition. Anyway, we may at least say that the principle of categorical harmony, or Lawvere’s idea of logical constants as adjoints, is in sharp conflict with Belnap’s notion of harmony, in terms of the conservativity issue.

Another distinctive characteristic of adjoint functors is that any of a right adjoint and a left adjoint of a functor is uniquely determined (up to isomorphism). By this very fact, we are justified to define a concept via an adjunction. This actually implies that Belnap’s uniqueness condition automatically holds if we define a logical constant according to the principle of categorical harmony. Thus, uniqueness is not something postulated in the first place; rather, it is just a compelling consequence of categorical harmony. However, it is not very obvious whether this is really a good feature or not. As a matter of fact, for example, exponentials (!, ?) in linear logic do not enjoy the uniqueness property (as noted in Pitts [16]; it is essentially because there can be different (co)monad structures on a single category). At the same time, however, we could doubt that exponentials are genuine logical constants. Indeed, it is sometimes said that they were introduced by Girard himself not as proper logical constants but as a kind of device to analyse structural rules. The rôle of exponentials is to have control on resources in inference, and not to perform inference per se on their own. It would thus be a possible view that exponentials are a sort of “computational constants” discriminated from ordinary logical constants. This is an issue common to both categorical harmony and Belnap’s harmony.

There are even more subtleties on uniqueness in categorical harmony, which involve a tension between cartesian and monoidal structures in category theory. When formulating the categorical procedure to introduce logical constants in the last section, it was remarked that we may replace the language of (plain) category theory with that of monoidal category theory if we want to treat substructural logics as well. In such a case, we first have a monoidal product \(\otimes \) in our primitive language, and then require, for example, a right adjoint of \(\otimes \), which functions as multiplicative implication. Since any adjoint is unique, there appears to be no room for non-uniqueness. However, the starting point \(\otimes \) may not be unique if it cannot be characterised as an adjoint functor, and you can indeed find many such cases in practice. The point is that, in general, monoidal structures can only be given from “outside” categories, i.e., the same one category can have different monoidal structures on it. If we have both \(\otimes \) and the corresponding implication \(\rightarrow \), then \(\otimes \) is a left adjoint of \(\rightarrow \). However, if we do not have implication, then \(\otimes \) may not be characterised as an adjoint, and thus may not be unique. This is the only room for non-uniqueness in categorical harmony, since any other logical constant must be introduced as an adjoint in the first place.

From a proof-theoretic point of view, having a monoidal structure on a category amounts to having the comma “,” as a punctuation mark in the meta-language of sequent calculus. In sequent calculus, we are allowed to put sequents in parallel (otherwise we could not express quite some rules of inference), and at the same time, we are allowed to put formulae in parallel inside a sequent by means of commas. The former capacity corresponds to the categorical capacity to have cartesian products, and the latter corresponds to the capacity to have monoidal products. This seems relevant to the following question. Why can monoidal structures \(\otimes \) be allowed in category theory in spite of the fact that in general they cannot be defined via universal mapping properties? To put it in terms of categorical harmony, why can monoidal structures \(\otimes \) be allowed as primitive vocabularies to generate logical constants? (And why are others not allowed as primitive vocabularies?) This is a difficult question, and there would be different possible accounts of it. One answer is that there is no such reason, and \(\otimes \) ought not to be accepted as primitive vocabularies in the principle of categorical harmony. Yet I would like to seek some conceptual reasons for permitting \(\otimes \) as primitive vocabularies in the following.

For one thing, \(\otimes \) is presumably grounded upon a sort of our epistemic capacity to put symbols in parallel (inside and outside sequents) as discussed above. The epistemic capacity may be so fundamental that it plays fundamental rôles in symbolic reasoning as well as many other cognitive practices; this will lead to a sort of epistemic account of admissibility of \(\otimes \) in the principle of categorical harmony. Another “informational” account of it seems possible as well. There are three fundamental questions: What propositions hold? Why do they hold? How do they hold? The first one is about truth and falsity, the second one about proofs, and the last one about the mechanisms of proofs. An answer to the last question must presumably include an account of the way how resources or assumptions for inference are used in proofs, or how relevant inferential information is used in proofs. And \(\otimes \) may be seen as a means to address that particular part of the third question. This is the informational account, which has some affinities with the view of linear logic as the logic of resources.

Yet another “physical” account may be came up with. In recent developments of categorical quantum mechanics by Abramsky and Coecke (see Abramsky [1] and references therein), the capacities to put things in parallel as well as in sequence play vital rôles in their so-called graphical calculus for quantum mechanics and computation, where parallel composition represents the composition of quantum systems (resp. processes), i.e., the tensor product of Hilbert spaces (resp. morphisms), which is crucial in quantum phenomena involving entanglement, such as the Einstein-Podolsky-Rosen paradox and the violation of the Bell inequality. In general, \(\otimes \) lacks diagonals and projections, unlike cartesian \(\times \), and this corresponds to the No-Cloning and No-Deleting theorems in quantum computation stating that quantum information can neither be copied nor deleted (note that diagonals \(\varDelta :X\rightarrow X\otimes X\) copy information X, and projections \(p:X\otimes Y\rightarrow X\) delete information Y). On the other hand, classical information can be copied and deleted as you like. So, the monoidal feature of \(\otimes \) witnesses a crucial border between classical and quantum information. To account for such quantum features of the microscopic world, we do need \(\otimes \) in the logic of quantum mechanics, and this would justify to add \(\otimes \) to primitive vocabularies.

The physical account seems relevant to the well-known question “Is logic empirical?”, which was originally posed in the context of quantum logic, and has been discussed by Quine, Putnam, Dummett, and actually Kripke (see Stairs [24]). The need of multiplicative \(\otimes \) in the “true” logic of quantum mechanics is quite a recent issue which has not been addressed in the philosophy community yet, and this may have some consequences to both the traditional question “Is logic empirical?” and the present question “Why are substructural logical constants are so special?”, as partly argued above. A more detailed analysis of these issues will be given somewhere else.

Sambin et al. [19] present a novel method to introduce logical constants by what they call the reflection principle and definitional equalities, some of which are as follows:

-

\(\varphi \vee \psi \vdash \xi \) iff \(\varphi \vdash \xi \) and \(\psi \vdash \xi \).

-

\(\varphi , \psi \vdash \xi \) iff \(\varphi \otimes \psi \vdash \xi \).

-

\(\varGamma \vdash \varphi \rightarrow \psi \) iff \(\varGamma \vdash (\varphi \vdash \psi )\).

As these cases show, definitional equalities are quite similar to adjointness conditions in categorical harmony (when they are formulated as bi-directional rules), even though Sambin et al. do not mention category theory at all. Especially, in the case of additive connectives, their definitional equivalences are exactly the same as the bi-directional rules induced by the corresponding adjunctions. There are crucial differences, however. Among them, the following fact should be emphasised:

-

Definitional equalities do not always imply adjointness, partly due to what they call the “visibility” condition, which requires us to restrict context formulae in sequent-style rules of inference (categorically, this amounts to restricting so-called Frobenius reciprocity conditions).

-

For example, implication is not necessarily a right adjoint of conjunction in the system of “basic logic” derived via their guiding principles.

-

This deviation from adjointness actually seems to be inevitable for Sambin et al., because they want to include Birkhoff-von Neumann’s quantum logic with some concept of implication as a structural extension of their basic logic; however, quantum implication (if any) cannot be a right adjoint of conjunction, due to the non-distributive nature of it, which is essential in Birkhoff-von Neumann’s quantum logic to account for superposition states in quantum systems.

In contrast, categorical harmony cannot allow for any sort of non-adjoint implication. Is this a good feature or not? It depends on whether such implication counts as genuine implication, and so on our very conception of logical constants. The categorical logician’s answer would be no: for example, Abramsky [1] asserts that Birkhoff-von Neumann’s quantum logic is considered to be “non-logic” because it does not have any adequate concept of implication (on the other hand, categorical quantum logic is said to be “hyper-logic”).

Finally, it should be noted that Schroeder-Heister [21] compares the framework of Sambin et al. [19] with his framework of definitional reflection, and that Bonnay and Simmenauer [4] proposes to exploit the idea of Sambin et al. [19] in order to remedy the aforementioned defect (the “blonk” problem) of Došen’s double-line approach in [8, 9].

4 Degrees of Paradoxicality of Logical Constants

In this section, we first discuss whether tonk is an adjoint functor or not, or whether tonk counts as a genuine logical constant according to categorical harmony, and we finally lead to the concept of intensional degrees of paradoxicality.

Let L be a (non-trivial) logical system with a deductive relation \(\vdash _L\) admitting identity and cut. And suppose L contains truth constants \(\bot \) and \(\top \), which are specified by adjunction-induced rules \(\bot \vdash \varphi \) and \(\varphi \vdash \top \), respectively. The first straightforward observation is that, if L has tonk, then tonk has both left and right adjoints, and thus tonk is the left and right adjoint of two functors. Recall that the inferential rôle of tonk is given by:

which are equivalent to the following simpler rules in the presence of identity and cut:

We can see tonk as a functor from \(L\times L\) to L. Now, define a “truth diagonal” functor \(\varDelta _{\top }:L\rightarrow L\times L\) by

and also define a “falsity diagonal” functor \(\varDelta _{\bot }:L\rightarrow L\times L\) by

We can then prove that \(\varDelta _{\bot }\) is a left adjoint of tonk, and that \(\varDelta _{\top }\) is a right adjoint of tonk. In other words, tonk is a right adjoint of \(\varDelta _{\bot }\) and a left adjoint of \(\varDelta _{\top }\); therefore, tonk is an adjoint functor in two senses (if L is already endowed with tonk).

At the same time, however, this does not mean that the principle of categorical harmony cannot exclude tonk, a pathological connective we ought not to have in a logical system. Indeed, it is a problem in the other way around: in order to define tonk in a logical system, the principle of categorical harmony requires us to add it as a right or left adjoint of some functor, or equivalently, via an adjunction-induced bi-directional rule. Thus, when one attempts to define tonk in a logical system L according to categorical harmony, the task is the following:

-

1.

Specify a functor \(F:L\rightarrow L\times L\) that has a (right or left) adjoint.

-

2.

Prove that tonk is a (left or right) adjoint of F, or that the rules for tonk are derivable in the system L extended with the bi-directional rule that corresponds to the adjunction.

As a matter of fact, however, this turns out to be impossible.

Let us give a brief proof. Suppose for contradiction that it is possible. Then we have a functor \(F:L\rightarrow L\times L\), and its right or left adjoint is tonk. Assume that tonk is a left adjoint of F, which means that F is right adjoint to tonk. It then follows that F must be truth diagonal \(\varDelta _{\top }\) as defined above. The bi-directional rule that corresponds to the adjunction \({\text {tonk}}\dashv F\) is actually equivalent to the following (by the property of \(\varDelta _{\top }\)):

But this condition is not sufficient to make the rules for tonk derivable, thus the right adjoint of F cannot be tonk, and hence a contradiction. Next, assume that tonk is a right adjoint of F, i.e., F is a left adjoint of tonk. Then, F must be falsity diagonal \(\varDelta _{\bot }\), and the rule of the adjunction \(F\dashv {\text {tonk}}\) is equivalent to the following:

This is not enough to derive the rules for tonk, and hence a contradiction. This completes the proof.

It has thus been shown that:

-

Tonk cannot be defined as an adjoint functor (of some functor) in a logical system without tonk, even though tonk is an adjoint functor in a logical system that is already equipped with tonk.

-

This is a subtle phenomenon, and we have to be careful of what exactly the question “Is tonk an adjoint functor?” means. Due to this, naïvely formulating categorical harmony as “logical constants \(=\) adjoint functors” does not work.

-

-

Consequently, tonk cannot be introduced in any way according to the principle of categorical harmony.

We may then conclude that tonk is a pseudo-logical constant, and the rules for tonk are not meaning-conferring, not because it is non-conservative (i.e., Belnap’s harmony fails for tonk), but because it violates the principle of categorical harmony (which is able to allow for non-conservativity as discussed above). Still, it is immediate to see the following:

-

Tonk can actually be defined as being right adjoint to falsity diagonal \(\varDelta _{\bot }\), and left adjoint to truth diagonal \(\varDelta _{\top }\) at once. We may say that tonk is a “doubly adjoint” functor.

-

In categorical harmony, therefore, it is essential to allow for a single adjunction only rather than multiple adjunctions, which are harmful in certain cases.

We again emphasise that tonk cannot be defined in a system without tonk by a single adjunction (i.e., there is no functor F such that an adjoint of F is tonk); nevertheless tonk can be defined by two adjunctions: \(\varDelta _{\bot }\) \(\dashv \) tonk \(\dashv \) \(\varDelta _{\top }\), i.e., \(\varDelta _{\bot }\) is left adjoint to tonk, and tonk is left adjoint to \(\varDelta _{\top }\). Note that double adjointness itself is not necessarily paradoxical.

What is then the conceptual meaning of all this? After all, what is wrong with tonk? The right adjoint t of falsity diagonal \(\varDelta _{\bot }\) may be called the binary truth constant (the ordinary truth constant \(\top \) is nullary), because the double-line rule of this adjunction boils down to \(\varphi \vdash _L \psi _1 \,\text {t}\, \psi _2\), which means that \(\psi _1 \,\text {t}\, \psi _2\) is implied by any formula \(\varphi \) (for any \(\psi _1,\psi _2\)). Likewise, the left adjoint s of truth diagonal \(\varDelta _{\top }\) may be called the “binary falsity constant”, because the double-line rule of this adjunction boils down to \(\psi _1 \,\text {s}\, \psi _2 \vdash _L \varphi \), which means that \(\psi _1 \,\text {s}\, \psi _2\) implies any \(\varphi \). Now, the rôle of tonk is to make the two (binary) truth and falsity constants (t and s) collapse into the same one constant, thus leading the logical system to inconsistency (or triviality); obviously, truth and falsity cannot be the same. This confusion of truth and falsity is the problem of tonk.

To put it differently, a right adjoint of \(\varDelta _{\bot }\) and a left adjoint of \(\varDelta _{\top }\) must be different, nevertheless tonk requires the two adjoints to be the same; the one functor that are the two adjoints at once is tonk. The problem of tonk, therefore, lies in confusing two essentially different adjoints as if they represented the same one logical constant. We may thus conclude as follows:

-

The problem of tonk is the problem of equivocation. The binary truth constant and the binary falsity constant are clearly different logical constants, yet tonk mixes them up, to be absurd.

This confusion of essentially different adjoints is at the root of the paradoxicality of tonk. There is no problem at all if we add to a logical system the right adjoint of \(\varDelta _{\bot }\) and the left adjoint of \(\varDelta _{\top }\) separately, any of which is completely harmless. Unpleasant phenomena only emerge if we add the two adjoints as just a single connective, that is, we make the fallacy of equivocation.

Let us think of a slightly different sort of equivocation. As explained above, \(\wedge \) is right adjoint to diagonal \(\varDelta \), and \(\vee \) is left adjoint to it. What if we confuse these two adjoints? By way of experiment, let us define “disconjunction” as the functor that is right adjoint to diagonal, and left adjoint to it at the same time. Of course, a logical system with disconjunction leads to inconsistency (or triviality). Needless to say, the problem of disconjunction is the problem of equivocation: conjunction and disjunction are different, yet disconjunction mixes them up.

Then, is the problem of disconjunction precisely the same as the problem of tonk? This would be extensionally true, yet intensionally false. It is true in the sense that both pseudo-logical constants fall into the fallacy of equivocation. Nonetheless, it is false in the sense that the double adjointness condition of disconjunction is stronger than the double adjointness condition of tonk.

What precisely makes the difference between tonk and disconjunction? Tonk is a right adjoint of one functor, and at the same time a left adjoint of another functor. In contrast to this, disconjunction is a right and left adjoint of just a single functor. Disconjunction is, so to say, a uniformly doubly adjoint functor, as opposed to the fact that tonk is merely a doubly adjoint functor. The difference between tonk and disconjunction thus lies in uniformity. Hence:

-

On the ground that uniform double adjointness is in general stronger than double adjointness, we could say that disconjunction is more paradoxical than tonk, endorsing a stronger sort of equivocation.

-

We thereby lead to the concept of intensional degrees of paradoxicality of logical constants. Degrees concerned here are degrees of uniformity of double adjointness or equivocation.

What is then the strongest degree of paradoxicality in terms of adjointness? It is self-adjointness, and it is at the source of Russell-type paradoxical constants. A self-adjoint functor is a functor that is right and left adjoint to itself. This is the strongest form of double adjointness. Now, let us think of a nullary paradoxical connective R defined by the following double-line rule (this sort of paradoxical connectives has been discussed in Schroeder-Heister [20, 22]):

Reformulating this, we obtain the following:

We may consider R as a unary constant connective \(\tilde{R}:L\rightarrow L\) defined by \(\tilde{R}(\varphi )=R\). Then, the double-line rule above shows that R is right and left adjoint to R, and therefore the Russell-type paradoxical constant R is a self-adjoint functor.

In order to express double adjointness, we need two functors (i.e., \(\varDelta _{\bot }\) and \(\varDelta _{\top }\)) in the case of tonk, one functor (i.e., \(\varDelta \)) in the case of disconjunction, and no functor at all in the case of paradox R. These exhibit differences in the uniformity of double adjointness. Tonk exemplifies the most general case of double adjointness and exhibits the lowest degree of uniformity. On the other hand, paradox instantiates the strongest double adjointness, and exhibits the highest degree of uniformity. Disconjunction exemplifies the only possibility in between the two.

We have thus led to three intensional degrees of paradoxicality (double adjointness \(<\) uniform double adjointness \(<\) self-adjointness):

Right adjoint to | Left adjoint to | |

|---|---|---|

Genuine paradox R | Itself R | Itself R |

Disconjunction | Diagonal \(\varDelta \) | Diagonal \(\varDelta \) |

Tonk | Truth diagonal \(\varDelta _{\top }\) | Falsity diagonal \(\varDelta _{\bot }\) |

The last two are caused by equivocation according to the categorical account of logical constants. In contrast, paradox R is not so for the reason that self-adjointness can be given by a single adjunction: if a functor is right (resp. left) adjoint to itself, it is left (resp. right) adjoint to itself. This is the reason why we call it “genuine paradox” in the table above. More conceptually speaking:

-

Pseudo-paradoxes due to equivocation can be resolved by giving different names to right and left adjoints, respectively, which are indeed different logical constants, and it is natural to do so.

-

The paradoxicality of such pseudo-paradoxes is just in mixing up actually different logical constants which are harmless on their own.

-

-

On the other hand, we cannot resolve genuine paradox in such a way: there are no multiple meanings hidden in the Russell-type paradoxical constant, and there is nothing to be decomposed in genuine paradox.

-

Genuine paradox is a truly single constant, and the paradoxicality of genuine paradox is not caused by equivocation, unlike tonk or disconjunction.

-

If we admit any sort of adjoint functors as logical constants, then we cannot really ban genuine paradox, which is surely an adjoint functor. A naïve formulation of Lawvere’s idea of logical constants as adjoint functors, like “logical constants \(=\) adjoint functors”, does not work here again (recall that we encountered another case of this in the analysis of tonk). This is the reason why we have adopted the iterative conception of logic in our formulation of categorical harmony. In that view, logical constants must be constructed step by step, from old to new ones, via adjunctions. Since genuine paradox emerges via self-adjointness, however, there is no “old” operation that is able to give rise to genuine paradox via adjunction. In this way, categorical harmony based upon the iterative conception of logic allows us to avoid genuine paradox.

5 Concluding Remarks: From Semantic Dualism to Duality

Let us finally address further potential implications of categorical logic to the theory of meaning. The dualism between the referentialist and inferentialist conceptions of meaning may be called the semantic dualism. Categorical logic may (hopefully) yield a new insight into the semantic dualism, as argued in the following.

From a categorical point of view, “duality” may be discriminated from “dualism”. Dualism is a sort of dichotomy between two concepts. Duality goes beyond dualism, showing that the two concepts involved are actually two sides of the same coin, just as two categories turn out to be equivalent by taking the mirror image of each other in the theory of categorical dualities. Duality in this general sense seems to witness universal features of category theory. Indeed, the classic dualism between geometry and algebra breaks down in category theory. For example, the categorical concept of algebras of monads encompasses topological spaces in addition to algebraic structures. Category theory may be algebraic at first sight (indeed, categories are many-sorted algebras), yet it is now used to formulate geometric concepts in broad fields of geometry, ranging from algebraic and arithmetic geometry to knot theory and low-dimensional topology. It is also a vital method in representation theory and mathematical physics. Technically, there are a great number of categorical dualities between algebraic and geometric structures (e.g., the Gelfand duality and the Stone duality). It may thus be said that the concept of categories somehow captures both algebraic and geometric facets of mathematics at a deeper level, and so there is duality, rather than dualism, between algebra and geometry.

Just as in this sense category theory questions the dualism between algebra and geometry, categorical logic opaques the generally received, orthodox distinction between model theory and proof theory, and presumably even the semantic dualism above, suggesting that they are merely instances of the one concept of categorical logic. For example, the Tarski semantics and the Kripke semantics, which are two major instances of set-theoretic semantics, amount to interpreting logic in the category of sets and the category of (pre)sheaves, respectively (from a fibrational, or in Lawvere’s terms hyperdoctrinal, point of view, we conceive of topos-induced subobject fibrations rather than toposes themselves). On the other hand, proof systems or type theories give rise to what are called syntactic categories, and their proof-theoretic properties are encapsulated in those syntactic categories. For example, cartesian biclosed categories and \(*\)-autonomous categories give fully complete semantics of intuitionistic logic and classical linear logic, respectively, in the sense that the identity of proofs exactly corresponds to the identity of morphisms (note also that the possibility of proof normalisation is implicitly built-in to categorical semantics; if normalisation is not well behaved, syntactic categories are not well defined). There is thus no dualism between model-theoretic and proof-theoretic semantics in categorical semantics. That is, there is just the one concept of categorical semantics that can transform into either of the two semantics by choosing a suitable category (fibration, hyperdoctrine) for interpretation. Put another way, we can make a proof system out of a given structured category (which is called the internal logic of the category; some conditions are of course required to guarantee desirable properties of the proof system), and at the same time, we can also model-theoretically interpret logic in that category. This feature of categorical logic allows us to incorporate both model-theoretic and proof-theoretic aspects of logic into the one concept. In a nutshell, categorical semantics has both proof-theoretic and model-theoretic semantics inherent in it, and from this perspective, there is no dualism, but duality between proof-theoretic and model-theoretic semantics, which may be called the semantic duality.

We must, however, be careful of whether this sort of unification makes sense philosophically as well as mathematically. There may indeed be some conceptual reasons for arguing that we ought to keep model-theoretic and proof-theoretic semantics separate as usual. Yet we may at least say that categorical logic exposes some common features of the two ways of accounting for the meaning of logical constants; at a level of abstraction, model-theoretic and proof-theoretic semantics become united as particular instances of the one categorical semantics. The philosophical significance of that level of abstraction is yet to be elucidated.

References

Abramsky, S.: Temperley-Lieb algebra: from knot theory to logic and computation via quantum mechanics. In: Chen, G., Kauffman, L., Lomonaco, S. (eds.) Mathematics of Quantum Computing and Technology, pp. 515–558. Taylor and Francis (2007)

Awodey, S.: Category Theory. Oxford University Press, Oxford (2006)

Belnap, N.: Tonk, plonk and plink. Analysis 22, 130–134 (1962)

Bonnay, D., Simmenauer, B.: Tonk strikes back. Australas. J. Log. 3, 33–44 (2005)

Brandom, R.: Articulating Reasons: An Introduction to Inferentialism. Harvard University Press, Cambridge (2000)

Cook, R.T.: What’s wrong with tonk(?). J. Philos. Log. 34, 217–226 (2005)

Davidson, D.: Inquiries into Truth and Interpretation. Clarendon Press, New York (2001)

Došen, K.: Logical constants: an essay in proof theory. Ph.D. thesis, Oxford University (1980)

Došen, K.: Logical constants as punctuation marks. Notre Dame J. Form. Log. 30, 362–381 (1989)

Dummett, M.: The Logical Basis of Metaphysics. Harvard University Press, Cambridge (1991)

Hilbert, D.: On the infinite. In: van Heijenoort, J. (ed.) From Frege to Gódel: A Source Book in Mathematical Logic, 1897–1931, pp. 369–392. Harvard University Press, Cambridge (1967)

Lambek, J., Scott, P.J.: Introduction to Higher-Order Categorical Logic. Cambridge University Press, Cambridge (1986)

Lawvere, F.W.: Adjointness in foundations. Dialectica 23, 281–296 (1969)

Martin-Löf, P.: On the meanings of the logical constants and the justifications of the logical laws. Nord. J. Philos. Log. 1, 11–60 (1996)

Maruyama, Y.: Full lambek hyperdoctrine: categorical semantics for first-order substructural logics. In: Kohlenbach, U., Libkin, L., de Queiroz, R. (eds.) Logic, Language, Information, and Computation. LNCS, vol. 8071, pp. 211–225. Springer, Berlin (2013)

Pitts, A.: Categorical logic. In: Abramsky, S., Gabbay, D.M., Maibaum, T.S.E. (eds.) Handbook of Logic in Computer Science, Chap. 2. Oxford University Press, Oxford (2000)

Prawitz, D.: Natural Deduction. Almqvist & Wiksell, Stockholm (1965)

Prior, A.N.: The runabout inference-ticket. Analysis 21, 38–39 (1960)

Sambin, G., Battilotti, G., Faggian, C.: Basic logic: reflection, symmetry, visibility. J. Symb. Log. 65, 979–1013 (2000)

Schroeder-Heister, P.: Definitional reflection and paradoxes, supplement to [21]. In: Stanford Encyclopedia of Philosophy (2012)

Schroeder-Heister, P.: Proof-theoretic semantics. In: Stanford Encyclopedia of Philosophy (2012)

Schroeder-Heister, P.: Proof-theoretic semantics, self-contradiction, and the format of deductive reasoning. Topoi 31, 77–85 (2012)

Schroeder-Heister, P.: The categorical and the hypothetical: a critique of some fundamental assumptions of standard semantics. Synthese 187, 925–942 (2012)

Stairs, A.: Could logic be empirical? The Putnam-Kripke debate. In: Chubb, J., Eskandarian, A., Harizanov, V. (eds.) Logic and Algebraic Structures in Quantum Computing and Information. Cambridge University Press, Cambridge (2015). Forthcoming

Westerståhl, D.: From constants to consequence, and back. Synthese 187, 957–971 (2012)

Acknowledgments

I would like to thank Peter Schroeder-Heister for fruitful discussions in Oxford in spring 2012, which, inter alia, led to the table of degrees of paradoxicality of logical constants. I am also grateful to the two reviewers for their detailed comments and suggestions for improvement. And last, I hereby acknowledge generous financial support from the following institutions: the National Institute of Informatics, the Nakajima Foundation, Wolfson College, and the University of Oxford.

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Open Access This chapter is distributed under the terms of the Creative Commons Attribution Noncommercial License, which permits any noncommercial use, distribution, and reproduction in any medium, provided the original author(s) and source are credited.

Copyright information

© 2016 The Author(s)

About this chapter

Cite this chapter

Maruyama, Y. (2016). Categorical Harmony and Paradoxes in Proof-Theoretic Semantics. In: Piecha, T., Schroeder-Heister, P. (eds) Advances in Proof-Theoretic Semantics. Trends in Logic, vol 43. Springer, Cham. https://doi.org/10.1007/978-3-319-22686-6_6

Download citation

DOI: https://doi.org/10.1007/978-3-319-22686-6_6

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-22685-9

Online ISBN: 978-3-319-22686-6

eBook Packages: Mathematics and StatisticsMathematics and Statistics (R0)