Abstract

We first define the real matrix-variate gamma function, the gamma integral and the gamma density, wherefrom their counterparts in the complex domain are developed. An important particular case of the real matrix-variate gamma density known as the Wishart density is widely utilized in multivariate statistical analysis. Additionally, real and complex matrix-variate type-1 and type-2 beta density functions are defined. Various results pertaining to each of these distributions are then provided. More general structures are considered as well.



5.1. Introduction

The notations introduced in the preceding chapters will still be followed in this one. Lower-case letters such as x, y will be utilized to represent real scalar variables, whether mathematical or random. Capital letters such as X, Y will be used to denote vector/matrix random or mathematical variables. A tilde placed on top of a letter will indicate that the variables are in the complex domain. However, the tilde will be omitted in the case of constant matrices such as A, B. The determinant of a square matrix A will be denoted as |A| or det(A) and, in the complex domain, the absolute value or modulus of the determinant of B will be denoted as |det(B)|. Square matrices appearing in this chapter will be assumed to be of dimension p × p unless otherwise specified.

We will first define the real matrix-variate gamma function, gamma integral and gamma density, wherefrom their counterparts in the complex domain will be developed. A particular case of the real matrix-variate gamma density known as the Wishart density is widely utilized in multivariate statistical analysis. Actually, the formulation of this distribution in 1928 constituted a significant advance in the early days of the discipline. A real matrix-variate gamma function, denoted by Γ p(α), will be defined in terms of a matrix-variate integral over a real positive definite matrix X > O. This integral representation of Γ p(α) will be explicitly evaluated with the help of the transformation of a real positive definite matrix in terms of a lower triangular matrix having positive diagonal elements in the form X = TT ′ where T = (t ij) is a lower triangular matrix with positive diagonal elements, that is, t ij = 0, i < j and t jj > 0, j = 1, …, p. When the diagonal elements are positive, it can be shown that the transformation X = TT ′ is unique. Its associated Jacobian is provided in Theorem 1.6.7. This result is now restated for ready reference: For a p × p real positive definite matrix X = (x ij) > O,

where T = (t ij), t ij = 0, i < j and t jj > 0, j = 1, …, p. Consider the following integral representation of Γ p(α) where the integral is over a real positive definite matrix X and the integrand is a real-valued scalar function of X:

Under the transformation in (5.1.1),

Observe that tr(X) = tr(TT′) = the sum of the squares of all the elements in T, which is \(\sum _{j=1}^pt_{jj}^2+\sum _{i>j}t_{ij}^2\). By letting \(t_{jj}^2=y_j\Rightarrow \text{d}t_{jj}=\frac {1}{2}y_j^{\frac {1}{2}-1}\text{d}y_j\), noting that t jj > 0, the integral over t jj gives

the final condition being \(\Re (\alpha )>\frac {p-1}{2}\). Thus, we have the gamma product \(\varGamma (\alpha )\varGamma (\alpha -\frac {1}{2})\cdots \varGamma (\alpha -\frac {p-1}{2})\). Now for i > j, the integral over t ij gives

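Therefore, \(\varGamma _p(\alpha )=\int _{X>O}|X|{ }^{\alpha -\frac {p+1}{2}}\text{e}^{-\text{tr}(X)}\text{d}X=\pi ^{\frac {p(p-1)}{4}}\varGamma (\alpha )\varGamma (\alpha -\frac {1}{2})\cdots \varGamma (\alpha -\frac {p-1}{2}),~\Re (\alpha )>\frac {p-1}{2}.\)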

For example,

This Γ p(α) is known by different names in the literature. The first author calls it the real matrix-variate gamma function because of its association with a real matrix-variate gamma integral.
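As a numerical sanity check of the evaluation above, the following sketch computes \(\varGamma _p(\alpha )\) from the gamma product and, separately, approximates each \(t_{jj}\) integral by a midpoint rule. It assumes the Jacobian of X = TT′ in its standard form \(2^p\prod _{j=1}^pt_{jj}^{p+1-j}\), so that each diagonal integral is \(\int _0^\infty 2t^{2\alpha -j}\text{e}^{-t^2}\text{d}t\), while each of the p(p − 1)∕2 off-diagonal integrals contributes \(\sqrt {\pi }\). The function names, the truncation point and the number of nodes are illustrative choices, and only real α is handled.

```python
import math


def real_multigamma(alpha: float, p: int) -> float:
    """Gamma_p(alpha) = pi^{p(p-1)/4} Gamma(alpha) Gamma(alpha - 1/2) ... Gamma(alpha - (p-1)/2).

    Real alpha only; the product form requires alpha > (p - 1)/2.
    """
    if alpha <= (p - 1) / 2:
        raise ValueError("real alpha must exceed (p - 1)/2")
    value = math.pi ** (p * (p - 1) / 4)
    for j in range(p):
        value *= math.gamma(alpha - j / 2)
    return value


def diagonal_factor(alpha: float, j: int, upper: float = 12.0, n: int = 20_000) -> float:
    """Midpoint-rule value of int_0^inf 2 t^(2 alpha - j) exp(-t^2) dt.

    This is the t_jj integral left after the Jacobian 2^p prod t_jj^(p+1-j) of X = TT'
    is combined with |X|^(alpha - (p+1)/2) = prod t_jj^(2 alpha - (p+1)).
    """
    h = upper / n
    total = 0.0
    for k in range(n):
        t = (k + 0.5) * h
        total += 2.0 * t ** (2 * alpha - j) * math.exp(-t * t)
    return total * h


if __name__ == "__main__":
    alpha, p = 2.5, 3
    # Each of the p(p-1)/2 off-diagonal t_ij contributes int exp(-t^2) dt = sqrt(pi).
    direct = math.pi ** (p * (p - 1) / 4)
    for j in range(1, p + 1):
        direct *= diagonal_factor(alpha, j)  # approximates Gamma(alpha - (j-1)/2)
    print(direct, real_multigamma(alpha, p))
```

With α = 3∕2 and p = 2, the product gives \(\pi ^{\frac {1}{2}}\varGamma (\frac {3}{2})\varGamma (1)=\frac {\pi }{2}\), the value of δ 1 obtained in Example 5.2.1.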

5.1a. The Complex Matrix-variate Gamma

In the complex case, consider a p × p Hermitian positive definite matrix \(\tilde {X}=\tilde {X}^{*}>O\), where \(\tilde {X}^{*}\) denotes the conjugate transpose of \(\tilde {X}\). Let \(\tilde {T}=(\tilde {t}_{ij})\) be a lower triangular matrix with the diagonal elements being real and positive. In this case, it can be shown that the transformation \(\tilde {X}=\tilde {T}\tilde {T}^{*}\) is one-to-one. Then, as stated in Theorem 1.6a.7, the Jacobian is

With the help of (5.1a.1), we can evaluate the following integral over p × p Hermitian positive definite matrices where the integrand is a real-valued scalar function of \(\tilde {X}\). We will denote the integral by \(\tilde {\varGamma }_p(\alpha )\), that is,

Let us evaluate the integral in (5.1a.2) by making use of (5.1a.1). Parallel to the real case, we have

As well,

Since t jj is real and positive, the integral over t jj gives the following:

the final condition being \(\Re (\alpha )>p-1\). Note that the absolute value of \(\tilde {t}_{ij}\), namely, \(|\tilde {t}_{ij}|\) is such that \(|\tilde {t}_{ij}|{ }^2=t_{ij1}^2+t_{ij2}^2\) where \(\tilde {t}_{ij}=t_{ij1}+it_{ij2}\) with t ij1, t ij2 real and \(i=\sqrt {(-1)}\). Thus,

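Then \(\tilde {\varGamma }_p(\alpha )=\int _{\tilde {X}>O}|\text{det}(\tilde {X})|{ }^{\alpha -p}\text{e}^{-\text{tr}(\tilde {X})}\text{d}\tilde {X}=\pi ^{\frac {p(p-1)}{2}}\varGamma (\alpha )\varGamma (\alpha -1)\cdots \varGamma (\alpha -p+1),~\Re (\alpha )>p-1.\)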

We will refer to \(\tilde {\varGamma }_p(\alpha )\) as the complex matrix-variate gamma because of its association with a complex matrix-variate gamma integral. As an example, consider

5.2. The Real Matrix-variate Gamma Density

In view of (5.1.3), we can define a real matrix-variate gamma density with shape parameter α as follows, where X is p × p real positive definite matrix:

Example 5.2.1

Let

where x 11, x 12, x 22, x 1, x 2, y 2, x 3 are all real scalar variables, \(i=\sqrt {(-1)}\), \(x_{11}>0,~x_{22}>0,~ x_{11}x_{22}-x_{12}^2>0\). While these are the conditions for the positive definiteness of the real matrix X, \(x_1>0,~x_3>0,~ x_1x_3-(x_2^2+y_2^2)>0\) are the conditions for the Hermitian positive definiteness of \(\tilde {X}\). Let us evaluate the following integrals, subject to the previously specified conditions on the elements of the matrix:

Solution 5.2.1

(1): Observe that δ 1 can be evaluated by treating the integral as a real matrix-variate integral, namely,

and hence the integral is

This result can also be obtained by direct integration as a multiple integral. In this case, the integration has to be done under the conditions \(x_{11}>0,~x_{22}>0,~ x_{11}x_{22}-x_{12}^2>0\), that is, \(x_{12}^2<x_{11}x_{22}\) or \(-\sqrt {x_{11}x_{22}}<x_{12}<\sqrt {x_{11}x_{22}}\). The integral over x 12 yields \(\int _{-\sqrt {x_{11}x_{22}}}^{\sqrt {x_{11}x_{22}}}\text{d}x_{12}=2\sqrt {x_{11}x_{22}}\), that over x 11 then gives

and on integrating with respect to x 22, we have

so that \(\delta _1=\frac {1}{2}\sqrt {\pi }\sqrt {\pi }=\frac {\pi }{2}\).

(2): On observing that δ 2 can be viewed as a complex matrix-variate integral, it is seen that

This answer can also be obtained by evaluating the multiple integral. Since \(\tilde {X}>O\), we have \(x_1>0,~x_3>0,~ x_1x_3-(x_2^2+y_2^2)>0\), that is, \(x_1>\frac {(x_2^2+y_2^2)}{x_3}\). Integrating first with respect to x 1 and letting \(y=x_1-\frac {(x_2^2+y_2^2)}{x_3}\), we have

Now, the integrals over x 2 and y 2 give

that with respect to x 3 then yielding

so that \(\delta _2=(1)\sqrt {\pi }\sqrt {\pi }=\pi .\)
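The direct evaluations of δ 1 and δ 2 can be mirrored numerically. The sketch below assumes, as the steps above imply, that the integrands are \(\text{e}^{-\text{tr}(X)}\) and \(\text{e}^{-\text{tr}(\tilde {X})}\). The inner integrals already carried out in closed form (over x 12 for δ 1 and over x 1 for δ 2) are used as they stand, and the remaining ones are approximated by a midpoint rule; the truncation limits and node counts are choices made here.

```python
import math


def midpoint(f, a: float, b: float, n: int) -> float:
    """Composite midpoint rule for int_a^b f(x) dx."""
    h = (b - a) / n
    return h * sum(f(a + (k + 0.5) * h) for k in range(n))


def delta1_direct() -> float:
    # Integrating x12 over (-sqrt(x11 x22), sqrt(x11 x22)) leaves 2 sqrt(x11 x22) e^{-x11 - x22},
    # which factorizes. Substituting x = u^2 turns int_0^inf sqrt(x) e^{-x} dx into
    # int_0^inf 2 u^2 e^{-u^2} du, which removes the square-root behaviour at 0.
    one_dim = midpoint(lambda u: 2.0 * u * u * math.exp(-u * u), 0.0, 8.0, 4000)
    return 2.0 * one_dim ** 2


def delta2_direct() -> float:
    # Integrating x1 from (x2^2 + y2^2)/x3 to infinity leaves e^{-(x2^2 + y2^2)/x3} e^{-x3}.
    def after_x1(x3: float) -> float:
        half_width = 10.0 * math.sqrt(x3)  # the Gaussian in x2 (or y2) is negligible beyond this
        g = midpoint(lambda s: math.exp(-s * s / x3), -half_width, half_width, 200)
        return g * g * math.exp(-x3)  # the x2 and y2 integrals are identical
    return midpoint(after_x1, 0.0, 40.0, 4000)


if __name__ == "__main__":
    print(delta1_direct(), math.pi / 2)
    print(delta2_direct(), math.pi)
```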

(3): Observe that δ 3 can be evaluated as a real matrix-variate integral. Then

Let us proceed by direct integration:

letting \(y=x_{11}-\frac {x_{12}^2}{x_{22}}\), the integral over x 11 yields

Now, the integral over x 12 gives \(\sqrt {x_{22}}\sqrt {\pi }\) and finally, that over x 22 yields

(4): Noting that we can treat δ 4 as a complex matrix-variate integral, we have

Direct evaluation will be challenging in this case as the integrand involves \(|\text{det}(\tilde {X})|{ }^2\).

If a scale parameter matrix B > O is to be introduced in (5.2.1), then consider \(\text{tr}(BX)=\text{tr}(B^{\frac {1}{2}}XB^{\frac {1}{2}})\) where \(B^{\frac {1}{2}}\) is the positive definite square root of the real positive definite constant matrix B. On applying the transformation \(Y=B^{\frac {1}{2}}XB^{\frac {1}{2}}\Rightarrow \text{d}X=|B|{ }^{-\frac {(p+1)}{2}}\text{d}Y\), as stated in Theorem 1.6.5, we have

This equality brings about two results. First, it yields the following identity, which will prove very handy in many of the computations:

As well, the following two-parameter real matrix-variate gamma density with shape parameter α and scale parameter matrix B > O can be constructed from (5.2.2):

5.2.1. The mgf of the real matrix-variate gamma distribution

Let us determine the mgf associated with the density given in (5.2.4), that is, the two-parameter real matrix-variate gamma density. Observing that X = X ′, let T be a symmetric p × p real positive definite parameter matrix. Then, noting that

it is seen that the non-diagonal elements in X multiplied by the corresponding parameters will have twice the weight of the diagonal elements multiplied by the corresponding parameters. For instance, consider the 2 × 2 case:

where α 1 and α 2 represent elements that are not involved in the evaluation of the trace. Note that, due to the symmetry of T and X, t 21 = t 12 and x 21 = x 12, so that the cross product term t 12 x 12 in (ii) appears twice whereas each of the terms t 11 x 11 and t 22 x 22 appears only once.
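The double counting can be seen on a small example. The sketch below, with hypothetical helper names, compares tr(TX) with the sum in which every distinct variable x ij, i ≤ j, is multiplied once by its parameter, and with tr(∗ T X), where ∗ T keeps the diagonal of T and halves its off-diagonal elements, as described next in the text.

```python
def star(T):
    """*T: keep the diagonal of the symmetric parameter matrix T, halve the off-diagonal entries."""
    p = len(T)
    return [[T[i][j] if i == j else 0.5 * T[i][j] for j in range(p)] for i in range(p)]


def trace_of_product(A, B):
    """tr(AB) for square matrices stored as lists of rows."""
    p = len(A)
    return sum(A[i][k] * B[k][i] for i in range(p) for k in range(p))


def each_variable_once(T, X):
    """Sum over i <= j of t_ij x_ij: every distinct variable of symmetric X weighted once."""
    p = len(T)
    return sum(T[i][j] * X[i][j] for i in range(p) for j in range(i, p))


if __name__ == "__main__":
    T = [[2.0, 3.0], [3.0, 5.0]]
    X = [[1.0, 4.0], [4.0, 7.0]]
    print(trace_of_product(T, X))        # 61.0: the cross term 3*4 is counted twice
    print(trace_of_product(star(T), X))  # 49.0: the cross term is counted once
    print(each_variable_once(T, X))      # 49.0
```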

However, in order to be consistent with the mgf in a real multivariate case, each variable need only be multiplied once by the corresponding parameter, the mgf being then obtained by taking the expected value of the resulting exponential sum. Accordingly, the parameter matrix has to be modified as follows: let ∗ T = (∗ t ij) where \({{ }_{*}t_{jj}}=t_{jj},~ {{ }_{*}t_{ij}}=\frac {1}{2}t_{ij},~ i\ne j,\) and t ij = t ji for all i and j or, in other words, the non-diagonal elements of the symmetric matrix T are weighted by \(\frac {1}{2}\), such a matrix being denoted as ∗ T. Then,

and the mgf in the real matrix-variate two-parameter gamma density, denoted by M X(∗ T), is the following:

Now, since

for \((B-{{ }_{*}T})>O\), that is, \((B-{{ }_{*}T})^{\frac {1}{2}}>O\), which means that \(Y=(B-{{ }_{*}T})^{\frac {1}{2}}X(B-{{ }_{*}T})^{\frac {1}{2}}\Rightarrow \text{d}X=|B-{{ }_{*}T}|{ }^{-(\frac {p+1}{2})}\text{d}Y\), we have

When ∗ T is replaced by −∗ T, (5.2.5) gives the Laplace transform of the two-parameter gamma density in the real matrix-variate case as specified by (5.2.4), which is denoted by L f(∗ T), that is,

For example, if

then |B| = 5 and

If ∗ T is partitioned into sub-matrices and X is partitioned accordingly as

where \({{ }_{*}T}_{11}\) and X 11 are r × r, r ≤ p, then what can be said about the densities of the diagonal blocks X 11 and X 22? The mgf of X 11 is available from the definition by letting \({{ }_{*}T}_{12}=O,~{{ }_{*}T}_{21}=O\) and \({{ }_{*}T}_{22}=O\), as then \(E[\text{e}^{\text{tr}({{ }_{*}T}X)}]=E[\text{e}^{\text{tr}({{ }_{*}T}_{11}X_{11})}].\) However, \(B^{-1}{{ }_{*}T}\) is not positive definite since \(B^{-1}{{ }_{*}T}\) is not symmetric, and thereby \(I-B^{-1}{{ }_{*}T}\) cannot be positive definite when \({{ }_{*}T}_{12}=O,~{{ }_{*}T}_{21}=O,~{{ }_{*}T}_{22}=O\). Consequently, the mgf of X 11 cannot be determined from (5.2.6). As an alternative, we could rewrite (5.2.6) in the symmetric format and then try to evaluate the density of X 11. As it turns out, the densities of X 11 and X 22 can be readily obtained from the mgf in two situations: either when B = I or when B is a block diagonal matrix, that is,

Hence we have the following results:

Theorem 5.2.1

Let the p × p matrices X > O and ∗ T > O be partitioned as in (iii). Let X have a p × p real matrix-variate gamma density with shape parameter α and scale parameter matrix I p . Then X 11 has an r × r real matrix-variate gamma density and X 22 has a (p − r) × (p − r) real matrix-variate gamma density with shape parameter α and scale parameters I r and I p−r, respectively.

Theorem 5.2.2

Let X be partitioned as in (iii). Let the p × p real positive definite parameter matrix B > O be partitioned as in (iv). Then X 11 has an r × r real matrix-variate gamma density with the parameters (α and B 11 > O) and X 22 has a (p − r) × (p − r) real matrix-variate gamma density with the parameters (α and B 22 > O).

Theorem 5.2.3

Let X be partitioned as in (iii). Then X 11 and X 22 are statistically independently distributed under the restrictions specified in Theorems 5.2.1 and 5.2.2.

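In the general case of B, write the mgf as \(M_X({{ }_{*}T})=|B|{ }^{\alpha }|B-{{ }_{*}T}|{ }^{-\alpha }\), which corresponds to a symmetric format. Then, when \({{ }_{*}T}=\begin{bmatrix}{{ }_{*}T}_{11}&O\\ O&O\end{bmatrix}\),

\(M_X({{ }_{*}T})=|B|{ }^{\alpha }\begin{vmatrix}B_{11}-{{ }_{*}T}_{11}&B_{12}\\ B_{21}&B_{22}\end{vmatrix}^{-\alpha }=|B_{22}|{ }^{\alpha }|B_{11}-B_{12}B_{22}^{-1}B_{21}|{ }^{\alpha }\,|B_{22}|{ }^{-\alpha }|B_{11}-B_{12}B_{22}^{-1}B_{21}-{{ }_{*}T}_{11}|{ }^{-\alpha }=|B_{11}-B_{12}B_{22}^{-1}B_{21}|{ }^{\alpha }\,|(B_{11}-B_{12}B_{22}^{-1}B_{21})-{{ }_{*}T}_{11}|{ }^{-\alpha },\)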

which is obtained by making use of the representations of the determinant of a partitioned matrix, which are available from Sect. 1.3. Now, on comparing the last line with the first one, it is seen that X 11 has a real matrix-variate gamma distribution with shape parameter α and scale parameter matrix \(B_{11}-B_{12}B_{22}^{-1}B_{21}\). Hence, the following result:

Theorem 5.2.4

If the p × p real positive definite matrix X has a real matrix-variate gamma density with shape parameter α and scale parameter matrix B and if X and B are partitioned as in (iii), then X 11 has a real matrix-variate gamma density with shape parameter α and scale parameter matrix \(B_{11}-B_{12}B_{22}^{-1}B_{21}\), and the sub-matrix X 22 has a real matrix-variate gamma density with shape parameter α and scale parameter matrix \(B_{22}-B_{21}B_{11}^{-1}B_{12}\).
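Theorem 5.2.4 can be checked numerically in the special case r = 1, where ∗ T has a single nonzero entry t in position (1, 1). The sketch evaluates the mgf in the symmetric format \(|B|{ }^{\alpha }|B-{{ }_{*}T}|{ }^{-\alpha }\) and compares it with the mgf \((S/(S-t))^{\alpha }\) of a scalar gamma variable with scale \(S=B_{11}-B_{12}B_{22}^{-1}B_{21}\). The matrix B, the values of t and α, and the helper names are illustrative choices; the elimination routines are plain textbook versions, not a library API.

```python
def det(A):
    """Determinant by Gaussian elimination with partial pivoting."""
    M = [list(map(float, row)) for row in A]
    n = len(M)
    d = 1.0
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(M[r][c]))
        if M[piv][c] == 0.0:
            return 0.0
        if piv != c:
            M[c], M[piv] = M[piv], M[c]
            d = -d
        d *= M[c][c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for k in range(c, n):
                M[r][k] -= f * M[c][k]
    return d


def is_positive_definite(A):
    """Sylvester's criterion for a symmetric matrix: all leading principal minors > 0."""
    return all(det([row[:k] for row in A[:k]]) > 0 for k in range(1, len(A) + 1))


def mgf_real_gamma(B, star_T, alpha):
    """|B|^alpha |B - *T|^(-alpha), defined when B - *T is positive definite."""
    p = len(B)
    D = [[B[i][j] - star_T[i][j] for j in range(p)] for i in range(p)]
    if not is_positive_definite(D):
        raise ValueError("B - *T must be positive definite")
    return (det(B) / det(D)) ** alpha


def solve(A, b):
    """Solve A z = b by Gaussian elimination with partial pivoting and back substitution."""
    n = len(A)
    M = [list(map(float, A[i])) + [float(b[i])] for i in range(n)]
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[piv] = M[piv], M[c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for k in range(c, n + 1):
                M[r][k] -= f * M[c][k]
    z = [0.0] * n
    for i in range(n - 1, -1, -1):
        z[i] = (M[i][n] - sum(M[i][k] * z[k] for k in range(i + 1, n))) / M[i][i]
    return z


def schur_11(B):
    """B11 - B12 B22^{-1} B21 for the split r = 1, where B11 is the scalar B[0][0]."""
    B22 = [row[1:] for row in B[1:]]
    B21 = [row[0] for row in B[1:]]
    B12 = B[0][1:]
    z = solve(B22, B21)
    return B[0][0] - sum(B12[k] * z[k] for k in range(len(z)))


if __name__ == "__main__":
    B = [[4.0, 1.0, 0.5], [1.0, 3.0, 0.2], [0.5, 0.2, 2.0]]  # illustrative B > O
    t, alpha = 1.0, 2.5
    star_T = [[t, 0.0, 0.0], [0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]  # only *T_11 = t is nonzero
    S = schur_11(B)
    print(mgf_real_gamma(B, star_T, alpha), (S / (S - t)) ** alpha)
```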

5.2a. The Matrix-variate Gamma Function and Density, Complex Case

Let \(\tilde {X}=\tilde {X}^{*}>O\) be a p × p Hermitian positive definite matrix. When \(\tilde {X}\) is Hermitian, all its diagonal elements are real and hence \(\text{ tr}(\tilde {X})\) is real. Let \(\text{det}(\tilde {X})\) denote the determinant and \(|\text{det}(\tilde {X})|\) denote the absolute value of the determinant of \(\tilde {X}\). As a result, \(|\text{det}(\tilde {X})|{ }^{\alpha -p}\,\text{e}^{-\text{tr}(\tilde {X})}\) is a real-valued scalar function of \(\tilde {X}\). Let us consider the following integral, denoted by \(\tilde {\varGamma }_p(\alpha )\):

which was evaluated in Sect. 5.1a. In fact, (5.1a.3) provides two representations of the complex matrix-variate gamma function \(\tilde {\varGamma }_p(\alpha )\). With the help of (5.1a.3), we can define the complex p × p matrix-variate gamma density as follows:

For example, let us examine the 2 × 2 complex matrix-variate case. Let \(\tilde {X}\) be a matrix in the complex domain, \(\bar {\tilde {X}}\) denoting its complex conjugate and \(\tilde {X}^{*},\) its conjugate transpose. When \(\tilde {X}=\tilde {X}^{*},\) the matrix is Hermitian and its diagonal elements are real. In the 2 × 2 Hermitian case, let

Then, the determinants are

due to Hermitian positive definiteness of \(\tilde {X}\). As well,

Note that \(\text{tr}(\tilde {X})=x_1+x_3\) and \(\tilde {\varGamma }_2(\alpha )=\pi ^{\frac {2(1)}{2}}\varGamma (\alpha )\varGamma (\alpha -1),~ \Re (\alpha )>1,~ p=2\). The density is then of the following form:

for \(x_1>0,~x_3>0,~ x_1x_3-(x_2^2+y_2^2)>0,~ \Re (\alpha )>1\), and \(f_1(\tilde {X})=0\) elsewhere.
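The 2 × 2 complex density above can be coded directly. This sketch assumes the parametrization \(\tilde {X}=\begin{bmatrix}x_1&x_2+iy_2\\ x_2-iy_2&x_3\end{bmatrix}\), which is consistent with the determinant \(x_1x_3-(x_2^2+y_2^2)\) used above; it also computes \(\tilde {\varGamma }_p(\alpha )\) for real α > p − 1 from the gamma product. All function names are hypothetical.

```python
import math


def complex_multigamma(alpha: float, p: int) -> float:
    """tilde Gamma_p(alpha) = pi^{p(p-1)/2} Gamma(alpha) Gamma(alpha - 1) ... Gamma(alpha - p + 1)."""
    if alpha <= p - 1:
        raise ValueError("real alpha must exceed p - 1")
    value = math.pi ** (p * (p - 1) / 2)
    for j in range(p):
        value *= math.gamma(alpha - j)
    return value


def hermitian_2x2_det(x1: float, x2: float, y2: float, x3: float) -> float:
    """det of [[x1, x2 + i y2], [x2 - i y2, x3]], which equals x1 x3 - (x2^2 + y2^2)."""
    a, b = complex(x1, 0.0), complex(x2, y2)
    c, d = b.conjugate(), complex(x3, 0.0)
    value = a * d - b * c
    return value.real  # the imaginary part vanishes for a Hermitian matrix


def complex_gamma_density_2x2(x1, x2, y2, x3, alpha):
    """|det X|^(alpha - 2) e^{-(x1 + x3)} / tilde Gamma_2(alpha) on X > O, and 0 elsewhere."""
    det = hermitian_2x2_det(x1, x2, y2, x3)
    if x1 <= 0 or x3 <= 0 or det <= 0:
        return 0.0
    return det ** (alpha - 2) * math.exp(-(x1 + x3)) / complex_multigamma(alpha, 2)
```

For instance, complex_multigamma(2.0, 2) returns π, the value of δ 2 in Example 5.2.1.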

Now, consider a p × p parameter matrix \(\tilde {B}>O\). We can obtain the following identity corresponding to the identity in the real case:

A two-parameter gamma density in the complex domain can then be derived by proceeding as in the real case; it is given by

5.2a.1. The mgf of the complex matrix-variate gamma distribution

The moment generating function in the complex domain is slightly different from that in the real case. Let \(\tilde {T}>O\) be a p × p parameter matrix and let \(\tilde {X}\) be p × p two-parameter gamma distributed as in (5.2a.4). Then \(\tilde {T}=T_1+iT_2\) and \(\tilde {X}=X_1+iX_2\), with T 1, T 2, X 1, X 2 real and \(i=\sqrt {(-1)}\). When \(\tilde {T}\) and \(\tilde {X}\) are Hermitian positive definite, T 1 and X 1 are real symmetric and T 2 and X 2 are real skew symmetric. Then consider

Note that tr(T 1 X 1) + tr(T 2 X 2) contains all the real variables involved multiplied by the corresponding parameters, where the diagonal elements appear once and the off-diagonal elements each appear twice. Thus, as in the real case, \(\tilde {T}\) has to be replaced by \({{ }_{*}\tilde {T}}={{ }_{*}T}_1+i{{ }_{*}T}_2\). A term containing i still remains; however, as a result of the following properties, this term will disappear.

Lemma 5.2a.1

Let \(\tilde {T},~ \tilde {X},~ T_1,~T_2,~X_1,~X_2\) be as defined above. Then, tr(T 1 X 2) = 0, tr(T 2 X 1) = 0, tr(∗ T 1 X 2) = 0, tr(∗ T 2 X 1) = 0.

Proof

For any real square matrix A, tr(A) = tr(A′) and for any two matrices A and B where AB and BA are defined, tr(AB) = tr(BA). With the help of these two results, we have the following:

as T 1 is symmetric and X 2 is skew symmetric. Now, tr(T 1 X 2) = −tr(T 1 X 2) ⇒tr(T 1 X 2) = 0 since it is a real quantity. It can be similarly established that the other results stated in the lemma hold.
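Since Lemma 5.2a.1 only uses the symmetry of T 1 and X 1 and the skew symmetry of T 2 and X 2, it can be spot-checked on random Hermitian matrices that need not be positive definite. The generator below is a hypothetical helper, and the seed and matrix size are arbitrary.

```python
import random


def random_hermitian(p: int, rng: random.Random):
    """Hypothetical helper: random p x p Hermitian matrix as a list of rows of complex numbers."""
    H = [[0j] * p for _ in range(p)]
    for i in range(p):
        H[i][i] = complex(rng.uniform(1.0, 2.0), 0.0)  # Hermitian diagonal entries are real
        for j in range(i + 1, p):
            z = complex(rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0))
            H[i][j], H[j][i] = z, z.conjugate()
    return H


def real_imag_parts(H):
    """Split H = X1 + i X2 into real matrices X1 (symmetric) and X2 (skew symmetric)."""
    return ([[z.real for z in row] for row in H], [[z.imag for z in row] for row in H])


def trace_of_product(A, B):
    """tr(AB) for square matrices stored as lists of rows."""
    p = len(A)
    return sum(A[i][k] * B[k][i] for i in range(p) for k in range(p))


if __name__ == "__main__":
    rng = random.Random(0)
    T1, T2 = real_imag_parts(random_hermitian(4, rng))
    X1, X2 = real_imag_parts(random_hermitian(4, rng))
    print(trace_of_product(T1, X2), trace_of_product(T2, X1))  # both zero up to rounding
```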

We may now define the mgf in the complex case, denoted by \(M_{\tilde {X}}({{ }_{*}\tilde {T}})\), as follows:

Since \(\text{tr}(\tilde {X}(\tilde {B}-{{ }_{*}\tilde {T}}^{*}))=\text{tr}(C\tilde {X}C^{*})\) for \(C=(\tilde {B}-{{ }_{*}\tilde {T}}^{*})^{\frac {1}{2}}\) and C > O, it follows from Theorem 1.6a.5 that \(\tilde {Y}=C\tilde {X}C^{*} \Rightarrow \text{d}\tilde {Y}=|\text{det}(CC^{*})|{ }^{p}\,\text{d}\tilde {X}\), that is, \(\text{d}\tilde {X}=|\text{det}(\tilde {B}-{{ }_{*}\tilde {T}}^{*})|{ }^{-p}\text{d}\tilde {Y}\) for \(\tilde {B}-{{ }_{*}\tilde {T}}^{*}>O\). Then,

For example, let p = 2 and

with \(\tilde {x}_{21}=\tilde {x}_{12}^{*}\) and \( {{{ }_{*}\tilde t}}_{21}={{{ }_{*}\tilde t}}_{12}^{\,*}\). In this case, the conjugate transpose is only the conjugate since the quantities are scalar. Note that B = B ∗ and hence B is Hermitian. The leading minors of B being |(3)| = 3 > 0 and |B| = (3)(2) − (−i)(i) = 5 > 0, B is Hermitian positive definite. Accordingly,

Now, consider the partitioning of the following p × p matrices:

where \(\tilde {X}_{11}\) and \({{ }_{*}\tilde {T}}_{11}\) are r × r, r ≤ p. Then, proceeding as in the real case, we have the following results:

Theorem 5.2a.1

Let \(\tilde {X}\) have a p × p complex matrix-variate gamma density with shape parameter α and scale parameter I p , and \(\tilde {X}\) be partitioned as in (i). Then, \(\tilde {X}_{11}\) has an r × r complex matrix-variate gamma density with shape parameter α and scale parameter I r and \(\tilde {X}_{22}\) has a (p − r) × (p − r) complex matrix-variate gamma density with shape parameter α and scale parameter I p−r.

Theorem 5.2a.2

Let the p × p complex matrix \(\tilde {X}\) have a complex matrix-variate gamma density with the parameters \((\alpha ,\tilde {B}>O)\) and let \(\tilde {X}\) and \(\tilde {B}\) be partitioned as in (i) with \(\tilde {B}_{12}=O,~\tilde {B}_{21}=O\). Then \(\tilde {X}_{11}\) and \(\tilde {X}_{22}\) have r × r and (p − r) × (p − r) complex matrix-variate gamma densities with shape parameter α and scale parameters \(\tilde {B}_{11}\) and \(\tilde {B}_{22},\) respectively.

Theorem 5.2a.3

Let \(\tilde {X},\ \tilde {X}_{11},\ \tilde {X}_{22}\) and \(\tilde {B}\) be as specified in Theorem 5.2a.1 or 5.2a.2. Then, \(\tilde {X}_{11}\) and \(\tilde {X}_{22}\) are statistically independently distributed as complex matrix-variate gamma random variables on r × r and (p − r) × (p − r) matrices, respectively.

For a general matrix B where the sub-matrices B 12 and B 21 are not assumed to be null, the marginal densities of \(\tilde {X}_{11}\) and \(\tilde {X}_{22}\), which are given in the next result, can be determined by proceeding as in the real case.

Theorem 5.2a.4

Let \(\tilde {X}\) have a complex matrix-variate gamma density with shape parameter α and scale parameter matrix B = B ∗ > O. If \(\tilde {X}\) and B are partitioned as in (i), then the sub-matrix \(\tilde {X}_{11}\) has a complex matrix-variate gamma density with shape parameter α and scale parameter matrix \(B_{11}-B_{12}B_{22}^{-1}B_{21}\), and the sub-matrix \(\tilde {X}_{22}\) has a complex matrix-variate gamma density with shape parameter α and scale parameter matrix \(B_{22}-B_{21}B_{11}^{-1}B_{12}\).

Exercises 5.2

5.2.1

Show that

5.2.2

Show that \(\pi ^{\frac {r(p-r)}{2}}\varGamma _r(\alpha )\varGamma _{p-r}(\alpha -\frac {r}{2})=\varGamma _p(\alpha ).\)

5.2.3

Evaluate (1): \(\int _{X>O}\text{e}^{-\text{tr}(X)}\text{d}X\), (2): \(\int _{X>O}|X|\,\text{e}^{-\text{tr}(X)}\text{d}X\).

5.2.4

Write down (1): Γ 3(α), (2): Γ 4(α) explicitly in the real and complex cases.

5.2.5

Evaluate the integrals in Exercise 5.2.3 for the complex case. In (2), replace |X| by \(|\text{det}(\tilde {X})|\).

5.3. Matrix-variate Type-1 Beta and Type-2 Beta Densities, Real Case

The p × p matrix-variate beta function denoted by B p(α, β) is defined as follows in the real case:

This function has the following integral representations in the real case where it is assumed that \(\Re (\alpha )>\frac {p-1}{2}\) and \(\Re (\beta )>\frac {p-1}{2}\):

For example, for p = 2, let

Then, (5.3.2) will be of the following form:

We will derive two of the integrals (5.3.2)–(5.3.5), the other ones being then directly obtained. Let us begin with the integral representations of Γ p(α) and Γ p(β) for \(\Re (\alpha )>\frac {p-1}{2},~\Re (\beta )>\frac {p-1}{2}\):

Making the transformation U = X + Y, X = V , whose Jacobian is 1, taking out U from \(|U-V|=|U|~|I-U^{-\frac {1}{2}}VU^{-\frac {1}{2}}|\), and then letting \(W= U^{-\frac {1}{2}}VU^{-\frac {1}{2}}\Rightarrow \text{d}V=|U|{ }^{\frac {p+1}{2}}\text{d}W\), we have

Thus, on dividing both sides by Γ p(α + β), we have

This establishes (5.3.2). The initial conditions \(\Re (\alpha )>\frac {p-1}{2},~\Re (\beta )>\frac {p-1}{2}\) are sufficient to justify all the steps above, and hence no conditions are listed at each stage. Now, take Y = I − W to obtain (5.3.3). Let us take the W of (i) above and consider the transformation

which gives

Taking determinants and substituting in (ii) we have

On expressing W, I − W and dW in terms of Z, we have the result (5.3.4). Now, let \(T=Z^{-1}\) with the Jacobian \(\text{d}T=|Z|{ }^{-(p+1)}\text{d}Z\); then (5.3.4) transforms into the integral (5.3.5). These establish all four integral representations of the real matrix-variate beta function. We may also observe that B p(α, β) = B p(β, α), that is, α and β can be interchanged in the beta function. Consider the function

for \(O<X<I,~\Re (\alpha )>\frac {p-1}{2},~\Re (\beta )>\frac {p-1}{2}\), and f 3(X) = 0 elsewhere. This is a type-1 real matrix-variate beta density with the parameters (α, β), where O < X < I means X > O, I − X > O so that all the eigenvalues of X are in the open interval (0, 1). As for

whenever \(Z>O,~ \Re (\alpha )>\frac {p-1}{2},~\Re (\beta )>\frac {p-1}{2}\), and f 4(Z) = 0 elsewhere, this is a p × p real matrix-variate type-2 beta density with the parameters (α, β).
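For numerical work, B p(α, β) is conveniently computed on the log scale from the gamma products, which avoids overflow for large arguments. The sketch below assumes real α, β > (p − 1)∕2; it checks the p = 1 case against a direct midpoint quadrature of the type-1 integral, where \(|X|{ }^{\alpha -\frac {p+1}{2}}\) reduces to \(x^{\alpha -1}\). The function names are hypothetical.

```python
import math


def log_real_multigamma(alpha: float, p: int) -> float:
    """log Gamma_p(alpha) = p(p-1)/4 log(pi) + sum_{j<p} log Gamma(alpha - j/2), real alpha > (p-1)/2."""
    if alpha <= (p - 1) / 2:
        raise ValueError("real alpha must exceed (p - 1)/2")
    return p * (p - 1) / 4 * math.log(math.pi) + sum(math.lgamma(alpha - j / 2) for j in range(p))


def real_matrix_beta(alpha: float, beta: float, p: int) -> float:
    """B_p(alpha, beta) = Gamma_p(alpha) Gamma_p(beta) / Gamma_p(alpha + beta), via logs."""
    return math.exp(log_real_multigamma(alpha, p) + log_real_multigamma(beta, p)
                    - log_real_multigamma(alpha + beta, p))


def scalar_type1_integral(alpha: float, beta: float, n: int = 2000) -> float:
    """p = 1 case of the type-1 integral: int_0^1 x^(alpha-1) (1-x)^(beta-1) dx by the midpoint rule."""
    h = 1.0 / n
    return h * sum(((k + 0.5) * h) ** (alpha - 1) * (1 - (k + 0.5) * h) ** (beta - 1)
                   for k in range(n))
```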

5.3.1. Some properties of real matrix-variate type-1 and type-2 beta densities

In the course of the above derivations, it was shown that the following results hold. If X is a p × p real positive definite matrix having a real matrix-variate type-1 beta density with the parameters (α, β), then: (1): Y 1 = I − X is real type-1 beta distributed with the parameters (β, α); (2): \(Y_2=(I-X)^{-\frac {1}{2}}X(I-X)^{-\frac {1}{2}}\) is real type-2 beta distributed with the parameters (α, β); (3): \(Y_3=(I-X)^{\frac {1}{2}}X^{-1}(I-X)^{\frac {1}{2}}\) is real type-2 beta distributed with the parameters (β, α). If Y is real type-2 beta distributed with the parameters (α, β) then: (4): Z 1 = Y −1 is real type-2 beta distributed with the parameters (β, α); (5): \(Z_2=(I+Y)^{-\frac {1}{2}}Y(I+Y)^{-\frac {1}{2}}\) is real type-1 beta distributed with the parameters (α, β); (6): \(Z_3=I-(I+Y)^{-\frac {1}{2}}Y(I+Y)^{-\frac {1}{2}}=(I+Y)^{-1}\) is real type-1 beta distributed with the parameters (β, α).
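Properties (2) and (4) can be checked in the scalar case p = 1, where the matrix statements reduce to the change-of-variable formula for densities: the density of a transformed variable is the original density evaluated at the inverse map, times the absolute derivative of that inverse. The sketch covers p = 1 only and does not test the matrix versions; the function names are hypothetical.

```python
import math


def beta_fn(a: float, b: float) -> float:
    return math.exp(math.lgamma(a) + math.lgamma(b) - math.lgamma(a + b))


def type1_density(x: float, a: float, b: float) -> float:
    """p = 1 type-1 beta density x^(a-1) (1-x)^(b-1) / B(a, b) on 0 < x < 1."""
    if not 0.0 < x < 1.0:
        return 0.0
    return x ** (a - 1) * (1 - x) ** (b - 1) / beta_fn(a, b)


def type2_density(y: float, a: float, b: float) -> float:
    """p = 1 type-2 beta density y^(a-1) (1+y)^(-(a+b)) / B(a, b) on y > 0."""
    if y <= 0.0:
        return 0.0
    return y ** (a - 1) * (1 + y) ** (-(a + b)) / beta_fn(a, b)


def density_of_y2(y: float, a: float, b: float) -> float:
    """Property (2), p = 1: y = x/(1-x), so x = y/(1+y) and dx/dy = (1+y)^(-2)."""
    return type1_density(y / (1 + y), a, b) / (1 + y) ** 2


def density_of_z1(z: float, a: float, b: float) -> float:
    """Property (4), p = 1: z = 1/y for type-2 (a, b) y, so y = 1/z and |dy/dz| = z^(-2)."""
    return type2_density(1 / z, a, b) / z ** 2
```

If the properties hold, density_of_y2 matches the type-2 (α, β) density and density_of_z1 matches the type-2 (β, α) density at every positive point.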

5.3a. Matrix-variate Type-1 and Type-2 Beta Densities, Complex Case

A matrix-variate beta function in the complex domain is defined as

with a tilde over B. As \(\tilde {B}_p(\alpha ,\beta )=\tilde {B}_p(\beta ,\alpha ),\) clearly α and β can be interchanged. Then, \(\tilde {B}_p(\alpha ,\beta )\) has the following integral representations, where \(\Re (\alpha )>p-1, \Re (\beta )>p-1\):

For instance, consider the integrand in (5.3a.2) for the case p = 2. Let

the diagonal elements of \(\tilde {X}\) being real; since \(\tilde {X}\) is Hermitian positive definite, we have \(x_1>0,~x_3>0,~x_1x_3-(x_2^2+y_2^2)>0\), \(\text{det}(\tilde {X})=x_1x_3-(x_2^2+y_2^2)>0\) and \(\text{det}(I-\tilde {X})=(1-x_1)(1-x_3)-(x_2^2+y_2^2)>0\). The integrand in (5.3a.2) is then

The derivations of (5.3a.2)–(5.3a.5) being parallel to those provided in the real case, they are omitted. We will list one case for each of a type-1 and a type-2 beta density in the complex p × p matrix-variate case:

for \(O<\tilde {X}<I,~\Re (\alpha )>p-1,~ \Re (\beta )>p-1\) and \(\tilde {f}_3(\tilde {X})=0\) elsewhere;

for \(\tilde {Z}>O,~ \Re (\alpha )>p-1,~ \Re (\beta )>p-1\) and \(\tilde {f}_4(\tilde {Z})=0\) elsewhere.
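The complex beta function can be computed in the same way as its real counterpart, from \(\tilde {\varGamma }_p(\alpha )=\pi ^{\frac {p(p-1)}{2}}\varGamma (\alpha )\varGamma (\alpha -1)\cdots \varGamma (\alpha -p+1)\). This is a sketch assuming real α, β > p − 1, with hypothetical function names; for p = 2 it reduces to \(\pi \varGamma (\alpha )\varGamma (\alpha -1)\varGamma (\beta )\varGamma (\beta -1)/[\varGamma (\alpha +\beta )\varGamma (\alpha +\beta -1)]\).

```python
import math


def log_complex_multigamma(alpha: float, p: int) -> float:
    """log tilde Gamma_p(alpha) = p(p-1)/2 log(pi) + sum_{j<p} log Gamma(alpha - j), real alpha > p - 1."""
    if alpha <= p - 1:
        raise ValueError("real alpha must exceed p - 1")
    return p * (p - 1) / 2 * math.log(math.pi) + sum(math.lgamma(alpha - j) for j in range(p))


def complex_matrix_beta(alpha: float, beta: float, p: int) -> float:
    """tilde B_p(alpha, beta) = tilde Gamma_p(alpha) tilde Gamma_p(beta) / tilde Gamma_p(alpha + beta)."""
    return math.exp(log_complex_multigamma(alpha, p) + log_complex_multigamma(beta, p)
                    - log_complex_multigamma(alpha + beta, p))
```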

Properties parallel to (1) to (6) which are listed in Sect. 5.3.1 also hold in the complex case.

5.3.2. Explicit evaluation of type-1 matrix-variate beta integrals, real case

A detailed evaluation of a type-1 matrix-variate beta integral as a multiple integral is presented in this section as the steps will prove useful in connection with other computations; the reader may also refer to Mathai (2014,b). The real matrix-variate type-1 beta function which is denoted by

has the following type-1 beta integral representation:

for \(\Re (\alpha )>\frac {p-1}{2},~\Re (\beta )>\frac {p-1}{2}\) where X is a real p × p symmetric positive definite matrix. The standard derivation of this integral relies on the properties of real matrix-variate gamma integrals after making suitable transformations, as was previously done. It is also possible to evaluate the integral directly and show that it is equal to Γ p(α)Γ p(β)∕Γ p(α + β) where, for example,

A convenient technique for evaluating a real matrix-variate gamma integral consists of making the transformation X = TT ′ where T is a lower triangular matrix whose diagonal elements are positive. However, on applying this transformation, the type-1 beta integral does not simplify due to the presence of the factor \(|I-X|{ }^{\beta -\frac {p+1}{2}}\). Hence, we will evaluate this integral by appropriately partitioning the matrices and then successively integrating out the variables. Letting X = (x ij) be a p × p real matrix, x pp can be extracted from the determinants |X| and |I − X| after partitioning the matrices. Thus, let

where X 11 is the (p − 1) × (p − 1) leading sub-matrix, X 21 is 1 × (p − 1), X 22 = x pp and \(X_{12}=X_{21}^{\prime }\). Then \(|X|=|X_{11}||x_{pp}-X_{21}X_{11}^{-1}X_{12}|\) so that

and

It follows from (i) that \(x_{pp}>X_{21}X_{11}^{-1}X_{12}\) and, from (ii), that \(x_{pp}<1-X_{21}(I-X_{11})^{-1}X_{12}\); thus, we have \(X_{21}X_{11}^{-1}X_{12}<x_{pp}<1-X_{21}(I-X_{11})^{-1}X_{12}\). Let \(y=x_{pp}-X_{21}X_{11}^{-1}X_{12}\Rightarrow \text{d}y=\text{d}x_{pp}\) for fixed X 21, X 11, so that 0 < y < b where

The second factor on the right-hand side of (ii) then becomes

Now letting \(u=\frac {y}{b}\) for fixed b, the terms containing u and b become \(b^{\alpha +\beta -(p+1)+1}u^{\alpha -\frac {p+1}{2}}\) \((1-u)^{\beta -\frac {p+1}{2}}\). Integration over u then gives

for \(~\Re (\alpha )>\frac {p-1}{2}, ~\Re (\beta )>\frac {p-1}{2}.\) Letting \(W=X_{21}X_{11}^{-\frac {1}{2}}(I-X_{11})^{-\frac {1}{2}}\) for fixed X 11, \(\text{d}X_{21}=|X_{11}|{ }^{\frac {1}{2}}|I-X_{11}|{ }^{\frac {1}{2}}\text{d}W\) from Theorem 1.6.1 of Chap. 1 or Theorem 1.18 of Mathai (1997), where X 11 is a (p − 1) × (p − 1) matrix. Now, letting v = WW ′ and integrating out over the Stiefel manifold by applying Theorem 4.2.3 of Chap. 4 or Theorem 2.16 and Remark 2.13 of Mathai (1997), we have

Thus, the integral over b becomes

Then, on multiplying all the factors together, we have

whenever \(\Re (\alpha )>\frac {p-1}{2},~\Re (\beta )>\frac {p-1}{2}\). In this case, \(X_{11}^{(1)}\) represents the (p − 1) × (p − 1) leading sub-matrix at the end of the first set of operations. At the end of the second set of operations, we will denote the (p − 2) × (p − 2) leading sub-matrix by \(X_{11}^{(2)}\), and so on. The second step of the operations begins by extracting x p−1,p−1 and writing

where \(X_{21}^{(2)}\) is a 1 × (p − 2) vector. We then proceed as in the first sequence of steps to obtain the final factors in the following form:

for \(\Re (\alpha )>\frac {p-2}{2},~\Re (\beta )>\frac {p-2}{2}\). Proceeding in such a manner, in the end, the exponent of π will be

and the gamma product will be

These gamma products, along with \(\pi ^{\frac {p(p-1)}{4}},\) can be written as \(\frac {\varGamma _p(\alpha )\varGamma _p(\beta )}{\varGamma _p(\alpha +\beta )}=B_p(\alpha ,~\beta )\); hence the result. It is thus possible to obtain the beta function in the real matrix-variate case by direct evaluation of a type-1 real matrix-variate beta integral.
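The bookkeeping in this derivation can be summarized as one factor per peeled diagonal element. For an m × m block, with k = (m − 1)∕2, the u-integral gives \(\varGamma (\alpha -k)\varGamma (\beta -k)/\varGamma (\alpha +\beta -2k)\), and the integral over the 1 × (m − 1) row (through v = WW ′ and b = 1 − v) gives \(\pi ^{k}\varGamma (\alpha +\beta -2k)/\varGamma (\alpha +\beta -k)\), so that the factor \(\varGamma (\alpha +\beta -2k)\) cancels. This per-step form is our own bookkeeping of the steps above, offered as a sketch; the code multiplies the factors for m = p, p − 1, ..., 1 and compares the product with Γ p(α)Γ p(β)∕Γ p(α + β). The function names are hypothetical.

```python
import math


def log_real_multigamma(alpha: float, p: int) -> float:
    """log Gamma_p(alpha) for real alpha > (p - 1)/2."""
    return p * (p - 1) / 4 * math.log(math.pi) + sum(math.lgamma(alpha - j / 2) for j in range(p))


def log_step_factor(alpha: float, beta: float, m: int) -> float:
    """log of pi^k Gamma(alpha - k) Gamma(beta - k) / Gamma(alpha + beta - k), with k = (m - 1)/2."""
    k = (m - 1) / 2
    return (k * math.log(math.pi) + math.lgamma(alpha - k) + math.lgamma(beta - k)
            - math.lgamma(alpha + beta - k))


def beta_by_peeling(alpha: float, beta: float, p: int) -> float:
    """Product of the step factors for block sizes m = p, p - 1, ..., 1."""
    return math.exp(sum(log_step_factor(alpha, beta, m) for m in range(p, 0, -1)))


def beta_from_multigamma(alpha: float, beta: float, p: int) -> float:
    """Gamma_p(alpha) Gamma_p(beta) / Gamma_p(alpha + beta)."""
    return math.exp(log_real_multigamma(alpha, p) + log_real_multigamma(beta, p)
                    - log_real_multigamma(alpha + beta, p))
```

The powers of π add up to (p − 1)∕2 + (p − 2)∕2 + ⋯ + 0 = p(p − 1)∕4, in agreement with the exponent stated above.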

A similar approach can yield the real matrix-variate beta function from a type-2 real matrix-variate beta integral of the form

where X is a p × p positive definite symmetric matrix and it is assumed that \(\Re (\alpha )>\frac {p-1}{2}\) and \(\Re (\beta )>\frac {p-1}{2}\), the evaluation procedure being parallel.

Example 5.3.1

By direct evaluation as a multiple integral, show that

for p = 2.

Solution 5.3.1

The integral to be evaluated will be denoted by δ. Let

It is seen from (i) that

Letting \(y=x_{22}-\frac {x_{12}^2}{x_{11}}\) so that \(0\le y\le b,\ \mbox{and}\ b=1-\frac {x_{12}^2}{x_{11}}-\frac {x_{12}^2}{1-x_{11}}=1-\frac {x_{12}^2}{x_{11}(1-x_{11})},\) we have

Now, integrating out y, we have

whenever \(\Re (\alpha )>\frac {1}{2}\) and \(\Re (\beta )>\frac {1}{2},\) b being as previously defined. Letting \(w=\frac {x_{12}}{[x_{11}(1-x_{11})]^{\frac {1}{2}}}\), so that \(b=1-w^2\), we have \(\text{d}x_{12}=[x_{11}(1-x_{11})]^{\frac {1}{2}}\text{d}w\) for fixed x 11. The exponents of x 11 and (1 − x 11) then become \(\alpha -\frac {3}{2}+\frac {1}{2}\) and \(\beta -\frac {3}{2}+\frac {1}{2}\), and the integral over w gives the following:

Now, integrating out x 11, we obtain

Then, on collecting the factors from (i) to (iv), we have

Finally, noting that for \(p=2,~\pi ^{\frac {p(p-1)}{4}}=\pi ^{\frac {1}{2}}=\frac {\pi ^{\frac {1}{2}}\pi ^{\frac {1}{2}}}{\pi ^{\frac {1}{2}}}\), the desired result is obtained, that is,

This completes the computations.
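The three one-dimensional integrals of Solution 5.3.1 can also be evaluated numerically and their product compared with B 2(α, β). This sketch uses a midpoint rule; the parameter values in the checks (α, β ≥ 5∕2) are chosen here so that all integrands stay bounded, and the function names are hypothetical.

```python
import math


def midpoint(f, a: float, b: float, n: int) -> float:
    """Composite midpoint rule for int_a^b f(x) dx."""
    h = (b - a) / n
    return h * sum(f(a + (k + 0.5) * h) for k in range(n))


def example_531_by_steps(alpha: float, beta: float, n: int = 4000) -> float:
    # y = b u: int_0^1 u^(alpha - 3/2) (1 - u)^(beta - 3/2) du, leaving b^(alpha + beta - 2)
    u_part = midpoint(lambda u: u ** (alpha - 1.5) * (1 - u) ** (beta - 1.5), 0.0, 1.0, n)
    # w = x12 / [x11 (1 - x11)]^(1/2), so b = 1 - w^2 with -1 < w < 1
    w_part = midpoint(lambda w: (1 - w * w) ** (alpha + beta - 2), -1.0, 1.0, n)
    # the Jacobian of x12 -> w raises both exponents by 1/2: x11^(alpha - 1) (1 - x11)^(beta - 1)
    x_part = midpoint(lambda x: x ** (alpha - 1) * (1 - x) ** (beta - 1), 0.0, 1.0, n)
    return u_part * w_part * x_part


def b2_closed_form(alpha: float, beta: float) -> float:
    """pi^(1/2) Gamma(a) Gamma(a - 1/2) Gamma(b) Gamma(b - 1/2) / (Gamma(a + b) Gamma(a + b - 1/2))."""
    g = math.gamma
    return (math.sqrt(math.pi) * g(alpha) * g(alpha - 0.5) * g(beta) * g(beta - 0.5)
            / (g(alpha + beta) * g(alpha + beta - 0.5)))
```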

5.3a.1. Evaluation of matrix-variate type-1 beta integrals, complex case

The integral representation for \(\tilde {B}_p(\alpha ,\beta )\) in the complex case is

whenever \(\Re (\alpha )>p-1,~\Re (\beta )>p-1\) where det(⋅) denotes the determinant of (⋅) and |det(⋅)|, the absolute value (or modulus) of the determinant of (⋅). In this case, \(\tilde {X}=(\tilde {x}_{ij})\) is a p × p Hermitian positive definite matrix and accordingly, all of its diagonal elements are real and positive. As in the real case, let us extract x pp by partitioning \(\tilde {X}\) as follows:

where \(\tilde {X}_{22}\equiv x_{pp}\) is a real scalar. Then, the absolute values of the determinants have the following representations:

where * indicates conjugate transpose, and

Note that whenever \(\tilde {X}\) and \(I-\tilde {X}\) are Hermitian positive definite, \(\tilde {X}_{11}^{-1}\) and \((I-\tilde {X}_{11})^{-1}\) are also Hermitian positive definite. Further, the Hermitian forms \(\tilde {X}_{21}\tilde {X}_{11}^{-1}\tilde {X}_{12}^{*}\) and \(\tilde {X}_{21}(I-\tilde {X}_{11})^{-1}\tilde {X}_{12}^{*}\) remain real and positive. It follows from (i) and (ii) that

Since the traces of Hermitian forms are real, the lower and upper bounds of x pp are real as well. Let

for fixed \(\tilde {X}_{11}\). Then

and \(|\text{det}(\tilde {X})|{ }^{\alpha -p},~ |\text{det}(I-\tilde {X})|{ }^{\beta -p}\) will become \(|\text{det}(\tilde {X}_{11})|{ }^{\alpha +1-p},~ |\text{det}(I-\tilde {X}_{11})|{ }^{\beta +1-p},\) respectively. Then, we can write

Now, letting u = y∕b, the factors containing u and b will be of the form u α−p (1 − u)β−p b α+β−2p+1; the integral over u then gives

for \(\Re (\alpha )>p-1,~\Re (\beta )>p-1\). Letting \(v=\tilde {W}\tilde {W}^{*}\) and integrating out over the Stiefel manifold by making use of Theorem 4.2a.3 of Chap. 4 or Corollaries 4.5.2 and 4.5.3 of Mathai (1997), we have

The integral over b gives

for \(\Re (\alpha )>p-1,~\Re (\beta )>p-1\). Now, taking the product of all the factors yields

for \(\Re (\alpha )>p-1,~\Re (\beta )>p-1\). On extracting \(x_{p-1,p-1}\) from \(|\text{det}(\tilde {X}_{11})|\) and \(|\text{det}(I-\tilde {X}_{11})|\) and continuing this process, in the end, the exponent of π will be \((p-1)+(p-2)+\cdots +1=\frac {p(p-1)}{2}\) and the gamma product will be

These factors, along with \(\pi ^{\frac {p(p-1)}{2}}\), give

The procedure for evaluating a type-2 matrix-variate beta integral by partitioning matrices is parallel and hence will not be detailed here.

Example 5.3a.1

For p = 2, evaluate the integral

as a multiple integral and show that it evaluates to \(\tilde {B}_2(\alpha ,\beta )\), the beta function in the complex domain.

Solution 5.3a.1

For p = 2, \(\pi ^{\frac {p(p-1)}{2}}=\pi ^{\frac {2(1)}{2}}=\pi \), and

whenever \(\Re (\alpha )>1 \) and \(\Re (\beta )>1\). For p = 2, our matrix and the relevant determinants are

where \(\tilde {x}_{12}^{*}\) is simply the complex conjugate of \(\tilde {x}_{12}\), the latter being a scalar quantity. By expanding the determinants of the partitioned matrices as explained in Sect. 1.3, we have the following:

From (i) and (ii), it is seen that

Note that when \(\tilde {X}\) is Hermitian, \(\tilde {x}_{11}\) and \(\tilde {x}_{22}\) are real and hence we need not place a tilde on these variables. Let \(y=x_{22}-{\tilde {x}_{12}\tilde {x}_{12}^{*}}/{x_{11}}\); y is real since \(\tilde {x}_{12}\tilde {x}_{12}^{*}\) is real. As well, 0 ≤ y ≤ b, where

Further, b is a real scalar of the form \(b=1-\tilde {w}\tilde {w}^{*}\) where \(\tilde {w}=\frac {\tilde {x}_{12}}{[x_{11}(1-x_{11})]^{\frac {1}{2}}}\Rightarrow \text{d}\tilde {x}_{12}=x_{11}(1-x_{11})\text{d}\tilde {w}\). This changes the exponents of \(x_{11}\) and \(1-x_{11}\) to \(\alpha -p+1=\alpha -1\) and \(\beta -p+1=\beta -1\), respectively. Now, on integrating out y, we have

Integrating out \(\tilde {w}\), we have the following:

This integral is evaluated by writing \(z=\tilde {w}\tilde {w}^{*}\). Then, it follows from Theorem 4.2a.3 that

Now, collecting all relevant factors from (i) to (iv), the required representation of the initial integral, denoted by δ, is obtained:

whenever \(\Re (\alpha )>1\) and \(\Re (\beta )>1\). This completes the computations.
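As a numerical sanity check on Example 5.3a.1, the Python sketch below multiplies the three one-dimensional integrals that remain once \(x_{11}\), \(u=y/b\) and \(\tilde {w}\) have been separated. It compares their product with \(\tilde {B}_2(\alpha ,\beta )=\tilde {\varGamma }_2(\alpha )\tilde {\varGamma }_2(\beta )/\tilde {\varGamma }_2(\alpha +\beta )\), where \(\tilde {\varGamma }_2(a)=\pi \varGamma (a)\varGamma (a-1)\). The exponents used are α − 1 and β − 1 for \(x_{11}\), α − 2 and β − 2 for u, and α + β − 3 for \(b=1-|\tilde {w}|^2\). These exponents are our reading of the steps for p = 2. Real parameters, the midpoint rule and all function names are illustrative assumptions.

```python
import math


def midpoint(f, a, b, n=100_000):
    """Composite midpoint rule for the integral of f over [a, b]."""
    step = (b - a) / n
    return step * sum(f(a + (k + 0.5) * step) for k in range(n))


def gamma_tilde_2(a):
    """Complex matrix-variate gamma for p = 2: pi * Gamma(a) * Gamma(a - 1), real a > 1."""
    return math.pi * math.gamma(a) * math.gamma(a - 1)


def beta_tilde_2_closed(alpha, beta):
    """B~_2(alpha, beta) = G~_2(alpha) G~_2(beta) / G~_2(alpha + beta)."""
    return gamma_tilde_2(alpha) * gamma_tilde_2(beta) / gamma_tilde_2(alpha + beta)


def beta_tilde_2_by_steps(alpha, beta):
    """Product of the one-dimensional integrals left after the p = 2 substitutions."""
    # x11: exponents alpha - 1 and beta - 1 once dx12 = x11 (1 - x11) dw is used
    i_x = midpoint(lambda x: x ** (alpha - 1) * (1 - x) ** (beta - 1), 0.0, 1.0)
    # u = y / b: u^(alpha - 2) (1 - u)^(beta - 2)
    i_u = midpoint(lambda u: u ** (alpha - 2) * (1 - u) ** (beta - 2), 0.0, 1.0)
    # w over the unit disc with b^(alpha + beta - 3), b = 1 - |w|^2; polar area element 2*pi*r dr
    i_w = 2 * math.pi * midpoint(lambda r: r * (1 - r * r) ** (alpha + beta - 3), 0.0, 1.0)
    return i_x * i_u * i_w


print(beta_tilde_2_by_steps(3.0, 2.5), beta_tilde_2_closed(3.0, 2.5))
```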

5.3.3. General partitions, real case

In Sect. 5.3.2, we have considered integrating one variable at a time by suitably partitioning the matrices. Would it also be possible to have a general partitioning and integrate a block of variables at a time, rather than integrating out individual variables? We will consider the real matrix-variate gamma integral first. Let the p × p positive definite matrix X be partitioned as follows:

so that \(X_{12}\) is \(p_1\times p_2\) with \(X_{21}=X_{12}^{\prime }\) and \(p_1+p_2=p\). Without any loss of generality, let us assume that \(p_1\ge p_2\). The determinant can be partitioned as follows:

Letting

for fixed \(X_{11}\) and \(X_{22}\) by making use of Theorem 1.6.4 of Chap. 1 or Theorem 1.18 of Mathai (1997),

Letting \(S=YY^{\prime }\) and integrating out over the Stiefel manifold, we have

refer to Theorem 2.16 and Remark 2.13 of Mathai (1997) or Theorem 4.2.3 of Chap. 4. Now, the integral over S gives

for \(\Re (\alpha )>\frac {p_1-1}{2}\). Collecting all the factors, we have

One can observe from this result that the original determinant splits into functions of \(X_{11}\) and \(X_{22}\). This also shows that if we are considering a real matrix-variate gamma density, then the diagonal blocks \(X_{11}\) and \(X_{22}\) are statistically independent, \(X_{11}\) having a \(p_1\)-variate gamma distribution and \(X_{22}\), a \(p_2\)-variate gamma distribution. Note that \(\text{tr}(X)=\text{tr}(X_{11})+\text{tr}(X_{22})\) and hence, the integral over \(X_{22}\) gives \(\varGamma _{p_2}(\alpha )\) and the integral over \(X_{11}\), \(\varGamma _{p_1}(\alpha )\). Thus, the total integral is available as

since \(\pi ^{\frac {p_1p_2}{2}}\varGamma _{p_1}(\alpha )\varGamma _{p_2}(\alpha -\frac {p_1}{2})=\varGamma _p(\alpha )\).

Hence, it is seen that instead of integrating out variables one at a time, we could have also integrated out blocks of variables at a time and verified the result. A similar procedure works for real matrix-variate type-1 and type-2 beta distributions, as well as the matrix-variate gamma and type-1 and type-2 beta distributions in the complex domain.
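The gamma-function identity used in the last step, \(\pi ^{\frac {p_1p_2}{2}}\varGamma _{p_1}(\alpha )\varGamma _{p_2}(\alpha -\frac {p_1}{2})=\varGamma _p(\alpha )\), can be checked numerically. The sketch below assumes the product representation \(\varGamma _p(\alpha )=\pi ^{\frac {p(p-1)}{4}}\varGamma (\alpha )\varGamma (\alpha -\frac {1}{2})\cdots \varGamma (\alpha -\frac {p-1}{2})\) for real \(\alpha >\frac {p-1}{2}\). It works on the log scale to avoid overflow. The function names are hypothetical.

```python
import math


def log_gamma_p(alpha, p):
    """log Gamma_p(alpha) = (p(p-1)/4) log(pi) + sum_{j=1}^{p} log Gamma(alpha - (j-1)/2)."""
    if alpha <= (p - 1) / 2:
        raise ValueError("Gamma_p(alpha) requires alpha > (p-1)/2")
    return (p * (p - 1) / 4) * math.log(math.pi) + sum(
        math.lgamma(alpha - (j - 1) / 2) for j in range(1, p + 1)
    )


def block_identity_gap(alpha, p1, p2):
    """log[pi^(p1 p2/2) Gamma_{p1}(alpha) Gamma_{p2}(alpha - p1/2)] - log Gamma_{p1+p2}(alpha)."""
    lhs = ((p1 * p2 / 2) * math.log(math.pi)
           + log_gamma_p(alpha, p1)
           + log_gamma_p(alpha - p1 / 2, p2))
    return lhs - log_gamma_p(alpha, p1 + p2)


for p1, p2, alpha in [(1, 1, 1.3), (3, 2, 4.0), (2, 5, 7.25)]:
    print(p1, p2, alpha, block_identity_gap(alpha, p1, p2))  # each gap is ~0 up to rounding
```

The gap vanishes because the π exponents add up, \(\frac {p_1(p_1-1)}{4}+\frac {p_2(p_2-1)}{4}+\frac {p_1p_2}{2}=\frac {p(p-1)}{4}\), and because the two gamma products interleave into \(\varGamma (\alpha )\cdots \varGamma (\alpha -\frac {p-1}{2})\).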

5.3.4. Methods avoiding integration over the Stiefel manifold

The general method of partitioning matrices previously described involves the integration over the Stiefel manifold as an intermediate step and relies on Theorem 4.2.3. We will consider another procedure whereby integration over the Stiefel manifold is not required. Let us consider the real gamma case first. Again, we begin with the decomposition

Instead of integrating out \(X_{21}\) or \(X_{12}\), let us integrate out \(X_{22}\) first. Let \(X_{11}\) be a \(p_1\times p_1\) matrix and \(X_{22}\) be a \(p_2\times p_2\) matrix, with \(p_1+p_2=p\). The above partitioning requires \(X_{11}\) to be nonsingular. This holds because, when X is positive definite, both \(X_{11}\) and \(X_{22}\) are positive definite and thereby nonsingular. From the second factor in (5.3.8), \(X_{22}>X_{21}X_{11}^{-1}X_{12}\) since \(X_{22}-X_{21}X_{11}^{-1}X_{12}\) is positive definite. Let \(U=X_{22}-X_{21}X_{11}^{-1}X_{12}\) so that \(\text{d}U=\text{d}X_{22}\) for fixed \(X_{11}\) and \(X_{12}\). Since \(\text{tr}(X)=\text{tr}(X_{11})+\text{tr}(X_{22})\), we have

On integrating out U, we obtain

since \(\alpha -\frac {p+1}{2}=\alpha -\frac {p_1}{2}-\frac {p_2+1}{2}\). Letting

for fixed X 11 (Theorem 1.6.1), we have

But \(\text{tr}(YY^{\prime })\) is the sum of the squares of the \(p_1p_2\) elements of Y, and each integral is of the form \(\int _{-\infty }^{\infty }\text{e}^{-z^2}\text{ d}z=\sqrt {\pi }\). Hence,

We may now integrate out X 11:

Thus, we have the following factors:

since

and

Hence the result. This procedure avoids integration over the Stiefel manifold and does not require that \(p_1\ge p_2\). We could have integrated out \(X_{11}\) first, if needed. In that case, we would have used the following expansion:

We would have then proceeded as before by integrating out X 11 first and would have ended up with

Note 5.3.1: If we are considering a real matrix-variate gamma density, such as the Wishart density, then from the above procedure, observe that after integrating out \(X_{22}\), the only factor containing \(X_{21}\) is the exponential function, which has the structure of a matrix-variate Gaussian density. Hence, for a given \(X_{11}\), \(X_{21}\) is matrix-variate Gaussian distributed. Similarly, for a given \(X_{22}\), \(X_{12}\) is matrix-variate Gaussian distributed. Further, the diagonal blocks \(X_{11}\) and \(X_{22}\) are independently distributed.

The same procedure also applies for the evaluation of the gamma integrals in the complex domain. Since the steps are parallel, they will not be detailed here.

5.3.5. Arbitrary moments of the determinants, real gamma and beta matrices

Let the p × p real positive definite matrix X have a real matrix-variate gamma density with the parameters (α, B > O). Then for an arbitrary h, we can evaluate the h-th moment of the determinant of X with the help of the matrix-variate gamma integral, namely,

By making use of (i), we can evaluate the h-th moment in a real matrix-variate gamma density with the parameters (α, B > O) by considering the associated normalizing constant. Let \(u_1=|X|\). Then, the moments of \(u_1\) can be obtained by integrating against the density of X:

Thus,

This is evaluated by observing that when \(E[u_1^h]\) is taken, α is replaced by α + h in the integrand and hence, the answer is obtained from equation (i). The same procedure enables one to evaluate the h-th moment of the determinants of type-1 beta and type-2 beta matrices. Let Y be a p × p real positive definite matrix having a real matrix-variate type-1 beta density with the parameters (α, β) and let \(u_2=|Y|\). Then, the h-th moment of \(u_2\) is obtained as follows:

In a similar manner, let u 3 = |Z| where Z has a p × p real matrix-variate type-2 beta density with the parameters (α, β). In this case, take α + β = (α + h) + (β − h), replacing α by α + h and β by β − h. Then, considering the normalizing constant of a real matrix-variate type-2 beta density, we obtain the h-th moment of u 3 as follows:

Only a limited range of moments exists in this case. Indeed, \(\Re (\alpha +h)>\frac {p-1}{2}\) implies that \(\Re (h)>-\Re (\alpha )+\frac {p-1}{2}\), and \(\Re (\beta -h)>\frac {p-1}{2}\) means that \(\Re (h)<\Re (\beta )-\frac {p-1}{2}\). Accordingly, only moments in the range \(-\Re (\alpha )+\frac {p-1}{2}<\Re (h)<\Re (\beta )-\frac {p-1}{2}\) will exist. We can summarize the above results as follows: When X is distributed as a real p × p matrix-variate gamma with the parameters (α, B > O),

When Y has a p × p real matrix-variate type-1 beta density with the parameters (α, β) and if u 2 = |Y | then

When the p × p real positive definite matrix Z has a real matrix-variate type-2 beta density with the parameters (α, β), then letting u 3 = |Z|,

Let us examine (5.3.9):

where \(x_j\) is a real scalar gamma random variable with shape parameter \(\alpha -\frac {j-1}{2}\) and scale parameter \(\lambda _j\), the \(\lambda _j>0,~j=1,\ldots ,p\), being the eigenvalues of B > O. This follows by observing that the determinant is the product, and the trace is the sum, of the eigenvalues \(\lambda _1,\ldots ,\lambda _p\). Further, \(x_1,\ldots ,x_p\) are independently distributed. Hence, structurally, we have the following representation:

where x j has the density

for \(\Re (\alpha )>\frac {j-1}{2},~ \lambda _j>0\) and zero otherwise. Similarly, when the p × p real positive definite matrix Y has a real matrix-variate type-1 beta density with the parameters (α, β), the determinant, |Y |, has the structural representation

where \(y_j\) is a real scalar type-1 beta random variable with the parameters \((\alpha -\frac {j-1}{2},~\beta )\) for j = 1, …, p. When the p × p real positive definite matrix Z has a real matrix-variate type-2 beta density, then |Z|, the determinant of Z, has the following structural representation:

where z j has a real scalar type-2 beta density with the parameters \((\alpha -\frac {j-1}{2},~\beta -\frac {j-1}{2})\) for j = 1, …, p.
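The following Python sketch evaluates the h-th moment of |Z| in the real type-2 beta case in two ways. The first uses the ratio \(\varGamma _p(\alpha +h)\varGamma _p(\beta -h)/[\varGamma _p(\alpha )\varGamma _p(\beta )]\), obtained by replacing α with α + h and β with β − h in the normalizing constant. The second uses the structural representation as a product of scalar type-2 beta moments. The sketch also enforces the admissible range of h stated above. Real parameters and the helper names are assumptions made for illustration.

```python
import math


def log_gamma_p(alpha, p):
    """log Gamma_p(alpha) = (p(p-1)/4) log(pi) + sum_j log Gamma(alpha - (j-1)/2), real alpha."""
    return (p * (p - 1) / 4) * math.log(math.pi) + sum(
        math.lgamma(alpha - (j - 1) / 2) for j in range(1, p + 1)
    )


def type2_det_moment(alpha, beta, h, p):
    """E[|Z|^h] = Gamma_p(alpha+h) Gamma_p(beta-h) / (Gamma_p(alpha) Gamma_p(beta)),
    defined only for -alpha + (p-1)/2 < h < beta - (p-1)/2."""
    lo, hi = -alpha + (p - 1) / 2, beta - (p - 1) / 2
    if not lo < h < hi:
        raise ValueError(f"h = {h} lies outside the admissible range ({lo}, {hi})")
    return math.exp(log_gamma_p(alpha + h, p) + log_gamma_p(beta - h, p)
                    - log_gamma_p(alpha, p) - log_gamma_p(beta, p))


def type2_det_moment_structural(alpha, beta, h, p):
    """Same moment via |Z| = z_1 ... z_p, with z_j independent scalar type-2 beta
    variables having parameters (alpha - (j-1)/2, beta - (j-1)/2)."""
    result = 1.0
    for j in range(1, p + 1):
        a, b = alpha - (j - 1) / 2, beta - (j - 1) / 2
        # scalar type-2 beta moment: Gamma(a+h) Gamma(b-h) / (Gamma(a) Gamma(b))
        result *= math.exp(math.lgamma(a + h) + math.lgamma(b - h)
                           - math.lgamma(a) - math.lgamma(b))
    return result


print(type2_det_moment(3.0, 2.5, 1.0, 3), type2_det_moment_structural(3.0, 2.5, 1.0, 3))
```

With α = 3, β = 2.5 and p = 3, the admissible range is −2 < h < 1.5, so asking for h = 2 raises an error rather than returning a meaningless value.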

Example 5.3.2

Consider a real 2 × 2 matrix X having a real matrix-variate distribution. Derive the density of the determinant |X| if X has (a) a gamma distribution with the parameters (α, B = I); (b) a real type-1 beta distribution with the parameters \((\alpha =\frac {3}{2},~\beta =\frac {3}{2})\); (c) a real type-2 beta distribution with the parameters \((\alpha =\frac {3}{2},~\beta =\frac {3}{2})\).

Solution 5.3.2

We will derive the density in these three cases by using three different methods to illustrate the possibility of making use of various approaches for solving such problems. (a) Let u 1 = |X| in the gamma case. Then for an arbitrary h,

Since the gammas differ by \(\frac {1}{2}\), they can be combined by utilizing the following identity:

which is the multiplication formula for gamma functions. For m = 2, we have the duplication formula:

Thus,

Now, by taking \(z=\alpha -\frac {1}{2}+h\) in the numerator and \(z=\alpha -\frac {1}{2}\) in the denominator, we can write

Accordingly,

This shows that \(v=2u_1^{\frac {1}{2}}\) has a real scalar gamma distribution with the parameters (2α − 1, 1) whose density is

Hence the density of u 1, denoted by f 1(u 1), is the following:

and zero elsewhere. It can easily be verified that f 1(u 1) is a density.
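For part (a), the duplication formula and the conclusion that \(v=2u_1^{\frac {1}{2}}\) is gamma distributed with parameters (2α − 1, 1) can be checked numerically. The sketch below compares the two moment expressions and adds a small Monte Carlo run. For the simulation, we rely on the Wishart parametrization of Sect. 5.5 (\(\alpha =\frac {m}{2},~B=\frac {1}{2}\varSigma ^{-1}\)) and assume the following: \(X=\frac {1}{2}ZZ'\), with Z a 2 × m matrix of independent standard normal variables, is a real 2 × 2 matrix-variate gamma matrix with α = m∕2 and B = I. The seed, sample size and names are arbitrary choices.

```python
import math
import random


def duplication_gap(z):
    """log[Gamma(z) Gamma(z + 1/2)] - log[pi^(1/2) 2^(1 - 2z) Gamma(2z)]; zero by duplication."""
    lhs = math.lgamma(z) + math.lgamma(z + 0.5)
    rhs = 0.5 * math.log(math.pi) + (1 - 2 * z) * math.log(2) + math.lgamma(2 * z)
    return lhs - rhs


def u1_moment(alpha, h):
    """E[u1^h] = Gamma_2(alpha + h) / Gamma_2(alpha) for the 2x2 real gamma case, B = I."""
    return math.exp(math.lgamma(alpha + h) + math.lgamma(alpha - 0.5 + h)
                    - math.lgamma(alpha) - math.lgamma(alpha - 0.5))


def u1_moment_via_v(alpha, h):
    """Same moment if v = 2 u1^(1/2) is gamma(2 alpha - 1, 1): u1^h = v^(2h) / 4^h."""
    return math.exp(math.lgamma(2 * alpha - 1 + 2 * h) - math.lgamma(2 * alpha - 1)) / 4 ** h


def simulate_v_mean(m, n_samples=50_000, seed=1):
    """Monte Carlo mean of v = 2 |X|^(1/2) with X = Z Z' / 2, Z a 2 x m standard normal matrix."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(n_samples):
        z1 = [rng.gauss(0.0, 1.0) for _ in range(m)]  # first row of Z
        z2 = [rng.gauss(0.0, 1.0) for _ in range(m)]  # second row of Z
        x11 = 0.5 * sum(a * a for a in z1)
        x22 = 0.5 * sum(b * b for b in z2)
        x12 = 0.5 * sum(a * b for a, b in zip(z1, z2))
        # guard against a tiny negative determinant caused by rounding
        total += 2.0 * math.sqrt(max(x11 * x22 - x12 * x12, 0.0))
    return total / n_samples


print(duplication_gap(1.7), u1_moment(2.5, 1.3), u1_moment_via_v(2.5, 1.3))
print(simulate_v_mean(5))  # should be near 2 * (5/2) - 1 = 4
```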

(b) Let u 2 = |X|. Then for an arbitrary h, \(\alpha =\frac {3}{2}\) and \(\beta =\frac {3}{2}\),

the last expression resulting from an application of the partial fraction technique. This is the h-th moment of \(u_2\); the corresponding density, namely

and zero elsewhere, is readily seen to be bona fide.
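We worked the partial fractions through ourselves, since the displayed expressions are not reproduced in this copy. This gives \(E[u_2^h]=\frac {3}{(1+h)(\frac {3}{2}+h)(2+h)}=\frac {6}{1+h}-\frac {12}{\frac {3}{2}+h}+\frac {6}{2+h}\), which corresponds to the density \(6(1-u_2^{\frac {1}{2}})^2\), 0 ≤ u_2 ≤ 1. The sketch below compares moments computed numerically from this candidate density with the closed form. It checks our own evaluation and does not reproduce the authors' displayed result.

```python
import math


def f2(u):
    """Candidate density of u2 = |X| (real 2x2 type-1 beta, alpha = beta = 3/2):
    6 - 12 u^(1/2) + 6 u = 6 (1 - u^(1/2))^2 on [0, 1], zero elsewhere."""
    return 6.0 * (1.0 - math.sqrt(u)) ** 2 if 0.0 <= u <= 1.0 else 0.0


def moment_from_density(h, n=100_000):
    """Midpoint-rule approximation of int_0^1 u^h f2(u) du (used here with h >= 0)."""
    step = 1.0 / n
    return step * sum(((k + 0.5) * step) ** h * f2((k + 0.5) * step) for k in range(n))


def moment_closed_form(h):
    """E[u2^h] = 3 / ((1 + h)(3/2 + h)(2 + h))."""
    return 3.0 / ((1 + h) * (1.5 + h) * (2 + h))


for h in (0.0, 1.0, 2.5):
    print(h, moment_from_density(h), moment_closed_form(h))
```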

(c) Let the density of \(u_3=|X|\) be denoted by \(f_3(u_3)\). The Mellin transform of \(f_3(u_3)\), with Mellin parameter s, is

the corresponding density being available by taking the inverse Mellin transform, namely,

where \(i=\sqrt {-1}\) and c in the integration contour is such that 0 < c < 2. The integral in (i) is available as the sum of residues at the poles of \(\varGamma (s)\varGamma (s+\frac {1}{2})\) for \(0\le u_3\le 1\) and the sum of residues at the poles of \(\varGamma (2-s)\varGamma (\frac {5}{2}-s)\) for \(1<u_3<\infty \). We could also combine Γ(s) and \(\varGamma (s+\frac {1}{2})\), as well as Γ(2 − s) and \(\varGamma (\frac {5}{2}-s)\), by making use of the duplication formula for gamma functions. We would then be able to identify the functions in each of the sectors \(0\le u_3\le 1\) and \(1<u_3<\infty \); these will be functions of \(u_3^{\frac {1}{2}}\), as was done in case (a). In order to illustrate the method relying on the inverse Mellin transform, we will instead evaluate the density \(f_3(u_3)\) as a sum of residues. The poles of \(\varGamma (s)\varGamma (s+\frac {1}{2})\) are simple and hence two sums of residues are obtained for \(0\le u_3\le 1\). The poles of Γ(s) occur at s = −ν, ν = 0, 1, …, and those of \(\varGamma (s+\frac {1}{2})\) occur at \(s=-\frac {1}{2}-\nu ,~\nu =0,1,\ldots \). The residues and their sums will be evaluated with the help of the following two lemmas.

Lemma 5.3.1

Consider a function \(\varGamma (\gamma +s)\phi (s)u^{-s}\) whose poles are simple. The residue at the pole \(s=-\gamma -\nu ,~\nu =0,1,\ldots \), denoted by \(R_{\nu }\), is given by

Lemma 5.3.2

When Γ(δ) and Γ(δ − ν) are defined

where \((a)_{\nu }=a(a+1)\cdots (a+\nu -1),~(a)_0=1,~a\ne 0\), is the Pochhammer symbol.
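The displayed identity of Lemma 5.3.2 is not reproduced in this copy. The standard relation it concerns is presumably \(\varGamma (\delta -\nu )=(-1)^{\nu }\varGamma (\delta )/(1-\delta )_{\nu }\), which follows from applying \(\varGamma (z+1)=z\varGamma (z)\) ν times. The Python sketch below checks this relation at a few real points, together with \(\varGamma (\alpha +k)=\varGamma (\alpha )(\alpha )_k\). The function names are illustrative.

```python
import math


def pochhammer(a, nu):
    """(a)_nu = a (a + 1) ... (a + nu - 1), with (a)_0 = 1."""
    result = 1.0
    for k in range(nu):
        result *= a + k
    return result


def gamma_shifted_down(delta, nu):
    """Gamma(delta - nu) computed as (-1)^nu Gamma(delta) / (1 - delta)_nu."""
    return (-1) ** nu * math.gamma(delta) / pochhammer(1 - delta, nu)


for nu in range(5):
    # math.gamma accepts negative non-integer arguments such as 2.3 - 3 = -0.7
    print(nu, gamma_shifted_down(2.3, nu), math.gamma(2.3 - nu))
```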

Observe that Γ(α) is defined for all α ≠ 0, −1, −2, …, and that an integral representation requires \(\Re (\alpha )>0\). As well, \(\varGamma (\alpha +k)=\varGamma (\alpha )(\alpha )_k,~k=1,2,\ldots \). With the help of Lemmas 5.3.1 and 5.3.2, the sum of the residues at the poles of Γ(s) in the integral in (i), excluding the constant \(\frac {4}{\pi }\), is the following:

where \({}_2F_1(\cdot )\) is Gauss' hypergeometric function. The same procedure consisting of taking the sum of the residues at the poles \(s=-\frac {1}{2}-\nu ,~ \nu =0,1,\ldots , \) gives

The inverse Mellin transform for the sector \(1<u_3<\infty \) is available as the sum of residues at the poles of \(\varGamma (\frac {5}{2}-s)\) and Γ(2 − s), which occur at \(s=\frac {5}{2}+\nu \) and \(s=2+\nu \) for ν = 0, 1, … . The sum of residues at the poles of \(\varGamma (\frac {5}{2}-s)\) is the following:

and the sum of the residues at the poles of Γ(2 − s) is given by

Now, on combining all the hypergeometric series and multiplying the result by the constant \(\frac {4}{\pi },\) the final representation of the required density is obtained as

This completes the computations.

5.3a.2. Arbitrary moments of the determinants in the complex case

In the complex matrix-variate case, one can consider the absolute value of the determinant, which will be real; however, the parameters will be different from those in the real case. For example, consider the complex matrix-variate gamma density. If \(\tilde {X}\) has a p × p complex matrix-variate gamma density with the parameters \((\alpha ,~\tilde {B}>O)\), then the h-th moment of the absolute value of the determinant of \(\tilde {X}\) is the following:

that is, \(|\text{ det}(\tilde {X})|\) has the structural representation

where \(\tilde {x}_j\) is a real scalar gamma random variable with the parameters \((\alpha -(j-1),~\lambda _j)\), j = 1, …, p, and the \(\tilde {x}_j\)’s are independently distributed. Similarly, when \(\tilde {Y}\) is a p × p complex Hermitian positive definite matrix having a complex matrix-variate type-1 beta density with the parameters (α, β), the absolute value of the determinant of \(\tilde {Y}\), \(|{\text{det}(\tilde {Y})}|\), has the structural representation

where the \(\tilde {y}_j\)’s are independently distributed, \(\tilde {y}_j\) being a real scalar type-1 beta random variable with the parameters (α − (j − 1), β), j = 1, …, p. When \(\tilde {Z}\) is a p × p Hermitian positive definite matrix having a complex matrix-variate type-2 beta density with the parameters (α, β), then for arbitrary h, the h-th moment of the absolute value of the determinant is given by

so that the absolute value of the determinant of \(\tilde {Z}\) has the following structural representation:

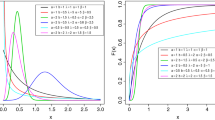

where the \(\tilde {z}_j\)’s are independently distributed real scalar type-2 beta random variables with the parameters (α − (j − 1), β − (j − 1)) for j = 1, …, p. Thus, in the real case, the determinant and, in the complex case, the absolute value of the determinant have structural representations in terms of products of independently distributed real scalar random variables. The following is a summary of what has been discussed so far:

| Distribution | Parameters, real case | Parameters, complex case |
|---|---|---|
| gamma | \((\alpha -\frac {j-1}{2},~\lambda _j)\) | \((\alpha -(j-1),~\lambda _j)\) |
| type-1 beta | \((\alpha -\frac {j-1}{2},~\beta )\) | \((\alpha -(j-1),~\beta )\) |
| type-2 beta | \((\alpha -\frac {j-1}{2},~\beta -\frac {j-1}{2})\) | \((\alpha -(j-1),~\beta -(j-1))\) |

for j = 1, …, p. When we consider the determinant in the real case, the parameters differ by \(\frac {1}{2}\) whereas the parameters differ by 1 in the complex domain. Whether in the real or complex cases, the individual variables appearing in the structural representations are real scalar variables that are independently distributed.
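The difference between the two columns of the table can be illustrated by simulation. Assume that \(\tilde {X}=\tilde {Z}\tilde {Z}^{*}\) is complex 2 × 2 matrix-variate gamma with α = m and B = I (the complex Wishart case), where \(\tilde {Z}\) is a 2 × m matrix of independent standard complex normal entries whose real and imaginary parts are N(0, 1∕2). Under that assumption, the structural representation predicts \(E[|\text{det}(\tilde {X})|]=\alpha (\alpha -1)\), whereas the real-case parameters would give α(α − 1∕2). The Python sketch is illustrative only; the seed, sample size and names are arbitrary.

```python
import math
import random


def structural_det_mean(alpha, p, complex_case):
    """Mean of |X| (real case) or |det(X)| (complex case) when B = I: the product of the
    gamma means alpha - (j-1)/2 (real) or alpha - (j-1) (complex), j = 1..p."""
    step = 1.0 if complex_case else 0.5
    return math.prod(alpha - (j - 1) * step for j in range(1, p + 1))


def simulate_complex_det_mean(m, n_samples=50_000, seed=2):
    """Monte Carlo mean of det(X) for X = Z Z^*, Z a 2 x m matrix of iid standard complex normals."""
    rng = random.Random(seed)
    sd = math.sqrt(0.5)  # real and imaginary parts each N(0, 1/2), so E|z|^2 = 1
    total = 0.0
    for _ in range(n_samples):
        z1 = [complex(rng.gauss(0.0, sd), rng.gauss(0.0, sd)) for _ in range(m)]
        z2 = [complex(rng.gauss(0.0, sd), rng.gauss(0.0, sd)) for _ in range(m)]
        x11 = sum(abs(a) ** 2 for a in z1)                    # real diagonal element
        x22 = sum(abs(b) ** 2 for b in z2)                    # real diagonal element
        x12 = sum(a * b.conjugate() for a, b in zip(z1, z2))  # complex off-diagonal element
        total += x11 * x22 - abs(x12) ** 2                    # det of a 2x2 Hermitian matrix
    return total / n_samples


print(structural_det_mean(3.0, 2, complex_case=False))  # 3 * 2.5 = 7.5
print(structural_det_mean(3.0, 2, complex_case=True))   # 3 * 2 = 6
print(simulate_complex_det_mean(3))                      # should be near 6
```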

Example 5.3a.2

Even when p = 2, some of the poles will be of order 2, since the gammas differ by integers in the complex case; hence general numerical examples quickly become involved. Actually, when poles of order 2 or more are present, the series representation will contain logarithms as well as psi and zeta functions. A simple illustrative example is now considered. Let \(\tilde {X}\) be a 2 × 2 matrix having a complex matrix-variate type-1 beta distribution with the parameters (α = 2, β = 2). Evaluate the density of \(\tilde {u}=|\text{det}(\tilde {X})|\).

Solution 5.3a.2

Let us take the (s − 1)th moment of \(\tilde {u}\) which corresponds to the Mellin transform of the density of \(\tilde {u}\), with Mellin parameter s:

The inverse Mellin transform then yields the density of \(\tilde {u}\), denoted by \(\tilde {g}(\tilde {u})\), which is

where the c in the contour is any real number c > 0. There is a pole of order 1 at s = 0 and another pole of order 1 at s = −2, the residues at these poles being obtained as follows:

The pole at s = −1 is of order 2 and hence the residue is given by

Hence the density is the following:

and zero elsewhere, where u is real. It can readily be shown that \(\tilde {g}(\tilde {u})\ge 0\) and \(\int _0^1\tilde {g}(\tilde {u})\text{d}u=1\). This completes the computations.
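For this example, our own evaluation gives the Mellin transform \(E[\tilde {u}^{s-1}]=\frac {12}{s(s+1)^2(s+2)}\), using \(\tilde {\varGamma }_2(a)=\pi \varGamma (a)\varGamma (a-1)\). The three residues then give the candidate density \(6-6u^2+12u\ln u\), 0 < u ≤ 1, where the logarithm arises from the double pole at s = −1. The Python sketch below checks numerically that this candidate reproduces the Mellin transform at a few real s and is nonnegative. The displayed density is not reproduced in this copy, so treat the sketch as a consistency check rather than the authors' formula.

```python
import math


def g_tilde(u):
    """Candidate density 6 - 6 u^2 + 12 u ln(u) on (0, 1], zero elsewhere: residues of
    12 u^(-s) / (s (s+1)^2 (s+2)) at s = 0, s = -2 (simple) and s = -1 (double)."""
    if 0.0 < u <= 1.0:
        return 6.0 - 6.0 * u * u + 12.0 * u * math.log(u)
    return 0.0


def mellin_numeric(s, n=100_000):
    """Midpoint-rule approximation of int_0^1 u^(s-1) g_tilde(u) du for real s >= 1."""
    step = 1.0 / n
    total = 0.0
    for k in range(n):
        u = (k + 0.5) * step
        total += u ** (s - 1) * g_tilde(u)
    return step * total


def mellin_closed_form(s):
    """E[u^(s-1)] = 12 / (s (s + 1)^2 (s + 2)) for alpha = beta = 2, p = 2."""
    return 12.0 / (s * (s + 1) ** 2 * (s + 2))


for s in (1.0, 2.0, 3.5):
    print(s, mellin_numeric(s), mellin_closed_form(s))  # s = 1 gives total probability 1
```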

Exercises 5.3

5.3.1

Evaluate the real p × p matrix-variate type-2 beta integral from first principles or by direct evaluation by partitioning the matrix as in Sect. 5.3.3 (general partitioning).

5.3.2

Repeat Exercise 5.3.1 for the complex case.

5.3.3

In the 2 × 2 partitioning of a p × p real matrix-variate gamma density with shape parameter α and scale parameter I, where the first diagonal block X 11 is r × r, r < p, compute the density of the rectangular block X 12.

5.3.4

Repeat Exercise 5.3.3 for the complex case.

5.3.5

Let the p × p real matrices X 1 and X 2 have real matrix-variate gamma densities with the parameters (α 1, B > O) and (α 2, B > O), respectively, B being the same for both distributions. Compute the density of (1): \(U_1=X_2^{-\frac {1}{2}}X_1X_2^{-\frac {1}{2}}\), (2): \(U_2=X_1^{\frac {1}{2}}X_2^{-1}X_1^{\frac {1}{2}}\), (3): \(U_3=(X_1+X_2)^{-\frac {1}{2}}X_2(X_1+X_2)^{-\frac {1}{2}}\), when X 1 and X 2 are independently distributed.

5.3.6

Repeat Exercise 5.3.5 for the complex case.

5.3.7

In the transformation Y = I − X that was used in Sect. 5.3.1, the Jacobian is \(\text{d}Y=(-1)^{\frac {p(p+1)}{2}}\text{d}X\). What happened to the factor \((-1)^{\frac {p(p+1)}{2}}\)?

5.3.8

Consider X in the (a) 2 × 2, (b) 3 × 3 real matrix-variate case. If X is real matrix-variate gamma distributed, then derive the densities of the determinant of X in (a) and (b) if the parameters are \(\alpha =\frac {5}{2},~B=I\). Consider \(\tilde {X}\) in the (a) 2 × 2, (b) 3 × 3 complex matrix-variate case. Derive the distributions of \(|\text{det}(\tilde {X})|\) in (a) and (b) if \(\tilde {X}\) is complex matrix-variate gamma distributed with parameters (α = 2 + i, B = I).

5.3.9

Consider the real cases (a) and (b) in Exercise 5.3.8 except that the distribution is type-1 beta with the parameters \((\alpha =\frac {5}{2},~\beta =\frac {5}{2})\). Derive the density of the determinant of X.

5.3.10

Consider \(\tilde {X}\), (a) 2 × 2, (b) 3 × 3 complex matrix-variate type-1 beta distributed with the parameters \((\alpha =\frac {5}{2}+i,~\beta =\frac {5}{2}-i)\). Then derive the density of \(|\text{det}(\tilde {X})|\) in the cases (a) and (b).

5.3.11

Consider X, (a) 2 × 2, (b) 3 × 3 real matrix-variate type-2 beta distributed with the parameters \((\alpha =\frac {3}{2},~\beta =\frac {3}{2})\). Derive the density of |X| in the cases (a) and (b).

5.3.12

Consider \(\tilde {X},\) (a) 2 × 2, (b) 3 × 3 complex matrix-variate type-2 beta distributed with the parameters \((\alpha =\frac {3}{2},~\beta =\frac {3}{2})\). Derive the density of \(|\text{det}(\tilde {X})|\) in the cases (a) and (b).

5.4. The Densities of Some General Structures

Three cases were examined in Section 5.3: the product of real scalar gamma variables, the product of real scalar type-1 beta variables and the product of real scalar type-2 beta variables, where in all these instances, the individual variables were mutually independently distributed. Let us now consider the corresponding general structures. Let x j be a real scalar gamma variable with shape parameter α j and scale parameter 1 for convenience and let the x j’s be independently distributed for j = 1, …, p. Then, letting v 1 = x 1⋯x p,

Now, let y 1, …, y p be independently distributed real scalar type-1 beta random variables with the parameters \((\alpha _j,~\beta _j), ~ \Re (\alpha _j)>0,~\Re (\beta _j)>0,~j=1,\ldots ,p,\) and v 2 = y 1⋯y p,

for \(\Re (\alpha _j)>0,~\Re (\beta _j)>0,~ \Re (\alpha _j+h)>0,~j=1,\ldots ,p\). Similarly, let \(z_1,\ldots ,z_p\) be independently distributed real scalar type-2 beta random variables with the parameters \((\alpha _j,~\beta _j),~j=1,\ldots ,p\), and let \(v_3=z_1\cdots z_p\). Then, we have

for \(\Re (\alpha _j)>0,~ \Re (\beta _j)>0,~ \Re (\alpha _j+h)>0,~ \Re (\beta _j-h)>0,~j=1,\ldots ,p\). The corresponding densities of v 1, v 2, v 3, respectively denoted by g 1(v 1), g 2(v 2), g 3(v 3), are available from the inverse Mellin transforms by taking (5.4.1) to (5.4.3) as the Mellin transforms of g 1, g 2, g 3 with h = s − 1 for a complex variable s where s is the Mellin parameter. Then, for suitable contours L, the densities can be determined as follows:

where \(\Re (\alpha _j+s-1)>0,~ j=1,\ldots ,p,\) and g 1(v 1) = 0 elsewhere. This last representation is expressed in terms of a G-function, which will be defined in Sect. 5.4.1.

where \(G_{p,p}^{p,0}\) is a G-function, \(\Re (\alpha _j+s-1)>0,~\Re (\alpha _j)>0,~ \Re (\beta _j)>0,~j=1,\ldots ,p,\) and g 2(v 2) = 0 elsewhere.

where \(\Re (\alpha _j)>0,~\Re (\beta _j)>0,~\Re (\alpha _j+s-1)>0,~\Re (\beta _j-s+1)>0,~ j=1,\ldots ,p,\) and g 3(v 3) = 0 elsewhere.
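The moment expressions of this section can be sanity-checked by simulation. The sketch below draws products of independent scalar gamma variables (unit scale) and type-1 beta variables with Python's `random.gammavariate` and `random.betavariate`. It then compares Monte Carlo estimates of \(E[v_1^h]\) and \(E[v_2^h]\) with \(\prod _j\varGamma (\alpha _j+h)/\varGamma (\alpha _j)\) and \(\prod _j\frac {\varGamma (\alpha _j+h)\varGamma (\alpha _j+\beta _j)}{\varGamma (\alpha _j)\varGamma (\alpha _j+\beta _j+h)}\). The parameter values, seed and function names are arbitrary illustrative choices.

```python
import math
import random

alphas, betas = (2.0, 3.0), (1.0, 2.5)  # illustrative parameters (alpha_j, beta_j), j = 1, 2


def gamma_product_moment(alphas, h):
    """E[v1^h] = prod_j Gamma(alpha_j + h) / Gamma(alpha_j) for unit-scale gamma factors."""
    return math.prod(math.gamma(a + h) / math.gamma(a) for a in alphas)


def beta_product_moment(alphas, betas, h):
    """E[v2^h] = prod_j Gamma(a_j + h) Gamma(a_j + b_j) / (Gamma(a_j) Gamma(a_j + b_j + h))."""
    return math.prod(
        math.gamma(a + h) * math.gamma(a + b) / (math.gamma(a) * math.gamma(a + b + h))
        for a, b in zip(alphas, betas)
    )


def gamma_draw(rng):
    """One draw of v1 = x1 x2 with independent gamma(alpha_j, 1) factors."""
    return math.prod(rng.gammavariate(a, 1.0) for a in alphas)


def beta_draw(rng):
    """One draw of v2 = y1 y2 with independent type-1 beta(alpha_j, beta_j) factors."""
    return math.prod(rng.betavariate(a, b) for a, b in zip(alphas, betas))


def mc_moment(draw, h, n=50_000, seed=3):
    """Monte Carlo estimate of E[v^h], where draw(rng) returns one realization of v."""
    rng = random.Random(seed)
    return sum(draw(rng) ** h for _ in range(n)) / n


print(mc_moment(gamma_draw, 0.5), gamma_product_moment(alphas, 0.5))
print(mc_moment(beta_draw, 1.0), beta_product_moment(alphas, betas, 1.0))
```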

5.4.1. The G-function

The G-function is defined in terms of the following Mellin-Barnes integral:

where the parameters a j, j = 1, …, p, b j, j = 1, …, q, can be complex numbers. There are three general contours L, say L 1, L 2, L 3 where L 1 is a loop starting and ending at −∞ that contains all the poles of Γ(b j + s), j = 1, …, m, and none of those of Γ(1 − a j − s), j = 1, …, n. In general L will separate the poles of Γ(b j + s), j = 1, …, m, from those of Γ(1 − a j − s), j = 1, …, n, which lie on either side of the contour. L 2 is a loop starting and ending at + ∞, which encloses all the poles of Γ(1 − a j − s), j = 1, …, n. L 3 is the straight line contour c − i∞ to c + i∞. The existence of the contours, convergence conditions, explicit series forms for general parameters as well as applications are available in Mathai (1993). G-functions can readily be evaluated with symbolic computing packages such as MAPLE and Mathematica.

Example 5.4.1

Let x 1, x 2, x 3 be independently distributed real scalar random variables, x 1 being real gamma distributed with the parameters (α 1 = 3, β 1 = 2), x 2, real type-1 beta distributed with the parameters \((\alpha _2=\frac {3}{2}+2i,~\beta _2=\frac {1}{2})\) and x 3, real type-2 beta distributed with the parameters \((\alpha _3=\frac {5}{2}+i,~\beta _3=2-i)\). Let \(u_1=x_1x_2x_3,~ u_2=\frac {x_1}{x_2x_3}\) and \( u_3=\frac {x_2}{x_1x_3}\) with densities g j(u j), j = 1, 2, 3, respectively. Derive the densities g j(u j), j = 1, 2, 3, and represent them in terms of G-functions.

Solution 5.4.1

Observe that \(E\big [(1/x_j)^{s-1}\big ]=E[x_j^{-s+1}],~j=1,2,3\), and that \(g_1(u_1)\), \(g_2(u_2)\) and \(g_3(u_3)\) will share the same ‘normalizing constant’, say c. This constant is the product \(c=c_1c_2c_3\), where \(c_1\), \(c_2\) and \(c_3\) respectively denote the parts of the normalizing constants in the densities of \(x_1\), \(x_2\) and \(x_3\) that do not cancel out when the moments are determined. Thus,

The following are \(E[x_j^{s-1}]\) and \(E[x_j^{-s+1}]\) for j = 1, 2, 3:

Then from (i)-(iv),

Taking the inverse Mellin transform and writing the density g 1(u 1) in terms of a G-function, we have

Using (i)-(iv) and rearranging the gamma functions so that those involving + s appear together in the numerator, we have the following:

Taking the inverse Mellin transform and expressing the result in terms of a G-function, we obtain the density g 2(u 2) as

Using (i)-(iv) and conveniently rearranging the gamma functions involving + s, we have

On taking the inverse Mellin transform, the following density is obtained:

This completes the computations.

5.4.2. Some special cases of the G-function

Certain special cases of the G-function can be written in terms of elementary functions. Here are some of them:

for p ≤ q or p = q + 1 and |z| < 1.
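A simple special case shows how the residue calculus of Lemma 5.3.1 produces an elementary function. Assume that the integrand in the definition of the G-function is \(\prod \varGamma (b_j+s)\prod \varGamma (1-a_j-s)/[\cdots ]\,z^{-s}\), as the contour description in Sect. 5.4.1 suggests. Then \(G_{0,1}^{1,0}(z|b)\) has integrand \(\varGamma (b+s)z^{-s}\), and summing its residues at s = −b − ν gives \(z^b\text{e}^{-z}\). The Python sketch below sums these residues and compares the result with \(z^b\text{e}^{-z}\). The truncation length and function name are illustrative choices.

```python
import math


def g1001_by_residues(z, b, terms=80):
    """Sum of residues of Gamma(b + s) z^(-s) at s = -b - nu, nu = 0, 1, ...;
    each residue is (-1)^nu / nu! * z^(b + nu) (Lemma 5.3.1 with phi(s) = 1)."""
    total = 0.0
    term = z ** b  # residue at nu = 0
    for nu in range(terms):
        total += term
        term *= -z / (nu + 1)  # ratio of consecutive residues
    return total


for z, b in [(0.5, 0.0), (2.0, 1.5), (4.0, 0.25)]:
    print(z, b, g1001_by_residues(z, b), z ** b * math.exp(-z))
```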

5.4.3. The H-function

If we have a general structure corresponding to v 1, v 2 and v 3 of Sect. 5.4, say w 1, w 2 and w 3 of the form

for some \(\delta _j>0,~j=1,\ldots ,p\), the densities of \(w_1\), \(w_2\) and \(w_3\) are then available in terms of a more general function known as the H-function. It is again a Mellin-Barnes type integral, defined and denoted as follows:

where α j > 0, j = 1, …, p, β j > 0, j = 1, …, q, are real and positive, a j, j = 1, …, p, and b j, j = 1, …, q, are complex numbers. Three main contours L 1, L 2, L 3 are utilized, similarly to those described in connection with the G-function. Existence conditions, properties and applications of this generalized hypergeometric function are available from Mathai et al. (2010) among other monographs. Numerous special cases can be expressed in terms of known elementary functions.

Example 5.4.2

Let x 1 and x 2 be independently distributed real type-1 beta random variables with the parameters (α j > 0, β j > 0), j = 1, 2, respectively. Let \(y_1=x_1^{\delta _1},~\delta _1>0,\) and \(y_2=x_2^{\delta _2},~\delta _2>0\). Compute the density of u = y 1 y 2.

Solution 5.4.2

Arbitrary moments of \(y_1\) and \(y_2\) are available from those of \(x_1\) and \(x_2\).

Accordingly, the density of u, denoted by g(u), is the following:

where 0 ≤ u ≤ 1, \(\Re (\alpha _j-\delta _j+\delta _js)>0,~ \Re (\alpha _j)>0,~\Re (\beta _j)>0,~j=1,2\) and g(u) = 0 elsewhere.

When α 1 = 1 = ⋯ = α p, β 1 = 1 = ⋯ = β q, the H-function reduces to a G-function. This G-function is frequently referred to as Meijer’s G-function and the H-function, as Fox’s H-function.

5.4.4. Some special cases of the H-function

Certain special cases of the H-function are listed next.

where the Bessel function

where the generalized Mittag-Leffler function

where Γ(γ) is defined. For γ = 1, we have \(E_{\alpha ,\beta }^1(z)=E_{\alpha ,\beta }(z)\); when γ = 1, β = 1, \(E_{\alpha ,1}^1(z)=E_{\alpha }(z)\) and when γ = 1 = β = α, we have \(E_1(z)=\text{e}^z\).

where \(K_{\rho }^{\nu }(z)\) is the Krätzel function
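The defining series of the generalized Mittag-Leffler function is not reproduced above. The Python sketch below assumes the standard (Prabhakar) form \(E_{\alpha ,\beta }^{\gamma }(z)=\sum _{k\ge 0}\frac {(\gamma )_k}{k!}\frac {z^k}{\varGamma (\alpha k+\beta )}\), which is consistent with the reductions stated above. It truncates the series after a fixed number of terms. This is adequate only for moderate |z| and α ≤ 2, since larger αk + β would overflow `math.gamma`. The parameter name `gamma_` is a hypothetical choice that avoids shadowing the function Γ.

```python
import math


def mittag_leffler(z, alpha, beta=1.0, gamma_=1.0, terms=60):
    """Truncated series sum_k (gamma_)_k z^k / (k! Gamma(alpha k + beta))."""
    total = 0.0
    poch = 1.0  # (gamma_)_k, the Pochhammer symbol
    fact = 1.0  # k!
    for k in range(terms):
        total += poch * z ** k / (fact * math.gamma(alpha * k + beta))
        poch *= gamma_ + k
        fact *= k + 1
    return total


print(mittag_leffler(1.5, 1.0), math.exp(1.5))          # E_1(z) = e^z
print(mittag_leffler(4.0, 2.0), math.cosh(2.0))         # E_2(z) = cosh(sqrt(z))
print(mittag_leffler(0.7, 1.0, 1.0, 2.0), 1.7 * math.exp(0.7))  # E^2_{1,1}(z) = (1 + z) e^z
```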

Exercises 5.4

5.4.1

Show that

5.4.2

Show that

5.4.3

Show that

5.4.4

Show that

5.4.5

Show that

5.5, 5.5a. The Wishart Density

A particular case of the real p × p matrix-variate gamma distribution, known as the Wishart distribution, is the preeminent distribution in multivariate statistical analysis. In the general p × p real matrix-variate gamma density with parameters (α, B > O), let \(\alpha =\frac {m}{2},~ B=\frac {1}{2}\varSigma ^{-1}\) and Σ > O; the resulting density is called a Wishart density with degrees of freedom m and parameter matrix Σ > O. This density, denoted by f w(W), is given by

for m ≥ p, and f w(W) = 0 elsewhere. This will be denoted as W ∼ W p(m, Σ). Clearly, all the properties discussed in connection with the real matrix-variate gamma density still hold in this case. Algebraic evaluations of the marginal densities and explicit evaluations of the densities of sub-matrices will be considered, some aspects having already been discussed in Sects. 5.2 and 5.2.1.

In the complex case, the density is the following, denoted by \(\tilde {f}_w(\tilde {W})\):

and \(\tilde {f}_w(\tilde {W})=0\) elsewhere. This will be denoted as \(\tilde {W}\sim \tilde {W}_p(m,\varSigma )\).

5.5.1. Explicit evaluations of the matrix-variate gamma integral, real case

Is it possible to evaluate the matrix-variate gamma integral explicitly by using conventional integration? We will now investigate some aspects of this question.

When the Wishart density is derived from samples coming from a Gaussian population, the basic technique relies on the triangularization process. When Σ = I, that is, \(W\sim W_p(m,I)\), can the integral on the right-hand side of (5.5.1) be evaluated by resorting to conventional methods or by direct evaluation? We will address this problem by making use of the technique of partitioning matrices. Let us partition

where \(X_{22}=x_{pp}\), so that \(X_{21}=(x_{p1},\ldots ,x_{p\,p-1}), ~X_{12}=X_{21}^{\prime }\). Then, on applying a result from Sect. 1.3, we have

Note that when X is positive definite, X 11 > O and x pp > 0, and the quadratic form \(X_{21}X_{11}^{-1}X_{12}>0\). As well,

Letting \(Y=x_{pp}^{-\frac {1}{2}}X_{21}X_{11}^{-\frac {1}{2}},\) then referring to Mathai (1997, Theorem 1.18) or Theorem 1.6.4 of Chap. 1, \(\text{d}Y=x_{pp}^{-\frac {p-1}{2}}|X_{11}|{ }^{-\frac {1}{2}}\text{d}X_{21}\) for fixed \(X_{11}\) and \(x_{pp}\). The integral over \(x_{pp}\) gives

If we let u = Y Y ′, then from Theorem 2.16 and Remark 2.13 of Mathai (1997) or using Theorem 4.2.3, after integrating out over the Stiefel manifold, we have

(Note that n in Theorem 2.16 of Mathai (1997) corresponds to p − 1 and p is 1). Then, the integral over u gives

Now, collecting all the factors, we have

for \(\Re (\alpha )>\frac {p-1}{2}\). Here, \(X_{11}\), after the completion of the first part of the operations, is denoted by \(X_{11}^{(1)}\), which is \((p-1)\times (p-1)\); the exponent of \(|X_{11}^{(1)}|\) has changed to \(\alpha +\frac {1}{2}-\frac {p+1}{2}\). Now repeat the process by separating \(x_{p-1,p-1}\), that is, by writing

Here, \(X_{11}^{(2)}\) is of order (p − 2) × (p − 2) and \(X_{21}^{(2)}\) is of order 1 × (p − 2). As before, letting u = Y Y ′ with \(Y=x_{p-1,p-1}^{-\frac {1}{2}}X_{21}^{(2)}[X_{11}^{(2)}]^{-\frac {1}{2}},\) \(\text{d}Y=x_{p-1,p-1}^{-\frac {p-2}{2}}|X_{11}^{(2)}|{ }^{-\frac {1}{2}}\text{d}X_{21}^{(2)}.\) The integral over the Stiefel manifold gives \(\frac {\pi ^{\frac {p-2}{2}}}{\varGamma (\frac {p-2}{2})}u^{\frac {p-2}{2}-1}\text{d}u\) and the factor containing (1 − u) is \((1-u)^{\alpha +\frac {1}{2}-\frac {p+1}{2}}\), the integral over u yielding

and that over v = x p−1,p−1 giving

The product of these factors is then

Successive evaluations carried out by employing the same procedure yield the exponent of π as \(\frac {p-1}{2}+\frac {p-2}{2}+\cdots +\frac {1}{2}=\frac {p(p-1)}{4}\) and the gamma product, \(\varGamma (\alpha -\frac {p-1}{2})\varGamma (\alpha -\frac {p-2}{2})\cdots \varGamma (\alpha ),\) the final result being Γ p(α). The result is thus verified.
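For p = 2, the substitution \(Y=x_{pp}^{-\frac {1}{2}}X_{21}X_{11}^{-\frac {1}{2}}\) reduces to \(t=x_{12}/(x_{11}x_{22})^{\frac {1}{2}}\), and the Stiefel-manifold step collapses to an integral over −1 < t < 1. The Python sketch below is a numerical check under these assumptions, with real α and hypothetical function names. It evaluates the t-integral by the midpoint rule and compares the resulting value of \(\int _{X>O}|X|^{\alpha -\frac {3}{2}}\text{e}^{-\text{tr}(X)}\text{d}X\) with \(\varGamma _2(\alpha )=\pi ^{\frac {1}{2}}\varGamma (\alpha )\varGamma (\alpha -\frac {1}{2})\).

```python
import math


def t_integral(alpha, n=200_000):
    """Midpoint rule for int_{-1}^{1} (1 - t^2)^(alpha - 3/2) dt (smooth for alpha >= 3/2)."""
    step = 2.0 / n
    total = 0.0
    for k in range(n):
        t = -1.0 + (k + 0.5) * step
        total += (1.0 - t * t) ** (alpha - 1.5)
    return step * total


def gamma_integral_p2(alpha):
    """p = 2 gamma integral after x12 = t (x11 x22)^(1/2): |X| = x11 x22 (1 - t^2) and
    dx12 = (x11 x22)^(1/2) dt, so the x11 and x22 integrals each equal Gamma(alpha)."""
    return math.gamma(alpha) ** 2 * t_integral(alpha)


def gamma_2(alpha):
    """Gamma_2(alpha) = pi^(1/2) Gamma(alpha) Gamma(alpha - 1/2)."""
    return math.sqrt(math.pi) * math.gamma(alpha) * math.gamma(alpha - 0.5)


for alpha in (2.5, 3.7):
    print(alpha, gamma_integral_p2(alpha), gamma_2(alpha))
```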

5.5a.1. Evaluation of matrix-variate gamma integrals in the complex case

The matrices and gamma functions belonging to the complex domain will be denoted with a tilde. As well, in the complex case, all matrices appearing in the integrals will be p × p Hermitian positive definite unless otherwise stated; for such a matrix \(\tilde {X}\), this property will be denoted by \(\tilde {X}>O\). The integral of interest is

A standard procedure for evaluating the integral in (5.5a.2) consists of expressing the positive definite Hermitian matrix as \(\tilde {X}=\tilde {T}\tilde {T}^{*}\), where an asterisk indicates the conjugate transpose and \(\tilde {T}\) is a lower triangular matrix with real and positive diagonal elements \(t_{jj}>0,~j=1,\ldots ,p\). Then, referring to Mathai (1997, Theorem 3.7) or Theorem 1.6a.7 of Chap. 1, the Jacobian is seen to be as follows:

and then

and

Now, integrating out \(\tilde {t}_{jk}\) for \(j>k\),

and

As well,

for j = 1, …, p. Taking the product of all these factors then gives

and hence the result is verified.
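The complex matrix-variate gamma function obtained in this way is \(\tilde {\varGamma }_p(\alpha )=\pi ^{\frac {p(p-1)}{2}}\varGamma (\alpha )\varGamma (\alpha -1)\cdots \varGamma (\alpha -(p-1))\), \(\Re (\alpha )>p-1\). In the triangular approach, each of the p(p − 1)/2 off-diagonal \(\tilde {t}_{jk}\) integrals contributes a factor π and the jth diagonal element contributes \(\varGamma (\alpha -(j-1))\); the partitioned approach described next reaches the same value by extracting \(x_{pp}\), which contributes \(\pi ^{p-1}\varGamma (\alpha -(p-1))\) and leaves \(\tilde {\varGamma }_{p-1}(\alpha )\). The Python sketch below, with hypothetical helper names and real α only, checks numerically that the two bookkeepings agree.

```python
import math

def log_gamma_p_complex(alpha: float, p: int) -> float:
    """log of the complex matrix-variate gamma function, for real alpha > p - 1.

    tilde-Gamma_p(alpha) = pi^{p(p-1)/2} * Gamma(alpha) Gamma(alpha - 1) ... Gamma(alpha - (p-1)).
    """
    if alpha <= p - 1:
        raise ValueError("need alpha > p - 1")
    return p * (p - 1) / 2 * math.log(math.pi) + sum(math.lgamma(alpha - j) for j in range(p))

def log_gamma_p_complex_partitioned(alpha: float, p: int) -> float:
    """Same value assembled as in the partitioned method: extracting x_pp contributes
    pi^{p-1} Gamma(alpha - (p - 1)) and leaves tilde-Gamma_{p-1}(alpha)."""
    if p == 1:
        return math.lgamma(alpha)
    return ((p - 1) * math.log(math.pi) + math.lgamma(alpha - (p - 1))
            + log_gamma_p_complex_partitioned(alpha, p - 1))

for p in range(1, 7):
    a = p - 1 + 0.6                # any real alpha > p - 1
    assert math.isclose(log_gamma_p_complex(a, p),
                        log_gamma_p_complex_partitioned(a, p), abs_tol=1e-12)

# p = 2: tilde-Gamma_2(alpha) = pi * Gamma(alpha) * Gamma(alpha - 1)
assert math.isclose(log_gamma_p_complex(3.2, 2),
                    math.log(math.pi) + math.lgamma(3.2) + math.lgamma(2.2))
```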

An alternative method based on partitioned matrix, complex case

The approach discussed in this section relies on the successive extraction of the diagonal elements of \(\tilde {X}\), a p × p positive definite Hermitian matrix, all of these elements being necessarily real and positive, that is, \(x_{jj}>0,~j=1,\ldots ,p\). Let

where \(\tilde {X}_{11}\) is (p − 1) × (p − 1) and

and

Then,

Let

referring to Theorem 1.6a.4 or Mathai (1997, Theorem 3.2(c)) for fixed \(x_{pp}\) and \(\tilde {X}_{11}\). Now, the integral over \(x_{pp}\) gives

Let \(u=\tilde {Y}\tilde {Y}^{*}\). Then, by applying Theorem 4.2a.3 or Corollaries 4.5.2 and 4.5.3 of Mathai (1997), \(\text{d}\tilde {Y}=u^{p-2}\frac {\pi ^{p-1}}{\varGamma (p-1)}\text{d}u\) and, noting that u is real and positive, the integral over u gives

Taking the product, we obtain

where \(\tilde {X}_{11}^{(1)}\) stands for \(\tilde {X}_{11}\) after having completed the first set of integrations. In the second stage, we extract \(x_{p-1,p-1}\), the leading (p − 2) × (p − 2) submatrix being denoted by \(\tilde {X}_{11}^{(2)}\), and we continue as previously explained to obtain \(|\text{det}(\tilde {X}_{11}^{(2)})|{ }^{\alpha +2-p}\pi ^{p-2}\varGamma (\alpha -(p-2))\). Proceeding successively in this manner, we have the exponent of π as (p − 1) + (p − 2) + ⋯ + 1 = p(p − 1)/2 and the gamma product as Γ(α − (p − 1))Γ(α − (p − 2))⋯Γ(α) for \(\Re (\alpha )>p-1\). That is,

5.5.2. Triangularization of the Wishart matrix in the real case

Let \(W\sim W_p(m,\varSigma ),~\varSigma >O\), be a p × p matrix having a Wishart distribution with m degrees of freedom and parameter matrix Σ. For Σ = I, let W have a density of the following form:

and \(f_w(W)=0\) elsewhere. Let us consider the transformation \(W=TT'\) where T is a lower triangular matrix with positive diagonal elements. Since W > O, the transformation \(W=TT'\) with the diagonal elements of T being positive is one-to-one. We have already evaluated the associated Jacobian in Theorem 1.6.7, namely,

Under this transformation,

In view of (5.5.6), it is evident that the \(t_{jj}\)’s, j = 1, …, p, and the \(t_{ij}\)’s, i > j, are mutually independently distributed. The form of the function containing \(t_{ij}\), i > j, is \(\text{e}^{-\frac {1}{2}t_{ij}^2},\) and hence the \(t_{ij}\)’s for i > j are mutually independently distributed real standard normal variables. It is also seen from (5.5.6) that the density of \(t_{jj}^2\) is of the form

which is the density of a real chisquare variable having m − (j − 1) degrees of freedom for j = 1, …, p, where \(c_j\) is the normalizing constant. Hence, the following result:

Theorem 5.5.1

Let the real p × p positive definite matrix W have a real Wishart density as specified in (5.5.4) and let \(W=TT'\) where \(T=(t_{ij})\) is a lower triangular matrix whose diagonal elements are positive. Then, the non-diagonal elements \(t_{ij}\) such that i > j are mutually independently distributed as real standard normal variables, the squared diagonal elements \(t_{jj}^2,~ j=1,\ldots ,p,\) are independently distributed as real chisquare variables having m − (j − 1) degrees of freedom for j = 1, …, p, and the \(t_{jj}^2\)’s and \(t_{ij}\)’s are mutually independently distributed.

Corollary 5.5.1

Let \(W\sim W_p(m,\sigma ^2 I)\), where \(\sigma ^2>0\) is a real scalar quantity. Let \(W=TT'\) where \(T=(t_{ij})\) is a lower triangular matrix whose diagonal elements are positive. Then, the \(t_{jj}\)’s, j = 1, …, p, and the \(t_{ij}\)’s, i > j, are all mutually independently distributed, where \(t_{jj}^2/{\sigma ^2}\) has a real chisquare distribution with m − (j − 1) degrees of freedom for j = 1, …, p, and \(t_{ij}\), i > j, has a real scalar Gaussian distribution with mean value zero and variance \(\sigma ^2\), that is, \(t_{ij} \overset {iid}{\sim } N(0,\sigma ^2)\) for all i > j.
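Theorem 5.5.1 and Corollary 5.5.1 yield a direct way of simulating a real Wishart matrix without generating a Gaussian sample; this construction is commonly called the Bartlett decomposition. The following Python sketch, which uses only the standard `random` module, builds T column by column, draws \(t_{jj}^2/\sigma ^2\) as a chisquare variable with m − (j − 1) degrees of freedom through `random.gammavariate` (a chisquare variable with k degrees of freedom being a gamma variable with shape k/2 and scale 2), and draws \(t_{ij}\sim N(0,\sigma ^2)\) for i > j. The function names and the values p = 3, m = 5, σ² = 2 are hypothetical choices, and the Monte Carlo average checks only the first moment \(E[W]=m\sigma ^2I\), not the full density.

```python
import random

def bartlett_wishart(p, m, sigma2=1.0, rng=random):
    """One draw of W = T T' built as in Theorem 5.5.1 / Corollary 5.5.1.

    t_jj^2 / sigma2 ~ chisquare(m - (j - 1)) and t_ij ~ N(0, sigma2) for i > j,
    all mutually independent.
    """
    if m < p:
        raise ValueError("need m >= p")
    T = [[0.0] * p for _ in range(p)]
    for j in range(p):                      # 0-based j, so the degrees of freedom are m - j
        # a chisquare(k) variable is gamma(shape k/2, scale 2)
        T[j][j] = (sigma2 * rng.gammavariate((m - j) / 2, 2.0)) ** 0.5
        for i in range(j + 1, p):
            T[i][j] = rng.gauss(0.0, sigma2 ** 0.5)
    # W = T T'
    return [[sum(T[i][k] * T[j][k] for k in range(p)) for j in range(p)]
            for i in range(p)]

def monte_carlo_mean(p, m, sigma2, reps, seed=0):
    """Average of `reps` independent draws; it should approach E[W] = m * sigma2 * I."""
    rng = random.Random(seed)
    acc = [[0.0] * p for _ in range(p)]
    for _ in range(reps):
        W = bartlett_wishart(p, m, sigma2, rng)
        for i in range(p):
            for j in range(p):
                acc[i][j] += W[i][j] / reps
    return acc

mean_W = monte_carlo_mean(p=3, m=5, sigma2=2.0, reps=20000)
for row in mean_W:
    print(" ".join(f"{x:7.3f}" for x in row))   # roughly 10 on the diagonal, 0 elsewhere
```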

5.5a.2. Triangularization of the Wishart matrix in the complex domain

Let \(\tilde {W}\) have the following Wishart density in the complex domain:

and \(\tilde {f}_w(\tilde {W})=0\) elsewhere, which is denoted \(\tilde {W}\sim \tilde {W}_p(m,I)\). Consider the transformation \(\tilde {W}=\tilde {T}\tilde {T}^{*}\) where \(\tilde {T}\) is lower triangular whose diagonal elements are real and positive. The transformation \(\tilde {W}=\tilde {T}\tilde {T}^{*}\) is then one-to-one and its associated Jacobian, as given in Theorem 1.6a.7, is the following:

Then we have

In light of (5.5a.6), it is clear that all the \(t_{jj}\)’s and \(\tilde {t}_{ij}\)’s are mutually independently distributed, where \(\tilde {t}_{ij},~i>j,\) has a complex standard Gaussian density and \(t_{jj}^2\) has a complex chisquare density with m − (j − 1) degrees of freedom or, equivalently, a real gamma density with the parameters (α = m − (j − 1), β = 1), for j = 1, …, p. Hence, we have the following result:

Theorem 5.5a.1

Let the complex Wishart density be as specified in (5.5a.4), that is, \(\tilde {W}\sim \tilde {W}_p(m,I)\). Consider the transformation \(\tilde {W}=\tilde {T}\tilde {T}^{*}\) where \(\tilde {T}=(\tilde {t}_{ij})\) is a lower triangular matrix in the complex domain whose diagonal elements are real and positive. Then, for i > j, the \(\tilde {t}_{ij}\)’s are standard Gaussian distributed in the complex domain, that is, \(\tilde {t}_{ij}\sim \tilde {N}_1(0,1),~i>j\), \(t_{jj}^2\) is real gamma distributed with the parameters (α = m − (j − 1), β = 1) for j = 1, …, p, and all the \(t_{jj}\)’s and \(\tilde {t}_{ij}\)’s, i > j, are mutually independently distributed.

Corollary 5.5a.1

Let \(\tilde {W}\sim \tilde {W}_p(m,\sigma ^2I)\) where \(\sigma ^2>0\) is a real scalar. Let \(\tilde {T},~ t_{jj},~ \tilde {t}_{ij},~i>j\), be as defined in Theorem 5.5a.1. Then, \(t_{jj}^2/{\sigma ^2}\) is a real gamma variable with the parameters (α = m − (j − 1), β = 1) for j = 1, …, p, \(\tilde {t}_{ij}\sim \tilde {N}_1(0,\sigma ^2)\) for all i > j, and the \(t_{jj}\)’s and \(\tilde {t}_{ij}\)’s are mutually independently distributed.
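The complex construction of Theorem 5.5a.1 and Corollary 5.5a.1 can be simulated in the same way. In the Python sketch below, the helper name and the values p = 3, m = 4, σ² = 1.5 are hypothetical; \(t_{jj}^2/\sigma ^2\) is drawn as a real gamma variable with parameters (m − (j − 1), 1), and \(\tilde {t}_{ij}\sim \tilde {N}_1(0,\sigma ^2)\) is drawn with independent real and imaginary parts, each N(0, σ²/2), so that \(E|\tilde {t}_{ij}|^2=\sigma ^2\). Since the jth diagonal element of \(\tilde {W}\) is \(t_{jj}^2+\sum _{k<j}|\tilde {t}_{jk}|^2\), its expected value is \(\sigma ^2(m-(j-1))+(j-1)\sigma ^2=m\sigma ^2\); the simulated average should therefore be close to \(m\sigma ^2I\). This is a first-moment sanity check only.

```python
import random

def bartlett_complex_wishart(p, m, sigma2=1.0, rng=random):
    """One draw of W~ = T~ T~^* built as in Theorem 5.5a.1 / Corollary 5.5a.1.

    t_jj^2 / sigma2 ~ real gamma(alpha = m - (j - 1), beta = 1), and, for i > j,
    t~_ij ~ N~_1(0, sigma2): real and imaginary parts iid N(0, sigma2 / 2).
    """
    if m < p:
        raise ValueError("need m >= p")
    sd = (sigma2 / 2) ** 0.5
    T = [[0j] * p for _ in range(p)]
    for j in range(p):                      # 0-based j, so the gamma shape is m - j
        T[j][j] = complex((sigma2 * rng.gammavariate(m - j, 1.0)) ** 0.5, 0.0)
        for i in range(j + 1, p):
            T[i][j] = complex(rng.gauss(0.0, sd), rng.gauss(0.0, sd))
    # W~ = T~ T~^*, the asterisk denoting the conjugate transpose
    return [[sum(T[i][k] * T[j][k].conjugate() for k in range(p)) for j in range(p)]
            for i in range(p)]

rng = random.Random(0)
p, m, sigma2, reps = 3, 4, 1.5, 20000
mean_W = [[0j] * p for _ in range(p)]
for _ in range(reps):
    W = bartlett_complex_wishart(p, m, sigma2, rng)
    for i in range(p):
        for j in range(p):
            mean_W[i][j] += W[i][j] / reps
# mean_W should be close to m * sigma2 * I = 6 I
```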

5.5.3. Samples from a p-variate Gaussian population and the Wishart density

Let the p × 1 real vector \(X_j\) be normally distributed, \(X_j\sim N_p(\mu ,\varSigma ),~\varSigma >O\). Let \(X_1,\ldots ,X_n\) be a simple random sample of size n from this normal population and let the p × n sample matrix be denoted in boldface lettering as \(\mathbf {X}=(X_1,X_2,\ldots ,X_n)\), where \(X_j^{\prime }=(x_{1j},x_{2j},\ldots ,x_{pj})\). Let the sample mean be \(\bar {X}=\frac {1}{n}(X_1+\cdots +X_n)\) and the matrix of sample means be denoted by the boldface \({\bar {\mathbf {X}}}=(\bar {X},\ldots ,\bar {X})\). Then, the p × p sample sum of products matrix S is given by

where \(\bar {x}_r=\sum _{k=1}^nx_{rk}/n,~ r=1,\ldots ,p,\) are the averages of the components. It has already been shown, for instance in Sect. 3.5, that the joint density of the sample values \(X_1,\ldots ,X_n\), denoted by L, can be written as

But \((\mathbf {X}-{\bar {\mathbf {X}}})J=O,~ J^{\prime }=(1,\ldots ,1),\) which implies that the columns of \(\mathbf {X}-{\bar {\mathbf {X}}}\) are linearly related, and hence the elements of \(\mathbf {X}-{\bar {\mathbf {X}}}\) are not distinct. In light of equation (4.5.17), one can write the sample sum of products matrix S in terms of a p × (n − 1) matrix \(Z_{n-1}\) of distinct elements so that \(S=Z_{n-1}Z_{n-1}^{\prime }\). As well, according to Theorem 3.5.3 of Chap. 3, S and \(\bar {X}\) are independently distributed. The p × n matrix Z is obtained through the orthonormal transformation \(\mathbf {X}P=Z\), \(PP'=I\), \(P'P=I\), where P is n × n. Then, \(\text{d}\mathbf {X}=\text{d}Z\), ignoring the sign. Let the last column of P be \(p_n\). We can specify \(p_n\) to be \(\frac {1}{\sqrt {n}}J\) so that \(\mathbf {X}p_n=\sqrt {n}\bar {X}\). Note that, in light of (4.5.17), the deleted column of Z corresponds to \(\sqrt {n}\bar {X}\).

The following considerations may help readers who wish to further confirm the validity of the above statement. Observe that \(\mathbf {X} -{\bar {\mathbf {X}}}=\mathbf {X}(I-B),\) with \(B=\frac {1}{n}JJ^{\prime }\), where J is an n × 1 vector of unities. Since I − B is idempotent and of rank n − 1, its eigenvalues are 1, repeated n − 1 times, and 0. An eigenvector corresponding to the eigenvalue zero is J normalized, that is, \(\frac {1}{\sqrt {n}}J\). Taking this as the last column \(p_n\) of P, we have \(\mathbf {X}p_n=\sqrt {n}\bar {X}\). The other columns of P, namely \(p_1,\ldots ,p_{n-1}\), correspond to the n − 1 orthonormal solutions of the equation \(BY=O\) (equivalently, \((I-B)Y=Y\)), where Y is an n × 1 non-null vector. Hence, up to a constant factor, we can write \(\text{d}Z=\text{d}Z_{n-1}\wedge \text{d}\bar {X}\). Now, integrating out \(\bar {X}\) from (5.5.7), we have

where c is a constant. Since \(Z_{n-1}\) contains p(n − 1) distinct real variables, we may apply Theorems 4.2.1, 4.2.2 and 4.2.3, and write \(\text{d}Z_{n-1}\) in terms of \(\text{d}S\) as

Then, if the density of S is denoted by f(S),

where \(c_1\) is a constant. The value of \(c_1\) follows from the normalizing constant of the real matrix-variate gamma density. Hence

for S > O, Σ > O, n − 1 ≥ p, and f(S) = 0 elsewhere, \(\varGamma _p(\cdot )\) being the real matrix-variate gamma function given by

Usually the sample size is taken as N so that N − 1 = n is the number of degrees of freedom associated with the Wishart density in (5.5.10). Since we have taken the sample size as n, the number of degrees of freedom here is n − 1, the parameter matrix being Σ > O. Then, S in (5.5.10) is written as \(S\sim W_p(m,\varSigma )\) with m = n − 1 ≥ p. Thus, the following result:

Theorem 5.5.2

Let \(X_1,\ldots ,X_n\) be a simple random sample of size n from an \(N_p(\mu ,\varSigma ),~\varSigma >O\), population. Let \(X_j,~\mathbf {X},~\bar {X},~{\bar {\mathbf {X}}},~S\) be as defined in Sect. 5.5.3. Then, the density of S is a real Wishart density with m = n − 1 degrees of freedom and parameter matrix Σ > O, as given in (5.5.10).
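Theorem 5.5.2 and the orthonormal transformation used in its derivation can be checked numerically. The Python sketch below is illustrative only: the helper names (`helmert_columns`, `sum_of_products`, `cholesky`, `gaussian_sample`), the use of Helmert contrasts for the first n − 1 columns of P, and the values of μ, Σ and n are assumptions not taken from the text; any orthonormal P whose last column is \(\frac {1}{\sqrt {n}}J\) would serve. Part (a) verifies, for one simulated sample, that the last column of \(Z=\mathbf {X}P\) equals \(\sqrt {n}\bar {X}\) and that \(S=Z_{n-1}Z_{n-1}'\). Part (b) relies on the fact that a \(W_p(m,\varSigma )\) matrix has expected value \(m\varSigma \), and checks by simulation that the average of S is close to \((n-1)\varSigma \).

```python
import math
import random

def helmert_columns(n):
    """Columns p_1, ..., p_n of an orthonormal n x n matrix P with p_n = J / sqrt(n).

    p_1, ..., p_{n-1} are Helmert contrasts: unit length and summing to zero, so B p_k = O.
    """
    cols = []
    for k in range(1, n):
        c = 1.0 / math.sqrt(k * (k + 1))
        cols.append([c] * k + [-k * c] + [0.0] * (n - k - 1))
    cols.append([1.0 / math.sqrt(n)] * n)
    return cols

def sum_of_products(X):
    """S = (X - Xbar)(X - Xbar)' and the sample mean, for a p x n matrix X (list of rows)."""
    p, n = len(X), len(X[0])
    xbar = [sum(row) / n for row in X]
    D = [[X[r][k] - xbar[r] for k in range(n)] for r in range(p)]
    S = [[sum(D[r][k] * D[s][k] for k in range(n)) for s in range(p)] for r in range(p)]
    return S, xbar

def cholesky(A):
    """Lower triangular L with A = L L' for a symmetric positive definite A."""
    p = len(A)
    L = [[0.0] * p for _ in range(p)]
    for i in range(p):
        for j in range(i + 1):
            t = A[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(t) if i == j else t / L[j][j]
    return L

def gaussian_sample(mu, L, n, rng):
    """p x n sample matrix whose columns are iid N_p(mu, L L')."""
    p = len(mu)
    cols = []
    for _ in range(n):
        z = [rng.gauss(0.0, 1.0) for _ in range(p)]
        cols.append([mu[r] + sum(L[r][k] * z[k] for k in range(r + 1)) for r in range(p)])
    return [[cols[k][r] for k in range(n)] for r in range(p)]

rng = random.Random(1)
mu, Sigma, n = [1.0, -2.0], [[2.0, 0.6], [0.6, 1.0]], 6
L = cholesky(Sigma)

# (a) One sample: Z = X P, its last column is sqrt(n) * Xbar, and S = Z_{n-1} Z_{n-1}'.
X = gaussian_sample(mu, L, n, rng)
S, xbar = sum_of_products(X)
P = helmert_columns(n)
Z = [[sum(X[r][k] * P[c][k] for k in range(n)) for c in range(n)] for r in range(2)]
S_from_Z = [[sum(Z[r][c] * Z[s][c] for c in range(n - 1)) for s in range(2)] for r in range(2)]

# (b) Monte Carlo estimate of E[S], which should be close to (n - 1) * Sigma.
reps = 20000
ES_hat = [[0.0, 0.0], [0.0, 0.0]]
for _ in range(reps):
    S_rep, _ = sum_of_products(gaussian_sample(mu, L, n, rng))
    for r in range(2):
        for s in range(2):
            ES_hat[r][s] += S_rep[r][s] / reps
```

Part (b) checks only a first moment, so it is a sanity check rather than a test of the full density (5.5.10). If SciPy is available, `scipy.stats.wishart` with `df=n-1` and `scale=Sigma` uses the same parameterization and could support a more thorough comparison.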

5.5a.3. Sample from a complex Gaussian population and the Wishart density

Let \(\tilde {X}_j\sim \tilde {N}_p(\tilde {\mu },\varSigma ),~ \varSigma >O,~ j=1,\ldots ,n\) be independently distributed. Let \(\tilde {\mathbf {X}}=(\tilde {X}_1,\ldots ,\tilde {X}_n),~ \bar {\tilde {X}}=\frac {1}{n}(\tilde {X}_1+\cdots +\tilde {X}_n),~ {\bar {\tilde {\mathbf {X}}}}=(\bar {\tilde {X}},\ldots ,\bar {\tilde {X}})\) and let \(\tilde {S}=(\tilde {\mathbf {X}}-{\bar {\tilde {\mathbf {X}}}})(\tilde {\mathbf {X}}-{\bar {\tilde {\mathbf {X}}}})^{*}\) where a * indicates the conjugate transpose. We have already shown in Sect. 3.5a that the joint density of \(\tilde {X}_1,\ldots ,\tilde {X}_n\), denoted by \(\tilde {L}\), can be written as

Then, following steps parallel to (5.5.7) to (5.5.10), we obtain the density of \(\tilde {S}\), denoted by \(\tilde {f}(\tilde {S})\), as the following:

for \(\tilde {S}>O,~\varSigma >O,~ n-1\ge p,\) and \(\tilde {f}(\tilde {S})=0\) elsewhere, the complex matrix-variate gamma function being given by

Hence, we have the following result:

Theorem 5.5a.2

Let \(\tilde {X}_j\sim \tilde {N}_p(\tilde {\mu },\varSigma ),~\varSigma >O,~j=1,\ldots ,n\), be independently and identically distributed. Let \(\tilde {\mathbf {X}},~ \bar {\tilde {X}}, ~{\bar {\tilde {\mathbf {X}}}},~\tilde {S}\) be as previously defined. Then, \(\tilde {S}\) has a complex matrix-variate Wishart density with m = n − 1 degrees of freedom and parameter matrix Σ > O, as given in (5.5a.8).
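Theorem 5.5a.2 can be illustrated in the same spirit. The Python sketch below takes Σ = I for simplicity; the helper names and the values of \(\tilde {\mu }\) and n are hypothetical. Each \(\tilde {X}_j\) is drawn with independent real and imaginary parts distributed as N(0, 1/2), so that \(E[(\tilde {X}_j-\tilde {\mu })(\tilde {X}_j-\tilde {\mu })^{*}]=I\); \(\tilde {S}\) is formed with the conjugate transpose, and its simulated average is compared with (n − 1)I, the expected value of a complex Wishart matrix with n − 1 degrees of freedom and parameter matrix I. As before, only the first moment is checked.

```python
import random

def complex_sum_of_products(X):
    """S~ = (X~ - Xbar~)(X~ - Xbar~)^* for a p x n complex sample matrix (list of rows)."""
    p, n = len(X), len(X[0])
    xbar = [sum(row) / n for row in X]
    D = [[X[r][k] - xbar[r] for k in range(n)] for r in range(p)]
    return [[sum(D[r][k] * D[c][k].conjugate() for k in range(n)) for c in range(p)]
            for r in range(p)]

def complex_gaussian_sample(mu, n, rng):
    """p x n matrix whose columns are iid N~_p(mu, I): real and imaginary parts iid N(0, 1/2)."""
    sd = 0.5 ** 0.5
    return [[mu[r] + complex(rng.gauss(0.0, sd), rng.gauss(0.0, sd)) for _ in range(n)]
            for r in range(len(mu))]

rng = random.Random(2)
mu = [1 + 2j, -0.5j, 3.0]
p, n, reps = len(mu), 5, 10000
ES_hat = [[0j] * p for _ in range(p)]
for _ in range(reps):
    S = complex_sum_of_products(complex_gaussian_sample(mu, n, rng))
    for r in range(p):
        for c in range(p):
            ES_hat[r][c] += S[r][c] / reps
# ES_hat should be close to (n - 1) * I = 4 I, and each S is Hermitian
```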

5.5.4. Some properties of the Wishart distribution, real case

If we have statistically independently distributed Wishart matrices with the same parameter matrix Σ, then it is easy to see that their sum is again a Wishart matrix. This can be seen by considering the Laplace transform of matrix-variate random variables discussed in Sect. 5.2. If \(S_j\sim W_p(m_j,\varSigma ),~j=1,\ldots ,k\), with the same parameter matrix Σ > O, and the \(S_j\)’s are statistically independently distributed, then, from equation (5.2.6), the Laplace transform of the density of \(S_j\) is

where \({}^{*}T\) denotes the symmetric parameter matrix \(T=(t_{ij})=T'>O\) with its off-diagonal elements weighted by \(\frac {1}{2}\). Since the \(S_j\)’s are independently distributed, the Laplace transform of the sum \(S=S_1+\cdots +S_k\) is the product of the Laplace transforms:

Hence, the following result:

Theorems 5.5.3, 5.5a.3