Abstract

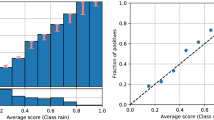

For classification problems in which class priors or misallocation costs are not known precisely, receiver operating characteristic (ROC) analysis has become a standard tool in pattern recognition for obtaining integrated performance measures that cope with this uncertainty. Similarly, when priors may vary in application, the ROC can be used to inspect performance over the expected range of variation. In this paper we argue that, although measures such as the area under the ROC curve (AUC) are useful as integrated performance measures independent of the priors, it may also be important to account for sensitivity across the expected prior range. We show that a classifier may achieve a good AUC score yet have a poor (large) prior sensitivity, which may be undesirable. We propose a methodology that combines accuracy and sensitivity, providing a new model selection criterion that is relevant to certain problems. Experiments show that incorporating sensitivity is very important in some realistic scenarios and leads to better model selection in some cases.
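The difference between an integrated measure (AUC) and prior sensitivity can be made concrete with a small sketch. The Python code below is an illustration under stated assumptions, not the authors' method. It assumes a two-class problem with equal misallocation costs, treats class 1 as the positive class, fixes one operating point by minimising the error rate at a nominal prior, and defines sensitivity as the spread of that operating point's error rate as P(class 1) drifts over an expected interval. The combined `criterion` (mean error plus `lam` times sensitivity) and all function names are hypothetical; the paper's actual sensitivity measure and weighting may differ.

```python
import numpy as np


def roc_points(scores, labels):
    """ROC operating points (FPR, TPR) for every threshold; label 1 is positive."""
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels)
    order = np.argsort(-scores, kind="mergesort")  # descending score, stable
    s, y = scores[order], labels[order]
    tps = np.cumsum(y == 1)  # true positives above each threshold
    fps = np.cumsum(y != 1)  # false positives above each threshold
    # One point per distinct score, so tied scores share a single threshold.
    last = np.r_[np.nonzero(np.diff(s))[0], len(s) - 1]
    tpr = np.r_[0.0, tps[last] / tps[-1]]
    fpr = np.r_[0.0, fps[last] / fps[-1]]
    return fpr, tpr


def auc(fpr, tpr):
    """Area under the ROC curve by the trapezoidal rule."""
    return float(np.sum(np.diff(fpr) * (tpr[1:] + tpr[:-1]) / 2.0))


def error_vs_prior(fpr, tpr, prior):
    """Expected error rate of an operating point when P(class 1) = prior (equal costs)."""
    return prior * (1.0 - tpr) + (1.0 - prior) * fpr


def evaluate(scores, labels, nominal_prior, prior_range, lam=0.5, n_grid=41):
    """Hypothetical accuracy-plus-sensitivity summary for one classifier."""
    fpr, tpr = roc_points(scores, labels)
    # Fix the operating point that minimises the error at the nominal prior.
    op = int(np.argmin(error_vs_prior(fpr, tpr, nominal_prior)))
    priors = np.linspace(prior_range[0], prior_range[1], n_grid)
    errors = error_vs_prior(fpr[op], tpr[op], priors)
    mean_err = float(errors.mean())
    sensitivity = float(errors.max() - errors.min())  # spread over the prior range
    return {
        "auc": auc(fpr, tpr),
        "operating_point": (float(fpr[op]), float(tpr[op])),
        "mean_error": mean_err,
        "sensitivity": sensitivity,
        "criterion": mean_err + lam * sensitivity,  # lower is better
    }


if __name__ == "__main__":
    scores = [0.9, 0.8, 0.7, 0.6, 0.55, 0.4, 0.3, 0.2]
    labels = [1, 1, 0, 1, 0, 0, 1, 0]
    print(evaluate(scores, labels, nominal_prior=0.4, prior_range=(0.2, 0.6)))
```

For a fixed operating point the error rate is e(p) = FPR + p(FNR - FPR), which is linear in the prior p, so the sensitivity computed here equals |FNR - FPR| times the width of the prior interval. Two classifiers with similar AUC can therefore differ noticeably on such a criterion if one of them must operate at a point where FNR and FPR are far apart.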




Copyright information

© 2006 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Landgrebe, T., Duin, R.P.W. (2006). Combining Accuracy and Prior Sensitivity for Classifier Design Under Prior Uncertainty. In: Yeung, DY., Kwok, J.T., Fred, A., Roli, F., de Ridder, D. (eds) Structural, Syntactic, and Statistical Pattern Recognition. SSPR/SPR 2006. Lecture Notes in Computer Science, vol 4109. Springer, Berlin, Heidelberg. https://doi.org/10.1007/11815921_56


DOI: https://doi.org/10.1007/11815921_56

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-37236-3

Online ISBN: 978-3-540-37241-7

eBook Packages: Computer Science, Computer Science (R0)