Abstract

We propose a dynamic learning framework that starts from a small amount of labeled data, then incrementally identifies informative unlabeled examples to be hand-labeled and adds them to the training set to improve learning performance. This approach has great potential to reduce training expense in many medical image analysis applications. The main contributions are a new strategy for combining confidence-rated classifiers learned on different feature sets and a robust way to evaluate the “informativeness” of each unlabeled example. We apply the framework to the problem of classifying microscopic cell images. The experimental results show that (1) our strategy is more effective than simply multiplying the predicted probabilities; (2) the error rate of high-confidence predictions is much lower than the average error rate; and (3) hand-labeling informative examples with low-confidence predictions improves performance efficiently, with only a very small performance difference from hand-labeling all unlabeled data.
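To make the selection step concrete, here is a minimal Python sketch of one round of choosing unlabeled examples for hand-labeling. It is not the authors' method: the abstract does not specify their combination strategy or informativeness measure, so the sketch uses the baseline the abstract mentions (multiplying the predicted probabilities from classifiers trained on different feature sets) and, as an assumption, treats the maximum combined class probability as the confidence. The function names `product_rule`, `confidence`, and `select_for_labeling` are hypothetical.

```python
from typing import List, Sequence


def product_rule(prob_sets: Sequence[Sequence[float]]) -> List[float]:
    """Baseline combination: multiply the per-class probabilities predicted
    by each classifier (one per feature set), then renormalize to sum to 1."""
    n_classes = len(prob_sets[0])
    combined = [1.0] * n_classes
    for probs in prob_sets:
        for k in range(n_classes):
            combined[k] *= probs[k]
    total = sum(combined)
    if total == 0.0:
        # Every class was ruled out by some classifier: fall back to uniform.
        return [1.0 / n_classes] * n_classes
    return [c / total for c in combined]


def confidence(probs: Sequence[float]) -> float:
    """Assumed confidence measure: probability of the most likely class."""
    return max(probs)


def select_for_labeling(
    pool: Sequence[Sequence[Sequence[float]]], budget: int
) -> List[int]:
    """Return the indices of the `budget` unlabeled examples whose combined
    prediction has the lowest confidence. `pool[i]` holds one probability
    vector per classifier for example i."""
    scored = [(confidence(product_rule(p)), i) for i, p in enumerate(pool)]
    scored.sort()  # lowest confidence first; ties broken by index
    return [i for _, i in scored[:budget]]


if __name__ == "__main__":
    # Three unlabeled examples, two classifiers, two classes (made-up numbers).
    pool = [
        [[0.9, 0.1], [0.8, 0.2]],  # classifiers agree strongly
        [[0.6, 0.4], [0.4, 0.6]],  # classifiers disagree
        [[0.7, 0.3], [0.6, 0.4]],  # classifiers agree weakly
    ]
    print(select_for_labeling(pool, budget=2))
```

In a full loop, the selected examples would be hand-labeled, added to the training set, and the classifiers retrained before the next round. The example where the two classifiers disagree is picked first, which matches the abstract's idea that low-confidence predictions are the ones worth labeling by hand.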

References

Cristianini, N., Shawe-Taylor, J.: Support Vector Machines and other kernel-based methods. Cambridge University Press, Cambridge (2000)

Breiman, L.: Bagging predictors. Machine Learning 26, 123–140 (1996)

Quinlan, J.R.: Bagging, boosting, and C4.5. In: Proc. AAAI-1996 Fourteenth National Conf. on Artificial Intelligence, pp. 725–730 (1996)

Freund, Y., Schapire, R.E.: A decision-theoretic generalization of on-line learning and an application to boosting. Journal of Computer and System Sciences 55, 119–139 (1997)

Schapire, R.E.: A brief introduction to boosting. In: Proc. of 16th Int’l Joint Conf. on Artificial Intelligence, pp. 1401–1406 (1999)

Bauer, E., Kohavi, R.: An empirical comparison of voting classification problems: Bagging, boosting and variants. Machine Learning 36, 105–142 (1999)

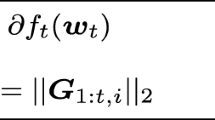

Schapire, R.E., Singer, Y.: Improved boosting algorithms using confidence-rated predictions. Machine Learning 37, 297–336 (1999)

Blum, A., Mitchell, T.: Combining labeled and unlabeled data with co-training. In: Proc. of the 1998 Conf. on Computational Learning Theory, pp. 92–100 (1998)

Levin, A., Viola, P., Freund, Y.: Unsupervised improvement of visual dectectors using co-training. In: Proc. of the Int’l Conf. on Computer Vision, pp. 626–633 (2003)

Turney, P., Littman, M., Bigham, J., Shnayder, V.: Combining independent modules to solve multiple-choice synonym and analogy problems. In: Proc. of the Int’l Conf. on Recent Advances in Natural Language Processing, pp. 482–489 (2003)

Anderson, J.A.: Logistic discrimination. In: Krishnaiah, P.R., Kanal, L.N. (eds.) Handbook of Statistics, vol. 2, pp. 169–191 (1982)

Copyright information

© 2005 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

He, W., Huang, X., Metaxas, D., Ying, X. (2005). Efficient Learning by Combining Confidence-Rated Classifiers to Incorporate Unlabeled Medical Data. In: Duncan, J.S., Gerig, G. (eds) Medical Image Computing and Computer-Assisted Intervention – MICCAI 2005. MICCAI 2005. Lecture Notes in Computer Science, vol 3749. Springer, Berlin, Heidelberg. https://doi.org/10.1007/11566465_92

DOI: https://doi.org/10.1007/11566465_92

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-29327-9

Online ISBN: 978-3-540-32094-4

eBook Packages: Computer Science, Computer Science (R0)