Abstract

In the present paper we apply a recently developed pattern recognition algorithm SPs to the problem of automated detection of artificial disturbances in one-second magnetic observatory data. The SPs algorithm relies on the theory of discrete mathematical analysis, which has been developed by some of the authors for more than 10 years. It continues the authors’ research in the morphological analysis of time series using fuzzy logic techniques. We show that, after a learning phase, this algorithm is able to recognize artificial spikes uniformly with low probabilities of target miss and false alarm. In particular, a 94% spike recognition rate and a 6% false alarm rate were achieved as a result of the algorithm application to raw one-second data acquired at the Easter Island magnetic observatory. This capability is critical and opens the possibility to use the SPs algorithm in an operational environment.


1. Introduction

The global network of magnetic observatories is one of the main observation infrastructures for geomagnetic research. Magnetic observatory data are used for investigating the geomagnetic secular variation originating in the Earth’s outer core, as well as the rapid variations generated by electric currents in the ionosphere, the magnetosphere and the oceans (e.g., recent papers such as Love, 2008; Matzka et al., 2010). They are also used by a variety of governmental and industrial customers for applications such as directional drilling, reduction of magnetic survey data and space weather monitoring and forecasting (e.g., Reay et al., 2005; Marshall et al., 2011). Unlike other magnetometer networks, observatories are aimed at operating for several decades using internationally agreed standards of operations. About 120 observatories currently cooperate toward this goal within the INTERMAGNET program (www.intermagnet.org).

One of the main challenges faced by observatories is to provide data of the highest quality on time scales ranging from one second to several decades. Until a few years ago, most INTERMAGNET observatories produced one-minute filtered data (from measurements sampled at a higher frequency, for example every 5 seconds; see St-Louis, 2008). However, in order to address the needs of the space physics community, several observatory programs have embarked on a modernization of their equipment so as to be able to produce one-second filtered data (e.g., Chulliat et al., 2009b; Worthington et al., 2009). As expected, the faster measurement sampling rate uncovered various signals that were previously filtered out in one-minute data, including some artificial disturbances that have to be removed from the final observatory data products. While at many observatories the one-second data cleaning represents a reasonable amount of work, it becomes a daunting task at some observatories, particularly those installed in remote but important locations where no optimal observatory site could be found. This is the case, for example, at the recently installed magnetic observatory on Easter Island (Isla de Pascua Mataveri, IAGA code IPM; see Chulliat et al., 2009a and Fig. 1), where the nearby traffic of trucks and planes may generate more than a hundred artificial disturbances every day.

Fig. 1. Aerial view of the Isla de Pascua (Easter Island) magnetic observatory. The white, rectangular-shaped magnetometer container is located on the left side of the picture; the absolute hut is about 10 m to its right. Trucks circulating on the dirt road behind the observatory may generate more than a hundred spikes per day.

In the present paper we apply a recently developed pattern recognition algorithm SPs (from SPIKEsecond) to the problem of automatically detecting artificial disturbances in one-second magnetic observatory data. The first important step towards automated magnetogram filtering was undertaken by Soloviev et al. (2009) and Bogoutdinov et al. (2010). The SPs algorithm relies on the theory of discrete mathematical analysis (Gvishiani et al., 2008a, 2010), which has been developed by some of the authors for more than 10 years. It continues the authors’ research in the morphological analysis of time series using fuzzy logic techniques (see e.g., Agayan et al., 2005; Gvishiani et al., 2008a, b). We show that, after a learning phase, this algorithm is able to distinguish artificial disturbances from natural ones, such as short-period geomagnetic pulsations in the 1 s–1 min period range (e.g., Samson, 1991). This capability is critical and opens the possibility to use the SPs algorithm in an operational environment.

2. Description of the SPs Algorithm

The SPs algorithm is a tool applicable to any time series containing specific anomalies in time (disturbances) that have to be identified. The algorithm is aimed at recognizing singular spikes S of any nature with a simple morphology on a record y. (Note that SPs is not able to recognize jumps; this is done by another algorithm, JM, currently being developed by some of us.) An example of such a spike, generated by a truck passing nearby, is given in Fig. 2. The logic underlying the algorithm is based on the following model of a spike. A spike S is defined as a record fragment having a tip t(S), where two opposite sharp slopes Sl and Sr meet, surrounded by quiet spike wings Wl(S) and Wr(S) (Fig. 2). In order to formalize the logic of the algorithm, we use the concepts of fuzzy comparison and fuzzy extremality (Zadeh, 1965; Gvishiani et al., 2008a, b). The detailed mathematical description of the algorithm is given in Soloviev et al. (2012). In what follows, we provide a brief summary of SPs.

The SPs algorithm consists of three blocks: “Λ-analysis”, “Search for quasi-spikes” and “Selection of spikes” (Fig. 3). The starting record y is a time series y = y(t) given on an interval of the discrete positive semiaxis {kh; k = 1, 2, …}, where h is the discretization step and k is the observation node.

The SPs algorithm begins its search by considering a local extremum of y as a possible tip t = t(S) of a spike S. The algorithm evaluates the slopes Sl and Sr on each side of t. If they turn out to be sharp enough, the triplet S = (Sl, t, Sr), referred to as a quasi-spike, is further examined. Next, the algorithm searches for quiet wings Wl(S) and Wr(S) to the left and to the right of Sl and Sr, respectively. If quiet wings are detected, the quasi-spike is recognized as a spike, as defined above. The algorithm is aimed specifically at recognizing such spikes on a record y.
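The search order described above (tip, then slopes, then wings) can be illustrated with a deliberately simplified sketch. It is not the SPs algorithm itself: the fuzzy measures are replaced by crisp thresholds, and the function name `find_spikes` and the parameters `slope_len`, `wing_len`, `sharp` and `quiet` are hypothetical choices made for this illustration only.

```python
from statistics import pstdev


def find_spikes(y, slope_len=3, wing_len=10, sharp=5.0, quiet=1.0):
    """Crisp (non-fuzzy) illustration of the spike model: a tip, two slopes, two wings.

    All thresholds are hypothetical; SPs uses fuzzy comparisons instead.
    Returns the indices of the tips of the recognized spikes.
    """
    spikes = []
    margin = slope_len + wing_len
    for t in range(margin, len(y) - margin):
        # 1. The candidate tip must be a strict local extremum of the record.
        is_max = y[t] > y[t - 1] and y[t] > y[t + 1]
        is_min = y[t] < y[t - 1] and y[t] < y[t + 1]
        if not (is_max or is_min):
            continue
        # 2. Slopes: mean change per sample on each side of the tip.
        #    They must have opposite signs and both be sharp enough.
        left = (y[t] - y[t - slope_len]) / slope_len
        right = (y[t + slope_len] - y[t]) / slope_len
        if left * right >= 0 or min(abs(left), abs(right)) < sharp:
            continue  # not even a quasi-spike
        # 3. Wings: the record just outside both slopes must be quiet.
        wing_left = y[t - slope_len - wing_len:t - slope_len]
        wing_right = y[t + slope_len + 1:t + slope_len + wing_len + 1]
        if pstdev(wing_left) <= quiet and pstdev(wing_right) <= quiet:
            spikes.append(t)
    return spikes
```

With a flat record carrying a single triangular excursion, the function returns the index of the tip; if the samples preceding the excursion are noisy, the candidate is rejected at the wing check, which mirrors the distinction between a quasi-spike and a spike.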

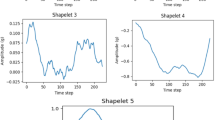

The central part of the algorithm is the “Λ-analysis” (see Fig. 3), which provides a quantitative evaluation of the level of sharpness of slopes and the level of quietness of wings. It also distinguishes “ascending” and “descending” slopes. For a given fragment Δ_k y = {y_k, …, y_{k+Δ}} of the record y, a linear regression (Draper and Smith, 1966) is calculated by the least-squares technique. The regression coefficients are then used to determine whether the fragment is ascending or descending, and to derive an indicator of activity within the fragment. Determining whether this activity is large (“sharp” fragment) or small (“quiet” fragment) is performed by using fuzzy comparisons (Gvishiani et al., 2008a, b) between a large number of fragments of varying lengths Λ = {Δ_1, …, Δ_m}. In SPs, the following fuzzy comparison function is used:

for two numbers A and B, and where ν is a fixed parameter. It yields a number between −1 and 1 which quantifies how much B is larger than A.
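The regression step of the Λ-analysis can be sketched as follows. The least-squares fit is standard; the `activity` indicator and the `fuzzy_compare` function, however, are stand-ins assumed for illustration. In particular, `fuzzy_compare` is only one simple function with the stated properties (a value between −1 and 1 that grows as B exceeds A, with a fixed parameter ν) and should not be read as the formula actually used in SPs.

```python
def fit_line(fragment):
    """Least-squares line through (0, y0), (1, y1), ...; returns (slope, intercept).

    The fragment must contain at least two samples.
    """
    n = len(fragment)
    mean_x = (n - 1) / 2
    mean_y = sum(fragment) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (v - mean_y) for x, v in enumerate(fragment))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def direction(fragment):
    """Classify a fragment by the sign of its regression slope."""
    slope, _ = fit_line(fragment)
    if slope > 0:
        return "ascending"
    return "descending" if slope < 0 else "flat"


def activity(fragment):
    """Hypothetical activity indicator: absolute fitted rise over the fragment."""
    slope, _ = fit_line(fragment)
    return abs(slope) * (len(fragment) - 1)


def fuzzy_compare(a, b, nu=1.0):
    """Assumed stand-in for the fuzzy comparison of two non-negative numbers.

    Returns a value in [-1, 1]: positive when b > a, negative when b < a.
    """
    a_nu, b_nu = a ** nu, b ** nu
    top = max(a_nu, b_nu)
    return 0.0 if top == 0 else (b_nu - a_nu) / top
```

For instance, `fuzzy_compare(activity(wing), activity(slope))` close to 1 would indicate that the slope fragment is much more active than the wing fragment under these assumed definitions.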

The other blocks of the SPs algorithm, “Search for quasi-spikes” and “Selection of spikes” (Fig. 3), use the described classifications to identify quasi-spikes and then to select genuine spikes among them.

The algorithm depends on the three free parameters SPs = SPs(ν, ρ1, ρ2) (Fig. 3):

- ν: parameter of fuzzy comparison,
- ρ1: level of sharpness of the slopes Sl and Sr,
- ρ2: level of quietness of the wings Wl(S) and Wr(S).

A given set of free parameters is denoted by π = (ν, ρ1, ρ2).

3. Testing Dataset and Methodology

We tested the SPs algorithm on raw one-second data acquired at the Easter Island magnetic observatory in July and August 2009 (IPM, Fig. 1). The data include measurement values of the three components of the geomagnetic field vector along the North (X), East (Y) and downward vertical (Z) directions before baseline correction, and the total intensity F of the geomagnetic field. Each 1-day 1-channel record, sampled at 1 Hz, consists of 86,400 data points.

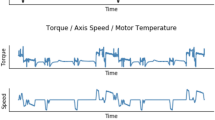

The testing dataset was entirely cleaned using standard observatory tools; i.e., spikes caused by trucks, planes and other artificial sources were manually removed after a detailed inspection of daily magnetograms. Figure 4 shows examples of such spikes. They have a characteristic shape, which makes them easily recognizable by eye. However, due to the large number of spikes in one-second magnetograms (around 2,000 spikes per month for each component, see Tables 1, 2, 5), the manual filtering procedure becomes extremely laborious. Moreover, spikes should not be confused with geomagnetic pulsations or other geophysical events (Fig. 5), which should not be removed. The complete statistical information on the events detected by eye, including estimates of spike amplitudes and durations, is given in Tables 1 and 2.

The first part of the testing dataset, from 1 to 20 July 2009, was used for the training of the algorithm. As can be seen in Table 1, the mean spike amplitude varies from one channel to the next, and therefore we performed algorithm learning for each channel X, Y, Z, F separately. As a result, we were able to obtain the optimal free parameter values of the algorithm for each channel independently. In order to select optimal values of the free parameters, we implemented a brute-force search, i.e., we systematically tried a large number of values (Knuth, 1968). First, each 1-day 1-channel data series was processed by the algorithm using the following set of free parameter values:

These values were pre-selected based upon the known behavior of the fuzzy comparison function and some preliminary tests. In total, |Π| = 100 combinations of free parameters were tested. To assess recognition quality we introduce the following function to be minimized:

where SPs(π) is the result of running the algorithm with a given combination of free parameter values π, expressed as a set of intervals on the time axis that define the recognized events; P1 is the probability of the first kind error (target miss), whose definition involves N, the number of spikes; P2 is the probability of the second kind error (false alarm) (Bogoutdinov et al., 2010). In the criterion Kλ we put λ = 0.8, thus expressing a higher degree of importance of not missing spikes versus avoiding false alarms. The value of the parameter λ was obtained by testing the algorithm for λ = 0.1, 0.2, …, 0.9 on an arbitrary set of free parameters and selecting the value for which the best recognition was achieved.
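A minimal sketch of how such a quality criterion might be evaluated is given below. It rests on three assumptions that are not confirmed by the text above: that the criterion is the weighted sum λ·P1 + (1 − λ)·P2, that P1 is the fraction of the N manually identified spikes that are missed, and that P2 is the fraction of recognized events matching no manually identified spike. The function names and the interval-overlap matching rule are likewise hypothetical.

```python
def overlaps(a, b):
    """True if the closed intervals a = (start, end) and b = (start, end) intersect."""
    return a[0] <= b[1] and b[0] <= a[1]


def error_rates(true_spikes, detected):
    """Return (P1, P2) under the assumed definitions.

    P1: share of true spikes overlapping no detected event (target miss).
    P2: share of detected events overlapping no true spike (false alarm).
    """
    missed = sum(
        1 for s in true_spikes if not any(overlaps(s, d) for d in detected)
    )
    false_alarms = sum(
        1 for d in detected if not any(overlaps(d, s) for s in true_spikes)
    )
    p1 = missed / len(true_spikes) if true_spikes else 0.0
    p2 = false_alarms / len(detected) if detected else 0.0
    return p1, p2


def criterion(p1, p2, lam=0.8):
    """Assumed weighted form: lam weights target misses, (1 - lam) false alarms."""
    return lam * p1 + (1 - lam) * p2
```

Under this assumed form, λ = 0.8 makes a missed spike four times as costly as a false alarm, which is consistent with the stated priority of not missing spikes.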

One should note that the range of free parameter values given above is quite wide. In order to refine the free parameter values, we examined a small neighborhood around the optimal solution already found. This entailed the examination of an additional 125 combinations of free parameters. Assessing the recognition quality in the same way as in the first stage of learning, we obtained the optimal free parameter values for each channel. In Bogoutdinov et al. (2010), it was shown that different optimal combinations of the free parameters were found for different observatories recording one-minute data. It is expected that a similar situation will arise in the case of other observatories recording one-second data.
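The two-stage search (a coarse grid followed by a finer grid around the coarse optimum) can be sketched as below. The grid values, step sizes and the `score` callable are hypothetical placeholders; only the counts follow the text, with 100 coarse combinations and 125 = 5 × 5 × 5 refined ones.

```python
from itertools import product


def grid_search(score, nus, rho1s, rho2s):
    """Exhaustively evaluate score(pi) on the Cartesian grid; return the minimizer."""
    return min(product(nus, rho1s, rho2s), key=score)


def neighborhood(center, step, points=5):
    """Return `points` equally spaced values centered on `center`."""
    half = points // 2
    return [center + (i - half) * step for i in range(points)]


def two_stage_search(score, coarse, fine_steps):
    """Coarse brute-force search, then a 5 x 5 x 5 search around the coarse optimum."""
    best = grid_search(score, *coarse)
    fine = [neighborhood(c, s) for c, s in zip(best, fine_steps)]
    return grid_search(score, *fine)


# Hypothetical coarse grid for (nu, rho1, rho2): 5 * 5 * 4 = 100 combinations.
coarse_grid = (
    [1.0, 2.0, 3.0, 4.0, 5.0],
    [0.1, 0.3, 0.5, 0.7, 0.9],
    [0.2, 0.4, 0.6, 0.8],
)
```

In practice `score` would run the algorithm on the learning interval with parameters π and return the value of the quality criterion; here any callable taking a triple `(nu, rho1, rho2)` will do.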

Once the free parameters were fixed, we first tested the algorithm by applying it to the time interval from 21 to 31 July 2009 and comparing its output with the results of manual data cleaning. By separating the dataset into two parts, we made sure that the testing was performed on an independent dataset. Next, we applied the algorithm to the time interval from 1 to 31 August 2009 and then performed recognition of spikes by eye in order to check the results.

4. Results

4.1 Results of the learning phase

The following optimal free parameter values were found to give the best results for the overall criterion of recognition K0.8 for each channel:

The overall numbers of target misses and false alarms as well as the values of the recognition criterion for each component are provided in Table 3. The best results of the algorithm learning were achieved in the case of the horizontal components X and Y, where the error probabilities varied between 3.5% and 11.5%. Poorer results were obtained in the case of the vertical component Z, where the error probabilities of the first and the second kinds were 17.1% and 18.0%, respectively. This difference is attributed to the smaller average amplitude of the spikes on the Z component during the learning phase time interval, which made them more difficult to detect.

Some screenshots illustrating application results of the algorithm are given in Figs. 6 and 7.

4.2 Results of the testing phase

The results of the testing phase are provided in Table 4. Comparison of recognition results obtained on data for 1–20 July (learning material) and 21–31 July (testing material) shows that the recognition quality is about the same. Formally it is confirmed by very close values of the calculated quality criterion (Tables 3, 4). It can be concluded that the overall recognition performance achieved during the learning phase could be reproduced during the testing phase.

4.3 Results of the blind test

The blind test involved data recorded from 1 to 31 August 2009 with no a priori expert opinion. The results of the recognition by the algorithm SPs = SPs(π*) were subsequently evaluated by eye. The overall recognition statistics for the whole set of data are provided in Table 5.

The probability of missed spikes for the X component is 3.72% and that of false alarms is 0.68%, to be compared with 4.7% of missed spikes and 8.7% of false alarms for the 1/07–20/07 time interval (Table 3) and 5.9% of missed spikes and 6.0% of false alarms for the 21/07–31/07 time interval (Table 4). In the case of the other components Y and Z and the total intensity F, the blind test also demonstrated a higher efficiency of the algorithm compared to the results of the learning and testing phases, which is well reflected in the corresponding values of the K0.8 quality criterion (Tables 3–5).

The difference in the algorithm recognition quality K0.8 obtained for the August and July 2009 records is likely due to the fact that it was easier to carry out manual data processing by eye with the results of the algorithm recognition at hand (August data) than to analyze raw magnetograms “from scratch” (July data). Thus, for the July data the quality of manual recognition of spikes turned out to be worse. This shows that the algorithm significantly helped the recognition by eye. It also provides some estimate of the amount of errors made when relying on manual spike detection.

Missed spikes and extra events recognized by the algorithm in August data were separately examined and the following conclusions were made: usually extra events represent either geomagnetic pulsations or other natural geomagnetic signals occurring in a narrow frequency band, whereas missed spikes in some cases represent long anomalous intervals not caused by trucks or airplanes.

The results of the blind test confirm that the learned algorithm is able to detect most of the spikes, and show that there is some variability from one day/week/month to the next.

5. Discussion

In the present paper we introduced the algorithm SPs, which is able to automatically recognize spikes caused by artificial disturbances in magnetic observatory data sampled every second. We applied this algorithm to the recently installed observatory on Easter Island, where nearby trucks and planes cause several tens of such spikes every day. We showed that, after a 20-day learning phase in July 2009, the algorithm is able to recognize more than 94% of the spikes on the three components and the intensity recordings in August 2009, while the percentage of false alarms is less than 6%. At all stages the algorithm showed worse results in processing the vertical component Z.

A detailed examination of the false alarms reveals that most of them are due to geomagnetic pulsations. It is indeed sometimes very difficult, even for a trained data expert, to distinguish a pulsation from an artificial spike. The occurrence of a pulsation can generally be inferred from the simultaneous occurrence of a pulsation-like signal at a nearby observatory. This functionality is not included in the present version of the algorithm. In some rare cases, false alarms are due to a temporary increase of the background noise, whose origin is unknown.

A standard method to detect spikes in magnetic observatories consists in taking the difference dF = Fs − Fv between the field modulus Fs = F directly measured by the scalar magnetometer and the modulus Fv calculated from the three components measured by the vector magnetometer. Normally, dF should vary by up to a few tenths of nT around a constant non-zero value due to the differences in transfer functions and locations of the instruments. Instrumental spikes and other anomalies generally lead to a larger than normal value of dF, which can easily be detected. Typical IPM disturbances caused by nearby trucks and planes also cause an increase of the dF absolute value, due to the distance between the two magnetometers (about two meters) and their different transfer functions. However, in some cases, the resulting dF spike is not easily distinguishable from the instrumental noise, as can be seen in the example shown in Fig. 8. Conversely, quite often the dF record does not reflect spikes that are present in the initial geomagnetic records. A corresponding example is given in Fig. 9. It should be noted that both examples lie within a one-hour period of a single day.
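For reference, the dF check can be sketched as follows. The computation of Fv as the Euclidean norm of (X, Y, Z) is standard; the use of the median as the constant reference level and the 0.5 nT tolerance are illustrative assumptions, as are the function names. The sketch also assumes components for which the computed modulus is physically meaningful, which may require baseline-corrected data.

```python
from math import sqrt
from statistics import median


def delta_f(x, y, z, f_scalar):
    """dF = Fs - Fv for each sample, with Fv = sqrt(X^2 + Y^2 + Z^2) (all in nT)."""
    return [
        fs - sqrt(xi * xi + yi * yi + zi * zi)
        for xi, yi, zi, fs in zip(x, y, z, f_scalar)
    ]


def flag_df_anomalies(df, tolerance=0.5):
    """Indices where dF departs from its median by more than `tolerance` nT.

    The median stands in for the constant non-zero level of dF; the
    threshold is an illustrative value, not an observatory standard.
    """
    base = median(df)
    return [i for i, v in enumerate(df) if abs(v - base) > tolerance]
```

As the discussion above points out, such a check needs all four channels to be recorded correctly, whereas a single-record method does not.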

Example of “false spike” seen on dF record (bottom) on the left. Spikes recognized by eye on initial records X, Y, Z and F are marked on the corresponding records with black. The “false spike” seen on dF record could be representative of low amplitude spikes on X, Y, Z and F records and therefore less visible in the background noise of the X, Y, Z and F recordings.

Another disadvantage of the dF method is that it requires both vector data on the three components and scalar data on the total field intensity, and consequently the correct operation of both instruments. The method becomes invalid if one of the instruments does not work properly or if the recording of one of the three vector components fails. In contrast, data filtering using the SPs algorithm can be applied to any individual record regardless of the presence of other records. This makes the algorithm applicable not only at magnetic observatories but also at magnetic stations where only variational data are recorded.

We plan to carry out further studies on seasonal and activity level dependence of the recognition results. The described algorithm is currently being implemented in the operation of the Russian-Ukrainian geomagnetic data center hosted by the Geophysical Center of the Russian Academy of Sciences. The development of a web application based upon the SPs algorithm is also being considered, in order to make it available to the wider magnetic observatory community.

References

Agayan, S. M., S. R. Bogoutdinov, A. D. Gvishiani, E. M. Graeva, J. Zlotnicki, and M. V. Rodkin, Study of signal morphology basing on fuzzy logic algorithms, Geophys. Res. Proc. Moscow, IPE RAS, 1, 143–155, 2005 (in Russian).

Bogoutdinov, S. R., A. D. Gvishiani, S. M. Agayan, A. A. Solovyev, and E. Kihn, Recognition of disturbances with specified morphology in time series. part 1: spikes on magnetograms of the worldwide INTERMAGNET network, Izv. Phys. Solid Earth, 46 (11), 1004–1016, 2010.

Chulliat, A., X. Lalanne, L. R. Gaya-Piqué, F. Truong, and J. Savary, The new Easter Island magnetic observatory, in Proceedings of the XIIIth IAGA Workshop on Geomagnetic Observatory Instruments, Data Acquisition and Processing, edited by J. J. Love, 271 pp., U.S. Geological Survey Open-File Report 2009-1226, 2009a.

Chulliat, A., J. Savary, K. Telali, and X. Lalanne, Acquisition of 1-second data in IPGP magnetic observatories, in Proceedings of the XIIIth IAGA Workshop on Geomagnetic Observatory Instruments, Data Acquisition and Processing, edited by J. J. Love, 271 pp., U.S. Geological Survey Open-File Report 2009-1226, 2009b.

Draper, N. R. and H. Smith, Applied Regression Analysis, 407 pp., Wiley New York, 1966.

Gvishiani, A. D., S. M. Agayan, Sh. R. Bogoutdinov, J. Zlotnicki, and J. Bonnin, Mathematical methods of geoinformatics. III. Fuzzy comparisons and recognition of anomalies in time series, Cybern. Syst. Anal., 44 (3), 309–323, 2008a.

Gvishiani, A. D., S. M. Agayan, and Sh. R. Bogoutdinov, Fuzzy recognition of anomalies in time series, Dokl. Earth Sci., 421 (5), 838–842, 2008b.

Gvishiani, A. D., S. M. Agayan, S. R. Bogoutdinov, and A. A. Soloviev, Discrete mathematical analysis and geological and geophysical applications, Vestnik KRAUNZ. Earth Sciences, 2, 16, 109–125, 2010 (in Russian).

Knuth, D., The Art of Computer Programming. Volume 3: Sorting and Searching, 800 pp., Addison-Wesley USA, 1968.

Love, J. J., Magnetic monitoring of Earth and space, Phys. Today, 61, 31–37, 2008.

Marshall, R. A., E. A. Smith, M. J. Francis, C. L. Waters, and M. D. Sciffer, A preliminary risk assessment of the Australian region power network to space weather, Space Weather, 9, S10004, doi:10.1029/2011SW000685, 2011.

Matzka, J., A. Chulliat, M. Mandea, C. Finlay, and E. Qamili, Geomagnetic observations for main field studies: from ground to space, Space Sci. Rev., 155, 29–64, doi:10.1007/s11214-010-9693-4, 2010.

Reay, S. J., W. Allen, O. Baillie, J. Bowe, E. Clarke, V. Lesur, and S. Macmillan, Space weather effects on drilling accuracy in the North Sea, Ann. Geophys., 23, 3081–3088, doi:10.5194/angeo-23-3081-2005, 2005.

Samson, J. C., Geomagnetic pulsations and plasma waves in the Earth’s magnetosphere, in Geomagnetism. Vol. 4, edited by J. A. Jacobs, 481–592, 825 pp., Academic Press London, 1991.

Soloviev, A. A., Sh. R. Bogoutdinov, S. M. Agayan, A. D. Gvishiani, and E. Kihn, Detection of hardware failures at INTERMAGNET observatories: Application of artificial intelligence techniques to geomagnetic records study, Russ. J. Earth Sci., 11, ES2006, doi:10.2205/2009ES000387, 2009.

Soloviev, A. A., S. M. Agayan, A. D. Gvishiani, S. R. Bogoutdinov, and A. Chulliat, Recognition of disturbances with specified morphology in time series. Part 2: Spikes on 1-second magnetograms, Izv. Phys. Solid Earth, 2012 (accepted).

St-Louis, B., INTERMAGNET Technical Reference Manual, Version 4.4, 86 pp., 2008.

Worthington, E. W., E. A. Sauter, and J. J. Love, Analysis of USGS one-second data, in Proceedings of the XIIIth IAGA Workshop on Geomagnetic Observatory Instruments, Data Acquisition and Processing, edited by J. J. Love, 271 pp., U.S. Geological Survey Open-File Report 2009-1226, 2009.

Zadeh, L. A., Fuzzy sets, Inf. Control., 8, 338–353, 1965.

Acknowledgements

We thank Alan W. P. Thomson and Hans-Joachim Linthe for constructive reviews. The research has been carried out in the framework of collaboration program between Institut de Physique du Globe de Paris (IPGP), Moscow Institute of Physics of the Earth of Russian Academy of Sciences (IPE RAS) and Geophysical Center of RAS (GC RAS). The development of the algorithm SPs has been carried out in the framework of the project number 12-05-90428 supported by Russian Foundation for Basic Research. The Easter Island (IPM) magnetic observatory is jointly operated by Dirección Meteorológica de Chile (DMC) and IPGP. We thank INTERMAGNET for promoting high standards of magnetic observatory practice (www.intermagnet.org). This is IPGP contribution number 3288.


Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made.

The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder.

To view a copy of this licence, visit https://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Soloviev, A., Chulliat, A., Bogoutdinov, S. et al. Automated recognition of spikes in 1 Hz data recorded at the Easter Island magnetic observatory. Earth Planet Sp 64, 743–752 (2012). https://doi.org/10.5047/eps.2012.03.004


DOI: https://doi.org/10.5047/eps.2012.03.004