Abstract

Over the past 50 years there has been a strong interest in applying eye-tracking techniques to study a myriad of questions related to human and nonhuman primate psychological processes. Eye movements and fixations can provide qualitative and quantitative insights into cognitive processes of nonverbal populations such as nonhuman primates, clarifying the evolutionary, physiological, and representational underpinnings of human cognition. While early attempts at nonhuman primate eye tracking were relatively crude, later, more sophisticated and sensitive techniques required invasive protocols and the use of restraint. In the past decade, technology has advanced to a point where noninvasive eye-tracking techniques, developed for use with human participants, can be applied to nonhuman primates in a restraint-free manner. Here we review the corpus of recent studies (N=32) that take such an approach. Despite the growing interest in eye-tracking research, there is still little consensus on “best practices,” both in terms of deploying test protocols and in reporting methods and results. Therefore, we look to advances made in the field of developmental psychology, as well as our own collective experiences using eye trackers with nonhuman primates, to highlight key elements that researchers should consider when designing noninvasive restraint-free eye-tracking research protocols for use with nonhuman primates. Beyond promoting best practices for research protocols, we also outline an ideal approach for reporting such research and highlight future directions for the field.

Introduction

Neural and muscular control of the eyes may have evolved to facilitate stability of the retinal image during head and body movements. Stabilizing gaze during movement fixes the visual field projection onto the retina, allowing for photosensitive receptors on the retina to depolarize (Walls, 1962). Across phyla, these compensatory movements are often ballistic, in the form of eye, head, or body saccades (Land, 1999). Analogous movements of the head and body have been observed in phyla as distant as mantids (Rossel, 1980), Mollusca (Collewijn, 1970), and arthropods (Land, 1969; Paul, Nalbach, & Varjú, 1990). As an extension of this involuntary-compensatory motor control system, many animals, including mammals, have evolved the capacity for eye movements, including fixation, smooth pursuit, and voluntary saccades, that allow for foveation on salient points of interest in the environment (Schumann et al., 2008).

Eye-tracking studies in humans have shown that the distribution of fixations on a particular scene can vary dramatically depending on the task a subject is engaged in (Yarbus, 1967). This finding provided one of the first demonstrations that eye movements and fixations can provide qualitative and quantitative insights into cognitive processes. Since then, researchers have studied eye movements to describe how individuals interact with their world in at least two important ways. First, tracking eye movements allows a researcher to gain insight about an individual’s normal and abnormal cognitive processing, which can be extended for comparative assessment within and across subjects. Second, eye tracking allows a researcher to quantitatively assess and qualitatively describe an individual’s interaction with their environment. Such techniques not only offer us insight into spontaneous and unconscious decisions that humans are likely unable to (reliably) articulate (as well as conscious ones), but they also provide a unique opportunity to gain a deeper understanding of preverbal or nonverbal individuals. Thus, in recent years, especially with the advancement of noninvasive approaches, eye tracking has seen increased adoption among those studying preverbal human infants and children (Gredebäck, Johnson, & von Hofsten, 2009; Papagiannopoulou, Chitty, Hermens, Hickie, & Lagopoulos, 2014) and nonverbal animals, including nonhuman primates (Machado & Nelson, 2011), dogs (Karl, Boch, Virányi, Lamm, & Huber, 2020), and rodents (Zoccolan, Graham, & Cox, 2010), although the applications of such research are likely not yet fully realized (e.g., Billington, Webster, Sherratt, Wilkie, & Hassall, 2020).

Given the common use of nonhuman primates (hereafter: primates) as an animal model in research, their similar physiology to humans, and the vast insights they can provide into the foundational cognitive underpinnings and evolutionary origins of human cognition, primates were the first nonhuman animals to be used in eye-tracking research protocols. For over 50 years, researchers have attempted to gain insights into primate cognition using a variety of eye-tracking methods. At the most fundamental level, tracking the eye movements of primates reveals what captures and holds their attention, since eye movement and fixation patterns are markers of overt attention (Smith, Rorden, & Jackson, 2004). This technique has subsequently been applied to study attention in a variety of primate species, including prosimians, monkeys, and apes. Today, we have been able to move beyond simply recording primates’ attention to stimuli, and, using eye-tracking technology, we can get a deeper understanding of primate socio-cognitive decision-making (Kano, Krupenye, Hirata, Tomonaga, & Call, 2019; Krupenye, Kano, Hirata, Call, & Tomasello, 2016; Krupenye, Kano, Hirata, Call, & Tomasello, 2017; Krupenye & Call, 2019; Shepherd, Deaner, & Platt, 2008), spatial awareness and object perception (Hall-Haro, Johnson, Price, Vance, & Kiorpes, 2008; Ruiz & Paradiso, 2012), and memory and cognitive reasoning (Alvarado, Murphy, & Baxter, 2017; Howard, Wagner, Woodward, Ross, & Hopper, 2017; Kano & Hirata, 2015), as well as gaining insights into developmental changes in primates’ vision and engagement (e.g., Gunderson, 1983; Muschinski et al., 2016; Simpson et al., 2017; Wang, Payne, Moss, Jones, & Bachevalier, 2020). 
Eye-tracking paradigms also offer enhanced flexibility with respect to what and how stimuli are presented, as stimuli presented on a computer screen can be manipulated to test hypotheses that are not possible in real-world scenarios, and stimulus presentation can be repeated precisely while standardizing methods across subjects (Hopper, Lambeth, & Schapiro, 2012). For example, stimuli can be altered (Gothard, Brooks, & Peterson, 2009; Paukner et al., 2013) or avatars can be used to simulate specific target information (Krupenye & Hare, 2018; Paukner et al., 2014). However, the manner in which we obtain that information has advanced greatly over the decades.

While early attempts to measure eye gaze in primates were relatively crude, the more sophisticated and sensitive techniques that later emerged required invasive protocols (e.g., involving surgery and implantation of recording devices) and the use of restraint (e.g., head posts and primate chairs). In the past decade, however, technology has advanced to a point where noninvasive eye-tracking techniques, developed for use with human participants, can be applied to primates. Even more recently, researchers have explored ways to present stimuli to primate subjects and track their responses using completely restraint-free methods. Yet, despite the growing interest in this approach to eye-tracking research, there is still little consensus on “best practices,” both in terms of deploying test protocols and in reporting methods and results. Therefore, here we highlight key elements that researchers should consider when designing noninvasive restraint-free eye-tracking research protocols for use with primates, and we also outline best practices in terms of reporting. For context, we first discuss the refinement of eye-tracking practices that have been used with primate subjects over the decades.

Early attempts at noninvasive measurement of primate eye gaze

Prior to the advent of noninvasive remote eye trackers, several noninvasive methods for recording visual attention in primates were developed. One of the earliest methods allowed monkeys to scroll through stimuli by pressing a lever, which controlled a slide carousel. When the monkeys (Macaca sp.) pressed the lever, the apparatus projected an image onto a wall that was visually accessible from the monkey’s test cage; duration or frequency of lever pressing was taken as a measure of visual interest (e.g., Fujita, 1987; Sackett, 1966). Although innovative, this metric is a relatively indirect estimate of visual attention, as lever pressing does not necessarily equate to visual attention to an image. Moreover, the manual response likely required initial learning, which could have further impacted the results. Nonetheless, studies using this method revealed that macaques prefer looking at images of their own species, with specific studies illuminating developmental (Sackett, 1966) and comparative (Fujita, 1987) insights into such preferences.

A second method, primarily used with infant pigtailed macaques (M. nemestrina), involved an experimenter holding the monkey in front of two screens on which the stimuli were presented. The subject’s gaze was recorded with a video camera and the experimenter could view the direction of the monkey’s gaze on a television monitor. Comparable to methods used at that time to measure and record human infants’ attention to stimuli (e.g., Baillargeon, 1987), in these early tests with primates the experimenter used foot pedals to record the duration of the subject’s gaze on each of the two stimuli in real time (Gunderson, Grant-Webster, & Sackett, 1989; Gunderson & Sackett, 1984; Gunderson & Swartz, 1985; Lutz, Lockard, Gunderson, & Grant, 1998). This method was used to investigate monkeys’ preferences for facial stimuli (Lutz et al., 1998) and visual pattern recognition (Gunderson & Sackett, 1984; Gunderson & Swartz, 1985; Gunderson et al., 1989). While this method provides a more direct measure of eye gaze than the above-described lever-pressing metric, live-scoring a subject’s looking behavior is open to errors, experimenter bias, and possibly unintentional experimenter cuing, and no doubt requires a high level of training to achieve expertise and reliability.

A third method for noninvasive measurement of visual attention was to record subjects’ attention to a single moving stimulus, which takes advantage of primates’ tendency to visually track pertinent stimuli. Primarily used with infant primates (e.g., Kuwahata, Adachi, Fujita, Tomonaga, & Matsuzawa, 2004; Myowa-Yamakoshi & Tomonaga, 2001), subjects were presented with a stimulus that was moved 90 degrees to the right or left along a semicircular track. Subjects were filmed and their visual tracking of the stimulus (measured in degrees from a neutral starting point) was scored. Similar to the foot pedal method, the visual tracking method required subjects to be held by an experimenter – which may not be feasible with all species or age groups – and required researchers to manually code looking time. This method has been used to study face recognition in both monkeys (M. fuscata and M. mulatta, Kuwahata et al., 2004) and apes (Hylobates agilis, Myowa-Yamakoshi & Tomonaga, 2001). Both of these studies replicated methods used by developmental psychologists to test the ontogeny of human infants’ recognition of facial features (Johnson & Morton, 1991), further highlighting how protocols developed to test preverbal human infants have been successfully applied to test nonverbal primates (and vice versa). Exchange between comparative and developmental methodologies continues to prove fruitful (e.g., Howard et al., 2017; Krupenye et al., 2016; Krupenye & Hare, 2018).

The most commonly used approach for the noninvasive, but manual, measurement of primates’ attention is to film subjects as they are presented with stimuli and later code their visual attention to stimuli frame-by-frame. This approach has been applied in a number of contexts and with a range of stimuli. These experiments involve either a free-viewing paradigm, in which stimuli are shown for a predetermined length and the duration of the subject’s attention to them is coded, or a habituation-dishabituation task, whereby subjects are first habituated to a single image for a predetermined amount of time, and then that same image is presented together with a novel image and the subject’s relative attention to the novel and known stimuli is recorded (Winters, Dubuc, & Higham, 2015). For either method, a video camera is placed facing the subject and is used to document the subject’s eye movements throughout the test. After completion of the test, the experimenter codes the subject’s gaze toward the stimuli offline. Often, increased attention to a specific stimulus is taken as a preference for that stimulus, while in looking-time tasks, visual preference for a novel image over a familiar one is taken to indicate recognition of the familiar image. In a lab setting, one or two computer monitors are typically used to display the target stimuli (e.g., Dufour, Pascalis, & Petit, 2006; Neiworth, Hassett, & Sylvester, 2007; Pascalis & Bachevalier, 1998; Sclafani et al., 2016; Waitt et al., 2003); however, physical stimuli that differ in some way have also been presented to primates in this manner (e.g., Paukner, Huntsberry, & Suomi, 2010). This approach has been used extensively to study face preferences or recognition in a variety of primate species (e.g., M. mulatta, Waitt, Gerald, Little, & Kraiselburd, 2006; Sapajus apella, Paukner, Wooddell, Lefevre, Lonsdorf, & Lonsdorf, 2017; Pan troglodytes, Myowa-Yamakoshi, Tomonaga, Tanaka, & Matsuzawa, 2003). 
Furthermore, this method has been successfully adapted for use with free-ranging monkeys in which pairs of printed photographs or physical test objects have been presented to macaques (M. mulatta) to test a variety of questions related to face perception, understanding of socio-sexual cues, and physical cognition (e.g., Higham et al., 2011; Hughes, Higham, Allen, Elliot, & Hayden, 2015; Hughes & Santos, 2012; Mahajan et al., 2011). Similar frame-by-frame coding has also been used to measure visual attention to single stimuli (e.g., Marticorena et al. 2011; Simpson, Paukner, Suomi, & Ferrari, 2014) or video images (Anderson, Kuroshima, Paukner, & Fujita, 2009). While flexible, this method has drawbacks. As video coding is completed manually, the process is time- and labor-intensive and requires training for reliability. Moreover, gaze directions can be difficult to estimate, as the target of the gaze is often not included on the video footage in order to facilitate blind coding, and without a white sclera, judging the direction of a primate’s gaze can be challenging.
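To make the frame-by-frame coding described above concrete, the following is a minimal sketch of how coded video frames might be turned into looking times, a novelty preference score, and a simple inter-coder agreement check. The label names ("novel", "familiar", "away"), the function names, and the use of raw percent agreement are illustrative assumptions and are not taken from any of the studies cited here; published work may use other reliability statistics.

```python
from collections import Counter


def looking_times(frame_codes, fps):
    """Convert per-frame gaze codes into seconds of looking per label.

    frame_codes: one (hypothetical) label per video frame.
    fps: frame rate of the video recording.
    """
    counts = Counter(frame_codes)
    return {label: n / fps for label, n in counts.items()}


def novelty_preference(frame_codes, fps):
    """Proportion of stimulus-directed looking spent on the novel image.

    Frames coded as anything other than "novel" or "familiar" are ignored.
    A value above 0.5 indicates longer looking at the novel image.
    """
    times = looking_times(frame_codes, fps)
    novel = times.get("novel", 0.0)
    familiar = times.get("familiar", 0.0)
    total = novel + familiar
    return novel / total if total > 0 else float("nan")


def percent_agreement(coder_a, coder_b):
    """Share of frames on which two coders assigned the same label."""
    if len(coder_a) != len(coder_b):
        raise ValueError("Both coders must score the same number of frames")
    if not coder_a:
        return float("nan")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


if __name__ == "__main__":
    # Made-up example: a 4-second test trial filmed at 30 frames per second.
    codes = ["novel"] * 60 + ["familiar"] * 30 + ["away"] * 30
    print(looking_times(codes, fps=30))
    print(novelty_preference(codes, fps=30))
```

Percent agreement is used here only because it is easy to read; it does not correct for chance agreement, so a chance-corrected statistic may be preferable in practice.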

Invasive or restraint-based eye-tracking techniques

While the aforementioned methods can be applied with a variety of species and in a range of settings, the majority of these techniques offer limited accuracy and control and also require laborious coding, which is time consuming and error prone. Thus, researchers have turned to more accurate, but more invasive, eye-tracking tools to precisely measure visual attention in primates (Johnston & Everling, 2019; Mitchell & Leopold, 2015; Moran & Desimone, 1985). Such approaches offer a more detailed understanding of what primates attend to beyond the simple discrimination between two stimuli or the duration of attention to a single stimulus afforded by the noninvasive approaches described above. More recent approaches have offered researchers not only increased precision, but also the flexibility to present multimodal stimuli to study primates’ cross-modal integration of sensory cues (e.g., Ghazanfar, Chandrasekaran, & Logothetis, 2008; Ghazanfar, Maier, Hoffman, & Logothetis, 2005; Payne & Bachevalier, 2013; Sliwa, Duhamel, Pascalis, & Wirth, 2011).

A primary concern in obtaining accurate measurements of eye position is ensuring that the primate’s head remains motionless such that subjects can only track stimuli via discrete eye movements. A subject’s movement restriction is typically accomplished through the use of primate chairs (e.g., Hall-Haro, Johnson, Price, Vance, & Kiorpes, 2008; Hu et al., 2013; Sugita, 2008), with additional means to restrain the head (e.g., Emery, Lorincz, Perrett, Oram, & Baker, 1997; Machado, Bliss-Moreau, Platt, & Amaral, 2011; Machado, Whitaker, Smith, Patterson, & Bauman, 2015). To achieve precise visual measurement, some studies have relied on implantation of head posts or fixation devices (e.g., Adams, Economides, Jocson, & Horton, 2007; Blonde et al., 2018; Dal Monte, Noble, Costa, & Averbeck, 2014) in addition to scleral search coils, which are implanted directly into the eye (e.g., Deaner, Khera, & Platt, 2005; Gothard, Erickson, & Amaral, 2004; Shepherd, Deaner, & Platt, 2006). More recent efforts using such techniques have explored ways to afford primate subjects increased freedom of movement without compromising the accuracy or precision of the eye-tracking data (e.g., De Luna, Mustafar, & Rainer, 2014; Milton, Shahidi, & Dragoi, 2020).

Scleral search coils, in which a small coil of wire is implanted in the sclera, have been used with primates for nearly 50 years (Fuchs & Robinson, 1966; Judge, Richmond, & Chu, 1980), building from a technique developed in the 1960s for use with humans (Robinson, 1963). Electric currents are generated in the search coils, via the use of electromagnets, from which the direction and angular displacement of the eye can be inferred (Shelhamer & Roberts, 2010). Such a system offers high spatial and temporal resolution, but it also suffers a number of limitations. As with any surgical intervention, implantation of fixation devices or search coils carries the risk of infection, pain, and discomfort to the subject, which may not only affect their visual attention but also pose a risk to their health and well-being. Additionally, the coils have a limited use period, which may require additional surgical procedures for them to be replaced or repaired. Moreover, not all investigators may have access to the expertise and facilities required to undertake these surgical modifications, and certain facilities (e.g., sanctuaries and zoos) do not permit such invasive approaches for research purposes.

In response to these concerns, optical trackers were adapted to measure primate gaze and attention (Morimoto, Koons, Amir, & Flickner, 2000). Not only does this approach negate the need for surgical procedures, but it also enables the researcher to record pupil size as well as eye movement. For example, infrared eye trackers face the subject and measure corneal reflections as the eye moves, permitting both the diameter of the pupil and the direction of the gaze to be calculated. Kimmel, Mammo, and Newsome (2012) directly compared the efficacy of a sclera-embedded search coil (C-N-C Engineering) and an infrared eye tracker (EyeLink 1000 optical system, SR Research) in two monkeys (M. mulatta). From this study, Kimmel et al. (2012) found “broad agreement” between the two systems, and while they noted a number of discrepancies, they concluded that the noninvasive eye-tracker device “now rivals that of the search coil, rendering optical systems appropriate for many if not most applications” (p. 49). However, it should be noted that for both approaches, the monkeys were tested under restraint: during testing the monkeys were placed in a chair and their heads stabilized. The use of head restraints and primate chairs requires a period of training and adjustment that can be time-consuming and is not suitable for all subjects, species, or research settings. Finally, research protocols often demand that the subject is separated from their social group for testing, which may induce additional anxiety and stress (Cronin, Jacobson, Bonnie, & Hopper, 2017).

More recently, some researchers have adopted eye-tracking methods that are noninvasive but that still rely on light restraint or interaction with primates for testing. For example, and following the way in which much cognitive testing is run in humans where babies are held by a caregiver, some noninvasive studies with infant rhesus macaques (M. mulatta) have involved researchers gently holding the monkeys, orienting them in front of a stimulus (e.g., Alvarado et al., 2017; Damon et al., 2017; Dettmer et al., 2016; Paukner, Bower, Simpson, & Suomi, 2013; Paukner, Simpson, Ferrari, Mrozek, & Suomi, 2014; Paukner, Slonecker, Murphy, Wooddell, & Dettmer, 2018; Simpson et al., 2016, 2017, 2019; Slonecker, Simpson, Suomi, & Paukner, 2018). Other studies have placed unrestrained monkey infants on their sedated mothers to facilitate eye tracking (e.g., Muschinski et al., 2016; Wang et al., 2020). Such methods have been used to address a range of questions including neonatal imitation (Paukner et al., 2014), preferences for social stimuli (Dettmer et al., 2016), and memory (Slonecker et al., 2018). A similar approach has been used with enculturated chimpanzees: researchers sat with the chimpanzee and held their head in place when viewing stimuli to facilitate eye-tracking recording (e.g., Hirata, Fuwa, Sugama, Kusunoki, & Fujita, 2010; Myowa-Yamakoshi, Scola, & Hirata, 2012).

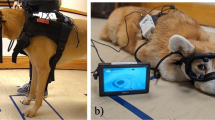

A second noninvasive, light-restraint method that has been applied with primates and other nonhuman animals is the use of wearable eye trackers (i.e., mounted on headgear). This technique has been used successfully with free-ranging lemurs (Lemur catta, Shepherd & Platt, 2006), chimpanzees (P. troglodytes, Kano & Tomonaga, 2013), peahens (Pavo cristatus, Yorzinski, Patricelli, Babcock, Pearson, & Platt, 2013; Yorzinski, Patricelli, Platt, & Land, 2015), and domestic dogs (Canis lupus familiaris, Kis, Hernádi, Miklósi, Kanizsár, & Topál, 2017; Williams, Mills, & Guo, 2011). For these methods, more so than for most studies using headgear with human populations, habituation and training must be involved in order for most primates to tolerate wearing headgear and to mitigate the high risk that individuals can destroy the equipment. Thus, the most broadly applicable approach, across subjects, species, and settings, is likely a completely noninvasive, restraint-free protocol.

Noninvasive and restraint-free eye-tracking approaches

Contemporary eye-tracking technology has advanced such that certain data can be collected without the need for invasive procedures or the use of restraint devices. This creates opportunities for research to be conducted at certain facilities or with certain individuals and species in which invasive procedures are not permitted or feasible. For example, while zoos and sanctuaries house a higher diversity of species than do traditional research settings, they typically are not able to accommodate research protocols that require extensive training, separation of primates from group mates, or invasive protocols (Hopper, 2017; Ross & Leinwand, 2020). Noninvasive and restraint-free approaches offer the potential for eye-tracking research to be conducted in such settings, meaning a greater variety of individuals and species could be tested, expanding our understanding of cognition across and within species. Indeed, to date, noninvasive eye-tracking research has been successfully implemented in a number of zoos and sanctuaries, although only with a few species thus far (Fig. 1). Beyond setting, a subject’s age or health factors may also restrict which primates can participate in invasive studies that entail sedation or surgery, and so noninvasive restraint-free techniques may allow for greater flexibility as to which populations can be tested. In this way, noninvasive restraint-free eye-tracking methods offer a potential way to increase the diversity of research subjects and settings, subsequently expanding our knowledge of underrepresented species in cognitive research. Additionally, as many eye-tracking units are now small and mobile, it may be feasible to test individuals across multiple enclosures or facilities with a single device, reducing the upfront cost of such research and further allowing for wide-scale comparative research. 
Finally, given the lack of required habituation training or surgeries, testing can be completed in a relatively short time frame, further reducing the burden on the primates and host institution, which may facilitate longitudinal ontogenetic research where data at specific developmental milestones need to be quickly gathered, and for which extensive training may create undesirable lags in testing schedules.

Fig. 1. The average number of subjects tested per study by species in noninvasive, restraint-free eye-tracking research studies with primates (see also Table 1)

At the time of writing, we have identified 32 peer-reviewed articles that report eye-tracking studies run with primates in a noninvasive and completely restraint-free manner (Table 1). The first such study was conducted with chimpanzees (P. troglodytes) housed at the Primate Research Institute of Kyoto University, Japan, and investigated how chimpanzees and humans visually process pictures of primates, non-primate animals, and humans (Kano & Tomonaga, 2009). Since the publication of this first study, there has been continued interest in this approach, with an average of three articles reporting such methods published in each of the subsequent 10 years (i.e., 2010–2019). Furthermore, these methods have been successfully applied to primates in a range of settings including sanctuaries, zoos, and research facilities (Table 1). However, all but four of the studies we identified were run with ape species (Gorilla gorilla, P. troglodytes, P. paniscus, and Pongo abelii), with chimpanzees being tested more frequently than any other species (Table 1, Fig. 1). In certain studies, authors tested primates at more than one site to increase the sample size tested; for example, Kano et al. (2019) tested a total of 29 chimpanzees housed across three facilities (Table 1). In addition to the overrepresentation of certain species, we also identified overrepresentation of individual subjects: most studies were run at one of three sites (Primate Research Institute, Japan, Kumamoto Sanctuary, Japan, and the Wolfgang Köhler Primate Research Center at the Leipzig Zoo, Germany), which means that, although the number of noninvasive eye-tracking studies is growing, a relatively small number of primates is overrepresented in this sample (Table 1). More recently, however, noninvasive and restraint-free eye-tracking techniques have been implemented at new sites (e.g., Lincoln Park Zoo, USA and Buffalo Zoo, USA) and with non-ape species (e.g., S. apella and Callicebus cupreus) (Table 1, Fig. 1).

As the 32 studies we identified were run across a range of settings and facilities, there are differences in what the various research groups have defined as a “restraint-free” method (see also the Methods section below for a more detailed review of the different approaches used and our recommendations for future protocols). While none of the subjects in the 32 studies listed in Table 1 were restrained (e.g., in a primate chair, or held by an experimenter), some subjects were tested in relatively small testing booths and/or separated from their social group for testing, and were thus manipulated in some manner by the experimenter. It is worth noting that in most of these cases, primates voluntarily enter such testing rooms and elect to be separated in order to participate in eye-tracking research. In a couple of cases, however, subjects were tested in a group setting in their home enclosure without additional encouragement to engage with the research (Chertoff et al., 2018; Howard et al., 2017), a method that can be considered completely restraint-free. Such an approach reduces the control that experimenters have over the subjects’ location in relation to the eye tracker and their attention to the stimuli, which in turn increases the variability in how different subjects experience the stimuli. However, it likely increases the external validity of the results and enhances the welfare of subjects (Cronin et al., 2017), while paving the way toward testing free-ranging or wild primates with eye-tracking technology.

Methods: Best practices and lessons learned

Given that there is growing interest in using noninvasive eye-tracking devices with primates to address a variety of questions, what lessons can be learned from the corpus of studies that have already been conducted? What equipment is best suited for particular species or environments? How should an experimental protocol be designed to maximize the accuracy and reliability of the data collected from unrestrained primates? By reviewing the previously published studies as well as pooling our own experiences using noninvasive eye trackers with primates and human infants, we hope to shed light on this approach and provide guidance for best practices for those wishing to test primates by noninvasive means without the use of restraint. While the majority of the 32 studies we reviewed (Table 1) used an eye tracker to ask an empirical question, often regarding face perception, memory, or social cognition, a subset were run with the explicit aim of protocol development and refinement (i.e., Chertoff et al., 2018; Kano & Tomonaga, 2009; Ryan et al., 2019; Wilson, Buckley, & Gaffan, 2010). These studies provide especially useful insights into what techniques can be applied with certain populations (e.g., infant primates, Ryan et al., 2019) or in certain nontraditional research settings (e.g., zoos, Chertoff et al., 2018), but they also contain detailed information about training protocols, calibration, and validation techniques (e.g., Wilson et al., 2010). Here we collate information across the 32 studies that have been conducted in the 10 years following the first reported noninvasive restraint-free eye-tracking study with primates (Kano & Tomonaga, 2009), to provide insights into common approaches and lessons learned.

As noted above, eye-tracking research is constrained by the need for subjects to attend to the stimuli presented, for there to be an unobscured view of the subject’s eyes (and therefore gaze), and for the subject’s head to remain in a relatively stable position. Such considerations are harder to achieve when working with unrestrained primates, who can turn their head, or even move away from the eye tracker entirely. For example, Chertoff et al. (2018), working with zoo-housed gorillas, reported that “because the gorillas were free to leave at any time, only data for one or two stimuli were collected at a given time, sometimes resulting in incomplete recordings” (p. 295) and that, ultimately, of a possible six test subjects, only “two gorillas stayed in front of the screen long enough to record gaze data” (p. 296). In fact, some degree of data loss was reported by the majority of the studies that we reviewed, including both the loss of data for entire subjects and the loss of subsets of data for certain subjects. For example, Kano, Call, and Tomonaga (2012) noted that two of the apes they tested did not reliably attend to all stimuli, as they sometimes averted their heads from certain stimuli, but that attention was high for 13 subjects. Importantly, and as we will outline below, with increased experience, researchers are now able to ensure high-quality data and relatively high rates of voluntary participation in noninvasive eye-tracking studies. Specifically, this has been achieved through a series of procedural innovations that encourage subjects’ engagement with the task (e.g., Kano et al., 2011; Ryan et al., 2019) and careful consideration of the ways in which stimuli are presented (e.g., only a few trials a day to accommodate primates’ short attention spans or repeating trials to ensure data are captured).

Finally, although eye-tracking has been validated in a number of primate populations using devices and algorithms that have been optimized for humans (Table 1), some subject pools may require population-specific hardware or software solutions to become testable. This is a potential concern specifically for infant and juvenile primates or for species that are more distantly related to humans, as these groups likely differ more markedly from humans in their facial and ocular morphology. Below, we discuss these considerations, present approaches to accommodate different species, and look to future methodological and technological refinements that may further help facilitate eye-tracking research with primates in a noninvasive and restraint-free manner.

Hardware

As can be seen in Table 1, the most commonly reported manufacturer used for fully noninvasive primate testing is Tobii (Tobii Technology, Sweden). Given that (Tobii) eye trackers have been developed for use with human participants, and primates’ eyes and faces differ from humans in terms of size and morphology (e.g., Glittenberg et al., 2009; Kobayashi & Kohshima, 2001) as well as inter-pupil distance, users have reported varying success across the different models for use with primates (e.g., Kano, Call, & Tomonaga, 2012, reported that the eye tracker they used [Tobii X120] was unable to track both eyes of one adult male gorilla due to the wide distance between his eyes). In spite of this, Kano and Tomonaga (2009) reported that for humans and chimpanzees tested under comparable protocols (using Tobii X120), “the average error when viewing the screen (the distance between measured and intended gaze points) was less than 0.5° in both species, p 1950.” Hirata, Fuwa, Sugama, Kusunoki, and Fujita (2010) also reported that the average errors were between 0.15° and 0.66° in the six chimpanzees tested using an equivalent setting (Tobii T60). Loss rate in data collection, which occurs in a restraint-free eye tracker due to participants’ eye blinks and postural changes, and the subsequent brief moments before the eye tracker recaptures the participants’ eyes, was reported to be comparable (6–7%) in the chimpanzees and humans tested under comparable protocols (Kano & Tomonaga, 2009). However, in our experience, this loss rate can vary between studies depending on the subject, the species, and the testing environment (e.g., lighting and the barrier between subject and eye tracker).
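To make the loss-rate metric concrete, the following minimal sketch computes the proportion of samples for which no gaze estimate was obtained. The sample format (an (x, y) pair, or None when the eyes were not detected) is an assumption made for illustration and does not correspond to the export format of any particular manufacturer.

```python
from typing import Optional, Sequence, Tuple

# Hypothetical sample format: (x, y) gaze point, or None when the tracker lost the eyes
Sample = Optional[Tuple[float, float]]


def loss_rate(samples: Sequence[Sample]) -> float:
    """Return the fraction of recorded samples with no valid gaze estimate."""
    if not samples:
        raise ValueError("no samples recorded")
    lost = sum(1 for s in samples if s is None)
    return lost / len(samples)


# Illustrative data only: 2 of 10 samples are missing (e.g., a blink or head turn)
example = [(0.5, 0.5), None, (0.4, 0.6), (0.5, 0.5), None,
           (0.6, 0.4), (0.5, 0.5), (0.5, 0.5), (0.5, 0.5), (0.5, 0.5)]
print(f"loss rate: {loss_rate(example):.0%}")
```

Reporting this value per subject and per session, rather than only as a study-wide average, would make differences between species and testing environments easier to compare.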

Fully integrated Tobii models (e.g., T60, T60XL, TX300) involve a combination of both the eye-tracking device and a monitor. Such models provide adequate levels of accuracy and sampling rates (reported to be up to 0.4 degrees of accuracy in human participants and 300 Hz, depending on the model). Unfortunately, these models have now been discontinued by the manufacturer, and thus are harder to come by for laboratories seeking to set up new testing facilities. Newer “mobile” models released by Tobii, and also models previously used with primates (e.g., X2-60, X3-120, X120), provide a smaller form factor that allows for more mobility and can be paired with any number of monitors. Specifically, rather than a single integrated unit, these systems are simply an eye-tracking device that the researcher can attach to their own monitor (e.g., Howard et al., 2017) or use in a freestanding manner (e.g., Wolf & Tomasello, 2019). These mobile systems have accuracy and data collection similar to that of the fully integrated systems (up to 0.4 degrees of accuracy in human participants and 120 Hz sampling rates), and given their size and portability, researchers have been able to deploy them outside of a lab setting with human populations, highlighting the flexibility they afford (e.g., Kardan et al., 2017, used a Tobii X2-60 eye tracker to test human participants in rural communities in the state of Yucatan, Mexico). However, there is currently little data to verify whether these smaller models show comparable performance to more widely used machines for identifying, calibrating, and continuously tracking primate subjects’ eyes.

The newest research-based models from Tobii (e.g., the Tobii Pro Spectrum, Tobii Pro Nano) have yet to be tested with primates in a rigorous way, and thus comparisons to other models are not possible at this time. However, one of us (F. Kano) evaluated these models with chimpanzees and found that both models failed to capture the eyes of five of six chimpanzees tested. While many of us have personally used the earlier Tobii models for our own research, we have experienced varying degrees of efficacy with the different models, dependent on our test subject species and testing environment, with most of us preferring the TX-300 or X-120 models, which appear better able to detect primates’ eyes, and to track them continuously and reliably, through various interfaces. Indeed, these two models were used in the majority of the studies we identified in our review (only five articles reported using different models, Table 1). Unfortunately, at this time, and unlike research on human participants (e.g., Brisson et al., 2013; Morgante, Zolfaghari, & Johnson, 2012; Niehorster, Cornelissen, Holmqvist, Hooge, & Hessels, 2018), no studies to date have explicitly reviewed or compared tracker manufacturer or model efficiency in primates, making quantitative comparisons across these different manufacturers’ hardware systems difficult.

While eye-tracking systems manufactured by Tobii are those most commonly used for remote or restraint-free testing in primates, other models have been used in situations where the primate is lightly restrained (e.g., held gently or positioned by an experimenter, fitted with a head-mounted tracking system) or placed in a head rest. These experiments have used ISCAN (Nummela et al., 2019), Applied Science Laboratories (Zola, Manzanares, Clopton, Lah, & Levey, 2013), and EyeLink (Kawaguchi et al., 2019) models with success. However, the necessity of restraint and/or direct interaction with the animal prevents such approaches from being considered both noninvasive and restraint-free.

Software

Many eye-tracking hardware systems can be purchased with accompanying software that allows for basic stimulus presentation and data analysis. For example, Tobii hardware systems are often used in conjunction with Tobii Studio or Tobii Pro Studio, or the more recently released Tobii Pro Lab. Unfortunately, none of the articles we reviewed provided any evaluation of the software or customized codes used in terms of efficacy or flexibility. Therefore, here we present our own experience in conducting testing with such software, including the new iterations of Tobii software (unpublished data). In our experience in using them, commercial software packages are often user-friendly, allowing for a less intensive entry into eye-tracking research and obviating the need for programming fluency. However, they can be costly and may require a subscription for technical support and software updates. Furthermore, they may lack full flexibility in terms of stimulus presentation (e.g., requiring certain file formats), stimulus programming (e.g., lacking the ability to program gaze-contingent paradigms), and data analysis, although we note, for example, that the newer Tobii Pro Lab offers greater flexibility in terms of methodological design and trial presentation as compared to Tobii Studio. Furthermore, most packages allow the researcher to export raw data for independent analysis. Given the potential restrictions and limitations of “off-the-shelf” software, some researchers have turned to more general software tools such as EPrime (Psychology Software Tools, Inc., Sharpsburg, PA), MATLAB (MathWorks, Natick, MA), R (R Core Team, 2017), or Python (van Rossum and Drake, 1995) for various aspects of data collection and analysis, while some have utilized eye-tracking-specific third-party software, such as GazeTracker.

Testing setup

Eye-tracking systems typically involve the integration of a computer (laptop or desktop, to control stimulus presentation and gaze recording), external monitor(s) and speakers (to present stimuli, except when gaze-tracking live scenes), and the tracking apparatus. Additional components might include a video camera or webcam oriented to the subject for offline coding or verification, or an external processing unit to assist with mobile data processing and connections across systems (often used with the Tobii X2-60 or X3-120). Two or more of these components may be incorporated into a single apparatus. For example, some systems combine the computer monitor, the tracking apparatus, and a built-in video camera, requiring only a computer fitted with the appropriate software for a complete setup. Others have components that can be added ad hoc, which can allow for increased flexibility in terms of testing environments. For example, units touted as “mobile” (e.g., Tobii X2-60) often boast a small tracking apparatus that can be flexibly affixed to monitors of various sizes and used in combination with a laptop or a more permanent desktop system. In place of a monitor, it is also possible to eye-track a live scene, if subjects have been calibrated to the parameters of that scene (e.g., Wolf & Tomasello, 2019, Table 1).

As shown in Table 1, computer monitor sizes previously utilized with primates vary from 43 to 63 cm, although the maximum monitor size (and aspect ratio) is typically constrained by the requirements of the hardware, so we suggest that new users refer to user manuals or company documentation before selecting a monitor. Related to this, a subject’s distance from the monitor may help inform the necessary screen size, as this is a crucial component to consider when calculating stimulus visual angle (see below). As with monitor size, subject viewing distance is constrained by the limitations of individual eye-tracking systems, and our review found a distance of 50–70 cm between the subject and screen to be the most common. Some experimental setups will yield a more stable viewing distance (e.g., test settings where subjects are rewarded for staying in one location) than others in which the viewing distance is considerably variable across trials (e.g., free-viewing setups where subjects come and go at will or are free to move within a larger enclosure), and below we discuss ways in which researchers have incentivized subjects to stay in relatively fixed locations without the use of restraint. Importantly, researchers should carefully document and report these parameters in research reports.
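The relationship between stimulus size, viewing distance, and visual angle can be sketched as follows. The function uses the standard formula for a stimulus centered on, and perpendicular to, the line of sight; the function names and the example values (a 5-cm stimulus viewed at 50 to 70 cm) are illustrative assumptions rather than values taken from any reviewed study.

```python
import math


def visual_angle_deg(size_cm: float, distance_cm: float) -> float:
    """Visual angle (degrees) subtended by a stimulus of a given size.

    Assumes the stimulus is centered on, and perpendicular to, the line of sight.
    """
    if size_cm <= 0 or distance_cm <= 0:
        raise ValueError("size and distance must be positive")
    return math.degrees(2 * math.atan(size_cm / (2 * distance_cm)))


def size_for_angle_cm(angle_deg: float, distance_cm: float) -> float:
    """Stimulus size (cm) needed to subtend a given visual angle at a given distance."""
    if angle_deg <= 0 or distance_cm <= 0:
        raise ValueError("angle and distance must be positive")
    return 2 * distance_cm * math.tan(math.radians(angle_deg) / 2)


# The same 5-cm stimulus subtends a smaller angle as the subject moves back
for distance in (50, 60, 70):
    print(f"{distance} cm: {visual_angle_deg(5, distance):.2f} deg")
```

Because the subtended angle shrinks as viewing distance grows, setups with variable viewing distance yield a range of visual angles for the same stimulus, which is one reason to report the distance alongside the stimulus dimensions.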

In addition to the size of the screen and the subject’s relative distance from it, another important environmental factor to consider is lighting. From our personal experience we recommend that those working with primates should avoid testing in direct sunlight or in conditions that are very dark. Indeed, Tobii recommends that “eye tracking studies be performed in a controlled environment. Sunlight should be avoided since it contains high levels of infrared light which will interfere with the eye tracker system. Sunlight affects eye tracking performance severely and longer exposure can overheat the eye tracker, p 24” (Tobii, 2019). From our review of the literature, researchers did not typically report the light levels (lux) of their testing environment, but this should be encouraged as it would facilitate replication and greater understanding of what test conditions work best for different primate species.

As mentioned previously, all of the testing setups reported by the studies we identified in our review (Table 1) allowed for some freedom of movement on the part of the subject, as all were devoid of traditional constraints such as chairs, head posts, or masks. However, variability was observed in terms of the size of testing enclosures and incentives provided to keep subjects in place in front of the eye tracker. We consider each of these elements in turn.

Considering the size of the testing environment, in our review of the literature we found great variability in terms of the size and familiarity of the testing enclosure across species and facility. In some studies (particularly those testing smaller species) subjects were tested in a dedicated testing (or “transport”) box, equipped with a small viewing window (e.g., Ryan et al., 2019). As subjects could only see the visual stimuli by looking through this small window, the distance and angle of subject viewing remained relatively constant across trials and subjects without the need for further physical restraint. Instead of transporting subjects to a new location, some labs have opted to create testing cubicles or rooms adjacent to the subjects’ home cage, allowing subjects to voluntarily enter this dedicated testing space (e.g., Howard, Festa, & Lonsdorf, 2018; Kano & Tomonaga, 2009). These spaces are smaller than the subjects’ home cage and so afford more experimental control, but still allow increased freedom of movement by the subject (meaning that researchers report variable viewing distances and angles across trials). Finally, in two studies (both run with zoo-housed primates), the eye-tracking system was placed at the periphery of the subjects’ home enclosure, and the primates were free to come and watch the visual stimuli at will (Chertoff et al., 2018; Howard et al., 2017). This setup allows subjects to be tested in a familiar environment and without separation from their social group, though it requires study designs that can work with various trial or testing block lengths to account for absolute freedom of movement, and greater success was found with subjects that were already familiar with cognitive testing.

In addition to the size of the testing enclosure, we also found variation in the substrates through which primates viewed the stimuli (Table 1). In most instances, primates viewed stimuli through plastic (acrylic/Plexiglas or polycarbonate) viewing panels (Fig. 2), but a few studies tested primates through cage mesh or without any visual barrier (Table 1). Unfortunately, at this time, no empirical evaluation of different viewing substrates has been conducted, and drawing comparisons across published data would be too speculative due to the numerous confounds across studies (i.e., differences in species tested, environmental factors such as illumination, hardware used, and test stimuli and protocols employed). However, Kano et al. (2011) reported that testing chimpanzees via an acrylic barrier as compared to no barrier did not impact the accuracy of eye-tracking data obtained. The type of material implemented as a barrier is likely influenced by the species being studied (e.g., visual barriers for apes would need to be much more robust than those for use with small platyrrhine monkeys) and the feasibility of cage modifications in light of cost or logistical restrictions (e.g., if testing primates at a zoo, the researcher may not be afforded an option to modify caging for testing, see Chertoff et al., 2018; Howard et al., 2017). For those establishing new eye tracking programs and who have the capacity to retrofit or construct new testing suites, evaluating the relative efficacy of different interfaces (perhaps through the use of interchangeable viewing windows) would be valuable.

Three of the 32 studies that we identified did not employ any barrier between the test subjects and the eye-tracking device. In one case it was because the chimpanzee subjects had been habituated to such testing protocols, although this scenario is extremely rare (Kano, Hirata, Call, & Tomonaga, 2011). The other two studies that reported providing no barrier (Ryan et al., 2019; Wilson, Buckley, & Gaffan, 2010) both tested monkeys that viewed stimuli through small apertures in the test cage. Not only does such an approach negate any confounds of a barrier between the subjects’ eyes and the eye tracker, but the small viewing window can help to encourage the subjects’ attention to the stimuli (see Ryan et al., 2019, for a discussion of this approach). Mesh-based barriers are appealing in that they permit eye tracking without modification to infrastructure (e.g., at zoos, where a dedicated testing environment may not be available). Testing through mesh has been successfully implemented in some locations (e.g., Howard et al., 2017); however, metal bars or cage mesh can obstruct the eye tracker’s ability to detect a subject’s eyes and can also lead the eye tracker to frequently lose them. Consequently, such setups will likely result in higher rates of data loss, and the relative success of such an approach is dependent on the gauge of the mesh and the size of the test subject. Viewing through mesh is probably suitable only for certain testing paradigms (e.g., preferential-looking tests) where lost data are unlikely to bias the results in any particular direction, rather than those tests that demand the collection of more fine-grained data. To overcome these concerns and permit a greater range of paradigms, many researchers present primates with stimuli through transparent acrylic or polycarbonate windows (e.g., Kano, Hirata, Call, & Tomonaga, 2011; Krupenye et al., 2016). Both materials appear suitable for eye-tracking; they differ mainly in that polycarbonate is stronger than acrylic, and therefore panels can be thinner (although this is not known to impact gaze-tracking in any way), whereas acrylic is more scratch-resistant and therefore probably does not need to be replaced as frequently as polycarbonate. However, the thickness of the plastic may vary, and few of the published reports provide the thickness of the transparent barrier used, so comparisons across studies to understand how thickness impacts eye detection are limited. From our personal experience, however, testing through glass is not efficacious.

Researchers have also developed various strategies to incentivize primates to voluntarily approach the test apparatus and remain in a constant position throughout stimulus presentation without the need for physical restraint. Different incentive strategies may impact the relative stability of viewing angle and distance during testing. Some studies provide no incentive or reinforcement save for engaging stimuli (e.g., Ryan et al., 2019), while others have provided food reinforcement, but only directly before and after subjects have completed the study paradigm (e.g., Howard et al., 2017, 2018) (i.e., to reward general participation, rather than for looking at specific stimuli). Finally, there are a number of instances where subjects are provided a constant food reinforcement during testing (e.g., peanut butter, Lonsdorf, Engelbert, & Howard, 2019; juice drips, Kano, Hirata, Call, & Tomonaga, 2011) or are rewarded for fixating on specific stimuli (Wilson et al., 2010). For example, Kano et al. (2011) presented primates with stimuli that they could view through a transparent panel, and a juice nozzle was installed in the panel at a height that naturally positioned the primates’ eyes in a detectable orientation relative to the eye tracker (Fig. 3). A slow drip of juice was continuously delivered to encourage subjects to approach the setup and to remain in position throughout the entire test. Such an approach not only encourages the subject to maintain a constant distance from the eye tracker, but also decreases the subject’s head movements during testing. Though the loss rates in data collection have not been directly compared across these different reinforcement types and schedules, it seems fair to assume that constant reinforcement might allow for more stability than only occasional or no reward. However, researchers should consider how various reinforcements might interact with their question of interest, as constant reinforcement might incentivize subjects to view stimuli for longer than they might in a more naturalistic setting.

Fig. 3 A noninvasive, restraint-free eye-tracking setup in which subjects drink juice throughout testing from a fixed point that orients their face toward the eye tracker and keeps their head in a steady position. Shown here, an orangutan (left) and a gorilla (right), both at the Wolfgang Köhler Primate Research Center, Germany. Photographs courtesy of F. Kano

Common paradigms and associated metrics

Eye-tracking studies that measure attention (as opposed to pupillometry) generally have one of several goals. As noted when describing the various approaches that have been used to test primate eye movement, many of these experimental protocols have been developed from methods originally used with preverbal human infants and young children, in some cases allowing for comparisons across humans and primates (e.g., Howard, Riggins, & Woodward, in press). At the simplest level, the vast majority of the 32 studies that we identified (Table 1) used one of two gross approaches: they either measured subjects’ general attention to and engagement with stimuli (e.g., Ryan et al., 2019), or evaluated subjects’ relative attention to two stimuli, which were either embedded within a scene (e.g., Hattori, Kano, & Tomonaga, 2010) or presented as two separate stimuli on the screen (e.g., Lonsdorf et al., 2019).

Violation-of-expectation studies generally measure overall attention to a scene following perceptually similar expected or unexpected events (Martin & Santos, 2014), with the prediction that unexpected events will require more processing time and produce longer looking durations. Habituation-dishabituation paradigms first habituate subjects to a series of stimuli (i.e., present the stimuli repeatedly, until the subject’s attention declines to a predetermined extent) before presenting various test events and measuring subsequent attention to novel elements (Howard et al., 2017). Similar to violation-of-expectation paradigms, habituation-dishabituation paradigms assume that test events that are more dissimilar (perceptually or conceptually) to the habituation events will elicit greater spikes in attention.
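As an illustration of what a “predetermined extent” of decline might look like in practice, the sketch below flags a subject as habituated once mean looking time over the most recent trials falls below a set fraction of mean looking time over the first trials. The window of three trials and the 50% criterion are assumptions chosen for illustration; they are not drawn from the primate studies reviewed here, and researchers should report whichever criterion they actually apply.

```python
from typing import Sequence


def is_habituated(looking_times_s: Sequence[float],
                  window: int = 3,
                  criterion: float = 0.5) -> bool:
    """Return True once recent looking has declined to the chosen criterion.

    looking_times_s: looking time per habituation trial, in presentation order.
    window: number of trials averaged for the baseline and for the recent block
        (hypothetical default).
    criterion: fraction of baseline looking below which the subject counts as
        habituated (hypothetical default).
    """
    if window < 1 or not 0 < criterion < 1:
        raise ValueError("window must be >= 1 and criterion between 0 and 1")
    if len(looking_times_s) < 2 * window:
        return False  # baseline and recent blocks must not overlap
    baseline = sum(looking_times_s[:window]) / window
    recent = sum(looking_times_s[-window:]) / window
    return recent < criterion * baseline


print(is_habituated([10.0, 9.0, 8.0, 4.0, 3.0, 2.0]))  # recent mean 3.0 vs. baseline 9.0
print(is_habituated([10.0, 9.0, 8.0, 8.0, 7.0, 9.0]))  # looking has not declined
```

A criterion of this kind can be evaluated after every trial, so that the test events begin as soon as the subject meets it.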

Other paradigms investigate subjects’ attention to specific areas of interest. Often termed preferential-looking paradigms, these studies may measure allocation of attention between two equal-sized areas of interest (e.g., a male versus a female conspecific face, Lonsdorf et al., 2019) or viewing targets in a complex scene (e.g., features on a face, or actors in a social array, Kano and Call, 2015). Preferential-looking tasks may be designed to measure natural viewing patterns, what sorts of information, stimuli, or events elicit preferential attention, or associations with immediately preceding or concurrent visual or auditory stimuli.
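A preferential-looking measure of this kind can be sketched as the proportion of on-stimulus gaze samples that fall within one of two areas of interest (AOIs). The rectangular, non-overlapping AOIs, the pixel coordinates, and the sample format below are assumptions for illustration only.

```python
from typing import Optional, Sequence, Tuple

Rect = Tuple[float, float, float, float]   # (left, top, right, bottom) in pixels
Gaze = Optional[Tuple[float, float]]       # (x, y) in pixels, or None if gaze was lost


def samples_in_aoi(samples: Sequence[Gaze], aoi: Rect) -> int:
    """Count valid gaze samples that fall inside a rectangular AOI."""
    left, top, right, bottom = aoi
    return sum(1 for s in samples
               if s is not None and left <= s[0] <= right and top <= s[1] <= bottom)


def looking_time_ms(sample_count: int, sampling_hz: float) -> float:
    """Convert a sample count to looking time, given the tracker's sampling rate."""
    return sample_count * 1000 / sampling_hz


def preference_score(samples: Sequence[Gaze], aoi_a: Rect, aoi_b: Rect) -> Optional[float]:
    """Proportion of AOI-directed looking that went to AOI A (None if neither was viewed)."""
    a = samples_in_aoi(samples, aoi_a)
    b = samples_in_aoi(samples, aoi_b)
    if a + b == 0:
        return None
    return a / (a + b)


left_face: Rect = (100, 200, 500, 600)     # hypothetical equal-sized AOIs
right_face: Rect = (780, 200, 1180, 600)
gaze = [(300, 400), (310, 410), None, (900, 400), (320, 390), (640, 50)]
print(preference_score(gaze, left_face, right_face))
```

Lost samples and samples outside both AOIs are excluded from the denominator here, so the score reflects relative rather than absolute attention; whichever denominator is used should be stated when reporting results.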

Anticipatory-looking paradigms have been designed to provide nonverbal measures of primates’ predictions, under minimal task demands (Kano et al., 2017; Krupenye & Call, 2019). Primates often look to locations where they expect something to imminently happen, and thus under controlled settings, looking can reflect prediction. Many anticipatory-looking paradigms present videos in which an object or an agent is on an ambiguous trajectory toward two possible locations (e.g., a hand reaching toward one of two objects). Predictions are assessed by subjects’ first look, or biases in looking to one location over the other, before the object or agent arrives at either (e.g., before the hand actually grasps either object). Familiarization and test sequences can be used to manipulate features of the stimuli (e.g., where the actor went last time, or where the actor saw an object hidden) to investigate whether primates can anticipate outcomes based on various cognitive abilities, such as long-term memory (e.g., Kano & Hirata, 2015), or by tracking social information, like the goals or beliefs of an agent (e.g., Kano and Call, 2014b; Kano et al., 2019; Krupenye et al., 2016, 2017).
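The first-look measure described above can be sketched as a search for the first valid gaze sample that lands in either target location within the anticipation window, that is, after the trajectory becomes ambiguous and before the outcome is shown. The sample format, AOI names, and window times are hypothetical, and treating a single sample as a “look” is a simplification; in practice a minimum fixation duration would usually be required.

```python
from typing import Dict, Iterable, Optional, Tuple

Rect = Tuple[float, float, float, float]                  # (left, top, right, bottom)
Sample = Tuple[float, Optional[float], Optional[float]]   # (time_ms, x, y); x, y None if lost


def first_look(samples: Iterable[Sample],
               aois: Dict[str, Rect],
               window_start_ms: float,
               window_end_ms: float) -> Optional[str]:
    """Return the name of the first AOI looked at within [start, end), or None.

    Assumes samples are ordered by time and AOIs do not overlap.
    """
    for t, x, y in samples:
        if t < window_start_ms:
            continue
        if t >= window_end_ms:
            break  # the outcome has been shown; later looks are not anticipatory
        if x is None or y is None:
            continue
        for name, (left, top, right, bottom) in aois.items():
            if left <= x <= right and top <= y <= bottom:
                return name
    return None


aois = {"target": (0, 0, 100, 100), "distractor": (300, 0, 400, 100)}
trial = [(0, 350, 50), (100, None, None), (200, 200, 50), (300, 50, 50), (400, 350, 50)]
print(first_look(trial, aois, window_start_ms=100, window_end_ms=500))
```

The look to the distractor at 0 ms is ignored because it precedes the anticipation window, which illustrates why the window boundaries should be reported alongside the results.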

The research themes described above represent the focus of the majority of the 32 studies we identified in our review (Table 1). A common element of all of them is that little to no training was required, as the aim is to measure subjects’ spontaneous responses to stimuli. In contrast, in object discrimination or match-to-sample tasks, researchers aim to study primates’ ability to transfer rules across stimulus sets as a test of cognitive reasoning. In early approaches, subjects were required to point to a “correct” stimulus, either directly (e.g., Menzel, 1969; Tanaka, 2001) or indirectly via computer cursor (e.g., Rumbaugh, Kirk, Washburn, Savage-Rumbaugh, & Hopkins, 1989; Parr, Winslow, Hopkins, & de Waal, 2000), but primates can also be trained to look toward certain stimuli, and an eye tracker can be used to document their selections (e.g., Krauzlis & Dill, 2002), with some studies combining looking and reaching responses (e.g., Scherberger, Goodale, & Andersen, 2002). While this approach has been commonly used via invasive and/or restraint-based eye-tracking protocols, Wilson et al. (2010) documented and validated a noninvasive restraint-free protocol for administering object discrimination tasks with primates in which subjects made choices by fixating on stimuli visually. Related to this approach, two studies by Kaneko and colleagues used an eye tracker to validate their subjects’ attention to a fixation point between test trials of a discrimination task that the chimpanzees responded to manually via a trackball (Kaneko, Sakai, Miyabe-Nishiwaki, & Tomonaga, 2013; Kaneko & Tomonaga, 2014). Thus, these studies were not assessing the chimpanzees’ visual engagement with stimuli per se but, rather, were using the eye tracker to ensure consistency in engagement across trials.
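One way a choice made by fixation might be registered is with a dwell-time rule: a stimulus counts as selected once gaze has rested on it continuously for a set duration. The sketch below is a generic illustration of that idea and is not the criterion used by Wilson et al. (2010); the per-sample stimulus labels, the sample interval, and the dwell threshold are all assumptions.

```python
import math
from typing import Optional, Sequence


def gaze_choice(stimulus_under_gaze: Sequence[Optional[str]],
                sample_interval_ms: float,
                dwell_ms: float) -> Optional[str]:
    """Return the first stimulus fixated continuously for at least dwell_ms.

    stimulus_under_gaze: one label per gaze sample naming the stimulus being
        viewed, or None when gaze is elsewhere or lost (hypothetical format).
    """
    needed = math.ceil(dwell_ms / sample_interval_ms)  # consecutive samples required
    run_label: Optional[str] = None
    run_length = 0
    for label in stimulus_under_gaze:
        if label is not None and label == run_label:
            run_length += 1
        else:
            # Gaze moved to a different stimulus or away: restart the run
            run_label = label
            run_length = 1 if label is not None else 0
        if run_label is not None and run_length >= needed:
            return run_label
    return None


labels = ["A", "A", None, "B", "B", "B"]
print(gaze_choice(labels, sample_interval_ms=10, dwell_ms=30))
```

Under this rule the two samples on stimulus A do not count as a choice because the run is interrupted before the threshold is reached. A stricter or more lenient treatment of brief gaps (e.g., blinks) is a design decision that should be reported.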

Designing engaging stimuli

Stimuli generally consist of a combination of images, videos, and sound. Of the 32 studies we identified in our review, a third presented movie clips to subjects (e.g., Kano & Call, 2014a; Kano & Hirata, 2015) and two thirds presented photographs, clip art, or other static images (e.g., Kano & Tomonaga, 2009; Mühlenbeck et al., 2016), with static and moving stimuli sometimes used in combination (e.g., Howard et al., 2018, 2017; Kano, Moore, Krupenye, Hirata, & Tomonaga, 2018a). Other stimuli types reported included animated photographs (Kret, Tomonaga, & Matsuzawa, 2014), colors (Mühlenbeck et al., 2015), and real-world scenes (Wolf & Tomasello, 2019).

In voluntary viewing setups, choice of content can be critical for capturing and sustaining subject attention. By delivering stimuli that mirror problems a given species might face in the wild, researchers can elevate natural interest and engagement and potentially produce more meaningful and generalizable results. Indeed, as part of the experimental protocol, some studies first evaluated subjects’ general attention to the screen as well as their engagement with specific elements presented on the screen (e.g., Howard et al., 2017; Kano & Call, 2014b). Stimuli can be naturalistic in content, such as images of conspecifics (e.g., Kano & Call, 2015) and social conflicts (e.g., Kano & Hirata, 2015; Krupenye et al., 2016). For certain paradigms, like anticipatory looking, a high degree of interest is fundamental, since subjects must be motivated not only to track all relevant events but also to anticipate outcomes (Kano, Krupenye, Hirata, & Call, 2017; Krupenye & Call, 2019; Kano et al., 2019). Moreover, whereas videos are likely processed in a cognitively similar way to “real” interaction partners (Gothard et al., 2018), in some cases primates may not perceive or interact with photographs or videos in the same way as they do with “real” stimuli (Hopper et al., 2012). Thus, careful consideration should be given to the chosen stimulus and its relation to ecological validity.

Other ways to enhance subjects’ interest include incorporating perceptually salient, novel, or dynamic elements, all of which are likely to naturally capture most primates’ attention. Some species or individuals may be interested in stimuli that do not rely on salience, novelty, or dynamism, but for others these features may be crucial for success. Finally, researchers should carefully consider the duration of their stimuli, as primates may lose motivation for sustained viewing over time, especially with restraint-free protocols in which subjects are free to move away from the stimuli. However, attentional endurance is likely to depend on the nature of the stimuli themselves as well as the species and individuals being studied and their prior experience with such testing.

Reporting: Proposed standards

The data that are produced, and subsequently analyzed, from eye-tracking experiments are shaped to some extent by a variety of research practices and design decisions that should be comprehensively reported within a manuscript (Wass, Forssman, & Leppänen, 2014). As we have noted above, key methodological approaches can influence the quality of data that are collected, and this will inform protocol development, but these elements must also be reported when publishing the results from eye-tracking experiments so that readers can fully interpret results, and comparisons can be drawn across studies. While reviewing previously published studies with primates (Table 1), we found much variation in what methodological details were reported. Therefore, here, we provide some key methodological elements that we encourage all researchers to report with their findings.

Calibration

Generally, calibration involves the presentation of small icons, one at a time, in various locations on the monitor. The subject must attend to the icon for a predetermined and automatically presented duration (e.g., 250 ms) or until the subject’s gaze has been detected by the software, at which point the researcher presents the next calibration stimulus. After successful calibration to multiple locations on the screen, the system can generalize across the full range of potential eye inputs to calculate each eye’s point of gaze on the screen. To verify successful calibration, many studies report checking the accuracy of gaze estimates using a function provided by the eye-tracking software (e.g., the estimated gaze distributions around the calibration points in Tobii Studio). Many studies use the same calibration information for a subject across test sessions, and therefore manually check the accuracy of existing calibrations at the start of each new test session with that subject. However, in reviewing the 32 published studies that have used eye trackers with primates via a noninvasive restraint-free approach, we identified a great deal of variance across studies (namely across labs) in how calibration was conducted and reported (Table 1 provides details).

As ocular and facial morphology differ across subjects (e.g., across age classes or between males and females, especially for sexually dimorphic species) and species, we recommend as best practice individually calibrating each subject before testing. However, we recognize that such an approach is not always feasible, and a single subject’s calibration “template” can be applied across subjects in combination with data checks and validation methods. Indeed, while the majority of the studies we identified reported using a conspecific calibration, a few used a human to calibrate the device before using it to test primates (e.g., with apes: Howard et al., 2017; with monkeys: Howard et al., 2018), and one study relied on default calibration options (Chertoff et al., 2018). Regardless of approach, there are several key features of the calibration process that should be reported to allow readers to evaluate the reliability of the calibration process.

First, eye-tracking software often allows researchers to decide how many calibration points to use (e.g., Tobii Pro Lab allows for 2, 5, or 9 calibration points via the inbuilt calibration software). Because it can be difficult to elicit sufficient sustained looks to a large number of calibration points, many studies with primates have used the minimum two points for calibration prior to testing (indeed, 23 of the 32 studies we identified reported using a two-point calibration with primates, Table 1). Provided that the calibration data are accurate and precise (i.e., the calibration output shows that the data are centered closely around the calibration points of interest), two-point calibration is sufficient to produce accurate gaze data (at least in a Tobii system). Where possible, and particularly for studies investigating attention to very small areas of interest, however, researchers may opt to use a greater number of calibration points (e.g., Kaneko & Tomonaga, 2014; Ryan et al., 2019). Researchers can also test for drift (the calibration error due to changes occurring in the eye surface) during testing, and how such verification tests are performed should be reported (see, e.g., Kano and Tomonaga, 2011a). Furthermore, those testing infant or juvenile primates over the course of development should aim to repeatedly calibrate their subjects to account for changes in morphology with growth (e.g., Ryan et al., 2019, reported that inter-pupillary distance was 4 mm greater in adult titi monkeys than in infants).

Second, for the purpose of both reproducibility and sharing solutions to subject inattention to calibration stimuli, we recommend that researchers report the specific details as to how they conducted calibration (see Lonsdorf, Engelbert, & Howard, 2019, for examples of calibration screenshots, heat maps, and average fixation distance from the calibration point used with capuchins). For example, Kano and Tomonaga (2009) provide detailed information about how chimpanzee subjects were trained and calibrated; Wilson, Buckley, and Gaffan (2010) describe the rationale of their calibration approach and show screenshots of the stimuli and presentation; and Kano and Tomonaga (2009, 2010, 2011a, b) and Hirata et al. (2010) further report fixation error values (i.e., the average distance between the intended and the recorded fixations). Beyond the protocol used for calibration, researchers should also report what stimuli (shape, size, color) are used for calibration (Lonsdorf et al., 2019). However, in many of the studies we reviewed, such details were not provided. Reporting such information is key given that some researchers replace default calibration icons with conspecific images or videos that better attract the attention of subjects (e.g., Ryan et al., 2019), while others present real-life objects, such as food, in front of calibration icons to elevate subject attention (e.g., Kano et al., 2019; Krupenye et al., 2016 – such real-world stimuli must also be used when calibrating for non-screen-based eye tracking, i.e., when subjects view real-world events, Wolf & Tomasello, 2019).
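
To make the fixation error value concrete, the following is a minimal sketch of how the average distance between an intended calibration target and the recorded gaze samples could be computed. It assumes gaze samples and targets are available as pixel coordinates; the function name and the example data are hypothetical and do not correspond to any manufacturer's software or to the procedures of the studies cited above.

```python
import math

def mean_fixation_error(target, samples):
    """Average Euclidean distance (in pixels) between a calibration
    target and the gaze samples recorded while the subject viewed it."""
    if not samples:
        raise ValueError("no gaze samples recorded for this target")
    tx, ty = target
    return sum(math.hypot(x - tx, y - ty) for x, y in samples) / len(samples)

# Hypothetical data: one calibration target and three recorded gaze samples.
target = (640, 360)
samples = [(643, 364), (640, 365), (634, 352)]
error_px = mean_fixation_error(target, samples)
print(f"mean fixation error: {error_px:.2f} px")
```

A value in pixels is specific to one screen and viewing distance, so a report would normally also convert it to degrees of visual angle.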

Third, researchers should report any procedures for checking calibration quality, manually or otherwise, and for determining when to recalibrate (see, e.g., Wilson et al., 2010). Provided that the features of the setup remain the same (calibrations are produced for a specific screen size, position relative to the eye tracker, etc.) and the lighting conditions are consistent, some systems allow calibrations to be reused over multiple sessions for each subject. However, researchers should at least manually check that an existing calibration remains accurate before using it in a subsequent session. One way is to present a screen with small icons in a grid-like fashion; gaze can be attracted to icons on the screen and assessed manually by the researchers for accuracy, and recalibration can be pursued whenever necessary (e.g., Kano et al., 2011; Wilson et al., 2010). Despite the importance of these details, such protocol elements and environmental factors were rarely described in the articles that we reviewed.

Stimuli, areas of interest, and visual angle

Most gaze-based eye-tracking analyses document attention to specific regions of the screen where stimuli or events of interest are presented (indeed, all but two of the articles identified in our review utilized this approach to evaluate subjects’ attention to and interest in the stimuli). These regions are generally referred to as areas of interest (AOIs) or, sometimes, regions of interest (ROIs). For both the interpretation of findings and reproducibility of methods, in addition to reporting the dimensions of the screen (in centimeters), researchers should report the overall (width × height) dimensions of the screen in pixels as well as the precise coordinates and dimensions of all AOIs. Ideally, figures should be included that visually display AOIs relative to the broader stimuli as well (e.g., Howard et al., 2017). For confirmatory analyses, AOIs should be predefined before the data are examined. From the articles that we reviewed, we noted a number of common approaches in how researchers applied AOIs for use with primates. AOIs were typically used to determine subjects’ relative attention to elements within a scene (e.g., Hattori, Kano, & Tomonaga, 2010; Kano & Hirata, 2015), features on a face (e.g., Kano, Call, & Tomonaga, 2012; Kano & Tomonaga, 2010), or simply to one of two stimuli presented on the screen (e.g., Howard et al., 2017; Lonsdorf et al., 2019). Furthermore, depending on the question, researchers sometimes nested AOIs, for example to explore a subject’s relative attention to a face within a scene, and then to specific elements of that face (e.g., Chertoff et al., 2018; Kano, Shepherd, Hirata, Tomonaga, & Call, 2018b).
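
As an illustration of why exact AOI coordinates matter, the sketch below sums fixation durations within rectangular AOIs, including a nested pair (a face and the eye region within it). It assumes fixations are already available as (x, y, duration) tuples in screen pixels with the origin at the top-left corner; the class, function, AOI coordinates, and fixation values are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AOI:
    name: str
    x: int       # left edge, in pixels
    y: int       # top edge, in pixels
    width: int   # in pixels
    height: int  # in pixels

    def contains(self, gx, gy):
        return (self.x <= gx < self.x + self.width
                and self.y <= gy < self.y + self.height)

def dwell_times(fixations, aois):
    """Sum fixation durations (ms) per AOI.

    A fixation counts toward every AOI that contains it, so a fixation
    on a nested AOI also counts toward the enclosing AOI.
    """
    totals = {aoi.name: 0 for aoi in aois}
    for x, y, duration_ms in fixations:
        for aoi in aois:
            if aoi.contains(x, y):
                totals[aoi.name] += duration_ms
    return totals

# Hypothetical AOIs: an "eyes" region nested inside a "face" region.
aois = [AOI("face", 400, 200, 300, 400), AOI("eyes", 450, 300, 200, 80)]
# Hypothetical fixations: (x, y, duration in ms).
fixations = [(500, 330, 250), (420, 550, 180), (100, 100, 300)]
totals = dwell_times(fixations, aois)
print(totals)
```

Because the AOIs are defined in code before any gaze data are loaded, the same definitions can be reported verbatim in a methods section or supplementary file.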

As described above, stimulus viewing is impacted by the physical size of the screen and the distance between the screen and the subject. This information can be captured by reporting the visual angle, which is the angle subtended at the eye by the boundaries of the screen; that is, the number of degrees of the visual field that the screen occupies at a given viewing distance. A useful related measure is how much of the screen (in centimeters) is contained within one degree of visual angle. Error should also be reported in degrees of visual angle. For experiments that allow subjects to move freely during testing, the visual angle will change continually because the relative position of the subject and the screen changes throughout testing; for such studies we recommend that the ideal visual angle is reported, as well as the (estimated) range of visual angle measurements, for each subject (e.g., Lonsdorf et al., 2019).
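
The relationship between screen size, viewing distance, and visual angle follows from basic trigonometry: an extent of size s viewed centrally from distance d subtends an angle of 2·atan(s / 2d). The sketch below applies this formula and reports a range of visual angles for a freely moving subject. The screen width and the viewing distances are hypothetical values chosen for illustration, and the function names are our own.

```python
import math

def visual_angle_deg(size_cm, distance_cm):
    """Angle (degrees) subtended by an extent of size_cm viewed
    centrally from distance_cm."""
    return math.degrees(2 * math.atan(size_cm / (2 * distance_cm)))

def cm_per_degree(distance_cm):
    """Screen extent (cm) covered by one degree of visual angle
    at the center of gaze."""
    return 2 * distance_cm * math.tan(math.radians(0.5))

# Hypothetical setup: a 50-cm-wide screen with an ideal viewing
# distance of 60 cm and an estimated range of 50 to 80 cm.
screen_width_cm = 50
ideal = visual_angle_deg(screen_width_cm, 60)
nearest = visual_angle_deg(screen_width_cm, 50)
farthest = visual_angle_deg(screen_width_cm, 80)

print(f"ideal: {ideal:.1f} deg, range: {farthest:.1f} to {nearest:.1f} deg")
print(f"1 deg at 60 cm covers {cm_per_degree(60):.2f} cm of screen")
```

The same functions can be used to convert a fixation error measured in centimeters (or pixels, given the screen's pixel density) into degrees.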

Data filters

Often, data are filtered or processed in some way in order to generate output measures. These procedures should be fully reported (for reporting examples see Mühlenbeck, Liebal, Pritsch, & Jacobsen, 2016; Kano & Tomonaga, 2009). With regard to the detection of saccades and fixations, there are broadly two methods: detecting saccades based on the velocity (or acceleration) peaks of eye movement, or detecting fixations based on a predefined distance between the recorded gaze samples (Duchowski, 2007). In general, the data from eye trackers with a low sampling rate (e.g., 60 Hz) are better processed with the latter method, because saccades can easily be confounded with noise when samples are sparse. Many researchers use the default saccade/fixation filters in the software provided by the manufacturer of the eye tracker (e.g., Tobii fixation filter, as used by Kano & Call, 2014b). These default saccade/fixation filters often apply their own series of data-processing steps to reduce noise (e.g., gap fill-in, moving average) and detect saccades/fixations (based on the velocity, distance, or both) (see Tobii, n.d.). Researchers should select an optimal filtering method and its parameters based on the quality of raw eye-tracking data and report which filtering methods and parameters (if changed from the default) they use. With regard to the processing of fixation data, some researchers may only be interested in summing the number or durations of fixations (i.e., continuous looking at a particular localized area) within a particular AOI during a given window of time. Indeed, the majority of studies we reviewed (Table 1) reported metrics associated with the duration or proportion of time subjects attended to certain stimuli or elements within stimuli, while a smaller subset reported more detailed elements including number of fixations (Howard et al., 2018), fixation rate (e.g., Pritsch, Telkmeyer, Mühlenbeck, & Liebal, 2017), fixation order (e.g., Kano, Call, & Tomonaga, 2012), first look (e.g., Kano et al., 2018a), and saccade latency (e.g., Kano, Hirata, Call, & Tomonaga, 2011).
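
To illustrate the distance-based approach, the sketch below implements a simple dispersion-threshold fixation detector: a run of consecutive samples is treated as a fixation if it lasts at least a minimum number of samples and its spatial spread stays within a threshold. This is a generic illustration, not the Tobii fixation filter or any other manufacturer's algorithm; the threshold, minimum duration, and gaze data are hypothetical, and the sketch omits the noise reduction and gap filling that real filters apply.

```python
def dispersion(window):
    """Spread of a set of gaze samples: (max x - min x) + (max y - min y)."""
    xs = [p[0] for p in window]
    ys = [p[1] for p in window]
    return (max(xs) - min(xs)) + (max(ys) - min(ys))

def detect_fixations(samples, max_dispersion_px, min_samples):
    """Return fixations as (start_index, end_index_exclusive, cx, cy).

    samples: list of (x, y) gaze positions at a fixed sampling rate.
    """
    fixations = []
    i, n = 0, len(samples)
    while i + min_samples <= n:
        j = i + min_samples
        if dispersion(samples[i:j]) <= max_dispersion_px:
            # Grow the window while the samples stay within the threshold.
            while j < n and dispersion(samples[i:j + 1]) <= max_dispersion_px:
                j += 1
            window = samples[i:j]
            cx = sum(p[0] for p in window) / len(window)
            cy = sum(p[1] for p in window) / len(window)
            fixations.append((i, j, cx, cy))
            i = j
        else:
            i += 1
    return fixations

# Hypothetical 60-Hz recording: two clusters separated by a saccade.
samples = [
    (100, 100), (101, 99), (99, 101), (100, 102), (102, 100), (101, 101),
    (200, 170), (300, 240),
    (400, 300), (401, 299), (399, 301), (400, 302), (402, 300), (401, 301),
    (400, 300),
]
# Six samples at 60 Hz correspond to a minimum fixation duration of 100 ms.
fixations = detect_fixations(samples, max_dispersion_px=10, min_samples=6)
for start, end, cx, cy in fixations:
    print(f"samples {start}-{end - 1}: centroid ({cx:.1f}, {cy:.1f}), "
          f"{(end - start) * 1000 / 60:.0f} ms")
```

Both parameters change which samples count as fixations, which is why the filter and its settings should be reported alongside the results.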

Exclusion and retesting criteria

In some instances it is necessary to exclude individual trials or entire subjects from analyses. This may be necessary for a number of reasons, such as experimenter error (e.g., the wrong trials were run), a subject failing to complete an entire series of trials, or a subject failing to view critical segments of a video (as described above). Exclusion criteria should be predefined before data collection and comprehensively reported. The number of trials and/or subjects that are excluded should also be reported.

Animal cognition researchers often face limitations in the number of available subjects. Consequently, it may be necessary to retest subjects on trials they missed or that were excluded. For example, Mühlenbeck, Liebal, and Jacobsen (2015) reported: “because of the orangutans’ shorter attention span, the recordings had missing data when the orangutans moved away from the eye tracker or turned their heads. We filled the data gaps by repeated measurements of the same entire trial” (p. 7-17). In such cases, it is important to clearly define and report criteria for determining whether to retest subjects. Kano and Tomonaga (2011b), for example, operationalized their protocol for repeating testing with chimpanzees as follows: “we repeated trials in which the gaze data had been lost for longer than 600 ms due to participants looking away from the monitor or blinking more than twice. We then replaced these trials with the new trials if those were completed satisfactorily; if not, we excluded these trials from the analysis” (p. 882). When test sessions are repeated, it is also critical to determine and report the delay between test sessions for each subject. Specifically, researchers should ask themselves: Will subjects be retested immediately after a failed trial or at the end of a session or full testing schedule? Is there a limit on the number of times a trial can be repeated before it will be fully excluded? Depending on the nature of the study, it may be of interest to report the number and/or proportion of trials that result from retesting. In our review of the literature, while many studies with primates reported measures taken to increase completion rates, many did not provide detailed information about how such repeat testing was administered – important both for replication and for others planning their own methodological protocols.
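
A predefined retest criterion of this kind can be written down as a short, unambiguous rule. The sketch below flags a trial for repetition when gaze data are lost for longer than a threshold, using 600 ms as in the example quoted above. It is only one possible reading of such a criterion: it assumes that the threshold applies to the longest continuous run of invalid samples (rather than to total data loss), that each sample carries a validity flag, and that the sampling rate is fixed. The function names and example trials are hypothetical.

```python
def longest_gap_ms(valid_flags, sampling_rate_hz):
    """Duration (ms) of the longest continuous run of invalid samples."""
    longest = current = 0
    for valid in valid_flags:
        current = 0 if valid else current + 1
        longest = max(longest, current)
    return longest * 1000.0 / sampling_rate_hz

def needs_retest(valid_flags, sampling_rate_hz, max_gap_ms=600.0):
    """True if gaze data were lost for longer than max_gap_ms."""
    return longest_gap_ms(valid_flags, sampling_rate_hz) > max_gap_ms

# Hypothetical 60-Hz trials: 36 lost samples (600 ms) vs. 37 (about 617 ms).
trial_a = [True] * 60 + [False] * 36 + [True] * 60
trial_b = [True] * 60 + [False] * 37 + [True] * 60

print(needs_retest(trial_a, 60))  # gap equals the threshold, not longer
print(needs_retest(trial_b, 60))  # gap exceeds the threshold
```

Stating the rule in this form before data collection also makes it straightforward to report how many trials were repeated or excluded under it.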

Future directions

Just as the available technology for tracking eye gaze and movement has advanced tremendously in the preceding years, we foresee a number of methodological refinements that will broaden the scope of eye-tracking research with primates. Such advancements will improve the range and detail of data recorded, increase the flexibility of hardware and software, open up new avenues of research, and facilitate research with previously untested species or populations.

Hardware

As the community of researchers interested in eye tracking grows, important advances will improve the accuracy of eye-tracking technology. For fully noninvasive, restraint-free eye-tracking systems used in primate studies, one difficulty is ensuring that subjects’ head and eye position can each be reliably estimated between calibration and testing procedures. Refinements to both testing protocol and hardware help address this. For example, one way to achieve this is to have subjects drink juice from a fixed dispenser in front of the screen (Fig. 3), an approach pioneered by Kano et al. (2011) (see also Kawaguchi et al., 2019), or to have subjects view stimuli through a window that encourages them to focus their attention and limit body movement (e.g., Ryan et al., 2019). Some noninvasive eye-tracking systems, such as the aforementioned Tobii systems, are capable of model-based estimates of gaze position that rely on estimating the subject’s head position and eye position relative to the camera (see also Li, Winfield, & Parkhurst, 2005; Stiefelhagen, Yang, & Waibel, 1997), although long-term reliability and support for these systems have been elusive. Such approaches also allow for the estimation of eye gaze from 2D images and potentially without the need for dedicated eye trackers (e.g., Wood & Bulling, 2014; Yang & Zhang, 2001).

In addition to improvements in accuracy, we also predict that eye-tracking systems will become less expensive. For example, we note the affordable EyeTribe eye-tracking model, described by Dalmaijer (2014), which unfortunately was recently discontinued (Dalmaijer, 2016). A proliferation of low-cost and open-source hardware (including miniaturized infrared cameras, low-energy CPUs, and data streaming devices) may facilitate the development of other affordable eye-tracking options in the future.

We also anticipate advances in wearable eye trackers. For example, Shepherd and Platt (2006) trained ring-tailed lemurs (Lemur catta) to wear infrared video-based eye trackers. Although wearable trackers are not a restraint-free approach, as they require interaction with the subject to apply the eye-tracking device, they may confer benefits because, once habituated, animals can move freely in their enclosure or habitat while data are gathered. Similar eye-tracking systems are now commercially available (Niehorster et al., 2020), though these have not been tested with primates to date. Recently, a novel head-mounted magnetic eye-tracking device was developed for use with rodents that facilitates geometric computation of eye-in-head angle rather than computations based on a single pupil size estimate and corneal reflection (see Figure 3 of Payne & Raymond, 2017). However, to our knowledge, no commercially available eye tracker currently uses this principle, and such an approach still requires surgery to mount the plastic head-post that secures the device. Lastly, and building upon principles first published by Dodge and Cline (1901), further advances are being made using technology that does not rely on cameras at all, but which uses micro-scanners (e.g., AdHawk Microsystems). Micro-scanners are smaller and lighter and provide higher-frequency eye position information than any available video oculography system, but those advantages come with a loss of pupillometry data. To date, however, these micro-scanners have not been used with primates.

Software

Software improvements may lead to major advances in how noninvasive, remote, and head-mounted systems are used to collect and analyze eye-tracking data.