Abstract

The Nencki Affective Picture System (NAPS; Marchewka, Żurawski, Jednoróg, & Grabowska, Behavior Research Methods, 2014) is a standardized set of 1,356 realistic, high-quality photographs divided into five categories (people, faces, animals, objects, and landscapes). NAPS has been primarily standardized along the affective dimensions of valence, arousal, and approach–avoidance, yet the characteristics of discrete emotions expressed by the images have not been investigated thus far. The aim of the present study was to collect normative ratings according to categorical models of emotions. A subset of 510 images from the original NAPS set was selected in order to proportionally cover the whole dimensional affective space. Among these, using three available classification methods, we identified images eliciting distinguishable discrete emotions. We introduce the basic-emotion normative ratings for the Nencki Affective Picture System (NAPS BE), which will allow researchers to control and manipulate stimulus properties specifically for their experimental questions of interest. The NAPS BE system is freely accessible to the scientific community for noncommercial use as supplementary materials to this article.

Given that there is no single gold-standard method for the measurement of emotion, researchers are often faced with a need to select appropriate and controlled stimuli for inducing specific emotional states (Gerrards-Hesse, Spies, & Hesse, 1994; Mauss & Robinson, 2009; Scherer, 2005). The Nencki Affective Picture System (NAPS; Marchewka et al., 2014) is a set of 1,356 photographs divided into five content categories (people, faces, animals, objects, and landscapes). All of the photographs have been standardized on the basis of dimensional theories of emotions, according to which several fundamental dimensions can characterize each affective experience. In the case of the NAPS, these dimensions are valence (ranging from highly negative to highly positive), arousal (ranging from relaxed/unaroused to excited/aroused), and approach–avoidance (ranging from a tendency to avoid to a tendency to approach a stimulus) (Osgood, Suci, & Tannenbaum, 1957; Russell, 2003). Although the identity and number of dimensions have been debated (Fontaine, Scherer, Roesch, & Ellsworth, 2007; Stanley & Meyer, 2009), this approach has been successfully used in many studies and has provided much insight into affective experience (Bayer, Sommer, & Schacht, 2010; Briesemeister, Kuchinke, & Jacobs, 2014; Colibazzi et al., 2010; Kassam, Markey, Cherkassky, Loewenstein, & Just, 2013; Viinikainen et al., 2010).

Alternatively, human emotions can be conceptualized as discrete emotional states (Darwin, 1872; Ekman, 1992; Panksepp, 1992), each with unique experiential, physiological, and behavioral correlates. As was stated by Ekman (1992) in his theory of basic emotions, “a number of separate emotions . . . differ one from another in important ways” (p. 170). In line with this theoretical framework, researchers argue that one- or two-dimensional representations fail to capture important aspects of the emotional experience and do not reflect critical differences between certain emotions (Remmington, Fabrigar, & Visser, 2000). Instead, at least five different discrete emotion categories are proposed to reflect facial or vocal expression, namely happiness, sadness, anger, fear, and disgust. By using the term “basic emotions,” Ekman (1992) wanted to indicate that “evolution played an important role in shaping both the unique and the common features which these emotions display as well as their current function” (p. 170). Basic emotions are supposed to originate from biological markers, regardless of any cultural differences (Ekman, 1993). This categorical model of emotions has also provided numerous empirical insights (Briesemeister, Kuchinke, & Jacobs, 2011a; Mikels et al., 2005; Silva, Montant, Ponz, & Ziegler, 2012; Stevenson, Mikels, & James, 2007; Tettamanti et al., 2012; Vytal & Hamann, 2010).

A longstanding dispute concerning whether emotions are better conceptualized in terms of discrete categories or underlying dimensions has gained new insight from different methods in the domain of neuroimaging (see Briesemeister, Kuchinke, Jacobs, & Braun, 2015; Fusar-Poli et al., 2009; Kassam et al., 2013; Lindquist, Wager, Kober, Bliss-Moreau, & Barrett, 2012). Although some studies have identified consistent neural correlates that are associated with basic emotions and affective dimensions, they have ruled out simple one-to-one mappings between emotions and brain regions. This points to the need for more complex, network-based representations of emotions (Hamann, 2012; Saarimaki et al., 2015). Given that discrete emotion and dimensional theories overlap greatly in their explanatory value (Reisenzein, 1994), further experimental investigations using combined approaches are needed (Briesemeister, Kuchinke, & Jacobs, 2014; Briesemeister et al., 2015; Eerola & Vuoskoski, 2011; Hinojosa et al., 2015). Therefore, providing appropriate pictorial stimuli that combine both perspectives would be highly useful.

To meet this need, many of the existing datasets of standardized stimuli in various modalities that were originally assessed in line with the dimensional approach have now received complementary ratings on the expressed emotion categories (Briesemeister, Kuchinke, & Jacobs, 2011b; Mikels et al., 2005; Stevenson et al., 2007; Stevenson & James, 2008). Due to this contribution, it has become possible to investigate various topics in affective neuroscience, such as temporal and spatial neural dynamics in the perception of basic emotions from complex scenes (Costa et al., 2014), or the neural correlates of different attentional strategies during affective picture processing (Schienle, Wabnegger, Schoengassner, & Scharmüller, 2014). With such stimuli, different theories of emotion processing and their applicability to affective processing studies have also been examined (Briesemeister et al., 2015). Moreover, basic emotion ratings have enabled researchers to select pictures in order to study the neural correlates of affective experience and therapeutic effects in different clinical populations (Delaveau et al., 2011). In this way, a combination of the dimensional approach, useful to describe a number of broad features of emotion, and the categorical approach, focused on capturing discrete emotional responses, is supplying researchers with a more complete view of affect.

The aim of the present study was to provide researchers with reliable discrete emotion norms for a subset of images selected from the Nencki Affective Picture System, characterizing each image in terms of both the intensities of basic emotions (happiness, anger, fear, disgust, sadness, and surprise) and the affective dimensions of valence and arousal. Additionally, the obtained ratings were analyzed to examine the relationship between affective dimensions and basic emotions, as well as the relations between the affective variables and the content categories. This subset is hereafter referred to as NAPS BE.

Method

Materials

A subset of 510 images was selected from the NAPS database in order to proportionally cover the dimensional affective space across the content categories of animals, faces, landscapes, objects, and people. The selection was driven by reports showing that in the International Affective Picture System (IAPS; Bradley & Lang, 2007), the distribution of stimuli across the valence and arousal dimensions is related to human versus inanimate picture content (Colden, Bruder, & Manstead, 2008). Specifically, pictures depicting humans were over-represented in the high arousal–positive and high arousal–negative areas of affective space, as compared to inanimate objects, which were especially frequent in the low arousal–neutral valence area. In order to avoid a similar pattern in our dataset, and to provide a variety of stimulus content for the basic emotion classification, we chose and counterbalanced pictures from each content category covering the whole affective space. We also aimed at limiting the number of neutral stimuli in each subset. In this way, we obtained the following numbers of images per category: 98 animals, 161 faces, 49 landscapes, 102 objects, and 100 people. The landscape category was the least numerous, since these pictures were predominantly not arousing and of neutral valence. The distribution of the NAPS BE images in the affective space of valence and arousal, by content category, is depicted in Fig. S1 (supplementary materials).

Participants

A total of 124 healthy volunteers (67 females, 57 males; mean age = 22.95 years, SD = 3.76, range = 19 to 37) without history of any neurological illness or treatment with psychoactive drugs took part in the study. The participants were mainly Erasmus (European student exchange programme) students from various European countries recruited at the University of Warsaw and the University of Zagreb. All of them were proficient speakers of English, and the procedure was conducted in English in order to obtain more universal norms. All of the participants obtained a financial reward of 30 PLN (approximately EUR 7).

Procedure

Participants were first asked to fill in the informed consent form and to read instructions displayed on the computer screen (see the Appendix). They then familiarized themselves with the task in a short training session with exemplary stimuli. All of the participants were informed that if they felt any discomfort due to the content of the pictures, they should report it immediately so that the experimental session could be stopped. English was the language of the instructions, rating scales, and communication with the participants. During the experiment, participants individually rated images through a platform available on a local server, at an average distance of 60 cm from the computer screen.

Each participant was exposed to a series of 170 images chosen pseudorandomly from all of the categories and presented consecutively under the following constraints: No more than two pictures from each affective valence category (positive, neutral, and negative) and no more than three pictures from each content category appeared consecutively. In order to avoid serial position (primacy and recency) effects, each subset of 170 pictures was divided into three parts; these parts were positioned in three possible ways and were counterbalanced across the participants.
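The ordering constraints described above can be illustrated with a short sketch. This is not the software used in the study; it is a minimal greedy procedure with restarts, and the function names, the tuple layout `(picture_id, valence_class, content_category)`, and the restart limit are all assumptions made for illustration.

```python
import random


def max_run(labels):
    """Return the length of the longest run of identical consecutive labels."""
    longest = current = 0
    previous = object()  # sentinel that equals no label
    for label in labels:
        current = current + 1 if label == previous else 1
        previous = label
        longest = max(longest, current)
    return longest


def _fits(order, item, max_valence_run, max_content_run):
    """Check whether appending `item` keeps both run-length limits satisfied."""
    tail_valence = [it[1] for it in order[-max_valence_run:]]
    tail_content = [it[2] for it in order[-max_content_run:]]
    valence_ok = (len(tail_valence) < max_valence_run
                  or any(v != item[1] for v in tail_valence))
    content_ok = (len(tail_content) < max_content_run
                  or any(c != item[2] for c in tail_content))
    return valence_ok and content_ok


def constrained_order(items, rng, max_valence_run=2, max_content_run=3,
                      max_restarts=1000):
    """Order items so that no valence class repeats more than
    `max_valence_run` times in a row and no content category more than
    `max_content_run` times in a row.

    items: list of (picture_id, valence_class, content_category) tuples.
    Hypothetical helper: greedy random construction, restarted on dead ends.
    """
    for _ in range(max_restarts):
        remaining = list(items)
        order = []
        while remaining:
            allowed = [it for it in remaining
                       if _fits(order, it, max_valence_run, max_content_run)]
            if not allowed:
                break  # dead end, start over
            choice = rng.choice(allowed)
            remaining.remove(choice)
            order.append(choice)
        if not remaining:
            return order
    raise RuntimeError("no valid ordering found within the restart limit")
```

Calling `constrained_order(items, random.Random(seed))` with a fixed seed gives a reproducible sequence; `max_run` can be applied to the valence and content labels of the result to confirm that both limits hold.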

Single images were presented in a full-screen view for 2 s. Each presentation was followed by an exposure of the rating scales (for the assessment of the basic emotions and affective dimensions) on a new screen with a smaller picture presented in the upper part of the screen. The task of the participants was to evaluate each picture on the eight scales described below. Completing the task with no time constraints took approximately 45–60 min. The local Research Ethics Committee in Warsaw approved the experimental protocol of the study.

Rating scales

Analogously to some previously used procedures (Briesemeister et al., 2011b; Mikels et al., 2005; Stevenson et al., 2007), participants were asked to use six independent 7-point Likert scales to indicate the intensity of the feelings of happiness, anger, fear, sadness, disgust, and surprise (with 1 indicating not at all and 7 indicating very much) elicited by each presented image, as is presented in Fig. 1. This procedure allowed the participants to indicate multiple labels for a given image. Although surprise has been considered by some researchers to be a neutral cognitive state (Ortony & Turner, 1990) rather than an emotion, and therefore does not appear in certain classifications of the basic emotions (Ekman, 1999; Izard, 2009), it was also included in the ratings.

Additionally, the pictures were rated on two affective dimensions using the Self-Assessment Manikin (Lang, 1980), as is also presented in Fig. 1. The scale of emotional valence was used to estimate the extent of the positive or negative reaction evoked by a given picture, ranging from 1 to 9 (1 for very negative emotions and 9 for very positive emotions). On the scale of arousal, participants estimated to what extent a particular picture made them feel unaroused or aroused, ranging from 1 to 9 (1 for unaroused/relaxed and 9 for very much aroused—e.g., jittery or excited). Although the ratings of these two affective dimensions were originally included in NAPS, they were previously obtained with the use of continuous bipolar semantic sliding scales (SLIDER) by moving a bar over a horizontal scale. The original NAPS ratings showed a more linear association between the valence and arousal dimensions, as compared to the “boomerang-shaped” relationship found, for instance, in our sample and in the IAPS database (Lang, Bradley, & Cuthbert, 2008).

Data analysis

The data analysis is arranged into three sections. First, we investigated whether the obtained ratings were consistent across the individuals taking part in the experiment and what the upper limit of the correlations was. To this end, we assessed the consistency of the collected ratings by applying split-half reliability estimation. Second, we described the distributions of the norms in order to provide researchers with useful characteristics of the dataset. Although the ratings of each basic emotion were given for each picture (provided in the supplementary materials, Table S2), we were also interested in identifying pictures that expressed one specific basic emotion much more than the others. Thus, we used several methods for classifying pictures to particular basic emotions, which we consider to be useful for more precise experimental manipulations of the NAPS BE stimuli. The last section is devoted to further analyses of the patterns observed in the obtained ratings and addresses the potential doubts of researchers. In order to give a rationale for combining the theoretical frameworks of affective dimensions and basic emotions instead of choosing only one, we investigated the relationship between these approaches. Our research question was whether the information collected by emotional categories represented the same emotional information described by the dimensional ratings. To answer this question, regression analyses were performed, using the categorical data for each picture to predict the dimensional data, and vice versa. Finally, we aimed at showing researchers that other stimulus parameters are important for their experiments. Our research question was whether there were any differences in the mean basic emotion intensities across the content categories of the pictures. We investigated this relation with multivariate analysis of variance (MANOVA), considering Content Category (animals, faces, landscapes, objects, and people) and classes of the pictures’ Valence (negative, neutral, and positive) as between-object factors, and all of the affective ratings as dependent variables. Answering these research questions should encourage future users of NAPS BE to use all of the provided norms and variables in their experiments.

Results

Reliability

Since the applicability of the collected affective norms in experimental studies is highly dependent on their reliability, we addressed this issue by applying split-half reliability estimation, following descriptions provided in the literature (Monnier & Syssau, 2014; Montefinese, Ambrosini, Fairfield, & Mammarella, 2014; Moors et al., 2013). The whole sample was split into halves in order to form two groups with the odd and even experiment entrance ranks. Within each group, the mean ratings of each basic emotion were calculated for each picture. Pairwise Pearson’s correlation coefficients of these means between the two groups were then calculated and adjusted using the Spearman–Brown formula. All correlations were significant (p < .01). The obtained reliability coefficients were high and comparable to the values obtained in other datasets of standardized stimuli (Bradley & Lang, 2007; Imbir, 2014; Monnier & Syssau, 2014; Moors et al., 2013), namely, r = .97 for happiness, r = .98 for sadness, r = .93 for fear, r = .94 for surprise, r = .95 for anger, r = .97 for disgust, r = .93 for arousal, and r = .98 for valence.
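The split-half procedure can be sketched as follows for a single rating scale. The input layout (a list of `(entrance_rank, picture_id, rating)` tuples) and the function names are assumptions made for illustration. The Spearman–Brown adjustment is the standard formula for doubling test length, r_SB = 2r / (1 + r).

```python
from math import sqrt
from statistics import mean


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    covariance = sum((a - mx) * (b - my) for a, b in zip(x, y))
    spread = sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))
    return covariance / spread


def spearman_brown(r):
    """Adjust a split-half correlation to the full sample size."""
    return 2 * r / (1 + r)


def split_half_reliability(ratings):
    """ratings: iterable of (entrance_rank, picture_id, rating) for one scale.

    Participants are split by odd versus even entrance rank, per-picture
    means are computed within each half, and the correlation between the
    two sets of means is adjusted with the Spearman-Brown formula.
    """
    odd, even = {}, {}
    for rank, picture, rating in ratings:
        half = odd if rank % 2 else even
        half.setdefault(picture, []).append(rating)
    pictures = sorted(set(odd) & set(even))  # pictures rated in both halves
    odd_means = [mean(odd[p]) for p in pictures]
    even_means = [mean(even[p]) for p in pictures]
    return spearman_brown(pearson(odd_means, even_means))
```

The function would be called once per scale (the six basic emotions, valence, and arousal) to obtain one coefficient for each.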

Ratings of the affective variables

For each picture, we obtained from 39 to 44 ratings (M = 41.33, SD = 2.06) on each scale from the 124 participants of the study. In order to further explore the present data, we divided the whole set of pictures by their valence classes into negative, neutral, and positive pictures, according to the criteria introduced in previous studies (e.g., Ferré, Guasch, Moldovan, & Sánchez-Casas, 2012; Kissler, Herbert, Peyk, & Junghofer, 2007). These criteria were based on the mean valences for negative, neutral, and positive pictures, which usually took values around 2, 5, and 7, respectively. Therefore, we classified pictures with values of valence ranging from 1 to 4 as negative (M = 3.10, SD = 0.58), pictures with values ranging from 4 to 6 as neutral (M = 5.02, SD = 0.55), and pictures with values ranging from 6 to 9 as positive (M = 6.52, SD = 0.39). These criteria resulted in the following proportions in the present database: 148 negative pictures (29.0 %), 203 neutral pictures (39.8 %), and 159 positive pictures (31.2 %). The following distributions of negative, neutral, and positive pictures were observed in the different content categories: animals (25.5 % negative, 43.9 % neutral, 30.6 % positive), faces (26.1 % negative, 30.4 % neutral, 43.5 % positive), landscapes (12.2 % negative, 38.8 % neutral, 49.0 % positive), objects (19.6 % negative, 69.6 % neutral, 10.8 % positive), and people (55.0 % negative, 21.0 % neutral, 24.0 % positive).
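As an illustration, the valence-class assignment and the resulting shares can be expressed in a few lines. The text does not state how pictures with a mean valence of exactly 4 or 6 were handled, so treating both boundaries as neutral is an assumption of this sketch, as are the function names.

```python
def valence_class(mean_valence, lower=4.0, upper=6.0):
    """Assign a valence class from a picture's mean valence (1-9 scale).

    Assumption: boundary values (exactly `lower` or `upper`) count as neutral.
    """
    if mean_valence < lower:
        return "negative"
    if mean_valence > upper:
        return "positive"
    return "neutral"


def class_shares(counts):
    """Convert class counts to percentages of the total, rounded to 0.1."""
    total = sum(counts.values())
    return {name: round(100 * n / total, 1) for name, n in counts.items()}


# Class counts as reported for the 510 NAPS BE pictures.
reported_counts = {"negative": 148, "neutral": 203, "positive": 159}
shares = class_shares(reported_counts)
```

The same `class_shares` helper can be applied within each content category to obtain the per-category distributions.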

The distributions of all of the basic emotions, as collected for each picture and with pictures divided by their valence classes, are depicted in Fig. 2. We split the full range of the basic emotions (1–7 on the rating scales) into seven bins. For each bin, the number of means falling within the bin range was calculated for each basic emotion separately. Numbers obtained in this way (normalized by dividing them by the number of pictures in a particular valence class) are plotted for each valence class separately in each of the panels of Fig. 2.
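The binning step can be sketched as below. The exact bin edges are not given in the text, so seven equal-width bins spanning the 1–7 range are assumed here, and the function name is hypothetical.

```python
def binned_proportions(picture_means, low=1.0, high=7.0, n_bins=7):
    """Share of pictures whose mean rating falls into each of `n_bins`
    equal-width bins between `low` and `high`.

    Assumption: equal-width bins; a mean equal to `high` goes to the last bin.
    """
    width = (high - low) / n_bins
    counts = [0] * n_bins
    for value in picture_means:
        index = min(int((value - low) / width), n_bins - 1)
        counts[index] += 1
    # Normalize by the number of pictures in the valence class.
    return [count / len(picture_means) for count in counts]
```

Applying the function to the per-picture means of one basic emotion within one valence class yields the values for one line of one panel in a plot such as Fig. 2.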

The distributions of all the basic-emotion intensity ratings among negative pictures seem to be skewed, with a strong bias toward the low range of the scale. Only 31 % and 23 % of the pictures were rated above the middle value of the rating scales (=4) for sadness and disgust, respectively. All of the other basic emotion intensities were almost always rated lower. This low-intensity bias, which stands for a relative lack of pictures presenting high-intensity values of basic emotions, is strongest for happiness and surprise and weakest for sadness and disgust. All of the basic emotion intensities were rated low among the neutral pictures, with the highest median value being for happiness (Mdn = 2.15). In the positive picture group, the distribution of happiness covers the middle of the rating scale, Mdn = 4.15.

Basic-emotion classification

The analysis above shows that the majority of images do not express just one discrete emotion, but rather are associated with several different emotional states. Therefore, from the practical point of view it might be important to select stimuli representing one particular emotion much more than any other. Such images will be very useful for further studies in which an emotional category is considered an important factor (Briesemeister et al., 2015; Chapman, Johannes, Poppenk, Moscovitch, & Anderson, 2012; Costa et al., 2014; Croucher, Calder, Ramponi, Barnard, & Murphy, 2011; Flom, Janis, Garcia, & Kirwan, 2014; Schienle et al., 2014; van Hooff, van Buuringen, El M’rabet, de Gier, & van Zalingen, 2014). Importantly, several methods of stimulus classification according to the basic emotion categories available in the literature (Briesemeister et al., 2011b; Mikels et al., 2005) can be employed, depending on the specific interest of the researcher. One of the most popular is based on the overlapping of confidence intervals (CIs; Mikels et al., 2005). Using this method, the 85 % CI was constructed around the mean intensity of each basic emotion for a given picture, and a category membership was determined according to the overlap of the CIs. A single emotion category was ascribed to a given picture if the mean of one emotion was higher than the means of all of the other emotions, and if the CI for that emotion did not overlap with the CIs for the other five emotional categories. An image was classified as blended if two or three means were higher than the rest and if the CIs of those means overlapped only with each other. Finally, if the CIs of more than three means overlapped, such an image was classified as undifferentiated (Mikels et al., 2005).
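A simplified sketch of the CI-overlap rule is given below. It is not the original implementation. It assumes a normal-approximation interval (mean ± z · SD / √n), takes the confidence level as a parameter rather than fixing it, and counts how many emotions have an interval overlapping that of the highest-rated emotion. It does not separately verify that the intervals of a blended group overlap only with each other. All names are hypothetical.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def confidence_interval(ratings, level):
    """Return (mean, lower, upper) using a normal approximation."""
    z = NormalDist().inv_cdf(0.5 + level / 2)
    m = mean(ratings)
    half_width = z * stdev(ratings) / sqrt(len(ratings))
    return m, m - half_width, m + half_width


def classify_picture(ratings_by_emotion, level):
    """Classify one picture as 'single', 'blended', or 'undifferentiated'.

    ratings_by_emotion: dict mapping emotion name -> list of ratings.
    Returns the label and the sorted emotions in the top overlapping group.
    """
    stats = {emotion: confidence_interval(r, level)
             for emotion, r in ratings_by_emotion.items()}
    top = max(stats, key=lambda emotion: stats[emotion][0])
    top_lower = stats[top][1]
    # An emotion with a lower mean overlaps the top interval exactly when
    # its upper bound reaches the top emotion's lower bound.
    group = sorted(emotion for emotion, (_, _, upper) in stats.items()
                   if upper >= top_lower)
    if len(group) == 1:
        return "single", group
    if len(group) <= 3:
        return "blended", group
    return "undifferentiated", group
```

With roughly 40 ratings per picture, a t-based interval would be slightly wider than the normal approximation used here, which could move borderline pictures from the single to the blended group.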

The aforementioned procedure was used to find images that elicited one discrete emotion more than the others. As a result, 510 images used in the study were divided into six categories: happiness (n = 240), anger (n = 2), sadness (n = 62), fear (n = 11), disgust (n = 51), and surprise (n = 2), giving a total number of 368 pictures that were matched to specific basic emotions. The other pictures were classified as blended, including two (n = 21) or three (n = 22) emotions, or were classified as undifferentiated, eliciting similar amounts of four, five, or six emotions (n = 20, 25, and 54 pictures, respectively). Some example images from the animals category are presented in Fig. 3.

We computed a series of one-way analyses of variance solely on the pictures classified with the CI method (Mikels et al., 2005) as eliciting single basic emotions. For each group of pictures classified with a particular basic emotion, we compared the intensity ratings of this basic emotion in these pictures and in the pictures classified with all the other basic emotions. We obtained a significant effect of the basic-emotion classification in each case—namely, for happiness, F(5, 362) = 200.43, p < .001; sadness, F(5, 362) = 449.92, p < .001; fear, F(5, 362) = 147.10, p < .001; surprise, F(5, 362) = 44.19, p < .001; anger, F(5, 362) = 138.02, p < .001; and disgust, F(5, 362) = 350.14, p < .001.

The frequencies of each basic emotion among the pictures classified as single, blended, and undifferentiated basic emotions are presented in Fig. 4. It is noteworthy that the three panels of this figure cannot be compared with regard to the sums of the pictures, since in the middle and right panels the same image contributed to several bars, whereas the number of pictures equals the sum of the bars in the first panel. The bars should be interpreted only in terms of the single bars informing us how often a particular emotion was represented as single, blended, or undifferentiated.

In order to provide researchers with an overview of the groups of pictures distinguished with the CI classification method, descriptive statistics for the basic emotions and affective dimensions are presented in Table 1.

As was mentioned in previous studies (e.g., Mikels et al., 2005), alternative methods could be used to investigate the data. For instance, the CI method would classify images rated by one discrete emotion as having significantly higher ratings than the others, even though the intensity of this single rating was lower than those for other images that elicit blended or undifferentiated emotions. Following this, we provide a conservative classification method (Briesemeister et al., 2011b), according to which pictures were assigned to a specific discrete emotion category if the mean rating in one discrete emotion was more than one standard deviation higher than the ratings for other discrete emotions. Finally, the most liberal classification criterion was applied (Briesemeister et al., 2011b), according to which all of the pictures that received a higher mean rating in a particular discrete emotion were labeled as being related to this emotion. The results of all three classification methods are presented in Table 2.
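The two alternative criteria can be sketched as follows. The text does not specify which standard deviation the conservative criterion refers to; this sketch assumes the SD of the ratings of the highest-rated emotion for the picture in question. Function names are hypothetical.

```python
from statistics import mean, stdev


def liberal_label(ratings_by_emotion):
    """Liberal criterion: the emotion with the highest mean rating."""
    means = {emotion: mean(r) for emotion, r in ratings_by_emotion.items()}
    return max(means, key=means.get)


def conservative_label(ratings_by_emotion):
    """Conservative criterion: the top emotion, but only if its mean exceeds
    every other emotion's mean by more than one standard deviation.

    Assumption: the SD is that of the top emotion's own ratings.
    Returns None when no emotion satisfies the criterion.
    """
    means = {emotion: mean(r) for emotion, r in ratings_by_emotion.items()}
    top = max(means, key=means.get)
    threshold = means[top] - stdev(ratings_by_emotion[top])
    others = [m for emotion, m in means.items() if emotion != top]
    return top if all(m < threshold for m in others) else None
```

By construction, any picture labeled by the conservative criterion receives the same label under the liberal one, so the two methods can differ only in how many pictures they assign.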

Since all of the methods of classification are based on means and CIs, the picture classifications of our data did not differ substantially across the three methods described above. No pictures were classified with different basic emotions according to the different methods. The only difference was the obtained numbers of pictures classified as expressing specific basic emotions. Table S2 includes the results of each classification method for each single picture.

Relationship between basic emotions and affective dimensions

An exploration of the relationships between the basic emotions and affective dimensions showed that these variables were highly intercorrelated, as is demonstrated in Table 3.

Additionally, regression analyses were computed using the discrete emotional category ratings in order to examine the extent to which these variables could predict the ratings of valence and arousal (Bradley & Lang, 1999). We performed six separate analyses, using the six emotional category ratings to predict valence and arousal within each of the three valence classes distinguished in the previous sections (negative, neutral, and positive), in line with analyses reported in the literature (Montefinese et al., 2014; Stevenson et al., 2007; Stevenson & James, 2008).

After removing the nonsignificant coefficients, we repeated the regressions; all six models fit the data, and the basic-emotion intensities explained a large percentage of the variance of valence [F(5, 142) = 172.41, p < .001, R² = .86, for negative pictures; F(5, 197) = 413.99, p < .001, R² = .91, for neutral pictures; and F(3, 155) = 182.33, p < .001, R² = .78, for positive pictures] and of arousal [F(6, 141) = 93.47, p < .001, R² = .80, for negative pictures; F(6, 196) = 157.52, p < .001, R² = .83, for neutral pictures; and F(6, 152) = 59.14, p < .001, R² = .70, for positive pictures].
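The reported degrees of freedom and F values can be related to the coefficients of determination through the standard identity for an ordinary least squares model with an intercept, F = (R² / k) / ((1 − R²) / (n − k − 1)), where k is the number of predictors and n the number of pictures. The sketch below applies it to the valence model for negative pictures (n = 148, k = 5). Because the published coefficient is rounded to two decimals, the result only approximates the reported F value. The function name is hypothetical.

```python
def f_from_r_squared(r_squared, n_pictures, n_predictors):
    """Overall F statistic and its degrees of freedom for an OLS model
    with an intercept, computed from the coefficient of determination."""
    df_model = n_predictors
    df_error = n_pictures - n_predictors - 1
    f_value = (r_squared / df_model) / ((1 - r_squared) / df_error)
    return f_value, df_model, df_error


# Valence model for the 148 negative pictures, five retained predictors.
f_value, df_model, df_error = f_from_r_squared(0.86, 148, 5)
```

Here the degrees of freedom come out as (5, 142), matching the reported ones, and the F value is close to the reported 172.41, with the small gap attributable to rounding of the coefficient.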

Standardized β coefficients were calculated for all six emotional categories. As for negative pictures, valence was strongly related to sadness, disgust, happiness, fear, and anger, yet it was not related to surprise. Arousal, in turn, was related to fear, disgust, and sadness, but not to anger and surprise. In the case of neutral pictures, valence was most strongly related to happiness, sadness, disgust, and fear, and additionally to surprise, but not to anger. Arousal was also not related to anger, yet it was related to fear, happiness, sadness, disgust, and surprise. As far as positive pictures were concerned, valence was related to happiness, sadness, and disgust only. Arousal was related to fear, disgust, anger, and sadness (but only fear was significant).

However, partial correlations (representing the unique influence of one predictor relative to the part of the variance of a dependent variable unexplained by the other predictors) revealed that discrete emotions contributed to valence and arousal in different ways (Ric, Alexopoulos, Muller, & Aubé, 2013) (Table 4). The ratings of affective dimensions were predicted particularly well by the level of happiness among positive pictures; by the levels of happiness, sadness, and fear among neutral pictures; and by the levels of sadness, fear, and disgust among negative pictures. The distribution of the ratings of pictures classified as eliciting particular discrete emotions on the basis of the CI criterion is presented in the affective space of valence and arousal in Fig. 5.

The regressions calculated using the dimensional ratings to predict emotional category ratings were similar to the previous ones, also showing a lack of homogeneity in their relationships (beta weights and a statistical analysis are presented in Table S1 in the supplementary materials).

Relations between the affective variables and the content categories

Subsequently, we performed a MANOVA including the five Content Categories (animals, faces, landscapes, objects, and people) and the three classes of Picture Valence (negative, neutral, and positive) as between-object factors, and the ratings of the six basic emotion intensities as well as the ratings of the two affective dimensions as dependent variables. Before that, we tested the assumption of the absence of multicollinearity between the dependent variables. The variance inflation factor (VIF) showed that multicollinearity might be a problem (Myers, 1990) for valence (VIF = 17.56) and happiness (VIF = 11.29). Therefore, we removed valence as a dependent variable from the analysis. Additionally, we conducted collinearity diagnostics to check for interdependence of the independent variables. The obtained tolerance and VIF values were not considered problematic (tolerance > .10 and VIF < 10; Myers, 1990).
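The relation between the two collinearity indices can be made explicit. For a given variable, VIF = 1 / (1 − R²), where R² comes from regressing that variable on all the others, and tolerance is the reciprocal of VIF. A VIF cutoff of 10 therefore corresponds to a tolerance cutoff of .10. The short sketch below converts the VIF values reported above; the function names are hypothetical.

```python
def tolerance_from_vif(vif):
    """Tolerance is the reciprocal of the variance inflation factor."""
    return 1.0 / vif


def r_squared_from_vif(vif):
    """Share of a variable's variance explained by the other variables."""
    return 1.0 - 1.0 / vif


# VIF values reported for valence and happiness.
for name, vif in [("valence", 17.56), ("happiness", 11.29)]:
    print(name, round(tolerance_from_vif(vif), 3), round(r_squared_from_vif(vif), 3))
```

Both reported VIF values imply that more than 90 % of the variance of the respective variable is shared with the remaining dependent variables, which is why one of them was dropped.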

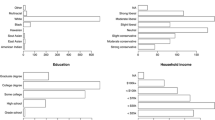

As for the between-object effects, we found significant main effects of content category [F(28, 1968) = 7.99, p < .001, ηp² = .10] and valence class [F(14, 980) = 121.25, p < .001, ηp² = .63], as well as a significant interaction between the two [F(56, 3465) = 4.73, p < .001, ηp² = .07]. This interaction was further interpreted through an analysis of the simple main effects of content category performed separately for each valence class, which showed patterns specific to each basic emotion; the results are depicted in Fig. 6. There were significant differences in the mean basic-emotion intensities among the pictures of different valence classes, depending on their content category. Specifically, the ratings of happiness were lower for objects than for landscapes for both neutral and positive pictures. As far as sadness was concerned, among negative pictures the ratings were significantly higher for faces and lower for objects than for the other categories. The ratings of fear were higher than those of the other categories for people (among both negative and neutral pictures) as well as animals (among neutral pictures). As for surprise, among positive pictures these ratings were lower for animals and people than among the neutral pictures. Anger among the negative pictures was rated significantly higher for landscapes than for the other categories. Finally, disgust among the negative and neutral pictures was rated higher for objects and lower for faces than for the other content categories. All of the significant differences (p < .05) are marked with an asterisk in Fig. 6.

Fig. 6 Mean intensities of all discrete emotion categories, as a function of all semantic categories and all valence classes. *Significant differences (p < .05) between the mean intensities of particular basic emotions across content categories, marked with the relevant colors: animals = blue, faces = red, landscapes = green, objects = purple, and people = orange

Discussion

The present study aimed at providing categorical data that would allow NAPS to be used more generally in studies of emotion from a discrete categorical perspective, as well as providing a means of investigating the association of the dimensional and categorical approaches to the study of affect.

Concerning the relationship between affective dimensions and emotional category ratings, our findings are in line with the previous characterizations of affective stimuli, including written words (Stevenson et al., 2007), emotional faces (Olszanowski et al., 2015), and affective sounds (Stevenson & James, 2008). These results showed differences in the predictions based on categories, depending on the predicted dimension, as well as on whether the pictures were positive or negative. The regressions using the dimensional ratings to predict emotional category ratings were similar to the previous regressions, with a lack of homogeneity in the ability of the categorical ratings to predict the dimensional ratings. In other words, emotional categories cannot be extrapolated from the affective dimensions; conversely, dimensional information cannot be extrapolated from the emotional categories. The heterogeneous relationships between each emotional category and the different affective dimensions of the stimuli confirm the importance of using categorical data both independently and as a supplement to dimensional data (Stevenson et al., 2007). From a practical point of view, by using both dimensional and discrete emotion classifications, the researcher could design a more ecologically valid paradigm, for instance by utilizing negative pictures that are not biased toward any particular discrete emotion, or by using pictures evoking only a particular discrete emotion (Stevenson & James, 2008).

What can be considered a particularity of the NAPS BE dataset is the fact that sadness is related not only to low arousal. As has been stated in the literature (Javela, Mercadillo, & Martín Ramírez, 2008), the definition of the elements and particular elicitors of one emotion becomes difficult when one considers that individuals could experience many negative emotions when being confronted with a certain unpleasant stimulus (Mikels et al., 2005), such as a visual scene (Bradley, Codispoti, Cuthbert, & Lang, 2001a; Bradley, Codispoti, Sabatinelli, & Lang, 2001b). Considering that the experiences of anger, fear, and sadness elicit similar electromyographic activity (Hu & Wan, 2003), it may be argued that these emotions are related to similar levels of arousal. For instance, anger and sadness could both be elicited with the occurrence of negative events, such as blaming others and loss (Abramson, Metalsky, & Alloy, 1989; Smith & Lazarus, 1993). Thus, these might be differentiated from each other only by considering how they are appraised, but not by the related arousal. On the other hand, according to the “core affect” theory (Barrett & Bliss-Moreau, 2009; Russell, 2003), the core affective feelings evoked during an emotion depend on the situation; for instance, fear can be pleasant and highly arousing (in a rollercoaster car) or unpleasant and less arousing (detecting bodily signs of an illness) (Wilson-Mendenhall, Barrett, & Barsalou, 2013). Therefore, there might be situations in which sadness is related to high arousal, or in which high-arousing sadness is closely related to other negative, high-arousing emotions.

Importantly, we chose images that were counterbalanced in terms of content categories, which allowed us to explore the relationship between the affective variables and the content categories included in the NAPS. This examination revealed significant main effects of content category and valence class, indicating differences across all of them. Additionally, we found significant interactions of content category and valence class. Such interactions had been reported previously for verbal materials with regard to affective dimensions (Ferré et al., 2012), yet not for visual material and basic emotions. Further analysis of this interaction in our data showed that interesting patterns were visible, especially among positive pictures for happiness and among negative pictures for sadness, fear, surprise, anger, and disgust. Depending on the basic emotion of interest, there were differences in the ratings of various content categories: For instance, sadness was induced much less by objects, and disgust much less by faces, than by any of the other categories. These interactions show that content categories should be taken into account by researchers attempting to choose appropriate stimuli to induce specific basic emotions.

When compared to the previously offered datasets of affective pictures characterized by discrete emotions, NAPS BE offers larger samples of images expressing single basic emotions, as classified with the CI method (Mikels et al., 2005). For instance, IAPS contains fewer images expressing disgust and sadness (ns = 31 and 42, respectively; Bradley & Lang, 2007) than does NAPS BE (ns = 51 and 62). Another advantage of NAPS BE is that it enables researchers to control for the physical properties of the images (Marchewka, Żurawski, Jednoróg, & Grabowska, 2014). However, the greatest advantage of the presently introduced dataset is that it offers pictorial stimuli characterized from both dimensional and basic-emotion perspectives, which makes it extremely useful for experiments within a combined approach.
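As a rough illustration of how a confidence-interval (CI) classification in the spirit of Mikels et al. (2005) can work, the following Python sketch labels one image as expressing a single, blended, or undifferentiated emotion. It is a simplified sketch under stated assumptions, not the procedure used to build NAPS BE: the 90% level, the normal approximation (z = 1.645), the function names, and the example ratings are all assumptions made here.

```python
from math import sqrt
from statistics import mean, stdev

def confidence_interval(ratings, z=1.645):
    """Normal-approximation CI for the mean of one emotion's ratings."""
    m = mean(ratings)
    half_width = z * stdev(ratings) / sqrt(len(ratings))
    return m - half_width, m + half_width

def classify(ratings_by_emotion, z=1.645):
    """Label an image from per-participant ratings of each emotion.

    Returns (label, emotions), where label is "single", "blended",
    or "undifferentiated".
    """
    means = {e: mean(r) for e, r in ratings_by_emotion.items()}
    cis = {e: confidence_interval(r, z) for e, r in ratings_by_emotion.items()}
    top = max(means, key=means.get)
    top_lower = cis[top][0]
    # An emotion overlaps the top one if its upper bound reaches
    # the lower bound of the top emotion's interval.
    overlapping = [e for e in cis if e != top and cis[e][1] >= top_lower]
    if not overlapping:
        return "single", [top]
    if len(overlapping) == len(cis) - 1:
        return "undifferentiated", sorted(cis)
    return "blended", sorted([top] + overlapping)

# Made-up ratings from five hypothetical participants.
sad_image = {"happiness": [1, 1, 2, 1, 1],
             "sadness": [6, 7, 6, 5, 6],
             "fear": [2, 1, 2, 2, 1]}
mixed_image = {"happiness": [1, 1, 1, 2, 1],
               "sadness": [5, 6, 5, 6, 5],
               "fear": [5, 5, 6, 5, 5]}

sad_label = classify(sad_image)
mixed_label = classify(mixed_image)
```

Counting the images labeled "single" for each emotion would then give per-emotion sample sizes of the kind compared above.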

Limitations and future directions

An important limitation of the present study, similarly to previous ones (Mikels et al., 2005), is that we were not able to differentiate representative numbers of stimuli that induce clear basic emotions such as surprise and anger. The small number of images expressing anger in NAPS BE is in line with the previous results (Mikels et al., 2005) and might be explained by the fact that it is difficult to elicit extreme unpleasantness, high effort, high certainty, and strong human agency with the passive and essentially effortless viewing of static images.

Another possible limitation is that NAPS BE, just like NAPS (Marchewka et al., 2014), lacks very positive pictures with high arousal (e.g., pictures with erotic content). However, the Erotic Subset for NAPS (NAPS ERO; Wierzba et al., 2015a) has been prepared. Also, the images included in NAPS BE are only moderately inductive of basic emotions. First, this may result from the nature of static images. Second, it has been claimed that pictures evoking basic emotions of higher intensities probably also evoke different emotions (van Hooff et al., 2014). Therefore, perhaps only mild emotions can be evoked by images classified by single basic emotions. These moderate intensities should be taken into account when investigating the specific effects of basic emotions through the use of NAPS BE.

Normative ratings were collected in a group of participants from various European and non-European countries using the English language, which could potentially have influenced the obtained results (Majid, 2012). For instance, using a nonnative language to evaluate emotions could potentially increase participants’ arousal, due to anxiety (Caldwell-Harris & Ayçiçeǧi-Dinn, 2009). Thus, future investigations of the basic emotions expressed by NAPS BE should exploit cross-linguistic variation to take into account possible principles operating between language and emotion.

Finally, we have not applied all of the possible methods of classifying emotional stimuli; for instance, we did not use a recently published method based on Euclidean distance (Wierzba et al., 2015b).

Currently, we are working on dedicated software called the Nencki Affective Picture System Search Tool, which will allow researchers to choose stimuli according to the normative ratings within a combined theoretical framework.

References

Abramson, L. Y., Metalsky, G. I., & Alloy, L. B. (1989). Hopelessness depression: A theory-based subtype of depression. Psychological Review, 96, 358–372. doi:10.1037/0033-295X.96.2.358

Barrett, L. F., & Bliss-Moreau, E. (2009). Affect as a psychological primitive. In M. P. Zanna (Ed.), Advances in experimental social psychology (Vol. 41, pp. 167–218). London, UK: Academic Press. doi:10.1016/S0065-2601(08)00404-8

Bayer, M., Sommer, W., & Schacht, A. (2010). Reading emotional words within sentences: The impact of arousal and valence on event-related potentials. International Journal of Psychophysiology, 78, 299–307. doi:10.1016/j.ijpsycho.2010.09.004

Bradley, M. M., Codispoti, M., Cuthbert, B. N., & Lang, P. J. (2001a). Emotion and motivation I: Defensive and appetitive reactions in picture processing. Emotion, 1, 276–298. doi:10.1037/1528-3542.1.3.276

Bradley, M. M., Codispoti, M., Sabatinelli, D., & Lang, P. J. (2001b). Emotion and motivation II: Sex differences in picture processing. Emotion, 1, 300–319. doi:10.1037/1528-3542.1.3.300

Bradley, M. M., & Lang, P. J. (1999). Affective Norms for English Words (ANEW): Stimuli, instruction manual and affective ratings (Technical Report No. C-1). Gainesville, FL: University of Florida, NIMH Center for Research in Psychophysiology.

Bradley, M. M., & Lang, P. J. (2007). The International Affective Picture System (IAPS) in the study of emotion and attention. In J. A. Coan & J. J. B. Allen (Eds.), Handbook of emotion elicitation and assessment (pp. 29–46). Oxford, UK: Oxford University Press.

Briesemeister, B. B., Kuchinke, L., Jacobs, A. M., & Braun, M. (2015). Emotions in reading: Dissociation of happiness and positivity. Cognitive, Affective, & Behavioral Neuroscience, 15, 287–298. doi:10.3758/s13415-014-0327-2

Briesemeister, B. B., Kuchinke, L., & Jacobs, A. M. (2011a). Discrete emotion effects on lexical decision response times. PLoS ONE, 6, e23743. doi:10.1371/journal.pone.0023743

Briesemeister, B. B., Kuchinke, L., & Jacobs, A. M. (2011b). Discrete emotion norms for nouns: Berlin affective word list (DENN-BAWL). Behavior Research Methods, 43, 441–448. doi:10.3758/s13428-011-0059-y

Briesemeister, B. B., Kuchinke, L., & Jacobs, A. M. (2014). Emotion word recognition: Discrete information effects first, continuous later? Brain Research, 1564, 62–71. doi:10.1016/j.brainres.2014.03.045

Caldwell-Harris, C. L., & Ayçiçeǧi-Dinn, A. (2009). Emotion and lying in a non-native language. International Journal of Psychophysiology, 71, 193–204. doi:10.1016/j.ijpsycho.2008.09.006

Chapman, H. A., Johannes, K., Poppenk, J. L., Moscovitch, M., & Anderson, A. K. (2012). Evidence for the differential salience of disgust and fear in episodic memory. Journal of Experimental Psychology. General, 142, 1100–1112. doi:10.1037/a0030503

Colden, A., Bruder, M., & Manstead, A. S. R. (2008). Human content in affect-inducing stimuli: A secondary analysis of the international affective picture system. Motivation and Emotion, 32, 260–269. doi:10.1007/s11031-008-9107-z

Colibazzi, T., Posner, J., Wang, Z., Gorman, D., Gerber, A., Yu, S., . . . Peterson, B. S. (2010). Neural systems subserving valence and arousal during the experience of induced emotions. Emotion, 10, 377–389. doi:10.1037/a0018484

Costa, T., Cauda, F., Crini, M., Tatu, M.-K., Celeghin, A., de Gelder, B., & Tamietto, M. (2014). Temporal and spatial neural dynamics in the perception of basic emotions from complex scenes. Social Cognitive and Affective Neuroscience, 9, 1690–1703. doi:10.1093/scan/nst164

Croucher, C. J., Calder, A. J., Ramponi, C., Barnard, P. J., & Murphy, F. C. (2011). Disgust enhances the recollection of negative emotional images. PLoS ONE, 6, e26571. doi:10.1371/journal.pone.0026571

Darwin, C. (1872). The expression of the emotions in man and animals. American Journal of the Medical Sciences, 232, 477. doi:10.1097/00000441-195610000-00024

Delaveau, P., Jabourian, M., Lemogne, C., Guionnet, S., Bergouignan, L., & Fossati, P. (2011). Brain effects of antidepressants in major depression: A meta-analysis of emotional processing studies. Journal of Affective Disorders, 130, 66–74. doi:10.1016/j.jad.2010.09.032

Eerola, T., & Vuoskoski, J. K. (2011). A comparison of the discrete and dimensional models of emotion in music. Psychology of Music, 39, 18–49. doi:10.1177/0305735610362821

Ekman, P. (1992). An argument for basic emotions. Cognition & Emotion, 6, 169–200. doi:10.1080/02699939208411068

Ekman, P. (1993). Facial expression and emotion. American Psychologist, 48, 384–392. doi:10.1037/0003-066X.48.4.384

Ekman, P. (1999). Basic emotions. In T. Dalgleish & M. J. Power (Eds.), Handbook of cognition and emotion (pp. 45–60). New York, NY: Wiley.

Ferré, P., Guasch, M., Moldovan, C., & Sánchez-Casas, R. (2012). Affective norms for 380 Spanish words belonging to three different semantic categories. Behavior Research Methods, 44, 395–403. doi:10.3758/s13428-011-0165-x

Flom, R., Janis, R. B., Garcia, D. J., & Kirwan, C. B. (2014). The effects of exposure to dynamic expressions of affect on 5-month-olds’ memory. Infant Behavior and Development, 37, 752–759. doi:10.1016/j.infbeh.2014.09.006

Fontaine, J. R. J., Scherer, K. R., Roesch, E. B., & Ellsworth, P. C. (2007). The world of emotion is not two-dimensional. Psychological Science, 18, 1050–1057. doi:10.1111/j.1467-9280.2007.02024.x

Fusar-Poli, P., Placentino, A., Carletti, F., Landi, P., Allen, P., Surguladze, S., . . . Politi, P. (2009). Functional atlas of emotional faces processing: A voxel-based meta-analysis of 105 functional magnetic resonance imaging studies. Journal of Psychiatry & Neuroscience, 34, 418–432. Retrieved from www.ncbi.nlm.nih.gov/pmc/articles/PMC2783433/

Gerrards-Hesse, A., Spies, K., & Hesse, F. W. (1994). Experimental inductions of emotional states and their effectiveness: A review. British Journal of Psychology, 85, 55–78. doi:10.1111/j.1750-3841.2009.01222.x

Hamann, S. (2012). Mapping discrete and dimensional emotions onto the brain: Controversies and consensus. Trends in Cognitive Sciences, 16, 458–466. doi:10.1016/j.tics.2012.07.006

Hinojosa, J. A., Martínez-García, N., Villalba-García, C., Fernández-Folgueiras, U., Sánchez-Carmona, A., Pozo, M. A., & Montoro, P. R. (2015). Affective norms of 875 Spanish words for five discrete emotional categories and two emotional dimensions. Behavior Research Methods. doi:10.3758/s13428-015-0572-5. Advance online publication.

Hu, S., & Wan, H. (2003). Imagined events with specific emotional valence produce specific patterns of facial EMG activity. Perceptual and Motor Skills, 97, 1091–1099. doi:10.2466/pms.2003.97.3f.1091

Imbir, K. K. (2014). Affective norms for 1,586 Polish words (ANPW): Duality-of-mind approach. Behavior Research Methods. doi:10.3758/s13428-014-0509-4. Advance online publication.

Izard, C. E. (2009). Emotion theory and research: Highlights, unanswered questions, and emerging issues. Annual Review of Psychology, 60, 1–25. doi:10.1146/annurev.psych.60.110707.163539

Javela, J. J., Mercadillo, R. E., & Martín Ramírez, J. (2008). Anger and associated experiences of sadness, fear, valence, arousal, and dominance evoked by visual scenes. Psychological Reports, 103, 663–681. doi:10.2466/PR0.103.3.663-681

Kassam, K. S., Markey, A. R., Cherkassky, V. L., Loewenstein, G., & Just, M. A. (2013). Identifying emotions on the basis of neural activation. PLoS ONE, 8, e66032. doi:10.1371/journal.pone.0066032

Kissler, J., Herbert, C., Peyk, P., & Junghofer, M. (2007). Buzzwords: Early cortical responses to emotional words during reading. Psychological Science, 18, 475–480. doi:10.1111/j.1467-9280.2007.01924.x

Lang, P. J. (1980). Behavioral treatment and bio-behavioral assessment: Computer applications. In J. B. Sidowski, J. H. Johnson, & T. A. Williams (Eds.), Technology in mental health care delivery systems (pp. 119–137). Norwood, NJ: Ablex.

Lang, P. J., Bradley, M. M., & Cuthbert, B. N. (2008). International Affective Picture System (IAPS): Affective ratings of pictures and instruction manual (Technical Report No. A-8). Gainesville, FL: University of Florida, Center for Research in Psychophysiology.

Lindquist, K. A., Wager, T. D., Kober, H., Bliss-Moreau, E., & Barrett, L. F. (2012). The brain basis of emotion: A meta-analytic review. Behavioral and Brain Sciences, 35, 121–143. doi:10.1017/S0140525X11000446

Majid, A. (2012). Current emotion research in the language sciences. Emotion Review, 4, 432–443. doi:10.1177/1754073912445827

Marchewka, A., Żurawski, Ł., Jednoróg, K., & Grabowska, A. (2014). The Nencki Affective Picture System (NAPS): Introduction to a novel, standardized, wide-range, high-quality, realistic picture database. Behavior Research Methods, 46, 596–610. doi:10.3758/s13428-013-0379-1

Mauss, I. B., & Robinson, M. D. (2009). Measures of emotion: A review. Cognition & Emotion, 23, 209–237. doi:10.1080/02699930802204677

Mikels, J. A., Fredrickson, B. L., Larkin, G. R., Lindberg, C. M., Maglio, S. J., & Reuter-Lorenz, P. A. (2005). Emotional category data on images from the International Affective Picture System. Behavior Research Methods, 37, 626–630. doi:10.3758/BF03192732

Monnier, C., & Syssau, A. (2014). Affective norms for French words (FAN). Behavior Research Methods, 46, 1128–1137. doi:10.3758/s13428-013-0431-1

Montefinese, M., Ambrosini, E., Fairfield, B., & Mammarella, N. (2014). The adaptation of the Affective Norms for English Words (ANEW) for Italian. Behavior Research Methods, 46, 887–903. doi:10.3758/s13428-013-0405-3

Moors, A., De Houwer, J., Hermans, D., Wanmaker, S., van Schie, K., Van Harmelen, A.-L., . . . Brysbaert, M. (2013). Norms of valence, arousal, dominance, and age of acquisition for 4,300 Dutch words. Behavior Research Methods, 45, 169–177. doi:10.3758/s13428-012-0243-8

Myers, R. H. (1990). Classical and modern regression with applications (Duxbury advanced series in statistics and decision sciences, Vol. 2). Boston, MA: Duxbury Press.

Olszanowski, M., Pochwatko, G., Kuklinski, K., Scibor-Rylski, M., Lewinski, P., & Ohme, R. K. (2015). Warsaw set of emotional facial expression pictures: A validation study of facial display photographs. Frontiers in Psychology, 5, 1516. doi:10.3389/fpsyg.2014.01516

Ortony, A., & Turner, T. J. (1990). What’s basic about basic emotions? Psychological Review, 97, 315–331. doi:10.1037/0033-295X.97.3.315

Osgood, C. E., Suci, G. J., & Tannenbaum, P. H. (1957). The measurement of meaning. Urbana, IL: University of Illinois Press.

Panksepp, J. (1992). A critical role for “affective neuroscience” in resolving what is basic about basic emotions. Psychological Review, 99, 554–560. doi:10.1037/0033-295X.99.3.554

Reisenzein, R. (1994). Pleasure-arousal theory and the intensity of emotions. Journal of Personality and Social Psychology, 67, 525–539. doi:10.1037/0022-3514.67.3.525

Remmington, N. A., Fabrigar, L. R., & Visser, P. S. (2000). Reexamining the circumplex model of affect. Journal of Personality and Social Psychology, 79, 286–300. doi:10.1037/0022-3514.79.2.286

Ric, F., Alexopoulos, T., Muller, D., & Aubé, B. (2013). Emotional norms for 524 French personality trait words. Behavior Research Methods, 45, 414–421. doi:10.3758/s13428-012-0276-z

Russell, J. A. (2003). Core affect and the psychological construction of emotion. Psychological Review, 110, 145–172. doi:10.1037/0033-295X.110.1.145

Saarimaki, H., Gotsopoulos, A., Jääskeläinen, I. P., Lampinen, J., Vuilleumier, P., Hari, R., . . . Nummenmaa, L. (2015). Discrete neural signatures of basic emotions. Cerebral Cortex. Advance online publication. doi:10.1093/cercor/bhv086

Scherer, K. R. (2005). What are emotions? And how can they be measured? Social Science Information, 44, 695–729. doi:10.1177/0539018405058216

Schienle, A., Wabnegger, A., Schoengassner, F., & Scharmüller, W. (2014). Neuronal correlates of three attentional strategies during affective picture processing: An fMRI study. Cognitive, Affective, & Behavioral Neuroscience, 14, 1320–1326. doi:10.3758/s13415-014-0274-y

Silva, C., Montant, M., Ponz, A., & Ziegler, J. C. (2012). Emotions in reading: Disgust, empathy and the contextual learning hypothesis. Cognition, 125, 333–338. doi:10.1016/j.cognition.2012.07.013

Smith, C. A., & Lazarus, R. S. (1993). Appraisal components, core relational themes, and the emotions. Cognition & Emotion, 7, 233–269. doi:10.1080/02699939308409189

Stanley, D. J., & Meyer, J. P. (2009). Two-dimensional affective space: A new approach to orienting the axes. Emotion, 9, 214–237. doi:10.1037/a0014612

Stevenson, R. A., & James, T. W. (2008). Affective auditory stimuli: Characterization of the International Affective Digitized Sounds (IADS) by discrete emotional categories. Behavior Research Methods, 40, 315–321. doi:10.3758/BRM.40.1.315

Stevenson, R. A., Mikels, J. A., & James, T. W. (2007). Characterization of the affective norms for English words by discrete emotional categories. Behavior Research Methods, 39, 1020–1024. doi:10.3758/BF03192999

Tettamanti, M., Rognoni, E., Cafiero, R., Costa, T., Galati, D., & Perani, D. (2012). Distinct pathways of neural coupling for different basic emotions. NeuroImage, 59, 1804–1817. doi:10.1016/j.neuroimage.2011.08.018

Van Hooff, J. C., van Buuringen, M., El M’rabet, I., de Gier, M., & van Zalingen, L. (2014). Disgust-specific modulation of early attention processes. Acta Psychologica, 152, 149–157. doi:10.1016/j.actpsy.2014.08.009

Viinikainen, M., Jääskeläinen, I. P., Alexandrov, Y., Balk, M. H., Autti, T., & Sams, M. (2010). Nonlinear relationship between emotional valence and brain activity: Evidence of separate negative and positive valence dimensions. Human Brain Mapping, 31, 1030–1040. doi:10.1002/hbm.20915

Vytal, K., & Hamann, S. (2010). Neuroimaging support for discrete neural correlates of basic emotions: A voxel-based meta-analysis. Journal of Cognitive Neuroscience, 22, 2864–2885. doi:10.1162/jocn.2009.21366

Wierzba, M., Riegel, M., Pucz, A., Lesniewska, Z., Dragan, W. Ł., Gola, M., Jednoróg, K., & Marchewka, A. (2015a). Erotic subset for the Nencki Affective Picture System (NAPS ERO): Cross-sexual comparison study. Manuscript under review.

Wierzba, M., Riegel, M., Wypych, M., Jednoróg, K., Turnau, P., Grabowska, A., & Marchewka, A. (2015b). Basic emotions in the Nencki Affective Word List (NAWL BE): New method of classifying emotional stimuli. PLoS ONE, 10, e0132305. doi:10.1371/journal.pone.0132305

Wilson-Mendenhall, C. D., Barrett, L. F., & Barsalou, L. W. (2013). Neural evidence that human emotions share core affective properties. Psychological Science, 24, 947–956. doi:10.1177/0956797612464242

Author note

We are grateful to Paweł Turnau, who constructed the Web-based assessment platform used to collect the affective ratings. We also appreciate the help of Łukasz Okruszek and Maksymilian Bielecki in preparing the statistical analysis of the collected affective ratings. Likewise, we thank Benny Briesemeister, the author of DENN-BAWL (Briesemeister et al., 2011b), for his helpful comments on the construction of NAPS BE. Finally, we thank the reviewers of this article for their valuable and constructive comments, which helped us improve it considerably. The study was supported by the Polish Ministry of Science and Higher Education, Iuventus Plus Grant No. IP2012 042072. The authors have declared that no competing interests exist.

Appendix: Instructions for the picture ratings in English

Thank you for agreeing to participate in this study.

We are interested in people’s responses to pictures representing a wide spectrum of situations. For the next 50 min, you will be looking at color photographs on the computer screen.

Your task will be to evaluate each photograph on six 7-point scales, indicating the degree to which you feel happiness, surprise, sadness, anger, disgust, and fear while viewing the picture.

The far left of each scale represents total absence of the given emotion (1 = I do not feel it at all), while the far right of the scale represents the highest intensity of the emotion (7 = I feel it very strongly).

Also, fill in responses for the following two scales describing how you feel while viewing the picture.

For the arousal scale (1 = I feel completely unaroused or calm, and 9 = I feel completely aroused or excited).

For the valence scale (1 = I feel completely happy or satisfied, and 9 = I feel completely unhappy or annoyed).

There are no right or wrong answers; respond as honestly as you can. Before we start, I’d like you to read and sign the “informed consent” statement.

You may find some of the pictures rather disturbing; should you feel uncomfortable, feel free to quit the experiment at any time.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Riegel, M., Żurawski, Ł., Wierzba, M. et al. Characterization of the Nencki Affective Picture System by discrete emotional categories (NAPS BE). Behav Res 48, 600–612 (2016). https://doi.org/10.3758/s13428-015-0620-1

DOI: https://doi.org/10.3758/s13428-015-0620-1