Abstract

The belief-bias effect is one of the most-studied biases in reasoning. A recent study of the phenomenon using the signal detection theory (SDT) model called into question all theoretical accounts of belief bias by demonstrating that belief-based differences in the ability to discriminate between valid and invalid syllogisms may be an artifact stemming from the use of inappropriate linear measurement models such as analysis of variance (Dube et al., Psychological Review, 117(3), 831–863, 2010). The discrepancy between Dube et al.’s, Psychological Review, 117(3), 831–863 (2010) results and the previous three decades of work, together with former’s methodological criticisms suggests the need to revisit earlier results, this time collecting confidence-rating responses. Using a hierarchical Bayesian meta-analysis, we reanalyzed a corpus of 22 confidence-rating studies (N = 993). The results indicated that extensive replications using confidence-rating data are unnecessary as the observed receiver operating characteristic functions are not systematically asymmetric. These results were subsequently corroborated by a novel experimental design based on SDT’s generalized area theorem. Although the meta-analysis confirms that believability does not influence discriminability unconditionally, it also confirmed previous results that factors such as individual differences mediate the effect. The main point is that data from previous and future studies can be safely analyzed using appropriate hierarchical methods that do not require confidence ratings. More generally, our results set a new standard for analyzing data and evaluating theories in reasoning. Important methodological and theoretical considerations for future work on belief bias and related domains are discussed.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

The ability to draw necessary conclusions from given information constitutes one of the building blocks of knowledge acquisition. Without deduction, there would be no science, no technology, and no modern society (Johnson-Laird & Byrne, 1991). Over acentury of research has demonstrated that people can reason deductively, albeit imperfectly so (e.g., Störring, 1908; Wilkins, 1929). One key demonstration of the imperfect nature of deduction is aphenomenon known as belief bias, which has inspired an impressive amount of research and has been considered to be akey explanandum for any viable psychological theory of reasoning (for reviews, see Dube et al., 2010; Evans, 2002; Klauer et al., 2000). Consider the following syllogism (Markovits & Nantel, 1989):

All flowers have petals.

All roses have petals.

Therefore, all roses are flowers.

This syllogism is logically invalid, as the conclusion (i.e., the sentence beginning with “Therefore”) does not necessarily follow from the two premises, assuming the premises are true (i.e., the conclusion is possible, but not necessary). However, the fact that this syllogism’s conclusion states something consistent with real-world knowledge leads many individuals to endorse it as logically valid. More generally, syllogisms with believable conclusions are more often endorsed than structurally identical syllogisms that include unbelievable conclusions instead (e.g., “no roses are flowers”). At the heart of the belief bias effect is the interplay between individuals’ attempts to rely on the rules of logic and their general tendency to incorporate prior beliefs into their judgments and inferences (e.g., Bransford & Johnson, 1972; Cherubini et al., 1998; Schyns & Oliva, 1999). Although a reliance on prior belief is believed to be desirable and adaptive in many circumstances (Skyrms, 2000), it can be detrimental in cases where the goal is to assess the form of the arguments (e.g., in a court of law). Moreover, beliefs are often misguided and logical reasoning is necessary to determine if and when this is the case.

These detriments are likely to be far reaching in our lives, as highlighted by early work focusing on the social-psychological implications of belief bias (e.g., Feather, 1964; Kaufmann & Goldstein, 1967). Batson (1975), for example, found that presenting evidence that contradicts stated religious belief sometimes increases the intensity of belief. Motivated reasoning effects of this sort have been reported in hundreds of studies (Kunda, 1990), including, appropriately, on the Wason selection task (Dawson et al., 2002). Indeed, one of the foundational observations in the reasoning literature is the tendency for people to confirm hypotheses rather than disconfirm them (Wason, 1960, 1968; Wason and Evans, 1974), often referred to as confirmation bias (Nickerson, 1998) or attitude polarization (Lord et al., 1979). What makes belief bias notable is that, unlike in studies of motivated reasoning or attitude polarization, the beliefs that bias syllogistic reasoning are not of particular import to the reasoner (such as the “all roses are flowers” example above). Moreover, syllogistic reasoning offers a very clear logical standard by which to contrast the effect of belief bias. Thus, in a certain sense, developing a good account of belief bias in reasoning is foundational to understanding motivated reasoning and attitude polarization.

Theoretical accounts of belief bias

In the last three decades, several theories have been proposed to describe how exactly beliefs interact with reasoning processes (e.g., Dube et al., 2010; Evans et al., 1983, 2001; Klauer et al., 2000; Markovits & Nantel, 1989; Newstead et al., 1992; Oakhill & Johnson-Laird, 1985; Quayle & Ball, 2000). For example, according to the selective scrutiny account (Evans et al., 1983), individuals uncritically accept arguments with a believable conclusion, but reason more thoroughly when conclusions are unbelievable. In contrast, proponents of a misinterpreted necessity account (Evans et al., 1983; Markovits & Nantel, 1989; Newstead et al., 1992) argue that believability only plays a role after individuals have reached conclusions that are consistent with, but not necessitated by, the premises (as in the example above).

Alternatively, mental-model theory (Johnson-Laird, 1983; Oakhill & Johnson-Laird, 1985) proposes that individuals evaluate syllogisms by generating mental representations that incorporate the premises. When the conclusion is consistent with one of these representations, the syllogism tends to be perceived as valid. However, when the conclusion is seen as unbelievable, the individual is assumed to engage in the creation of alternative mental representations that attempt to refute the conclusion (i.e., counterexamples). Only when a model is found wherein the (unbelievable) conclusion is consistent with these alternative representations, is the syllogism perceived to be valid.

Another account, transitive-chain theory (Guyote & Sternberg, 1981) proposes that reasoners encode set-subset relations between the terms of the syllogism inspired by the order in which said terms are encountered when reading the syllogism. These mental representations are then combined according to a set of matching rules with different degrees of exhaustiveness. The theory predicts that unbelievable contents add an additional burden to this information processing, leading to worse performance compared to syllogisms with believable contents.

Yet another account, selective processing theory (Evans et al., 2001), proposes that individuals use a conclusion-to-premises reasoning strategy. Participants are assumed to first evaluate the believability of the conclusion, after which they conduct a search for additional evidence. Believable conclusions trigger a search for confirmatory evidence, whereas unbelievable conclusions induce a disconfirmatory search. For valid problems the conclusion is consistent with all possible representations of the premises, so believability will not have a large effect on reasoning. By contrast, for indeterminately invalid problems a representation which is inconsistent with the premises can typically be found with a disconfirmatory search, leading to increased logical reasoning accuracy for unbelievable problems. Most recently, the model has been extended to predict that individual differences in thinking ability mediate these effects, such that more able thinkers are more likely to be influenced by their prior beliefs (Stupple et al., 2011; Trippas et al., 2013).

This brief description does not exhaust the many theoretical accounts proposed in the literature, each of them postulating distinct relationships between reasoning processes and prior beliefs (e.g., Newstead et al., 1992; Quayle & Ball, 2000; Polk & Newell, 1995; Thompson et al., 2003; for reviews see Dube et al., 2010; Klauer et al., 2000). However, irrespective of the precise interplay between beliefs and reasoning processes, a constant feature of these theories is that the ability to discriminate between logically valid and invalid syllogisms is predicted to be higher when conclusions are unbelievable (although the opposite prediction has also been made by transitive-chain theory). In sum, virtually all theories propose that beliefs have some effect on reasoning ability, the latter having been operationalized in terms of the ability to discriminate between valid and invalid syllogisms. In this manuscript we test if believability affects discriminability using a mathematical model based on signal detection theory. Before describing this model in detail, it is important to consider the motivation behind this quite prevalent assumption.

The experimental design most commonly used in modern studies on the belief bias was popularized by the seminal work of Evans et al., (1983). They used a \(2\times 2\) design that orthogonally manipulated the logical status of syllogisms (Logic: valid vs. invalid syllogisms) along with the believability of the conclusion (Belief: believable vs. unbelievable syllogisms) while controlling for a number of potential confounds concerning the structure of syllogisms (e.g., figure and mood; for a review, see Khemlani & Johnson-Laird, 2012). Based on this Logic \(\times \) Belief experimental design, one can compare the endorsement rates (using binary response options “valid” and “invalid”) associated with the different levels of each factor. Table 1 provides a summary of this design.

The endorsement rates obtained with such a \(2\times 2\) design can be decomposed in terms of the contributions of logical validity (i.e., logic effect), conclusion believability (i.e., belief effect), and their interaction, as would be done with a linear model such as multiple regression. Taking Table 1 as an example, there is an effect of logical validity, with valid syllogisms being more strongly endorsed overall than their invalid counterparts ((.92 + .46)/2 − (.92 + .08)/2 > 0). There is also an effect of conclusion believability, as syllogisms with believable conclusions were endorsed at a much greater rate than syllogisms with unbelievable conclusions ((.92 + .92)/2 − (.46 + .08)/2 > 0). Finally, there is an interaction between validity and believability (Logic \(\times \) Belief interaction): the difference in endorsement rates between valid and invalid syllogisms is much smaller when conclusions are believable than when they are unbelievable ((.92 − .92) − (.46 − .08) = −.38). At face value, the negative interaction emerging from these differences suggests that individuals’ reasoning abilities are reduced when dealing with syllogisms involving believable conclusions (although the effect is typically interpreted the other way around, such that people reason better when syllogisms have unbelievable conclusions; e.g., Lord et al., 1979). Since Evans et al., (1983), the interaction found in Logic \(\times \) Belief experimental designs like the one illustrated in Table 1 is usually referred to as the interaction index.

Overall, these results suggest three things: First, that individuals can discriminate valid from invalid arguments, albeit imperfectly (i.e., individuals can engage in deductive reasoning). Second, that people are more likely to endorse syllogisms as valid if their conclusions are believable (i.e., consistent with real-world knowledge) than if they are not. Third, that people are more likely to discriminate between logically valid and invalid conclusions when those conclusions are unbelievable. In contrast with the main effects of logical validity and believability, which are not particularly surprising from a theoretical point of view (Evans and Stanovich, 2013), the Logic \(\times \) Belief interaction has been the focus of many research endeavors and is considered to be a basic datum that theories of the belief bias need to explain in order to be viable (Ball et al., 2006; Evans & Curtis-Holmes, 2005; Morley et al., 2004; Newstead et al., 1992; Quayle & Ball, 2000; Shynkaruk & Thompson, 2006; Stupple & Ball, 2008; Thompson et al., 2003; Roberts & Sykes, 2003).

Researchers’ reliance on the interaction index to gauge changes in reasoning abilities was the target of extensive criticisms by Klauer et al., (2000) and Dube et al., (2010). Both Klauer et al. and Dube et al. demonstrated that the linear-model-based approach used to derive the interaction index hinges on questionable assumptions regarding the way endorsement rates for valid and invalid syllogisms relate to each other. They argued that any analysis of the belief-bias effect rests upon some theoretical measurement model whose core assumptions need to be checked before any interpretation of the results can be safely made. Using extended experimental designs that go beyond the traditional Logic \(\times \) Belief design (e.g., introducing response-bias manipulations, payoff matrices, the use of confidence-rating scales) and including extensive model-validation tests, Klauer et al. and Dube et al. showed that the assumptions underlying the linear-model-based approach are incorrect, raising doubts about studies that take the interaction index as a direct measure of change in reasoning abilities. But whereas Klauer et al.’s results were still in line with the notion that conclusion believability affects the ability to discriminate between valid and invalid syllogisms, the work by Dube et al., (2010) argued that conclusion believability does not affect individuals’ discrimination abilities at all. Instead, their account suggests that conclusion believability affects only the general tendency towards endorsing syllogisms as valid (irrespective of their logical status). Dube et al.’s results are therefore at odds with most theories of deductive reasoning (but see Klauer & Kellen, 2011 and the response by Dube et al., 2012).Footnote 1

The results of Dube et al., (2010) can be interpreted as calling for the establishment of a new standard for methodological and statistical practices in the domain of syllogistic reasoning and deductive reasoning more generally (Heit and Rotello, 2014). Simply put, the use of flawed reasoning indices should be abandoned in favor of extended experimental designs that allow for the testing of the assumptions underlying the data analysis method. Specifically, their simulation and experimental results suggest moving from requesting binary judgments of validity to the use of experimental designs that request participants to report their judgments using a confidence-rating scale (e.g., a six-point scale from 1: very sure invalid to 6: very sure valid). These data can then be used to obtain receiver operating characteristic (ROC) functions and fit signal detection theory (SDT), a prominent measurement model in the literature that has been successfully applied in many domains (e.g., memory, perception; for introductions, see Green & Swets, 1966; Kellen & Klauer, 2018; Macmillan & Creelman, 2005). The parameter estimates provided by the SDT model can inform us on the exact nature of the observed differences in endorsement rates. Although experimental data from previous studies could potentially be reanalyzed with a version of SDT—known as the equal variance SDT model—which does not require confidence ratings, there is evidence from simulations suggesting that reliance on this simpler version of SDT would hardly represent an improvement over the interaction index (Heit & Rotello, 2014): a more extensive version of SDT—known as the unequal variance SDT model–appears to be necessary.Footnote 2

Taken at face value, the implications of Dube et al., (2010) work are severe and far-reaching, as they suggest that the majority of the work published in the last 30 years on belief bias must be conducted anew with extended experimental designs in order to determine whether the original findings can be validated with SDT (see also Rotello et al., 2015, for a similar suggestion in other psychological domains). However, there are legitimate concerns that Dube et al.’s results could have been distorted by their reliance on aggregated data. And if this is indeed the case, then it is possible that the implications are less severe. Aggregation overlooks the heterogeneity that is found among participants and stimuli. The problems associated with data aggregation, which have been long documented in the psychological literature (e.g., Estes, 1956; Estes & Todd Maddox, 2005; Judd et al., 2012), also hold for the case of ROC data (e.g., DeCarlo, 2011; Malmberg & Xu, 2006; Morey et al., 2008; Pratte & Rouder, 2011; Pratte et al., 2010). But to the best of our knowledge, these concerns have only been mentioned before in the context of the belief bias effect (e.g., Dube et al., 2010; Klauer et al., 2000), but have not been directly addressed. In order to address these concerns head on, we relied on a hierarchical Bayesian implementation of the unequal variance SDT model that takes into account differences across stimuli, participants, and studies. Using this model, we were able to conduct a meta-analysis over a confidence-rating ROC data corpus comprised of over 900 participants coming from 22 studies. To the best of our knowledge, this corpus contains the vast majority of published and unpublished research on belief bias in syllogistic reasoning for which confidence ratings were collected. The results obtained from this meta-analysis will allow us to answer the following questions:

-

Can the equal variance SDT model provide a sensible account of the data, dimissing the need for extended experimental designs?

-

Does the believability of conclusions affect people’s ability to discriminate between valid and invalid syllogisms?

In addition to these main questions, we will also briefly revisit the evidence for the role of individual differences in belief bias for a subset of the data for which this information is available. Our results discussed below show that the confidence-rating data are very much in line with the predictions made by the equal variance SDT model which can be applied without the availability of confidence ratings, suggesting that previously published belief bias studies can be reanalyzed using a probit or logit regression. The results also suggest that despite the heterogeneity found among participants and stimuli, the believability of conclusions does not generally affect people’s ability to discriminate between valid and invalid syllogisms when considered across the entire corpus, partially confirming (Dube et al., 2010) original account. However, a closer inspection using individual covariates suggest a relationship between people’s reasoning abilities and the way they are affected by beliefs, as suggested by Trippas et al. (2013, 2014, 2015). Altogether, these results suggest that syllogistic reasoning should be analyzed using hierarchical statistical methods together with additional individual covariates. In contrast, the routine collection of confidence ratings with the aim of modeling data, while certainly a possibility, is by no means necessary.

The remainder of this manuscript is organized as follows: First, we will review some of the problems associated with traditional analyses of the belief-bias effect based on a linear model, followed by an introduction to SDT and the analysis of ROC data. We then turn to the risks associated with the aggregation of heterogeneous data across participants and stimuli and how they can be sidestepped through the use of hierarchical Bayesian methods. In addition to the meta-analysis, we report a series of validation checks that corroborate our findings. Next, we present data from a new experiment using a K-alternative forced choice task which corroborates the main conclusion from our meta-analysis. Finally, we discuss potential future applications for the data-analytic methods used here and theoretical implications for belief bias.

Implicit linear-model assumptions and SDT-based criticisms

In order to understand the problems associated with the linear-model approach, it is necessary to describe in greater detail how it provides a linear decomposition of the observed endorsement rates in terms of simple effects and interactions. The probability of an endorsement (responding “valid”) in a typical \(2\times 2\) experimental design with factors of Logic (L: invalid = -1; valid = 1) and Belief (B: unbelievable = -1, believable = 1) is given by:

where parameters \(\beta _{0}\), \(\beta _{L}\), \(\beta _{B}\), and \(\beta _{LB}\) denote, in order, the intercept (i.e., the grand mean propensity to endorse syllogisms), the main effects of Logic and Belief (βL and \(\beta _{B}\) actually only represent \(\frac {1}{2}\) times the main effects), and the interaction between the latter two (LB = L × B). It is assumed that there is a linear relationship between a latent construct, which we will refer to as “reasoning ability”, and the effects of Logic and Belief (Evans et al., 1983; Evans and Curtis-Holmes, 2005; Newstead et al., 1992; Roberts & Sykes, 2003; Stupple & Ball, 2008).

A first problem with this linear-model approach is the fact that it does not respect the nature of the data it attempts to characterize. The parameters can take on any values, enabling predictions that are outside of the unit interval in which proportions are represented. Another concern relates with the way that the indices/parameters are typically interpreted, in particular the interaction index \(\beta _{LB}\). Specifically, negative interactions like the one described in Table 1 do not necessarily imply a diminished reasoning ability but may simply reflect the existence of a non-linear relationship between this latent construct and the factors of the experimental design (see Wagenmakers et al., 2012). This point was made by Dube et al., (2010), who highlighted the fact that the relationship between the latent reasoning ability and the factors of the experimental design can be assessed by means of receiver operating characteristic (ROC) functions. In the case of syllogistic reasoning, ROCs plot the endorsement rates of invalid syllogisms (false alarm rate; FAR) on the x-axis, and the endorsement rates of valid syllogisms (hit rate; HR) on the y-axis (e.g., Fig. 1).

Left Panel: Examples of ROCs predicted by the linear model. Center Panel: Illustration of how differences in response bias and discriminability in the linear model are expressed in terms of ROCs. Right Panel: Example of data that according to the linear model imply differences in discriminability for believable and unbelievable syllogisms, but could be described in terms of different response biases if the predicted ROC was curvilinear

First, consider an experimental design without believability manipulation in which only the syllogisms’ logical validity is manipulated. According to the linear model described in Eq. 1, predicted hit and false-alarm rates are given by:

It is easy to see that the hit rate and false-alarm rate are related in a linear fashion. Consider for example an observation with \(\beta _{0} = .5\) and \(\beta _{L} = .25\). This results in a FAR = .25 and HR = .75 (i.e., a logic effect of .5), denoted A in Fig. 1 (left panel). Now consider we increase the mean endorsement rate, but not the effect of validity, by .15 such that \(\beta _{0} = .65\) and \(\beta _{L} = .25\). This results in a FAR = .4 and HR = .9, denoted B. Next, consider we manipulate the effect of validity, but not the mean endorsement rate, in reference to point A such that \(\beta _{0} = .5\) and \(\beta _{L} = .1\). This gives us FAR = .4 and HR = .6 denoted as C. Finally, we manipulate both the mean endorsement and the effect of validity in reference to point A such that \(\beta _{0} = .65\) and \(\beta _{L} = .1\). This gives us FAR = .55 and HR = .75 denoted D. As can be easily seen, A and B are connected by a linear ROC with unit slope (i.e., slope = 1) and ROC-intercept equal to the main effect of logic, \(2\beta _{L}\) = .5. Likewise, C and D are connected by a linear ROC with unit slope and ROC-intercept \(2\beta _{L}\) = .2. This allows two simple conclusions (see center panel of Fig. 1): (1) Manipulations that affect the discriminability between valid and invalid syllogism affect the ROC-intercept and create different ROC lines. (2) All data points resulting from manipulations that affect the average endorsement rate, but not the ability to discriminate between valid and invalid syllogism, lie on the same linear ROC with unit slope. Manipulations within one item class (e.g., believable syllogisms) that leave the discriminability unaffected are also called response bias.

Now consider a full experimental design with in which both validity and believability are manipulated. This gives us the following linear model:

From these equations it is easy to see that in the absence of an interaction (i.e., \(\beta _{LB} = 0\)) all data points would fall on the same unit-slope ROC. The only change for each pair of FAR and HR for each believability condition is that the same value is either subtracted (for unbelievable syllogisms) or added (for believable syllogisms). And adding a constant to both x- and y-coordinates only moves a point along a unit slope. In contrast, what the interaction does is to alter the Logic effect for each believability condition; it creates separate ROCs. For example, negative values of \(\beta _{LB}\) increase the Logic effect for unbelievable syllogisms and decrease the Logic effect for believable syllogisms. Hence, if \(\beta _{LB} \neq 0\) the two believability conditions would fall on two separate ROCs, with the ROC for unbelievable syllogisms above the one for believable syllogisms for negative values of \(\beta _{LB}\).

The assumption that ROCs are linear (with slope 1) is questionable, given that the ROCs obtained across a wide range of domains tend to show a curvilinear shape (Green and Swets, 1966; Dube & Rotello, 2012); but see Kellen et al., (2013). The possibility of ROCs being curvilinear is problematic for the linear model given that it can misinterpret differences in response bias as differences in discriminability. For example, in the right panel of Fig. 1 we illustrate a case in which the discriminability for believable syllogisms is found to be lower than for unbelievable syllogisms (negative interaction index \(\beta _{I}\)), despite the fact that according to SDT (dashed curve) the observed ROC points can be understood as differing in terms of response bias alone. Moreover, potentially curvilinear ROC shapes are theoretically relevant given that they are considered a signature prediction of signal detection theory (SDT).

Signal detection theory

According to the SDT model, the validity of syllogisms is represented on a continuous latent-strength axis, which in the present context we will simply refer to as argument strength (Dube et al., 2010). The argument strength of a given syllogism can be seen as the output of a participant’s reasoning processes (e.g., Chater & Oaksford, 1999; Oaksford & Chater, 2007). A syllogism is endorsed as valid whenever its argument strength is larger than a response criterion \(\tau \). When the syllogism’s argument strength is smaller than the response criterion, the syllogism is deemed as invalid. This response criterion is assumed to reflect an individual’s general bias towards endorsement: more lenient individuals will place the response criterion at lower argument-strength values than individuals who tend to be quite conservative in their endorsements. Different criteria have consequences for the amount of correct and incorrect judgments that are made: for example, conservative criteria lead to less false alarms than their liberal counterparts but also lead to less hits.

A common assumption in SDT modeling is that the argument strengths of valid and invalid syllogisms can be described by Gaussian distributions with some mean \(\mu \) and standard deviation \(\sigma \). These distributions reflect the expectation and variability in argument strength that is associated with valid and invalid syllogisms. The farther apart these two distributions are—that is, the smaller their overlap—the better individuals are in discriminating between valid and invalid syllogisms. Figure 2 (top panel) illustrates a pair of evidence strength distributions associated with valid and invalid syllogisms and a response criterion.

Illustration of the Gaussian SDT model. The top panel shows argument strength distributions for valid and invalid syllogisms (and their respective parameters) in the case of binary choices. The bottom panel illustrates the same model in the case of responses in a six-point confidence-rating scale (for clarity, some parameters and labels are omitted here)

From the postulates of SDT, it follows that the probabilities of endorsing valid (V) and invalid (I) syllogisms correspond to the areas of the two distributions that are to the right side of the response criterion \(\tau \). Formally,

where \(\mu _{I}\) and \(\sigma _{I}\) correspond to the mean and standard deviation of the distribution for invalid syllogisms, and \(\mu _{V}\) and \(\sigma _{V}\) are their counterparts for valid syllogisms. The function \({\Phi }(\cdot )\) corresponds to the cumulative distribution function of the standard Gaussian distribution, which translates values from a latent argument-strength scale (with support across the real line) onto a probability scale between 0 and 1. This translation ensures that the model predictions are in line with the nature of the data they attempt to characterize.

The lower-left panel of Fig. 3 show how the differences in the position of the response criteria are expressed in terms of hits and false alarms. Note that the illustration of the latent distributions postulated by SDT in Fig. 2 does not specify the origin nor the unit of the latent argument–strength axis. In order to establish both the origin and unit, it is necessary to fix some of the model’s parameters. It is customary to fix the standard deviation \(\sigma _{I}\) to 1 and the mean \(\mu _{I}\) to either 0 or \(-\mu _{V}\). When these restrictions are imposed, one can simply focus on the parameters for valid syllogisms, \(\mu _{V}\) and \(\sigma _{V}\) (but alternative restrictions are possible, one of which will be used later on). It is important to note that these scaling restrictions do not affect the performance of the model in any way. The overall ability to discriminate between valid and invalid syllogisms can be summarized by an adjusted distance measure \(d_{a}\) (Simpson and Fitter, 1973):

Illustration of SDT predictions. The top row illustrates how ROC predictions change across values of μV (with μI = 0 and σI = 1). The middle row illustrates how ROC predictions change across values of σV. The bottom-left panel shows how hits and false alarms change due changes in the response criterion. The bottom-right panel illustrates how confidence-rating ROCs (4-point scale) are based on the cumulative probabilities associated with the different confidence responses

If one would assume the parametrization in which \(\mu _{I} = -\mu _{V}\) and \(\sigma _{I} = 1\), the similarity between Eqs. 2 and 3 of the linear model and Eqs. 8 and 9 of the SDT model becomes obvious. Response criterion \(\tau \) plays the same role as the intercept \(\beta _{0}\), in that both determine the endorsement rate for invalid syllogisms. Meanwhile, the mean \(\mu _{V}\) plays the role of \(\beta _{L}\) by capturing the effect of Logic (L)— i.e., a reflection of reasoning aptitude, with a value of 0 suggesting an inability to discriminate between valid and invalid arguments. From this standpoint, the differences between the linear model and SDT models essentially boil down to the latter assuming a parameter \(\sigma _{V}\) that modulates how the response-criterion \(\tau \) affects the hit rate, and the use of the non-linear function \({\Phi }(\cdot )\) which translates the latent argument-strength values into manifest response probabilities (DeCarlo, 1998) and maps the real line onto the probability scale. Although these differences may seem minor or even pedantic, they are highly consequential, as they ultimately lead both models to yield rather distinct interpretations of the same data (see the right panel of Fig. 1). Figure 3 shows ROCs generated by the SDT model under different parameter values: as the ability to discriminate between valid and invalid syllogisms increases (e.g., \(\mu _{V}\) increases), so does the area under the ROC. Moreover, parameter \(\sigma _{V}\) affects the symmetry/asymmetry of the ROC relative to the negative diagonal, with ROCs only being symmetrical when \(\sigma _{V} = \sigma _{I}\). Note that all these ROCs are curvilinear, in contrast with the unit-slope linear ROCs predicted by the ANOVA model (compare with the left panel of Fig. 1).

Dube et al., (2010) showed that the linear model can produce an inaccurate account of the data simply due to the mismatch between the model’s predictions and the observed ROC data. Specifically, if the ROCs are indeed curved as predicted by SDT, then the linear model is likely to incorrectly interpret these data as evidence for a difference in discrimination. This difference in discrimination would be captured by a statistically significant interaction index. For example, consider the right panel of Fig. 1, which illustrates a case where the hit and false-alarm rates observed across believability conditions all fall on the same curved ROC, a pattern indicating that these conditions only differ in terms of the response bias being imposed (i.e., these rates reflect the same ability to discriminate between valid and invalid syllogisms): the linear model cannot capture both ROC points in the same unit-slope line, which yields the erroneous conclusion that there is a difference in the level of valid/invalid discrimination for believable and unbelievable syllogisms (a difference captured by the interaction index \(\beta _{I}\)). Note that this erroneous conclusion does not vanish by simply collecting more data—in fact, additional data will only reinforce the conclusion, an aspect that can lead researchers to a false sense of reassurance. Rotello et al., (2015) discussed how researchers tend to be less critical of the interpretation of their measurements when they are replicated on a regular basis. Given that negative interaction indices are regularly found in syllogistic-reasoning studies, very few researchers have considered evaluating the measurement model that underlies this index (the exceptions are Dube et al., 2010; Klauer et al., 2000).

In order to assess the shape of syllogistic-reasoning ROCs and compare them with the predictions coming from the linear and SDT models, Dube et al., (2010) relied on an extended experimental design in which confidence-rating judgments were also collected. In the SDT framework, confidence ratings can be modeled via a set of ordered response criteria (for details, see Green & Swets, 1966; Kellen & Klauer, 2018). For instance, according to SDT, in the case of a six-point scale ranging from “1: very sure invalid” to “6: very sure valid”, the probability of a confidence rating \(1 \leq k \leq 6\) can be obtained by establishing five response criteria \(\tau _{k}\), with \(\tau _{k-1} \leq \tau _{k}\) for all \( 2 \leq k \leq 6\), as illustrated in the lower panel of Fig. 2:

Cumulative confidence probabilities can then be used to construct confidence-rating ROCs (for an example, see the bottom-right panel of Fig. 3). Dube et al.’s (2010) confidence-rating ROCs were found to be curvilinear, closely following the SDT model’s predictions. Moreover, Dube et al. showed that the belief-bias effect did not affect discriminability, in contrast with the large body of work based on the interaction index that attributed such an effect to differences in discriminability. Figure 4 provides a graphical depiction of this result, with both ROCs for believable and unbelievable syllogisms following a single monotonic curve. Overall, it turned out that the degree of overlap between the distributions for valid and invalid syllogisms was not affected by the believability of the conclusions. Moreover, Dube et al. showed that the linear-model-based approach tends to misattribute the belief-bias effect to individuals’ ability to discriminate between syllogisms, simply due to its failure to accurately describe the shape of the ROC. These issues were further fleshed out by Heit and Rotello (2014) in a series of simulations and experimental studies showing that different measures that do not hinge on ROC data tend to systematically mischaracterize the differences found between believable and unbelievable syllogisms.

ROC data from Dube et al. (2010, Exp. 2)

SDT’s point measure d’: A more efficient, equally valid approach?

Due to a general reliance on the interaction index, there is a real possibility that much of the literature on the belief bias effect is founded on an improper interpretation of an empirical finding. Ideally, this situation could be easily resolved by simply reanalyzing the existing binary data obtained from the commonly used Logic \(\times \) Belief paradigm with an alternative SDT model that could provide parameter estimates with such data, to see if the conclusions regarding reasoning ability hold up. The equal-variance SDT (EVSDT) model, which fixes \(\sigma _{V}\) and \(\sigma _{I}\) to be equal to the same value seems like an ideal candidate in this respect as it is able to estimate discrimination (μV) directly from a single pair of hit and false-alarm rates. This discrimination estimate is widely known in the literature as \(d^{\prime }\) (Green & Swets, 1966). When, without loss of generality, we fix \(\mu _{I} = 0\) and \(\sigma _{V} =\sigma _{I} = 1\):

where \({\Phi }^{-1}(\cdot )\) is the inverse of the Gaussian cumulative distribution function.

One important aspect of the EVSDT model is that it is formally equivalent to probit regression, with Logic and Belief factors (and their interaction):

A key difference between the linear model previously discussed (see Eq. 1) and probit regression is that the latter includes a link function \({\Phi } (\cdot )\) that maps the linear model onto a 0-1 probability scale (DeCarlo, 1998). If this simplified model is deemed appropriate, then one could keep relying on a logic \(\times \) belief interaction index to assess the impact of beliefs on reasoning abilities.Footnote 3

Like SDT, the simpler EVSDT model also predicts curvilinear ROCs, however they are all constrained to be symmetrical with respect to the negative diagonal. This additional constraint raises questions regarding the suitability of EVSDT: do the EVSDT’s predictions match the ROC data? And if not to which extent does this mismatch affect the characterization of the belief-bias effect? In other domains such as recognition memory and perception, ROCs have been found to be asymmetrical, with \(\sigma _{V} > \sigma _{I}\) (see Dube & Rotello, 2012; Starns et al., 2012). When applied to these asymmetric ROCs, \(d^{\prime }\) provides distorted results, with discriminability being overestimated in the presence of stricter response criteria, and underestimated for more lenient criteria (for an overview, see Verde et al., 2006). Similar results have been found in the case of syllogistic reasoning, with asymmetrical ROCs speaking strongly against the EVSDT model. Dube et al., (2010) found the restriction \(\sigma _{V} = \sigma _{I}\) to yield predictions that systematically mismatch the ROC data.

These shortcomings were corroborated in a more comprehensive evaluation by Heit and Rotello (2014). They reported a simulation showing that, if anything, the use of \(d^{\prime }\) only amounts to a small improvement over the interaction index. Specifically, data were generated via a bootstrap procedure and discrimination for syllogisms with believable and unbelievable conclusions were assessed with \(d^{\prime }\) and the interaction index. Both measures were found to be strongly correlated and very often reached the same incorrect conclusion. The only difference was that \(d^{\prime }\) led to incorrect conclusions slightly less often than the interaction index. Overall, the use of the EVSDT model and its measure \(d^{\prime }\) does not seem to constitute a reasonable solution for the study of the belief-bias effect. These results suggest that researchers need to rely on extended designs (e.g., confidence ratings) whenever possible (Heit & Rotello, 2014, p. 90). But as it will be shown below, the dismissal of the EVSDT model and \(d^{\prime }\) is far from definitive. In fact, it is entirely possible that this dismissal is the byproduct of an unjustified reliance on ROC data that aggregate responses across heterogeneous participants and stimuli.

The problem of aggregating data from heterogeneous sources

One of the challenges experimental psychologists regularly face is the sparseness of data at the level of individuals as well as stimuli. Typically, one can only get a small number of responses from each participant, only have a small set of stimuli available, and can only obtain one response per participant-stimulus pairing. In the end, only very little data is left to work with. A typical solution to this sparseness problem consists of aggregating data across stimuli or participants. Although previous work has shown that although data aggregation is not without merits (Cohen et al., 2008), its use implies the assumption that that there are no differences between participants nor stimuli. In the presence of heterogeneous participants and stimuli, this assumption can lead to a host of undesirable effects. One classic demonstration of the risks of data aggregation in the social sciences is Condorcet’s Paradox (Condorcet, 1785), which demonstrates how preferences (e.g., between political candidates) aggregated across individuals might not reflect properties that hold for any individual. In this specific case, it is shown that aggregated preferences often violate a fundamental property of rational preferences known as transitivity (e.g., if option A is preferred to B, and option B is preferred to C, then option A is preferred to C), even though all of the aggregated individual preferences were actually transitive (for a discussion, see Regenwetter et al., 2011).

In the case of traditional data-analytic methods such as linear models, the aggregation of data coming from heterogeneous participants and stimuli often leads to distorted results and severely inflated type I errors. These distortions can also compromise the replication and generalization of findings (for an overview, see Judd et al., 2012). Other approaches which do not rely on aggregation, for instance analyzing the data for each participant individually prior to summarizing them, is also not ideal given that this approach may seriously inflate the probability of type 2 errors due to the data sparseness. The problems associated with data aggregation and pure individual-level analysis have led to a growing reliance on statistical methods that do not rely exclusively on either, but a compromise between both, effectively establishing a new standard in terms of data analysis (e.g., Baayen et al., 2008; Barr et al., 2013; Snijders & Bosker, 2012). Some of these methods have been adopted in recent work on probabilistic and causal reasoning (e.g., Haigh et al., 2013; Rottman & Hastie, 2016; Singmann et al., 2016, 2014; Skovgaard-Olsen et al., 2016), but these methods have not been applied to the study of the measurement assumptions underlying belief bias. For example, for a very long time it was established in the literature that the effects of practice in cognitive and motor skills were better characterized by a power function than by an exponential function (Newell et al., 1981). However, this finding was based on functions aggregated across participants. Later simulation work showed that when agregated across participants, exponential practice functions were better accounted for by a power function (Anderson & Tweney, 1997; Heathcote et al., 2000). In an analysis involving data from almost 500 participants, Heathcote et al. showed that non-aggregated data were better described by an exponential function, a result that demonstrates how a reliance on aggregate data can lead researchers astray for several decades. Another example can be found in the domain of cognitive neuroscience, where it is common practice to aggregate across multiple participants’ fMRI-data. In contrast to the prevailing assumption in the field, individual patterns of brain activity are not exclusively driven by external or measurement noise, but are potentially linked to systematic inter-individual differences in strategy use (Miller et al., 2002).

Aggregating across heterogeneous stimuli

Let us now describe some of the distortions that could be caused by the unaccounted presence of heterogeneous participants and stimuli (for similar scenarios, see Morey et al., 2008; Pratte et al., 2010): First, consider the judgments from a single individual who was requested to evaluate a list of valid and invalid syllogisms. Let us assume that these judgments are perfectly in line with the SDT model. Furthermore, assume that among the valid syllogisms, half were easy, \(\mu _{V,\text {easy}}= 3\), and the other half were hard, \(\mu _{V,\text {hard}}= 1\) (with \(\mu _{I}= 0\)). Moreover, assume that all argument-strength distributions have the same standard deviation, with \(\sigma _{V,\text {easy}} = \sigma _{V,\text {hard}} = \sigma _{I} = 1\). These distributions are illustrated on the left panel of Fig. 5.

When the researcher must aggregate across easy and hard syllogisms because they cannot be differentiated a priori, one would hope to obtain parameter estimates that are in line with the average of the distributions’ parameters, namely \(\mu _{V}= 2\) and \(\sigma _{V}= 1\). Note that this average would respect the fact that all distributions have the same variances, yielding symmetric ROCs. Unfortunately, the parameter estimates one obtains from aggregating across stimuli does not produce such a result. Instead, the parameter estimates obtained underestimate \(\mu _{V}\) and inflate \(\sigma _{V}\). The problem here is that the average of both distributions will have a greater standard deviation than the average of \(\sigma _{V,\text {easy}}\) and \(\sigma _{V,\text {hard}}\). In this particular example, data aggregation led to an asymmetric ROC (see the center panel of Fig. 5) with estimates \(\mu _{V}= 1.88\) and \(\sigma _{V} = 1.32\).Footnote 4 Based on these estimates, a researcher would erroneously conclude that ROCs are asymmetric and that one is required to estimate \(\sigma _{V}\) (perhaps using a confidence-rating task) in order to accurately characterize the data. To make matters worse these distortions are asymptotic in the sense that they would not vanish by simply having more data. On the contrary, they only reinforce the distorted results. These results show that a scenario in which the rejection of EVSDT is driven by the use of heterogeneous stimuli is far from unlikely, given that there is substantial variability in the propensity to accept different syllogistic structures all classified as similarly complex (Evans et al.,, 1999). The presence of such asymptotic distortions is particularly troubling given that it can lead researchers to dismiss a large body of work in favor of new studies involving extended experimental designs.

Aggregating across heterogeneous participants

We now turn to two examples involving the aggregation of judgments coming from two heterogeneous participants A and B. The first example is formally equivalent to the one just described in the subsection above (i.e., the left and center panels of Fig. 5 serve to illustrate it as well). Assume that participant A shows worse discriminability than (μV,A = 1) than participant B (μV,B = 3), with everything else being equal (again, \(\mu _{0} = 1\), and \(\sigma _{V,\text {easy}} = \sigma _{V,\text {hard}} = \sigma _{I} = 1\)). Note that both participants’ ROCs are symmetrical. As in the case of heterogeneous stimuli, the aggregation of the data from these two individuals would lead to an asymmetric ROC and an inflated estimate of \(\sigma _{V}\) (again, 1.32). In this scenario, the fact that one participant performs better than the other one is enough to distort the overall shape of the ROC. Once again, this possibility is far from unexpected in light of the fact that individual differences in reasoning ability are commonly found (Stanovich, 1999; Trippas et al., 2015).

The second example concerns differences in response bias, which can also produce distortions: For example, let us imagine two participants that have the same ability to discriminate between valid and invalid syllogisms, \(\mu _{V}= 1\), but differ in terms of their response biases. Specifically, let us assume that participant A relies on a conservative criterion \(\tau = 1.5\) (i.e., is less likely to endorse syllogisms), whereas participant B relies on the more lenient criterion \(\tau = 0\) (i.e., is more likely to endorse syllogisms). The hit and false-alarm rate pairs for these two participants are (.31, .07) and (.84,.50), respectively. The pair obtained when aggregating both pairs, (.57, .28), is associated with \(\mu _{V}\)= .76, a value that is smaller than any individual’s discriminability. As shown in the right panel of Fig. 5, the concavity of the ROC function implies that the average of any two hit and false-alarm pairs coming from a single function (i.e., with the same discriminability) will always result in a pair that falls below that function. When evaluating a single experimental condition (e.g., syllogisms with believable conclusions), the distortions caused by aggregating heterogeneous participants can lead to an underestimation of discriminability.

Such underestimation of discriminability is especially pernicious when different experimental conditions are used (e.g., syllogisms with believable versus unbelievable conclusions, or reasoning under a fixed-time limit versus self-paced conditions, etc), as it can create spurious differences or mask real ones. For example, individuals might be better at discriminating syllogisms with believable conclusions than their unbelievable counterparts (cf., Guyote & Sternberg, 1981). But if the inter-individual variability in terms of the adopted response criteria is larger in the former than in the latter, then the resulting underestimation can mask the differences in discriminability. Alternatively, if discriminability is the same across two conditions, differences in terms of the inter-individual variability of response criteria can introduce spurious differences in the estimates obtained with the aggregate data. It is possible that some inconsistencies found in the literature (e.g., Dube et al., 2010; Klauer et al., 2000) are driven by this. For instance, Trippas et al., (2013), who also employed the SDT model, observed no effect of believability on discriminability only for participants of lower cognitive ability, with higher ability reasoners showing a more typical effect of beliefs on accuracy. This suggests that treating all participants as equivalent is perhaps not the best assumption.

A hierarchical Bayesian meta-analytic approach

Fortunately, the problems associated with aggregation can be avoided by relying on hierarchical methods that take the heterogeneity at the participant and stimulus levels—logical structures in our case—into account (e.g., Baayen et al., 2008; Barr et al., 2013; Snijders & Bosker, 2012). Specifically, both participants and stimuli considered in the analyses are assumed to be random samples from higher group-level distributions, whose parameters are also estimated from the data. Note that when facing multiple studies, one can conceptualize each study as a random sample from a distribution of studies. Usually, each of these higher group-level distributions are assumed to follow a Gaussian distribution with some mean and variance. In the case of participant-level differences, the mean of this group-level distribution captures the average individual parameter value whereas the variance expresses the variability observed across participants. An analogous interpretation holds for the group-level distributions from which stimuli are assumed to originate.

Our hierarchical extension of SDT was implemented in a Bayesian framework (Gelman et al., 2013; Carpenter et al., 2017). In a Bayesian framework, the information one has regarding the parameters is represented by probability distributions. We begin by establishing prior distributions that capture our current state of ignorance. These prior distributions are then updated in light of the data using Bayes’ theorem, resulting in posterior distributions that reflect a new state of knowledge (for an overview of hierarchical Bayesian approaches, see Lee & Wagenmakers, 2013; Rouder & Jun, 2005). The estimation of posterior parameter distributions can be conducted using Markov chain Monte Carlo methods (for an introduction, see Robert & Casella, 2009). In the present work, we employed Hamiltonian Monte Carlo (e.g., Monnahan et al., 2016 and relied on weakly informative or non-informative priors that imposed minimal constraints on the values taken on by the parameters. These prior constraints are quickly overrun by the information present in the data.

The information captured by the posterior parameter distributions can be conveniently summarized by their respective means and 95% (highest-density) credible intervals. Each interval corresponds to the (smallest) region of values that include the true parameter value with probability .95. Moreover, the overall quality of a model can be checked by comparing the observed data with the predictions based on the model’s posterior parameter distributions (Gelman and Shalizi, 2013). If the observed data deviate substantially from the predictions then one can conclude that the model is failing to provide an adequate characterization.

Hierarchical extension of signal-detection model

The contributions of participant and individual differences can be conveniently characterized in terms of a generalized linear model. For example, the probability that participant p will endorse a syllogism s could be described as

where \(\bar {\mu }\) denotes the grand mean. Parameter \(\xi _{p}\) corresponds to the p th participant’s displacement from that grand mean, whereas \(\eta _{s}\) corresponds to the s th stimulus’s displacement. Displacements \(\xi _{p}\) and \(\eta _{s}\) are both assumed to come from zero-centered group-level distributions (for a similar approach, see Rouder et al., 2008). Based on this linear decomposition of participant and stimulus effects, the estimate of the overall probability of syllogisms being endorsed is given by \({\Phi }(\bar {\mu })\), the probability of participant p endorsing any syllogism corresponds to \({\Phi }(\bar {\mu } + \xi _{p})\), and the probability of syllogism s being endorsed by somebody is \({\Phi }(\bar {\mu } + \eta _{s})\).Footnote 5 A hierarchical approach provides a compromise between the assumption that all participants and stimuli are effectively the same (as done when aggregating data) and the assumption that all participants and stimuli are unique (as done when analyzing data for each participant individually). Specifically, the assumption that both participants and stimuli come from group-level distributions implies the presence of differences between the participants/stimuli, but also the existence of similarities that should not be overlooked. The estimation of individual and group-level parameters are informed by each other—a principle known as partial pooling—leading to parameter estimates that are more reliable than what would be obtained via independent estimation from individual datasets (e.g., Ahn et al., 2011; Katahira, 2016; for a discussion, see Scheibehenne and Pachur, 2015).

As previously discussed, the SDT model characterizes individuals’ responses in terms of latent strength distributions defined with means \(\mu \), standard deviations \(\sigma \), and response criteria \(\tau \). We will therefore introduce our hierarchical extension of SDT at the level of these parameters (Klauer, 2010; Rouder & Jun, 2005; Morey et al., 2008; Pratte & Rouder, 2011; Pratte et al., 2010). Because of the identifiability issues associated with SDT (see Footnote 3), we modeled believable and unbelievable syllogisms separately.

The probability of participant \(p_{h}\) in experimental study h endorsing an invalid syllogism \(s_{i}\) or valid syllogism \(s_{v}\) are given by:

Individual mean parameters \(\mu _{I,h,p_{h},s_{i}}\) and \(\mu _{V,h,p_{h},s_{i}}\) are established as a linear function of group-level means (\({\bar \mu }\)), and their respective experimental-study- (χ), participant- (ξ), and stimulus-level (η) deviations from those means:

Note the use of subscripts and superscripts (i.e., \(\xi ^{\mu _{I}}_{p_{h}}\) does not mean “\(\xi _{p_{h}}\) to the power of \(\mu _{I}\)”). For reference on the different parameters and sub/superscripts, see Table 2. Also, note that additional parameters could be easily added to the model, as is routinely done with predictor variables in multiple-regression models (we provide a demonstration of this in the general discussion). For example, Trippas et al. (2013, 2015) considered the relationship between individual differences variables such as cognitive ability and analytic cognitive style with SDT estimates of discriminability and response bias. The use of other predictor variables such as fMRI data have also been entertained (e.g., Roser et al., 2015).

A similar linear structure holds for the individual standard-deviation parameters \(\sigma _{I,h,p_{h},s_{i}}\) and \(\sigma _{V,h,p_{h},s_{i}}\), however it is implemented on a log scale:

where log() corresponds to the natural logarithm and exp() to the exponential function.

Meta-analytic model

In terms of the meta-analytic model we implemented a variant of what is known as a random-effects or random study-effects meta-analysis (Borenstein et al., 2010; Whitehead, 2003). Note that the usage of the term ’random-effects’ in this context slightly differs from the other usage in this manuscript and simply means that our model allowed each individual study to have its own idiosyncratic effect and that we did not assume that all study had exactly the same overall effect. For the participant-level deviations \(\xi ^{x}_{p_{h}}\) (where \(x \in \{ \mu _{I}, \mu _{V}, \sigma _{I}, \sigma _{V} \}\)) we assume they follow a normal distribution with mean 0 and study-specific variance \(\sigma ^{2}_{\xi ^{x},h}\),

where \(\mathcal {N}\) corresponds to the distribution function of the normal or Gaussian distribution.Footnote 6

For the study-specific deviations \({\chi ^{x}_{h}}\) we assumed the common random-effects meta-analytic model,

where \(\sigma ^{2}_{e,\xi ^{x},h}\) is the within-study error variance, \(\sigma ^{2}_{e,\xi ^{x},h} = \frac {\sigma ^{2}_{\xi ^{x},h}}{N_{h}}\) (where \(N_{h}\) is the number of participants in study h; \(\sigma _{e,\xi ^{x},h}\) is also known as the standard-error), and \(\upsilon ^{2}\) the between-study error variance.Footnote 7 As can be seen from the previous two equations, the main difference between our meta-analysis based on the individual trial-level data and a traditional meta-analysis is that in our case the within-study error variance is estimated in the same step as all other parameters and not treated as observed data.

For ease of presentation, the formulas in the previous paragraph present a slight simplification of our actual model. For all displacement parameters, \({\chi ^{x}_{h}}\) (study-specific), \(\xi ^{x}_{P_{h}}\) (participant-level), and \({\eta ^{x}_{s}}\) (stimulus/item-specific) we also estimated the correlation among the deviations across the different SDT parameters x. Thus, all displacements are actually assumed to come from a zero-centered multivariate Gaussian distributions with covariance matrices \(\mathbf {{\Sigma }}_{S}\), \(\mathbf {{\Sigma }}_{P}\), and \(\mathbf {{\Sigma }}_{I}\), respectively (Klauer, 2010). For the covariance matrices \(\mathbf {{\Sigma }}_{S}\) and \(\mathbf {{\Sigma }}_{P}\) the standard deviations are as described in the previous paragraph and we additionally estimated one correlation matrix for each covariance matrix. For \({\eta ^{x}_{s}}\) we estimated one standard deviation for each x and one correlation matrix. The complete model is presented in the Appendix. The covariance matrices capture different dependencies that could be potentially found across participants’ parameter estimates. For instance, the participant-level covariance matrix \(\mathbf {{\Sigma }}_{P}\) indicates how individual parameters, say \(\mu _{V}\) and \(\sigma _{V}\), covary across participants. The estimation of all these covariance matrices, which amount to a so-called “maximal random-effects structure” is strongly advised as it known to improve the generalizability and accuracy of the hierarchical model’s account of the data (Barr et al., 2013): Specifically, the hierarchical structure of the model’s parameters allows us to more safely make generalizations from our parameters of interest. For example, the group-level means (e.g., \({\bar \sigma }_{V}\)) summarize the information that we have about the individuals, after factoring out their differences. These parameters allow us then to make general inferences regarding the population, such as whether \(\sigma _{V}\) is systematically greater than \(\sigma _{I}\), as currently claimed in literature (Dube et al., 2010; Heit & Rotello, 2014).

The extension of this model to the case of a K-point confidence-rating paradigm follows exactly what is already described in Eqs. 11 and 12, with the specification of \(K-1\) ordered response criteria \(\tau _{h,p_{h},k}\) per participant. The use of a different set of criteria per participant allows the model to capture different response styles that people often manifest (Tourangeau et al., 2000). As previously mentioned, it is customary to fix the parameters of the invalid-syllogism distributions, but in the present case we decided to instead fix \(\tau _{h,p_{h},1}\) and \(\tau _{h,p_{h},{K-1}}\) to 0 and 1, respectively. This restriction, which does not affect the ability of the model to account for ROC data, nor the interpretation of the parameters, implies that the mean and standard deviation parameters from all argument-strength distributions are freely estimated (for a similar approach, see Morey et al., 2008). The motivation behind the use of this particular set of parameter restrictions was that it provided a more convenient specification of the different sets of participant-, stimulus-, and group-level parameters and at the same time allowed for identical prior distributions (see below) for the two standard deviations \(\sigma _{V}\) and \(\sigma _{I}\), which are of interest here. Furthermore, we assumed that the remaining three response criteria per individual participant, \(\tau _{h,p_{h},2}\) to \(\tau _{h,p_{h},{K-2}}\), were each drawn from a separate group-level Gaussian distribution and then transformed on the unit scale using the cumulative distribution function of the standard Gaussian distribution. The sampling was performed such that the three to-be-estimated criteria per individual participant were ordered.Footnote 8

In line with the literature (e.g., Dube et al., 2010; Trippas et al., 2013), we modeled the data for believable and unbelievable syllogisms separately using the same model. The reason for modeling these data separately is that SDT does not yield identifiable parameters (i.e., infinitely many sets of parameter values produce the exact same predictions; see Bamber & van Santen, 2000; Moran, 2016) when parameter restrictions are only applied on the parameters concerning one stimulus type (e.g., believable syllogisms) and everything else is left to be freely estimated (e.g., different response criteria for believable and unbelievable syllogisms). However, applying restrictions to each stimulus type while allowing criteria to vary freely between them is equivalent to fitting them separately (for detailed discussions; see Singmann, 2014; Wickens & Hirshman, 2000).

Meta-analysis of extant ROC data

Our analysis differs from regular meta-analyses (e.g., Borenstein et al., 2010) in two important ways. First, we obtained the raw (i.e., participant- and trial-level) data and performed our meta-analysis on this non-aggregated data. This has the benefit that all variability estimates are obtained directly from the data and not inferred from other statistical indices. Second, our meta-analysis is performed using a fully generative model; it allows us to use the obtained parameter estimates to generate new synthetic data from for any part of the data corpus (e.g., for individual participants or studies). The data corpus and modeling scripts are available at: https://osf.io/8dfyv/.

The hierarchical Bayesian SDT model established here was fitted to a data corpus comprised of 22 studies, for a total of 993 participants. To the best of our knowledge, these datasets consist of all published and non-published studies on belief bias including ROC data for which individual- and item-level information is available. In the included datasets, (1) three-term categorical syllogisms were used as stimuli, (2) confidence ratings were collected on each trial, (3) data was available on the trial-level, and (4) information about the syllogistic structures was available for each trial. Over 80% (18/22) of the included studies were previously published. All of these studies involved participants evaluating the validity of believable and unbelievable syllogisms using a six-point confidence scale. Table 3 provides a description of these studies. An important aspect of these datasets is that they involve judgments obtained across a wide range of experimental conditions, in term of stimuli, instructions, response deadlines, stimulus-presentation conditions, among others. This diversity is particularly important when attempting to establish the robustness of any phenomenon, as it ensures that it is not circumscribed to a narrow set of conditions.

In terms of stimulus differences, we considered the different forms that syllogisms can take on. A categorical syllogism is an argument which consists of three terms, denoted here by A, B, and C, which are combined in two premises to produce a conclusion. The two terms which are present in the conclusion, A and C, are referred to as the end terms. The term which is present in each premise is referred to as the middle term, is denoted B. For example, in the “rose syllogism” given earlier, A = roses, B = petals, C = flowers. The two premises and conclusion each include one of four quantifiers: Universal affirmative (A; e.g., All A are B), universal negative (E; e.g., No A are B), particular affirmative (I; Some A are B), and particular negative (O; e.g., Some A are not B). The logical validity of a syllogistic structure is defined by its mood, its figure, and the direction of the terms in the conclusion. The mood is a description of which quantifiers occur in the syllogism. For instance, if the premises and the conclusion are preceded by the quantifiers “All”, “Some”, and “No”, respectively, then the syllogism’s mood is AIE. Given that a syllogism consists of three statements and that there are four possible quantifiers for each statement, there are 64 possible moods. The figure denotes how the terms in the conclusion are ordered. There are four possible figures: 1: (A-B; B-C), 2: (B-A; C-B), 3: (A-B, C-B), 4: (B-A; B-C).Footnote 9 Finally, there are two possible conclusion directions: 1: (A-C) and 2: (C-A). Combining the 64 moods with the four figures and the two conclusion directions yields a total of 512 possible syllogisms, of which only 27 are logically valid (Evans et al., 1999). The combinations of form and figure in syllogisms can be conveniently coded by concatenating the two letters associated to the quantifiers of the premises, the number associated with the figure, the letter associated with the quantifier of the conclusion and the direction of the conclusion. The “rose syllogism” used earlier as an example would be coded as AA3_A2: both premises and conclusion start with the “All” quantifier, the syllogistic figure is 3, and the conclusion direction is 2—from C to A. A complete list of all the syllogistic figures used in the reanalyzed studies and their respective codes is included our supplemental material is hosted on the Open Science Framework (OSF). Specifically at: https://osf.io/8dfyv/.

Results

We begin by evaluating the ability of the hierarchical model to fit the data. Specifically, we will evaluate its sufficiency (whether the model fits) and necessity (whether there is heterogeneity in stimuli and participants). With regards to the sufficiency of this hierarchical account, we implemented a model check by comparing the model predictions based on the model’s posterior parameter distributions and comparing it to the observed data (e.g., Gelman & Shalizi, 2013). Although SDT models for confidence-rating data are relatively flexible (Klauer, 2015), they cannot predict all possible data patterns in ROC space. This check allowed us to assess whether the model was able to describe the observed data sufficiently well. In this particular case, we generated one set of predictions based on each of the individual posterior-parameter distributions and subsequently aggregated them in order to compare with the ROCs obtained with the aggregate data. As can be seen in Figs. 6 and 7, the predictions based on the model’s posterior-parameter distributions are very similar to the ROCs observed across studies. This similarity strongly suggests that the model provides an adequate characterization of the data.

Believable-syllogism ROCs observed in each of the reanalyzed studies (for details, see Table 2). Note that these ROCs are based on the aggregated data. The shaded regions correspond to the hierarchical SDT model’s predictions based on its posterior parameter estimates

Unbelievable-syllogism ROCs observed in each of the reanalyzed studies (for details, see Table 2). Note that these ROCs are based on the aggregated data. The shaded regions correspond to the hierarchical SDT model’s predictions based on its posterior parameter estimates

With regards to the necessity of a hierarchical account, we inspected the posterior estimates of the variability parameters of the participant- (\({\bar \sigma _{\xi ^{x}}}\)), stimulus- (\(\sigma _{{\eta ^{x}_{s}}}\)), and study-effects (υ) of the different SDT parameters. All of these variability parameters clearly deviated from zero (i.e., their 95% credible intervals do not include 0), indicating the presence of heterogeneity among participants, believable and unbelievable syllogisms, and studies. As discussed in detail by Smith and Batchelder (2008), the presence of such heterogeneity indicates the need for a hierarchical framework that does not rely on data aggregation.

The first question we posed was whether a simplified version of SDT could provide a sensible account of the data. As can be seen in Fig. 8, for both believable and unbelievable syllogisms, the posterior group-level estimates of \(\frac {\sigma _{V}}{\sigma _{I}}\) were very close to 1 and their associated 95% credible intervals include values both above and below 1. Also, the posteriors were concentrated in a small range of values (see the diamonds in Fig. 8), reflecting the diagnostic value of the present data in terms of assessing ROC asymmetry. Overall, these results suggest that EVSDT, a simplified SDT model assuming that \(\sigma _{V} = \sigma _{I}\), provides an adequate account of the data (Kruschke, 2015). Another way of framing this result is that data from almost 1000 participants were not sufficient to dismiss the EVSDT’s assumption that \(\sigma _{V} = \sigma _{I}\). One exception to this pattern is Study 2, corresponding to the simple-syllogism condition of Trippas et al. (2013, Exp. 1), for which the posterior \(\frac {\sigma _{V}}{\sigma _{I}}\) mean and 95% credibility interval are larger than 1. This result suggests that the ROC symmetry of the EVSDT model fails at extreme performance levels, as is the case for Study 2, where performance is close to ceiling.

Posterior estimates of group-level \(\frac {\sigma _{V}}{\sigma _{I}}\) observed in each study (squares; \(\bar {\mu } + \chi ^{\sigma }\)), and the posterior estimate obtained across studies (diamonds; \(\bar {\mu }\) alone). The bars and the width of the diamond correspond to the 95% credible intervals. The size of the squares reflects the width of the credible intervals

In order to quantify the general degree of support for the EVSDT obtained from the posterior \(\sigma _{V}\) and \(\sigma _{I}\) estimates, we computed Bayes factors (BF; Kass & Raftery, 1995) that quantified the evidence in favor of EVSDT versus an unconstrained SDT model. In this specific case, the constrained EVSDT model was represented by the null hypothesis \(\mathcal {H}_{0}\) stating that the group-level \(\frac {\sigma _{V}}{\sigma _{I}}\) can take a small range of values, between .99 and 1.01, and an encompassing alternative hypothesis \(\mathcal {H}_{A}\) that imposed no such constraint.Footnote 10 In typical settings, the use of Bayes Factors requires the computation of marginal likelihoods for (at least) two models, which can be quite challenging (but see Gronau et al., 2017). But in this specific case in which the hypotheses considered consist of nested ranges of admissible parameter values (specifically, the range of \(\frac {\sigma _{V}}{\sigma _{I}}\)), Bayes Factors can be easily computed. As shown by Klugkist and Hoijtink (2007), the Bayes Factor for the two nested hypothesis corresponds to ratio of probabilities: The posterior probability that \(.99 < \frac {\sigma _{V}}{\sigma _{I}} < 1.01\), and its prior counterpart. The obtained Bayes factors were 17.28 and 11.84 for believable and unbelievable syllogisms, which indicates that the posterior probability of \(\frac {\sigma _{V}}{\sigma _{I}}\) values very close to 1 were 17 and 11 times greater after observing the data than before. According to the classification suggested by Vandekerckhove et al., (2015), this indicates strong support for \(\mathcal {H}_{0}\).

Let us now turn to our second question, whether there is a difference in discriminability for believable and unbelievable syllogisms. The group-level posterior \(d_{a}\) estimates reported in Fig. 9 are virtually equivalent for believable and unbelievable syllogisms, with an almost complete overlap of their respective 95% credible intervals. This result indicates that the believability of conclusions does not have an impact on participants’ ability to discriminate between valid and invalid syllogisms, which is in line with Dube et al.’s (2010) findings. The present meta-analysis serves to dismiss any concerns that such a result could be due to aggregation biases or a handful of studies, and reiterates the challenge that it represents to the major theories proposed in the literature (Dube et al., 2010; Klauer et al., 2000). We quantified the strength of the evidence in favor of the null hypothesis that the differences in \(d_{a}\) between believable and unbelievable syllogisms should take on a small range of values around 0 (values between \(-\).01 and .01). We obtained a Bayes factor of 7.34, indicating substantial evidence in favor of \(\mathcal {H}_{0}\).

Forest plot with the posterior group-level estimates (and respective 95% credible intervals) of discriminability (da) for believable and unbelievable syllogisms. The size of the squares reflects the width of the credible intervals. The probability P (Unbel > Bel) corresponds to the posterior probability that the group-level da estimate for unbelievable syllogisms is larger than for believable syllogisms

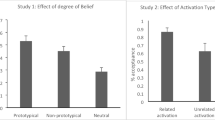

Figure 10 illustrates the posterior estimates of the stimulus-based differences (\({\eta ^{x}_{s}}\)) for believable and unbelievable syllogisms for the four SDT parameters for which we estimated stimulus effects.Footnote 11 Overall, most differences are very close to zero; only for some forms did we find noteworthy deviations. These results indicate that the impact of the stimuli on the parameter estimates is small for most argument forms. However, note that the stimuli considered in 21 out of 22 studies came from only 16 syllogistic forms.