Abstract

Attentional control is thought to play a critical role in determining the amount of information that can be stored in and retrieved from visual working memory (VWM). We tested whether and how task-irrelevant feature-based salience, known to affect the control of visual attention, affects VWM performance. Our results show that features of a task-irrelevant color singleton are more likely to be recalled from VWM than those of non-singleton items and that this increased memorability comes at a cost to the other items in the display. Furthermore, the singleton effect in VWM was negatively correlated with an individual’s baseline VWM capacity. Taken together, these results suggest that individual differences in VWM storage capacity may be partially attributable to the ability to ignore differences in task-irrelevant physical salience.

During the past two decades of research in cognitive neuroscience, there has been considerable interest in understanding the relationship between attention and working memory (Awh & Jonides, 2001; Postle, 2006; Chun, 2011; Kiyonaga & Egner, 2013). Such research has demonstrated that attentional control can determine what is remembered (Griffin & Nobre, 2003) and that the contents of memory can influence what is attended (Soto, Hodsoll, Rotshtein, & Humphreys, 2008; Sun, Shen, Shaw, Cant, & Ferber, 2015), indicating that these two cognitive faculties are indeed linked. The investigation of how attention contributes to memory representations has been especially pivotal in our understanding of individual differences in visual working memory (VWM) capacity (Engle, 2002; Vogel, McCollough, & Machizawa, 2005; McNab & Klingberg, 2008; Fukuda & Vogel, 2009; Fukuda, Woodman, & Vogel, 2015), where differences in the control of attention have been found to covary with differences in performance in visual working memory tasks. However, it is not clear how the control of attention could contribute to the amount of information encoded into VWM in canonical tasks where no filtering (the simultaneous enhancement of some items and suppression of others) is required (Luck & Vogel, 1997; Wilken & Ma, 2004). Using a VWM task without any filtering requirement, we show that differences in salience between stimuli, a factor well known to determine the distribution of attention, affect which items are more frequently recalled from VWM, and that an individual’s memory capacity predicts the degree to which their memory performance is susceptible to differences in physical salience.

We used feature singletons (Theeuwes, 1992), which are defined as stimuli that differ from concurrently viewed stimuli along a salient visual dimension (e.g., color). In the same way that target stimuli pop out from a display when they possess a unique salient feature, allowing for rapid target detection (Treisman & Gelade, 1980), a distractor that possesses a unique feature tends to attract attention in an automatic manner, slowing down processing of the target stimulus (Theeuwes, 1992), unless the appropriate task-set is adopted (Bacon & Egeth, 1994; Theeuwes, 2004; Belopolsky, Zwaan, Theeuwes, & Kramer, 2007). While standard tasks used to measure VWM capacity do not present singletons in memory sample arrays, items that are to be encoded vary in many visual features, leading to an imbalance in salience. Salience itself, of course, is typically task-irrelevant; participants are supposed to simply extract the feature values of the presented items for storage in memory. However, attentional research on singletons demonstrates that ignoring differences in task-irrelevant salience is nearly impossible when all stimuli must be sampled. In other words, given that task-irrelevant singletons reliably attract attention, it is reasonable to assume that singletons, when present, would be rapidly uploaded into VWM and may even be recalled more frequently from VWM than non-singletons. Moreover, any increase in the memorability of one item could lead to a reduction in the memorability of other items, such that a highly salient item (i.e., a singleton) is encoded at the expense of less salient items (i.e., the non-singleton items). Indeed, to the extent that task-irrelevant salience orients visual attention, singletons may increase memory for items in a similar manner to voluntary attention, directed saccades, and uninformative onsets (Bays & Husain, 2008; Bays et al., 2011; Schmidt et al., 2002). However, task-irrelevant singletons can be ignored successfully when attention is controlled using a top-down set (Bacon & Egeth, 1994; Leber & Egeth, 2006), meaning that salience might not always translate into VWM priority.

To test these two possibilities, we used task-irrelevant singletons to determine whether differences in salience contribute to capacity limitations in VWM compared with displays with homogenous objects. We predicted that in the former displays, singletons would show a memory gain when tested. We further compared the memory for non-singleton objects in these displays to a baseline condition (no singleton, but the same set size) to assess whether the predicted memory gain for the singleton would come at a cost to the non-singleton items. To ensure that we could disentangle differences between graded and discrete changes in VWM representation, participants completed a delayed estimation task (Wilken & Ma, 2004; Zhang & Luck, 2008; Bays, Catalao, & Husain, 2009) where memory error for orientation was measured and fit with a three-component model to obtain estimates of the contribution of different sources of memory error (precision, correct responses, swap responses, and guess responses).

Methods

Participants

Fifty-five undergraduate volunteers participated in this experiment for monetary compensation. All participants were naïve to the experimental hypotheses and provided informed consent before participation in accordance with procedures approved by the University of Toronto Research Ethics Board.

Materials, methods, and procedure

The experiment was conducted on a PC equipped with a standard USB mouse and keyboard and a 40-cm × 30-cm CRT monitor with a screen resolution of 1280 × 960 pixels and a refresh rate of 85 Hz. Stimuli were presented using MATLAB (MathWorks, Natick, MA) along with the Psychophysics Toolbox (Kleiner et al., 2007) and were viewed from a distance of 40 cm.

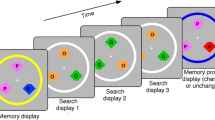

A schematic of the trial types is depicted in Fig. 1. Each session consisted of 5 practice trials and 512 experimental trials, divided into 8 blocks. A trial consisted of four events: an initial fixation display (for 1000 ms), a memory sample display (100 ms, to preclude eye movements), a retention interval (900 ms), and a probe display (until response). The fixation display consisted of a central fixation cross drawn in white in the form of a “+” in Courier New font at a text size of 18 points (approximately 0.5°), centered on a uniform gray background. Fixation was not monitored; however, participants were instructed to maintain fixation.

Schematic of the trial types used in the experiment. Memory samples consisted of four isosceles triangles with pseudo-randomized orientations that were to be remembered. For half of all trials, one triangle was colored in a unique color. After a retention interval, one of the four items was probed, and participants reported its previous orientation by adjusting the probe’s orientation. On Singleton Present trials, the singleton was just as likely to be tested as any of the non-singleton items.

The memory sample display consisted of four, pseudo-randomly positioned isosceles triangles equidistant from the fixation mark. Participants were to memorize the orientations of each triangle, which were randomized with the constraint that each orientation was a minimum of 30° from all other orientations. The triangles were 2.7° in height, with a base of 1.4°, and appeared 9° from fixation. To ensure that no occlusion occurred, triangles were separated by at least 4.5°, center-to-center. The memory sample display could also differ in the presence or absence of a feature singleton. On No Singleton trials, all four triangles shared the same color, which was randomly sampled from a circular list of L*a*b values, all of which shared a radial distance of 50 units from [70, 0, 0] in L*a*b space, where a and b values could vary, but the luminance (L) was held constant. On Singleton Present trials, one triangle was colored such that it was 90° away in L*a*b color space from the other triangles (either clockwise or counterclockwise) in the circular color list. The triangles were drawn with a 0.4° white border to enhance the contrast from the background.
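The stimulus constraints described above can be illustrated with a short Python sketch. This is not the authors' MATLAB code: the function names are hypothetical, the color circle is treated as continuous rather than as a discrete list, and rejection sampling is only one possible way to enforce the 30° minimum separation.

```python
import math
import random


def circ_dist(a, b):
    """Smallest angular distance (degrees) between two orientations."""
    d = abs(a - b) % 360.0
    return min(d, 360.0 - d)


def sample_orientations(n_items=4, min_sep=30.0, rng=random):
    """Rejection-sample orientations until all pairs are >= min_sep apart."""
    while True:
        angles = [rng.uniform(0.0, 360.0) for _ in range(n_items)]
        pairs_ok = all(
            circ_dist(a, b) >= min_sep
            for i, a in enumerate(angles)
            for b in angles[i + 1:]
        )
        if pairs_ok:
            return angles


def lab_on_circle(hue_deg, center=(70.0, 0.0, 0.0), radius=50.0):
    """L*a*b* triplet on a circle of fixed L*, centered on `center`."""
    lightness, a0, b0 = center
    t = math.radians(hue_deg)
    return (lightness, a0 + radius * math.cos(t), b0 + radius * math.sin(t))


def sample_colors(singleton_present, n_items=4, rng=random):
    """Return per-item L*a*b* colors and the singleton index (or None)."""
    base_hue = rng.uniform(0.0, 360.0)  # assumption: continuous color circle
    hues = [base_hue] * n_items
    singleton_index = None
    if singleton_present:
        singleton_index = rng.randrange(n_items)
        # Singleton is 90 degrees away, clockwise or counterclockwise.
        shift = rng.choice((-90.0, 90.0))
        hues[singleton_index] = (base_hue + shift) % 360.0
    return [lab_on_circle(h) for h in hues], singleton_index
```

Converting the resulting L*a*b* triplets to monitor RGB values would require a calibrated color transform, which is outside the scope of this sketch.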

The retention interval display was identical to the fixation display, except that it lasted for 900 ms, and was followed by a probe display. In this probe display, a single colored circular placeholder, with a radius of 1.3°, was presented in the location of one of the triangles from the sample display. The circular placeholder’s location and color matched one of the four memory sample triangles. Importantly, in the Singleton Present condition, this probe matched the singleton triangle with a frequency of one in four trials, so that there was no strategic incentive to encode the singleton item. Once the mouse cursor was moved away from the center of the screen, the probe was redrawn as a triangle whose orientation pointed towards the current location of the mouse cursor. Participants reported the orientation of the probed item by moving the mouse around the probe stimulus to perceptually match it to the remembered orientation of the probed item. To input a response, participants clicked the mouse. For practice trials only, 1000 ms of feedback was provided after each response, in the form of the triangle being redrawn in its original position.
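As a rough illustration of the response method, the orientation of the probe triangle can be derived from the mouse position with a two-argument arctangent. The sketch below is hypothetical: the function name, the pixel coordinate convention (y increasing downward), and the choice of 0° as rightward with counterclockwise-positive angles are all assumptions, not details reported in the text.

```python
import math


def orientation_toward(probe_xy, mouse_xy):
    """Angle (degrees, 0-360) from the probe center to the mouse cursor.

    Assumes screen coordinates in which y grows downward, so the vertical
    difference is flipped to make counterclockwise angles positive.
    """
    dx = mouse_xy[0] - probe_xy[0]
    dy = probe_xy[1] - mouse_xy[1]
    return math.degrees(math.atan2(dy, dx)) % 360.0
```

Under these assumptions, a cursor directly above the probe on screen gives an orientation of 90°.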

Results

For each trial, memory error was calculated by subtracting the reported angle of orientation (in degrees) from the actual angle of orientation for the probed object and taking the absolute value. The average error was 41.16°, and the standard error of the sample mean (SEM) was 2.36°.
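A minimal Python sketch of this error measure follows. It assumes that orientations span a full 360° circle and that differences are wrapped so the absolute error never exceeds 180°; the wrapping step and the function names are assumptions, since the text specifies only the subtraction and the absolute value.

```python
import math


def signed_error(reported, actual):
    """Actual minus reported orientation, wrapped to [-180, 180) degrees."""
    return (actual - reported + 180.0) % 360.0 - 180.0


def absolute_error(reported, actual):
    """Absolute angular error in degrees (0 to 180)."""
    return abs(signed_error(reported, actual))


def mean_and_sem(values):
    """Sample mean and standard error of the mean (n - 1 denominator)."""
    n = len(values)
    mean = sum(values) / n
    variance = sum((v - mean) ** 2 for v in values) / (n - 1)
    return mean, math.sqrt(variance / n)
```

Without the wrap, a report of 350° for a 10° target would count as a 340° error rather than a 20° error.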

To assess the effect of irrelevant color singletons on VWM, we calculated average absolute report error for three conditions: No Singleton present (NS), Singleton Present with a Non-singleton tested (SPN), and Singleton Present with a Singleton tested (SPS), shown in Fig. 2. A one-way, repeated-measures ANOVA with Condition (NS, SPN, SPS) as the within-subjects factor showed a main effect of Condition, F(2, 106) = 4.03, p = 0.02, η² = 0.07. Follow-up pairwise contrasts showed that SPS trials led to better memory performance than NS trials, F(1, 53) = 3.96, p = 0.05, η² = 0.07, and that SPN trials led to poorer memory performance than NS trials, F(1, 53) = 4.34, p = 0.04, η² = 0.08. Thus, irrelevant singletons received a boost in accuracy, and this increase in accuracy came at the expense of memory for non-singletons in the memory array.

Average absolute memory error, in degrees, for the three conditions. NS: No Singleton, SPN: Singleton Present; Non-singleton tested, SPS: Singleton Present; Singleton tested. Error bars represent one within-subjects standard deviation (Cousineau, 2005)

To determine whether the effects in absolute error were driven by a change in memory precision or by a change in the probability of remembering the target item (p(Correct)), we fitted signed response error scores in each condition using the three-component model of VWM (Bays, Catalao, & Husain, 2009). Briefly, this model uses maximum-likelihood estimation to decompose the overall distribution of response error into three different sources: correct responses (represented by a circular normal, i.e., von Mises, distribution centered on the target item’s value), swap responses (represented by a circular normal distribution centered on each of the non-target items’ values), and guess responses (represented by a uniform distribution, where every response is equally likely). The model provides three probability values, reflecting the likelihood of each type of response in the submitted dataset, as well as a measure of memory precision (the standard deviation of the target and non-target distributions). Note that because this estimation procedure uses all responses to estimate the parameters presumed to underlie memory performance, it does not classify individual responses as correct responses, swaps, or guesses; rather, the fitting algorithm searches parameter space for the parameter estimates that yield the best fit to the data.
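The structure of the three-component mixture can be sketched in Python as follows. This is an illustrative sketch rather than the fitting code used in the study: angles are assumed to be in radians, precision is parameterized by the von Mises concentration κ instead of a standard deviation, and all function names are hypothetical. A maximum-likelihood fit would minimize `neg_log_likelihood` over `p_target`, `p_swap`, and `kappa` with a general-purpose optimizer.

```python
import math


def bessel_i0(x):
    """Modified Bessel function of the first kind, order 0 (power series)."""
    total = term = 1.0
    k = 0
    while term > 1e-16 * total:
        k += 1
        term *= (x / 2.0) ** 2 / (k * k)
        total += term
    return total


def von_mises_pdf(x, kappa):
    """Von Mises density at angular offset x (radians) with mean 0."""
    return math.exp(kappa * math.cos(x)) / (2.0 * math.pi * bessel_i0(kappa))


def mixture_density(response, target, nontargets, p_target, p_swap, kappa):
    """Density of one response under the target + swap + guess mixture."""
    p_guess = 1.0 - p_target - p_swap
    density = p_target * von_mises_pdf(response - target, kappa)
    if nontargets:
        # Swap mass is split evenly across the non-target items.
        swap = sum(von_mises_pdf(response - nt, kappa) for nt in nontargets)
        density += p_swap * swap / len(nontargets)
    density += p_guess / (2.0 * math.pi)  # uniform guessing component
    return density


def neg_log_likelihood(trials, p_target, p_swap, kappa):
    """Sum of -log density over (response, target, nontargets) trials."""
    return -sum(
        math.log(mixture_density(r, t, nts, p_target, p_swap, kappa))
        for r, t, nts in trials
    )
```

Because every response contributes to the likelihood through all three components, no individual trial is ever labeled as a swap or a guess; only the mixture weights are estimated.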

Given that we observed an effect of singletons on overall memory error, we ran separate one-way, repeated-measures ANOVAs to specifically determine which memory parameters were impacted by the presence of a singleton. The results showed that p(Correct), the likelihood of retrieving the orientation of the probed item, however precisely, was modulated by the presence of a singleton, F(2, 106) = 5.82, p = 0.004, η² = 0.10, with p(Swap) showing a complementary modulation, F(2, 106) = 5.92, p = 0.004, η² = 0.10, but no other aspects of memory performance (precision, or guess responses) were affected, Fs(2, 106) < 1.02, ps > 0.36, η²s < 0.02. The probability of correctly reporting the tested item’s orientation was 0.56 in the NS condition (SE = 0.03), 0.55 in the SPN condition (SE = 0.03), and 0.59 (SE = 0.03) in the SPS condition. Follow-up contrasts showed that, as with absolute error, singletons were remembered more often than items in the NS condition, F(1, 53) = 5.95, p = 0.018, η² = 0.10, and non-singletons were remembered less often than NS items, F(1, 53) = 4.49, p = 0.039, η² = 0.08. Comparing overall performance on Singleton Present trials to NS trials, regardless of the tested item, showed a reliable difference, t(53) = 2.35, p = 0.023, such that Singleton Present trials exhibited more correct responses, M_SP = 0.58, SE_SP = 0.02, M_NS = 0.56, SE_NS = 0.03, which was driven by a decrease in swap responses, t(53) = 2.07, p = 0.02. Taken together, we conclude that salient items are less likely to be confused with other remembered items but are not remembered with greater precision.

Lastly, we noted that the size of this performance change, from a p(Correct) of 0.56 in the NS condition to a p(Correct) of 0.59 in the SPS condition, was modest. Given that attentional control is known to vary between low- and high-capacity individuals (Fukuda & Vogel, 2009), we assessed the size of the singleton effect (p(Correct) on SPS trials – p(Correct) on SPN trials) as a function of participants’ baseline VWM performance (p(Correct) on NS trials), shown in Fig. 3. A simple linear regression, using heteroscedasticity-consistent standard errors (Hayes & Cai, 2007), showed that 8% of the variance in p(Correct) change when a singleton appeared in the memory sample was shared with participants’ p(Correct) when stimuli were homogenous, β = −0.20, SE = 0.084, R² = 0.081, p = 0.02. Put differently, individuals with lower baseline VWM capacity were more susceptible to singleton capture. To determine the source of the memory change, we further regressed the change in the two types of memory failures (p(Swap) and p(Guess)) between the SPS and SPN conditions on participants’ baseline memory performance (p(Correct) in the NS condition; see Appendix for graphical depictions). The resulting regressions showed a marginal relationship between low VWM performance in NS trials and the likelihood of guessing the orientation of a non-singleton compared with a singleton on Singleton Present trials, β = 0.25, SE = 0.14, R² = 0.081, p = 0.08, and no relationship between baseline VWM performance and the probability of a swap error for singleton and non-singleton items, β = −0.04, SE = 0.073, R² = 0.007, p = 0.58. Thus, it appears that individual differences in the effect of a task-irrelevant singleton are better characterized as a bias to encode the singleton at the expense of non-singletons, as opposed to a change in the color-based grouping of items in VWM that could have led to increased swaps between non-singletons.
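For readers who want to see how such a regression can be computed, the following Python sketch fits a simple linear regression and reports a heteroscedasticity-consistent standard error for the slope. The text does not state which estimator was used; HC3 is assumed here because it is one of the estimators discussed by Hayes and Cai (2007). The function name is hypothetical, and `x` and `y` would correspond to baseline p(Correct) and the singleton effect, respectively.

```python
import math


def hc3_simple_regression(x, y):
    """OLS slope, intercept, HC3 slope standard error, and R-squared.

    Requires at least three distinct x values so that no leverage equals 1.
    """
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean

    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    # Leverage of each observation in a one-predictor model with intercept.
    leverage = [1.0 / n + (xi - x_mean) ** 2 / sxx for xi in x]

    # HC3 sandwich variance for the slope: squared residuals are inflated
    # by 1 / (1 - h_i)^2 before being weighted by the slope's influence.
    meat = sum(
        (xi - x_mean) ** 2 * (e / (1.0 - h)) ** 2
        for xi, e, h in zip(x, residuals, leverage)
    )
    slope_se = math.sqrt(meat) / sxx

    sst = sum((yi - y_mean) ** 2 for yi in y)
    sse = sum(e ** 2 for e in residuals)
    r_squared = 1.0 - sse / sst
    return slope, intercept, slope_se, r_squared
```

The slope divided by its standard error gives the test statistic, which is why a slope of about −0.20 with a standard error of 0.084 is consistent with p ≈ 0.02.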

Discussion

We examined the contribution of visual salience to the temporary storage of visual information. When a unique item appeared in a to-be-remembered display, this item was more likely to be recalled, at the expense of non-unique items. Decomposing performance into different sources of memory error (i.e., Precision, Swap errors, and Guess errors) revealed that singletons were more often discretely remembered than non-singletons but not remembered with greater precision. Critically, this effect existed in the absence of any incentive to remember the salient item; its unique color was completely task-irrelevant. Additionally, we have shown that individuals with lower baseline VWM capacity, as measured by performance on trials with no singleton (NS), are more susceptible to task-irrelevant salience. Our results are consistent with existing models that include attentional priority as a factor determining encoding into VWM (Bundesen, 1990; Bowman & Wyble, 2007). The effects of task-irrelevant visual salience can have cascading implications beyond perception, influencing what can be recalled from VWM.

A number of studies have shown that attention can determine what information will be stored in VWM. For instance, providing cues as to which object is likely to be tested will increase its odds of surviving the capacity limits of VWM at the expense of memory for other objects both before (Bays & Husain, 2008; Bays et al., 2011; Zhang & Luck, 2008) and after (Griffin & Nobre, 2003; Zhang & Luck, 2008; Sligte, Scholte, & Lamme, 2008) encoding. While this demonstrates an ability to strategically allocate VWM resources, investigations of individual differences have shown that the allocation of VWM resources is not always optimal. This conclusion is largely drawn from performance in tasks where some, but not all, items in a display must be encoded into VWM. In these tasks, participants who perform poorly in standard VWM tasks also tend to perform poorly in filtering conditions (Vogel, McCollough, & Machizawa, 2005; Fukuda & Vogel, 2009).

Very few studies, however, have investigated whether differences in attentional control can account for variability in the ability to store information in VWM when no filtering is necessary. A recent exception is the work of Fukuda, Woodman, and Vogel (2015), who have argued that the decreased ability to control attention at encoding contributes to the poor performance at high set sizes. Specifically, when more items are presented than can be successfully encoded, the competition between multiple items interferes with the successful encoding of items, thus implicating attentional control as a factor in VWM capacity even when all items are equally relevant. Our results extend this argument in two important ways. First, by controlling the task-irrelevant salience of to-be-remembered items, we have shown that differences in salience between items can cause VWM resources to be unevenly allocated within a set of task-relevant items. Furthermore, salient items are more likely to be encoded for those with lower capacity. Second, our results show that capacity does not need to be exceeded by much before attentional control becomes a limiting factor in performance; our experiment used a set size of 4, typically used as a baseline from which the effect of exceeding capacity is measured (Fukuda, Woodman, & Vogel, 2015; Pailian & Halberda, 2015).

The effect of singletons on visual search has been attributed largely to the preattentive stage of vision, such that it reliably affects search behavior only when target identification is driven by a global analysis of the search display (Theeuwes, 2004; Belopolsky et al., 2007). Coupling this conclusion with the results of the present experiment, we suggest that differences in salience reduce the ability to equally prioritize all items in memory. Given that the change detection and delayed estimation tasks normally used to assess VWM test memory for individuated items, it would be sensible to encode and store items as separate pieces of information, each with equal priority (unless some items are tested more than others). This is not to say that participants should not selectively encode items, but any selection should be task-relevant. Individuals with low VWM capacity appear to be more strongly affected by task-irrelevant stimulus differences; in our task, color was task-irrelevant and thus did not carry any predictive value pertaining to the information that would be important. This is consistent with Fukuda and Vogel’s (2011) findings that individuals with low capacity have difficulties ignoring irrelevant items that share a feature with a to-be-detected target. Together, these results point to the conclusion that those who perform poorly on VWM tasks have difficulty restricting attention to task-relevant information, whether that requires segregating items by color (Vogel, McCollough, & Machizawa, 2005) or ignoring irrelevant color differences, as in the current study.

The present results further highlight the importance of balancing the salience of to-be-remembered items when measuring individual differences in VWM capacity. Although it is assumed that all items in a memory array will be equally attended when no strategic incentive is provided towards any given stimulus, our results indicate that this assumption should be revised. Differences in physical salience between items are associated with an uneven distribution of attention to items in a display, and these differences will more strongly affect those who tend to perform more poorly in VWM tasks. Although laboratory tasks for measuring VWM capacity tend to use simple, geometric stimuli, even low-level differences can affect subsequent memory; uniqueness in location improves VWM encoding (Emrich & Ferber, 2012), and color homogeneity improves change detection (Lin & Luck, 2009). Both results are consistent with the notion that differences in salience are able to create an uneven distribution of VWM resources. Given the numerous attributes that are argued to reflexively attract attention (e.g., emotional valence: Yiend, 2010; reward history: Anderson, Laurent, & Yantis, 2011; bottom-up priming: Theeuwes, Reimann, & Mortier, 2006), assessing the relationship between salience, broadly construed, and memorability is likely to be an important step in understanding how visual working memory supports cognition and action in real-world contexts.

References

Anderson, B. A., Laurent, P. A., & Yantis, S. (2011). Value-driven attentional capture. Proceedings of the National Academy of Sciences, 108(25), 10367–10371.

Awh, E., & Jonides, J. (2001). Overlapping mechanisms of attention and spatial working memory. Trends in Cognitive Sciences, 5(3), 119–126.

Bacon, W. F., & Egeth, H. E. (1994). Overriding stimulus-driven attentional capture. Perception & Psychophysics, 55(5), 485–496.

Bays, P. M., Catalao, R. F., & Husain, M. (2009). The precision of visual working memory is set by allocation of a shared resource. Journal of Vision, 9(10), 1–11.

Bays, P. M., Gorgoraptis, N., Wee, N., Marshall, L., & Husain, M. (2011). Temporal dynamics of encoding, storage, and reallocation of visual working memory. Journal of Vision, 11(10), 6.

Bays, P. M., & Husain, M. (2008). Dynamic shifts of limited working memory resources in human vision. Science, 321(5890), 851–854.

Belopolsky, A. V., Zwaan, L., Theeuwes, J., & Kramer, A. F. (2007). The size of an attentional window modulates attentional capture by color singletons. Psychonomic Bulletin & Review, 14(5), 934–938.

Bowman, H., & Wyble, B. (2007). The simultaneous type, serial token model of temporal attention and working memory. Psychological Review, 114(1), 38–70.

Bundesen, C. (1990). A theory of visual attention. Psychological Review, 97(4), 523–547.

Chun, M. M. (2011). Visual working memory as visual attention sustained internally over time. Neuropsychologia, 49(6), 1407–1409.

Cousineau, D. (2005). Confidence intervals in within-subject designs: A simpler solution to Loftus and Masson’s method. Tutorials in Quantitative Methods for Psychology, 1(1), 42–45.

Emrich, S. M., & Ferber, S. (2012). Competition increases binding errors in visual working memory. Journal of Vision, 12(4), 1–16.

Engle, R. W. (2002). Working memory capacity as executive attention. Current Directions in Psychological Science, 11(1), 19–23.

Fukuda, K., & Vogel, E. K. (2009). Human variation in overriding attentional capture. The Journal of Neuroscience, 29(27), 8726–8733.

Fukuda, K., & Vogel, E. K. (2011). Individual differences in recovery time from attentional capture. Psychological Science, 22(3), 361–368.

Fukuda, K., Woodman, G. F., & Vogel, E. K. (2015). Individual differences in visual working memory capacity: Contributions of attentional control to storage. In P. Jolicoeur, C. Lefebvre, & J. Martinez-Trujillo (Eds.), Mechanisms of sensory working memory: Attention and performance XXV. London: Elsevier.

Griffin, I. C., & Nobre, A. C. (2003). Orienting attention to locations in internal representations. Journal of Cognitive Neuroscience, 15(8), 1176–1194.

Hayes, A. F., & Cai, L. (2007). Using heteroscedasticity-consistent standard error estimators in OLS regression: An introduction and software implementation. Behavior Research Methods, 39(4), 709–722.

Kiyonaga, A., & Egner, T. (2013). Working memory as internal attention: Toward an integrative account of internal and external selection processes. Psychonomic Bulletin & Review, 20(2), 228–242.

Kleiner, M., Brainard, D., Pelli, D., Ingling, A., Murray, R., & Broussard, C. (2007). What’s new in psychtoolbox-3. Perception, 36(14), 1.

Leber, A. B., & Egeth, H. E. (2006). It’s under control: Top-down search strategies can override attentional capture. Psychonomic Bulletin & Review, 13(1), 132–138.

Lin, P. H., & Luck, S. J. (2009). The influence of similarity on visual working memory representations. Visual Cognition, 17(3), 356–372.

Luck, S. J., & Vogel, E. K. (1997). The capacity of visual working memory for features and conjunctions. Nature, 390(6657), 279–281.

McNab, F., & Klingberg, T. (2008). Prefrontal cortex and basal ganglia control access to working memory. Nature Neuroscience, 11(1), 103–107.

Pailian, H., & Halberda, J. (2015). The reliability and internal consistency of one-shot and flicker change detection for measuring individual differences in visual working memory capacity. Memory & Cognition, 43(3), 397–420.

Postle, B. R. (2006). Working memory as an emergent property of the mind and brain. Neuroscience, 139(1), 23–38.

Schmidt, B. K., Vogel, E. K., Woodman, G. F., & Luck, S. J. (2002). Voluntary and automatic attentional control of visual working memory. Perception & Psychophysics, 64(5), 754–763.

Sligte, I. G., Scholte, H. S., & Lamme, V. A. (2008). Are there multiple visual short-term memory stores? PLoS One, 3(2), e1699.

Soto, D., Hodsoll, J., Rotshtein, P., & Humphreys, G. W. (2008). Automatic guidance of attention from working memory. Trends in Cognitive Sciences, 12(9), 342–348.

Sun, S. Z., Shen, J., Shaw, M., Cant, J. S., & Ferber, S. (2015). Automatic capture of attention by conceptually generated working memory templates. Attention, Perception, & Psychophysics, 77(6), 1841–1847.

Theeuwes, J. (1992). Perceptual selectivity for color and form. Perception & Psychophysics, 51(6), 599–606.

Theeuwes, J. (2004). Top-down search strategies cannot override attentional capture. Psychonomic Bulletin & Review, 11(1), 65–70.

Theeuwes, J., Reimann, B., & Mortier, K. (2006). Visual search for featural singletons: No top-down modulations, only bottom-up priming. Visual Cognition, 14(4-8), 466–489.

Treisman, A. M., & Gelade, G. (1980). A feature-integration theory of attention. Cognitive Psychology, 12(1), 97–136.

Vogel, E. K., McCollough, A. W., & Machizawa, M. G. (2005). Neural measures reveal individual differences in controlling access to working memory. Nature, 438(7067), 500–503.

Wilken, P., & Ma, W. J. (2004). A detection theory account of change detection. Journal of Vision, 4(12), 1120–1135.

Yiend, J. (2010). The effects of emotion on attention: A review of attentional processing of emotional information. Cognition and Emotion, 24(1), 3–47.

Zhang, W., & Luck, S. J. (2008). Discrete fixed-resolution representations in visual working memory. Nature, 453(7192), 233–235.

Author Note

This research was supported by NSERC Discovery grants to Jay Pratt (194537) and Susanne Ferber (216203-13), an OGS Scholarship to Sol Z. Sun, and an NSERC Scholarship (PGS-D) to Jason Rajsic. The authors thank Alexander Fung and Nafisa Bhuiyan for their help with data collection.

Appendix: Individual differences figures

Individual performance as a function of baseline memory performance: p(Correct). a p(Correct) for trials with a singleton present, regardless of the tested item. b p(Correct) for trials with a singleton present, with singleton test and non-singleton test performance separated. c p(Guess) for trials with a singleton present, with test-types separated. d p(Swap) for trials with a singleton present, with test-types separated

Cite this article

Rajsic, J., Sun, S.Z., Huxtable, L. et al. Pop-out and pop-in: Visual working memory advantages for unique items. Psychon Bull Rev 23, 1787–1793 (2016). https://doi.org/10.3758/s13423-016-1034-5

DOI: https://doi.org/10.3758/s13423-016-1034-5