Abstract

The medial prefrontal cortex (mPFC) and the core region of the nucleus accumbens (AcbC) are key regions of a neural system that subserves risk-based decision making. Here, we examined whether dopamine (DA) signals conveyed to the mPFC and AcbC are critical for risk-based decision making. Rats with 6-hydroxydopamine or vehicle infusions into the mPFC or AcbC were tested in an instrumental task demanding probabilistic choice. In each session, the probabilities of reward delivery after pressing one of two available levers were signaled in advance on forced trials, which were followed by choice trials that assessed the animal’s preference. The probability of reward delivery associated with the large/risky lever declined systematically across four consecutive blocks but was kept constant across the four daily sessions within a block. Thus, in a given session, rats needed to assess the current value associated with the large/risky versus the small/certain lever and adapt their lever preference accordingly. Relative to sham controls, rats with mPFC or AcbC DA depletions showed no alteration in either the assessment of within-session reward probabilities or probability discounting across blocks. These findings suggest that the capacity to evaluate the magnitude and likelihood of rewards associated with alternative courses of action does not appear to rely on intact DA transmission in the mPFC or AcbC.



Introduction

For optimal decision making in an uncertain and changing environment, animals often need to evaluate the expected costs and benefits of the available response options—for example, the magnitude and likelihood of rewards associated with alternative courses of action. Considerable evidence suggests that animals as diverse as fish, birds, and mammals are able to estimate the probability (“risk”) of obtaining different rewards when making decisions as to which course of action to choose (Kacelnik & Bateson, 1997). Furthermore, when offered a choice between response options with small/certain and large/risky rewards, many animals, including humans, prefer the small/certain response option and, therefore, display risk aversion (Kacelnik & Bateson, 1997; Stephens & Krebs, 1986).

Recent studies suggest that the medial prefrontal cortex (mPFC) and the nucleus accumbens (Acb) are key structures of a neural system that subserves risk-based decision making, with risk referring to choice situations with known distributions of possible rewards associated with the available response options. For instance, in rats tested in probabilistic choice tasks, transient or permanent inactivation of the mPFC and Acb, but not of the anterior cingulate cortex and orbitofrontal cortex, altered the preferences for large/risky reward relative to small/certain reward (Cardinal & Howes, 2005; St Onge & Floresco, 2010; Stopper & Floresco, 2011). Furthermore, a growing body of evidence suggests that brain dopamine (DA) systems are involved in different forms of cost/benefit-related decision making (Cousins, Atherton, Turner, & Salamone, 1996; Gan, Walton, & Phillips, 2010; Mai, Sommer, & Hauber, 2012; Ostlund & Maidment, 2012; Phillips, Walton, & Jhou, 2007; Schweimer & Hauber, 2006; Schweimer, Saft, & Hauber, 2005), including risk-based decision making (Day, Jones, Wightman, & Carelli, 2010; Simon et al., 2011; St Onge, Abhari, & Floresco, 2011; St Onge, Chiu, & Floresco, 2010; Sugam, Day, Wightman, & Carelli, 2012; Zeeb, Robbins, & Winstanley, 2009). Recent electrophysiological studies demonstrated that midbrain DA neurons are activated by stimuli predicting risky rewards and generate a neuronal signal that varies monotonically with risk (Fiorillo, Tobler, & Schultz, 2003). Moreover, drug-induced manipulations of DA activity influence risk-based decision making. For instance, alcohol can alter risk-based decision making by compromising DA signaling of risk (Nasrallah et al., 2011). Furthermore, systemic D1- and/or D2-receptor blockade in rats markedly reduced the preference for large/risky rewards, suggesting that reduced brain DA transmission can decrease risky choice, possibly due to increased probability discounting (St Onge & Floresco, 2009). 
However, little is known about the contribution of DA signals in target regions of midbrain DA neurons in controlling risk-based decision making. Recent studies demonstrated that prefrontal DA receptors contribute in a dissociable manner to risk-based decision making; that is, an intra-mPFC D1-receptor blockade reduced risky choice, whereas an intra-mPFC D2-receptor blockade increased risky choice (St Onge et al., 2011). Furthermore, phasic DA release in the core subregion of the nucleus accumbens (AcbC) encodes the subjective value of upcoming rewards, a signal that may influence decisions to take risks (Sugam et al., 2012). Here, we sought to characterize the role of DA in risk-based decision making in more detail and examined in rats the effects of DA depletions in the mPFC (Experiment 1) and AcbC (Experiment 2) in a probabilistic choice task.

Method

Experiment 1

Subjects

Naive male Lister hooded rats (Harlan Winkelmann, Borchen, Germany) weighing between 200 and 224 g upon arrival were used. They were housed in groups of up to 4 animals in transparent plastic cages (55 × 39 × 27 cm; Ferplast, Nuernberg, Germany) on a 12:12-h light:dark cycle (lights on at 7:00 a.m.) with ad lib access to water. Standard laboratory chow (Altromin, Lage, Germany) was given ad lib for at least 5 days after arrival. Thereafter, food was restricted to 15 g per animal per day to maintain the rats at approximately 85 % of their free-feeding weight. For environmental enrichment, a plastic tube (20 cm long, Ø 12 cm) was fixed to the lid of each cage. Temperature (22 ± 2 °C) and humidity (50 % ± 10 %) were kept constant in the animal house. All animal experiments were performed according to the German Law on Animal Protection and were approved by the proper authorities.

Apparatus

Training and testing took place in identical operant chambers (24 × 21 × 30 cm; Med Associates, St. Albans, VT) housed within sound-attenuating cubicles. An electric fan integrated into each cubicle provided constant background noise. One wall of each operant chamber contained a central food magazine into which casein pellets (45-mg dustless precision pellets; Bioserv, Frenchtown, NJ) were delivered by a pellet dispenser. The food magazine was equipped with an infrared head-entry detector, and a stimulus light was installed above it. Two retractable levers were located on either side of the food magazine. A houselight mounted at the top center of the opposite wall illuminated the chamber. A computer system running MED-PC software (Med Associates) controlled the equipment and recorded the data.

Habituation and leverpress training

First, all rats were habituated to the operant chamber on two consecutive days in daily 15-min sessions with free access to 25 pellets in the food magazine. The chamber was illuminated by the houselight and stimulus light. Thereafter, rats received a 30-min session with pellet delivery on a variable interval schedule (average interval, 60 s; minimum interval, 10 s; maximum interval, 110 s); each pellet delivery was paired with illumination of the stimulus light. After habituation, animals were trained for 2 days in daily 15-min sessions to leverpress on a fixed ratio 1 (FR-1) schedule, first on one lever and then on the other lever. Each lever was extended for the entire session, and the houselight was always illuminated.
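The variable-interval (VI) magazine-training session described above can be sketched as follows. This is a minimal illustration, not the MED-PC program used in the study: the text reports only the mean (60 s), minimum (10 s), and maximum (110 s) intervals, so the evenly spaced interval list (10, 20, ..., 110 s, whose mean is 60 s) and the function name `vi_intervals` are assumptions.

```python
import random


def vi_intervals(session_s=1800, lo=10, hi=110, step=10, seed=None):
    """Hypothetical VI-60 s schedule for a 30-min (1800-s) session.

    Assumption: intervals are drawn without replacement from the evenly
    spaced list lo, lo + step, ..., hi (mean 60 s with the defaults), and the
    list is reshuffled after every full pass. Returns the inter-pellet
    intervals that fit into the session.
    """
    rng = random.Random(seed)
    base = list(range(lo, hi + 1, step))  # 10, 20, ..., 110 s
    intervals, elapsed = [], 0
    while True:
        pool = base[:]
        rng.shuffle(pool)
        for interval in pool:
            if elapsed + interval > session_s:
                return intervals  # next pellet would fall after the session ends
            intervals.append(interval)
            elapsed += interval


schedule = vi_intervals(seed=1)
print(len(schedule), sum(schedule))
```

With these defaults a session delivers roughly 1800 / 60 = 30 pellets; sampling without replacement within each pass keeps the realized mean interval close to the nominal 60 s.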

Subsequently, rats were trained on a simplified version of the full task for 3 days, with one session per day. Each of the 48 training trials per session started with illumination of the houselight and stimulus light, with the levers retracted. A nose-poke response into the food magazine within 10 s extinguished the stimulus light and extended one of the levers. Each lever was presented on 24 trials in pseudorandom order. If the rat pressed the lever within 10 s, the lever retracted and a single pellet was delivered. Then the houselight was extinguished, and the 35-s intertrial interval (ITI) began. Failure to respond within 10 s, either by initiating the trial with a nose-poke response or by leverpressing, resulted in a 35-s time-out period, during which all lights were extinguished and the levers retracted. These trials were recorded as omissions. Rats were removed from the chamber once all trials were completed or after 45 min had elapsed. After leverpress training, 1 animal was excluded due to a strong bias toward one lever.

Probabilistic choice task

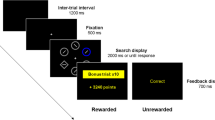

The probabilistic choice task was based on procedures described by Cardinal and Howes (2005) and Nasrallah, Yang, and Bernstein (2009) (see Fig. 1). For all testing sessions, one of the two levers was designated the small/certain lever and the other the large/risky lever; this assignment remained constant for each animal throughout the study and was counterbalanced across rats. A press on the small/certain lever always produced one pellet, whereas a press on the large/risky lever produced four pellets with a given probability. The probability of reward on the large/risky lever changed over the course of the experiment, but it was kept constant within a session and was altered only between sessions.

Schematic of a single free-choice trial in the probabilistic choice task. Choice of the small/certain lever led to the certain delivery of one pellet, and choice of the large/risky lever led to the probabilistic delivery of four pellets. The probability of reward delivery on the large/risky lever was varied systematically across blocks (p = 1, .5, .25, and .125).

Each daily 30-min session consisted of 24 forced trials followed by 24 free-choice trials. Each trial started with illumination of the houselight and stimulus light, with the levers retracted. Upon a nose-poke response into the food magazine within 10 s, the stimulus light was extinguished. On forced trials, only one of the levers was extended, exposing the rat to that response option and its associated value. Each lever was presented on 12 forced trials in pseudorandom order. On free-choice trials, both levers were extended at the same time. If the rat pressed a lever within 10 s on a forced or choice trial, all levers were retracted, and a reward was delivered according to the associated probability. Multiple pellets were delivered 0.5 s apart. After pellet delivery, the houselight was extinguished to mark the end of the trial. The ITI was set at 35 s. Failure to respond within 10 s, either by initiating the trial with a nose-poke response or by leverpressing, resulted in a 35-s time-out period, during which all lights were extinguished and the levers retracted. These trials were recorded as omissions.
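The trial sequence of one daily session can be sketched as a list of trial records. The numbers (12 forced trials per lever, 24 choice trials, 10-s response windows, 35-s ITI, 0.5-s pellet spacing) come from the text; the `Trial` type, `build_session`, and the constant names are hypothetical, and an unconstrained shuffle is assumed for the forced-trial order because the pseudorandomization rule is not reported.

```python
import random
from dataclasses import dataclass

# Timing parameters taken from the task description (seconds)
RESPONSE_WINDOW_S = 10   # limit for the nose-poke and for the lever press
ITI_S = 35               # intertrial interval; also the omission time-out
PELLET_SPACING_S = 0.5   # gap between successive pellets of a multi-pellet reward


@dataclass(frozen=True)
class Trial:
    kind: str      # "forced" or "choice"
    levers: tuple  # levers extended on this trial


def build_session(n_forced_per_lever=12, n_choice=24, seed=None):
    """Hypothetical session layout: all forced trials first, then free-choice trials."""
    rng = random.Random(seed)
    forced = ["certain"] * n_forced_per_lever + ["risky"] * n_forced_per_lever
    rng.shuffle(forced)  # assumption: plain shuffle stands in for the unreported rule
    trials = [Trial("forced", (lever,)) for lever in forced]
    trials += [Trial("choice", ("certain", "risky")) for _ in range(n_choice)]
    return trials


session = build_session(seed=0)
print(len(session), session[0].kind, session[-1].levers)
```

Placing every forced trial before the first choice trial means each rat samples both levers equally often under the current probability before its preference is measured.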

After leverpress training was completed, rats were tested preoperatively on the probabilistic choice task on four consecutive days, with the probability of obtaining the large/risky reward (4 pellets) set to p = 1 and with the small/certain lever delivering 1 pellet (p = 1). Thereafter, animals were subjected to stereotaxic surgery. After a recovery period of at least 5 days, rats were tested on the probabilistic choice task, with the probability of obtaining the large/risky reward declining systematically across four blocks. Each block comprised four sessions, one per day. The probability of obtaining the large/risky reward was set to p = 1 in block 1 (four pellets at p = 1, denoted “4p at p = 1”), to p = .5 in block 2 (“4p at p = .5”), to p = .25 in block 3 (“4p at p = .25”), and to p = .125 in block 4 (“4p at p = .125”), whereas the probability of obtaining the small reward of one pellet was always p = 1 (denoted “1p at p = 1”). Programmed and experienced probabilities can differ in such probabilistic tasks, particularly if only a limited number of forced trials is given to assess low reward probabilities. For instance, in block 4, with a programmed probability of p = .125 on the 12 forced trials to the large/risky lever, one rat could theoretically experience 3 rewarded and 9 unrewarded trials (experienced p = .25), whereas another rat could experience 0 rewarded and 12 unrewarded trials (experienced p = 0). An earlier study provided evidence that such discrepancies may not influence choice behavior (Cardinal & Howes, 2005). Nevertheless, to exclude discrepancies in experienced probabilities, rewards in all blocks were delivered in a pseudorandom, rather than a purely probabilistic, manner. On choice trials, large/risky and small/certain leverpresses were recorded. Additionally, body weights were monitored across blocks.
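The argument for pseudorandom delivery can be made concrete with the binomial distribution. If each of the 12 forced risky trials in block 4 were rewarded independently with p = .125, roughly one rat in five would receive no reward at all on those trials, and roughly one in eight would experience p = .25. The second function is a hypothetical pseudorandom scheme, not the authors' reported procedure: risky presses are grouped into runs of 1/p presses, each containing exactly one rewarded press, which keeps the experienced probability close to the programmed one. Note that 12 × .125 = 1.5, so no schedule can match p = .125 exactly on 12 forced trials.

```python
import random
from math import comb


def binom_pmf(k, n, p):
    """Probability of exactly k rewarded trials out of n at reward probability p."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


# Block 4 with truly probabilistic delivery on 12 forced risky trials
p_zero = binom_pmf(0, 12, 0.125)   # experienced p = 0
p_three = binom_pmf(3, 12, 0.125)  # experienced p = .25
print(f"P(0 of 12) = {p_zero:.3f}, P(3 of 12) = {p_three:.3f}")


def pseudorandom_rewards(n_presses, p, seed=None):
    """Hypothetical scheme: runs of round(1/p) presses with exactly one reward each."""
    run = round(1 / p)  # 1, 2, 4, or 8 presses for p = 1, .5, .25, .125
    rng = random.Random(seed)
    outcomes = []
    while len(outcomes) < n_presses:
        chunk = [True] + [False] * (run - 1)
        rng.shuffle(chunk)  # position of the rewarded press within the run
        outcomes.extend(chunk)
    return outcomes[:n_presses]
```

Under this scheme, every complete run of 1/p risky presses yields exactly one reward, so the experienced probability can deviate from the programmed one only within the final, incomplete run.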

Surgery

Subjects received either 6-OHDA lesions or sham lesions of the mPFC. After pretreatment with atropine sulfate (0.05 mg/kg, s.c.; Braun, Melsungen, Germany), the animals were anesthetized with sodium pentobarbital (60 mg/kg, i.p.; Narcoren, Merial, Hallbergmoos, Germany) and xylazine (4 mg/kg, i.m.; Rompun, Bayer, Leverkusen, Germany) and secured in a stereotaxic apparatus with atraumatic ear bars (Kopf Instruments, Tujunga, USA). The skull was exposed, and a small hole was drilled above the target region in each hemisphere. For DA depletions of the mPFC, animals received bilateral injections of 6 μg 6-OHDA hydrochloride (Sigma-Aldrich, Steinheim, Germany) in 0.4 μl saline containing 0.01 % ascorbic acid (Sigma-Aldrich, Steinheim, Germany), using a 1-μl Hamilton syringe, at the following coordinates: AP + 3.0 mm; ML ± 0.8 mm; DV − 4.5 mm and −3.5 mm (tooth bar at −3.3 mm below the interaural line, according to the atlas of Paxinos & Watson, 1998). Sham controls received injections of 0.4 μl saline containing 0.01 % ascorbic acid at the same coordinates. Infusion time was 2 min, and the needle was left in place for an additional 5 min to allow for diffusion. After surgery, rats received an injection of 2 ml saline and an analgesic (Rimadyl; Pfizer, Karlsruhe, Germany; 4 mg/kg, s.c.).

Histology

Tyrosine hydroxylase (TH) immunohistochemistry was used to assess the exact location and extent of the loss of DA terminals within the mPFC. On completion of behavioral testing, animals were euthanized with an overdose of isoflurane (Abbott, Wiesbaden, Germany) and perfused transcardially with 0.01 % heparin sodium salt in phosphate-buffered saline (PBS), followed by 4 % paraformaldehyde in PBS. The brains were removed, postfixed in paraformaldehyde for 24 h, and cryoprotected in 30 % sucrose for at least 48 h. Coronal sections (30 μm) were cut through the mPFC (Microm, Walldorf, Germany). The sections were first washed in TRIS-buffered saline (TBS; 3 × 10 min), treated for 15 min with TBS containing 2 % hydrogen peroxide and 10 % methanol, washed again in TBS (3 × 10 min), and then blocked for 20 min with 4 % normal horse serum (NHS; Vector Laboratories, Burlingame, CA) in TBS containing 0.2 % Triton X-100 (TBS-T; Sigma-Aldrich). Sections were incubated overnight at 4 °C in primary antibody (mouse anti-TH, 1:7,500 in TBS-T containing 4 % NHS; Immunostar, Hudson, WI), washed in TBS-T (3 × 10 min), and incubated in secondary antibody (horse anti-mouse, rat-adsorbed, biotinylated IgG (H + L), 1:500 in TBS-T containing 4 % NHS; Vector Laboratories) for 90 min at room temperature. Using the avidin–biotin system, sections were then incubated in TBS-T containing the avidin–biotinylated enzyme complex (1:500, ABC-Elite Kit; Vector Laboratories) for 60 min at room temperature, washed in TBS (3 × 10 min), and stained with 3,3′-diaminobenzidine (DAB Substrate Kit, Vector Laboratories). The sections were then washed in TBS (3 × 10 min), mounted on coated slides, dried overnight, dehydrated in ascending alcohol concentrations, cleared in xylene, and finally coverslipped using DePex (Serva, Heidelberg, Germany). 
To determine the size and placement of the lesions, TH immunoreactivity was analyzed under a microscope with reference to the atlas of Paxinos and Watson (1998).

Data analysis and statistics

Percentage choices of the large/risky reward lever and body weights are given as means ± standard error of the mean (SEM). Data were subjected to repeated-measures analysis of variance (ANOVA) with three within-subjects factors (block, days of testing, large reward probability condition) and one between-subjects factor (treatment). All statistical computations were carried out with STATISTICA (version 7.1; StatSoft, Inc., Tulsa, OK). The level of statistical significance (α-level) was set at p ≤ .05; p values > .05 are designated n.s. (not significant).
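The degrees of freedom reported in the Results are consistent with a mixed (split-plot) design with a two-level between-subjects factor (treatment) and block (4 levels) and day (4 levels) as within-subjects factors. The helper below, a hypothetical sketch that assumes complete data and no sphericity correction, reproduces them: each within-subjects effect with df_E is tested against df_E × (N − 2), and treatment against N − 2. It computes degrees of freedom only, not F values.

```python
from itertools import combinations


def mixed_anova_df(n_subjects, n_groups, within_levels):
    """Hypothetical helper: (df_effect, df_error) for a split-plot ANOVA.

    within_levels maps each within-subjects factor to its number of levels.
    Assumes complete data (no missing cells) and no sphericity correction.
    """
    df_group = n_groups - 1
    df_subjects_error = n_subjects - n_groups
    table = {"treatment": (df_group, df_subjects_error)}
    names = list(within_levels)
    for r in range(1, len(names) + 1):
        for combo in combinations(names, r):
            df_effect = 1
            for name in combo:
                df_effect *= within_levels[name] - 1
            label = " x ".join(combo)
            df_error = df_effect * df_subjects_error
            table[label] = (df_effect, df_error)
            table[label + " x treatment"] = (df_effect * df_group, df_error)
    return table


exp1 = mixed_anova_df(29, 2, {"block": 4, "day": 4})  # n = 14 + 15
exp2 = mixed_anova_df(25, 2, {"block": 4, "day": 4})  # n = 14 + 11
for effect in ("treatment", "block", "block x treatment", "block x day"):
    print(effect, exp1[effect], exp2[effect])
```

For example, Experiment 1 gives F(3, 81) for block and F(9, 243) for block × day, and Experiment 2 gives F(3, 69) and F(9, 207), matching the values reported below.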

Experiment 2

Unless otherwise noted, the same procedures as those in Experiment 1 were used.

Habituation and leverpress training

Animals in Experiment 2 had 5 days of leverpress training (Experiment 1: 2 days) and were trained for 2 days (Experiment 1: 3 days) in the simplified version of the full task.

Surgery

For DA depletions of the AcbC, animals received bilateral injections of 6 μg 6-OHDA hydrochloride (Sigma-Aldrich, Steinheim, Germany) in 0.4 μl saline containing 0.01 % ascorbic acid (Sigma-Aldrich, Steinheim, Germany), using a 1-μl Hamilton syringe, at the following coordinates: AP + 1.2 mm; ML ± 2.1 mm; DV − 7.0 mm (tooth bar at −3.3 mm below the interaural line, according to the atlas of Paxinos & Watson, 1998). Sham AcbC controls received injections of 0.4 μl saline containing 0.01 % ascorbic acid at the same coordinates. Infusion time was 4 min, and the needle was left in place for an additional 6 min to allow for diffusion.
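The injection parameters given for both experiments imply the 6-OHDA concentration and infusion rates computed below. Exact fractions avoid floating-point rounding; the variable names are illustrative. The resulting 15 μg/μl is 2.5 to 3.75 times the 4–6 μg/μl used in the earlier prefrontal studies cited in the Discussion.

```python
from fractions import Fraction

dose_ug = Fraction("6")      # 6-OHDA per injection (μg), both experiments
volume_ul = Fraction("0.4")  # injection volume (μl), both experiments

concentration = dose_ug / volume_ul   # μg/μl
rate_mpfc = volume_ul / 2             # μl/min, 2-min infusion (Experiment 1)
rate_acbc = volume_ul / 4             # μl/min, 4-min infusion (Experiment 2)
fold_vs_lower = (concentration / 6, concentration / 4)  # vs. 6 and 4 μg/μl

print(float(concentration), float(rate_mpfc), float(rate_acbc))
print([float(f) for f in fold_vs_lower])
```

The AcbC infusions were thus delivered at half the rate of the mPFC infusions (0.1 vs. 0.2 μl/min) at the same concentration.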

Results

Experiment 1

Histology

The lesion size and placement were assessed by reconstructing the damaged areas on stereotaxic atlas templates from Paxinos and Watson (1998). No animal was excluded because of misplaced mPFC DA depletions. Sample sizes were as follows: sham controls, n = 14; rats with mPFC DA depletions, n = 15. Figure 2 provides photomicrographs from a representative sham-lesioned rat and a representative rat with a 6-OHDA-induced lesion. TH-positive fibers in the mPFC were abundant in sham-lesioned rats, as shown by earlier studies (Berger, Gaspar, & Verney, 1991; Berger, Thierry, Tassin, & Moyne, 1976), but were rare in rats with 6-OHDA lesions. The areas that were nearly devoid of TH immunoreactivity extended from about 3.7 to 2.2 mm relative to bregma and were restricted predominantly to the prelimbic and infralimbic cortex, with minimal damage to the anterior cingulate cortex.

Loss of TH-positive fibers in the mPFC of 6-OHDA-lesioned animals in Experiment 1. Representative photomicrographs showing the 6-OHDA-induced loss of TH-immunoreactive fibers in the mPFC (middle, mPFC sham lesion; bottom, mPFC DA depletion). Top: Schematic representation of the coronal section at which the photographs were taken (bregma + 2.2 mm) (Paxinos & Watson, 1998). Arrows indicate dopaminergic fibers; cc, corpus callosum.

Choice behavior

Preoperatively, rats to be subjected to sham lesions and mPFC DA depletions displayed a clear preference (~70 %) for the large/risky lever (Fig. 3). An ANOVA revealed no significant main effect of treatment, F(1, 27) = 1.79, n.s., but a significant main effect of days, F(3, 81) = 10.76, p < .001, and no day × treatment interaction, F(3, 81) = 1.81, n.s. Postoperatively, the choice behavior of both sham controls and rats with mPFC DA depletions was sensitive to changes in large-reward probability across blocks, but, notably, no lesion effect was evident. In line with this description, an ANOVA revealed main effects of block, F(3, 81) = 220.73, p < .001, and days, F(3, 81) = 40.59, p < .001, but no main effect of treatment, F(1, 27) = 0.34, n.s. There was no block × treatment interaction, F(3, 81) = 0.42, n.s., but there was a significant block × day interaction, F(9, 243) = 7.40, p < .001. Finally, there was no block × day × treatment interaction, F(9, 243) = 0.30, n.s.

Effects of 6-OHDA lesions of the mPFC on probabilistic choice. Mean (± SEM) percentages of large/risky lever choices per day in sham-lesioned (n = 14, filled circles) and 6-OHDA-lesioned (n = 15, open circles) rats. During postoperative testing, the probability of obtaining the large/risky reward systematically declined across the four blocks.

Body weights

At the time of surgery, body weights of the sham controls and rats with mPFC DA depletion did not differ [sham controls, 272.1 ± 3.5 g; lesioned rats, 275.3 ± 4.1 g; t(27) = 0.59, n.s.]. Postoperatively, body weight gain was slightly enhanced in rats with mPFC DA depletions, relative to sham controls. An ANOVA revealed a significant main effect of days, F(1, 27) = 72.80, p < .001, no main effect of treatment, F(1, 27) = 1.48, n.s., and a significant day × treatment interaction, F(1, 27) = 6.04, p < .05. Body weights on the last test day were 297.1 ± 4.8 g (sham controls) and 309.7 ± 4.5 g (rats with mPFC DA depletion).

Experiment 2

Histology

The lesion size and placement were assessed by reconstructing the damaged areas on stereotaxic atlas templates from Paxinos and Watson (1998). Because of incomplete AcbC DA depletions, 3 animals were excluded from further analysis; the final sample sizes were as follows: sham controls, n = 14; rats with AcbC DA depletions, n = 11. Figure 4a provides a schematic representation of the animals with the smallest and the largest AcbC lesions, as well as of an animal with a representative AcbC lesion. A photomicrograph of a representative 6-OHDA-induced lesion is shown in Fig. 4b. TH-positive fibers in the AcbC were abundant in sham-lesioned rats but rare in rats with 6-OHDA lesions. In most animals, the loss of TH-positive fibers in the AcbC extended from about 2.2 to 0.2 mm relative to bregma, with its maximum extent at approximately 1.2 mm relative to bregma. Furthermore, the 6-OHDA lesions largely spared the shell region of the Acb.

a Loss of TH-positive fibers in the AcbC of 6-OHDA-lesioned animals in Experiment 2. The drawings show a reconstruction of the regions that were nearly devoid of TH-immunoreactive fibers, indicating for each section the animal with the largest lesion (gray areas) and the animal with the smallest lesion (black areas). An animal with a representative lesion is also shown (cross-hatched areas). The numbers indicate the distances from bregma in millimeters. b Representative photomicrographs of a 6-OHDA-induced loss of TH-immunoreactive fibers in the AcbC (top, AcbC sham lesion; bottom, AcbC DA depletion).

Choice behavior

As shown in Fig. 5, preoperatively, rats to be subjected to sham lesions and AcbC DA depletions displayed a strong preference (~95 %) for the large/risky lever. An ANOVA revealed no significant main effects of treatment, F(1, 23) = 2.39, n.s., or days, F(3, 69) = 0.68, n.s., and no day × treatment interaction, F(3, 69) = 1.60, n.s.

Effects of 6-OHDA lesions of the AcbC on probabilistic choice. Mean (± SEM) percentages of large/risky lever choices per day in sham-lesioned (n = 14, filled circles) and 6-OHDA-lesioned (n = 11, open circles) rats. During postoperative testing, the probability of obtaining the large/risky reward systematically declined across the four blocks.

Postoperatively, the choice behavior of both sham controls and rats with AcbC DA depletions was sensitive to changes in large-reward probability across blocks, but, importantly, there was no discernible lesion effect. In line with this description, an ANOVA revealed significant main effects of block, F(3, 69) = 206.63, p < .001, and days, F(3, 69) = 73.89, p < .001, but no main effect of treatment, F(1, 23) = 0.26, n.s. There was no block × treatment interaction, F(3, 69) = 0.06, n.s., but there was a block × day interaction, F(9, 207) = 7.65, p < .001, and no block × day × treatment interaction, F(9, 207) = 1.08, n.s.

Body weights

At the time of surgery, body weights of the sham controls and rats with AcbC DA depletion did not differ [sham controls, 278.4 ± 3.5 g; lesioned rats, 274.0 ± 4.6 g; t(23) = 0.77, n.s.]. Postoperatively, however, body weight gain over days was somewhat lower in rats with AcbC DA depletion than in sham controls. In line with this observation, an ANOVA revealed main effects of days, F(1, 23) = 150.97, p < .001, and treatment, F(1, 23) = 5.90, p < .05, as well as a block × day interaction, F(3, 69) = 50.40, p < .001. Body weights on the last test day were 305.1 ± 4.1 g (sham controls) and 292.2 ± 4.7 g (rats with AcbC DA depletion).
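As a consistency check, the t values reported for body weights at the time of surgery in both experiments can be approximately recovered from the group means, SEMs, and sample sizes. The sketch assumes a Student's two-sample t test with pooled variance, which the text does not state explicitly; the function name is hypothetical. Small differences from the published values reflect rounding of the reported means and SEMs.

```python
from math import sqrt


def pooled_t_from_sem(m1, sem1, n1, m2, sem2, n2):
    """Student's two-sample t (pooled variance) from means, SEMs, and group sizes."""
    var1 = (sem1 * sqrt(n1)) ** 2   # SD = SEM * sqrt(n)
    var2 = (sem2 * sqrt(n2)) ** 2
    df = n1 + n2 - 2
    pooled = ((n1 - 1) * var1 + (n2 - 1) * var2) / df
    se_diff = sqrt(pooled * (1 / n1 + 1 / n2))
    return abs(m1 - m2) / se_diff, df


t1, df1 = pooled_t_from_sem(272.1, 3.5, 14, 275.3, 4.1, 15)   # Experiment 1
t2, df2 = pooled_t_from_sem(278.4, 3.5, 14, 274.0, 4.6, 11)   # Experiment 2
print(f"t({df1}) = {t1:.2f}; t({df2}) = {t2:.2f}")
```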

Discussion

This study demonstrates that, relative to sham controls, rats with mPFC or AcbC DA depletions showed unaltered assessment of reward probabilities and unaltered probability discounting. Thus, the capacity to evaluate the magnitude and likelihood of rewards associated with alternative courses of action does not appear to rely on intact DA transmission in the mPFC or AcbC.

Probabilistic choice task

In each session, probabilities of reward delivery were signaled in advance on forced trials followed by choice trials that assessed the animal’s preference. The probabilities of reward delivery associated with the large/risky lever declined systematically over four consecutive blocks but were kept constant within 4 subsequent daily sessions of a particular block, as in related choice paradigms (Schweimer & Hauber, 2006; Walton, Bannerman, Alterescu, & Rushworth, 2003). Thus, in a given session, rats need to assess the current value associated with the large/risky versus small/certain lever and adapt their lever preference accordingly.

As demonstrated in Experiments 1 and 2, sham controls in the present task consistently displayed probability discounting across blocks, with indifferent choice behavior in the neutral block (four pellets at p = .25 vs. one pellet at p = 1), in which both options yield the same expected reward of one pellet per choice, as predicted by the matching law (Herrnstein, 1961). Furthermore, consistent with theoretical (Herrnstein, 1961) and empirical (Kacelnik & Bateson, 1997) accounts, sham controls showed risk aversion when the large reward was relatively uncertain (four pellets at p = .125 vs. one pellet at p = 1), as has also been observed in various earlier studies (Cardinal & Howes, 2005; Mobini et al., 2002; Simon, Gilbert, Mayse, Bizon, & Setlow, 2009). Rather than the across-block shifts used here, some probabilistic choice tasks involve within-session shifts in reward probabilities (Cardinal & Howes, 2005; St Onge & Floresco, 2009), which demand continuous evaluation and updating of the current values associated with the available response options. Possibly because of greater cognitive demands, including sustained attention and switching, such tasks require extended training over 12–26 sessions to achieve stable performance (Cardinal & Howes, 2005; Floresco & Whelan, 2009). By contrast, our task with fixed within-session probabilities was less demanding and thus relatively easy to learn with limited pretraining (4 sessions), as has also been shown in studies using similar tasks (Nasrallah et al., 2011; Nasrallah et al., 2009). However, we observed preference shifts across sessions within a given block and higher variance in choice behavior than in probabilistic choice tasks with extended pretraining, which may reduce the sensitivity of our task to small treatment effects. 
Our pilot experiments revealed that increasing the number of trials per session or the number of days per block made performance more stable and less variable but, importantly, at the same time kept the preference for the large/risky lever high across blocks, so that it did not fall below ~50 % in the last block (4p at p = .125 vs. 1p at p = 1). In other words, these schedules did not reveal risk aversion, a major feature of risk-based decision making (Kacelnik & Bateson, 1997; Stephens & Krebs, 1986). By contrast, with the current schedule, sham controls eventually displayed a low preference for the large/risky lever (~10 %) in the last block (4p at p = .125 vs. 1p at p = 1), that is, marked risk aversion. Thus, the task in its present form should be sensitive to experimental manipulations that either increase or decrease risky choice. Nevertheless, we cannot exclude the possibility that task variants including, for example, a monotonic increase, rather than a decrease, in the probability of obtaining the large reward might have revealed altered decision making in rats with mPFC or AcbC DA depletions. However, our preliminary results obtained with such task variants provide no evidence in favor of this possibility.
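The expected-value reasoning behind the neutral block (four pellets at p = .25 vs. one pellet at p = 1) can be made explicit. The sketch treats the expected number of pellets per choice as each option's value and applies strict matching, so that the predicted share of large/risky choices is EV_risky / (EV_risky + EV_certain). This is a simplifying assumption for illustration, because Herrnstein's (1961) matching law was formulated for response rates on concurrent schedules rather than for discrete-trial choice; the function names are hypothetical.

```python
def expected_pellets(pellets, p):
    """Expected number of pellets per press."""
    return pellets * p


def matching_share(value_a, value_b):
    """Predicted proportion of choices to option a under strict matching."""
    return value_a / (value_a + value_b)


EV_CERTAIN = expected_pellets(1, 1.0)  # "1p at p = 1"
predictions = {}
for p in (1.0, 0.5, 0.25, 0.125):      # blocks 1-4, "4p at p = ..."
    ev_risky = expected_pellets(4, p)
    predictions[p] = matching_share(ev_risky, EV_CERTAIN)
    print(f"p = {p}: EV risky = {ev_risky}, predicted risky share = {predictions[p]:.2f}")
```

Under this simplification, the two options are equally valuable only in the 4p at p = .25 block, which is why that block serves as the neutral point; in block 4, the large/risky lever yields only half a pellet per choice on average.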

Prefrontal dopamine and probabilistic choice

Our histological analysis revealed an almost total loss of prefrontal TH-positive fibers. Previous studies have also shown that the effects of prefrontal 6-OHDA infusions are relatively restricted to the area around the infusion site (Lex & Hauber, 2010a; Pycock, Carter, & Kerwin, 1980). Moreover, prefrontal 6-OHDA infusions at markedly lower concentrations than the 15 μg/μl used here (4–6 μg/μl) reduced DA levels by up to 80 % (Bubser, 1994; McGregor, Baker, & Roberts, 1996; Pycock et al., 1980) and caused cognitive impairments in maze tasks (Bubser & Schmidt, 1990) and operant tasks (Kheramin et al., 2004). In addition, DA depletions after prefrontal 6-OHDA injections are relatively persistent (Bubser & Schmidt, 1990); therefore, recovery of DA terminals seems unlikely. Collectively, these findings suggest that the failure to detect behavioral changes in our animals with prefrontal DA depletion is unlikely to reflect behavioral compensation supported by small amounts of residual DA.

Furthermore, prefrontal 6-OHDA injections markedly reduce prefrontal noradrenaline (NA) levels, although to a lesser extent than DA levels, even after pretreatment with the NA/serotonin reuptake inhibitor desipramine, which we did not use (e.g., Koch & Bubser, 1994; Morrow, Elsworth, Rasmusson, & Roth, 1999). These findings suggest that NA input to the mPFC might have been affected in our rats as well. To our knowledge, the role of NA in risk-based decision making remains unknown.

Intact risk-based decision making in animals with prefrontal DA depletions was unexpected, given that blockade of prefrontal D1 and D2 receptors influenced probability discounting in a dissociable manner (St Onge et al., 2011). Specifically, St Onge et al. (2011) suggested that reduced mPFC D2 receptor activity rendered animals less sensitive to shifts in reward probability, whereas reduced mPFC D1 receptor activity rendered animals more sensitive to reward omissions experienced during risky choices, thereby increasing lose-shift tendencies and reducing risky choice. By contrast, our data suggest that mPFC DA depletion did not alter risk-based decision making. One could argue that our task is insensitive to DA dysfunction, yet this possibility seems unlikely, because a similar version of this task detected altered risk-based decision making caused by drug-induced impairments of DA signaling (Nasrallah et al., 2011). However, several other factors might account for these discrepant findings. First, regarding the basic experimental design, many earlier studies analyzing the effects of DA receptor ligands (e.g., St Onge et al., 2011) used a within-subjects design, which provides greater sensitivity than the between-subjects design employed here to compare probabilistic choice in sham controls and rats with DA depletions. Second, the behavioral effects of impaired DA transmission induced by a transient and selective DA receptor blockade (St Onge et al., 2011) and by a permanent DA depletion, as used here, can differ for a number of reasons; for example, permanent DA depletion could allow alternative neural circuits to take over behavioral control more effectively. 
Third, the cognitive demands of the probabilistic choice tasks employed in the two studies differ considerably: our decision-making task involves probability discounting across blocks with fixed within-session reward probabilities, whereas in the task used by St Onge and Floresco (2010), large-reward probabilities varied within sessions. Importantly, those authors demonstrated that the mPFC is not critical for assessing reward probabilities that are fixed across a session but does support their assessment when they vary within a session. In other words, the mPFC seems to be critical for tracking rapid changes in reward probabilities and may subserve an updating function. Our present study extends these findings and suggests that normal mPFC DA transmission is neither necessary for estimating the relative risk of the available response options nor critical for long-term probability discounting across blocks, but it leaves open the possibility that, as proposed by St Onge et al. (2011), mPFC DA supports the detection of rapid changes in reward probabilities.
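Win-stay and lose-shift ratios, the measures behind the lose-shift tendencies attributed to reduced mPFC D1 receptor activity by St Onge et al. (2011), are conditional proportions computed over consecutive choice trials. The following is a minimal Python sketch under stated assumptions, not the analysis code of either study: each choice trial is encoded as a hypothetical `(chose_risky, rewarded)` pair, forced trials are assumed to have been removed, win-stay is the fraction of rewarded risky choices followed by another risky choice, and lose-shift is the fraction of unrewarded risky choices followed by a switch to the small/certain lever.

```python
from typing import List, Sequence, Tuple

# Hypothetical encoding of one choice trial: (chose_risky, rewarded).
# Forced trials are assumed to have been removed beforehand.
Trial = Tuple[bool, bool]


def win_stay_lose_shift(trials: Sequence[Trial]) -> Tuple[float, float]:
    """Return (win-stay, lose-shift) ratios computed over risky choices.

    win-stay:   rewarded risky choices followed by another risky choice,
                divided by all rewarded risky choices.
    lose-shift: unrewarded risky choices followed by a small/certain choice,
                divided by all unrewarded risky choices.
    A ratio with no qualifying trials is returned as NaN.
    """
    wins = win_stays = losses = lose_shifts = 0
    # Pair each trial with its successor; the final trial has none and is not scored.
    for (chose_risky, rewarded), (next_risky, _) in zip(trials, trials[1:]):
        if not chose_risky:
            continue  # only outcomes of risky choices are scored
        if rewarded:
            wins += 1
            if next_risky:
                win_stays += 1
        else:
            losses += 1
            if not next_risky:
                lose_shifts += 1
    win_stay = win_stays / wins if wins else float("nan")
    lose_shift = lose_shifts / losses if losses else float("nan")
    return win_stay, lose_shift


# Hypothetical session, for illustration only (not data from this study).
session: List[Trial] = [(True, True), (True, False), (True, False),
                        (False, True), (True, True), (False, True)]
print(win_stay_lose_shift(session))
```

For this hypothetical session the function returns `(0.5, 0.5)`: one of the two rewarded risky choices was followed by another risky choice, and one of the two reward omissions was followed by a shift to the certain lever. Undefined ratios are returned as NaN rather than 0 so that a session without risky wins or losses is not mistaken for one without staying or shifting.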

Accumbens dopamine and probabilistic choice

Consistent with earlier studies using an almost identical protocol (Lex & Hauber, 2010b; Mai et al., 2012), TH-positive fibers in the AcbC were abundant in sham controls but rare in rats with 6-OHDA lesions. Correspondingly, previous studies revealed that similar doses of 6-OHDA produced massive striatal DA depletions (Amalric & Koob, 1987; Meredith, Ypma, & Zahm, 1995). In line with earlier findings (e.g., Mai et al., 2012), AcbC DA depletions moderately reduced body weight gain during the course of the experiment. Collectively, these findings support the view that the 6-OHDA-lesioned rats examined here had pronounced AcbC DA depletions. Furthermore, because 6-OHDA-induced loss of striatal DA terminals has been shown to remain stable over 4 weeks (Blandini, Levandis, Bazzini, Nappi, & Armentero, 2007), it is unlikely that AcbC lesions recovered during the course of our experiments.

The assessment of reward probabilities and probability discounting were intact in animals with DA depletions, indicating that AcbC DA may not mediate the capacity to evaluate the magnitude and likelihood of rewards associated with alternative courses of action, at least in a decision-making task that involves probability discounting across blocks with fixed within-session reward probabilities. Considerable evidence suggests that risk-based decision making engages not only the AcbC (Cardinal & Howes, 2005), but also mesoaccumbens DA systems. For instance, DA neurons display higher activity in response to cues predicting large, immediate, or highly probable rewards than to cues predicting small, delayed, or improbable rewards (Day, Jones, & Carelli, 2011; Roesch, Calu, & Schoenbaum, 2007). Furthermore, phasic DA release in the AcbC encodes information about the relative values of the available response options, regardless of the animal's actual choice (Day et al., 2010; Sugam et al., 2012). On the basis of these findings, it has been proposed that phasic DA in the AcbC may support the evaluation of risky behaviors and, in turn, risk-based decision making (Sugam et al., 2012). By contrast, our observation that AcbC DA depletion did not compromise the capacity to assess the reward probabilities of the available response options and to bias action selection accordingly calls into question a causal role of normal AcbC DA signaling in risk-based decision making. However, it is important to note that abnormal DA signaling in the AcbC (for example, as a result of chronic drug intake) can induce profound changes in risk valuation and, thus, in risk-based decision making (Nasrallah et al., 2011). Likewise, aberrant increases in AcbC DA activity, for example after systemic amphetamine (St Onge et al., 2010), may contribute to altered risk-based decision making.
Furthermore, recent work suggests that the shell subregion, rather than the AcbC, plays a more critical role in risk-based decision making (Stopper & Floresco, 2011), raising the possibility that normal DA signaling in the shell supports this form of decision making.
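The trade-off between reward magnitude and likelihood that the rats had to evaluate can be made concrete with expected values, where a lever's expected value is its reward magnitude multiplied by its delivery probability. The sketch below is illustrative only: the magnitudes (4 vs. 1 pellet) and block probabilities (100%, 50%, 25%, 12.5%) are hypothetical values, not the parameters of the present task, and real animals need not choose as expected-value maximizers (risk aversion, for instance, biases choice toward the certain lever).

```python
from fractions import Fraction
from typing import List, Tuple

# Hypothetical task parameters for illustration only (not this study's values).
SMALL_CERTAIN = 1  # pellets on the small/certain lever, delivered with p = 1
LARGE_RISKY = 4    # pellets on the large/risky lever, delivered with p = block probability
BLOCK_PROBS = [Fraction(1), Fraction(1, 2), Fraction(1, 4), Fraction(1, 8)]

# Probability of the large reward at which both levers have equal expected value.
INDIFFERENCE_P = Fraction(SMALL_CERTAIN, LARGE_RISKY)


def expected_value(magnitude: int, p: Fraction) -> Fraction:
    """Expected reward per choice: magnitude weighted by delivery probability."""
    return magnitude * p


def ev_table(probs: List[Fraction]) -> List[Tuple[Fraction, Fraction, Fraction, str]]:
    """For each block probability, compare the two levers' expected values."""
    ev_certain = expected_value(SMALL_CERTAIN, Fraction(1))
    rows = []
    for p in probs:
        ev_risky = expected_value(LARGE_RISKY, p)
        if ev_risky > ev_certain:
            favored = "large/risky"
        elif ev_risky < ev_certain:
            favored = "small/certain"
        else:
            favored = "indifferent"
        rows.append((p, ev_risky, ev_certain, favored))
    return rows


for p, ev_risky, ev_certain, favored in ev_table(BLOCK_PROBS):
    print(f"p(large) = {p}: EV risky = {ev_risky}, EV certain = {ev_certain} -> {favored}")
```

Under these assumed values, the large/risky lever loses its expected-value advantage once its probability falls below the ratio of small to large magnitude (here 1/4). Exact fractions are used so that the indifference block compares as equal instead of being tipped either way by floating-point rounding.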

Conclusions

Midbrain DA neurons encode reward probability and uncertainty (Fiorillo et al., 2003) and are thought to play a critical role in risk-based decision making. Consistent with this account, maladaptive risk taking has been observed in a number of neuropsychiatric disorders involving dysfunctional DA systems, such as attention-deficit/hyperactivity disorder, schizophrenia, major depression, addiction, and Parkinson's disease (Bechara et al., 2001; Ernst et al., 2003; Kobayakawa, Koyama, Mimura, & Kawamura, 2008; Ludewig, Paulus, & Vollenweider, 2003; Taylor Tavares et al., 2007). However, little is known about how DA signals in target regions of midbrain DA neurons contribute to the control of risk-based decision making. Recent studies have implicated mPFC and AcbC DA signaling in mediating different components of risk-based decision making. For instance, St Onge et al. (2011) revealed that mPFC DA D1 and D2 receptor activity contributes in a dissociable manner to risk-based decision making, possibly by supporting distinct underlying psychological processes. Furthermore, Sugam et al. (2012) recently showed that DA projections to the AcbC encode the subjective value of future rewards, which may influence behavioral risk preferences. Our present study examined for the first time the effects of DA depletions on probabilistic choice and showed intact risk-based decision making in rats with mPFC or AcbC DA depletions. Our findings suggest that the basal capacity to evaluate the magnitude and likelihood of rewards associated with alternative courses of action, as well as the assessment of long-term changes in reward probabilities, does not appear to rely on intact prefrontal or AcbC DA transmission, at least in a simple probabilistic choice task such as that used here. However, mPFC or AcbC DA may well support risk-based decision making in more complex tasks that, for instance, demand an assessment of short-term changes in reward probabilities.
Furthermore, a considerable body of evidence suggests that abnormal or enhanced DA activity in the Acb or prefrontal cortex can induce profound changes in risk-based decision making (Nasrallah et al., 2011; St Onge et al., 2011; St Onge & Floresco, 2010). Likewise, repeated amphetamine exposure can increase risky choice by inducing numerous neural changes, including enhanced Acb DA activity (Floresco & Whelan, 2009). Together, these findings in animal models, along with clinical data (Rogers, 2011), point to a complex role of DA in risk-based decision making. Our findings suggest that a loss of DA activity in the mPFC or AcbC per se is not sufficient to alter probabilistic choice.

References

Amalric, M., & Koob, G. F. (1987). Depletion of dopamine in the caudate nucleus but not in nucleus accumbens impairs reaction-time performance in rats. The Journal of Neuroscience, 7(7), 2129–2134.

Bechara, A., Dolan, S., Denburg, N., Hindes, A., Anderson, S. W., & Nathan, P. E. (2001). Decision-making deficits, linked to a dysfunctional ventromedial prefrontal cortex, revealed in alcohol and stimulant abusers. Neuropsychologia, 39(4), 376–389.

Berger, B., Gaspar, P., & Verney, C. (1991). Dopaminergic innervation of the cerebral cortex: Unexpected differences between rodents and primates. Trends in Neurosciences, 14(1), 21–27.

Berger, B., Thierry, A. M., Tassin, J. P., & Moyne, M. A. (1976). Dopaminergic innervation of the rat prefrontal cortex: A fluorescence histochemical study. Brain Research, 106(1), 133–145.

Blandini, F., Levandis, G., Bazzini, E., Nappi, G., & Armentero, M. T. (2007). Time-course of nigrostriatal damage, basal ganglia metabolic changes and behavioural alterations following intrastriatal injection of 6-hydroxydopamine in the rat: New clues from an old model. The European Journal of Neuroscience, 25(2), 397–405. doi:10.1111/j.1460-9568.2006.05285.x

Bubser, M. (1994). 6-Hydroxydopamine lesions of the medial prefrontal cortex of rats do not affect dopamine metabolism in the basal ganglia at short and long postsurgical intervals. Neurochemical Research, 19(4), 421–425.

Bubser, M., & Schmidt, W. J. (1990). 6-Hydroxydopamine lesion of the rat prefrontal cortex increases locomotor activity, impairs acquisition of delayed alternation tasks, but does not affect uninterrupted tasks in the radial maze. Behavioural Brain Research, 37(2), 157–168.

Cardinal, R. N., & Howes, N. J. (2005). Effects of lesions of the nucleus accumbens core on choice between small certain rewards and large uncertain rewards in rats. BMC Neuroscience, 6, 37. doi:10.1186/1471-2202-6-37

Cousins, M. S., Atherton, A., Turner, L., & Salamone, J. D. (1996). Nucleus accumbens dopamine depletions alter relative response allocation in a T-maze cost/benefit task. Behavioural Brain Research, 74(1–2), 189–197.

Day, J. J., Jones, J. L., & Carelli, R. M. (2011). Nucleus accumbens neurons encode predicted and ongoing reward costs in rats. The European Journal of Neuroscience, 33(2), 308–321. doi:10.1111/j.1460-9568.2010.07531.x

Day, J. J., Jones, J. L., Wightman, R. M., & Carelli, R. M. (2010). Phasic nucleus accumbens dopamine release encodes effort- and delay-related costs. Biological Psychiatry, 68(3), 306–309. doi:10.1016/j.biopsych.2010.03.026

Ernst, M., Grant, S. J., London, E. D., Contoreggi, C. S., Kimes, A. S., & Spurgeon, L. (2003). Decision making in adolescents with behavior disorders and adults with substance abuse. The American Journal of Psychiatry, 160(1), 33–40.

Fiorillo, C. D., Tobler, P. N., & Schultz, W. (2003). Discrete coding of reward probability and uncertainty by dopamine neurons. Science, 299(5614), 1898–1902. doi:10.1126/science.1077349

Floresco, S. B., & Whelan, J. M. (2009). Perturbations in different forms of cost/benefit decision making induced by repeated amphetamine exposure. Psychopharmacology, 205(2), 189–201. doi:10.1007/s00213-009-1529-0

Gan, J. O., Walton, M. E., & Phillips, P. E. (2010). Dissociable cost and benefit encoding of future rewards by mesolimbic dopamine. Nature Neuroscience, 13(1), 25–27. doi:10.1038/nn.2460

Herrnstein, R. J. (1961). Relative and absolute strength of response as a function of frequency of reinforcement. Journal of the Experimental Analysis of Behavior, 4, 267–272. doi:10.1901/jeab.1961.4-267

Kacelnik, A., & Bateson, M. (1997). Risk-sensitivity: Crossroads for theories of decision-making. Trends in Cognitive Sciences, 1(8), 304–309.

Kheramin, S., Body, S., Ho, M. Y., Velazquez-Martinez, D. N., Bradshaw, C. M., Szabadi, E., … Anderson, I. M. (2004). Effects of orbital prefrontal cortex dopamine depletion on inter-temporal choice: A quantitative analysis. Psychopharmacology, 175(2), 206–214. doi:10.1007/s00213-004-1813-y

Kobayakawa, M., Koyama, S., Mimura, M., & Kawamura, M. (2008). Decision making in Parkinson’s disease: Analysis of behavioral and physiological patterns in the Iowa gambling task. Movement Disorders, 23(4), 547–552. doi:10.1002/mds.21865

Koch, M., & Bubser, M. (1994). Deficient sensorimotor gating after 6-hydroxydopamine lesion of the rat medial prefrontal cortex is reversed by haloperidol. The European Journal of Neuroscience, 6(12), 1837–1845.

Lex, B., & Hauber, W. (2010a). The role of dopamine in the prelimbic cortex and the dorsomedial striatum in instrumental conditioning. Cerebral Cortex, 20(4), 873–883. doi:10.1093/cercor/bhp151

Lex, B., & Hauber, W. (2010b). The role of nucleus accumbens dopamine in outcome encoding in instrumental and Pavlovian conditioning. Neurobiology of Learning and Memory, 93(2), 283–290. doi:10.1016/j.nlm.2009.11.002

Ludewig, K., Paulus, M. P., & Vollenweider, F. X. (2003). Behavioural dysregulation of decision-making in deficit but not nondeficit schizophrenia patients. Psychiatry Research, 119(3), 293–306.

Mai, B., Sommer, S., & Hauber, W. (2012). Motivational states influence effort-based decision making in rats: The role of dopamine in the nucleus accumbens. Cognitive, Affective, & Behavioral Neuroscience, 12(1), 74–84. doi:10.3758/s13415-011-0068-4

McGregor, A., Baker, G., & Roberts, D. C. (1996). Effect of 6-hydroxydopamine lesions of the medial prefrontal cortex on intravenous cocaine self-administration under a progressive ratio schedule of reinforcement. Pharmacology, Biochemistry, and Behavior, 53(1), 5–9.

Meredith, G. E., Ypma, P., & Zahm, D. S. (1995). Effects of dopamine depletion on the morphology of medium spiny neurons in the shell and core of the rat nucleus accumbens. The Journal of Neuroscience, 15(5 Pt 2), 3808–3820.

Mobini, S., Body, S., Ho, M. Y., Bradshaw, C. M., Szabadi, E., Deakin, J. F., & Anderson, I. M. (2002). Effects of lesions of the orbitofrontal cortex on sensitivity to delayed and probabilistic reinforcement. Psychopharmacology, 160(3), 290–298. doi:10.1007/s00213-001-0983-0

Morrow, B. A., Elsworth, J. D., Rasmusson, A. M., & Roth, R. H. (1999). The role of mesoprefrontal dopamine neurons in the acquisition and expression of conditioned fear in the rat. Neuroscience, 92(2), 553–564.

Nasrallah, N. A., Clark, J. J., Collins, A. L., Akers, C. A., Phillips, P. E., & Bernstein, I. L. (2011). Risk preference following adolescent alcohol use is associated with corrupted encoding of costs but not rewards by mesolimbic dopamine. Proceedings of the National Academy of Sciences of the United States of America, 108(13), 5466–5471. doi:10.1073/pnas.1017732108

Nasrallah, N. A., Yang, T. W., & Bernstein, I. L. (2009). Long-term risk preference and suboptimal decision making following adolescent alcohol use. Proceedings of the National Academy of Sciences of the United States of America, 106(41), 17600–17604. doi:10.1073/pnas.0906629106

Ostlund, S. B., & Maidment, N. T. (2012). Dopamine receptor blockade attenuates the general incentive motivational effects of noncontingently delivered rewards and reward-paired cues without affecting their ability to bias action selection. Neuropsychopharmacology, 37(2), 508–519. doi:10.1038/npp.2011.217

Paxinos, G., & Watson, C. (1998). The rat brain in stereotaxic coordinates (4th ed.). San Diego: Academic.

Phillips, P. E., Walton, M. E., & Jhou, T. C. (2007). Calculating utility: Preclinical evidence for cost-benefit analysis by mesolimbic dopamine. Psychopharmacology, 191(3), 483–495. doi:10.1007/s00213-006-0626-6

Pycock, C. J., Carter, C. J., & Kerwin, R. W. (1980). Effect of 6-hydroxydopamine lesions of the medial prefrontal cortex on neurotransmitter systems in subcortical sites in the rat. Journal of Neurochemistry, 34(1), 91–99.

Roesch, M. R., Calu, D. J., & Schoenbaum, G. (2007). Dopamine neurons encode the better option in rats deciding between differently delayed or sized rewards. Nature Neuroscience, 10(12), 1615–1624. doi:10.1038/nn2013

Rogers, R. D. (2011). The roles of dopamine and serotonin in decision making: Evidence from pharmacological experiments in humans. Neuropsychopharmacology, 36(1), 114–132. doi:10.1038/npp.2010.165

Schweimer, J., & Hauber, W. (2006). Dopamine D1 receptors in the anterior cingulate cortex regulate effort-based decision making. Learning & Memory, 13(6), 777–782. doi:10.1101/lm.409306

Schweimer, J., Saft, S., & Hauber, W. (2005). Involvement of catecholamine neurotransmission in the rat anterior cingulate in effort-related decision making. Behavioral Neuroscience, 119(6), 1687–1692. doi:10.1037/0735-7044.119.6.1687

Simon, N. W., Gilbert, R. J., Mayse, J. D., Bizon, J. L., & Setlow, B. (2009). Balancing risk and reward: A rat model of risky decision making. Neuropsychopharmacology, 34(10), 2208–2217. doi:10.1038/npp.2009.48

Simon, N. W., Montgomery, K. S., Beas, B. S., Mitchell, M. R., LaSarge, C. L., Mendez, I. A., … Setlow, B. (2011). Dopaminergic modulation of risky decision-making. The Journal of Neuroscience, 31(48), 17460–17470. doi:10.1523/JNEUROSCI.3772-11.2011

St Onge, J. R., Abhari, H., & Floresco, S. B. (2011). Dissociable contributions by prefrontal D1 and D2 receptors to risk-based decision making. The Journal of Neuroscience, 31(23), 8625–8633. doi:10.1523/JNEUROSCI.1020-11.2011

St Onge, J. R., Chiu, Y. C., & Floresco, S. B. (2010). Differential effects of dopaminergic manipulations on risky choice. Psychopharmacology, 211(2), 209–221. doi:10.1007/s00213-010-1883-y

St Onge, J. R., & Floresco, S. B. (2009). Dopaminergic modulation of risk-based decision making. Neuropsychopharmacology, 34(3), 681–697. doi:10.1038/npp.2008.121

St Onge, J. R., & Floresco, S. B. (2010). Prefrontal cortical contribution to risk-based decision making. Cerebral Cortex, 20(8), 1816–1828. doi:10.1093/cercor/bhp250

Stephens, D. W., & Krebs, J. R. (1986). Foraging theory. Princeton: Princeton University Press.

Stopper, C. M., & Floresco, S. B. (2011). Contributions of the nucleus accumbens and its subregions to different aspects of risk-based decision making. Cognitive, Affective, & Behavioral Neuroscience, 11(1), 97–112. doi:10.3758/s13415-010-0015-9

Sugam, J. A., Day, J. J., Wightman, R. M., & Carelli, R. M. (2012). Phasic nucleus accumbens dopamine encodes risk-based decision-making behavior. Biological Psychiatry, 71(3), 199–205. doi:10.1016/j.biopsych.2011.09.029

Taylor Tavares, J. V., Clark, L., Cannon, D. M., Erickson, K., Drevets, W. C., & Sahakian, B. J. (2007). Distinct profiles of neurocognitive function in unmedicated unipolar depression and bipolar II depression. Biological Psychiatry, 62(8), 917–924. doi:10.1016/j.biopsych.2007.05.034

Walton, M. E., Bannerman, D. M., Alterescu, K., & Rushworth, M. F. (2003). Functional specialization within medial frontal cortex of the anterior cingulate for evaluating effort-related decisions. The Journal of Neuroscience, 23(16), 6475–6479.

Zeeb, F. D., Robbins, T. W., & Winstanley, C. A. (2009). Serotonergic and dopaminergic modulation of gambling behavior as assessed using a novel rat gambling task. Neuropsychopharmacology, 34(10), 2329–2343. doi:10.1038/npp.2009.62

Author note

Supported by a grant from the Deutsche Forschungsgemeinschaft (HA2340/9-1). We are grateful to Igor Nevar for assistance in animal husbandry.

Cite this article

Mai, B., Hauber, W. Intact risk-based decision making in rats with prefrontal or accumbens dopamine depletion. Cogn Affect Behav Neurosci 12, 719–729 (2012). https://doi.org/10.3758/s13415-012-0115-9

DOI: https://doi.org/10.3758/s13415-012-0115-9