Abstract

Important characteristics of the environment can be represented in the temporal pattern of sensory stimulation. In two experiments, we compared accuracy of temporal processing by different modalities. Experiment 1 examined binary categorization of rate for visual (V) or vibrotactile (T) stimulus pulses presented at either 4 or 6 Hz. Inter-pulse intervals were either constant or variable, perturbed by random Gaussian variates. Subjects categorized the rate of T pulse sequences more accurately than V sequences. In V conditions only, subjects disproportionately tended to mis-categorize 4-Hz pulse rates, for all but the most variable sequences. In Experiment 2, we compared gap detection thresholds across modalities, using the same V and T pulses from Experiment 1, as well as bimodal (VT) pulses. Visual gap detection thresholds were larger (3\(\times \)) than tactile thresholds. Additionally, performance with VT stimuli seemed to be nearly completely dominated by their T components. Together, these results suggest (i) that vibrotactile temporal acuity surpasses visual temporal acuity, and (ii) that vibrotactile stimulation has considerable, untapped potential to convey temporal information like that needed for eyes-free alerting signals.

Introduction

Important characteristics of the environment can be represented in temporal patterns of stimulation within multiple sensory modalities. Much is known about the eyes’ and ears’ ability to process temporal stimulation, but relatively little is known about the processing capacity of our largest sensory organ, the skin. Despite that limited knowledge, various applications make use of time-varying vibrotactile cues. For example, a mobile phone’s distinctive pattern of vibrations can identify the caller; vibrations from a smart watch can remind the wearer to perform some action; and vibrations from a car’s steering wheel can warn of a possible collision (Chiasson et al., 2003; Elliott et al., 2009; McGrath et al., 2004). These and other potential uses of the skin as a communication channel make it important to know the skin’s capacity to process temporal patterns of stimulation, and how limitations on that capacity compare to other sensory channels.

It is well established that auditory temporal processing surpasses both tactile and visual temporal processing (Desloge et al., 2014; Jones et al., 2009; Rammsayer, 2014; Recanzone, 2003; Stauffer et al., 2012; Villalonga et al., 2021; Welch et al., 1986). Fewer psychophysical studies have directly compared tactile and visual temporal processing, and these visuo-tactile comparisons yield mixed results. Subjects tend to have slightly higher sensitivity to temporal information conveyed by tactile signals compared to visual (Azari et al., 2020; Ball et al., 2017; Bresciani et al., 2008), although there is also evidence of higher temporal sensitivity with visual signals than with tactile signals (Grondin & Rousseau, 1991). Notably, differences between visual and tactile temporal sensitivity generally either fail to reach statistical significance (Ball et al., 2017; Jones et al., 2009), or are easily negated by changing the task parameters (e.g., unpredictably varying stimulus modality from trial to trial, or using time intervals >1 s; see Azari et al., 2020; Grondin & Rousseau, 1991).

Many previous studies of temporal sensitivity asked subjects to judge the duration of single stimuli. It is not guaranteed that results with judgments of single intervals can be generalized to more complex temporal stimuli such as temporal frequency or rate (Breska & Ivry, 2018; Rammsayer & Brandler, 2004; Teki et al., 2011). Temporal frequency is the rhythmic pattern of stimulation typically described as a beat-based temporal structure. A recent study suggested that perceived rate is built up over time from the perceived durations of component intervals (Motala et al., 2020). This finding suggests interval-based timing mechanisms contribute to rate perception, and that modality differences in interval timing could also affect perception of more complex temporal structures.

In a recent study, we investigated how stimulus modality affected ability to classify pulse rates as “fast” (6 Hz) or “slow” (3 Hz), as well as the impact of each inter-pulse interval on rate perception (Villalonga et al., 2020). With stimuli delivered as trains of either vibrotactile or visual pulses, subjects categorized stimulus rate equally well with either modality of stimulation. That result held even with non-isochronous pulse trains (i.e., when the regularity in timing of successive pulses was degraded by additive temporal noise). Interestingly, the earliest inter-pulse intervals in each pulse sequence had a larger impact on accurate rate categorization than later intervals.

In the present study, we tested whether more challenging conditions would produce similar results, and whether sensitivity to individual intervals between pulses plays a role in rate perception. Specifically, we tested temporal acuity for unfilled intervals across visual and tactile modalities, taking convergent approaches in a pair of experiments. Experiment 1, a rate categorization task, showed that sensitivity to rate information depends upon sensory modality, with improved performance on tactile trials compared to visual. Experiment 2 extended this finding to gap detection: visual gap detection thresholds were significantly larger than tactile thresholds, and bimodal thresholds were largely dominated by tactile components.

Experiment 1: Using Temporal Noise to Investigate Rate Processing

Several different sources of noise can corrupt sensory responses. Although intrinsic noise is present in any perceptual process, external noise added to a stimulus under controlled conditions can reveal the computations governing perception of that stimulus (Allard et al., 2015; Pelli & Farell, 1999). To quantify perceptual efficiency (Lu & Dosher, 1999) or to characterize information processing during particular tasks (Gold, 2014; Gold et al., 2004), vision researchers have injected noise into luminance (Gold, 2014; Hall et al., 2014) and orientation stimuli (Girshick et al., 2011). We adapted this approach to compare temporal processing of tactile and visual stimuli. Specifically, we injected varying amounts of temporal noise into both visual and vibrotactile stimulus sequences. Subjects were required to categorize each sequence as having a mean rate of either 4 or 6 Hz. On some trials, the sequence of pulses was isochronous (i.e., temporally regular), but on most trials, this temporal regularity was perturbed by random variation in the timing of pulses. We asked whether increasing amounts of temporal noise would differentially impair rate categorization in the two modalities, revealing a differential robustness in the modalities’ capacity to transmit rate information.

Method

Subjects

Twenty-eight subjects were tested (20 female, 6 male, 2 declined to identify; mean age = 18.7 years, SD = 0.9). This sample size gave us 88.3% power to observe an effect size comparable to that in our previous, related experiment (\(\eta _p^2\) = 0.16; Villalonga et al., 2020). Experimental procedures were approved by Brandeis University’s Institutional Review Board and were conducted in accordance with the Declaration of Helsinki. Subjects gave written informed consent prior to participation.

Apparatus and Stimuli

The flow of the experiment and stimulus delivery was controlled by PsychoPy software (Peirce et al., 2019) running on an Apple Mac Mini computer. The delivery and timing of stimulus pulses were controlled by an Arduino microcontroller (Banzi & Shiloh, 2014) that communicated with the computer via serial port.

Apparatus for stimulus presentation. The LED and tactor were embedded in a bespoke hand cradle and used for presentation of visual and vibrotactile stimuli, respectively. Multiple interchangeable components in the hand cradle allowed us to accommodate hands of different widths and finger lengths in order to ensure that the tip of a subject’s index finger was positioned atop the vibrating tactor.

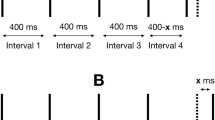

In Experiment 1, a series of 50-ms pulses (grey vertical bars, A & B) was presented on each trial. The stimulus terminated when 10 pulses had been presented or when the subject responded, whichever came first. Pulse sequences presented in the 0% noise condition (A) were isochronous, with each IPI fixed at the nominal IPI for the given rate (C). On trials with noise, each nominal IPI in the trial sequence was independently perturbed by a random variate (B). Scaling noise by the nominal rate produced IPI sampling distributions for 4-Hz and 6-Hz trials that were unequal in variance (D-G). Sampling distributions were truncated to prevent IPIs \(\le 1\) ms. Note: Diagrams of pulse sequences (A & B) are not drawn to scale.

Vibrotactile (T) pulses, each 50 ms long, were delivered to the distal pad of the subject’s left index finger using a C-2 tactor (Engineering Acoustics, Inc., Casselberry, FL). Pulses were generated by driving a 7.87-mm diameter, metal tactor element up and down against the skin at 250 Hz. The Arduino controlled activation of the tactor via a DRV2605L Haptic Motor Driver board (Texas Instruments, Dallas, TX, USA). Visual (V) pulses were 50-ms flashes produced by a 5-mm diameter LED with a red lens. The initial, positive phase of vision’s temporal impulse response function grows more compressed in time with increasing mean luminance (Kelly, 1971). So, to optimize the precision of visual responses to our stimulus pulses, LED luminance was set at a value in the upper end of its safe operating range. As measured by filling the 1 degree field of a Minolta LS-1000 photometer, mean LED luminance was 968.5 cd/m\(^2\).

The tactor and LED were both fixed in the adjustable, 3D-printed hand cradle, shown in Fig. 1. The LED’s spatial adjacency to the tactor promoted approximate co-localization of visual and vibrotactile stimuli (Badde et al., 2018). The cradle held a subject’s left hand comfortably, ensuring that the tip of the index finger was located directly on the tactor. Removable 3D-printed inserts adjusted the hand cradle’s dimensions for best fit to individual subjects’ hands (Donelson & Gordon, 1995).

Subjects were monitored throughout the experiment to ensure that they kept their finger in the correct position on the tactor, with their eyes open. Subjects were instructed to fixate on the LED throughout the experiment. To prevent auditory signals generated by the tactor’s vibrations from impacting subjects’ responses, masking white noise was delivered to subjects over noise-canceling headphones.

On each trial, subjects received a sequence of 50-ms stimulus pulses separated by empty inter-pulse intervals (IPIs; Fig. 2). All pulses on a single trial were presented either visually, as LED flashes, or as vibrotactile pulses to the finger tip. Equal numbers of sequences with the two mean rates, 4 or 6 Hz, were randomly intermixed across trials. On 20% of trials, pulses were isochronous: each 50-ms pulse was separated from the next by either 200-ms IPIs (4-Hz trials) or 116-ms IPIs (6-Hz trials; Fig. 2A). On the other 80% of trials, pulse sequences were temporally stochastic, with a random variate (hereafter, “noise”) added to each IPI in a sequence (Fig. 2B). The noise \(x_i\) was generated by sampling zero-mean Gaussian distributions, \(x_i \sim N(0,\sigma ^{2})\), where \(\sigma \) varied by both noise level and pulse rate. For the isochronous, 0% noise condition, \(\sigma \) = 0 (Fig. 2C). Non-isochronous sequences were chosen randomly from stochastic sequences with one of four noise levels: 5, 10, 20, and 40% noise. These four noise levels were operationally defined by scaling the standard deviation of the IPI sampling distribution, where \(\sigma \) = 0.05k, 0.1k, 0.2k, and 0.4k, respectively, with k being the nominal period in milliseconds for the rate condition (4 Hz: k = 250; 6 Hz: k = 166). Variates were sampled independently for each IPI in a sequence and for each trial. The sampled distributions were truncated at the lower tail to prevent IPIs \(\le \)1 ms. Figure 2 depicts the IPI sampling distributions for each noise level (panels C-G).
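The sampling scheme described above can be illustrated with a minimal Python sketch. It is not the authors' stimulus code: the function name `sample_ipis`, the `RATES` table, and the use of redrawing (rejection sampling) to implement the lower-tail truncation are assumptions made for illustration. Only the pulse duration, nominal IPIs, nominal periods k, and noise fractions come from the text.

```python
import random

# Nominal period k (ms) and nominal IPI (ms) per rate, as reported in the text.
RATES = {4: {"k": 250, "ipi": 200}, 6: {"k": 166, "ipi": 116}}


def sample_ipis(rate_hz, noise_frac, n_ipis=9, min_ipi_ms=1.0, rng=None):
    """Return the inter-pulse intervals (ms) for one hypothetical trial.

    noise_frac is 0.0, 0.05, 0.1, 0.2, or 0.4; sigma = noise_frac * k.
    """
    rng = rng or random.Random()
    sigma = noise_frac * RATES[rate_hz]["k"]
    nominal = RATES[rate_hz]["ipi"]
    ipis = []
    for _ in range(n_ipis):
        ipi = nominal + rng.gauss(0.0, sigma)
        # Assumed truncation scheme: redraw until the IPI exceeds 1 ms.
        while ipi <= min_ipi_ms:
            ipi = nominal + rng.gauss(0.0, sigma)
        ipis.append(ipi)
    return ipis


if __name__ == "__main__":
    print(sample_ipis(4, 0.0))                         # isochronous 4-Hz trial
    print(sample_ipis(6, 0.4, rng=random.Random(1)))   # 40% noise, 6-Hz trial
```

Because sigma is tied to k, the 4-Hz distributions are wider than the 6-Hz distributions at every non-zero noise level, which is the unequal-variance property that motivates the non-parametric analysis.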

Task

A 500-ms tone (440 Hz) alerted the subject to the start of a trial. Then, 500 ms later, the trial’s pulse sequence began and continued until the subject responded or until 10 pulses (nine IPIs) had been presented. The maximum number of pulses provided on each trial was determined based on previous work in our laboratory that showed subjects invariably needed fewer than 10 pulses to categorize a sequence’s rate (Villalonga et al., 2020). Subjects responded by categorizing the pulse rate as “slow” or “fast”, which were the correct responses for 4-Hz and 6-Hz trials, respectively.

Subjects communicated their judgments by pressing one of two computer keyboard keys with their free, right hand. The keys assigned to “slow” and “fast” judgments were counterbalanced between subjects. Subjects were instructed to respond as soon as they made a decision about the pulse rate, and could therefore end the trial before the entire stimulus (10 pulses separated by 9 IPIs) had been presented. Including the time elapsed by the stimulus itself (on average, 1.80 s for 4-Hz stimuli and 1.54 s for 6-Hz stimuli), subjects were allowed up to 8 s to respond, after which the trial automatically ended. Successive trials were separated by an inter-trial interval of 1 s.

To learn the response mapping, each subject first completed a practice block of 20 trials with feedback after each trial. Pulse modality and rate were randomized across trials of the practice block, but noise was held constant at 0% to familiarize subjects with the stimuli and task. Following the practice block, subjects completed 16 test blocks of 50 trials each, yielding a total of 800 test trials from each subject. Trials were blocked by modality, alternating between V and T from block to block. Block order was counterbalanced between subjects. Pulse rate and noise level were randomized from trial to trial within each block.

Data Analysis

Data were analyzed in R (R Core Team, 2021). Trials on which a subject failed to respond were excluded from analysis (n=95, 0.4% of trials). Trials with premature responses, defined as a response that occurred before the onset of the second pulse in the sequence (i.e., a response without any rate information), were also excluded (n=47, 0.2% of trials).

Sensitivity (A) and decision criterion (B) by noise level for each modality. On average, subjects were better able to categorize rate information conveyed by T pulse sequences than by V pulse sequences. Subjects’ decision criteria varied between modalities, revealing they were more likely to erroneously categorize V sequences as “fast”. Error bars reflect 95% confidence intervals, n=28.

Subjects’ performance was defined by non-parametric estimates of sensitivity (A) and decision criterion (b), computed using the method proposed by Zhang and Mueller (2005). The choice of non-parametric rather than parametric measures was dictated by the fact that for all noise levels except 0%, IPIs for 4- and 6-Hz stimuli came from distributions of unequal variance (see Fig. 2). We calculated subject-level A and b estimates separately for each of the 10 conditions (2 modalities\(~\times ~\)5 noise levels). For these estimates, a hit was defined as a response of “slow” to a 4-Hz stimulus, and a false alarm as a response of “slow” to a 6-Hz stimulus. A higher value of A indicates greater accuracy in categorizing stimulus rate. The value of b reflects the location of a subject’s criterion for deciding whether a stimulus rate was 4 or 6 Hz, and therefore the relative probability of each possible misattribution error. Specifically, a value of \(b=1\) means that the subject used a neutral criterion, favoring neither response; that is, \(b=1\) means that both types of misattributions were equally likely. \(b<1\) signifies that mistaken “slow” responses exceeded mistaken “fast” responses, and \(b>1\) signifies that mistaken “fast” responses exceeded mistaken “slow” responses.
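For readers who want to see how A and b follow from hit and false-alarm rates, here is a short Python sketch. The piecewise formulas are transcribed from our reading of Zhang and Mueller (2005) and should be checked against that paper before use. The function name and the error handling are assumptions, and this is not the authors' R code.

```python
def zm_sensitivity_and_bias(hit_rate, fa_rate):
    """Return (A, b) for one condition from hit rate H and false-alarm rate F.

    In Experiment 1 terms: H = P("slow" | 4 Hz), F = P("slow" | 6 Hz).
    """
    h, f = hit_rate, fa_rate
    if f > h:
        raise ValueError("formulas assume F <= H (at- or above-chance performance)")
    if h == 0.0 or f == 1.0:
        raise ValueError("degenerate corner (H = 0 or F = 1) is not handled here")
    if f <= 0.5 <= h:
        a = 0.75 + (h - f) / 4 - f * (1 - h)
        b = (5 - 4 * h) / (1 + 4 * f)
    elif h < 0.5:  # F <= H < 0.5
        a = 0.75 + (h - f) / 4 - f / (4 * h)
        b = (h * h + h) / (h * h + f)
    else:  # 0.5 < F <= H
        a = 0.75 + (h - f) / 4 - (1 - h) / (4 * (1 - f))
        b = ((1 - f) ** 2 + (1 - h)) / ((1 - f) ** 2 + (1 - f))
    return a, b


if __name__ == "__main__":
    # Symmetric errors give a neutral criterion (b close to 1).
    print(zm_sensitivity_and_bias(0.8, 0.2))
    # More mistaken "slow" than mistaken "fast" responses gives b < 1.
    print(zm_sensitivity_and_bias(0.9, 0.4))
```

With symmetric error rates (H = 0.8, F = 0.2) the sketch returns b near 1, matching the neutral-criterion interpretation in the text. Raising F relative to 1 − H pushes b below 1.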

Separate within-subject, two-way omnibus ANOVAs (afex package; Singmann et al., 2022) tested for differences between modalities and among noise levels for both A and b. We evaluated sphericity across noise levels using Mauchly’s test; violations were corrected with Greenhouse-Geisser \(\varepsilon \) (Verma, 2015). Using the emmeans package (Lenth, 2022), we tested for a simple effect of modality in each noise level, for both dependent variables. Additionally, we tested whether \(b=1\) in each condition using single-sample t-tests, adjusting p-values and confidence intervals with a Bonferroni correction for 10 estimates.

Error rate for each combination of modality and noise level. A. Subjects had similar accuracy on 4-Hz and 6-Hz T trials. B. Subjects made more errors on 4-Hz V trials than 6-Hz V trials, that is, they were more likely to label a V pulse rate as “fast” overall, regardless of nominal rate. Error bars reflect within-subject standard error, n=28.

Results

Figure 3A shows mean sensitivity (A) for each combination of noise level and modality. As expected, on average, sensitivity significantly declined as noise increased (Table 1). Additionally, modality had a significant effect on sensitivity (Table 1), with higher T sensitivity compared to V, on average (Fig. 3A). Post hoc tests for a simple effect of modality at each noise level showed significantly better sensitivity on T trials than on V trials with 5% noise (mean difference = 0.029, 95% CI: [0.010, 0.047]), and 40% noise (mean diff. = 0.05, 95% CI: [0.024, 0.076]). Sensitivity did not significantly differ between modalities with 0%, 10%, or 20% noise (Table 1). Despite significant modality effects at some but not all of the noise levels, we did not observe a significant interaction between modality and noise level (Table 1).

Figure 3B shows the mean decision criterion (b) in each condition. A within-subjects ANOVA yielded a significant main effect of modality (Table 1). Subjects used significantly different decision criteria between modalities on trials with low noise, that is, 0-20% noise (Table 1). Over that range, the mean difference in criterion (\(b_{diff} = b_T - b_V\)) was, at each noise level: 0% noise \(b_{diff}\) = -0.265, 95% CI: (-0.434, -0.096); 5% noise = -0.364 (-0.560, -0.168); 10% noise = -0.427 (-0.623, -0.232); 20% noise = -0.403 (-0.607, -0.200) (see Table 1 for p-values and effect sizes). On these low-noise trials, subjects’ mean V criterion significantly differed from neutral (\(b_V \ne \) 1, Table 2). With the highest noise level (40%), subjects’ mean V criterion shifted toward neutral, b = 1 (Table 2), similar to subjects’ mean T criterion (\(b_{diff}\) = 0.029, 95% CI [-0.176, 0.234]; Table 1, Fig. 3B). Subjects used a fairly consistent decision criterion on T trials over all five noise levels (blue line in Fig. 3B). Although that criterion tended to favor “fast” responses (that is, \(b_T>\) 1), it did not significantly differ from neutral (b = 1) in any of the five noise levels (Table 2).

Experiment 1 Discussion

Sensitivity

Adding random temporal noise to pulse trains impaired categorization of rate, and did so similarly for both V and T stimuli. On average, however, vibrotactile rate was more accurately categorized than visual rate, regardless of how much variability was injected into the signal. Previous comparisons of visual and tactile temporal pattern perception produced comparable results, that is, temporal patterns presented as tactile stimuli are processed more accurately than patterns presented as visual stimuli (Espinoza-Monroy & de Lafuente, 2021; Kang et al., 2018). For example, Kang et al. (2018) found that subjects could detect repeats of random pulsatile patterns better with tactile pulses than with visual pulses. The authors also demonstrated that perceptual learning of temporal patterns was more likely to occur with tactile than with visual pulse sequences (Kang et al., 2018). Our study extends these findings to the case of rate categorization, and demonstrates that with considerable temporal variability, subjects categorize vibrotactile rate more accurately than visual rate.

Decision Criterion

The difference in decision criterion between modalities may be best understood in terms of tendency to make errors in judging each rate category, 4 Hz and 6 Hz. Specifically, when b = 1, the neutral criterion value, errors were equally likely for both stimuli; when \(b>1\), 4-Hz stimuli were more likely to be deemed 6-Hz than the reverse. Figure 4 depicts error rates by modality. Subjects made similar proportions of errors on 4-Hz T trials and 6-Hz T trials (Fig. 4A), suggesting that a neutral, unbiased decision criterion was used on T trials regardless of noise level (\(b_T \sim 1\), Table 2, Fig. 3B). Subjects used a different criterion on most V trials, one that reflects the higher error rate on 4-Hz V trials compared to 6-Hz V trials (Fig. 4B). In other words, on V trials, subjects were more likely to incorrectly label a 4-Hz V stimulus “fast” than they were to incorrectly label a 6-Hz V stimulus “slow”.

One potential interpretation of this finding is that most V pulse sequences tended to appear “fast” to subjects, regardless of the nominal rate. Studies of perceived interval duration across sensory modalities provide indirect support for this possibility. For example, with physical duration equated, visual stimuli tend to appear to last longer than tactile stimuli (Azari et al., 2020; Jones et al., 2009; Tomassini et al., 2011). If the perceptual response to an individual V pulse lasted longer than the perceptual response to a T pulse, then the unfilled IPIs between V pulses may have appeared shorter than their T counterparts, increasing the likelihood that both 4- and 6-Hz V stimuli would tend to seem fast. While the complexity of this experiment’s stimuli and task foreclosed testing the proposition that individual V pulses seem to last longer than individual T pulses, we expand upon the potential role of sensory persistence in the General Discussion.

Experiment 2: Unimodal and Bimodal Temporal Sensitivity

For another perspective on temporal resolution across visual and tactile pulse perception, we measured thresholds for detecting an unfilled interval between two successive visual pulses and two successive vibrotactile pulses. Guided by the results of Experiment 1, where temporal acuity was poorer with visual stimuli, we expected higher V gap detection thresholds in Experiment 2.

In addition to measuring gap detection thresholds for unimodal V and T pulses, we measured the gap detection threshold for bimodal stimuli comprising temporally coterminous T and V pulses. The pooling or combination of information from multiple sources has long been among neuroscience’s major concerns (Chandrasekaran, 2017; Parker, 1998; Sinnett et al., 2008). When those distinct sources are multiple sensory modalities, their interaction can take many different forms, including facilitative combination (Forster et al., 2002; Sperdin et al., 2009), destructive competition (Sinnett et al., 2008; Sun & Sekuler, 2021), compromise (Crommett et al., 2019), and unimodal dominance (Burr et al., 2009; Colavita, 1974; Villalonga et al., 2021). With one exception, these studies did not explicitly require temporal judgments. The exception, Villalonga et al. (2021), measured subjects’ ability to categorize the temporal deviation of a single pulse presented after an isochronous series of pulses. Pulses were either auditory, vibrotactile, or a bimodal combination of the two. Temporal acuity for the auditory stimulus was superior to acuity for the timing of the vibrotactile pulse. Importantly, performance with bimodal stimuli seemed to be dominated by the more reliably-categorized auditory stimulus. Although the stimulus structure and task demands in Villalonga et al.’s study distinguished it from the paradigm we used in Experiment 2, which entailed just two pulses per trial, both tasks have some dependence on temporal acuity. That connection led us to predict that in our task, too, the stimulus from the modality with better temporal acuity would dominate bimodal performance.

Method

Subjects

Fourteen subjects (11 female, 2 male, 1 declined to identify; mean age = 22.3 years, SD = 3.4) participated in the experiment. With n=14 subjects, we had 99.9% power to observe an effect size reported in a similar visuo-tactile task (\(\eta _p^2 = 0.45\); Bultitude et al., 2016). Experimental procedures were approved by Brandeis University’s Institutional Review Board and were conducted in accordance with the Declaration of Helsinki. Subjects gave written informed consent prior to participation, and none had participated in Experiment 1.

Apparatus and Stimuli

Using the same apparatus as in Experiment 1 (Fig. 1), MATLAB and the Psychophysics Toolbox controlled stimulus presentation and collected responses (Brainard, 1997; Kleiner et al., 2007; Matlab, 2017; Pelli, 1997). In addition to the same V and T stimuli as in Experiment 1, we included a third condition in which concurrent V and T components were paired to produce a bimodal, visuo-tactile (VT) stimulus. In the VT condition, coterminous unimodal components were presented together.

Diagram of the trial structure in Experiment 2. Subjects received either a stimulus comprising a single, continuous pulse (\(\varvec{SP_i}\)) followed by a double-pulse stimulus (\(\varvec{DP_i}\); top), or \(\varvec{DP_i}\) followed by \(\varvec{SP_i}\) (bottom). The IPI \(\varvec{X_i}\) separating pulses in \(\varvec{DP_i}\) ranged from 2 to 32 ms. All pulses presented in a single trial came from the same modality condition (V, T, or VT). After the second stimulus, subjects had up to 2 s to indicate whether \(\varvec{DP_i}\) had occurred first or second.

Task

A 2IFC discrimination paradigm determined the temporal threshold for detecting a brief, unfilled interval between successive stimulus pulses (Fig. 5). A 200-ms long tone announced the beginning of a trial. Then, 800 ms later, two different stimuli were presented in random order. One was the “double-pulse” stimulus (\(\varvec{DP_i}\)), comprising two 50-ms pulses separated by an empty IPI with duration \(= \varvec{X_i}\); the other was the “single-pulse” stimulus (\(\varvec{SP_i}\)) comprising a single uninterrupted pulse whose total duration matched the total duration of the accompanying double-pulse stimulus. Note that, to ensure that differences in total stimulus duration were not correlated with \(\varvec{X_i}\), the duration of \(\varvec{SP_i}\) was always equal to the total duration of \(\varvec{DP_i}\) = 100 ms + \(\varvec{X_i}\). For each trial i, the value of \(\varvec{X_i}\) was drawn randomly from a discrete uniform distribution of five different gap durations, \(\varvec{X_i}= \{ 2, 4, 8, 16, 32 \}\) ms. Previous research (Desloge et al., 2014; Gescheider et al., 2003; Van Doren et al., 1990) and our own preliminary testing suggested that this range would likely encompass the thresholds across conditions. Both stimuli, \(\varvec{DP_i}\) and \(\varvec{SP_i}\), were always presented in the same modality on each trial (V, T, or VT), and were separated by an inter-stimulus interval of 1 s. The subject indicated whether \(\varvec{DP_i}\) occurred first or second on that trial, using the computer keyboard, as in Experiment 1, with response key mappings counterbalanced across subjects. Following the termination of the second stimulus, subjects had up to 2 s to respond (shortened from Experiment 1 in which subjects responded on average in well under 2 s). Trials were separated by an inter-trial interval of 0.5 s.
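The duration-matching logic of the 2IFC trial can be sketched in a few lines of Python. This is an illustrative reconstruction, not the MATLAB/Psychophysics Toolbox code used in the study. The function name `make_trial`, the dictionary layout, and the `("on"/"off", duration)` segment encoding are all hypothetical.

```python
import random

GAPS_MS = (2, 4, 8, 16, 32)  # gap durations X_i reported in the text
PULSE_MS = 50                # duration of each pulse in the double-pulse stimulus


def make_trial(modality, rng=None):
    """Build one hypothetical 2IFC trial for modality 'V', 'T', or 'VT'."""
    rng = rng or random.Random()
    gap = rng.choice(GAPS_MS)  # discrete uniform draw of X_i
    double_pulse = [("on", PULSE_MS), ("off", gap), ("on", PULSE_MS)]
    # The single pulse lasts 100 ms + X_i, so total duration carries no cue.
    single_pulse = [("on", 2 * PULSE_MS + gap)]
    dp_first = rng.random() < 0.5  # random presentation order
    stimuli = [double_pulse, single_pulse] if dp_first else [single_pulse, double_pulse]
    return {"modality": modality, "gap_ms": gap, "dp_first": dp_first, "stimuli": stimuli}


if __name__ == "__main__":
    trial = make_trial("VT", rng=random.Random(0))
    print(trial["gap_ms"], trial["dp_first"], trial["stimuli"])
```

Because both stimuli always span the same total time, a subject can only identify the double-pulse interval by detecting the gap itself.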

Subjects completed one practice block of 10 trials per modality condition, with the option of repeating each practice block until they felt confident to proceed with the task. Trials in the practice block were graded in difficulty, beginning with four trials where \(\varvec{X_i} = 50\) ms and ending with six trials where \(\varvec{X_i} = 32\) ms (the easiest experimental condition). Test trials, block randomized by modality (V, T, or VT), were presented in blocks of 50 trials with stimulus order and gap duration randomized from trial to trial. During the break between blocks, subjects received feedback in the form of percent correct for the last completed block, and were informed which modality would be tested next. Subjects completed a total of three test blocks in each modality, producing a total of 450 test trials per subject (30 trials per gap duration, per modality condition).

Data Analysis

Data were analyzed in R (R Core Team, 2021). As in Experiment 1, we excluded data from trials where subjects failed to respond (57 trials, 0.9% of all trials). For two reasons, we did not exclude any trials based on premature responding. First, our 2IFC design may have led to very short RTs on trials in which \(\varvec{DP_i}\) appeared first. Second, when we examined the distribution of responses on trials with RT < 100 ms (96 trials, 1.5% of the data), the majority of these responses occurred on trials with the easiest (i.e., supra-threshold) conditions. Reasoning that these trials produced valid measurements, we chose not to exclude them from analysis. Following exclusions, the remaining data were aggregated by subject and condition.

To estimate gap detection threshold in each modality condition, we fit group-level, modality-specific logistic psychometric functions (PFs) using the glm function in R, with a 2AFC binomial link function from R’s psyphy package (Knoblauch, 2002; Knoblauch & Maloney, 2012). We also fit subject-level PFs for each condition, using the glmer function from R’s lme4 package (Bates et al., 2015). In the subject-level model, we included intercepts and slopes as random effects. We estimated each modality’s gap detection threshold by taking the inverse of the group-level PF at \(p = 0.76\). This defined the gap duration that would produce 76% correct (\(d' = 1\); Klein, 2001). To generate the standard error for each threshold, we generated three bootstrap distributions (one for each modality-specific threshold) with 5,000 re-samples, using the boot function from R’s boot package (Canty & Ripley, 2021). To compare unimodal to bimodal performance and test our hypothesis that the more sensitive modality would dominate bimodal judgments, we used Pearson’s product-moment correlation tests to compare individual subjects’ mean accuracy from each unimodal condition to the bimodal condition.
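The link between \(d' = 1\) and 76% correct, and the inversion of a fitted PF to obtain a threshold, can be made concrete with a small Python sketch. It assumes an unbiased 2AFC observer, for whom proportion correct equals \(\Phi (d'/\sqrt{2})\), and a logistic PF with a fixed guess rate of 0.5 and gap duration in ms as a linear predictor. The text does not state whether gap duration was transformed before fitting, and the coefficients used below are hypothetical, not fitted values from the study.

```python
import math


def pc_2afc_from_dprime(d_prime):
    """Proportion correct for an unbiased 2AFC observer: Phi(d' / sqrt(2))."""
    # Phi(x) = 0.5 * (1 + erf(x / sqrt(2))), so Phi(d'/sqrt(2)) uses erf(d'/2).
    return 0.5 * (1.0 + math.erf(d_prime / 2.0))


def pf_2afc_logistic(gap_ms, intercept, slope):
    """Logistic PF with a 0.5 guess rate: p = 0.5 + 0.5 * logistic(eta)."""
    eta = intercept + slope * gap_ms
    return 0.5 + 0.5 / (1.0 + math.exp(-eta))


def threshold_from_pf(p_target, intercept, slope):
    """Invert the PF: the gap (ms) at which predicted accuracy equals p_target."""
    # 2AFC logit link: eta = log((p - 0.5) / (1 - p)).
    eta = math.log((p_target - 0.5) / (1.0 - p_target))
    return (eta - intercept) / slope


if __name__ == "__main__":
    print(round(pc_2afc_from_dprime(1.0), 3))      # approximately 0.76
    # Hypothetical coefficients, for illustration only.
    print(threshold_from_pf(0.76, intercept=-1.0, slope=0.2))
```

In a bootstrap like the one described above, the same inversion would be applied to the coefficients of each refit, and the standard deviation of the resulting thresholds would serve as the standard error.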

Results

Psychometric modeling revealed differences in the two-pulse thresholds for V, T, and VT stimuli. Group-level gap detection thresholds for the three types of stimuli are shown by the dashed vertical lines in Fig. 6A, and again, with error bars added, by the points in Fig. 6B. Subject-level PFs followed the same overall trend as the group model (see Supplementary Fig. 1). The estimated V gap threshold was 15.5 ms (SE: 0.78 ms). In contrast, the estimated T gap threshold was 5.0 ms (SE: 0.37 ms), and the estimated VT threshold was 5.9 ms (SE: 0.42 ms).

Psychometric modeling of Experiment 2 data. A: Group-level logistic psychometric functions (PFs) fit using maximum likelihood estimation. Ribbons around each PF represent the PF’s standard error. Points show the mean measured accuracy in each condition; error bars around each point represent within-subject standard error. Dashed vertical lines denote the estimated sensory threshold in each modality, which we defined at 76% correct (\(d' = 1\); black dashed horizontal line). B: Average thresholds and bootstrapped standard errors for V, T, and VT stimuli.

Fig. 7. Correlation of subject-level unimodal and bimodal performance in Experiment 2. A: Tactile vs. bimodal accuracy. B: Visual vs. bimodal accuracy. Points are mean accuracy for individual subjects. The dashed black line represents the value expected if bimodal performance (on the vertical axis) were determined entirely by the unimodal performance (on the horizontal axis). Also shown in each panel is the 95% confidence region around the maximum likelihood line of best fit.

To further examine performance at the level of individual subjects, we calculated each subject’s mean accuracy over all five levels of \( \varvec{X_i}\), for each modality condition separately. Subjects were most accurate on T trials (mean accuracy 81.12%, 0.99% within-subject standard error [WSSE]), followed by VT trials (79.95%, 0.91% WSSE), and least accurate on V trials (65.98%, 1.49% WSSE). Subject-level T accuracy was strongly correlated with VT accuracy, \(R^2\) = 0.78, p < 0.001. In contrast, subject-level V accuracy was not significantly correlated with VT accuracy, \(R^2\) = 0.16, p = 0.153. Figure 7 shows the results for these correlations. To test whether bimodal performance might be attributable to complete dominance by either unimodal cue, we compared the slope of the best-fit maximum likelihood line (shown in blue in Fig. 7) to 1.0, the theoretical case for unimodal cue dominance (shown in black in Fig. 7). Both slopes significantly differed from 1 (T: \(\beta \) = 0.698, p = 0.015; V: \(\beta \) = 0.429, p = 0.020). Taken together, these results suggest that even though there was not complete dominance by either unimodal cue in the bimodal percept, T performance was the stronger predictor of VT performance.
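The slope comparison described above can be sketched as follows. This is a minimal Python illustration, assuming an ordinary least-squares line (which coincides with the maximum likelihood fit under Gaussian errors); the function name and the accuracy values are hypothetical and are not the data from this study. It returns the slope, \(R^2\), and the t statistic for the null hypothesis that the slope equals 1, the value expected under complete unimodal dominance.

```python
import math


def ols_slope_test(x, y, null_slope=1.0):
    """Fit y = a + b*x by least squares and test b against null_slope."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need two equal-length sequences with at least 3 points")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = sxy ** 2 / (sxx * syy)          # squared Pearson correlation
    rss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    se_slope = math.sqrt(rss / (n - 2) / sxx)   # standard error of the slope
    t_stat = (slope - null_slope) / se_slope    # compare to t with n - 2 df
    return {"slope": slope, "intercept": intercept, "r_squared": r_squared,
            "t": t_stat, "df": n - 2}


# Hypothetical per-subject mean accuracies (proportion correct).
unimodal = [0.70, 0.75, 0.80, 0.85, 0.90]
bimodal = [0.72, 0.76, 0.78, 0.83, 0.86]
result = ols_slope_test(unimodal, bimodal)
print({k: round(v, 3) for k, v in result.items()})
```

A slope reliably below 1 together with a high \(R^2\) is the pattern that would indicate strong but incomplete dominance by the unimodal cue.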

Experiment 2 Discussion

We found subjects’ V thresholds to be nearly 3\(\times \) their T thresholds (Fig. 6), showing that the T modality supports finer temporal acuity. For example, as Fig. 6A shows, subjects would be able to detect a 5-ms unfilled interval separating two T pulses more than 75% of the time, but would not perform any better than the guess rate with V pulses separated by a gap of the same length. Subjects’ mean accuracy in each modality condition also reflected this difference, with highest accuracy on unimodal T trials and lowest accuracy on unimodal V trials.

As mentioned above, performance in T and VT conditions was much more strongly correlated than was performance in V and VT conditions. This difference strengthens the conclusion we drew from Fig. 6A, namely that with bimodal stimuli, subjects based their judgments largely on the tactile component of the stimulus. This finding can be interpreted as an analog of what Barlow called “the lower envelope principle” (Barlow, 1972, 1995). That principle states that the behavioral threshold is determined entirely by the most sensitive of multiple sensory responses: “Sensory thresholds are set by the class of sensory unit that has the lowest threshold for the particular stimulus used and are little influenced by the presence or absence of responses in the enormous number of other neurons that are less sensitive to the stimulus” (Barlow, 1995, p. 418). In our experiment, the VT threshold appears to have been set predominantly, but not entirely, by T inputs: that the slope of the predicted T line differs from 1 (Fig. 7A) shows there was not complete T dominance.

General Discussion

A central question drove this study: How does temporal information processing differ between tactile and visual sensory modalities? Using two convergent approaches, rate categorization and gap detection, we demonstrated that human subjects are more sensitive to temporal information conveyed by brief pulses of vibrotactile stimulation, compared to visual flashes. Experiment 1 provided clear evidence of modality-related differences in rate categorization; Experiment 2 demonstrated a possible cause of that result, namely that subjects perceive unfilled intervals marked by individual tactile pulses with better temporal accuracy than they do with visual pulses. In the following section, we compare our findings to those of other studies of sensory timing and consider the implications of our results.

Interval and Pattern Timing

That temporal sensitivity for tactile stimulation is better than temporal sensitivity to visual stimulation is not a novel idea. Empirical support for that idea comes largely from measurements of perceived duration of single, isolated intervals (Azari et al., 2020; Ball et al., 2017; Grondin, 2014; Tomassini et al., 2011; van Erp & Werkhoven, 2004), and results from our Experiment 2 add to this body of support. Our study additionally demonstrates that this difference between modalities is not limited to the single interval duration judgments that so many previous studies focused on. With Experiment 1, we extend that conclusion to judgments of the average rate of a sequence of intervals. The distinction between judgments of single versus sequences of intervals has particular importance in neural models of sub-second timing that distinguish between timing of simple intervals and timing of more complex temporal patterns (Hardy & Buonomano, 2016). Different task demands can recruit different distributed timing mechanisms (Bouwer et al., 2020; Buhusi & Meck, 2005; Levitan et al., 2015; Merchant et al., 2008; Paton & Buonomano, 2018). While we found consistent tactile superiority in pattern timing (Experiment 1) and interval timing (Experiment 2) tasks, we cannot determine from the present study whether the modality difference in both tasks arose from shared or distinct underlying timing mechanisms, or some combination thereof.

Despite this limitation, one possible influence shared between both experiments is sensory persistence. It is well known that the response to a brief visual stimulus outlasts the stimulus itself (e.g., Kelly, 1971; Sperling, 1960), a persistence that is reflected in vision’s temporal impulse response function (Georgeson, 1987; Ikeda, 1986; Nilsson, 1979). Because of persistence, two pulses separated by a sufficiently short empty interval may be perceived as a single, continuous, uninterrupted pulse. Neural persistence has also been documented in the tactile modality, specifically in the Pacinian channel targeted by 250-Hz vibrations (Gescheider et al., 1999, 2003), but how the time course of tactile persistence compares to that of visual persistence is unknown. The shorter tactile gap detection threshold we observed in Experiment 2 supports the idea that tactile persistence was briefer than its visual counterpart. A longer visual persistence could also explain the difference in error types that was specific to visual rate judgments in Experiment 1. If IPIs appeared truncated on V trials compared to T trials, subjects would have made more “fast” judgments on V trials overall, leading to higher error rates on 4-Hz than 6-Hz V trials, as we observed (Fig. 4).
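The reasoning in the preceding paragraph can be made concrete with a toy Python sketch. It is purely illustrative: it assumes that persistence shortens each apparent inter-pulse interval by a fixed amount and that observers compare the resulting apparent rate to a fixed category boundary. The function names, the 60-ms truncation, and the 5-Hz boundary are hypothetical and were not estimated from the data.

```python
import math


def perceived_rate_hz(nominal_rate_hz, truncation_ms):
    """Apparent pulse rate if each inter-pulse interval looks truncation_ms shorter."""
    ipi_ms = 1000.0 / nominal_rate_hz
    apparent_ipi_ms = ipi_ms - truncation_ms
    if apparent_ipi_ms <= 0:
        return math.inf   # pulses fuse: no interval is perceived at all
    return 1000.0 / apparent_ipi_ms


def categorize(rate_hz, boundary_hz=5.0):
    """Binary rate judgment against a hypothetical category boundary."""
    return "fast" if rate_hz > boundary_hz else "slow"


# Hypothetical truncation values: none (touch-like) vs. 60 ms (vision-like).
for truncation in (0.0, 60.0):
    for rate in (4.0, 6.0):
        apparent = perceived_rate_hz(rate, truncation)
        print(f"truncation {truncation:4.0f} ms, nominal {rate:.0f} Hz -> "
              f"apparent {apparent:.2f} Hz, judged {categorize(apparent)}")
```

Under these assumptions, truncation pushes 4-Hz sequences across the boundary while leaving 6-Hz judgments correct, which is the asymmetry in error types described above.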

The decision to employ a binary categorization of rate in Experiment 1 limited the inferences about temporal processing that the experiment could support. Even with sequences that were corrupted by considerable noise, subjects succeeded in reliably distinguishing between two rates. Obviously, the two rates included in our task do not tile the space of all possible temporal patterns that can be applied to the skin. As a result, the experiment leaves wide open the possibility that many other types of temporal patterns can be successfully categorized and used to communicate information about the environment, when either applied to the skin or presented visually. For example, one simple extension of Experiment 1 might entail discrimination of frequency-modulated signals, such as pulse trains that systematically increase or decrease in frequency (i.e., frequency sweeps; Crommett et al., 2019; Yi & Sekuler, 2022). Also deserving of investigation is whether tactile perception would outperform visual perception with more complex temporal patterns, like periodic rhythmic structures (Bouwer et al., 2020) or random patterns of stimulation (Kang et al., 2018). Importantly, to test the limits of tactile stimulation to transmit information via temporal patterns, future research might also determine the degree to which vibrotactile signal intensity, which we held constant, interacts with temporal perception (Sharma et al., 2022), as well as with mechanisms of perceptual learning and memory for temporal sequences (Gold et al., 2014; Kang et al., 2018).

Cross-Modal Timing Interactions

Results from these experiments have multiple implications for multisensory integration research. Tactile signals were perceived with better temporal resolution than visual signals in both experiments, and in Experiment 2 subjects largely based their judgments about bimodal stimuli upon the tactile component of the stimulus. Consistent with the modality appropriateness hypothesis (Welch & Warren, 1980), these findings suggest that the vibrotactile system is better suited for processing temporal information than the visual system, a conclusion that bears on the framework of sensory dominance. It is well documented, for example, that auditory signals influence visual perception in the temporal domain due to audition’s superior temporal acuity (Burr et al., 2009; Recanzone, 2003; Shimojo & Shams, 2001; Shipley, 1964). Similarly, tactile temporal processing may drive temporal perception of visuotactile stimuli, given its demonstrated unimodal advantage.

Our results support previous studies that have reported touch-induced bias on visual perception (Bresciani et al., 2008; Violentyev et al., 2005). In these studies, subjects were instructed to attend to and report the number of visual flashes they perceived, while ignoring a concurrent sequence of tactile pulses. A notable difference is that, in the current study, subjects were not instructed to attend to either unimodal component while ignoring the other: subjects were instructed to use both channels of sensory information to make their responses. In a study of cross-modal influences on counting judgments (Werkhoven et al., 2009), the influence of vision on touch was stronger than the influence of touch on vision when subjects were not instructed ahead of time to attend to a particular modality. While our Experiment 2 results contradict this finding, we were limited by our decision to always use congruent bimodal pairings (i.e., the number of tactile pulses always matched the number of visual pulses). It will be important for future work to include both congruent and incongruent bimodal conditions, as well as to explore the effects of attention on visuo-tactile integration of temporal information.

Locus and Type of Tactile Stimulation

In Experiments 1 and 2, vibrotactile stimuli were delivered only to the distal tip of the left index finger. We chose that site because of its particular sensitivity to light touch (Sekuler et al., 1973; Weinstein & Kenshalo, 1968). Many other studies of tactile perception have also used the index finger as the locus of stimulation (Bresciani et al., 2008; Crommett et al., 2019; Espinoza-Monroy & de Lafuente, 2021; Fujisaki & Nishida, 2009; Machulla & Ernst, 2016), with some others extending stimulation to other fingertips (Ball et al., 2017; Kang et al., 2018; Rahman et al., 2020) or the entire hand (Azari et al., 2020; Tomassini et al., 2011). Additionally, these investigations of tactile temporal sensitivity have utilized not just vibrotactile stimulation, but also electrocutaneous stimulation and individual taps. Variations in the type of tactile stimulation used across studies may have led to the mixed results of previous visuo-tactile comparisons. A unique aspect of tactile stimulation is that different forms of tactile information could be delivered simultaneously to multiple distributed loci. Comparable knowledge about various body parts’ sensitivity to stimulus timing is lacking; the absence of such data means we must be cautious about extrapolating from our results to other loci of stimulation and forms of tactile stimulation (Dim & Ren, 2017).

One potential application of the present research is in the development of devices that use vibrotactile stimulation to convey important information to users (Choi & Kuchenbecker, 2013; Janidarmian et al., 2022). Investigating sensitivity to tactile temporal patterns of stimulation at various loci could greatly expand the communicative potential of vibrotactile signaling, beyond a basic alerting function (Chiasson et al., 2003). Future investigations should proceed with careful attention to the density of Pacinian corpuscles at each potential locus of stimulation, which could create variation in temporal acuity to pulsatile vibrations (Choi & Kuchenbecker, 2013), as well as effects of aging, which has been shown to impact neural persistence (Gescheider et al., 2003; Humes et al., 2009; Van Doren et al., 1990) and mechanisms of multisensory integration (de Dieuleveult et al., 2017; Poliakoff et al., 2006).

Conclusion

Our study adds to a growing literature on the modality-dependent nature of different forms of temporal processing. Studies of this kind gain importance from the widespread implementation of multimodal pulse rates and other temporal patterns in communication devices for individuals with sensory deficits (Choi & Kuchenbecker, 2013). As designers of these devices look to incorporate vibrotactile signals into existing displays, gauging the information-carrying potential of this understudied modality is crucial. Understanding how temporal information is processed when delivered via different sensory modalities is not only important from a device-engineering perspective: it also gives us valuable insight into how the brain perceives time (Bratzke & Ulrich, 2019; Buonomano & Karmarkar, 2002; Ivry & Spencer, 2004). Work in this domain has clinical importance, for time-keeping abilities are impaired in some neurological disorders, such as schizophrenia and Parkinson’s disease (Gu et al., 2015; Penney et al., 2005; Tracy et al., 1998). For these reasons, ongoing research on time perception seeks to establish a “taxonomy of perceptual time” (Meck & Ivry, 2016; Paton & Buonomano, 2018), a classification scheme that defines and differentiates various forms of perceptual timing based on underlying neural mechanisms. Overall, then, our findings point to the value of further study of visuo-tactile timing, and multisensory timing more broadly.

Data Availability

The data analyzed in the current study as well as the scripts used for statistical analysis can be found at: https://osf.io/u2jt3/?view_only=a39c3428d2fd445b80897d8915b2857f

References

Allard, R., Faubert, J., & Pelli, D. (2015). Using noise to characterize vision. Frontiers in Psychology, 6, 1707.

Azari, L., Mioni, G., Rousseau, R., & Grondin, S. (2020). An analysis of the processing of intramodal and intermodal time intervals. Attention, Perception & Psychophysics, 82, 1473–1487. https://doi.org/10.3758/s13414-019-01900-7

Badde, S., Navarro, K., & Landy, M. (2018). Vision and Touch Are Not Automatically Integrated. Journal of Vision, 18, 95. https://doi.org/10.1167/18.10.95

Ball, D., Arnold, D., & Yarrow, K. (2017). Weighted integration suggests that visual and tactile signals provide independent estimates about duration. Journal of Experimental Psychology: Human Perception and Performance, 43, 868–880.

Banzi, M., & Shiloh, M. (2014). Getting Started with Arduino: The Open Source Electronics Prototyping Platform.

Barlow, H. (1972). Single units and sensation: A neuron doctrine for perceptual psychology? Perception, 1, 371–394. https://doi.org/10.1068/p010371

Barlow, H. (1995). The neuron doctrine in perception. In M. Gazzaniga (Ed.), The Cognitive Neurosciences. The MIT Press

Bates, D., Mächler, M., Bolker, B., & Walker, S. (2015). Fitting Linear Mixed-Effects Models Using lme4. Journal of Statistical Software, 67, 1–48. https://doi.org/10.48550/arXiv.1406.5823

Bouwer, F., Honing, H., & Slagter, H. (2020). Beat-based and memory-based temporal expectations in rhythm: Similar perceptual effects, different underlying mechanisms. Journal of Cognitive Neuroscience, 32, 1221–1241. https://doi.org/10.1162/jocn_a_01529

Brainard, D. (1997). The psychophysics toolbox. Spatial Vision, 10, 433–436.

Bratzke, D., & Ulrich, R. (2019). Temporal reproduction within and across senses: Testing the supramodal property of the pacemaker-counter model. Journal of Experimental Psychology: Human Perception and Performance, 45, 1218–1235. https://doi.org/10.1037/xhp0000667

Bresciani, J. P., Dammeier, F., & Ernst, M. (2008). Tri-modal integration of visual, tactile and auditory signals for the perception of sequences of events. Brain Research Bulletin, 75, 753–760. https://doi.org/10.1016/j.brainresbull.2008.01.009

Breska, A., & Ivry, R. (2018). Double dissociation of single-interval and rhythmic temporal prediction in cerebellar degeneration and Parkinson’s disease. Proceedings of the National Academy of Sciences of the United States of America, 115, 12283–12288. https://doi.org/10.1073/pnas.1810596115

Buhusi, C., & Meck, W. (2005). What makes us tick? Functional and neural mechanisms of interval timing. Nature Reviews Neuroscience, 6, 755–765. https://doi.org/10.1038/nrn1764

Bultitude, J., Juravle, G., & Spence, C. (2016). Tactile Gap Detection Deteriorates during Bimanual Symmetrical Movements under Mirror Visual Feedback. PLOS ONE, 11, 1–14. https://doi.org/10.1371/journal.pone.0146077

Buonomano, D., & Karmarkar, U. (2002). How do we tell time? Neuroscientist, 8, 42–51. https://doi.org/10.1177/107385840200800109

Burr, D., Banks, M., & Morrone, M. (2009). Auditory dominance over vision in the perception of interval duration. Experimental Brain Research, 198, 49. https://doi.org/10.1007/s00221-009-1933-z

Canty, A., & Ripley, B. (2021). boot: Bootstrap R (S-Plus) Functions. https://CRAN.R-project.org/package=boot

Chandrasekaran, C. (2017). Computational principles and models of multisensory integration. Current Opinion in Neurobiology, 43, 25–34. https://doi.org/10.1016/j.conb.2016.11.002

Chiasson, J., McGrath, B., & Rupert, A. (2003). Enhanced situation awareness in sea, air and land environments. FL: Pensacola.

Choi, S., & Kuchenbecker, K. (2013). Vibrotactile display: Perception, technology, and applications. Proceedings of the IEEE, 101, 2093–2104. https://doi.org/10.1109/JPROC.2012.2221071

Colavita, F. (1974). Human sensory dominance. Perception & Psychophysics, 16, 409–412. https://doi.org/10.3758/BF03203962

Crommett, L., Madala, D., & Yau, J. (2019). Multisensory perceptual interactions between higher-order temporal frequency signals. Journal of Experimental Psychology: General, 148, 1124–1137. https://doi.org/10.1037/xge0000513

de Dieuleveult, A., Siemonsma, P., van Erp, J. B., & Brouwer, A. M. (2017). Effects of aging in multisensory integration: A systematic review. Frontiers in Aging Neuroscience, 9, 80. https://doi.org/10.3389/fnagi.2017.00080

Desloge, J., Reed, C., Braida, L., Perez, Z., Delhorne, L., & Villabona, T. (2014). Auditory and Tactile Gap Discrimination by Observers with Normal and Impaired Hearing. The Journal of the Acoustical Society of America, 135, 838–850. https://doi.org/10.1121/1.4861246

Dim, N., & Ren, X. (2017). Investigation of suitable body parts for wearable vibration feedback in walking navigation. International Journal of Human-Computer Studies, 97, 34–44. https://doi.org/10.1016/j.ijhcs.2016.08.002

Donelson, S. M., & Gordon, C. (1995). Matched Anthropometric Database of US Marine Corps Personnel: Summary Statistics. MA: Newton Centre.

Elliott, L., Coovert, M., & Redden, E. (2009). Overview of meta-analyses investigating vibrotactile versus visual display options. International Conference on Human-Computer Interaction, 435–443. Springer.

Espinoza-Monroy, M., & de Lafuente, V. (2021). Discrimination of regular and irregular rhythms explained by a time difference accumulation model. Neuroscience, 459, 16–26. https://doi.org/10.1016/j.neuroscience.2021.01.035

Forster, B., Cavina-Pratesi, C., Aglioti, S., & Berlucchi, G. (2002). Redundant target effect and intersensory facilitation from visual-tactile interactions in simple reaction time. Experimental Brain Research, 143, 480–487. https://doi.org/10.1007/s00221-002-1017-9

Fujisaki, W., & Nishida, S. (2009). Audio-tactile superiority over visuo-tactile and audio-visual combinations in the temporal resolution of synchrony perception. Experimental Brain Research, 198, 245–259. https://doi.org/10.1007/s00221-009-1870-x

Georgeson, M. (1987). Temporal properties of spatial contrast vision. Vision Research, 27, 765–80. https://doi.org/10.1016/0042-6989(87)90074-5

Gescheider, G. A., Berryhill, M. E., Verrillo, R. T., & Bolanowski, S. J. (1999). Vibrotactile temporal summation: Probability summation or neural integration? Somatosensory & Motor Research, 16(3), 229–242. https://doi.org/10.1080/08990229970483

Gescheider, G., Berryhill, M., Verrillo, R., Bolanowski, S., & Chatterton, S. (2003). Temporal Gap Detection in Tactile Channels. Somatosensory & Motor Research, 20, 239–247. https://doi.org/10.1080/08990220310001622960

Girshick, A., Landy, M., & Simoncelli, E. (2011). Cardinal rules: Visual orientation perception reflects knowledge of environmental statistics. Nature Neuroscience, 14, 926–932. https://doi.org/10.1038/nn.2831

Gold, J. (2014). Information processing correlates of a size-contrast illusion. Frontiers in Psychology, 5, 142. https://doi.org/10.3389/fpsyg.2014.00142

Gold, J., Aizenman, A., Bond, S., & Sekuler, R. (2014). Memory and Incidental Learning for Visual Frozen Noise Sequences. Vision Research, 99, 19–36. https://doi.org/10.1016/j.visres.2013.09.005

Gold, J., Sekuler, A., & Bennett, P. (2004). Characterizing perceptual learning with external noise. Cognitive Science, 28, 167–207. https://doi.org/10.1016/j.cogsci.2003.10.005

Grondin, S. (2014). Why studying intermodal duration discrimination matters. Frontiers in Psychology, 5, 628. https://doi.org/10.3389/fpsyg.2014.00628

Grondin, S., & Rousseau, R. (1991). Judging the relative duration of multimodal short empty time intervals. Perception & Psychophysics, 49, 245–56. https://doi.org/10.3758/bf03214309

Gu, B. M., Jurkowski, A., Lake, J., Malapani, C., & Meck, W. (2015). Bayesian models of interval timing and distortions in temporal memory as a function of Parkinson’s disease and dopamine-related error processing. Time Distortions in Mind, 281–327.

Hall, C., Wang, S., Bhagat, R., & McAnany, J. (2014). Effect of luminance noise on the object frequencies mediating letter identification. Frontiers in Psychology, 5, 663. https://doi.org/10.3389/fpsyg.2014.00663

Hardy, N. F., & Buonomano, D. V. (2016). Neurocomputational models of interval and pattern timing. Current Opinion in Behavioral Sciences, 8, 250–257. https://doi.org/10.1016/j.cobeha.2016.01.012

Humes, L., Busey, T., Craig, J., & Kewley-Port, D. (2009). The effects of age on sensory thresholds and temporal gap detection in hearing, vision, and touch. Attention, Perception, & Psychophysics, 71, 860–871. https://doi.org/10.3758/APP.71.4.860

Ikeda, M. (1986). Temporal impulse response. Vision Research, 26, 1431–40. https://doi.org/10.1016/0042-6989(86)90166-5

Ivry, R., & Spencer, R. (2004). The neural representation of time. Current Opinion in Neurobiology, 14, 225–232. https://doi.org/10.1016/j.conb.2004.03.013

Janidarmian, M., Roshan Fekr, A., Radecka, K., & Zilic, Z. (2022). Wearable vibrotactile system as an assistive technology solution. Mobile Networks and Applications, 27, 709–717. https://doi.org/10.1007/s11036-019-01304-9

Jones, L., Poliakoff, E., & Wells, J. (2009). Good vibrations: Human interval timing in the vibrotactile modality. Quarterly Journal of Experimental Psychology, 62, 2171–2186. https://doi.org/10.1080/17470210902782200

Kang, H., Lancelin, D., & Pressnitzer, D. (2018). Memory for random time patterns in audition, touch, and vision. Neuroscience, 389, 118–132. https://doi.org/10.1016/j.neuroscience.2018.03.017

Kelly, D. (1971). Theory of flicker and transient responses. I. Uniform fields. Journal of the Optical Society of America, 61, 537–546.

Klein, S. (2001). Measuring, estimating, and understanding the psychometric function: A commentary. Perception & Psychophysics, 63, 1421–1455. https://doi.org/10.3758/BF03194552

Kleiner, M., Brainard, D., Pelli, D., Ingling, A., Murray, R., & Broussard, C. (2007). What’s new in Psychtoolbox-3? Perception, 36, 1–16.

Knoblauch, K. (2002). psyphy: Functions for Analyzing Psychophysical Data in R. https://CRAN.R-project.org/package=psyphy

Knoblauch, K., & Maloney, L. (2012). The Psychometric Function: Continuation. Modeling Psychophysical Data in R, 141–166. Springer New York, NY.

Lenth, R. (2022). emmeans: Estimated Marginal Means, aka Least-Squares Means. https://CRAN.R-project.org/package=emmeans

Levitan, C., Ban, Y. H., Stiles, N. R. B., & Shimojo, S. (2015). Rate perception adapts across the senses: evidence for a unified timing mechanism. Scientific Reports, 5, 8857. https://doi.org/10.1038/srep08857

Lu, Z. L., & Dosher, B. A. (1999). Characterizing human perceptual inefficiencies with equivalent internal noise. Journal of the Optical Society of America A, 16, 764–778. https://doi.org/10.1364/JOSAA.16.000764

Machulla, T. J., & Ernst, M. O. (2016). The consistency of crossmodal synchrony perception across the visual, auditory, and tactile senses. Journal of Experimental Psychology: Human Perception and Performance, 42, 1026. https://doi.org/10.1037/xhp0000191

MATLAB. (2017). Version 9.3.0 (R2017b). Natick, Massachusetts: The MathWorks Inc.

McGrath, B., Estrada, A., Braithwaite, M., Raj, A., & Rupert, A. (2004). Tactile situation awareness system flight demonstration. AL Army Aeromedical Research Lab: Fort Rucker.

Meck, W. H., & Ivry, R. B. (2016). Editorial overview: Time in perception and action. Current Opinion in Behavioral Sciences, 8, 6–10.

Merchant, H., Zarco, W., & Prado, L. (2008). Do we have a common mechanism for measuring time in the hundreds of millisecond range? Evidence from multiple-interval timing tasks. Journal of Neurophysiology, 99, 939–49. https://doi.org/10.1152/jn.01225.2007

Motala, A., Heron, J., McGraw, P., Roach, N., & Whitaker, D. (2020). Temporal rate is not a distinct perceptual metric. Scientific Reports, 10, 8654. https://doi.org/10.1038/s41598-020-64984-4

Nilsson, T. (1979). Visual temporal discriminations of brief pulse intervals. Vision Research, 19, 41–48. https://doi.org/10.1016/0042-6989(79)90119-6

Parker, A., & Newsome, W. (1998). Sense and the single neuron: probing the physiology of perception. Annual Review of Neuroscience, 21, 227–277. https://doi.org/10.1146/annurev.neuro.21.1.227

Paton, J., & Buonomano, D. (2018). The neural basis of timing: Distributed mechanisms for diverse functions. Neuron, 98, 687–705. https://doi.org/10.1016/j.neuron.2018.03.045

Peirce, J., Gray, J., Simpson, S., MacAskill, M., Höchenberger, R., Sogo, H., Kastman, E., & Lindeløv, J. (2019). PsychoPy2: Experiments in behavior made easy. Behavior Research Methods, 51, 195–203. https://doi.org/10.3758/s13428-018-01193-y

Pelli, D. (1997). The VideoToolbox software for visual psychophysics: Transforming numbers into movies. Spatial Vision, 10, 437–442.

Pelli, D., & Farell, B. (1999). Why use noise?. Journal of the Optical Society of America. A, Optics, Image Science, and Vision, 16, 647–653. https://doi.org/10.1364/josaa.16.000647

Penney, T., Meck, W., Roberts, S., Gibbon, J., & Erlenmeyer-Kimling, L. (2005). Interval-timing deficits in individuals at high risk for schizophrenia. Brain and Cognition, 58, 109–118. https://doi.org/10.1016/j.bandc.2004.09.012

Poliakoff, E., Shore, D. I., Lowe, C., & Spence, C. (2006). Visuotactile temporal order judgments in ageing. Neuroscience Letters, 396, 207–211. https://doi.org/10.1016/j.neulet.2005.11.034

R Core Team. (2021). R: A Language and Environment for Statistical Computing. Vienna, Austria: R Foundation for Statistical Computing.

Rahman, M. S., Barnes, K. A., Crommett, L. E., Tommerdahl, M., & Yau, J. (2020). Auditory and tactile frequency representations are co-embedded in modality-defined cortical sensory systems. NeuroImage, 215, 116837. https://doi.org/10.1016/j.neuroimage.2020.116837

Rammsayer, T. (2014). The effects of type of interval, sensory modality, base duration, and psychophysical task on the discrimination of brief time intervals. Attention, Perception, & Psychophysics, 76, 1185–1196. https://doi.org/10.3758/s13414-014-0655-x

Rammsayer, T. H., & Brandler, S. (2004). Aspects of temporal information processing: A dimensional analysis. Psychological Research, 69, 115–123. https://doi.org/10.1007/s00426-003-0164-3

Recanzone, G. H. (2003). Auditory influences on visual temporal rate perception. Journal of Neurophysiology, 89, 1078–1093. https://doi.org/10.1152/jn.00706.2002

Sekuler, R., Nash, D., & Armstrong, R. (1973). Sensitive, objective procedure for evaluating response to light touch. Neurology, 23, 1282–1291. https://doi.org/10.1212/wnl.23.12.1282

Sharma, D., Ng, K. W., Birznieks, I., & Vickery, R. M. (2022). Perceived Tactile Intensity at a Fixed Primary Afferent Spike Rate Varies with the Temporal Pattern of Spikes. Journal of Neurophysiology, 128, 1074–1084. https://doi.org/10.1152/jn.00284.2022

Shimojo, S., & Shams, L. (2001). Sensory Modalities Are Not Separate Modalities: Plasticity and Interactions. Current Opinion in Neurobiology, 11, 505–509. https://doi.org/10.1016/S0959-4388(00)00241-5

Shipley, T. (1964). Auditory flutter-driving of visual flicker. Science, 145, 1328–1330. https://doi.org/10.1126/science.145.3638.1328

Singmann, H., Bolker, B., Westfall, J., Aust, F., Ben-Shachar, M. (2022). afex: Analysis of Factorial Experiments. https://CRAN.R-project.org/package=afex

Sinnett, S., Soto-Faraco, S., & Spence, C. (2008). The co-occurrence of multisensory competition and facilitation. Acta Psychologica, 128, 153–161. https://doi.org/10.1016/j.actpsy.2007.12.002

Sperdin, H., Cappe, C., Foxe, J., & Murray, M. (2009). Early, low-level auditory-somatosensory multisensory interactions impact reaction time speed. Frontiers in Integrative Neuroscience, 3, 2. https://doi.org/10.3389/neuro.07.002.2009

Sperling, G. (1960). The information available in brief visual presentations. Psychological Monographs: General and Applied, 74, 1–29. https://doi.org/10.1037/h0093759

Stauffer, C., Haldemann, J., Troche, S. J., & Rammsayer, T. H. (2012). Auditory and visual temporal sensitivity: Evidence for a hierarchical structure of modality-specific and modality-independent levels of temporal information processing. Psychological Research, 76, 20–31. https://doi.org/10.1007/s00426-011-0333-8

Sun, Y., & Sekuler, R. (2021). Decision-making and multisensory combination under time stress. Perception, 50, 627–645. https://doi.org/10.1177/03010066211017458

Teki, S., Grube, M., Kumar, S., & Griffiths, T. D. (2011). Distinct neural substrates of duration-based and beat-based auditory timing. Journal of Neuroscience, 31, 3805–3812. https://doi.org/10.1523/JNEUROSCI.5561-10.2011

Tomassini, A., Gori, M., Burr, D., Sandini, G., & Morrone, C. (2011). Perceived duration of Visual and Tactile Stimuli Depends on Perceived Speed. Frontiers in Integrative Neuroscience, 5, 51. https://doi.org/10.3389/fnint.2011.00051

Tracy, J. I., Monaco, C., McMichael, H., Tyson, K., Chambliss, C., Christensen, H. L., & Celenza, M. A. (1998). Information-processing characteristics of explicit time estimation by patients with schizophrenia and normal controls. Perceptual and Motor Skills, 86, 515–526. https://doi.org/10.2466/pms.1998.86.2.515

van Erp, J. B. F., & Werkhoven, P. J. (2004). Vibro-tactile and visual asynchronies: Sensitivity and consistency. Perception, 33, 103–111. https://doi.org/10.1068/p5014

Van Doren, C. L., Gescheider, G. A., & Verrillo, R. T. (1990). Vibrotactile Temporal Gap Detection as a Function of Age. The Journal of the Acoustical Society of America, 87, 2201–2206. https://doi.org/10.1121/1.399187

Verma, J. P. (2015). Repeated Measures Design for Empirical Researchers. John Wiley & Sons.

Villalonga, M. B., Sussman, R. F., & Sekuler, R. (2020). Feeling the Beat (and Seeing It, Too): Vibrotactile, Visual, and Bimodal Rate Discrimination. Multisensory Research, 33, 31–59. https://doi.org/10.1163/22134808-20191413

Villalonga, M. B., Sussman, R. F., & Sekuler, R. (2021). Perceptual timing precision with vibrotactile, auditory, and multisensory stimuli. Attention, Perception, & Psychophysics, 83, 2267–2280. https://doi.org/10.3758/s13414-021-02254-9

Violentyev, A., Shimojo, S., & Shams, L. (2005). Touch-Induced Visual Illusion. NeuroReport, 16, 1107. https://doi.org/10.1097/00001756-200507130-00015

Weinstein, S. (1968). Intensive and extensive aspects of tactile sensitivity as a function of body part, sex and laterality. In D. R. Kenshalo (Ed.), The Skin Senses (pp. 195–222).

Welch, R. B., & Warren, D. H. (1980). Immediate perceptual response to intersensory discrepancy. Psychological Bulletin, 88, 638–667.

Welch, R. B., DuttonHurt, L. D., & Warren, D. H. (1986). Contributions of audition and vision to temporal rate perception. Perception & Psychophysics, 39, 294–300. https://doi.org/10.3758/bf03204939

Werkhoven, P. J., van Erp, J. B. F., & Philippi, T. G. (2009). Counting Visual and Tactile Events: The Effect of Attention on Multisensory Integration. Attention, Perception, & Psychophysics, 71, 1854–1861. https://doi.org/10.3758/APP.71.8.1854

Yi, L., & Sekuler, R. (2022). Audiovisual interaction with rate-varying signals. i-Perception, 13, 20416695221116653. https://doi.org/10.1177/20416695221116653

Zhang, J., & Mueller, S. T. (2005). A note on ROC analysis and non-parametric estimate of sensitivity. Psychometrika, 70, 203–212.

Acknowledgements

We thank Alberto Pierobon and Timothy Hebert for assistance with apparatus design; Rachel F. Sussman, Eliana Ellenberger, and Rachel Peng for assistance with data collection; and Paul DiZio, Nicolai D. Ayasse, and John C. Ksander for thoughtful feedback on earlier drafts of this manuscript. MBV was supported by National Institute of General Medical Sciences training grant T32 GM132498. The content is solely the responsibility of the authors and does not represent the official views of any funding agency. The authors declare they have no conflicts of interest.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Villalonga, M.B., Sekuler, R. Keep your finger on the pulse: Better rate perception and gap detection with vibrotactile compared to visual stimuli. Atten Percept Psychophys 85, 2004–2017 (2023). https://doi.org/10.3758/s13414-023-02736-y

DOI: https://doi.org/10.3758/s13414-023-02736-y