Abstract

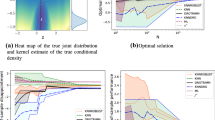

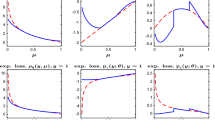

Based on $X \sim N_d(\theta, \sigma_X^2 I_d)$, we study the efficiency of predictive densities under α-divergence loss $L_\alpha$ for estimating the density of $Y \sim N_d(\theta, \sigma_Y^2 I_d)$. We identify a large number of cases where improvements on a plug-in density are obtainable by expanding the variance, thus extending earlier findings applicable to Kullback-Leibler loss. The results and proofs are unified with respect to the dimension $d$, the variances $\sigma_X^2$ and $\sigma_Y^2$, and the choice of loss $L_\alpha$, $\alpha \in (-1, 1)$. The findings also apply to a large number of plug-in densities, as well as to restricted parameter spaces with $\theta \in \Theta \subset \mathbb{R}^d$. The theoretical findings are accompanied by various observations, illustrations, and implications dealing, for instance, with robustness with respect to the model variances and simultaneous dominance with respect to the loss.
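For orientation, the setting described in the abstract can be sketched as follows. The abstract itself does not state these formulas; the block uses the standard Csiszár-type form of α-divergence loss, and the symbols $h_\alpha$, $q$, $\hat{q}$, $\hat{\theta}$, $R_\alpha$ and the expansion factor $c$ are notation assumed here for illustration, which may differ from the paper's own.

```latex
% Assumed notation: q(. | theta) is the N_d(theta, sigma_Y^2 I_d) density of Y,
% and \hat{q}(. ; x) is a predictive density built from the observation X = x.
\[
  L_\alpha(\theta, \hat{q})
    = \int_{\mathbb{R}^d} h_\alpha\!\left( \frac{\hat{q}(y; x)}{q(y \mid \theta)} \right)
      q(y \mid \theta)\, dy ,
  \qquad
  h_\alpha(z) = \frac{4}{1 - \alpha^2} \left( 1 - z^{(1+\alpha)/2} \right),
  \quad \alpha \in (-1, 1).
\]
% Frequentist risk: the loss averaged over X ~ N_d(theta, sigma_X^2 I_d).
\[
  R_\alpha(\theta, \hat{q}) = \mathbb{E}_\theta\!\left[ L_\alpha\big(\theta, \hat{q}(\cdot\,; X)\big) \right].
\]
% Plug-in density based on a point estimator \hat{\theta}(x), and a
% variance-expanded competitor with a hypothetical expansion factor c > 1.
\[
  \hat{q}_{\text{plug-in}}(\cdot\,; x) \sim N_d\big(\hat{\theta}(x), \sigma_Y^2 I_d\big),
  \qquad
  \hat{q}_{c}(\cdot\,; x) \sim N_d\big(\hat{\theta}(x), c\,\sigma_Y^2 I_d\big), \quad c > 1.
\]
```

Under this form, $\alpha = 0$ gives $h_0(z) = 4(1 - \sqrt{z})$, a Hellinger-type loss, while the limit $\alpha \to -1$ gives $h(z) = -\log z$, which recovers Kullback-Leibler loss.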

Acknowledgments

Eric Marchand’s research is supported in part by the Natural Sciences and Engineering Research Council of Canada. We thank Bill Strawderman who provided the lower bound in (2.19), as well as Jean Vaillancourt for a careful reading that led to some improvements.

About this article

Cite this article

L’Moudden, A., Marchand, É. On Predictive Density Estimation under α-Divergence Loss. Math. Meth. Stat. 28, 127–143 (2019). https://doi.org/10.3103/S1066530719020030

DOI: https://doi.org/10.3103/S1066530719020030

Keywords

- alpha-divergence

- dominance

- frequentist risk

- Hellinger loss

- multivariate normal

- plug-in

- predictive density

- restricted parameter space

- variance expansion