Abstract

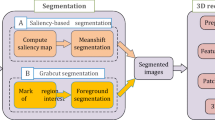

To speed up the reconstruction of 3D dynamic scenes on an ordinary hardware platform, we propose an efficient framework that reconstructs 3D dynamic objects from multi-view videos using multiscale-contour-based interpolation. The framework takes full advantage of spatio-temporal-contour consistency, exploiting this property to interpolate single contours, pairs of neighboring contours that belong to the same model, and pairs of contours that belong to the same view at different times; these correspond to point-, contour-, and model-level interpolation, respectively. The interpolation of two models is formulated as point cloud transport rather than non-rigid surface deformation. The framework speeds up the reconstruction of a dynamic scene while improving the accuracy of the point-pairing on which the interpolation relies. The result is a spatio-temporally coherent, quasi-dense point cloud sequence with color information at a higher frame rate. Experiments on real data were conducted to test the efficiency of the framework.
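As a rough illustration of how point-pairs can drive interpolation between two reconstructed models, the sketch below blends paired 3D points linearly to produce in-between frames. It is not the authors' algorithm: the pairing is assumed to be given, the linear blend stands in for the point cloud transport formulation, and the names `interpolate_paired_points` and `upsample_sequence` are hypothetical.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]


def interpolate_paired_points(
    cloud_a: Sequence[Point],
    cloud_b: Sequence[Point],
    pairs: Sequence[Tuple[int, int]],
    t: float,
) -> List[Point]:
    """Blend each paired point as (1 - t) * a + t * b.

    Assumption: `pairs` holds (index in cloud_a, index in cloud_b) and is
    already known; how the pairs are found is outside this sketch.
    """
    if not 0.0 <= t <= 1.0:
        raise ValueError("t must lie in [0, 1]")
    blended = []
    for i, j in pairs:
        a, b = cloud_a[i], cloud_b[j]
        blended.append(tuple((1.0 - t) * ca + t * cb for ca, cb in zip(a, b)))
    return blended


def upsample_sequence(
    cloud_a: Sequence[Point],
    cloud_b: Sequence[Point],
    pairs: Sequence[Tuple[int, int]],
    n_intermediate: int,
) -> List[List[Point]]:
    """Return n_intermediate evenly spaced in-between frames (endpoints excluded)."""
    return [
        interpolate_paired_points(cloud_a, cloud_b, pairs, k / (n_intermediate + 1))
        for k in range(1, n_intermediate + 1)
    ]


# Two tiny "models" at consecutive capture times, with a trivial pairing.
model_t0 = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
model_t1 = [(0.0, 2.0, 0.0), (1.0, 2.0, 2.0)]
point_pairs = [(0, 0), (1, 1)]

midpoint_frame = interpolate_paired_points(model_t0, model_t1, point_pairs, 0.5)
extra_frames = upsample_sequence(model_t0, model_t1, point_pairs, 3)
```

Inserting `n_intermediate` frames between every pair of captured models multiplies the effective frame rate by `n_intermediate + 1`; the quality of the result depends entirely on how accurate the point-pairs are, which is the aspect the abstract says the framework improves.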

Additional information

Project supported by the National Basic Research Program (973) of China (No. 2012CB725305), the Natural Science Foundation of Guizhou Province, China (No. 20132094), the National Social Science Fund, China (No. 12&ZD32), and the Introducing Talents Foundation of Guizhou University, China (No. 2012028).

ORCID: Chu-hua HUANG, http://orcid.org/0000-0003-3507-4256

About this article

Cite this article

Huang, Ch., Lu, Dm. & Diao, Cy. A multiscale-contour-based interpolation framework for generating a time-varying quasi-dense point cloud sequence. Frontiers Inf Technol Electronic Eng 17, 422–434 (2016). https://doi.org/10.1631/FITEE.1500316

DOI: https://doi.org/10.1631/FITEE.1500316

Keywords

- Multi-view video

- Free-viewpoint video

- Point-pair

- Multiscale-contour-based interpolation

- Spatio-temporal-contour consistency

- Time-varying point cloud sequence