Abstract

Numerical continuation and bifurcation methods can be used to explore the set of steady and time–periodic solutions of parameter dependent nonlinear ODEs or PDEs. For PDEs, a basic idea is to first convert the PDE into a system of algebraic equations or ODEs via a spatial discretization. However, the large class of possible PDE bifurcation problems makes developing general and user–friendly software a challenge, and the large number of degrees of freedom that is often needed, together with the typically large set of solutions, often requires adapted methods. Here we review some of these methods, and illustrate the approach by applying the package pde2path to some advanced pattern formation problems, including the interaction of Hopf and Turing modes, patterns on disks, and an experimental setting of dead core pattern formation.

1 Introduction

Ordinary differential equation (ODE) models and partial differential equation (PDE) models usually come with a number of parameters \(\lambda \) (growth factors, diffusion constants …), and an important task is to characterize the dependence of solutions on parameters. Numerical continuation aims to trace branches

$$ s\mapsto (u(s),\lambda (s)) \tag{1} $$

of solutions \(u\) of nonlinear equations \(G(u,\lambda )=0\in Y\) through parameter space, where \(X\), \(Y\) are Banach spaces and \(G:X\times {\mathbb{R}}\to Y\) has some smoothness detailed below, and where \(s\) is an arclength along the solution branch, which may fold back and forth in \(\lambda \). If \(u\) is a steady solution (fixed point) of an ODE \(\frac{{\mathrm{d}}}{{\mathrm{d}}t}u=-G(u,\lambda )\), then \(X=Y={\mathbb{R}}^{n}\) and \(G(u,\lambda )=0\) is an algebraic system, and if \(u:\Omega \to {\mathbb{R}}^{N}\) is a steady solution of a PDE \({\partial }_{t} u=-G(u,\lambda )\), \(\Omega \subset {\mathbb{R}}^{d}\) a bounded domain, then \(X\) and \(Y\) are function spaces and \(G(u,\lambda )=0\) is a boundary value problem. Additionally, often time periodic orbits \(u=u(t)\) with \(u(t+T)=u(t)\) of ODEs and PDEs are of special interest.

If \({\partial }_{u} G(u(s_{0}),\lambda (s_{0}))\in L(X,Y)\) is invertible, then the implicit function theorem yields that the branch \((s_{0}-\delta ,s_{0}+\delta )\ni s\mapsto (u(s),\lambda (s))\) is locally unique. Of special interest are singular points where \({\partial }_{u} G(u(s_{0}),\lambda (s_{0}))\) is not invertible and hence other branches may bifurcate. At such points we may aim to switch to bifurcating branches, obtaining so–called bifurcation diagrams, altogether aiming at an as complete as possible description of the set of solutions of a given problem.

Numerical continuation and bifurcation methods have been an active field over the last 50 years, see, e.g., [32, 37, 45, 87] for textbooks and research monographs, and, e.g., [23, 24, 52, 60, 65] for reviews. One main starting point is the (pseudo-)arclength parametrization of solution branches [36], leading to algorithms implemented in many packages such as AUTO [25], matcont [19], xppaut [27], aimed (primarily) at the numerical bifurcation analysis of ODEs, and coco [14], which is a rather general toolbox. See also [79] for a recent Julia package. For PDEs, the first step is often a spatial discretization, which turns the PDE into a large ODE system, such that in principle the above software can be applied. However, the possibly large number of degrees of freedom (DoF), in particular for spatially two– or three–dimensional problems, often requires adapted algorithms. Software aimed at PDEs includes LOCA [59] and oomph [33].

Here we give an overview of the numerical computation of bifurcation diagrams for nonlinear PDEs, first reviewing some basics, and then giving examples using the MATLAB package pde2path [68, 73]. This is aimed at PDEs of the form

$$ M_{d}{\partial }_{t} u=-G(u,\lambda ), \tag{2} $$

where \(M_{d}\in {\mathbb{R}}^{N\times N}\) is the (dynamical) mass matrix, \(M_{d}={\mathrm{Id}}\) in many cases, and

$$ G(u,\lambda ):=-\nabla \cdot (c\otimes \nabla u)+au-b\otimes \nabla u-f, \tag{3} $$

with \(u{=}u(x,t){\in }{\mathbb{R}}^{N}\) (\(N\) components), \(x{\in }\Omega {\subset }{\mathbb{R}}^{d}\) some bounded domain, \(d{\in }\{1,2,3\}\) (the 1D, 2D and 3D case, respectively), and time \(t\ge 0\), and where (2) can be complemented with various boundary conditions (BCs). In (3), \(c\) is a diffusion tensor, \(b\) describes advection, and \(a\in {\mathbb{R}}^{N\times N}\) and \(f\in {\mathbb{R}}^{N}\) should be thought of as describing linear and nonlinear terms without spatial derivatives. The mass matrix \(M_{d}\in {\mathbb{R}}^{N\times N}\) in (2) may be singular, and this gives much flexibility to (2), for instance to also implement parabolic–elliptic coupled problems. All the tensors/vectors in (3) can depend on \(x\) and \(u\), although mostly we restrict to semilinear problems, where \(c\) does not depend on \(u\). A focus of pde2path is on bifurcations of solution branches of the steady state problem

$$ G(u,\lambda )=0, \tag{4} $$

but we also consider Hopf bifurcations and time–periodic orbits and their bifurcations in (2). The default setting of pde2path uses the finite element method (FEM), essentially as provided by the package OOPDE [58], to first discretize \(u\) in space, in 1D, 2D, or 3D, using Lagrangian \(P^{1}\) elements. However, some higher order FEM is also provided, and for instance [71] contains examples of FEM–free implementation of various right hand sides \(G\), mostly based on Chebychev and Fourier methods.

The three main design goals of pde2path are versatility, easy usage, and easy customization. Therefore, in [67, 76] and [68] we explain the usage of pde2path via a rather large number of working examples included in the software download at [73], where we also provide tutorials which include further comments on implementations. Here we proceed similarly, in a condensed form: In §2 we explain some basic notions of bifurcations, and a few algorithms of numerical continuation and bifurcation. In §3 we then give some examples of applications of pde2path, first to rather standard PDE bifurcation problems, which however do not seem to have been treated in this way before, namely:

1. In §3.1 we consider the reaction–diffusion type system

$$\begin{gathered} \begin{aligned} {\partial }_{t} u_{1}&={\partial }_{x}^{2} u_{1}+ \frac{u_{2}-u_{1}}{(u_{2}-u_{1})^{2}+1}-\tau u_{1}, \\ {\partial }_{t} u_{2}&=d{\partial }_{x}^{2} u_{2}+\alpha (j_{0}-(u_{2}-u_{1})), \end{aligned} \end{gathered}\tag{5}$$

over the interval \(\Omega =(-l,l)\) with Neumann BCs for \(u_{1}\) and \(u_{2}\), taken from [53]. The system describes charge transport in a layered semiconductor; \(u_{1}\) is an interface charge in the device, \(u_{2}\) a normalized voltage across it, and \((\tau ,j_{0},\alpha ,d)\in {\mathbb{R}}^{4}\) is a parameter vector. There is the trivial (spatially homogeneous) branch \(u_{1}^{*}=\frac{j_{0}}{\tau (j_{0}^{2}+1)}\), \(u_{2}^{*}=j_{0}+u_{1}^{*}\), which may undergo Turing instabilities (bifurcation to finite wave number spatially periodic steady states) and Hopf instabilities (bifurcation to spatially homogeneous time–periodic orbits). In particular, there are codimension–two points, near which both instabilities occur, and their interaction may lead to a variety of states, including Turing–Hopf mixed modes, i.e., localized Hopf modes embedded in Turing structures, or vice versa. Similar results have been obtained in [15] for the so–called Brusselator system instead of (5). Some of these patterns can be studied via amplitude equations, but for larger amplitudes [53] and [15] use direct numerical simulation (DNS), aka time integration. Here we study these patterns via numerical continuation and bifurcation in a complementary way.

2. Following [81], we consider the cubic-quintic Swift-Hohenberg (SH) equation

$$\begin{gathered} {\partial }_{t} u=-(1+\Delta )^{2} u+\varepsilon u+\nu u^{3}-u^{5}, \text{ parameters $\varepsilon ,\nu \in {\mathbb{R}}$,} \end{gathered}\tag{6}$$

on a disk domain \(\Omega =\{(x_{1},x_{2}): \|x\|=\sqrt{x_{1}^{2}+x_{2}^{2}}< R\}\), where \(\Delta ={\partial }_{x_{1}}^{2}+{\partial }_{x_{2}}^{2}\) is the Laplacian, with Neumann BCs \({\partial }_{n} u={\partial }_{n}\Delta u=0\), where \(n\) is the outer normal at \(\|x\|=R\). Equations of type (6) (with various nonlinearities, for instance \(f(u)=\nu u^{2}-u^{3}\) instead of \(f(u)=\nu u^{3}-u^{5}\) in (6)) are prototypical examples for finite wave number (Turing) pattern formation, and are thus also studied as model problems in [68], over 1D, 2D and 3D boxes (intervals, rectangles and cuboids). The new feature in [81] is the disk domain. The problem has the trivial branch \(u\equiv 0\), and for small \(\varepsilon >0\) we find various subcritical bifurcating patterns, and also study some of their secondary (and tertiary) bifurcations.

Our third class of examples is genuinely “experimental” in the sense that standard bifurcation theory does not apply. It deals with

3. Dead core patterns, for instance in scalar equations of type

$$\begin{gathered} {\partial }_{t} u=c\Delta u-\lambda f(u)\text{ in }\Omega , \quad u=1 \text{ on }{\partial }\Omega , \end{gathered}\tag{7}$$

with \(f(u)\sim u_{+}^{\gamma }\) at \(u=0\), \(u_{+}=\max (u,0)\), where \(0<\gamma <1\) and hence \(f(u)\) is not differentiable (and not even Lipschitz) at \(u=0\). For large \(\lambda \), such equations can have dead core solutions which feature a subdomain \(\Omega _{0}\subset \Omega \) where \(u\equiv 0\), which is not possible for Lipschitz nonlinearities. The non-differentiability of \(f\) means that standard bifurcation analysis does not apply to (7). However, using minor modifications of the standard pde2path setup we can still treat (7) and generalizations of (7) numerically, and find various branches with dead core solutions.

Our main aim with these examples is to present interesting bifurcation problems and how they can be studied by numerical continuation and bifurcation. Other recent applications of pde2path are given in, e.g., [6, 9, 26, 41, 44, 55, 62, 69, 75, 78, 82, 83, 86]. In Table 1 we collect some acronyms frequently used in PDEs and bifurcation theory, and some specific for this paper.

Acknowledgments

pde2path started as joint work with Daniel Wetzel and Jens Rademacher, with major contributions by Tomas Dohnal and Hannes deWitt following soon. Some recent major revisions have been mostly my work. pde2path heavily relies on the MATLAB FEM package OOPDE [58] by Uwe Prüfert, and uses some further packages such as TOM by Francesca Mazzia [51], pqzschur [42] by Daniel Kressner, trullekrul by Kristian Eijebjerg Jensen [35], and ilupack [5] by Matthias Bollhöfer; of course, AUTO [25] by Eusebius Doedel et al. has been a role model. A big thank you to the authors of these packages.

Initially, pde2path was planned as an in–house tool to quickly enable students to study steady state bifurcations for 2D reaction–diffusion systems, based on the MATLAB pdetoolbox (see also [73] for octave compatibility). As it was well received by students and colleagues, in 2013 we decided to go public with a basic version, after just a few months of development, and only treating 2D steady state problems (and simple bifurcation points). At the time I was not quite aware of what we were getting into. Ever since, I have learnt a lot about bifurcations, the FEM, and software organization, and one experience I’d like to share is: even if you just have a small starting version of a software that might be useful, try to make it simple for others, provide working examples, and go public. If the software turns out to be useful, the feedback and requests by users are a tremendous help and motivation. Therefore, also thanks to all the users, and please keep sending bug reports, feedback, and requests.

2 Continuation and Bifurcation

In [68, Part I], I give a brief discussion of the theoretical background underlying continuation and bifurcation. For convenience, here I summarize the main ideas; although none of this is original, I give only a few references, and instead refer to [68] for further background and references. Readers familiar with (numerical) bifurcation theory can skip directly to §3 and come back to §2 when needed.

The fundamental result underlying all bifurcation theory is the Implicit Function Theorem (IFT), which can be proved via the contraction mapping theorem. See, e.g., [85, §4.7] for precise statements and a thorough discussion, while here we just recall the main ideas. As an example, consider

$$ G(u,\lambda )=u^{2}-2u-\lambda =0. \tag{8} $$

For each \(\lambda \ge -1\) we have the two solutions \(u=u_{\pm }(\lambda )=1\pm \sqrt{1+\lambda }\), and together we have the solution branch

$$ \{(u,\lambda ): \lambda =u^{2}-2u,\ u\in {\mathbb{R}}\}, \tag{9} $$

showing that here it is useful to see \(\lambda \) as a function of \(u\). However, all we want to use now is that for \(\lambda _{0}=0\) we can “guess” the solution \(u_{0}=0\). We treat \(\lambda \) as a parameter and seek zeros of \(G(u,\lambda )\) near \((u,\lambda )=(u_{0},\lambda _{0})=(0,0)\) in form of a (smooth) resolution \(u=u(\lambda )\). Implicit differentiation yields

$$ 0=\frac{d}{d\lambda }G(u(\lambda ),\lambda )={\partial }_{u} G(u,\lambda )u'(\lambda )+{\partial }_{\lambda }G(u,\lambda ). $$

If \({\partial }_{u} G(u_{0},\lambda _{0})\ne 0\), then we can solve for

$$ u'(\lambda _{0})=-{\partial }_{u} G(u_{0},\lambda _{0})^{-1}{\partial }_{\lambda }G(u_{0},\lambda _{0}). $$

Similarly, \(0=\frac{d^{2}}{d\lambda ^{2}} G(u(\lambda ),\lambda )={ \partial }_{u}^{2} G(u,\lambda ) u^{\prime \,2}+{\partial }_{u}G(u,\lambda )u'' +2{ \partial }_{u}{\partial }_{\lambda }G(u,\lambda ) u' +{\partial }_{\lambda }^{2} G(u,\lambda ) \), which yields, with \(G_{u}^{0}={\partial }_{u} G(u_{0},\lambda _{0})\),

$$ u''(\lambda _{0})=-(G_{u}^{0})^{-1}\bigl({\partial }_{u}^{2} G\,u'(\lambda _{0})^{2}+2{\partial }_{u}{\partial }_{\lambda }G\,u'(\lambda _{0})+{\partial }_{\lambda }^{2} G\bigr)\quad \text{(derivatives of $G$ evaluated at $(u_{0},\lambda _{0})$)}, $$

and altogether we can formally obtain the Taylor expansion of \(u(\lambda )\) around \(\lambda _{0}\). In the example we have \(u'(0)=-1/2\), \(u''(0)=1/4\), and hence

$$ u(\lambda )=-\frac{1}{2}\lambda +\frac{1}{8}\lambda ^{2}+{\mathcal{O}}(\lambda ^{3}). \tag{12} $$

Two natural questions are: a) Does the Taylor expansion (12) converge (near \(\lambda =0\))? b) Are there other solutions of \(G(u,\lambda )=0\) near \((u,\lambda )=(0,0)\), not given by \(u(\lambda )\)? Of course, for our example we know all the answers because we explicitly know the solution: Clearly, (12) can only converge locally, as near \((u,\lambda )=(1,-1)\) there does not exist a resolution \(u=u(\lambda )\). Instead, a so–called saddle–node bifurcation occurs, which is also called a fold or a turning point. The theory behind (12) (and much more) is summarized in the IFT: Let \(X\), \(Y\) and \(\Lambda \) be Banach spaces, and let \(B_{\varepsilon }^{X}(u_{0})\) denote the open \(\varepsilon \)–ball around \(u_{0}\) in \(X\), and \(L(X,Y)\) the Banach space of continuous linear operators \(A:X\rightarrow Y\).

Theorem 2.1

Implicit Function Theorem

Assume that \(W\) is open in \(X{\times }\Lambda \), \(G{\in }C^{0}(W,Y)\), and that

- \((u_{0},\lambda _{0})\stackrel{{\mathrm{wlog}}}{=}(0,0)\in W\) with \(G(u_{0},\lambda _{0})=0\);

- \(G\) is continuously differentiable in \(u\), and \(A_{0}{=}{\partial }_{u} G(u_{0},\lambda _{0})\) is invertible with \(A_{0}^{-1}{\in }L(Y,X)\).

Then the following holds:

i) There exist \(\varepsilon ,\delta >0\) and \(H\in C(B_{\delta }^{\Lambda }(\lambda _{0}), B_{\varepsilon }^{X}(u_{0}))\) such that \((H(\lambda ),\lambda )\) is the unique solution of \(G(u,\lambda )=0\) in \(B_{\varepsilon }^{X}(u_{0}) \times B_{\delta }^{\Lambda }(\lambda _{0})\).

ii) If \(G\in C^{k}(W,Y)\), then \(H\in C^{k}(B_{\delta }^{\Lambda }(\lambda _{0}),X)\).

iii) If \(G\) is analytic, then \(H\) is analytic.

Remark 2.2

a) The results in ii) and iii) justify Taylor expansions, and the proof of Theorem 2.1 is constructive in the sense that it yields formulas for the Taylor expansion of \(H\), like in (12).

b) Concerning example (8), i) gives the local uniqueness, and iii) gives the local convergence of the Taylor expansion. From the proof of the IFT (or directly from the calculus leading to (12)) we can estimate the convergence radius, namely \(|\lambda |<1\), but in practice we are satisfied with the local results. In particular, the branch \((u(\lambda ),\lambda )\) through \((0,0)\) can be continued as long as \({\partial }_{u} G(u,\lambda )\ne 0\). As already indicated in (9), it may be useful to switch the roles of \(u\) and \(\lambda \). In (8) we have \({\partial }_{\lambda }G(u,\lambda ){=}-1{\ne }0\) (independent of \((u,\lambda )\)), and hence we can always find a (local, and in fact global) resolution \(\lambda {=}\lambda (u)\) (namely (9)).

c) The condition that \({\partial }_{u} G\) is invertible is sufficient for the resolution \(u=u(\lambda )\), but not necessary. An example is \(G(u,\lambda )=u^{3}-\lambda \). The (unique) resolution \(u=h(\lambda )={ {\mathrm{sign}}}(\lambda )|\lambda |^{1/3}\) exists for all \(\lambda \in {\mathbb{R}}\), even though \({\partial }_{u} G(0,0)=0\). However, ii) and iii) here indeed do not hold at \((u,\lambda )=(0,0)\). ⌋
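
The expansion (12) can be checked numerically. The following minimal Python sketch assumes \(G(u,\lambda )=u^{2}-2u-\lambda \), which is consistent with the solutions \(u_{\pm }(\lambda )=1\pm \sqrt{1+\lambda }\) and with \({\partial }_{\lambda }G=-1\) stated above; the function names are illustrative only and not part of any package. It compares the second order Taylor polynomial with the exact branch through \((0,0)\), and also recovers the branch by Newton's method in \(u\) for fixed \(\lambda \).

```python
import math


def G(u, lam):
    """Assumed form of the scalar example: zeros are u = 1 +/- sqrt(1 + lam)."""
    return u * u - 2.0 * u - lam


def u_exact(lam):
    """Exact branch through (u, lam) = (0, 0)."""
    return 1.0 - math.sqrt(1.0 + lam)


def u_taylor(lam):
    """Second order expansion built from u'(0) = -1/2 and u''(0) = 1/4."""
    return -0.5 * lam + 0.125 * lam * lam


def newton(u, lam, tol=1e-12, max_iter=50):
    """Newton's method in u for fixed lam; requires dG/du = 2u - 2 != 0."""
    for _ in range(max_iter):
        step = G(u, lam) / (2.0 * u - 2.0)
        u -= step
        if abs(step) < tol:
            return u
    raise RuntimeError("Newton iteration did not converge")


if __name__ == "__main__":
    for lam in (0.1, 0.01, 0.001):
        err = abs(u_exact(lam) - u_taylor(lam))
        # For a correct second order expansion, err / lam**3 stays bounded.
        print(f"lam={lam:g}  error={err:.3e}  error/lam^3={err / lam**3:.4f}")
    print("Newton from the Taylor guess:", newton(u_taylor(0.1), 0.1))
```

The Newton iteration breaks down as \(u\to 1\), i.e., at the fold \((u,\lambda )=(1,-1)\), where \({\partial }_{u} G=0\); this is the situation that arclength parametrizations are designed to handle.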

2.1 Standard Bifurcation Examples for ODEs

To explain the notion of bifurcation and the meaning of bifurcation diagrams, we consider the three so–called normal forms of elementary steady bifurcations, and a simple Hopf bifurcation example.

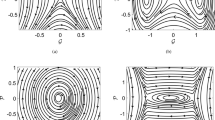

Example 2.3

a) Saddle–node (or fold) bifurcation, \(G(u,\lambda )=\lambda -u^{2}\). Here the two solution branches \(u=u_{\pm }(\lambda )=\pm \sqrt{\lambda }\) exist for \(\lambda \ge 0\), see Fig. 1(a1). Of course, equivalently \(\lambda =u^{2}\), but for now we stick to the \(u=u(\lambda )\) point of view. For any \(\lambda >0\) we have \({\partial }_{u} G(u_{\pm }(\lambda ),\lambda )=\mp 2\sqrt{\lambda } \ne 0\), such that the solutions are locally unique by the IFT. Plots of solutions (or of some functionals of solutions, e.g., norms) as functions of the parameter as in Fig. 1(a1) are called bifurcation diagrams (BDs). In (a2) we show the same branches and additionally sketch the flow of the associated ODE \(\frac{{\mathrm{d}}}{{\mathrm{d}}t}u=G(u,\lambda )\). Moreover, as is customary in bifurcation diagrams, stable branches (see below) are plotted as full (or thick) lines, and unstable branches as dashed (or thin) lines.

Fig. 1 Elementary steady bifurcations. Here and in the following, full lines indicate branches of stable solutions (stable branches in short). In (a1) we show a pure BD for a fold bifurcation, while in (a2) (and (b,c)) the arrows indicate the flow of the associated ODEs \(\frac{{\mathrm{d}}}{{\mathrm{d}}t}u=G(u,\lambda )\)

b) Transcritical bifurcation, \(G(u,\lambda )=\lambda u+u^{2}\). For all \(\lambda \in {\mathbb{R}}\) we have the trivial solution \(u=0\). The linearization \({\partial }_{u} G(0,\lambda )=\lambda \) is only singular at \(\lambda =0\), such that the IFT cannot be applied at \(\lambda =0\). The “non–trivial” branch \(u=-\lambda \) bifurcates at the bifurcation point \((u,\lambda )=(0,0)\), exists on “both sides” of the critical point \(\lambda =0\), and there is an exchange of stability. See Fig. 1(b).

c) Pitchfork bifurcation, \(G(u,\lambda )=\lambda u-u^{3}\). As in b), the trivial solution \(u=0\) is locally unique, except at \(\lambda =0\). The “non–trivial” solutions \(u=\pm \sqrt{\lambda }\) bifurcate at the bifurcation point \((u,\lambda )=(0,0)\) and exist for \(\lambda \ge 0\). For \(G(u,\lambda )=\lambda u+u^{3}\) the bifurcating branches are \(u=\pm \sqrt{-\lambda }\) and exist for \(\lambda <0\). See Fig. 1(c). ⌋
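
The stability assignments in these three normal forms can be reproduced with a few lines of Python. The sketch below is an illustration only: it uses the flow convention \(\frac{{\mathrm{d}}}{{\mathrm{d}}t}u=G(u,\lambda )\) of this example, under which a steady state is stable if \({\partial }_{u} G<0\) and unstable if \({\partial }_{u} G>0\); the dictionary and function names are hypothetical.

```python
import math

# name -> (G, dG/du, list of steady states at a given lam)
NORMAL_FORMS = {
    "fold": (
        lambda u, lam: lam - u**2,
        lambda u, lam: -2.0 * u,
        lambda lam: [math.sqrt(lam), -math.sqrt(lam)] if lam > 0 else [],
    ),
    "transcritical": (
        lambda u, lam: lam * u + u**2,
        lambda u, lam: lam + 2.0 * u,
        lambda lam: [0.0, -lam],
    ),
    "pitchfork": (
        lambda u, lam: lam * u - u**3,
        lambda u, lam: lam - 3.0 * u**2,
        lambda lam: [0.0] + ([math.sqrt(lam), -math.sqrt(lam)] if lam > 0 else []),
    ),
}


def classify(name, lam):
    """Return (u, label) for each steady state of du/dt = G(u, lam)."""
    G, dG, states = NORMAL_FORMS[name]
    result = []
    for u in states(lam):
        if abs(G(u, lam)) > 1e-12:
            raise ValueError("not a steady state")
        slope = dG(u, lam)
        if slope < 0:
            label = "stable"
        elif slope > 0:
            label = "unstable"
        else:
            label = "degenerate"  # linearization gives no information
        result.append((u, label))
    return result


if __name__ == "__main__":
    for name in NORMAL_FORMS:
        for lam in (-1.0, 1.0):
            print(name, lam, classify(name, lam))
```

For the transcritical case this shows the exchange of stability between \(u=0\) and \(u=-\lambda \) when \(\lambda \) changes sign.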

The point \((u,\lambda )_{*}=(0,0)\) in all three cases is called a bifurcation point. In b) and c) the point \((u,\lambda )_{*}\) is also called a branch point, as two branches intersect there. At the saddle–node bifurcation there is no “branching off”, and we rather prefer to call \((u,\lambda )_{*}\) a fold point, or simply a fold. Importantly, the transcritical bifurcation and the pitchfork bifurcation are non–generic. This means that they require some special structure (or symmetries) of a nonlinear problem \(G(u,\lambda )=0\) to occur at all, but such symmetries are often enforced by physics. See [68, §1.2] (and the references therein) for more on genericity (or structural stability), co–dimensions, and imperfections.

By the IFT, a necessary condition for all three bifurcations is that \({\partial }_{u} G(u_{*},\lambda _{*})\) is not invertible, i.e., has a zero eigenvalue. Additionally, the eigenvalues of the Jacobian \(A={\partial }_{u} G(u_{*},\lambda _{*})\) at a steady state \(u_{*}\) often determine the stability of \(u_{*}\). The fixed point \(u_{*}\) is called stable for \(\frac{{\mathrm{d}}}{{\mathrm{d}}t}u=-G(u,\lambda )\), if solutions to nearby initial conditions \(u_{0}\) stay close to \(u_{*}\), i.e., if for all \(\varepsilon >0\) there exists a \(\delta >0\) such that \(\|u_{0}-u_{*}\|<\delta \) implies \(\|u(t)-u_{*}\|<\varepsilon \). If additionally \(\|u(t)-u_{*}\|\to 0\), then \(u_{*}\) is called asymptotically stable. If \(u_{*}\) is not stable, then it is called unstable. Bifurcating branches are often classified as super–or subcritical. Supercritical means that the bifurcating branch exists in the \(\lambda \)–range where the original (trivial) branch has lost stability, while subcritical means that it exists where the trivial branch is stable.

If \(u_{*}\) is a fixed point of \(\frac{{\mathrm{d}}}{{\mathrm{d}}t}u=-G(u,\lambda )\), then we may consider the linearization

$$ \frac{{\mathrm{d}}}{{\mathrm{d}}t}v=-Av \tag{13} $$

at \(u_{*}\). This can be solved explicitly by an exponential ansatz \(v(t)=c{\mathrm{e}}^{-\mu t}\phi \), where \(\mu \in {\mathbb{C}}\) is an eigenvalue of \(A\) and \(\phi \) the associated eigenvector (for semisimple eigenvalues, i.e., same algebraic and geometric multiplicity, to be generalized in case that \(A\) has Jordan blocks), and from this it follows that \(u_{*}\) is

(i) asymptotically stable, if all eigenvalues \(\mu \) of \(A\) fulfill \({\mathrm{Re}}\mu >0\);

(ii) unstable, if at least one eigenvalue \(\mu \) has \({\mathrm{Re}}\mu <0\), or if there are Jordan blocks to a zero eigenvalue.

The remaining cases, that there is a semisimple eigenvalue \(\mu =0\), or that there are purely imaginary eigenvalues \(\mu =\pm {\mathrm{i}}\omega \), are associated to possible bifurcations, and the dynamics close to \(u_{*}\) must be studied in detail, for instance via center–manifold reduction. A similar principle of linearized stability also holds for many evolutionary PDEs, see, e.g., [61, Theorem 5.2.23].

Example 2.4

Hopf bifurcation

Consider

$$ \begin{aligned} \frac{{\mathrm{d}}}{{\mathrm{d}}t}u_{1}&=\lambda u_{1}+u_{2}-u_{1}(u_{1}^{2}+u_{2}^{2}), \\ \frac{{\mathrm{d}}}{{\mathrm{d}}t}u_{2}&=-u_{1}+\lambda u_{2}-u_{2}(u_{1}^{2}+u_{2}^{2}), \end{aligned} \tag{14} $$

with \(u_{j}(t) \in {\mathbb{R}}\) and \(\lambda \in {\mathbb{R}}\). The linearization \(A = \left ( \begin{smallmatrix} \lambda & 1 \\ -1 & \lambda \end{smallmatrix} \right )\) at \(u= 0\) has the eigenvalues \(\mu _{1,2} = \lambda \pm {\mathrm{i}}\). Thus, two complex conjugate eigenvalues cross the imaginary axis at \(\lambda = 0\). Introducing polar coordinates \((u_{1},u_{2}) = r(\sin \phi ,\cos \phi )\) with \(r \geq 0\) and \(\phi \in {\mathbb{R}}/(2\pi {\mathbb{Z}})\) gives

$$ \frac{{\mathrm{d}}}{{\mathrm{d}}t}r=r(\lambda -r^{2}),\qquad \frac{{\mathrm{d}}}{{\mathrm{d}}t}\phi =1. $$

For \(\lambda >0\) we obtain the (stable) fixed point \(r=\sqrt{\lambda }\) of the \(\frac{{\mathrm{d}}}{{\mathrm{d}}t}r\) equation, and hence a family of (stable) periodic orbits (POs)

$$ u(t)=\sqrt{\lambda }\,\bigl(\sin (t+\phi _{0}),\cos (t+\phi _{0})\bigr),\qquad \phi _{0}\in [0,2\pi ), $$

bifurcates from the trivial branch \(u{=}0\) at \(\lambda {=}0\), see Fig. 2. This is called a Hopf bifurcation. For fixed \(\lambda >0\) the family attracts every solution with an exponential rate \({\mathcal{O}}(\exp (- 2\lambda t))\). ⌋
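
The attraction to the periodic orbit of radius \(\sqrt{\lambda }\) can be illustrated by direct time integration. The following Python sketch assumes the planar system with linear part \(A\) as above and the cubic terms \(-u_{j}(u_{1}^{2}+u_{2}^{2})\), which is one system consistent with the stated radius and decay rate; the classical Runge–Kutta scheme and all names are choices made for this illustration only.

```python
import math


def rhs(u1, u2, lam):
    """Assumed Hopf normal form: linear part [[lam, 1], [-1, lam]], cubic damping."""
    r2 = u1 * u1 + u2 * u2
    return (lam * u1 + u2 - r2 * u1, -u1 + lam * u2 - r2 * u2)


def rk4_step(u1, u2, lam, dt):
    """One step of the classical fourth order Runge-Kutta method."""
    k1 = rhs(u1, u2, lam)
    k2 = rhs(u1 + 0.5 * dt * k1[0], u2 + 0.5 * dt * k1[1], lam)
    k3 = rhs(u1 + 0.5 * dt * k2[0], u2 + 0.5 * dt * k2[1], lam)
    k4 = rhs(u1 + dt * k3[0], u2 + dt * k3[1], lam)
    new_u1 = u1 + dt * (k1[0] + 2.0 * k2[0] + 2.0 * k3[0] + k4[0]) / 6.0
    new_u2 = u2 + dt * (k1[1] + 2.0 * k2[1] + 2.0 * k3[1] + k4[1]) / 6.0
    return new_u1, new_u2


def radius_after(lam, t_end, u0=(0.1, 0.0), dt=0.01):
    """Integrate from u0 up to time t_end and return the norm of the solution."""
    u1, u2 = u0
    for _ in range(int(round(t_end / dt))):
        u1, u2 = rk4_step(u1, u2, lam, dt)
    return math.hypot(u1, u2)


if __name__ == "__main__":
    for lam in (-0.25, 0.25, 1.0):
        target = math.sqrt(lam) if lam > 0 else 0.0
        print(f"lam={lam:+.2f}  |u(40)|={radius_after(lam, 40.0):.6f}  expected limit={target:.6f}")
```

For \(\lambda <0\) the solution decays to \(u=0\), while for \(\lambda >0\) its norm approaches \(\sqrt{\lambda }\), which is the quantity plotted in the BD.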

Fig. 2 Hopf bifurcation of POs for \(\lambda >0\) in (14). In the BD we now need to plot some norm of \(u\)

Remark 2.5

The stability of POs is somewhat less straightforward to define (via Poincaré sections and maps) than the stability of steady states, and more difficult to analyze (via Floquet multipliers). Similarly, bifurcations from POs (nontrivial Floquet multipliers \(\mu \) with \(|\mu |=1\)) are more difficult, both analytically and numerically, than steady bifurcations, or (Hopf–) bifurcations of POs from steady states, and we refer to [68, Chap. 1] for details and references. ⌋

2.2 The Crandall–Rabinowitz Theorem, and Remarks

From the examples in §2.1 we may expect that the crossing of a simple eigenvalue through 0 generically leads to steady bifurcations, while a pair of nonzero eigenvalues crossing the imaginary axis leads to Hopf bifurcations. This is true, under some technical assumptions, and can be proved via Liapunov–Schmidt reduction, where again we refer to [68, §2.2], and here only state a prototype bifurcation theorem for steady bifurcation from simple eigenvalues [11]. We consider (4), i.e., \(G(u,\lambda )=0\), and for simplicity assume that this has the trivial branch \(u=0\), \(\lambda \in {\mathbb{R}}\), and without loss of generality consider a bifurcation at \((u,\lambda )=(0,0)\). Let \(A_{0}={\partial }_{u} G(0,0)\), denote the kernel (null-space) of \(A_{0}\) by \(N(A_{0})=\{u\in X: A_{0}u=0\}\), the range by \(R(A_{0})=\{A_{0}u: u\in X\}\), and assume that

(H1) \(\dim N(A_{0})={\mathrm{codim}}R(A_{0})=1\); (\(A_{0}\) is Fredholm with \({\mathrm{ind}}(A_{0})=0\) and 0 is a simple eigenvalue)

(H2) \(G\in C^{3}(W\times \Lambda ,Y)\), where \(W\times \Lambda \) is an open neighborhood of \((0,0)\) in \(X\times {\mathbb{R}}\).

Theorem 2.6

Crandall–Rabinowitz.

Consider (4) and assume that additional to (H1),(H2) we have the transversality condition

$$ {\partial }_{\lambda }{\partial }_{u} G(0,0)\phi \notin R(A_{0}), $$

where \(N(A_{0})={\mathrm{lin}}\{\phi \}\), \(\|\phi \|=1\). Then \((0,0)\) is a branch point (BP) for (4), and the nontrivial branch bifurcating at \((0,0)\) is given locally by a \(C^{1}\) curve

$$ \gamma :(-s_{0},s_{0})\to X\times {\mathbb{R}},\quad s\mapsto (u(s),\lambda (s)),\quad (u(0),\lambda (0))=(0,0), $$

where \(u(s)=s\phi +{\mathcal{O}}(s^{2})\). All solutions of \(G(u,\lambda )=0\) in a neighborhood of \((0,0)\) are either on \(\gamma \) or on the trivial branch.

Like the proof of the IFT, the proof of Theorem 2.6 is constructive in the sense that it yields bifurcation formulas, i.e., formulas for \(\lambda '(0)\), \(\lambda ''(0)\), …(if desired). Moreover, there are various extensions of Theorem 2.6, concerning, e.g., the exchange of stability at a BP, and possible global behaviors of bifurcating branches (Rabinowitz alternative).

The assumption that 0 is a simple eigenvalue is crucial; in case of higher multiplicity, different things can happen: no bifurcation, or bifurcation of higher dimensional ‘leaves’, or bifurcation of several branches (often more than the multiplicity of the zero eigenvalue). In principle, one must then derive and solve the so–called (leading order) algebraic bifurcation equations. Here, symmetry (which usually creates the higher multiplicity of the eigenvalue) can often be heavily exploited to simplify the problem. This comes under the name of equivariant branching, and is often crucial for bifurcations in PDEs, but here we refer to [31, 34] and the references therein for details, and [68, §2.5] for an overview.

2.3 Basic Numerical Algorithms

The general bifurcation theory, of which Theorem 2.6 is one example, works in Banach spaces, while the examples in §2.1 were for simple scalar (resp. 2-component for the Hopf case) ODEs. For the numerical continuation and bifurcation in pde2path we first discretize PDEs (of the form (2)) in space, yielding (high dimensional) algebraic problems (with a slight abuse of notation)

$$ G(u,\lambda )=0\in {\mathbb{R}}^{n}, \tag{17} $$

or ODEs (or, if the dynamical mass matrix \(M_{d}\in {\mathbb{R}}^{n\times n}\) is singular, differential algebraic systems)

$$ M_{d}\frac{{\mathrm{d}}}{{\mathrm{d}}t}u=-G(u,\lambda ). \tag{18} $$

For the computation of branches (and bifurcations) for (17) and (18), some special parametrizations and methods have been established. Here we recall the basic ideas of arclength continuation, focusing on methods which are implemented in pde2path. Besides the algorithms for (one parameter) branch continuation and steady bifurcations, we shall give some remarks on multiple-parameter continuation of, e.g., fold points (FPs), branch points (BPs), and Hopf bifurcation points (HPs). To some extent, these methods can be formulated in general Banach spaces \(X\), but for convenience we restrict to \(X={\mathbb{R}}^{n}\). In particular, \({\partial }_{u} G(u,\lambda )\in {\mathbb{R}}^{n\times n}\) is always Fredholm of index 0. We essentially present the algorithms in an abstract mathematical way, but where useful add comments pertaining to the pde2path implementation.

Remark 2.7

a) We emphasize that the notation \(G(u,\lambda )=0\in {\mathbb{R}}^{n}\) is motivated by \(u\in {\mathbb{R}}^{n}\) corresponding to the values of the field \(u:\Omega \rightarrow {\mathbb{R}}^{N}\) at the \(n_{p}\) mesh points, hence \(n=Nn_{p}\), while \(\lambda \in {\mathbb{R}}\) is one “active” scalar parameter. Strictly speaking, for the computation of solution branches \(s\mapsto (u(s),\lambda (s))\in {\mathbb{R}}^{n+1}\) there is no reason to distinguish (in notation or in substance) between the field values \(u=(u_{1},\ldots ,u_{n})\in {\mathbb{R}}^{n}\) and the parameter \(\lambda \in {\mathbb{R}}\). The only thing that matters is that we have \(n\) equations in the \(n+1\) unknowns \((u,\lambda )\). Thus, we could as well rename \(\lambda =u_{n+1}\), and essentially this is what is done in pde2path, i.e., all parameters are appended to the vector \({\mathtt{u}}=(u,{\mathtt{pars}})\), and the active parameter “\(\lambda \)” (or several active ones) is (are) marked by a pointer.

b) Often we meet situations where the system of PDEs must be extended by additional equations, for instance so–called phase conditions (PCs). Each such additional equation \(Q_{i}=0\), \(i=1,\ldots ,q\), naturally requires freeing (“activating”) one more parameter, such that we obtain one primary active parameter \(\lambda \), and a number of secondary active parameters \(w\in {\mathbb{R}}^{q}\). Similarly, the continuation of POs typically requires freeing at least one additional active parameter, usually the period \(T\), and setting a PC as at least one additional equation. In principle, we could put the additional equations into the present framework by extending \(u\) to \((u,\lambda ,w)\in {\mathbb{R}}^{n+1+q}\) and \(G\) to \((G,Q_{1},\ldots ,Q_{q}):{\mathbb{R}}^{n+1+q}\times {\mathbb{R}} \rightarrow {\mathbb{R}}^{n+q}\), but in practice we find it more transparent to keep the distinction between the field \(u\) and the parameter(s) \((\lambda ,w)\), and between the “PDE” \(G(u,\lambda )=0\) or \({\partial }_{t} u=-G(u,\lambda )\) and the auxiliary equations \(Q_{1}=0\), \(\ldots ,Q_{q}=0\). Thus, we essentially first focus on the 1–parameter case (\(q=0\)), and come back to fold-point–, branch-point–, and Hopf-point–continuation in §2.4. ⌋

Arclength Continuation

A standard method for numerical continuation of branches of \(G(u,\lambda ){=}0\), where \(G:X{\times }{\mathbb{R}}{\rightarrow }X\) is at least \(C^{1}\), is (pseudo)arclength continuation. Consider a branch

$$ z(s):=(u(s),\lambda (s))\in X\times {\mathbb{R}}, $$

parameterized by \(s\in {\mathbb{R}}\) and the extended system

$$ H(u,\lambda ,s):=\begin{pmatrix} G(u,\lambda ) \\ p(u,\lambda ,s) \end{pmatrix}=0\in X\times {\mathbb{R}}, \tag{19} $$

where \(p\) is used to make \(s\) an approximation of arclength on the solution branch. Given \(s_{0}\) and a point \((u_{0},\lambda _{0}):=(u(s_{0}),\lambda (s_{0}))\), and additionally a tangent vector

$$ \tau _{0}:=(u_{0}',\lambda _{0}'):=\frac{{\mathrm{d}}}{{\mathrm{d}}s}(u(s),\lambda (s))\Big|_{s=s_{0}}, $$

the standard choice is

$$ p(u,\lambda ,s):=\xi \left \langle u_{0}',u-u_{0} \right \rangle +(1-\xi )\lambda _{0}'(\lambda -\lambda _{0})-(s-s_{0}). $$

Here \(0<\xi <1\) is a weight, typically chosen as \(\xi =1/n_{u}\), and \(\tau _{0}\) is assumed to be normalized in the weighted norm

$$ \|\tau \|_{\xi }:=\sqrt{\left \langle \tau ,\tau \right \rangle _{\xi }},\qquad \left \langle (u,\lambda ),(v,\mu ) \right \rangle _{\xi }:=\xi \left \langle u,v \right \rangle +(1-\xi )\lambda \mu . $$

For fixed \(s\) and \(\|\tau _{0}\|_{\xi }=1\), \(p(u,\lambda ,s)=0\) thus defines a hyperplane perpendicular (in the inner product \(\left \langle \cdot ,\cdot \right \rangle _{\xi }\)) to \(\tau _{0}\) at distance \({\mathrm{ds}}:=s-s_{0}\) from \((u_{0},\lambda _{0})\). We may then use a predictor

$$ (u^{1},\lambda ^{1})=(u_{0},\lambda _{0})+{\mathrm{ds}}\,\tau _{0} $$

for a solution of (19) on that hyperplane, followed by a corrector using Newton’s method in the form

$$ \begin{pmatrix} u^{l+1} \\ \lambda ^{l+1} \end{pmatrix}=\begin{pmatrix} u^{l} \\ \lambda ^{l} \end{pmatrix}-{\mathcal{A}}(u^{l},\lambda ^{l})^{-1}H(u^{l},\lambda ^{l},s),\qquad {\mathcal{A}}=\begin{pmatrix} G_{u} & G_{\lambda } \\ \xi u_{0}^{\prime \,T} & (1-\xi )\lambda _{0}' \end{pmatrix}, \tag{22b} $$

where \(z={\mathcal{A}}^{-1} b\) stands for the solution of the linear system \({\mathcal{A}}z=b\), see Fig. 3. Since \({\partial }_{s} p=-1\), on a smooth solution arc we have

$$ {\mathcal{A}}(s)\begin{pmatrix} u'(s) \\ \lambda '(s) \end{pmatrix}=-\begin{pmatrix} 0 \\ {\partial }_{s} p \end{pmatrix}=\begin{pmatrix} 0 \\ 1 \end{pmatrix}. $$

Thus, once (22b) has converged to a new point \((u_{1},\lambda _{1})\) with Jacobian \({\mathcal{A}}\), the tangent direction \(\tau _{1}\) at \((u_{1},\lambda _{1})\) with conserved orientation, i.e., \({\mathrm{sign}}\left \langle \tau _{0},\tau _{1} \right \rangle =1\), can be computed from

$$ {\mathcal{A}}\tau _{1}=\begin{pmatrix} 0 \\ 1 \end{pmatrix},\quad \text{followed by the normalization } \|\tau _{1}\|_{\xi }=1. $$

Although \({\mathcal{A}}(s)\) is singular at (steady) BPs, the convergence of the Newton loop near a branch follows from the Newton–Kantorovich theorem and the so–called Bordering Lemma [17, Lemma 3.1], which deals with the structure of \({\mathcal{A}}\in {\mathbb{R}}^{(n+1)\times (n+1)}\), which consists of the main part \(G_{u}\in {\mathbb{R}}^{n\times n}\) and the borders \(G_{\lambda }\in {\mathbb{R}}^{n\times 1}\), \(\xi u_{0}'\in {\mathbb{R}}^{1\times n}\), and \((1-\xi )\lambda _{0}'\in {\mathbb{R}}\). Similar bordered structures appear again and again in continuation and bifurcation analysis and numerics. Algorithm 2.1 summarizes the basic continuation idea, already including some elementary stepsize ds control.
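
To make the predictor–corrector idea concrete, the following Python sketch applies pseudo-arclength continuation to a scalar toy problem. It is not the pde2path implementation: it assumes \(G(u,\lambda )=u^{2}-2u-\lambda \) (consistent with the fold at \((u,\lambda )=(1,-1)\) discussed in §2), a fixed stepsize ds without any stepsize control, the weight \(\xi =1/2\), and hypothetical function names. The extended Jacobian is the bordered \(2\times 2\) matrix with rows \((G_{u},G_{\lambda })\) and \((\xi u_{0}',(1-\xi )\lambda _{0}')\).

```python
import math


def G(u, lam):
    return u * u - 2.0 * u - lam


def G_u(u, lam):
    return 2.0 * u - 2.0


def G_lam(u, lam):
    return -1.0


def solve2(a11, a12, a21, a22, b1, b2):
    """Solve a 2x2 linear system by Cramer's rule."""
    det = a11 * a22 - a12 * a21
    return (b1 * a22 - a12 * b2) / det, (a11 * b2 - a21 * b1) / det


def xi_norm(du, dlam, xi):
    """Weighted norm sqrt(xi*du^2 + (1-xi)*dlam^2)."""
    return math.sqrt(xi * du * du + (1.0 - xi) * dlam * dlam)


def initial_tangent(u, lam, xi, dlam=-1.0):
    """Tangent at a regular point from G_u u' + G_lam lam' = 0, xi-normalized."""
    du = -G_lam(u, lam) / G_u(u, lam) * dlam
    nrm = xi_norm(du, dlam, xi)
    return du / nrm, dlam / nrm


def continue_branch(u, lam, tau, ds=0.1, steps=40, xi=0.5, tol=1e-12):
    """Return the list of points (u, lam) computed along the branch."""
    branch = [(u, lam)]
    for _ in range(steps):
        u0, lam0 = u, lam
        du0, dl0 = tau
        # Predictor: step of length ds along the current tangent.
        u, lam = u0 + ds * du0, lam0 + ds * dl0
        # Corrector: Newton's method for H = (G, p) = 0.
        for _ in range(20):
            p = xi * du0 * (u - u0) + (1.0 - xi) * dl0 * (lam - lam0) - ds
            zu, zl = solve2(G_u(u, lam), G_lam(u, lam),
                            xi * du0, (1.0 - xi) * dl0, G(u, lam), p)
            u, lam = u - zu, lam - zl
            if abs(zu) + abs(zl) < tol:
                break
        else:
            raise RuntimeError("Newton corrector did not converge")
        # New tangent from A * tau1 = (0, 1), which keeps <tau0, tau1>_xi > 0.
        du1, dl1 = solve2(G_u(u, lam), G_lam(u, lam),
                          xi * du0, (1.0 - xi) * dl0, 0.0, 1.0)
        nrm = xi_norm(du1, dl1, xi)
        tau = (du1 / nrm, dl1 / nrm)
        branch.append((u, lam))
    return branch


if __name__ == "__main__":
    tau0 = initial_tangent(0.0, 0.0, xi=0.5)
    for u, lam in continue_branch(0.0, 0.0, tau0)[::5]:
        print(f"u={u:.4f}  lam={lam:.4f}")
```

In this toy problem the bordered matrix stays invertible at the fold, where \(G_{u}=0\), so the iteration passes around the fold without any special treatment, whereas continuation in \(\lambda \) alone would fail there.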

Algebraic Bifurcation Equations

In the following discussion of numerical branch switching of steady states, \((u_{0},\lambda _{0})\) is called a BP, if two or more smooth branches intersect non-tangentially in \((u_{0},\lambda _{0})\). \((u_{0},\lambda _{0})\) is called a simple BP if exactly two branches intersect. At a BP \((u_{0},\lambda _{0})\) we have

where here and in the following \(G_{u}^{0}={\partial }_{u} G(u_{0},\lambda _{0})\) and \(G_{\lambda }^{0}={\partial }_{\lambda }G(u_{0},\lambda _{0})\). From (a) we have

and by (b) there exists a unique \(\phi _{0}\in N(G^{0}_{u})^{\perp }\) such that \(G_{u}^{0}\phi _{0}+G_{\lambda }^{0}=0\) and \(\left \langle \phi _{0},\psi _{j} \right \rangle =0\), \(j=1,\ldots ,m\). If \(s\mapsto (u(s),\lambda (s))\) is a smooth branch with \(G(u(s),\lambda (s))\equiv 0\) and \((u,\lambda )(s_{0})=(u_{0},\lambda _{0})\), then

by implicit differentiation, and hence

where \(\alpha _{j}=\left \langle \phi _{j},u'(s_{0}) \right \rangle \), \(1\le j \le m\). By differentiating again,

all evaluated at \(s=s_{0}\). Since the left hand side is in \(R(G_{u}^{0})\), so is the right hand side, and applying \(\left \langle \cdot ,\psi _{j} \right \rangle \), \(j=1,\ldots ,m\) yields a system of \(m\) quadratic bifurcation equations (QBE) for the \(m+1\) coefficients \(\{\alpha _{0},\alpha _{1},\ldots ,\alpha _{m}\}\) (really \(m\) coefficients, because (28) is homogeneous), namely

In summary, the tangent \((u_{0}',\lambda _{0}')\) to a branch through \((u_{0},\lambda _{0})\) is of the form (26) with \(\alpha _{0},\alpha _{1},\ldots ,\alpha _{m}\) a solution of (28), unique up to a multiplicative constant \(\gamma \). Thus, (28) gives a necessary condition to determine bifurcating branches. Conversely, each distinct isolated zero \((\alpha _{0},\alpha )\) gives a distinct solution branch of \(G(u,\lambda )\) [38]. Here \((\alpha _{0},\alpha ^{*})\) is called isolated if for fixed \(\alpha _{0}\) and some \(\delta >0\) the only solution in \(U_{\delta }^{{\mathbb{R}}^{m}}(\alpha ^{*})\) is \(\alpha ^{*}\). By the IFT, a sufficient condition for this is that \({\partial }_{\alpha }B(\alpha _{0},\alpha )\) is non-singular.

Simple Branch Points

In general, only for \(m=1\) the QBE determine all (i.e., both) branches through \((u_{0},\lambda _{0})\). In this case, (28) reduces to

and if \((\alpha _{0},\alpha _{1})\) is one solution, then the other is distinct (linearly independent) if \(a\alpha _{1}+b\alpha _{0}\ne 0\). For the branch switching, let \((\alpha _{0},\alpha _{1})\) with \(\alpha _{0}=\lambda _{0}'\) and \(\alpha _{1}=\left \langle \psi ,u_{0}' \right \rangle \) be determined by the branch already computed, and, assuming the generic case \(\alpha _{0}\ne 0\), let \(\phi _{0}=\frac{1}{\alpha _{0}} (u_{0}'-\alpha _{1}\phi _{1})\). Then the other root of (29) is \(({\overline{\alpha }}_{0},{\overline{\alpha }}_{1})\) with \(\frac{{\overline{\alpha }}_{1}}{{\overline{\alpha }}_{0}}=-\left ( \frac{\alpha _{1}}{\alpha _{0}}+\frac{2b}{a}\right )\), and the tangent to the bifurcating branch is \(\tau _{1}=({\overline{\alpha }}_{1}\phi _{1}+{\overline{\alpha }}_{0} \phi _{0},{\overline{\alpha }}_{0})\). For normalization we choose \({\overline{\alpha }}_{0}=a\), and thus obtain Algorithm 2.2.
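The computation of the second root can be made concrete in a few lines. The sketch below assumes that (29) has the form \(a\alpha _{1}^{2}+2b\alpha _{0}\alpha _{1}+c\alpha _{0}^{2}=0\), which is consistent with the root ratio and the condition \(a\alpha _{1}+b\alpha _{0}\ne 0\) stated above; the function names and the numerical coefficients are hypothetical.

```python
def qbe(a, b, c, alpha0, alpha1):
    """Residual of the (assumed) scalar quadratic bifurcation equation."""
    return a * alpha1 ** 2 + 2.0 * b * alpha0 * alpha1 + c * alpha0 ** 2

def other_root(a, b, alpha0, alpha1):
    """Given one root (alpha0, alpha1) with alpha0 != 0, return the second root,
    normalised by alpha0_bar = a as in the text."""
    if alpha0 == 0.0:
        raise ValueError("non-generic case alpha0 = 0 is not handled")
    if a * alpha1 + b * alpha0 == 0.0:
        raise ValueError("double root: no second, distinct tangent direction")
    alpha0_bar = a
    alpha1_bar = -(a * alpha1 / alpha0 + 2.0 * b)
    return alpha0_bar, alpha1_bar

# hypothetical coefficients: r^2 - 3 r + 2 = 0 for r = alpha1/alpha0, roots r = 1, 2
a, b, c = 1.0, -1.5, 2.0
known = (1.0, 1.0)                   # root with ratio 1, from the computed branch
new = other_root(a, b, *known)       # root with ratio 2, for the bifurcating branch
```

The coefficient \(c\) is not needed to find the second root once the first is known, since the two ratios sum to \(-2b/a\).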

Detection of BPs, Exchange of Stability

\(m=1\) in (25) together with \(a\alpha _{1}+b\alpha _{0}\ne 0\) are the general bifurcation conditions for simple BPs, also for \(X\) a general Banach space, cf. [11]. In our restriction \(X={\mathbb{R}}^{n}\) we further obtain the following results:

(B1) [37, §5.8]. If \(\mu _{1}(s_{0})=0\) is an algebraically simple eigenvalue of \({\mathcal{A}}\), and \(\mu _{1}(s)\) changes sign at \(s_{0}\), then \((u_{0},\lambda _{0})\) is a simple BP. This yields a simple but efficient criterion to detect BPs:

BDT1 (bifurcation detection test 1). To detect simple BPs in \(G:{\mathbb{R}}^{n+1}\rightarrow {\mathbb{R}}^{n}\) we monitor sign changes of det \({\mathcal{A}}\). This can be done efficiently if we already have an \(\mathit{LU}\)–decomposition of \(A\), as \(\det {\mathcal{A}}=\det A\det (D-\mathit{CA}^{-1}B)\), and as \(\det A=(\Pi _{i=1}^{n} L_{\mathit{ii}})(\Pi _{i=1}^{n} U_{\mathit{ii}})\) and \(A^{-1}B\) is obtained from back-substitution.

(B2) [37, §5.21]. Under the same assumptions as in (B1), \(\det \,G_{u}(s)\) changes sign at \(s_{0}\) on the branches through \((u_{0},\lambda _{0})\) for which \(\mu _{1}'(s_{0})\ne 0\).

BDT1 excludes FPs, where a simple eigenvalue of \({\mathcal{A}}\) reaches zero but det \({\mathcal{A}}\) does not change sign. Moreover, BDT1 detects an odd number (counting multiplicities) of eigenvalues crossing, but excludes bifurcations via even numbers of eigenvalues crossing. This excludes Hopf bifurcations, but also, e.g., steady BPs of even multiplicity. Thus, in pde2path we also provide an alternative algorithm BDT2 to detect BPs (and Hopf bifurcation points), which is based on computing a few eigenvalues of \(G_{u}\).

After detection of a bifurcation between \(s_{k}\) and \(s_{k+1}\), the bifurcation can be localized by a bisection method, with a secant, tangent, or quadratic predictor. Although this is a slow method for finding roots of continuous real functions, in the setting of calculating sign changes of det \(A\) via LU decomposition (BDT1), and also for BDT2, it seems difficult to improve. Alternatively, see §2.4 for so–called extended systems for fold–, branch– and Hopf–point localization and continuation.
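A bare-bones version of the bisection step might look as follows. This is only a sketch: the test function is a hypothetical closed-form determinant along a trivial branch, whereas on a computed branch each evaluation would involve a predictor–corrector step with a halved step length before the test function can be evaluated.

```python
def bisect_sign_change(test, sa, sb, tol=1e-10, maxit=200):
    """Localise a sign change of test(s) between sa and sb by bisection."""
    fa, fb = test(sa), test(sb)
    if fa * fb > 0:
        raise ValueError("test function does not change sign on [sa, sb]")
    for _ in range(maxit):
        sm = 0.5 * (sa + sb)
        fm = test(sm)
        if fm == 0.0 or sb - sa < tol:
            return sm
        if fa * fm < 0:
            sb, fb = sm, fm                 # sign change is in the left half
        else:
            sa, fa = sm, fm                 # sign change is in the right half
    return 0.5 * (sa + sb)

def det_Gu(lam):
    # hypothetical linearisation [[1 - lam, 0.5], [0.5, 2 - lam]] on a trivial branch
    return (1.0 - lam) * (2.0 - lam) - 0.25

lam_bp = bisect_sign_change(det_Gu, 0.0, 1.5)
```

Only the sign of the test function is used, which is why the method applies equally to BDT1 (sign of a determinant) and BDT2 (change in the number of eigenvalues in a half plane).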

From (B2) we obtain that bifurcations with \(\lambda '(s_{0})\ne 0\) from stable branches for \(\frac{{\mathrm{d}}}{{\mathrm{d}}t}u=-G(u,\lambda )\) necessarily lead to an exchange of stability: if \(u(s)\) is stable for \(s< s_{0}\), i.e., all eigenvalues of \(G_{u}(u(s),\lambda (s))\) have positive real parts,Footnote 3 then \(\mu _{1}(s_{0})=0\) and \(\mu _{1}'(s_{0})<0\) such that \(G_{u}(u(s),\lambda (s))\) has one negative eigenvalue and \(u(s)\) is unstable for \(s>s_{0}\) and \(|s-s_{0}|\) sufficiently small. The pitchfork example \(\frac{{\mathrm{d}}}{{\mathrm{d}}t}u=\lambda u-u^{3}\) shows that the condition that \(\lambda '(s_{0})\ne 0\) cannot be dropped: there is no loss of stability on the nontrivial branch \((u,\lambda )(s)=(s,s^{2})\) at the bifurcation point \((u,\lambda )=(0,0)\) with \(s=0\).
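The pitchfork example can be checked directly. With the sign convention \(\frac{{\mathrm{d}}}{{\mathrm{d}}t}u=-G(u,\lambda )\) used here, a short worked computation (a sketch for this scalar example only) reads:

```latex
\[
G(u,\lambda) = -\lambda u + u^{3}, \qquad G_{u}(u,\lambda) = -\lambda + 3u^{2}.
\]
On the trivial branch $(u,\lambda)(s) = (0,s)$:
\[
G_{u} = -s, \qquad \lambda'(0) = 1 \neq 0,
\]
so $G_{u}$ changes sign at $s=0$ and $u=0$ is stable for $\lambda<0$ and unstable for $\lambda>0$.
On the nontrivial branch $(u,\lambda)(s) = (s,s^{2})$:
\[
G_{u} = -s^{2} + 3s^{2} = 2s^{2} \geq 0, \qquad \lambda'(0) = 0,
\]
so $G_{u}$ touches zero at $s=0$ without changing sign, and the branch is stable on both sides.
```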

Higher Multiplicity

The case \(m\ge 2\) is more difficult, and the QBE (28) may (and typically will) not yield (all) bifurcating branches. The question which order of Taylor expansion in the sense of (27) is needed is called determinacy. In a loose sense, see [87, §6.4] for precise definitions, a given system of algebraic bifurcation equations in \(\alpha \) (such as (28)) is called \(k\)–determined if any small perturbation of order \(k+1\) does not qualitatively change the set of (small) solutions. In this sense, transcritical bifurcations are (generically, i.e., unless some special structure occurs) 2–determined, and pitchfork bifurcations are generically 3–determined. Thus, to compute the \(\alpha \) for pitchfork branches we need to at least consider the associated cubic bifurcation equations (CBE). In principle, in case of higher order indeterminacies, this must be further continued to higher order, which quickly becomes rather complicated.

In pde2path, we take a practical approach and proceed as follows. The function qswibra searches for solutions \((\alpha _{0},\alpha )\) of the QBE (28) with \(\alpha _{0}\ne 0\) (transcritical bifurcations).Footnote 4 All solutions found are stored in p.mat.qtau, and an orthonormal base of the kernel is stored in p.mat.ker, where, as in Algorithm 2.2, p is used as the name of the pde2path struct which stores the problem data. Subsequently, we can either select (via seltau) a bifurcating direction from p.mat.qtau, or generate (via gentau) a guess for a bifurcating direction as a linear combination of p.mat.ker, and afterwards call cont.

The approach is summarized in Algorithm 2.3. It is only theoretically sound for 2-determined branches and (in the form cswibra) for 3-determined pitchforks, but it works well and robustly for all the problems we considered so far in pde2path, though some fine tuning (via the optional argument aux, containing tolerances and initial guesses for Newton loops) is sometimes needed. In case of continuous symmetries, further preparatory steps must be taken; see [68] for details.

Algorithm 2.3: qswibra, and subsequent seltau/gentau for branch-switching at multiple bifurcation points, then cont. Otherwise same meaning of arguments dir, pt as in Algorithm 2.2.

Hopf Bifurcation and PO Continuation

A Hopf bifurcation generically occurs if a pair \(\mu _{\pm }(s)=\mu _{r}(s)\pm {\mathrm{i}}\mu _{i}(s)\) of eigenvalues for the linearization \(M_{d}\frac{{\mathrm{d}}}{{\mathrm{d}}t}v{=}-G_{u}(u,\lambda )v\) crosses the imaginary axis,

Unfortunately, there is no general method to detect (31) which can be used for large \(n_{u}\). If (30) comes from a dissipative problem, where most eigenvalues of \(G_{u}\) are in the right complex half plane and bounded away from the imaginary axis, then we may try to just compute \(n_{\text{eig}}\) eigenvalues of \(G_{u}\) of smallest modulus, which for moderate \(n_{\text{eig}}\) can be done efficiently (by inverse vector iteration). Extending this idea, we can also use spectral shifts \({\mathrm{i}}\omega _{j}\), \(j=1,\ldots ,n_{\mathrm{eig}}\), near which we expect eigenvalues to cross the imaginary axis and compute and inspect a few eigenvalues near each \({\mathrm{i}}\omega _{j}\). The method, called BDT2 in pde2path, is ad hoc, but with suitable care works quite robustly.
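The idea of inspecting eigenvalues near a spectral shift \({\mathrm{i}}\omega _{j}\) can be illustrated by shifted inverse iteration on a small matrix. The sketch below is not the pde2path BDT2 routine; the matrix, the shift, and the function names are hypothetical, and a dense elimination replaces the sparse solves needed for large \(n_{u}\).

```python
def solve(A, b):
    """Gaussian elimination with partial pivoting (works for complex entries)."""
    n = len(A)
    M = [list(row) + [bi] for row, bi in zip(A, b)]
    for k in range(n):
        piv = max(range(k, n), key=lambda i: abs(M[i][k]))
        M[k], M[piv] = M[piv], M[k]
        for i in range(k + 1, n):
            f = M[i][k] / M[k][k]
            for j in range(k, n + 1):
                M[i][j] -= f * M[k][j]
    x = [0j] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x

def eig_near_shift(A, shift, iters=30):
    """Approximate the eigenvalue of A closest to `shift` by inverse iteration."""
    n = len(A)
    S = [[A[i][j] - (shift if i == j else 0) for j in range(n)] for i in range(n)]
    v = [1.0 + 0j] * n
    for _ in range(iters):
        w = solve(S, v)                                  # w = (A - shift*I)^{-1} v
        nrm = sum(abs(wi) ** 2 for wi in w) ** 0.5
        v = [wi / nrm for wi in w]
    Av = [sum(A[i][j] * v[j] for j in range(n)) for i in range(n)]
    return sum(vi.conjugate() * avi for vi, avi in zip(v, Av))  # Rayleigh quotient, |v| = 1

# hypothetical linearisation with eigenvalues 0.1 +- 2i and 5
A = [[0.1, -2.0, 0.0], [2.0, 0.1, 0.0], [0.0, 0.0, 5.0]]
mu = eig_near_shift(A, 2.0j)
```

Monitoring the real part of such an eigenvalue along the branch then indicates a possible Hopf point when it changes sign. As noted in the text, the approach depends on a sensible guess for the shifts.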

If (via BDT2 and, e.g., bisection) we have localized a (simple) HP, then we may want to compute the bifurcating branch of POs, Hopf branch or PO branch in short. Letting \(\mu _{j}(\lambda )={\mathrm{i}}\omega _{H}+{\mathcal{O}}(\lambda -\lambda _{H})\), the first order predictor for the bifurcating branch is, from general results on Hopf bifurcations,

with step length \({\mathrm{ds}}\), where \(\psi \) is the eigenvector associated to \({\mathrm{i}}\omega _{H}\). The continuation of the PO branch is again a predictor–corrector method. First we rescale time in (18) via \(t=T\tilde{t}\), and drop the tilde, to obtain

with unknown period \(T\), but with initial guess \(T=2\pi /\omega _{H}\) at bifurcation. Since (33) is autonomous (does not explicitly depend on time \(t\)), if \(u_{H}\) is a PO of (33), then so is any time translate \(\widetilde{u}_{H}(t)=u_{H}(t+\delta )\) of \(u_{H}\). Thus, to obtain unique solutions of (33) we use a PC, for which we choose

where \(\left \langle \cdot ,\cdot \right \rangle \) is the scalar product in \({\mathbb{R}}^{n_{u}}\) and \(\dot{u}_{0}(t)\) is from the previous continuation step. For the step length condition we choose

where again \(T_{0}\), \(\lambda _{0}\) are from the previous step, \({\mathrm{ds}}\) is the step–length, \('=\frac{\, {\mathrm{d}}}{\, {\mathrm{d}}s}\) denotes differentiation with respect to arclength, \(\xi _{\text{H}}\) and \(w_{T}\) denote weights for the \(u\) and \(T\) components of the unknown solution, and \(t_{0}=0< t_{1}<\cdots <t_{m}=1\) is the temporal discretization. Thus, the step length is \({\mathrm{ds}}\) in the weighted norm

Setting \(U=(u,T,\lambda )\), in each continuation step we need to solve the extended system

where \({\mathcal{G}}(U)=0\) is the discretization of (33), including the periodicity condition \(u_{m}-u_{1}=0\). Using Newton’s method for (37) we have

In (38), \({\partial }_{u}{\mathcal{G}}\in {\mathbb{R}}^{\mathit{mn}_{u}\times \mathit{mn}_{u}}\) is a large (but sparse) matrix, and \({\mathcal{A}}\) is bordered with border–width 2, and bordered elimination solvers such as lssbel [68, §4.5] may yield order of magnitude speedups.

Floquet Multipliers and PO Bifurcations

The (in)stability of a PO \(u_{H}\), and possible bifurcations, are analyzed via the Floquet multipliers \(\gamma _{j}\), obtained from finding nontrivial solutions \((v,\gamma )\) of the variational boundary value problem (in time)

The map \(v(0)\mapsto v(1)={\mathcal{M}}v(0)\) defines the so–called monodromy matrix \({\mathcal{M}}\in {\mathbb{R}}^{n_{u}\times n_{u}}\), and the multipliers \(\gamma _{j}\) are the eigenvalues of \({\mathcal{M}}\). By translational invariance of (33), there always is the trivial multiplier \(\gamma _{1}=1\), with associated solution \(v=\frac{{\mathrm{d}}}{{\mathrm{d}}t}u_{H}\) of (39). A simple test for the accuracy of the multiplier computation is the numerical error of the trivial multiplier \(\gamma _{1}\), i.e.

We define the index of a PO by

such that unstable orbits are characterized by \({\mathrm{ind}}(u_{H})>0\). Based on the IFT for (37), a necessary condition for the bifurcation from a branch \(\lambda \mapsto u_{H}(\cdot ,\lambda )\) of POs, a PO bifurcation in short, is that at some \((u_{H}(\cdot ,\lambda _{0}),\lambda _{0})\), additional to the trivial multiplier \(\gamma _{1}=1\) there is a second multiplier \(\gamma _{2}\) (or a complex conjugate pair \(\gamma _{2,3}\)) with \(|\gamma _{2}|=1\), which may lead to the following PO bifurcations:

(i) \(\gamma _{2}=1\), yielding a periodic orbit fold (PO fold), or periodic orbit BP (PO BP).

(ii) \(\gamma _{2}=-1\), yielding a period–doubling (PD) bifurcation, i.e., the bifurcation of POs \(\tilde{u}(\cdot ;\lambda )\) with approximately double the period, \(\tilde{u}(\tilde{T};\lambda )=\tilde{u}(0;\lambda )\), \(\tilde{T}(\lambda )\approx 2T(\lambda )\) for \(\lambda \) near \(\lambda _{0}\).

(iii) \(\gamma _{2,3}={\mathrm{e}}^{\pm {\mathrm{i}}\vartheta }\), \(\vartheta \ne 0\), \(\pi \), yielding a torus (or Naimark–Sacker) bifurcation, i.e., the bifurcation of POs \(\tilde{u}(\cdot ,\lambda )\) with two “periods” \(T(\lambda )\) and \(\tilde{T}(\lambda )\); if \(T(\lambda )/\tilde{T}(\lambda )\notin {\mathbb{Q}}\), then \({\mathbb{R}}\ni t\mapsto \tilde{u}(t)\) is dense in certain tori.

Using the same \(t\)–discretization for \(v\) in (39) as for \(u\) in (33), the multipliers \(\gamma _{j}\) can be computed from the Jacobian \({\mathcal{A}}\) in (38), but this must be done with care, as discussed in, e.g., [28, 49], see also [68, §3.4] for more comments, including the pde2path implementation and subsequent PO branch switching.
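The monodromy matrix and the trivial-multiplier test can be illustrated on a planar system with a known PO. The sketch below makes several assumptions that differ from the setting above: it uses the Hopf normal form \(\dot{x}=\lambda x-y-x(x^{2}+y^{2})\), \(\dot{y}=x+\lambda y-y(x^{2}+y^{2})\) written as \(\dot{u}=f(u)\), works in unscaled time on \([0,T]\) with \(T=2\pi \), integrates the variational equation by a classical Runge–Kutta scheme rather than using the Jacobian \({\mathcal{A}}\), and takes the index to be the number of nontrivial multipliers outside the unit circle. For this example the exact multipliers are \(1\) and \({\mathrm{e}}^{-4\pi \lambda }\).

```python
import cmath
import math

LAM = 0.25
T = 2.0 * math.pi  # period of the PO x = sqrt(LAM) cos t, y = sqrt(LAM) sin t

def jac(t):
    """Jacobian of the vector field along the exact periodic orbit."""
    x, y = math.sqrt(LAM) * math.cos(t), math.sqrt(LAM) * math.sin(t)
    r2 = x * x + y * y
    return ((LAM - r2 - 2 * x * x, -1.0 - 2 * x * y),
            (1.0 - 2 * x * y, LAM - r2 - 2 * y * y))

def matmul(A, B):
    return tuple(tuple(sum(A[i][k] * B[k][j] for k in range(2)) for j in range(2))
                 for i in range(2))

def axpy(V, a, K):
    """Return V + a*K for 2x2 matrices."""
    return tuple(tuple(V[i][j] + a * K[i][j] for j in range(2)) for i in range(2))

def monodromy(nsteps=2000):
    """Integrate V' = J(t) V, V(0) = I, over one period with classical RK4."""
    h = T / nsteps
    V = ((1.0, 0.0), (0.0, 1.0))
    for n in range(nsteps):
        t = n * h
        k1 = matmul(jac(t), V)
        k2 = matmul(jac(t + h / 2), axpy(V, h / 2, k1))
        k3 = matmul(jac(t + h / 2), axpy(V, h / 2, k2))
        k4 = matmul(jac(t + h), axpy(V, h, k3))
        V = tuple(tuple(V[i][j] + h / 6 * (k1[i][j] + 2 * k2[i][j] + 2 * k3[i][j] + k4[i][j])
                        for j in range(2)) for i in range(2))
    return V

def multipliers(M):
    """Eigenvalues of a 2x2 matrix, sorted by decreasing modulus."""
    tr = M[0][0] + M[1][1]
    det = M[0][0] * M[1][1] - M[0][1] * M[1][0]
    d = cmath.sqrt(tr * tr - 4 * det)
    return sorted([(tr + d) / 2, (tr - d) / 2], key=abs, reverse=True)

gam = multipliers(monodromy())
err_trivial = abs(gam[0] - 1.0)                    # accuracy test via gamma_1 = 1
ind = sum(1 for g in gam[1:] if abs(g) > 1.0)      # assumed index definition
```

For large \(n_{u}\) and multipliers spread over many orders of magnitude, forming the monodromy matrix explicitly in this way becomes unreliable, which is the reason for the care mentioned above.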

2.4 Further Algorithms and Comments

The algorithms from §2.3 are the basis of arclength continuation of steady states and periodic orbits, including bifurcation detection and localization, and branch switching. Here we comment on two further classes of algorithms, namely extended systems for special point localization and continuation, and PCs, which both play an important role in practical applications of continuation methods.

Extended Systems

An alternative to bisection for FP, BP and HP localization is to set up appropriate extended systems. Assume that a FP has been detected between \((u,\lambda )(s_{a})\) and \((u,\lambda )(s_{b})\), i.e., near \((u_{0},\lambda _{0})=(u,\lambda )(s_{0})\) where for instance we may use just one or two bisection step(s) to approximate \((u,\lambda )(s_{0})\) between \((u,\lambda )(s_{a})\) and \((u,\lambda )(s_{b})\). We then set up the extended system

so that \(\phi \) is in the kernel of \(G_{u}\) with \(\left \langle \phi ,\phi \right \rangle =1\). The bordering lemma [17, Lemma 3.1] yields that the Jacobian

is non-singular at a (generic, i.e., quadratic) FP, and hence we may run a Newton loop on (42), using \((u_{0},\lambda _{0})\) and the eigenvector \(\phi _{0}\) belonging to the eigenvalue \(\mu _{0}\) of smallest modulus at \((u_{0},\lambda _{0})\) as a starting guess, which upon convergence gives us the fold point \((u^{*},\lambda ^{*})\). Similarly, in many problems it is useful to continue FPs in a second parameter to obtain fold curves. Thus freeing a second parameter \(\beta \), say, and writing \(w=(\lambda ,\beta )\) we set up the extended system

where \(p(U,s)\) is the analog of the arclength (20), i.e., now

Now (44) can be used for fold continuation. This means the continuation of a FP \((u^{*},\lambda ^{*})\) as a function of \(\beta \), and as (44) is again in arclength parametrization \((u,\lambda ,\beta )=(u,\lambda ,\beta )(s)\) we can for instance continue around folds of folds. Similarly, there are extended systems for the localization and continuation of BPs and HPs, and associated functions in pde2path, again see [68, Chap. 3], and §3.1 and §3.2 for examples.
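Fold localization via an extended system can be sketched for a scalar toy problem. The code below takes the extended system to be \(H(u,\phi ,\lambda )=(G,\,G_{u}\phi ,\,\left \langle \phi ,\phi \right \rangle -1)=0\), as described for (42), with the hypothetical choice \(G(u,\lambda )=u^{3}-u-\lambda \), whose folds are at \(u=\pm 1/\sqrt{3}\). The function names and the starting guess are illustrative.

```python
import math

def solve(A, b):
    """Gaussian elimination with partial pivoting for a small dense system."""
    n = len(A)
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for k in range(n):
        piv = max(range(k, n), key=lambda i: abs(M[i][k]))
        M[k], M[piv] = M[piv], M[k]
        for i in range(k + 1, n):
            f = M[i][k] / M[k][k]
            for j in range(k, n + 1):
                M[i][j] -= f * M[k][j]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x

def H(u, phi, lam):
    """Extended system (G, G_u*phi, phi^2 - 1) for G = u^3 - u - lam."""
    g_u = 3.0 * u * u - 1.0
    return [u ** 3 - u - lam, g_u * phi, phi * phi - 1.0]

def JH(u, phi, lam):
    """Jacobian of H with respect to (u, phi, lam)."""
    g_u = 3.0 * u * u - 1.0
    return [[g_u, 0.0, -1.0],              # dG:         G_u, 0, G_lam
            [6.0 * u * phi, g_u, 0.0],     # d(G_u phi): G_uu*phi, G_u, 0
            [0.0, 2.0 * phi, 0.0]]         # normalisation of phi

def locate_fold(u, phi, lam, tol=1e-12, maxit=20):
    for _ in range(maxit):
        r = H(u, phi, lam)
        if max(abs(x) for x in r) < tol:
            return u, phi, lam
        du, dphi, dlam = solve(JH(u, phi, lam), r)
        u, phi, lam = u - du, phi - dphi, lam - dlam
    raise RuntimeError("Newton loop for the fold did not converge")

# starting guess near the fold, e.g. after one or two bisection steps
u_f, phi_f, lam_f = locate_fold(0.5, 1.0, -0.3)
```

At the fold the block \(G_{u}\) vanishes, but the Jacobian of \(H\) has determinant \(-12u\phi ^{2}\ne 0\), in line with the nondegeneracy statement above.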

More on Phase Conditions

As noted in Remark 2.7b), in continuation often the PDE \(M{\partial }_{t} u=-G(u,\lambda )\) must be extended by further equations \(Q(u,\lambda )=0\), additional to the arclength condition \(p(u,\lambda ,s)=0\) as in (19) with \(p\) given in (20), modified to \(\psi (u,\lambda ,T,s)=0\) in (35) for the Hopf case. Two examples of additional equations are already given in (42) for FP continuation, and in (34) as a phase condition (PC) to remove the time–shift of POs from the continuation.

Similar PCs are also needed to remove other continuous symmetries, e.g., for spatially translationally invariant problems, which occur for periodic BCs. For instance, over a 1D interval \(\Omega \) with periodic BCs, a constant coefficient PDE \(M{\partial }_{t} u=-G(u)\) is translationally invariant, and if \(u\) with \({\partial }_{x} u\not \equiv 0\) is a solution of \(G(u,\lambda )=0\), so is \(u(\cdot -\xi )\) for any \(\xi \in \Omega \). Hence

and \({\partial }_{u} G\) always has \({\partial }_{x} u\) in its kernel, which is problematic for, e.g., robust convergence of Newton loops, and bifurcation detection. To remove the continuous translational symmetry we add a PC \(Q(u,\lambda )=0\), here of the form

where \(u_{{\mathrm{old}}}\) is either a fixed profile, or the solution from the previous continuation step. Since \(\left \langle {\partial }_{x} u_{{\mathrm{old}}},u_{{\mathrm{old}}}\right \rangle =\frac{1}{2} \int _{\Omega }{\partial }_{x} (u_{{\mathrm{old}}}^{2})\, { \mathrm{d}}x =0\) by periodicity, we can simplify \(Q(u,\lambda )=\left \langle {\partial }_{x} u_{{\mathrm{old}}},u \right \rangle _{L^{2}}\), but this makes no difference for the implementation. Moreover, \({\partial }_{u} Q(u,\lambda ) v=\left \langle {\partial }_{x} u_{{ \mathrm{old}}},v \right \rangle _{L^{2}}\) has a simple form and can be easily provided. The additional equation (47) requires freeing an additional parameter \(\eta \), which may be seen as a Lagrange multiplier for the constraint \(Q(u,\lambda )=0\), and we add the generator of the underlying symmetry group to \(G\), i.e., here,

Similar PCs for instance occur as mass–conservation in the Cahn–Hilliard problem [68, §6.9], or as rotational invariance for problems over disks [68, §6.8], and §3.2. Moreover, PCs are often also useful for problems which are only approximately invariant. For instance, problems with Dirichlet BCs or Neumann BCs are not invariant with respect to spatial translations, but strongly localized solutions (peaks) which do not “see” the boundaries may be almost translationally invariant, which generates almost zero eigenvalues, which should be removed via PCs. See §3.2.
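The effect of the translational PC can be seen in a small discrete experiment. The sketch below assumes the form \(Q(u)=\left \langle {\partial }_{x} u_{{\mathrm{old}}},u-u_{{\mathrm{old}}}\right \rangle \) on a periodic grid over \([0,2\pi )\), with central differences and a Riemann sum for the \(L^{2}\) product; the profile \(u_{{\mathrm{old}}}=\cos x\) and all names are hypothetical.

```python
import math

N = 200
DX = 2.0 * math.pi / N
X = [i * DX for i in range(N)]

def ddx(u):
    """Central difference on a periodic grid (u[-1] wraps around for i = 0)."""
    n = len(u)
    return [(u[(i + 1) % n] - u[i - 1]) / (2.0 * DX) for i in range(n)]

def inner(f, g):
    """Discrete L2 inner product on the periodic grid."""
    return DX * sum(a * b for a, b in zip(f, g))

def phase_condition(u, u_old):
    """Q(u) = <d/dx u_old, u - u_old>; vanishes for the 'unshifted' translate."""
    return inner(ddx(u_old), [a - b for a, b in zip(u, u_old)])

u_old = [math.cos(x) for x in X]

def shifted(delta):
    """Translate of the reference profile by delta."""
    return [math.cos(x - delta) for x in X]

q_zero = phase_condition(shifted(0.0), u_old)
q_plus = phase_condition(shifted(0.1), u_old)
q_minus = phase_condition(shifted(-0.1), u_old)
```

Among the family of translates, \(Q\) changes sign at zero shift, so the constraint \(Q=0\) selects one representative of the group orbit. For this profile, \(Q\) is close to \(-\pi \sin \delta \) for a shift \(\delta \).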

3 Applications

The MATLAB package pde2path is designed to apply the algorithms from §2, and several more, in a user friendly way to the large class of PDE systems of type (2). Many worked out examples are given in [67, 68, 76] and in the tutorials at [73], and are included as demos in the software download. See also [16] for a quickstart guide (download and installation instructions), reference card, and an overview of the pde2path demos.Footnote 5 Here we apply pde2path to the example problems (5), (6), and (7) and further dead core problems, with the respective demos again available at [73]. These demos follow some standard setup, and here we only give minimal comments on implementations; for details including commented code see the accompanying supplementary information [72].

3.1 Turing and Hopf Bifurcations in a 2–Component System

We start with the system (5), i.e.,

Throughout we fix \((\tau ,d)=(8,0.05)\), and initially also \(\alpha =0.02\), and take \(j_{0}\) as the primary continuation/bifurcation parameter. For all \((j_{0},\alpha )\), (49) has the unique spatially homogeneous steady state

Denoting the terms without derivatives in (49) by \(f\), the linearization of (49) at \(u^{*}\) has the form

with constant coefficient differential operator \(L({\partial }_{x})\), where \(D=\) diag \((1,d)\). Over \({\mathbb{R}}\), (51) has solutions of the form

where \(k\in {\mathbb{R}}\) is called wave number, \({\mathrm{e}}^{{\mathrm{i}}\mathit{kx}}\phi (k)\) is called a (Fourier–)mode, and \((\mu (k),\phi (k))\in {\mathbb{C}}\times {\mathbb{C}}^{2}\) is an eigenpair of \(\hat{L}({\mathrm{i}}k):=-k^{2} D+{\partial }_{u} f(u^{*})\in {\mathbb{R}}^{2 \times 2}\). The function(s)

is called the dispersion relation, where for each \(k\) we sort the eigenvalues such that \({\mathrm{Re}}\mu _{2}(k)\le {\mathrm{Re}}\mu _{1}(k)\) (here we use the more standard sign convention that \({\mathrm{Re}}\mu >0\) means instability). Over \(\Omega =(-l_{x},l_{x})\) we additionally have to fulfill the Neumann BCs, which restricts \(k\) to the (dual) lattice \({\mathcal{L}}=\pi {\mathbb{Z}}/(2l_{x})\). The solution \(u^{*}\) is stable if \({\mathrm{Re}}\mu _{1}(k)<0\) for all \(k\in {\mathcal{L}}\), unstable if there exists a \(k\) such that \({\mathrm{Re}}\mu _{1}(k)>0\), and instabilities and bifurcations may occur if there is a \(k=k_{c}\) such that \({\mathrm{Re}}\mu _{1}(k_{c})=0\). The instability is called a long wave instability if \(k_{c}=0\) and \({\mathrm{Im}}\mu _{1}(k_{c})={\mathrm{Im}}\mu _{2}(k_{c})=0\); it is a Hopf instability if \(k_{c}=0\) and \({\mathrm{Im}}\mu _{1}(k_{c})={\mathrm{Im}}\mu _{2}(k_{c})\ne 0\), while for \(k_{c}\ne 0\) (and consequently \({\mathrm{Im}}\mu _{1}(k_{c})=0\)) it is called a Turing instability.Footnote 6
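The classification above can be turned into a small numerical check. The following sketch evaluates \(\mu _{1}(k)\) for a two–component system on the lattice \({\mathcal{L}}=\pi {\mathbb{Z}}/(2l_{x})\) and reports the type of the most unstable mode. The Jacobians \(J\) standing in for \({\partial }_{u} f(u^{*})\), the values of \(d\) and \(l_{x}\), and the function names are hypothetical and are not taken from (49).

```python
import cmath
import math

def mu1(k, J, d):
    """Eigenvalue with largest real part of -k^2 * diag(1, d) + J."""
    a11, a12 = J[0][0] - k * k, J[0][1]
    a21, a22 = J[1][0], J[1][1] - d * k * k
    tr, det = a11 + a22, a11 * a22 - a12 * a21
    root = cmath.sqrt(tr * tr - 4.0 * det)
    return max((tr + root) / 2.0, (tr - root) / 2.0, key=lambda z: z.real)

def classify(J, d, lx, jmax=200):
    """Type of the most unstable lattice mode (Re mu > 0 means instability)."""
    lattice = [math.pi * j / (2.0 * lx) for j in range(jmax + 1)]
    kc = max(lattice, key=lambda k: mu1(k, J, d).real)
    mu = mu1(kc, J, d)
    if mu.real <= 0.0:
        return "stable", kc
    if kc == 0.0:
        return ("Hopf" if mu.imag != 0.0 else "long wave"), kc
    return "Turing", kc

J_turing = [[1.0, -1.0], [2.0, -1.5]]   # hypothetical activator-inhibitor Jacobian
J_hopf = [[0.5, -1.0], [2.0, -0.2]]     # hypothetical Jacobian with unstable complex pair

case_a = classify(J_turing, 10.0, 10.0)  # unequal diffusion
case_b = classify(J_turing, 1.0, 10.0)   # equal diffusion
case_c = classify(J_hopf, 1.0, 10.0)
```

This labels the dominant mode away from criticality, which is a simplification of the definition above, where the type is fixed by the first mode with \({\mathrm{Re}}\mu _{1}(k_{c})=0\).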

Figure 4(a) shows the Turing and Hopf lines in the \(j_{0}\)–\(\alpha \) plane, where the respective instabilities first occur. These lines can be computed analytically, see [53] (for \(\Omega ={\mathbb{R}}\)), but here we compute them numerically by BP and HP continuation (see §2.4) on \(\Omega =(-l_{x},l_{x})\), \(l_{x}=8\pi /k_{c}\), with \(k_{c}= (\alpha \tau /D)^{1/4}\).Footnote 7 In Fig. 4(b–d) we show the dispersion relations \(k\mapsto \mu _{1}(k)\) at different values of \((j_{0},\alpha )\), where the ∗ illustrate the allowed \(k\) over \(\Omega \). For given \(j_{0}\), the steady state \(u^{*}\) is stable for \(\alpha \) above the maximum of the red and blue lines. As we decrease \(\alpha \) (or decrease \(j_{0}\) from 3.5 for fixed \(\alpha =0.05\), say), we either first cross the blue line, meaning that \(u^{*}\) loses stability to a Turing mode, or the red line, meaning that \(u^{*}\) loses stability to a Hopf mode. Moreover, after crossing into the ‘instability region’, there are typically further (Hopf or Turing) instabilities in quick succession. There are two codimension–2–points, at \((j_{0},\alpha )^{*}\approx (2.85,0.036)\) and \((j_{0},\alpha )^{\star }\approx (1.25,0.035)\). In any neighborhood of these, the first instability can be either to a Hopf or to a Turing mode.

Fig. 4 Turing and Hopf bifurcation lines in the \(j_{0}\)–\(\alpha \) plane as obtained from BP and HP continuation (a), and dispersion relations in the stable case (b), Turing unstable case (c), and Hopf unstable case (d), \((j_{0},\alpha )\) as indicated. The ∗ in (b–d) show the allowed wave numbers on the interval \(\Omega =(-l_{x},l_{x})\) with \(l_{x}=4\pi /k_{c}\) and Neumann BCs (Color figure online)

Some First Results

To plot BDs for (49), we use the “norm” \(\|u\|_{2}\) defined as

for steady states (a), and POs (b), respectively, such that \(\|u\|_{2}=0\) means \(u\equiv u^{*}\). This gives better graphical separation of branches in the BDs.

In Fig. 5(a) we show steady state (Turing) branches (T1, blue, to T4, green) bifurcating from the trivial branch \(u\equiv u^{*}\), a secondary steady branch (T1-1 orange), and two secondary Hopf bifurcations (T1-1-h10, red, from the 10th HP on the T1-1 branch, and similarly T1-1-h18 from the 18th HP).Footnote 8 The blue branch T1 bifurcates subcritically, and becomes stable in the fold near pt30. The further Turing branches (with sideband wave numbers) behave similarly, and branch T4 goes furthest to the right. Moreover, on all the Turing branches there are secondary bifurcations to localized (steady) patterns, and also some Hopf bifurcations to patterns modulated in time. Here we first follow the 1st secondary branch (T1-1, orange) which contains (steady) fronts between \(u=u^{*}\) and Turing patterns, snaking up by adding one oscillation for every two folds, and with stable segments in direction SE to NW. By even extension of solutions over the left boundary (based on the Neumann BCs), we can also regard such fronts as localized patterns.

Fig. 5 Results for (5) from the demo seco/cmds1 [72], \((\alpha ,\tau ,D)=(0.02,0.05,8)\) fixed, using \(j_{0}\) as continuation parameter, starting with \(j_{0}=3.3\) on the trivial branch. Domain \(\Omega =(-l_{x},l_{x})\) with \(l_{x}=8\pi /k_{c}\), with critical wave number \(k_{c}\). (a) partial BD: first 4 Turing branches (blue and green), snake of T1-front (T1-1, orange), two Hopf branches (T1-1-h10, brown, and T1-1-h18, red) bifurcating from the snake. (b) sample solutions from (a). (c) zoom into a snake of localized Turing patterns (again orange), with one bifurcating Hopf branch (Color figure online)

First indicated in [57], such snaking branches of localized patterns (“homoclinic snaking”, in 1D, 2D and 3D) have seen much interest in recent years, see [2, 4, 7, 10, 39, 40, 74, 75], and [68, Ch. 8 and 9] for various examples illustrated with pde2path. In Fig. 5(a), the stable segments of the snake are bounded by folds on the right and HPs on the left, and the red branches are the PO branches bifurcating at the HPs 10 and 18. The solutions on these branches essentially consist of the (steady) Turing pattern on the left, and (small amplitude) oscillations in time around \(u^{*}\) on the right. These PO branches connect pairs of HPs on the orange branch, and all only contain unstable solutions, see panel (b2) for two samples.Footnote 9 The orange snake connects to the 4th Turing branch T4 near its fold, and thus connects patterns of different wave lengths. Panel (c) gives a partial illustration of the next snake bifurcating from the T1 branch, and one example Hopf branch bifurcating from this second snake, which all again only contain unstable solutions. Finally, there are many further BPs on all the Turing branches, giving a hint of the very rich bifurcation structure of steady states for (49), near the codimension–2 point.

Large Amplitude Mixed Modes

In Fig. 6 we (re)start with the primary Hopf bifurcation of a spatially homogeneous PO (blue branch) from the trivial branch (for graphical reasons plotted after the 15th point). The branch bifurcates subcritically, and becomes stable at large amplitude after the fold, see, e.g., pt22. The Floquet spectrum at H1/pt16 in (b) illustrates that at lower amplitude there are many unstable directions, and consequently many PO bifurcations before the branch becomes stable. Finally, we consider a mixed mode branch that is not obtained by bifurcation from an already known branch, but by splicing together the Turing pattern T1/pt35 on part of the domain, with the PO from H1/pt25 on the complement. This is just an initial guess for subsequent Newton loops to get on a solution branch, and depending on the choice of, e.g., the “splitpoint” (by trial and error), there may be very large initial residuals and the Newton loop may fail. However, here we get to pt1 on the red branch, called lh1a, which happens to be stable.Footnote 10 Continuing in one direction, the oscillating parts of the solutions shrink, e.g., lh1a/pt40, while in the other direction they grow in a snaking fashion, with stable segments. However, ultimately the branch becomes and stays unstable in both directions, and goes back and forth in \(j_{0}\), with solutions losing more and more structure, and we were not able to determine where it bifurcates from/connects to. This is somewhat unsatisfying, and unfortunately not altogether untypical. Essentially, it means that we have to accept that there are “too many branches” to get a complete picture, but on the other hand Figs. 5 and 6 show that continuation and bifurcation are useful tools to understand big parts of the organization of sets of solutions.

Fig. 6 Selected outputs from seco/cmds2.m. (a) BD of primary Hopf branch H1 (blue) and mixed modes lh1a and lh1b (red and magenta). (b) Samples of spatially homogeneous periodic orbits. (c,d) Samples of solutions from mixed mode branch; solutions lh1a/1, lh1b/20, lh1b/110 stable, remaining samples unstable (Color figure online)

Comments

Where does the multitude of solution branches (of which we only give a small sample in Fig. 5 and Fig. 6) come from? And what do we learn from the continuation/bifurcation approach compared to just finding (stable) solutions (steady or time-periodic) from DNS as in [53]?

Regarding the first question, the dispersion relation(s) \({\mathrm{Re}}\mu (k)\) of eigenvalues as a function of wave number \(k\) are rather flat. As a consequence, over non–small domains \(\Omega \) there are many eigenvalues (Turing and/or Hopf) crossing the imaginary axis near criticality in short succession, and there can be sub–harmonic (half wave number or frequency) Turing and/or Hopf modes. This explains the multiplicity of patterns close to bifurcation, which for a similar situation are also analyzed via amplitude equations in [15]; [53] and [15] then use DNS to find a variety of (stable) patterns further away from the primary bifurcation, including some not discussed here, e.g., spiking of subharmonic Turing-Hopf modes. See also [84] for DNS in related systems and a discussion of the role of subcritical bifurcations in localized oscillating patterns. Here we study patterns far from the primary bifurcation via continuation, which for instance allows the computation of the localized steady patterns in the snakes in Fig. 5 in a very efficient way, and which gives further information about how different patterns are connected. Thus, both approaches complement each other. Finally, we may expect a still much richer solution space for (49) in 2D, where already the steady states allow a much richer variety of spots vs stripes (see also §3.2).

3.2 Pattern Formation on Disks

Our second example deals with somewhat non–standard patterns due to geometric constraints, and with (spatial) PCs. The SH equation (6), i.e., \({\partial }_{t} u=-(1+\Delta )^{2} u+\varepsilon u+\nu u^{3}-u^{5}\), is a prototypical model for pattern formation. See [63] and, e.g., [12, 13, 56, 61] for reviews. We assume Neumann BCs \({\partial }_{n} u={\partial }_{n}\Delta u=0\) for \(u\) and \(\Delta u\), and rewrite the 4th order equation (6) as a parabolic–elliptic system,

with a singular dynamical mass matrix \(M_{d}= \left ( \begin{smallmatrix} 1&0 \\ 0&0\end{smallmatrix} \right )\), \(f(u_{1})=\nu u_{1}^{3}-u_{1}^{5}\), and Neumann BCs for \(u_{1}\) and \(u_{2}\). See, e.g., [68, Remark 8.1] for the equivalence of (6) and (55) over convex Lipschitz domains, or general domains with a smooth boundary. We first fix \(\nu >0\), and use \(\varepsilon \in {\mathbb{R}}\) as the primary bifurcation parameter. Over \({\mathbb{R}}^{d}\), the trivial branch \(u\equiv 0\) loses stability at \(\varepsilon =0\) to modes \({\mathrm{e}}^{{\mathrm{i}}{\vec{k}}\cdot {\vec{x}}}\) with wave number \(k=\|{\vec{k}}\|\approx 1\) and growth rate \(\mu (k)=-(1-k^{2})^{2}+\varepsilon \). Essentially the same holds over bounded boxes with Neumann BCs or periodic BCs, with \({\vec{k}}\) from the pertinent lattice, depending on the box size. For both, the cubic-quintic case (6) and the quadratic-cubic case with \(f(u)=\nu u^{2}-u^{3}\) with \(\nu >0\), there are subcritical bifurcations of branches of patterned states, and subsequent secondary bifurcations to snaking branches of localized patterns, and loosely speaking, one difference between the quadratic-cubic and the cubic-quintic case is that the former favors hexagons, while the latter favors stripes. See, e.g., [68, Chap. 8], and the references therein.
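The band of unstable wave numbers follows directly from the growth rate \(\mu (k)=-(1-k^{2})^{2}+\varepsilon \) quoted above; a short worked computation for \(0<\varepsilon <1\) over \({\mathbb{R}}^{d}\) reads:

```latex
\[
\mu(k) > 0
\;\Longleftrightarrow\; (1-k^{2})^{2} < \varepsilon
\;\Longleftrightarrow\; 1-\sqrt{\varepsilon} < k^{2} < 1+\sqrt{\varepsilon},
\]
\[
\max_{k}\mu(k) = \mu(1) = \varepsilon, \qquad
\sqrt{1+\sqrt{\varepsilon}} - \sqrt{1-\sqrt{\varepsilon}} = \sqrt{\varepsilon} + \mathcal{O}(\varepsilon^{3/2}).
\]
```

Hence the unstable band around \(k=1\) has width of order \(\sqrt{\varepsilon }\), and on a bounded box the number of unstable modes is the number of lattice vectors \({\vec{k}}\) with \(\|{\vec{k}}\|\) in this band.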

Here we report a few results for (55) on a disk, referring to [81] for further details. The SH equation (6), and hence the system (55), is variational with respect to the energy

Using integration by parts and the boundary conditions we obtain
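
The computation can be sketched as follows, assuming the standard SH energy density (its normalization is an assumption here) and using that the boundary terms from integrating by parts vanish due to \({\partial }_{n} u={\partial }_{n}\Delta u=0\):

```latex
\begin{align*}
\mathcal{F}[u] &= \int_{\Omega} \tfrac{1}{2}\bigl((1+\Delta)u\bigr)^{2}
   - \tfrac{\varepsilon}{2}\,u^{2} - \tfrac{\nu}{4}\,u^{4} + \tfrac{1}{6}\,u^{6}\,\mathrm{d}x, \\
\frac{\mathrm{d}}{\mathrm{d}t}\mathcal{F}[u]
  &= \int_{\Omega} \bigl((1+\Delta)^{2}u - \varepsilon u - \nu u^{3} + u^{5}\bigr)\,\partial_{t}u\,\mathrm{d}x
   = -\int_{\Omega} (\partial_{t}u)^{2}\,\mathrm{d}x \le 0 .
\end{align*}
```

The last equality uses (6), i.e., the bracket equals \(-{\partial }_{t} u\).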

Hence \(\mathcal{F}\) decreases along orbits of (6), and since \(\mathcal{F}[u]\) is bounded from below and since for fixed \((\varepsilon ,\nu )\) we have a discrete set of steady states, every solution converges to a steady state, and in particular there are no Hopf bifurcations and no POs. Introducing polar coordinates \((x,y)=\rho (\cos \phi ,\sin \phi )\), the primary bifurcation directions (from the trivial branch) are given by Fourier–Bessel modes

where \(m\in {\mathbb{N}}_{0}\) is the azimuthal wave number, \(J_{m}\) is the \(m\)th Bessel function of the first kind, \(\alpha =0\) or \(\alpha =\pi /2\), and the constants \(a_{m}\), \(b_{m}\) (with \(a_{m}^{2}+b_{m}^{2}=1\)) and \(k_{\pm }\) are from a discrete set determined by the boundary conditions. Even for rather small disk radius \(R\), there is a rather large number of instabilities for small \(\varepsilon \), see Fig. 7(a), and the order in which they appear depends sensitively on the radius \(R\), see [81].
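
The sensitivity to \(R\) can be explored with a small script. The sketch below rests on an assumption that is not spelled out above: with Neumann BCs for \(u\) and \(\Delta u\), the linearization of (6) at \(u=0\) is taken to be diagonalized by the Neumann eigenfunctions \(J_{m}(k\rho )\cos (m\phi +\alpha )\) of the Laplacian, so that bifurcation points are \(\varepsilon =(1-k^{2})^{2}\) with \(J_{m}'(kR)=0\). It works on the full disk and ignores the additional restriction from the half disk. The helper names (`bessel_j`, `dprime_zeros`, `bifurcation_points`) are hypothetical, and the Bessel functions are evaluated from their integral representation to keep the script free of dependencies.

```python
import math

def bessel_j(m, x, n=128):
    """J_m(x) for integer m via (1/pi) * int_0^pi cos(m*t - x*sin(t)) dt (trapezoid rule)."""
    h = math.pi / n
    total = 0.5 * (1.0 + math.cos(m * math.pi))  # endpoint values at t=0 and t=pi
    for j in range(1, n):
        t = j * h
        total += math.cos(m * t - x * math.sin(t))
    return total * h / math.pi

def bessel_j_prime(m, x):
    """Derivative J_m'(x) = (J_{m-1}(x) - J_{m+1}(x)) / 2."""
    return 0.5 * (bessel_j(m - 1, x) - bessel_j(m + 1, x))

def dprime_zeros(m, x_max, step=0.1):
    """Positive zeros of J_m' up to about x_max, found by sign scan plus bisection.

    The scan starts at max(0.5, m): for m >= 1 the first positive zero of J_m'
    lies above m, and for m = 0 this skips the trivial zero at x = 0.
    """
    start = max(0.5, float(m))
    zeros = []
    a, fa = start, bessel_j_prime(m, start)
    while a < x_max:
        b = a + step
        fb = bessel_j_prime(m, b)
        if fa * fb < 0.0:
            lo, hi, flo = a, b, fa
            for _ in range(50):  # bisection to roughly machine precision
                mid = 0.5 * (lo + hi)
                fmid = bessel_j_prime(m, mid)
                if flo * fmid <= 0.0:
                    hi = mid
                else:
                    lo, flo = mid, fmid
            zeros.append(0.5 * (lo + hi))
        a, fa = b, fb
    return zeros

def bifurcation_points(R, m_max, eps_max):
    """Sorted list of (eps, m, k) with eps = (1 - k^2)^2 <= eps_max and J_m'(k R) = 0."""
    points = []
    for m in range(m_max + 1):
        for z in dprime_zeros(m, 1.3 * R):
            k = z / R
            eps = (1.0 - k * k) ** 2
            if eps <= eps_max:
                points.append((eps, m, k))
    return sorted(points)

# Assumed illustration values: radius R = 14, azimuthal wave numbers up to 14.
points = bifurcation_points(14.0, 14, 0.02)
for eps, m, k in points:
    print(f"eps = {eps:.3e}   m = {m:2d}   k = {k:.4f}")
```

Under the stated assumption, \(R=14\) yields an axisymmetric (\(m=0\)) point at \(\varepsilon \approx 8.9\times 10^{-3}\), close to the value quoted for \(\phi _{5}\). Rerunning with a slightly different `R` shows how the ordering of the modes can change.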

Fig. 7 (a) The first six bifurcation directions from \(u=0\) on the half-disk with \(R=14\) and \(\varepsilon \) values \((0.003,0.279,0.292,0.732,8.8,13.2)\times 10^{-3}\). (b) Bifurcation diagram of the 5th branch (axisymmetric, black) and the 6th branch (\(D_{4}^{-}\) mode), and sample solutions as indicated by the numbers; \(\nu =2\) (Color figure online)

In a first classification, the modes can be divided into axisymmetric ones \(m=0\) and nonaxisymmetric ones \(m\ne 0\), and additionally into localized ones (large near the center of the disk) and wall modes (large near the boundary). Following the remarks in §2.4, for the localized modes (axisymmetric or not) it turns out to be useful to switch on translational PCs, while for the non-axisymmetric modes on the full disk we need rotational PCs due to the rotational invariance of the Laplacian and of the disk.

In the following we shall briefly report, for \(R=14\), on the branches corresponding to the first axisymmetric mode \(\phi _{5}\) and the \(D_{4}^{-}\) mode \(\phi _{6}\) (Fig. 7(b)), on some secondary bifurcations from the radial branch (Fig. 8), and on “daisy” branches corresponding to the wall mode \(\phi _{3}\), and their secondary bifurcations to localized daisies. This focus on the 5th, 6th and 3rd bifurcating branches may appear arbitrary, but these are prototypical branches, and, as already said, the sequence of the bifurcations from \(u\equiv 0\) strongly depends on the radius \(R\). Again, the goal is to obtain a basic understanding of the organization of the set of steady states.

Fig. 8 Branches of multiarm solutions for \(R=14\), \(\nu =2\). (a)–(c) \(m=2,3,4\) branches bifurcating from an axisymmetric spot at low \(\|u\|_{2}\). (d) Zoom of the bifurcation diagram with the \(m=12\) crown branch (magenta) and the branches of 1-arm (orange), 2-arm (blue), 3-arm (red), and 4-arm (green) states that bifurcate from it; the \(m=3,4\)-arm states connect to the corresponding branches in panels (b) and (c). The four critical eigenfunctions on the \(m=12\) crown branch are shown alongside, together with the solution profile at location 5 on this branch (Color figure online)

Remark 3.1

a) Due to the already large number of close–together patterned solutions on rather small disks, potential branch–jumping during continuation becomes a problem, in particular in the neighborhood of bifurcation points. By this we mean the uncontrolled and undetected switching of states from one solution branch to another, typically coming with a loss of symmetry. Obvious ways to mitigate this are choosing a smaller domain (see (b)), and carefully choosing a radially symmetric mesh, see also [68, §4.2]. Additionally, it turns out that choosing a piecewise quadratic (Lagrangian \(P^{2}\)) FEM, see [70], instead of the default piecewise linear \(P^{1}\) FEM helps to mitigate branch jumping.

b) Following [81] and the tutorial at [80] we present our results only on the half disk, where also at \(x_{1}=0\) we impose Neumann BCs for \((u_{1},u_{2})\). The solutions can then be mirrored to the full disk. This simplifies the numerics, as we need fewer degrees of freedom for the discretization, and as for localized solutions (see below) we do not need a PC in \(x_{1}\), and, equally importantly, keeping symmetry of solution branches is easier on the smaller domain. However, the half disk also reduces the number of allowed modes to \(\alpha =0\) in (58). Therefore, when below discussing stability of nontrivial solutions, we always first extend them to the full disk and then compute the spectrum. ⌋

Some Results

In Fig. 7(b) we present a bifurcation diagram of the branches from \(\phi _{5}\) and \(\phi _{6}\), for \(\nu =2\) (see Footnote 11). The black branch bifurcates at \(\varepsilon =\varepsilon _{5}\approx 0.88\times 10^{-2}\) in direction \(\phi _{5}\), and contains axisymmetric states which start as a spot at small norm; the branch then snakes in \(\varepsilon \), containing short stable segments, before turning into a domain filling stable target state in the last fold. However, this behavior depends in a very sensitive and interesting way on the disk radius. For instance, for \(R=15\) the primary spot branch does not connect to a target state, but instead reconnects to \(u=0\) at slightly larger \(\varepsilon \), as discussed in detail in [81]. The red branch in Fig. 7(b) is an example of states with \(D_{m}^{-}\) symmetry, which means that the states are invariant under rotation by \(2\pi /m\) together with a sign change \(u\mapsto -u\). The branch starts with localized \(D_{4}^{-}\) states (hence again needs a \(y\)–PC); along the branch, the arms first extend towards the wall (state 90), and then broaden, and the SW → NE segments of this branch contain stable segments.

Figure 8 shows some secondary bifurcations from the radial branch, starting with 2–arms, 3–arms and 4–arms branches (with \(D_{m}\) symmetry, i.e., invariance under rotation by \(2\pi /m\)) bifurcating at small norm (a–c). Again the arms grow towards the wall, and the 3-arms and 4–arms branches broaden as they reach the wall, and seem to reconnect to the axisymmetric black branch near its upper left fold. The 2-arms branch in (a) behaves differently and rather loses spots after point 4. In (d) we look at the reconnection more closely. It turns out that the 3-arms and 4-arms branches do not connect directly to the target, but to a “crown” state which bifurcates from the radial branch near its last fold, and has \(D_{12}\) angular structure at the wall; see sample 5 in Fig. 8(d). Conversely, there are 1–arm and 2–arm states bifurcating from the crown branch; see the critical eigenvectors at BPs 1 and 2 in (d), and the further discussion of these bifurcating branches in [81].

In Fig. 9(a) we set \(\nu =1.4\) and consider the blue branch bifurcating at \(\varepsilon =\varepsilon _{3}\approx 0.292\times 10^{-3}\) in direction of the wall mode \(\phi _{3}\). Following [46] we call these solutions daisy states. At the first BP on the daisy branch, a branch (green) of localized daisies bifurcates, snakes up by adding a petal at every other fold, and reconnects to the blue daisy branch near its top left fold, where the daisies become stable. This is very similar to the snaking of the T1-1 branch in Fig. 5, or to the snaking of localized periodic patterns in the 1D SH equation [8]; like there, here we also have an intertwined 2nd branch of odd localized daisies, and the two are connected by rungs, cf. [81, Fig. 12].

However, the quasi 1D snaking on the \(R=14\) disk only holds at moderate subcriticality, i.e., moderate \(\nu \). For instance, for \(\nu =2\) there also bifurcates a localized 1–petal daisy branch from the daisy branch at low norm, and a 1–hole “plucked” daisy branch near the fold, but the two do not connect. To understand this breakup, in [81] we use fold continuation in \(\nu \) of the folds in Fig. 9(a). It turns out that these folds do not continue to \(\nu =2\), but the fold continuation folds back at some \(\nu <2\). In Fig. 9(b) we continue FP12 from (a) to \(\nu =1.5\), and then switch back to continuation in \(\varepsilon \). In the “up” direction, the branch (in green) behaves as in (a), but in the “down” direction the branch (in red) does not lose further petals to connect to the daisy branch at low norm. Instead, the petals start to grow towards the center of the domain. Such states of patches of stripes are also known for the SH equation in the plane (without boundary) and are called worms. Thus, in [81] we term states like 100 “boundary worms”.

Here we end the review of [81], which discusses the above results and a number of further patterns in more detail. One crucial point is that continuation and bifurcation software helps to uncover the very rich solution structure in a more systematic way than just DNS. All of the above branches (and many more in [81]) start out unstable, but later contain stable segments, and (secondary, tertiary, …) bifurcations to further branches with stable parts.

3.3 Experiments on Dead Core Pattern Formation

Our last (class of) example(s) is “experimental”, in the sense that the standard analytical bifurcation theory does not apply because the problems are not smooth, and not even piecewise Lipschitz. This is also reflected in the need to modify some of the pde2path algorithms.

A classical example of a dead core (DC) problem is given by the elliptic equation

where \(\Omega \subset {\mathbb{R}}^{d}\) is a bounded domain, \(\lambda >0\) a parameter, and \(f:{\mathbb{R}}\to {\mathbb{R}}\) a function such that

The associated parabolic problem is

Setting \(v=u^{\gamma }\), (61) with \(f(u)=u_{+}^{\gamma }\), \(u_{+}=\max (u,0)\), is equivalent to the degenerate diffusion problem

where \(f(v^{\delta })=v\), which however does not seem simpler to analyze than (61).

For large \(\lambda \), (59) has DC solutions which feature a subdomain \(\Omega _{0}\subset \Omega \) where \(u\equiv 0\). The motivation comes from reaction–diffusion problems where the reaction is so fast near \(u=0\) that the diffusion cannot supply further reactants and a DC without reactants develops. For (59) in 1D with \(f(u)=u_{+}^{\gamma }\) and wlog \(\Omega =(0,1)\), DC solutions can be computed explicitly. For instance, for \(\gamma =1/2\) the ansatz

yields \(\beta =4\), \(\alpha =\lambda ^{2}/144\) and \(\delta =1/2-\sqrt{12/\lambda }\), i.e., a DC for \(\lambda >48\), which turns out to be the unique non–negative solution for \(\lambda >48\), while for \(\lambda <48\) the unique solution is positive. The DC grows as \(\lambda \to \infty \), and the solution is a classical one and in fact in \(C^{3}([0,1])\), while the positive solutions for \(\lambda <48\) are \(C^{\infty }\). Similar computations can also be done for balls in \(d=2,3\) (2D, 3D cases), and are useful for numerical checks.
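
As an illustration of such a numerical check, the sketch below evaluates the 1D dead core solution for \(\gamma =1/2\) and tests it by finite differences. Two things are inferred from the constants stated above rather than quoted: that (59) on \((0,1)\) reads \(u''=\lambda u_{+}^{1/2}\) with \(u(0)=u(1)=1\), and that the ansatz is \(u(x)=\alpha (|x-1/2|-\delta )_{+}^{\beta }\). The function names are hypothetical.

```python
import math

def dead_core_solution(lam):
    """Explicit DC solution for gamma = 1/2 (assumed form: u'' = lam*sqrt(u_+), u(0)=u(1)=1).

    Returns the function u and the half-width delta of the dead core.
    """
    if lam <= 48.0:
        raise ValueError("the explicit dead core formula requires lam > 48")
    alpha = lam ** 2 / 144.0
    delta = 0.5 - math.sqrt(12.0 / lam)

    def u(x):
        # beta = 4; u vanishes identically for |x - 1/2| <= delta
        return alpha * max(abs(x - 0.5) - delta, 0.0) ** 4

    return u, delta

def max_residual(u, lam, n=200, h=1e-3):
    """Largest |u'' - lam*sqrt(u_+)| on interior sample points, with u'' from central differences."""
    worst = 0.0
    for i in range(1, n):
        x = i / n  # x +- h stays inside [0, 1]
        u_xx = (u(x + h) - 2.0 * u(x) + u(x - h)) / h ** 2
        worst = max(worst, abs(u_xx - lam * math.sqrt(max(u(x), 0.0))))
    return worst

lam = 192.0
u, delta = dead_core_solution(lam)
print("dead core half-width:", delta)
print("boundary values:", u(0.0), u(1.0))
print("max residual:", max_residual(u, lam))
```

For \(\lambda =192\) the formulas give \(\alpha =256\) and \(\delta =1/4\), i.e., a dead core on \((1/4,3/4)\); the finite difference residual is small compared with \(\lambda \), which is the kind of consistency check one would also apply to a discretized solution.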

Problems of this kind, in \(d\) space dimensions and under various BCs and general assumptions, have been studied analytically in detail, including also the case of degenerate diffusion and quasilinear cases. We refer to [29] and the references therein for various older results on (59), which can be summarized as follows: DC solutions exist for many problem classes, and are generally rather smooth, i.e., at least classical solutions; the dead cores \(\Omega _{0}=\{x\in \Omega : u(x)=0\}\) are convex if \(\Omega \) is convex, and their “free boundaries” \({\partial }\Omega _{0}\) determined together with the solution itself are \(C^{2+\gamma }\) if \({\partial }\Omega \) is smooth. See also [22, 47, 64] for more recent results and, e.g., [22] for a comprehensive review of the physical background including the case of systems of equations, for which we also refer to, e.g., [18].

For (59), some results on multiplicity of solutions are available, see for instance [30] and the references therein. These yield that for small \(\lambda \) (relative to the domain size) there is a unique positive solution, and for \(\lambda \) sufficiently large there is a unique DC solution, while [30] also discusses uniqueness vs non–uniqueness in DC problems with BCs depending on a parameter \(\lambda \). However, a genuine bifurcation analysis of DC solutions seems to be largely lacking. The existing theory for bifurcations in nonsmooth dynamical systems, e.g., [20, 21, 48, 50], does not apply as it deals with at least piecewise Lipschitz systems, with the notable exception of [43] where scaling laws near folds in non–Lipschitz systems are derived.

Numerical Issues and Implementation

In the demo acdc, available at [73], we numerically study slight modifications of (59) in 1D and 2D, which (via \(x\)–dependent nonlinearities [1]) allow various stable branches with multiple interfaces bifurcating from \(u\equiv 1\), which subsequently develop different DCs. Here we give a few general comments on modifications of pde2path needed for DC problems, essentially sticking with (59), which then can immediately be transferred to the DC Schnakenberg system studied below.