Abstract

The article reviews the statistical theory of signal detection in application to analysis of deterministic gravitational-wave signals in the noise of a detector. Statistical foundations for the theory of signal detection and parameter estimation are presented. Several tools needed for both theoretical evaluation of the optimal data analysis methods and for their practical implementation are introduced. They include optimal signal-to-noise ratio, Fisher matrix, false alarm and detection probabilities, \({\mathcal F}\)-statistic, template placement, and fitting factor. These tools apply to the case of signals buried in a stationary and Gaussian noise. Algorithms to efficiently implement the optimal data analysis techniques are discussed. Formulas are given for a general gravitational-wave signal that includes as special cases most of the deterministic signals of interest.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

In this review we consider the problem of detection of deterministic gravitational-wave signals in the noise of a detector and the question of estimation of their parameters. The examples of deterministic signals are gravitational waves from rotating neutron stars, coalescing compact binaries, and supernova explosions. The case of detection of stochastic gravitational-wave signals in the noise of a detector is reviewed in [8]. A very powerful method to detect a signal in noise that is optimal by several criteria consists of correlating the data with the template that is matched to the expected signal. This matched-filtering technique is a special case of the maximum likelihood detection method. In this review we describe the theoretical foundation of the method and we show how it can be applied to the case of a very general deterministic gravitational-wave signal buried in a stationary and Gaussian noise.

Early gravitational-wave data analysis was concerned with the detection of bursts originating from supernova explosions [144]. It involved analysis of the coincidences among the detectors [70]. With the growing interest in laser interferometric gravitational-wave detectors that are broadband it was realized that sources other than supernovae can also be detectable [135] and that they can provide a wealth of astrophysical information [122, 77]. For example, the analytic form of the gravitational-wave signal produced during the inspiral phase of a compact binary coalescence is known in terms of a few parameters to a good approximation (see, e.g., [30] and Section 2.4 of [66]). Consequently one can detect such a signal by correlating the data with the predicted waveform (often called the template) and maximizing the correlation with respect to the parameters of the waveform. Using this method one can pick up a weak signal from the noise by building a large signal-to-noise ratio over a wide bandwidth of the detector [135]. This observation has led to rapid development of the theory of gravitational-wave data analysis. It became clear that the detectability of sources is determined by optimal signal-to-noise ratio, which is the power spectrum of the signal divided by the power spectrum of the noise integrated over the bandwidth of the detector.

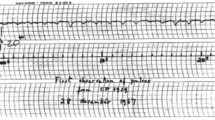

An important landmark was a workshop entitled Gravitational Wave Data Analysis held in Dyffryn House and Gardens, St. Nicholas near Cardiff, in July 1987 [123]. The meeting acquainted physicists interested in analyzing gravitational-wave data with the basics of the statistical theory of signal detection and its application to detection of gravitational-wave sources. As a result of subsequent studies, the Fisher information matrix was introduced to the theory of the analysis of gravitational-wave data [50, 76]. The diagonal elements of the Fisher matrix give lower bounds on the variances of the estimators of the parameters of the signal and can be used to assess the quality of astrophysical information that can be obtained from detections of gravitational-wave signals [41, 75, 25]. It was also realized that the application of matched-filtering to some sources, notably to continuous sources originating from neutron stars, will require extraordinary large computing resources. This gave a further stimulus to the development of optimal and efficient algorithms and data analysis methods [124].

A very important development was the work by Cutler et al. [43] where it was realized that for the case of coalescing binaries matched filtering was sensitive to very small post-Newtonian effects of the waveform. Thus, these effects can be detected. This leads to a much better verification of Einstein’s theory of relativity and provides a wealth of astrophysical information that would make a laser interferometric gravitational-wave detector a true astronomical observatory complementary to those utilizing the electromagnetic spectrum. As further development of the theory, methods were introduced to calculate the quality of suboptimal filters [13], to calculate the number of templates required to do a search using matched-filtering [102], to determine the accuracy of templates required [33], and to calculate the false alarm probability and thresholds [68]. An important point is the reduction of the number of parameters that one needs to search for in order to detect a signal. Namely estimators of a certain type of parameters, called extrinsic parameters, can be found in a closed analytic form and consequently eliminated from the search. Thus, a computationally-intensive search need only be performed over a reduced set of intrinsic parameters [76, 68, 78].

Techniques reviewed in this paper have been used in the data analysis of prototypes of gravitational-wave detectors [100, 99, 12] and in the data analysis of gravitational-wave detectors currently in operation [133, 23, 4, 3, 2].

2 Response of a Detector to a Gravitational Wave

There are two main methods of detecting gravitational waves currently in use. One method is to measure changes induced by gravitational waves on the distances between freely-moving test masses using coherent trains of electromagnetic waves. The other method is to measure the deformation of large masses at their resonance frequencies induced by gravitational waves. The first idea is realized in laser interferometric detectors (both Earth-based [105, 141, 53] and space-borne [85, 137] antennas) and Doppler tracking experiments [14], whereas the second idea is implemented in resonant mass detectors (see, e.g., [22]).

2.1 Doppler shift between freely falling particles

We start by describing the change of a photon’s frequency caused by a passing gravitational wave and registered by particles (representing different parts of a gravitational-wave detector) freely falling in the field of the gravitational wave. The detailed derivation of the formulae we show here can be found in Chapter 5 of [66] (see also [47, 15, 120]). An equivalent derivation of the response of test masses to gravitational waves in the local Lorentz gauge (without making use of the long-wavelength approximation) is given in [113].

We employ here the transverse traceless (TT) coordinate system (more about the TT gauge can be found, e.g., in Section 35.4 of [91] or in Section 1.3 of [66]). A spacetime metric describing a plane gravitational wave traveling in the +z direction of the TT coordinate system (with coordinates x0 ≡ ct, x1 ≡ x, x2 ≡ y, x3 ≡ z), is described by the line element

where h+ and h× are the two independent polarizations of the wave. We assume that the wave is weak, i.e., for any instant of time t,

We will neglect all terms of order h2 or higher. The form of the line element (1) implies that the functions h+(t) and h×(t) describe the wave-induced perturbation of the flat Minkowski metric at the origin of the TT coordinate system (where x = y = z = 0). It is convenient to introduce the three-dimensional matrix of the spatial metric perturbation produced by the gravitational wave (at the coordinate system’s origin),

Let two particles freely fall in the field (1) of the gravitational wave, and let their spatial coordinates remain constant, so the particles’ world lines are described by equations

where (xa, ya, za) are spatial coordinates of the ath particle and τa is its proper time. These two particles measure, in their proper reference frames, the frequency of the same photon traveling along a null geodesic xα = xα(λ), where λ is some affine parameter. The coordinate time, at which the photon’s frequency is measured by the ath particle, is equal to ta (a = 1, 2); we assume that t2 > t1. Let us introduce the coordinate time duration t12 of the photon’s trip and the Euclidean coordinate distance L12 between the particles:

Let us also introduce the 3-vector n of unit Euclidean length directed along the line connecting the two particles. We arrange the components of this vector into the column 3 × 1 matrix n (thus, we distinguish here the 3-vector n from its components being the elements of the matrix n; the same 3-vector can be decomposed into components in different spatial coordinate systems):

where the superscript T denotes matrix transposition. If one neglects the spacetime curvature caused by the gravitational wave, then α, β, and γ are the angles between the path of the photon in the 3-space and the coordinate axis x, y, or z, respectively (obviously, α, β, γ ∈ 〈0;; π〉 and cos2 α + cos2 β + cos2 γ = 1). Let us denote the value of the frequency registered by the ath particle by νa (a = 1, 2) and let us finally define the relative change of the photon’s frequencies,

then, it can be shown (see Chapter 5 of [66] for details) that the frequency ratio y12 can be written [making use of the quantities introduced in Eqs. (3) and (5)–(6)] as follows (the dot means here matrix multiplication):

It is convenient to introduce the unit 3-vector k directed from the origin of the coordinate system to the source of the gravitational wave. In the coordinate system adopted by us the wave is traveling in the +z direction. Therefore, the components of the 3-vector k, arranged into the column matrix k, are

The positions of the particles with respect to the origin of the coordinate system we describe by the 3-vectors xa (a = 1, 2), the components of which we put into the column matrices xa:

Making use of Eqs. (9)–(10) we rewrite the basic formula (8) in the following form

To obtain the response for all currently working and planned detectors it is enough to consider a configuration of three particles shown in Figure 1. Two particles model a Doppler tracking experiment, where one particle is the Earth and the other is a distant spacecraft. Three particles model a ground-based laser interferometer, where the masses are suspended from seismically-isolated supports or a space-borne interferometer, where the three test masses are shielded in satellites driven by drag-free control systems. In Figure 1 we have introduced the following notation: O denotes the origin of the TT coordinate system related to the passing gravitational wave, xa(a = 1, 2, 3) are 3-vectors joining O and the particles, na and La (a = 1, 2, 3) are, respectively, 3-vectors of unit Euclidean length along the lines joining the particles and the coordinate Euclidean distances between the particles, where a is the label of the opposite particle. We still assume that the spatial coordinates of the particles do not change in time.

Schematic configuration of three freely-falling particles as a detector of gravitational waves. The particles are labelled 1, 2, and 3, their positions with respect to the origin O of the coordinate system are given by 3-vectors xa (a = 1, 2, 3). The Euclidean separations between the particles are denoted by La, where the index a corresponds to the opposite particle. The unit 3-vectors na point between pairs of particles, with the orientation indicated.

Let us denote by ν0 the frequency of the coherent beam used in the detector (laser light in the case of an interferometer and radio waves in the case of Doppler tracking). Let the particle 1 emit the photon with frequency ν0 at the moment t0 towards the particle 2, which registers the photon with frequency ν′ at the moment \({t\prime} = {t_0} + {L_3}/c + {\mathcal O(h)}\). The photon is immediately transponded (without change of frequency) back to the particle 1, which registers the photon with frequency ν at the moment \(t = {t_0} + 2{L_3}/c + {\mathcal O(h)}\). We express the relative changes of the photon’s frequency y12 ≔ (ν′ − ν0)/ν0 and y21 ≔ (ν − ν′)/ν′ as functions of the instant of time t. Making use of Eq. (11) we obtain

The total frequency shift y121 ≔ (ν − ν0)/ν0 of the photon during its round trip can be computed from the one-way frequency shifts y12 and y21 given above:

2.2 Long-wavelength approximation

Let L be the size of the detector and ƛ ≔ λ/(2π) be the reduced wavelength of the gravitational wave impinging on the detector. In the long-wavelength approximation the condition ƛ ≫ L is fulfilled. The angular frequency of the wave equals ω = c/ƛ. Time delays caused by the finite speed of the wave propagating across the detector are of order Δt ∼ L/c, but

so time delays across the detector are much shorter than the period of the gravitational wave and can be neglected. It means that with a good accuracy the gravitational-wave field can be treated as being uniform (but time-dependent) in the space region that covers the entire detector. To detect gravitational waves with some dominant angular frequency ω one must collect data over time intervals longer (sometimes much longer) than the gravitational-wave period. This implies that in Eq. (8) for the relative frequency shift, the typical value of the quantity \(\bar t: = {t_1} - {z_1}/c\) will be much larger than the retardation time Δt ≔ L12/c Therefore, we can expand this equation with respect to Δt and keep terms only linear in Δt. After doing this one obtains (see Section 5.3 in [66] for more details):

where overdot denotes differentiation with respect to time.

For the configuration of particles shown in Figure 1, the relative frequency shifts y12 and y21 given by Eqs. (12) can be written, by virtue of the formula (15), in the form

so that they are equal to each other up to terms \({\mathcal O({h^2},\Delta {t^2})}\). The photon’s total round-trip frequency shift y121 [cf. Eq. (13)] is thus equal to

There are important cases where the long-wavelength approximation is not valid. These include satellite Doppler tracking measurements and the space-borne LISA detector for gravitational-wave frequencies larger than a few mHz.

2.3 Solar-system-based detectors

Real gravitational-wave detectors do not stay at rest with respect to the TT coordinate system related to the passing gravitational wave, because they also move in the gravitational field of the solar system bodies, as in the case of the LISA spacecraft, or are fixed to the surface of the Earth, as in the case of Earth-based laser interferometers or resonant bar detectors. Let us choose the origin O of the TT coordinate system employed in Section 2.1 to coincide with the solar system barycenter (SSB). The motion of the detector with respect to the SSB will modulate the gravitational-wave signal registered by the detector. One can show that as far as the velocities of the particles (modeling the detector’s parts) with respect to the SSB are non-relativistic, which is the case for all existing or planned detectors, Eqs. (12) can still be used, provided the 3-vectors xa and na (a = 1, 2, 3) will be interpreted as made of the time-dependent components describing the motion of the particles with respect to the SSB.

It is often convenient to introduce the proper reference frame of the detector with coordinates \(({{\hat x}^a})\). Because the motion of this frame with respect to the SSB is non-relativistic, we can assume that the transformation between the SSB-related coordinates (xα) and the detector’s proper reference frame coordinates \(({{\hat x}^a})\) has the form

where the functions \({{\hat x}^i}_{\hat O}(t)\) describe the motion of the origin Ô of the proper reference frame with respect to the SSB, and the functions \({\rm{O}}_j^i(t)\) account for the different orientations of the spatial axes of the two reference frames. One can compute some of the quantities entering Eqs. (12) in the detector’s coordinates rather than in the TT coordinates. For instance, the matrix Ĥ of the wave-induced spatial metric perturbation in the detector’s coordinates is related to the matrix H of the spatial metric perturbation produced by the wave in the TT coordinate system through equation

where the matrix O has elements \({\rm{O}}_j^i\). If the transformation matrix O is orthogonal, then O−1 = OT, and Eq. (19) simplifies to

See [31, 54, 68, 78] for more details.

For a ground-based laser-interferometric detector, the long-wavelength approximation can be employed (however, see [27, 115, 114] for a discussion of importance of high-frequency corrections, which modify the interferometer response function computed within the long-wavelength approximation). In the case of an interferometer in a standard Michelson and equal-arm configuration (such configurations can be represented by Figure 1 with the particle 1 corresponding to the corner station of the interferometer and with L2 = L3 = L), the observed relative frequency shift Δν(t)/ν0 is equal to the difference of the round-trip frequency shifts in the two detector’s arms [136]:

Let (xd, yd, zd) be the components (with respect to the TT coordinate system) of the 3-vector rd connecting the origin of the TT coordinate system with the corner station of the interferometer. Then x1 = (xd, yd, zd)T, kT · x1 = −zd, and, making use of Eq. (17), the relative frequency shift (21) can be written as

The difference Δϕ(t) of the phase fluctuations measured, say, by a photo detector, is related to the corresponding relative frequency fluctuations Δν(t) by

One can integrate Eq. (22) to write the phase change Δϕ(t) as

where the dimensionless function h,

is the response function of the interferometric detector to a plane gravitational wave in the long-wavelength approximation. To get Eqs. (24)–(25) directly from Eqs. (22)–(23) one should assume that the quantities n2, n3, and zd [entering Eq. (22)] do not depend on time t. But the formulae (24)–(25) can also be used in the case when those quantities are time dependent, provided the velocities ẋa of the detector’s parts with respect to the SSB are non-relativistic. The error we make in such cases is on the order of \({\mathcal O(hv)}\), where v is a typical value of the velocities ẋa. Thus, the response function of the Earth-based interferometric detector equals

where all quantities here are computed in the SSB-related TT coordinate system. The same response function can be written in terms of a detector’s proper-reference-frame quantities as follows

where the matrices Ĥ and H are related to each other by means of formula (20). In Eq. (27) the proper-reference-frame components \({{{\rm{\hat n}}}_2}\) and \({{{\rm{\hat n}}}_3}\) of the unit vectors directed along the interferometer arms can be treated as constant (i.e., time independent) quantities.

From Eqs. (26) and (3) it follows that the response function h is a linear combination of the two wave polarizations h+ and h×, so it can be written as

The functions F+ and F× are the interferometric beam-pattern functions. They depend on the location of the detector on Earth, the position of the gravitational-wave source in the sky, and the polarization angle of the wave (this angle describes the orientation, with respect to the detector, of the axes relative to which the plus and cross polarizations of the wave are defined, see, e.g., Figure 9.2 in [135]). Derivation of the explicit formulae for the interferometric beam patterns F+ and F× can be found, e.g., in Appendix C of [66].

In the long-wavelength approximation, the response function of the interferometric detector can be derived directly from the equation of geodesic deviation [126]. Then the response is defined as the relative difference between the wave-induced changes of the proper lengths of the two arms, i.e., \(h(t): = (\Delta {{\hat L}_2}(t) - \Delta {{\hat L}_3}(t))/{{\hat L}_0}\), where \({{\hat L}_0} + \Delta {{\hat L}_2}(t)\) and \({{\hat L}_0} + \Delta {{\hat L}_3}(t)\) are the instantaneous values of the proper lengths of the interferometer’s arms and \({{\hat L}_0}\) is the unperturbed proper length of these arms.

In the case of an Earth-based resonant-bar detector the long-wavelength approximation is very accurate and the dimensionless detector’s response function can be defined as \(h(t): = \Delta \hat L(t)/{{\hat L}_0}\), where \(\Delta \hat L(t)\) is the wave-induced change of the proper length \({{\hat L}_0}\) of the bar. The response function h can be computed in terms of the detector’s proper-reference-frame quantities from the formula (see, e.g., Section 9.5.2 in [135])

where the column matrix \({{\rm{\hat n}}}\) consists of the components (computed in the proper reference frame of the detector) of the unit vector n directed along the symmetry axis of the bar. The response function (29) can be written as a linear combination of the wave polarizations h+ and h×, i.e., the formula (28) is also valid for the resonant-bar response function but with some bar-pattern functions F+ and F× different from the interferometric beam-pattern functions. Derivation of the explicit form of the bar patterns can be found, e.g., in Appendix C of [66].

2.4 General form of the response function

In many cases of interest the response function of the detector to a plane gravitational wave can be written as a linear combination of n waveforms hk(t; ξ) which all depend on the same set of parameters ξ = (ξ1, …, ξm)] with constant amplitudes ak(k = 1, …, n),

where the vector θ collects all the signal’s parameters. It is convenient to introduce column matrices

then one can briefly write θ = (a, ξ) and the response (30) can be written as

The functions hk (k = 1, …, n) are independent of the parameters a. The parameters a are called extrinsic (or amplitude) parameters whereas the parameters ξ are called intrinsic.

Eq. (32) with n = 4 is a model of the response of the space-based detector LISA to gravitational waves from a binary system [78]. Also for n = 4 the same equation models the response of a ground-based detector to a continuous source of gravitational waves like a rotating neutron star [68]. For ground-based detectors the long-wavelength approximation can be applied and within this approximation the functions hk (k = 1, …, 4) are given by

where ϕ(t; ξ) is the phase modulation of the signal and u(t; ξ) and v(t; ξ) are slowly varying amplitude modulations. The gravitational-wave signal from spinning neutron stars may consist of several components of the form (32). For short observation times over which the amplitude modulation functions are nearly constant, the response of the ground-based detector can further be approximated by

where A0 and ϕ0 are constant amplitude and initial phase, respectively, and g(t; ξ) is a slowly varying function of time. Eq. (34) is a good model for the response of a detector to the gravitational wave from a coalescing compact binary system [135, 30]. We would like to stress that not all deterministic gravitational-wave signals may be cast into the general form (32).

3 Statistical Theory of Signal Detection

The gravitational-wave signal will be buried in the noise of the detector and the data from the detector will be a random (or stochastic) process. Consequently, the problem of extracting the signal from the noise is a statistical one. The basic idea behind signal detection is that the presence of the signal changes the statistical characteristics of the data x, in particular its probability distribution. When the signal is absent the data have probability density function (pdf) p0(x), and when the signal is present the pdf is p1(x).

A thorough introduction to probability theory and mathematical statistics can be found, e.g., in [51, 130, 131, 101]. A full exposition of statistical theory of signal detection that is only outlined here can be found in the monographs [147, 74, 143, 139, 87, 58, 107]. A general introduction to stochastic processes is given in [145] and advanced treatment of the subject can be found in [84, 146]. A concise introduction to the statistical theory of signal detection and time series analysis is contained in Chapters 3 and 4 of [66].

3.1 Hypothesis testing

The problem of detecting the signal in noise can be posed as a statistical hypothesis testing problem. The null hypothesis H0 is that the signal is absent from the data and the alternative hypothesis H1 is that the signal is present. A hypothesis test (or decision rule) δ is a partition of the observation set into two subsets, \({\mathcal R}\) and its complement \({\mathcal R\prime}\). If data are in \({\mathcal R}\) we accept the null hypothesis, otherwise we reject it. There are two kinds of errors that we can make. A type I error is choosing hypothesis H1 when H0 is true and a type II error is choosing H0 when H1 is true. In signal detection theory the probability of a type I error is called the false alarm probability, whereas the probability of a type II error is called the false dismissal probability. 1 − (false dismissal probability) is the probability of detection of the signal. In hypothesis testing theory, the probability of a type I error is called the significance of the test, whereas 1 − (probability of type II error) is called the power of the test.

The problem is to find a test that is in some way optimal. There are several approaches to finding such a test. The subject is covered in detail in many books on statistics, for example, see [72, 51, 80, 83].

3.1.1 Bayesian approach

In the Bayesian approach we assign costs to our decisions; in particular we introduce positive numbers Cij, i, j = 0, 1, where Cij is the cost incurred by choosing hypothesis Hi when hypothesis Hj is true. We define the conditional risk R of a decision rule δ for each hypothesis as

where Pj is the probability distribution of the data when hypothesis Hj is true. Next, we assign probabilities π0 and π1 = 1 − π0 to the occurrences of hypotheses H0 and H1, respectively. These probabilities are called a priori probabilities or priors. We define the Bayes risk as the overall average cost incurred by the decision rule δ:

Finally we define the Bayes rule as the rule that minimizes the Bayes risk r(δ).

3.1.2 Minimax approach

Very often in practice we do not have control over or access to the mechanism generating the state of nature and we are not able to assign priors to various hypotheses. In such a case one criterion is to seek a decision rule that minimizes, over all δ, the maximum of the conditional risks, R0(δ) and R1(δ). A decision rule that fulfills that criterion is called a minimax rule.

3.1.3 Neyman-Pearson approach

In many problems of practical interest the imposition of a specific cost structure on the decisions made is not possible or desirable. The Neyman-Pearson approach involves a trade-off between the two types of errors that one can make in choosing a particular hypothesis. The Neyman-Pearson design criterion is to maximize the power of the test (probability of detection) subject to a chosen significance of the test (false alarm probability).

3.1.4 Likelihood ratio test

It is remarkable that all three very different approaches — Bayesian, minimax, and Neyman-Pearson — lead to the same test called the likelihood ratio test [44]. The likelihood ratio Λ is the ratio of the pdf when the signal is present to the pdf when it is absent:

We accept the hypothesis H1 if Λ > k, where k is the threshold that is calculated from the costs Cij, priors πi, or the significance of the test depending on which approach is being used.

3.2 The matched filter in Gaussian noise

Let h be the gravitational-wave signal we are looking for and let n be the detector’s noise. For convenience we assume that the signal h is a continuous function of time t and that the noise n is a continuous random process. Results for the discrete-in-time data that we have in practice can then be obtained by a suitable sampling of the continuous-in-time expressions. Assuming that the noise is additive the dat x can be written as

The autocorrelation function of the noise n is defined as

where E denotes the expectation value.

Let us further assume that the detector’s noise n is a zero-mean and Gaussian random process. It can then be shown that the logarithm of the likelihood function is given by the following Cameron-Martin formula

where 〈0; To〉 is the time interval over which the data was collected and the function q is the solution of the integral equation

For stationary noise, its autocorrelation function (39) depends on times t and t′ only through the difference t − t′. It implies that there exists then an even function κn of one variable such that

Spectral properties of stationary noise are described by its one-sided spectral density, defined for non-negative frequencies f ≥ 0 through relation

for negative frequencies f < 0, by definition, Sn(f) = 0. The spectral density Sn can also be determined by correlations between the Fourier components of the detector’s noise

For the case of stationary noise with one-sided spectral density Sn, it is convenient to define the scalar product (x∣y) between any two waveforms x and y,

where ℜ denotes the real part of a complex expression, the tilde denotes the Fourier transform, and the asterisk is complex conjugation. Making use of this scalar product, the log likelihood function (40) can be written as

From the expression (46) we see immediately that the likelihood ratio test consists of correlating the data x with the signal h that is present in the noise and comparing the correlation to a threshold. Such a correlation is called the matched filter. The matched filter is a linear operation on the data.

An important quantity is the optimal signal-to-noise ratio ρ defined by

By means of Eq. (45) it can be written as

We see in the following that ρ determines the probability of detection of the signal. The higher the signal-to-noise ratio the higher the probability of detection.

An interesting property of the matched filter is that it maximizes the signal-to-noise ratio over all linear filters [44]. This property is independent of the probability distribution of the noise.

3.3 Parameter estimation

Very often we know the waveform of the signal that we are searching for in the data in terms of a finite number of unknown parameters. We would like to find optimal procedures of estimating these parameters. An estimator of a parameter θ is a function \(\hat \theta (x)\) that assigns to each data set the “best” guess of the true value of θ. Note that because \(\hat \theta (x)\) depends on the random data it is a random variable. Ideally we would like our estimator to be (i) unbiased, i.e., its expectation value to be equal to the true value of the parameter, and (ii) of minimum variance. Such estimators are rare and in general difficult to find. As in the signal detection there are several approaches to the parameter estimation problem. The subject is exposed in detail in [81, 82]. See also [149] for a concise account.

3.3.1 Bayesian estimation

We assign a cost function C(θ′, θ) of estimating the true value of θ as θ′. We then associate with an estimator \({\hat \theta}\) a conditional risk or cost averaged over all realizations of data x for each value of the parameter θ:

where X is the set of observations and p(x, θ) is the joint probability distribution of data x and parameter θ. We further assume that there is a certain a priori probability distribution π(θ) of the parameter θ. We then define the Bayes estimator as the estimator that minimizes the average risk defined as

where E is the expectation value with respect to an a priori distribution π, and Θ is the set of observations of the parameter θ. It is not difficult to show that for a commonly used cost function

the Bayesian estimator is the conditional mean of the parameter θ given data x, i.e.,

where p(θ∣x) is the conditional probability density of parameter θ given the data x.

3.3.2 Maximum a posteriori probability estimation

Suppose that in a given estimation problem we are not able to assign a particular cost function C(θ′, θ). Then a natural choice is a uniform cost function equal to 0 over a certain interval Iθ of the parameter θ. From Bayes theorem [28] we have

where p(x) is the probability distribution of data x. Then, from Eq. (50) one can deduce that for each data point x the Bayes estimate is any value of θ that maximizes the conditional probability p(θ∣x). The density p(θ∣x) is also called the a posteriori probability density of parameter θ and the estimator that maximizes p(θ∣x) is called the maximum a posteriori (MAP) estimator. It is denoted by \({{\hat \theta}_{{\rm{MAP}}}}\). We find that the MAP estimators are solutions of the following equation

which is called the MAP equation.

3.3.3 Maximum likelihood estimation

Often we do not know the a priori probability density of a given parameter and we simply assign to it a uniform probability. In such a case maximization of the a posteriori probability is equivalent to maximization of the probability density p(x, θ) treated as a function of θ. We call the function l(θ, x) ≔ p(x, θ) the likelihood function and the value of the parameter θ that maximizes l(θ, x) the maximum likelihood (ML) estimator. Instead of the function l we can use the function Λ(θ, x) = l(θ, x)/p(x) (assuming that p(x) > 0). Λ is then equivalent to the likelihood ratio [see Eq. (37)] when the parameters of the signal are known. Then the ML estimators are obtained by solving the equation

which is called the ML equation.

3.4 Fisher information and Cramèr-Rao bound

It is important to know how good our estimators are. We would like our estimator to have as small a variance as possible. There is a useful lower bound on variances of the parameter estimators called the Cramèr-Rao bound, which is expressed in terms of the Fisher information matrix Γ(θ) For the signal h(t; θ), which depends on K parameters collected into the vector θ = (θ1,…, θk), the components of the matrix Γ(θ) are defined as

The Cramèr-Rao bound states that for unbiased estimators the covariance matrix C(θ) of the estimators θ fulfills the inequality

(The inequality A ≥ B for matrices means that the matrix A − B is nonnegative definite.)

A very important property of the ML estimators is that asymptotically (i.e., for a signal-to-noise ratio tending to infinity) they are (i) unbiased, and (ii) they have a Gaussian distribution with covariance matrix equal to the inverse of the Fisher information matrix.

In the case of Gaussian noise the components of the Fisher matrix are given by

where the scalar product (·∣·) is defined in Eq. (45).

4 Maximum-likelihood Detection in Gaussian Noise

In this section, we study the detection of a deterministic gravitational-wave signal h(t; θ) of the general form given by Eq. (32) and the estimation of its parameters θ using the maximum-likelihood (ML) principle. We assume that the noise n(t) in the detector is a zero-mean, Gaussian, and stationary random process. The data x in the detector, in the case when the gravitational-wave signal h(t; θ) is present, is x(t; θ) = n(t) + h(t; θ). The parameters θ = (a, ξ) of the signal (32) split into extrinsic (or amplitude) parameters a and intrinsic ones ξ.

4.1 The \({\mathcal F}\)-statistic

For the gravitational-wave signal h(t; a, ξ) of the form given in Eq. (32) the log likelihood function (46) can be written as

where the components of the column n × 1 matrix N and the square n × n matrix M are given by

The ML equations for the extrinsic parameters a, ∂ logΛ[x; a, ξ]/∂a = 0, can be solved explicitly to show that the ML estimators â of the parameters a are given by

Replacing the extrinsic parameters a in Eq. (59) by their ML estimators â, we obtain the reduced log likelihood function,

that we call the \({\mathcal F}\)-statistic. The \({\mathcal F}\)-statistic depends nonlinearly on the intrinsic parameters ξ of the signal, it does not depend on the extrinsic parameters a.

The procedure to detect the gravitational-wave signal of the form (32) and estimate its parameters consists of two parts. The first part is to find the (local) maxima of the \({\mathcal F}\)-statistic (62) in the intrinsic parameters space. The ML estimators \({\hat \xi}\) of the intrinsic parameters ξ are those values of ξ for which the \({\mathcal F}\)-statistic attains a maximum. The second part is to calculate the estimators â of the extrinsic parameters a from the analytic formula (61), where the matrix M and the correlations N are calculated for the parameters ξ replaced by their ML estimators \({\hat \xi}\) obtained from the first part of the analysis. We call this procedure the maximum likelihood detection. See Section 4.6 for a discussion of the algorithms to find the (local) maxima of the \({\mathcal F}\)-statistic.

4.1.1 Targeted searches

The \({\mathcal F}\)-statistic can also be used in the case when the intrinsic parameters are known. An example of such an analysis called a targeted search is the search for a gravitational-wave signal from a known pulsar. In this case assuming that gravitational-wave emission follows the radio timing, the phase of the signal is known from pulsar observations and the only unknown parameters of the signal are the amplitude (or extrinsic) parameters a [see Eq. (30)]. To detect the signal one calculates the \({\mathcal F}\)-statistic for the known values of the intrinsic parameters and compares it to a threshold [67]. When a statistically-significant signal is detected, one then estimates the amplitude parameters from the analytic formulae (61).

In [109] it was shown that the maximum-likelihood \({\mathcal F}\)-statistic can be interpreted as a Bayes factor with a simple, but unphysical, amplitude prior (and an additional unphysical sky-position weighting). Using a more physical prior based on an isotropic probability distribution for the unknown spin-axis orientation of emitting systems, a new detection statistic (called the \({\mathcal B}\)-statistic) was obtained. Monte Carlo simulations for signals with random (isotropic) spin-axis orientations show that the \({\mathcal B}\)-statistic is more powerful (in terms of its expected detection probability) than the \({\mathcal F}\)-statistic. A modified version of the \({\mathcal F}\)-statistic that can be more powerful than the original one has been studied in [20].

4.2 Signal-to-noise ratio and the Fisher matrix

The detectability of the signal h(t; θ) is determined by the signal-to-noise ratio ρ. In general it depends on all the signal’s parameters θ and can be computed from [see Eq. (47)]

The signal-to-noise ratio for the signal (32) can be written as

where the components of the matrix M(ξ) are defined in Eq. (60).

The accuracy of estimation of the signal’s parameters is determined by Fisher information matrix Γ. The components of Γ in the case of the Gaussian noise can be computed from Eq. (58). For the signal given in Eq. (32) the signal’s parameters (collected into the vector θ) split into extrinsic and intrinsic parameters: θ = (a, ξ), where a = (a1, …, an) and ξ = (ξ1,…, ξm). It is convenient to distinguish between extrinsic and intrinsic parameter indices. Therefore, we use calligraphic lettering to denote the intrinsic parameter indices: \({\xi _{\mathcal A}},{\mathcal A} = 1, \ldots,m\). The matrix Γ has dimension (n + m) × (n + m) and it can be written in terms of four block matrices for the two sets of the parameters a and ξ,

where Γaa is an n × n matrix with components (∂h/∂ai∣∂h/∂aj) (i, j = 1, …, n), Γaξ is an n × m matrix with components \((\partial h/\partial {a_i}\backslash \partial h/\partial {\xi _\mathcal A})(i = 1, \ldots, n,\mathcal A = 1, \ldots, m)\), and finally Γξξ is m × m matrix with components \((\partial h/\partial {\xi _\mathcal A}\backslash \partial h/\partial {\xi _\mathcal B})(\mathcal A,\mathcal B = 1, \ldots, m)\).

We introduce two families of the auxiliary n × n square matrices \({{\rm{F}}_{(\mathcal A)}}\) and \({{\rm{S}}_{({\mathcal A}{\mathcal B})}}({\mathcal A},{\mathcal B} = 1, \ldots, m)\), which depend on the intrinsic parameters ξ only (the indexes \({\mathcal A},{\mathcal B}\) within parentheses mean that they serve here as the matrix labels). The components of the matrices \({{\rm{F}}_{(\mathcal A)}}\) and \({{\rm{S}}_{({\mathcal A}{\mathcal B})}}\) are defined as follows:

Making use of the definitions (60) and (66)–(67) one can write the more explicit form of the matrices Γaa, Γaξ, and Γξξ,

The notation introduced above means that the matrix Γaξ can be thought of as a 1 × m row matrix made of n × 1 column matrices \({{\rm{F}}_{(\mathcal A)}}\). Thus, the general formula for the component of the matrix Γaξ is

The general component of the matrix Γξξ is given by

The covariance matrix C, which approximates the expected covariances of the ML estimators of the parameters θ, is defined as Γ−1 Applying the standard formula for the inverse of a block matrix [90] to Eq. (65), one gets

where the matrices Caa, Caξ, and Cξξ can be expressed in terms of the matrices Γaa = M, Γaξ, and Γξξ as follows:

In Eqs. (74)–(76) we have introduced the m × m matrix:

We call the matrix \({\bar \Gamma}\) (which is the Schur complement of the matrix M) the projected Fisher matrix (onto the space of intrinsic parameters). Because the matrix \({\bar \Gamma}\) is the inverse of the intrinsic-parameter submatrix Cξξ of the covariance matrix C, it expresses the information available about the intrinsic parameters that takes into account the correlations with the extrinsic parameters. The matrix \({\bar \Gamma}\) is still a function of the putative extrinsic parameters.

We next define the normalized projected Fisher matrix (which is the m × m square matrix)

where ρ is the signal-to-noise ratio. Making use of the definition (77) and Eqs. (71)–(72) we can show that the components of this matrix can be written in the form

where \({{\rm{A}}_{({\mathcal A}{\mathcal B})}}\) is the n × n matrix defined as

From the Rayleigh principle [90] it follows that the minimum value of the component \({({{\bar \Gamma}_n}({\rm{a}},\xi))_{{\mathcal A}{\mathcal B}}}\) is given by the smallest eigenvalue of the matrix \({{\rm{M}}^{- 1}} \cdot {{\rm{A}}_{({\mathcal A}{\mathcal B})}}\). Similarly, the maximum value of the component \({({{\bar \Gamma}_n}({\rm{a}},\xi))_{{\mathcal A}{\mathcal B}}}\) is given by the largest eigenvalue of that matrix.

Because the trace of a matrix is equal to the sum of its eigenvalues, the m × m square matrix \({\tilde \Gamma}\) with components

expresses the information available about the intrinsic parameters, averaged over the possible values of the extrinsic parameters. Note that the factor 1/n is specific to the case of n extrinsic parameters. We shall call \({\tilde \Gamma}\) the reduced Fisher matrix. This matrix is a function of the intrinsic parameters alone. We shall see that the reduced Fisher matrix plays a key role in the signal processing theory that we present here. It is used in the calculation of the threshold for statistically significant detection and in the formula for the number of templates needed to do a given search.

For the case of the signal

the normalized projected Fisher matrix \({{\bar \Gamma}_n}\) is independent of the extrinsic parameters A0 and ϕ0, and it is equal to the reduced matrix \({\tilde \Gamma}\) [102]. The components of \({\tilde \Gamma}\) are given by

where Γ0 is the Fisher matrix for the signal g(t; ξ) cos (ϕ(t; ξ) − ϕ0).

4.3 False alarm and detection probabilities

4.3.1 False alarm and detection probabilities for known intrinsic parameters

We first present the false alarm and detection probabilities when the intrinsic parameters ξ of the signal are known. In this case the \({\mathcal F}\)-statistic is a quadratic form of the random variables that are correlations of the data. As we assume that the noise in the data is Gaussian and the correlations are linear functions of the data, \({\mathcal F}\) is a quadratic form of the Gaussian random variables. Consequently the \({\mathcal F}\)-statistic has a distribution related to the χ2 distribution. One can show (see Section III B in [65]) that for the signal given by Eq. (30), \(2{\mathcal F}\) has a χ2 distribution with n degrees of freedom when the signal is absent and noncentral χ2 distribution with n degrees of freedom and non-centrality parameter equal to the square of the signal-to-noise ratio when the signal is present.

As a result the pdfs p0 and p1 of the \({\mathcal F}\)-statistic, when the intrinsic parameters are known and when respectively the signal is absent or present in the data, are given by

where n is the number of degrees of freedom of χ2 distribution and In/2−1 is the modified Bessel function of the first kind and order n/2 − 1. The false alarm probability PF is the probability that \({\mathcal F}\) exceeds a certain threshold \({{\mathcal F}_0}\) when there is no signal. In our case we have

The probability of detection PD is the probability that \({\mathcal F}\) exceeds the threshold \({{\mathcal F}_0}\) when a signal is present and the signal-to-noise ratio is equal to ρ:

The integral in the above formula can be expressed in terms of the generalized Marcum Q-function [132, 58], \({P_{\rm{D}}}(\rho, {{\mathcal F}_0}) = Q(\rho, \sqrt {2{{\mathcal F}_0}})\). We see that when the noise in the detector is Gaussian and the intrinsic parameters are known, the probability of detection of the signal depends on a single quantity: the optimal signal-to-noise ratio ρ.

4.3.2 False alarm probability for unknown intrinsic parameters

Next we return to the case in which the intrinsic parameters ξ are not known. Then the statistic \({\mathcal F}[x;\xi ]\) given by Eq. (62) is a certain multiparameter random process called the random field (see monographs [5, 6] for a comprehensive discussion of random fields). If the vector ξ has one component the random field is simply a random process. For random fields we define the autocovariance function \({\mathcal C}\) just in the same way as we define such a function for a random process:

where ξ and ξ′ are two values of the intrinsic parameter set, and E0 is the expectation value when the signal is absent. One can show that for the signal (30) the autocovariance function \({\mathcal C}\) is given by

where Q is an n × n matrix with components

Obviously Q(ξ, ξ) = M(ξ), therefore \({\mathcal C}(\xi, \xi) = n/2\).

One can estimate the false alarm probability in the following way [68]. The autocovariance function \({\mathcal C}\) tends to zero as the displacement Δξ = ξ′ − ξ increases (it is maximal for Δξ = 0). Thus we can divide the parameter space into elementary cells such that in each cell the autocovariance function \({\mathcal C}\) is appreciably different from zero. The realizations of the random field within a cell will be correlated (dependent), whereas realizations of the random field within each cell and outside of the cell are almost uncorrelated (independent). Thus, the number of cells covering the parameter space gives an estimate of the number of independent realizations of the random field.

We choose the elementary cell with its origin at the point ξ to be a compact region with boundary defined by the requirement that the autocovariance \({\mathcal C}(\xi, {\xi \prime})\) between the origin ξ and any point ξ′ at the cell’s boundary equals half of its maximum value, i.e., \({\mathcal C}(\xi, \xi)/2\). Thus, the elementary cell is defined by the inequality

with ξ at the cell’s center and ξ′ on the cell’s boundary.

To estimate the number of cells we perform the Taylor expansion of the autocovariance function up to the second-order terms:

As \({\mathcal C}\) attains its maximum value when ξ − ξ′ = 0, we have

Let us introduce the symmetric matrix G with components

then, the inequality (91) for the elementary cell can approximately be written as

It is interesting to find a relation between the matrix G and the Fisher matrix. One can show (see [78], Appendix B) that the matrix G is precisely equal to the reduced Fisher matrix \({\tilde \Gamma}\) given by Eq. (81).

If the components of the matrix G are constant (i.e., they are independent of the values of the intrinsic parameters ξ of the signal), the above equation defines a hyperellipsoid in m-dimensional (m is the number of the intrinsic parameters) Euclidean space ℝm. The m-dimensional Euclidean volume Vcell of the elementary cell given by Eq. (95) equals

where Γ denotes the Gamma function. We estimate the number Ncells of elementary cells by dividing the total Euclidean volume V of the m-dimensional intrinsic parameter space by the volume Vcell of one elementary cell, i.e., we have

The components of the matrix G are constant for the signal h(t; A0, ϕ0, ξ) = A0 cos (ϕ(t; ξ) − ϕ0), provided the phase ϕ(t; ξ) is a linear function of the intrinsic parameters ξ.

To estimate the number of cells in the case when the components of the matrix G are not constant, i.e., when they depend on the values of the intrinsic parameters ξ, one replaces Eq. (97) by

This formula can be thought of as interpreting the matrix G as the metric on the parameter space. This interpretation appeared for the first time in the context of gravitational-wave data analysis in the work by Owen [102], where an analogous integral formula was proposed for the number of templates needed to perform a search for gravitational-wave signals from coalescing binaries.

The concept of number of cells was introduced in [68] and it is a generalization of the idea of an effective number of samples introduced in [46] for the case of a coalescing binary signal.

We approximate the pdf of the \({\mathcal F}\)-statistic in each cell by the pdf \({p_0}({\mathcal F})\) of the \({\mathcal F}\)-statistic when the parameters are known [it is given by Eq. (84)]. The values of the \({\mathcal F}\)-statistic in each cell can be considered as independent random variables. The probability that \({\mathcal F}\) does not exceed the threshold \({{\mathcal F}_0}\) in a given cell is \(1 - {P_F}({{\mathcal F}_0})\), where \({P_F}({{\mathcal F}_0})\) is given by Eq. (86). Consequently the probability that \({\mathcal F}\) does not exceed the threshold \({{\mathcal F}_0}\) in all the Ncells cells is \({[1 - {P_F}({{\mathcal F}_0})]^{{N_{{\rm{cells}}}}}}\). Thus, the probability \(P_F^T\) that \({\mathcal F}\) exceeds \({{\mathcal F}_0}\) in one or more cells is given by

By definition, this is the false alarm probability when the phase parameters are unknown. The number of false alarms NF is given by

A different approach to the calculation of the number of false alarms using the Euler characteristic of level crossings of a random field is described in [65].

It was shown (see [39]) that for any finite \({{\mathcal F}_0}\) and Ncells, Eq. (99) provides an upper bound for the false alarm probability. Also in [39] a tighter upper bound for the false alarm probability was derived by modifying a formula obtained by Mohanty [92]. The formula amounts essentially to introducing a suitable coefficient multiplying the number Ncells of cells.

4.3.3 Detection probability for unknown intrinsic parameters

When the signal is present in the data a precise calculation of the pdf of the \({\mathcal F}\)-statistic is very difficult because the presence of the signal makes the data’s random process non-stationary. As a first approximation we can estimate the probability of detection of the signal when the intrinsic parameters are unknown by the probability of detection when these parameters are known [it is given by Eq. (87)]. This approximation assumes that when the signal is present the true values of the intrinsic parameters fall within the cell where the \({\mathcal F}\)-statistic has a maximum. This approximation will be the better the higher the signal-to-noise ratio ρ is.

4.4 Number of templates

To search for gravitational-wave signals we evaluate the \({\mathcal F}\)-statistic on a grid in parameter space. The grid has to be sufficiently fine such that the loss of signals is minimized. In order to estimate the number of points of the grid, or in other words the number of templates that we need to search for a signal, the natural quantity to study is the expectation value of the \({\mathcal F}\)-statistic when the signal is present.

Thus, we assume that the data x contains the gravitational-wave signal h(t; θ) defined in Eq. (32), so x(t; θ) = h(t; θ) + n(t). The parameters θ = (a, ξ) of the signal consist of extrinsic parameters a and intrinsic parameters ξ. The data x will be correlated with the filters hi(t; ξ′) (i = 1,…, n) parameterized by the values ξ′ of the intrinsic parameters. The \({\mathcal F}\)-statistic can thus be written in the form [see Eq. (62)]

where the matrices M and N are defined in Eqs. (60). The expectation value of the \({\mathcal F}\)-statistic (101) is

where the matrix Q is defined in Eq. (90). Let us rewrite the expectation value (102) in the following form,

where ρ is the signal-to-noise ratio and where we have introduced the normalized correlation function \({{\mathcal C}_{\rm{n}}}\),

From the Rayleigh principle [90] it follows that the minimum value of the normalized correlation function is equal to the smallest eigenvalue of the matrix M(ξ)−1 · Q(ξ, ξ′) · M(ξ′)−1 · Q(ξ, ξ′)T, whereas the maximum value is given by its largest eigenvalue. We define the reduced correlation function \({\mathcal C}\) as

As the trace of a matrix equals the sum of its eigenvalues, the reduced correlation function \({\mathcal C}\) is equal to the average of the eigenvalues of the normalized correlation function \({{\mathcal C}_{\rm{n}}}\). In this sense we can think of the reduced correlation function as an “average” of the normalized correlation function. The advantage of the reduced correlation function is that it depends only on the intrinsic parameters ξ, and thus is suitable for studying the number of grid points on which the \({\mathcal F}\)-statistic needs to be evaluated. We also note that the normalized correlation function \({\mathcal C}\) precisely coincides with the autocovariance function \({\mathcal C}\) of the \({\mathcal F}\)-statistic given by Eq. (89).

As in the calculation of the number of cells in order to estimate the number of templates we perform a Taylor expansion of \({\mathcal C}\) up to second order terms around the true values of the parameters, and we obtain an equation analogous to Eq. (95),

where G is given by Eq. (94). By arguments identical to those in deriving the formula for the number of cells we arrive at the following formula for the number of templates:

When C0 = 1/2 the above formula coincides with the formula for the number Ncells of cells, Eq. (98). Here we would like to place the templates sufficiently closely so that the loss of signals is minimized. Thus 1 − C0 needs to be chosen sufficiently small. The formula (107) for the number of templates assumes that the templates are placed in the centers of hyperspheres and that the hyperspheres fill the parameter space without holes. In order to have a tiling of the parameter space without holes we can place the templates in the centers of hypercubes, which are inscribed in the hyperspheres. Then the formula for the number of templates reads

For the case of the signal given by Eq. (34) our formula for the number of templates is equivalent to the original formula derived by Owen [102]. Owen [102] has also introduced a geometric approach to the problem of template placement involving the identification of the Fisher matrix with a metric on the parameter space. An early study of the template placement for the case of coalescing binaries can be found in [121, 45, 26]. Applications of the geometric approach of Owen to the case of spinning neutron stars and supernova bursts are given in [33, 16].

4.4.1 Covering problem

The problem of how to cover the parameter space with the smallest possible number of templates, such that no point in the parameter space lies further away from a grid point than a certain distance, is known in mathematical literature as the covering problem [38]. This was first studied in the context of gravitational-wave data analysis by Prix [ ]. The maximum distance of any point to the next grid point is called the covering radius R. An important class of coverings are lattice coverings. We define a lattice in m-dimensional Euclidean space ℝm to be the set of points including 0 such that if u and v are lattice points, then also u + v and u − v are lattice points. The basic building block of a lattice is called the fundamental region. A lattice covering is a covering of ℝm by spheres of covering radius R, where the centers of the spheres form a lattice. The most important quantity of a covering is its thickness Θ defined as

In the case of a two-dimensional Euclidean space the best covering is the hexagonal covering and its thickness ≃ 1.21. For dimensions higher than 2 the best covering is not known. However, we know the best lattice covering for dimensions m ≤ 23. These are A*m lattices, which have thicknesses \({\Theta _{A_m^{\ast}}}\) equal to

where Vm is the volume of the m-dimensional sphere of unit radius. The advantage of an A*m lattice over the hypercubic lattice grows exponentially with the number of dimensions.

For the case of gravitational-wave signals from spinning neutron stars a 3-dimensional grid was constructed [18]. It consists of prisms with hexagonal bases. Its thickness is around 1.84, which is much better than the cubic grid with a thickness of approximately 2.72. It is worse than the best 3-dimensional lattice covering, which has a thickness of around 1.46.

In [19] a grid was constructed in the 4-dimensional parameter space spanned by frequency, frequency derivative, and sky position of the source, for the case of an almost monochromatic gravitational-wave signal originating from a spinning neutron star. The starting point of the construction was an A*4 lattice of thickness ≃ 1.77. The grid was then constrained so that the nodes of the grid coincide with Fourier frequencies. This allowed the use of a fast Fourier transform (FFT) to evaluate the maximum-likelihood \({\mathcal F}\)-statistic efficiently (see Section 4.6.2). The resulting lattice is only 20% thicker than the optimal A*4 lattice.

Efficient 2-dimensional banks of templates suitable for directed searches (in which one assumes that the position of the gravitational-wave source in the sky is known, but one does not assume that the wave’s frequency and its derivative are a priori known) were constructed in [104]. All grids found in [104] enable usage of the FFT algorithm in the computation of the \({\mathcal F}\)-statistic; they have thicknesses 0.1–16% larger than the thickness of the optimal 2-dimensional hexagonal covering. In the construction of grids the dependence on the choice of the position of the observational interval with respect to the origin of time axis was employed. Also the usage of the FFT algorithms with nonstandard frequency resolutions achieved by zero padding or folding the data was discussed.

The above template placement constructions are based on a Fisher matrix with constant coefficients, i.e., they assume that the parameter manifold is flat. The generalization to curved Riemannian parameter manifolds is difficult. An interesting idea to overcome this problem is to use stochastic template banks where a grid in the parameter space is randomly generated by some algorithm [89, 57, 86, 119].

4.5 Suboptimal filtering

To extract gravitational-wave signals from the detector’s noise one very often uses filters that are not optimal. We may have to choose an approximate, suboptimal filter because we do not know the exact form of the signal (this is almost always the case in practice) or in order to reduce the computational cost and to simplify the analysis. In the case of the signal of the form given in Eq. (32) the most natural and simplest way to proceed is to use as detection statistic the \({\mathcal F}\) statistic where the filters h′k(t; ζ) (k = 1, …, n) are the approximate ones instead of the optimal ones hk(t; ξ) (k = 1,…, n) matched to the signal. In general the functions h′k(t; ζ) will be different from the functions hk(t; ξ) used in optimal filtering, and also the set of parameters ζ will be different from the set of parameters ξ in optimal filters. We call this procedure the suboptimal filtering and we denote the suboptimal statistic by \({{\mathcal F}_{\rm{s}}}\). It is defined as [see Eq. (62)]

where the data-dependent n × 1 column matrix Ns and the square n × n matrix Ms have components [see Eq. (60)]

We need a measure of how well a given suboptimal filter performs. To find such a measure we calculate the expectation value of the suboptimal statistic \({{\mathcal F}_{\rm{s}}}\) in the case where the data contains the gravitational-wave signal, i.e., when x(t; a, ξ) = n(t) + h(t; a, ξ). We get

where we have introduced the matrix Qs with components

Let us rewrite the expectation value (113) in the following form,

where ρ is the optimal signal-to-noise ratio [given in Eq. (64)]. This expectation value reaches its maximum equal to (n + ρ2)/2 when the filter is perfectly matched to the signal. Therefore, a natural measure of the performance of a suboptimal filter is the quantity FF defined by

We call the quantity FF the generalized fitting factor. From the Rayleigh principle, it follows that the generalized fitting factor is the maximum of the largest eigenvalue of the matrix M(ξ)−1 · Qs(ξ, ζ) · Ms(ζ)−1 · Qs(ξ, ζ)T over the intrinsic parameters of the signal.

In the case of a gravitational-wave signal given by

the generalized fitting factor defined above reduces to the fitting factor introduced by Apostolatos [13]:

The fitting factor is the ratio of the maximal signal-to-noise ratio that can be achieved with suboptimal filtering to the signal-to-noise ratio obtained when we use a perfectly matched, optimal filter. We note that for the signal given by Eq. (117), FF is independent of the value of the amplitude A0.

For the case of a signal of the form

where ϕ0 is a constant phase, the maximum over ϕ0 in Eq. (118) can be obtained analytically. Moreover, assuming that over the bandwidth of the signal the spectral density of the noise is constant and that over the observation time cosϕ(t; ξ) oscillates rapidly, the fitting factor is approximately given by

In designing suboptimal filters one faces the issue of how small a fitting factor one can accept. A popular rule of thumb is accepting FF = 0. 97. Assuming that the amplitude of the signal and consequently the signal-to-noise ratio decreases inversely proportionally to the distance from the source this corresponds to 10% loss of the signals that would be detected by a matched filter.

Proposals for good suboptimal (search) templates for the case of coalescing binaries are given in [35, 134] and for the case-spinning neutron stars in [65, 18].

4.6 Algorithms to calculate the \({\mathcal F}\)-statistic

4.6.1 The two-step procedure

In order to detect signals we search for threshold crossings of the \({\mathcal F}\)-statistic over the intrinsic parameter space. Once we have a threshold crossing we need to find the precise location of the maximum of \({\mathcal F}\) in order to estimate accurately the parameters of the signal. A satisfactory procedure is the two-step procedure. The first step is a coarse search where we evaluate \({\mathcal F}\) on a coarse grid in parameter space and locate threshold crossings. The second step, called a fine search, is a refinement around the region of parameter space where the maximum identified by the coarse search is located.

There are two methods to perform the fine search. One is to refine the grid around the threshold crossing found by the coarse search [94, 92, 134, 127], and the other is to use an optimization routine to find the maximum of \({\mathcal F}\) [65, 78]. As initial values to the optimization routine we input the values of the parameters found by the coarse search. There are many maximization algorithms available. One useful method is the Nelder-Mead algorithm [79], which does not require computation of the derivatives of the function being maximized.

4.6.2 Evaluation of the \({\mathcal F}\)-statistic

Usually the grid in parameter space is very large and it is important to calculate the optimum statistic as efficiently as possible. In special cases the \({\mathcal F}\)-statistic given by Eq. (62) can be further simplified. For example, in the case of coalescing binaries \({\mathcal F}\) can be expressed in terms of convolutions that depend on the difference between the time-of-arrival (TOA) of the signal and the TOA parameter of the filter. Such convolutions can be efficiently computed using FFTs. For continuous sources, like gravitational waves from rotating neutron stars observed by ground-based detectors [65] or gravitational waves form stellar mass binaries observed by space-borne detectors [78], the detection statistic \({\mathcal F}\) involves integrals of the general form

where \({\tilde \xi}\) are the intrinsic parameters excluding the frequency parameter ω, m is the amplitude modulation function, and ωϕmod the phase modulation function. The amplitude modulation function is slowly varying compared to the exponential terms in the integral (121). We see that the integral (121) can be interpreted as a Fourier transform (and computed efficiently with an FFT), if ϕmod = 0 and if m does not depend on the frequency ω. In the long-wavelength approximation the amplitude function m does not depend on the frequency. In this case, Eq. (121) can be converted to a Fourier transform by introducing a new time variable tb [124],

Thus, in order to compute the integral (121), for each set of the intrinsic parameters \({\tilde \xi}\) we multiply the data by the amplitude modulation function m, resample according to Eq. (122), and perform the FFT. In the case of LISA detector data when the amplitude modulation m depends on frequency we can divide the data into several band-passed data sets, choosing the bandwidth for each set to be sufficiently small so that the change of m exp(iωϕmod) is small over the band. In the integral (121) we can then use as the value of the frequency in the amplitude and phase modulation function the maximum frequency of the band of the signal (see [78] for details).

4.7 Accuracy of parameter estimation

4.7.1 Fisher-matrix-based assessments

Fisher matrix has been extensively used to assess the accuracy of estimation of astrophysically-interesting parameters of different gravitational-wave signals. For ground-based interferometric detectors, the first calculations of the Fisher matrix concerned gravitational-wave signals from inspiralling compact binaries (made of neutron stars or black holes) in the leading-order quadrupole approximation [50, 76, 63] and from quasi-normal modes of Kerr black hole [48].

Cutler and Flanagan [41] initiated the study of the implications of the higher-order post-Newtonian (PN) phasing formula as applied to the parameter estimation of inspiralling binary signals. They used the 1.5PN phasing formula to investigate the problem of parameter estimation, both for spinning and non-spinning binaries, and examined the effect of the spin-orbit coupling on the estimation of parameters. The effect of the 2PN phasing formula was analyzed independently by Poisson and Will [106] and Królak, Kokkotas and Schäfer [75]. In both cases the focus was to understand the leading-order spin-spin coupling term appearing at the 2PN level when the spins were aligned perpendicularly to the orbital plane. Compared to [75], [106] also included a priori information about the magnitude of the spin parameters, which then leads to a reduction in the rms errors in the estimation of mass parameters. The case of a 3.5PN phasing formula was studied in detail by Arun et al. [17]. Inclusion of 3.5PN effects leads to an improved estimate of the binary parameters. Improvements are relatively smaller for lighter binaries. More recently the Fisher matrix was employed to assess the errors in estimating the parameters of nonspinning black-hole binaries using the complete inspiral-merger-ring-down waveforms [7].

Various authors have investigated the accuracy with which the LISA detector can determine binary parameters including spin effects. Cutler [40] determined LISA’s angular resolution and evaluated the errors of the binary masses and distance considering spins aligned or anti-aligned with the orbital angular momentum. Hughes [60] investigated the accuracy with which the redshift can be estimated (if the cosmological parameters are derived independently), and considered the black-hole ring-down phase in addition to the inspiralling signal. Seto [128] included the effect of finite armlength (going beyond the long wavelength approximation) and found that the accuracy of the distance determination and angular resolution improve. This happens because the response of the instrument when the armlength is finite depends strongly on the location of the source, which is tightly correlated with the distance and the direction of the orbital angular momentum. Vec-chio [140] provided the first estimate of parameters for precessing binaries when only one of the two supermassive black holes carries spin. He showed that modulational effects decorrelate the binary parameters to some extent, resulting in a better estimation of the parameters compared to the case when spins are aligned or antialigned with orbital angular momentum. Hughes and Menou [61] studied a class of binaries, which they called “golden binaries,” for which the inspiral and ring-down phases could be observed with good enough precision to carry out valuable tests of strong-field gravity. Berti, Buonanno and Will [29] have shown that inclusion of non-precessing spin-orbit and spin-spin terms in the gravitational-wave phasing generally reduces the accuracy with which the parameters of the binary can be estimated. This is not surprising, since the parameters are highly correlated, and adding parameters effectively dilutes the available information.

Extensive study of accuracy of parameter estimation for continuous gravitational-wave signals from spinning neutron stars was performed in [64]. In [129] Seto used the Fisher matrix to study the possibility of determining distances to rapidly rotating isolated neutron stars by measuring the curvature of the wave fronts.

4.7.2 Comparison with the Cramèr-Rao bound

In order to test the performance of the maximization method of the \({\mathcal F}\)-statistic it is useful to perform Monte Carlo simulations of the parameter estimation and compare the simulated variances of the estimators with the variances calculated from the Fisher matrix. Such simulations were performed for various gravitational-wave signals [73, 26, 65, 36]. In these simulations one observes that, above a certain signal-to-noise ratio, called the threshold signal-to-noise ratio, the results of the Monte Carlo simulations agree very well with the calculations of the rms errors from the inverse of the Fisher matrix. However, below the threshold signal-to-noise ratio they differ by a large factor. This threshold effect is well known in signal processing [139]. There exist more refined theoretical bounds on the rms errors that explain this effect, and they were studied in the context of the gravitational-wave signals from coalescing binaries [98].

Use of the Fisher matrix in the assessment of accuracy of the parameter estimation has been critically examined in [138], where a criterion has been established for the signal-to-noise ratio above which the inverse of the Fisher matrix approximates well covariances of the parameter estimators. In [148, 142] the errors of ML estimators of parameters of gravitational-wave signals from nonspinning black-hole binaries were calculated analytically using a power expansion of the bias and the covariance matrix in inverse powers of the signal-to-noise ratio. The first-order term in this covariance matrix expansion is the inverse of the Fisher information matrix. The use of higher-order derivatives of the likelihood function in these expansions makes the errors prediction sensitive to the secondary lobes of the pdf of the ML estimators. Conditions for the validity of the Cramèr-Rao lower bound are discussed in [142] as well, and some new features in regions of the parameter space so far not explored are predicted (e.g., that the bias can become the most important contributor to the parameters errors for high-mass systems with masses 200 M⊙ and above).

There exists a simple model that explains the deviations from the covariance matrix and reproduces well the results of the Monte Carlo simulations (see also [25]). The model makes use of the concept of the elementary cell of the parameter space that we introduced in Section 4.3.2. The calculation given below is a generalization of the calculation of the rms error for the case of a monochromatic signal given by Rife and Boorstyn [116].

When the values of parameters of the template that correspond to the maximum of the functional \({\mathcal F}\) fall within the cell in the parameter space where the signal is present, the rms error is satisfactorily approximated by the inverse of the Fisher matrix. However, sometimes, as a result of noise, the global maximum is in the cell where there is no signal. We then say that an outlier has occurred. In the simplest case we can assume that the probability density of the values of the outliers is uniform over the search interval of a parameter, and then the rms error is given by

where Δ is the length of the search interval for a given parameter. The probability that an outlier occurs will be higher the lower the signal-to-noise ratio is. Let q be the probability that an outlier occurs. Then the total variance σ2 f the estimator of a parameter is the weighted sum of the two errors

where σCR is the rms errors calculated from the covariance matrix for a given parameter. One can show [65] that the probability q can be approximated by the following formula:

where p0 and p1 are the pdfs of the \({\mathcal F}\)-statistic (for known intrinsic parameters) when the signal is absent or present in data, respectively [they are given by Eqs. (84) and (85)], and where Ncells is the number of cells in the intrinsic parameter space. Eq. (125) is in good but not perfect agreement with the rms errors obtained from the Monte Carlo simulations (see [65]). There are clearly other reasons for deviations from the Cramèr-Rao bound as well. One important effect (see [98]) is that the functional \({\mathcal F}\) has many local subsidiary maxima close to the global one. Thus, for a low signal-to-noise ratio the noise may promote the subsidiary maximum to a global one.

4.8 Upper limits

Detection of a signal is signified by a large value of the \({\mathcal F}\)-statistic that is unlikely to arise from the noise-only distribution. If instead the value of \({\mathcal F}\) is consistent with pure noise with high probability we can place an upper limit on the strength of the signal. One way of doing this is to take the loudest event obtained in the search and solve the equation

for signal-to-noise ratio ρUL, where PD is the detection probability given by Eq. (87), \({{\mathcal F}_{\rm{L}}}\) is the value of the \({\mathcal F}\)-statistic corresponding to the loudest event, and β is a chosen confidence [23, 2]. Then ρUL is the desired upper limit with confidence β.

When gravitational-wave data do not conform to a Gaussian probability density assumed in Eq. (87), a more accurate upper limit can be obtained by injecting the signals into the detector’s data and thereby estimating the probability of detection PD [4].

5 Network of Detectors

Several gravitational-wave detectors can observe gravitational waves from the same source. For example a network of bar detectors can observe a gravitational-wave burst from the same supernova explosion, or a network of laser interferometers can detect the inspiral of the same compact binary system. The space-borne LISA detector can be considered as a network of three detectors that can make three independent measurements of the same gravitational-wave signal. Simultaneous observations are also possible among different types of detectors. For example, a search for supernova bursts can be performed simultaneously by resonant and interferometric detectors [21].

Let us consider a network consisting of N gravitational-wave detectors and let us denote by xI the data collected by the Ith detector (I = 1, …, N). We assume that noises in all detectors are additive, so the data xI is a sum of the noise nI in the Ith detector and eventually a gravitational-wave signal hI registered by the Ith detector,

It is convenient to collect all the data streams, all the noises, and all the gravitational-wave signals into column N × 1 matrices denoted respectively by x, n, and h,

then Eqs. (127) can shortly be written as

if additionally all detectors’ noises are stationary, Gaussian, and continuous-in-time random processes with zero means, the network log likelihood function is given by

where the scalar product (· ∣ ·) is defined by

Here Sn is the one-sided cross spectral density matrix of the noises of the detector network, which is defined by (here E denotes the expectation value)