Abstract

Gravitational wave detectors are already operating at interesting sensitivity levels, and they have an upgrade path that should result in secure detections by 2014. We review the physics of gravitational waves, how they interact with detectors (bars and interferometers), and how these detectors operate. We study the most likely sources of gravitational waves and review the data analysis methods that are used to extract their signals from detector noise. Then we consider the consequences of gravitational wave detections and observations for physics, astrophysics, and cosmology.


1 A New Window onto the Universe

The last six decades have witnessed a great revolution in astronomy, driven by improvements in observing capabilities across the electromagnetic spectrum: very large optical telescopes, radio antennas and arrays, a host of satellites to explore the infrared, X-ray, and gamma-ray parts of the spectrum, and the development of key new technologies (CCDs, adaptive optics). Each new window of observation has brought new surprises that have dramatically changed our understanding of the universe. These serendipitous discoveries have included:

-

the relic cosmic microwave background radiation (Penzias and Wilson [287]), which has become our primary tool for exploring the Big Bang;

-

the fact that quasi-stellar objects are at cosmological distances (Maarten Schmidt [323]), which has developed into the understanding that they are powered by supermassive black holes;

-

pulsars (Hewish and Bell [189]), which opened up the study of neutron stars and illuminated one endpoint for stellar evolution;

-

X-ray binary systems (Giacconi and collaborators [326]), which now enable us to make detailed studies of black holes and neutron stars;

-

gamma-ray bursts coming from immense distances (Klebesadel et al. [216]), which are not fully explained even today;

-

the fact that the expansion of the universe is accelerating (two teams [313, 288]), which has led to the hunt for the nature of dark energy.

None of these discoveries was anticipated by the observing team, and in many cases the instruments were built to observe completely different phenomena.

Within a few years another new window on the universe will open up, with the first direct detection of gravitational waves. There is keen interest in observing gravitational waves directly, in order to test Einstein’s theory of general relativity and to observe some of the most exotic objects in nature, particularly black holes. But, in addition, the potential of gravitational wave observations to produce more surprises is very high.

The gravitational wave spectrum is completely distinct from, and complementary to, the electromagnetic spectrum. The primary emitters of electromagnetic radiation are charged elementary particles, mainly electrons; because of overall charge neutrality, electromagnetic radiation is typically emitted in small regions, with short wavelengths, and conveys direct information about the physical conditions of small portions of the astronomical sources. By contrast, gravitational waves are emitted by the cumulative mass and momentum of entire systems, so they have long wavelengths and convey direct information about large-scale regions. Electromagnetic waves couple strongly to charges and so are easy to detect but are also easily scattered or absorbed by material between us and the source; gravitational waves couple extremely weakly to matter, making them very hard to detect but also allowing them to travel to us substantially unaffected by intervening matter, even from the earliest moments of the Big Bang.

These contrasts, and the history of serendipitous discovery in astronomy, all suggest that electromagnetic observations may be poor predictors of the phenomena that gravitational wave detectors will eventually discover. Given that 96% of the mass-energy of the universe carries no charge, gravitational waves provide us with our first opportunity to observe directly a major part of the universe. It might turn out to be as complex and interesting as the charged minor component, the part that we call “normal” matter.

Several long-baseline interferometric gravitational-wave detectors planned over a decade ago (the Laser Interferometer Gravitational-Wave Observatory (LIGO) [18], GEO [244], VIRGO [109] and TAMA [363]) have begun initial operations [3, 245, 19] with unprecedented sensitivity levels and wide bandwidths at acoustic frequencies (10 Hz–10 kHz) [197]. These large interferometers are superseding a world-wide network of narrow-band resonant bar antennas that operated for several decades at frequencies near 1 kHz. Before 2020 the space-based LISA [71] gravitational wave detector may begin observations in the low-frequency band from 0.1 mHz to 0.1 Hz. This suite of detectors can be expected to open up the gravitational wave window for astronomical exploration, and at the same time perform stringent tests of general relativity in its strong-field dynamic sector.

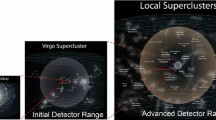

Gravitational wave antennas are essentially omni-directional, with linearly polarized quadrupolar antenna patterns that typically have a response better than 50% of their average over 75% of the sky [197]. Their nearly all-sky sensitivity is an important difference from pointed astronomical antennas and telescopes. Gravitational wave antennas operate as a network, with the aim of taking data continuously. Ground-based interferometers can at present (2008) survey a volume of order 10⁴ Mpc³ for inspiraling compact star binaries — among the most promising sources for these detectors — and plan to enhance their range more than tenfold with two major upgrades (to enhanced and then advanced detectors) during the period 2009–2014. For the advanced detectors, there is great confidence that the resulting thousandfold volume increase will produce regular detections. It is this second phase of operation that will be more interesting from the astrophysical point of view, bringing us physical and astrophysical insights into populations of neutron star and black hole binaries, supernovae and formation of compact objects, populations of isolated compact objects in our galaxy, and potentially even completely unexpected systems. Following that, LISA’s ability to survey the entire universe for black hole coalescences at millihertz frequencies will extend gravitational wave astronomy into the cosmological arena.

However, the present initial phase of observation, or observations after the first enhancements, may very well produce the first detections. Potential sources include coalescences of binaries consisting of black holes at a distance of 100–200 Mpc and spinning neutron stars in our galaxy with ellipticities greater than about 10⁻⁶. Observations even at this initial level may, of course, also reveal new sources not observable in any other way. These initial detections, though not expected to be frequent, would be important from the fundamental physics point of view and could enable us to directly test general relativity in the strongly nonlinear regime.

Gravitational wave detectors register gravitational waves coherently by following the phase of the wave and not just measuring its intensity. Since the phase is determined by large-scale motions of matter inside the sources, much of the astrophysical information is extracted from the phase. This leads to different kinds of data analysis methods than one normally encounters in astronomy, based on matched filtering and searches over large parameter spaces of potential signals. This style of data analysis requires the input of pre-calculated template signals, which means that gravitational wave detection depends more strongly than most other branches of astronomy on theoretical input. The better the input, the greater the range of the detectors.

The fact that detectors are omni-directional and detect coherently the phase of the incoming wave makes them in many ways more like microphones for sound than like conventional telescopes. The analogy with sound can be helpful, since microphones can be used to monitor environments for disturbances in any location, and since when we listen to sounds our brains do a form of matched filtering to allow us to interpret the sounds we want to understand against a background of noise. In a very real sense, gravitational wave detectors will be listening to the sounds of a restless universe. The gravitational wave “window” will actually be a listening post, a monitor for the most dramatic events that occur in the universe.

1.1 Birth of gravitational astronomy

Gravity is the dominant interaction in most astronomical systems. The big surprise of the last three decades of the 20th century was that relativistic gravitation is relevant in so many of these systems. Strong gravitational fields are Nature’s most efficient converters of mass into energy. Examples where strong-field relativistic gravity is important include the following:

-

neutron stars, the residue of supernova explosions, represent up to 0.1% (by number) of the entire stellar population of any galaxy;

-

stellar-mass black holes power many binary X-ray sources and tend to concentrate near the centers of globular clusters;

-

massive black holes in the range 10⁶–10⁹ M⊙ seem almost ubiquitous in galaxies that have central bulges, and power active galaxies, quasars, and giant radio jets;

-

and, of course, the Big Bang is the only naked singularity we expect to be able to see.

Most of these systems are either dynamical or were formed in catastrophic events; many are or were, therefore, strong sources of gravitational radiation. As the 21st century opens, we are on the threshold of using this radiation to gain a new perspective on the observable universe.

The theory of gravitational radiation already makes an important contribution to the understanding of a number of astronomical systems, such as neutron star binaries, cataclysmic variables, young neutron stars, low-mass X-ray binaries, and even the anisotropy of the microwave background radiation. As the understanding of relativistic phenomena improves, it can be expected that gravitational radiation will play a crucial role as a theoretical tool in modeling relativistic astrophysical systems.

1.2 What this review is about

The first three-quarters of the 20th century were required to place the mathematical theory of gravitational radiation on a sound footing. Many of the most fundamental constructs in general relativity, such as null infinity and the theory of conserved quantities, were developed at least in part to help solve the technical problems of gravitational radiation. We will not cover this history here, for which there are excellent reviews [259, 132]. There are still many open questions, since it is impossible to construct exact solutions for most interesting situations. For example, we still lack a full understanding of the two-body problem, and we will review the theoretical work on this problem below. But the fundamentals of the theory of gravitational radiation are no longer in doubt. Indeed, the observation of the orbital decay in the binary pulsar PSR B1913+16 [388] has lent irrefutable support to the correctness of the theoretical foundations aimed at computing gravitational wave emission, in particular to the energy and angular momentum carried away by the radiation.

It is, therefore, to be expected that the evolution of astrophysical systems under the influence of strong tidal gravitational fields will be associated with the emission of gravitational waves. Consequently, these systems are of interest both to a physicist, whose aim is to understand fundamental interactions in nature, their inter-relationships and theories describing them, and to an astrophysicist, who wants to dig deeper into the environs of dense or nonlinearly gravitating systems in solving the mysteries associated with relativistic phenomena listed in Sections 6, 7 and 8. Indeed, some of the gravitational wave antennas that are being built are capable of observing systems to cosmological distances, and even to the edge of the universe. The new window, therefore, is also of interest to cosmologists.

This is a living review of the prospects that lie ahead for gravitational antennas to test the predictions of general relativity as a fundamental theory, for using relativistic gravitation as a means to understand highly energetic sources, for interpreting gravitational waves to uncover the (electromagnetically) dark universe, and ultimately for employing networks of gravitational wave detectors to observe the first fraction of a second of the evolution of the universe.

We begin in Section 2 with a brief review of the physical nature of gravitational waves, giving a heuristic derivation of the formulas involved in the calculation of the gravitational wave observables such as the amplitude, frequency and luminosity of gravitational waves. This is followed in Section 3 by a discussion of the astronomical sources of gravitational waves, their expected event rates, amplitudes, waveforms and spectra. In Section 4 we then give a detailed description of the existing and upcoming gravitational wave antennas and their sensitivity. Included in Section 4 are bar and interferometric antennas covering both ground and space-based experiments. Section 4 also compares the sensitivity of the antennas with the strengths of astronomical sources and expected signal-to-noise ratios (SNRs). We then turn in Section 5 to data analysis, which is a central component of gravitational wave astronomy, focusing on those aspects of analysis that are crucial in gleaning physical, astrophysical and cosmological information from gravitational wave observations.

Sections 6–8 treat in some detail how gravitational wave observations will aid in a better understanding of nonlinear gravity and test some of its fundamental predictions. In Section 6 we review the physics implications of gravitational wave observations, including new tests of general relativity that can be performed via gravitational wave observations, how these observations may help in formulating and gaining insight into the two-body problem in general relativity, and how gravitational wave observations may help to probe the structure of the universe and the nature of dark energy. In Section 7 we look at the astronomical information returned by gravitational wave observations, and how these observations will affect our understanding of black holes, neutron stars, supernovae, and other relativistic phenomena. Section 8 is devoted to the cosmological implications of gravitational wave observations, including placing constraints on inflation, early phase transitions associated with spontaneous symmetry breaking, and the large-scale structure of the universe.

This review is by no means exhaustive. We plan to expand it to include other key topics in gravitational wave astronomy with subsequent revisions.

Unless otherwise specified we shall use a system of units in which c = G = 1, which means 1 M⊙ ≃ 5 × 10⁻⁶ s ≃ 1.5 km, 1 Mpc ≃ 10¹⁴ s. We shall assume a universe with cold dark-matter density of ΩM = 0.3, dark energy of ΩΛ = 0.7, and a Hubble constant of H0 = 70 km s⁻¹ Mpc⁻¹.
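The conversions quoted above follow from dividing by the appropriate powers of c and G. The short Python sketch below checks them numerically; the rounded SI constants are assumed values, and the helper names are illustrative only.

```python
# Rounded SI constants (assumed values, adequate for order-of-magnitude work).
G = 6.674e-11     # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8       # speed of light, m s^-1
M_SUN = 1.989e30  # solar mass, kg
MPC = 3.086e22    # megaparsec, m


def mass_in_seconds(mass_kg):
    """Geometrized mass G M / c^3, expressed as a time in seconds."""
    return G * mass_kg / C**3


def mass_in_metres(mass_kg):
    """Geometrized mass G M / c^2, expressed as a length in metres."""
    return G * mass_kg / C**2


def distance_in_seconds(distance_m):
    """Light-travel time d / c, in seconds."""
    return distance_m / C


print(f"1 M_sun ~ {mass_in_seconds(M_SUN):.1e} s ~ {mass_in_metres(M_SUN) / 1e3:.1f} km")
print(f"1 Mpc   ~ {distance_in_seconds(MPC):.1e} s")
```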

2 Gravitational Wave Observables

To benefit from gravitational wave observations we must first understand which attributes of gravitational waves we can observe. This section is devoted to a short discussion of the nature of gravitational radiation.

2.1 Gravitational field vs gravitational waves

Gravitational waves are propagating oscillations of the gravitational field, just as light and radio waves are propagating oscillations of the electromagnetic field. Whereas light and radio waves are emitted by accelerated electrically-charged particles, gravitational waves are emitted by accelerated masses. However, since there is only one sign of mass, gravitational waves never exist on their own: they are never more than a small part of the overall external gravitational field of the emitter. One may wonder, therefore, how it is possible to infer the presence of an astronomical body by the gravitational waves that it emits, when it is clearly not possible to sense its much larger stationary (essentially Newtonian) gravitational potential. There are, in fact, two reasons:

-

In general relativity, the effects of both the stationary field and gravitational radiation are described by the tidal forces they produce on free test masses. In other words, single geodesics alone cannot detect gravity or gravitational radiation; we need at least a pair of geodesics. While the stationary tidal force due to the Newtonian potential ϕ of a self-gravitating source at a distance r falls off as ∇∇ϕ ∼ r⁻³, the tidal force due to the gravitational wave amplitude h that it emits at wavelength λ decreases as ∇∇h ∼ r⁻¹λ⁻². Therefore, the stationary Coulomb gravitational potential is the dominant tidal force close to the gravitating body (in the near zone, where r ≤ λ). However, in the far zone (r ≫ λ) the tidal effect of the waves is much stronger.

-

The stationary part of the tidal field is a DC effect, and simply adds to the stationary tidal forces of all other objects in the universe. It is not possible to discriminate one source from another. Gravitational waves carry time-dependent tidal forces, and so they can be discriminated from the stationary field if one knows what kind of time dependence to look for. Interferometers are ideal detectors in this respect because they sense only changes in the position of an interference fringe, which makes them insensitive to the DC part of the tidal field.

Because gravitational waves couple so weakly to our detectors, those astronomical sources that we can detect must be extremely luminous in gravitational radiation. Even at the distance of the Virgo cluster of galaxies, a detectable source could be as luminous as the full Moon, if only for a millisecond! Indeed, while radio astronomers deal with flux levels of Jy, mJy and even μJy, in the case of gravitational wave sources we encounter fluxes that are typically 10²⁰ Jy or larger. Gravitational wave astronomy therefore is biased toward looking for highly energetic, even catastrophic, events.

Extracting useful physical, astrophysical and cosmological information from gravitational wave observations is made possible by measuring a number of gravitational wave attributes that are related to the properties of the source. In the rest of this section we discuss those attributes of gravitational radiation that can be measured via gravitational wave observations. In the process we will review the basic formulas used in computing the gravitational wave amplitude and luminosity of a source. These will then be used in Section 3 to make an order-of-magnitude estimate of the strength of astronomical sources of gravitational waves.

2.2 Gravitational wave polarizations

Because of the equivalence principle, single isolated particles cannot be used to measure gravitational waves: they fall freely in any gravitational field and experience no effects from the passage of the wave. Instead, one must look for inhomogeneities in the gravitational field, which are the tidal forces carried by the waves, and which can be measured only by comparing the positions or interactions of two or more particles.

In general relativity, gravitational radiation is represented by a second-rank symmetric tensor. In a general coordinate system, and in an arbitrary gauge (coordinate choice), this tensor has ten independent components. However, as in the electromagnetic case, gravitational radiation has only two independent states of polarization in Einstein’s theory: the plus polarization and the cross polarization (the names being derived from the shape of the equivalent force fields that they produce). In contrast to electromagnetic waves, the angle between the two polarization states is π/4 rather than π/2. This is illustrated in Figure 1, where the response of a ring of free particles in the (x, y) plane to plus-polarized and cross-polarized gravitational waves traveling in the z-direction is shown. The effect of the waves is to cause a tidal deformation of the circular ring into an elliptical ring with the same area. This tidal deformation caused by passing gravitational waves is the basic principle behind the construction of gravitational wave antennas.

Figure 1: In Einstein’s theory, gravitational waves have two independent polarizations. The effect on proper separations of particles in a circular ring in the (x, y)-plane due to a plus-polarized wave traveling in the z-direction is shown in (a) and due to a cross-polarized wave is shown in (b). The ring continuously gets deformed into one of the ellipses and back during the first half of a gravitational wave period and gets deformed into the other ellipse and back during the next half.

The two independent polarizations of gravitational waves are denoted h+ and h×. These are the two primary time-dependent observables of a gravitational wave. The polarization of gravitational waves from a source, such as a binary system, depends on the orientation of the dynamics inside the source relative to the observer. Therefore, measuring the polarization provides information about, for example, the orientation of the binary system.

2.3 Direction to a source

Gravitational wave antennas are linearly-polarized quadrupolar detectors and do not have good directional sensitivity. As a result we cannot deduce the direction to a source using a single antenna. One normally needs simultaneous observations with three or more detectors, so that the source can be triangulated in the sky by measuring the differences in signal arrival times at the various detectors in the network. Ground-based detectors have typical separation baselines of L ∼ 3 × 10⁶ m, so that at a wavelength of λ = 3 × 10⁵ m = 1 ms (a frequency of 1 kHz) the network has a resolution of δθ = λ/L = 0.1 rad. If the amplitude SNR is high, the source can be localized better than this by a factor of order SNR, i.e. to δθ ∼ λ/(L × SNR).
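The diffraction-style estimate above is simple enough to tabulate. The Python sketch below is an order-of-magnitude illustration only, assuming the resolution scales as (λ/L)/SNR; it ignores antenna patterns, timing systematics and the real network geometry, and the function name is hypothetical.

```python
C = 2.998e8  # speed of light, m/s (rounded)


def network_resolution(freq_hz, baseline_m, snr=1.0):
    """Order-of-magnitude angular resolution (radians): (lambda / L) / SNR.

    Ignores antenna patterns, timing systematics and the actual network geometry.
    """
    wavelength = C / freq_hz
    return wavelength / baseline_m / snr


# Ground-based network: 1 kHz signal, baselines of about 3000 km.
print(f"SNR ~ 1:  {network_resolution(1e3, 3e6):.2f} rad")
print(f"SNR ~ 10: {network_resolution(1e3, 3e6, snr=10):.3f} rad")
```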

For long-lived sources, however, a single antenna synthesizes many antennas by observing the source at different points along its orbit around the sun. The baseline for such observations is 2 AU, so that, for a source emitting radiation at 1 kHz, the resolution is as good as Δθ = 10⁻⁶ rad, which is smaller than an arcsecond.

For space-based detectors orbiting the sun, like LISA, the baseline is again 2 AU, but the observing frequency is some five or six orders of magnitude lower, so the basic resolution is only of order 1 radian. However, as we shall see later, some of the sources that a space-based detector will observe have huge amplitude SNRs in the range of SNR ∼ 10³–10⁴, which improves the resolution to arcminute accuracies in the best cases.
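The same (λ/L)/SNR rule of thumb reproduces the orbital-baseline numbers quoted in the last two paragraphs. In the Python sketch below, the 1 mHz LISA frequency is an assumed representative value (six orders of magnitude below 1 kHz), and the helper name is hypothetical; real LISA localization depends on the source and is worked out later.

```python
import math

C = 2.998e8                           # speed of light, m/s (rounded)
AU = 1.496e11                         # astronomical unit, m
ARCSEC = math.pi / (180.0 * 3600.0)   # one arcsecond in radians
ARCMIN = 60.0 * ARCSEC                # one arcminute in radians


def resolution(freq_hz, baseline_m, snr=1.0):
    """Order-of-magnitude angular resolution (radians): (lambda / L) / SNR."""
    return (C / freq_hz) / baseline_m / snr


orbit = 2.0 * AU  # diameter of the Earth's (or LISA's) orbit around the Sun

ground = resolution(1e3, orbit)                # long-lived 1 kHz source
lisa = resolution(1e-3, orbit)                 # assumed 1 mHz LISA source
lisa_loud = resolution(1e-3, orbit, snr=1e4)   # same source with SNR ~ 1e4

print(f"1 kHz over 2 AU: {ground:.1e} rad ({ground / ARCSEC:.2f} arcsec)")
print(f"1 mHz over 2 AU: {lisa:.1f} rad")
print(f"1 mHz, SNR 1e4:  {lisa_loud / ARCMIN:.2f} arcmin")
```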

2.4 Amplitude of gravitational waves — the quadrupole approximation

In the generic case of a strongly gravitating source, the Einstein equations are too difficult to solve analytically for the luminosity and amplitude of the emitted gravitational waves. We will discuss numerical solutions later; the most powerful analytic approach available is the post-Newtonian approximation scheme. This approximation is suited to gravitationally-bound systems, which constitute the majority of expected sources. In this scheme [79, 169], solutions are expanded in the small parameter (v/c)², where v is the typical dynamical speed inside the system. Because of the virial theorem, the dimensionless Newtonian gravitational potential ϕ/c² is of the same order, so that the expansion scheme links orders in the expanded metric with those in the expanded source terms. The lowest-order post-Newtonian approximation for the emitted radiation is the quadrupole formula, and it depends only on the density (ρ) and velocity fields of the Newtonian system. If we define the spatial tensor Qjk, the second moment of the mass distribution, by the equation

\[ Q^{jk} = \int \rho\, x^j x^k \, d^3x , \tag{1} \]

then the amplitude of the emitted gravitational wave is, at lowest order, the three-tensor

\[ h^{jk} = \frac{2}{r}\, \frac{d^2 Q^{jk}}{dt^2} . \tag{2} \]

This is to be interpreted as a linearized gravitational wave in the distant almost-flat geometry far from the source, in a coordinate system (gauge) called the Lorentz gauge.
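To make the quadrupole formula concrete, the Python sketch below treats a circular Newtonian binary as two point masses, so the integral for Qjk becomes a sum over the masses, and estimates the second time derivative by central finite differences. It works in c = G = 1 units; the function names, orbital parameters and step size are illustrative assumptions. For this configuration the standard analytic amplitude is 4μa²ω²/r, with μ the reduced mass, a the separation and ω the orbital angular frequency, and the numerical value should reproduce it.

```python
import numpy as np


def quadrupole(masses, positions):
    """Q^{jk} = sum_A m_A x_A^j x_A^k, the point-mass form of Equation (1)."""
    return np.einsum("a,aj,ak->jk", masses, positions, positions)


def binary_positions(t, m1, m2, a):
    """Positions (2 x 3 array) of a circular Newtonian binary about its centre of mass."""
    total = m1 + m2
    omega = np.sqrt(total / a**3)  # Kepler's third law with G = 1
    n = np.array([np.cos(omega * t), np.sin(omega * t), 0.0])
    return np.array([(m2 / total) * a * n, -(m1 / total) * a * n])


def h_quadrupole(t, m1, m2, a, r, dt=1e-2):
    """h^{jk} = (2/r) d^2Q^{jk}/dt^2 (Equation (2)), second derivative by finite differences."""
    masses = np.array([m1, m2])

    def q_at(s):
        return quadrupole(masses, binary_positions(s, m1, m2, a))

    q_ddot = (q_at(t + dt) - 2.0 * q_at(t) + q_at(t - dt)) / dt**2
    return 2.0 * q_ddot / r


# Equal masses, total mass 1, separation 10, observer at r = 1e6 (all with c = G = 1).
m1 = m2 = 0.5
a, r = 10.0, 1.0e6
mu = m1 * m2 / (m1 + m2)
omega = np.sqrt((m1 + m2) / a**3)

h = h_quadrupole(0.0, m1, m2, a, r)
print("numerical |h_xx|:", abs(h[0, 0]))
print("analytic 4 mu a^2 omega^2 / r:", 4 * mu * a**2 * omega**2 / r)
```

Because Qjk depends on the orbital phase through cos 2ωt and sin 2ωt, the resulting waves oscillate at twice the orbital frequency.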

2.4.1 Wave amplitudes and polarization in TT-gauge

A useful specialization of the Lorentz gauge is the TT-gauge, which is a comoving coordinate system: free particles remain at constant coordinate locations, even as their proper separations change. To get the TT-amplitude of a wave traveling outwards from its source, project the tensor in Equation (2) perpendicular to its direction of travel and remove the trace of the projected tensor. The result of doing this to a symmetric tensor is to produce, in the transverse plane, a two-dimensional matrix with only two independent elements:

\[ h^{TT}_{jk} = \begin{pmatrix} h_+ & h_\times \\ h_\times & -h_+ \end{pmatrix} . \]

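This is the definition of the wave amplitudes h+ and h× that are illustrated in Figure 1. These amplitudes are defined relative to the coordinates chosen in that plane. If the coordinate unit basis vectors in this plane are êx and êy, then we can define the basis tensors

\[ e^{+} = \hat e_x \otimes \hat e_x - \hat e_y \otimes \hat e_y , \qquad e^{\times} = \hat e_x \otimes \hat e_y + \hat e_y \otimes \hat e_x . \]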

In terms of these, the TT-gravitational wave tensor can be written as

\[ h^{TT} = h_+\, e^{+} + h_\times\, e^{\times} . \]

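If the coordinates in the transverse plane are rotated by an angle ψ, then one obtains new amplitudes h′+ and h′× given by

\[ h'_+ = h_+ \cos 2\psi + h_\times \sin 2\psi , \qquad h'_\times = -h_+ \sin 2\psi + h_\times \cos 2\psi . \]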

This shows the quadrupolar nature of the polarizations, and is consistent with our remark in association with Figure 1 that a rotation of π/4 changes one polarization into the other.
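The rotation law can be checked directly. The Python sketch below implements it and confirms that a rotation by π/4 turns a plus-polarized wave into a cross-polarized one, while a rotation by π returns the original amplitudes. The sign convention (axes rotated counterclockwise by ψ) is an assumption; other references may use the opposite sign for ψ.

```python
import math


def rotate_polarizations(h_plus, h_cross, psi):
    """Amplitudes (h'_+, h'_x) after rotating the transverse axes by angle psi.

    The amplitudes mix through 2*psi, the signature of a quadrupolar (spin-2) field.
    """
    c, s = math.cos(2.0 * psi), math.sin(2.0 * psi)
    return h_plus * c + h_cross * s, -h_plus * s + h_cross * c


# A purely plus-polarized wave viewed in axes rotated by pi/4 is purely cross-polarized.
print(rotate_polarizations(1.0, 0.0, math.pi / 4))
# A rotation by pi (not 2*pi) already brings the amplitudes back to themselves.
print(rotate_polarizations(1.0, 0.0, math.pi))
```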

It should be clear from the TT projection operation that the emitted radiation is not isotropic: it will be stronger in some directions than in others (see Footnote 1). It should also be clear from this that spherically-symmetric motions do not emit any gravitational radiation: when the trace is removed, nothing remains.
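The TT projection can be written with the projector P = I − n⊗n onto the plane transverse to the propagation direction n. The Python sketch below uses this standard textbook form of the operator (it is not spelled out in the text) and checks the two claims just made: an isotropic tensor has no TT part, and a quadrupole radiates with different strength in different directions. The function name is hypothetical.

```python
import numpy as np


def tt_part(h, direction):
    """Transverse-traceless part of a symmetric 3x3 tensor for a wave along `direction`.

    P = I - n n projects onto the plane perpendicular to n; the TT part is
    P h P - (1/2) P tr(P h P).
    """
    n = np.asarray(direction, dtype=float)
    n = n / np.linalg.norm(n)
    P = np.eye(3) - np.outer(n, n)
    transverse = P @ h @ P
    return transverse - 0.5 * P * np.trace(transverse)


# Spherically symmetric source: d^2Q/dt^2 is proportional to the identity, so nothing survives.
print(tt_part(np.eye(3), [0.3, -0.5, 0.8]))

# A plus-type quadrupole in the x-y plane radiates most strongly along z,
# and with half the amplitude along x.
h = np.diag([1.0, -1.0, 0.0])
print(tt_part(h, [0, 0, 1]))
print(tt_part(h, [1, 0, 0]))
```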

2.4.2 Simple estimates

A typical component of d²Qjk/dt² will (from Equation (1)) have magnitude (Mv²)nonsph, where (Mv²)nonsph is twice the nonspherical part of the kinetic energy inside the source. So a bound on any component of Equation (2) is

\[ h \lesssim \frac{2\,(M v^2)_{\rm nonsph}}{r} . \]

It is interesting to observe that the ratio ϵ of the wave amplitude to the Newtonian potential ϕext = M/r of its source at the observer’s distance r is simply bounded by

\[ \epsilon \equiv \frac{h}{\phi_{\rm ext}} \lesssim 2\, v_{\rm nonsph}^2 , \]

and this bound is attained if the entire mass of the source is involved in the nonspherical motions, so that \({(M{\upsilon ^2})_{{\rm{nonsph}}}} \sim M\upsilon _{{\rm{nonsph}}}^2\). By the virial theorem for self-gravitating bodies

\[ v_{\rm nonsph}^2 \lesssim \phi_{\rm int} , \]

where ϕint is the maximum value of the Newtonian gravitational potential inside the system. This provides a convenient bound in practice [328]:

\[ h \lesssim 2\, \phi_{\rm int}\, \phi_{\rm ext} . \]

The bound is attained if the system is highly nonspherical. An equal-mass star binary system is a good example of a system that attains this bound.

For a neutron star source, one has ϕint ∼ 0.2. If the star is in the Virgo cluster (r ∼ 18 Mpc) and has a mass of 1.4 M⊙, and if it is formed in a highly-nonspherical gravitational collapse, then the upper limit on the amplitude of the radiation from such an event is 1.5 × 10⁻²¹. This is a simple way to get the number that has been the goal of detector development for decades, to make detectors that can observe waves at or below an amplitude of about 10⁻²¹.
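The Virgo-cluster estimate follows from the bound h ≲ 2 ϕint ϕext with ϕext = GM/(c²r). The Python sketch below restores SI units to reproduce the number; the rounded constants are assumed values and the function name is hypothetical.

```python
G = 6.674e-11     # m^3 kg^-1 s^-2 (rounded)
C = 2.998e8       # m/s (rounded)
M_SUN = 1.989e30  # kg
MPC = 3.086e22    # m


def amplitude_bound(phi_int, mass_kg, distance_m):
    """Upper bound h <= 2 * phi_int * phi_ext, with phi_ext = G M / (c^2 r)."""
    phi_ext = G * mass_kg / (C**2 * distance_m)
    return 2.0 * phi_int * phi_ext


# Neutron star (phi_int ~ 0.2, M = 1.4 M_sun) collapsing in the Virgo cluster (r ~ 18 Mpc).
h_max = amplitude_bound(0.2, 1.4 * M_SUN, 18 * MPC)
print(f"h_max ~ {h_max:.1e}")
```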

2.5 Frequency of gravitational waves

The signals for which the best waveform predictions are available have well-defined frequencies. In some cases the frequency is dominated by an existing motion, such as the spin of a pulsar. But in most cases the frequency will be related to the natural frequency for a self-gravitating body, defined as

\[ f_0 = \left( \frac{\bar\rho}{4\pi} \right)^{1/2} , \]

where \({\bar \rho}\) is the mean density of mass-energy in the source. This is of the same order as the binary orbital frequency and the fundamental pulsation frequency of the body. Even though this is a Newtonian formula, it provides a remarkably good order-of-magnitude prediction of natural frequencies, even for highly relativistic systems such as black holes.

The frequency of the emitted gravitational waves need not be the natural frequency, of course, even if the mechanism is an oscillation with that frequency. In many cases, such as binary systems, the radiation comes out at twice the oscillation frequency. But since, at this point, we are not trying to be accurate to better than a factor of a few, we will ignore this distinction here. In later sections, with specific source models, we will get the factors right.

The mean density and hence the frequency are determined by the size R and mass M of the source, taking \(\bar \rho = 3M/4\pi {R^3}\). For a neutron star of mass 1.4 M⊙ and radius 10 km, the natural frequency is f0 = 1.9 kHz. For a black hole of mass 10 M⊙ and radius 2M = 30 km, it is f0 = 1 kHz. And for a large black hole of mass 2.5 × 10⁶ M⊙, such as the one at the center of our galaxy, this goes down in inverse proportion to the mass to f0 = 4 mHz. In general, the characteristic frequency of the radiation of a compact object of mass M and radius R is

\[ f_0 = \frac{1}{4\pi} \left( \frac{3M}{R^3} \right)^{1/2} \simeq 1.9\ {\rm kHz} \left( \frac{M}{1.4\,M_\odot} \right)^{1/2} \left( \frac{R}{10\ {\rm km}} \right)^{-3/2} . \]
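The three example frequencies can be reproduced from f0 = (Gρ̄/4π)^{1/2} with ρ̄ = 3M/(4πR³), restoring G. In the Python sketch below the black-hole radii are taken to be the horizon radius 2GM/c²; the rounded constants are assumed values and the helper names are hypothetical.

```python
import math

G = 6.674e-11     # m^3 kg^-1 s^-2 (rounded)
C = 2.998e8       # m/s (rounded)
M_SUN = 1.989e30  # kg


def natural_frequency(mass_kg, radius_m):
    """f0 = (G * rho_bar / (4 pi))**0.5 with rho_bar = 3 M / (4 pi R^3), in Hz."""
    rho_bar = 3.0 * mass_kg / (4.0 * math.pi * radius_m**3)
    return math.sqrt(G * rho_bar / (4.0 * math.pi))


def horizon_radius(mass_kg):
    """Schwarzschild radius 2 G M / c^2, in metres."""
    return 2.0 * G * mass_kg / C**2


ns = natural_frequency(1.4 * M_SUN, 10e3)                             # ~1.9 kHz
bh = natural_frequency(10 * M_SUN, horizon_radius(10 * M_SUN))        # ~1 kHz
sgr = natural_frequency(2.5e6 * M_SUN, horizon_radius(2.5e6 * M_SUN)) # ~4 mHz
print(f"NS: {ns:.0f} Hz, 10 M_sun BH: {bh:.0f} Hz, galactic-centre BH: {sgr * 1e3:.1f} mHz")
```

For black holes R ∝ M, so f0 ∝ M⁻¹, which is why the galactic-centre value follows from the 10 M⊙ value by simple scaling.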

Figure 2 shows the mass-radius diagram for likely sources of gravitational waves. Three lines of constant natural frequency are plotted: f0 = 10⁴ Hz, f0 = 1 Hz, and f0 = 10⁻⁴ Hz. These are interesting frequencies from the point of view of observing techniques: gravitational waves between 1 and 10⁴ Hz are in principle accessible to ground-based detectors, while lower frequencies are observable only from space. Also shown is the line marking the black-hole boundary. This has the equation R = 2M. There are no objects below this line, because they would be smaller than the horizon size for their mass. This line cuts through the ground-based frequency band in such a way as to restrict ground-based instruments to looking at stellar-mass objects. No system with a mass above about 10⁴ M⊙ can produce quadrupole radiation in the ground-based frequency band.

Figure 2: Mass-radius plot for gravitational wave sources. The horizontal axis is the total mass of a radiating system, and the vertical axis is its size. Typical values from various sources for ground-based and space-based detectors are shown. Lines give order-of-magnitude constraints and relations. Characteristic frequencies are estimated from \(f \sim (G\rho/4\pi)^{1/2}\). The black-hole and binary lines are described in the text.

A number of typical relativistic objects are placed in the diagram: a neutron star, a pair of neutron stars that spiral together as they orbit, some black holes. Two other interesting lines are drawn. The lower (dashed) line is the 1-year coalescence line, where the orbital shrinking timescale due to gravitational radiation backreaction (cf. Equation (28)) is less than one year. The upper (solid) line is the 1-year chirp line: if a binary lies below this line, then its orbit will shrink enough to make its orbital frequency increase by a measurable amount in one year. (In a one-year observation one can, in principle, measure changes in frequency of 1 yr⁻¹, or 3 × 10⁻⁸ Hz.)

It is clear from the Figure that any binary system that is observed from the ground will coalesce within an observing time of one year. Since pulsar binary statistics suggest that neutron-star-binary coalescences happen less often than once every 10⁵ years in our galaxy, ground-based detectors must be able to register these events in a volume of space containing at least 10⁶ galaxies in order to have a hope of seeing occasional coalescences. That corresponds to a volume of radius roughly 100 Mpc. For comparison, first-generation ground-based interferometric detectors have a reach of around 20 Mpc for such binaries, while advanced interferometers should extend that to about 200 Mpc.
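Because the number of sources within reach scales with the surveyed volume, the tenfold gain in range quoted above translates into a thousandfold gain in expected event rate. The Python sketch below makes this explicit for the 20 Mpc and 200 Mpc ranges; it assumes a uniform source density and Euclidean volumes (no cosmological corrections), and the function name is hypothetical.

```python
import math


def surveyed_volume(range_mpc):
    """Euclidean volume (Mpc^3) of a sphere of the given radius; no cosmology."""
    return 4.0 / 3.0 * math.pi * range_mpc**3


initial_range = 20.0    # Mpc, first-generation interferometers (from the text)
advanced_range = 200.0  # Mpc, advanced interferometers (from the text)

gain = surveyed_volume(advanced_range) / surveyed_volume(initial_range)
print(f"initial volume ~ {surveyed_volume(initial_range):.1e} Mpc^3")
print(f"volume (and rate) gain ~ {gain:.0f}x")
```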

2.6 Luminosity in gravitational waves

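The general formula for the local stress-energy of a gravitational wave field propagating through flat spacetime, using the TT-gauge, is given by the Isaacson expression [259, 332]

\[ T^{\rm GW}_{\alpha\beta} = \frac{1}{32\pi} \left\langle h^{TT}_{ij,\alpha}\, h^{TT}_{ij,\beta} \right\rangle , \tag{14} \]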

where the angle brackets denote averages over regions of the size of a wavelength and times of the length of a period of the wave. The energy flux of a wave in the xi direction is the T0i component.

The gravitational wave luminosity in the quadrupole approximation is obtained by integrating the energy flux from Equation (14) over a distant sphere. When one correctly takes into account the projection factors mentioned after Equation (2), one obtains [259]

\[ L_{\rm gw} = \frac{1}{5}\left\langle \dddot{Q}_{jk}\,\dddot{Q}^{jk} - \frac{1}{3}\,\dddot{Q}^{2}\right\rangle , \tag{15} \]

where \(Q\) is the trace of the matrix \(Q_{jk}\) and dots denote time derivatives. This equation can be used to estimate the backreaction effect on a system that emits gravitational radiation.

Notice that the expression for \(L_{\rm gw}\) is dimensionless when c = G = 1. It can be converted to normal luminosity units by multiplying by the scale factor

\[ L_0 = \frac{c^5}{G} = 3.6\times 10^{52}\ {\rm W}. \tag{16} \]

This is an enormous luminosity. By comparison, the luminosity of the sun is only \(3.8\times 10^{26}\) W, and that of a typical galaxy would be \(10^{37}\) W. All the galaxies in the visible universe emit, in visible light, on the order of \(10^{49}\) W. We will see that gravitational wave systems always emit at a fraction of L0, but that the gravitational wave luminosity can come close to L0 and can greatly exceed typical electromagnetic luminosities. Close binary systems normally radiate much more energy in gravitational waves than in light. Black hole mergers can, during their peak few cycles, compete in luminosity with the steady luminosity of the entire universe!
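As a quick arithmetic check of the scale factor \(c^5/G\) and the comparison with the sun, the sketch below evaluates it with rounded SI constants. The constant values are our own rounded inputs, not figures taken from this review.

```python
# Rough check of the fundamental luminosity L0 = c^5 / G.
G = 6.674e-11      # Newton's constant, m^3 kg^-1 s^-2 (rounded)
C = 2.998e8        # speed of light, m s^-1 (rounded)
L_SUN = 3.8e26     # solar luminosity quoted in the text, W


def fundamental_luminosity() -> float:
    """Return c^5 / G in watts."""
    return C**5 / G


L0 = fundamental_luminosity()
print(f"L0 = {L0:.2e} W")
print(f"L0 / L_sun = {L0 / L_SUN:.1e}")
```

The ratio to the solar luminosity comes out close to \(10^{26}\), which is what makes the comparisons in the paragraph above so striking.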

Combining Equations (2) and (15) one can derive a simple expression for the apparent luminosity of radiation \({\mathcal F}\), at great distances from the source, in terms of the gravitational wave amplitude [332]:

\[ {\mathcal F} = \frac{1}{16\pi}\,|\dot h|^2 . \tag{17} \]

The above relation can be used to make an order-of-magnitude estimate of the gravitational wave amplitude from a knowledge of the rate at which energy is emitted by a source in the form of gravitational waves. If a source at a distance r radiates away energy E in a time T, predominantly at a frequency f, then writing ḣ = 2πfh and noting that \({\mathcal F} \sim E/(4\pi {r^2}T)\), the amplitude of gravitational waves is

\[ h \sim \frac{1}{\pi f r}\sqrt{\frac{E}{T}} . \tag{18} \]

When the time development of a signal is known, one can filter the detector output through a copy of the expected signal (see Section 5 on matched filtering). This leads to an enhancement in the SNR, as compared to its narrow-band value, by roughly the square root of the number of cycles the signal spends in the detector band. It is useful, therefore, to define an effective amplitude of a signal, which is a better measure of its detectability than its raw amplitude:

\[ h_{\rm eff} \equiv \sqrt{n}\,h , \tag{19} \]

where n is that number of cycles.

Now, a signal lasting for a time T around a frequency f would produce n ≃ fT cycles. Using this we can eliminate T from Equation (18) and get the effective amplitude of the signal in terms of the energy, the emitted frequency and the distance to the source:

\[ h_{\rm eff} \sim \frac{1}{\pi r}\sqrt{\frac{E}{f}} . \tag{20} \]

Notice that this depends on the energy only through the total fluence, or time-integrated flux \(E/(4\pi r^2)\), of the wave. As in many other branches of astronomy, the detectability of a source is ultimately a function of its apparent luminosity and the observing time. However, one should not ignore the dependence on frequency in this formula. Two sources with the same fluence are not equally easy to detect if they are at different frequencies: higher frequency signals have smaller effective amplitudes, scaling as \(f^{-1/2}\).
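The effective amplitude follows from substituting \(n \simeq fT\) into \(h_{\rm eff} = \sqrt{n}\,h\), with h the energy-based estimate above, so that the duration T cancels. The following minimal sketch assumes those order-of-magnitude relations, restores SI units through the factor \(G/c^3\), and compares two hypothetical sources with the same energy and distance (hence the same fluence), one at 100 Hz and one at 1 kHz. The energy and distance are illustrative values only.

```python
import math

G = 6.674e-11      # m^3 kg^-1 s^-2
C = 2.998e8        # m s^-1


def effective_amplitude(energy_j: float, freq_hz: float, dist_m: float) -> float:
    """Order-of-magnitude h_eff ~ sqrt(G E / (c^3 f)) / (pi r), in SI units.

    The energy enters only through E / r^2, i.e. through the fluence.
    """
    return math.sqrt(G * energy_j / (C**3 * freq_hz)) / (math.pi * dist_m)


# Hypothetical sources with identical energy and distance (identical fluence).
E = 1.8e45    # J, about 0.01 solar masses times c^2 (illustrative)
r = 6.2e23    # m, about 20 Mpc (illustrative)

h_low = effective_amplitude(E, 100.0, r)
h_high = effective_amplitude(E, 1000.0, r)
print(f"h_eff(100 Hz) = {h_low:.1e}, h_eff(1 kHz) = {h_high:.1e}")
print(f"ratio = {h_high / h_low:.3f}")   # sqrt(100 / 1000)
```

A tenfold increase in frequency at fixed fluence lowers \(h_{\rm eff}\) by \(\sqrt{10}\), which is the frequency penalty described in the paragraph above.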

3 Sources of Gravitational Waves

3.1 Man-made sources

One source can unfortunately be ruled out as undetectable: man-made gravitational radiation. Imagine creating a wave generator with the following extreme properties. It consists of two masses of \(10^3\) kg each (a small car) at opposite ends of a beam 10 m long. At its center the beam pivots about an axis. This centrifuge rotates 10 times per second. All the velocity is nonspherical, so \(\upsilon _{{\rm{nonsph}}}^2\) in Equation (9) is about \(10^5\ {\rm m^2\,s^{-2}}\). The frequency of the waves will actually be 20 Hz, since the mass distribution of the system is periodic in time with a period of half the rotation period. The wavelength of the waves will, therefore, be \(1.5\times 10^7\) m, similar to the diameter of the Earth. In order to detect gravitational waves, not near-zone Newtonian gravity, the detector must be at least one wavelength from the source, say diametrically opposite the centrifuge on the Earth. Then the amplitude h can be deduced from Equation (9): \(h \simeq 5\times 10^{-43}\). This is far too small to contemplate detecting! The story changes, fortunately, when we consider astrophysical sources of gravitational waves, where nature arranges for masses that are \(10^{27}\) times larger than our centrifuge to move at speeds close to the speed of light!
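The kinematic numbers in the centrifuge example can be checked directly. The sketch below assumes each mass sits 5 m from the pivot (half the 10 m beam) and uses a rounded speed of light; it does not reproduce the final amplitude, which depends on the precise form of Equation (9).

```python
import math

C = 2.998e8        # speed of light, m s^-1
ROT_HZ = 10.0      # rotations per second
ARM_M = 5.0        # distance of each mass from the pivot, m (half the 10 m beam)

v = 2 * math.pi * ARM_M * ROT_HZ   # speed of each mass, m/s
f_gw = 2 * ROT_HZ                  # mass distribution repeats every half turn
wavelength = C / f_gw              # gravitational wavelength, m

print(f"v^2 = {v**2:.1e} m^2/s^2")
print(f"f_gw = {f_gw:.0f} Hz, wavelength = {wavelength:.1e} m")
```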

Until observations of gravitational waves are successfully made, one can only make intelligent guesses about most of the sources that will be seen. There are many that could be strong enough to be seen by the early detectors: star binaries, supernova explosions, neutron stars, the early universe. In this section, we make rough luminosity estimates using the quadrupole formula and other approximations, which are usually accurate to within factors of order two and, very importantly, show how key observables scale with the properties of the systems. Where appropriate we also make use of predictions from the much more accurate modelling that is available for some sources, such as binary systems and black hole mergers. The detectability depends, of course, not only on the intrinsic luminosity of the source, but on how far away it is. Often the biggest uncertainties in making predictions are the spatial density and event rate of any particular class of sources. This is not surprising, since our information at present comes from electromagnetic observations, and as our earlier remarks about the differences between the mechanisms of emission of gravitational and electromagnetic radiation make clear, electromagnetic observations may not strongly constrain the source population.

3.2 Gravitational wave bursts from gravitational collapse

Neutron stars and black holes are formed from the gravitational collapse of a highly evolved star or the core collapse of an accreting white dwarf. In either case, if the collapse is nonspherical, perhaps induced by strong rotation, then gravitational waves could carry away some of the binding energy and angular momentum depending on the geometry of the collapse. Collapse events are thought to produce supernovae of various types, and increasingly there is evidence that they also produce most of the observed gamma-ray bursts [191] in hypernovae and collapsars [397, 249]. Supernovae of Type II are believed to occur at a rate of between 0.01 and 0.1 per year in a Milky-Way-equivalent galaxy (MWEG); thus, within the Virgo supercluster, we might expect an event rate of about 30 per year. Hypernova events are considerably rarer and might only contribute observable gravitational-wave events in current and near-future detectors if they involve so much rotation that strong non-axisymmetric instabilities are triggered.

Simulating gravitational collapse is a very active area of numerical astrophysics, and most simulations also predict the energy and spectral characteristics of the emitted gravitational waves [167]. However, it is still beyond the capabilities of computers to simulate a gravitational collapse event with all the physics that might be necessary to give reliable predictions: three-dimensional hydrodynamics, neutrino transport, realistic nuclear physics, magnetic fields, rotation. In fact, it is still by no means clear why Type II supernovae explode at all: simulations typically have great difficulty reversing the inflow and producing an explosion with the observed light-curves and energetics. It may be that the answer lies in some of the physics that has to be oversimplified in order to be used in current simulations, or in some neutrino physics that we do not yet know, or in some unexplored hydrodynamic mechanism [276]. In a typical supernova, simulations suggest that gravitational waves might extract between about \(10^{-7}\) and \(10^{-5}\) of the total available mass-energy [264, 147, 148], and the waves could come off in a burst whose frequency might lie in the range of ∼ 200–1000 Hz.

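We can use Equation (18) to make a rough estimate of the amplitude, if the emitted energy and timescale are known. Using representative values for a supernova in our galaxy, lying at 10 kpc, emitting the energy equivalent of \(10^{-7}\,M_\odot\) at a frequency of 1 kHz, and lasting for 1 ms, the received amplitude would be

\[ h \sim 7\times 10^{-21}\left(\frac{E}{10^{-7}M_\odot}\right)^{1/2}\left(\frac{T}{1\ {\rm ms}}\right)^{-1/2}\left(\frac{f}{1\ {\rm kHz}}\right)^{-1}\left(\frac{r}{10\ {\rm kpc}}\right)^{-1} . \tag{21} \]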

The upper bound in Equation (11) would give the same amplitude for a source 60 times further away, which reflects the fact that simulations find it difficult to put significant energy into gravitational waves. This amplitude is large enough for current ground-based detectors to observe with a reasonably high confidence, but of course the event rate within 10 kpc is expected to be far too small to make an early detection likely.
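Plugging the supernova numbers into Equation (18) is a one-line calculation. The sketch below does it in SI units, assuming rounded values for G, c, the solar mass and the kiloparsec (our inputs, not the review's); with these inputs it gives an amplitude of order \(7\times 10^{-21}\).

```python
import math

G = 6.674e-11      # m^3 kg^-1 s^-2
C = 2.998e8        # m s^-1
M_SUN = 1.989e30   # kg
KPC = 3.0857e19    # m


def burst_amplitude(energy_j: float, duration_s: float, freq_hz: float, dist_m: float) -> float:
    """Order-of-magnitude h ~ sqrt(G E / (c^3 T)) / (pi f r), in SI units."""
    return math.sqrt(G * energy_j / (C**3 * duration_s)) / (math.pi * freq_hz * dist_m)


E = 1e-7 * M_SUN * C**2          # energy equivalent of 1e-7 solar masses, J
h = burst_amplitude(E, 1e-3, 1e3, 10 * KPC)
print(f"h ~ {h:.1e}")
```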

3.3 Gravitational wave pulsars

Some likely gravitational wave sources behave like the centrifuge example we used in the first paragraph of this section, only on a grander scale. Suppose a neutron star of radius R and mass M spins with a frequency f and has an irregularity, a deformation of its otherwise axially symmetric shape. We idealize this as a “bump” of mass m on its surface, although of course it will really be a distribution of mass leading to an asymmetrical quadrupole tensor. The moment of inertia of the bump will be \(mR^2\), and it is conventional to parameterize the bump in terms of the fractional asymmetry it creates in the moment of inertia tensor itself. If we idealize the star as having uniform density, then the spherical moment of inertia is \(2MR^2/5\), and so the bump has fractional asymmetry

\[ \epsilon = \frac{mR^2}{2MR^2/5} = \frac{5m}{2M} . \tag{22} \]

The bump will emit gravitational radiation at frequency 2f because the star spins about its net center of mass, so it effectively has mass excesses on both sides of the star. The nonspherical velocity will be just \(v_{\rm nonsph} = 2\pi Rf\). The radiation amplitude will be, from Equation (9),

and the luminosity, from Equation (15) (assuming that roughly four comparable components of \(Q_{jk}\) contribute to the sum),

The radiated energy would presumably come from the rotational energy of the star \(Mv^2/5\). This would lead to a spindown of the star on a timescale

It is believed that neutron star crusts are not strong enough to support fractional asymmetries larger than about \(\epsilon \sim 10^{-6}\) [370], and realistic asymmetries may be much smaller.

From these considerations one can estimate the likelihood that the observed spindown timescales of pulsars are due to gravitational radiation. In most cases, it seems that gravitational wave losses could account for a substantial amount of the spindown: the required asymmetries are much smaller than \(10^{-4}\), often smaller than \(10^{-7}\). But an interesting exception is the Crab pulsar, PSR J0534+2200, whose young age and consequently short spindown time (measured to be \(8.0\times 10^{10}\) s, about 2500 yr) would require an exceptionally large asymmetry. If we take the neutron star’s radius to be 10 km, so that M/R ∼ 0.21 and the speed of any irregularity is \(v/c \sim 6.2\times 10^{-3}\), then Equation (24) would require an asymmetry of \(\epsilon \sim 1.4\times 10^{-3}\). Of course, we have made a lot of approximations to get here, only keeping our estimates of amplitudes and energies correct to within factors of two, but a more careful calculation reduces this only by a factor of two to \(\epsilon \sim 7\times 10^{-4}\) [12]. What makes this interesting is the fact that an asymmetry this large would produce radiation detectable by first-generation interferometers. Conversely, an upper limit from first-generation interferometers would provide direct observational limits on the asymmetry and on the fraction of energy lost by the Crab pulsar to gravitational waves.
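The compactness, irregularity speed and age quoted for the Crab pulsar follow from simple arithmetic. The sketch below assumes a canonical 1.4 \(M_\odot\) mass and a spin frequency of about 29.7 Hz; neither value is stated in this paragraph, so treat them as our inputs rather than the review's.

```python
import math

G = 6.674e-11        # m^3 kg^-1 s^-2
C = 2.998e8          # m s^-1
M_SUN = 1.989e30     # kg
YEAR = 3.156e7       # s

M = 1.4 * M_SUN      # assumed canonical neutron-star mass
R = 1.0e4            # radius used in the text, m
F_SPIN = 29.7        # assumed Crab spin frequency, Hz
T_SPINDOWN = 8.0e10  # spindown time quoted in the text, s

compactness = G * M / (C**2 * R)           # M/R in units with c = G = 1
v_over_c = 2 * math.pi * R * F_SPIN / C    # equatorial speed of a surface bump
age_yr = T_SPINDOWN / YEAR

print(f"M/R = {compactness:.2f}, v/c = {v_over_c:.1e}, t = {age_yr:.0f} yr")
```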

From Equation (23) the Crab pulsar would, if its spindown is dominated by gravitational wave losses, produce an amplitude at the Earth of \(h \sim 1.5\times 10^{-24}\), if its distance is 2 kpc. Is this detectable when present instruments are only capable of seeing millisecond bursts of radiation at levels of \(10^{-21}\)? The answer is yes, if the observation time is long enough. Indeed, the latest LIGO observations have not detected any gravitational waves from the Crab pulsar, which has been used to set an upper limit on the asymmetry in its mass distribution [12]. The limit depends on the model assumed for the pulsar. If one assumes that gravitational waves are produced at exactly twice the pulsar spin frequency and uses the inferred values of the pulsar orientation and polarization angle, then for a canonical value of the moment-of-inertia \(I = 10^{38}\ {\rm kg\,m^2}\), one gets an upper limit on the ellipticity of \(\epsilon \le 1.8\times 10^{-4}\), assuming the pulsar is at 2 kpc. This is a factor of 4.2 below the spindown limit [12]. If, however, one assumes that gravitational waves are emitted at a frequency close, but not exactly equal, to twice the spin frequency and one uses a uniform prior for the orientation and polarization angle, then one gets \(\epsilon \le 9\times 10^{-4}\), which is 0.8 of the limit derived from the spindown rate.

Indeed, even signals weaker than the amplitude determined by the Crab spindown rate will be observable by present detectors, and these may be coming from a larger variety of neutron stars, in particular low-mass X-ray binary systems (LMXBs). The neutron stars in them are accreting mass and angular momentum, so they should be spinning up. Observations suggest that most of these neutron stars spin at frequencies between about 300 and 600 Hz, far below their maximum, which is greater than 1000 Hz. The absence of faster stars suggests that something stops them from spinning up beyond this range. Bildsten suggested [77] that the limiting mechanism may be the re-radiation of the accreted angular momentum in gravitational waves, possibly due to a quadrupole moment created by asymmetrical heating induced by the accreted matter. Another possible mechanism [285] is that a “bump” of the kind we have treated is formed by accreting matter channeled onto the surface by the star’s magnetic field. It is also possible that accretion drives an instability in the star that leads to steady emission [308, 270]. In each case, the stars could turn out to be long-lived sources of gravitational waves. This idea, which is a variant of one proposed long ago by Wagoner [383], is still speculative, but the numbers make a plausible case. We discuss it in more detail in Section 7.3.5.

3.4 Radiation from a binary star system

3.4.1 Radiation from a binary system and its backreaction

A binary star system can also be treated as a “centrifuge”. Two stars of the same mass m in a circular orbit of radius R have all their mass in nonspherical motion, so that

where Ω is the orbital angular velocity. The gravitational wave amplitude can then be written

Since the internal radius R of the orbit is not an observable, it is sometimes convenient to replace R by the orbital angular frequency Ω using the above orbit equation, giving

The gravitational wave luminosity of such a system is, by a calculation analogous to that for bumps on neutron stars (assuming that four components of \(Q_{ij}\) are significant),

in units given by the fundamental luminosity L0 in Equation (16). This shows that self-gravitating systems always emit at a fraction of L0, since M/R is always smaller than 1, but it can approach L0 for highly-relativistic systems where M/R ∼ 1.

The radiation of energy by the orbital motion causes the orbit to shrink. The shrinking will make any observed gravitational waves increase in frequency with time. This is called a chirp. The timescale for this in a binary system with equal masses is

As the binary evolves, the frequency and amplitude of the wave grow and this drives the binary to evolve even more rapidly. The signal’s frequency, however, will not increase indefinitely; the slow inspiral phase ends either when the stars begin to interact and merge or (if they are very compact) when the distance between the stars is roughly at the last stable orbit (LSO) R = 6M, which corresponds to a gravitational wave frequency of

\[ f_{\rm LSO} = \frac{1}{6^{3/2}\pi M} \simeq 220\left(\frac{20\,M_\odot}{M}\right)\ {\rm Hz}, \tag{29} \]

where we have normalized this to a binary with total mass M = 20 M⊙. This is the LSO frequency.
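The LSO frequency can be estimated from Kepler’s law, \(\Omega^2 = M/R^3\), evaluated at a separation R = 6M, with the gravitational wave frequency twice the orbital one, \(f = \Omega/\pi\); this gives \(f_{\rm LSO} = 1/(6^{3/2}\pi M)\). The sketch below assumes that Newtonian evaluation at R = 6M (itself an approximation) and rounded SI constants.

```python
import math

G = 6.674e-11      # m^3 kg^-1 s^-2
C = 2.998e8        # m s^-1
M_SUN = 1.989e30   # kg


def f_lso_hz(total_mass_msun: float) -> float:
    """GW frequency at separation R = 6M from Kepler's law, f = Omega / pi."""
    m_sec = G * total_mass_msun * M_SUN / C**3   # total mass expressed in seconds
    return 1.0 / (6**1.5 * math.pi * m_sec)


for mass in (2.8, 20.0, 1e6):
    print(f"M = {mass:g} M_sun: f_LSO = {f_lso_hz(mass):.3g} Hz")
```

The 1/M scaling reproduces the roughly 1.5 kHz quoted later for two 1.4 M⊙ stars and the 4 mHz quoted for a \(10^6\) M⊙ system.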

A compact-object binary that coalesces after passing through the last stable orbit is a powerful source of gravitational waves, with a luminosity that approaches the limiting luminosity L0. This is called a coalescing binary in gravitational wave searches. Since a typical search might last on the order of one year, a coalescing binary can be defined as a system that has a chirp time smaller than one year. In Figure 2 the coalescence line is shown as a straight line with slope 3/4 (set \(t_{\rm chirp}\) to a constant in Equation (28)). Binary systems below this line have a chirp time smaller than one year. It is evident from the figure that all binary systems observable by ground-based detectors will coalesce in less than a year.

As mentioned for gravitational wave pulsars, the raw amplitude of the radiation from a long-lived system is not by itself a good guide to its detectability, if the waveform can be predicted. Data analysis techniques like matched filtering are able to eliminate most of the detector noise and allow the recognition of weaker signals. The improvement in amplitude sensitivity is roughly proportional to the square root of the number of cycles of the waveform that one observes. For neutron stars that are observed from a frequency of 10 Hz until they coalesce, there could be on the order of \(10^4\) cycles, meaning that the sensitivity of a second-generation interferometric detector would effectively be 100 times better than its broadband (prefiltering) sensitivity. Such detectors could see typical coalescences at ∼ 200 Mpc. The event rate for second-generation detectors is estimated at around 40 events per year, with rather large error bars [101, 211, 242].
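The \(10^4\) cycles quoted for neutron-star binaries can be checked with the standard leading-order (quadrupole) cycle count from a lower frequency \(f_{\rm low}\) to coalescence, \(N = (\pi{\mathcal M}f_{\rm low})^{-5/3}/(32\pi)\), where \({\mathcal M}\) is the chirp mass introduced in Section 3.4.2. That formula is not written in this section; we state it as a standard result and treat the sketch as an order-of-magnitude check, taking the matched-filter gain as \(\sqrt{N}\) as in the text.

```python
import math

G = 6.674e-11      # m^3 kg^-1 s^-2
C = 2.998e8        # m s^-1
M_SUN = 1.989e30   # kg


def cycles_to_coalescence(f_low_hz: float, chirp_mass_msun: float) -> float:
    """Leading-order number of GW cycles from f_low to coalescence."""
    mc_sec = G * chirp_mass_msun * M_SUN / C**3   # chirp mass in seconds
    return (math.pi * mc_sec * f_low_hz) ** (-5.0 / 3.0) / (32.0 * math.pi)


n = cycles_to_coalescence(10.0, 1.22)   # 1.4 + 1.4 M_sun has chirp mass ~1.22 M_sun
gain = math.sqrt(n)                     # rough matched-filter amplitude gain
print(f"N ~ {n:.1e} cycles, gain ~ {gain:.0f}")
```

The count is of order \(10^4\) and the gain of order 100, consistent with the estimate above; the exact numbers depend on the low-frequency cutoff.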

3.4.2 Chirping binaries as standard sirens

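When we consider real binaries we must do the calculation for systems that have unequal masses. Still assuming for the moment that the binary orbit is circular, the quadrupole amplitude turns out to be

\[ h \simeq \frac{4\,{\mathcal M}^{5/3}(\pi f)^{2/3}}{r} , \tag{30} \]

where \(f = \Omega/\pi\) is the gravitational wave frequency, twice the orbital frequency, and where we define the chirp mass \({\mathcal M}\) as

\[ {\mathcal M} = \mu^{3/5}M^{2/5} = \nu^{3/5}M , \tag{31} \]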

with μ the reduced mass, M the total mass and ν the symmetric mass ratio. We have left out of Equation (30) a factor of order one that depends on the angle from which the binary system is viewed. The two polarization amplitudes can be found in Equation (132).
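The mass combinations entering the chirp mass, \({\mathcal M} = \mu^{3/5}M^{2/5} = \nu^{3/5}M\), are easy to tabulate. The sketch below works in solar masses; the example component masses are illustrative, and the loop checks numerically that the two forms of \({\mathcal M}\) agree.

```python
def mass_parameters(m1: float, m2: float) -> dict:
    """Total mass, reduced mass, symmetric mass ratio and chirp mass."""
    total = m1 + m2
    reduced = m1 * m2 / total
    nu = reduced / total
    chirp = reduced ** 0.6 * total ** 0.4
    return {"M": total, "mu": reduced, "nu": nu, "chirp": chirp}


for m1, m2 in [(1.4, 1.4), (10.0, 1.4), (1e6, 10.0)]:
    p = mass_parameters(m1, m2)
    # The two forms of the chirp mass agree.
    assert abs(p["chirp"] - p["nu"] ** 0.6 * p["M"]) < 1e-9 * p["M"]
    print(f"m1={m1:g}, m2={m2:g}: nu={p['nu']:.3g}, chirp mass={p['chirp']:.3g}")
```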

Remarkably, the other observable, namely the shrinking of the orbit as measured by the rate of change of the orbital period \(P_b\), also depends on the masses just through \({\mathcal M}\) [290]:

\[ \dot P_b = -\frac{192\pi}{5}\left(\frac{2\pi{\mathcal M}}{P_b}\right)^{5/3} . \tag{32} \]

In this case, the chirp time is

This is just the equal-mass chirp time of Equation (28) scaled inversely with the symmetric mass ratio \(\nu = m_1m_2/M^2\). From this equation it is clear that systems with large mass ratios between the components can spend a long time in highly relativistic orbits, whereas equal-mass binaries can be expected to merge after only a few orbits in the highly relativistic regime.

If one observes \(P_b\) and \(\dot P_b\), one can infer \({\mathcal M}\) from Equation (32). Then, from the observed amplitude in Equation (30), the only remaining unknown is the distance r to the source. Gravitational wave observations of orbits that shrink because of gravitational energy losses can therefore directly determine the distance to the source [329]. By analogy with the “standard candles” of electromagnetic astronomy, these systems are now being called “standard sirens”. Although our calculation here assumed a circular orbit, the conclusion is robust: any binary, even with ellipticity and extreme mass ratio, encodes its distance in its gravitational wave signal.
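The standard-siren logic can be illustrated with a round trip: generate \(P_b\), \(\dot P_b\) and h for a hypothetical circular binary, then recover the chirp mass and the distance from those three observables alone. The sketch assumes the leading-order circular-orbit relations \(\dot P_b = -(192\pi/5)(2\pi{\mathcal M}/P_b)^{5/3}\) and \(h = 4{\mathcal M}^{5/3}(\pi f)^{2/3}/r\) with \(f = 2/P_b\), sets the order-one orientation factor to unity, and expresses masses and distances in seconds (\(GM/c^3\) and r/c). The source parameters are invented for illustration.

```python
import math

G = 6.674e-11      # m^3 kg^-1 s^-2
C = 2.998e8        # m s^-1
M_SUN = 1.989e30   # kg
MPC = 3.0857e22    # m
SEC_PER_MSUN = G * M_SUN / C**3   # solar mass in seconds
SEC_PER_MPC = MPC / C             # megaparsec in light-seconds


def observables(chirp_msun: float, dist_mpc: float, pb_s: float) -> tuple:
    """Forward model: (Pb_dot, h) for a circular binary of orbital period pb_s."""
    mc = chirp_msun * SEC_PER_MSUN
    r = dist_mpc * SEC_PER_MPC
    f_gw = 2.0 / pb_s                       # GW frequency = twice orbital frequency
    pb_dot = -(192 * math.pi / 5) * (2 * math.pi * mc / pb_s) ** (5 / 3)
    h = 4 * mc ** (5 / 3) * (math.pi * f_gw) ** (2 / 3) / r
    return pb_dot, h


def infer_source(pb_s: float, pb_dot: float, h: float) -> tuple:
    """Inverse: chirp mass (M_sun) from Pb and Pb_dot, then distance (Mpc) from h."""
    mc = (pb_s / (2 * math.pi)) * (5 * abs(pb_dot) / (192 * math.pi)) ** (3 / 5)
    f_gw = 2.0 / pb_s
    r = 4 * mc ** (5 / 3) * (math.pi * f_gw) ** (2 / 3) / h
    return mc / SEC_PER_MSUN, r / SEC_PER_MPC


pb = 0.02                                   # orbital period in s (f_gw = 100 Hz)
pb_dot, h = observables(1.22, 200.0, pb)
chirp, dist = infer_source(pb, pb_dot, h)
print(f"h = {h:.1e}; recovered chirp mass = {chirp:.3f} M_sun, distance = {dist:.1f} Mpc")
```

The point of the design is that `infer_source` never sees the distance or the masses directly: the period and its derivative fix \({\mathcal M}\), after which the amplitude fixes r.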

This is another way in which gravitational wave observations are complementary to electromagnetic ones, providing information that is hard to obtain electromagnetically. One consequence is the possibility that observations of coalescing compact object binaries could allow one to measure the Hubble constant [329] or other cosmological parameters. This will be particularly interesting for the LISA project, whose observations of black hole binaries could contribute an independent measurement of the acceleration of the universe [195, 131, 48].

Because chirping systems are so interesting, we have also drawn, in Figure 2, a line where the chirp time can be measured in one year. This means that the change in frequency due to the chirp must be larger than the frequency resolution \(1\ {\rm yr}^{-1}\). A little algebra shows that the condition for the chirp to be resolved in an observation time T in a binary with period \(P_b\) is

Since \(P_b \propto R^{3/2}M^{-1/2}\), this condition leads to a line of slope 7/11 in the logarithmic plot in Figure 2. The line drawn there corresponds to a resolution time T of one year. All binaries below this line will chirp in a short enough time for their distances to be measured.

3.4.3 Binary pulsar tests of gravitational radiation theory

The most famous example of the effects of gravitational radiation on an orbiting system is the Hulse-Taylor Binary Pulsar, PSR B1913+16. In this system, two neutron stars orbit in a close eccentric orbit. The pulsar provides a regular clock that allows one to deduce, from post-Newtonian effects, all the relevant orbital parameters and the masses of the stars. The key to the importance of this binary system is that all of the important parameters of the system can be measured before one takes account of the orbital shrinking due to gravitational radiation reaction. This is because a number of post-Newtonian effects on the arrival time of pulses at the Earth, such as the precession of the position of the periastron and the time-dependent gravitational redshift of the pulsar period as it approaches and recedes from its companion, can be measured accurately, and they fully determine the masses, the semi-major axis and the eccentricity of their orbit [394, 344].

Equation (28) for the chirp time predicts that this system would change its orbital period \(P_b = 7.75\) h on the timescale (taking M = 1.4 M⊙ and \(R = 10^6\) km)

From this one can infer that \(\dot P_b \sim 1.5\times 10^{-14}\). But this has to be corrected for our oversimplification of the orbit as circular: an eccentric orbit evolves much faster because, during the phase of closest approach, the velocities are much higher, and the emitted luminosity is a very strong function of the velocity. Using equations first computed by Peters and Mathews [290], for the actual eccentricity of 0.62, one finds (see Equation (109) below) \(\dot P_T = -(2.40242 \pm 0.00002)\times 10^{-12}\). Observations [394, 388] currently give \(\dot P_O = -(2.4184 \pm 0.0009)\times 10^{-12}\). There is a significant discrepancy between these, but it can be removed by realizing that the binary system is accelerating toward the center of our galaxy, which produces a small period change. Taking this into account gives a corrected prediction of \(-(2.4056 \pm 0.0051)\times 10^{-12}\), and this agrees with the observation within the uncertainties [394, 355]. This is the most sensitive test that we have of the correctness of Einstein’s equations with respect to gravitational radiation, and it leaves little room for doubt in the validity of the quadrupole formula for other systems that may generate detectable radiation.
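The Peters and Mathews result mentioned above multiplies the circular-orbit decay rate by an eccentricity factor \(f(e) = (1 + 73e^2/24 + 37e^4/96)(1-e^2)^{-7/2}\). The sketch below evaluates this standard expression using only the rounded values quoted in this section (two 1.4 M⊙ stars, \(P_b = 7.75\) h, e = 0.62). Because those inputs are rounded, it reproduces \(\dot P_T\) only at the percent level; the precise value quoted above rests on the measured masses and orbital period.

```python
import math

G = 6.674e-11      # m^3 kg^-1 s^-2
C = 2.998e8        # m s^-1
M_SUN = 1.989e30   # kg


def eccentricity_factor(e: float) -> float:
    """Peters-Mathews enhancement of the orbital decay rate for eccentricity e."""
    return (1 + 73 / 24 * e**2 + 37 / 96 * e**4) / (1 - e**2) ** 3.5


def pb_dot_gr(m1_msun: float, m2_msun: float, pb_s: float, e: float) -> float:
    """Quadrupole-order dPb/dt (dimensionless) for a binary with period pb_s."""
    total = m1_msun + m2_msun
    chirp = (m1_msun * m2_msun) ** 0.6 / total ** 0.2
    mc_sec = G * chirp * M_SUN / C**3              # chirp mass in seconds
    circular = -(192 * math.pi / 5) * (2 * math.pi * mc_sec / pb_s) ** (5 / 3)
    return circular * eccentricity_factor(e)


pb = 7.75 * 3600.0                 # orbital period in s
print(f"f(e) = {eccentricity_factor(0.62):.1f}")
print(f"Pb_dot = {pb_dot_gr(1.4, 1.4, pb, 0.62):.2e}")
```

The eccentricity factor alone is about 12, which shows how strongly the close periastron passages speed up the orbital decay.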

A number of other binary systems are now known in which such tests are possible [344]. The most important of the other systems is the “double pulsar” in which both neutron stars are seen as pulsars [246]. This system will soon overtake the Hulse-Taylor binary as the most accurate test of gravitational radiation.

3.4.4 White-dwarf binaries

Binary systems at lower frequencies are much more abundant than coalescing binaries, and they have much longer lifetimes. LISA will look for close white-dwarf binaries in our galaxy, and will probably see thousands of them. White dwarfs are not as compact as black holes or neutron stars. Although their masses can be similar to that of a neutron star, their sizes are much larger, typically 3,000 km in radius. As a result, white-dwarf binaries never reach the last stable orbit, which would occur at roughly 1.5 kHz for these masses. We will discuss the implications of multi-messenger astronomy for white-dwarf binaries in Section 7.4.

The maximum amplitude of the radiation from a white-dwarf binary will be several orders of magnitude smaller than that of a neutron star or black hole binary at the same distance but close to coalescence. However, a binary system with a short period is long lived, so the effective amplitude (after matched filtering) improves as the square root of the observing time. Besides that, these sources are nearer: there are many thousands of such systems in our galaxy radiating in the LISA frequency window above about 1 mHz, and LISA should be able to see all of them. Below 1 mHz there are even more sources, so many that LISA will not resolve them individually, but will see them blended together in a stochastic background of radiation, as shown in Figure 5.

3.4.5 Supermassive black hole binaries

Observations indicate that the center of every galaxy probably hosts a black hole whose mass is in the range of \(10^6\)–\(10^9\) M⊙ [305], with the black-hole mass correlating well with the mass of the galactic bulge. A black hole whose mass is in the above range is called a supermassive black hole (SMBH). There is now abundant observational evidence that galaxies often collide and merge, and there are good reasons to believe that when that happens, friction between the SMBHs and the stars and gas of the irregular merged galaxy will lead the SMBHs to spiral into a common nucleus and (on a timescale of some \(10^8\) yr) even get close enough to be driven into complete orbital decay by gravitational radiation reaction. In many systems this should lead to a black hole merger within a Hubble time [221]. For a binary with two nonspinning \(M = 10^6\) M⊙ black holes, the frequency of emitted gravitational waves at the last stable orbit is, from Equation (29), fLSO = 4 mHz; during and after the merger the frequency rises from 4 mHz to the quasi-normal-mode frequency of 24 mHz (if the spin of the final black hole is negligible). (Naturally, all these frequencies simply scale inversely with the mass for other mass ranges.) This is in the frequency range of LISA, and observing these mergers is one of the central purposes of the mission.

SMBH mergers are so spectacularly strong that they will be visible in LISA’s data stream even before applying any matched filter, although good models of the inspiral and particularly the merger radiation will be needed to extract source parameters. Because the masses of such black holes are so large, LISA can see essentially any merger that happens in its frequency band anywhere in the universe, even out to extremely high redshifts. It can thereby address astrophysical questions about the origin, growth and population of SMBHs. The recent discovery of an SMBH binary [221] and the association of X-shaped radio lobes with the merger of SMBH binaries [254] has further raised the optimism concerning SMBH merger rates, as has the suggestion that an SMBH has been observed to have been expelled from the center of its galaxy, an event that could only have happened as a result of a merger between two SMBHs [222]. The rate at which galaxies merge is about \(1\ {\rm yr}^{-1}\) out to a redshift of z = 5 [185], and LISA’s detection rate for SMBH mergers might be roughly the same.

Modelling of the merger of two black holes requires numerical relativity, and the accuracy and reliability of numerical simulations are now becoming good enough that simulations will soon be an integral part of gravitational wave searches.

3.4.6 Extreme and intermediate mass-ratio inspiral sources

The SMBH environment of our own galaxy is known to contain a large number of compact objects and white dwarfs. Near the central SMBH there is a disproportionately large number of stellar-mass black holes, which have sunk there through random gravitational encounters with the normal stellar population (dynamical friction). Three-body interactions will occasionally drive one of these compact objects into a capture orbit of the central SMBH. The compact object will sometimes be captured [305, 338, 337] into a highly eccentric trajectory (e > 0.99) with the periastron close to the last stable orbit of the SMBH. Since the mass of the captured object will be about 1–100 M⊙, while the SMBH will have a far greater mass, we essentially have a “test mass” falling in the geometry of a Kerr black hole. By Equation (33) we would expect that the small body would spend many orbits in the relativistic regime near the horizon of the large black hole: a 10 M⊙ black hole falling into a \(10^6\) M⊙ black hole would require on the order of \(10^5\) orbits. The emitted gravitational radiation [317, 179, 178, 67, 171, 57] would consist of a very long wave train that carries information about the nearly geodesic trajectory of the test body, thereby providing a very clean probe to survey the spacetime geometry of the central object (which could be a Kerr black hole or some other compact object) and test whether or not this geometry is as predicted by general relativity [318, 198, 177, 176, 68].

This kind of event happens occasionally to every SMBH that lives in the center of a galaxy. Indeed, since the SNR from matched filtering builds up in proportion to the square root of the observation time \(t_{\rm chirp} \propto \nu^{-1} = (\mu/M)^{-1}\) [cf. Equation (33)] and the inherent amplitude of the radiation is linear in ν [cf. Equation (30)], the SNR varies with the symmetric mass ratio as \(\sqrt \nu\) and typical SNR will be about ten to a thousand times smaller than that of an SMBH binary at the same distance. LISA will, therefore, be able to see such sources only to much smaller distances, say between 1 and 10 Gpc depending on the mass ratio. For events at such distances LISA’s SNR after matched filtering could be in the range 10–100, but matched filtering will be very difficult because of the complexity of the orbit, especially of its evolution due to radiation effects. However, this volume of space contains a large number of galaxies, and the event rate is expected to be several tens to hundreds per year [67]. There will be a background from more distant sources that might in the end set the limit on how much sensitivity LISA has to these events.
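The \(\sqrt\nu\) scaling can be tabulated directly. The sketch below assumes, as in the argument above, that at fixed distance and total mass the SNR is proportional to \(\nu\sqrt{t_{\rm chirp}} \propto \sqrt\nu\), and compares with an equal-mass binary (\(\nu = 1/4\)); the mass ratios q = m/M are illustrative.

```python
import math


def snr_suppression(q: float) -> float:
    """Factor by which SNR drops relative to an equal-mass binary, for mass ratio q."""
    nu = q / (1 + q) ** 2          # symmetric mass ratio
    return math.sqrt(0.25 / nu)    # SNR scales as sqrt(nu)


for q in (1e-2, 1e-3, 1e-4, 1e-5, 1e-6):
    print(f"q = {q:.0e}: SNR lower by a factor of ~{snr_suppression(q):.0f}")
```

For mass ratios between \(10^{-3}\) and \(10^{-6}\) this gives suppressions of roughly 16 to 500, in line with the range quoted above.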

Due to relativistic frame dragging, for each passage of the apastron the test body could execute several nearly circular orbits at its periastron. Therefore, long periods of low-frequency, small-amplitude radiation will be followed by several cycles of high-frequency, large-amplitude radiation [317, 179, 178, 67, 171, 57]. The apastron slowly shrinks, while the periastron remains more or less at the same location, until the final plunge of the compact object before merger. Moreover, if the central black hole has a large spin then spin-orbit coupling leads to precession of the orbital plane thereby changing the polarization of the wave as seen by LISA.

Thus, there is a lot of structure in the waveforms owing to a number of different physical effects: contribution from higher-order multipoles due to an eccentric orbit, precession of the orbital plane, precession of the periastron, etc., and gravitational radiation backreaction plays a pivotal role in the dynamics of these systems. If one looks at the time-frequency map of such a signal one notices that the signal power is greatly smeared across the map [320], as compared to that of a sharp chirp from a nonspinning black-hole binary. For this reason, this spin modulated chirp is sometimes referred to as a smirch [322]. More commonly, such sources are called extreme mass ratio inspirals (EMRIs) and represent systems whose mass ratio is in the range of ∼ \(10^{-3}\)–\(10^{-6}\). Inspirals of systems with their mass ratio in the range ∼ \(10^{-2}\)–\(10^{-3}\) are termed intermediate mass ratio inspirals or IMRIs. These latter systems correspond to the inspiral of intermediate mass black holes of mass ∼ \(10^3\)–\(10^4\) M⊙ and might constitute a prominent source in LISA provided the central SMBH grew in mass as a result of a number of mergers of small black holes [30, 31, 32].

While black hole perturbation theory with a careful treatment of radiation reaction is necessary for the description of EMRIs, IMRIs may be amenable to a description using a hybrid scheme of post-Newtonian approximations and perturbation theory. This is an area that requires more study.

3.5 Quasi-normal modes of a black hole

In 1970, Vishveshwara [381] discussed a gedanken experiment similar in philosophy to Rutherford’s (real) experiment with the atom: he considered scattering gravitational radiation off a black hole to explore its properties. With this gedanken experiment, he demonstrated for the first time that gravitational waves scattered off a black hole have a characteristic waveform when the incident wave has frequencies above a certain value that depends on the size of the black hole. It was soon realized that perturbed black holes vibrate in quasi-normal modes (QNMs) and in the process emit gravitational radiation whose amplitude, frequency, and damping time are characteristic of the black hole’s mass and angular momentum [296, 220]. We will discuss in Section 6.4 how observations of QNMs could be used to test strong-field predictions of general relativity.

We can easily estimate the amplitude of gravitational waves emitted when a black hole forms at a distance r from Earth as a result of the coalescence of compact objects in a binary. The effective amplitude is given by Equation (20), which involves the energy E put into gravitational waves and the frequency f at which the waves come off. By dimensional arguments E is proportional to the total mass M of the resulting black hole. The efficiency with which the energy is converted into radiation depends on the symmetric mass ratio ν of the merging objects. Neither the fraction of the total mass emitted nor its exact dependence on ν is known; Flanagan and Hughes [164] argue that E ∼ 0.03(4ν)²M. The frequency f is inversely proportional to M; indeed, for Schwarzschild black holes f = (2πM)⁻¹. Thus, the formula for the effective amplitude takes the form

$$h_{\rm eff} \simeq \frac{\alpha\,\nu M}{r},$$

where α is a number that depends on the (dimensionless) angular momentum a of the black hole and has a value between 0.7 (for a = 0, Schwarzschild black hole) and 0.4 (for a = 1, maximally spinning Kerr black hole). For stellar mass black holes at a distance of 200 Mpc the amplitude is:

For SMBHs, even at cosmological distances, the amplitude of quasi-normal-mode signals is quite large:

In the first case we have a pair of 10 M⊙ black holes inspiraling and merging to form a single black hole. In this case the waves come off at a frequency of around 500 Hz [cf. Equation (13)]. The initial ground-based network of detectors might be able to pick these waves up by matched filtering, especially when an inspiral event precedes the ringdown signal. A 100 M⊙ black hole plunging into a 10⁶ M⊙ black hole at a distance of 6.5 Gpc (z ≃ 1) gives out radiation at a frequency of about 15 mHz; note that here the radiation is redshifted from 30 mHz to 15 mHz. Such an event produces an amplitude just large enough to be detected by LISA. At the same distance, a pair of 10⁶ M⊙ SMBHs spiraling in and merging would produce a fantastic amplitude of 3 × 10⁻¹⁷, far above the LISA background noise. In this case, the signals would be given off at about 7.5 mHz and would be loud and clear to LISA. It will be possible not only to detect these events, but also to measure accurately the masses and spins of the objects before and after merger, and thereby to test the black hole no-hair theorem and confirm whether the result of the merger is indeed a black hole or some other exotic object (e.g., a boson star or a naked singularity).
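Taking the rough Schwarzschild estimate f = (2πM)⁻¹ at face value, and dividing by (1 + z) for cosmological redshift, the LISA frequencies quoted above can be checked in a few lines of Python. The SI constants are rounded, spin and mode-dependent corrections are ignored, the mass radiated during merger is neglected, and the function names are illustrative.

```python
import math

# Rounded SI constants (illustrative precision)
G = 6.674e-11       # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8         # speed of light, m/s
M_SUN = 1.989e30    # solar mass, kg


def mass_in_seconds(mass_msun: float) -> float:
    """Geometric time unit G*M/c**3, in seconds, for a mass in solar masses."""
    return G * mass_msun * M_SUN / C**3


def ringdown_frequency_hz(mass_msun: float, z: float = 0.0) -> float:
    """Observed frequency from the rough estimate f = 1/(2*pi*M), divided by (1 + z)."""
    f_source = 1.0 / (2.0 * math.pi * mass_in_seconds(mass_msun))
    return f_source / (1.0 + z)


# LISA examples from the text: final masses of ~1e6 and ~2e6 Msun at z ~ 1
for mass in (1e6, 2e6):
    print(f"M = {mass:.0e} Msun: source {1e3 * ringdown_frequency_hz(mass):.1f} mHz, "
          f"observed at z = 1: {1e3 * ringdown_frequency_hz(mass, 1.0):.1f} mHz")
```

For M ≈ 10⁶ M⊙ this gives about 32 mHz at the source and about 16 mHz at z = 1; for the merged pair (M ≈ 2 × 10⁶ M⊙) it gives about 8 mHz at z = 1, consistent with the order-of-magnitude figures in the text.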

3.6 Stochastic background

In addition to radiation from discrete sources, the universe should have a random gravitational wave field that results from a superposition of countless discrete systems and also from fundamental processes, such as the Big Bang. Observing any of these backgrounds would bring useful information, but the ultimate goal of detector development is the observation of the background radiation from the Big Bang. It is expected to be very weak, but it will come to us unhindered from as early as 10⁻³⁰ s, and it could illuminate the nature of the laws of physics at energies far higher than we can hope to reach in the laboratory.

It is usual to characterize the intensity of a random field of gravitational waves by its energy density as a function of frequency. Since the energy density of a plane wave is the same as its flux (when c = 1), we have from Equation (17)

But the wave field in this case is a random variable, so we must replace \(h^2\) by a statistical mean square amplitude per unit frequency (Fourier transform power per unit frequency) called \(S_{\rm gw}(f)\), so that the energy density per unit frequency is proportional to \(f^2 S_{\rm gw}(f)\). It is then conventional to talk about the energy density per unit logarithm of the frequency, which means multiplying by f. The result, after being careful about averaging over all directions of the waves and all independent polarization components, is [27, 359]

Finally, what is of most interest is the energy density as a fraction of the closure or critical cosmological density, given by the Hubble constant \(H_0\) as \({\rho _c} = 3H_0^2/8\pi\). The resulting ratio is called \(\Omega_{\rm gw}(f)\):

$$\Omega_{\rm gw}(f) = \frac{1}{\rho_c}\,\frac{d\rho_{\rm gw}}{d\ln f}.$$

The only tight constraint on \(\Omega_{\rm gw}\) from non-gravitational-wave astronomy is that it must be smaller than 10⁻⁵, in order not to disturb the agreement between the standard Big Bang model of nucleosynthesis (of helium and other light elements) and observation. If the universe contains this much gravitational radiation today, then at the time of nucleosynthesis the (blue-shifted) energy density of this radiation would have been comparable to that of the photons and the three neutrino species. Although the radiation would not have participated in the nuclear reactions, its extra energy density would have required that the expansion rate of the universe at that time be significantly faster, in order to evolve into the universe we see today. In turn, this faster expansion would have provided less time for the nuclear reactions to “freeze out”, altering the abundances from the values that are observed today [281, 346]. First-generation interferometers should be able to set direct limits on the cosmological background at around this level. Radiation in the lower-frequency LISA band, from galactic and extra-galactic binaries, is expected to be much smaller than this bound.
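For orientation, the critical density ρ_c = 3H₀²/8πG (written with G = 1 in the text) and the energy density corresponding to Ω_gw = 10⁻⁵ can be evaluated in SI units. The value H₀ = 70 km s⁻¹ Mpc⁻¹ used below is an assumed illustrative input, not a number taken from this review, and the function names are hypothetical.

```python
import math

G = 6.674e-11           # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8             # speed of light, m/s
MPC_IN_M = 3.0857e22    # metres per megaparsec


def critical_density(h0_km_s_mpc: float) -> float:
    """Critical density rho_c = 3*H0**2/(8*pi*G), in kg/m^3."""
    h0_si = h0_km_s_mpc * 1e3 / MPC_IN_M   # km/s/Mpc -> 1/s
    return 3.0 * h0_si**2 / (8.0 * math.pi * G)


def gw_energy_density(omega_gw: float, h0_km_s_mpc: float) -> float:
    """Energy density per logarithmic frequency interval (J/m^3) for a given Omega_gw."""
    return omega_gw * critical_density(h0_km_s_mpc) * C**2


H0_ASSUMED = 70.0   # km/s/Mpc, illustrative value not taken from the text
print(f"rho_c      ~ {critical_density(H0_ASSUMED):.1e} kg/m^3")
print(f"Omega=1e-5 ~ {gw_energy_density(1e-5, H0_ASSUMED):.1e} J/m^3")
```

With this assumed H₀ the sketch gives ρ_c ≈ 9 × 10⁻²⁷ kg m⁻³, so Ω_gw = 10⁻⁵ corresponds to roughly 8 × 10⁻¹⁵ J m⁻³ per logarithmic frequency interval.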

Random radiation is indistinguishable from instrumental noise in a single detector, at least for short observing times. If the random field is produced by an anisotropically-distributed set of astrophysical sources (the binaries in our galaxy, for example) then over a year, as the detector changes its orientation, the noise from this background should rise and fall in a systematic way, allowing it to be identified. But this is a rather crude way of detecting the radiation, and a better way is to perform a cross-correlation between two detectors, if available.

In cross-correlation, which amounts to multiplying the outputs and integrating, the random signal in one detector essentially acts as a template for the signal in the other detector. If they match, then there will be a stronger-than-expected correlation. Notice that they can only match well if the wavelength of the gravitational waves is longer than the separation between the detectors: otherwise time delays for waves reaching one detector before the other degrade the match. The outcome is not like standard matched filtering, however, since the “filter” of the first detector has as much noise superimposed on its template as the other detector. As a result, the amplitude SNR of the correlated field grows with observing time T only as \(T^{1/4}\), rather than the square-root growth that characterizes matched filtering [359].
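The cross-correlation idea can be illustrated with a toy simulation: two co-located, co-aligned detectors see the same Gaussian background plus independent unit-variance noise, and the average product of their outputs estimates the background variance. This is a hypothetical sketch that ignores detector separation, antenna patterns, and frequency dependence; the number of samples N stands in for the observing time T, and the helper `amplitude_snr` encodes the weak-signal scaling (power SNR ∝ √N, hence amplitude SNR ∝ \(N^{1/4}\)) rather than deriving it.

```python
import math
import random


def cross_correlate(n_samples: int, signal_var: float, seed: int = 0) -> float:
    """Average product of two detector outputs sharing a common Gaussian background.

    Each output is s + n_i, with s ~ N(0, signal_var) common to both detectors and
    n_i ~ N(0, 1) independent. The expectation of x1*x2 is signal_var.
    """
    rng = random.Random(seed)
    sigma_s = math.sqrt(signal_var)
    total = 0.0
    for _ in range(n_samples):
        s = rng.gauss(0.0, sigma_s)      # common stochastic background
        x1 = s + rng.gauss(0.0, 1.0)     # detector 1: background + own noise
        x2 = s + rng.gauss(0.0, 1.0)     # detector 2: background + own noise
        total += x1 * x2
    return total / n_samples


def amplitude_snr(signal_var: float, n_samples: int) -> float:
    """Weak-signal scaling: the estimator's noise falls as 1/sqrt(N), so the power
    SNR is about signal_var*sqrt(N) and the amplitude SNR grows as N**(1/4)."""
    return math.sqrt(signal_var * math.sqrt(n_samples))


print(cross_correlate(50_000, 0.25))   # scatters around 0.25
print(cross_correlate(50_000, 0.0))    # scatters around 0
print(amplitude_snr(0.01, 160_000) / amplitude_snr(0.01, 10_000))  # 16x longer -> 2x
```

Multiplying the observing time by 16 only doubles the amplitude SNR, which is the \(T^{1/4}\) behaviour described above.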

4 Gravitational Wave Detectors and Their Sensitivity

Detectors of gravitational waves generally divide into two classes: beam detectors and resonant mass detectors. In beam detectors, gravitational waves interact with a beam of electromagnetic radiation, which is monitored in some way to register the passage of the wave. In resonant mass detectors, the gravitational wave transfers energy to a massive body, from which the resultant oscillations are observed.

Both classes include a variety of systems. The principal beam detectors are the large ground-based laser interferometers currently operating in several locations around the globe, such as the LIGO system in the USA. The ESA-NASA LISA mission aims to put a laser interferometer into space to detect millihertz gravitational waves. But beam detectors need not involve interferometry: radio beams transponded to interplanetary spacecraft can carry the signature of a passing gravitational wave, and this method has been used to search for low-frequency gravitational waves. Radio astronomers have also monitored the radio beams of distant pulsars for many years for evidence of gravitational waves; new radio instrumentation is turning this into a powerful and promising method of looking for stochastic backgrounds and individual sources. Finally, at ultra-low frequencies, gravitational waves in the early universe may have left their imprint on the polarization of the cosmic microwave background.

Resonant mass detectors were the first kind of detector built in the laboratory to detect gravitational waves: Joseph Weber [387] built two cylindrical aluminum bar detectors and attempted to find correlated disturbances that might have been caused by a passing impulsive gravitational wave. His claimed detections led to the construction of many other bar detectors of comparable or better sensitivity, which never verified his claims. Some of those detectors were not developed further, but others had their sensitivities improved by making them cryogenic, and today there are two ultra-cryogenic detectors in operation (see Section 4.1).

In the following, we will examine the principal detection methods that hold promise today and in the near future.

4.1 Principles of the operation of resonant mass detectors

A typical “bar” detector consists of an aluminum cylinder with a length ℓ ∼ 3 m, a mass M ∼ 1000 kg, and a very narrow resonance at a frequency f between ∼500 Hz and 1.5 kHz. A short gravitational wave burst with h ∼ 10⁻²¹ will make the bar vibrate with an amplitude

$$\delta \ell \sim h\ell \sim 3 \times {10^{- 21}}\;{\rm{m}}{.}$$

To measure this, one must fight against three main sources of noise.

1. Thermal noise. The original Weber bar operated at room temperature, but the most advanced detectors today, Nautilus [51] and Auriga [227], are ultra-cryogenic, operating at T = 100 mK. At this temperature the root mean square (rms) amplitude of vibration is

$$\left\langle \delta \ell^2 \right\rangle_{\rm th}^{1/2} = \left(\frac{kT}{4\pi^2 M f^2}\right)^{1/2} \sim 6 \times 10^{-18}\;{\rm m}. \tag{39}$$

This is far larger than the gravitational wave amplitude expected from astrophysical sources. But if the material has a high Q (say, 10⁶) in its fundamental mode, then its thermal amplitude of vibration changes in a random walk with very small steps, taking a time Q/f ∼ 1000 s to change by the full amount. A gravitational wave burst, however, will cause a change in 1 ms. In 1 ms, thermal noise will have random-walked to an expected amplitude change that is \((1000\ {\rm s}/1\ {\rm ms})^{1/2} = Q^{1/2}\) times smaller, or (for these numbers)

$$\left\langle \delta \ell^2 \right\rangle_{{\rm th:}\;1\;{\rm ms}}^{1/2} = \left(\frac{kT}{4\pi^2 M f^2 Q}\right)^{1/2} \sim 6 \times 10^{-21}\;{\rm m}. \tag{40}$$

So ultra-cryogenic bars can approach the goal of detection near h = 10⁻²⁰ despite thermal noise.

2. Sensor noise. A transducer converts the bar’s mechanical energy into electrical energy, and an amplifier increases the electrical signal to record it. If sensing of the vibration could be done perfectly, then the detector would be broadband: both thermal impulses and gravitational wave forces are mechanical forces, and the ratio of their induced vibrations would be the same at all frequencies for a given applied impulsive force.

But sensing is not perfect: amplifiers introduce noise, and this makes small amplitudes harder to measure. The amplitudes of vibration are largest in the resonance band near f, so amplifier noise limits the detector sensitivity to gravitational wave frequencies near f. But if the noise is small, then the measurement bandwidth about f can be much larger than the resonant bandwidth f/Q. Typical measurement bandwidths are 10 Hz, about 10⁴ times larger than the resonant bandwidths, and 100 Hz is not out of the question [59].

3. Quantum noise. The zero-point vibrations of a bar with a frequency of 1 kHz are

$$\left\langle \delta \ell^2 \right\rangle_{\rm quant}^{1/2} = \left(\frac{\hbar}{4\pi M f}\right)^{1/2} \sim 4 \times 10^{-21}\;{\rm m}. \tag{41}$$

This is comparable to the thermal limit over 1 ms. So, as detectors improve their thermal limits, they run into the quantum limit, which must be breached before a signal at 10⁻²¹ can be seen with such a detector.
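The noise estimates in Equations (39)–(41) can be reproduced to order of magnitude with the bar parameters quoted above (T = 100 mK, M = 1000 kg, f = 1 kHz, Q = 10⁶). The sketch also takes the burst response to be δℓ ∼ hℓ with ℓ = 3 m and h = 10⁻²¹, an order-of-magnitude assumption, and evaluates the resonant bandwidth f/Q discussed under sensor noise. The function names are illustrative.

```python
import math

K_B = 1.380649e-23        # Boltzmann constant, J/K
HBAR = 1.054571817e-34    # reduced Planck constant, J s


def thermal_rms(T: float, M: float, f: float) -> float:
    """Eq. (39): full thermal rms amplitude (kT / (4 pi^2 M f^2))^(1/2), in metres."""
    return math.sqrt(K_B * T / (4 * math.pi**2 * M * f**2))


def thermal_rms_short(T: float, M: float, f: float, Q: float) -> float:
    """Eq. (40): thermal random-walk change over ~1/f, smaller by Q^(1/2)."""
    return thermal_rms(T, M, f) / math.sqrt(Q)


def quantum_rms(M: float, f: float) -> float:
    """Eq. (41): zero-point amplitude (hbar / (4 pi M f))^(1/2), in metres."""
    return math.sqrt(HBAR / (4 * math.pi * M * f))


# Parameters quoted in the text for an ultra-cryogenic bar
T, M, f, Q, ell, h = 0.1, 1000.0, 1000.0, 1e6, 3.0, 1e-21
signal = h * ell              # burst response, delta-ell ~ h * ell (assumed)
resonant_bw = f / Q           # resonant bandwidth, Hz
for name, value in [("signal", signal),
                    ("thermal", thermal_rms(T, M, f)),
                    ("thermal, 1 ms", thermal_rms_short(T, M, f, Q)),
                    ("quantum", quantum_rms(M, f))]:
    print(f"{name:>14}: {value:.1e} m")
print(f"resonant bandwidth {resonant_bw:.0e} Hz; 10 Hz is {10 / resonant_bw:.0e} times wider")
```

Evaluated at exactly 1 kHz, the zero-point estimate comes out near 3 × 10⁻²¹ m, the same order as the figure quoted in Equation (41).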

It is not impossible to do better than the quantum limit. The uncertainty principle sets the limit above only if a measurement tries to determine the excitation energy of the bar, or equivalently the phonon number. But one is not interested in the phonon number, except in so far as it allows one to determine the original gravitational wave amplitude. It is possible to define other observables that also respond to the gravitational wave and can be measured more accurately by squeezing their uncertainty at the expense of greater errors in their conjugate observable [110]. It is not yet clear whether squeezing will be viable for bar detectors, although squeezing is now an established technique in quantum optics and will soon be implemented in interferometric detectors (see below).