Abstract

For the class of (partially specified) internal risk factor models we establish strongly simplified supermodular ordering results in comparison to the case of general risk factor models. This allows us to derive meaningful and improved risk bounds for the joint portfolio in risk factor models with dependence information given by constrained specification sets for the copulas of the risk components and the systemic risk factor. The proof of our main comparison result is not standard. It is based on grid copula approximation of upper products of copulas and on the theory of mass transfers. An application to real market data shows considerable improvement over the standard method.

Similar content being viewed by others

1 Introduction

In order to reduce the standard upper risk bounds for a portfolio \(S=\sum _{i=1}^{d} X_{i}\) based on marginal information, a promising approach to include structural and dependence information are partially specified risk factor models, see Bernard et al. (2017b). In this approach, the risk vector X=(Xi)1≤i≤d is described by a factor model

with functions fi, systemic risk factor Z, and individual risk factors εi. It is assumed that the distributions Hi of (Xi,Z), 1≤i≤d, and thus also the marginal distributions Fi of Xi and G of Z are known. The joint distribution of (εi)1≤i≤d and Z, however, is not specified in contrast to the usual independence assumptions in factor models. It has been shown in Bernard et al. (2017b) that in the partially specified risk factor model a sharp upper bound in convex order of the joint portfolio is given by the conditionally comonotonic sum, i.e., it holds

for some U∼U(0,1) independent of Z. Furthermore, \(S_{Z}^{c}\) is an improvement over the comonotonic sum, i.e,

For a law-invariant convex risk measure \(\Psi \colon L^{1}(\Omega,\mathcal {A},P) \to \mathbb {R}\) that has the Fatou-property it holds that Ψ is consistent with respect to the convex order which yields that

assuming generally that Xi∈L1(P) are integrable and defined on a non-atomic probability space \((\Omega,\mathcal {A},P)\,,\) see (Bäuerle and Müller (2006), Theorem 4.3).

We assume that Z is real-valued. Then, the improved upper risk bound depends only on the marginals Fi, the distribution G of Z, and on the bivariate copulas \(C^{i}=C_{X_{i},Z}\) specifying the dependence structure of (Xi,Z). An interesting question is how the worst case dependence structure and the corresponding risk bounds depend on the specifications Ci, 1≤i≤d. More generally, for some subclasses \({\mathcal {S}}^{i}\subset \mathcal {C}_{2}\) of the class of two-dimensional copulas \(\mathcal {C}_{2}\,,\) the problem arises how to obtain (sharp) risk bounds given the information that \(C^{i}\in {\mathcal {S}}^{i}\,,\) 1≤i≤d. More precisely, for univariate distribution functions Fi,G, we aim to solve the constrained maximization problem

for some suitable dependence specification sets \({\mathcal {S}}^{i}\,.\) As an extension of (5), we also determine solutions of the constrained maximization problem

with dependence specification sets \({\mathcal {S}}^{i}\) and marginal specification sets \({\mathcal {F}}_{i}\subset {\mathcal {F}}^{1}\,,\) where \({\mathcal {F}}^{1}\) denotes the set of univariate distribution functions.

A main aim of this paper is to solve the constrained supermodular maximization problem

for \(F_{i}\in {\mathcal {F}}_{i}\) and \(G\in {\mathcal {F}}_{0}\,.\) A solution of this stronger maximization problem allows more general applications. In particular, it holds that

and thus a solution of (7) also yields a solution of (5).

Note that solutions of the maximization problems do not necessarily exist because both the convex ordering of the constrained sums and the supermodular ordering are partial orders on the underlying classes of distributions that do not form a lattice, see Müller and Scarsini (2006). In general, the existence of solutions also depends on the marginal constraints Fi and G. In this paper, we determine solutions of the maximization problems for large classes \({\mathcal {F}}_{i}\subset {\mathcal {F}}^{1}\) of marginal constraints under some specific dependence constraints \({\mathcal {S}}^{i}\,.\)

In Ansari and Rüschendorf (2016), some results on the supermodular maximization problem are given for normal and Kotz-type distributional models for the risk vector X. Some general supermodular ordering results for conditionally comonotonic random vectors are established in Ansari and Rüschendorf (2018). Therein, as a useful tool, the upper product \(\bigvee _{i=1}^{d} D^{i}\) of bivariate copulas \(D^{i}\in \mathcal {C}_{2}\) is introduced by

for u=(u1,…,ud)∈[0,1]d, where ∂2 denotes the partial derivative operator w.r.t. the second variable. (Note that we superscribe copulas with upper indices in this paper which should not be confused with exponents.) If the risk factor distribution G is continuous, then \(\bigvee _{i=1}^{d} C^{i}\) is the copula of the conditionally comonotonic risk vector \(\left (F_{X_{i}|Z}^{-1}(U)\right)_{1\leq i \leq d}\) with specifications \(C_{X_{i},Z}=C^{i}\,,\) see Ansari and Rüschendorf (2018), Proposition 2.4. Thus, ordering the dependencies of conditionally comonotonic random vectors is based on ordering the corresponding upper products. In particular, a strong dependence ordering condition on the copulas \(A^{i},B^{i}\in \mathcal {C}_{2}\) (based on the sign sequence ordering) allows us to infer inequalities of the form

see (Ansari and Rüschendorf 2018), Theorem 3.10. In this paper, we characterize upper product inequalities of the type

for copulas \(D^{2},\ldots D^{d},E\in \mathcal {C}_{2}\,,\) where M2 denotes the upper Fréchet copula in the bivariate case. These inequalities are based on simple lower orthant ordering conditions on the sets \({\mathcal {S}}^{i}\) such that solutions of the maximization problems (5) – (7) exist and can be determined.

The problem to find risk bounds for the Value-at-Risk (VaR) or other risk measures of a portfolio under the assumption of partial knowledge of the marginals and the dependence structure is a central problem in risk management. Bounds for the VaR (or the closely related distributional bounds), resp., for the Tail-Value-at-Risk (TVaR) based on some moment information have been studied extensively in the insurance literature by authors such as Kaas and Goovaerts (1986), Denuit et al. (1999), de Schepper and Heijnen (2010), Hürlimann (2002); Hürlimann (2008), Goovaerts et al. (2011), Bernard et al. (2017a); Bernard et al. (2018), Tian (2008), and Cornilly et al. (2018). Hürlimann (2002) derived analytical bounds for VaR and TVaR under knowledge of the mean, variance, skewness, and kurtosis.

The more recent literature has focused on the problem of finding risk bounds under the assumption that all marginal distributions are known but the dependence structure of the portfolio is either unknown or only partially known. Risk bounds with pure marginal information were intensively studied but were often found to be too wide in order to be useful in practice (see Embrechts and Puccetti (2006); Embrechts et al. (2013); Embrechts et al. (2014)). Related aggregation-robustness and model uncertainty for risk measures are also investigated in Embrechts et al. (2015). Several approaches to add some dependence information to marginal information have been discussed in ample literature (see Puccetti and Rüschendorf (2012a); Puccetti and Rüschendorf (2012b); Puccetti and Rüschendorf (2013); Bernard and Vanduffel (2015), Bernard et al. (2017a); Bernard et al. (2017b), Bignozzi et al. (2015); Rüschendorf and Witting (2017); Puccetti et al. (2017)). For some surveys on these developments, see Rueschendorf (2017a, b).

Apparently, a relevant dependence information and structural information leading to a considerable reduction of the risk bounds is given by the partially specified risk factor model as introduced in Bernard et al. (2017b). In this paper, we show that for a large relevant class of partially specified risk factor models–the internal risk factor models–more simple sufficient conditions for the supermodular ordering of the upper products–and thus for the conditionally comonotonic risk vectors–can be obtained by simple lower orthant ordering conditions on the dependence specifications. These simplified conditions allow easy applications to ordering results for risk bounds with subset specification sets \({\mathcal {S}}^{i}\) described above. We give an illuminating application to real market data which clearly shows the potential usefulness of the comparison results. For some further details, we refer to the dissertation of Ansari (2019).

2 Internal risk factor models

A simplified supermodular ordering result for conditionally comonotonic random vectors can be obtained in the case that the risk factor Z is itself a component of these risk vectors. As a slight generalization, we define the notion of an internal risk factor model.

Definition 1

(Internal risk factor model)

A (partially specified) internal risk factor model with internal risk factorZ is a (partially specified) risk factor model (Xi)1≤i≤d, Xi=fi(Z,εi), such that for some j∈{1,…,d} and a non-decreasing function gj holds Xj=gj(Z).

Without loss of generality, the distribution function of the internal risk factor can be chosen continuous, i.e., \(Z\sim G\in {\mathcal {F}}_{c}^{1}\,.\) Thus, the not necessarily uniquely determined copula of (Xj,Z) can be chosen as the upper Fréchet copula M2. This means that Xj and Z are comonotonic and Z can be considered as a component of the risk vector X which explains the denomination of Z as an internal risk factor.

In partially specified risk factor models, the dependence structure of the worst case conditionally comonotonic vector is represented by the upper product of the dependence specifications if \(G\in {\mathcal {F}}_{c}^{1}\,,\) i.e.,

Thus, assuming w.l.o.g. that j=1, our aim is to derive supermodular ordering results for the upper product M2∨D2∨⋯∨Dd with respect to the dependence specifications Di.

For a function \(f\colon \mathbb {R}^{d}\to \mathbb {R}\,,\) let \(\triangle _{i}^{\varepsilon } f(x):=f(x+\varepsilon e_{i})-g(x)\) be the difference operator, where ε>0 and ei denotes the unit vector w.r.t. the canonical base in \(\mathbb {R}^{d}\,.\) Then, f is said to be supermodular, resp., directionally convex if \(\triangle _{i}^{\varepsilon _{i}} \triangle _{j}^{\varepsilon _{j}} f\geq 0\) for all 1≤i<j≤d, resp., 1≤i≤j≤d. For d-dimensional random vectors ξ,ξ′, the supermodular ordering ξ≤smξ′, resp., the directionally convex ordering ξ≤dcxξ′ is defined via \({\mathbb {E}} f(\xi) \leq {\mathbb {E}} f(\xi ^{\prime })\) for all supermodular, resp., directionally convex functions f for which the expectations exist. The lower, resp., upper orthant ordering ξ≤loξ′, resp., ξ≤uoξ′ is defined by the pointwise comparison of the corresponding distribution, resp., survival functions, i.e., \(F_{\xi }(x)\leq F_{\xi ^{\prime }}(x)\,,\) resp., \(\overline {F}_{\xi }(x)\leq \overline {F}_{\xi ^{\prime }}(x)\) for all \(x\in \mathbb {R}^{d}\,.\) Remember that the convex ordering ζ≤cxζ′ for real-valued random variables ζ,ζ′ is defined via \({\mathbb {E}} \varphi (\zeta)\leq {\mathbb {E}} \varphi (\zeta ^{\prime })\) for all convex functions φ for which the expectation exists. Note that these orderings depend only on the distributions and, thus, are also defined for the corresponding distribution functions. For an overview of stochastic orderings, see Müller and Stoyan (2002), Shaked and Shantikumar (2007), and Rüschendorf (2013).

The following theorem is a main result of this paper. It characterizes the upper product inequality (9) concerning partially specified internal risk factor models.

Theorem 1

(Supermodular ordering of upper products)

Let \(D^{2}\ldots,D^{d},E\in \mathcal {C}_{2}\,.\) Then, the following statements are equivalent:

- (i)

Di≤loE for all 2≤i≤d.

- (ii)

\(M^{2}\vee D^{2}\vee \cdots \vee D^{d} \leq _{lo} M^{2}\vee \underbrace {E \vee \cdots \vee E}_{(d-1)\text {-times}}\,.\)

- (iii)

\(M^{2}\vee D^{2}\vee \cdots \vee D^{d} \leq _{sm} M^{2}\vee \underbrace {E \vee \cdots \vee E}_{(d-1)\text {-times}}\,.\)

The proof of the equivalence of (i) and (ii) is not difficult, whereas the equivalence w.r.t. the supermodular ordering in (iii) which we derive in Section 3 requires some effort. Its proof is based on the mass transfer theory for discrete approximations of the upper products and, further, on a conditioning argument using extensions of the standard orderings ≤lo, ≤uo, ≤sm as well as of the comonotonicity notion to the frame of signed measures.

Proof

(Proof of ‘(i) ⇔ (ii)’:) Assume that Di≤loE. Then, for u=(u1,…,ud)∈[0,1]d, we obtain from the definition of the upper product that

using that \(\phantom {\dot {i}\!}\partial _{2} M^{2}(u_{1},t)={\mathbb{1}}_{\{u_{1}\geq t\}}\) almost surely.

The reverse direction follows from the closures of the upper product (see Ansari and Rüschendorf (2018), Proposition 2.4.(iv)) and of the lower orthant ordering under marginalization.

The proof of ‘(i) ⇔ (iii)’ is given in Section 3. □

As a consequence of the above supermodular ordering theorem for upper products, we obtain improved bounds in partially specified internal risk factor models in comparison to the standard bounds based on marginal information.

Theorem 2

(Improved bounds in internal risk factor models)

For \(F_{j}\in {\mathcal {F}}^{1}\,,\) let Xj∼Fj, 1≤j≤d, be real-valued random variables such that \(C^{i}=C_{X_{i},X_{1}}\leq _{lo}E\) for all 2≤i≤d. Then, for Y1,…,Yd with Xj=dYj for all 1≤j≤d and \(C_{Y_{i},Y_{1}}=E\) for all 2≤i≤d holds

for U∼U(0,1) independent of Y1. In particular, this implies

Proof

Without loss of generality, let Xi∼U(0,1). Then, (X1,…,Xd) follows a partially specified internal risk factor model with internal risk factor Z=X1 and dependence constraints \(C_{X_{i},Z}=C^{i}\,,\) 2≤i≤d. We obtain

where the first inequality follows from Ansari and Rüschendorf (2018), Proposition 2.4.(i) and the second inequality holds due to Theorem 1. Thus, (12) follows from the representation in (10). The statement in (13) is a consequence of (8) and (12). □

Remark 1

-

(a)

The upper bound in (12) is comonotonic conditionally on Y1. Further, the vector \(\left (F_{Y_{2}|Y_{1}}^{-1}(U),\ldots,F_{Y_{d}|Y_{1}}^{-1}(U)\right)\) is comonotonic because all copulas \(C_{Y_{i},Y_{1}}=E\) coincide, 2≤i≤d, cf. Ansari and Rüschendorf (2018), Proposition 2.4(v).

-

(b)

For d=2, (12) reduces to (X1,X2)≤sm(Y1,Y2) and the upper product in Theorem 1 simplifies to M2∨D2=(D2)T, resp., M2∨E=ET, where the copula CT is the transposed copula of \(C\in \mathcal {C}_{2}\,,\) i.e., CT(u,v)=C(v,u). In this case, the statements of Theorems 1 and 2 are known from the literature, see, e.g., (Müller (1997), Theorem 2.7). Further, for d>2, the result in Theorem 2 cannot be obtained by a simple supermodular mixing argument because, in the general case, a supermodular ordering of all conditional distributions is not possible, i.e., there exists a z outside a null set such that

$$(X_{1},\ldots,X_{d})|X_{1}=z~\not \leq_{sm} ~(Y_{1},F_{Y_{2}|Y_{1}}^{-1}(U),\ldots,F_{Y_{d}|Y_{1}}^{-1}(U))|Y_{1}=z\,,$$unless \(C_{X_{i},X_{1}}=E\) for all i, see (Ansari (2019), Proposition 3.18).

-

(c)

If (Xi,X1) are negatively lower orthant dependent for all 2≤i≤d, i.e., \(C_{X_{i},X_{1}}(u,v)\leq \Pi ^{2}(u,v)=uv\) for all (u,v)∈[0,1]2, then Theorem 2 simplifies to

$$\begin{aligned} (X_{1},\ldots,X_{d})&\leq_{sm} \left(X_{1},F_{X_{2}}^{-1}(U),\ldots,F_{X_{d}}^{-1}(U)\right)\\ \text{and}~~~~\sum_{i=1}^{d} X_{i} &\leq_{cx} X_{1}+\sum\limits_{i=2}^{d} F_{X_{i}}^{-1}(U)\,, \end{aligned} $$where U∼U(0,1) is independent of X1.

-

(d)

For \(G\in {\mathcal {F}}^{1}_{c},\) the right side in (12), resp., (13) solves the constrained maximization problem (7), resp., (5) for the dependence specification sets

$$\begin{array}{@{}rcl@{}} {\mathcal{S}}^{1}&=&\{M^{2}\}\,,~~~\text{and}\\ {\mathcal{S}}^{i}&=&\{C\in \mathcal{C}^{2}\,|\,C\leq_{lo} E\}\,,~~2\leq i \leq d\,. \end{array} $$(14)

As a consequence of Theorem 2, we also obtain improved upper bounds under some correlation information. For a bivariate random vector \((V_{1},V_{2})\sim C\in \mathcal {C}_{2}\,,\) denote Spearman’s ρ, resp., Kendall’s τ of (V1,V2) by ρS(V1,V2)=ρS(C), resp., τ(V1,V2)=τ(C).

For r∈[−1,1], define \(C^{r}(u,v):=\sup \{C(u,v)~|~C\in \mathcal {C}_{2}\,,~\rho _{S}(C)=r\}\,,\) (u,v)∈[0,1]2. Then, Cr is a bivariate copula and is given by

where \(\phi (a,b)=\frac 1 6 \left [(9b+3\sqrt {9b^{2}-3a^{6}})^{1/3}+(9b-3\sqrt {9b^{2}-3a^{6}})^{1/3}\right ]\,,\) see Nelsen et al. (2001) [Theorem 4].

For t∈[−1,1], define \(D^{t}(u,v):=\sup \{C(u,v)~|~C\in \mathcal {C}_{2}\,,~\tau (C)=t\}\,,\) (u,v)∈[0,1]2. Then, Dt is a bivariate copula and given by

see Nelsen et al. (2001) [Theorem 2].

The risk bounds can be improved under correlation bounds as follows.

Corollary 1

(Improved bounds based on correlations)

Let X1,…,Xd be real-valued random variables such that either

- (i)

ρS(X1,Xi)<0.5 for all 2≤i≤d, or

- (ii)

τ(X1,Xi)<0 for all 2≤i≤d.

Let r:= max2≤i≤d{ρS(X1,Xi)}, resp., t:= max2≤i≤d{τ(X1,Xi)}. Then, for Y1,…,Yd with Yj=dXj, 1≤j≤d, and \(C_{Y_{i},Y_{1}}=C^{r}\,,\) resp., \(C_{Y_{i},Y_{1}}=D^{t}\) for all 2≤i≤d, it holds true that

where U∼U(0,1) is independent of Y1.

Proof

The result follows from Theorem 2 using the monotonicity of the distributional bound Cr in r, resp., Dt in t w.r.t. the lower orthant ordering, see Nelsen et al. (2001) [Corollary 5 (a),(b),(e), resp., Corollary 3 (a),(b),(e)]. □

Remark 2

For r∈(−1,0.5) and t∈(−1,0), it holds that ρS(Cr)>r and τ(Dt)>t, see Nelsen et al. (2001) [Corollary 3(h), resp., 5(h)]. Thus, for \(F_{i}\in {\mathcal {F}}^{1},\) 1≤i≤d,\(G\in {\mathcal {F}}_{c}^{1}\) and

2≤i≤d, only an improved upper bound in supermodular ordering for the constrained risk vectors but not a solution of maximization problem (5), resp., (7) can be achieved.

To also allow a comparison of the univariate marginal distributions, remember that a bivariate copula D is conditionally increasing (CI) if there exists a bivariate random vector (U1,U2)∼D such that U1|U2=u2 is stochastically increasing in u2 and U2|U1=u1 is stochastically increasing in u1. Equivalently, ∂2D(u,v) is almost surely decreasing in v for all u∈[0,1] and ∂1D(u,v) is almost surely decreasing in u for all v∈[0,1].

If the upper bound E in Theorem 2 is conditionally increasing, then the case of increasing marginals in convex order can also be handled.

Theorem 3

(Improved bounds in ≤dcx-order) Let X1,…,Xd be real-valued random variables with \(C_{X_{i},X_{1}}\leq _{lo}E\) for all 2≤i≤d. Assume that E is conditionally increasing. Then, for Y1,…,Yd with Xj≤cxYj for all 1≤j≤d and \(C_{Y_{i},Y_{1}}=E\) for all 2≤i≤d holds

where U∼U(0,1) is independent of Y1. This implies

Proof

Let Y1′,…,Yd′ with Xj=dYj′ for all 1≤j≤d and \(C_{Y_{i}',Y_{1}'}=E\) for all 2≤i≤d. Then, we obtain from (12) that

for V∼U(0,1) independent of Y1′. Since both \(\left (Y_{1}',F_{Y_{2}',Y_{1}'}^{-1}(V),\ldots,F_{Y_{d}',Y_{1}'}^{-1}(V)\right)\) and \(\left (Y_{1},F_{Y_{2}|Y_{1}}^{-1}(U),\ldots,F_{Y_{d}|Y_{1}}^{-1}(U)\right)\) have the same copula \(M^{2}\vee \underbrace {E\vee \cdots \vee E}_{(d-1)\text {-times}}\,,\) which is easily shown to be CI, the statement follows from Müller and Scarsini (2001), Theorem 4.5 using Yi′≤cxYi. □

Remark 3

For \(F_{1},\ldots,F_{d}\in {\mathcal {F}}^{1},\) consider the sets \({\mathcal {F}}_{i}':=\{F\in {\mathcal {F}}^{1}|F\leq _{cx} F_{i}\}\,.\) Let the sets \({\mathcal {S}}^{i}\) of dependence specifications be given as in (14). If \(E=C_{Y_{i},Y_{1}}\) is CI and Yi∼Fi, then the upper bound in (16) solves maximization problem (6) with marginal specification sets \({\mathcal {F}}_{0}={\mathcal {F}}_{c}^{1}\) and \({\mathcal {F}}_{i}={\mathcal {F}}_{i}'\) for 1≤i≤d.

For a generalization of Theorem 2, we need an extension of (8) as follows.

Lemma 1

Let \(X=\left (X_{k}^{i}\right)_{1\leq i \leq d, 1\leq k \leq m}\) and \(Y=\left (Y_{k}^{i}\right)_{1\leq i \leq d, 1\leq k \leq m}\) be (d×m)-matrices of real random variables with independent columns.

If \(\left (X_{k}^{i}\right)_{1\leq i \leq d}\leq _{sm} \left (Y_{k}^{i}\right)_{1\leq i \leq d}\) for all 1≤k≤m, then it holds true that

for all increasing convex functions ψi and increasing functions \(f_{k}^{i}\,.\)

Proof

By straightforward calculations, it can be shown that the function \(h\colon (\mathbb {R}^{m})^{d} \to \mathbb {R}\) given by

is supermodular for all increasing convex functions φ. Then, the invariance under increasing transformations and the concatenation property of the supermodular order (see, e.g., Shaked and Shantikumar (2007) [Theorem 9.A.9(a),(b)]) imply that

where ≤icx denotes the increasing convex order. Since it holds for 1≤i≤d that \(\sum _{k=1}^{m} f_{k}^{i}\left (X_{k}^{i}\right) {\stackrel {\mathrm {d}}=} \sum _{k=1}^{m} f_{k}^{i}\left (Y_{k}^{i}\right)\,,\) we obtain

Hence, the assertion follows from Shaked and Shantikumar (2007) [Theorem 4.A.35]. □

The application to improved portfolio TVaR bounds in Section 4 is based on the following generalization of Theorem 2.

Theorem 4

(Concatenation of upper bounds)

For \(F_{i}^{k}\in {\mathcal {F}}^{1}\,,\) let \(\left (X_{1}^{k},\ldots,X_{d}^{k}\right)\,,\) 1≤k≤m, be independent random vectors with \(X_{i}^{k}\sim F_{i}^{k}\,.\) Assume that \(C_{X_{i}^{k},X_{1}^{k}}\leq _{lo} E^{k}\) for \(E^{k}\in \mathcal {C}_{2}\) for all 2≤i≤d, 1≤k≤m. Then, for independent vectors \(\left (Y_{1}^{k},\ldots,Y_{d}^{k}\right)\) with \(Y_{i}^{k}{\stackrel {\mathrm {d}}=} X_{i}^{k}\) and \(C_{Y_{i}^{k},Y_{1}^{k}}=E^{k}\) for all 2≤i≤d, 1≤k≤m holds

where U1,…,Um∼U(0,1)are i.i.d. and independent of \(Y_{1}^{k}\) for all k. This implies

for all increasing convex functions φ1,…,φd.

Proof

Statement (17) follows from Theorem 2 with the concatenation property of the supermodular ordering. Statement (18) is a consequence of Theorem 2 and Lemma 1. □

Remark 4

Under the assumptions of Theorem 4, the right hand side in (18) solves maximization problem (5) for

where \(F_{i}=F_{\varphi _{i}\left (\sum _{k} X_{i}^{k}\right)}\,,\) 1≤i≤d and \(G\in {\mathcal {F}}_{c}^{1}\,.\)

3 Proof of the supermodular ordering in Theorem 1

In this section, we prove the equivalence of (i) and (iii) in Theorem 1. This requires some preparations. We approximate the upper products by discrete upper products based on grid copula approximations. Then, we show that these discrete upper products can be supermodularly ordered using a conditioning argument and mass transfer theory from Müller (2013). However, requires an extension of the orderings ≤lo, ≤uo, ≤sm, and of comonotonicity to the frame of signed measures.

3.1 Extensions of ≤lo, ≤uo, and ≤sm to signed measures

For a Borel-measurable subset \(\Xi \subset \mathbb {R}^{d}\,,\) denote by \({\mathcal {B}}(\Xi)\) the Borel- σ-algebra on Ξ. Denote by \({\mathcal {M}}_{d}^{1}\) the set of probability measures on \({\mathcal {B}}(\Xi)\,.\) A signed measure on \({\mathcal {B}}(\Xi)\) is a σ-additive mapping \(\mu \colon {\mathcal {B}}(\Xi) \to \mathbb {R}\) such that μ(∅)=0. Let \({\mathbb {M}}^{0}_{d}={\mathbb {M}}_{d}^{0}(\Xi)\,,\) resp., \({\mathbb {M}}^{1}_{d}={\mathbb {M}}_{d}^{1}(\Xi)\) be the set of all signed measures μ on \({\mathcal {B}}(\Xi)\) with μ(Ξ)=0, resp., μ(Ξ)=1 and finite variation norm ∥μ∥=μ+(Ξ)+μ−(Ξ)<∞, where μ+,μ− are the unique measures obtained from the Hahn–Jordan decomposition of μ=μ+−μ−. Then, the definition of the orderings ≤lo, ≤uo, and ≤sm can be extended to signed distributions using this decomposition.

Definition 2

Let \(P,Q\in {\mathbb {M}}^{1}_{d}\) be signed measures. Then, define

- (i)

the lower orthant orderP≤loQ if P((−∞,x])≤Q((−∞,x]) holds for all \(x\in \mathbb {R}^{d}\,,\)

- (ii)

the upper orthant orderP≤uoQ if P((x,∞))≤Q((x,∞)) holds for all \(x\in \mathbb {R}^{d}\,,\)

- (iii)

the supermodular orderP≤smQ if \(\int f(x)\, {\mathrm {d}} P(x)\leq \int f(x) \, {\mathrm {d}} Q(x)\) holds for all supermodular integrable functions f.

We generalize the concept of comonotonicity to signed measures as follows.

3.2 Quasi-comonotonicity

We say that a probability distribution Q, resp., a distribution function F is comonotonic if there exists a comonotonic random vector ξ such that ξ∼Q, resp., Fξ=F.

For a signed measure \(P\in {\mathbb {M}}_{d}^{1}\,,\) we define the associated measure generating functionF=FP by F(x)=P((−∞,x]) and its univariate marginal measure generating functionsFi by \(F_{i}(x_{i})=P(\mathbb {R}\times \cdots \mathbb {R}\times (-\infty,x_{i}]\times \mathbb {R}\times \cdots \times \mathbb {R})\) for \(x=(x_{1},\ldots,x_{d})\in \mathbb {R}^{d}\) and 1≤i≤d. We define the notion of quasi-comonotonicity as follows.

Definition 3

(Quasi-comonotonicity) We denote P, resp., F as quasi-comonotonic if \(F(x)=\min \limits _{1\leq i \leq d}\left \{F_{i}(x_{i})\right \}\) for all \(x=(x_{1},\ldots,x_{d})\in \mathbb {R}^{d}.\)

Obviously, if \(P\in {\mathcal {M}}^{1}_{d}\,,\) then the quasi-comonotonicity and comonotonicity of P are equivalent.

The following lemma characterizes the lower orthant ordering of (quasi-) comonotonic distributions in terms of the upper orthant order.

Lemma 2

Let \(P\in {\mathbb {M}}_{d}^{1}\) be a signed distribution with univariate marginal distribution functions Fi, 1≤i≤d. Let \(Q\in {\mathcal {M}}_{d}^{1}\) be a probability distribution. Assume that Fi(t)≤1 for all \(t\in \mathbb {R}\,,\) 1≤i≤d. If P is quasi-comonotonic and Q is comonotonic, then it holds that

Proof

Let \(A_{i}=\{(y_{1},\ldots,y_{d})\in \mathbb {R}^{d}\,|\, y_{i}\in (x_{i},\infty ]\},\phantom {\dot {i}\!}\) 1≤i≤d, and let \(a_{j}:=F_{i_{j}}(x_{i_{j}})\phantom {\dot {i}\!}\) for i1,…,id∈{1,…,d} such that a1≥…≥ad. Then, the survival function \(\overline {F}\) corresponding to F is calculated by

where the fourth equality holds true because P is quasi-comonotonic, Fi≤1 and Fi(∞)=1 for all i. The fifth equality follows since there are \(\binom {k-1}{k-j}\) subsets of {1,…,k} with k−j+1 elements such that k is the maximum element. The sixth equality holds due to the symmetry of the binomial coefficient.

Let G be the distribution function corresponding to Q with univariate margins Gi. Then, it holds analogously that \(\overline {G}(x)=Q((x,\infty))=1-\max _{i}\left \{G_{i}(x_{i})\right \}\) for \(x=(x_{1},\ldots,x_{d})\in \mathbb {R}^{d}\,.\) We obtain that

where we use for the second equivalence that Fi,Gi≤1 and Fi(∞)=Gi(∞)=1 for all 1≤i≤d. The third equivalence holds true because Gi≥0 and Gi(−∞)=0 for all i. □

3.3 Grid copula approximation

In this subsection, we consider the approximation of the upper product by grid copulas. In the proof of the supermodular ordering in Theorem 1, we make essential use of the property that this approximation is done by distributions with finite support.

For \(n\in {\mathbb {N}}\) and d≥1, denote by

the (extended) uniform unit n-grid of dimension d with edge length \(\tfrac 1 n\,.\)

The following notion of an n-grid d-copula is related to an d-subcopula with domain \({\mathbb {G}}_{n}^{d}\,,\) see, e.g., Nelsen (2006), Definition 2.10.5. For our purpose, we also need a signed version. Denote by ⌊·⌋ the componentwise floor function.

Definition 4

(Grid copula) For \(d\in {\mathbb {N}}\,,\) a (signed) n-grid d-copula (briefly grid copula) \(D\colon [0,1]^{d} \to \mathbb {R}\) is the (signed) measure generating function of a (signed) measure \(\mu \in {\mathbb {M}}_{d}^{1}({\mathbb {G}}_{n,0}^{d})\) with uniform univariate margins, i.e., it holds that

- (i)

\(D(u)=D\left (\frac {\lfloor n u \rfloor }{n}\right)=\mu \left (\left [0,\frac {\lfloor n u \rfloor }{n}\right ]\right)\) for all u∈[0,1]d, and

- (ii)

for all i=1,…,d holds \(D(u)=\tfrac k n\) for all k=0,…,n, if \(u_{i}=\tfrac k n\) and uj=1 for all j≠i.

Denote by \(\mathcal {C}_{d,n}\) (resp., \(\in \mathcal {C}_{2,n}^{s}\)) the set of all (signed) n-grid d-copulas.

An \(\tfrac 1 n\)-scaled doubly stochastic matrix or, if the dimension of the matrix is clear, a mass matrix is defined as an n×n-matrix with non-negative entries and row, resp., column sums equal to \(\tfrac 1 n\,.\) By an signed \(\tfrac 1 n\)-scaled doubly stochastic matrix or also signed mass matrix, we mean an \(\tfrac 1 n\)-scaled doubly stochastic matrix where negative entries are also allowed.

Obviously, there is a one-to-one correspondence between the set of (signed) n-grid 2-copulas and the set of (signed) \(\tfrac 1 n\)-scaled doubly stochastic matrices.

For a bivariate (signed) n-grid copula \(E\in \mathcal {C}_{2,n}\) (\(\in \mathcal {C}_{2,n}^{s}\)), the associated (signed) probability mass function e is defined by

where \(\Delta _{n}^{i}\) (distinct from \(\triangle _{i}^{\varepsilon _{i}}\)) denotes the difference operator of length \(\tfrac 1 n\) with respect to the i-th variable, i.e.,

for \(u\in {\mathbb {G}}_{n,0}^{d}\) and the i-th unit vector ei. Further, define its associated (signed) mass matrix (ekl)1≤k,l≤n by

For every d-copula \(D\in \mathcal {C}_{d}\,,\) denote by \({\mathbb {G}}_{n}(D)\) its canonical n-grid d-copula given by

Define the upper product \(\bigvee \colon (\mathcal {C}_{2,n})^{d} \to \mathcal {C}_{d,n}\) for grid copulas \(D_{n}^{1},\ldots,D_{n}^{d}\in \mathcal {C}_{2,n}\) by

for (u1,…,ud)∈[0,1]d. A version for signed grid copulas is defined analogously.

The following result gives a sufficient supermodular ordering criterion for the upper product based on the approximations by grid copulas, see Ansari and Rüschendorf (2018), Proposition 3.7.

Proposition 1

Let \(D^{i},E^{i}\in \mathcal {C}_{2}\) be bivariate copulas for 1≤i≤d. Then, it holds true that

We make use of the above ordering criterion because the approximation is done by distributions with finite support. But the supermodular ordering of distributions with finite support enjoys a dual characterization by mass transfers as follows.

3.4 Mass transfer theory

This section and the notation herein is based on the mass transfer theory as developed in Müller (2013).

For signed measures \(P,Q\in {\mathbb {M}}^{1}_{d}\) with finite support, denote the signed measure Q−P a transfer from P to Q. To indicate this transfer, write

where \((Q-P)^{-}=\sum _{i=1}^{n} \alpha _{i} \delta _{x_{i}}\) and \((Q-P)^{+}=\sum _{i=1}^{m} \beta _{i} \delta _{y_{i}}\) for αi,βj>0 and \(x_{i},y_{j}\in \mathbb {R}^{d}\,,\) 1≤i≤n, 1≤j≤m. A reverse transfer from P to Q is a transfer from Q to P.

Since \(Q=P+(Q-P)=P-\sum _{i=1}^{n} \alpha _{i} \delta _{x_{i}} + \sum _{i=1}^{m} \beta _{i} \delta _{y_{i}}\,,\) the mapping in (21) illustrates the mass that is transferred from P to Q. By definition, it holds that \(Q-P\in {\mathbb {M}}_{d}^{0}\,.\) Thus, mass is only shifted and, in total, neither created nor lost.

For a set \(M\subset {\mathbb {M}}^{0}_{d}\) of transfers, one is interested in the class \({\mathcal {F}}\) of continuous functions \(f\colon S\to \mathbb {R}\) such that

whenever μ∈M, where \(\mu :=\sum _{j=1}^{m} \beta _{j} \delta _{y_{j}}-\sum _{i=1}^{n} \alpha _{i} \delta _{x_{i}}\,,\)αi,βj>0. Then, \({\mathcal {F}}\) is said to be induced from M.

We focus on the set of Δ-monotone, resp., Δ-antitone, resp., supermodular transfers. These sets induce the classes \({\mathcal {F}}_{\Delta }\) of Δ-monotone, resp., \({\mathcal {F}}_{\Delta }^{-}\) of Δ-antitone, resp., \({\mathcal {F}}_{sm}\) of supermodular functions on S.

Definition 5

Let η>0. Let x≤y with strict inequality xi<yi for k indices i1,…,ik for some k∈{1,…,d}. Denote by \({\mathcal {V}}_{o}(x,y)\,,\) resp., \({\mathcal {V}}_{e}(x,y)\) the set of all vertices z of the k-dimensional hyperbox [x,y] such that the number of components with zi=xi, i∈{i1,…,ik} is odd, resp., even.

- (i)

A transfer indicated by

$$\begin{array}{@{}rcl@{}} \eta\left(\sum_{z\in {\mathcal{V}}_{0}(x,y)} \delta_{z} \right)\to \eta\left(\sum_{z\in {\mathcal{V}}_{e}(x,y)} \delta_{z} \right) \end{array} $$is called a (k-dimensional) Δ-monotone transfer.

- (ii)

A transfer indicated by

$$\begin{aligned} \eta \left(\sum_{z\in {\mathcal{V}}_{o}(x,y)} \delta_{z} \right) &\to \eta\left(\sum_{z\in {\mathcal{V}}_{e}(x,y)} \delta_{z}\right)~~~\text{if }k \text{ is even, and}\\ \eta \left(\sum_{z\in {\mathcal{V}}_{e}(x,y)} \delta_{z} \right) &\to \eta\left(\sum_{z\in {\mathcal{V}}_{o}(x,y)} \delta_{z}\right)~~~\text{if }k \text{ is odd} \end{aligned} $$is called a (k-dimensional) Δ-antitone transfer.

- (iii)

For \(v,w\in \mathbb {R}^{d}\,,\) a transfer indicated by

$$\eta(\delta_{v} + \delta_{w}) \to \eta (\delta_{v\wedge w}+\delta_{v\vee w})$$is called a supermodular transfer, where ∧, resp., ∨ denotes the component-wise minimum, resp., maximum.

The characterizations of the orderings ≤uo, ≤lo, resp., ≤sm by mass transfers due to (Müller (2013), Theorems 2.5.7 and 2.5.4) also hold in the case of signed measures because the proof makes only a statement on transfers, i.e., on the difference of measures.

Proposition 2

For signed measures \(P,Q\in {\mathbb {M}}^{1}_{d}\) with finite support holds:

- (i)

P≤uoQ if and only if Q can be obtained from P by a finite number of Δ-monotone transfers.

- (ii)

P≤loQ if and only if Q can be obtained from P by a finite number of Δ-antitone transfers.

- (iii)

P≤smQ if and only if Q can be obtained from P by a finite number of supermodular transfers.

Remark 5

From Definition 5, we obtain that exactly the one-dimensional Δ-monotone, resp., Δ-antitone transfers affect the univariate marginal distributions. Hence, for measures \(P,Q\in {\mathcal {M}}^{1}_{d}(\Xi)\) with equal univariate distributions, i.e., \(\phantom {\dot {i}\!}P^{\pi _{i}}=Q^{\pi _{i}}\,,\)πi the i-th projection, for all 1≤i≤d, holds that P≤uoQ, resp., P≤loQ if and only if Q can be obtained from P by a finite number of at least 2-dimensional Δ-monotone, resp., Δ-antitone transfers. But note that also the one-dimensional Δ-monotone, resp., Δ-antitone transfers can affect the copula, resp., dependence structure.

Now, we are able to give the proof of the main ordering result of this paper.

3.5 Proof of ‘(i) ⇔ (iii)’ in Theorem 1

Assume that (iii) holds. Then, the closures of the upper product and the supermodular ordering under marginalization imply (Di)T=M2∨Di≤smM2∨E=ET. But this means that Di≤loE.

For the reverse direction, assume that Di≤loE for all 2≤i≤d. Consider the discretized grid copulas \(D_{n}^{i}:={\mathbb {G}}_{n}(D^{i}), M_{n}^{2}:={\mathbb {G}}_{n}(M^{2}),\) and \(E_{n}:={\mathbb {G}}_{n}(E)\,,\) 2≤i≤d, and denote by \(d_{n}^{i}\,,\) resp., en the associated mass matrices of \(d_{n}^{i}\,,\) resp., En. We prove for the upper products of grid copulas, defined in (20), that

showing that there exists a finite number of supermodular transfers that transfer Cn to Bn. This yields (iii) applying Propositions 2 (iii) and 1.

To show (22), consider for 2≤i≤d the signed grid copulas \((D_{n,k}^{i})_{1\leq k \leq n}\) on \({\mathbb {G}}_{n}^{2}\) defined through the signed mass matrices \((D_{n,k}^{i})_{1\leq k \leq n}\) given by

for 1≤k≤n−1.

For all 2≤i≤d and for all \(n\in {\mathbb {N}}\,,\) the sequence \((D_{n,k}^{i})_{1\leq k \leq n}\) of signed mass matrices adjusts the signed mass matrix \(d_{n}^{i}\) column by column to the signed mass matrix en. It holds that \(d_{n,n}^{i}=e_{n}\) for all i and n.

For \(C_{n,k}:=M^{2}_{n}\vee D_{n,k}^{2} \vee \cdots \vee D_{n,k}^{d}\,,\) 1≤k≤n, we show that

for all 1≤k≤n−1. Then, transitivity of the supermodular ordering implies (22) because Cn,1=Cn and Cn,n=Bn.

We observe that Di≤loE yields \(D_{n}^{i}\leq _{lo} E_{n}\) and also

for all 1≤k≤n−1. Further, we observe that Cn,k and Cn,k+1 are (signed) grid copulas with uniform univariate marginals, i.e.,

for all \(u_{j}\in {\mathbb {G}}_{n,0}^{1}\) and 1≤j≤d. This holds because \(\Delta _{n}^{2} D_{n,k}^{i}(u_{i},t)\leq \tfrac 1 n\) for all \((u_{i},t)\in {\mathbb {G}}_{n,0}^{2}\) and for all i and k, even if \(d_{n,k}^{i}\) can get negative for \(t=\tfrac k n\) and some ui<1.

By construction of \((D_{n,k}^{i})_{1\leq k \leq n}\,,\) it holds that

for all 1≤k≤n−1 and for all \(u_{i}\in {\mathbb {G}}_{n,0}^{1}\,,\) 2≤i≤d.

To show (24), fix column k∈{1,…,n−1} of the signed mass matrices. Conditioning under \(u_{1}\in {\mathbb {G}}_{n}^{1}\,,\) consider the conditional (signed) measure generating functions

for l=1,…,n, where

is the upper product of the (signed) grid copulas \(M_{n},D_{n,l}^{2},\ldots,D_{n,l}^{d}\) for \(u\in {\mathbb {G}}_{n,0}^{d}\,.\) Hence, it holds for the conditional (signed) measure generating function that

and for its corresponding (signed) survival function that

where \(u_{-1}=(u_{2},\ldots,u_{d})\in {\mathbb {G}}_{n,0}^{d-1}\,.\)

By the construction of \((D_{n,l}^{i})_{1\leq l \leq n}\,,\) it holds that

We show that

where \(P^{U_{1}}(\cdot)\times P_{C_{n,k}^{\cdot }}\,,\) resp., \(P^{U_{1}}(\cdot)\times P_{C_{n,k+1}^{\cdot }}\) is the conditional measure generating function of \(P_{C_{n,k}}\,,\) resp., \(P_{C_{n,k+1}}\) given the set \(\{\tfrac k n,\tfrac {k+1} n\}\times {\mathbb {G}}_{n,0}^{d-1}\,.\) Then, (28) and (31) imply (24) using (a slightly generalized version of) the closure of the supermodular ordering under mixtures given by Shaked and Shantikumar (2007) [Theorem 9.A.9.(d)].

To show (29), let us fix \(u_{1}=\tfrac k n\,.\) Then, we calculate

where the first equality follows from (27), the first inequality is Jensen’s inequality, the second inequality is due to (25). Equality (33) holds because En is a grid copula and does not depend on i, the third equality holds by definition of \(\Delta _{n}^{2}\,,\) and the last equality is true because En is a grid copula, thus 2-increasing, and hence \(\Delta _{n}^{2} E_{n}(\cdot,t)\) is increasing for all \(t\in {\mathbb {G}}_{n}^{1}\,.\)

Then, from (32) and (34) it follows for the k-th columns of the matrices that

where the equality holds true due to (27). This means that

holds. Further, \(C_{n,k}^{u_{1}}\) corresponds to a quasi-comonotonic signed measure in \({\mathbb {M}}_{d}^{1}\) with univariate marginals given by \(n\Delta _{n}^{2} D_{n,k}^{i}(\cdot,u_{1})\leq 1\,,\) and \(C_{n,k+1}^{u_{1}}\) corresponds to a comonotonic probability distribution. Thus, we obtain from Lemma 2 that (29) holds.

Next, we show (30). Due to (29) and Proposition 2, there exists a finite number of reverse Δ-monotone transfers that transfer \(C_{n,k}^{u_{1}}\) to \(C_{n,k+1}^{u_{1}}\,,\) i.e., there exist \(m\in {\mathbb {N}}\) and a finite sequence \(\left (P_{l}^{u_{1}}\right)_{1\leq l \leq m}\) of signed measures on \({\mathbb {G}}_{n}^{d-1}\) such that

Since the univariate margins of \(C_{n,k}^{u_{1}}\) and \(C_{n,k+1}^{u_{1}}\) do not coincide, some of the transfers \(\left (\mu _{l}^{u_{1}}\right)_{l}\) must be one-dimensional, see Remark 5. Each one-dimensional transfer \(\mu _{l}^{u_{1}}\) transports mass from one point \(u^{l}=\left (u_{2}^{l},\ldots,u_{d}^{l}\right)\in {\mathbb {G}}_{n}^{d-1}\) to another point \(v^{l}=\left (v_{2}^{l},\ldots,v_{d}^{l}\right)\in {\mathbb {G}}_{n}^{d-1}\) such that \(v_{\iota }^{l}< u_{\iota }^{l}\) for an ι∈{2,…,d} and \(u_{j}^{l}=v_{j}^{l}\) for all j≠ι, i.e., \(\mu _{l}^{u_{1}}=\eta ^{l} \left (\delta _{v^{l}}-\delta _{u^{l}}\right)\) is indicated by

for some ηl>0. Since applying mass transfers is commutative, we first choose to apply all of these one-dimensional reverse Δ-monotone transfers. Because δ-dimensional Δ-monotone transfers leave the univariate marginals unchanged for δ≥2, see Remark 5, the univariate margins of \(C_{n,k}^{u_{1}}\) must be adjusted to the univariate margins of \(C_{n,k+1}^{u_{1}}\) having applied all of these one-dimensional reverse Δ-monotone transfers.

Then, since the grid copula of \(C_{n,k+1}^{u_{1}}\) is the upper Fréchet bound and hence the greatest element in the ≤uo-ordering, no further reverse Δ-monotone transfer is possible. Thus, \(C_{n,k+1}^{u_{1}}\) is reached from above having applied only one-dimensional Δ-monotone transfers \(\mu _{l}^{u_{1}}\,,\) 1≤l≤m−1, on \(P_{C_{n,k}^{u_{1}}}\,,\) i.e.,

For all reverse Δ-monotone transfers \(\mu _{l}^{u_{1}},\phantom {\dot {i}\!}\) consider its corresponding reverse transfer \(\mu _{l}^{u_{1}+1/n}:=-\mu _{l}^{u_{1}}\phantom {\dot {i}\!}\) on \({\mathbb {G}}_{n}^{d-1}\) indicated by \(\phantom {\dot {i}\!}\eta ^{l} \delta _{v^{l}}\to \eta ^{l} \delta _{u^{l}}\,.\) Define

The transfers \(\phantom {\dot {i}\!}\left (\mu _{l}^{u_{1}+1/n}\right)_{l}\) are one-dimensional Δ-monotone transfers. Then, it holds true that they adjust the univariate marginals of \(\phantom {\dot {i}\!}P_{C_{n,k}^{u_{1}+1/n}}\) to the univariate marginals of \(P_{C_{n,k+1}^{u_{1}+1/n}}.\phantom {\dot {i}\!}\) This can be seen because only two entries (in column k) of matrix ι are changed by the mass transfer \(\mu _{l}^{u_{1}}.\phantom {\dot {i}\!}\) All other columns and matrices j≠ι are unaffected by this transfer. From (28) follows that exactly the reverse transfers \(\mu _{l}^{u_{1}+1/n}\phantom {\dot {i}\!}\) applied simultaneously on the corresponding entries in column k+1 of mass matrix ι guarantee the uniform margin condition (26) to stay fulfilled. Having applied all transfers μl, then each column j≠k+1 of the mass matrix \(d_{n,k}^{i}\) is adjusted to column j of the mass matrix \(d_{n,k+1}^{i}\) for all 2≤i≤d. But this also means that column k+1 of the mass matrix \(d_{n,k}^{i}\) must be adjusted to column k+1 of \(d_{n,k+1}^{i}\) due to the uniform margin condition.

Since applying the one-dimensional transfers \(\phantom {\dot {i}\!}\mu _{l}^{u_{1}+1/n}\) on \(\phantom {\dot {i}\!}P_{C_{n,k}^{u_{1}+1/n}}\) (which is comonotonic) can change the dependence structure, the signed measure \(P_{m}^{u_{1}+1/n}\) is not necessarily quasi-comonotonic, i.e., \(P_{m}^{u_{1}+1/n}\phantom {\dot {i}\!}\) does not necessarily coincide with \(P_{C_{n,k+1}^{u_{1}+1/n}}\) (which is quasi-comonotonic). We show that

Since \(C_{n,k}^{u_{1}}\leq _{lo} C_{n,k+1}^{u_{1}}\,,\) see (35), it also holds that

where we use that

for all 2≤i≤d. By construction of \(\left (d^{i}_{n,l}\right)_{1\leq l\leq n}\,,\) it follows that

This implies

But this means that \(C_{n,k}^{u_{1}+1/n}\geq _{lo} C_{n,k+1}^{u_{1}+1/n}\,.\) Due to (23), it holds that \(C_{n,k}^{u_{1}+1/n}\) is comonotonic and \(C_{n,k+1}^{u_{1}+1/n}\) is quasi-comonotonic with univariate marginal measure generating functions \(n\Delta _{n}^{2} D_{n,k+1}^{i}\left (\cdot,\tfrac {k+1}n\right)\leq 1\,.\) Thus, Proposition 2 yields (30).

Further, (30) and Proposition 2 imply that there exist \(m'\in {\mathbb {N}}\) and a finite number of reverse Δ-monotone transfers \(\phantom {\dot {i}\!}(\gamma _{l})_{1\leq l \leq m'}\) that adjust \(P_{C_{n,k+1}^{u_{1}+1/n}}\phantom {\dot {i}\!}\) to \(\phantom {\dot {i}\!}P_{C_{n,k}^{u_{1}+1/n}}\,.\) With the same argument as above, these transfers are one-dimensional. Further, the reverse transfers \(\phantom {\dot {i}\!}(\gamma _{l}^{r})_{1\leq l \leq m'}\,,\) where \(\gamma _{l}^{r}=-\gamma _{l}\,,\) correspond to the Δ-monotone transfers \(\phantom {\dot {i}\!}\left (\mu _{l}^{u_{1}+1/n}\right)_{1\leq l \leq m}\) that adjust the margins of \(C_{n,k}^{u_{1}+1/n}\phantom {\dot {i}\!}\) to the margins of \(\phantom {\dot {i}\!}C_{n,k+1}^{u_{1}+1/n}\,.\) This yields m=m′,\(\sum _{l=1}^{m-1} \mu _{l}^{u_{1}+1/n} =\sum _{l=1}^{m'-1} \gamma _{l}^{r}\phantom {\dot {i}\!}\) and thus \(P_{m}^{u_{1}+1/n}=P_{C_{n,k+1}^{u_{1}+1/n}}\,,\) which proves (39). Hence, (38) yields

It remains to show (31). Each transfer \(\mu _{l}^{u_{1}}\,,\,,\) resp.„ \(\mu _{l}^{u_{1}+1/n}\) on \({\mathbb {G}}_{n}^{d-1}\) can be extended to a reverse Δ-monotone, resp., Δ-monotone transfer μl,r, resp., μl on \(\{u_{1}\}\times {\mathbb {G}}_{n}^{d-1}\,,\) resp., \(\{u_{1}+\tfrac 1 n\}\times {\mathbb {G}}_{n}^{d-1}\,,\) indicated by

Then, for each l∈{1,…,m−1}, applying the transfers μl,r and μl in (41) simultaneously yields exactly a transfer νl on \(\{u_{1},u_{1}+\tfrac 1 n\}\times {\mathbb {G}}_{n}^{d-1}\) between (u1,ul) and \(\left (u_{1}+\tfrac 1 n,v^{l}\right)\,,\) indicated by

Each transfer νl is a supermodular transfer. Denote by ε{x} the one-point probability measure in x. Then, finally, we obtain

which implies (31) using Proposition 2. The first and last equality hold due to the definition of the measures. The second equality is given by (37) and (40), the third equality holds by the definition of μl,r, resp., μl, and the fourth equality holds true by the definition of νl.\(\square \)

Remark 6

-

(a)

The proof is based on an approximation by finite sequences of signed grid copulas that fulfill the conditioning argument in (28)–(31). Further, we use the necessary condition that the lower orthant ordering holds true–indeed, (32) and (34) yield Cn,k≤loCn,k+1–in order to show that the supermodular ordering is also fulfilled.

-

(b)

The condition that the upper bound E for Di is a joint upper bound, i.e., it does not depend on i, is crucial for the proof. Otherwise, Eq. (33) can fail, see also (11). In general, it holds that

$$\begin{array}{@{}rcl@{}} D^{i}\leq_{lo} E^{i} ~\forall i ~\not \Longrightarrow ~M^{2} \vee D^{1} \vee \cdots \vee D^{d} \leq_{lo} M^{2} \vee E^{1} \vee \cdots \vee E^{d}\,. \end{array} $$For a counterexample assume that D1=D2<loE1<loE2. Then, it holds

$$M^{2}\vee D^{1} \vee D^{2} (1,\cdot,\cdot)=M^{2} >_{lo} E^{1}\vee E^{2} = M^{2}\vee E^{1}\vee E^{2} (1,\cdot,\cdot)\,,$$using the marginalization and the maximality property of the upper product, see Ansari and Rüschendorf (2018), Proposition 2.4, which yields a contradiction to M2∨D1∨D2≤loM2∨E1∨E2.

-

(c)

While in the proof the \(D_{n,k}^{i}\,,\) 1≤k≤n, can be signed grid copulas with \(\Delta _{n}^{2} D_{n,k}^{i}(u_{i},t)\leq \tfrac 1 n\) for all \((u_{i},t)\in {\mathbb {G}}_{n,0}^{2}\,,\) it is necessary that En is a grid copula and not only a signed grid copula. Otherwise, both monotonicity properties in (33) and (34) can fail.

We illustrate the idea of the proof with an example for n=4 and d=3 :

Example 1

Let \(D^{2}_{4},D^{3}_{4},E_{4}\) be 4-grid copulas given through the mass matrices \(d^{2}_{4},d^{3}_{4}\,,\) resp., e4 by

Then, we observe that \(D^{2}_{4},D^{3}_{4}\leq _{lo} E_{4}\,.\) Consider the signed 4-grid copulas \(D_{4,l}^{i}\,,\) 1≤l≤4, i=2,3, in Fig. 1 constructed by (23). The conditional distribution of \(M^{2}_{4}\vee D^{2}_{4,1}\vee D^{3}_{4,1}\) under \(u_{1}=\tfrac 1 4\) is given by \(4\, \Delta _{4}^{2} \, M^{2}_{4}\vee D^{2}_{4,1}\vee D^{3}_{4,1}\left (\tfrac 1 4,\cdot,\cdot \right)=4 \min \limits _{i}\{\Delta _{4}^{2} D^{i}\left (\cdot,\tfrac 1 4\right)\}\,,\) where the arguments of the min-function correspond to the distributions given through the first columns of \(d_{4}^{2}\,,\) resp., \(d_{4}^{3}\,.\)

Since

the solid-marked reverse Δ-monotone transfers can be applied to adjust the first column of \(d^{2}_{4,1}\,,\) resp., \(d^{3}_{4,1}\) to the first column of e4. These transfers are balanced by the dashed-marked Δ-monotone transfers in the second columns which guarantee that the new matrices \(d_{4,2}^{2}\,,\) resp., \(d_{4,2}^{3}\) are still (signed) copula mass matrices. This procedure is repeated column by column until \(d_{4,4}^{2}=d_{4,4}^{3}=e_{4}\,.\)

4 Application to improved portfolio TVaR bounds

In this section, we determine improved Tail-Value-at-Risk bounds for a portfolio \(\Sigma _{t}=\sum _{i=1}^{8} Y_{t}^{i}\,,\)t≥0, t in trading days, of d=8 (derivatives on) assets \(S_{t}^{i}\) applying Theorem 4 about internal risk factor models. More specifically, let \(Y_{t}^{i}=S_{t}^{i}\) for i=1,…,6 and \(Y_{t}^{i}=(S_{t}^{i}-K^{i})_{+}\) for i=7,8, where K7=70 and K8=10. In this application, \((S_{t}^{i})_{t\geq 0}\) denotes the asset price process of Audi (i=1), Allianz (i=2), Daimler (i=3), Siemens (i=4), Adidas (i=5), Volkswagen (i=6), SAP (i=7), resp., Deutsche Bank (i=8).

We aim to determine improved TVaR bounds for ΣT for T=1 year=254 trading days, resp., T=2 years=508 trading days. The underlying process \(S_{t}=(S_{t}^{1},\ldots,S_{t}^{8})\) is modeled by an integrable exponential process St=S0 exp(Lt) under the following assumptions:

Let \(m\in {\mathbb {N}}\) and 0=t0<t1<⋯<tm=T with \(t_{i}-t_{i-1}=\tfrac T m\) for 1≤i≤m.

- (I)

The component processes \((L_{t}^{i})_{t\geq 0}\) are Lévy processes for all i.

- (II)

The increments \((\xi _{k}^{1},\ldots,\xi _{k}^{d}):=(L_{t_{k}}^{1}-L_{t_{k-1}}^{1},\ldots,L_{t_{k}}^{d}-L_{t_{k-1}}^{d})\,,\) 1≤k≤m, are independent in k (but not necessarily stationary).

- (III)

For all k, there exists a bivariate copula \(E^{k}\in \mathcal {C}_{2}\) such that \(C_{\xi _{k}^{i},\xi _{k}^{1}}\leq _{lo} E^{k}\) for all 2≤i≤d.

Assumptions (I)–(III) are consistent. Assumption (I) is a standard assumption on the log-increments of \((S_{t}^{i})_{t\geq 0}\) while Assumption (II) generalizes the dependence assumptions for multivariate Lévy models because neither multivariate stationarity nor independence for all increments is assumed. Assumption (III) reduces the dependence structure between the k-th log-increment of the i-th component and the k-th log-increment of the first component (which is the internal risk factor) by a subclass \({\mathcal {S}}_{k}^{i}=\{C\in \mathcal {C}_{2}|C\leq _{lo} E^{k}\}\) of bivariate copulas.

Then, Theorem 4 yields improved bounds in convex order for the portfolio ΣT if the claims \(Y_{T}^{i}\) are of the form \(Y_{T}^{i}=\psi _{i}(S_{T}^{i})=\psi _{i}(\exp (L_{T}^{i}))=\psi _{i}\left (\exp \left (\sum _{k=1}^{m} \xi _{k}^{i}\right)\right)\) with ψi increasing convex.

For the estimation of the distribution of \(S_{T}^{i}\,,\) we make the following specification of Assumption (4):

- (1)

Each \(\left (S_{t}^{i}\right)_{t\geq 0}\,,\)i=1,…,8, follows an exponential NIG process, i.e.,

$$\begin{array}{@{}rcl@{}} S_{t}^{i}=S_{0}^{i}\exp\left(L_{t}^{i}\right)\,~~t\geq 0\,, \end{array} $$where \(S_{0}^{i}>0\) and where each \(\left (L_{t}^{i}\right)_{t\geq 0}\) is an NIG process with parameters αi,βi,δi,νi.

For the estimation of upper bounds in supermodular order for the increments \(\left (\xi _{k}^{1},\ldots,\xi _{k}^{8}\right)\), we specify Assumption (I) as follows:

- (3)

For fixed ν∈(2,∞], the copula Ek in Assumption (III) is given by a t-copula with some correlation parameter ρk∈[−1,1] (which we specify later) and ν degrees of freedom, i.e., \(E^{k}=C_{\nu }^{\rho _{k}}\,.\)

We make use of the relation between the (pseudo-)correlation parameter ρ of elliptical copulas and Kendall’s τ given by \(\rho (\tau)=\sin \left (\tfrac \pi 2 \tau \right)\,,\) see McNeil et al. (2015) [Proposition 5.37], because Kendall’s rank correlation does not depend on the specified univariate marginal distributions in contrast to Pearson’s correlation. Thus, in order to determine a reasonable value for ρk, we estimate an upper bound for \(\tau _{k}:=\max _{2\leq i \leq 8}\{\tau _{k}^{i}\}\,,\) where \(\tau _{k}^{i}:=\tau \left (C_{\xi _{k}^{i},\xi _{k}^{1}}\right)\,.\) Since it is not possible to determine the dependence structure of each increment from a single observation, we estimate \(\tau _{k}^{i}\) from a sample of past observations. To do so, we assume that the dependence structure of \(\left (\xi _{k}^{i},\xi _{k}^{1}\right)\) does not jump too rapidly to strong positive dependence in a short period of time as follows:

- (2)

For \(n\in {\mathbb {N}}\,,\) define the averaged correlations over the past n time points at time k by \(\tau _{k,n}^{i}:=\tfrac 1 n \sum _{j=0}^{n-1}\tau _{k-n+j}^{i}\,,\) for k>n. Then, we assume that

$$\begin{array}{@{}rcl@{}} \tau_{k}=\max_{2\leq i \leq d}\{\tau_{k}^{i}\}\leq \max_{2\leq i \leq d}\{\tau_{k,n}^{i}\}+\epsilon_{k} \end{array} $$(42)for some error εk≥0 (which we fix later).

The above assumptions include the basic assumptions of multivariate exponential Lévy models because the stationarity condition in Assumption (II) yields (42). Further, ρk=1 yields Ek=M2 which means that Assumption (III) is trivially fulfilled in this case. Note that in this application the dependence constraints are allowed to come from quite a big subclass of copulas (see Remark 4).

Under the Assumptions (I)–(III), the dependence structure of \(\left (Y_{T}^{i},Y_{T}^{1}\right)\) is not uniquely determined for i=2,…,8. Thus, we need to solve the constrained maximization problem (5) to obtain improved upper bounds compared to applying (2) for partially specified risk factor models.

We mention that the structure of this section and the underlying data are similar to Ansari and Rüschendorf (2018), Section 4. But, there, the risk factor (which is the “DAX”) is an external risk factor which is not part of the portfolio, whereas in our application the internal risk factor “AUDI” is part of the underlying portfolio. This allows use of the simplified ordering conditions established in this paper. Further, the improved TVaR-bounds in this application are based on large sets of dependence specifications of the daily log-returns (see Assumption (III) and Remark 4), whereas in Ansari and Rüschendorf (2018) all the dependence constraints on the time- T log-returns are assumed to come from a one-parametric family of copulas.

4.1 Application to real market data

As data set, we take the daily adjusted close data from “Yahoo! Finance” from 23/04/2008 to 20/04/2018. It contains the values of 2540 trading days for 8 assets (with some missing data) which we denote by \(\left (s_{k}^{1},\ldots,s_{k}^{8}\right)_{1\leq k \leq 2540}\,.\) More precisely, \(\left (s_{k}^{1}\right)_{k}\) are the adjusted close data of “AUDI AG (NSU.DE)”, \(\left (s_{k}^{2}\right)_{k}\) of “Allianz SE (ALV.DE)”, \(\left (s_{k}^{3}\right)_{k}\) of “Daimler AG (DAI.DE)”, \(\left (s_{k}^{4}\right)_{k}\) of “Siemens Aktiengesellschaft (SIE.DE)”, \(\left (s_{k}^{5}\right)_{k}\) of “adidas AG (ADS.DE)”, \(\left (s_{k}^{6}\right)_{k}\) “Volkswagen AG (VOW.DE)”, \(\left (s_{k}^{7}\right)_{k}\) of “SAP SE (SAP.DE)” and \(\left (s_{k}^{8}\right)_{k}\) of “Deutsche Bank Aktiengesellschaft (DBK.DE)”.

We choose \(\hat {\tau }_{k,n}^{i}:=\hat {\tau }\left (\left (x_{k-n+j}^{i},x_{k-n+j}^{1}\right)_{0\leq j< n}\right)\) as an estimator for \(\tau _{k,n}^{i}\) in Assumption (4), where \(\hat {\tau }\) denotes Kendall’s rank correlation coefficient (see, e.g., (McNeil et al. (2015), equation (5.50))) and \(\left (x_{k}^{i}\right)_{2\leq k \leq 2540}\) are the historical log-returns of the i-th component, i.e., \(x_{k}^{i}:=\log s_{k}^{i}-\log s_{k-1}^{i}\) for 2≤k≤2540. Further, we choose n=30 and εk=ε=0.05 in (42).

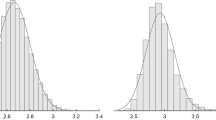

In Fig. 2, the historical estimates \(\hat {\tau }_{k,30}\) are illustrated for 31≤k≤2540 and for i=2,…,8. Further, the plot at the bottom-right shows the maximum of the historical estimates \(\hat {\tau }_{k,30}=\max _{2\leq i \leq 8}\{\hat {\tau }_{k,30}^{i}\}\) (solid graph) as an estimator for τk, and it also shows the estimated historical upper bound \(\overline {\hat {\rho }_{k}}:= \max _{2\leq i\leq 8}\{\rho \left (\hat {\tau }_{k,n}^{i}+\epsilon _{k}\right)\}\) (dotted graph) with error εk for ρk, 31≤k≤2540, see Assumption 4.

Plots of estimated Kendall’s rank correlation coefficients \(\hat {\tau }_{k,n}^{i}\,,\) for i=2,…,8, for n=30, and for different k; at the bottom right: \(\hat {\tau }_{k,30}=\max _{2\leq i \leq 8}\{\hat {\tau }_{k,30}^{i}\}\) (solid) and \(\overline {\hat {\rho }}_{k}=\max _{2\leq i \leq 8}\{\rho (\hat {\tau }_{k,30}^{i}+\epsilon _{k})\}\) (dotted) for different k and εk=ε=0.05.

As we observe from Fig. 2 there is no strong correlation between the log-returns \(\left (x_{k}^{1}\right)_{k}\) of “AUDI” and the log-returns \(\left (x_{k}^{i}\right)_{k}\,,\)i≠1, of the other assets. We use this property to apply Theorem 4 as follows.

For the prediction of an improved worst-case upper bound for ΣT w.r.t. convex order for T=1 year, resp., T=2 years, we choose the worst-case period of the historical estimates \(\overline {\hat {\rho }}_{k}\) for ρk with a length of m=254 trading days, resp., m=508 trading days. We identify visually that \((\overline {\hat {\rho }}_{k})_{k}\) takes the historically largest values in a period of length m=254, resp., m=508 for 1797≤k≤2050, resp., 1543≤k≤2050, see the plot at the bottom right in Fig. 2. Thus, we decide on \((\overline {\hat {\rho }}_{k})_{1797\leq k \leq 2050}\,,\) resp., \((\overline {\hat {\rho }}_{k})_{1543\leq k \leq 2050}\) as the worst-case estimate for (ρk)1≤k≤254, resp., (ρk)1≤k≤508 with error εk=0.05 in (42).

Then, we obtain from Theorem 4 that

where \(\zeta _{k}^{i}\sim \xi _{k}^{i}\) for 1≤i≤8,\(C_{\zeta _{k}^{i},\zeta _{k}^{1}}=E^{k}=C_{\nu }^{\rho _{k}}\) for \(\rho _{k}=\overline {\hat {\rho }}_{2050-m+k+1}\) and 2≤i≤8, Uk∼U(0,1) and \(U^{k},\zeta _{l}^{1}\) independent for all 1≤k,l≤m. Denote by \(\tau _{\zeta _{k}^{1}}\) the distributional transform of \(\zeta _{k}^{1}\,,\) see Rüschendorf (2009), and let tν be the distribution function of the t-distribution with ν degrees of freedom. Then, it holds that

where f is given by

Note that the distribution function of (f(r,ν,Z,ε),Z), Z,ε∼U(0,1) independent, is the t-copula with correlation r and ν degrees of freedom, see Aas et al. (2009).

The Tail-Value-at-Risk at level λ (also known as Expected Shortfall) is defined by

for a real-valued random variable ζ. If ζ is integrable, then TVaRλ is a convex law-invariant risk measure, see, e.g., Föllmer and Schied (2010), which satisfies the Fatou-property. As a consequence of (4) and (43) we obtain

4.2 Empirical results and conclusion

The improved risk bounds \({\text {TVaR}}_{\lambda }\left (\Sigma _{T,(\rho _{k}),\nu }^{c}\right)\) for TVaRλ(ΣT) are compared in Table 1 with the standard comonotonic risk bound \({\text {TVaR}}_{\lambda }\left (\Sigma _{T}^{c}\right)\) (5 million simulated points) for different values of λ and ν and for T=1 year (=254 trading days), resp., T=2 years (=508 trading days).

As observed from Table 1, there is a substantial improvement of the risk bounds up to 20% for T=1 year and about 20% for T=2 years for all degrees of freedom ν of the t-copulas \(C_{\nu }^{\rho _{k}}\) and high levels of λ. For T=2 years, the improvement is even better because the two-year worst-case period for \(\overline {\hat {\rho _{k}}}\) also contains the one-year worst-case period for \(\overline {\hat {\rho _{k}}}\) where in the latter one attains higher values.

We see that the improvement is larger for higher values of ν. This can be explained by the fact that \(C_{\nu }^{\rho }\) has a higher tail-dependence for smaller values of ν, see, e.g., Demarta and McNeil (2005). Thus, for small ν, more extreme events (= realizations of the log-increments) occur more often simultaneously which sums up to a higher risk.

The results of this application clearly indicate the potential usefulness and flexibility of the comparison results for the supermodular ordering to an improvement of the standard risk bounds.

References

Aas, K., Czado, C., Frigessi, A., Bakken, H.: Pair-copula constructions of multiple dependence. Insur. Math. Econ. 44(2), 182–198 (2009).

Ansari, J.: Ordering risk bounds in partially specified factor models. University of Freiburg, Dissertation (2019).

Ansari, J., Rüschendorf, L.: Ordering results for risk bounds and cost-efficient payoffs in partially specified risk factor models. Methodol. Comput. Appl. Probab., 1–22 (2016).

Ansari, J., Rüschendorf, L.: Ordering risk bounds in factor models. Depend. Model.6.1, 259–287 (2018).

Bäuerle, N., Müller, A.: Stochastic orders and risk measures: consistency and bounds. Insur. Math. Econ. 38(1), 132–148 (2006).

Bernard, C., Vanduffel, S.: A new approach to assessing model risk in high dimensions. J. Bank. Financ. 58, 166–178 (2015).

Bernard, C., Rüschendorf, L., Vanduffel, S.: Value-at-Risk bounds with variance constraints. J. Risk. Insur. 84(3), 923–959 (2017a).

Bernard, C., Rüschendorf, L., Vanduffel, S., Wang, R.: Risk bounds for factor models. Financ. Stoch. 21(3), 631–659 (2017b).

Bernard, C., Denuit, M., Vanduffel, S.: Measuring portfolio risk under partial dependence information. J. Risk Insur. 85(3), 843–863 (2018).

Bignozzi, V., Puccetti, G., Rüschendorf, L: Reducing model risk via positive and negative dependence assumptions. Insur. Math. Econ. 61, 17–26 (2015).

Cornilly, D., Rüschendorf, L., Vanduffel, S.: Upper bounds for strictly concave distortion risk measures on moment spaces. Insur Math Econ. 82, 141–151 (2018).

de Schepper, A., Heijnen, B.: How to estimate the Value at Risk under incomplete information. J. Comput. Appl. Math. 233(9), 2213–2226 (2010).

Demarta, S., McNeil, A. J.: The t copula and related copulas. Int. Stat. Rev. 73(1), 111–129 (2005).

Denuit, M., Genest, C., Marceau, E.: Stochastic bounds on sums of dependent risks. Insur. Math. Econ. 25(1), 85–104 (1999).

Embrechts, P., Puccetti, G.: Bounds for functions of dependent risks. Financ. Stoch. 10(3), 341–352 (2006).

Embrechts, P., Puccetti, G., Rüschendorf, L.: Model uncertainty and VaR aggregation. J. Banking Financ. 37(8), 2750–2764 (2013).

Embrechts, P., Puccetti, G., Rüschendorf, L., Wang, R., Beleraj, A.: An academic response to basel 3.5. Risks. 2(1), 25–48 (2014).

Embrechts, P., Wang, B., Wang, R.: Aggregation-robustness and model uncertainty of regulatory risk measures. Financ. Stoch. 19(4), 763–790 (2015).

Föllmer, H., Schied, A.: Convex and coherent risk measures.Encycl. Quant. Financ., 355–363 (2010).

Goovaerts, M. J., Kaas, R., Laeven, R. J. A.: Worst case risk measurement: back to the future?Insur. Math. Econ. 49(3), 380–392 (2011).

Hürlimann, W.: Analytical bounds for two Value-at-Risk functionals. ASTIN Bull. 32(2), 235–265 (2002).

Hürlimann, W.: Extremal moment methods and stochastic orders. Bol. Asoc. Mat. Venez. 15(2), 153–301 (2008).

Kaas, R., Goovaerts, M. J.: Best bounds for positive distributions with fixed moments. Insur. Math. Econ. 5, 87–95 (1986).

McNeil, A. J., Frey, R., Embrechts, P.: Quantitative Risk Management. Concepts, Techniques and Tools., second edn. Princeton University Press, Princeton (2015).

Müller, A.: Stop-loss order for portfolios of dependent risks. Insur. Math. Econ. 21(3), 219–223 (1997).

Müller, A.: Duality theory and transfers for stochastic order relations. In: Stochastic orders in reliability and risk. Springer, New York (2013).

Müller, A., Scarsini, M.: Stochastic comparison of random vectors with a common copula. Math. Oper. Res. 26(4), 723–740 (2001).

Müller, A., Scarsini, M.: Stochastic order relations and lattices of probability measures. SIAM J. Optim. 16(4), 1024–1043 (2006).

Müller, A., Stoyan, D.: Comparison Methods for Stochastic Models and Risks. Wiley, Chichester (2002).

Nelsen, R. B.: An introduction to copulas. 2nd ed. Springer, New York (2006).

Nelsen, R. B., Quesada-Molina, J. J., Rodríguez-Lallena, J. A., Úbeda-Flores, M.: Bounds on bivariate distribution functions with given margins and measures of association. Commun. Stat. Theory Methods. 30(6), 1155–1162 (2001).

Puccetti, G., Rüschendorf, L.: Bounds for joint portfolios of dependent risks. Stat. Risk. Model. Appl. Financ. Insur. 29(2), 107–132 (2012a).

Puccetti, G., Rüschendorf, L.: Computation of sharp bounds on the distribution of a function of dependent risks. J. Comput. Appl. Math. 236(7), 1833–1840 (2012b).

Puccetti, G., Rüschendorf, L.: Sharp bounds for sums of dependent risks. J. Appl. Probab. 50(1), 42–53 (2013).

Puccetti, G., Rüschendorf, L., Small, D., Vanduffel, S.: Reduction of Value-at-Risk bounds via independence and variance information. Scand. Actuar. J. 2017(3), 245–266 (2017).

Rüschendorf, L.: On the distributional transform, Sklar’s theorem, and the empirical copula process. J. Stat. Plann. Inference. 139(11), 3921–3927 (2009).

Rüschendorf, L.: Mathematical Risk Analysis. Springer, New York (2013).

Rüschendorf, L.: Improved Hoeffding–Fréchet bounds and applications to VaR estimates. In: Copulas and Dependence Models with Applications. Contributions in Honor of Roger B. Nelsen. In: Úbeda Flores, M., de Amo Artero, E., Durante, F., Fernández Sánchez, J. (eds.), pp. 181–202. Springer, Cham (2017a). https://doi.org/10.1007/978-3-319-64221-5_12.

Rüschendorf, L.: Risk bounds and partial dependence information. In: From Statistics to Mathematical Finance, pp. 345–366. Springer, Festschrift in honour of Winfried Stute, Cham (2017b).

Rüschendorf, L., Witting, J.: VaR bounds in models with partial dependence information on subgroups. Depend Model. 5, 59–74 (2017).

Shaked, M., Shantikumar, J. G.: Stochastic Orders. Springer, New York (2007).

Tian, R.: Moment problems with applications to Value-at-Risk and portfolio management. Georgia State University, Dissertation (2008).

Acknowledgments

We thank the reviewers for their comments that greatly improved the manuscript.

Author information

Authors and Affiliations

Contributions

Both authors read and approved the final manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare that they have no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Ansari, J., Rüschendorf, L. Upper risk bounds in internal factor models with constrained specification sets. Probab Uncertain Quant Risk 5, 3 (2020). https://doi.org/10.1186/s41546-020-00045-y

Received:

Accepted:

Published:

DOI: https://doi.org/10.1186/s41546-020-00045-y