Abstract

Background

Artificial intelligence (AI) systems are proposed as a replacement of the first reader in double reading within mammography screening. We aimed to assess cancer detection accuracy of an AI system in a Danish screening population.

Methods

We retrieved a consecutive screening cohort from the Region of Southern Denmark including all participating women between Aug 4, 2014, and Aug 15, 2018. Screening mammograms were processed by a commercial AI system and detection accuracy was evaluated in two scenarios, Standalone AI and AI-integrated screening replacing first reader, with first reader and double reading with arbitration (combined reading) as comparators, respectively. Two AI-score cut-off points were applied by matching at mean first reader sensitivity (AIsens) and specificity (AIspec). Reference standard was histopathology-proven breast cancer or cancer-free follow-up within 24 months. Coprimary endpoints were sensitivity and specificity, and secondary endpoints were positive predictive value (PPV), negative predictive value (NPV), recall rate, and arbitration rate. Accuracy estimates were compared using McNemar’s test or exact binomial test.

Results

Out of 272,008 screening mammograms from 158,732 women, 257,671 (94.7%) with adequate image data were included in the final analyses. Sensitivity and specificity were 63.7% (95% CI 61.6%-65.8%) and 97.8% (97.7-97.8%) for first reader, and 73.9% (72.0-75.8%) and 97.9% (97.9-98.0%) for combined reading, respectively. Standalone AIsens showed a lower specificity (-1.3%) and PPV (-6.1%), and a higher recall rate (+ 1.3%) compared to first reader (p < 0.0001 for all), while Standalone AIspec had a lower sensitivity (-5.1%; p < 0.0001), PPV (-1.3%; p = 0.01) and NPV (-0.04%; p = 0.0002). Compared to combined reading, Integrated AIsens achieved higher sensitivity (+ 2.3%; p = 0.0004), but lower specificity (-0.6%) and PPV (-3.9%) as well as higher recall rate (+ 0.6%) and arbitration rate (+ 2.2%; p < 0.0001 for all). Integrated AIspec showed no significant difference in any outcome measures apart from a slightly higher arbitration rate (p < 0.0001). Subgroup analyses showed higher detection of interval cancers by Standalone AI and Integrated AI at both thresholds (p < 0.0001 for all) with a varying composition of detected cancers across multiple subgroups of tumour characteristics.

Conclusions

Replacing first reader in double reading with an AI could be feasible but choosing an appropriate AI threshold is crucial to maintaining cancer detection accuracy and workload.

Background

Early detection with mammography screening along with best practice treatment are recognized as crucial elements in reducing breast cancer-specific mortality and morbidity [1], and most European and high-income countries have implemented organised mammography screening programmes [2, 3]. The rollout of the Danish screening programme for women aged 50–69 years was completed in 2010, and the programme has shown high compliance with international standards [4, 5], based on quality assurance indicators in conformity with European guidelines [6]. However, widespread capacity issues and shortage of breast radiologists pose a threat to the continued feasibility and efficiency of the screening programme. Addressing these challenges, the Danish Health Authority has recommended replacing first reading breast radiologists in the double reading setting with an artificial intelligence (AI) system, if shown efficient [7].

Deep learning-based AI decision support systems have in recent years gained popular interest as a potential solution to resource scarcity within mammography screening as well as improving cancer detection. Strong claims have been made that an AI system could replace trained radiologists [8, 9]. Multiple validation studies have reported a standalone AI cancer detection accuracy at a level comparable to or even exceeding the current standard for breast cancer screening [10,11,12]. While the results might seem promising, these are yet to be replicated in large real-life screening populations. Moreover, the quantity and quality of the existing evidence have been deemed insufficient [13], and recent guidelines by the European Commission Initiative on Breast Cancer have recommended against single reading supported with AI [14].

In this external validation study, we aimed to investigate the accuracy of a commercially available AI system for cancer detection in a Danish mammography screening population with at least two years of follow-up. The AI system was evaluated both in a simulated Standalone AI scenario and a simulated AI-integrated screening scenario replacing first reader, compared with the first reader and double reading with arbitration.

Methods

Study design and population

This study was designed as a retrospective, multicentre study on the accuracy of an AI system for breast cancer detection in mammography screening. The study is reported in accordance with Standards for Reporting of Diagnostic Accuracy Studies (STARD) statement of 2015 (Supplementary eMethod 1) [15]. Ethical approval was obtained from the Danish National Committee on Health Research Ethics (identifier D1576029) which waived the need for individual informed consent.

The study population was a consecutive cohort from all breast cancer screening centres in the Region of Southern Denmark (RSD) in the cities Aabenraa, Esbjerg, Odense, and Vejle. The study sites cover all the RSD, one of five Danish regions, with approximately 1.2 million inhabitants, comprising 20% of the entire population of Denmark and constituting an entire screening population.

All women who participated in screening between Aug 4, 2014, and Aug 15, 2018, in RSD were eligible for inclusion. The majority were women between 50 and 69 years participating in the standardised two-year interval screening programme. A small group with previous breast cancer or genetic predisposition to breast cancer were biennially screened from the age of 70–79 years or from 70 years of age until death, respectively.

Exclusion criteria were insufficient follow-up until cancer diagnosis, next consecutive screening, or at least two years after the last performed screening in the inclusion period; insufficient image quality or missing images; and data types unsupported by the AI system.

Data sources and extraction

A complete list of the study population including reader decisions and site of screening was extracted from the local Radiological Information System using the study participants’ unique Danish Civil Personal Register numbers. Image data was extracted in raw DICOM format from the joint regional radiology Vendor Neutral Archive. All screening examinations had been acquired with a single mammography vendor, Siemens Mammomat Inspiration (Siemens Healthcare A/S, Erlangen, Germany). The standard screening examination was two views per breast, but could be fewer, e.g. in case of prior mastectomy, or more if additional images were taken, e.g. due to poor image quality.

Information on cancer diagnosis and histological subtype, with tumour characteristics for invasive cancers including tumour size, malignancy grade, TNM stage, lymph node involvement, estrogen receptor (ER) status, and HER2 status, was acquired through matching with the Danish Clinical Quality Program – National Clinical Registries (RKKP), specifically the Danish Breast Cancer Cooperative Group database and the Danish Quality Database on Mammography Screening [4, 16]. Inconsistencies in the data were, if possible, resolved by manually searching the electronic health records.

Screen reading

The screen reading consisted of independent, blinded double reading by 22 board-certified breast radiologists with experience in screen reading ranging from newly trained to over 20 years of experience. There was no fixed designation of the readers; however, the second reader is usually a senior breast radiologist. The reading assessments were ultimately classified into a binary outcome: either normal (continued screening) or abnormal (recall). Cases of disagreement were sent to a decisive third reading, i.e. arbitration, by the most experienced screening radiologist who had access to the first two readers’ decisions, although the arbitrator could also have been second reader of the same examination. Diagnostic work-up of recalled women was performed at dedicated breast imaging units at the study sites.

AI system

As index test for this study, we used the commercially available CE marked and FDA cleared AI system Transpara version 1.7.0 (ScreenPoint Medical BV, Nijmegen, Netherlands), a software-only device based on deep convolutional neural networks intended for use as concurrent reading aid for breast cancer detection on mammography. The model was trained and tested using large databases acquired through multivendor devices from institutions across the world [10, 17]. The data used in this study has never been used for training, validation or testing of any AI models.

Transpara was installed on an on-premises dedicated server system to which only the local investigators had access. All screening mammograms meeting Transpara’s DICOM conformance statement were sent for processing. Transpara assigned a per-view regional prediction score from 1 to 98 denoting the likelihood of cancer, with 98 indicating the highest likelihood of the finding being malignant. The maximum of the view-level raw scores gave a total examination score, Transpara exam score, on a scale from 0 to 10 with five decimal places.

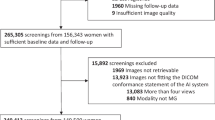

Evaluation scenarios

The detection accuracy of the AI system was assessed in two scenarios: (1) “Standalone AI” where AI accuracy was evaluated against that of the first reader, and (2) “AI-integrated screening”, a simulated screening setup, in which the AI replaced the first reader, compared against the combined reading outcome, i.e. the observed screen reading decision of double reading with arbitration in the standard screening workflow without AI (Fig. 1). In the AI-integrated screening scenario, the original decisions of the second reader and arbitrator were applied. In cases of disagreement between the AI and second reader, where an arbitration was not originally performed at screening, a simulated arbitrator was defined with arbitration decisions at an accuracy level which approximated the original arbitrator’s sensitivity and specificity from the study sample. These simulated arbitration decisions were applied as the arbitration outcome in cases lacking an original arbitration decision.
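The decision logic of the AI-integrated screening scenario can be sketched in code. The following is a minimal illustration, not the procedure actually used in the study: it assumes that a missing arbitration decision is drawn at random, so that cancers are recalled with a probability equal to the arbitrator's sensitivity and cancer-free examinations with a probability of one minus the arbitrator's specificity. All function and parameter names are hypothetical.

```python
import random
from typing import Optional


def simulate_arbitration(has_cancer: bool, arb_sensitivity: float,
                         arb_specificity: float, rng: random.Random) -> bool:
    """Draw a simulated arbitration decision (True = recall).

    Assumption: decisions are independent Bernoulli draws that reproduce the
    arbitrator's sensitivity and specificity on average.
    """
    if has_cancer:
        return rng.random() < arb_sensitivity
    return rng.random() >= arb_specificity


def integrated_decision(ai_recall: bool, second_reader_recall: bool,
                        original_arbitration: Optional[bool], has_cancer: bool,
                        arb_sensitivity: float, arb_specificity: float,
                        rng: random.Random) -> bool:
    """Screening outcome when the AI replaces the first reader."""
    # Concordant reads need no arbitration.
    if ai_recall == second_reader_recall:
        return ai_recall
    # Discordant reads: reuse the original arbitration decision if one exists.
    if original_arbitration is not None:
        return original_arbitration
    # Otherwise fall back to the simulated arbitrator.
    return simulate_arbitration(has_cancer, arb_sensitivity,
                                arb_specificity, rng)
```

A seeded `random.Random` instance keeps the simulated decisions reproducible across runs. The reference standard (`has_cancer`) is needed only for the simulated draw, since the simulated arbitrator is defined through its accuracy.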

Comparison between the standard screening workflow and the study scenarios

(A) The standard screening workflow in which the combined reading outcome of each mammogram is the result of independent, blinded double reading with arbitration for discordant readings. (B) The Standalone AI scenario in which the AI system replaces all readers, and the AI detection accuracy is compared to that of the first reader in the study sample. (C) The AI-integrated screening scenario in which AI replaces the first reader in the standard screening workflow, and the detection accuracy of the simulated screening setup is compared to that of the combined reading outcome from the study sample.

In both study scenarios (B) and (C), a binary AI score was defined by applying two different thresholds for the AI decision outcome. The cut-off points were chosen by matching at the mean sensitivity and specificity of the first reader outcome, AIsens and AIspec, respectively. If the AI and second reader decisions were discordant in the AI-integrated screening scenario and an arbitration decision was lacking in the original dataset, the arbitration decision outcome was simulated to match the same accuracy level of the original arbitrator from the study sample.

As the AI system is not intended for independent reading and does not have an internally prespecified threshold to classify images, the Transpara exam score was in both scenarios dichotomized into an AI score that would enable comparability with the radiologists. In this study, two different thresholds were explored as test abnormality cut-off points, AIsens and AIspec, which were set to match the mean sensitivity and specificity, respectively, of the first reader outcome from the study sample. Exam scores above the threshold were considered recalls. There is a lack of consensus in the literature on how to determine an appropriate test threshold [13]. Matching the cut-off point at the first reader’s sensitivity or specificity would hypothetically ensure that the proposed AI-integrated screening would not entail an increase in missed cancers or false positive recalls, respectively, which could be clinically justifiable in screening practice.
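Matching a cut-off point to a target sensitivity or specificity can be illustrated with a short sketch. The code below is an assumption about how such matching might be done, not the study's implementation: it scans every observed score as a candidate threshold and keeps the one whose sensitivity (or specificity) lies closest to the target. The function names and the toy scores are hypothetical, and the quadratic scan is only suitable for small examples.

```python
from typing import List, Sequence, Tuple


def sensitivity_specificity(scores: Sequence[float],
                            has_cancer: Sequence[bool],
                            threshold: float) -> Tuple[float, float]:
    """Dichotomise scores (recall if score > threshold); return (sens, spec)."""
    tp = fn = tn = fp = 0
    for score, cancer in zip(scores, has_cancer):
        recalled = score > threshold
        if cancer and recalled:
            tp += 1
        elif cancer:
            fn += 1
        elif recalled:
            fp += 1
        else:
            tn += 1
    return tp / (tp + fn), tn / (tn + fp)


def match_threshold(scores: Sequence[float], has_cancer: Sequence[bool],
                    target: float, match_on: str) -> float:
    """Return the candidate threshold whose accuracy is closest to target.

    match_on is "sensitivity" or "specificity". Ties are resolved in favour
    of the lowest threshold (an arbitrary choice for this sketch).
    """
    if match_on not in ("sensitivity", "specificity"):
        raise ValueError("match_on must be 'sensitivity' or 'specificity'")
    index = 0 if match_on == "sensitivity" else 1
    # Include one candidate below all scores so that "recall all" is possible.
    candidates: List[float] = [min(scores) - 1.0] + sorted(set(scores))
    return min(
        candidates,
        key=lambda t: abs(
            sensitivity_specificity(scores, has_cancer, t)[index] - target),
    )


# Toy data (hypothetical): four cancers followed by six cancer-free exams.
toy_scores = [9.9, 9.8, 9.6, 9.0, 9.7, 9.4, 8.0, 5.0, 2.0, 1.0]
toy_cancer = [True] * 4 + [False] * 6

ai_sens = match_threshold(toy_scores, toy_cancer, 0.75, "sensitivity")
ai_spec = match_threshold(toy_scores, toy_cancer, 5 / 6, "specificity")
print(ai_sens, ai_spec)
```

In this toy example the specificity-matched threshold is higher than the sensitivity-matched one, so it produces fewer recalls.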

Performance metrics and reference standard

In both scenarios, the measures of detection accuracy were sensitivity and specificity as coprimary endpoints, and positive predictive value (PPV), negative predictive value (NPV), recall rate, and arbitration rate as secondary endpoints. The reference standard for positive cancer outcome was determined through histopathological verification of breast malignancy including non-invasive cancer, i.e. ductal carcinoma in situ, at screening (screen-detected cancer) or up until the next consecutive screening within 24 months (interval cancer). The reference standard for negative cancer outcome was defined as cancer-free follow-up until the next consecutive screening or within 24 months. The choice of a two-year follow-up period for the reference standard is in accordance with that commonly used in cancer registries and quality assessment of biennial screening programmes. However, breast cancer can be present long before it is diagnosed [18], and diagnostic work-up of AI-recalled cases is not performed to confirm the presence of such potential cancers. To take this potential bias into account and to investigate for early detection patterns, an exploratory analysis of detection accuracy was performed with inclusion of next-round screen-detected cancers (diagnosed in the subsequent screening) and long-term cancers (diagnosed > 2–7 years after screening).
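For reference, the endpoints named above follow directly from the cross-tabulation of screening decisions against the reference standard. The sketch below uses the standard definitions. The class and function names are hypothetical, and any counts passed in are illustrative rather than study data.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class ConfusionCounts:
    tp: int  # recalled, cancer confirmed by the reference standard
    fp: int  # recalled, cancer-free follow-up
    fn: int  # not recalled, cancer confirmed (e.g. interval cancer)
    tn: int  # not recalled, cancer-free follow-up


def accuracy_measures(c: ConfusionCounts) -> Dict[str, float]:
    """Compute the coprimary and most secondary endpoints from 2x2 counts."""
    total = c.tp + c.fp + c.fn + c.tn
    return {
        "sensitivity": c.tp / (c.tp + c.fn),
        "specificity": c.tn / (c.tn + c.fp),
        "ppv": c.tp / (c.tp + c.fp),
        "npv": c.tn / (c.tn + c.fn),
        "recall_rate": (c.tp + c.fp) / total,
    }


def arbitration_rate(n_arbitrations: int, n_examinations: int) -> float:
    """Share of examinations sent to a third, decisive reading."""
    return n_arbitrations / n_examinations
```

The arbitration rate is kept separate because it depends on the number of discordant reads rather than on the reference standard.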

Statistical analysis

Binomial proportions for the accuracy of AI and radiologists were calculated and supplemented by 95% Clopper-Pearson (‘exact’) confidence intervals (CI). AI accuracy was compared to that of radiologists using McNemar’s test or exact binomial test when discordant cells were too small. Accuracy analysis of all outcomes across radiologist position is presented in the supplementary material (eTable 1). To examine consistency of the AI accuracy among subgroup variables, detection rates were calculated by cancer subgroups. Furthermore, detection agreements and discrepancies between the radiologists and AI were investigated across cancer subgroups (Supplementary eTables 2–3). A p value of less than 0.05 was considered statistically significant. Stata/SE 17 (College Station, Texas 77845, USA) was used for data management and analyses.
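The interval and tests named above can be reproduced with standard-library Python. The analyses in the study were run in Stata, so the sketch below is an independent illustration: the Clopper-Pearson bounds are found by bisection on the binomial distribution, the asymptotic McNemar test is shown without continuity correction (an assumption), and the exact variant is a two-sided binomial test on the discordant pairs. All function names are hypothetical.

```python
import math
from typing import Callable, Tuple


def binom_cdf(k: int, n: int, p: float) -> float:
    """P(X <= k) for X ~ Binomial(n, p), summed in log space for stability."""
    if k < 0:
        return 0.0
    if k >= n or p <= 0.0:
        return 1.0
    if p >= 1.0:
        return 0.0
    total = 0.0
    for i in range(k + 1):
        log_pmf = (math.lgamma(n + 1) - math.lgamma(i + 1)
                   - math.lgamma(n - i + 1)
                   + i * math.log(p) + (n - i) * math.log1p(-p))
        total += math.exp(log_pmf)
    return min(total, 1.0)


def _bisect(func: Callable[[float], float], target: float,
            increasing: bool) -> float:
    """Solve func(p) = target for p in [0, 1], func monotone in p."""
    lo, hi = 0.0, 1.0
    for _ in range(60):
        mid = (lo + hi) / 2
        if (func(mid) < target) == increasing:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def clopper_pearson(x: int, n: int, alpha: float = 0.05) -> Tuple[float, float]:
    """Exact two-sided (1 - alpha) confidence interval for x successes of n."""
    lower = 0.0 if x == 0 else _bisect(
        lambda p: 1.0 - binom_cdf(x - 1, n, p), alpha / 2, increasing=True)
    upper = 1.0 if x == n else _bisect(
        lambda p: binom_cdf(x, n, p), alpha / 2, increasing=False)
    return lower, upper


def mcnemar_chi2(b: int, c: int) -> Tuple[float, float]:
    """Asymptotic McNemar test on discordant counts b and c (b + c > 0)."""
    stat = (b - c) ** 2 / (b + c)
    # Survival function of chi-square with 1 degree of freedom.
    return stat, math.erfc(math.sqrt(stat / 2))


def mcnemar_exact(b: int, c: int) -> float:
    """Two-sided exact binomial p value on the discordant pairs."""
    n = b + c
    if n == 0:
        return 1.0
    return min(1.0, 2 * binom_cdf(min(b, c), n, 0.5))
```

Here `b` and `c` are the two discordant cells of the paired 2x2 table, for example examinations recalled by the AI but not by the reader, and vice versa. Concordant pairs do not enter either test.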

Results

Study sample and characteristics

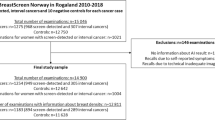

We retrieved a total of 272,008 unique screening mammograms from 158,732 women in the study population, among which 14,337 (5.3%) were excluded from the analyses (Fig. 2).

The characteristics of the 257,671 mammograms included in the analyses are summarised in Table 1. The sample included 2014 cancers (0.8%), of which 1517 (75.3%) were screen-detected, corresponding to a detection rate of 5.9 per 1000 screening mammograms. The recall rate was 2.7%.
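The headline figures of the study sample can be checked with a few lines of arithmetic. The sketch below only uses counts stated in the text. The variable names are hypothetical.

```python
# Counts as reported in the text.
retrieved = 272_008        # screening mammograms retrieved
excluded = 14_337          # mammograms excluded from the analyses
cancers = 2014             # cancers in the included sample
screen_detected = 1517     # screen-detected cancers

included = retrieved - excluded
inclusion_share = included / retrieved
exclusion_share = excluded / retrieved
prevalence = cancers / included
detection_rate_per_1000 = 1000 * screen_detected / included

print(f"included: {included} ({inclusion_share:.1%})")        # 257671 (94.7%)
print(f"excluded: {excluded} ({exclusion_share:.1%})")        # 14337 (5.3%)
print(f"cancer prevalence: {prevalence:.1%}")                 # 0.8%
print(f"detection rate: {detection_rate_per_1000:.1f}/1000")  # 5.9/1000
```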

The accuracy of the first reader in terms of sensitivity and specificity was 63.7% (95% CI 61.6%-65.8%) and 97.8% (97.7-97.8%), respectively (Table 2), which was used to choose the thresholds for the AI score. Hence, AIsens and AIspec used a Transpara exam score of 9.56858 and 9.71059, respectively. The distribution of the Transpara exam scores across the study sample has been visualised in the supplementary material (eFigure 1). The accuracy of the combined reading in terms of sensitivity and specificity was 73.9% (95% CI 72.0%-75.8%) and 97.9% (97.9-98.0%), respectively. The accuracy analysis across coprimary and secondary outcomes in both study scenarios is described in Table 2. Moreover, a comparison between the screening outcome and the reference standard (true and false positives and negatives) in both study scenarios, along with a descriptive workload analysis, is presented in the supplementary material (eTable 4).

Standalone AI accuracy

Standalone AIsens achieved a lower specificity (-1.3%) and PPV (-6.1%) and a higher recall rate (+ 1.3%) compared to first reader (p < 0.0001 for all). For the latter, this corresponded to 3369 (+ 48.3%) more recalls (Supplementary eTable 4). Standalone AIspec obtained a lower sensitivity (-5.1%; p < 0.0001) and PPV (-1.3%; p = 0.01) than first reader, while the recall rate at 2.7% was not significantly different (p = 0.24). In comparison to first reader, the cancer distribution, as detailed in Table 3, showed a higher proportion of detected interval cancers for Standalone AIsens by 100 (+ 17.8%) cancers and Standalone AIspec by 70 (+ 12.5%) cancers, while the detection of screen-detected cancers was lower by 100 (-6.8%) and 174 (-11.8%) cancers, respectively (p < 0.0001 for all). Breakdowns by cancer subgroups showed the differences to be distributed across all subgroups for both screen-detected cancers and interval cancers without any evident pattern for any of the variables (Table 4). However, subgroup analyses revealed underlying detection discrepancies between first reader and the AI system with a notable number of the AI-detected cancers being missed by first reader, and vice versa (Supplementary eTable 2).

AI-integrated screening accuracy

Integrated AIsens achieved a higher sensitivity by + 2.3% (p = 0.0004) compared to combined reading, at the cost of a lower specificity (-0.6%) and PPV (-3.9%), and higher recall rate (+ 0.6%) and arbitration rate (+ 2.2%) (p < 0.0001 for all). In absolute terms, this corresponded to 1708 recalls (+ 24.9%) and 5831 arbitrations (+ 78.4%) (Supplementary eTable 4). Integrated AIspec showed no significant difference in any of the outcome measures apart from a higher arbitration rate by + 1.1% (p < 0.0001), amounting to 2841 (+ 38.2%) arbitrations (Supplementary eTable 4). Compared to the combined reading, detection rates in relation to screen-detected cancers were lower for Integrated AIsens by 54 (-3.7%) cancers and for Integrated AIspec by 66 (-4.5%) cancers but were higher in relation to interval cancers by 100 (+ 17.8%) cancers and 79 (+ 14.1%) cancers, respectively (p < 0.0001 for all) (Table 3). Subgroup analyses showed a lower proportion of detection discrepancies compared to the Standalone AI scenario, with only few interval cancers being missed in the AI-integrated screening and detected by the combined reading, and no screen-detected cancers being missed by the combined reading (Supplementary eTable 3).

Next-round screen-detected and long-term cancers

When including next-round screen-detected cancers and long-term cancers in the accuracy analysis, the sensitivity of Standalone AI and Integrated AI at both thresholds was statistically significantly higher than that of first reader and combined reading, respectively (p < 0.0001 for all), while specificity was variously statistically significantly lower, higher, or not different (Supplementary eTable 5). However, the sensitivity of the index test and comparator was notably lower than that presented in Table 2.

Discussion

Summary of findings

We achieved a large representative study sample with a cancer detection rate and recall rate in line with previous reports on screening outcome from Danish screening rounds [4, 19]. In the Standalone AI scenario, the accuracy at both AI abnormality thresholds was found statistically significantly lower than that of the first reader across most outcome measures, mainly due to lower detection of screen-detected cancers. However, the AI system had a statistically significantly higher interval cancer detection rate and a higher accuracy across most outcome measures when next-round screen-detected cancers and long-term cancers were included in the cancer outcome. In the AI-integrated screening scenario, detection accuracy was at the level of or statistically significantly higher than the combined reading, depending on the chosen threshold, only with a slightly higher arbitration rate. A statistically significantly higher recall rate was observed for Integrated AIsens but not for Integrated AIspec. A notable proportion of cancers were missed by the AI system and detected by first reader, and vice versa, although detection discrepancies were to a lesser extent evident in the AI-integrated screening scenario.

Comparison with literature

Our results on Standalone AI accuracy corroborate findings observed by Leibig and colleagues who reported significantly lower sensitivity and specificity of an in-house and commercial AI system in a standalone AI pathway compared to a single unaided radiologist, when the threshold was set to maintain the radiologist’s sensitivity [20]. Schaffter and colleagues showed significantly lower specificity by both an in-house top-performing AI system and an aggregated ensemble of top-performing AI algorithms compared to first reader and consensus reading, when sensitivity was set to match that of first reader [21]. Conversely, multiple other studies reported equal or higher standalone AI accuracy compared to human readers [10,11,12, 22]; however, most had overall high risk of bias or applicability concerns according to several systematic reviews [13, 23, 24]. Numerous studies have explored different simulated screening scenarios with an AI system, for instance as reader aid or triage, and although many report higher AI accuracy, these also suffer from similar methodological limitations [13, 23, 24].

Among the possible implementation strategies within double reading, partial replacement with AI replacing one reader seems to be the preferred AI-integrated screening scenario by breast screening readers [25], although only few recent studies, other than the current, have investigated this scenario. Larsen and colleagues evaluated the same AI system tested in this study as one of two readers in a setting in which abnormal readings were sent to consensus [26]. Using different consensus selection thresholds in two scenarios yielded a lower recall rate, higher consensus rate, and overall higher sensitivity when including interval cancer. However, AI-selected cases for consensus, missing an original consensus decision in the dataset, were not included in the decision outcome of the scenarios, creating uncertainty around the reliability of the recall and accuracy estimates. Sharma and colleagues tested an in-house commercial AI system in a simulated double reading with AI as one reader, which showed non-inferiority or superiority across all accuracy metrics compared to non-blinded double reading with arbitration, although the arbitration rate was not reported [27]. The study used historical second reader decisions as arbitration outcomes in cases where the original arbitration was absent, meaning that the AI decision was not included in the comparison, which could have caused an underestimation of the differences in accuracy between the AI and the radiologists. An unpublished study by Frazer and colleagues evaluated an in-house AI system in a reader-replacement scenario in which the arbitration outcome for a missing historic arbitration was simulated by matching the retrospective third-reading performance, as in the current study [28]. Compared to double reading with arbitration, the AI-integrated screening scenario with the improved system threshold achieved higher sensitivity and specificity and a lower recall rate at the cost of a highly increased arbitration rate. Unfortunately, > 25% of the study population was excluded, mostly due to lack of follow-up, introducing a high risk of selection bias.

Methodological considerations and limitations

In addition to many studies lacking a representative study sample, comparison of results across the literature is further complicated by varying choice of comparators, reference standard, abnormality threshold levels, and inconsistency in applying accuracy measures in accordance with reporting guidelines [13, 29]. Contrary to previous research, the main strengths of this study were the unselected, consecutive population-wide cohort, availability of high-quality follow-up data with a low exclusion rate, and subspecialised breast radiologists as comparators, thereby representing a more reliable real-life population and reference standard. By simulating the arbitration decision to match the arbitrator’s accuracy, when original arbitrations were absent, we could achieve more realistic estimates of the accuracy outcomes in the AI-integrated screening scenario, although this did not take into account how AI implementation can alter radiologists’ behaviour or decisions in a clinical setting. It should be stressed that standalone applications of AI, as evaluated in this study, are for now neither clinically possible nor justified due to legal and ethical limitations among others.

Our work did have several limitations. The chosen AI score cut-off points were derived based on the sample in the current study which could lead to loss of generalisability to other screening populations with a differing screening setting and workflow, diverse ethnic groups, and imaging vendors among others. For instance, the image data in the study were derived from only one mammography vendor, limiting the generalisability of results to mammograms acquired from other sources. Hence, differences or changes in a screening site’s technical setup or other factors affecting image output should be considered when deciding on a relevant AI threshold in relation to AI deployment in clinical practice. This could prospectively be resolved by having a local validation dataset or procedure in case of any such changes or variations in external or internal factors related to the AI system, through which a site-based adaptive strategy for threshold selection can be devised.

Most other limitations were related to the retrospective nature of this study, among which is the lack of diagnostic work-up on cases recalled by the AI system but not by radiologists. If these were true positive but not detected within the same screening round, the accuracy of the AI system would be underestimated. Conversely, recalls of cases without cancer at screening but with an interval cancer developing before the next round would count as true positives, and since exact AI cancer-suspected areas were not evaluated for false positive markings, AI accuracy could have been overestimated. Hence, abnormal AI predictions could be clinically significant cancers, overdiagnosed cancers, or false positives. The magnitude of such potential prediction misclassifications and thereby bias skewing the accuracy estimates is difficult to assess in mammography screening without a gold standard for all participants, such as MRI or other imaging along with biopsy, as it would be unnecessary and unethical to subject all women to comprehensive testing. Our findings of a higher detection rate of interval cancers and higher accuracy in both scenarios, when including next-round screen-detected and long-term cancers (Supplementary eTable 5), could indicate a tendency towards an underestimation of AI accuracy due to the current definition of the reference standard and the lack of a gold standard in mammography screening. However, the number of true positive AI-detected cancers might be limited in view of findings in a previous study showing that only 58% of AI-marked interval cancers, which were considered missed by radiologists or had minimal radiographic malignancy signs (i.e. false negatives), were correctly located and could potentially be detected at screening [30]. This study used an older version of the same AI system as the current study but at a threshold score of 9.01 compared to 9.57 and 9.71 for AIsens and AIspec, respectively. Furthermore, the majority of interval cancers have been reported to be comprised of true or occult interval cancers [31], which even with AI-prompts would not be expected to be detected at screening or diagnostic work-up. These findings relating to interval cancers should not be less valid for next-round screen-detected and long-term cancers, and in particular cancers with a short doubling time, such as grade 3 tumours, making it unlikely for these to have been detected with an AI positive assessment. The reported results on interval cancers which were missed by human readers but detected by or with the AI system (Supplementary eTables 2–3), especially those diagnosed ≥ 12 months after screening, should therefore be interpreted with caution in light of the radiological and biological characteristics of interval cancers.

What further contributes to the uncertainty around estimates in accuracy studies of this type is the intrinsic verification bias due to different reference standards depending on the screening decision outcome [32]. The choice of management to confirm disease status was, for instance, correlated with the readers’ screen decisions, likely introducing a systematic bias favouring the accuracy of the radiologists.

While our study design reinforces the reliability and generalisability of the findings in this study, we recognise that more accurate quantification of the actual detection accuracy of AI requires prospective studies which have the advantage of estimating the effect of AI-integrated screening on detection accuracy and workload. This is further emphasised considering that the workload reduction achieved in this study for Integrated AIsens through decreasing human screen reads by > 48% would to some degree be counterbalanced by the observed increase in recall rate of almost 25% (Supplementary eTable 4). Only with Integrated AIspec, which showed a stable recall rate, could AI-integrated screening be considered feasible enough to ensure actual alleviation of workforce pressures, stressing the importance of selecting an appropriate AI threshold value. Well-designed randomised controlled trials are warranted to elucidate the implications of clinical implementation of AI as one of two readers in mammography screening, the choice of a clinically relevant threshold, as well as the effects on cancer detection, workflow, and radiologist interpretation and behaviour. The first two prospective studies only recently reported short-term results of population-based AI-integrated screening with positive screening outcome in terms of cancer detection rate and workload reduction, providing a promising outlook for safe AI deployment within mammography screening [33, 34].

Conclusions

In conclusion, findings of this retrospective and population-wide mammography screening accuracy study suggest that an AI system with an appropriate threshold could be feasible as a replacement of the first reader in double reading with arbitration. The spectrum of detected cancers differed significantly across multiple cancer subgroups with a general tendency of lower accuracy for screen-detected cancers and higher accuracy for interval cancers. Discrepancies in cancers detected by the AI system and radiologists could be harnessed to improve detection accuracy of particular subtypes of interval cancers by applying AI for decision support in double reading.

Data availability

The image dataset and local radiology dataset collected for this study are not publicly available due to Danish and European regulations for the use of data in research, but investigators are on reasonable request encouraged to contact the corresponding author for academic inquiries into the possibility of applying for deidentified participant data through a Data Transfer Agreement procedure. The registry datasets from the Danish Breast Cancer Cooperative Group (DBCG) and the Danish Quality Database on Mammography Screening (DKMS) used and analysed during the current study are available from the Danish Clinical Quality Program – National Clinical Registries (RKKP), but restrictions apply to the availability of these data, which were used under license for the current study, and so are not publicly available. Data are, however, available upon reasonable request to and with permission from the RKKP, provided that the necessary access requirements are fulfilled.

Abbreviations

- AI: Artificial intelligence
- AIsens: Artificial intelligence score cut-off point matched at mean first reader sensitivity
- AIspec: Artificial intelligence score cut-off point matched at mean first reader specificity
- CE: Conformité Européenne
- CI: Confidence interval
- DCIS: Ductal carcinoma in situ
- DICOM: Digital Imaging and Communications in Medicine
- FDA: Food and Drug Administration
- NPV: Negative predictive value
- PPV: Positive predictive value
- RSD: Region of Southern Denmark
- SD: Standard deviation
- STARD: Standards for Reporting Diagnostic Accuracy Studies

References

World Health Organization. Guide to cancer early diagnosis. Geneva: World Health Organization; 2017.

Berry DA, Cronin KA, Plevritis SK, Fryback DG, Clarke L, Zelen M, et al. Effect of screening and adjuvant therapy on mortality from Breast cancer. N Engl J Med. 2005;353(17):1784–92.

European Commission. Cancer Screening in the European Union (2017): Report on the implementation of the Council Recommendation on cancer screening. 2017. https://ec.europa.eu/health/sites/health/files/major_chronic_diseases/docs/2017_cancerscreening_2ndreportimplementation_en.pdf. Accessed 22 Apr 2023.

Mikkelsen EM, Njor SH, Vejborg I. Danish quality database for Mammography Screening. Clin Epidemiol. 2016;8:661–6.

Lynge E, Beau A-B, von Euler-Chelpin M, Napolitano G, Njor S, Olsen AH, et al. Breast cancer mortality and overdiagnosis after implementation of population-based screening in Denmark. Breast Cancer Res Treat. 2020;184(3):891–9.

Perry N, Broeders M, de Wolf C, Törnberg S, Holland R, von Karsa L. European guidelines for quality assurance in Breast cancer screening and diagnosis. 4th ed. Luxembourg: Office for Official Publications of the European Communities; 2006.

Danish Health Authority. Kapacitetsudfordringer på brystkræftområdet: Faglig gennemgang af udfordringer og anbefalinger til løsninger. 2022. https://www.sundhedsstyrelsen.dk/-/media/Udgivelser/2022/Kraeft/Brystkraeft/Faglig-gennemgang-og-anbefalinger-til-kapacitetsudfordringer-paa-brystkraeftomraadet.ashx. Accessed 22 Apr 2023.

Chockley K, Emanuel E. The end of Radiology? Three threats to the future practice of Radiology. J Am Coll Radiol. 2016;13(12 Pt A):1415–20.

Obermeyer Z, Emanuel EJ. Predicting the Future - Big Data, Machine Learning, and Clinical Medicine. N Engl J Med. 2016;375(13):1216–9.

Rodriguez-Ruiz A, Lang K, Gubern-Merida A, Broeders M, Gennaro G, Clauser P, et al. Stand-alone Artificial intelligence for Breast Cancer detection in Mammography: comparison with 101 radiologists. J Natl Cancer Inst. 2019;111(9):916–22.

McKinney SM, Sieniek M, Godbole V, Godwin J, Antropova N, Ashrafian H, et al. International evaluation of an AI system for Breast cancer screening. Nature. 2020;577(7788):89–94.

Lotter W, Diab AR, Haslam B, Kim JG, Grisot G, Wu E, et al. Robust Breast cancer detection in mammography and digital breast tomosynthesis using an annotation-efficient deep learning approach. Nat Med. 2021;27(2):244–9.

Freeman K, Geppert J, Stinton C, Todkill D, Johnson S, Clarke A, et al. Use of artificial intelligence for image analysis in Breast cancer screening programmes: systematic review of test accuracy. BMJ. 2021;374:n1872.

European Commission Initiative on Breast Cancer. Use of artificial intelligence. 2022. https://healthcare-quality.jrc.ec.europa.eu/ecibc/european-breast-cancer-guidelines/artificial-intelligence. Accessed 11 Mar 2023.

Bossuyt PM, Reitsma JB, Bruns DE, Gatsonis CA, Glasziou PP, Irwig L, et al. STARD 2015: an updated list of essential items for reporting diagnostic accuracy studies. BMJ. 2015;351:h5527.

Christiansen P, Ejlertsen B, Jensen MB, Mouridsen H. Danish Breast Cancer Cooperative Group. Clin Epidemiol. 2016;8:445–9.

Lauritzen AD, Rodríguez-Ruiz A, von Euler-Chelpin MC, Lynge E, Vejborg I, Nielsen M, et al. An Artificial Intelligence-based Mammography Screening protocol for Breast Cancer: outcome and radiologist workload. Radiology. 2022:210948.

Förnvik D, Lång K, Andersson I, Dustler M, Borgquist S, Timberg P. Estimates of Breast Cancer Growth Rate from Mammograms and its Relation to Tumour Characteristics. Radiat Prot Dosimetry. 2016;169(1–4):151–7.

Lynge E, Beau AB, Christiansen P, von Euler-Chelpin M, Kroman N, Njor S, et al. Overdiagnosis in Breast cancer screening: the impact of study design and calculations. Eur J Cancer. 2017;80:26–9.

Leibig C, Brehmer M, Bunk S, Byng D, Pinker K, Umutlu L. Combining the strengths of radiologists and AI for Breast cancer screening: a retrospective analysis. Lancet Digit Health. 2022;4(7):e507–e19.

Schaffter T, Buist DSM, Lee CI, Nikulin Y, Ribli D, Guan Y, et al. Evaluation of combined Artificial Intelligence and Radiologist Assessment to Interpret Screening mammograms. JAMA Netw Open. 2020;3(3):e200265.

Kim H-E, Kim HH, Han B-K, Kim KH, Han K, Nam H, et al. Changes in cancer detection and false-positive recall in mammography using artificial intelligence: a retrospective, multireader study. Lancet Digit Health. 2020;2(3):e138–e48.

Anderson AW, Marinovich ML, Houssami N, Lowry KP, Elmore JG, Buist DSM, et al. Independent External Validation of Artificial Intelligence Algorithms for Automated Interpretation of Screening Mammography: a systematic review. J Am Coll Radiol. 2022;19(2 Pt A):259–73.

Hickman SE, Woitek R, Le EPV, Im YR, Mouritsen Luxhøj C, Aviles-Rivero AI, et al. Machine learning for Workflow Applications in Screening Mammography: systematic review and Meta-analysis. Radiology. 2021;302(1):88–104.

de Vries CF, Colosimo SJ, Boyle M, Lip G, Anderson LA, Staff RT, et al. AI in breast screening mammography: breast screening readers’ perspectives. Insights into Imaging. 2022;13(1):186.

Larsen M, Aglen CF, Hoff SR, Lund-Hanssen H, Hofvind S. Possible strategies for use of artificial intelligence in screen-reading of mammograms, based on retrospective data from 122,969 screening examinations. Eur Radiol. 2022;32(12):8238–46.

Sharma N, Ng AY, James JJ, Khara G, Ambrózay É, Austin CC, et al. Multi-vendor evaluation of artificial intelligence as an Independent reader for double reading in Breast cancer screening on 275,900 mammograms. BMC Cancer. 2023;23(1):460.

Frazer HML, Peña-Solorzano CA, Kwok CF, Elliott M, Chen Y, Wang C, et al. AI integration improves Breast cancer screening in a real-world, retrospective cohort study. medRxiv. 2022:2022.11.23.22282646 (preprint).

Liu X, Faes L, Kale AU, Wagner SK, Fu DJ, Bruynseels A, et al. A comparison of deep learning performance against health-care professionals in detecting Diseases from medical imaging: a systematic review and meta-analysis. Lancet Digit Health. 2019;1(6):e271–e97.

Lång K, Hofvind S, Rodríguez-Ruiz A, Andersson I. Can artificial intelligence reduce the interval cancer rate in mammography screening? Eur Radiol. 2021;31:5940–7.

Houssami N, Hunter K. The epidemiology, radiology and biological characteristics of interval breast cancers in population mammography screening. NPJ Breast Cancer. 2017;3:12.

Taylor-Phillips S, Seedat F, Kijauskaite G, Marshall J, Halligan S, Hyde C, et al. UK National Screening Committee’s approach to reviewing evidence on artificial intelligence in Breast cancer screening. Lancet Digit Health. 2022;4(7):e558–e65.

Lång K, Josefsson V, Larsson AM, et al. Artificial intelligence-supported screen reading versus standard double reading in the Mammography screening with Artificial Intelligence trial (MASAI): a clinical safety analysis of a randomised, controlled, non-inferiority, single-blinded, screening accuracy study. Lancet Oncol. 2023;24:936–44.

Dembrower K, Crippa A, Colón E, Eklund M, Strand F. Artificial intelligence for Breast cancer detection in screening mammography in Sweden: a prospective, population-based, paired-reader, non-inferiority study. Lancet Digit Health. 2023.

Acknowledgements

We are grateful to the Region of Southern Denmark for the funding of this study. We thank ScreenPoint Medical for providing the AI system for this study. We are grateful to the Danish Clinical Quality Program – National Clinical Registries (RKKP), the Danish Breast Cancer Cooperative Group (DBCG) and the Danish Quality Database on Mammography Screening (DKMS) for the provision of data. We thank Henrik Johansen (Regional IT) for technical assistance and data management. We thank all supporting breast radiologists and mammography centres in the Region of Southern Denmark for contributing their expertise and collaboration during the conduct of the study. We thank the women and patients for their participation. The authors alone are responsible for the views expressed in this article, which do not necessarily represent the decisions, policies, or views of the Region of Southern Denmark or any other collaborator.

Funding

The study was funded through the Innovation Fund by the Region of Southern Denmark (grant number 10240300). The funder of the study had no role in study design, data collection, data analysis, data interpretation, or writing of the report.

Open access funding provided by University of Southern Denmark.

Author information

Contributions

MTE, OGr, MN, LBL and BSBR contributed to conceptualization of the study, project administration, or supervision. OGr and BSBR secured funding. MTE, SWS, MN, KL and OGe contributed to management, analysis, or interpretation of data. MTE wrote the first draft of the manuscript and had the final responsibility for the decision to submit for publication. All authors contributed to revision of the manuscript critically for important intellectual content and approval of the final version to be submitted for publication. All authors had access to all data, and MTE and SWS had access to and verified raw data.

Ethics declarations

Ethics approval and consent to participate

Ethical approval was obtained from the Danish National Committee on Health Research Ethics (identifier D1576029) which waived the need for individual informed consent.

Consent for publication

Not applicable.

Competing interests

MN holds shares in Biomediq A/S. KL has been an advisory board member for Siemens Healthineers and has received a lecture honorarium from AstraZeneca. All other authors declare that they have no competing interests.

Additional information

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/. The Creative Commons Public Domain Dedication waiver (http://creativecommons.org/publicdomain/zero/1.0/) applies to the data made available in this article, unless otherwise stated in a credit line to the data.

About this article

Cite this article

Elhakim, M.T., Stougaard, S.W., Graumann, O. et al. Breast cancer detection accuracy of AI in an entire screening population: a retrospective, multicentre study. Cancer Imaging 23, 127 (2023). https://doi.org/10.1186/s40644-023-00643-x
