Abstract

We present an attempt to improve the quality of the geomagnetic field measurements from the Polar Orbiting Geophysical Observatory (POGO) satellite missions in the late 1960s. Inaccurate satellite positions are believed to be a major source of errors for using the magnetic observations for field modelling. To improve the data, we use an iterative approach consisting of two main parts: one is a main field modelling process to obtain the radial field gradient to perturb the orbits and the other is the state-of-the-art GPS orbit modelling software BERNESE to calculate new physical orbits. We report results based on a single-day approach showing a clear increase of the data quality. That single-day approach leads, however, to undesirable orbital jumps at midnight. Furthermore, we report results obtained for a much larger data set comprising almost all of the data from the three missions. With this approach, we eliminate the orbit discontinuities at midnight but only tiny quality improvements could be achieved for geomagnetically quiet data. We believe that improvements to the data are probably still possible, but it would require the original tracking observations to be found.

Similar content being viewed by others

Background

Measurements of the magnetic field during the previous century are of importance for characterising the secular variation. Starting with Cosmos-49 in 1964, satellite data has played an increasingly important role in defining the magnetic field, culminating in the present-day Swarm mission beginning in 2013 (Olsen and The Scarf team 2013). Here we report on data collected by the series of Orbiting Geophysical Observatory (OGO) satellites from the 1960s. These data quite likely suffer from imprecision in their geographical positions as a result of poor tracking abilities and rudimentary gravitational models. We feel that there is an opportunity to improve these heritage data by using up-to-date methods and gravity fields. As we shall see, the original tracking data were lost, so our efforts represent a compromise at what could be done if the original data were to be found.

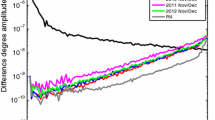

The aim of our study is to attempt to correct the positions of the geomagnetic field measurements of the OGO missions using the advanced orbit software BERNESE and to check the new orbits by investigating how compatible the magnetic data are with a magnetic potential field. BERNESE takes an initial orbit and produces a new admissible orbit which is as close as possible to the old orbit. One can use BERNESE to show that the supplied OGO orbits are not physically possible orbits. The top panel of Fig. 1 shows that BERNESE alters OGO orbits by up to ±200 m. For comparison, we show the same results for the much more recent CHAMP satellite from September 2004 in the bottom panel of the same figure. Note that the scale for the CHAMP data is a tenth of that for the OGO data. The ultimate aim of correcting orbit positions is to obtain satellite data with improved data quality for that time which then can further be used to study the 1969 jerk or to improve historical field models. This idea grew out of the 4th Ørsted International Science team meeting and is discussed in Jackson and Olsen (2003), and we present it in this special issue in the spirit of aiming to improve chracterisations of the secular variation of the historical field.

The mid-1960s of the last century, 8 years after the first outer space satellite Sputnik, heralded the start of the OGO programme. Its primary objective was to conduct a diverse number of experiments comprising scientific and technological measurements within the Earth’s atmosphere, the magnetosphere and cislunar space to obtain a better understanding of the Earth-Sun relations and of the Earth as a planet. The polar orbit (POGO) mission, within the OGO programme, was conducted at lower altitudes with perigee and apogee heights of 400 and 1510 km above the Earth, respectively. This orbit, with inclination of 82° or greater, provides thorough coverage of the polar regions (Gleghorn and Wiggins 1965). Out of the six OGO satellites, only the three low altitude POGO satellites (OGO-2, OGO-4 and OGO-6) are of interest for the purpose of geomagnetic field modelling. A short POGO mission summary is given in Table 1. However, the magnetic field measurements are associated with large a priori orbit errors. Therefore, the error budget is dominated by orbit errors, which could contribute up to ±25 nT (we state standard deviations throughout). Geopotential models, which are used to determine valid orbits, have been improved enormously over the last few decades. In 1981, Taylor et al. (1981) already showed that a more sophisticated gravity model can introduce magnetic anomalies, at POGO satellite altitude, of 1.6 to 3.2 nT, depending on the latitude. Therefore, reprocessing the orbits with the use of a state-of-the-art geopotential model could probably increase the data quality. But simply correcting the given POGO orbits leads to valid orbits which are potentially biased by the POGO orbits, which we believe themselves to be in error. For that reason, we tried to avoid the dependencies on old orbits by perturbing these orbits in a manner described in the ‘Orbit perturbation’ section. The overall aim is to find orbits which reduce residuals in magnetic field models whilst simultaneously being themselves true possible orbits. Despite considerable efforts, the original tracking data seems to have been lost. Thus, the optimal strategy of a full reprocessing of the original data is not available to us.

Following Gleghorn and Wiggins (1965), the tracking functions and command of the OGO were designed to be compatible with the National Aeronautics and Space Administration’s Minitrack Network as well as prime data acquisition stations located at Rosman, North Carolina; Fairbanks, Alaska; Australia; Johannesburg, South Africa and Quito, Ecuador. Orbits of most satellites before the OGO programme had been determined by the use of the worldwide satellite network, formerly known as the Minitrack Network. This network of tracking stations and the necessary computational techniques were well established and were also used in the OGO programme. The use of two ground tracking stations simultaneously permitted high accuracy trilateration of the satellite. This system was supplemented by a range and range-rate system which was expected to permit the more accurate computation of the orbit parameters in a shorter time, especially in the case of the highly eccentric orbit in which the satellite spent a large fraction of its time at large distances from the Earth where the angular rates are very low. The overall goal of the tracking programme was to be able to determine the position of the satellite at all times within a sphere of uncertainty having a radius of 1 km or less at radial distances of less than 1000 km and of 100 km at radial distances of 17 Earth radii (Ludwig 1963).

The total magnetic field strength (intensity measurement) was measured with a pair of optically pumped, self-oscillating dual-cell rubidium vapour magnetometers. The instruments were designed to measure a field range from 15,000 to 64,000 nT. OGO-2 and OGO-4 measured with a sampling interval of about 0.5 s whereas OGO-6 measured on a 0.288-s interval (Cain and Langel 1971; Ludwig 1963). The sensor was mounted on the end of a 6-m-long boom to reduce the noise from the spacecraft itself which was tested prior to launch and was found to be less than 1 nT at the sensor location. The accuracy of the instrument itself is better than 2 nT as direct comparison with proton magnetometers have shown (Farthing and Folz 1967). The noise resulting from the digitization (measuring the frequency over a finite interval) is ±4 nT for OGO-2 and OGO-4 and ±6 nT for OGO-6. Another error source comparable to that of the instrument is the absolute time assigned to any given measurement. Because of the movement of the satellite, the field is changing up to 40 nT/s. Therefore, an error of about 25 ms results in a measurement error of about 1 nT. Since the timing accuracy is estimated to be better than 30 ms with some rare excursions of 60 ms, it is likely that the time error is of order of 1 nT (Cain and Langel 1971). However, positioning errors overshadow the rather small instrumental and timing errors as already mentioned earlier. The standard deviation of the errors from all known sources, including inaccuracies in orbital position, was found after modelling to be 5.63 nT (Langel 1974). Examinations with geomagnetic field models, however, show that an altitude error of 100 m results in a measurement error of about 2.5 nT whereas a horizontal error of the same size results in a measurement error of about 0.5 nT. Remember again that the target tracking accuracy at low altitude was 1 km, which would corresponds to a maximum error of 25 nT. The maximum and the rms of the intensity errors per 100 m due to positioning errors at 400 km altitude from geomagnetic field models are shown in Table 2.

POGO orbits were determined using simple gravity field models up to spherical harmonic degree 7 and order 6, plus three higher order resonance terms (Taylor et al. 1981). Thus, there are two sources contributing to positional error: i) imprecision in determination of actual spot position, through range and range-rate determination; and ii) imprecision in reduction of the data to produce valid orbits, i.e. determination of observations when only timing information was available. Unfortunately, the unprocessed original data are not available any more and we are left only with processed data which are available from the Goddard Space Flight Center (Greenbelt, Maryland, USA). Therefore, a reprocessing of the orbits with BERNESE, using a state-of-the-art gravity field and tidal models, may reduce the position errors and hence increase the data quality. To remove the potential bias from the old orbit in an objective way, we radially perturb the orbits according to the maximum radial gradient of |B|, reducing the residual to a magnetic potential field. For more detail about our approach, see the ‘Data compilation’ section.

The data compilation that we used is described in the next section. In the ‘Results and discussion’ section, we report the results obtained by processing a single day only, namely August 8, 1969, together with the results obtained by using the combined-day approach. A discussion of the results obtained is given in the ‘Conclusions’ section.

Methods

Data compilation

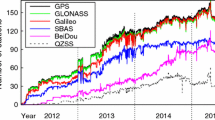

OGO-2 acquired data from October 14, 1965 until October 2, 1967, but because of an early failure in the attitude control system, the measurements were limited to twilight local times (when the orbit was in full sunlight). OGO-4 operated almost continuously from July 28, 1967 until January 19, 1969, and OGO-6 from June 5, 1969 until August 29, 1970 (Cain and Langel 1971; Langel 1974). Because of gaps in the days, not all the available observations have been taken into account for our processing. Only days within a longer continuous period of days have been selected, in order to be able to do a sensible orbit estimation with BERNESE. The range of the chosen days goes from January 22, 1966 until August 24, 1970. That data set was further decimated to a datum interval of minimum 10 s for a better data handling. We refer to that data set as POGO_corr. As a next step that data set has been processed once by the BERNESE software resulting in new physical orbits which are then our starting data for the process flow described in the ‘Processing’ subsection (see also Fig. 2).

However, for the modelling process, only geomagnetically quiet data were selected. By geomagnetically quiet data, we mean: i) at all latitudes, the D st -index must change by less than 2.1 nT/h; ii) at all latitudes, it is required that K p≤1+ and that Kp of the previous 3-h interval is ≤2o and iii) only data from dark regions (sun 5° below horizon) were used. Such selection criteria have in the past successfully enabled the construction of high-resolution models of both the core and crustal magnetic field. These quiet data are further reduced to those with residuals of less than 30 nT with respect to the prediction of the combined field from the CM4 main field model (Sabaka et al. 2004) up to spherical harmonic degree 13 at each epoch and the xCHAOS magnetospheric field model (Olsen and Mandea 2008). From these quiet data, the xCHAOS magnetospheric field model predictions, |B ext|, are removed according to the following formula

where F mod is the total field intensity used for the modelling, F obs is the observed total field intensity, Δ F is the portion of the magnetospheric field to the observed total field intensity and B main is the CM4 main field at corresponding epoch. We refer to that data set as POGO_mod. Justification of the use of the xCHAOS magnetospheric model derives from the following rationale: Of the three external constituent parts, the first two are assumed to be stationary in the SM and GSM frames, respectively. This means they are assumed to be independent of the solar cycle phase and thus identical in the 1960s and the years 2000–2010, which is the time span from which the model coefficients have been derived. The time variation of those two parts contains daily and seasonal variations, given by the ‘wobble’ of the GSM and SM frames wrt a fixed location on Earth. The third part depends explicitly on the D st -index (more concretely: on the decomposition of D st into its external part E st and its induced part I st ) and therefore has an explicit time dependence (given by that of D st ). The estimated regression coefficients are assumed to be solar cycle independent, but we used the actual values of D st =E st +I st of the POGO time instant to correct the POGO magnetic observations for magnetospheric field contributions. The xCHAOS model coefficients have been derived using the Ørsted and CHAMP data including solar maximum and minimum conditions. The model fits the observations equally well for both conditions, indicating that the assumed solar cycle independence is justified.

We note that the use of CM4 for removing outliers is slightly circular, since CM4 itself was built using POGO data; however, the tolerence level of 30 nT is sufficiently large that we do not expect that this has a substantial effect. The error budgets for the modelling were set very conservatively to 7 nT independent of the measurement location, even though it can be assumed that the real errors of the original data are around 5.6 nT (Langel 1974). The first POGO_mod data set contains about 696,997 observations.

Note that with each processing loop, a new POGO_mod and POGO_corr data set was generated since satellite positions are modified in each iteration step. Figure 3 shows the temporal distribution (the number of observations per month) of the POGO_corr data set in the left diagram and of the first POGO_mod data set in the right diagram. Note that only an eighth of the total data can be used for the modelling.

For the single-day approach, the day with the most measurements was chosen from the original data subset, which is August 8, 1969. The orbit tracks for the day are shown in Fig. 4 in a Robinson projection. However, to obtain a sufficiently good spatial data coverage, we did not apply the aforementioned quiet data selection criteria. Taking the geomagnetic quiet data only would lead to the small section of the total arc which is marked in red in Fig. 4. Therefore, we only chose the observations with a residual of less than 30 nT to the prediction of the CM4 main field model (Sabaka et al. 2004) (up to degree 13) after removing the xCHAOS magnetospheric field model (Olsen and Mandea 2008) prediction. That data set than contains about 7907 observations for the first iteration process out of the total of 8311 measurements for that particular day. Again, for every iteration, new data sets were generated. In the next section, we describe the different methods and notations used to perform the orbit corrections.

BERNESE GPS software

The BERNESE GPS software was developed by the Astronomical Institute of the University of Bern, AIUB, Switzerland. It includes a reduced dynamics orbit generator. The force model in the orbit generator includes i) Earth’s gravitational potential to selected order and degree, here 120; ii) gravitational effects from Sun, Moon, Jupiter, Venus, and Mars; and iii) elastic Earth tidal corrections, pole tide and ocean tides. Further, the solar radiation pressure and air drag are estimated and applied as pseudo-stochastic pulses (instantaneous velocity changes at specified epochs) in order to make the best fit between the input orbit and the reduced dynamics orbit. However, it must be said that the BERNESE software originally is intended to determine orbits for the GPS satellites and it could not be guaranteed that it also will work properly for our purpose because the POGO time was earlier than the actual GPS invention (personal communication of R. Dach, head GPS research and BERNESE development group at AIUB). Further, note that BERNESE is not able to generate unique orbits, it rather verifies if the input orbit points come from a physical orbit or not.

For a single-day input orbit, the new reduced dynamics orbit can easily be generated within one arc. However, by processing all days as single days, it is certainly sure that the new orbits are not continuous at midnight which of course is the case for the real satellite orbit. Because of memory and runtime limitations, it was not possible to generate all the new orbits for one POGO mission into one arc, which would have been the optimal satellite solution. We tackled the continuity problem in such a way that we used a moving window fit (similar to a moving average) consisting of 5 days fitting within three arcs. That leads to a middle arc which is overlapping into the neighbouring days by 8 h. The individual discontinuities between the three arcs are negligibly small. For some days, BERNESE was unable to produce valid orbits with that setup; in these cases, we used either a 3-day fitting window or the arcs from neighbouring days (±2 days). In some rare cases, we were forced to use a single-day fit only. The new orbits modified all three coordinates (radius, co-latitude and longitude) of the original orbit.

The new orbit coordinates are generated on a regular time interval. We were using a 1-s time interval which was on one side dense enough for the chosen data (see the ‘Data compilation’ section for more information about the used data sets) and on the other side small enough to be able to handle the output files in a sensible manner. Since the original data were not measured on the same time grid, linear interpolation was used within the time intervals to obtain the new positions for the time steps of the original data.

Main field modelling

Notations and concept used here for the main field modelling are standard (see, for example, Langel 1987). We adopt the spherical polar co-ordinate system and denote position by r=(r,θ,ϕ) where r is radius, θ is co-latitude and ϕ is the longitude. In what follows, we assume that the mantle and the atmosphere are an insulator where no electric currents flow so the magnetic field B can be derived from a potential field and can thus be represented by B=−∇V, where V is the magnetic potential. As there are no magnetic monopoles (∇·B=0), we look for the solution of Laplace’s equation, ∇2 V=0. The radial component of the magnetic field, B r , entirely describes the potential V for this problem with Neumann boundary conditions. Note B r only describes the potential if one assumes that the sources are entirely of internal (or entirely of external) origin. The solution for the potential V accounting only for internal sources is conventionally written in terms of a spherical harmonic expansion

where \({g_{l}^{m}},{h_{l}^{m}}\) are the time depending Gauss coefficients and \({P_{l}^{m}}\) are the associated Legendre functions. Here we take L=10 as the maximum spherical harmonic degree. Following Bloxham and Jackson (1992), we use B-splines of order 4 as the basis functions, M n (t), to represent the time dependence of the coefficients \({g_{l}^{m}}\),

with a similar expression for the \({h_{l}^{m}}\). The chosen time span is 1966–1971 using a knot spacing of 0.5 years.

For the inverse problem, we use a robust L 1-norm measure. Model estimation methods using such an L 1-norm measure of misfit have been found to perform well in geomagnetic field modelling applications (Lesur et al. 2008; Thomson and Lesur 2007; Walker and Jackson 2000). We implemented the L 1-norm using an iteratively reweighted least squares (IRWLS) algorithm (Scales et al. 1998; Walker and Jackson 2000).

The standard non-regularised least squares problem (Aster et al. 2005; Gubbins 2004) involves solving an optimisation problem to find the model m which minimises the objective function

where d are the field observations, m are the model parameters and A is the forward functional matrix, W k is a weighting matrix derived from the misfit of each datum in the previous (kth) model iteration (Walker and Jackson 2000) and C e is the data covariance matrix containing information concerning estimated errors: we take it to be diagonal, consistent with the assumption of independence of errors. We do not apply any weighting to account for variations in data density over the sphere, meaning that we aim to fit each datum equally well.

To get a solution for our optimisation problem, we used a quasi-Newton scheme (see, for example, Luenberger 1969; Tarantola 2005). This may be written in the form

where μ k are real constants small enough to avoid divergence of the algorithm and large enough to allow the algorithm to advance. The factors μ k are here chosen to be unity, since the Hessian metric usually accounts sufficiently well for the local geometry of the objective function. Substituting (4) into (5) and taking μ k =1 leads to the scheme

Note that when W k =I, where I is the identity matrix, this scheme reduces to that for the conventional L 2-norm measure of misfit. Iteration is required to find a solution because W k depends on m k and because we use scalar (intensity) data rather than vector data. Note that for the single-day results presented in the ‘Results and discussion’ section, the time-independent version of (2) and (6) was used for the field modelling.

Orbit perturbation

To account for the bias in the orbits, we were interested in the gradient of the magnetic field in respect to the altitude. We restrict ourselves to the radial gradient because that is the gradient with the largest impact according to Table 2. The linear approximation for a radially disturbed scalar field prediction, F(r), is given by

Applying Eq. 7 to a zero residual condition leads to

where δ F is the residual F obs(r)−F(r).

Therefore, the radial perturbation is given by the residual and the partial derivative through

However, to obtain reasonable orbit changes with BERNESE (reducing the number of critical days producing errors), a threshold ρ is set for dr, so that the maximum radial perturbation per iteration is less than that threshold, |d r|≤ρ. For the single-day analysis, ρ was set to 300 m whereas for the combined-day approach, ρ was reduced to 150 m after the first three iterations.

Processing

To remove the bias of the processed original orbits in an objective way, the following scheme, see Fig. 2, was thought to be appropriate and was therefore applied:

-

Use the original data as the first input orbits for the BERNESE software;

-

The new orbits are used for the main field modelling;

-

For each data point, find the residual to that model, calculate the maximum gradient of |B| with respect to the radius (see Eq. 9), and perturb the altitude according to that;

-

These new points, which are now on inadmissible orbits, build the new input orbits for the next run with the BERNESE software;

-

That process is thought to be iterative to find a convergence between the introduced perturbation due the magnetic field gradient and the orbit changes in BERNESE. That means that the corrections induced due to the residuals from the magnetic field model are back corrected by BERNESE.

Note that the orbit perturbations are applied to both quiet and noisy data whereas for the field modelling, only quiet data was used. That is because all the data should be used for BERNESE since magnetic quiet data do not always cover long enough arcs as it can be seen, for example, in the red section of the arc in Fig. 4 for the case of August 8, 1968. Therefore, longer arcs are preferable due to the non-uniqueness of the new orbits created by BERNESE. In the following section, we present the results obtained from the single day as well as with combining all the data described in the ‘Data compilation’ section.

Results and discussion

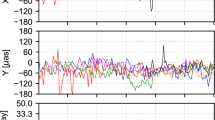

First, we report results based on the single-day corrections only. For all the single-day orbit corrections, we used a spherical harmonic field model up to degree 10 with zero spatial damping. We conducted 35 process iterations according to the scheme described in the ‘Processing’ subsection and Fig. 2. It turned out that after iteration 10, we were not able to further decrease the rms value of the residuals anymore as well as the minimum and maximum value. Instead, they started to increase again with the following iterations. But up to iteration 10, all the different residual values could be reduced by more than 25 % and the minimum value even by nearly 60 %. Furthermore, the number of data that could be used for the field modelling process increased with a maximum number of data after iteration 7. Therefore, more residuals fall bellow our 30-nT threshold in the data selection criteria. A detailed summary of the residuals of the first 12 iterations is presented in Table 3 together with the values obtained from the original data. Looking at Table 3, one also can see that the maximum and minimum values settle down to values around 49 and −38 nT, respectively. Figure 5 shows the normalised probability density of the residuals from the original data set (red) used for modelling and the modelling data obtained after 10 iterations (blue). It clearly shows a decrease of the residuals.

Dimensional residual distributions for the single-day analysis. The red curve shows the residuals of the original data (August 8, 1969) used for the field modelling, and the blue curve shows the residuals of the modelling data after 10 iterations. μ is the mean value of the residuals, and σ is their rms value

After the promising single-day results, we report now the results that we have achieved using the much larger POGO_mod data set (described in the ‘Data compilation’ section) covering most of the three POGO satellite missions. The setup for the field modelling was the same as that of the single-day approach, the only difference being the use of a time-dependent field model. As already mentioned in the ‘Main field modelling’ section, the temporal damping was also set to zero similar to the spatial one. We were solving for a field model up to spherical harmonic degree 14. However, only the spherical harmonics up to degree 10 of the obtained field model were used to calculate the orbit perturbation to reduce the field residuals. Note also, as it is shown in Fig. 3, that only about an eighth of the POGO_corr data set could be used for the field modelling process after applying our quiet day selection criteria. Unlike to the single-day analysis, we only did six full process iterations. The reason for the rather small number of iterations is an increasing number of days producing errors in the BERNESE step (see the ‘BERNESE GPS software’ section for more information) as more iterations were performed. This was despite reducing the threshold for the maximum radial perturbation after the first three iterations from 300 to 150 m. The dimensional residual distributions of the data to the best possible model, obtained from the POGO_mod data set, are shown in Fig. 6. The original data are presented in red whereas the data obtained after six process iterations are shown in blue. We do not to present a table similar to Table 3 for the combined-day approach because the reader does not gain more information than is already presented in Fig. 6.

The reader may ask themselves whether one should worry about the so-called the Backus effect when making field models from purely intensity data. It is certainly the fact that the models derived are non-unique, but here our purposes are different; we seek to look at whether the data are fitted to an adequate level. The problem of non-uniqueness is not one that bears on this latter point.

Conclusions

From the analysis of the residuals in Table 3 and Fig. 5, it appears that we were able to improve the data quality for a single day applying our iterative approach. However, our iterative scheme (see the ‘Processing’ subsection) did not fully converge even though the residual values settled down after 10 iterations. Of course, it remains a question if the rms of the magnetic residuals is a good indicator for data quality or not—we think so. The lower rms value is however not the only indication for an improvement of the data. We believe that the comprehensive CM4 model (Sabaka et al. 2004) is able to represent the magnetic field for the time period of the POGO mission well. Therefore, an other possible proof for the data improvement is the fact that the number of observations with an residual less than 30 nT to the CM4 model could be increased. The results show that our iterative approach is feasible to tackle the problem of improving the POGO data quality by altering the satellite orbit.

In contrast to the single-day results, the results obtained from the combined days were somewhat disappointing. The residual values (see Fig. 6) for the modelling data sets POGO_mod could not really be reduced with the iteration process. It seems that the maximum residual was already saturated after two iterations even though we were also using a radial correction threshold ρ of ±300 m up to the third iteration. The rms of the residuals could be reduced but only by a very tiny amount compared to the single-day approach. A reason for the little improvement is certainly the fact that we were using many fewer iterations compared to the single-day case. The limited number of iterations was a direct consequence of the increasing numbers of days causing errors in the BERNESE step, which we were unfortunately not able to overcome. The rather weak results obtained using the combined days might also be a result of the field modelling process, namely the rather conservative error budget and the use of no temporal and spatial damping.

From the analysis of the introduced orbital changes (not reported here in the paper), one can see that the changes are in a consistent way over the whole day, especially for the radial component (the component we perturbed in our process). Therefore, we do not have to expect large orbit jumps at midnight. Further, the rather small improvements achieved in the data quality probably will not introduce a significant change in already existing magnetic field models obtained by using the original data.

Future authors who are interested in improving the position corresponding to the POGO magnetic field measurements may wish to use more sophisticated magnetic field models and include observatory data, which will allow to solve additionally for the remaining external field component. In general, our method for satellite orbit corrections presented here could in principle be used for further work.

References

Aster, R, Borchers B, Thurber C (2005) Parameter estimation and inverse problems. Elsevier Academic Press, Amsterdam.

Bloxham, J, Jackson A (1992) Time-dependent mapping of the magnetic field at the core-mantle boundary. J Geophys Res 97: 19537–19563.

Cain, JC, Langel RA (1971) Geomagnetic survey by the polar-orbiting geophysical observatories. In: Zmuda AJ (ed)World Magnetic Survey. IAGA Bull. 28, 65–75.. Int. Ass Geomagn. Aeron., Paris.

Farthing, WH, Folz WC (1967) Rubidium vapor magnetometer for near earth orbiting spacecraft. Rev Sci Instrum 38: 1023–1030.

Gleghorn, GJ, Wiggins ET (1965) Design and development of the Orbiting Geophysical Observatory. Ann N Y Acad Sci 134: 205–233.

Gubbins, D (2004) Time series analysis and inverse theory for geophysicists. Cambridge, UK.

Jackson, A, Olsen N (2003) Possibilities of re-analysis of old satellite data In: 4th Oersted International Science Team Conference.. DMI, Copenhagen.

Langel, R (1987) The main field, in geomagnetism, Vol. I (Jacobs JA, ed.). Academic Press, London.

Langel, RA (1974) Near-earth magnetic disturbance in total field at high latitudes 1. Summary of data from OGO 2, 4, and 6. J Geophys Res 79: 2363–2371.

Lesur, V, Wardinski I, Rother M, Mandea M (2008) GRIMM: the GFZ reference internal magnetic model based on vector satellite and observatory data. Geophys J Int 173: 382–394.

Ludwig, GH (1963) The Orbiting Geophysical Observatories. Space Sci Rev 2: 175–218.

Luenberger, DG (1969) Optimization by vector space methods. John Wiley & Sons,Inc., New York.

Olsen, N, The Scarf team (2013) The Swarm satellite constellation application and research facility (SCARF) and Swarm data products. Earth Planets Space 65: 1189–1200.

Olsen, N, Mandea M (2008) Rapidly changing flows in the earth’s core. Nat Geosci 1: 390–394.

Sabaka, T, Olsen N, Purucker M (2004) Extending comprehensive models of the Earth’s magnetic field with Ørsted and CHAMP data. Geophys J Int 159: 521–547.

Scales, J, Gersztenkorn A, Treital S (1998) Fast lp solution of large, sparse, linear systems: application to seismic travel time tomography. J Comp Phys 75. pages=314–333,

Tarantola, A (2005) Inverse problem theory and methods for model parameter estimation. SIAM, Society for Industrial and Applied Mathematics, Philadelphia.

Taylor, PT, Schanzle A, Jones T, Langel R, Kahn W (1981) Influence of gravity field uncertainties on the results from POGO and MAGSAT geomagnetic surveys. Geophys Res Lett 8: 1246–1248.

Thomson, A, Lesur V (2007) An improved geomagnetic data selection algorithm for global geomagnetic field modelling. Geophys J Int 169: 951–963.

Walker, M, Jackson A (2000) Robust modelling of the Earth’s magnetic field. Geophys J Int 143: 799–808.

Acknowledgements

We would like to thank Markus Rothacher and Rolf Dach for the helpful discussions regarding the BERNESE GPS software. We thank Mike Purucker and Joe Cain for the assistance with POGO-related questions. This work was partially supported by NERC grant O/S/2001/01227 to the Geospace consortium. We also thank Andrey Sheyko for performing some initial calculations. The paper benefitted from reviews by R. Holme and an anonymous reviewer.

Author information

Authors and Affiliations

Corresponding authors

Additional information

Competing interests

The authors declare that they have no competing interests.

Authors’ contributions

The project was conceived by AJ and NO. RS and FC developed the GPS orbit recalculations. RS, NO, and AJ applied these to the OGO data. All authors read and approved the final manuscript.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (https://creativecommons.org/licenses/by/4.0), which permits use, duplication, adaptation, distribution, and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Stockmann, R., Christiansen, F., Olsen, N. et al. POGO satellite orbit corrections: an opportunity to improve the quality of the geomagnetic field measurements?. Earth Planet Sp 67, 102 (2015). https://doi.org/10.1186/s40623-015-0254-7

Received:

Accepted:

Published:

DOI: https://doi.org/10.1186/s40623-015-0254-7