Abstract

In this paper, we introduce and analyze a new general hybrid iterative algorithm for finding a common element of the set of common zeros of two families of finite maximal monotone mappings, the set of fixed points of a nonexpansive mapping, and the set of solutions of the variational inequality problem for a monotone, Lipschitz-continuous mapping in a real Hilbert space. Our algorithm is based on four well-known methods: Mann’s iteration method, the composite method, the outer-approximation method, and the extragradient method. We prove a strong convergence theorem for the proposed algorithm. The results presented in this paper extend and improve the corresponding results of Wei and Tan (Fixed Point Theory Appl. 2014:77, 2014). Some special cases are also discussed.

1 Introduction

Let H be a real Hilbert space and C be a nonempty, closed, and convex subset of H. A mapping \(T:C \to C\) is said to be monotone if

\[\langle Tx - Ty, x - y \rangle\ge0,\quad\forall x,y \in C.\]

A multi-valued mapping \(T:H \to2^{H}\) is said to be maximal monotone if it is monotone and its graph is not properly contained in the graph of any other monotone mapping. Maximal monotone mappings have been studied extensively because of their importance.

In 1976, to solve the inclusion problem \(0 \in Ax\), Rockafellar [1] introduced the following proximal point method:

\[x_{0} \in H,\quad x_{n + 1} = J_{r_{n}}x_{n},\quad n = 0,1,2, \ldots,\]

where \(J_{r_{n}} = ( I + r_{n}A )^{ - 1}\) and \(A:H \to 2^{H}\) is a maximal monotone mapping. It is shown that the iterative sequence \(\{ x_{n} \}\) converges weakly to a zero of A under some appropriate conditions. The strong convergence of the sequence has been extensively discussed by Zegeye and Shahzad [2] and Hu and Liu [3] in Banach spaces.
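As a hedged illustration of the proximal point method (not taken from [1]), the following sketch iterates \(x_{n + 1} = J_{r}x_{n}\) in \(R^{2}\) for the skew linear operator \(A ( x_{1},x_{2} ) = ( x_{2}, - x_{1} )\), which is maximal monotone and has the origin as its only zero. The operator, the constant parameter r, and the function names are assumptions made for this example only.

```python
import math

def resolvent_rotation(x, r):
    """Resolvent J_r = (I + r*A)^{-1} for the assumed operator A(x1, x2) = (x2, -x1)."""
    x1, x2 = x
    d = 1.0 + r * r  # determinant of I + r*A
    return ((x1 - r * x2) / d, (r * x1 + x2) / d)

def proximal_point(x0, steps, r=1.0):
    """Run x_{n+1} = J_r x_n with a constant parameter r > 0."""
    x = x0
    for _ in range(steps):
        x = resolvent_rotation(x, r)
    return x

x_final = proximal_point((1.0, 2.0), steps=50)
```

For this operator each step scales the norm by \(1 / \sqrt{1 + r^{2}}\), so the iterates approach the zero of A.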

In 2014, Wei and Tan [4] introduced the following Mann-type composite viscosity iterative scheme for finding the common zeros of two families of finite maximal monotone mappings. In particular, they proved the following theorem.

Theorem 1.1 ([4], Theorem 2.2)

Let H be a real Hilbert space, C be a nonempty closed and convex subset of H, \(A_{i},B_{j}\ ( i = 1,2, \ldots,k;j = 1,2, \ldots,l ):C \to C\) be two families of m-accretive mappings. Suppose

with \(J_{r_{n}}^{A_{i}} = ( I + r_{n}A_{i} )^{ - 1}\), \(J_{r_{n}}^{B_{j}} = ( I + r_{n}B_{j} )^{ - 1}\), \(\sum_{m = 0}^{l} a_{m} = 1\) and \(a_{m} \in ( 0,1 )\). The sequences \(\{ x_{n} \}\), \(\{ y_{n} \}\), and \(\{ u_{n} \}\) are generated by

for every \(n = 0,1,2, \ldots\) , where \(f:C \to C\) is a contraction. If \(D = ( \bigcap_{i = 1}^{k}A_{i}^{ - 1}0 ) \cap ( \bigcap_{j = 1}^{l}B_{j}^{ - 1}0 )\) is nonempty, \(\{ \alpha_{n} \}\), \(\{ \beta_{n} \}\), and \(\{ v_{n} \}\) are three sequences in \(( 0,1 )\) and \(\{ r_{n} \} \subset ( 0, + \infty )\) satisfy the following conditions:

(i) \(\sum_{n = 1}^{\infty} \vert \alpha_{n + 1} - \alpha_{n} \vert < + \infty\), and \(\alpha_{n} \to0\) as \(n\to\infty\);

(ii) \(\sum_{n = 1}^{\infty} \beta_{n} = + \infty\), \(\sum_{n = 1}^{\infty} \vert \beta_{n + 1} - \beta_{n} \vert < + \infty\), and \(\beta_{n} \to0\) as \(n \to\infty\);

(iii) \(\sum_{n = 1}^{\infty} \vert v_{n + 1} - v_{n} \vert < + \infty\), and \(v_{n} \to0\) as \(n \to\infty\);

(iv) \(\sum_{n = 1}^{\infty} \vert r_{n + 1} - r_{n} \vert < + \infty\), and \(r_{n} \to r^{*} \ge\varepsilon> 0\) as \(n \to\infty\).

Then \(\{ x_{n} \}\) converges strongly to a point \(p_{0} \in D\), which is the unique solution of the following variational inequality:

Remark 1.1

Actually, the m-accretive mapping in a Hilbert space defined in Wei and Tan [4] is a maximal monotone mapping.

Theorem 1.1 naturally raises the following question: the real sequences \(\{ \alpha_{n} \}\), \(\{ \beta_{n} \}\), \(\{ v_{n} \}\), and \(\{ r_{n} \}\) are required to satisfy conditions (i), (ii), (iii), and (iv); can these restrictions be relaxed? The purpose of our paper is to give an affirmative answer to this question. Moreover, a new algorithm and a more general problem are considered.

On the other hand, the variational inequality problem is to find \(u \in C\) such that

\[\langle Au, v - u \rangle\ge0,\quad\forall v \in C,\]

where \(A:C \to H\) is a nonlinear mapping. The set of solutions of the variational inequality problem is denoted by \(VI ( C,A )\). The variational inequality problem was first discussed by Lions [5]. For finding a solution of the variational inequality problem in Euclidean space \(R^{n}\), Korpelevich [6] introduced the following extragradient method:

\[\begin{cases} x_{0} = x \in C, \\ \overline{x}_{n} = P_{C} ( x_{n} - \lambda Ax_{n} ), \\ x_{n + 1} = P_{C} ( x_{n} - \lambda A\overline{x}_{n} ), \end{cases}\]

for every \(n = 0, 1, 2, \ldots\) , where C is a nonempty, closed, and convex subset of \(R^{n}\), \(A:C \to R^{n}\) is a monotone and ρ-Lipschitz-continuous mapping, and \(\lambda\in ( 0,\frac{1}{\rho} )\). She showed that if \(VI ( C,A )\) is nonempty, then the generated sequences \(\{ x_{n} \}\) and \(\{ \overline{x}_{n} \}\) converge to the same point \(z \in VI ( C,A )\). The extragradient method has since been generalized and extended from Euclidean spaces to Hilbert and Banach spaces; see, e.g., [7–9].
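The two projection steps of the extragradient method can be sketched as follows. This is only an illustration under stated assumptions: we take the standard form \(\overline{x}_{n} = P_{C} ( x_{n} - \lambda Ax_{n} )\), \(x_{n + 1} = P_{C} ( x_{n} - \lambda A\overline{x}_{n} )\), choose C to be the box \([ - 1,1 ]^{2}\) and \(A ( x_{1},x_{2} ) = ( x_{2}, - x_{1} )\), which is monotone and 1-Lipschitz-continuous with \(VI ( C,A ) = \{ 0 \}\), and use \(\lambda= 0.5 < 1 / \rho\). All names in the code are hypothetical.

```python
import math

def project_box(x, lo=-1.0, hi=1.0):
    """Metric projection onto the box [lo, hi]^n (assumed choice of C)."""
    return tuple(min(max(xi, lo), hi) for xi in x)

def A(x):
    """Assumed monotone, 1-Lipschitz operator A(x1, x2) = (x2, -x1)."""
    x1, x2 = x
    return (x2, -x1)

def extragradient(x0, lam, steps):
    """Predictor step at x_n, then corrector step using A at the predictor."""
    x = x0
    for _ in range(steps):
        ax = A(x)
        x_bar = project_box(tuple(xi - lam * ai for xi, ai in zip(x, ax)))
        a_bar = A(x_bar)
        x = project_box(tuple(xi - lam * ai for xi, ai in zip(x, a_bar)))
    return x

solution = extragradient((1.0, 1.0), lam=0.5, steps=300)
```

For this particular operator a plain projected step \(x_{n + 1} = P_{C} ( x_{n} - \lambda Ax_{n} )\) does not shrink the distance to the solution, which is one way to see why the corrector step matters.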

Furthermore, Iiduka and Takahashi [10] introduced the following outer-approximation method:

for every \(n = 0, 1, 2, \ldots\) , where \(A:C \to H\) is a ρ-inverse-strongly monotone mapping, \(S:C \to C\) is a nonexpansive mapping, \(0 < a \le\lambda_{n} \le b < 2\rho\) and \(0 \le\alpha_{n} \le c < 1\). They showed that if \(F ( S ) \cap VI ( C,A )\) is nonempty, then the generated sequence \(\{ x_{n} \}\) converges to \(P_{F ( S ) \cap VI ( C,A )}x\). The outer-approximation method was originally introduced by Haugazeau in 1968 and was successfully generalized and extended in recent papers [11–14].

In this paper, inspired and motivated by the above work, we introduce the following general hybrid iterative algorithm, which is based on four well-known methods: Mann’s iteration method, the composite method, the outer-approximation method, and the extragradient method.

Algorithm 1.1

Let H be a real Hilbert space, C be a nonempty, closed, and convex subset of H, \(A:C \to H\) be a monotone and ρ-Lipschitz-continuous mapping, \(S:C \to C\) be a nonexpansive mapping, \(A_{i},B_{j}\ ( i = 1,2, \ldots,k;j = 1,2, \ldots,l ):C \to C\) be two families of finite maximal monotone mappings. Suppose

with \(J_{r_{n}}^{A_{i}} = ( I + r_{n}A_{i} )^{ - 1}\), \(J_{r_{n}}^{B_{j}} = ( I + r_{n}B_{j} )^{ - 1}\), \(\sum_{m = 0}^{l} a_{m} = 1\) and \(a_{m} \in ( 0,1 )\). The sequences \(\{ x_{n} \}\), \(\{ y_{n} \}\), \(\{ z_{n} \}\), and \(\{ w_{n} \}\) are generated by

for every \(n = 0, 1, 2, \ldots\) .

This is the first time an algorithm of this type is used for finding the common zeros of two families of finite maximal monotone mappings. By using Algorithm 1.1, we find a common element of the set of common zeros of two families of finite maximal monotone mappings, the set of fixed points of a nonexpansive mapping, and the set of solutions of the variational inequality problem for a monotone, Lipschitz-continuous mapping. We prove a strong convergence theorem for the proposed algorithm, which extends and improves the corresponding results in the early and recent literature; see, e.g., [3, 4, 6, 10, 14].

2 Preliminaries

Throughout our paper, let H be a real Hilbert space with the inner product \(\langle\cdot, \cdot \rangle\) and norm \(\Vert \cdot \Vert \), C be a nonempty, closed, and convex subset of H, and I be the identity mapping on H. We write \(x_{n}\stackrel{w}{\longrightarrow} x\) to indicate that the sequence \(\{ x_{n} \}\) converges weakly to x and \(x_{n} \to x\) to indicate that the sequence \(\{ x_{n} \}\) converges strongly to x. For every point \(x \in H\), there exists a unique nearest point in C, denoted by \(P_{C}x\), such that

\[\Vert x - P_{C}x \Vert \le \Vert x - y \Vert ,\quad\forall y \in C.\]

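\(P_{C}\) is called the metric projection of H onto C. A mapping \(T:C \to C\) is said to be ρ-Lipschitz-continuous if

\[\Vert Tx - Ty \Vert \le\rho \Vert x - y \Vert ,\quad\forall x,y \in C.\]

\(T:C \to C\) is said to be nonexpansive if

\[\Vert Tx - Ty \Vert \le \Vert x - y \Vert ,\quad\forall x,y \in C.\]

Obviously, a nonexpansive mapping is exactly a 1-Lipschitz-continuous mapping. It is well known that \(P_{C}\) is nonexpansive.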

We use \(F ( T )\) to denote the set of fixed points of T, that is, \(F ( T ) = \{ x \in C:Tx = x \}\). We use \(T^{ - 1}0\) to denote the set of zeros of T, that is, \(T^{ - 1}0 = \{ x \in C:Tx = 0 \}\). We use \(J_{r}^{T} \) (\(r > 0\)) to denote the resolvent operator of T, that is, \(J_{r}^{T} = ( I + rT )^{ - 1}\). As is well known, \(J_{r}^{T}\) is nonexpansive and \(F ( J_{r}^{T} ) = T^{ - 1}0\).
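The identity \(F ( J_{r}^{T} ) = T^{ - 1}0\) follows directly from the definition of the resolvent. The short derivation below is a sketch for a (possibly set-valued) monotone T and \(r > 0\); it is included for the reader's convenience and is not quoted from the references.

```latex
\begin{aligned}
x \in F ( J_{r}^{T} )
  &\iff x = ( I + rT )^{-1}x \\
  &\iff x \in x + rTx \\
  &\iff 0 \in rTx \\
  &\iff 0 \in Tx \iff x \in T^{-1}0 .
\end{aligned}
```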

It is well known that a monotone mapping T is maximal if and only if for \(( x,f ) \in H \times H\), \(\langle x - y,f - g \rangle\ge0\) for every \(( y,g ) \in \operatorname{Graph} ( T )\) implies \(f \in Tx\). Next we provide an example to illustrate the concept of maximal monotone mapping. Let \(A:C \to H\) be a monotone, ρ-Lipschitz-continuous mapping and \(N_{C}v\) be the normal cone to C at \(v \in C\), i.e., \(N_{C}v = \{ \omega\in H: \langle v - u, \omega \rangle\ge0, \forall u \in C \}\). Define

\[Tv = \begin{cases} Av + N_{C}v, & v \in C, \\ \emptyset, & v \notin C. \end{cases}\]

It is well known that in this case T is maximal monotone and \(0 \in Tv\) if and only if \(v \in VI ( C,A )\); see [15]. At the same time, it is well known that H satisfies Opial’s condition [16], i.e., for any sequence \(\{ x_{n} \}\) with \(x_{n}\stackrel{w}{\longrightarrow}x\), the inequality

\[\liminf_{n \to\infty} \Vert x_{n} - x \Vert < \liminf_{n \to\infty} \Vert x_{n} - y \Vert \]

holds for every \(y \in H\) with \(y \ne x\). H also has the Kadec-Klee property, i.e., sequential weak convergence on the unit sphere coincides with norm convergence; see [17].
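The equivalence between \(0 \in Tv\) and \(v \in VI ( C,A )\) stated above can be checked in a few lines. The sketch assumes \(Tv = Av + N_{C}v\) for \(v \in C\) and uses only the definition of the normal cone.

```latex
\begin{aligned}
0 \in Tv
  &\iff -Av \in N_{C}v \\
  &\iff \langle v - u, -Av \rangle \ge 0, \quad \forall u \in C \\
  &\iff \langle Av, u - v \rangle \ge 0, \quad \forall u \in C \\
  &\iff v \in VI ( C,A ) .
\end{aligned}
```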

In order to prove our main results, we need the following lemmas.

Lemma 2.1 ([18])

For all \(x \in H\) and \(y \in C\), \(P_{C}\) is characterized by the following properties:

(i) \(\langle x - P_{C}x, P_{C}x - y \rangle\ge0\);

(ii) \(\Vert P_{C}x - y \Vert ^{2} + \Vert x - P_{C}x \Vert ^{2} \le \Vert x - y \Vert ^{2}\).
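Both properties of Lemma 2.1 can be checked numerically on a simple set. The sketch below assumes C is the closed unit ball of \(R^{2}\), for which the projection has a closed form; the helper names are hypothetical and the check is an illustration, not a proof.

```python
import math

def project_ball(x, radius=1.0):
    """Metric projection onto the closed ball of the given radius centered at 0."""
    norm = math.hypot(*x)
    if norm <= radius:
        return tuple(x)
    return tuple(radius * xi / norm for xi in x)

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def sub(u, v):
    return tuple(a - b for a, b in zip(u, v))

def check_lemma_2_1(x, y, tol=1e-12):
    """Return (property (i), property (ii)) for x in H and y in C."""
    p = project_ball(x)
    prop_i = dot(sub(x, p), sub(p, y)) >= -tol
    lhs = dot(sub(p, y), sub(p, y)) + dot(sub(x, p), sub(x, p))
    prop_ii = lhs <= dot(sub(x, y), sub(x, y)) + tol
    return prop_i, prop_ii

result = check_lemma_2_1((3.0, 4.0), (0.6, 0.0))
```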

Lemma 2.2 ([4])

For all \(x \in H\), \(y \in A^{ - 1}0\) and \(r > 0\),

Lemma 2.3 ([4])

Let H be a real Hilbert space, C be a nonempty, closed, and convex subset of H, \(A_{i},B_{j}\ ( i = 1,2, \ldots ,k;j = 1,2, \ldots,l ):C \to C\) be two families of finite maximal monotone mappings such that \(( \bigcap_{i = 1}^{k}A_{i}^{ - 1}0 ) \cap ( \bigcap_{j = 1}^{l}B_{j}^{ - 1}0 )\) is nonempty. Suppose

with \(J_{r_{n}}^{A_{i}} = ( I + r_{n}A_{i} )^{ - 1}\), \(J_{r_{n}}^{B_{j}} = ( I + r_{n}B_{j} )^{ - 1}\), \(\sum_{m = 0}^{l} a_{m} = 1\), \(a_{m} \in ( 0,1 )\) and \(r_{n} > 0\). Then \(S_{r_{n}}^{A_{k}A_{k - 1} \cdots A_{1}}:C \to C\) and \(T_{r_{n}}:C \to C\) are nonexpansive.

Lemma 2.4 ([4])

Let H, C, \(A_{i}\), \(B_{j}\), \(S_{r_{n}}^{A_{k}A_{k - 1} \cdots A_{1}}\), and \(T_{r_{n}}\) be the same as those in Lemma 2.3, suppose \(( \bigcap_{i = 1}^{k}A_{i}^{ - 1}0 ) \cap ( \bigcap_{j = 1}^{l}B_{j}^{ - 1}0 )\) is nonempty, then \(F ( S_{r_{n}}^{A_{k}A_{k - 1} \cdots A_{1}} ) = \bigcap_{i = 1}^{k}A_{i}^{ - 1}0\) and \(F ( T_{r_{n}} ) = \bigcap_{j = 1}^{l}B_{j}^{ - 1}0\).
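Lemmas 2.3 and 2.4 can be illustrated on the real line. The sketch below rests on two assumptions about the operators whose defining display is not reproduced here: that \(S_{r}^{A_{k} \cdots A_{1}}\) is the composition \(J_{r}^{A_{k}} \cdots J_{r}^{A_{1}}\), and that \(T_{r} = a_{0}I + \sum_{j = 1}^{l} a_{j}J_{r}^{B_{j}}\). The concrete mappings (linear maps and the normal cone of \([ - 1,1 ]\)) and all function names are hypothetical.

```python
def linear_resolvent(c):
    """Resolvent x -> (I + r*A)^{-1} x of A(x) = c*x on the real line, c >= 0."""
    def resolvent(x, r):
        return x / (1.0 + r * c)
    return resolvent

def interval_resolvent(x, r):
    """Resolvent of the normal cone of [-1, 1], i.e. the projection onto [-1, 1]."""
    return min(max(x, -1.0), 1.0)

def composed(resolvents, x, r):
    """Assumed form of S_r: apply J_r^{A_1} first and J_r^{A_k} last."""
    for resolvent in resolvents:
        x = resolvent(x, r)
    return x

def averaged(resolvents, weights, x, r):
    """Assumed form of T_r: weights[0]*x + sum_j weights[j]*J_r^{B_j}(x)."""
    total = weights[0] * x
    for a, resolvent in zip(weights[1:], resolvents):
        total += a * resolvent(x, r)
    return total

A_family = [linear_resolvent(1.0), linear_resolvent(2.0)]
B_family = [interval_resolvent, linear_resolvent(3.0)]
weights = [0.2, 0.3, 0.5]  # a_0, a_1, a_2 in (0, 1), summing to 1

def S(x, r=0.5):
    return composed(A_family, x, r)

def T(x, r=0.5):
    return averaged(B_family, weights, x, r)

samples = [-3.0, -1.0, -0.2, 0.0, 0.4, 1.5, 4.0]
nonexpansive = all(
    abs(S(x) - S(y)) <= abs(x - y) + 1e-12
    and abs(T(x) - T(y)) <= abs(x - y) + 1e-12
    for x in samples
    for y in samples
)
```

In this example the common zero set of each family is \(\{ 0 \}\), and 0 is also the only fixed point of S and of T, in line with Lemma 2.4.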

3 Strong convergence theorems

Theorem 3.1

Let H be a real Hilbert space, C be a nonempty, closed, and convex subset of H, \(A:C \to H\) be a monotone and ρ-Lipschitz-continuous mapping, \(S:C \to C\) be a nonexpansive mapping, \(A_{i},B_{j}\ ( i = 1,2, \ldots,k;j = 1,2, \ldots,l ):C \to C\) be two families of finite maximal monotone mappings such that \(D = ( \bigcap_{i = 1}^{k}A_{i}^{ - 1}0 ) \cap ( \bigcap_{j = 1}^{l}B_{j}^{ - 1}0 ) \cap F ( S ) \cap VI ( C,A )\) is nonempty. Suppose

with \(J_{r_{n}}^{A_{i}} = ( I + r_{n}A_{i} )^{ - 1}\), \(J_{r_{n}}^{B_{j}} = ( I + r_{n}B_{j} )^{ - 1}\), \(\sum_{m = 0}^{l} a_{m} = 1\) and \(a_{m} \in ( 0,1 )\).

If \(\{ \alpha_{n} \}\), \(\{ \beta_{n} \}\), \(\{ \gamma_{n} \}\), \(\{ \lambda_{n} \}\), and \(\{ r_{n} \}\) satisfy the following conditions:

(i) \(0 \le\alpha_{n} \le b < 1\);

(ii) \(0 < c \le\beta_{n} \le1\);

(iii) \(0 < d \le\gamma_{n} \le1\), \(\beta_{n} + \gamma_{n} \le1\);

(iv) \(0 < p \le\lambda_{n} \le q < \frac{1}{\rho}\);

(v) \(0 < \eta\le r_{n} < + \infty\),

where b, c, d, p, q and η are constants, then the sequences \(\{ x_{n} \}\), \(\{ y_{n} \}\), \(\{ z_{n} \}\), and \(\{ w_{n} \}\) generated by Algorithm 1.1 converge strongly to \(P_{D}x\).
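When experimenting with Algorithm 1.1, it can be convenient to screen candidate parameter sequences against conditions (i)-(v). The helper below is a hypothetical sketch: it inspects only finitely many terms, so it can reject a choice of parameters but cannot certify that the uniform bounds b, c, d, p, q and η exist for the whole sequence.

```python
def satisfies_conditions(alpha, beta, gamma, lam, r, rho):
    """Check conditions (i)-(v) on equally long finite samples of the sequences."""
    n = len(alpha)
    if n == 0 or not (len(beta) == len(gamma) == len(lam) == len(r) == n):
        raise ValueError("all samples must be nonempty and of equal length")
    cond_i = min(alpha) >= 0.0 and max(alpha) < 1.0            # 0 <= alpha_n <= b < 1
    cond_ii = min(beta) > 0.0 and max(beta) <= 1.0             # 0 < c <= beta_n <= 1
    cond_iii = (
        min(gamma) > 0.0
        and max(gamma) <= 1.0
        and all(b + g <= 1.0 for b, g in zip(beta, gamma))     # beta_n + gamma_n <= 1
    )
    cond_iv = min(lam) > 0.0 and max(lam) < 1.0 / rho          # 0 < p <= lambda_n <= q < 1/rho
    cond_v = min(r) > 0.0                                      # 0 < eta <= r_n
    return cond_i and cond_ii and cond_iii and cond_iv and cond_v

ok = satisfies_conditions([0.5] * 10, [0.5] * 10, [0.5] * 10, [0.4] * 10, [1.0] * 10, rho=2.0)
too_large_step = satisfies_conditions([0.5] * 10, [0.5] * 10, [0.5] * 10, [0.6] * 10, [1.0] * 10, rho=2.0)
```

Note that constant sequences are admissible here, whereas conditions such as \(\alpha_{n} \to0\) in Theorem 1.1 exclude them.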

Proof

We will split the proof into five steps.

Step 1. \(D = ( \bigcap_{i = 1}^{k}A_{i}^{ - 1}0 ) \cap ( \bigcap_{j = 1}^{l}B_{j}^{ - 1}0 ) \cap F ( S ) \cap VI ( C,A ) \subset C_{n} \cap Q_{n}\).

First, we show \(D \subset C_{n}\).

Put \(t_{n} = P_{C} ( x_{n} - \lambda_{n}Ay_{n} )\). Take a fixed \(p\in D\) arbitrarily, then we get \(p \in ( \bigcap_{i = 1}^{k}A_{i}^{ - 1}0 ) \cap ( \bigcap_{j = 1}^{l}B_{j}^{ - 1}0 )\), \(p \in F ( S )\) and \(p \in VI ( C,A )\). From Lemma 2.1(ii) and the monotonicity of A, we have

Since \(y_{n} = P_{C} ( x_{n} - \lambda_{n}Ax_{n} )\), A is ρ-Lipschitz-continuous and by Lemma 2.1(i), we have

Substituting (3.2) into (3.1) and by condition (iv), we have

From (3.3), Algorithm 1.1, the convexity of \(\Vert \cdot \Vert ^{2}\) and the nonexpansiveness of S, we have

By (3.4), Algorithm 1.1, Lemma 2.3, and Lemma 2.4, we have

By virtue of the definition of \(C_{n}\) and (3.5), we have \(p \in C_{n}\). So, \(D \subset C_{n}\), for every \(n = 0, 1, 2, \ldots\) .

Next, by mathematical induction, we will prove that \(\{ x_{n} \}\) is well defined and \(D \subset C_{n} \cap Q_{n}\), \(n = 0, 1, 2, \ldots \) . For \(n = 0\), \(x_{0} = x \in C\), \(Q_{0} = C\). Hence \(D \subset C_{0} \cap Q_{0}\). Suppose that \(x_{k}\) is given and \(D \subset C_{k} \cap Q_{k}\) for some \(k \in N\). Because D is nonempty, \(C_{k} \cap Q_{k}\) is nonempty. It is obvious that \(C_{n}\) is closed and \(Q_{n}\) is closed and convex. As \(C_{n} = \{ z \in C:\Vert z_{n} - x_{n} \Vert ^{2} + 2 \langle z_{n} - x_{n}, x_{n} - z \rangle\le0 \}\), we also have \(C_{n}\) is convex, for every \(n = 0, 1, 2, \ldots \) . Thus, \(C_{k} \cap Q_{k}\) is a nonempty closed convex subset of C, so there exists a unique element \(x_{k + 1} \in C_{k} \cap Q_{k}\) such that \(x_{k + 1} = P_{C_{k} \cap Q_{k}}x\). It is obvious that

Since \(D \subset C_{k} \cap Q_{k}\), we have

That is, \(z \in Q_{k + 1}\). Hence \(D \subset Q_{k + 1}\). Therefore, we get \(D \subset C_{k + 1} \cap Q_{k + 1}\). Thus, \(D \subset C_{n} \cap Q_{n}\), for every \(n = 0, 1, 2, \ldots \) .
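The step \(x_{k + 1} = P_{C_{k} \cap Q_{k}}x\) requires a projection onto an intersection of two sets. When \(C = H = R^{2}\), both \(C_{k}\) and \(Q_{k}\) are half-spaces, and the projection can be approximated, for instance, by Dykstra's alternating projection algorithm. The sketch below uses that generic technique as a stand-in; it is not the computation used in this paper, and the half-space data and function names are hypothetical.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def project_halfspace(x, a, b):
    """Project x onto the half-space {z : <a, z> <= b}, assuming a != 0."""
    violation = dot(a, x) - b
    if violation <= 0.0:
        return tuple(x)
    step = violation / dot(a, a)
    return tuple(xi - step * ai for xi, ai in zip(x, a))

def project_intersection(x, half1, half2, iterations=200):
    """Dykstra's algorithm for the projection of x onto half1 intersected with half2."""
    z = tuple(x)
    p = tuple(0.0 for _ in x)  # correction term attached to half1
    q = tuple(0.0 for _ in x)  # correction term attached to half2
    for _ in range(iterations):
        zp = tuple(zi + pi for zi, pi in zip(z, p))
        y = project_halfspace(zp, *half1)
        p = tuple(s - t for s, t in zip(zp, y))
        yq = tuple(yi + qi for yi, qi in zip(y, q))
        z = project_halfspace(yq, *half2)
        q = tuple(s - t for s, t in zip(yq, z))
    return z

# Each half-space is given as (a, b), meaning {z : <a, z> <= b}.
corner = project_intersection((2.0, 2.0), ((1.0, 0.0), 1.0), ((0.0, 1.0), 1.0))
oblique = project_intersection((1.0, 1.0), ((1.0, 0.0), 0.0), ((1.0, 1.0), 0.0))
```

Unlike plain alternating projections, which in general only return some point of the intersection, the correction terms p and q make the limit the nearest point to x.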

Step 2. \(\{ x_{n} \}\), \(\{ y_{n} \}\), \(\{ z_{n} \}\), \(\{ t_{n} \}\) and \(\{ w_{n} \}\) are all bounded.

Let \(p_{0} = P_{D}x\), then \(p_{0} \in D \subset C_{n} \cap Q_{n}\) from step 1. From \(x_{n + 1} = P_{C_{n} \cap Q_{n}}x\) and the definition of the metric projection, we have

for every \(n = 0, 1, 2, \ldots \) . Therefore, \(\{ x_{n} \}\) is bounded. By virtue of (3.3), (3.4) and (3.5), \(\{ t_{n} \}\), \(\{ z_{n} \}\) and \(\{ w_{n} \}\) are also bounded.

Again from (3.5), conditions (i) and (v), we have

So, \(\{ y_{n} \}\) is bounded.

Step 3. \(\lim_{n \to\infty} \Vert x_{n} - y_{n} \Vert = 0\), \(\lim_{n \to\infty} \Vert x_{n} - z_{n} \Vert = 0\), \(\lim_{n \to\infty} \Vert x_{n} - w_{n} \Vert = 0\) and \(\lim_{n \to\infty} \Vert x_{n} - t_{n} \Vert = 0\).

As \(Q_{n} = \{ z \in C: \langle x_{n} - z,x - x_{n} \rangle \ge0 \}\), we have \(\langle x_{n} - z,x - x_{n} \rangle \ge0\) for all \(z \in Q_{n}\) and by the definition of the metric projection, we have \(x_{n} = P_{Q_{n}}x\). Because of \(x_{n + 1} = P_{C_{n} \cap Q_{n}}x \in C_{n} \cap Q_{n} \subset Q_{n}\) and (3.6), we have

for every \(n = 0, 1, 2, \ldots \) . Therefore there exists \(\lim_{n \to \infty} \Vert x_{n} - x \Vert \). By Lemma 2.1(ii), we have

this implies that

By \(x_{n + 1} \in C_{n}\) and the definition of \(C_{n}\), we have \(\Vert x_{n + 1} - w_{n} \Vert \le \Vert x_{n + 1} - x_{n} \Vert \) and hence

From conditions (i), (iv), (3.7) and (3.10), we have

In (3.3), using another technique, by condition (iv), we get

From (3.4) and (3.12), we have

So, (3.14) implies that

Since \(\{ x_{n} \}\), \(\{ w_{n} \}\) are bounded, and by conditions (i), (iv), (3.10), and (3.15), we have

By \(\Vert x_{n} - t_{n} \Vert \le \Vert x_{n} - y_{n} \Vert + \Vert y_{n} - t_{n} \Vert \), (3.11) and (3.16), we get

From (3.4), (3.5), Lemma 2.2, Lemma 2.3 and Lemma 2.4, we have, for \(\forall p \in D\),

Then (3.18) implies that

From (3.10), (3.19), condition (ii), we have

Again, using a similar technique to (3.18), we have

Using the same method in (3.19) and (3.20), we have

By induction, we have the following results:

By Lemma 2.2, Lemma 2.3, Lemma 2.4, (3.4), and the convexity of \(\Vert \cdot \Vert ^{2}\), \(\forall p \in D\), we have

Then (3.26) implies that

From the boundedness of \(\{ x_{n} \}\) and \(\{ w_{n} \}\), condition (iii), (3.10) and (3.27), we have

For \(j = 1, 2, \ldots, l\). From (3.28), we have

By Algorithm 1.1, we have

Step 4. \(W ( x_{n} ) \subset D\), where \(W ( x_{n} )\) denotes the set of all the weak limit points of \(\{ x_{n} \}\).

Since \(\{ x_{n} \}\) is bounded, there exists a subsequence of \(\{ x_{n} \}\); for simplicity, we still denote it by \(\{ x_{n} \}\), such that \(x_{n}\stackrel{w}{\longrightarrow}u\) as \(n \to\infty\). In the following, we will prove \(u \in D\).

First, we show \(u \in F ( S )\). Assume \(u \notin F ( S )\), i.e., \(u \ne Su\). Since \(z_{n} = \alpha_{n}x_{n} + ( 1 - \alpha_{n} )St_{n}\), we have

By virtue of condition (i), (3.17), (3.32) and (3.33), we have

By (3.17) and \(x_{n}\stackrel{w}{\longrightarrow}u\), we have \(t_{n}\stackrel{w}{\longrightarrow}u\), where, for simplicity, the corresponding subsequence of \(\{ t_{n} \}\) is again denoted by \(\{ t_{n} \}\). From (3.34) and Opial’s condition [16], we have

This is a contradiction. So, we obtain \(u \in F ( S )\).

Second, we show \(u \in\bigcap_{i = 1}^{k}A_{i}^{ - 1}0\). From \(x_{n} - z_{n} \to0\) and \(x_{n}\stackrel{w}{\longrightarrow}u\), we have \(z_{n}\stackrel{w}{\longrightarrow}u\), where, for simplicity, the corresponding subsequence of \(\{ z_{n} \}\) is again denoted by \(\{ z_{n} \}\). From (3.20)-(3.24), we have \(S_{r_{n}}^{A_{1}}z_{n}\stackrel{w}{\longrightarrow}u\), \(S_{r_{n}}^{A_{2}A_{1}}z_{n}\stackrel{w}{\longrightarrow}u\), \(\ldots\) , \(S_{r_{n}}^{A_{k - 1}A_{k - 2} \cdots A_{1}}z_{n}\stackrel{w}{\longrightarrow}u\) and \(S_{r_{n}}^{A_{k}A_{k - 1} \cdots A_{1}}z_{n}\stackrel{w}{\longrightarrow}u\).

Since \(( I + r_{n}A_{1} )S_{r_{n}}^{A_{1}}z_{n} = z_{n}\), by (3.24) and condition (v), we have

So, \(A_{1}u = 0\) and then \(u \in A_{1}^{ - 1}0\).

Since \(( I + r_{n}A_{2} )S_{r_{n}}^{A_{2}A_{1}}z_{n} = S_{r_{n}}^{A_{1}}z_{n}\), and by (3.23), we have

So, \(A_{2}u = 0\) and then \(u \in A_{2}^{ - 1}0\).

By induction, we have

So, \(A_{k}u = 0\) and then \(u \in A_{k}^{ - 1}0\). Thus, \(u \in\bigcap_{i = 1}^{k}A_{i}^{ - 1}0\).

Third, we show \(u \in\bigcap_{j = 1}^{l}B_{j}^{ - 1}0\). In fact, by virtue of (3.28) and condition (v), we have

So, \(B_{j}u = 0\), for \(j = 1, 2, \ldots, l\). We can easily see that \(u \in\bigcap_{j = 1}^{l}B_{j}^{ - 1}0\).

Finally, we show \(u \in VI ( C,A )\). Let

where \(N_{C}v\) is the normal cone to C at \(v \in C\). Let \(G ( T )\) be the graph of T and \(( v,\omega ) \in G ( T )\). So, we have \(\omega\in Tv = Av + N_{C}v\) and hence \(\omega- Av \in N_{C}v\). From the definition of the normal cone and \(t_{n} = P_{C} ( x_{n} - \lambda_{n}Ay_{n} ) \in C\), we have

From Lemma 2.1(i), we have

and hence

For simplicity, the corresponding subsequences of \(\{ y_{n} \}\) and \(\{ t_{n} \}\) are again denoted by \(\{ y_{n} \}\) and \(\{ t_{n} \}\), respectively. Because of \(x_{n} - y_{n} \to0\), \(x_{n} - t_{n} \to0\) and \(x_{n}\stackrel{w}{\longrightarrow}u\), we have \(y_{n}\stackrel{w}{\longrightarrow}u\) and \(t_{n}\stackrel{w}{\longrightarrow}u\). By the monotonicity of A, (3.35), and (3.36), we obtain

Hence, we obtain \(\langle v - u, \omega- 0 \rangle\ge0\) as \(n \to\infty\). Since T is maximal monotone, we have \(0 \in Tu\) and so \(u \in VI ( C,A )\).

Therefore, \(u \in D\), and hence \(W ( x_{n} ) \subset D\).

Step 5. \(x_{n} \to P_{D}x\) as \(n \to\infty\).

Let \(p_{0} = P_{D}x\) and take any \(u \in W ( x_{n} )\). From step 4, we have \(u \in D\). Suppose \(x_{n}\stackrel{w}{\longrightarrow}u\) as \(n \to\infty\), where, for simplicity, the subsequence is again denoted by \(\{ x_{n} \}\). From the definition of the metric projection and the weak lower semi-continuity of \(\Vert \cdot \Vert \), we have

So, we obtain

From \(x_{n} - x\stackrel{w}{\longrightarrow}u - x\) and the Kadec-Klee property, we have \(x_{n} - x \to u - x\) and hence \(x_{n} \to u\).

Since \(x_{n} = P_{Q_{n}}x\), \(p_{0} \in D \subset C_{n} \cap Q_{n} \subset Q_{n}\) and Lemma 2.1(i), we have

As \(n \to\infty\), we get \(- \Vert p_{0} - u \Vert ^{2} \ge \langle p_{0} - u,x - p_{0} \rangle\ge0\). Hence \(u = p_{0}\). This implies that \(x_{n} \to p_{0} = P_{D}x\) as \(n \to\infty\). From step 3, it is easy to see \(y_{n} \to P_{D}x\), \(z_{n} \to P_{D}x\) and \(w_{n} \to P_{D}x\) as \(n \to\infty\).

This completes the proof. □
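The final inequality chain in Step 5 can be unpacked as follows. This is a sketch under the assumption that the inequality obtained from \(x_{n} = P_{Q_{n}}x\), \(p_{0} \in Q_{n}\) and Lemma 2.1(i) is \(\langle x - x_{n}, x_{n} - p_{0} \rangle\ge0\), whose limit along \(x_{n} \to u\) is \(\langle u - p_{0}, x - u \rangle\ge0\).

```latex
\begin{aligned}
0 &\le \langle u - p_{0}, x - u \rangle \\
  &= \langle u - p_{0}, x - p_{0} \rangle + \langle u - p_{0}, p_{0} - u \rangle \\
  &= - \langle p_{0} - u, x - p_{0} \rangle - \Vert p_{0} - u \Vert^{2} .
\end{aligned}
```

Hence \(- \Vert p_{0} - u \Vert ^{2} \ge \langle p_{0} - u,x - p_{0} \rangle\). On the other hand, \(p_{0} = P_{D}x\) and \(u \in D\) give \(\langle x - p_{0},p_{0} - u \rangle\ge0\) by Lemma 2.1(i), so \(\Vert p_{0} - u \Vert ^{2} \le0\) and \(u = p_{0}\).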

From Theorem 3.1, we can get some strong convergence theorems.

Theorem 3.2

Let H be a real Hilbert space, C be a nonempty, closed, and convex subset of H, \(A:C \to H\) be a monotone and ρ-Lipschitz-continuous mapping, \(A_{i},B_{j}\ ( i = 1,2, \ldots,k; j = 1,2, \ldots,l ):C \to C\) be two families of finite maximal monotone mappings such that \(D = ( \bigcap_{i = 1}^{k}A_{i}^{ - 1}0 ) \cap ( \bigcap_{j = 1}^{l}B_{j}^{ - 1}0 ) \cap VI ( C,A )\) is nonempty. Suppose

with \(J_{r_{n}}^{A_{i}} = ( I + r_{n}A_{i} )^{ - 1}\), \(J_{r_{n}}^{B_{j}} = ( I + r_{n}B_{j} )^{ - 1}\), \(\sum_{m = 0}^{l} a_{m} = 1\) and \(a_{m} \in ( 0,1 )\).

The sequences \(\{ x_{n} \}\), \(\{ y_{n} \}\), \(\{ z_{n} \}\) and \(\{ w_{n} \}\) are generated by

if \(\{ \beta_{n} \}\), \(\{ \gamma_{n} \}\), \(\{ \lambda_{n} \}\) and \(\{ r_{n} \}\) satisfy the following conditions:

(i) \(0 < c \le\beta_{n} \le1\);

(ii) \(0 < d \le\gamma_{n} \le1\), \(\beta_{n} + \gamma_{n} \le1\);

(iii) \(0 < p \le\lambda_{n} \le q < \frac{1}{\rho}\);

(iv) \(0 < \eta\le r_{n} < + \infty\),

for every \(n = 0, 1, 2, \ldots \) , where c, d, p, q and η are constants, then the sequences \(\{ x_{n} \}\), \(\{ y_{n} \}\), \(\{ z_{n} \}\), and \(\{ w_{n} \}\) converge strongly to \(P_{D}x\).

Proof

Put \(S = I\) and \(\alpha_{n} = 0\) for all \(n = 0, 1, 2, \ldots \) . By Theorem 3.1, we get the desired results. □

Theorem 3.3

Let H be a real Hilbert space, C be a nonempty, closed, and convex subset of H, \(S:C \to C\) be a nonexpansive mapping, \(A_{i},B_{j}\ ( i = 1,2, \ldots,k;j = 1,2, \ldots,l ):C \to C\) be two families of finite maximal monotone mappings such that \(D = ( \bigcap_{i = 1}^{k}A_{i}^{ - 1}0 ) \cap ( \bigcap_{j = 1}^{l}B_{j}^{ - 1}0 ) \cap F ( S )\) is nonempty. Suppose

with \(J_{r_{n}}^{A_{i}} = ( I + r_{n}A_{i} )^{ - 1}\), \(J_{r_{n}}^{B_{j}} = ( I + r_{n}B_{j} )^{ - 1}\), \(\sum_{m = 0}^{l} a_{m} = 1\) and \(a_{m} \in ( 0,1 )\).

The sequences \(\{ x_{n} \}\), \(\{ z_{n} \}\), and \(\{ w_{n} \}\) are generated by

if \(\{ \alpha_{n} \}\), \(\{ \beta_{n} \}\), \(\{ \gamma_{n} \}\), and \(\{ r_{n} \}\) satisfy the following conditions:

(i) \(0 \le\alpha_{n} \le b < 1\);

(ii) \(0 < c \le\beta_{n} \le1\);

(iii) \(0 < d \le\gamma_{n} \le1\), \(\beta_{n} + \gamma_{n} \le1\);

(iv) \(0 < \eta\le r_{n} < + \infty\),

for every \(n = 0, 1, 2, \ldots \) , where b, c, d and η are constants, then the sequences \(\{ x_{n} \}\), \(\{ z_{n} \}\) and \(\{ w_{n} \}\) converge strongly to \(P_{D}x\).

Proof

Letting \(A = 0\) in Theorem 3.1, we obtain the result. □

Theorem 3.4

Let H be a real Hilbert space, C be a nonempty, closed, and convex subset of H, \(A_{i},B_{j}\ ( i = 1,2, \ldots ,k;j = 1,2, \ldots,l ):C \to C\) be two families of finite maximal monotone mappings such that \(D = ( \bigcap_{i = 1}^{k}A_{i}^{ - 1}0 ) \cap ( \bigcap_{j = 1}^{l}B_{j}^{ - 1}0 )\) is nonempty. Suppose

with \(J_{r_{n}}^{A_{i}} = ( I + r_{n}A_{i} )^{ - 1}\), \(J_{r_{n}}^{B_{j}} = ( I + r_{n}B_{j} )^{ - 1}\), \(\sum_{m = 0}^{l} a_{m} = 1\) and \(a_{m} \in ( 0,1 )\). The sequences \(\{ x_{n} \}\) and \(\{ y_{n} \}\) are generated by

if \(\{ \beta_{n} \}\), \(\{ \gamma_{n} \}\), and \(\{ r_{n} \}\) satisfy the following conditions:

(i) \(0 < c \le\beta_{n} \le1\);

(ii) \(0 < d \le\gamma_{n} \le1\), \(\beta_{n} + \gamma_{n} \le1\);

(iii) \(0 < \eta\le r_{n} < + \infty\),

for every \(n = 0, 1, 2, \ldots \) , where c, d, and η are constants, then the sequences \(\{ x_{n} \}\) and \(\{ y_{n} \}\) converge strongly to \(P_{D}x\).

Proof

Letting \(A = 0\), \(S = I\) and \(\alpha_{n} = 0\) for all \(n = 0, 1, 2, \ldots \) in Theorem 3.1, we obtain the result. □

If \(A_{i},B_{j}\ ( i = 1,2, \ldots,k;j = 1,2, \ldots,l ):H \to 2^{H}\) are set-valued mappings, the resolvent operator \(J_{r}^{T} \) (\(r > 0 \)) of each such mapping T is defined by \(J_{r}^{T}x = \{ z \in H:x \in z + rTz \}= ( I + rT )^{ - 1}x\), \(\forall x \in H\), where I denotes the identity mapping on H. As is well known, \(J_{r}^{T}:H \to H\) is a single-valued mapping. We have the following strong convergence theorem.

Theorem 3.5

Let H be a real Hilbert space, \(A:H \to H\) be a monotone and ρ-Lipschitz-continuous mapping, \(S:H \to H\) be a nonexpansive mapping, \(A_{i},B_{j}\ ( i = 1,2, \ldots,k; j = 1,2, \ldots,l ): H \to2^{H}\) be two families of finite set-valued maximal monotone mappings such that \(D = ( \bigcap_{i = 1}^{k}A_{i}^{ - 1}0 ) \cap ( \bigcap_{j = 1}^{l}B_{j}^{ - 1}0 ) \cap F ( S ) \cap A^{ - 1}0\) is nonempty. Suppose

with \(J_{r_{n}}^{A_{i}} = ( I + r_{n}A_{i} )^{ - 1}\), \(J_{r_{n}}^{B_{j}} = ( I + r_{n}B_{j} )^{ - 1}\), \(\sum_{m = 0}^{l} a_{m} = 1\) and \(a_{m} \in ( 0,1 )\).

The sequences \(\{ x_{n} \}\), \(\{ y_{n} \}\) and \(\{ z_{n} \}\) are generated by

if \(\{ \alpha_{n} \}\), \(\{ \beta_{n} \}\), \(\{ \gamma_{n} \}\), \(\{ \lambda_{n} \}\), and \(\{ r_{n} \}\) satisfy the conditions in Theorem 3.1, then the sequences \(\{ x_{n} \}\), \(\{ y_{n} \}\) and \(\{ z_{n} \}\) converge strongly to \(P_{D}x\).

Proof

As is well known, \(P_{H} = I\) and \(VI ( H,A ) = A^{ - 1}0\). Using arguments similar to those in the proof of Theorem 3.1, we get the desired result immediately. □
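The identity \(VI ( H,A ) = A^{ - 1}0\) used in this proof can be verified directly; the following short argument is a sketch included for completeness. If \(Au = 0\), then u trivially solves the variational inequality. Conversely, choosing the test point \(v = u - Au \in H\) gives:

```latex
\begin{aligned}
u \in VI ( H,A )
  &\implies \langle Au, v - u \rangle \ge 0, \quad \forall v \in H \\
  &\implies \langle Au, ( u - Au ) - u \rangle = - \Vert Au \Vert^{2} \ge 0 \\
  &\implies Au = 0 .
\end{aligned}
```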

Remark 3.1

Theorems 3.1-3.5 greatly improve and extend the previous work in the following respects:

(1) We study the problem of finding a common element of the set of common zeros of two families of finite maximal monotone mappings, the set of fixed points of a nonexpansive mapping and the set of solutions of the variational inequality problem for a monotone, Lipschitz-continuous mapping, i.e., \(( \bigcap_{i = 1}^{k}A_{i}^{ - 1}0 ) \cap ( \bigcap_{j = 1}^{l}B_{j}^{ - 1}0 ) \cap F ( S ) \cap VI ( C,A )\). The problems of finding common elements of [7], Theorem 2.2 and [17], Theorems 3.1 and 4.1 are all special cases of our problem.

(2) The hybrid iterative Algorithm 1.1 greatly generalizes and extends some corresponding algorithms in [4, 6, 10, 14, 19]. This is the first time an algorithm of this type is used for finding common zeros of two families of finite maximal monotone mappings. The method of proof is also different from the earlier ones.

(3) All parameter sequences \(\{ \alpha_{n} \}\), \(\{ \beta_{n} \}\), \(\{ \gamma_{n} \}\), \(\{ \lambda_{n} \}\) and \(\{ r_{n} \}\) satisfy weaker restrictions in our theorems than those in the theorems of [3, 4]. For example, we only require \(0 \le\alpha_{n} \le b < 1\), and need neither \(\sum_{n = 1}^{\infty} \vert \alpha_{n + 1} - \alpha_{n} \vert < + \infty\) nor \(\alpha_{n} \to0\) as \(n \to\infty\).

4 Applications

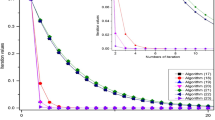

In this section, we apply our results to several concrete problems and obtain the corresponding new algorithms.

Let H be a real Hilbert space, \(\varphi:H \to R \cup \{ + \infty \}\) be a proper, convex, lower semicontinuous functional. The subdifferential operator of φ, denoted by \(\partial\varphi: H \to2^{H}\), is defined at \(x \in H\) by

\[\partial\varphi ( x ) = \{ z \in H:\varphi ( y ) \ge\varphi ( x ) + \langle y - x,z \rangle, \forall y \in H \}.\]

For each \(x \in H\), every element of \(\partial\varphi ( x )\) is called a subgradient of φ at x. Using different methods, Rockafellar [20] and Alves and Svaiter [21] each proved that the subdifferential operator is a maximal monotone mapping. Thus, from Theorem 3.5, we get the following result immediately.
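For a concrete feel of the resolvent of a subdifferential, consider the assumed example \(\varphi ( x ) = \vert x \vert \) on the real line, where \(\partial\varphi ( z ) = \{ \operatorname{sign} ( z ) \}\) for \(z \ne0\) and \(\partial\varphi ( 0 ) = [ - 1,1 ]\). In this case \(( I + r\partial\varphi )^{ - 1}\) is the soft-thresholding map. The sketch below checks the defining inclusion \(x \in z + r\partial\varphi ( z )\) numerically; the function names are hypothetical.

```python
def prox_abs(x, r):
    """Resolvent (I + r * d|.|)^{-1} x on the real line (soft-thresholding)."""
    if x > r:
        return x - r
    if x < -r:
        return x + r
    return 0.0

def in_subdifferential_abs(g, z, tol=1e-12):
    """Check whether g belongs to the subdifferential of |.| at z."""
    if z > 0:
        return abs(g - 1.0) <= tol
    if z < 0:
        return abs(g + 1.0) <= tol
    return -1.0 - tol <= g <= 1.0 + tol

def resolvent_inclusion_holds(x, r):
    """Verify x in z + r * d|.|(z) for z = prox_abs(x, r)."""
    z = prox_abs(x, r)
    return in_subdifferential_abs((x - z) / r, z)

def iterate_prox(x, r, steps):
    """Proximal point iteration x_{n+1} = prox_abs(x_n, r)."""
    for _ in range(steps):
        x = prox_abs(x, r)
    return x

minimizer = iterate_prox(1.7, 0.5, steps=10)
```

Here the only zero of \(\partial\varphi\) is the minimizer 0 of φ, which the iteration reaches after finitely many steps.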

Theorem 4.1

Let H be a real Hilbert space, \(A:H \to H\) be a monotone and ρ-Lipschitz-continuous mapping, \(S:H \to H\) be a nonexpansive mapping, \(\varphi_{1i},\varphi_{2j}\ ( i = 1,2, \ldots,k; j = 1,2, \ldots,l ): H \to R \cup \{ + \infty \}\) be two finite families of proper, convex, lower semicontinuous functionals, \(\partial \varphi_{1i}\) and \(\partial\varphi_{2j}\) be their subdifferential operators, respectively, such that \(D = ( \bigcap_{i = 1}^{k}\partial\varphi_{1i}^{ - 1}0 ) \cap ( \bigcap_{j = 1}^{l}\partial\varphi_{2j}^{ - 1}0 ) \cap A^{ - 1}0\) is nonempty. Suppose

with \(J_{r_{n}}^{\partial\varphi_{i}} = ( I + r_{n}\partial\varphi_{i} )^{ - 1}\), \(\sum_{m = 0}^{l} a_{m} = 1\) and \(a_{m} \in ( 0,1 )\). The sequences \(\{ x_{n} \}\), \(\{ y_{n} \}\) and \(\{ z_{n} \}\) are generated by

if \(\{ \alpha_{n} \}\), \(\{ \beta_{n} \}\), \(\{ \gamma_{n} \}\), \(\{ \lambda_{n} \}\), and \(\{ r_{n} \}\) satisfy the conditions in Theorem 3.1, then the sequences \(\{ x_{n} \}\), \(\{ y_{n} \}\), and \(\{ z_{n} \}\) converge strongly to \(P_{D}x\).

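We also know that a mapping \(T:C \to C\) is called pseudocontractive if

\[\langle Tx - Ty, x - y \rangle\le \Vert x - y \Vert ^{2},\quad\forall x,y \in C.\]

It is equivalent to the following definition:

\[\Vert Tx - Ty \Vert ^{2} \le \Vert x - y \Vert ^{2} + \Vert ( I - T )x - ( I - T )y \Vert ^{2},\quad\forall x,y \in C.\]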

If T is a pseudocontractive, ρ-Lipschitz-continuous mapping, then \(A = I - T\) is a monotone and \(( \rho+ 1 )\)-Lipschitz-continuous mapping, and \(F ( T ) = VI ( C,A )\); for more details, see [14, 19]. So, by Theorem 3.1, we have the following result immediately.
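The two properties of \(A = I - T\) can be derived in two lines. The sketch assumes the inner-product form of pseudocontractivity, \(\langle Tx - Ty,x - y \rangle\le \Vert x - y \Vert ^{2}\), together with the ρ-Lipschitz continuity of T and the triangle inequality:

```latex
\begin{aligned}
\langle Ax - Ay, x - y \rangle
  &= \Vert x - y \Vert^{2} - \langle Tx - Ty, x - y \rangle \ge 0 , \\
\Vert Ax - Ay \Vert
  &\le \Vert x - y \Vert + \Vert Tx - Ty \Vert \le ( 1 + \rho ) \Vert x - y \Vert .
\end{aligned}
```

This explains why the step-size bound in condition (iv) of the next theorem is \(\frac{1}{\rho+ 1}\) rather than \(\frac{1}{\rho}\).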

Theorem 4.2

Let H be a real Hilbert space, C be a nonempty, closed, and convex subset of H, \(T:C \to C\) be a pseudocontractive and ρ-Lipschitz-continuous mapping, \(S:C \to C\) be a nonexpansive mapping, \(A_{i},B_{j}\ ( i = 1,2, \ldots,k;j = 1,2, \ldots,l ):C \to C\) be two families of finite maximal monotone mappings such that \(D = ( \bigcap_{i = 1}^{k}A_{i}^{ - 1}0 ) \cap ( \bigcap_{j = 1}^{l}B_{j}^{ - 1}0 ) \cap F ( S ) \cap F ( T )\) is nonempty. Suppose

with \(J_{r_{n}}^{A_{i}} = ( I + r_{n}A_{i} )^{ - 1}\), \(J_{r_{n}}^{B_{j}} = ( I + r_{n}B_{j} )^{ - 1}\), \(\sum_{m = 0}^{l} a_{m} = 1\) and \(a_{m} \in ( 0,1 )\).

The sequences \(\{ x_{n} \}\), \(\{ y_{n} \}\), \(\{ z_{n} \}\), and \(\{ w_{n} \}\) are generated by

for every \(n = 0, 1, 2, \ldots \) . If \(\{ \alpha_{n} \}\), \(\{ \beta_{n} \}\), \(\{ \gamma_{n} \}\), \(\{ \lambda_{n} \}\), and \(\{ r_{n} \}\) satisfy the following conditions:

(i) \(0 \le\alpha_{n} \le b < 1\);

(ii) \(0 < c \le\beta_{n} \le1\);

(iii) \(0 < d \le\gamma_{n} \le1\), \(\beta_{n} + \gamma_{n} \le1\);

(iv) \(0 < p \le\lambda_{n} \le q < \frac{1}{\rho+ 1}\);

(v) \(0 < \eta\le r_{n} < + \infty\),

where b, c, d, p, q and η are constants, then the sequences \(\{ x_{n} \}\), \(\{ y_{n} \}\), \(\{ z_{n} \}\), and \(\{ w_{n} \}\) converge strongly to \(P_{D}x\).

References

[1] Rockafellar, RT: Monotone operators and the proximal point algorithm. SIAM J. Control Optim. 14, 877-898 (1976)

[2] Zegeye, H, Shahzad, N: Strong convergence theorems for a common zero of a finite family of m-accretive mappings. Nonlinear Anal. 66, 1161-1169 (2007)

[3] Hu, LG, Liu, LW: A new iterative algorithm for common solutions of a finite family of accretive operators. Nonlinear Anal. 70, 2344-2351 (2009)

[4] Wei, L, Tan, RL: Strong and weak convergence theorems for common zeros of finite accretive mappings. Fixed Point Theory Appl. 2014, Article ID 77 (2014)

[5] Lions, JL: Quelques Méthodes de Résolution des Problèmes aux Limites Non Linéaires. Dunod, Paris (1969)

[6] Korpelevich, GM: The extragradient method for finding saddle points and other problems. Matecon 12, 747-756 (1976)

[7] He, BS, Yang, ZH, Yuan, XM: An approximate proximal-extragradient type method for monotone variational inequalities. J. Math. Anal. Appl. 300, 362-374 (2004)

[8] Noor, MA: New extragradient-type methods for general variational inequalities. J. Math. Anal. Appl. 277, 379-394 (2003)

[9] Solodov, MV, Svaiter, BF: An inexact hybrid generalized proximal point algorithm and some new results on the theory of Bregman functions. Math. Oper. Res. 25, 214-230 (2000)

[10] Iiduka, H, Takahashi, W: Strong convergence theorem by a hybrid method for nonlinear mappings of nonexpansive and monotone type and applications. Adv. Nonlinear Var. Inequal. 9, 1-10 (2006)

[11] Bauschke, HH, Combettes, PL: A weak-to-strong convergence principle for Fejér-monotone methods in Hilbert spaces. Math. Oper. Res. 26, 248-264 (2001)

[12] Bauschke, HH, Combettes, PL: Construction of best Bregman approximations in reflexive Banach spaces. Proc. Am. Math. Soc. 131, 3757-3766 (2003)

[13] Solodov, MV, Svaiter, BF: Forcing strong convergence of proximal point iterations in a Hilbert space. Math. Program. 87, 189-202 (2000)

[14] Nadezhkina, N, Takahashi, W: Strong convergence theorem by a hybrid method for nonexpansive mappings and Lipschitz-continuous monotone mappings. SIAM J. Optim. 4, 1230-1241 (2006)

[15] Rockafellar, RT: On the maximality of sums of nonlinear monotone operators. Trans. Am. Math. Soc. 149, 75-88 (1970)

[16] Opial, Z: Weak convergence of the sequence of successive approximations for nonexpansive mappings. Bull. Am. Math. Soc. 73, 591-597 (1967)

[17] Radon, J: Theorie und Anwendungen der absolut additiven Mengenfunctionen. Sitz. Akad. Wiss. Wien. 122, 1295-1438 (1913)

[18] Takahashi, W: Nonlinear Functional Analysis. Yokohama Publishers, Yokohama (2000)

[19] Ceng, LC, Ansari, QH, Wong, MM, Yao, JC: Mann type hybrid extragradient method for variational inequalities, variational inclusions and fixed point problems. Fixed Point Theory 13(2), 403-422 (2012)

[20] Rockafellar, RT: On the maximal monotonicity of subdifferential mappings. Pac. J. Math. 33, 209-216 (1970)

[21] Alves, MM, Svaiter, BF: A new proof for maximal monotonicity of subdifferential operators. J. Convex Anal. 2, 345-348 (2008)

Acknowledgements

This work was supported by the Innovation Program of Shanghai Municipal Education Commission (15ZZ068), the Ph.D. Program Foundation of Ministry of Education of China (20123127110002) and the Program for Shanghai Outstanding Academic Leaders in Shanghai City (15XD1503100).

Additional information

Competing interests

The authors declare that they have no competing interests.

Authors’ contributions

All authors contributed equally and significantly in writing this article. All authors read and approved the final manuscript.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Qiu, YQ., Ceng, LC., Chen, JZ. et al. Hybrid iterative algorithms for two families of finite maximal monotone mappings. Fixed Point Theory Appl 2015, 180 (2015). https://doi.org/10.1186/s13663-015-0428-9

DOI: https://doi.org/10.1186/s13663-015-0428-9