Abstract

Background

Grey literature includes a range of documents not controlled by commercial publishing organisations. This means that grey literature can be difficult to search and retrieve for evidence synthesis. Much knowledge and evidence in public health, and other fields, accumulates from innovation in practice. This knowledge may not even be of sufficient formality to meet the definition of grey literature. We term this knowledge ‘grey information’. Grey information may be even harder to search for and retrieve than grey literature.

Methods

On three previous occasions, we have attempted to systematically search for and synthesise public health grey literature and information—both to summarise the extent and nature of particular classes of interventions and to synthesise results of evaluations. Here, we briefly describe these three ‘case studies’ but focus on our post hoc critical reflections on searching for and synthesising grey literature and information garnered from our experiences of these case studies. We believe these reflections will be useful to future researchers working in this area.

Results

Issues discussed include search methods, searching efficiency, replicability of searches, data management, data extraction, assessing study ‘quality’, data synthesis, time and resources, and differentiating evidence synthesis from primary research.

Conclusions

Information on applied public health research questions relating to the nature and range of public health interventions, as well as many evaluations of these interventions, may be predominantly, or only, held in grey literature and grey information. Evidence syntheses on these topics need, therefore, to embrace grey literature and information. Many typical systematic review methods for searching, appraising, managing, and synthesising the evidence base can be adapted for use with grey literature and information. Evidence synthesisers should carefully consider the opportunities and problems offered by including grey literature and information. Enhanced incentives for accurate recording and further methodological developments in retrieval will facilitate future syntheses of grey literature and information.

Background

Public health researchers may want to include ‘grey literature’ in evidence syntheses for at least three reasons. Firstly, including grey literature can reduce the impact of publication bias as studies with null findings are less likely to be published in peer-reviewed journals [1]. Secondly, grey literature can provide useful contextual information on how, why, and in whom complex public health interventions are effective [2–6]. Finally, syntheses of grey literature can help applied researchers and practitioners understand what interventions exist for a particular problem, the full range of evaluations (if any) that have been conducted, and where further intervention development and evaluation is needed.

Numerous definitions of grey literature exist. These tend to focus on the fact that it is not controlled by commercial organisations, making it difficult to search for and retrieve [7–9]. One common definition restricts grey literature to literature ‘protected by intellectual property rights, of sufficient quality to be collected and preserved by library holdings or institutional repositories’ [10]. Other definitions are more inclusive and propose that, given the growth of new forms of media, grey literature should not be restricted to written ‘literature’ [4].

Much knowledge and evidence in applied settings, such as public health practice, accumulates from innovation in practice [7]. This may include the rationale for why new approaches were tried; what changes, if any, were made to previous approaches and why; what was done and how; and what happened. In some cases, this may be accompanied by more formal process evaluation and, more rarely still, outcome evaluation (Hillier-Brown F, Summerbell C, Moore H, Wrieden W, Abraham C, Adams J, Adamson A, Araujo-Soares V, White M, Lake A. A description of interventions promoting healthier ready-to-eat meals (to eat in, to take away, or to be delivered) sold by specific food outlets in England: a systematic mapping and evidence synthesis. BMC public health 2015, unpublished). Interventions and evaluations that were primarily conducted as part of, or to inform, practice may be particularly unlikely to be described in peer-reviewed publications or even formally documented in reports available to others in electronic or hard copy. Information on these activities may, instead, be stored in more private or informal spaces such as meeting notes, emails, or even just in people’s memories. This information is likely to be of insufficient formality to meet the definition of grey literature. We term this ‘grey information’.

The phrase ‘grey information’ has been used previously to extend the concept of grey literature to a wider range of sources [11], but it is not widely used and we are not aware of a previously stated clear definition. The term ‘grey data’ [12] has also been used specifically to describe user-generated web content—something that we feel is more formal and public than ‘grey information’ but less formal than ‘grey literature’. Table 1 describes defining aspects and examples of the three terms: grey literature, grey data, and grey information.

Systematically identifying grey literature and grey data is not a straightforward task [5, 7–9, 12, 13]. Systematically identifying ‘grey information’ is likely to be even more challenging. A number of case studies have been published describing procedures for searching and retrieving ‘grey literature’ in public health contexts [13, 14]. These tend to adopt relatively similar approaches including searching databases of peer-reviewed and grey literature; conducting structured searches of relevant websites and search engines; and contacting relevant experts [5, 8, 9, 13].

On three occasions, various authors of this article have attempted to systematically search for and synthesise public health grey literature and information. Here, we briefly describe our experiences of these three case studies and then critically reflect on these. ‘Critical reflection’ is a concept most often associated with adult learning and professional development. Although poorly and diversely defined, critical reflection is generally associated with post hoc examination of experiences in an attempt to improve future practice [15, 16]. Our aim was to provide insights on searching for and synthesising grey literature and information that may be useful for future researchers.

Methods

The aims, methods, results, and conclusions of our three case studies are summarised in Table 2.

Review 1: the health, social, and financial impacts of welfare rights advice delivered in healthcare settings [17]

Our first review included grey literature alongside peer-reviewed literature in a systematic review of the health, social, and financial impacts of welfare rights advice delivered in healthcare settings [17]. In part, this systematic review was conducted in preparation for an application for funding for a randomised controlled trial of the impacts of welfare rights advice on health in older people [18, 19]. Thus, we were interested both in the extent of other research and in its findings. We conducted a quantitative synthesis of the average financial impacts of welfare rights advice and a narrative synthesis of other quantitative and qualitative findings.

As expected, fewer than half of the evaluations of welfare rights advice included in the review were published in peer-reviewed journals. The remainder appeared in reports published by delivery organisations, universities, other research organisations, and service and research funders.

Review 2: adult cooking skill interventions in England [20]

Our second attempt to review grey literature explored the nature, content, and range, but not effects, of interventions seeking to enhance adult cooking skills delivered in England [20]. Our intention was to identify the most sustainable and theoretically promising of these to take forward for more formal evaluation. Similar to other reviews [21], our synthesis focused on categorising interventions according to delivery setting and training model and summarising the training delivered, throughput, setup and running costs, funding, and behaviour change techniques used [22].

This review focused entirely on grey literature and information and did not include any searching for peer-reviewed literature. A scoping review of peer-reviewed outcome evaluations of adult cooking skill interventions was conducted in parallel [23].

Review 3: interventions promoting healthier ready-to-eat meals (to eat in, to take away, or to be delivered) sold by specific food outlets in England (Hillier-Brown F et al. BMC public health 2015, unpublished)

Finally, we recently completed a review of interventions aiming to promote healthier ready-to-eat meals (to eat in, to take away, or to be delivered) sold by food outlets in England (Hillier-Brown F et al. BMC public health 2015, unpublished). This explored the nature and range of interventions implemented and summarised evaluation findings. We used a narrative approach to evidence synthesis, characterising interventions, identifying issues of design and delivery, and summarising evaluation findings on process, acceptability, cost, and impact. Our intention in this case was to use the findings to inform development and evaluation of new interventions based on the most promising features of previous ones.

Whilst this review did include searches of peer-reviewed literature, these identified only one included study, although two other relevant peer-reviewed papers were identified using other methods. As in review 2, a linked review of peer-reviewed evidence was conducted in parallel [24].

Results and discussion

Whilst there is much useful guidance available on evidence synthesis in general [25–27], and searching for and synthesising grey literature in particular [5, 8, 9, 13, 28–30], one size rarely fits all. Throughout, and in common with the best research practice, our methods were guided by our aims.

Searching

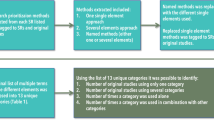

In evidence syntheses, the sensitivity, specificity, and type of information retrieved by searches are highly dependent on the search strategy used. As described above and in Table 2, we used a variety of different methods to search for information across all three reviews. Below, we reflect on some of the issues raised and summarise some of our conclusions in Table 3.

Search methods

In all three cases, and as recommended by others [5, 8, 9, 13, 28–30], we used a wide variety of methods to search for relevant grey literature and information. Across our three examples, we searched trial databases (e.g. www.isrctn.com), grey literature databases (e.g. www.opengrey.eu), websites of relevant organisations (e.g. charities with an interest in social inclusion in review 1), and a popular internet search engine (i.e. www.google.co.uk).

We also contacted those working in the areas we were interested in. Using a variety of methods, we sent both personalised requests to key informants and more generic requests to professional organisations and groups. In reviews 1 and 3, researchers working in relevant fields were contacted via email and requests for information were sent to relevant email lists, posted on online bulletin boards, and published in the ‘professional press’ (e.g. newsletters of professional organisations). In reviews 2 and 3, we also attempted to contact relevant individuals working in all local public health departments in England. In review 3, we incorporated social media into our search strategy.

Review 1 was conducted in 2005 when social media and social networks were less well established than they are now. To target large networks of professionals in this case, we published requests for help in the ‘professional press’. By the time review 3 was conducted, in 2014, social media had become an important space for professional networking. We posted numerous tweets requesting relevant information and asking those who saw them to repost (i.e. ‘retweet’) them to their own networks—in order to increase the potential number of people who saw these requests. Many of these tweets tagged (i.e. ‘@mentioned’) relevant professional organisations. We are not aware of previous reviewers using social media to identify grey (or peer-reviewed) literature or information.

This transition in methods from reviews 1 to 3, over just under a decade, reflects how information storage and sharing have changed over this time in the UK. At the same time, information storage and sharing patterns may vary internationally. Search methods need to keep pace with local and international trends in information systems, and researchers should be flexible in response.

Searching efficiency

As with ‘typical’ systematic reviews [31, 32], our searching sacrificed specificity for sensitivity. Searches yielded many results that did not meet our inclusion criteria. The resource and scientific implications of the trade-off between search specificity and sensitivity have been widely discussed in the systematic review literature [33, 34].

Previous case studies have described very different ‘hit’ rates associated with different grey literature search strategies. In a review of interventions to promote walking and cycling, requests for help emailed to key informants added little to database searching [35]. In contrast, in a review of behaviour change interventions published only in grey literature, 70 % of items included in the final synthesis came from key informants [5]. Similarly, we found that different methods for locating information were differentially effective across our three reviews. In review 1, generic requests sent to email lists and published in the professional press were particularly useful. On a number of occasions, these requests were passed through several people before someone responded with relevant information, further adding to the time taken to search (discussed below). Perhaps analogously, in review 3, Twitter requests were particularly valuable. These were widely retweeted, vastly increasing the pool of potential viewers, but this appeared to be a much quicker process than the cascading of email requests and requests in the professional press.

The efficiency of different search methods is, at least partly, dependent on the quality of the search strategy used. Simple comparisons, such as those described above, are not necessarily fair. Nor is it clear if the differences in efficiency are predictable. If the efficiency of different approaches to searching could be predicted in advance, this could help reviewers to focus their resources.

Our resources were most limited in review 2, and it became evident early in searching that we would not be able to complete a comprehensive search for all adult cooking skill interventions in England. We made a pragmatic decision to focus instead on identifying intervention types—based on delivery context and training model. As others have done [13], we borrowed the concept of ‘data saturation’ from qualitative research and stopped searching when we felt we were not identifying any new intervention types. We felt that sacrificing sensitivity in this case did not compromise our ability to meet our aims.
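In review 2 the decision to stop was a reviewer judgement, not a fixed rule. Purely as an illustrative sketch, assuming each newly identified intervention is recorded in order as a (delivery context, training model) pair and assuming a hypothetical threshold of consecutive items that add no new type, a saturation-based stopping rule could be operationalised as below. The function name `saturation_point`, the `patience` threshold, and the example data are all hypothetical.

```python
from typing import Iterable, Set, Tuple

# Hypothetical representation: an intervention "type" is a
# (delivery context, training model) pair, as used to categorise review 2.
InterventionType = Tuple[str, str]


def saturation_point(found: Iterable[InterventionType], patience: int = 5) -> int:
    """Return how many items were screened before stopping.

    Stops once `patience` consecutive items add no new intervention type.
    The threshold is an illustrative assumption, not the rule used in review 2.
    """
    seen: Set[InterventionType] = set()
    run_without_new = 0
    count = 0
    for item in found:
        count += 1
        if item in seen:
            run_without_new += 1  # nothing new: extend the run
        else:
            seen.add(item)
            run_without_new = 0  # a new type resets the run
        if run_without_new >= patience:
            break
    return count


# Invented placeholder data, not taken from any of the reviews.
stream = [
    ("setting A", "model 1"),
    ("setting B", "model 2"),
    ("setting A", "model 1"),
    ("setting C", "model 3"),
    ("setting B", "model 2"),
    ("setting A", "model 1"),
    ("setting C", "model 3"),
    ("setting B", "model 2"),
    ("setting A", "model 1"),
    ("setting D", "model 4"),
]
print(saturation_point(stream, patience=3))  # → 7
```

With a threshold of 3, screening stops after the seventh item, so the tenth item, which represents a new type, is never reached. This shows concretely how a saturation rule trades sensitivity for efficiency.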

Using others to target searching

In reviews 2 and 3, we attempted to ask all local public health departments in England what relevant projects they were aware of. We are not aware of any peer-reviewed publications that report the efforts of other evidence synthesisers, or indeed primary researchers, who have attempted to systematically contact all local public health departments across one country in this way. That said, we recognise that the gathering of data on the activity and type of public health interventions conducted at various geographic levels is a relatively common activity. To facilitate this, we identified named individuals and contact email addresses for those with relevant roles using internet searches and telephone calls. This was a time-consuming task in itself. The requests for information we sent specifically asked recipients to pass our enquiries on to those they felt were best placed to respond. As with other email requests (see above), there were examples where messages had been passed through a number of individuals before someone responded.

Replicability of searches

Whilst in all cases we had clear plans describing what we felt were comprehensive, systematic, and replicable approaches to information searching, it is hard to claim that these led to replicable results. Future investigators could certainly replicate our search methods, but, unlike repeated searches of electronic databases, these would be unlikely to return the same results. On two different occasions, different people would be likely to see calls for information on social networks or in the professional press. Even if the same people did see requests for help on different occasions, many other contextual factors may influence how likely they were to respond or to pass the requests on to those most likely to be able to respond.

As time passes and grey literature and information become lost or forgotten, potential respondents’ ability to provide usable information may also decline. Whilst contacting both current and previous post holders as key informants may, theoretically, reduce this problem, it may not be practically possible. Others have highlighted the problem of replicability in relation to internet searching, particularly using search engines such as www.google.com, which return results based on, amongst other things, recent popularity [8, 9].

The conclusion that searching for grey literature and information can be systematic, but not necessarily replicable, reinforces the importance of using many overlapping searching approaches. This maximises the chances that any particular piece of relevant information will be found.

Developing the ‘best’ search methods

Whilst our search methods were similar to, and built on, those described by others as well as on ‘best practice’ guidance [5, 8, 9, 13, 28–30], it is difficult to be sure what the ‘best’ search methods for retrieving grey literature and information are. Whilst it is possible to validate search approaches in peer-reviewed literature against a ‘gold standard’ of hand searching [31, 32], no similar gold standard exists for grey literature and information: there is no definitive repository against which other search methods can be compared. This makes it difficult to ever be sure that all relevant information has been found or validate new search methods.

Data management

In all three reviews, we found data management to be challenging. Technology now allows fairly straightforward integration of academic databases and reference management software—both of which facilitate information organisation and record keeping. Such workflows are not well developed for grey literature and information. Developing clear filing and recording systems, using simple spreadsheets, helped us to keep track of where and how information had been identified.
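The spreadsheet-based tracking described above can be sketched as a simple log with one row per identified item, recording when and how it was found. This is a minimal sketch under stated assumptions: the `SearchRecord` fields, the method labels, and the helper names are hypothetical and are not the fields we actually used; CSV output stands in for a spreadsheet, and the example rows are invented.

```python
import csv
import io
from collections import Counter
from dataclasses import asdict, dataclass, fields
from typing import Iterable


@dataclass
class SearchRecord:
    """One row of a hypothetical search log for grey literature and information."""
    item_id: str     # reviewer-assigned identifier
    date_found: str  # ISO date on which the item was identified
    method: str      # e.g. "website", "twitter", "key informant" (illustrative labels)
    source: str      # more specific origin (URL, list name, informant role)
    included: bool   # whether the item met the inclusion criteria


def write_log(records: Iterable[SearchRecord], handle) -> None:
    """Write records as CSV so the log can be opened in any spreadsheet tool."""
    writer = csv.DictWriter(handle, fieldnames=[f.name for f in fields(SearchRecord)])
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))


def included_by_method(records: Iterable[SearchRecord]) -> Counter:
    """Count included items by the search method that located them."""
    return Counter(r.method for r in records if r.included)


# Invented example rows for illustration only.
log = [
    SearchRecord("001", "2014-01-06", "website", "local authority site", True),
    SearchRecord("002", "2014-01-07", "twitter", "retweet via professional body", True),
    SearchRecord("003", "2014-01-07", "twitter", "direct reply", False),
    SearchRecord("004", "2014-01-09", "key informant", "public health officer", True),
]
buffer = io.StringIO()
write_log(log, buffer)
print(included_by_method(log))
```

Keeping the method and source for every item, including excluded ones, is what allows rough comparisons of search efficiency later; it does not, however, capture who saw or forwarded a request.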

However, we found it harder to capture other aspects of our searches. For example, whilst tools like NCapture allow social media content to be imported into NVivo for qualitative analysis, they do not necessarily provide a useful facility for capturing how many people (and who) ‘retweeted’ a particular tweet. It is even harder to capture when requests for information are circulated using more private methods such as email. For these reasons, we are not able to provide accurate estimates of how many people saw our requests for information.

Data extraction

In all three reviews, we developed and used data extraction forms to record information. In review 1, we adopted a similar approach to data extraction to that used in many ‘typical’ systematic reviews: if information was not provided in the written report we obtained, we assumed this information was missing. However, systematic review guidance encourages reviewers to attempt to minimise missing data by contacting authors of original papers [25]. We adopted an approach much closer to this in reviews 2 and 3. In fact, many data extraction forms in these reviews were completed during telephone calls or following email conversations with key informants. To maximise accuracy, in review 3, we asked informants to check electronic versions of completed data extraction forms. As there is often little or no documentary evidence to refer back to, ensuring data extraction forms are as accurate and complete as possible is particularly important in reviews of grey literature and information. This reflects and reinforces the fact that much information on interventions in public health practice is not well documented and can be ‘temporary’: once the relevant individuals move to new posts, and interventions recede into the past, individual and institutional memories are likely to fade. This further contributes to the limited replicability of this sort of grey information searching.

Despite the efforts we made in reviews 2 and 3 to speak with those directly responsible for intervention design and delivery, we were often not able to obtain the information we intended to capture. For example, of 102 interventions identified in review 3, we were not able to obtain any information beyond a programme name in 27 cases. In most, if not all, cases, our failure to obtain information appeared to be because such information was not documented or easily obtainable. For example, many of the costs of public health interventions in everyday practice are unclear even to those responsible for them. Whilst the cost of additional staff may be explicit, costs for office space for those staff might be absorbed by organisations and so be much more implicit.

The problem of limited data availability is common to all types of evidence synthesis, but others have noted it as a particular problem when synthesising grey literature [7, 13]. When attempting to synthesise the extent of public health practice, it may be important to be aware of which types of information are important to practitioners and easy for them to record, and hence likely to be documented, and which are not. For example, service throughput appears to be much more likely to be documented than outcomes of interventions (Hillier-Brown F et al. BMC public health 2015, unpublished) [17].

Risk of bias and value of information

The risk of bias of any piece of information is dependent, in part, on the question it is being used to answer. In review 2, and part of review 3, our aims were to describe the nature and range of particular classes of interventions. The risk of bias of individual pieces of grey literature and information in this case is likely to be low: there is little reason why such information would be misrecorded. In contrast, in review 1 and part of review 3, we aimed to synthesise evaluation findings. The risk of bias of grey literature and information in this case is likely to be higher. Indeed, in reviews 1 and 3, we described some aspects of evaluation methods relating to risk of bias. In both cases, we concluded that the majority of studies were methodologically weak and at high risk of bias.

Evaluations found in grey literature and information may be at high risk of bias for a number of reasons. In public health practice, evaluations are often conducted by the same practitioners who developed and delivered an intervention. This results in an inherent conflict of interest which may increase risk of bias. In public health practice, resources for evaluation are often limited, limiting the scope of what is possible [36]. Furthermore, the interest of funders and practitioners is often on throughput rather than outcomes [37], limiting the scope of what is necessary. Whilst many evaluations included in our reviews were at high risk of bias in terms of conclusions about effects on outcomes, they may well have been fit for the purpose for which they were conducted.

Methods for assessing risk of bias in controlled trials are well established [38, 39], and tools for other types of study are becoming available [40–42]. However, these approaches may be too narrow in perspective for grey literature and information. Realist synthesis takes a researcher-driven ‘value of information’ approach to assessing studies, rather than the more familiar protocol-driven risk of bias approach used in ‘typical’ systematic reviews. In the value of information paradigm, individual studies are included if the information they provide is considered relevant and rigorous enough to help contribute to answering the research question [6, 43, 44]. Whilst this requires researchers to make judgements about what is ‘relevant and rigorous enough’, it may result in inclusion of more potentially useful grey literature and information than stricter approaches which exclude studies based on risk of bias assessments.

Data synthesis

Many approaches to data synthesis in the context of systematic reviewing and evidence synthesis have now been described, and these are not limited to quantitative meta-analysis [25, 45]. Although we performed a quantitative synthesis in review 1, this focused on the economic benefits of welfare rights advice to recipients (which could be summarised in £/week). We were not able to summarise health and social implications so simply and used narrative syntheses for these.

In review 3, in an attempt to capture all the data available to us, we adopted a three-tiered approach to synthesis. Firstly, we listed all relevant interventions that we found (n = 102; tier 1). Secondly, for those interventions for which we had further information on content and delivery, we summarised this using a standard template (n = 75; tier 2). Finally, we summarised the results of any evaluations of included interventions (n = 30; tier 3). Interventions in each tier were nested within each other such that all interventions were included in the tier 1 synthesis, but only a subset of these were included in tier 2, and only a subset of those in tier 2 were included in tier 3.
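The nesting of the three tiers amounts to a pair of subset relations, which can be checked mechanically when tiers are recorded as sets of intervention identifiers. The sketch below is illustrative only: the function `check_tiers` and the identifiers are hypothetical placeholders, and only the tier sizes (102, 75, and 30) come from review 3. The difference between tiers 1 and 2 (102 − 75 = 27) corresponds to the 27 interventions for which we could obtain nothing beyond a programme name.

```python
from typing import Dict, Set


def check_tiers(tier1: Set[str], tier2: Set[str], tier3: Set[str]) -> Dict[str, int]:
    """Check that tiers are nested (tier3 ⊆ tier2 ⊆ tier1) and return their sizes."""
    if not tier2 <= tier1:
        raise ValueError("tier 2 contains interventions not listed in tier 1")
    if not tier3 <= tier2:
        raise ValueError("tier 3 contains interventions not summarised in tier 2")
    return {"tier 1": len(tier1), "tier 2": len(tier2), "tier 3": len(tier3)}


# Placeholder identifiers that reproduce only the counts reported for review 3.
tier1 = {f"int-{i:03d}" for i in range(1, 103)}  # 102 listed interventions
tier2 = {f"int-{i:03d}" for i in range(1, 76)}   # 75 with content and delivery details
tier3 = {f"int-{i:03d}" for i in range(1, 31)}   # 30 with evaluation results
print(check_tiers(tier1, tier2, tier3))  # → {'tier 1': 102, 'tier 2': 75, 'tier 3': 30}
```

Raising an error when the nesting is violated flags, for example, an evaluation recorded in tier 3 for an intervention whose content was never summarised in tier 2.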

These differences in synthesis approach reflect both the contrasting aims of the different reviews and the need for researchers to be flexible and responsive to the realities of data availability within grey literature and grey information.

Time and resources

Systematic reviews can be time and resource intensive. In ‘typical’ systematic reviews, preliminary scoping reviews can help reviewers estimate the size of a full review and resources required [46]. ‘Rapid reviews’ of peer-reviewed literature offer the hope and potential for conducting much quicker evidence syntheses that arrive at the same conclusions as full reviews [47–49]. Unfortunately, there is no clear equivalent of scoping or rapid reviews in relation to grey literature and information. As others have noted, searching for less formally archived information is, almost by nature, time-consuming and inefficient [5, 8, 50].

Encouraging public health practitioners to deposit intervention documents and information in online repositories (e.g. www.ncdlinks.org) could enable more efficient information retrieval on current and recent practice [7]. But the utility of such databases relies heavily on their coverage, and previous attempts to ensure high coverage have varied in their success [51]. With few obvious current incentives for busy practitioners to deposit information in these repositories, it is not necessarily clear how they could be made more useful. Further attention could be given to developing such incentives. In addition, developing better searching and retrieval methods should also facilitate syntheses of grey literature and information, particularly using more sophisticated methods for internet searching such as text analytics or data mining [7, 52]. However, if grey information is not recorded in a searchable way (e.g. is retained only on private networks or in memory), this is also only a partial solution. Action is required to improve both information deposition and information retrieval.

Differentiating evidence synthesis from primary research

Although we approached and considered all three of our case studies as evidence syntheses, they could be considered as verging on primary research. This is particularly the case for reviews 2 and 3, where we made attempts to contact all local authorities in England and collect unpublished information via telephone or email interviews with key informants. Contacting authors is encouraged in ‘typical’ systematic reviews, particularly to collect information that may be incompletely recorded in published outputs [25]. This type of contact is not routinely considered primary research, as the contact is limited to clarifying or augmenting existing published information rather than collecting entirely new data. However, in many cases in reviews 2 and 3, no published information was available to clarify or augment, meaning that these reviews could, perhaps, be considered as collecting new data.

This grey area between evidence synthesis and primary research is particularly important in terms of research ethics. In general, research ethics committee review is not required for evidence synthesis projects because they do not involve research participants [53]. In line with this, we did not obtain research ethics committee review for any of the case studies described. It is not clear at what point ‘key informants’ become ‘research participants’ and hence when the type of evidence synthesis we conducted in reviews 2 and 3 becomes primary research that does require research ethics committee review. Further consideration, and clarification, of this issue by research ethics organisations would be helpful. In the meantime, and as has been previously proposed, it may be judicious for researchers proposing to conduct this type of work to at least discuss it informally with their local research ethics committee before proceeding [54].

Conclusion

We propose the term ‘grey information’ to capture a wide range of documented and undocumented information that may be excluded by common definitions of ‘grey literature’. Information on applied public health research questions relating to the nature and range of public health interventions, and many evaluations of these interventions, may be predominantly, or only, held in grey literature and grey information. Evidence syntheses on these topics need, therefore, to embrace grey literature and information. Many standard systematic review methods for searching, appraising, managing, and synthesising the evidence base can be adapted for use with grey literature and information. Evidence synthesisers should carefully consider the opportunities and problems offered by including grey literature and information. Further action to improve both information deposition and retrieval would facilitate more efficient and complete syntheses of grey literature and information.

References

Hopewell S, McDonald S, Clarke M, Egger M. Grey literature in meta-analyses of randomized trials of health care interventions. Cochrane Database Syst Rev. 2007;(2):MR000010. http://www.ncbi.nlm.nih.gov/pubmed/17443631.

Craig P, Dieppe P, Macintyre S, Michie S, Nazareth I, Petticrew M. Developing and evaluating complex interventions: the new Medical Research Council guidance. BMJ. 2008;337:979–83.

Moore GF, Audrey S, Barker M, Bond L, Bonell C, Hardeman W, Moore L, O’Cathain A, Tinati T, Wight D, et al. Process evaluation of complex interventions: Medical Research Council guidance. BMJ. 2015;350:h1258.

Pappas C, Williams I. Grey literature: its emerging importance. J Hosp Librariansh. 2011;11(3):228–34.

Franks H, Hardiker NR, McGrath M, McQuarrie C. Public health interventions and behaviour change: reviewing the grey literature. Public Health. 2012;126(1):12–7.

Pawson R, Greenhalgh T, Harvey G, Walshe K. Realist synthesis: an introduction. In: ESRC research methods programme. Manchester: University of Manchester; 2004.

Turner AM, Liddy ED, Bradley J, Wheatley JA. Modeling public health interventions for improved access to the gray literature. J Med Libr Assoc. 2005;93(4):487–94.

Benzies KM, Premji S, Hayden KA, Serrett K. State-of-the-evidence reviews: advantages and challenges of including grey literature. Worldviews Evid Based Nurs. 2006;3(2):55–61.

Mahood Q, Van Eerd D, Irvin E. Searching for grey literature for systematic reviews: challenges and benefits. Res Synth Methods. 2014;5(3):221–34.

Schöpfel J. Towards a Prague definition of grey literature. In: Twelfth International Conference on Grey Literature: Transparency in Grey Literature. Prague, Czech Republic: DANS; 2010. p. 11–26.

Chavez TA, Perrault AH, Reehling P, Crummett C. The impact of grey literature in advancing global karst research: an information needs assessment for a globally distributed interdisciplinary community. Publ Res Q. 2007;23(1):3–18.

Banks M. Blog posts and tweets: the next frontier for grey literature. In: Farace D, Schöpfel J, editors. Grey literature in library and information studies. Berlin: De Gruyter Saur; 2010. p. 217–26.

Godin K, Stapleton J, Kirkpatrick SI, Hanning RM, Leatherdale ST. Applying systematic review search methods to the grey literature: a case study examining guidelines for school-based breakfast programs in Canada. Syst Rev. 2015;4:138.

Canadian Agency for Drugs and Technologies in Health. Grey Matters: a practical search tool for evidence-based medicine. Ottawa: CADTH; 2014.

Mann K, Gordon J, MacLeod A. Reflection and reflective practice in health professions education: a systematic review. Adv Health Sci Educ Theory Pract. 2009;14(4):595–621.

White S, Fook J, Gardner F. Critical reflection: a review of contemporary literature and understandings. In: White S, Fook J, Gardner F, editors. Critical reflection in health and social care. Maidenhead: Open University Press; 2006. p. 3–20.

Adams J, White M, Moffatt S, Howel D, Mackintosh J. A systematic review of the health, social and financial impacts of welfare rights advice delivered in healthcare settings. BMC Public Health. 2006;6:81. doi:10.1186/1471-2458-6-81.

White M, Howel D, Deverill M, Moffatt S, Lock K, McColl E, Milne E, Rubin G, Aspray T. Randomised controlled trial, economic and qualitative process evaluation of domiciliary welfare rights advice for socio-economically disadvantaged older people recruited via primary health care: the Do-Well study. Newcastle upon Tyne: Newcastle University; 2013.

Haighton C, Moffatt S, Howel D, McColl E, Milne E, Deverill M, Rubin G, Aspray T, White M. The Do-Well study: protocol for a randomised controlled trial, economic and qualitative process evaluations of domiciliary welfare rights advice for socio-economically disadvantaged older people recruited via primary health care. BMC Public Health. 2012;12:382.

Adams J, Simpson E, Penn L, Adamson A, White M. Research to support the evaluation and implementation of adult cooking skills interventions in the UK: phase 1 report. Newcastle upon Tyne: Public Health Research Consortium; 2011.

Bardus M, van Beurden SB, Smith JR, Abraham C. A review and content analysis of engagement, functionality, aesthetics, information quality, and change techniques in the most popular commercial apps for weight management. Int J Behav Nutr Phys Act. 2016;13(1):1–9.

Abraham C, Michie S. A taxonomy of behaviour change techniques used in interventions. Health Psychol. 2008;27:379–87.

Rees R, Hinds K, Dickson K, O’Mara-Eves A, Thomas J. Communities that cook: a systematic review of the effectiveness and appropriateness of interventions to introduce adults to home cooking. London: EPPI-Centre, Social Science Research Unit, Institute of Education, University of London; 2012.

Hillier-Brown FC, Moore HJ, Lake AA, Adamson AJ, White M, Adams J, Araujo-Soares V, Abraham C, Summerbell CD. The effectiveness of interventions targeting specific out-of-home food outlets: protocol for a systematic review. Syst Rev. 2014;3(1):1–5.

Higgins JPT, Green S, editors. Cochrane Handbook for Systematic Reviews of Interventions version 5.1 [updated March 2011]. The Cochrane Collaboration; 2011. Available from www.cochrane-handbook.org.

Centre for Reviews and Dissemination. Systematic reviews: CRD’s guidance for undertaking reviews in health care. York: Centre for Reviews and Dissemination, University of York; 2009.

Hammerstrøm K, Wade A, Jørgensen A-MK. Searching for studies: a guide to information retrieval for Campbell systematic reviews. 2010.

Grey literature: home [http://guides.mclibrary.duke.edu/greyliterature]. Accessed 19 Sep 2016.

Grey literature for health sciences: getting started [http://guides.library.ubc.ca/greylitforhealth]. Accessed 19 Sep 2016.

How to find…: Grey literature [http://libguides.library.curtin.edu.au/c.php?g=202368&p=1332723]. Accessed 19 Sep 2016.

Montori VM, Wilczynski NL, Morgan D, Haynes RB. Optimal search strategies for retrieving systematic reviews from Medline: analytical survey. BMJ. 2005;330(7482):68.

Haynes RB, McKibbon KA, Wilczynski NL, Walter SD, Werre SR, for the Hedges Team. Optimal search strategies for retrieving scientifically strong studies of treatment from Medline: analytical survey. BMJ. 2005;330:1179.

Sampson M, McGowan J, Cogo E, Grimshaw J, Moher D, Lefebvre C. An evidence-based practice guideline for the peer review of electronic search strategies. J Clin Epidemiol. 2009;62(9):944–52.

Boluyt N, Tjosvold L, Lefebvre C, Klassen TP, Offringa M. Usefulness of systematic review search strategies in finding child health systematic reviews in MEDLINE. Arch Pediatr Adolesc Med. 2008;162(2):111–6.

Ogilvie D, Hamilton V, Egan M, Petticrew M. Systematic reviews of health effects of social interventions: 1. Finding the evidence: how far should you go? J Epidemiol Community Health. 2005;59(9):804–8.

Roberts K, Cavill N, Rutter H. Standard evaluation framework for weight management interventions. London: National Obesity Observatory; 2009.

Department of Health. The public health outcomes framework for England, 2013–2016. London: Department of Health; 2012.

Higgins JPT, Altman DG, Gøtzsche PC, Jüni P, Moher D, Oxman AD, Savović J, Schulz KF, Weeks L, Sterne JAC. The Cochrane Collaboration’s tool for assessing risk of bias in randomised trials. BMJ. 2011;343:d5928.

Lundh A, Gøtzsche PC. Recommendations by Cochrane Review Groups for assessment of the risk of bias in studies. BMC Med Res Methodol. 2008;8(1):1–9.

Sanderson S, Tatt ID, Higgins JP. Tools for assessing quality and susceptibility to bias in observational studies in epidemiology: a systematic review and annotated bibliography. Int J Epidemiol. 2007;36(3):666–76.

Thomas B, Ciliska D, Dobbins M, Micucci S. A process for systematically reviewing the literature: providing the research evidence for public health nursing interventions. Worldviews Evid Based Nurs. 2004;1(3):176–84.

Deeks JJ, Dinnes J, D'Amico R, Sowden AJ, Sakarovitch C, Song F, Petticrew M, Altman DG. Evaluating non-randomised intervention studies. Health Technol Assess. 2003;7(27):iii–x, 1–173.

Wong G, Greenhalgh T, Westhorp G, Buckingham J, Pawson R. RAMESES publication standards: realist syntheses. BMC Med. 2013;11(1):21.

Pawson R, Greenhalgh T, Harvey G, Walshe K. Realist review—a new method of systematic review designed for complex policy interventions. J Health Serv Res Policy. 2005;10 Suppl 1:21–34.

Barnett-Page E, Thomas J. Methods for the synthesis of qualitative research: a critical review. BMC Med Res Methodol. 2009;9:59. doi:10.1186/1471-2288-9-59.

Armstrong R, Hall BJ, Doyle J, Waters E. ‘Scoping the scope’ of a Cochrane review. J Public Health. 2011;33(1):147–50.

Schünemann HJ, Moja L. Reviews: rapid! rapid! rapid! …and systematic. Syst Rev. 2015;4(1):1–3.

Polisena J, Garritty C, Kamel C, Stevens A, Abou-Setta AM. Rapid review programs to support health care and policy decision making: a descriptive analysis of processes and methods. Syst Rev. 2015;4(1):1–7.

Featherstone RM, Dryden DM, Foisy M, Guise J-M, Mitchell MD, Paynter RA, Robinson KA, Umscheid CA, Hartling L. Advancing knowledge of rapid reviews: an analysis of results, conclusions and recommendations from published review articles examining rapid reviews. Syst Rev. 2015;4(1):1–8.

Cook AM, Finlay IG, Edwards AGK, Hood K, Higginson IJ, Goodwin DM, Normand CE, Douglas H-R. Efficiency of searching the grey literature in palliative care. J Pain Symptom Manage. 2001;22(3):797–801.

Kothari A, Hovanec N, Hastie R, Sibbald S. Lessons from the business sector for successful knowledge management in health care: a systematic review. BMC Health Serv Res. 2011;11:173. doi:10.1186/1472-6963-11-173.

Lefebvre C, Glanville J, Wieland LS, Coles B, Weightman AL. Methodological developments in searching for studies for systematic reviews: past, present and future? Syst Rev. 2013;2(1):1–9.

World Medical Association Declaration of Helsinki: ethical principles for medical research involving human subjects. JAMA. 2013;310(20):2191–4.

Vergnes JN, Marchal-Sixou C, Nabet C, Maret D, Hamel O. Ethics in systematic reviews. J Med Ethics. 2010;36(12):771–4.

Acknowledgements

Not applicable

Funding

This paper presents independent research funded by the National Institute for Health Research (NIHR)’s School for Public Health Research (SPHR) with support from Durham and Newcastle Universities and the NIHR Collaboration for Leadership in Applied Health Research and Care of the South West Peninsula (PenCLAHRC). The views expressed are those of the authors and not necessarily those of the NHS, the NIHR, or the Department of Health.

The School for Public Health Research (SPHR) is funded by the National Institute for Health Research (NIHR). SPHR is a partnership between the Universities of Sheffield, Bristol, Cambridge, and UCL; the London School of Hygiene and Tropical Medicine; the Peninsula College of Medicine and Dentistry; the LiLaC collaboration between the Universities of Liverpool and Lancaster; and Fuse, the Centre for Translational Research in Public Health, a collaboration between Newcastle, Durham, Northumbria, Sunderland, and Teesside Universities.

FHB, CDS, HJM, WLW, AA, VAS, and AAL are members of Fuse; JA and MW are funded by the Centre for Diet and Activity Research (CEDAR). Fuse and CEDAR are UKCRC Public Health Research Centres of Excellence. Funding for Fuse and CEDAR from the British Heart Foundation, Cancer Research UK, Economic and Social Research Council, Medical Research Council, and the National Institute for Health Research, under the auspices of the UK Clinical Research Collaboration, is gratefully acknowledged.

The funders played no role in the design of the study; the analysis and interpretation of data; the writing of the manuscript; or the decision to submit for publication.

Availability of data and materials

This manuscript does not refer to any new data. Of the three case studies that form the focus for the discussion, two have been previously published and are in the public domain [17, 20], and one is under review (Hillier-Brown F, Summerbell C, Moore H, Wrieden W, Abraham C, Adams J, Adamson A, Araujo-Soares V, White M, Lake A. A description of interventions promoting healthier ready-to-eat meals (to eat in, to take away, or to be delivered) sold by specific food outlets in England: a systematic mapping and evidence synthesis. BMC Public Health 2015, unpublished).

Authors’ contributions

JA and MW (with additional co-authors) conducted case studies 1 [17] and 2 [20]; FHB, CS, HJM, JA, VAS, MW, and ALA (with additional co-authors) conducted case study 3 (Hillier-Brown F, Summerbell C, Moore H, Wrieden W, Abraham C, Adams J, Adamson A, Araujo-Soares V, White M, Lake A. A description of interventions promoting healthier ready-to-eat meals (to eat in, to take away, or to be delivered) sold by specific food outlets in England: a systematic mapping and evidence synthesis. BMC Public Health 2015, unpublished). FHB, CS, HJM, JA, VAS, MW, and ALA contributed to the development of the ideas described in this paper. JA led the writing of the current manuscript. FHB, CS, HJM, JA, VAS, MW, and ALA provided critical comments on previous drafts of the manuscript and agreed to submit the final version. All authors read and approved the final manuscript.

Competing interests

The authors declare that they have no competing interests.

Consent for publication

Not applicable

Ethics approval and consent to participate

This is not primary research; no participants were recruited, and ethical approval was not required.


Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The Creative Commons Public Domain Dedication waiver (http://creativecommons.org/publicdomain/zero/1.0/) applies to the data made available in this article, unless otherwise stated.

About this article

Cite this article

Adams, J., Hillier-Brown, F.C., Moore, H.J. et al. Searching and synthesising ‘grey literature’ and ‘grey information’ in public health: critical reflections on three case studies. Syst Rev 5, 164 (2016). https://doi.org/10.1186/s13643-016-0337-y


DOI: https://doi.org/10.1186/s13643-016-0337-y