Abstract

Background

Identifying relevant studies for inclusion in a systematic review (i.e. screening) is a complex, laborious and expensive task. Recently, a number of studies have shown that the use of machine learning and text mining methods to automatically identify relevant studies has the potential to drastically decrease the workload involved in the screening phase. The vast majority of these machine learning methods exploit the same underlying principle, i.e. a study is modelled as a bag-of-words (BOW).

Methods

We explore the use of topic modelling methods to derive a more informative representation of studies. We apply Latent Dirichlet allocation (LDA), an unsupervised topic modelling approach, to automatically identify topics in a collection of studies. We then represent each study as a distribution of LDA topics. Additionally, we enrich topics derived using LDA with multi-word terms identified by using an automatic term recognition (ATR) tool. For evaluation purposes, we carry out automatic identification of relevant studies using support vector machine (SVM)-based classifiers that employ both our novel topic-based representation and the BOW representation.

Results

Our results show that the SVM classifier is able to identify a greater number of relevant studies when using the LDA representation than the BOW representation. These observations hold for two systematic reviews of the clinical domain and three reviews of the social science domain.

Conclusions

A topic-based feature representation of documents outperforms the BOW representation when applied to the task of automatic citation screening. The proposed term-enriched topics are more informative and less ambiguous to systematic reviewers.

Background

The screening phase of systematic reviews aims to identify citations relevant to a research topic according to a pre-defined protocol [1–4], typically structured by the Population, Intervention, Comparator and Outcome (PICO) framework. Screening is usually performed manually, which, given the rapid growth of the biomedical literature [5], means that reviewers need to read thousands of citations, making it an expensive and time-consuming process. According to Wallace et al. [6], an experienced reviewer is able to screen two abstracts per minute on average, with more complex abstracts taking longer. Moreover, a reviewer needs to identify all eligible studies (i.e. 95–100 % recall) [7, 8] in order to minimise publication bias. The number of relevant citations is usually significantly lower than the number of irrelevant ones, which means that reviewers have to deal with extremely imbalanced datasets. To overcome these limitations, methods such as machine learning, text mining [9, 10], text classification [11] and active learning [6, 12] have been used to partially automate this process, in order to reduce the workload without sacrificing the quality of the reviews. Many approaches based on machine learning have been shown to be helpful in reducing the workload of the screening phase [10]. The majority of reported methods exploit automatic or semi-automatic text classification to assist in the screening phase. Text classification is normally performed using the bag-of-words (BOW) model, in which the words in a document are used as features for the classification but their order is ignored. One of the problems of the BOW model is that the number of unique words that appear in a complete corpus (a collection of documents) can be extremely large; using such a large number of features can be problematic for certain algorithms. Thus, a more compact representation of documents is necessary to allow machine learning algorithms to perform more efficiently. In contrast to previous approaches that have used only BOW features, in this study we systematically compare two feature representations: latent Dirichlet allocation (LDA) features and BOW features. Additionally, we investigate the effect of using different kernel functions in the underlying classifier, a support vector machine (SVM).

Topic analysis

Topic analysis is currently gaining popularity in both machine learning and text mining applications [13–16]. A topic model is normally defined as an approach for discovering the latent information in a corpus [17]. LDA [18] is an example of a probabilistic topic modelling technique [19], which assumes that a document covers a number of topics and that each word in a document is sampled from one of several probability distributions with different parameters; each word is therefore generated together with a latent variable that indicates the distribution it comes from. By computing the extent to which each topic is represented in a document, the content of the document can be represented at a higher level than is possible using the BOW approach, i.e. as a set of topics. The generative process of LDA follows the steps below to generate a document w in a corpus D; Table 1 lists the notation involved:

- Choose K topics \(\vec {\phi } \sim \text {Dir}(\vec {\beta })\)
- Choose topic proportions \(\vec {\theta }_{m} \sim \text {Dir}(\vec {\alpha })\)
- For each word \(w_{n,m}\) in document m:
  1. Choose a topic \(z_{n,m} \sim \text {Multinomial}(\vec {\theta }_{m}) \)
  2. Choose a word \(w_{n,m}\) from \(p(w_{n,m} |\vec {\phi }_{z_{n,m}}, \vec {\theta }_{m})\), a multinomial probability conditioned on the topic \(z_{n,m}\).
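The generative steps above can be illustrated with a minimal sketch in Python that uses only the standard library. The function names, the toy vocabulary and the symmetric hyperparameter values (0.5) are assumptions made for illustration and are not taken from the experiments reported here. Dirichlet samples are drawn by normalising independent Gamma draws.

```python
import random


def sample_dirichlet(concentration, rng):
    """Draw one sample from Dir(concentration) by normalising Gamma draws."""
    draws = [rng.gammavariate(a, 1.0) for a in concentration]
    total = sum(draws)
    return [d / total for d in draws]


def generate_corpus(num_docs, doc_length, vocab, num_topics,
                    alpha=0.5, beta=0.5, seed=0):
    """Generate documents following the LDA generative process.

    alpha and beta are symmetric hyperparameters (illustrative values).
    Returns the topic-word distributions and a list of (theta, words) pairs.
    """
    rng = random.Random(seed)
    # One word distribution phi_k ~ Dir(beta) for each of the K topics.
    phi = [sample_dirichlet([beta] * len(vocab), rng) for _ in range(num_topics)]
    corpus = []
    for _ in range(num_docs):
        # Topic proportions theta_m ~ Dir(alpha) for document m.
        theta = sample_dirichlet([alpha] * num_topics, rng)
        words = []
        for _ in range(doc_length):
            # Choose a topic z ~ Multinomial(theta_m) ...
            z = rng.choices(range(num_topics), weights=theta)[0]
            # ... then choose a word from that topic's word distribution.
            words.append(rng.choices(vocab, weights=phi[z])[0])
        corpus.append((theta, words))
    return phi, corpus


# Hypothetical toy vocabulary, for illustration only.
toy_vocab = ["smoking", "packaging", "youth", "cooking", "trial", "copd"]
phi, corpus = generate_corpus(num_docs=3, doc_length=8,
                              vocab=toy_vocab, num_topics=2)
```

Fitting an LDA model reverses this process: given only the observed words, it estimates the hidden topic assignments and distributions.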

The hyperparameters \(\vec {\alpha }\) and \(\vec {\beta }\) are the parameters of the prior probability distributions, which facilitate calculation. They are initialised as constant values, although they may also be treated as hidden variables that require estimation. The joint probability, i.e. the complete-data likelihood of a document, can be specified according to Fig. 1 and is the basis of many other derivations [20].
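For reference, the complete-data likelihood of document m is commonly written as follows (cf. [20]). This is a sketch in the notation of the generative process above. Two symbols are assumed here rather than taken from the text: \(N_{m}\), the number of words in document m, and \(\Phi\), the set of all K topic-word distributions.

\[
p(\vec{w}_{m}, \vec{z}_{m}, \vec{\theta}_{m}, \Phi \mid \vec{\alpha}, \vec{\beta}) = \prod_{n=1}^{N_{m}} p(w_{n,m} \mid \vec{\phi}_{z_{n,m}})\, p(z_{n,m} \mid \vec{\theta}_{m}) \cdot p(\vec{\theta}_{m} \mid \vec{\alpha}) \cdot p(\Phi \mid \vec{\beta})
\]

The product runs over the words of the document. The last two factors are the Dirichlet priors on the topic proportions and on the topics, respectively.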

Besides LDA, there are many other approaches for discovering abstract information from a corpus. Latent semantic analysis [21] makes use of singular value decomposition (SVD) to discover the semantic information in a corpus; SVD is a matrix factorisation with many applications in statistics and signal processing. In contrast to other topic models, an approach [22] based on the anchor-word algorithm [23] provides an efficient and visual means of topic discovery. This method first reduces the word co-occurrence matrix to two or three dimensions and then identifies the convex hull of the words, which can be thought of as a rubber band stretched around them. The words at the anchor points (the vertices of the hull) are considered as topics.

Related work

Automatic text classification for systematic reviews has been investigated by Bekhuis et al. [24], who focussed on using supervised machine learning to assist with the screening phase. Octaviano et al. [25] combined two different features, i.e. content and citation relationships between the studies, to automate the selection phase as much as possible. Their strategy reduced workload by 58.2 %. Cohen et al. [26] compared different feature representations for supervised classifiers. They concluded that the best feature set used a combination of n-grams and Medical Subject Headings (MeSH) [27] features. Felizardo et al. developed a visual text mining tool that integrated many text mining functions for systematic reviews and evaluated the tool with 15 graduate students [28]. The results showed that the use of the tool is promising in terms of screening burden reduction. Fiszman et al. [29] combined symbolic semantic processing with statistical methods for selecting both relevant and high-quality citations. Frunza et al. [30] introduced a per-question classification method that uses an ensemble of classifiers that exploit the particular protocol used in creating the systematic review. Jonnalagadda et al. [31] described a semi-automatic system that requires human intervention. They successfully reduced the number of articles that needed to be reviewed by 6 to 30 % while maintaining a recall performance of 95 %. Matwin et al. [32] exploited a factorised complement naive Bayes classifier for reducing the workload of experts reviewing journal articles for building systematic reviews of drug class efficacy. The minimum and maximum workload reductions were 8.5 and 62.2 %, respectively, and the average over 15 topics was 33.5 %. Wallace et al. [12] showed that active learning has the potential to reduce the workload of the screening phase by 50 % on average. Cohen et al. [33] constructed a voting perceptron-based automated citation classification system which is able to reduce the number of articles that need to be reviewed by more than 50 %. Bekhuis et al. [34] investigated the performance of different classifiers and feature sets in terms of their ability to reduce workload. The reduction was 46 % for SVMs and 35 % for complement naive Bayes classifiers with bag-of-words extracted from full citations. From a topic modelling perspective, Miwa et al. [8] first used LDA to reduce the burden of screening for systematic reviews using an active learning strategy. The strategy utilised the topics as another feature representation of documents when no manually assigned information such as MeSH terms is available. Moreover, the authors used topic features for training ensemble classifiers. Similarly, Bekhuis et al. [35] investigated how different feature selections, including topic features, affect the performance of classification.

Methods

Results obtained by Miwa et al. [8] showed that LDA features can significantly reduce the workload involved in the screening phase of a systematic review. Building on previous approaches, we investigate how topic modelling can assist systematic reviews. Using the topics generated by LDA as the input features for each document, we train a classifier and compare it with a classifier trained on the BOW representation. Technical terms extracted by the TerMine term extraction web service [36] were located in each document, so that each document could be represented as a set of words and terms; this makes topics more readable and reduces ambiguity. The objectives of this paper are the following:

- To investigate whether LDA can be successfully applied to text classification in support of the screening phase in systematic reviews.
- To compare the performance of two methods for text classification: one based on LDA topics and the other based on the BOW model.
- To evaluate the impact of using different numbers of topics in topic-based classification.

Experimental design

In order to carry out a systematic comparison of the two different approaches to text classification, our study is divided into two parts. Firstly, we evaluate the baseline approach, i.e. an SVM using BOW features. This SVM classifier is created using LIBSVM [37]. The second part of the experiment involves applying LDA to model the topic distribution in the datasets, followed by the training of an SVM-based classifier using the topic distribution as features. Documents in the dataset are randomly and evenly split into training and test sets, keeping the ratio between relevant and irrelevant documents in each set the same as the ratio in the entire dataset. Henceforth, in this article, the documents relevant to a topic (i.e. positively labelled instances) are referred to as “relevant instances”. For the baseline, BOW features are weighted by term frequency/inverse document frequency (TF-IDF). The topic-based approach applies LDA to produce a topic distribution for each document. We used Gensim [38], a Python library that implements LDA, to predict the topic distribution for each document. The topic distributions are utilised for both training and testing the classifier and evaluating the results. Other modelling strategies and classifiers (e.g. k-nearest neighbours) were also explored. However, since they failed to obtain robust results, we do not present further details.
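The even, ratio-preserving split described above can be sketched as follows. This is an illustrative stdlib-only version; the function name and the toy labels are hypothetical, and the exact procedure used in the experiments may differ.

```python
import random


def stratified_half_split(labels, seed=0):
    """Split document indices evenly into training and test sets.

    Each class is shuffled and halved separately, so both sets keep
    (up to rounding) the class ratio of the whole dataset.
    """
    rng = random.Random(seed)
    by_class = {}
    for idx, label in enumerate(labels):
        by_class.setdefault(label, []).append(idx)
    train, test = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        half = len(indices) // 2
        train.extend(indices[:half])
        test.extend(indices[half:])
    return sorted(train), sorted(test)


# Toy labels with the approximate 1:9 ratio reported for the corpora.
labels = [1] * 10 + [0] * 90
train_idx, test_idx = stratified_half_split(labels)
```

With these toy labels, each half contains 5 relevant and 45 irrelevant documents.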

To evaluate the classifiers, we used the standard metrics of precision, recall, F-score and accuracy, together with the area under the receiver operating characteristic curve (ROC) and the area under the precision-recall curve (PRC). However, in our case, accuracy was found not to be a suitable indicator of effective performance, due to the significant imbalance between relevant and irrelevant instances in the dataset; the ratio is approximately 1:9 for each corpus (Table 2; the corpora are introduced later). Based upon this ratio, weights are added to every training instance in order to reduce the influence of the imbalanced data [39]. In evaluating classification performance, we place a particular emphasis on recall since, as explained above, high recall is vital to achieve inclusiveness, which is considered to be an important factor in the perceived validity of a systematic review.
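The following sketch shows why accuracy is misleading at a 1:9 class ratio and how class weights can be derived from that ratio. The weighting formula (inverse class frequency) is an assumption chosen for illustration; the text does not specify the exact weights used. All function names are hypothetical.

```python
from collections import Counter


def evaluate(y_true, y_pred, positive=1):
    """Compute precision, recall, F-score and accuracy for one positive class."""
    tp = fp = fn = tn = 0
    for truth, pred in zip(y_true, y_pred):
        if pred == positive and truth == positive:
            tp += 1
        elif pred == positive:
            fp += 1
        elif truth == positive:
            fn += 1
        else:
            tn += 1
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f_score = (2 * precision * recall / (precision + recall)
               if precision + recall else 0.0)
    accuracy = (tp + tn) / len(y_true)
    return {"precision": precision, "recall": recall,
            "f_score": f_score, "accuracy": accuracy}


def inverse_frequency_weights(labels):
    """Assumed scheme: weight = n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    return {c: len(labels) / (len(counts) * k) for c, k in counts.items()}


y_true = [1] * 10 + [0] * 90      # toy data with a 1:9 ratio
all_irrelevant = [0] * 100        # classifier that rejects every document
scores = evaluate(y_true, all_irrelevant)
weights = inverse_frequency_weights(y_true)
```

Here the trivial classifier reaches an accuracy of 0.9 with a recall of 0.0. Under the assumed scheme, each relevant instance is weighted nine times as heavily as an irrelevant one.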

Since most of our corpora are domain-specific, non-compositional multi-word terms may lose their original meaning if we split such terms into constituent words and ignore word order and grammatical relations. Thus, multi-word terms are automatically extracted using TerMine, which is a tool designed to discover multi-word terms by ranking candidate terms from a part-of-speech (POS) tagged corpus according to C-value [36]. Candidate terms are identified and scored via POS filters (e.g. adjective*noun+). A subset of these terms is extracted by defining a threshold for the C-value. TerMine makes use of both linguistic and statistical information in order to identify technical terms in a given corpus with the maximum accuracy. There are some other topic models that attempt to present multi-word expressions in topics. For example, the LDA collocation model [40] introduced a new latent variable to indicate if a word and its immediate neighbour can constitute a collocation. Unlike the methods mentioned, the advantage of TerMine is that it is applied independently of the topic modelling process. Thus, once it has been used to locate terms in a corpus, different topic models can be applied, without having to re-extract the terms each time the parameters of the topic model are changed. It is also important to note that long terms may have other shorter terms nested within them. Such nested terms may also be identified by TerMine. For example, “logistic regression model” contains the terms “logistic regression” and “regression model”. However, there is no doubt that the original term “logistic regression model” is more informative. Thus, our strategy to locate the terms is that the longer terms are given higher priority to be matched and our maximum length for a term is four tokens.
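The longest-match-first strategy can be sketched as below. The left-to-right greedy scan is an assumption; the text states only that longer terms take priority and that terms span at most four tokens. The function name and the example sentence are hypothetical.

```python
def locate_terms(tokens, terms, max_len=4):
    """Replace token runs with multi-word terms, preferring longer terms.

    At each position the longest candidate span (up to max_len tokens)
    is tried first; unmatched tokens are kept as single words.
    """
    term_set = {tuple(term.split()) for term in terms}
    output = []
    i = 0
    while i < len(tokens):
        for n in range(min(max_len, len(tokens) - i), 1, -1):
            candidate = tuple(tokens[i:i + n])
            if candidate in term_set:
                output.append(" ".join(candidate))
                i += n
                break
        else:
            # No multi-word term starts here: keep the single word.
            output.append(tokens[i])
            i += 1
    return output


extracted_terms = ["logistic regression", "regression model",
                   "logistic regression model"]
tokens = "we fit a logistic regression model".split()
located = locate_terms(tokens, extracted_terms)
# located == ["we", "fit", "a", "logistic regression model"]
```

Because the three-token term is tried before its nested two-token terms, "logistic regression model" is kept whole.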

As for parameter tuning, all the experiments were performed with default parameters for the classifiers and symmetric hyperparameters for LDA, which means that, a priori, every topic is sampled with equal probability.

Results and discussion

We performed our experiments using five datasets corresponding to completed reviews, in the domains of social science and clinical trials. These reviews constitute the “gold standard” data, in that for each domain, they include expert judgements about which documents are relevant or irrelevant to the study in question. The datasets were used as the basis for the intrinsic evaluation of the different text classification methods. Our conclusions are supported by the Friedman test (Table 3), a nonparametric test that measures, based on ranking, how different three or more matched or paired groups are. Given that the methods we applied produced roughly comparable patterns of performance across each of the five different datasets, we report here only on the results for one of the corpora. However, the specific results achieved for the other corpora are included as supplementary material (Additional file 1).
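To make the ranking-based nature of the Friedman test concrete, the sketch below computes the Friedman statistic for n datasets and k methods as \(Q = \frac{12}{nk(k+1)}\sum_{j} R_{j}^{2} - 3n(k+1)\), where \(R_{j}\) is the sum of the ranks of method j. The scores are toy values, not the results reported in Table 3. The correction for ties is omitted, and the function names are hypothetical.

```python
def average_ranks(values):
    """Rank values in ascending order (1 = smallest); ties share the mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        while (end + 1 < len(order)
               and values[order[end + 1]] == values[order[pos]]):
            end += 1
        mean_rank = (pos + end) / 2 + 1
        for j in range(pos, end + 1):
            ranks[order[j]] = mean_rank
        pos = end + 1
    return ranks


def friedman_statistic(scores):
    """scores[i][j] is the score of method j on dataset i (no tie correction)."""
    n, k = len(scores), len(scores[0])
    rank_sums = [0.0] * k
    for row in scores:
        for j, rank in enumerate(average_ranks(row)):
            rank_sums[j] += rank
    return (12.0 / (n * k * (k + 1)) * sum(r * r for r in rank_sums)
            - 3.0 * n * (k + 1))


# Toy scores for three methods on five datasets (illustrative only).
toy_scores = [
    [0.10, 0.50, 0.90],
    [0.20, 0.40, 0.80],
    [0.15, 0.55, 0.70],
    [0.05, 0.45, 0.95],
    [0.25, 0.35, 0.85],
]
q = friedman_statistic(toy_scores)
```

In this toy example the three methods are ranked identically on every dataset, so Q takes its maximum value n(k − 1) = 10. The statistic is then compared against a chi-squared distribution with k − 1 degrees of freedom.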

Dataset

We applied the models to three datasets provided by the Evidence for Policy and Practice Information and Co-ordinating Centre (EPPI-Centre) [41] and two datasets previously presented in Wallace et al. [6]. These labelled corpora include reviews ranging from clinical trials to reviews in the domain of social science. The datasets correspond specifically to cigarette packaging, youth development, cooking skills, chronic obstructive pulmonary disease (COPD), proton beam and hygiene behaviour. Each corpus contains a large number of documents and, as mentioned above, there is an extremely low proportion of relevant documents in each case. For example, the youth development corpus contains a total of 14,538 documents, only 1440 of which are relevant to the study. Meanwhile, the cigarette packaging subset contains 3156 documents in total, with 132 having been marked as relevant. Documents in the datasets were first prepared for automatic classification using a series of pre-processing steps consisting of stop-word removal, conversion of words to lower case and removal of punctuation, digits and words that appear only once. Finally, word counts were computed and saved in a tab-delimited format (SVMlight format), for subsequent utilisation by the SVM classifiers. Meanwhile, TerMine was used to identify multi-word terms in each document, as the basis for characterising their content. Preliminary experiments indicated that using only multi-word terms to characterise documents may not be sufficient since, in certain documents, the number of such terms could be small or zero. Accordingly, both words and terms were retained as features for an independent experiment.
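The pre-processing steps listed above can be sketched as follows. The stop-word list, the regular expression and the function names are illustrative assumptions. Words are dropped if they occur only once in the whole corpus, which is one reading of "appear only once". The output uses the `label index:value` layout commonly associated with the SVMlight format, with space-separated features in this sketch.

```python
import re
from collections import Counter

# Tiny illustrative stop-word list; a real list would be much longer.
STOP_WORDS = {"the", "of", "and", "a", "in", "to", "is"}


def tokenize(text):
    """Lower-case the text and keep alphabetic runs only.

    This drops punctuation and digits; stop words are then removed.
    """
    return [t for t in re.findall(r"[a-z]+", text.lower())
            if t not in STOP_WORDS]


def build_counts(docs):
    """Return a 1-based vocabulary and per-document word counts."""
    tokenized = [tokenize(d) for d in docs]
    corpus_freq = Counter(t for doc in tokenized for t in doc)
    kept = sorted(w for w, c in corpus_freq.items() if c > 1)
    vocab = {w: i + 1 for i, w in enumerate(kept)}
    rows = [Counter(vocab[t] for t in doc if t in vocab) for doc in tokenized]
    return vocab, rows


def to_svmlight(label, counts):
    """Format one document as 'label index:value ...' with ascending indices."""
    feats = " ".join(f"{idx}:{cnt}" for idx, cnt in sorted(counts.items()))
    return f"{label} {feats}".rstrip()


docs = ["Smoking and COPD in 2 trials.", "COPD trials of smoking cessation"]
vocab, rows = build_counts(docs)
line = to_svmlight(1, rows[0])
```

In this toy corpus, "cessation" occurs only once and is therefore excluded from the vocabulary.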

BOW-based classification

Table 4 shows the performance of the SVM classifiers trained with TF-IDF features when applied to all corpora. Due to the imbalance between relevant and irrelevant instances in the dataset, each positive instance was assigned a weight, as mentioned above. Default values for SVM training parameters were used (i.e. no parameter tuning was carried out), although three different types of kernel functions were investigated, i.e. linear, radial basis function (RBF) and polynomial (POLY). Whereas the linear kernel seeks a separating hyperplane between positive and negative instances in the original feature space, the RBF and POLY kernels can capture more complex, non-linear distinctions between classes. As illustrated in Fig. 2, the BOW-based classification achieves the best performance when the linear kernel function is used. Two points should be noted when interpreting these results. Firstly, the ratio of positively (i.e. relevant) to negatively (i.e. irrelevant) labelled instances is approximately 1:9 in our corpora. Hence, even if a classifier labels all test samples as irrelevant, a very high accuracy will still be obtained. For systematic reviews, however, it is most important to retrieve the highest possible number of relevant documents, so recall is a much better indicator of performance than accuracy. Secondly, both the RBF and POLY kernel functions obtained zero for precision, recall and F1-score. This can be attributed to the imbalanced nature of the corpora [42]. Additionally, the BOW representation produces a high-dimensional space (given the large number of unique words in the corpora), in which the two non-linear kernels (RBF and POLY) yield very low performance.
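For readers unfamiliar with the three kernel functions, the sketch below gives their usual definitions for dense feature vectors. The parameter names (`gamma`, `coef0`, `degree`) follow common SVM conventions and the default values shown are assumptions for illustration, not the settings verified for these experiments.

```python
import math


def dot(x, y):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(x, y))


def linear_kernel(x, y):
    """K(x, y) = x . y"""
    return dot(x, y)


def rbf_kernel(x, y, gamma):
    """K(x, y) = exp(-gamma * ||x - y||^2)"""
    squared_distance = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * squared_distance)


def poly_kernel(x, y, gamma, coef0=0.0, degree=3):
    """K(x, y) = (gamma * x . y + coef0) ** degree"""
    return (gamma * dot(x, y) + coef0) ** degree


x, y = [1.0, 2.0], [3.0, 4.0]
k_lin = linear_kernel(x, y)            # 11.0
k_rbf = rbf_kernel(x, x, gamma=0.5)    # identical vectors give 1.0
k_poly = poly_kernel(x, y, gamma=0.5)  # (0.5 * 11) ** 3 = 166.375
```

The linear kernel has no free parameters, whereas the RBF and POLY kernels depend on `gamma` (and, for POLY, on `coef0` and `degree`), so their behaviour can change markedly with the dimensionality and scale of the features.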

Topic-based classification

Topic-based classification was undertaken by first analysing and predicting the topic distribution for each document and then classifying the documents using topics as features. During model training, the topic assigned to each word in a document can be considered a hidden variable; the resulting inference problem can be solved using approximation methods such as Markov chain Monte Carlo (MCMC) or variational inference. However, these methods are sensitive to initial parameter settings, which are usually set randomly before the first iteration. Consequently, the results can fluctuate within a certain range, and the results reported for topic-based classification are therefore all averages. Nevertheless, our results show that the topic distribution is a suitable replacement for the traditional BOW features. Among its advantages, the most obvious is that it reduces the number of dimensions needed to represent a document. Experimental settings were identical in the evaluation of the two sets of classifiers, except for the features being topic distributions in one case and BOW in the other. The optimal LDA model was derived through experimentation with differing numbers of topics (which can also be referred to as “topic density”). In the experiments performed, several values for this parameter were explored.

Table 5 shows the results of the evaluation of SVM models trained with topic distribution features using linear, RBF and POLY kernel functions, respectively. We show how the performance varies according to different topic density values for the LDA model. These values were varied from 2 to 100 (inclusive) in increments of 10, and from 100 to 500 in increments of approximately 100. Generally, each topic density would correspond to a certain size of corpus and vocabulary. Empirically, the larger the size of the corpus and vocabulary, the greater the number of topics that is needed to accurately represent their contents, and vice versa. Tables 6 and 7 show two samples of sets of words and/or terms that are representative of a topic in the same corpus (youth development). Term-enriched (TE) topics include multi-word terms identified by TerMine as well as single words, whilst ordinary topics consist only of single words. From the tables, it can be clearly seen that term-enriched topics are more distinctive and readable than single-word topics. As the classification performance with TE topics was similar to that of the single-word topic-based classification, a table corresponding to Table 5 is not presented here. However, a comparison of the classification performance for the three approaches, i.e. BOW-based, topic-based and TE-topic-based, is presented in the next section.

Comparison of approaches

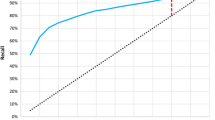

A comparison of the performance of the BOW-based model (BOW in the legends) against that of models trained with topic-based (TPC) and term-enriched topic (TE) features is presented in this section. For this comparison, the best performing topic-based model (with topic density set to 150 for the youth development corpus) was used. With a linear kernel function (Fig. 2), the models based on topic and TE-topic distribution features yield lower precision, F-score, ROC and PRC than the BOW-based model but obtain higher recall; that is, the BOW-based model outperforms the topic- and TE-topic-based models in terms of all metrics except recall. Figures 3 and 4 illustrate the results of using RBF and POLY kernel functions, respectively, to train the BOW-, topic- and TE-topic-based models on the youth development corpus. With these kernels, the SVM models trained with topic and TE-topic distributions outperform those trained with BOW features by a large margin. Training with RBF and POLY kernel functions significantly degraded the performance of the BOW-based classifiers, which obtained zero precision, recall and F-score. As noted earlier, high accuracy is not a good basis for judging performance due to the imbalance between positive and negative instances, i.e. even if a classifier labels every document as a negative sample, accuracy will still be around 90 %. Figure 5 compares the different kernel functions using topic features on the youth development corpus, indicating that, taking all measures into account, a linear kernel function gave the best overall performance, achieving the highest score in every metric other than recall. However, both RBF and POLY kernel functions outperformed the linear kernel, albeit by only 4 %, on the recall measure, which we have identified as highly pertinent to the systematic review use-case. Table 8 ranks the kernel functions from high to low in terms of recall for topic-based and TE-topic-based features: POLY > RBF > LINEAR. The corresponding ranking of feature types in terms of recall is TPC > TE > BOW. Additionally, Figs. 6 and 7 show the precision-recall and ROC curves achieved by the models.

Conclusions

Our experiments demonstrated that the BOW-based SVM with a linear kernel function produced the most robust results, achieving the highest values in almost every metric except recall. In any systematic review classification task, however, poor recall needs to be addressed. The BOW model yielded poor performance with RBF and POLY kernel functions due to data imbalance and high dimensionality. Topic-based classification addresses this problem by dramatically reducing the dimensionality of the document representation (topic features). The topic-based classifier yielded a higher recall, which means that more relevant documents will be identified. Moreover, the topic features enable the classifier to work with RBF and POLY kernels and to produce better recall compared with a linear kernel. The same patterns were observed in all corpora, although only one example is presented in this article.

As future work, we will further investigate the generalisability of the model to diverse domains. Moreover, we plan to explore different machine learning and text mining techniques that can be used to support systematic reviews, such as paragraph vectors and active learning. Further experiments will also be performed in more realistic settings. For example, whether topics could support reviewers’ decisions in a “live” systematic review would be an interesting area for future research. This article has given an intuitive picture of TE topics. For public health reviews, where topics are multidimensional, the presence of diverse multi-word terms in a dataset can be an important element that affects the performance of classifiers, and TE topics have the potential to deal with these difficulties. Further investigation of TE topics will be performed, which would benefit reviewers by helping them to understand topics more easily than with ordinary topics.

Abbreviations

- BOW: bag-of-words
- LDA: latent Dirichlet allocation
- ATR: automatic term recognition
- PICO: Population, Intervention, Comparator and Outcome
- SVM: support vector machine
- SVD: singular value decomposition
- TF-IDF: term frequency/inverse document frequency
- ROC: receiver operating characteristic
- PRC: precision-recall curve
- POS: part-of-speech
- RBF: radial basis function
- POLY: polynomial
- MCMC: Markov chain Monte Carlo
- TE: term-enriched

References

Barza M, Trikalinos TA, Lau J. Statistical considerations in meta-analysis. Infect Dis Clin North Am. 2009; 23(2):195–210.

Counsell C. Formulating questions and locating primary studies for inclusion in systematic reviews. Ann Intern Med. 1997; 127(5):380–7.

Boudin F, Nie JY, Bartlett JC, Grad R, Pluye P, Dawes M. Combining classifiers for robust PICO element detection. BMC Med Inform Decis Mak. 2010; 10(1):29.

Demner-Fushman D, Lin J. Answering clinical questions with knowledge-based and statistical techniques. Comput Ling. 2007; 33(1):63–103.

Hunter L, Cohen KB. Biomedical language processing: perspective what’s beyond PubMed? Mol Cell. 2006; 21(5):589.

Wallace BC, Small K, Brodley CE, Trikalinos TA. Active learning for biomedical citation screening. In: Proceedings of the 16th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining. New York, NY, USA: ACM: 2010. p. 173–82.

Cohen AM. Performance of support-vector-machine-based classification on 15 systematic review topics evaluated with the WSS@95 measure. J Am Med Inform Assoc. 2011; 18(1):104–4.

Miwa M, Thomas J, O’Mara-Eves A, Ananiadou S. Reducing systematic review workload through certainty-based screening. J Biomed Inform. 2014; 51:242–53.

Ananiadou S, Rea B, Okazaki N, Procter R, Thomas J. Supporting systematic reviews using text mining. Syst Rev. 2015; 4(1):5.

O’Mara-Eves A, Thomas J, McNaught J, Miwa M, Ananiadou S. Using text mining for study identification in systematic reviews: a systematic review of current approaches. Sys Rev. 2015; 4(1). doi:10.1186/2046-4053-4-5.

García Adeva J, Pikatza Atxa J, Ubeda Carrillo M, Ansuategi Zengotitabengoa E. Automatic text classification to support systematic reviews in medicine. Expert Syst Appl. 2014; 41(4):1498–1508.

Wallace BC, Trikalinos TA, Lau J, Brodley C, Schmid CH. Semi-automated screening of biomedical citations for systematic reviews. BMC Bioinf. 2010; 11(1):55.

Lukins SK, Kraft NA, Etzkorn LH. Source code retrieval for bug localization using latent Dirichlet allocation. In: Reverse Engineering, 2008. WCRE’08. 15th Working Conference On. Antwerp: IEEE: 2008. p. 155–64.

Henderson K, Eliassi-Rad T. Applying latent Dirichlet allocation to group discovery in large graphs. In: Proceedings of the 2009 ACM Symposium on Applied Computing. New York, NY, USA: ACM: 2009. p. 1456–1461.

Maskeri G, Sarkar S, Heafield K. Mining business topics in source code using latent Dirichlet allocation. In: Proceedings of the 1st India Software Engineering Conference. New York, NY, USA: ACM: 2008. p. 113–20.

Linstead E, Lopes C, Baldi P. An application of latent Dirichlet allocation to analyzing software evolution. In: Machine learning and applications, 2008. ICMLA’08. Seventh International Conference On. San Diego, CA: IEEE: 2008. p. 813–8.

Topic Model. http://en.wikipedia.org/wiki/Topic_model, accessed 1-Oct-2015.

Blei DM, Ng AY, Jordan MI. Latent Dirichlet allocation. J Mach Learn Res. 2003; 3:993–1022.

Redner RA, Walker HF. Mixture densities, maximum likelihood and the EM algorithm. SIAM Rev. 1984; 26(2):195–239.

Heinrich G. Parameter estimation for text analysis. 2005. Technical report. http://www.arbylon.net/publications/text-est.pdf. accessed 1-10-2015.

Berry MW, Dumais ST, O’Brien GW. Using linear algebra for intelligent information retrieval. SIAM review. 1995; 37(4):573–595.

Lee M, Mimno D. Low-dimensional embeddings for interpretable anchor-based topic inference. In: Proceedings of Empirical Methods in Natural Language Processing: 2014.

Arora S, Ge R, Moitra A. Learning topic models—going beyond SVD. In: Foundations of Computer Science (FOCS), 2012 IEEE 53rd Annual Symposium On. New Brunswick, NJ: IEEE: 2012. p. 1–10.

Bekhuis T, Demner-Fushman D. Towards automating the initial screening phase of a systematic review. Stud Health Technol Inform. 2010; 160(Pt 1):146–50.

Octaviano FR, Felizardo KR, Maldonado JC, Fabbri SC. Semi-automatic selection of primary studies in systematic literature reviews: is it reasonable? Empir Softw Eng. 2015; 20(6):1898–1917.

Cohen AM. Optimizing feature representation for automated systematic review work prioritization. In: AMIA Annual Symposium Proceedings: 2008. p. 121. American Medical Informatics Association.

Medical Subject Headings. https://www.nlm.nih.gov/pubs/factsheets/mesh.html, accessed 1-Oct-2015.

Romero Felizardo K, Souza SR, Maldonado JC. The use of visual text mining to support the study selection activity in systematic literature reviews: a replication study. In: Replication in empirical software engineering research (RESER), 2013 3rd International Workshop On. Baltimore, MD: IEEE: 2013. p. 91–100.

Fiszman M, Bray BE, Shin D, Kilicoglu H, Bennett GC, Bodenreider O, et al. Combining relevance assignment with quality of the evidence to support guideline development. Stud Health Technol Inform. 2010; 160(01):709.

Frunza O, Inkpen D, Matwin S. Building systematic reviews using automatic text classification techniques. In: Proceedings of the 23rd International Conference on Computational Linguistics: Posters. Beijing, China: Association for Computational Linguistics: 2010. p. 303–11.

Jonnalagadda S, Petitti D. A new iterative method to reduce workload in systematic review process. Int J Comput Biol Drug Des. 2013; 6(1):5–17.

Matwin S, Kouznetsov A, Inkpen D, Frunza O, O’Blenis P. A new algorithm for reducing the workload of experts in performing systematic reviews. J Am Med Inform Assoc. 2010; 17(4):446–53.

Cohen AM, Hersh WR, Peterson K, Yen PY. Reducing workload in systematic review preparation using automated citation classification. J Am Med Inform Assoc. 2006; 13(2):206–19.

Bekhuis T, Demner-Fushman D. Screening nonrandomized studies for medical systematic reviews: a comparative study of classifiers. Artif Intell Med. 2012; 55(3):197–207.

Bekhuis T, Tseytlin E, Mitchell KJ, Demner-Fushman D. Feature engineering and a proposed decision-support system for systematic reviewers of medical evidence. PloS one. 2014; 9(1):86277.

Frantzi K, Ananiadou S, Mima H. Automatic recognition of multi-word terms: the C-value/NC-value method. Int J Digit Libr. 2000; 3(2):115–30.

Chang CC, Lin CJ. LIBSVM: a library for support vector machines. ACM Trans Intell Syst Technol (TIST). 2011; 2(3):27.

Řehuřek R, Sojka P. Software framework for topic modelling with large corpora. In: Proceedings of the LREC 2010 Workshop on New Challenges for NLP Frameworks. Valletta, Malta: ELRA: 2010. p. 45–50. http://is.muni.cz/publication/884893/en.

Kotsiantis S, Kanellopoulos D, Pintelas P. Handling imbalanced datasets: a review. GESTS Int Trans Comput Sci Eng. 2006; 30(1):25–36.

Steyvers M, Griffiths T. Matlab topic modeling toolbox 1.3. 2005. http://psiexp.ss.uci.edu/research/programs_data/toolbox.htm, accessed 1-Oct-2015.

EPPI-center. http://eppi.ioe.ac.uk/cms/, accessed 1-Oct-2015.

Akbani R, Kwek S, Japkowicz N. Applying support vector machines to imbalanced datasets. In: Machine learning: ECML 2004. Berlin Heidelberg: Springer: 2004. p. 39–50.

Acknowledgements

This work was supported by MRC (“Supporting Evidence-based Public Health Interventions using Text Mining”, MR/L01078X/1). We are grateful to the EPPI Centre for providing the public health datasets used in this paper. We would also like to thank Ioannis Korkontzelos, Paul Thompson and Matthew Shardlow for helpful discussions and comments related to this work.

Additional information

Competing interests

The authors declare that they have no competing interests.

Authors’ contributions

YHM drafted the first version of manuscript and conducted the majority of experiments. SA supervised the whole process, and GK supervised the main parts of the experiments in terms of topic modelling. All authors have read and approved the final manuscript.

Additional file

Additional file 1

Supplementary figures. Specific results achieved for the other corpora. (DOCX 368 kb)

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The Creative Commons Public Domain Dedication waiver (http://creativecommons.org/publicdomain/zero/1.0/) applies to the data made available in this article, unless otherwise stated.

About this article

Cite this article

Mo, Y., Kontonatsios, G. & Ananiadou, S. Supporting systematic reviews using LDA-based document representations. Syst Rev 4, 172 (2015). https://doi.org/10.1186/s13643-015-0117-0
