Abstract

Background

The main objective of this methodological manuscript was to illustrate the role of using qualitative research in emergency settings. We outline rigorous criteria applied to a qualitative study assessing perceptions and experiences of staff working in Australian emergency departments.

Methods

We used an integrated mixed-methodology framework to identify different perspectives and experiences of emergency department staff during the implementation of a time-target government policy. The qualitative study comprised interviews with 119 participants across 16 hospitals. The interviews were conducted in 2015–2016 and the data were managed using NVivo version 11. We conducted the analysis in three stages, namely: conceptual framework development, comparison and contrast, and hypothesis development. We concluded by applying the four-dimension criteria (credibility, dependability, confirmability and transferability) to assess the robustness of the study.

Results

We adapted the four-dimension criteria to assess the rigour of a large-scale qualitative study in the emergency department context. The criteria comprised strategies such as building the research team; preparing data collection guidelines; defining and obtaining adequate participation; reaching data saturation; and ensuring high levels of consistency and inter-coder agreement.

Conclusion

Based on the findings, the proposed framework satisfied the four-dimension criteria and generated potential applications of qualitative research in emergency medicine research. We have made a methodological contribution to the ongoing debate about rigour in qualitative research, which we hope will guide future studies on this topic in emergency care. This paper also provides recommendations for conducting future mixed-methods studies. Future papers in this series will use the results from the qualitative data and the empirical findings from longitudinal data linkage to further identify factors associated with ED performance; they will be reported separately.


Background

Qualitative research methods have been used in emergency settings in a variety of ways to address important problems that cannot be explored otherwise, such as attitudes, preferences and reasons for presenting to the emergency department (ED) rather than to other types of clinical services (e.g., general practice) [1,2,3,4].

The methodological contribution of this research is part of the ongoing debate about scientific rigour in emergency care, including the importance of qualitative research in evidence-based medicine and its contribution to tool development and policy evaluation [2, 3, 5,6,7]. For instance, the Four-Hour Rule and the National Emergency Access Target (4HR/NEAT) was an important policy implemented in Australia to reduce ED crowding and boarding (access block) [8,9,10,11,12,13]. This policy created the right conditions for using mixed methods to investigate the impact of 4HR/NEAT implementation on people attending, working in or managing emergency departments [2, 3, 5,6,7, 14,15,16,17].

The rationale for our study was to address the perennial question of how to assess and establish methodological robustness in these types of studies. For that reason, we conducted this mixed-methods study to explore the impact of the 4HR/NEAT in 16 metropolitan hospitals in four Australian states and territories, namely: Western Australia (WA), Queensland (QLD), New South Wales (NSW), and the Australian Capital Territory (ACT) [18, 19].

The main objectives of the qualitative component were to understand the personal, professional and organisational perspectives reported by ED staff during the implementation of 4HR/NEAT, and to explore their perceptions and experiences associated with the implementation of the policy in their local environment.

This is part of an Australian National Health and Medical Research Council (NH&MRC) Partnership project to assess the impact of the 4HR/NEAT on Australian EDs. It is intended to complement the quantitative streams of a large data-linkage/dynamic modelling study using a mixed-methods approach to understand the impact of the implementation of the four-hour rule policy.

Methods

Methodological rigour

This section describes the methods used to assess the rigour of the qualitative study. Researchers conducting quantitative studies use conventional terms such as internal validity, reliability, objectivity and external validity [17]. To establish trustworthiness in qualitative research, Lincoln and Guba proposed stringent parallel criteria, known as credibility, dependability, confirmability and transferability [17,18,19,20]. These are referred to in this article as “the Four-Dimensions Criteria” (FDC). Other studies have used different variations of these categories to establish rigour [18, 19]. In our case, we adapted the criteria point by point, systematically selecting the strategies that applied to our study. Table 1 illustrates which strategies were adapted in our study.

Study procedure

We carefully planned and conducted a series of semi-structured interviews based on the four-dimension criteria (credibility, dependability, confirmability and transferability) to assess and ensure the robustness of the study. These criteria have been used in other contexts of qualitative health research, but this is the first time they have been applied in the emergency setting [20,21,22,23,24,25,26].

Sampling and recruitment

We employed a combination of stratified purposive sampling (quota sampling), criterion-based and maximum variation sampling strategies to recruit potential participants [27, 28]. The hospitals selected for the main longitudinal quantitative data linkage study were also purposively selected for this qualitative component.

We targeted potential individuals from four groups, namely: ED directors, ED physicians, ED nurses, and data/admin staff. The investigators identified local site coordinators who arranged the recruitment in each of the 16 participating hospitals (6 in NSW, 4 in QLD, 4 in WA and 2 in the ACT) and facilitated on-site access for the research team. These coordinators provided a list of potential participants for each professional group. From this list, participants within each group were selected using a purposive sampling technique. We initially planned to recruit at least one ED director, two ED physicians, two ED nurses and one data/admin staff member per hospital. Invitation emails were circulated by the site coordinators to all potential participants, who were asked to contact the main investigators if they required more information.

We also employed criterion-based purposive sampling to ensure that those with experience relating to 4HR/NEAT were eligible. Because of on-site ethical restrictions, the primary inclusion criterion was that eligible participants needed to be working in the ED during the period in which the 4HR/NEAT policy was implemented. Those who were not working in that ED during the implementation period were not eligible to participate, even if they had previous working experience in other EDs.

We used maximum variation sampling to ensure that the sample reflects a diverse group in terms of skill level, professional experience and policy implementation [28]. We included study participants irrespective of whether their role/position was changed (for example, if they received a promotion during their term of service in ED).

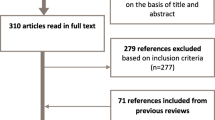

In summary, over a period of 7 months (August 2015 to March 2016), we identified 124 potential participants and conducted 119 interviews (5 were unable to participate because of workload). The overall sample comprised people working in different roles across 16 hospitals. Table 2 presents the demographic and professional characteristics of the participants.
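The recruitment figures can be checked with simple arithmetic. The Python sketch below assumes that the planned quota of six interviews per hospital (one director, two physicians, two nurses, one data/admin staff member) applied uniformly to all 16 hospitals; the function and variable names are illustrative only, not part of the study's tooling.

```python
# Planned minimum quota per hospital, by professional group (from the
# recruitment plan described above; assumed to apply to every hospital).
QUOTA_PER_HOSPITAL = {
    "ED director": 1,
    "ED physician": 2,
    "ED nurse": 2,
    "data/admin staff": 1,
}

# Participating hospitals per state/territory.
HOSPITALS_BY_STATE = {"NSW": 6, "QLD": 4, "WA": 4, "ACT": 2}


def planned_minimum(quota, hospitals_by_state):
    """Return the planned minimum number of interviews per state and overall."""
    per_hospital = sum(quota.values())
    by_state = {state: n * per_hospital for state, n in hospitals_by_state.items()}
    return by_state, sum(by_state.values())


def participation_rate(interviewed, identified):
    """Percentage of identified potential participants who were interviewed."""
    return 100.0 * interviewed / identified


by_state, total = planned_minimum(QUOTA_PER_HOSPITAL, HOSPITALS_BY_STATE)
print(by_state)  # {'NSW': 36, 'QLD': 24, 'WA': 24, 'ACT': 12}
print(total)     # 96
print(round(participation_rate(119, 124), 1))  # 96.0
```

Under this assumption the planned minimum was 96 interviews, so the 119 interviews conducted exceeded the quota, and about 96% of the 124 identified staff took part.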

Data collection

We employed a semi-structured interview technique. Six experienced investigators (3 in NSW, 1 in ACT, 1 in QLD and 1 in WA) conducted the interviews (117 face-to-face on site and 2 by telephone). We used an integrated interview protocol which consisted of a demographically-oriented question and six open-ended questions about different aspects of the 4HR/NEAT policy (see Additional file 1: Appendix 1).

With the participant’s permission, interviews were audio-recorded. All the hospitals provided a quiet interview room that ensured privacy and confidentiality for participants and investigators.

All the interviews were transcribed verbatim by a professional transcriber with reference to a standardised transcription protocol [29]. The data analysis team followed a stepwise process for data cleaning and de-identification. Transcripts were imported into the qualitative data analysis software NVivo version 11 for management and coding [30].

Data analysis

The analyses were carried out in three stages. In the first stage, we identified key concepts from the research protocol using content analysis and a mind-mapping process, and developed a conceptual framework to organise the data [31]. The analysis team reviewed and coded a selected number of transcripts, then juxtaposed the codes against the domains incorporated in the interview protocol, as shown in Fig. 1, which presents the conceptual framework and the three stages of analysis.

In this stage, two cycles of coding were conducted. In the first cycle, all the transcripts were reviewed and initially coded, and key concepts were identified across the full data set. The second cycle comprised an in-depth exploration and the creation of additional categories to generate the codebook (see Additional file 2: Appendix 2). The codebook was a summary document encompassing all the concepts identified at the primary and subsequent levels. It presented a hierarchical categorisation of key concepts developed from the domains indicated in Fig. 1.

A summarised list of key concepts and their definitions is presented in Table 3. For each key concept, we show the total number of interviews in which it appeared and the number of times it appeared in the whole dataset (i.e., total citations).
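The two counts reported in Table 3 can be illustrated with a short Python sketch. It assumes that each coded text segment is recorded as an interview ID plus a path in the hierarchical codebook, that sub-codes roll up to their top-level key concept, and that each coded segment counts as one citation. The interview IDs and concept names are hypothetical placeholders, not the study's codes.

```python
from collections import defaultdict

# Hypothetical coded segments: (interview ID, code path in the codebook).
# The first element of each path is the top-level key concept; deeper
# elements are sub-codes. Names are placeholders, not the study's codes.
SEGMENTS = [
    ("INT001", ("Concept A", "Sub-code A1")),
    ("INT001", ("Concept A", "Sub-code A2")),
    ("INT002", ("Concept A", "Sub-code A1")),
    ("INT002", ("Concept B",)),
    ("INT003", ("Concept B", "Sub-code B1")),
]


def concept_counts(segments):
    """Per key concept: (number of interviews citing it, total citations)."""
    interviews = defaultdict(set)
    citations = defaultdict(int)
    for interview_id, path in segments:
        concept = path[0]  # roll sub-codes up to their key concept
        interviews[concept].add(interview_id)
        citations[concept] += 1  # assumption: one coded segment = one citation
    return {c: (len(interviews[c]), citations[c]) for c in citations}


for concept, (n_interviews, n_citations) in concept_counts(SEGMENTS).items():
    print(f"{concept}: {n_interviews} interviews, {n_citations} citations")
# Concept A: 2 interviews, 3 citations
# Concept B: 2 interviews, 2 citations
```

Keeping a set of interview IDs per concept separates breadth (how many participants raised a concept) from intensity (how often it was raised), which is the distinction the two columns of Table 3 draw.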

The second stage of analysis compared and contrasted the experiences, perspectives and actions of participants by role and location. The third and final stage of analysis aimed to generate theory-driven hypotheses and provided an in-depth understanding of the impact of the policy. At this stage, the research team explored different theoretical perspectives such as the carousel model and models of care approach [16, 32,33,34]. We also used iterative sampling to reach saturation and interpret the findings.

Ethics approval and consent to participate

Ethics approval was obtained for all participating hospitals and the qualitative methods are based on the original research protocol approved by the funding organisations [18].

Results

This section describes the FDC and provides a detailed description of the strategies used in the analysis. It was adapted from the FDC methodology described by Lincoln and Guba [23,24,25,26] as the framework to ensure a high level of rigour in qualitative research. In Table 1, we provide examples of how the process was implemented for each criterion and of the techniques used to ensure compliance with the purpose of the FDC.

Credibility

Prolonged and varied engagement with each setting

All the investigators had the opportunity for continued engagement with each ED during the data collection process. They received a supporting material package comprising background information about the project, consent forms and the interview protocol (see Additional file 1: Appendix 1). They were introduced to each setting by the local coordinator and had the chance to meet the ED directors and potential participants. They also identified local issues and salient characteristics of each site, and had time to become acquainted with the study’s participants. This process allowed the investigators to check their personal perspectives and predispositions and to enhance their familiarity with the study setting. This strategy also allowed participants to become familiar with the project and the research team.

Interviewing process and techniques

To increase the credibility of the data collected and of the subsequent results, we took the further step of calibrating awareness and knowledge of the research protocol across the research team. The research team conducted training sessions, teleconferences, induction meetings and pilot interviews with the local coordinators. Each interviewer conducted one or two pilot interviews to refine their use of the interview protocol, their time management and the overall running of the interviews.

The semi-structured interview procedure also allowed focus and flexibility during the interviews. The interview protocol (Additional file 1: Appendix 1) included several prompts that allowed the expansion of answers and the opportunity for requesting more information, if required.

Establishing investigators’ authority

In relation to credibility, Miles and Huberman [35] extended the concept to the trustworthiness of investigators’ authority as ‘human instruments’ and recommended that the research team should have the following characteristics:

- Familiarity with the phenomenon and research context: In our study, the research team had several years’ experience in the development and implementation of 4HR/NEAT in Australian EDs, as well as extensive ED-based research experience and track records in conducting this type of work.
- Investigative skills: Investigators involved in data collection had three or more years’ experience in qualitative data collection, specifically in individual interview techniques.
- Theoretical knowledge and skills in conceptualising large datasets: Investigators had post-graduate experience in qualitative data analysis, in using NVivo software to manage data, and in coding and interpreting large amounts of qualitative data.
- Ability to take a multidisciplinary approach: The multidisciplinary background of the team in public health, nursing, emergency medicine, health promotion, social sciences, epidemiology and health services research enabled us to explore different theoretical perspectives and to use an eclectic approach to interpret the findings.

These characteristics ensured that the data collection and content were consistent across states and participating hospitals.

Collection of referential adequacy materials

In accordance with Guba’s recommendation to collect any additional relevant resources, investigators maintained a separate set of materials from on-site data collection which included documents and field notes that provided additional information in relation to the context of the study, its findings and interpretation of results. These materials were collected and used during the different levels of data analysis and kept for future reference and secure storage of confidential material [26].

Peer debriefing

We conducted several sessions of peer debriefing with some of the Project Management Committee (PMC) members. They were asked at different stages throughout the analysis to reflect on and give their views on the conceptual analysis framework, the key concepts identified during the first level of analysis and, eventually, the whole set of findings (see Fig. 1). We have also reported and discussed preliminary methods and general findings at several scientific meetings of the Australasian College for Emergency Medicine.

Dependability

Rich description of the study protocol

From its early stages, this study was developed through a systematic search of the existing literature on the four-hour rule and time-target care delivery in EDs. A detailed draft of the study protocol was developed in consultation with the PMC. After all comments were incorporated, a final draft was generated to obtain the required ethics approvals for each ED setting in the different states and territories.

To maintain consistency, we documented all the changes and revisions to the research protocol, and kept a trackable record of when and how changes were implemented.

Establishing an audit trail

Steps were taken to keep a record of the data collection process [24]: we maintained continuous communication within the research team to ensure that the interviewers abided by an agreed-upon protocol to recruit participants. As indicated before, we provided the investigators with a supporting material package. We also instructed the interviewers on how to transfer the data securely to the transcriber. The data analysis team systematically reviewed the transcripts against the audio files for accuracy and for clarifications provided by the transcriber.

All the steps in coding the data and identification of key concepts were agreed upon by the research team. The progress of the data analysis was monitored on a weekly basis. Any modifications of the coding system were discussed and verified by the team to ensure correct and consistent interpretation throughout the analysis.

The codebook (see Additional file 2: Appendix 2) was revised and updated during the coding cycles. The mind-mapping process described above helped to verify consistency and allowed us to determine how precisely the participants’ original information was preserved in the coding [31].

As required by relevant Australian legislation [36], we maintained complete records of the correspondence and minutes of meetings, as well as all qualitative data files in NVivo and Excel on the administrative organisation’s secure drive. Back-up files were kept in a secure external storage device, for future access if required.

Stepwise replication: measuring inter-coder agreement

To assess the interpretative rigour of the analysis, we applied inter-coder agreement to control the coding accuracy and monitor inter-coder reliability among the research team throughout the analysis stage [37]. This step was crucially important in the study given the changes of staff that our team experienced during the analysis stage. At the initial stages of coding, we tested the inter-coder agreement using the following protocol:

- Step 1 – Two data analysts and the principal investigator coded six interviews separately.
- Step 2 – The team discussed the interpretation of the emerging key concepts and resolved any coding discrepancies.
- Step 3 – The initial codebook was composed and used to develop the respective conceptual framework.
- Step 4 – The inter-coder agreement was calculated, yielding a weighted Kappa coefficient of 0.765, which indicates very good agreement.

With the addition of a new analyst to the team, we applied another round of inter-coder agreement assessment. We followed the same steps to ensure inter-coder reliability along the trajectory of data analysis, except for Step 3: the a priori codebook was used as a benchmark to compare and contrast the codes developed by the new analyst. The calculated Kappa coefficient of 0.822 indicates very good agreement (see Table 4).
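Because Cohen’s kappa corrects observed agreement for the agreement expected by chance, a coefficient such as 0.765 should not be read as 76.5% raw agreement. The Python sketch below shows the standard two-coder kappa on binary “code applied / not applied” decisions for text segments, plus a weighted average of per-code (or per-transcript) kappas. The manuscript does not state how its weighted coefficient was obtained: the averaging weights used here (for example, the number of coded units) are our assumption, and Cohen’s weighted kappa for ordinal categories is a different statistic. The coding data are hypothetical.

```python
from collections import Counter


def cohen_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders' nominal decisions on the same units."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must rate the same, non-empty set of units")
    n = len(coder_a)
    # Observed agreement: share of units given the same label by both coders.
    p_o = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Expected (chance) agreement from each coder's marginal label frequencies.
    count_a, count_b = Counter(coder_a), Counter(coder_b)
    labels = count_a.keys() | count_b.keys()
    p_e = sum(count_a[k] * count_b[k] for k in labels) / n**2
    if p_e == 1.0:
        # Kappa is undefined when both coders used one identical label
        # throughout; by convention treat this as perfect agreement.
        return 1.0
    return (p_o - p_e) / (1.0 - p_e)


def weighted_mean_kappa(kappas, weights):
    """Average several kappas, weighting each (assumption) by its unit count."""
    total = sum(weights)
    return sum(k * w for k, w in zip(kappas, weights)) / total


# 1 = code applied to a text segment, 0 = not applied (hypothetical data).
a = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]
b = [1, 1, 1, 1, 0, 0, 0, 0, 0, 1]
print(cohen_kappa(a, b))  # 80% raw agreement, but kappa is only about 0.6
print(weighted_mean_kappa([0.6, 0.9], [10, 30]))  # about 0.825
```

The example shows why the chance correction matters: two coders who agree on 8 of 10 segments, with each applying the code to half of them, would agree on half the segments by chance alone, so kappa credits only the agreement beyond that baseline.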

Confirmability

Reflexivity

The analysis was conducted by a research team whose members brought different perspectives to the data interpretation. To inform the collective interpretation of the findings, each investigator kept a separate reflexive journal to record issues about sensitive topics or any potential ethical issues that might have affected the data analysis. These were discussed in the weekly meetings.

After completion of the data collection, reflection and feedback from all the investigators conducting the interviews were sought in both written and verbal format.

Triangulation

To assess the confirmability and credibility of the findings, the following four triangulation processes were considered: methodological, data source, investigators and theoretical triangulation.

Methodological triangulation is in the process of being implemented using the mixed methods approach with linked data from our 16 hospitals.

Data source triangulation was achieved by using several groups of ED staff working in different states/territories and performing different roles. This triangulation offered a broad source of data that contributed to gaining a holistic understanding of the impact of 4HR/NEAT on EDs across Australia. We expect to use data triangulation with linked data in future secondary analysis.

Investigator triangulation was obtained by consensus decision-making through collaboration, discussion and participation of team members holding different perspectives. We also used the investigators’ field notes, memos and reflexive journals as a form of triangulation to validate the data collected. This approach enabled us to balance out the potential bias of individual investigators and enabled the research team to reach a satisfactory level of consensus.

Theoretical triangulation was achieved by using and exploring different theoretical perspectives, such as the carousel model and models of care approach [16, 32,33,34], which could be applied in the context of the study to generate hypotheses and theory-driven codes [16, 32, 38].

Transferability

Purposive sampling to form a nominated sample

As outlined in the methods section, we used a combination of three purposive sampling techniques to make sure that the selected participants were representative of the variety of views of ED staff across settings. This representativeness was critical for conducting comparative analysis across different groups.

Data saturation

We employed two methods to ensure that data saturation was reached, namely: operational and theoretical. The operational method was used to quantify the number of new codes per interview over time. It showed that the majority of codes were identified in the first interviews, followed by a decreasing frequency of new codes in subsequent interviews.
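The operational method can be illustrated with a short Python sketch that tracks, for each interview in the order it was coded, how many codes had not appeared in any earlier interview, together with the cumulative number of distinct codes. The interview IDs and codes are hypothetical, and the `run_length` stopping rule is an illustrative assumption: the saturation judgement described here rested on the declining curve and team discussion, not on a fixed threshold.

```python
def new_codes_per_interview(coded_interviews):
    """For interviews in the order analysed, count codes not seen before.

    coded_interviews: list of (interview_id, set_of_codes) tuples.
    Returns a list of (interview_id, n_new_codes, cumulative_codes).
    """
    seen = set()
    curve = []
    for interview_id, codes in coded_interviews:
        new = set(codes) - seen
        seen |= new
        curve.append((interview_id, len(new), len(seen)))
    return curve


def saturated(curve, run_length):
    """Hypothetical stopping rule: True if the last `run_length` interviews
    added no new codes."""
    if len(curve) < run_length:
        return False
    return all(n_new == 0 for _, n_new, _ in curve[-run_length:])


interviews = [
    ("INT001", {"c1", "c2", "c3", "c4"}),
    ("INT002", {"c2", "c3", "c5"}),
    ("INT003", {"c1", "c5", "c6"}),
    ("INT004", {"c2", "c6"}),
    ("INT005", {"c1", "c3"}),
]
curve = new_codes_per_interview(interviews)
for row in curve:
    print(row)  # new codes per interview: 4, 1, 1, 0, 0
print(saturated(curve, run_length=2))  # True
```

Plotting the second element of each tuple against interview order gives the kind of declining new-code curve described above; a flat run of zeros is the operational signal that further interviews are unlikely to add new codes.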

Theoretical saturation and iterative sampling were achieved through regular meetings where progress of coding and identification of variations in each of the key concepts were reported and discussed. We also used iterative sampling to reach saturation and interpret the findings. We continued this iterative process until no new codes emerged from the dataset and all the variations of an observed phenomenon were identified [39] (Fig. 2).

Discussion

Assessing the trustworthiness of qualitative research through rigour criteria is not new. Qualitative researchers have used such criteria widely [40,41,42]. The novelty of the method described in this article rests on the systematic application of these criteria in a large-scale qualitative study in the context of emergency medicine.

According to the FDC, similar findings should be obtained if the process is repeated with the same cohort of participants in the same settings and organisational context. By employing the FDC and the proposed strategies, we were able to enhance the dependability of the findings. As indicated in the literature, the validity and credibility of qualitative research have often been questioned [3, 20, 43, 44].

Nevertheless, if the work is done properly, based on the suggested tools and techniques, any qualitative work can become a solid piece of evidence. This study suggests that emergency medicine researchers can improve their qualitative research if conducted according to the suggested criteria. The triangulation and reflexivity strategies helped us to minimise the investigators’ bias, and affirm that the findings were objective and accurately reflect the participants’ perspectives and experiences. Abiding by a consistent method of data collection (e.g., interview protocol) and conducting the analysis with a team of investigators, helped us minimise the risk of interpretation bias.

Employing several purposive sampling techniques enabled us to have a diverse range of opinions and experiences which at the same time enhanced the credibility of the findings. We expect that the outcomes of this study will show a high degree of applicability, because any resultant hypotheses may be transferable across similar settings in emergency care. The systematic quantification of data saturation at this scale of qualitative data has not been demonstrated in the emergency medicine literature before.

As indicated, the objective of this study was to contribute to the ongoing debate about rigour in qualitative research by using our mixed methods study as an example. In relation to innovative application of mixed-methods, the findings from this qualitative component can be used to explain specific findings from the quantitative component of the study. For example, different trends of 4HR/NEAT performance can be explained by variations in staff relationships across states (see key concept 1, Table 3). In addition, some experiences from doctors and nurses may explain variability of performance indicators across participating hospitals. The robustness of the qualitative data will allow us to generate hypotheses that in turn can be tested in future research.

Careful planning is essential in any type of research project, including the allocation of sufficient human and financial resources. Precise arrangements are also required for building the research team, preparing data collection guidelines, and defining and obtaining adequate participation. This may allow other researchers in emergency care to replicate the use of the FDC in the future.

This study has several limitations. Some limitations of the qualitative component include recall bias and a lack of reliable information about interventions conducted in the past (before the implementation of the policy). As Weber and colleagues [45] point out, conducting interviews with clinicians at a single point in time may be affected by recall bias. Moreover, ED staff may have left the organisation or progressed in their careers (from junior to senior clinical roles, e.g., junior nursing staff, junior medical officers or registrars), so obtaining information about the periods before, during and after 4HR/NEAT was a difficult undertaking. Although the use of criterion-based and maximum variation sampling techniques minimised this effect, we could not guarantee that the sampling techniques reached all those who might have been eligible to participate.

In terms of recruitment, we could not include potential participants who were not working in the particular ED during the implementation, even if they had previous working experience in other hospital EDs. This is a limitation because staff who experienced the implementation in other hospitals could not contribute their potentially valuable input to the overall project.

In addition, one could argue that the findings may be ‘ED-biased’ because we did not interview staff or administrators outside the ED. Unfortunately, interviews outside the ED were beyond the resources and scope of the project.

With respect to the rigour criteria, we could not carry out a systematic member checking as we did not have the required resources for such an expensive follow-up. Nevertheless, we have taken extensive measures to ensure confirmation of the integrity of the data.

Conclusions

The FDC presented in this manuscript provides an important and systematic approach to achieve trustworthy qualitative findings. As indicated before, qualitative research credentials have been questioned. However, if the work is done properly based on the suggested tools and techniques described in this manuscript, any work can become a very notable piece of evidence. This study concludes that the FDC is effective; any investigator in emergency medicine research can improve their qualitative research if conducted accordingly.

Important indicators such as saturation levels and inter-coder reliability should be considered in all types of qualitative projects. One important aspect is that by using FDC we can demonstrate that qualitative research is not less rigorous than quantitative methods.

We also conclude that the FDC is a valid framework to be used in qualitative research in the emergency medicine context. We recommend that future research in emergency care should consider the FDC to achieve trustworthy qualitative findings. We can conclude that our method confirms the credibility (validity) and dependability (reliability) of the analysis which are a true reflection of the perspectives reported by the group of participants across different states/territories.

We can also conclude that our method confirms the objectivity of the analyses and reduces the risk for interpretation bias. We encourage adherence to practical frameworks and strategies like those presented in this manuscript.

Finally, we have highlighted the importance of allocating sufficient resources. This is essential if other researchers in emergency care would like to replicate the use of the FDC in the future.

Following papers in this series will use the empirical findings from longitudinal data linkage analyses and the results from the qualitative study to further identify factors associated with ED performance before and after the implementation of the 4HR/NEAT.

Abbreviations

- 4HR/NEAT: Four Hour Rule/National Emergency Access Target
- ACT: Australian Capital Territory
- ED/EDs: Emergency department(s)
- FDC: Four-Dimensions Criteria
- HREC: Health Research Ethics Committee
- NSW: New South Wales
- PMC: Project Management Committee
- QLD: Queensland
- WA: Western Australia

References

Rees N, Rapport F, Snooks H. Perceptions of paramedics and emergency staff about the care they provide to people who self-harm: constructivist metasynthesis of the qualitative literature. J Psychosom Res. 2015;78(6):529–35.

Jessup M, Crilly J, Boyle J, Wallis M, Lind J, Green D, Fitzgerald G. Users’ experiences of an emergency department patient admission predictive tool: a qualitative evaluation. Health Inform J. 2016;22(3):618–32. https://doi.org/10.1177/1460458215577993.

Choo EK, Garro AC, Ranney ML, Meisel ZF, Morrow Guthrie K. Qualitative research in emergency care part I: research principles and common applications. Acad Emerg Med. 2015;22(9):1096–102.

Hjortdahl M, Halvorsen P, Risor MB. Rural GPs’ attitudes toward participating in emergency medicine: a qualitative study. Scand J Prim Health Care. 2016;34(4):377–84.

Samuels-Kalow ME, Rhodes KV, Henien M, Hardy E, Moore T, Wong F, Camargo CA Jr, Rizzo CT, Mollen C. Development of a patient-centered outcome measure for emergency department asthma patients. Acad Emerg Med. 2017;24(5):511–22.

Manning SN. A multiple case study of patient journeys in Wales from A&E to a hospital ward or home. Br J Community Nurs. 2016;21(10):509–17.

Ranney ML, Meisel ZF, Choo EK, Garro AC, Sasson C, Morrow Guthrie K. Interview-based qualitative research in emergency care part II: data collection, analysis and results reporting. Acad Emerg Med. 2015;22(9):1103–12.

Forero R, Hillman KM, McCarthy S, Fatovich DM, Joseph AP, Richardson DB. Access block and ED overcrowding. Emerg Med Australas. 2010;22(2):119–35.

Fatovich DM. Access block: problems and progress. Med J Aust. 2003;178(10):527–8.

Richardson DB. Increase in patient mortality at 10 days associated with emergency department overcrowding. Med J Aust. 2006;184(5):213–6.

Sprivulis PC, Da Silva J-A, Jacobs IG, Frazer ARL, Jelinek GA. The association between hospital overcrowding and mortality among patients admitted via Western Australian emergency departments.[Erratum appears in Med J Aust. 2006 Jun 19;184(12):616]. Med J Aust. 2006;184(5):208–12.

Richardson DB, Mountain D. Myths versus facts in emergency department overcrowding and hospital access block. Med J Aust. 2009;190(7):369–74.

Geelhoed GC, de Klerk NH. Emergency department overcrowding, mortality and the 4-hour rule in Western Australia. Med J Aust. 2012;196(2):122–6.

Nugus P, Forero R. Understanding interdepartmental and organizational work in the emergency department: an ethnographic approach. Int Emerg Nurs. 2011;19(2):69–74.

Nugus P, Holdgate A, Fry M, Forero R, McCarthy S, Braithwaite J. Work pressure and patient flow management in the emergency department: findings from an ethnographic study. Acad Emerg Med. 2011;18(10):1045–52.

Nugus P, Forero R, McCarthy S, McDonnell G, Travaglia J, Hillman K, Braithwaite J. The emergency department “carousel”: an ethnographically-derived model of the dynamics of patient flow. Int Emerg Nurs. 2014;21(1):3–9.

Jones P, Chalmers L, Wells S, Ameratunga S, Carswell P, Ashton T, Curtis E, Reid P, Stewart J, Harper A, et al. Implementing performance improvement in New Zealand emergency departments: the six hour time target policy national research project protocol. BMC Health Serv Res. 2012;12:45.

Forero R, Hillman K, McDonnell G, Fatovich D, McCarthy S, Mountain D, Sprivulis P, Celenza A, Tridgell P, Mohsin M, et al. Validation and impact of the four hour rule/NEAT in the emergency department: a large data linkage study (Grant # APP1029492; $687,000). Sydney, Perth, Brisbane, Canberra: National Health and Medical Research Council; 2013.

Forero R, Hillman K, McDonnell G, Tridgell P, Gibson N, Sprivulis P, Mohsin M, Green S, Fatovich D, McCarthy S, et al. Study Protocol to assess the implementation of the Four-Hour National Emergency Access Target (NEAT) in Australian Emergency Departments. Sydney: AIHI, UNSW; 2013.

Morse JM. Critical analysis of strategies for determining rigor in qualitative inquiry. Qual Health Res. 2015;25(9):1212–22.

Schou L, Hostrup H, Lyngso EE, Larsen S, Poulsen I. Validation of a new assessment tool for qualitative research articles. J Adv Nurs. 2012;68(9):2086–94.

Tuckett AG. Part II. Rigour in qualitative research: complexities and solutions. Nurse Res. 2005;13(1):29–42.

Lincoln YS, Guba EG. But is it rigorous? Trustworthiness and authenticity in naturalistic evaluation. N Dir Eval. 1986;1986(30):73–84.

Lincoln YS, Guba EG. Naturalistic inquiry, 1st edn. Newbury Park: Sage Publications Inc; 1985.

Guba EG, Lincoln YS. Epistemological and methodological bases of naturalistic inquiry. ECTJ. 1982;30(4):233–52.

Guba EG. Criteria for assessing the trustworthiness of naturalistic inquiries. ECTJ. 1981;29(2):75.

Palinkas LA, Horwitz SM, Green CA, Wisdom JP, Duan N, Hoagwood K. Purposeful sampling for qualitative data collection and analysis in mixed method implementation research. Adm Policy Ment Health Ment Health Serv Res. 2015;42(5):533–44.

Patton MQ. Qualitative research and evaluation methods. 3rd edn. Thousand Oaks: Sage Publishing; 2002.

McLellan E, MacQueen KM, Neidig JL. Beyond the qualitative interview: data preparation and transcription. Field Methods. 2003;15(1):63–84.

QSR International Pty Ltd. NVivo 11 for Windows. 2015.

Whiting M, Sines D. Mind maps: establishing ‘trustworthiness’ in qualitative research. Nurse Res. 2012;20(1):21–7.

Forero R, Nugus P, McDonnell G, McCarthy S. Iron meets clay in sculpturing emergency medicine: a multidisciplinary sense making approach. In: Deng M, Raia F, Vaccarella M, editors. Relational concepts in medicine (eBook). Oxford: Inter-Disciplinary Press; 2012. p. 18.

FitzGerald G, Toloo GS, Romeo M. Emergency healthcare of the future. Emerg Med Australas. 2014;26(3):291–4.

NSW Ministry of Health. Emergency department models of care. North Sydney: NSW Ministry of Health; 2012. p. 65.

Miles MB, Huberman AM. Qualitative data analysis: an expanded sourcebook. 2nd edn. Thousand Oaks: Sage; 1994.

National Health and Medical Research Council. Australian code for the responsible conduct of research. Australian Government; 2007.

Kuckartz U. Qualitative text analysis: a guide to methods, practice and using software. Sage; 2014.

Ulrich W, Reynolds M. Critical systems heuristics. In: Systems approaches to managing change: a practical guide. London: Springer; 2010. p. 243–92.

Bowen GA. Naturalistic inquiry and the saturation concept: a research note. Qual Res. 2008;8(1):137–52.

Krefting L. Rigor in qualitative research - the assessment of trustworthiness. Am J Occup Ther. 1991;45(3):214–22.

Hamberg K, Johansson E, Lindgren G, Westman G. Scientific rigour in qualitative research: examples from a study of women's health in family practice. Fam Pract. 1994;11(2):176–81.

Tobin GA, Begley CM. Methodological rigour within a qualitative framework. J Adv Nurs. 2004;48(4):388–96.

Kitto SC, Chesters J, Grbich C. Quality in qualitative research. Med J Aust. 2008;188(4):243–6.

Tuckett AG, Stewart DE. Collecting qualitative data: part I: journal as a method: experience, rationale and limitations. Contemp Nurse. 2003;16(1–2):104–13.

Weber EJ, Mason S, Carter A, Hew RL. Emptying the corridors of shame: organizational lessons from England’s 4-hour emergency throughput target. Ann Emerg Med. 2011;57(2):79–88.e1.

Acknowledgements

We acknowledge Brydan Lenne, who was employed in the preliminary stages of the project, for preparing and submitting the ethics applications; her contributions to the planning stages, to data collection for the qualitative analysis and to preliminary coding of the conceptual framework are appreciated. We also acknowledge Fenglian Xu, who was employed in the initial stages of the project on the data linkage component; Jenine Beekhuyzen, CEO of Adroit Research, for consultancy and advice on qualitative aspects of the manuscript; Liz Brownlee, owner/manager of Bostock Transcripts, for transcribing the interviews; and Brydan Lenne, Karlene Dickens, Cecily Scutt and Tracey Hawkins, who conducted the interviews across states. We also thank Anna Holdgate, Michael Golding, Michael Hession, Amith Shetty, Drew Richardson, Daniel Fatovich, David Mountain, Nick Gibson, Sam Toloo, Conrad Loten, John Burke and Vijai Joseph, who acted as site contacts in each State/Territory. Finally, we thank all participants for the time and information they contributed. A full acknowledgement of all investigators and partner organisations is provided as an attachment (see Additional file 3: Appendix 3).

Funding

This project was funded by the Australian National Health and Medical Research Council (NH&MRC) Partnership Grant No APP1029492 with cash contributions from the following organisations: Department of Health of Western Australia, Australasian College for Emergency Medicine, Ministry of Health of NSW and the Emergency Care Institute, NSW Agency for Clinical Innovation, and Emergency Medicine Foundation, Queensland.

Availability of data and materials

All data generated or analysed during this study are included in this published article and its supplementary information files, which are provided as appendices. No individual-level data will be made available.

Author information

Contributions

RF, GF and SMC made substantial contributions to the conception, design and funding of the study. RF, SN, NG and SMC contributed to the acquisition of data. RF, SN and JDC contributed to the analysis and interpretation of data. RF, SN, JDC, MM, GF, NG, SMC and PA were involved in drafting the manuscript and revising it critically for important intellectual content, and gave final approval of the version to be published. All authors have participated sufficiently in the work to take public responsibility for appropriate portions of the content, and agreed to be accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

Ethics declarations

Ethics approval and consent to participate

As indicated in the background, our study received ethics approval from the respective Human Research Ethics Committees of the Western Australian Department of Health (DBL.201403.07), Cancer Institute NSW (HREC/14/CIPHS/30), ACT Department of Health (ETH.3.14.054) and Queensland Health (HREC/14/QGC/30), as well as governance approval from the 16 participating hospitals. All participants received information about the project and an invitation to participate, signed a consent form, and agreed to the interview being audio recorded.

Consent for publication

All interview data were de-identified for the analysis. No individual details, images or recordings were used apart from the de-identified transcriptions.

Competing interests

RF is an Associate Editor of the Journal. No other authors have declared any competing interests.

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Additional files

Additional file 1:

Appendix 1. Interview form. (PDF 445 KB)

Additional file 2:

Appendix 2. NVivo codebook. (PDF 335 KB)

Additional file 3:

Appendix 3. Acknowledgements. (PDF 104 KB)

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The Creative Commons Public Domain Dedication waiver (http://creativecommons.org/publicdomain/zero/1.0/) applies to the data made available in this article, unless otherwise stated.

About this article

Cite this article

Forero, R., Nahidi, S., De Costa, J. et al. Application of four-dimension criteria to assess rigour of qualitative research in emergency medicine. BMC Health Serv Res 18, 120 (2018). https://doi.org/10.1186/s12913-018-2915-2

DOI: https://doi.org/10.1186/s12913-018-2915-2