Abstract

Background

Recommendations in clinical practice guidelines for non-specific low back pain (NSLBP) are not necessarily translated into practice. Multiple studies have investigated different interventions to implement best evidence into clinical practice yet no synthesis of these studies has been carried out to date.

The aim of this study was to systematically review available studies to determine whether implementation interventions in this field have been effective and to identify which strategies have been most successful in changing healthcare practitioner behaviours and improving patient outcomes.

Methods

A systematic review was undertaken, searching electronic databases until end of December 2012 plus hand searching, writing to key authors and using prior knowledge of the field to identify papers. Included studies evaluated an implementation intervention to improve the management of NSLBP in clinical practice, measured key outcomes regarding change in practitioner behaviour and/or patient outcomes and subjected their data to statistical analysis. The Cochrane Effective Practice and Organisation of Care (EPOC) recommendations about systematic review conduct were followed. Study inclusion, data extraction and study risk of bias assessments were conducted independently by two review authors.

Results

Of 7654 potentially eligible citations, 17 papers reporting on 14 studies were included. Risk of bias of included studies was highly variable, with 7 of 17 papers rated at high risk. Single intervention or one-off implementation efforts were consistently ineffective in changing clinical practice. Increasing the frequency and duration of implementation interventions led to greater success, and interventions that were continuously ongoing over time were the most successful in improving clinical practice in line with best evidence recommendations.

Conclusions

Single intervention or one-off implementation interventions may seem attractive but are largely unsuccessful in effecting meaningful change in clinical practice for NSLBP. Increasing frequency and duration of implementation interventions seems to lead to greater success and the most successful implementation interventions used consistently sustained strategies.

Background

Low back pain

Low back pain (LBP) is a major healthcare problem around the world [1–3], recently ranked as the number one cause of years lived with disability [3]. No geographic region, particular age group or section of society is immune from the effect of this condition with the World Health Organisation (WHO) estimating that nearly everyone will experience LBP sometime during their lives [4]. LBP is also a major economic burden, with an estimated 818,000 disability-adjusted life years lost annually worldwide due to work related LBP [5].

For many people with LBP a specific diagnosis is not possible, with an estimated 85 % of cases not attributable to specific serious pathology or nerve root irritation [6–8]; thus most patients with LBP have non-specific low back pain (NSLBP) [9, 10]. Clinical practice guidelines based on the best available evidence have been developed to direct management of NSLBP. The Quebec Task Force performed the first comprehensive review into best available evidence for NSLBP in 1987 [11, 12] and highlighted the absence of high-quality evidence to guide clinical decision-making [13]. Since then there has been a considerable increase in research regarding diagnosis, prognosis and treatment of NSLBP [12]. There has also been an increase in the number of best practice guidelines for the management of NSLBP, with at least 11 countries publishing their own national guidelines by 2001 [14]. However, despite these multiple sources of best available evidence to inform healthcare practitioners about the management of patients with NSLBP, research has shown that these recommendations are not routinely translated into everyday clinical practice [15–17], the so-called ‘know-do gap’ [18]. The mere production and dissemination of clinical practice guidelines is in itself insufficient to change clinical practice [19]. Evidence-based guidelines need implementation interventions to support their uptake into clinical practice, and investigating these interventions has led to a new area of science, that of implementation research [20, 21].

A range of implementation interventions have been studied in the field of NSLBP [22, 23]. These interventions vary with respect to their type, target end user, intensity and frequency and they range from simple techniques like postal dissemination of guidelines or educational reminders [24, 25] to more complex, ongoing interventions aimed at changing the entire practice of healthcare practitioners [26, 27]. Within each intervention there are different methods used to impart the information including passive strategies that place the onus on the individual healthcare practitioner to act on the information contained within the guideline [28], specific behaviour change techniques such as persuasive communication [29], opinion leaders [30] and multifaceted behavioural change strategies targeting not only the healthcare practitioner but the organisational system in which they work [31, 32].

Why it was important to do this review

There are a growing number of empirical implementation studies that have evaluated interventions to support the implementation of best available evidence into clinical practice for NSLBP. These studies vary in terms of the healthcare practitioner behaviours targeted, the nature and theoretical underpinning of the implementation interventions selected and the success or otherwise of these in changing clinical practice. Given the worldwide impact of NSLBP and that implementation of best practice recommendations within guidelines may improve patient outcomes, it is timely to review the available published evidence on implementation interventions.

Methods

Aims and objectives

This systematic review aimed to summarise the empirical literature about interventions aiming to support the implementation of best available evidence into clinical practice for the management of NSLBP.

Specifically this review had the following objectives:

1. Determine whether implementation interventions have been effective in terms of improving healthcare practitioner behaviour or patient outcomes.

2. Identify which implementation interventions have been shown to be more effective than others in changing the clinical behaviours of healthcare practitioners and improving patient outcomes.

3. Summarise the implementation interventions used, the theoretical models behind them and the evidence base supporting them.

4. Critically appraise the quality of research studies in this area.

Design

A systematic review of the English language published literature was chosen as the most suitable study design to address the aims and objectives. Only English language papers were considered, as translation of papers in other languages was beyond the resources of this project. It was anticipated that there would not be enough homogeneous studies to permit numerical pooling of data in a meta-analysis, and that the review would instead provide a narrative synthesis of the current research in this field.

Study eligibility criteria

Randomised controlled trials (RCT), non-randomised controlled trials, controlled before-after studies and studies with an interrupted time series design were included. This followed the Cochrane Effective Practice and Organisation of Care Group (EPOC) guidance for the appropriate types of studies to include in a systematic review of implementation interventions [33]. As per EPOC recommendations cluster RCTs, non-randomised cluster trials and controlled before-after cluster trials were included only if they had at least two intervention sites and two control sites and interrupted time series studies were included only if they had at least three data points before and three data points after the introduction of the intervention. Included studies also had to have used a quantitative outcome measure and to have subjected the measure to statistical analysis, comparing the outcome from the intervention with that from a control or comparison.
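The design-based eligibility rules described above can be written as a simple predicate. The following is a minimal sketch only: the `Study` record, its field names and the design labels are hypothetical and are not taken from the EPOC guidance or from the screening procedure actually used in this review, which was carried out by the review authors.

```python
from dataclasses import dataclass

# Design labels are illustrative assumptions, not terms defined by EPOC or this review.
INDIVIDUAL_DESIGNS = {"rct", "non_randomised_trial", "controlled_before_after"}
CLUSTER_DESIGNS = {
    "cluster_rct",
    "cluster_non_randomised_trial",
    "cluster_controlled_before_after",
}


@dataclass
class Study:
    """Hypothetical record holding only the fields needed for the design check."""
    design: str
    intervention_sites: int = 0
    control_sites: int = 0
    points_before: int = 0
    points_after: int = 0
    quantitative_outcome: bool = False
    statistical_comparison: bool = False


def meets_design_criteria(study: Study) -> bool:
    """Return True if the study passes the design-based criteria stated in the text."""
    # Every design needs a quantitative outcome compared statistically with a control.
    if not (study.quantitative_outcome and study.statistical_comparison):
        return False
    if study.design in INDIVIDUAL_DESIGNS:
        return True
    if study.design in CLUSTER_DESIGNS:
        # Cluster designs: at least two intervention sites and two control sites.
        return study.intervention_sites >= 2 and study.control_sites >= 2
    if study.design == "interrupted_time_series":
        # At least three data points before and three after the intervention.
        return study.points_before >= 3 and study.points_after >= 3
    return False
```

A check of this kind covers only study design; the population, intervention, comparator and outcome criteria would still need to be judged separately.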

Using the PICO format (Population; Intervention; Comparator and Outcome) advocated by the Centre for Reviews and Dissemination, University of York [34] and the Preferred Reporting Items for Systematic Reviews and Meta-analyses (PRISMA) statement [35], the population was defined as any healthcare practitioner involved in the treatment of NSLBP. The included interventions were implementation interventions designed to improve clinical practice for the management of NSLBP. The comparator was the type of control or comparison group and could include other types of implementation intervention(s), no implementation intervention (‘usual care’) or a before/after comparison. The outcomes were the variables analysed for evidence of change and included any measured healthcare practitioner behaviour change or change in patient outcomes, for example rates of requests for radiographs, adherence to best practice guidelines, lumbar spine surgery rate, pain score or physical function score. Only full peer reviewed published papers were included.

Studies that specifically tested an implementation intervention with patients who had serious or specific types of LBP, such as patients with fracture, radicular pain/nerve root compression, were excluded.

Search strategy

The search strategy for the electronic databases was developed with expert health librarians. A pilot search was first performed on the MEDLINE and EMBASE electronic databases and then expanded. This expanded search was then performed on AMED, Applied Social Science Index and Abstracts (ASSIA), CINAHL PLUS, Cochrane Central Register of Controlled Trials (Central), DARE, EMBASE, ERIC (ProQuest), NARIC REHABDATA Literature Database, PEDro, Physical Education Index (ProQuest), SPORTDiscus and Web of Knowledge/Science. A full list of search terms and how they were combined for MEDLINE can be found in Additional file 1. The electronic databases were searched from January 1987 to 31st December 2012. The year 1987 was chosen as the start because this is when the first comprehensive review into best available evidence for LBP was performed by the Quebec Task Force [11, 12]. All references generated from the electronic databases were then exported to RefWorks, an online bibliographic database management software tool, and duplicates were removed. In addition, efforts were made to identify further studies by contacting experts in the field of LBP research and specifically those previously involved in LBP implementation research. These experts were identified from a list of participants at the Odense International Forum XII: Primary Care Research on Back Pain (16th-19th October 2012, Odense, Denmark) and were contacted by email to ascertain whether any papers currently in press might be suitable for inclusion in this review and would be published and available in time for this search. The WHO International Clinical Trials Registry Platform website [36] was also checked to identify any appropriate registered studies under the search terms ‘low back pain’ and ‘implementation’.

All titles and abstracts of studies identified from the above searches were then independently screened by the review lead author and by one of the co-authors to determine potentially eligible studies. These potentially eligible studies were then independently screened in full text by two review authors to determine their final inclusion. Any disagreements on eligibility were settled by discussion and consensus and, if required, by involving the other co-author. Reference lists of the included papers and two systematic reviews about the implementation of clinical practice guidelines in general [37, 38] were searched for any additional studies.

Risk of bias assessment of included studies

The EPOC risk of bias tool [39] was used to assess the risk of bias of included studies. The choice to use this tool was based on the following: it was developed specifically for use with implementation research studies attempting to change the practice of healthcare practitioners and organisation of healthcare systems; it is suitable for use with different study designs (RCT; non-randomised controlled trials; controlled before-after studies and interrupted time series studies) and it has been used in other similar, published systematic reviews [40–42]. The EPOC risk of bias tool involves rating whether the study has a low risk, high risk or unclear risk of bias in nine different categories (see Table 5). For details of how the EPOC risk of bias tool was applied please refer to Additional file 2.

Data extraction

A specific data extraction form was developed for this review based on the EPOC data extraction form [43] and the information required to meet the objectives of this review. The data extraction form collected details of study characteristics such as the healthcare practitioners included and location of the study (clinic/hospital or community), the stated primary and secondary outcome measures such as radiograph request rate and healthcare practitioner adherence to best practice guidelines, details of the implementation intervention such as how information was imparted and how data were collected as well as the study’s risk of bias. The data from all included studies were extracted by the lead author with the two co-authors independently extracting data from half of the included studies each. The results of this process were then double checked by the lead author to make sure that all relevant information had been gathered.

Data synthesis

The data extracted and the characteristics of the studies were compared and contrasted. The implementation interventions tested and the study results were analysed for any consistent patterns in terms of effectiveness (such as type of implementation intervention, type of healthcare practitioner targeted, frequency or duration of implementation intervention, frequency of implementation strategy). Based on the clearest pattern observed the studies were split into three categories based on the duration and frequency of the implementation intervention used. The interventions and results of each of the studies were then described and compared. The risk of bias for the two groupings of study type as per the EPOC guidance (one group including RCTs, non-randomised controlled trials and controlled before-after studies and the other group interrupted time series studies) were also described and compared. A meta-analysis was not possible due to the heterogeneity of the implementation interventions studied and the outcomes reported.

Results

Study flow

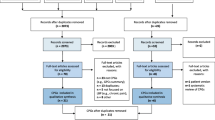

Of 11802 potentially relevant citations, 11784 were identified from the database searching and 18 were identified via hand referencing and prior knowledge. Of these 11802 citations, 4148 were excluded as duplicates. After screening the titles and abstracts of the remaining 7654 citations, 7501 citations were excluded leaving a short list of 153 citations. The full text of these were acquired and screened. Of these 153 papers, 136 were excluded. Seventeen papers reporting 14 different studies met the inclusion criteria. Please see Fig. 1 for the ‘PRISMA flow diagram for included records’. Please refer to Additional file 3 for reasons of exclusion for the 136 full text papers.
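The counts in this flow can be cross-checked with simple arithmetic. The short sketch below uses only the figures reported in the paragraph above; the variable names are illustrative.

```python
# Counts as reported in the study flow paragraph
from_databases = 11784
from_other_sources = 18          # hand referencing and prior knowledge
duplicates = 4148
excluded_on_title_abstract = 7501
excluded_on_full_text = 136

identified = from_databases + from_other_sources
screened = identified - duplicates
full_text = screened - excluded_on_title_abstract
included_papers = full_text - excluded_on_full_text

print(identified, screened, full_text, included_papers)  # 11802 7654 153 17
```

Each stage reproduces the number stated in the text, ending with the 17 included papers.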

Study types

Ten included studies were RCTs and of these, two were cluster RCTs. Two included studies were interrupted time series design, one was a non-randomised controlled trial and one was a controlled before-after study.

Study outcomes

The included studies examined the effect of the implementation interventions on either healthcare practitioner behaviour change such as adherence to a range of best practice guidelines for treatment of NSLBP or only rates of radiograph requests (10 studies), the effect on patient outcomes such as pain or function (two studies) or a combination (two studies).

Types of implementation interventions tested

Education programmes were tested in six studies reported in eight papers [23, 26, 27, 30, 31, 44–46]. These programmes utilised a variety of methods to impart the programme content including didactic lectures, follow-up sessions to determine how staff were getting on with the new approach and to answer queries, interactive workshops and printed material. Of these studies, Schectman et al. [23] utilised multifaceted education sessions (an education programme with audit and feedback) for both healthcare practitioners and patients whereas the others focused on education programmes for healthcare practitioners alone. The intervention tested by Becker et al. [44] combined an education programme with training for healthcare practitioners in motivational counselling.

Ongoing reminders and feedback were tested in two studies [24, 28]. The remaining six studies all used different forms of implementation interventions: these were postal dissemination and feedback following audit [22], postal dissemination only [25], educational outreach visits and printed educational material [29], the setting up of special clinics for NSLBP patients [32], feedback on diagnostic test ordering [47] and a special radiograph requisition form [48]. There was no clear pattern of effectiveness in terms of the type of implementation intervention used (see Tables 1, 2, 3 and 4). The same implementation intervention led to significant changes in practice in some studies [31, 44] but not others [27, 46].

Theoretical models underpinning the implementation intervention

Only one of the 14 included studies described the theoretical underpinning of the implementation intervention chosen. Dey et al. [29] referenced a system of persuasive communication called the Elaboration Likelihood Model of Persuasion [49]. Other studies did use well-known methods of implementation interventions such as audit and feedback [20] or local opinion leaders [30] but the authors did not explicitly provide the rationale for their selection. Given the lack of clarity in most studies about the theoretical models underpinning the selection of implementation interventions, no clear pattern of effectiveness based on the theoretical underpinning of the interventions was observed.

Number and types of healthcare practitioners studied

A total of 1749 healthcare practitioners were examined in 11 studies, giving a mean of 159 practitioners per study with a range of 15 to 462. Three studies did not state the number of healthcare practitioners involved. General Practitioners (GPs)/primary care physicians were the target group of healthcare practitioners in most studies (11 of the 14). Of these, four also included other healthcare practitioners: primary care/practice nurses [44], orthopaedic surgeons and neurosurgeons [31], specialists in rheumatology, rehabilitation and occupational medicine [32], internists and associate practitioners [23]. The other three studies focused on surgical, internal medicine and emergency medicine junior doctors [50] or physiotherapists [26, 30, 45]. There was no clear pattern of effectiveness observed based on the type of healthcare practitioners targeted by the implementation intervention (see Tables 1, 2, 3 and 4).

Effects of the implementation interventions

Tables 1, 2, 3 and 4 summarise the results of the 14 included studies. The results are presented below, grouped according to the frequency and duration of the implementation interventions since review of the studies highlighted a clear pattern of association between these characteristics and the effectiveness of the implementation intervention.

Studies that used ‘one-off’ or single implementation interventions

Four studies, reported in five papers, tested a ‘one-off’ or single event implementation intervention (Table 1), such as the single two-hour education workshop tested by Engers et al. [46]. Of these papers, three specified primary outcome measures but none reported a statistically significant difference across all of their measured outcomes between their intervention and comparison. Matowe et al. [25] reported no statistically significant difference; Stevenson et al. [30] reported two statistically significant differences from a total of six outcome measures and Engers et al. [46] reported two statistically significant differences at six weeks out of five patient outcome measures but in the direction favouring the control group and only one at 52 weeks in the direction favouring the intervention group. Dey et al. [29] did not specify a primary outcome measure(s) but reported a statistically significant difference in only one of their five outcome measures; Engers et al. [27] also did not specify a primary outcome measure but reported only one statistically significant difference in the direction favouring the intervention group out of four healthcare practitioner behaviours measured.

Studies testing short-term implementation interventions with no ongoing implementation effort

Only one study, reported in two papers, used a short-term implementation intervention with no ongoing implementation effort (Table 2). This comprised an initial two-hour workshop with the postal distribution of printed educational materials and further postal educational materials at 4 and 8 weeks [26, 45]. The first of these papers [26] did not specify a primary outcome measure but reported a moderate change in the intervention group compared to the control across five outcome measures. Four of these were healthcare practitioner behaviours as recorded in the patient record and the fifth measured the number of patient cases for whom all four healthcare practitioner behaviours occurred. However, the second paper summarising this study [45] reported no statistically significant differences at 6, 12, 26 or 52 weeks in three primary outcome measures relating to patient outcomes.

Studies that tested ongoing implementation interventions

The remaining nine studies used ongoing interventions of various types to implement improvements in practice. Of these, six used interventions that intermittently reinforced the implementation effort over time, whereas the other three included continual reinforcement of the target behaviour change on a regular, sometimes even daily, basis. These two groups of studies are summarised below and in Tables 3 and 4.

Studies that tested ongoing, intermittently reinforced, implementation interventions

The results reported in these six studies were mixed; at best they reported partial success only across the wide range of outcomes assessed (Table 3). Bishop & Wing [28] studied differences in healthcare practitioner behaviours following their implementation intervention of providing a copy of guidelines appropriate to patient management at different timeframes (at patient assessment, between 0–4 weeks of treatment, between 5–12 weeks and beyond 12 weeks). They reported no statistically significant differences between the intervention and control group in five healthcare practitioner behaviours at the time of patient assessment and only one statistically significant difference after the 0–4 week timeframe out of seven healthcare practitioner behaviours. There were unclear effects after the 5–12 weeks timeframe and no results were presented beyond 12 weeks. Schectman et al. [23] also did not find any statistically significant differences between their two implementation groups versus control in any of the four healthcare practitioner behaviour outcomes they studied. However they stated that the education and feedback group did show a statistically significant increase in overall guideline consistent behaviour compared to control and an overall statistically significant decline of utilisation of services compared to the control. Becker et al. [44] reported moderate success mainly from one type of implementation intervention - motivational counselling training for practice nurses and three education sessions with feedback for GPs. They also tested an intervention that included only the GP education sessions. The difference in the primary outcome measure between the motivational counselling group compared to control (postal dissemination of guidelines only) was statistically significant at 6 months but there were no other statistically significant differences at 6 or 12 months. 
Both intervention groups showed statistically significant differences in one secondary outcome measure at 6 months versus control but this was only maintained at 12 months in the intervention group that did not receive motivational counselling. Of the other four secondary outcome measures only one demonstrated a statistically significant difference between the motivational counselling group and control at 12 months. Winkens et al. [47] also reported partial success with no reduction in the overall request rates for lumbar spine radiographs but did report a statistically significant reduction in the non-rational lumbar spine radiograph requests in the intervention group compared to control. Two papers reported successful outcomes: Goldberg et al. [31] reported a reduction in surgery rates in their intervention group compared to control and Kerry et al. [22] reported a large and statistically significant reduction of radiograph requests in their intervention group compared to control. Table 3 summarises the interventions used and their results.

Studies that tested consistently ongoing implementation interventions

The remaining three studies, reported in four papers, utilised at least one component of an implementation intervention that was consistently ongoing over time, therefore providing reinforcement of the key behaviour changes desired (Table 4). An example of this type of intervention, from Baker et al. [50], was a special request form that had to be completed in order to arrange for the patient to have a lumbar spine radiograph. The key pattern observed across these studies was that they all reported successful outcomes. Eccles et al. [24] reported a statistically significant reduction in lumbar spine radiograph requests compared to control in two of their three implementation groups. Ramsey et al. [48] went on to show that this effect was consistent in the long term over 12 months with no decay of effect. Baker et al. [50] also reported a successful outcome with respect to reduction in lumbosacral radiograph requests, maintained over three years. McGuirk et al. [32] reported a statistically significant reduction in primary outcome measures of bodily pain at 3 and 6 months and physical function at 12 months as well as a significant reduction in pain at 3 and 12 months. A range of secondary outcome measures relating to healthcare professional behaviours and patient outcomes were also statistically significantly different between the intervention group and control. Table 4 summarises the interventions and results.

Risk of bias

Of the 17 papers included in this review, the level of risk of bias was varied; nine were considered to have a low risk of bias, one an unclear risk of bias and seven a high risk of bias.

Risk of bias in RCTs, non-randomised controlled trials and controlled before-after studies

Of 14 papers, six had a low risk of bias: Bekkering et al. [26]; Dey et al. [29]; Eccles et al. [24]; Engers et al. [46]; Kerry et al. [22]; Winkens et al. [47] – these studies had no or only one category that was classified as at high risk of bias. These high risk categories were considered less important to the integrity of the study – ‘Were incomplete outcome data adequately addressed?’ [26] and [46], ‘Was knowledge of the allocated intervention adequately prevented during study?’ [29]. One study had an unclear risk of bias: Bishop & Wing [28] – this study rated unclear risk in the category of ‘Were baseline outcome measurements similar?’ and high risk in the category of ‘Was the allocation adequately concealed?’ Seven studies had a high risk of bias with all papers rating high risk in two or more categories: Becker et al. [44]; Bekkering et al. [45]; Engers et al. [27]; Goldberg et al. [31]; McGuirk et al. [32]; Schectman et al. [23] and Stevenson et al. [30]. The categories on the risk of bias tool that were rated most frequently as at high risk were: ‘Incomplete outcome data not adequately addressed’ (seven studies), ‘Were baseline outcome measurements similar?’ (five studies) and ‘Were baseline characteristics similar?’ (three studies). Table 5 summarises the results of the risk of bias assessment. Additional file 2 defines how the risk of bias was scored for each category.

Risk of bias in interrupted time series studies

All three papers with this study design had a low risk of bias (Baker et al. [50]; Matowe et al. [25] and Ramsey et al. [48]). Only one category on the risk of bias tool across the three papers was rated at high risk: ‘Was the intervention independent of other changes?’ (Matowe et al. [25]).
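The ratings for the two design groups can be combined to confirm the overall totals given at the start of this section. This is a minimal sketch using only the counts stated in the text; the grouping into two `Counter` objects is an illustrative choice.

```python
from collections import Counter

# Papers per risk-of-bias rating, as reported for each design group
controlled_designs = Counter(low=6, unclear=1, high=7)   # RCTs, non-randomised trials, controlled before-after
interrupted_time_series = Counter(low=3)

overall = controlled_designs + interrupted_time_series

print(dict(overall))
print("Total papers:", sum(overall.values()))
```

The combined tally gives nine papers at low risk, one at unclear risk and seven at high risk, 17 papers in total.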

Discussion

Summary of results

The key findings of this review show implementation interventions can change healthcare practitioner behaviours to be more in line with best practice recommendations for the management of NSLBP and that some of these interventions are also associated with improvements in patient outcomes. However there was no consistent pattern in terms of the effectiveness of specific types of implementation interventions such as educational events, audit and feedback or use of opinion leaders, of generally passive or active implementation strategies or of targeting specific groups of healthcare practitioners. Rather the results of the 14 included studies showed that implementation intervention effectiveness was more likely to be determined by the frequency and duration of the intervention. Those studies that reported no or very few significant differences in outcome measures all utilised one-off, single or short-term implementation interventions lasting no more than eight weeks. Conversely studies that investigated ongoing and regular implementation interventions demonstrated greater success in changing clinical practice and in sustaining those changes over time. Such successful interventions included those which are traditionally ‘passive’ implementation strategies (such as changing to a new form with which to request radiographs, designed to reduce the number of requests [48]) as well as ‘active’ interventions (such as combined community-wide education and audit processes used to reduce the rates of lumbar spine surgery [31]). The key determinant of success appeared to be the ongoing nature of the intervention rather than the intervention type.

Implications for clinical practice and research

The evidence from this systematic review suggests that future research focused on improving the translation of best evidence into the clinical management of patients with NSLBP needs to more carefully select and justify the type of implementation intervention(s). One-off, single implementation efforts may be attractive in terms of ease of delivery, low burden for participants, small time commitment and low cost. However this review shows that such one-off, single implementation interventions or even those that last for a short time (up to eight weeks in this review) are unlikely to be successful in changing healthcare practitioner behaviour. Rather the results suggest that ongoing and frequent implementation interventions are required to effectively change clinical practice and improve patient outcomes. This is in line with general recommendations on how to achieve successful implementation [51, 52]. While not always the case, it is likely that such implementation interventions might be costly and time consuming, require changes to the structure of healthcare practitioners’ duties as well as changes to the structure, policies and procedures of healthcare systems. In summary ongoing support may be needed to effect a change in the culture of the individual healthcare practitioners and the organisation within which they work to ensure sustained change in practice that is in line with best available evidence [53]. This would require the updating of practice as current evidence is refined and new evidence comes to light. Such implementation interventions require the co-operation of the target healthcare practitioners, who may not view the implementation as a high priority [54, 55] or may cede to patient requests and by doing so deviate from the best evidence-based recommendations [56].

Ongoing, regular implementation interventions may be costly to organise and sustain, yet only two of the 14 studies included in this review reported costs. McGuirk et al. [32] reported that the average cost per patient under evidence-based care was AUS$276 compared to AUS$472 under usual care, but made no mention of how much their intervention (special evidence-based clinics staffed by motivated practitioners) cost to implement. In the study by Goldberg et al. [31], five communities received a complex, multifaceted intervention comprising several information delivery methods over a sustained period of time, at an intervention cost of US$40,000 per community per year. To give some perspective regarding these communities, Goldberg et al. [31] stated that there was an adult population of 123,829 across the five communities, with 7.4 GPs and 4.2 surgeons per 10,000. However, as can be seen from other successful implementation studies, such as the introduction and mandatory use of a new radiographic request form by Baker et al. [50], ongoing implementation efforts do not necessarily have to be costly.
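To put the Goldberg et al. [31] figures into per-person terms, the short Python sketch below divides the reported annual intervention cost by the reported adult population. It is only a back-of-envelope illustration: the function name is hypothetical, and it assumes the quoted cost applied equally to all five communities and that the whole adult population is the relevant denominator, neither of which is stated in the source.

```python
# Figures as quoted in this review from Goldberg et al. [31].
COST_PER_COMMUNITY_PER_YEAR_USD = 40_000
N_COMMUNITIES = 5
ADULT_POPULATION = 123_829  # adults across all five communities


def annual_cost_per_adult(cost_per_community, n_communities, adult_population):
    """Return the yearly intervention cost per adult resident.

    Assumes (for illustration only) that every community incurred the same
    cost and that all adults count towards the denominator.
    """
    total_cost = cost_per_community * n_communities
    return total_cost / adult_population


total_cost = COST_PER_COMMUNITY_PER_YEAR_USD * N_COMMUNITIES
per_adult = annual_cost_per_adult(
    COST_PER_COMMUNITY_PER_YEAR_USD, N_COMMUNITIES, ADULT_POPULATION
)

print(f"Total annual cost: US${total_cost:,}")
print(f"Annual cost per adult: US${per_adult:.2f}")
```

Under these assumptions the intervention cost works out at roughly US$1.6 per adult per year, which says nothing about cost-effectiveness but gives a sense of scale.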

Before conducting implementation studies, the cost, alterations to daily working practices, changes to workplace policy and procedures and ‘buy in’ or engagement from healthcare practitioners all need to be considered. Given that this review highlights the importance of interventions that are ongoing over time, a sustainability plan might also be needed for the period after the implementation intervention testing ends.

Behaviour change theory and the evidence-base underpinning the implementation interventions of included studies

The depth and breadth of individual implementation interventions and the different combinations made it challenging to synthesise the data from studies in this review. Although many of the included studies did provide a clear rationale for their selection of implementation interventions it seemed that only certain elements were justified such as the method of knowledge transfer (e.g. via local opinion leaders or by audit and feedback). None of the studies described the basis for other features of their interventions such as frequency and duration, which our review has found to be important.

Behaviour change is required for any implementation intervention to be effective. Michie et al. [57] suggested that better understanding and specifying the behaviour changes required makes implementation interventions more likely to succeed. They also recommended that the elements of the behaviour change intervention should be developed based on the analysis of the antecedents and consequences controlling implementation behaviours and that this analysis should be informed by relevant psychological theory. Examples suggested by Michie and colleagues include the Theoretical Domains Framework, the Capability, Opportunity, Motivation – Behaviour (COM-B) framework and the Behaviour Change Wheel [58]. These processes and behaviour change frameworks were incomplete or absent in the papers included in this review.

Eccles et al. [59] concur with Michie et al. [57] and provide examples of three types of psychological theory: motivational (how individuals come to wish/intend to change behaviour), action (how individuals move from intention to actual behaviour) and stage theory, which proposes an orderly progression through discrete stages towards behaviour change. Because the included papers rarely reported a theoretical basis for their interventions, it remains unclear which of these theories best explains the most important features of successful implementation interventions.

Risk of bias of included papers

The risk of bias results for the 17 included papers weaken the conclusions from this review. In total, 7 of the 17 papers (41 %) were rated as having a high risk of bias. These include the papers by Becker et al. [44], Goldberg et al. [31], McGuirk et al. [32] and Schectman et al. [23], all of which reported at least moderately successful outcomes. Of these four papers, three were rated at high risk of bias in the category ‘were incomplete outcome data adequately addressed?’ and the other paper (Schectman et al. [23]) was rated as unclear for this category. A high risk rating in this category clearly reduces confidence in the results. These four papers were also rated at unclear risk for the category ‘was knowledge of the allocated intervention adequately prevented during the study?’, meaning that they did not specify this information in their paper.

Strengths and limitations

The methods of this review were developed in conjunction with experts in the field and followed the guidance from the Cochrane Collaboration and EPOC. The electronic database searching was thorough and followed the approach suggested by the Cochrane Handbook for Systematic Reviews of Interventions [60]. Study eligibility and risk of bias of included studies were determined through independent assessment by members of the study team, in line with Cochrane guidance [60]. The main limitation of this review is the variable risk of bias within the included published papers. Adhering to the EPOC guidance for study eligibility meant that many studies were excluded based on their study design. In addition, the included studies were heterogeneous in terms of study design, type of implementation intervention used, healthcare practitioners targeted, practitioner behaviour targeted and study setting. This heterogeneity made it challenging to synthesise the results. Clearly the quality of research in this field varies, and further high quality studies of implementation interventions in the field of NSLBP are needed. One further limitation of note is that only papers published in English were considered for inclusion.

Conclusion

The results of this review indicate that the most successful interventions to support implementation of best available evidence into clinical practice for NSLBP are those that occur more frequently and are ongoing. Other factors such as intervention type, complexity or target healthcare practitioner or behaviour did not appear to determine the success of the implementation intervention tested. These results must be interpreted with some caution given that many included papers were at high risk of bias. Further high quality studies are needed to robustly test the effectiveness of implementation interventions in this field. The investigators of future implementation studies in this area should develop a strong rationale for the implementation intervention(s) chosen by identifying barriers and facilitators to implementation of best available evidence, select relevant implementation interventions to overcome these barriers and enhance the facilitators and follow best practice guidelines in design, conduct and reporting of their studies. In particular future studies need to give careful consideration to the frequency and duration of their implementation intervention and evaluate cost-effectiveness.

Abbreviations

ASSIA, Applied Social Sciences Index and Abstracts; EPOC, The Cochrane Effective Practice and Organisation of Care Group; LBP, Low back pain; NSLBP, Non-specific low back pain; PICO, Population, Intervention, Comparator and Outcome; PRISMA, Preferred Reporting Items for Systematic Reviews and Meta-analyses; RCT, Randomised controlled trial; SF-36, Short Form 36; VAS, Visual analogue scale; WHO, World Health Organisation

References

Murray CJL, Vos T, Lozano R, Naghavi M, Flaxman AD, Michaud C, et al. Disability-adjusted life years (DALYs) for 291 diseases and injuries in 21 regions, 1990–2010: A systematic analysis for the Global Burden of Disease Study 2010. Lancet. 2012;380:2197–223.

Lim SS, Vos T, Flaxman AD, Danaei G, Shibuya K, Adair-Rohani H, et al. A comparative risk assessment of burden of disease and injury attributable to 67 risk factors and risk factor clusters in 21 regions, 1990–2010: A systematic analysis for the Global Burden of Disease Study 2010. Lancet. 2012;380:2224–60.

Vos T, Flaxman AD, Naghavi M, Lozano R, Michaud C, Ezzati M, et al. Years lived with disability (YLDs) for 1160 sequelae of 289 diseases and injuries 1990–2010: A systematic analysis for the Global Burden of Disease Study 2010. Lancet. 2012;380:2163–96.

Ehrlich GE, Khaltaev NG. Low back pain initiative. The World Health Organisation. 1999. http://www.who.int/iris/handle/10665/66296. Accessed 10 Feb 2013.

Punnett L, Pruss-Utun A, Nelson DI, Fingerhut MA, Leigh J, Tak SW, Phillips S. Estimating the global burden of low back pain attributable to combined occupational exposures. Am J Ind Med. 2005;48(6):459–69.

Deyo RA, Rainville J, Kent DL. What can the history and physical examination tell us about low back pain? JAMA. 1992;268:760–5.

Ehrlich GE. Low back pain. Bull World Health Organ. 2003;81:671–6.

Burton AK, Balagué F, Cardon G, Eriksen HR, Henrotin Y, Lahad A, Leclerc A, Muller G, van der Beek AJ. Chapter 2. European guidelines for prevention in low back pain. Eur Spine J. 2006;15 Suppl 2:136–68.

Chou R, Qaseem A, Snow V, Casey D, Cross T, Shekelle P, Owens DK. Diagnosis and treatment of low back pain: A joint clinical practice guideline from the American College of Physicians and the American Pain Society. Ann Intern Med. 2007;147:478–91.

McCarthy CJ, Arnall FA, Strimpakos N, Freemont A, Oldham JA. The biopsychosocial classification of non-specific low back pain: A systematic review. Phys Ther Rev. 2004;9:17–30.

Burton AK, Waddell G. Clinical guidelines in the management of low back pain. Baillieres Clin Rheumatol. 1998;12(1):17–35.

Koes BW, van Tulder M, Lin C-WC, Macedo LG, McAuley J, Maher C. An updated overview of clinical guidelines for the management of non-specific low back pain in primary care. Eur Spine J. 2010;19:2075–94.

Spitzer WO, Abenhaim L, Dupuis M, Belanger AY, Bloch R, Bombardier C, et al. Scientific approach to the assessment and measurement of activity-related spinal disorders: A monograph for clinicians – Report of the Quebec Task Force on Spinal Disorders. Spine. 1987;12(Suppl):1–59.

Koes BW, van Tulder MW, Ostelo R, Burton AK, Waddell G. Clinical guidelines for the management of low back pain in primary care. Spine. 2001;26(22):2504–14.

Bishop PB, Wing PC. Compliance with clinical practice guidelines in family physicians managing worker’s compensation board patients with acute lower back pain. Spine J. 2003;3:442–50.

Scott NA, Moga C, Harstall C. Managing low back pain in the primary care setting: The know-do gap. Pain Res Manag. 2010;15(6):392–400.

Williams CM, Maher CG, Hancock MJ, McAuley JH, McLachlan AJ, Britt H, Fahridin S, Harrison C, Latimer J. Low back pain and best practice care: A survey of General Practice physicians. Arch Intern Med. 2010;170(3):271–7.

Pablos-Mendez A, Shademani R. Knowledge translation in global health. J Contin Educ Health Prof. 2006;26(1):81–6.

Brownson RC, Colditz GA, Proctor EK. Dissemination and Implementation Research in Health. Translating Science to Practice. 1st ed. New York: Oxford University Press; 2010.

Mitton C, Adair CE, McKenzie E, Patten SB, Perry BW. Knowledge transfer and exchange: Review and synthesis of the literature. Milbank Quarterly. 2007;85(4):729–68.

Ward V, House A, Hamer S. Developing a framework for transferring knowledge into action: A thematic analysis of the literature. Journal of Health Services Research and Policy. 2009;14(3):156–64.

Kerry S, Oakeshott P, Dundas D, Williams J. Influence of postal distribution of the Royal College of Radiologists’ guidelines together with feedback on radiological referral rates, on X-ray referrals from general practice: a randomised controlled trial. Fam Pract. 2000;17:46–52.

Schectman JM, Schroth WS, Verme D, Voss JD. Randomised controlled trial of education and feedback for implementation of guidelines for acute low back pain. J Gen Intern Med. 2003;18:773–80.

Eccles M, Steen N, Grimshaw J, Thomas L, McNamee P, Soutter J, Wilsdon J, Matowe L, Needham G, Gilbert F, Bond S. Effect of audit and feedback and reminder messages on primary-care radiology referrals: a randomised trial. Lancet. 2001;357:1406–9.

Matowe L, Ramsay CR, Grimshaw JM, Gilbert FJ, MacLeod M-J, Needham G. Effects of mailed dissemination of the Royal College of Radiologists’ guidelines on General Practitioner referrals for radiography: A time series analysis. Clin Radiol. 2002;57:575–8.

Bekkering GE, Hendriks HJM, van Tulder MW, Knol DL, Hoeijenbos M, Oostendorp RAB, Bouter LM. Effect on the process of care of an active strategy to implement clinical guidelines on physiotherapy for low back pain: a cluster randomised controlled trial. Qual Saf Health Care. 2005;14:107–12.

Engers AJ, Wensing M, van Tulder MW, Timmermans A, Oostendorp RAB, Koes BW, Grol R. Implementation of the Dutch Low Back Pain Guideline for General Practitioners. A cluster randomised controlled trial. Spine. 2005;30(6):595–600.

Bishop PB, Wing PC. Knowledge transfer in family physicians managing patients with acute low back pain: a prospective randomised control trial. Spine J. 2006;6:282–8.

Dey P, Simpson CWR, Collins SI, Hodgson G, Dowrick CF, Simison AJM, Rose MJ. Implementation of RCGP guidelines for acute low back pain: a cluster randomised controlled trial. Br J Gen Pract. 2004;54:33–7.

Stevenson K, Lewis M, Hay E. Does physiotherapy management of low back pain change as a result of an evidence-based educational programme? J Eval Clin Pract. 2006;12(3):365–75.

Goldberg HI, Deyo RA, Taylor VM, Cheadle AD, Conrad DA, Loeser JD, Heagerty PJ, Diehr P. Can evidence change the rate of back surgery? A randomized trial of community-based education. Eff Clin Pract. 2001;4:95–104.

McGuirk B, King W, Govind J, Lowry J, Bogduk N. Safety, efficacy and cost effectiveness of evidence-based guidelines for the management of acute low back pain in primary care. Spine. 2001;26(23):2615–22.

EPOC resources. Study designs accepted in EPOC reviews. 2012. http://epoc.cochrane.org/epoc-resources. Accessed 24 Feb 2013.

Centre for Reviews and Dissemination, University of York. Systematic Reviews. CRD’s guidance for undertaking reviews in health care. York Publishing Services Ltd., York. 2008. https://www.york.ac.uk/media/crd/Systematic_Reviews.pdf. Accessed 28 Feb 2013.

Moher D, Liberati A, Tetzlaff J, Altman DG. Preferred reporting items for systematic reviews and meta-analyses: the PRISMA statement. BMJ. 2009;339:332–6.

World Health Organisation: International Clinical Trials Registry Platform. 2012. http://www.who.int/ictrp/en/. Accessed 3 March 2013.

Lugtenberg M, Burgers JS, Westert GP. Effects of evidence-based clinical practice guidelines on quality of care: a systematic review. Qual Saf Health Care. 2009;18:385–92.

van der Wees PJ, Jamtvedt G, Rebbeck T, de Bie RA, Dekker J, Hendriks EJM. Multifaceted strategies may increase implementation of physiotherapy clinical guidelines: a systematic review. Aust J Physiother. 2008;54:233–41.

EPOC Resources: Risk of Bias – EPOC Specific. 2012. http://epoc.cochrane.org/epoc-resources. Accessed 4 Sept 2012.

Arditi C, Rège-Walther M, Wyatt JC, Durieux P, Burnand B. Computer-generated reminders delivered on paper to healthcare professionals: effects on professional practice and health care outcomes. Cochrane Database of Systematic Reviews. 2012. Issue 12. Art. No.: CD001175. doi:10.1002/14651858.CD001175.pub3.

Flodgren G, Parmelli E, Doumit G, Gattellari M, O’Brien MA, Grimshaw J, Eccles MP. Local opinion leaders: effects on professional practice and health care outcomes. Cochrane Database of Systematic Reviews. 2011. Issue 8. Art. No.: CD000125. doi:10.1002/14651858.CD000125.pub4.

Forsetlund L, Bjørndal A, Rashidian A, Jamtvedt G, O’Brien MA, Wolf FM, Davis D, Odgaard-Jensen J, Oxman AD. Continuing education meetings and workshops: effects on professional practice and health care outcomes. Cochrane Database of Systematic Reviews. 2012. Issue 2. Art. No.: CD003030. doi:10.1002/14651858.CD003030.pub2.

EPOC Resources: Data collection checklist. 2012. http://epoc.cochrane.org/epoc-resources. Accessed 4 Sept 2012.

Becker A, Leonhardt C, Kochen MM, Keller S, Wegscheider K, Baum E, Donner-Banzhoff N, Pfingsten M, Hildebrandt J, Basler H-D, Chenot JF. Effects of two guidelines implementation strategies on patient outcomes in primary care. Spine. 2008;33:473–80.

Bekkering GE, van Tulder MW, Hendriks EJM, Koopmanschap MA, Knol DL, Bouter LM, Oostendorp RAB. Implementation of clinical guidelines on physical therapy for patients with low back pain: Randomised trial comparing patient outcomes after a standard and active implementation strategy. Phys Ther. 2005;85:544–55.

Engers AJ, Wensing M, van Tulder MW, Timmermans AE, Oostendorp RAB, Koes BW, Grol R. Effect on patient outcomes of a brief, multifaceted educational intervention for general practitioners to implement low back pain guidelines: a randomised trial. Nijmegen University: A thesis submitted in partial fulfilment of the requirements of Nijmegen University for the degree of Doctor of Quality of Care Research; 2004.

Winkens RAG, Pop P, Bugter-Maessen AMA, Grol RPTM, Kester ADM, Beusmans GHMI, Knottnerus JA. Randomised controlled trial of routine individual feedback to improve rationality and reduce numbers of test requests. Lancet. 1995;345:498–502.

Ramsay CR, Eccles M, Grimshaw JM, Steen N. Assessing the long-term effect of educational reminder messages on primary care radiology referrals. Clin Radiol. 2003;58:319–21.

Petty RE, Cacioppo JT. The elaboration likelihood model of persuasion. Adv Exp Soc Psychol. 1986;19:123–205.

Baker SR, Rabin A, Lantos G, Gallagher EJ. The effect of restricting the indications for lumbosacral spine radiography in patients with acute back symptoms. Am J Radiol. 1987;149:535–8.

Grimshaw J, Freemantle N, Wallace S, Russell I, Hurwitz B, Watt I, Long A. Developing and implementing clinical practice guidelines. Qual Health Care. 1995;4:55–64.

Grol R, Wensing M, Eccles M. Improving patient care: the implementation of change in clinical practice. 1st ed. Butterworth, Heinemann, London: Elsevier; 2004.

Brown GT, Rodger S. Research utilization models: Frameworks for implementing evidence-based occupational therapy practice. Occup Ther Int. 1999;6:282–8.

Cusick A, McCluskey A. Becoming an evidence-based practitioner through professional development. Aust Occup Ther J. 2000;47:159–70.

Gagan M, Hewitt-Taylor J. The issues for nurses involved in implementing evidence in practice. Br J Nurs. 2004;13(20):1216–20.

Schers H, Wensing M, Huijsmans Z, van Tulder M, Grol R. Implementation barriers for General Practice. Guidelines on low back pain. A qualitative study. Spine. 2001;26(15):E348–53.

Michie S, Johnston M. Changing clinical behaviour by making guidelines specific. Br Med J. 2004;328:343–5.

Michie S, van Stralen MM, West R. The behaviour change wheel: A new method for characterising and designing behaviour change interventions. Implement Sci. 2011;6:42.

Eccles M, Grimshaw J, Walker A, Johnston M, Pitts N. Changing the behaviour of healthcare professionals: the use of theory in promoting the uptake of research findings. J Clin Epidemiol. 2005;58:107–12.

Cochrane Handbook for Systematic Reviews of Interventions. 2012. http://handbook.cochrane.org/. Accessed 29 Dec 2012.

Acknowledgements

The authors would like to acknowledge the support of the following:

Dan Franklin, Physiotherapy Manager, Back in Action Physiotherapy Ltd., London

Jo Jordan, Research Information Manager, Research Institute of Primary Care and Health Sciences, Keele University

Dr Rachel Gick, Health Librarian, Keele University

Kirsty Duncan, PhD Student, Research Institute of Primary Care and Health Sciences, Keele University.

Funding

This research was completed as part of a Masters degree in Neuromusculoskeletal Healthcare at Keele University, Staffordshire, England undertaken by the lead author, Simon Mesner, between 2009 and 2013. All costs relating to the Masters degree and hence the completion of this research, were met personally by Simon Mesner.

Nadine E Foster is supported through an NIHR Research Professorship (NIHR-RP-011-015). Simon French is supported by a Professorship from the Canadian Chiropractic Research Foundation. The views expressed in this publication are those of the authors and not necessarily those of the NHS, the NIHR or the Department of Health.

Availability of data and materials

All summarised datasets are reported in the results section and the tables.

Authors’ contributions

SAM was the lead author. He undertook this review as part fulfilment of a Masters degree in Neuromusculoskeletal Healthcare at Keele University. SAM collaborated on the conception of the review, undertook the background research to the review, led the development of the literature review, undertook the electronic database searches and hand searching, collated the results, decided on the key patterns in the results and wrote the first draft of the manuscript. NEF was the thesis lead supervisor to SAM. NEF collaborated on the conception of the review, reviewed and edited each section of the review except for the discussion and conclusion, reviewed the short list of potential studies to be included and decided any difference of opinion between SAM and SDF regarding final study inclusion. NEF reviewed and contributed to drafts of this manuscript and approved the final manuscript. SDF advised on study types to be included in the review, reviewed the long and short list of potential studies to be included and collaborated with SAM in deciding on the final studies to be included. SDF reviewed and contributed to drafts of this manuscript and approved the final manuscript.

Authors’ information

Simon Alexander Mesner, Clinical Specialist Chartered Physiotherapist, 502 Caspian Apartments, 5 Salton Square, London, England E14 7GJ, simonmesner@gmail.com

Nadine E Foster, NIHR Professor of Musculoskeletal Health in Primary Care, Arthritis Research UK Primary Care Centre, Institute of Primary Care and Health Sciences, Keele University, Keele, Staffordshire England ST5 5BG, n.foster@keele.ac.uk

Simon David French, Associate Professor, Canadian Chiropractic Research Foundation Professorship in Rehabilitation Therapy, School of Rehabilitation Therapy, Faculty of Health Sciences, Queen’s University, Kingston, Ontario, Canada; Senior Research Fellow, Centre for Health, Exercise and Sports Medicine, School of Health Sciences, The University of Melbourne, Melbourne, Victoria, Australia, simon.french@queensu.ca

Competing interests

The authors declare that they have no competing interests.

Consent to publication

Not applicable.

Ethics approval and consent to participate

This systematic review did not include new data collection from participants and as such research ethical approval and individual participant consent was not required.

Additional files

Additional file 1:

MEDLINE search. (DOCX 82 kb)

Additional file 2:

Suggested risk of bias criteria for EPOC reviews. (DOCX 96 kb)

Additional file 3:

Reasons for exclusion of the 136 full text papers. (DOCX 142 kb)

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The Creative Commons Public Domain Dedication waiver (http://creativecommons.org/publicdomain/zero/1.0/) applies to the data made available in this article, unless otherwise stated.

About this article

Cite this article

Mesner, S.A., Foster, N.E. & French, S.D. Implementation interventions to improve the management of non-specific low back pain: a systematic review. BMC Musculoskelet Disord 17, 258 (2016). https://doi.org/10.1186/s12891-016-1110-z
