Abstract

Background

Participants in voluntary research present a different demographic profile than those who choose not to participate, affecting the generalizability of many studies. Efforts to evaluate these differences have faced challenges, as little information is available from non-participants. Leveraging data from a recent randomized controlled trial that used health administrative databases in a jurisdiction with universal medical coverage, we sought to compare the quality of care provided by participating and non-participating physicians prior to the program’s implementation in order to assess whether participating physicians provided a higher baseline quality of care.

Methods

We conducted clustered regression analyses of baseline data from provincial health administrative databases. Participants included all family physicians who were eligible to participate in the Improved Delivery of Cardiovascular Care (IDOCC) project, a quality improvement project rolled out in a geographically defined region in Ontario (Canada) between 2008 and 2011. We assessed 14 performance indicators representing measures of access, continuity, and recommended care for cancer screening and chronic disease management.

Results

In unadjusted and patient-adjusted models, patients of IDOCC-participating physicians had higher continuity scores at the provider (Odds Ratio (OR) [95% confidence interval]: 1.06 [1.03–1.09]) and practice (1.06 [1.04–1.08]) levels, lower risk of emergency room visits (Rate Ratio (RR): 0.93 [0.88–0.97]) and hospitalizations (RR: 0.87 [0.77–0.99]), and were more likely to have received recommended diabetes tests (OR: 1.25 [1.06–1.49]) and screening for cervical cancer (OR: 1.32 [1.08–1.61]) and breast cancer (OR: 1.32 [1.19–1.46]) than patients of non-participating physicians. Some indicators remained statistically significant after adjusting for provider factors.

Conclusions

Our study demonstrated a participation bias for several quality indicators. Physician characteristics can explain some of these differences, but other underlying physician or practice attributes likely also influence both interest in participating in quality improvement initiatives and existing quality levels. The standard approach to addressing participation bias, controlling for basic physician- and practice-level variables, is inadequate for ensuring that results are generalizable to primary care providers and practices.

Background

Voluntary participation is a hallmark of almost all research studies. This approach to recruiting participants can lead to a situation where those enrolling are different from those who refuse to participate. It is important to understand the differences between the participating and non-participating groups to evaluate the generalizability and relevance of a study’s conclusions. Comparisons of the groups are typically very limited because very little information is available from those who chose not to participate. Investigators must respect the individual’s decision not to participate and cannot survey them to collect data that would extend comparisons with those who agreed to participate. Publicly available information is often used to show that the groups differ, but these comparisons are typically limited to simple demographic information. [1] This is particularly problematic when no information is available to characterize those who could benefit the most from the intervention. In many situations the physicians who most need the help are the least likely to participate; thus, the conclusions of the studies may not apply to them. [2]

There is evidence that family physicians who agree to participate in research are different from those who do not. Participants are more likely to be female, [3] younger, [3, 4] and graduates of local (as opposed to international) medical schools. [1] They are more likely to practice in rural areas, [4] under a salaried model, in larger practices, and to spend more hours per week providing direct patient care. [5] It has long been suspected that low-performing physicians are less likely to volunteer to participate in studies. However, because studies only have access to recruitment data for non-participants, our current knowledge is limited to what can be discerned from readily available practice and provider recruitment information.

To explore this issue, we drew on data from the Improved Delivery of Cardiovascular Care (IDOCC) study, a pragmatic, voluntary, regional quality improvement (QI) research initiative conducted between 2008 and 2010 that aimed to improve the delivery of cardiovascular care. Using data from population-based administrative health databases, we compared the quality of care provided by participating and non-participating physicians before the IDOCC program’s implementation to assess whether physicians who participated in that QI project provided higher quality care than those who did not. We considered 14 well-established quality of care outcomes that capture cancer screening, access, continuity, and chronic disease management. [6, 7]

Methods

The IDOCC study

IDOCC was a stepped wedge, multifaceted, pragmatic, QI research study designed to assist primary care providers to improve their delivery of evidence-based care for the secondary prevention of cardiovascular disease. [8, 9] The trial was retrospectively registered on Clinicaltrials.gov on December 17, 2007 (https://clinicaltrials.gov/ct2/show/NCT00574808?id=NCT00574808&rank=1).

Context and design

We conducted a cross-sectional evaluation of baseline data for the IDOCC project, a voluntary QI research initiative in the Champlain Local Health Integration Network (LHIN), Ontario, Canada. Care for the region’s population of 1.2 million is covered by a tax-supported universal health care system.

Recruitment

The program was open to active family physicians providing general services within the Champlain LHIN in Eastern Ontario. Recruitment followed the Dillman approach, [10] with invitation letters explaining what was expected of participants, the role of the practice facilitator, the potential benefit to patients, and participants’ eligibility to claim continuing professional development credits. No financial compensation or other incentives were provided.

We made every effort to reach all primary care practices within the region, relying on various physician listings and a snowball approach to identify all potentially eligible family practices. Recruitment efforts were extensive and were discontinued only once the target of 30 practices in each sub-region had been reached or resources were exhausted. Recruitment letters were sent to the 533 primary care practices identified in the Champlain LHIN, inviting all eligible physicians to participate. Eligibility was restricted to practices that had been operating for at least 2 years, so that adequate data were available for the indicator measurements, and to family physicians who provided comprehensive primary care to a panel of patients and were not planning to retire within the two-year intervention timeframe. Family physicians agreeing to participate signed an informed consent form, which included permission to access their provincial health administrative data held at the Institute for Clinical Evaluative Sciences (ICES).

Ninety-nine practices were excluded due to non-eligibility (not providing comprehensive care, providing walk in services only or having been in place less than 2 years). Of the 434 eligible practices, 93, including 194 physicians, agreed to participate. Ten practices withdrew prior to the initiation of the intervention, primarily because of competing priorities, leaving 83 participating practices (19%) and 182 physicians.
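The recruitment counts above can be cross-checked with a few lines of arithmetic. This is a minimal Python sketch that uses only the numbers reported in this paragraph; the variable names are illustrative, and the number of physicians lost through withdrawal is inferred from the difference between 194 and 182.

```python
# Practice recruitment flow, using the counts reported in the text.
identified_practices = 533
ineligible_practices = 99
agreed_practices = 93
withdrawn_practices = 10

eligible_practices = identified_practices - ineligible_practices   # 434
participating_practices = agreed_practices - withdrawn_practices   # 83
participation_rate = participating_practices / eligible_practices  # about 0.19

# Physicians: 194 agreed; 182 remained after the practice withdrawals.
agreed_physicians = 194
remaining_physicians = 182
physicians_lost_to_withdrawal = agreed_physicians - remaining_physicians

print(f"Eligible practices: {eligible_practices}")
print(f"Participating practices: {participating_practices} ({participation_rate:.0%})")
print(f"Physicians lost through practice withdrawal: {physicians_lost_to_withdrawal}")
```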

Dataset creation

We relied on data housed at ICES, a not-for-profit research institute that holds and links several health-related billing databases for Ontario, from which patient and physician profiles and performance measures can be obtained. Encounters with the healthcare system for all LHIN residents are captured in these databases, except those taking place in Community Health Centres (CHCs), a salaried model in which family physicians do not bill for services. The 35 participating CHC physicians were therefore excluded from the evaluation, leaving 147 eligible physicians. The datasets were linked using unique encoded identifiers and analyzed at ICES.

To the extent possible, the health administrative dataset was created to mirror the eligibility criteria imposed in the study. The data set from this study is held securely in coded form at ICES. While data sharing agreements prohibit ICES from making the data set publicly available, access may be granted to those who meet pre-specified criteria for confidential access, available at www.ices.on.ca/DAS. The authors did not have special access privileges. Interested researchers would be able to access the data in the same manner as the authors.

Physicians

Target population (see Table 1): We identified all physicians who practiced within the Champlain LHIN using the practice postal code information contained in the ICES Physician Database (IPDB). We then applied to those physicians the same exclusion criteria as would have been applied in the intervention study. We excluded physicians recorded in the IPDB as having a specialty other than “General Practice” or “Family Practice with Emergency Medicine”. Applying these criteria at the IDOCC start date (April 2007) identified 1340 physicians: 1201 IDOCC non-participants and 139 IDOCC participants. We extended the eligibility period to 2009 so that younger physicians who participated in IDOCC could be captured; this identified an additional 182 physicians, including the remaining 8 IDOCC physicians who engaged in Step III (April 2009).

We limited the dataset to physicians whose billing patterns demonstrated that they provided general, comprehensive primary care (having billed at least eight of the 21 comprehensive primary care fee schedule codes during the past 2 years). This led to the exclusion of 400 physicians, including three IDOCC physicians who were new graduates working under capitation remuneration; such physicians receive a fixed income, independent of clinical activity, for 2 years while they establish a practice panel. During this “income stabilization” period, physicians do not bill for their activities and therefore would not meet that criterion. Although we know that these three physicians did provide comprehensive care, they were excluded to ensure that the same criteria were applied to both groups.

In Ontario, the median patient panel size is approximately 1,400. We excluded physicians with fewer than 100 patients because such outliers likely provided walk-in services only, were near retirement, or were new graduates who had not yet built their panel, and in any case had too few patients to be evaluated adequately.
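The two physician-level rules above (at least eight of the 21 comprehensive care fee codes billed over 2 years, and at least 100 patients) can be expressed as a simple filter. This is a hypothetical sketch: the codes `CODE01` to `CODE21` are placeholders rather than the actual OHIP fee schedule codes, and the `Physician` record is an assumed structure, not the ICES data model.

```python
from dataclasses import dataclass, field

# HYPOTHETICAL placeholder codes standing in for the 21 comprehensive primary
# care fee schedule codes; the real code list is not reproduced here.
COMPREHENSIVE_CARE_CODES = frozenset(f"CODE{i:02d}" for i in range(1, 22))
MIN_DISTINCT_CODES = 8   # at least 8 of the 21 codes billed over the 2-year window
MIN_PANEL_SIZE = 100     # physicians with fewer than 100 patients are excluded


@dataclass
class Physician:
    physician_id: str
    billed_codes: set = field(default_factory=set)  # distinct codes billed in 2 years
    panel_size: int = 0                              # number of attributed patients


def provides_comprehensive_care(doc: Physician) -> bool:
    """True if the physician billed at least 8 distinct comprehensive care codes."""
    return len(doc.billed_codes & COMPREHENSIVE_CARE_CODES) >= MIN_DISTINCT_CODES


def is_eligible(doc: Physician) -> bool:
    """Both criteria must hold for a physician to remain in the analysis."""
    return provides_comprehensive_care(doc) and doc.panel_size >= MIN_PANEL_SIZE


physicians = [
    Physician("A", {f"CODE{i:02d}" for i in range(1, 11)}, panel_size=1400),  # 10 codes
    Physician("B", {"CODE01", "CODE02", "CODE03"}, panel_size=1200),         # too few codes
    Physician("C", {f"CODE{i:02d}" for i in range(1, 9)}, panel_size=60),    # 8 codes, small panel
    Physician("D", set(), panel_size=900),  # no billings, as during income stabilization
]
eligible_ids = [p.physician_id for p in physicians if is_eligible(p)]
print(eligible_ids)
```

Physician D illustrates why new graduates on an income-stabilization arrangement fail the billing rule even when they do provide comprehensive care.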

Time period: Baseline characteristics were captured for the 2 years prior to the IDOCC April 2007 recruitment start date (April 2005–March 2007) for all physicians who met the criteria on that date. For the physicians identified in 2009, baseline characteristics were evaluated for the two preceding years (April 2007–March 2009).
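The baseline window is the 2 years immediately preceding each physician's index date. A minimal sketch follows; it assumes the index date is April 1 of the recruitment year, since the text gives only the month.

```python
from datetime import date, timedelta


def baseline_window(index_date: date, years: int = 2) -> tuple[date, date]:
    """Return (start, end) of the baseline period: the `years` years ending the day
    before `index_date`. Assumes index_date is not February 29."""
    start = index_date.replace(year=index_date.year - years)
    end = index_date - timedelta(days=1)
    return start, end


# Index dates assumed to be April 1 of the recruitment year.
for index in (date(2007, 4, 1), date(2009, 4, 1)):
    start, end = baseline_window(index)
    print(f"index {index}: baseline {start} to {end}")
```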

Physician profile: We used the Corporate Provider Database and the IPDB to obtain information on physician characteristics, including sex, age, having received medical training abroad, remuneration (fee-for-service vs. capitation), team structure (non-interprofessional vs. interprofessional), practice rurality, and practice group size at the end of the two-year period.

Patients

Patients officially registered to a physician were identified using the Client Agency Program Enrolment database and were attributed to that physician. Approximately 20% of the population is not formally registered to the family physician from whom they receive care; these individuals were “virtually” attributed to the family physician who provided the largest dollar amount of services over the baseline two-year period. [11, 12] Information on patient characteristics, including age, sex, and rurality, was drawn from Ontario’s Registered Persons Database. Income quintile was derived from the patients’ postal code using aggregate census data. Patients who acquired Ontario Health Insurance Program (OHIP) coverage at a time other than birth were deemed to be immigrants.
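The attribution rule described above (formal enrolment first, otherwise the family physician who billed the largest dollar amount over the baseline period) can be sketched as follows. The input structures are hypothetical; the sketch assumes `billings` contains only family physician primary care services, and it breaks ties by first occurrence, which the text does not specify.

```python
from collections import defaultdict


def attribute_patients(registrations, billings):
    """Attribute each patient to one family physician.

    registrations: dict mapping patient_id -> physician_id for formally enrolled patients.
    billings: iterable of (patient_id, physician_id, dollar_amount) tuples covering
        family physician primary care services in the 2-year baseline (assumed input).
    Returns a dict mapping patient_id -> physician_id.
    """
    attribution = dict(registrations)  # formal enrolment takes precedence
    totals = defaultdict(lambda: defaultdict(float))
    for patient, physician, amount in billings:
        if patient not in registrations:
            totals[patient][physician] += amount
    for patient, by_physician in totals.items():
        # "Virtual" attribution: the physician with the largest billed dollar amount.
        # Ties go to the first physician encountered (the text does not specify a rule).
        attribution[patient] = max(by_physician, key=by_physician.get)
    return attribution


registrations = {"p1": "drA"}
billings = [
    ("p1", "drB", 500.0),  # ignored: p1 is formally enrolled with drA
    ("p2", "drA", 120.0),
    ("p2", "drB", 80.0),
    ("p2", "drA", 40.0),   # drA total for p2 is 160.0
    ("p3", "drB", 60.0),
]
attribution_result = attribute_patients(registrations, billings)
print(attribution_result)
```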

Quality of care measures

We considered 14 quality of care outcomes spanning four categories (cancer screening, access, continuity, and chronic disease management). These indicators are widely accepted as reflecting primary care performance and are commonly used for that purpose. [6, 7] The cancer screening outcomes captured whether or not patients had undergone the screening test for which they were eligible (Ontario Breast Screening Program, OHIP laboratory fees). Access outcomes captured the rate of emergency room visits per year, both overall and for low urgency (National Ambulatory Care Reporting System), and the rate of hospital admissions for ambulatory care sensitive conditions (Discharge Abstract Database). Because the likelihood of using the emergency room and of being hospitalized is strongly tied to rurality, [13] we limited the evaluation of these measures to patients living in urban regions (Rurality Index of Ontario < 10). Continuity outcomes were the mean Usual Provider Care Index and the Practice Care Index, which represent the proportion of patients’ primary care visits made to their designated provider and within the practice of their designated provider, respectively. Chronic disease management outcomes captured whether or not patients received care (medication and tests) according to recommended guidelines. Tests were obtained from OHIP billings, and medications from the Ontario Drug Benefit database, which contains prescription information for individuals whose medications are covered by provincial insurance (those on social assistance and all those > 65 years). Additional details on these indicators are presented in Additional file 1.
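The two continuity measures are simple proportions of a patient's primary care visits. This sketch computes them per patient and then averages across patients. The input format is hypothetical, and two choices are assumptions rather than details from the text: patients with no primary care visits are left out of the mean, and the practice membership set includes the designated provider.

```python
from statistics import mean


def continuity_indices(visits, designated_provider, practice_members):
    """Return (usual_provider_index, practice_index) for one patient, or None.

    visits: provider IDs, one entry per primary care visit in the baseline period.
    designated_provider: the patient's enrolled or virtually attributed physician.
    practice_members: IDs of physicians in that physician's practice (including them).
    """
    if not visits:
        return None  # assumption: patients without visits are left out of the mean
    n = len(visits)
    to_provider = sum(1 for v in visits if v == designated_provider)
    to_practice = sum(1 for v in visits if v in practice_members)
    return to_provider / n, to_practice / n


patients = [
    (["drA", "drA", "drC", "drX"], "drA", {"drA", "drC"}),  # 2/4 provider, 3/4 practice
    (["drB", "drB"], "drB", {"drB"}),                        # solo practice, full continuity
    ([], "drA", {"drA", "drC"}),                             # no visits: excluded
]
scores = [s for s in (continuity_indices(*p) for p in patients) if s is not None]
mean_upc = mean(s[0] for s in scores)
mean_practice = mean(s[1] for s in scores)
print(f"mean Usual Provider Care Index: {mean_upc:.3f}")
print(f"mean Practice Care Index: {mean_practice:.3f}")
```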

Analyses

We described the patient profile, provider characteristics and quality of care measures for participating (IDOCC) and non-participating (Non-IDOCC) physicians. We conducted hierarchical regressions with patients nested within providers for access (Poisson), continuity (linear), and chronic disease management (logistic) indicators. For each quality of care indicator, we used a stepped regression approach to assess differences across IDOCC’s participants and non-participants. We evaluated the relationship without adjustment (Model 1), accounted for patient factors (Model 2), and then added physician factors (Model 3) in the regression model. Model 2 allowed a “fairer” comparison between the groups, while Model 3 allowed us to understand the impact of provider factors on the differences in performance across groups.
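The stepped approach fits three nested models for each indicator. The sketch below only writes out the model specifications; it does not fit them. The covariate names are hypothetical stand-ins for the factors listed under Patients and Physician profile, the random-intercept term uses lme4-style notation to express patients nested within physicians, and treating cancer screening as logistic is an assumption based on its results being reported as odds ratios.

```python
# Hypothetical covariate names, grouped as described in the Methods.
PATIENT_FACTORS = ["patient_age", "patient_sex", "income_quintile",
                   "patient_rurality", "immigrant"]
PHYSICIAN_FACTORS = ["md_sex", "md_age", "foreign_trained", "capitation",
                     "interprofessional_team", "practice_rurality", "group_size"]

# Outcome families named in the Methods; cancer screening is assumed logistic.
FAMILY_BY_DOMAIN = {
    "access": "poisson",
    "continuity": "gaussian",
    "chronic_disease": "binomial",
    "cancer_screening": "binomial",  # assumption
}

RANDOM_EFFECT = "(1 | physician_id)"  # patients nested within physicians


def stepped_formulas(outcome: str) -> dict[str, str]:
    """Return the three nested model specifications for one outcome."""
    model1 = ["idocc"]                   # unadjusted participation effect
    model2 = model1 + PATIENT_FACTORS    # + patient factors
    model3 = model2 + PHYSICIAN_FACTORS  # + physician factors
    return {
        f"model_{i}": f"{outcome} ~ {' + '.join(terms)} + {RANDOM_EFFECT}"
        for i, terms in enumerate((model1, model2, model3), start=1)
    }


for name, formula in stepped_formulas("er_visits").items():
    print(name, formula)
```

Because each model only adds terms to the previous one, any change in the `idocc` coefficient between Models 2 and 3 can be read as the part of the participation difference associated with the added physician factors.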

Results

Table 2 reports descriptive statistics for the two groups. Capitation-based practices make up 13% of all Champlain practices, but 37% of participating practices. Similarly, family physicians working under the capitation model (45% vs. 18%), in rural practices (19% vs. 10%), as well as female physicians (55% vs. 47%), non-solo practitioners (92% vs. 73%), and to a lesser extent Canadian-trained physicians (88% vs. 83%) and physicians with larger mean panel sizes (1,239 (1,154–1,324) vs. 1,109 (1,061–1,158)) were more represented among IDOCC participants. Patient profiles differed little across groups.

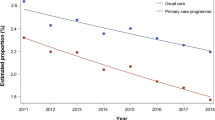

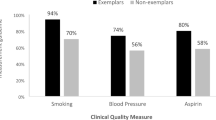

Table 3 reports the estimated ratios measuring differences in quality of care outcomes between participating and non-participating physicians using the three modelling approaches described above. Additional file 2 provides the complete regression results for the 14 outcomes considered. The direction of effect for all quality indicators favoured IDOCC physicians providing better care at baseline. After accounting for patient factors, the difference was statistically significant for two indicators within each dimension: 1) Cancer screening: cervical (Odds Ratio (OR) [95% confidence interval]: 1.32 [1.08–1.61]) and breast (OR: 1.32 [1.19–1.46]); 2) Access: emergency room visits (Rate Ratio (RR): 0.93 [0.88–0.97]) and hospitalizations for ambulatory care sensitive conditions (RR: 0.87 [0.77–0.99]); 3) Chronic disease management: eye exam (OR: 1.15 [1.06–1.25]) and glycated haemoglobin test (OR: 1.25 [1.06–1.49]); and 4) Continuity: provider (OR: 1.06 [1.03–1.09]) and practice (OR: 1.06 [1.04–1.08]) level.
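Because the ratios are reported with 95% confidence intervals, an approximate standard error and two-sided p-value can be recovered from the log-transformed interval, assuming the intervals were computed as exp(log estimate ± 1.96 × SE). The recovered values are only approximate because the published bounds are rounded; this is an illustrative check, not part of the authors' analysis.

```python
import math

Z_975 = 1.959964  # 97.5th percentile of the standard normal distribution


def ci_to_z(estimate, lower, upper):
    """Approximate log-scale SE, z statistic and two-sided p-value for a ratio,
    assuming its 95% CI was computed symmetrically on the log scale."""
    se = (math.log(upper) - math.log(lower)) / (2 * Z_975)
    z = math.log(estimate) / se
    p = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return se, z, p


# Patient-adjusted (Model 2) estimates reported in the paragraph above.
REPORTED = [
    ("Cervical screening (OR)", 1.32, 1.08, 1.61),
    ("Breast screening (OR)", 1.32, 1.19, 1.46),
    ("Emergency room visits (RR)", 0.93, 0.88, 0.97),
    ("ACSC hospitalizations (RR)", 0.87, 0.77, 0.99),
]
for label, est, lo, hi in REPORTED:
    se, z, p = ci_to_z(est, lo, hi)
    print(f"{label}: SE(log)={se:.3f}, z={z:.2f}, p~{p:.3f}, "
          f"relative change in odds/rate {est - 1:+.0%}")
```

For example, an RR of 0.93 corresponds to roughly 7% fewer emergency room visits among patients of participating physicians.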

Adjusting for physician factors had little effect on access (RR for emergency room visits: 0.93 [0.88–0.98] and hospitalizations for ambulatory care sensitive conditions: 0.85 [0.75–0.97]) or continuity (OR for provider: 1.06 [1.03–1.09] and practice: 1.04 [1.02–1.06]) measures, but attenuated the effect observed in cancer screening (OR for cervical: 1.16 [1.01–1.34] and breast: 1.15 [1.05–1.26]) and rendered the chronic disease management indicators non-significant. In these analyses, every physician factor included had a statistically significant association with at least one quality of care indicator (Additional file 2). Female physicians were associated with better quality of care on all but one indicator. Physicians who were younger, received their training in Canada, and belonged to group practices were usually, but not consistently, associated with better quality of care (see Additional file 2).
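One way to gauge how much the physician factors attenuated each association is the share of the Model 2 log-ratio that disappears in Model 3. This is an illustrative calculation on the rounded point estimates reported above, not a statistic reported by the authors; a negative value means the association strengthened after adjustment.

```python
import math


def log_attenuation(model2_ratio, model3_ratio):
    """Share of the Model 2 log-ratio that disappears after adding physician factors.

    1.0 = association fully explained, 0.0 = unchanged, negative = strengthened.
    """
    if math.isclose(model2_ratio, 1.0):
        raise ValueError("Model 2 ratio of 1 has no association to attenuate")
    return 1 - math.log(model3_ratio) / math.log(model2_ratio)


# (Model 2, Model 3) point estimates reported in the Results.
PAIRS = {
    "Cervical screening (OR)": (1.32, 1.16),
    "Breast screening (OR)": (1.32, 1.15),
    "Emergency room visits (RR)": (0.93, 0.93),
    "ACSC hospitalizations (RR)": (0.87, 0.85),
    "Practice continuity (OR)": (1.06, 1.04),
}
for label, (m2, m3) in PAIRS.items():
    print(f"{label}: {log_attenuation(m2, m3):+.0%} of the log-ratio attenuated")
```

On these rounded figures, roughly half of the screening associations is absorbed by physician factors, while the access associations are essentially unchanged, which matches the pattern described in the text.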

Discussion

To our knowledge, this study is the first to demonstrate that primary care physicians who agree to participate in a voluntary QI research initiative provide a higher quality of care at baseline than those who do not. After accounting for patient characteristics, all 14 quality of primary care indicators covering the four dimensions studied favoured the IDOCC group, with eight reaching statistical significance.

The observed differences in family physician profiles across the two groups were largely consistent with previous publications. IDOCC participants were more likely to be female, [3] and work in rural, [4] non-solo, [4, 5] capitation practices. [14] They had a larger clinical load [5], were less likely to be foreign trained, [1] but did not differ in age, [3, 4] or the profile of their patients.

Some of these characteristics have previously been associated with better quality of care. Female family physicians are reported to provide better chronic disease prevention and management. [15,16,17,18] A study conducted by members of our team demonstrated fewer emergency room visits and hospitalizations among patients receiving their care from female family physicians. [19] Similarly, there is some evidence of better outcomes in larger practices and in practices whose remuneration is capitation-based. [20,21,22] Including all measured physician characteristics attenuated some of the observed differences, suggesting that differences in physician profile likely mediate part of the disparity in performance across the groups; however, it did not eliminate these differences. The inclusion of physician characteristics had little impact on the continuity measures or on access measures such as the risk of emergency room visits and hospitalizations. These results suggest that other, unmeasured provider- or practice-level attributes may affect both the likelihood of agreeing to participate in a quality improvement program and the quality of care delivered.

Studies have suggested that practices’ attributes influence their providers’ likelihood of participating in QI initiatives. Practices are more likely to participate in QI initiatives when they have less hierarchical leadership structures, provide supportive or less stressful working environments, encourage innovation, value collaboration, or have a quality champion. [23,24,25,26,27,28] A recent review describing the barriers that limit physicians’ participation in innovations suggested that, in addition to the well-documented issues of competing priorities and limited resources, physicians who do not engage in innovations may be unwilling to expend effort on a yet unproven approach. [29]

Voluntary QI research studies face a challenge due to a ceiling effect, as the physicians most inclined to participate often have the least room to improve their delivery of care. Consequently, QI programs rarely achieve meaningful impact. [30] A systematic review found that QI initiatives aimed at improving diabetic control were most effective in practices that initially demonstrated poor performance. [31]

Recruitment strategies need to reach the physicians and practices most likely to benefit from QI initiatives. However, more aggressive recruitment strategies or mandatory participation alone are unlikely to be sufficient, as improving quality often requires practice members to invest time and commitment. [32] Physicians disinclined to participate may be less invested in the initiative and achieve little improvement despite having access to the knowledge and resources provided by the QI program. The notion that a systematic approach to quality improvement is a fundamental part of practice remains relatively new, and it may take some years before it becomes part of most physicians’ professional identity. [5]

Study limitations

We relied on a pragmatic trial implemented across a geographically diverse region in which over 1,000 family physicians working in different primary care models deliver care to approximately 1.2 million individuals. The study achieved participation rates comparable to other QI research programs. We used health administrative data to conduct a population-based evaluation capturing a broad range of quality of care outcomes. Because diabetes is highly prevalent and the guidelines for its management are well established and easily measured using health administrative data, it is commonly used to assess the quality of chronic disease care in population-based studies; a broader set of indicators related to chronic conditions would have provided a more in-depth assessment. We were not able to include CHCs in our comparisons, and these made up nearly one quarter of our participants. The quality of care measures we assessed and the patient and provider profiles we analyzed were limited to those available in health administrative data. Factors that contribute to a physician’s decision to participate or not in a QI initiative may be related to the healthcare system context in which they operate, so the results of this study may not be entirely generalizable to other contexts.

Conclusions

The physicians who voluntarily agreed to participate in the IDOCC study differed in several ways from those who did not. Family physicians who agreed to participate provided a higher quality of care at baseline than those who did not. While characteristics of participating physicians can explain some of these differences, other underlying physician or practice attributes are also likely to influence both interest in QI initiatives and existing quality levels. QI initiatives may therefore not be adequately reaching their target population. Our results may help policymakers develop effective recruitment and engagement strategies for similar programs, with the aim of enhancing their efficiency and cost-effectiveness.

It is important to understand the differences between participating and non-participating physicians in order to evaluate the generalizability and relevance of the conclusions of studies that rely on voluntary participation. Comparisons of the groups are typically very limited because very little information is available from those who choose not to participate. In this particular circumstance, where we were able to compare participants and non-participants much more extensively than usual, we found important differences suggesting that the physicians who most need help were the least likely to participate. Further research is warranted to determine how widespread participation bias arising from voluntary research participation is.

Availability of data and materials

The datasets used and/or analysed during the current study are available from the corresponding author on reasonable request.

Abbreviations

- ICES: Institute for Clinical Evaluative Sciences
- IDOCC: Improved Delivery of Cardiovascular Care
- IPDB: ICES Physician Database
- LHIN: Local Health Integration Network
- OHIP: Ontario Health Insurance Program
- QI: Quality Improvement

References

1. Wall TC, Mian MA, Ray MN, Casebeer L, Collins BC, Kiefe CI, et al. Improving physician performance through internet-based interventions: who will participate? J Med Internet Res. 2005;7:e48.

2. van Heuvelen MJG, Hochstenbach JBM, Brouwer WH, de Greef MHG, Zijlstra GAR, van Jaarsveld E, et al. Differences between participants and non-participants in an RCT on physical activity and psychological interventions for older persons. Aging Clin Exp Res. 2005;17:236–45.

3. Borgiel AE, Williams JI, Davis DA, Dunn EV, Hobbs N, Hutchison B, et al. Evaluating the effectiveness of 2 educational interventions in family practice. CMAJ. 1999;161:965–70.

4. Shelton BJ, Wofford JL, Gosselink CA, McClatchey MW, Brekke K, Conry C, et al. Recruitment and retention of physicians for primary care research. J Community Health. 2002;27:79–89.

5. Audet AM, Doty MM, Shamasdin J, Schoenbaum SC. Measure, learn, and improve: physicians' involvement in quality improvement. Health Aff (Millwood). 2005;24:843–53.

6. Dahrouge S, Hogg W, Younger J, Muggah E, Russell G, Glazier RH. Primary care physician panel size and quality of care: a population-based study in Ontario, Canada. Ann Fam Med. 2016;14:26–33.

7. Jaakkimainen L, Klein-Geltink JE, Guttmann A, Barnsley J, Zagorski BM, Kopp A, et al. Indicators of primary care based on administrative data. In: Primary Care in Ontario. Institute for Clinical Evaluative Sciences; 2006:227.

8. Liddy C, Hogg W, Russell G, Wells G, Armstrong C, Akbari A, et al. The improved delivery of cardiovascular care (IDOCC) through outreach facilitation: study protocol and implementation details of a cluster randomized controlled trial in primary care. Implement Sci. 2011 (in press).

9. Liddy C. A real-world stepped wedge cluster randomized trial of practice facilitation to improve cardiovascular care. Implement Sci. 2015;10:150.

10. Dillman DA. Mail and telephone surveys: the total design method. New York: Wiley; 1978.

11. Reid R, Bogdanovic B, Roos NP, Black C, MacWilliam L, Menec V. Do some physician groups see sicker patients than others? Implications for primary care policy in Manitoba. Manitoba Centre for Health Policy and Evaluation, University of Manitoba; 2001.

12. Wranik DW, Durier-Copp M. Physician remuneration methods for family physicians in Canada: expected outcomes and lessons learned. Health Care Anal. 2010;18:35–59.

13. Hossain MM, Laditka JN. The influence of rurality on the volume of non-urgent emergency department visits. Spat Spatiotemporal Epidemiol. 2011;2.

14. Borgiel AE, Dunn EV, Lamont CT, MacDonald PJ, Evensen MK, Bass MJ, et al. Recruiting family physicians as participants in research. Fam Pract. 1989;6:168–72.

15. Eisinger F, Pivot X, Coscas Y, Viguier J, Calazel-Benque A, Blay JY, et al. Impact of general practitioners’ sex and age on systematic recommendation for cancer screening. Eur J Cancer Prev. 2011;20:Suppl–41.

16. Ince-Cushman D, Correa JA, Shuldiner J, Segouin J. Association of primary care physician sex with cervical cancer and mammography screening. Can Fam Physician. 2013;59:e11–8.

17. Lurie N, Slater J, McGovern P, Ekstrum J, Quam L, Margolis K. Preventive care for women: does the sex of the physician matter? N Engl J Med. 1993;329:478–82.

18. Baumhakel M, Muller U, Bohm M. Influence of gender of physicians and patients on guideline-recommended treatment of chronic heart failure in a cross-sectional study. Eur J Heart Fail. 2009;11:299–303.

19. Dahrouge S, Seale E, Hogg W, Russell G, Younger J, Muggah E, et al. A comprehensive assessment of family physician gender and quality of care: a cross-sectional analysis in Ontario, Canada. Med Care. 2016.

20. Beaulieu MD, Haggerty J, Tousignant P, Barnsley J, Hogg W, Geneau R, et al. Characteristics of primary care practices associated with high quality of care. Can Med Assoc J. 2013;185:E590–6.

21. Kiran T, Kopp A, Moineddin R, Glazier RH. Longitudinal evaluation of physician payment reform and team-based care for chronic disease management and prevention. CMAJ. 2015;187:E494–502.

22. Nodora JN, Martz WD, Ashbeck EL, Jacobs ET, Thompson PA, Martinez ME. Primary care physician compliance with colorectal cancer screening guidelines. Cancer Causes Control. 2011;22:1277–87.

23. Elovainio M, Steen N, Presseau J, Francis J, Hrisos S, Hawthorne G, et al. Is organizational justice associated with clinical performance in the care for patients with diabetes in primary care? Evidence from the improving quality of care in diabetes study. Fam Pract. 2013;30:31–9.

24. Hung DY, Glasgow RE, Dickinson LM, Froshaug DB, Fernald DH, Balasubramanian BA, et al. The chronic care model and relationships to patient health status and health-related quality of life. Am J Prev Med. 2008;35:Suppl–406.

25. Luxford K, Safran DG, Delbanco T. Promoting patient-centered care: a qualitative study of facilitators and barriers in healthcare organizations with a reputation for improving the patient experience. Int J Qual Health Care. 2011;23.

26. Quinn MA, Wilcox A, Orav EJ, Bates DW, Simon SR. The relationship between perceived practice quality and quality improvement activities and physician practice dissatisfaction, professional isolation, and work-life stress. Med Care. 2009;47:924–8.

27. Rushmer R, Kelly D, Lough M, Wilkinson JE, Davies HT. Introducing the learning practice, I: the characteristics of learning organizations in primary care. J Eval Clin Pract. 2004;10:375–86.

28. Siriwardena AN. Engaging clinicians in quality improvement initiatives: art or science? Qual Prim Care. 2009;17:303–5.

29. Holmboe ES, Cassel CK. The role of physicians and certification boards to improve quality. Am J Med Qual. 2007;22:18–25.

30. Schouten LM, Hulscher ME, van Everdingen JJ, Huijsman R, Grol RP. Evidence for the impact of quality improvement collaboratives: systematic review. BMJ. 2008;336:1491–4.

31. Tricco AC, Ivers NM, Grimshaw JM, Moher D, Turner L, Galipeau J, et al. Effectiveness of quality improvement strategies on the management of diabetes: a systematic review and meta-analysis. Lancet. 2012;379:2252–61.

32. Hogg W, Baskerville N, Nykiforuk C, Mallen D. Improved preventive care in family practices with outreach facilitation: understanding success and failure. J Health Serv Res Policy. 2002;7:195–201.

Acknowledgements

Thank you to Justin Joschko and Ilisha French for assistance with editing and finalizing the manuscript.

Funding

Primary Health Care Services program of the Ontario Ministry of Health and Long-Term Care (MOHLTC), Champlain Cardiovascular Disease Prevention Network (partly supported through unrestricted funding from Pfizer Canada), Canadian Institutes of Health Research, and The Ottawa Hospital Academic Medical Organization’s Innovation Fund. None of these funding sources had a role in the design of this sub-study, the collection, analysis, and interpretation of data, or the writing of the manuscript. This study was supported by the Institute for Clinical Evaluative Sciences (ICES), which is funded by an annual grant from the Ontario MOHLTC.

Author information

Contributions

SD conceptualized the sub-study and led the analysis and writing of the paper. CDA participated in the analysis and writing of the paper. WH conceived of the original study and sub-study, assisted with the data collection, and participated in the analysis and writing of the paper. JS assisted with the data collection, analysis and writing of the paper. CL oversaw the implementation of the main study and participated in the analysis and writing of the paper. All authors read and approved the final version of the manuscript.

Ethics declarations

Ethics approval and consent to participate

This study was approved by the institutional review board at Sunnybrook Health Sciences Centre, Toronto, Canada, and the Ottawa Health Science Network Research Ethics Board (2007292-01H). Access to ICES data was obtained following the submission of our data analysis plan to that organization.

Consent for publication

Not applicable.

Competing interests

The opinions, results and conclusions reported in this paper are those of the authors and are independent from the funding sources. No endorsement by ICES or the Ontario MOHLTC is intended or should be inferred.

Parts of this material are based on data and information compiled and provided by the Canadian Institute for Health Information (CIHI). However, the analyses, conclusions, opinions and statements expressed herein are those of the authors, and not necessarily those of CIHI.

Additional files

Additional file 1:

IDOCC Rep: Performance indicator. Operational definition of performance indicators for Cancer screening, Access, Chronic disease management and continuity. (DOCX 15 kb)

Additional file 2:

IDOCC Rep: Details of regressions. Results of regressions models for Cancer screening, Access, Chronic disease management and continuity indicators. (DOCX 76 kb)

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The Creative Commons Public Domain Dedication waiver (http://creativecommons.org/publicdomain/zero/1.0/) applies to the data made available in this article, unless otherwise stated.

About this article

Cite this article

Dahrouge, S., Armstrong, C.D., Hogg, W. et al. High-performing physicians are more likely to participate in a research study: findings from a quality improvement study. BMC Med Res Methodol 19, 171 (2019). https://doi.org/10.1186/s12874-019-0809-6
