Abstract

Introduction

Current sepsis guidelines recommend antimicrobial treatment (AT) within one hour after onset of sepsis-related organ dysfunction (OD) and surgical source control within 12 hours. The objective of this study was to explore the association between initial infection management according to sepsis treatment recommendations and patient outcome.

Methods

In a prospective observational multi-center cohort study in 44 German ICUs, we studied 1,011 patients with severe sepsis or septic shock regarding times to AT, source control, and adequacy of AT. Primary outcome was 28-day mortality.

Results

Median time to AT was 2.1 (IQR 0.8 – 6.0) hours and median time to surgical source control was 3 (IQR -0.1 – 13.7) hours. Only 370 (36.6%) patients received AT within one hour after OD in compliance with the recommendation. Among 422 patients receiving surgical or interventional source control, those who received source control later than 6 hours after onset of OD had a significantly higher 28-day mortality than patients with earlier source control (42.9% versus 26.7%, P < 0.001). Time to AT was significantly longer in ICU and hospital non-survivors; no linear relationship was found between time to AT and 28-day mortality. Regardless of timing, 28-day mortality rate was lower in patients with adequate than non-adequate AT (30.3% versus 40.9%, P < 0.001).

Conclusions

A delay in source control beyond 6 hours may have a major impact on patient mortality. Adequate AT is associated with improved patient outcome but compliance with guideline recommendation requires improvement. There was only indirect evidence about the impact of timing of AT on sepsis mortality.

Introduction

In the treatment of severe sepsis, timely and effective antimicrobial therapy (AT) as well as source control is crucial and has become a key element in the resuscitation bundles proposed by the Surviving Sepsis Campaign (SSC)[1]. The SSC guidelines recommend obtaining blood cultures and applying intravenous broad-spectrum antimicrobials within 1 hour after the onset of severe sepsis or septic shock; the guidelines also recommend initiating surgical source control within 12 hours[1]. Numerous studies have shown that a delay of AT and inappropriate initial AT in this condition are associated with poor outcome[2–6]. One retrospective study in patients with septic shock suggests an increase of patient mortality between 7 and 8% per hour within the first 6 hours after onset of arterial hypotension[3]. There is also evidence that delayed surgery is associated with lower survival rates[7–9], but the appropriate time frame remains poorly defined[10].

In numerous retrospective and before-and-after studies, improved adherence to the sepsis bundles was associated with improved patient outcome[4, 11–14]. A meta-analysis suggests that among the individual elements of the resuscitation bundle, timely and appropriately administered antimicrobials are the most important predictors for survival[15]. However, compliance with sepsis guideline recommendations is poor[16]. In a Spanish multicenter trial, only 18.4% of the studied patients received AT within the first hour of severe sepsis or septic shock. The administration of antimicrobials within 1 hour was independently associated with a lower risk of hospital death[6].

Application of antimicrobials without microbiological evidence of infection might be associated with an unfavorable outcome[17]. Likewise, starting AT in patients with severe sepsis before obtaining blood cultures was among the factors that were associated with higher hospital mortality[4]. Compliance with the recommendation to draw two pairs of blood cultures before AT, however, was only in the range of 54.4 to 64.5% of patients[4, 13, 18].

Most of the previous studies investigating the timing of antimicrobials and the compliance with the SSC sepsis bundles reported only ICU mortality rates, focused mainly on patients treated in the emergency department or in the ICU, and did not evaluate the impact of delayed source control on patient outcome[3, 5, 17, 19, 20]. However, many of these patients may be admitted also from general wards or the operating room and may require effective surgical and other measures of source control. We therefore extended our assessment of infection control measures to include these patients as well. The primary aim of our cohort study was to prospectively test the hypothesis that a delay in AT and source control after onset of sepsis-related organ dysfunction impacts patient outcome. In addition, we aimed to assess compliance with recent best-practice recommendations for the diagnosis and therapy of sepsis.

Methods

Study design

This prospective study was designed as a longitudinal multicenter observational cohort study in 44 German hospitals to determine the time to AT, surgical source control and compliance with sepsis recommendations related to AT in patients with suspected severe sepsis or septic shock and its impact on 28-day, ICU, and hospital mortality. Participation of hospitals was voluntary but was restricted to hospitals involved in the primary care of sepsis patients and committed to participate in a quality improvement process. Hospitals without ICUs were excluded from this study. Potential study centers were recruited by regional and national research and quality improvement networks. The objectives of the study, inclusion and exclusion criteria, and the documentation procedures were discussed in several national meetings before the start of the study. The study was designed as a pragmatic study with a minimal case report form to allow for the participation of hospitals without research staff. This study served as a run-in study for a cluster-randomized trial assessing whether a multifaceted educational program accelerates the onset of AT and improves survival (Medical Education for Sepsis Source Control and Antibiotics (MEDUSA); ClinicalTrials.gov Identifier NCT01187134).

Patients

Between December 2010 and April 2011, all consecutive adult patients treated in the ICU for proven or suspected infection with at least one new organ dysfunction related to the infection were eligible for inclusion. Organ dysfunctions were defined as follows: acute encephalopathy, thrombocytopenia defined as a platelet count <100,000/μl or a drop in platelet count >30% within 24 hours, arterial oxygen partial pressure <10 kPa (75 mmHg) when breathing room air or partial pressure of arterial oxygen/fraction of inspired oxygen ratio <33 kPa (<250 mmHg), renal dysfunction defined as oliguria (diuresis ≤0.5 ml/kg body weight/hour) despite adequate fluid resuscitation or an increase of serum creatinine more than twice the local reference value, metabolic acidosis with a base excess < −5 mmol/l or a serum lactate >1.5 times the local reference value, and arterial hypotension defined as systolic arterial blood pressure <90 mmHg or mean arterial blood pressure <70 mmHg for >1 hour despite adequate fluid loading or vasopressor therapy at any dosage to maintain higher blood pressures[21]. Patients who received initial infection control measures for sepsis in another hospital and patients who did not receive full life-sustaining treatment were excluded. The study was reviewed and approved by the local ethics committees, which waived the need for informed consent because of the observational nature of the study (see Acknowledgements). The study was also approved by the local data protection boards.
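The organ dysfunction thresholds listed above can be written down as a simple check. The following is a minimal sketch, not the study's case report form logic: the `Findings` record, its field names, and the function names are hypothetical, and the check covers only the numeric and yes/no criteria. Whether a dysfunction is new and related to the infection remains a clinical judgment that is not modeled here.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Findings:
    """Hypothetical record of bedside findings; field names are illustrative."""
    acute_encephalopathy: bool = False
    platelets_per_ul: Optional[float] = None
    platelet_drop_24h_pct: Optional[float] = None
    pao2_room_air_kpa: Optional[float] = None
    pf_ratio_kpa: Optional[float] = None
    oliguria_despite_fluids: bool = False      # diuresis <= 0.5 ml/kg/hour
    creatinine: Optional[float] = None
    creatinine_ref: Optional[float] = None     # local reference value
    base_excess_mmol_l: Optional[float] = None
    lactate: Optional[float] = None
    lactate_ref: Optional[float] = None        # local reference value
    hypotension_over_1h: bool = False          # SBP < 90 or MAP < 70 mmHg for > 1 hour
    vasopressor_needed: bool = False


def _below(value: Optional[float], limit: float) -> bool:
    return value is not None and value < limit


def _above(value: Optional[float], limit: Optional[float]) -> bool:
    return value is not None and limit is not None and value > limit


def organ_dysfunctions(f: Findings) -> List[str]:
    """Return the names of all organ dysfunction criteria met by the findings."""
    found = []
    if f.acute_encephalopathy:
        found.append("encephalopathy")
    if _below(f.platelets_per_ul, 100_000) or _above(f.platelet_drop_24h_pct, 30):
        found.append("thrombocytopenia")
    if _below(f.pao2_room_air_kpa, 10) or _below(f.pf_ratio_kpa, 33):
        found.append("pulmonary dysfunction")
    creat_limit = 2 * f.creatinine_ref if f.creatinine_ref is not None else None
    if f.oliguria_despite_fluids or _above(f.creatinine, creat_limit):
        found.append("renal dysfunction")
    lactate_limit = 1.5 * f.lactate_ref if f.lactate_ref is not None else None
    if _below(f.base_excess_mmol_l, -5) or _above(f.lactate, lactate_limit):
        found.append("metabolic acidosis")
    if f.hypotension_over_1h or f.vasopressor_needed:
        found.append("arterial hypotension")
    return found


def has_organ_dysfunction(f: Findings) -> bool:
    """At least one criterion met (threshold check only, see lead-in)."""
    return len(organ_dysfunctions(f)) >= 1


example = Findings(platelets_per_ul=80_000, lactate=3.5, lactate_ref=2.0)
print(organ_dysfunctions(example))
```

Missing measurements are treated as "criterion not met" in this sketch; that is an assumption for illustration and not a rule stated in the study.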

Data collection

Onset of severe sepsis or septic shock was defined as the time of first infection-related organ dysfunction as documented in the patient file. Patient location at time of onset of severe sepsis was defined as the patient location where the first infection-related organ dysfunction was documented. For patients who developed severe sepsis outside the ICU, this could be the prehospital setting, the emergency department, the hospital ward, or the operating room. Time and type of first AT as well as pre-existing AT were also recorded from the medical records. Any AT prescribed up to 24 hours before the onset of organ dysfunction but for the current infectious episode was considered previous AT. Perioperative antimicrobial prophylaxis was not regarded as specific AT for sepsis. Change of empirical AT was assessed on day 5. Initial AT was defined as inadequate if escalation had occurred within the first 5 days. For each patient, a blinded arbitrator assessed whether the initial AT complied with German guideline recommendations[22]. Source control was defined as removal of an anatomic source of infection either by surgery or intervention (for example, computed tomography-guided drainage). Source control was defined as inadequate if the technical procedure was unsuccessful. Time to source control was obtained from the medical record. Other factors included serum lactate and procalcitonin at the time of onset of severe sepsis, number of blood culture sets taken, and ICU and hospital mortality. Severity of disease was assessed by the Simplified Acute Physiology Score II and the Sequential Organ Failure Assessment score on the day of sepsis diagnosis[23, 24].

Data were collected by a web-based electronic case report form using OpenClinica® (OpenClinica, LLC, Waltham, MA, USA). Data integrity was confirmed by data checks within the database, resulting in queries to the investigator where applicable. Additionally, onset of infection-related organ dysfunction and subsequent AT were checked for plausibility by the MEDUSA study staff and discussed with the study center staff where applicable.

Statistical analysis

The primary endpoint was survival status at day 28 after onset of severe sepsis. Categorical data are expressed as absolute or relative frequencies; the chi-square or Fisher's exact test was used for inferential statistics. Continuous data are expressed as the median and interquartile range; the Mann–Whitney U test was used for inferential statistics. Missing data were not replaced by calculation. We divided patients according to the timing of antimicrobial treatment into the following groups: previous AT, 0 to 1 hours, 1 to 3 hours, 3 to 6 hours and >6 hours[6]. Patients were grouped by time to source control into two groups: within 6 hours or >6 hours[25]. Odds ratios (OR) with 95% confidence intervals (CI) for the risk of death within 28 days depending on time to AT or time to source control were calculated by univariate and multivariate logistic regression only in those patients where treatment was started after onset of organ dysfunction. In patients with sepsis, prior research has identified the initial Sequential Organ Failure Assessment score, age, and serum lactate[20] as confounders for the risk of death. These parameters were therefore included in the multivariable logistic regression analysis to calculate adjusted ORs. In addition, indication for source control, escalation as well as de-escalation of empirical AT within 5 days, presence of community acquired infection, focus of infection, and state of blood culture withdrawal were only included in the final model if they were associated with 28-day mortality at P < 0.20. Goodness of fit was assessed by the c-statistic and the Hosmer-Lemeshow test.
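The grouping by time to AT and time to source control described above can be sketched as two small functions. This is an illustration under stated assumptions, not the study's analysis code: the function names are hypothetical, times are measured in hours relative to onset of organ dysfunction, a negative time is taken to mean that AT was already running (previous AT), and the upper bound of each interval is treated as inclusive. The text does not specify how boundary values were handled.

```python
def at_timing_group(hours_from_od_to_at: float) -> str:
    """Assign a time-to-AT group; negative values mean AT preceded organ dysfunction."""
    if hours_from_od_to_at < 0:
        return "previous AT"
    if hours_from_od_to_at <= 1:      # assumption: upper bounds are inclusive
        return "0 to 1 hours"
    if hours_from_od_to_at <= 3:
        return "1 to 3 hours"
    if hours_from_od_to_at <= 6:
        return "3 to 6 hours"
    return ">6 hours"


def source_control_group(hours_from_od_to_source_control: float) -> str:
    """Assign one of the two source control groups used in the analysis."""
    if hours_from_od_to_source_control <= 6:   # assumption: exactly 6 hours counts as early
        return "within 6 hours"
    return ">6 hours"


# The reported median times (2.1 hours to AT, 3 hours to source control) as inputs
print(at_timing_group(2.1))
print(source_control_group(3.0))
```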

Results

Participating centers and patients

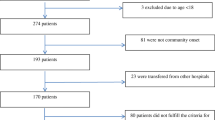

Hospital and ICU characteristics of the 44 participating centers are shown in Table 1. A total of 1,048 patients were included during the 5-month study period. Of these, 37 patients were excluded for missing values in 28-day mortality or time to AT, resulting in 1,011 evaluable patients. Approximately one-half of the patients (n = 504, 49.9%) were treated for surgical reasons. Organ dysfunctions on the day of study inclusion were shock (n = 632, 76.4%), lactacidosis (n = 424, 51.3%), acute renal failure (n = 307, 37.2%), thrombocytopenia (n = 238, 28.8%), pulmonary dysfunction (n = 492, 65.8%), and septic encephalopathy (n = 338, 41.2%). A total of 85.1% of the patients had more than one organ failure. Patient characteristics are shown in Table 2. The overall 28-day mortality was 34.8%; ICU mortality and hospital mortality were 33.0% and 41.4%, respectively. There was no association of academic versus nonacademic hospitals or hospital size with 28-day mortality.

Timing and adequacy of antimicrobial therapy

Three hundred and seventy (36.6%) patients received antimicrobials within 1 hour of the onset of organ dysfunction; among these, 186 (18.4%) patients received antimicrobials in the first hour and 184 patients (18.2%) received antimicrobials prior to onset of organ dysfunction. Six hundred and forty-one (63.4%) patients received their first AT more than 1 hour after onset of organ dysfunction (Figure 1). Median time to AT was 2.1 (interquartile range: 0.8 to 6) hours in all patients and 2.8 (interquartile range: 1.0 to 2.8) hours for patients that received their AT after development of organ dysfunction. Median times by location where sepsis developed were 2.8 (1 to 7.2) hours in intermediate care wards, 2.7 (1.5 to 5.2) hours in hospital wards, 2.3 (0.6 to 7.3) hours in ICUs, 2.2 (1.4 to 3.0) hours prehospital, 2.0 (1.0 to 3.5) hours in emergency departments, and 1.1 (0.2 to 4.7) hours in operating theatres. Three hundred and twenty-one patients were admitted to the ICU with septic shock. Median time to AT was 2.1 (1.0 to 5.0) hours in this subgroup. The 28-day mortality was 34.9% in the 186 patients who received AT during the first hour and 36.2% in 641 patients when AT was given more than 1 hour after onset of the first sepsis-related organ dysfunction (P = 0.76). For the subgroup of 370 patients who received AT within 1 hour, 28-day mortality was 32.4% compared with 36.2% for those 641 patients receiving delayed AT (P = 0.227). There was no linear relationship between time to AT and 28-day mortality (Figure 1). There was also no association between time to AT and risk of death within 28 days (OR per hour increase of time to AT: 1.0 (95% CI: 1.0 to 1.0), P = 0.482) in those 849 patients that received their AT after the first organ dysfunction. Considering a mean time to AT of 6.1 (± standard deviation 9.5) hours, the data would allow one to identify an effect OR of 1.02 per hour increase with an alpha level of 0.05 and a power of 0.8.

The 28-day mortality was lower (29.9%) in patients who developed severe sepsis or septic shock while being treated with antimicrobials compared with a mortality rate of 35.9% in the patients who were treated only after diagnosis, although the difference was not statistically significant (P = 0.121). Time to first AT was longer for nonsurvivors than survivors (Table 3). This difference reached statistical significance for ICU mortality (P = 0.023) and hospital mortality (P = 0.02) but not for 28-day mortality (P = 0.112). For 763 (75.5%) patients, the initial empirical therapy complied with the German recommendations for AT. The 28-day mortality was 34.9% in the compliant group and 34.3% in the noncompliant group (P = 0.869).

The most frequently used empirical antimicrobial agents were piperacillin/tazobactam or ampicillin/sulbactam (26.7%), followed by imipenem/cilastatin (8.2%) and meropenem (7.8%). Empirical AT was escalated within the first 5 days in 423 (41.9%) patients. Therapy was escalated more often in patients receiving antimicrobials not in compliance with guideline recommendations (125 of 245, 51%) than in patients treated in compliance with guidelines (298 of 762, 39.1%; P < 0.01). For 96 patients (9.5%), AT was de-escalated within 5 days. The 28-day mortality rate was 22.9% in patients with de-escalation compared with 36.0% in patients without de-escalation (P = 0.01).

For 587 (58.1%) patients, AT was deemed adequate. In patients with inadequate AT, the 28-day mortality rate was significantly higher compared with adequately treated patients (40.9% vs. 30.3%, P < 0.001; Table 4). This increased risk was also evident when patients received AT within the first hour: in patients receiving AT within 1 hour, 28-day mortality was 26.9% (32/119) when this AT was deemed adequate compared with 48.5% (32/66) for patients with inadequate AT; likewise, in patients receiving AT later than 1 hour, 28-day mortality was 31.2% (146/468) for adequate compared with 42.7% (118/278) for an inadequate AT (P < 0.001). Among 588 patients not requiring surgical or interventional source control, 101/335 (30.1%) patients with adequate AT and 112/253 (44.3%) patients with inadequate AT died within 28 days (P < 0.001).
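As a worked illustration of the chi-square comparison, the counts reported above for patients not requiring source control (101 of 335 deaths with adequate AT versus 112 of 253 with inadequate AT) can be tested as a 2x2 table. This is a minimal sketch: the function name is hypothetical, and the Pearson statistic is computed without continuity correction, which is an assumption because the text does not say whether a correction was applied. For one degree of freedom, the P value follows from the complementary error function.

```python
import math


def chi_square_2x2(died_a: int, total_a: int, died_b: int, total_b: int):
    """Pearson chi-square test for a 2x2 table (1 degree of freedom, no continuity correction)."""
    a, b = died_a, total_a - died_a      # group A: died, survived
    c, d = died_b, total_b - died_b      # group B: died, survived
    n = a + b + c + d
    chi2 = n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))
    # Survival function of the chi-square distribution with 1 degree of freedom
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p_value


# Counts taken from the text: adequate AT 101/335, inadequate AT 112/253
chi2, p_value = chi_square_2x2(101, 335, 112, 253)
print(f"chi-square = {chi2:.2f}, P = {p_value:.4f}")
```

Under these assumptions the result falls below the 0.001 threshold, in line with the reported P < 0.001.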

By multivariable analysis, inadequate empirical AT (OR (95% CI): 1.44 (1.05 to 1.99)) as well as age (OR (95% CI): 1.04 (1.03 to 1.06)), initial Sequential Organ Failure Assessment score (OR (95% CI): 1.18 (1.13 to 1.24)) and maximum serum lactate levels on the day of diagnosis of severe sepsis or septic shock (OR (95% CI): 1.09 (1.05 to 1.14)) were significantly associated with an increased risk of death. Adjusted for these and further covariates, a delay in the administration of AT more than 1 hour after onset of organ dysfunction (OR (95% CI): 0.96 (0.69 to 1.33)) was not associated with an increased 28-day mortality (Table 5). Likewise, no association between time to AT and outcome was seen in the subgroup of patients without need for surgical or interventional source control (Table 5).

Blood culture testing

Blood cultures were taken before AT in 649 (64.2%) patients, and 48.8% of these cultures were positive. In 269 patients (41.4% of patients from whom blood cultures were drawn), only one set of blood cultures was obtained. In the 317 positive blood cultures, 187 (62.1%) showed Gram-positive bacteria, 127 (42.2%) showed Gram-negative bacteria, and 20 (6.6%) showed fungi; 32 (10.6%) of the positive blood cultures revealed more than one pathogen.

Among the 317 patients with a positive blood culture, 63.3% received antimicrobials before onset of organ dysfunction and 103 (33.7%) received antimicrobials after onset of organ dysfunction. The 28-day mortality in these groups was 35.9% and 31.5%, respectively (P = 0.440).

Timing and adequacy of source control

Surgical (84.8%) or interventional (15.9%) source control was performed in 422 patients. Overall median time to source control was 3 (–0.1 to 13.7) hours; in those patients where source control was initiated after development of organ dysfunction (n = 314), it was 6 (2 to 20) hours. One hundred and fifty-eight of 314 patients (50.3%) received source control within 6 hours after onset of infection-related organ dysfunction. The time to source control was significantly longer in nonsurvivors than in survivors (Table 3). In 55 patients (13.3%), source control was assessed as being inadequate. The 28-day mortality was 65.5% in patients with inadequate source control compared with 26.7% in patients with adequate source control (P < 0.01). There was no direct relationship between time to source control and risk of death within 28 days (OR per hour increase of time to source control: 1.0 (95% CI: 1.0 to 1.0), P = 0.725). Patients who had surgical source control delayed for more than 6 hours had a significantly higher 28-day mortality (42.9% vs. 26.7%, P < 0.001); this delay was independently associated with an increased risk of death (Table 5). There was neither a statistically significant interaction nor a collinearity between time to AT and time to source control.
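To make the size of the difference between late and early source control concrete, the two reported 28-day mortality rates (42.9% versus 26.7%) can be converted into an absolute risk difference and a crude odds ratio. This is a hedged back-of-the-envelope sketch with hypothetical function names. It uses only the published percentages, so the result is unadjusted and is not the adjusted odds ratio from the multivariable model in Table 5.

```python
def risk_difference(p_exposed: float, p_reference: float) -> float:
    """Absolute difference in risk (as a proportion)."""
    return p_exposed - p_reference


def odds_ratio(p_exposed: float, p_reference: float) -> float:
    """Crude odds ratio derived from two proportions."""
    odds_exposed = p_exposed / (1 - p_exposed)
    odds_reference = p_reference / (1 - p_reference)
    return odds_exposed / odds_reference


late, early = 0.429, 0.267   # 28-day mortality, source control >6 hours vs. within 6 hours
rd = risk_difference(late, early)
crude_or = odds_ratio(late, early)
print(f"risk difference = {rd * 100:.1f} percentage points, crude OR = {crude_or:.2f}")
```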

Discussion

This prospective observational study included 1,011 evaluable patients with severe sepsis from a large group of academic and nonacademic hospitals. The main finding of this study was that surgical source control within the first 6 hours was associated with an absolute 16% lower 28-day mortality (26.7% versus 42.9%). This finding is of interest since the SSC guidelines recently increased the window for source control from 6 hours[25] to 12 hours[1] after diagnosis. This decision is based on a single study in patients with necrotizing soft tissue infection, where a delay of surgery >14 hours was associated with an increased risk of death[7]. However, this study by Boyer and colleagues did not examine the effect of shorter delays on mortality. While current data suggest that delayed surgery adversely affects outcome[26, 27], studies that allow the determination of an optimal time point of surgical source control are rare. A retrospective analysis of patients with fecal peritonitis did not confirm a relationship between duration until source control and mortality[28]. However, overall mortality in this study was very low at 19.1% and only 24-hour time intervals were reported. Our data are more consistent with an observational study of children reporting that all patients who received surgical debridement for necrotizing fasciitis at later than 3 hours died[8]. Likewise, a study in patients with perforated peptic ulcers found that each hour delay in surgical source control increases 30-day mortality by 2%[9]. Clearly, more data on the relationship between time to source control and patient outcome are needed. In the interim, surgical source control should be performed as soon as possible.

Our observation that early AT of the underlying infection of sepsis before onset of organ dysfunction is associated with a trend towards lower 28-day mortality in the range of 6% supports the importance of early recognition and antimicrobial treatment of infection underlying sepsis[1]. The finding that the median time to antimicrobial treatment was about 40 minutes shorter in survivors than in nonsurvivors confirms other studies[18, 29]. Median times to antimicrobial administration were 2.1 hours after diagnosis of severe sepsis or septic shock and thus exceeded guideline recommendations[1]. Similar delays have been reported in other studies[5, 13, 18, 20, 30, 31]. In contrast to our data, a number of studies demonstrated an association between patient outcome and time to AT in patients with severe infections[2, 32–35]. Like other studies[6, 20], we could not confirm the data of Kumar and coworkers in patients with septic shock that suggested an increase of 7.6% in hospital mortality per hour delay in AT[3]. This may be related to differences in the patient population or study methodology. Kumar and colleagues focused their work on patients with septic shock and observed a median time to AT of 6 hours – three times longer than what we and other studies have observed[3].

There are some other considerations that may explain the different findings about time to AT and its association with mortality. Firstly, some studies used the time until adequate AT[3, 5, 34] rather than time to first AT, as we did. We instead applied an approach similar to Puskarich and colleagues because it seems unreasonable to assess the quality of primary care with microbiological data that are not available for the treating physician at that time[20]; other studies also used this design[2, 17, 36]. Furthermore, the underlying pathogen may remain unknown, so that alternative definitions of adequacy such as guideline adherence[3, 34] would in any case need to replace the microbiological definition of adequacy. Secondly, the starting time from which the delay until AT is measured differs considerably across the available studies and includes hospital[33, 35] or ICU admission[2], onset of arterial hypotension[3, 34], and the time when cultures were obtained[17, 36]. We have chosen onset of infection-related organ dysfunction since this is a clinical feature that should trigger initiation of primary sepsis care. All of the chosen starting times may overlook that significant organ dysfunction occurred before the defined time. These considerations suggest that the investigation of the impact of timing of AT on patient outcome is limited in observational studies.

The concept of early empirical AT has recently been challenged. Puskarich and colleagues did not find an increase in mortality with each hour delay in AT in emergency department patients with septic shock[20]. In a before-and-after-study in critically ill surgical patients, AT initiated only after microbiological confirmation was associated with a lower mortality rate than early empirical AT[17]. However, the overall long delays to antimicrobial administration in both groups (11 and 17.7 hours, respectively) limit interpretation of results from that study[37].

In general, compliance with sepsis guideline recommendations was poor. Only one-third of patients received their first antimicrobial agent according to current guideline recommendations before or within 1 hour of diagnosis of severe sepsis. Blood cultures before AT were taken in 649 (64.2%) patients; however, two sets of blood cultures were obtained in only one-half of these patients. Choice of antimicrobials complied with German recommendations for empirical AT[22] in 75% of cases. Nevertheless, in about 40% of patients the treating physicians considered first AT as inadequate and escalated AT within the first 5 days. Overall, 28-day mortality of these patients was considerably increased. The association between adequacy of AT and patient outcome remained significant regardless of whether AT was given earlier or later than 1 hour after onset of severe sepsis. This was also true for the 588 patients not requiring surgical or interventional source control. Therefore it seems unlikely that AT was deemed inadequate and changed because the patient deteriorated for reasons unrelated to the microbiological inappropriateness of AT, such as inadequate surgical source control. Increased mortality in patients with inappropriate initial AT has also been observed in other studies[38, 39]. Recent data from the EUROBACT study concluded that infections with multiresistant organisms are associated with a delay of appropriate AT and increased mortality[40].

Current guidelines recommend at least two sets of blood cultures before starting AT[1]. In our study, two-thirds of patients had blood cultures drawn before AT. However, only one set was drawn in about 50% of those patients. Drawing blood cultures before initiation of broad-spectrum antimicrobials was associated with a lower risk of death in the SSC database[4] but not in our study. This may be explained by the much larger sample size in the SSC database.

Our study has strengths and weaknesses. Strengths include the prospective data collection and multicenter design. Unlike previous studies, our study used short-term prospective data collection and is therefore not influenced by secular trends. Furthermore, reporting of times to AT not only in the ICU but also in other locations as well as outside the hospital and inclusion of medical centers with all levels of care increases the generalizability of our results. Although we enrolled over 1,000 patients, the sample size may not have been large enough to detect small differences in outcome; moreover, we cannot rule out that eligible patients were not included in the study because of limited resources. We also did not include patients who were not referred to the ICU. However, it is unlikely that many such patients were missed since in Germany the majority of patients with organ dysfunction are referred to an ICU or intermediate care unit.

We did not assess adequacy of AT by means of microbiological susceptibility testing results because many of the included hospitals lacked the staff to report such data for a study. Instead, we used the pragmatic approach to ask physicians to record any change of AT within 5 days, which was defined a priori as an indication of inadequate initial therapy. Despite the fact that we found this association also in nonsurgical patients, we cannot rule out that AT was changed because the patient deteriorated for reasons that were not related to the microbiological inappropriateness of AT. Except for serum lactate measurements, which were obtained in 95.2% of the patients at baseline, we did not assess the compliance with other guideline recommendations and therefore cannot rule out that mortality rates were potentially influenced by unmeasured effects; for instance, timely fluid resuscitation or appropriate use of other supportive measures.

Conclusions

More data on the relationship between time to source control and patient outcome are needed. In the interim, surgical source control should be performed as soon as possible. Adequacy of empirical AT is important for the survival in sepsis, and choice of initial AT is an important decision in the therapy of these patients. There was only indirect evidence about the impact of timing of AT on sepsis mortality but evidence about this issue varies significantly among the available studies. Randomized controlled trials are thus necessary to further elucidate the impact of AT timing on survival. Quality improvement initiatives should not be restricted to severe sepsis but should also focus on the timely recognition and adequate treatment of infections to prevent their progress to severe sepsis.

Key messages

- A delay of surgical or interventional source control of more than 6 hours was associated with increased mortality.

- Although survivors had a shorter time to AT than nonsurvivors, there was no significant association or linear relationship between time to AT and survival.

- An inadequate empiric AT was associated with an increased mortality.

- Compliance with guideline recommendations on anti-infectious measures, including timing and choice of empiric AT, withdrawal of blood cultures, and de-escalation of AT, should be improved.

Abbreviations

- AT: antimicrobial therapy

- CI: confidence interval

- OR: odds ratio

- SSC: Surviving Sepsis Campaign

References

Dellinger RP, Levy MM, Rhodes A, Annane D, Gerlach H, Opal SM, Sevransky JE, Sprung CL, Douglas IS, Jaeschke R, Osborn TM, Nunnally ME, Townsend SR, Reinhart K, Kleinpell RM, Angus DC, Deutschman CS, Machado FR, Rubenfeld GD, Webb SA, Beale RJ, Vincent JL, Moreno R, Surviving Sepsis Campaign Guidelines Committee including the Pediatric Subgroup: Surviving sepsis campaign: international guidelines for management of severe sepsis and septic shock: 2012. Crit Care Med 2013, 41: 580-637. 10.1097/CCM.0b013e31827e83af

Larche J, Azoulay E, Fieux F, Mesnard L, Moreau D, Thiery G, Darmon M, Le Gall JR, Schlemmer B: Improved survival of critically ill cancer patients with septic shock. Intensive Care Med 2003, 29: 1688-1695. 10.1007/s00134-003-1957-y

Kumar A, Roberts D, Wood KE, Light B, Parrillo JE, Sharma S, Suppes R, Feinstein D, Zanotti S, Taiberg L, Gurka D, Cheang M: Duration of hypotension before initiation of effective antimicrobial therapy is the critical determinant of survival in human septic shock. Crit Care Med 2006, 34: 1589-1596. 10.1097/01.CCM.0000217961.75225.E9

Levy MM, Dellinger RP, Townsend SR, Linde-Zwirble WT, Marshall JC, Bion J, Schorr C, Artigas A, Ramsay G, Beale R, Parker MM, Gerlach H, Reinhart K, Silva E, Harvey M, Regan S, Angus DC: The surviving sepsis campaign: results of an international guideline-based performance improvement program targeting severe sepsis. Crit Care Med 2010, 38: 367-374. 10.1097/CCM.0b013e3181cb0cdc

Gaieski DF, Mikkelsen ME, Band RA, Pines JM, Massone R, Furia FF, Shofer FS, Goyal M: Impact of time to antibiotics on survival in patients with severe sepsis or septic shock in whom early goal-directed therapy was initiated in the emergency department. Crit Care Med 2010, 38: 1045-1053. 10.1097/CCM.0b013e3181cc4824

Ferrer R, Artigas A, Suarez D, Palencia E, Levy MM, Arenzana A, Perez XL, Sirvent JM: Effectiveness of treatments for severe sepsis: a prospective multicenter observational study. Am J Respir Crit Care Med 2009, 180: 861-866. 10.1164/rccm.200812-1912OC

Boyer A, Vargas F, Coste F, Saubusse E, Castaing Y, Gbikpi-Benissan G, Hilbert G, Gruson D: Influence of surgical treatment timing on mortality from necrotizing soft tissue infections requiring intensive care management. Intensive Care Med 2009, 35: 847-853. 10.1007/s00134-008-1373-4

Moss RL, Musemeche CA, Kosloske AM: Necrotizing fasciitis in children: prompt recognition and aggressive therapy improve survival. J Pediatr Surg 1996, 31: 1142-1146. 10.1016/S0022-3468(96)90104-9

Buck DL, Vester-Andersen M, Moller MH, Danish Clinical Register of Emergency S: Surgical delay is a critical determinant of survival in perforated peptic ulcer. Br J Surg 2013, 100: 1045-1049. 10.1002/bjs.9175

Marshall JC, Maier RV, Jimenez M, Dellinger EP: Source control in the management of severe sepsis and septic shock: an evidence-based review. Crit Care Med 2004, 32: S513-S526. 10.1097/01.CCM.0000143119.41916.5D

Cardoso T, Carneiro AH, Ribeiro O, Teixeira-Pinto A, Costa-Pereira A: Reducing mortality in severe sepsis with the implementation of a core 6-hour bundle: results from the Portuguese community-acquired sepsis study (SACiUCI study). Crit Care 2010, 14: R83. 10.1186/cc9008

Castellanos-Ortega A, Suberviola B, Garcia-Astudillo LA, Holanda MS, Ortiz F, Llorca J, Delgado-Rodriguez M: Impact of the surviving sepsis campaign protocols on hospital length of stay and mortality in septic shock patients: results of a three-year follow-up quasi-experimental study. Crit Care Med 2010, 38: 1036-1043. 10.1097/CCM.0b013e3181d455b6

Ferrer R, Artigas A, Levy MM, Blanco J, Gonzalez-Diaz G, Garnacho-Montero J, Ibanez J, Palencia E, Quintana M, de la Torre-Prados MV: Improvement in process of care and outcome after a multicenter severe sepsis educational program in Spain. JAMA 2008, 299: 2294-2303. 10.1001/jama.299.19.2294

Chamberlain DJ, Willis EM, Bersten AB: The severe sepsis bundles as processes of care: a meta-analysis. Aust Crit Care 2011, 24: 229-243. 10.1016/j.aucc.2011.01.003

Barochia AV, Cui X, Vitberg D, Suffredini AF, O'Grady NP, Banks SM, Minneci P, Kern SJ, Danner RL, Natanson C, Eichacker PQ: Bundled care for septic shock: an analysis of clinical trials. Crit Care Med 2010, 38: 668-678. 10.1097/CCM.0b013e3181cb0ddf

Brunkhorst FM, Engel C, Ragaller M, Welte T, Rossaint R, Gerlach H, Mayer K, John S, Stuber F, Weiler N, Oppert M, Moerer O, Bogatsch H, Reinhart K, Loeffler M, Hartog C: Practice and perception – a nationwide survey of therapy habits in sepsis. Crit Care Med 2008, 36: 2719-2725. 10.1097/CCM.0b013e318186b6f3

Hranjec T, Rosenberger LH, Swenson B, Metzger R, Flohr TR, Politano AD, Riccio LM, Popovsky KA, Sawyer RG: Aggressive versus conservative initiation of antimicrobial treatment in critically ill surgical patients with suspected intensive-care-unit-acquired infection: a quasi-experimental, before and after observational cohort study. Lancet Infect Dis 2012, 12: 774-780. 10.1016/S1473-3099(12)70151-2

Phua J, Koh Y, Du B, Tang YQ, Divatia JV, Tan CC, Gomersall CD, Faruq MO, Shrestha BR, Gia Binh N, Arabi YM, Salahuddin N, Wahyuprajitno B, Tu ML, Wahab AY, Hameed AA, Nishimura M, Procyshyn M, Chan YH: Management of severe sepsis in patients admitted to Asian intensive care units: prospective cohort study. Br Med J 2011, 342: d3245. 10.1136/bmj.d3245

Hitti EA, Lewin JJ 3rd, Lopez J, Hansen J, Pipkin M, Itani T, Gurny P: Improving door-to-antibiotic time in severely septic emergency department patients. J Emerg Med 2012, 42: 462-469. 10.1016/j.jemermed.2011.05.015

Puskarich MA, Trzeciak S, Shapiro NI, Arnold RC, Horton JM, Studnek JR, Kline JA, Jones AE: Association between timing of antibiotic administration and mortality from septic shock in patients treated with a quantitative resuscitation protocol. Crit Care Med 2011, 39: 2066-2071. 10.1097/CCM.0b013e31821e87ab

Brunkhorst FM, Engel C, Bloos F, Meier-Hellmann A, Ragaller M, Weiler N, Moerer O, Gruendling M, Oppert M, Grond S, Olthoff D, Jaschinski U, John S, Rossaint R, Welte T, Schaefer M, Kern P, Kuhnt E, Kiehntopf M, Hartog C, Natanson C, Loeffler M, Reinhart K: Intensive insulin therapy and pentastarch resuscitation in severe sepsis. N Engl J Med 2008, 358: 125-139. 10.1056/NEJMoa070716

Vogel F, Bodmann KF, Expert Committee of the Paul-Ehrlich-Gesellschaft: [Recommendations about the calculated parenteral initial therapy of bacterial diseases in adults]. Chemother J 2004, 13: 46-105.

Vincent JL, Moreno R, Takala J, Willatts S, De Mendonca A, Bruining H, Reinhart K, Suter PM, Thijs LG: The SOFA (sepsis-related organ failure assessment) score to describe organ dysfunction/failure. On behalf of the working group on sepsis-related problems of the european society of intensive care medicine. Intensive Care Med 1996, 22: 707-710. 10.1007/BF01709751

Le Gall JR, Lemeshow S, Saulnier F: A new simplified acute physiology score (SAPS II) based on a European/North American multicenter study. JAMA 1993, 270: 2957-2963. 10.1001/jama.1993.03510240069035

Dellinger RP, Levy MM, Carlet JM, Bion J, Parker MM, Jaeschke R, Reinhart K, Angus DC, Brun-Buisson C, Beale R, Calandra T, Dhainaut JF, Gerlach H, Harvey M, Marini JJ, Marshall J, Ranieri M, Ramsay G, Sevransky J, Thompson BT, Townsend S, Vender JS, Zimmerman JL, Vincent JL: Surviving sepsis campaign: international guidelines for management of severe sepsis and septic shock: 2008. Crit Care Med 2008, 36: 296-327. 10.1097/01.CCM.0000298158.12101.41

Mulier S, Penninckx F, Verwaest C, Filez L, Aerts R, Fieuws S, Lauwers P: Factors affecting mortality in generalized postoperative peritonitis: multivariate analysis in 96 patients. World J Surg 2003, 27: 379-384. 10.1007/s00268-002-6705-x

Torer N, Yorganci K, Elker D, Sayek I: Prognostic factors of the mortality of postoperative intraabdominal infections. Infection 2010, 38: 255-260. 10.1007/s15010-010-0021-4

Tridente A, Clarke GM, Walden A, McKechnie S, Hutton P, Mills GH, Gordon AC, Holloway PA, Chiche JD, Bion J, Stuber F, Garrard C, Hinds CJ, Gen OI: Patients with faecal peritonitis admitted to European intensive care units: an epidemiological survey of the GenOSept cohort. Intensive Care Med 2014, 40: 202-210. 10.1007/s00134-013-3158-7

Labelle A, Juang P, Reichley R, Micek S, Hoffmann J, Hoban A, Hampton N, Kollef M: The determinants of hospital mortality among patients with septic shock receiving appropriate initial antibiotic treatment. Crit Care Med 2012, 40: 2016-2021. 10.1097/CCM.0b013e318250aa72

Francis M, Rich T, Williamson T, Peterson D: Effect of an emergency department sepsis protocol on time to antibiotics in severe sepsis. CJEM 2010, 12: 303-310.

Shapiro NI, Howell MD, Talmor D, Lahey D, Ngo L, Buras J, Wolfe RE, Weiss JW, Lisbon A: Implementation and outcomes of the multiple urgent sepsis therapies (MUST) protocol. Crit Care Med 2006, 34: 1025-1032. 10.1097/01.CCM.0000206104.18647.A8

Iregui M, Ward S, Sherman G, Fraser VJ, Kollef MH: Clinical importance of delays in the initiation of appropriate antibiotic treatment for ventilator-associated pneumonia. Chest 2002, 122: 262-268. 10.1378/chest.122.1.262

Meehan TP, Fine MJ, Krumholz HM, Scinto JD, Galusha DH, Mockalis JT, Weber GF, Petrillo MK, Houck PM, Fine JM: Quality of care, process, and outcomes in elderly patients with pneumonia. JAMA 1997, 278: 2080-2084. 10.1001/jama.1997.03550230056037

Bagshaw SM, Lapinsky S, Dial S, Arabi Y, Dodek P, Wood G, Ellis P, Guzman J, Marshall J, Parrillo JE, Skrobik Y, Kumar A, Cooperative Antimicrobial Therapy of Septic Shock Database Research Group: Acute kidney injury in septic shock: clinical outcomes and impact of duration of hypotension prior to initiation of antimicrobial therapy. Intensive Care Med 2009, 35: 871-881. 10.1007/s00134-008-1367-2

Garnacho-Montero J, Aldabo-Pallas T, Garnacho-Montero C, Cayuela A, Jimenez R, Barroso S, Ortiz-Leyba C: Timing of adequate antibiotic therapy is a greater determinant of outcome than are TNF and IL-10 polymorphisms in patients with sepsis. Crit Care 2006, 10: R111. 10.1186/cc4995

Barie PS, Hydo LJ, Shou J, Larone DH, Eachempati SR: Influence of antibiotic therapy on mortality of critical surgical illness caused or complicated by infection. Surg Infect (Larchmt) 2005, 6: 41-54. 10.1089/sur.2005.6.41

Noble DW, Gould IM: Antibiotics for surgical patients: the faster the better? Lancet Infect Dis 2012, 12: 741-742. 10.1016/S1473-3099(12)70208-6

Harbarth S, Garbino J, Pugin J, Romand JA, Lew D, Pittet D: Inappropriate initial antimicrobial therapy and its effect on survival in a clinical trial of immunomodulating therapy for severe sepsis. Am J Med 2003, 115: 529-535. 10.1016/j.amjmed.2003.07.005

Kumar A, Ellis P, Arabi Y, Roberts D, Light B, Parrillo JE, Dodek P, Wood G, Simon D, Peters C, Ahsan M, Chateau D: Initiation of inappropriate antimicrobial therapy results in a fivefold reduction of survival in human septic shock. Chest 2009, 136: 1237-1248.

Tabah A, Koulenti D, Laupland K, Misset B, Valles J, Bruzzi de Carvalho F, Paiva JA, Cakar N, Ma X, Eggimann P, Antonelli M, Bonten MJ, Csomos A, Krueger WA, Mikstacki A, Lipman J, Depuydt P, Vesin A, Garrouste-Orgeas M, Zahar JR, Blot S, Carlet J, Brun-Buisson C, Martin C, Rello J, Dimopoulos G, Timsit JF: Characteristics and determinants of outcome of hospital-acquired bloodstream infections in intensive care units: the EUROBACT International Cohort Study. Intensive Care Med 2012, 38: 1930-1945. 10.1007/s00134-012-2695-9

Acknowledgements

Financial support was received from the German Federal Ministry of Education and Research via the integrated research and treatment Center for Sepsis Control and Care (FKZ 01EO1002).

In addition to the authors, the following investigators and institutions participated in the MEDUSA study: Department of Intensive Care Medicine, University Hospital Aachen (G Marx, T Schürholz); Department of Anesthesiology, Intensive Care Medicine, and Pain Therapy, Hospital Altenburger Land, Altenburg (M Blacher, M Kretzschmar); Department of Anesthesiology and Intensive Care Medicine, Ilm-Kreis-Kliniken Arnstadt-Ilmenau, Arnstadt (H Schlegel-Höfner); Department of Anesthesiology and Intensive Care Medicine, HELIOS Klinikum Aue (P Fischer); Department of Anesthesiology and Intensive Care Medicine, Zentralklinik Bad Berka GmbH, Bad Berka (T Schreiber); Department of Anesthesiology and Intensive Care Medicine, Hufelandkrankenhaus GmbH, Bad Langensalza (R Steuckart); Department of Anesthesiology and Intensive Care Medicine, Bundeswehrkrankenhaus Berlin (H Bubser, K Dey); Department of Anesthesiology, Intensive Care Medicine, and Pain Therapy, Vivantes Klinikum Neukölln, Berlin (H Gerlach); Department of Intensive Care Medicine, HELIOS Kliniken Berlin-Buch, Berlin (J Brederlau); Department of Anesthesiology and Intensive Care Medicine, Charité Berlin (C Spies); Department of Anesthesiology and Intensive Care and Emergency Medicine, HELIOS Klinikum Emil von Behring, Berlin (A Lubasch, O Franke); Department of Anesthesiology, Intensive Care Medicine, Emergency Medicine, and Pain Therapy, Ev. Krankenhaus Bielefeld (F Bach); Department of Anesthesiology, Intensive Care Medicine, and Pain Therapy, HELIOS St. Josefs-Hospital Bochum–Linden, Bochum (U Bachmann-Holdau); Department of Anesthesiology and Intensive Care Medicine, St. Georg Hospital Eisenach (J Eiche); Department of Anesthesiology and Intensive Care Medicine, Waldkrankenhaus Rudolf Elle GmbH, Eisenberg (M Lange, D Volkert); Department of Anesthesiology, Intensive Care Medicine, and Pain Therapy, Helios Klinikum Erfurt (A Meier-Hellmann); Department of Anesthesiology and Intensive Care Medicine, Catholic Hospital St. Johann Nepomuk Erfurt (T Clausen); Department of Internal Medicine, Bürgerhospital Friedberg (A Niedenthal, M Sternkopf); Department of Anesthesiology and Intensive Care Medicine, GeoMed Klinikum Gerolzhofen (H Schulz); Department of Anesthesiology, Intensive Care Medicine, and Pain Therapy, Klinik am Eichert, Göppingen (S Rauch); Department of Anesthesiology and Intensive Care Medicine, Ernst-Moritz-Arndt-University Greifswald (M Gründling); Department of Anesthesiology and Intensive Care Medicine, Helios St. Elisabeth Klinik Hünfeld (N Knöck); Department of Anesthesiology and Intensive Care Medicine, Ilm-Kreis-Kliniken Arnstadt–Ilmenau, Ilmenau (G Scheiber); Department of Anesthesiology and Intensive Care Medicine, University Medical Center Schleswig-Holstein, Campus Kiel (N Weiler); Department of Anesthesiology, Intensive Care Medicine, and Pain Therapy, HELIOS-Klinikum Krefeld GmbH, Krefeld (E Berendes, S Nicolas); Department of Anesthesiology and Intensive Care Medicine, Hospital Landshut–Achdorf, Landshut (M Anetseder, Z Textor); Department of Anesthesiology and Intensive Care Medicine, University Hospital Leipzig (U Kaisers, P Simon); Department of Intensive Care and Emergency Medicine, Hospital Meiningen (G Braun); Department of Anesthesiology and Intensive Care Medicine, Saale-Unstrut-Klinikum Naumburg (K Becker); Department of Anesthesiology and Intensive Care Medicine, Südharz-Krankenhaus Nordhausen (R Laubinger, U Klein); Department of Anesthesiology and Intensive Care Medicine, Thüringen-Klinik Pößneck (F Knebel); Department of Intensive Care and Emergency Medicine, ASKLEPIOS-ASB Krankenhaus Radeberg (R Sinz); Department of Anesthesiology, Intensive Care Medicine, and Pain Therapy, Thüringen-Kliniken ‘Georgius Agricola’, Saalfeld/Saale (A Fischer); Department of Anesthesiology and Intensive Care Medicine, Diakonie-Klinikum Schwäbisch Hall, Schwäbisch Hall (K Hornberger, K Rosenhagen); Department of Anesthesiology and Intensive Care and Emergency Medicine, Ev. Jung-Stilling-Krankenhaus, Siegen (A Seibel); Department of Anesthesiology and Intensive Care Medicine, SRH Zentralklinikum Suhl (W Schummer); Department of Internal Medicine, University Hospital Tübingen (A Heininger); Department of Anesthesiology, Intensive Care Medicine, and Pain Therapy, Sophien- und Hufeland-Klinikum gGmbH, Weimar (C Lascho, F Schmidt); Department of Intensive Care Medicine, HELIOS Klinikum Wuppertal (G Wöbker).

The following ethics bodies approved the study: Ethics Committee (EC) of the Friedrich-Schiller-University (primary vote), EC of the medical association of Thuringia, EC of the medical association of Bavaria, EC of the Christian-Albrechts-University Kiel, EC of the medical association of Saxony-Anhalt, EC of the medical association of Bavaria, EC of the medical association of Westphalia-Lippe and the Westphalian Wilhelms-University Munster, EC of the University Leipzig, EC of the University Witten/Herdecke, EC of the medical association of Saarland, EC of the medical association of Hesse, EC of the medical association of Baden-Württemberg, EC of the Ulm University, EC of the Ernst-Moritz-Arndt-University Greifswald, EC of the medical association of Lower Saxony, EC of the medical association of Saxony, EC of the medical association of North Rhine, EC of the Eberhard-Karls University Tübingen, EC of the Carl-Gustav-Carus University Dresden, EC of the RWTH Aachen, EC of the Friedrich-Wilhelm-University Bonn, and EC of the medical association of Hamburg.

Competing interests

The authors declare that they have no competing interests.

Authors’ contribution

All authors made substantive intellectual contributions to the manuscript. FB and KR conceived and designed the study, drafted the manuscript, and were responsible for the grant funding. DaS, DT-R, HR, PS, RR, DK, KD, MW, ST, DiS, AW, MR, KS, JE, GK, and UK participated in the acquisition of the data, were responsible for the conduct of the study and helped to revise the manuscript. CE and HH participated in the study design and the statistical data analysis and helped to revise the manuscript. JCM, SH, and CH participated in the assessment of the data analysis and revised the manuscript. All authors read and approved the final manuscript.

About this article

Cite this article

Bloos, F., Thomas-Rüddel, D., Rüddel, H. et al. Impact of compliance with infection management guidelines on outcome in patients with severe sepsis: a prospective observational multi-center study. Crit Care 18, R42 (2014). https://doi.org/10.1186/cc13755

DOI: https://doi.org/10.1186/cc13755